Submitted: 16 August 2024

Posted: 20 August 2024


Abstract

Keywords:

1. Introduction

- We propose a Weighted Adaptive Differential Privacy Federated Learning (AWDP-FL) framework. In each training iteration, adaptive gradient clipping is first applied to filter parameters and determine the gradients used for the update. This is followed by third-moment-based adaptive gradient updates. Finally, dynamic Gaussian noise is added to the parameters, and the noise-perturbed model parameters are uploaded to the server for global updates, ensuring data privacy during parameter transmission.

- We propose a weight-based adaptive gradient clipping method. First, the client computes the weight coefficients of each model parameter and uses the adaptive weight coefficients to determine the threshold for gradient clipping. Adaptive gradient clipping is then performed, followed by adaptive gradient updates to update the model parameters.

- Multiple comparative experiments were conducted on three public datasets against various state-of-the-art algorithms, and the usability of the proposed algorithm was analyzed across different metrics.

2. Relevant Technical Theories

2.1. Federated Learning

2.2. Differential Privacy

3. Weighted Adaptive Differential Privacy Federated Learning Framework

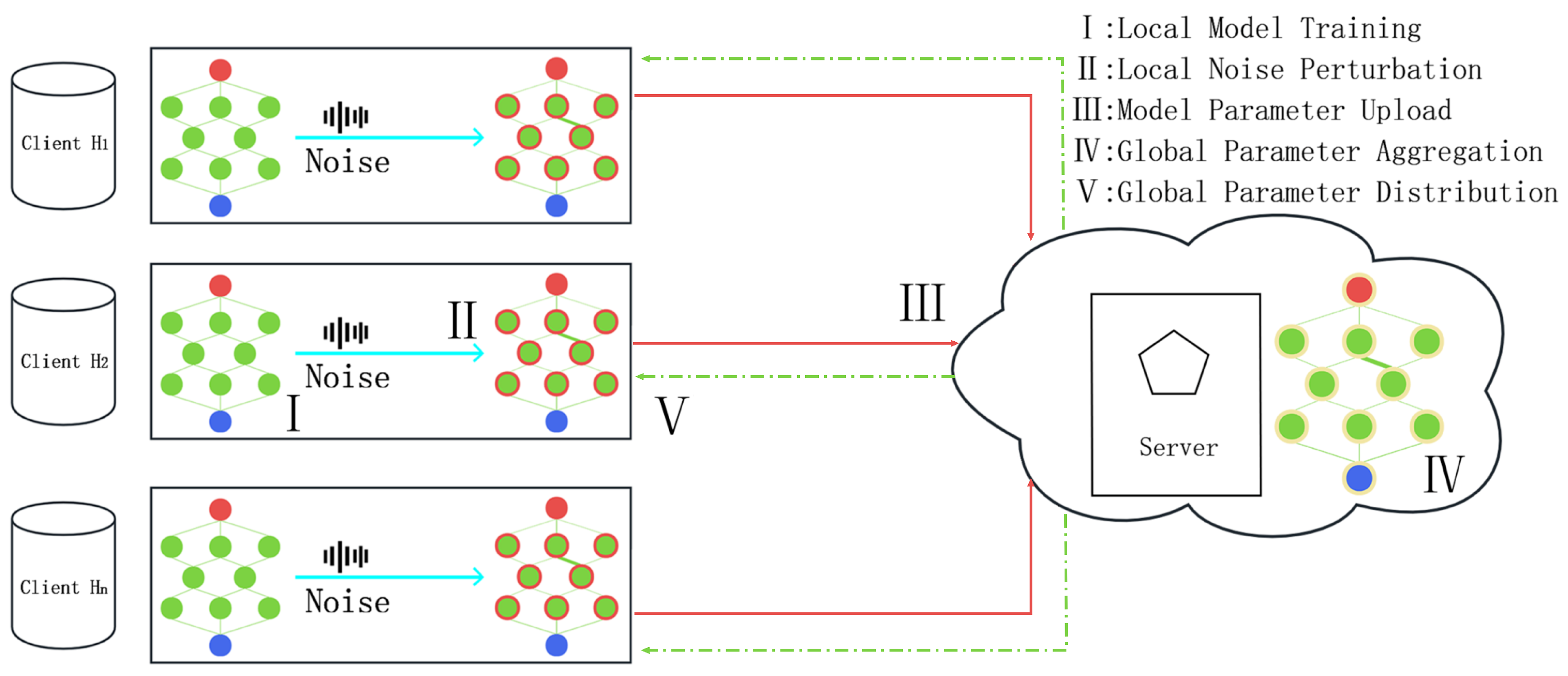

3.1. Workflow of the Framework

- Local Model Training: Clients perform multiple local gradient descent steps to reduce communication with the server. In each local iteration, the weight-based adaptive gradient clipping algorithm WDP (Algorithm 2) first clips the gradients, which enhances the model’s robustness. The adaptive gradient update algorithm AGU (Algorithm 3) then determines the result of model training for this iteration. Notably, this procedure does not need the training results of other clients; clients operate independently without affecting each other. Detailed steps are presented in Section 3.2.

- Parameter Upload: To protect client privacy, hierarchical noise perturbation must be added to the model parameters before uploading. Because the parameters have already been processed by the WDP and AGU algorithms, the noise impact caused by individual samples is reduced. This stability helps to smooth the descent of the loss function, accelerating convergence while minimizing the effect of noise on the model parameters. The noise addition is described for a single client. L represents the neural network layer level, which serves as the basis for all operations in this paper; M represents the client data, B is the training data for each batch, t is the current global round, d is the current training data, and e is the current batch’s local iteration. The quantities involved are the noise-perturbed model parameters, the unperturbed model parameters, the model gradient of the l-th layer in the t-th round after the adaptive momentum update, and the noise perturbation added before uploading, whose distribution depends on the sensitivity determined by the adaptive clipping threshold.

- Global Parameter Aggregation: After the sampled clients complete local training, the server performs a weighted aggregation of the noise-perturbed parameters uploaded by the clients to update the global model for the next round.

- Global Parameter Distribution: In the federated learning framework, the server employs a selective parameter broadcasting strategy. Specifically, the server does not broadcast the latest model parameters to all clients every time; instead, it randomly selects a portion of clients for parameter updates. This approach avoids redundant broadcasting and reduces unnecessary communication overhead. During this process, the server does not need direct access to the clients’ local data. After receiving the global model from the server, clients adaptively update their local models based on these parameters, maintaining the efficiency of model updates.
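The upload and aggregation steps above can be sketched as follows. This is a minimal illustration and not the authors' implementation: the exact noise and aggregation formulas are not reproduced in this text, so the noise scale (`noise_multiplier` times the per-layer sensitivity) and the data-size weighting are assumptions, and the function names `perturb_layers` and `aggregate` are hypothetical.

```python
import numpy as np

def perturb_layers(params, clip_thresholds, noise_multiplier, rng):
    """Add zero-mean Gaussian noise to each layer before upload.

    params: list of per-layer arrays (unperturbed parameters).
    clip_thresholds: per-layer sensitivity, taken here to be the adaptive
        clipping threshold of that layer.
    noise_multiplier: hypothetical knob; std = noise_multiplier * sensitivity.
    """
    noisy = []
    for layer, sensitivity in zip(params, clip_thresholds):
        std = noise_multiplier * sensitivity
        noisy.append(layer + rng.normal(0.0, std, size=layer.shape))
    return noisy

def aggregate(client_params, client_sizes):
    """Weighted average of the uploaded parameters, layer by layer.

    Weights are proportional to each client's data size (an assumption,
    following the usual federated averaging convention).
    """
    weights = np.asarray(client_sizes, dtype=float)
    weights /= weights.sum()
    num_layers = len(client_params[0])
    return [
        sum(w * params[l] for w, params in zip(weights, client_params))
        for l in range(num_layers)
    ]

# Two toy clients, each with a two-layer model.
rng = np.random.default_rng(0)
client_a = perturb_layers([np.ones((2, 2)), np.zeros(3)], [1.0, 0.5], 0.1, rng)
client_b = perturb_layers([np.zeros((2, 2)), np.ones(3)], [1.0, 0.5], 0.1, rng)
global_params = aggregate([client_a, client_b], client_sizes=[100, 300])
```

Tying the noise scale to the clipping threshold reflects the statement that the sensitivity is determined by the adaptive clipping threshold: a smaller threshold for a layer means less noise is needed for that layer.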

| Algorithm 1 Weighted Adaptive Differential Privacy Federated Learning Framework |
|---|
| Input: Initial model parameters, learning rate, client set O, randomly selected clients for training H, communication rounds between client and server T, number of neural network layers L, batch size B, client data M, adaptive clipping history norm sequence P, number of local iterations E, momentum update parameters. |
| Output: Model parameters. |
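The body of Algorithm 1 is not reproduced in this text, so the following is only a sketch of the outer loop implied by the workflow in Section 3.1: in each of T rounds, a random subset H of the client set O trains locally and the server aggregates the uploads. The names `run_rounds`, `toy_local_train`, and `toy_aggregate` are hypothetical, and the model is reduced to a single float so the control flow stays visible.

```python
import random

def run_rounds(global_model, clients, num_rounds, sample_size,
               local_train, aggregate, seed=0):
    """Outer federated loop: sample clients, train locally, aggregate."""
    rng = random.Random(seed)
    for t in range(num_rounds):
        # Selective broadcast: only a random subset receives the model.
        selected = rng.sample(clients, sample_size)
        # Each selected client trains independently and uploads its result.
        uploads = [local_train(client, global_model, t) for client in selected]
        # The server combines the uploads into the next global model.
        global_model = aggregate(uploads)
    return global_model

def toy_local_train(client, global_model, t):
    # Stand-in for clipping, adaptive update, and noise addition:
    # move halfway toward the client's own optimum.
    return global_model + 0.5 * (client["target"] - global_model)

def toy_aggregate(uploads):
    # Stand-in for the server's weighted aggregation (uniform weights here).
    return sum(uploads) / len(uploads)

clients = [{"target": 1.0} for _ in range(10)]
final_model = run_rounds(0.0, clients, num_rounds=3, sample_size=4,
                         local_train=toy_local_train, aggregate=toy_aggregate)
```

In a real run, `local_train` would contain the E local iterations with WDP, AGU, and noise perturbation, and the model would be a list of per-layer tensors rather than a float.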

3.2. Weight-Based Adaptive Gradient Clipping and Update

| Algorithm 2 Weight-Based Adaptive Gradient Clipping (WDP) |
|---|
| Input: Unupdated model parameters after training, number of neural network layers L, communication rounds between client and server T, batch size B, client data M, adaptive clipping history norm sequence P. |
| Output: Processed model parameters. |
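The steps of Algorithm 2 are not reproduced in this text. The sketch below shows one plausible shape of weight-based adaptive clipping under explicitly assumed rules: each layer's weight coefficient is its share of the total parameter norm (scaled so the coefficients average to 1), and the clipping threshold is that coefficient times the mean of the layer's recent gradient norms from the history sequence P. Both rules, the `window` parameter, and the function name are illustrative assumptions, not the authors' formulas.

```python
import numpy as np

def weighted_adaptive_clip(grads, weights, history, window=5):
    """Clip each layer's gradient to a layer-specific adaptive threshold.

    grads: list of per-layer gradient arrays.
    weights: list of per-layer parameter arrays (used for the coefficients).
    history: list of lists; history[l] holds past gradient norms of layer l.
    Returns the clipped gradients and the thresholds that were used.
    """
    weight_norms = np.array([np.linalg.norm(w) for w in weights])
    # Assumed weight coefficient: layer's share of total norm, mean of 1.
    coeffs = len(weights) * weight_norms / (weight_norms.sum() + 1e-12)
    clipped, thresholds = [], []
    for l, g in enumerate(grads):
        norm = float(np.linalg.norm(g))
        recent = history[l][-window:]
        # Fall back to the current norm when no history exists yet.
        base = float(np.mean(recent)) if recent else norm
        threshold = float(coeffs[l]) * base
        # Standard norm clipping: shrink only if the norm exceeds the threshold.
        scale = min(1.0, threshold / (norm + 1e-12))
        clipped.append(g * scale)
        thresholds.append(threshold)
        history[l].append(norm)
    return clipped, thresholds

# Toy example: two layers with equal weight norms but different histories.
layer_weights = [np.ones(4), np.ones(4)]
layer_grads = [np.array([3.0, 4.0]), np.array([3.0, 4.0])]
norm_history = [[1.0], [10.0]]
clipped_grads, used_thresholds = weighted_adaptive_clip(
    layer_grads, layer_weights, norm_history)
```

The thresholds returned here are the natural candidates for the per-layer sensitivity used when noise is added before upload.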

| Algorithm 3 Adaptive Gradient Update (AGU) |
|---|
| Input: Adaptively clipped model parameters, learning rate, communication rounds between client and server T, number of neural network layers L, momentum update parameters. |
| Output: Model parameters. |
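The update rule of Algorithm 3 is not reproduced in this text, and its three momentum update parameters are unnamed. As a stand-in, the sketch below uses the standard Adam rule, whose three hyperparameters (`beta1`, `beta2`, `eps`) are an assumption and may not match the authors' parameters. It is meant only to show how a per-layer, moment-based adaptive update consumes clipped gradients.

```python
import numpy as np

class AdamStyleUpdater:
    """Per-layer Adam update, used here as a stand-in for AGU."""

    def __init__(self, lr=0.01, beta1=0.9, beta2=0.999, eps=1e-8):
        self.lr, self.beta1, self.beta2, self.eps = lr, beta1, beta2, eps
        self.m = None   # first-moment estimates, one array per layer
        self.v = None   # second-moment estimates, one array per layer
        self.step = 0

    def update(self, params, grads):
        if self.m is None:
            self.m = [np.zeros_like(g) for g in grads]
            self.v = [np.zeros_like(g) for g in grads]
        self.step += 1
        new_params = []
        for l, (p, g) in enumerate(zip(params, grads)):
            # Exponential moving averages of the gradient and its square.
            self.m[l] = self.beta1 * self.m[l] + (1 - self.beta1) * g
            self.v[l] = self.beta2 * self.v[l] + (1 - self.beta2) * g * g
            # Bias correction for the zero-initialized averages.
            m_hat = self.m[l] / (1 - self.beta1 ** self.step)
            v_hat = self.v[l] / (1 - self.beta2 ** self.step)
            new_params.append(p - self.lr * m_hat / (np.sqrt(v_hat) + self.eps))
        return new_params

# One update on a toy single-layer model with an already clipped gradient.
updater = AdamStyleUpdater(lr=0.1)
updated = updater.update([np.array([1.0])], [np.array([2.0])])
```

Because the step is normalized by the running second moment, its magnitude stays close to the learning rate regardless of the gradient scale, which is one reason moment-based updates pair naturally with clipping.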

3.3. Privacy Analysis

4. Experiments

4.1. Experimental Setup

- MNIST Dataset: A single-channel handwritten digit recognition dataset containing images of the digits 0 to 9, each a 28×28 pixel grayscale image. The dataset includes 60,000 images for training and 10,000 images for testing.

- Fashion-MNIST Dataset: A single-channel fashion classification dataset containing 70,000 grayscale images of 10 types of clothing, each 28×28 pixels. The split into training and test sets is the same as for MNIST (60,000 and 10,000 images), but the classification task is more challenging.

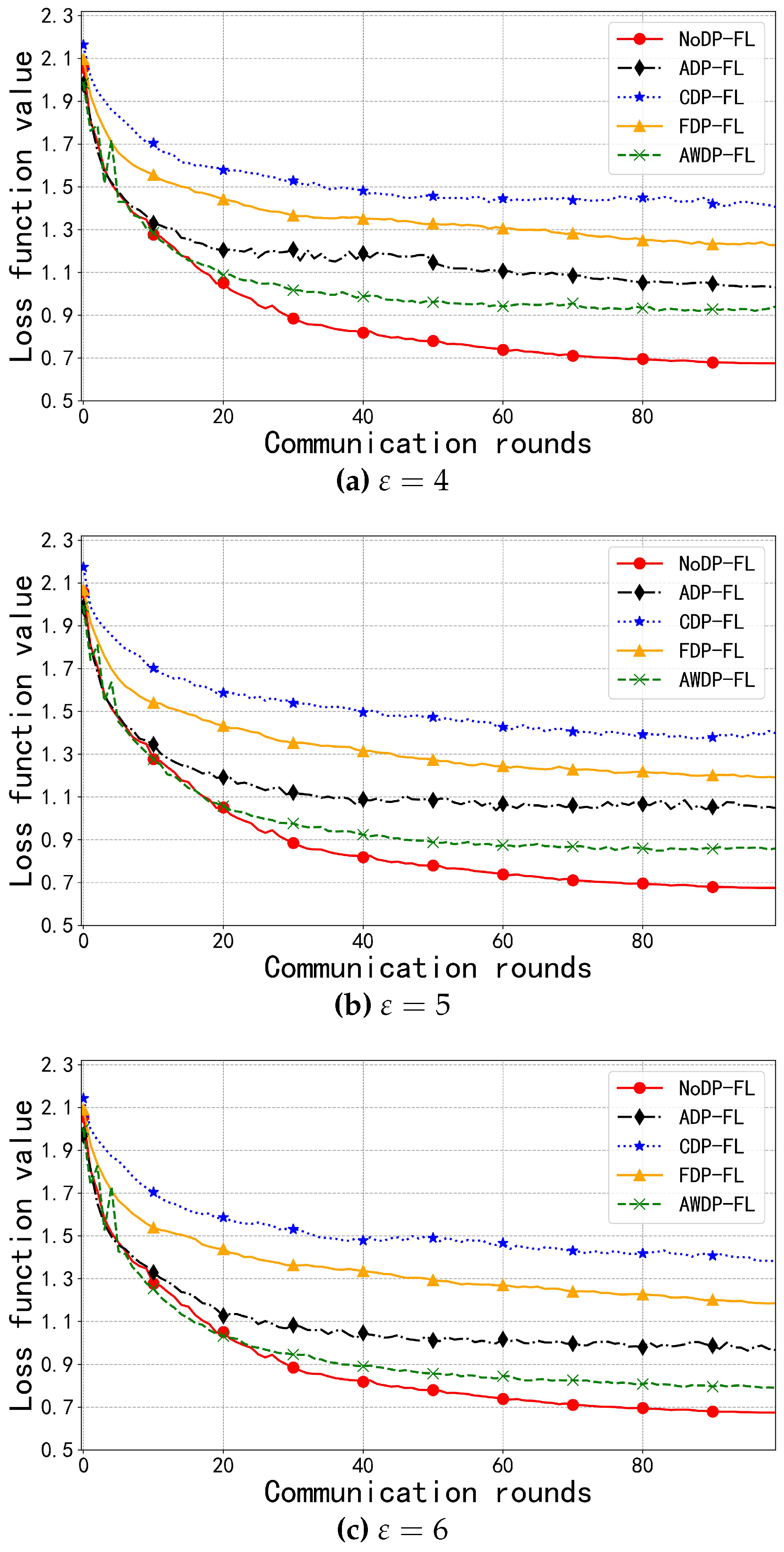

- CIFAR-10 Dataset: A dataset of three-channel color images in 10 classes, such as ships, airplanes, and cars, with each image being 32×32 pixels. The dataset includes 50,000 images for training and 10,000 images for testing.

4.2. Experimental Results and Analysis

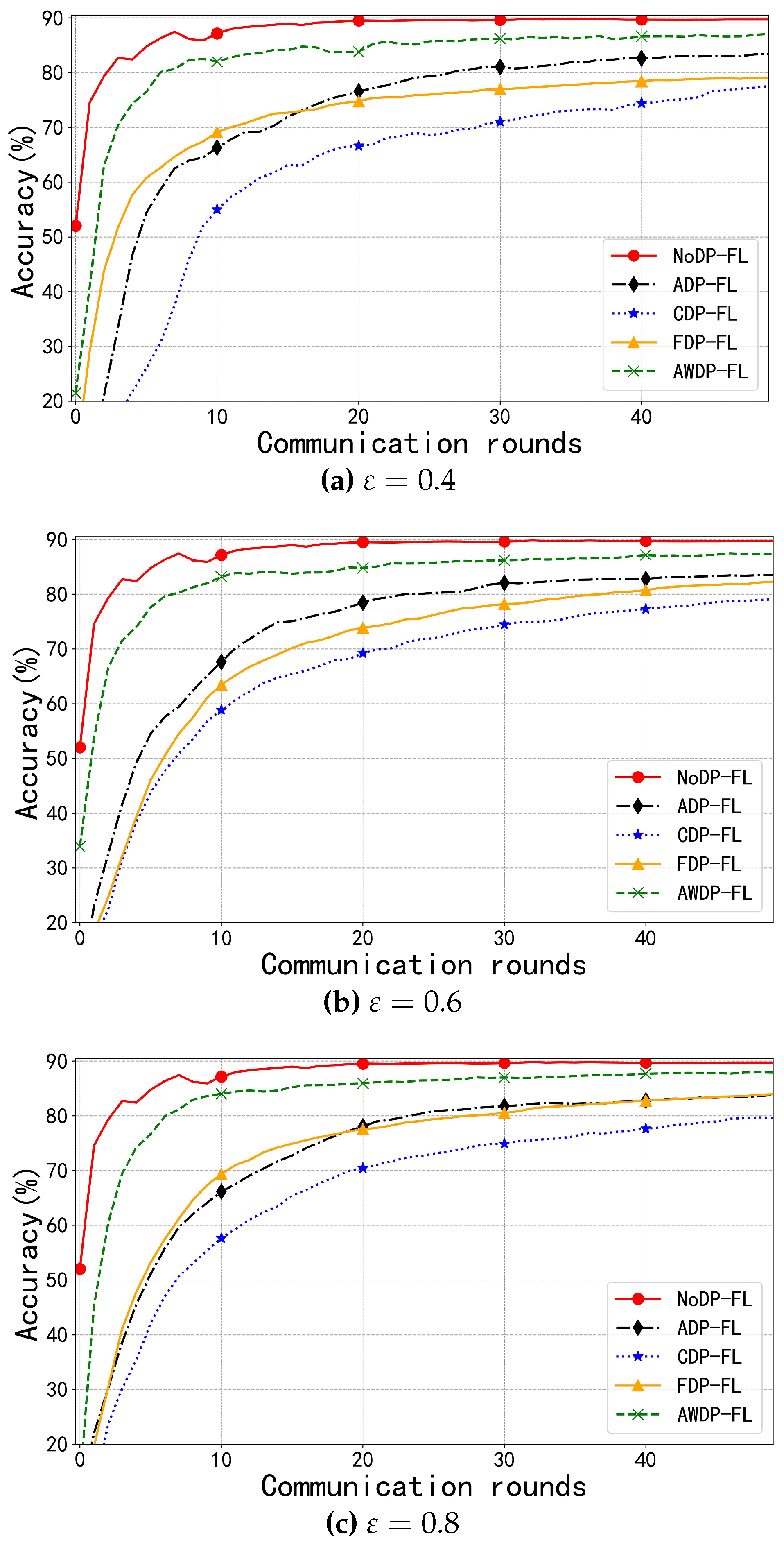

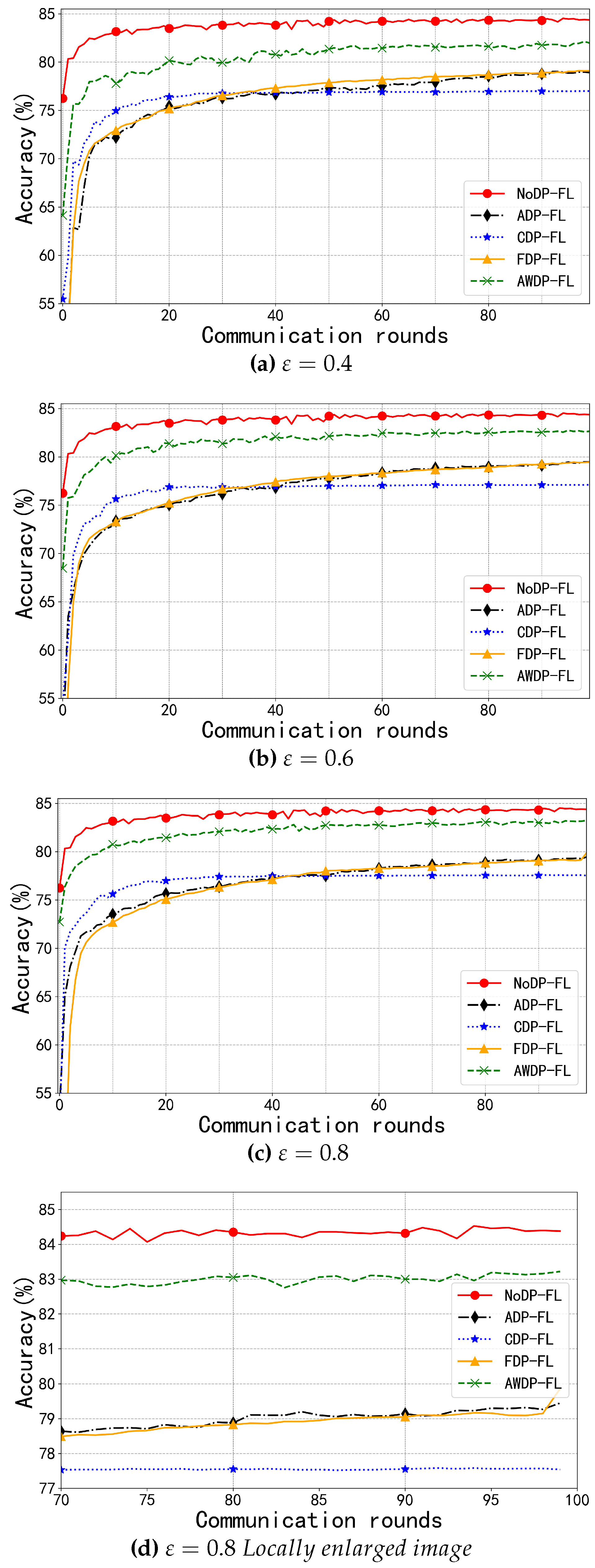

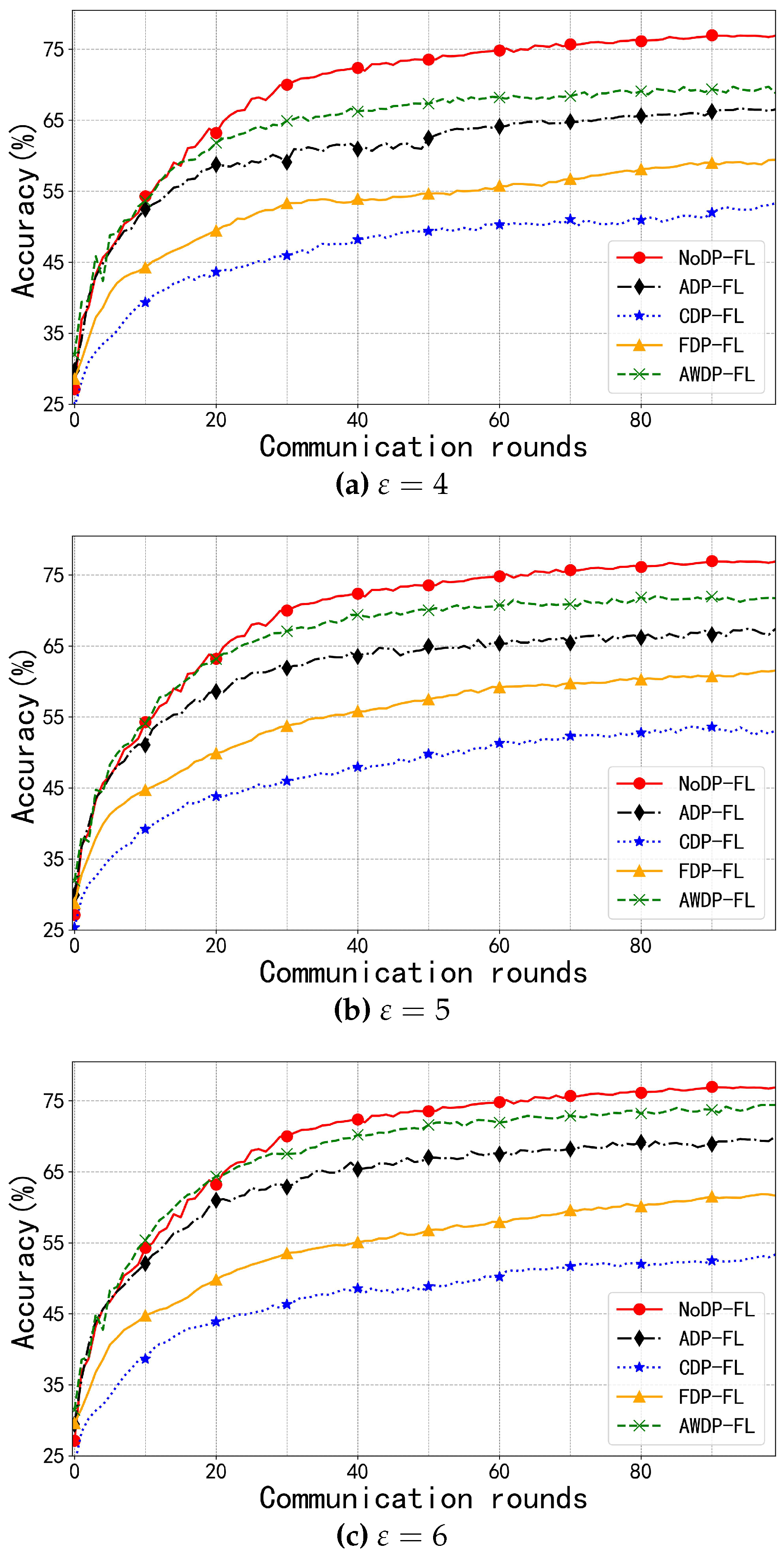

4.2.1. Model Accuracy Analysis

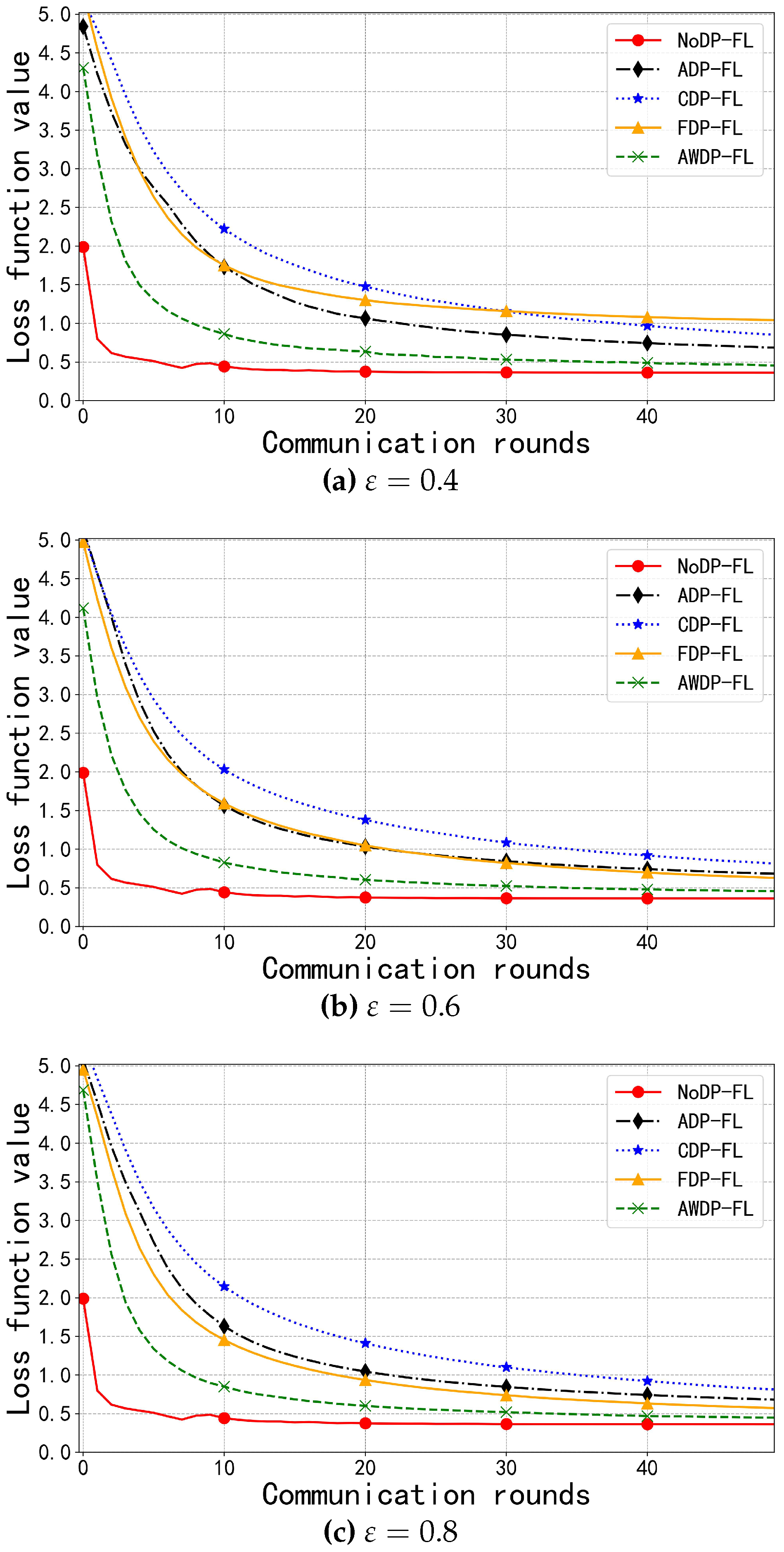

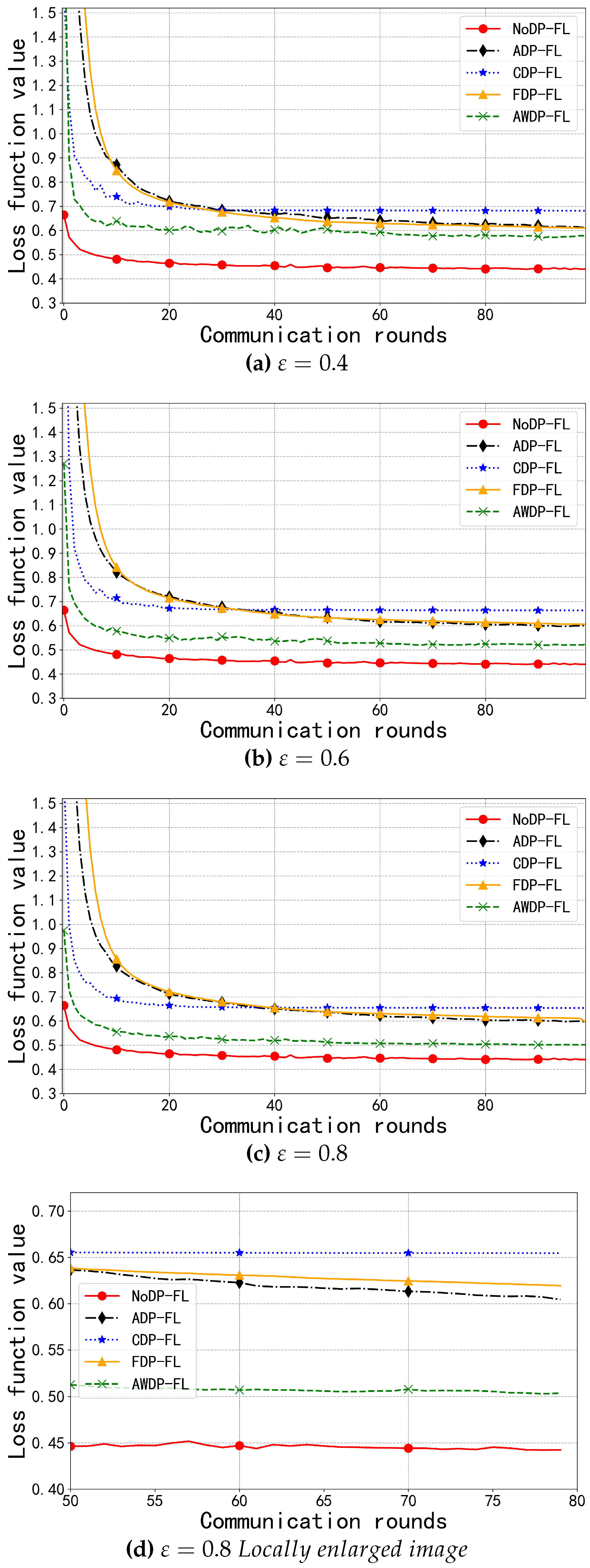

4.2.2. Model Loss Analysis

5. Conclusion

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- McMahan, B.; Moore, E.; Ramage, D.; et al. Communication-efficient learning of deep networks from decentralized data. In Artificial Intelligence and Statistics; PMLR: 2017; pp. 1273–1282. https://proceedings.mlr.press/v54/mcmahan17a.html.

- Ma, J.; Naas, S. A.; Sigg, S.; et al. Privacy-preserving federated learning based on multi-key homomorphic encryption. International Journal of Intelligent Systems 2022, 37, 5880–5901.

- Park, J.; Lim, H. Privacy-preserving federated learning using homomorphic encryption. Applied Sciences 2022, 12, 734.

- Warnat-Herresthal, S.; Schultze, H.; Shastry, K. L.; et al. Swarm learning for decentralized and confidential clinical machine learning. Nature 2021, 594, 265–270.

- Hosseini, S. M.; Sikaroudi, M.; Babaei, M.; et al. Cluster based secure multi-party computation in federated learning for histopathology images. In Proceedings of the International Workshop on Distributed, Collaborative, and Federated Learning; Springer Nature Switzerland: Cham, Switzerland, 2022; pp. 110–118.

- Kanagavelu, R.; Wei, Q.; Li, Z.; et al. CE-Fed: Communication efficient multi-party computation enabled federated learning. Array 2022, 15, 100207.

- Zhu, L.; Liu, Z.; Han, S. Deep leakage from gradients. Advances in Neural Information Processing Systems 2019, 32.

- Park, J.; Lim, H. Privacy-preserving federated learning using homomorphic encryption. Applied Sciences 2022, 12, 734.

- Sun, L.; Lyu, L. Federated model distillation with noise-free differential privacy. arXiv preprint arXiv:2009.05537 2020.

- Chamikara, M. A. P.; Liu, D.; Camtepe, S.; et al. Local differential privacy for federated learning. arXiv preprint arXiv:2202.06053 2022.

- Truex, S.; Liu, L.; Chow, K. H.; et al. LDP-Fed: Federated learning with local differential privacy. In Proceedings of the Third ACM International Workshop on Edge Systems, Analytics and Networking; 2020; pp. 61–66.

- Sun, L.; Qian, J.; Chen, X. LDP-FL: Practical private aggregation in federated learning with local differential privacy. arXiv preprint arXiv:2007.15789 2020.

- Zhao, Y.; Zhao, J.; Yang, M.; et al. Local differential privacy-based federated learning for internet of things. IEEE Internet of Things Journal 2020, 8, 8836–8853.

- Liu, W.; Cheng, J.; Wang, X.; et al. Hybrid differential privacy based federated learning for Internet of Things. Journal of Systems Architecture 2022, 124, 102418.

- Shen, X.; Liu, Y.; Zhang, Z. Performance-enhanced federated learning with differential privacy for internet of things. IEEE Internet of Things Journal 2022, 9, 24079–24094.

- Geyer, R. C.; Klein, T.; Nabi, M. Differentially private federated learning: A client level perspective. arXiv preprint arXiv:1712.07557 2017.

- Wu, X.; Zhang, Y.; Shi, M.; et al. An adaptive federated learning scheme with differential privacy preserving. Future Generation Computer Systems 2022, 127, 362–372.

- Wang, F.; Xie, M.; Li, Q.; Wang, C. An Adaptive Clipping Differential Privacy Federated Learning Framework. Journal of Xidian University 2023, (04), 111–120.

- Zhao, J.; Yang, M.; Zhang, R.; et al. Privacy-enhanced federated learning: A restrictively self-sampled and data-perturbed local differential privacy method. Electronics 2022, 11, 4007.

- Shen, X.; Liu, Y.; Zhang, Z. Performance-enhanced federated learning with differential privacy for internet of things. IEEE Internet of Things Journal 2022, 9, 24079–24094.

- Lian, Z.; Wang, W.; Huang, H.; et al. Layer-based communication-efficient federated learning with privacy preservation. IEICE Transactions on Information and Systems 2022, 105, 256–263.

- Baek, C.; Kim, S.; Nam, D.; et al. Enhancing differential privacy for federated learning at scale. IEEE Access 2021, 9, 148090–148103.

- Yang, Q.; Liu, Y.; Chen, T.; et al. Federated machine learning: Concept and applications. ACM Transactions on Intelligent Systems and Technology (TIST) 2019, 10, 1–19.

- Dwork, C.; Roth, A. The algorithmic foundations of differential privacy. Foundations and Trends® in Theoretical Computer Science 2014, 9, 211–407.

- Dwork, C.; Rothblum, G. N.; Vadhan, S. Boosting and differential privacy. In 2010 IEEE 51st Annual Symposium on Foundations of Computer Science; IEEE: 2010; pp. 51–60.

| Algorithm / Privacy Budget | | | |
|---|---|---|---|
| NoDP-FL [1] | 89.84 | | |
| CDP-FL [16] | 78.01 | 79.32 | 79.85 |
| ADP-FL [18] | 83.64 | 83.80 | 84.01 |
| FDP-FL [17] | 79.25 | 82.48 | 84.17 |
| AWDP-FL | 87.59 | 87.96 | 88.10 |

| Algorithm / Privacy Budget | | | |
|---|---|---|---|
| NoDP-FL [1] | 84.53 | | |
| CDP-FL [16] | 77.01 | 77.12 | 77.58 |
| ADP-FL [18] | 79.02 | 79.40 | 79.45 |
| FDP-FL [17] | 79.12 | 79.46 | 79.52 |
| AWDP-FL | 82.13 | 82.76 | 83.22 |

| Algorithm / Privacy Budget | | | |
|---|---|---|---|
| NoDP-FL [1] | 76.99 | | |
| CDP-FL [16] | 53.05 | 53.37 | 53.65 |
| ADP-FL [18] | 66.72 | 67.45 | 70.00 |
| FDP-FL [17] | 59.44 | 61.56 | 61.87 |
| AWDP-FL | 70.00 | 72.00 | 74.42 |

| Algorithm / Privacy Budget | | | |
|---|---|---|---|
| NoDP-FL [1] | 0.3601 | | |
| CDP-FL [16] | 0.8341 | 0.8013 | 0.7994 |
| ADP-FL [18] | 0.6734 | 0.6709 | 0.6686 |
| FDP-FL [17] | 1.0365 | 0.6180 | 0.5644 |
| AWDP-FL | 0.4384 | 0.4389 | 0.4323 |
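The first three tables can be summarized with simple arithmetic. The sketch below assumes their values are test accuracies in percent (the table captions and the privacy budget values in the headers are not available in this text, so the tables are referred to only by position). For each privacy budget column it computes the gap between AWDP-FL and the non-private baseline NoDP-FL, and the margin of AWDP-FL over the best of the other private methods.

```python
# Values copied from the three tables; each list holds the three
# privacy budget columns in the order they appear.
tables = {
    "table 1": {"NoDP-FL": 89.84,
                "CDP-FL": [78.01, 79.32, 79.85],
                "ADP-FL": [83.64, 83.80, 84.01],
                "FDP-FL": [79.25, 82.48, 84.17],
                "AWDP-FL": [87.59, 87.96, 88.10]},
    "table 2": {"NoDP-FL": 84.53,
                "CDP-FL": [77.01, 77.12, 77.58],
                "ADP-FL": [79.02, 79.40, 79.45],
                "FDP-FL": [79.12, 79.46, 79.52],
                "AWDP-FL": [82.13, 82.76, 83.22]},
    "table 3": {"NoDP-FL": 76.99,
                "CDP-FL": [53.05, 53.37, 53.65],
                "ADP-FL": [66.72, 67.45, 70.00],
                "FDP-FL": [59.44, 61.56, 61.87],
                "AWDP-FL": [70.00, 72.00, 74.42]},
}

def summarize(table):
    """Return (gap to NoDP-FL, margin over best private baseline) per column."""
    baselines = [table[name] for name in ("CDP-FL", "ADP-FL", "FDP-FL")]
    rows = []
    for i, acc in enumerate(table["AWDP-FL"]):
        best_baseline = max(b[i] for b in baselines)
        gap_to_nodp = round(table["NoDP-FL"] - acc, 2)
        margin = round(acc - best_baseline, 2)
        rows.append((gap_to_nodp, margin))
    return rows

summary = {name: summarize(table) for name, table in tables.items()}
for name, rows in summary.items():
    print(name, rows)
```

Under these assumptions, AWDP-FL is ahead of the best competing private method in every column of all three tables, and its gap to the non-private baseline is largest in the third table.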

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).