Submitted: 14 August 2024

Posted: 15 August 2024


Abstract

Keywords:

1. Introduction

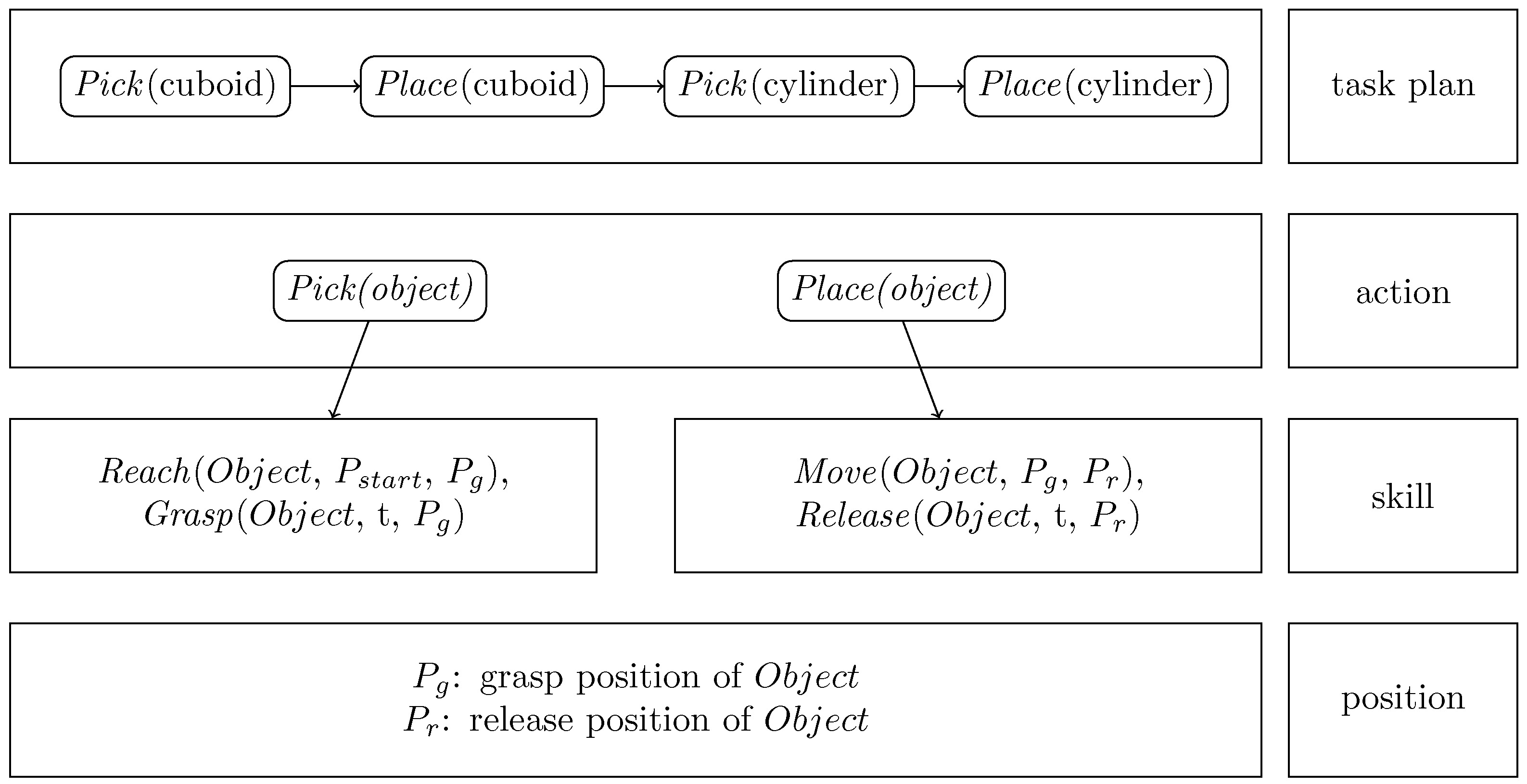

- Skill Level: This involves learning specific movements or actions that a robot must perform. Skills are the fundamental building blocks, such as grasping an object.

- Task Level: At this level, the robot learns to combine multiple skills to perform a more complex activity.

- Goal Level: This highest level involves understanding the overall objective of tasks.

- How can existing frameworks effectively detect human hand movements from RGB images?

- How can the object be detected without manually collecting and annotating data?

- How can the task-level trajectories be segmented into skills?

- How can the segmented skills be represented to be adaptable to new situations?

2. Materials and Methods

2.1. Task Model Representation

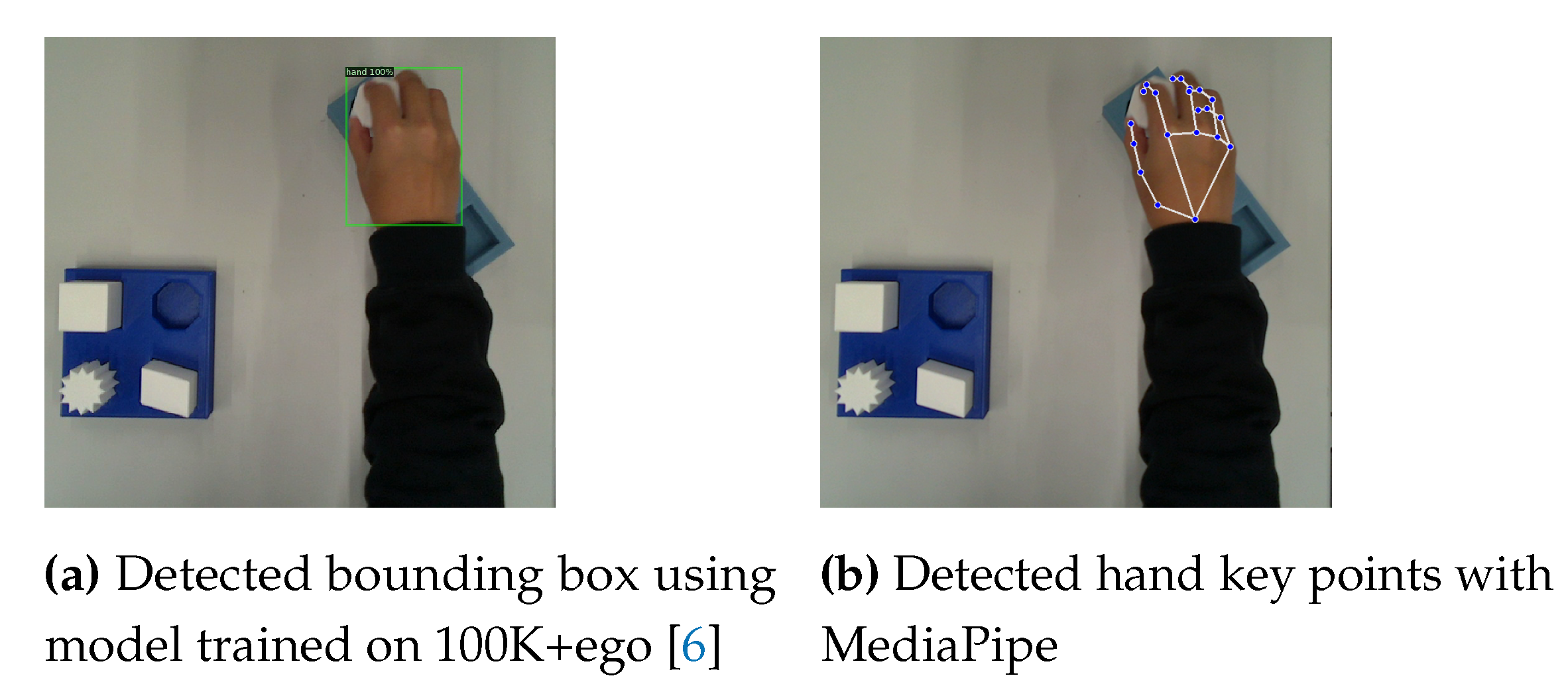

2.2. Hand Detection

2.3. Object Detection

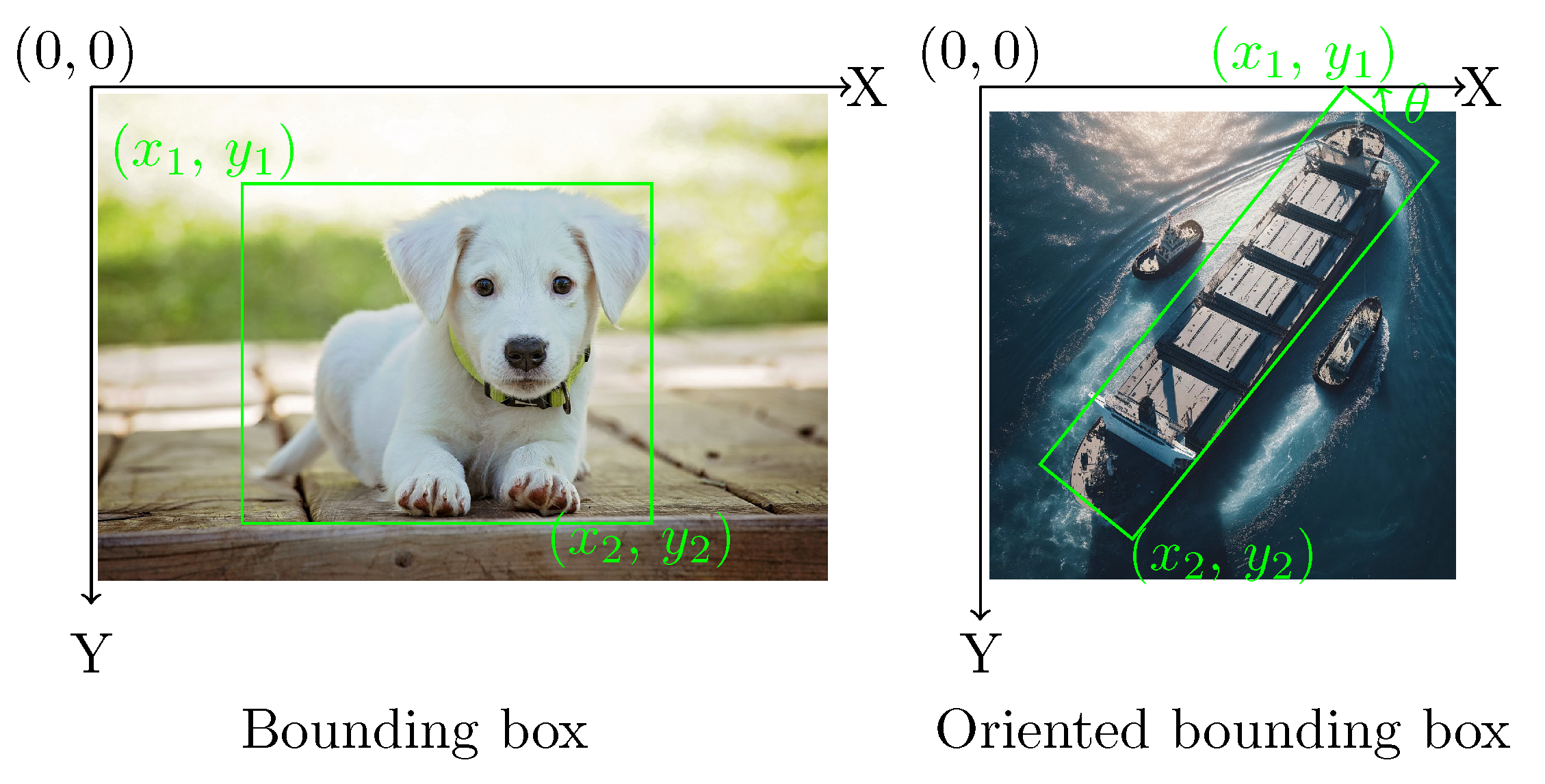

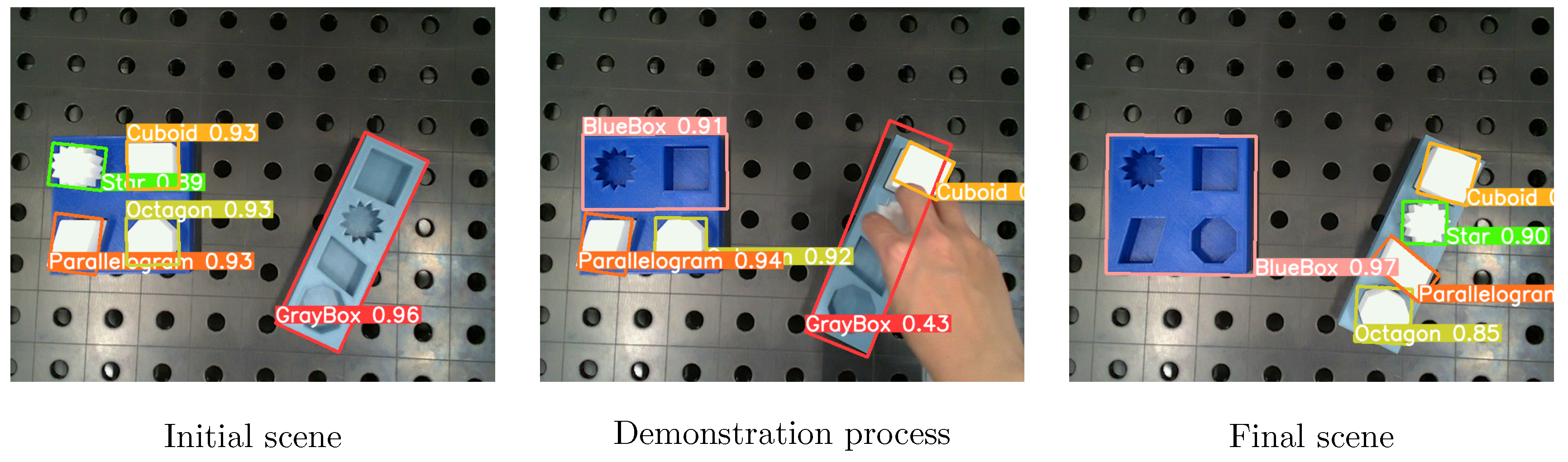

2.3.1. Data Generation

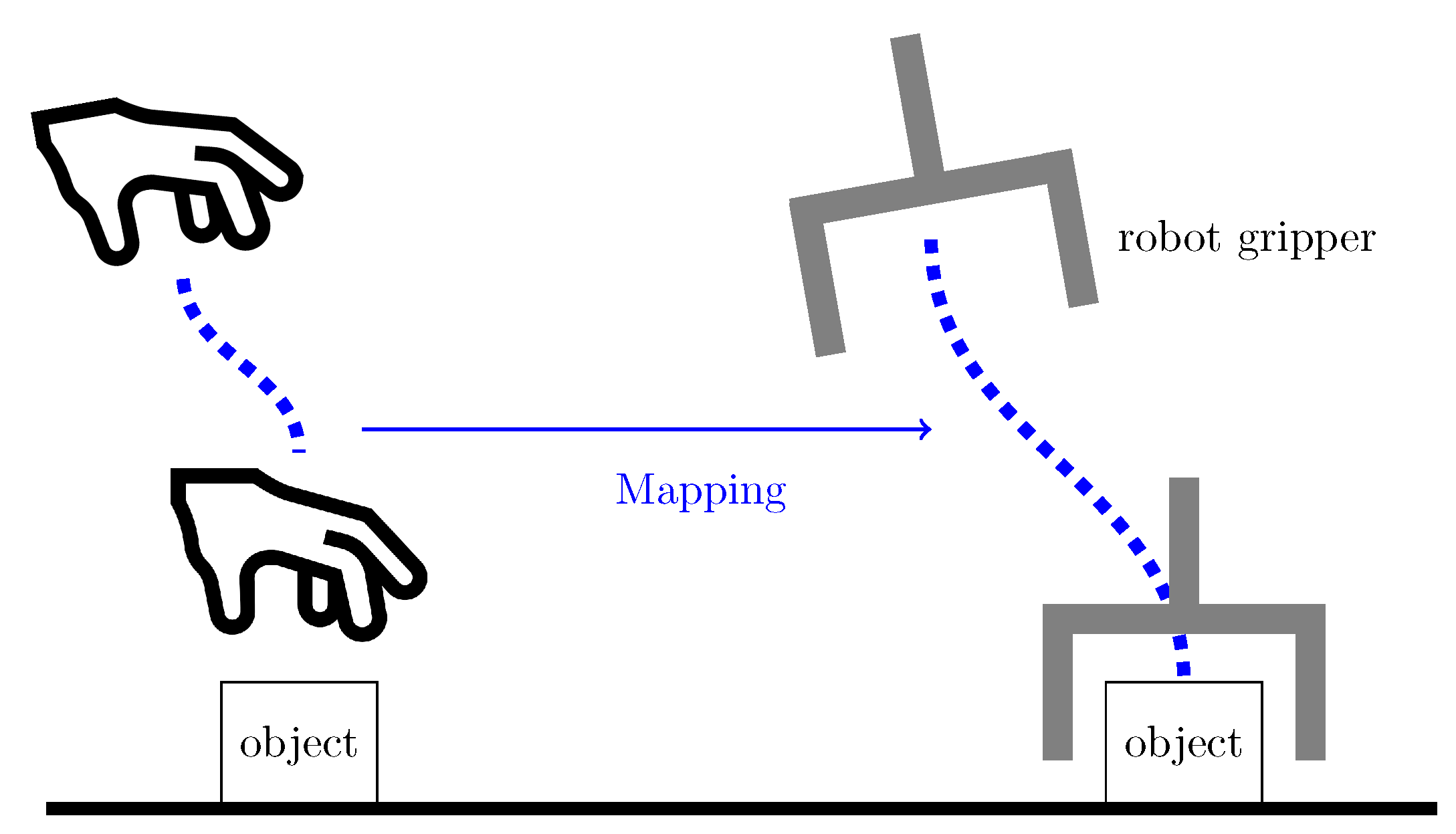

2.4. Mapping Motion from a Human Hand to a Robot End-Effector

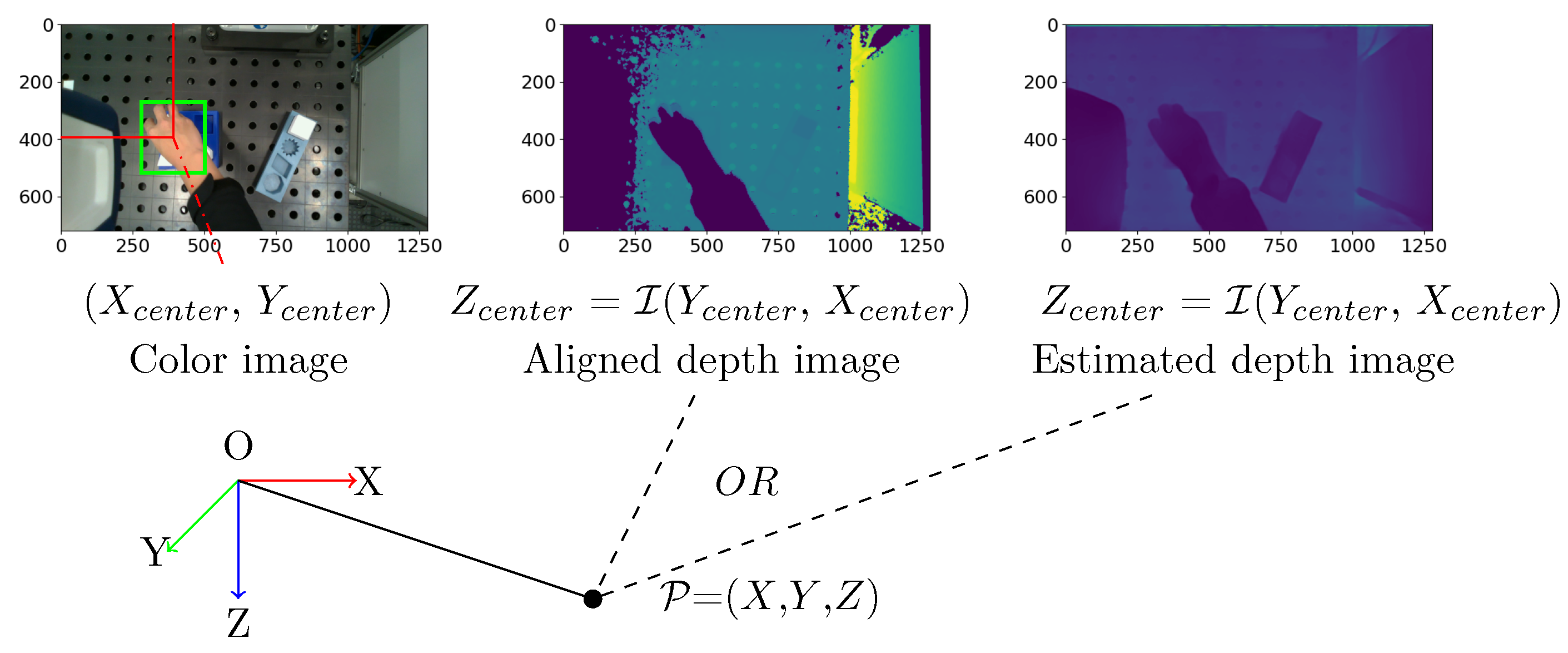

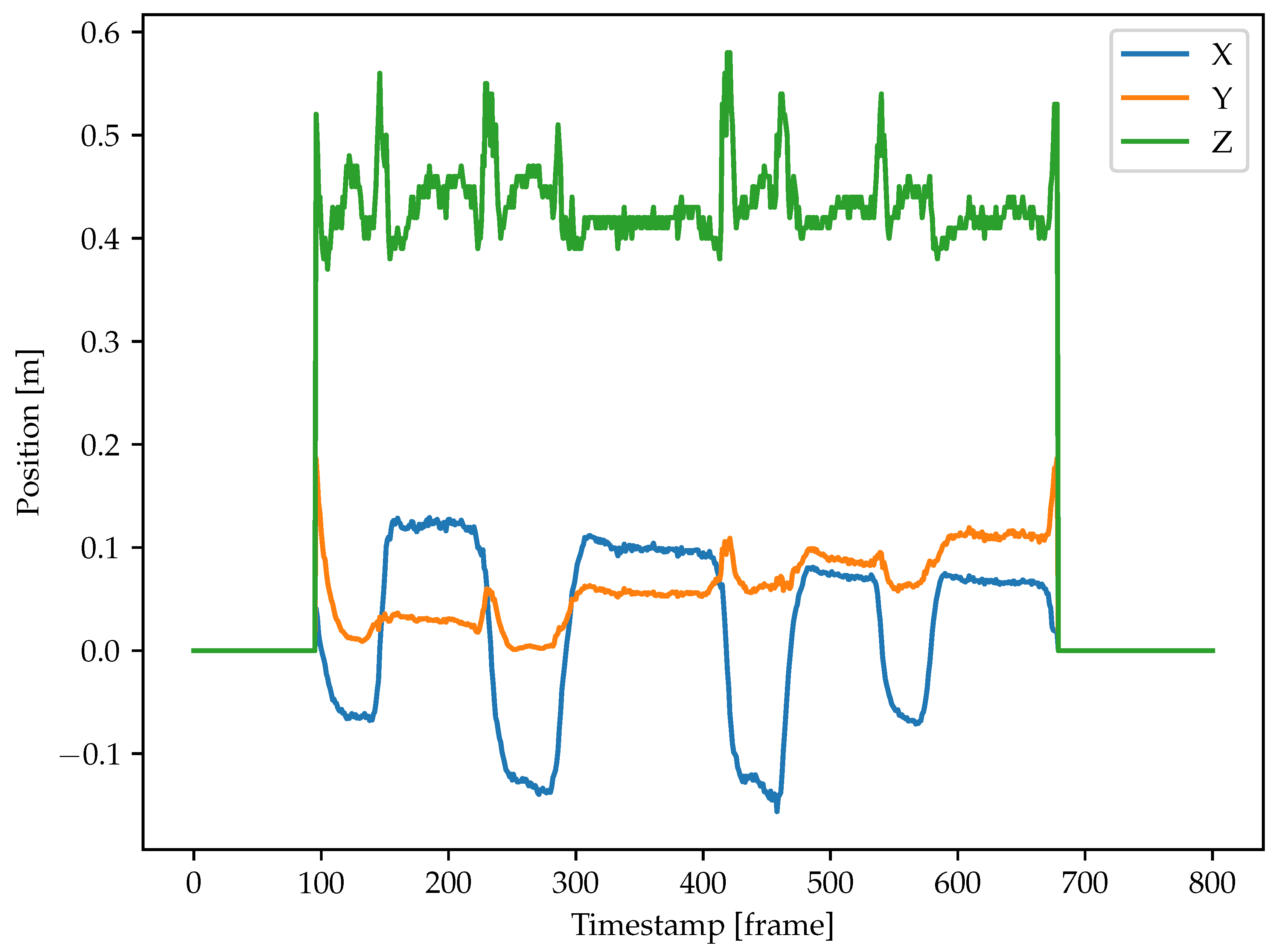

2.4.1. Trajectory Generation for the Human Hand and the Objects

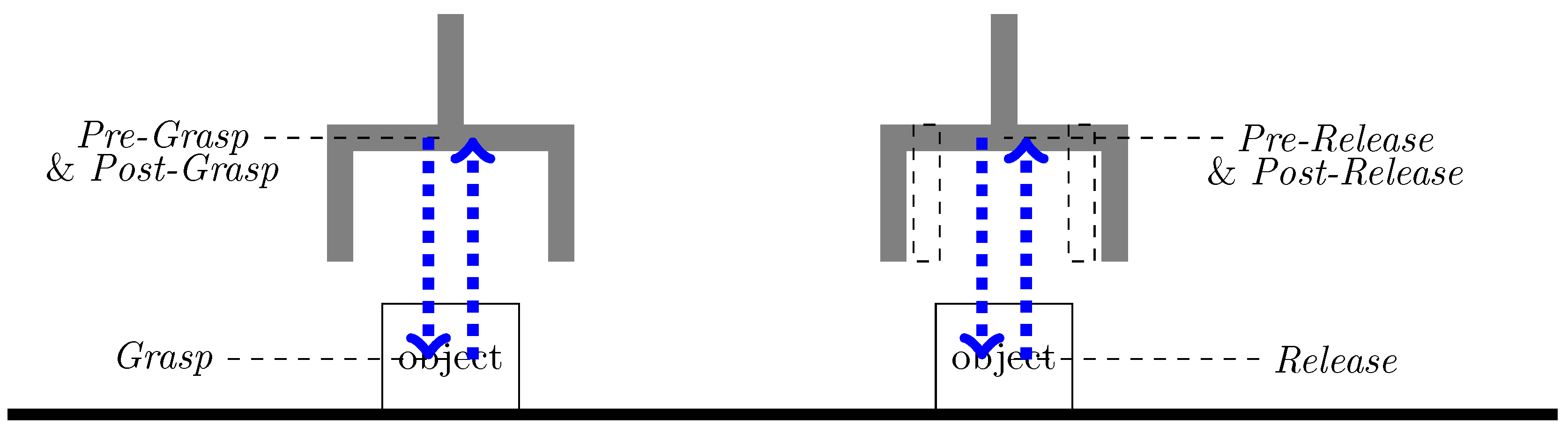

2.4.2. Motion Representation for a Robot’s End-Effector

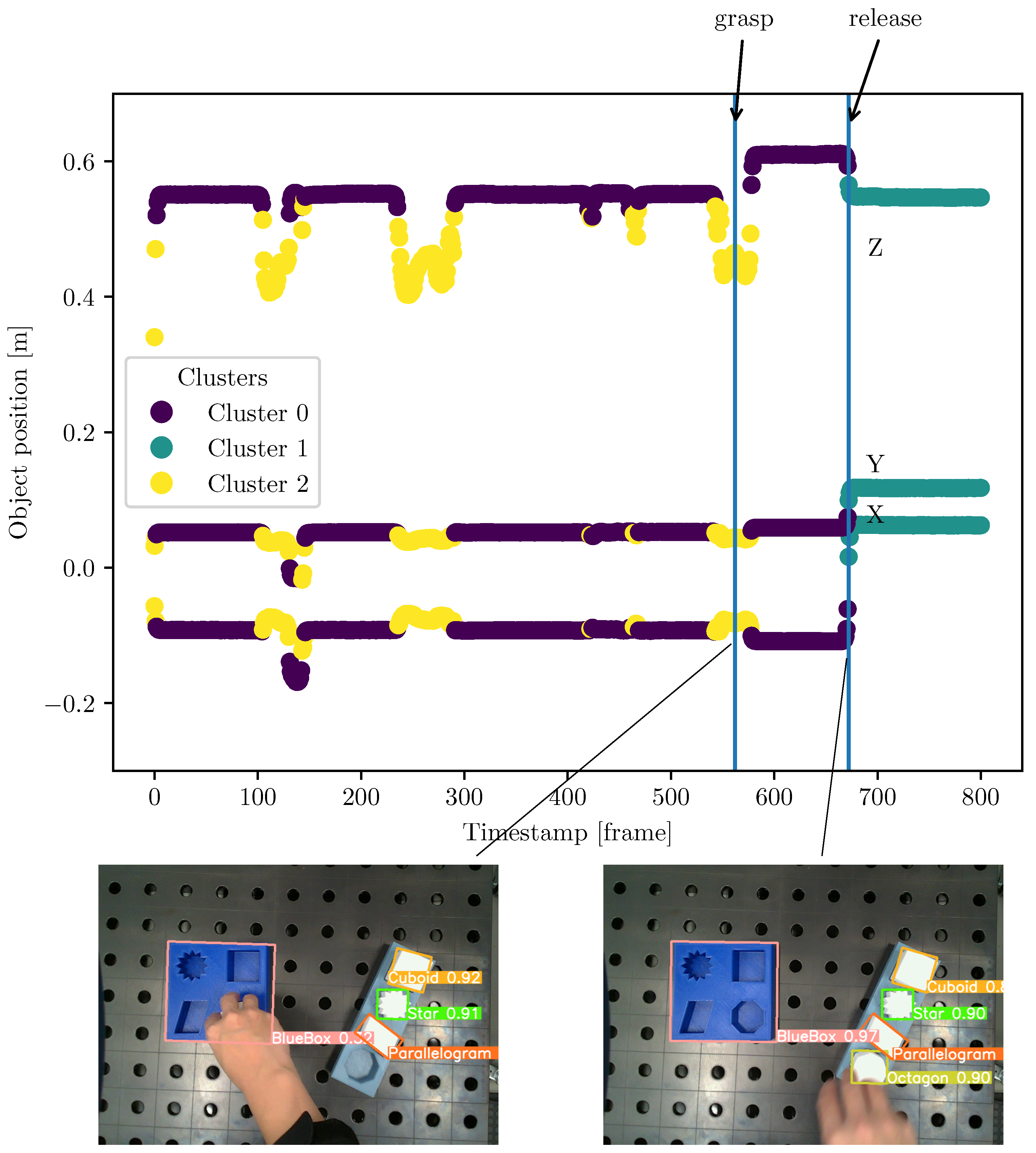

2.4.3. Trajectory Segmentation

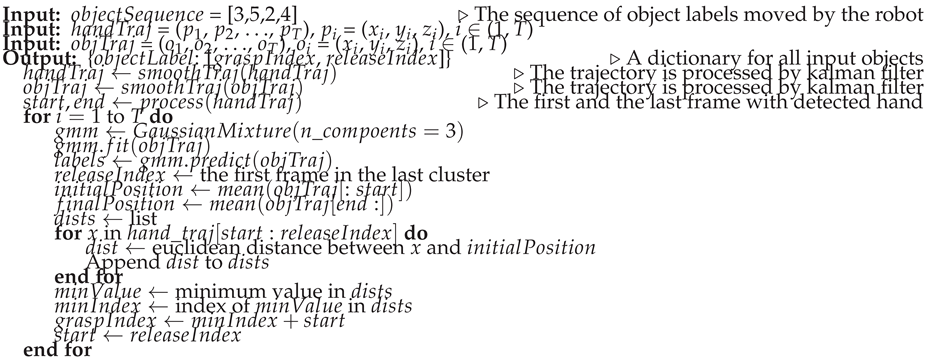

Algorithm 1: Segmentation of hand trajectories

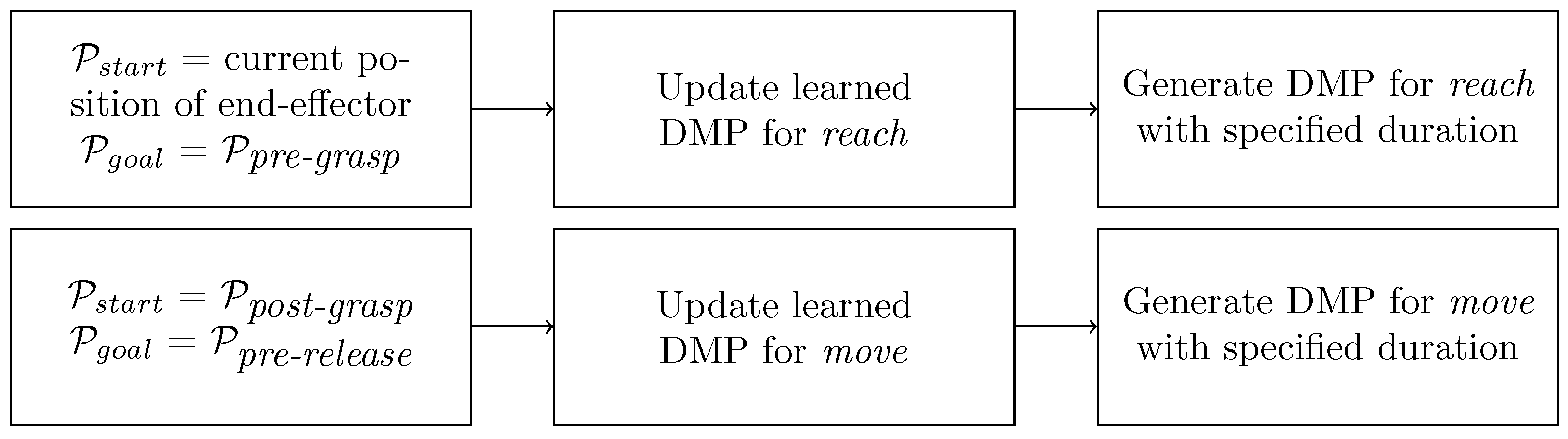

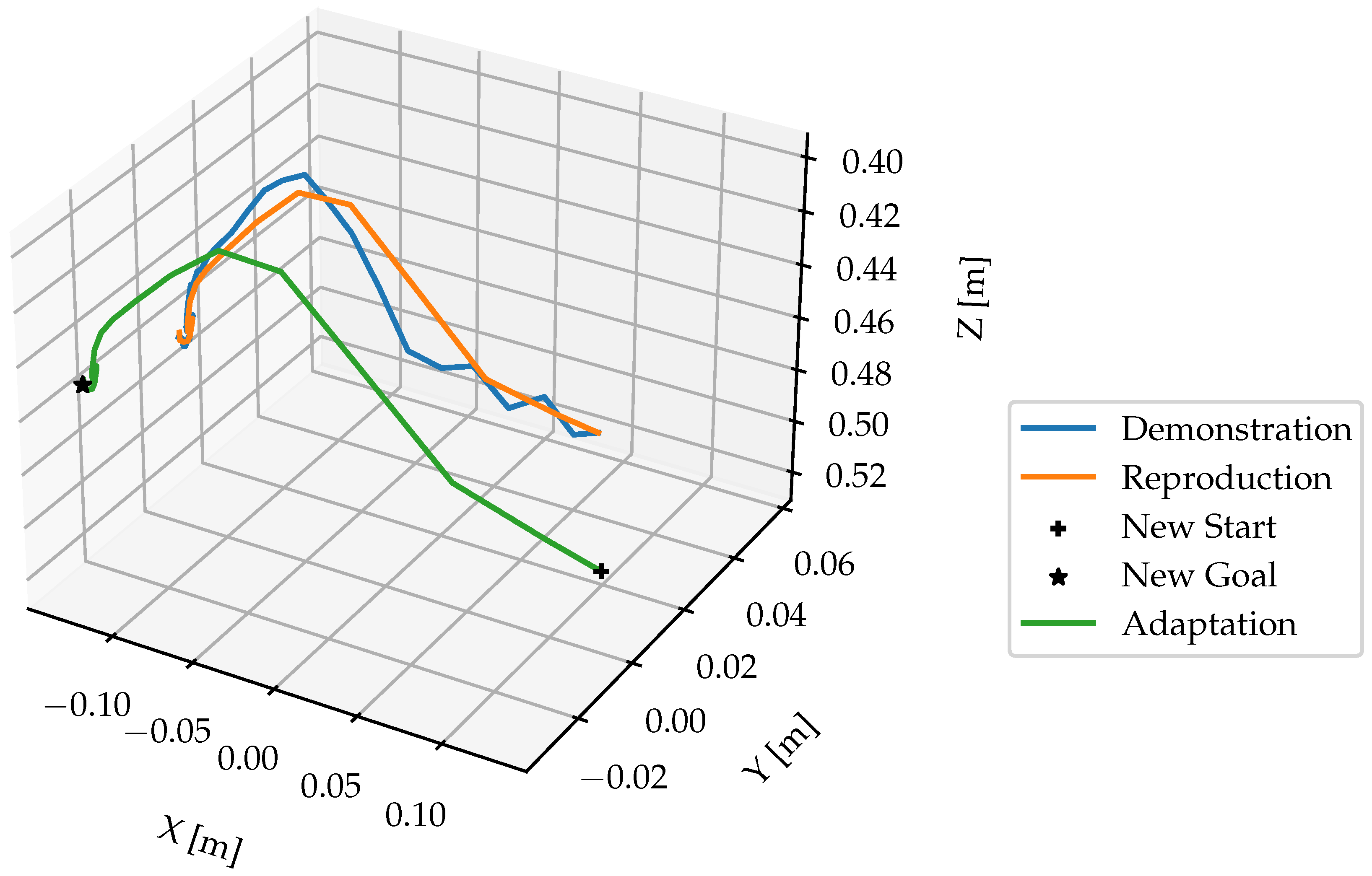

2.4.4. Trajectory Learning & Generation

- they have an internal time variable (phase), which is defined by a so-called canonical system,

- they can be adapted by tuning the weights of a forcing term and

- a transformation system generates goal-directed accelerations.
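The three properties listed above can be illustrated with a minimal one-dimensional dynamic movement primitive (DMP). This is a sketch under stated assumptions, not the implementation used in this work: it follows the commonly used formulation of Ijspeert et al., and the class name `DMP1D`, the gains `alpha_z` and `alpha_x`, the number of basis functions, the explicit Euler integration, and the synthetic minimum-jerk demonstration are all choices made for illustration.

```python
import numpy as np


class DMP1D:
    """Minimal one-dimensional discrete DMP (illustrative sketch only)."""

    def __init__(self, n_basis=20, alpha_z=25.0, alpha_x=4.0):
        # All gains and the basis count are assumed values, not from the paper.
        self.alpha_z = alpha_z
        self.beta_z = alpha_z / 4.0  # critically damped transformation system
        self.alpha_x = alpha_x
        self.tau = 1.0
        # Centres are evenly spaced in time, hence exponentially in phase x.
        self.centers = np.exp(-alpha_x * np.linspace(0.0, 1.0, n_basis))
        spacing = np.abs(np.diff(self.centers))
        self.h = 1.0 / np.append(spacing, spacing[-1]) ** 2
        self.w = np.zeros(n_basis)

    def _psi(self, x):
        # Gaussian basis activations; x may be a scalar or an array of phases.
        x = np.atleast_1d(x)[:, None]
        return np.exp(-self.h * (x - self.centers) ** 2)

    def fit(self, y_demo, dt):
        """Tune the forcing-term weights so the DMP reproduces one demonstration."""
        y = np.asarray(y_demo, dtype=float)
        self.tau = dt * (len(y) - 1)
        t = np.arange(len(y)) * dt
        yd = np.gradient(y, dt)
        ydd = np.gradient(yd, dt)
        y0, g = y[0], y[-1]
        x = np.exp(-self.alpha_x * t / self.tau)  # canonical system, closed form
        # Forcing term the demonstration would require at every sample.
        f_target = self.tau**2 * ydd - self.alpha_z * (
            self.beta_z * (g - y) - self.tau * yd
        )
        s = x * (g - y0)
        psi = self._psi(x)
        # Locally weighted regression, one weight per basis function.
        num = (psi * (s * f_target)[:, None]).sum(axis=0)
        den = (psi * (s**2)[:, None]).sum(axis=0) + 1e-10
        self.w = num / den
        return self

    def rollout(self, y0, g, dt, duration):
        """Integrate the DMP with explicit Euler steps towards goal g."""
        y, z, x = float(y0), 0.0, 1.0
        path = [y]
        for _ in range(int(round(duration / dt))):
            psi = self._psi(x)[0]
            f = (psi @ self.w) / (psi.sum() + 1e-10) * x * (g - y0)
            dz = (self.alpha_z * (self.beta_z * (g - y) - z) + f) / self.tau
            dy = z / self.tau
            dx = -self.alpha_x * x / self.tau  # canonical system (phase decay)
            z, y, x = z + dz * dt, y + dy * dt, x + dx * dt
            path.append(y)
        return np.array(path)


def minimum_jerk(n_steps):
    """Synthetic point-to-point demonstration from 0 to 1 (hypothetical data)."""
    s = np.linspace(0.0, 1.0, n_steps)
    return 10 * s**3 - 15 * s**4 + 6 * s**5


dt = 0.01
dmp = DMP1D().fit(minimum_jerk(101), dt)
same_goal = dmp.rollout(y0=0.0, g=1.0, dt=dt, duration=1.5)
new_goal = dmp.rollout(y0=0.0, g=2.0, dt=dt, duration=1.5)
print(same_goal[-1], new_goal[-1])
```

In this sketch the phase `x` plays the role of the internal time variable, `fit` adapts the forcing-term weights to a demonstration, and `rollout` lets the transformation system pull the state towards whichever goal `g` is supplied, so the same learned weights can be reused for a new goal.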

3. Results

3.1. Experimental Setup

3.2. Evaluation of Hand Detection Methods

3.3. Training and Evaluation of the Object Detection Model

3.4. Evaluation of Depth Value Quality

3.5. Evaluation of the Proposed Segmentation Approach

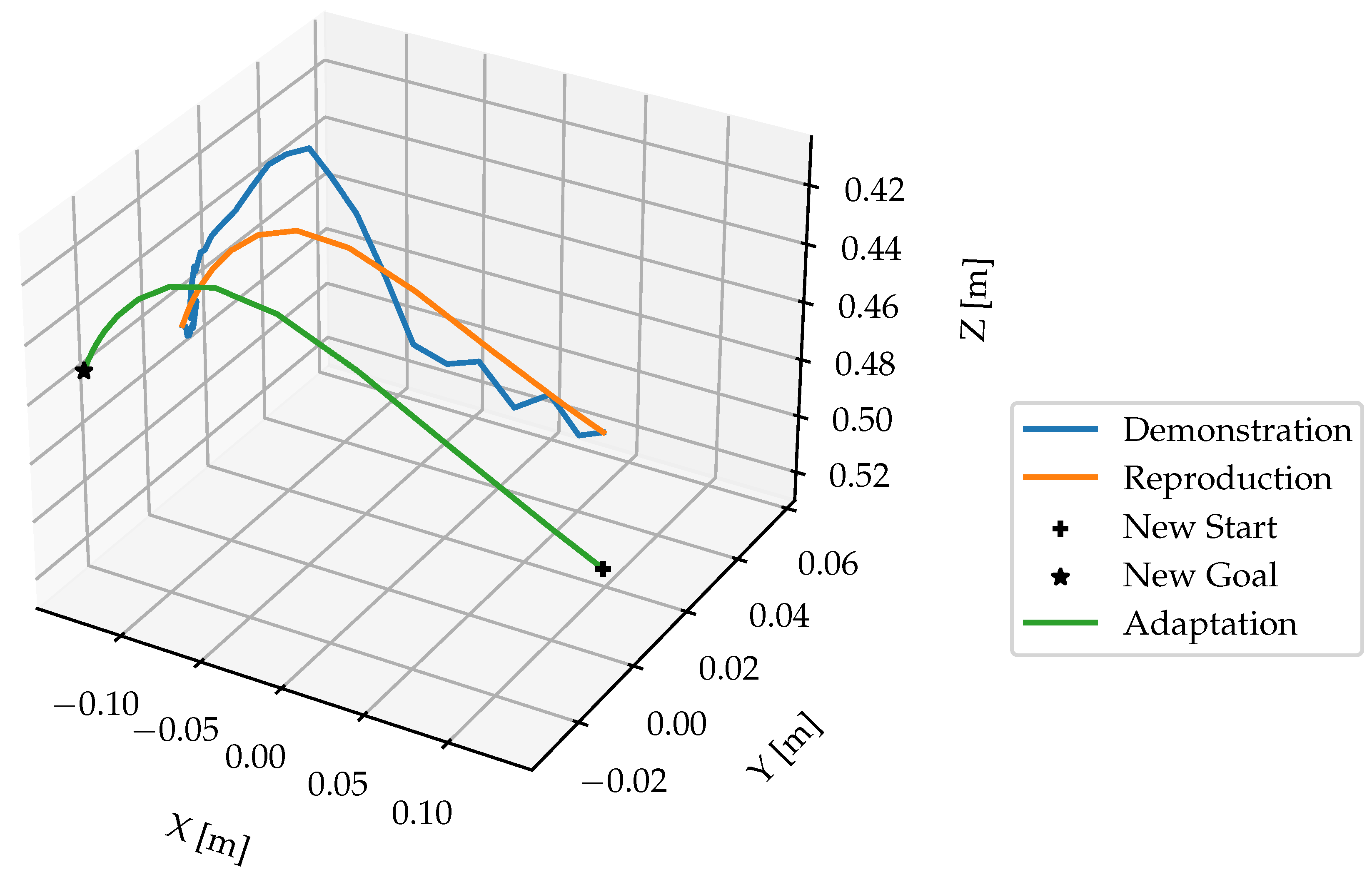

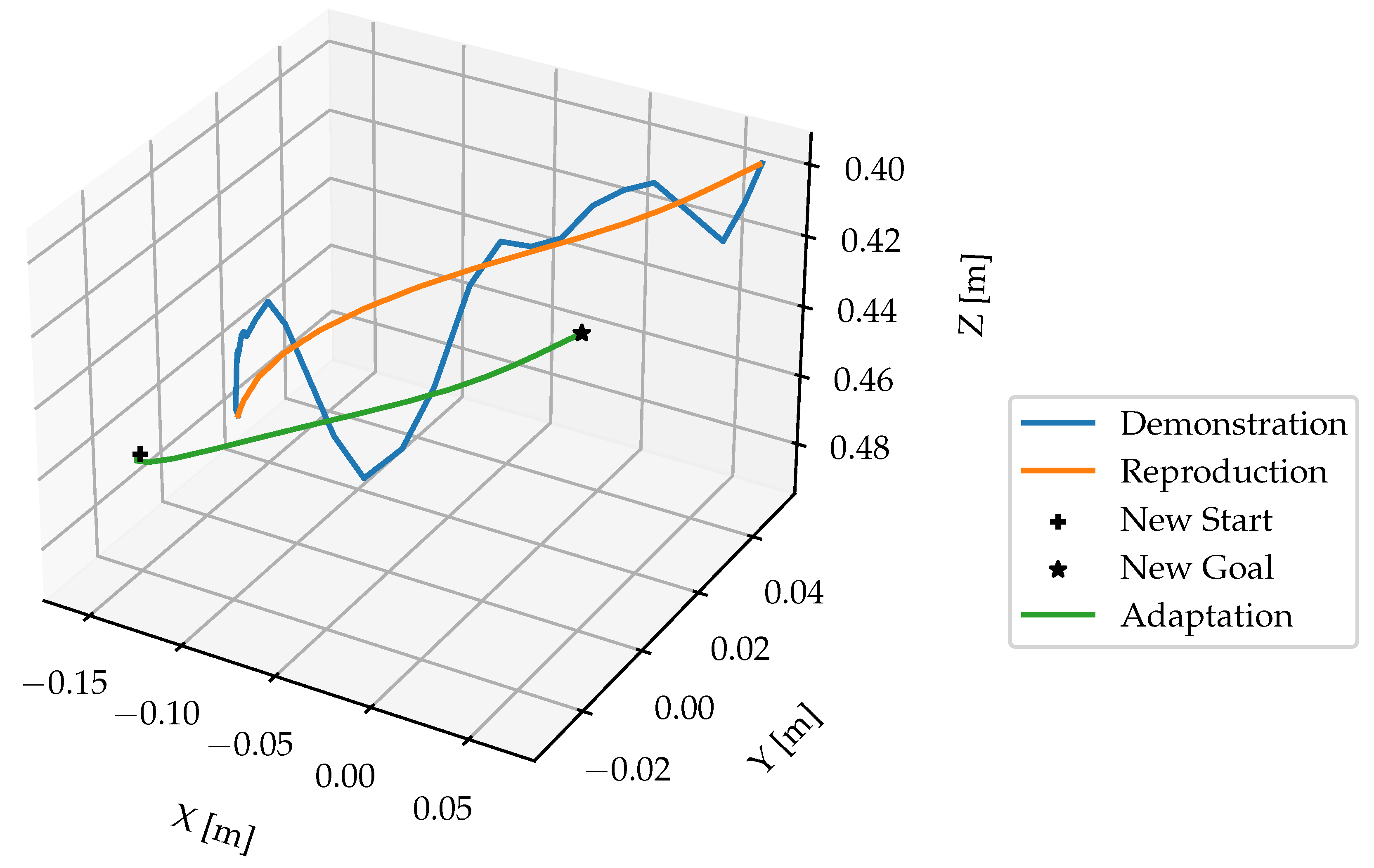

3.6. Evaluation of the Motion Learning Approach

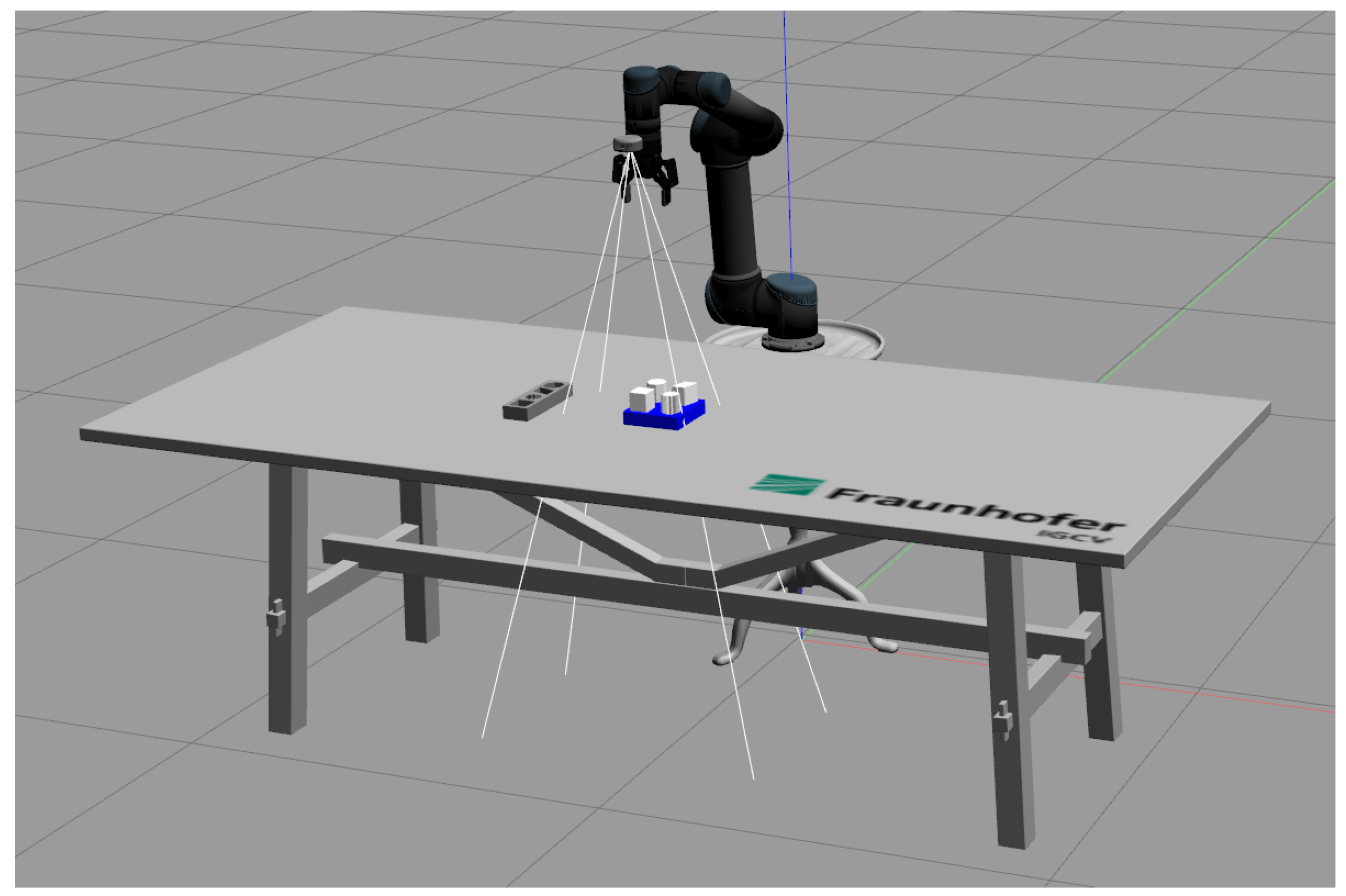

3.7. Evaluation on Robot in Simulation

4. Discussion

Author Contributions

Funding

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest



| Actions | Skills | Segmented hand trajectories |
|---|---|---|
| Pick | Reach | |
| | Grasp | |
| Place | Move | |
| | Release | |
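The decomposition in the table above can be written as a small lookup structure. This is a minimal sketch that assumes the table is read as Pick consisting of Reach followed by Grasp, and Place consisting of Move followed by Release; the names `ACTION_SKILLS` and `expand_task` are hypothetical and do not come from this work.

```python
# Action -> ordered list of skills, as read from the table above (assumed reading).
ACTION_SKILLS = {
    "Pick": ["Reach", "Grasp"],
    "Place": ["Move", "Release"],
}


def expand_task(actions):
    """Flatten a task, given as a sequence of actions, into its skill sequence."""
    skills = []
    for action in actions:
        if action not in ACTION_SKILLS:
            raise KeyError(f"unknown action: {action}")
        skills.extend(ACTION_SKILLS[action])
    return skills


pick_and_place = expand_task(["Pick", "Place"])
print(pick_and_place)
```

Keeping the mapping in one place means a task can be described at the action level while segmentation and trajectory learning operate on the individual skills.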

| | Faster-RCNN | MediaPipe |
|---|---|---|
| Video 1 | | |
| Video 2 | | |
| Video 3 | | |
| Video 4 | | |
| Video 5 | | |

| Classes | Images | Instances | Precision | Recall | mAP50 | mAP50-95 |
|---|---|---|---|---|---|---|
| all | 3750 | 21821 | | | | |
| GrayBox | 3750 | 3633 | | | | |
| BlueBox | 3750 | 3670 | | | | |
| Parallelogram | 3750 | 3637 | | | | |
| Cuboid | 3750 | 3598 | | | | |
| Octagon | 3750 | 3652 | | | | |
| Star | 3750 | 3631 | | | | |

| Classes | Images | Instances | Precision | Recall | mAP50 | mAP50-95 |
|---|---|---|---|---|---|---|
| all | 971 | 5826 | | | | |
| GrayBox | 971 | 971 | | | | |
| BlueBox | 971 | 971 | | | | |
| Parallelogram | 971 | 971 | | | | |
| Cuboid | 971 | 971 | | | | |
| Octagon | 971 | 971 | | | | |
| Star | 971 | 971 | | | | |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).