Submitted: 08 August 2024

Posted: 09 August 2024


Abstract

Keywords:

1. Introduction

2. Methodology

2.1. Data Preparation

2.2. In-Text Pause Encoding

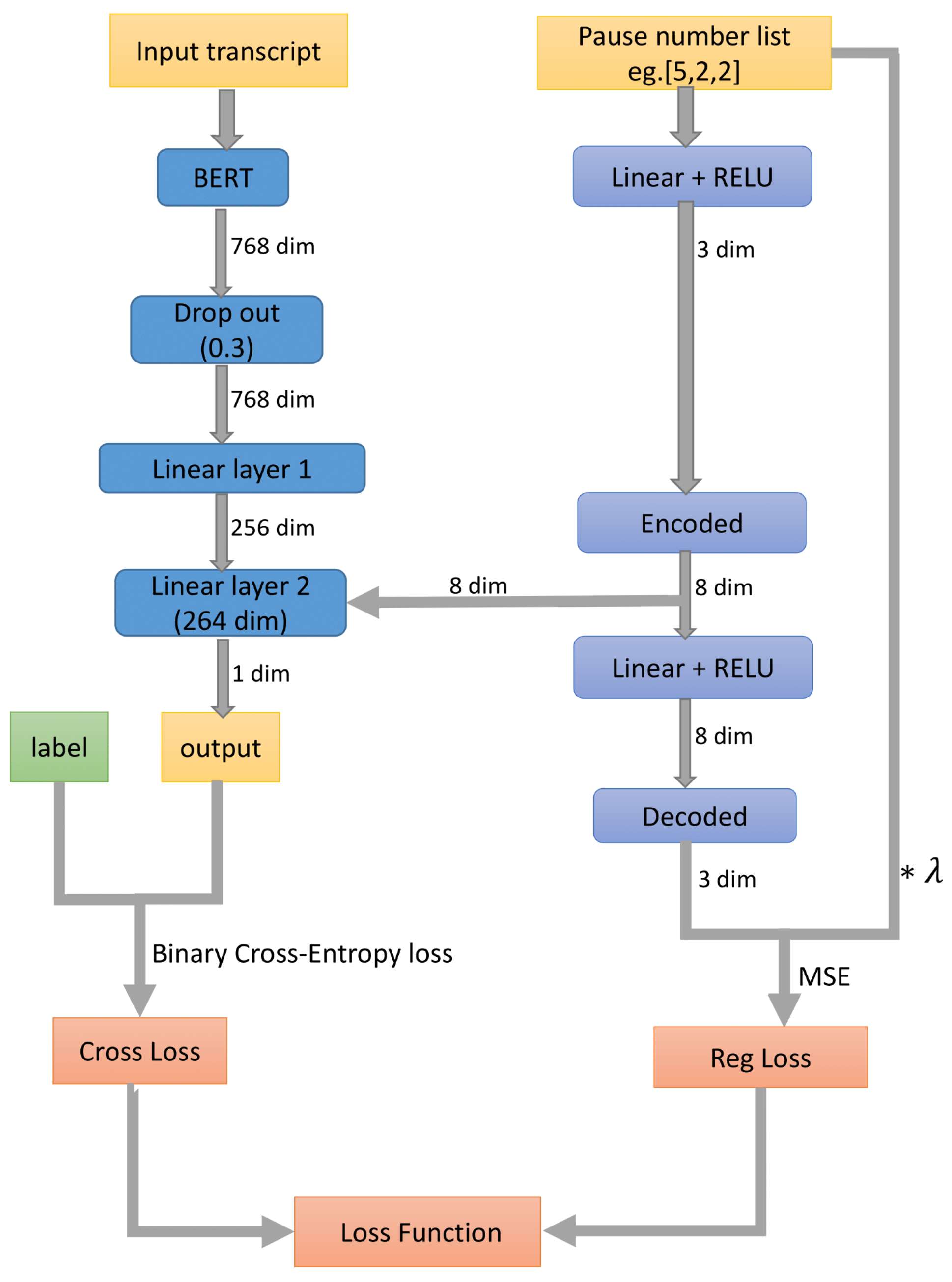

2.3. Modeling

3. Results and Discussion

| Model | Acc. | F1 |

4. Conclusions


| Stage | Transcript |
| --- | --- |
| Before | (..) &=sighs just &-um &m mention the &-uh what what |
| After | just mention the what what |
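The Before/After pair above can be reproduced with a small text-cleaning routine. The following is a minimal sketch, not the authors' preprocessing code: the two regular expressions and the function name `strip_annotations` are assumptions inferred only from this single example, namely that parenthesised dot sequences such as `(..)` and every token beginning with `&` are removed and the remaining whitespace is collapsed.

```python
import re

# Assumed patterns, inferred from the single Before/After example only:
# parenthesised pause markers such as (.), (..), (...)
PAUSE_MARKER = re.compile(r"\(\.+\)")
# any whitespace-delimited token starting with "&" (e.g. &=sighs, &-um, &m)
AMPERSAND_TOKEN = re.compile(r"&\S+")


def strip_annotations(utterance: str) -> str:
    """Remove pause markers and &-prefixed tokens, then collapse whitespace."""
    text = PAUSE_MARKER.sub(" ", utterance)
    text = AMPERSAND_TOKEN.sub(" ", text)
    return " ".join(text.split())


before = "(..) &=sighs just &-um &m mention the &-uh what what"
print(strip_annotations(before))  # -> just mention the what what
```

Other annotation types that do not appear in the example (for instance bracketed codes) are not handled here and would need their own patterns.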

| Model | Average Acc. | Average F1 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).