1. Introduction

Automated greenhouse systems require the meticulous configuration of diverse parameters to guarantee that the actuators execute the designated functions accurately. This configuration entails the determination of appropriate set-points for various subsystems including climate, lighting, and irrigation control. Typically, these parameters and control rules are reactive. In recent years, numerous studies have advanced models based on set-point selection strategies employing various heuristic or artificial intelligence paradigms, coupled with the implementation of predictive solutions. The application of reinforcement learning (RL) to greenhouse management has gained significant attention in recent years due to its potential to optimize energy usage and automate various control processes. This section provides an overview of the most relevant studies, categorized by their approaches and contributions.

2. Literature Review

The use of reinforcement learning (RL) in the energy management of greenhouses and precision agriculture techniques has evolved significantly. This analysis is based on the references provided, which highlight key contributions in this field. Future research directions are also discussed.

Reinforcement learning (RL) has become a powerful tool in optimizing energy management in greenhouses and precision agriculture. This paper reviews key contributions to the field, analyses their contributions, and discusses future research directions.

Kiumarsi et al. [

1] present a comprehensive survey and implementation guidelines for optimal and autonomous control using RL. They discuss applications in complex systems, emphasizing their potential to enhance energy efficiency and reduce operational costs in greenhouses. Perera et al. [

2] review various applications of RL in energy management, from generation to consumption. They highlight specific cases of intelligent greenhouses that use RL to optimize energy use. Wang et al. [

3] present an RL controller based on recurring neural networks based on long-term memory (LSTM) for microgrid management, with potential applications in greenhouses.

Kazmi et al. [

4] explore the use of deep RL for optimal control in hybrid energy systems of buildings, applicable to energy management in greenhouses. Ruelens et al. [

5] examine the application of RL in electric water heaters, with direct implications for energy management in greenhouses.

Zhang et al. [

6] discuss the developments and future challenges in precision agriculture, including the integration of RL for better resource management in greenhouses. Liu et al. [

6] provide a comprehensive review of RL applications in smart agriculture, highlighting various use cases and the potential benefits of RL in optimizing agricultural processes, including greenhouse energy management.

Mason et al. [

7] review the applications of RL in smart grids, discussing how these methods can be applied to improve energy management and efficiency in interconnected systems such as greenhouses. Hosseinloo et al. [

8] explore data-driven predictive control using RL for energy efficiency and comfort management in buildings, with potential applications in greenhouses to optimize climate control systems. Sun et al. [

9] present a study on multi-agent RL for integrated energy management of interconnected microgrids, applicable to complex greenhouse energy systems. Alani et al. [

10] discuss the opportunities and challenges of RL-based energy management for smart homes and buildings, with insights applicable to greenhouse energy systems.

Fu et al. [

11] survey the applications of RL in building energy management, providing information relevant to greenhouse energy systems. Mauree et al. [

12] review data-driven and machine learning models for building energy performance prediction, fault detection, and optimization, applicable to greenhouse energy management. Kazmi et al. [

13] discuss the application of multi-agent RL for building energy management, with potential benefits for greenhouse systems. Yang et al. [

14] explore energy optimization in smart home buildings using deep RL, providing information for greenhouse energy management.

Ruelens et al. [

15] discuss learning sequential decision making for optimal control of thermally activated resources, applicable to greenhouse energy systems. Vázquez-Canteli and Henze [

16] discuss the integration of reinforcement learning with predictive control of the model for the response to demand in buildings, which can be applied to optimize energy management in greenhouses. Sutton and Barto [

17] discuss the application of RL in real-world games, providing insights that can be adapted to complex energy management systems in greenhouses. Peters et al. [

18] discuss a reinforcement learning approach to autonomous vehicles, which can provide insights for autonomous control in greenhouse energy systems. Kar et al. [

19] present QD-learning, a collaborative Q-learning approach that can be applied to cooperative energy management strategies in greenhouses.

Sierla et al. [

20] review reinforcement learning applications in urban energy systems, which can provide valuable information for energy management in greenhouse environments. Vázquez-Canteli and Nagy [

21] discuss reinforcement learning for demand response, which is highly relevant for dynamic energy management in greenhouses. Mauree et al. [

12] review assessment methods for urban environments, providing methodologies that can be adapted to evaluate energy performance in greenhouses.

2.1. Future Research Directions

Despite significant advancements, several areas require further exploration to fully implement RL in greenhouse energy management.

Integration with IoT: The integration of RL with IoT devices can enhance real-time data acquisition and decision-making processes in greenhouses. Future research should focus on developing seamless IoT-RL integration frameworks.

Scalability: Research on scaling RL solutions to larger, more complex greenhouse systems is necessary to ensure widespread adoption. Studies should address computational challenges and the ability to handle large datasets.

Interdisciplinary Approaches: Combining RL with other AI techniques, such as genetic algorithms and fuzzy logic, could yield more robust energy management solutions. Exploration of hybrid models that leverage the strengths of different AI paradigms is essential.

Environmental Adaptability: Developing RL algorithms capable of adapting to diverse environmental conditions will be crucial for global applications. This includes designing algorithms that can learn and adapt to changing weather patterns, pest infestations, and other environmental variables.

Economic Viability: Studies on the cost-effectiveness of RL implementations in greenhouses can drive commercial interest and investment. Future research should focus on performing cost-benefit analyses and developing business models that highlight the economic advantages of RL-based energy management systems.

User-Friendly Interfaces: Developing user-friendly interfaces and control systems for greenhouse operators is vital for the practical implementation of RL. Research should focus on creating intuitive dashboards and control panels that allow operators to easily interact with and oversee RL systems.

Sustainability Metrics: Future work should also explore the development of sustainability metrics that RL systems can optimize. This includes not only energy efficiency but also water usage, pesticide application, and overall environmental impact.

Policy and Regulatory Compliance: Research should address how RL systems can be designed to comply with local and international policies and regulations concerning energy usage and environmental protection.

Data Privacy and Security: With the increasing use of IoT and RL, ensuring data privacy and security is essential. Future research should develop robust security protocols to protect sensitive data in greenhouse management systems.

Real-World Case Studies: Conducting real-world case studies and pilot projects can provide valuable insights into the practical challenges and benefits of implementing RL in greenhouses. These studies can help refine RL models and identify best practices for successful adoption.

2.2. Literature review conclusion

The RL paradigm has shown significant potential to optimize energy management in greenhouses and precision agriculture techniques. From optimal autonomous control to deep RL, the reviewed references indicate a growing trend towards intelligent, adaptive solutions that promote energy efficiency and sustainability. Future research should focus on integrating RL with IoT, scalability, interdisciplinary approaches, environmental adaptability, economic viability, user-friendly interfaces, sustainability metrics, policy compliance, data privacy and security, and real-world case studies to further advance this technological evolution.

In conclusion, the field of reinforcement learning for greenhouse management has made significant progress, yet challenges remain. Future research should focus on improving scalability, integrating advanced technologies, and developing hybrid models to fully realize the potential of RL in this domain.

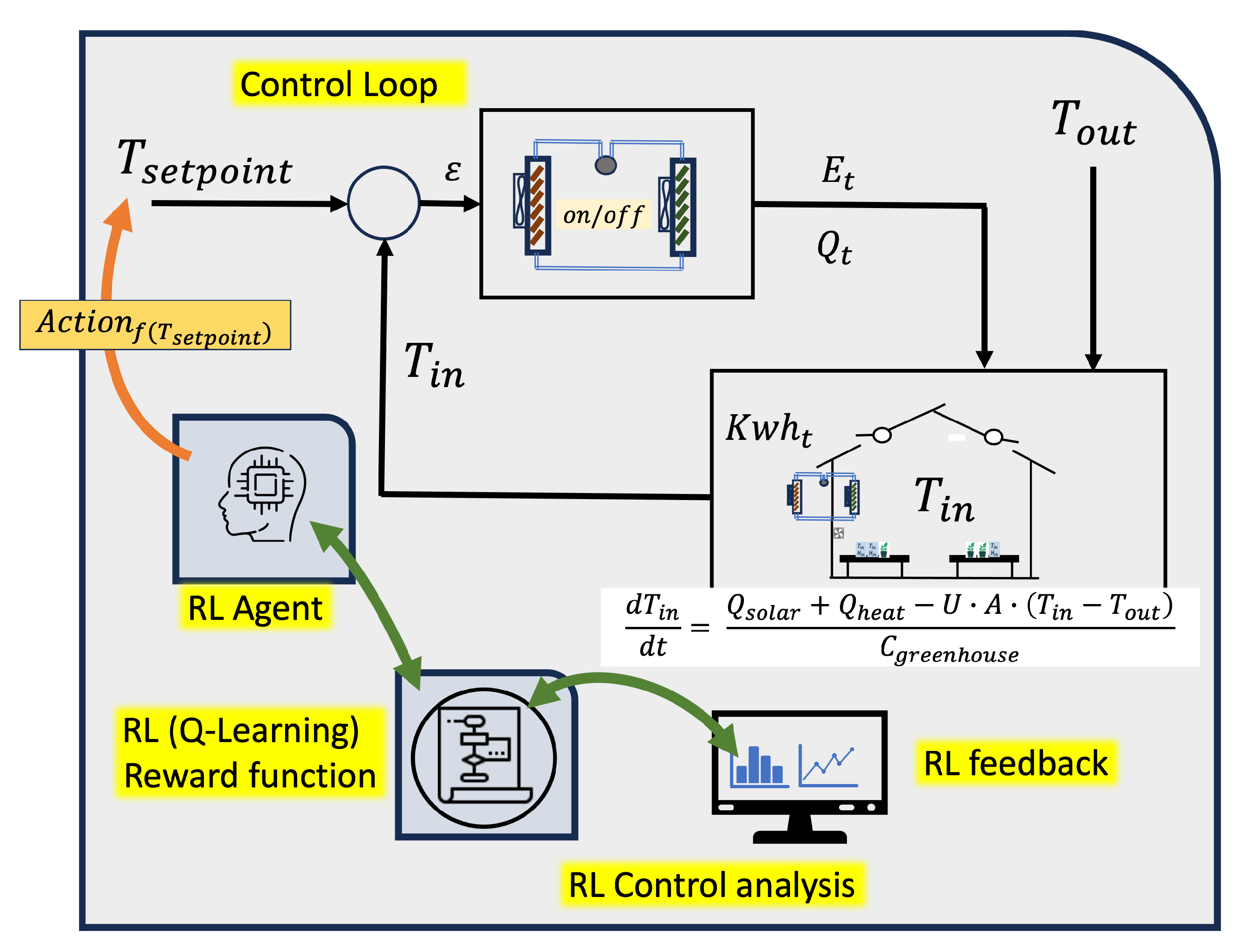

3. Model proposed

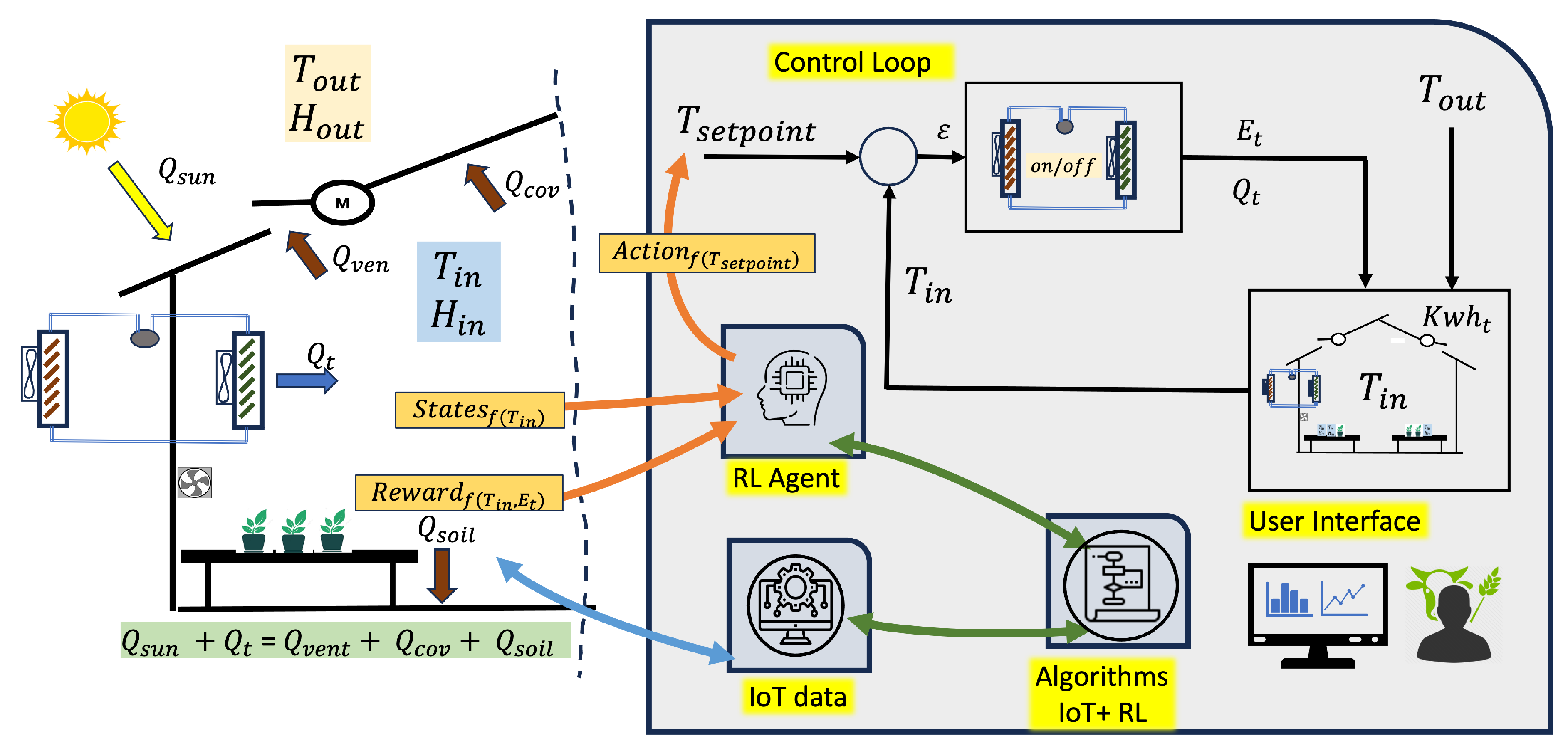

The Reinforcement Learning (RL) problem involves a digital agent exploring an environment to achieve a specific goal. In the field of automated greenhouses, it is about managing environmental conditions and crop growth while optimizing resources and inputs. RL is based on the hypothesis that all goals can be characterized by maximizing the expected cumulative reward. The agent must learn to perceive and manipulate the state of the environment through its actions to optimize this reward. In the model presented in this work, the agent performs control actions to adjust the set points, ensuring that the internal conditions of the greenhouse stay within the predefined maximum and minimum limits. By doing so, the agent optimizes the management of resources such as water, energy, and inputs, leading to improved plant growth.

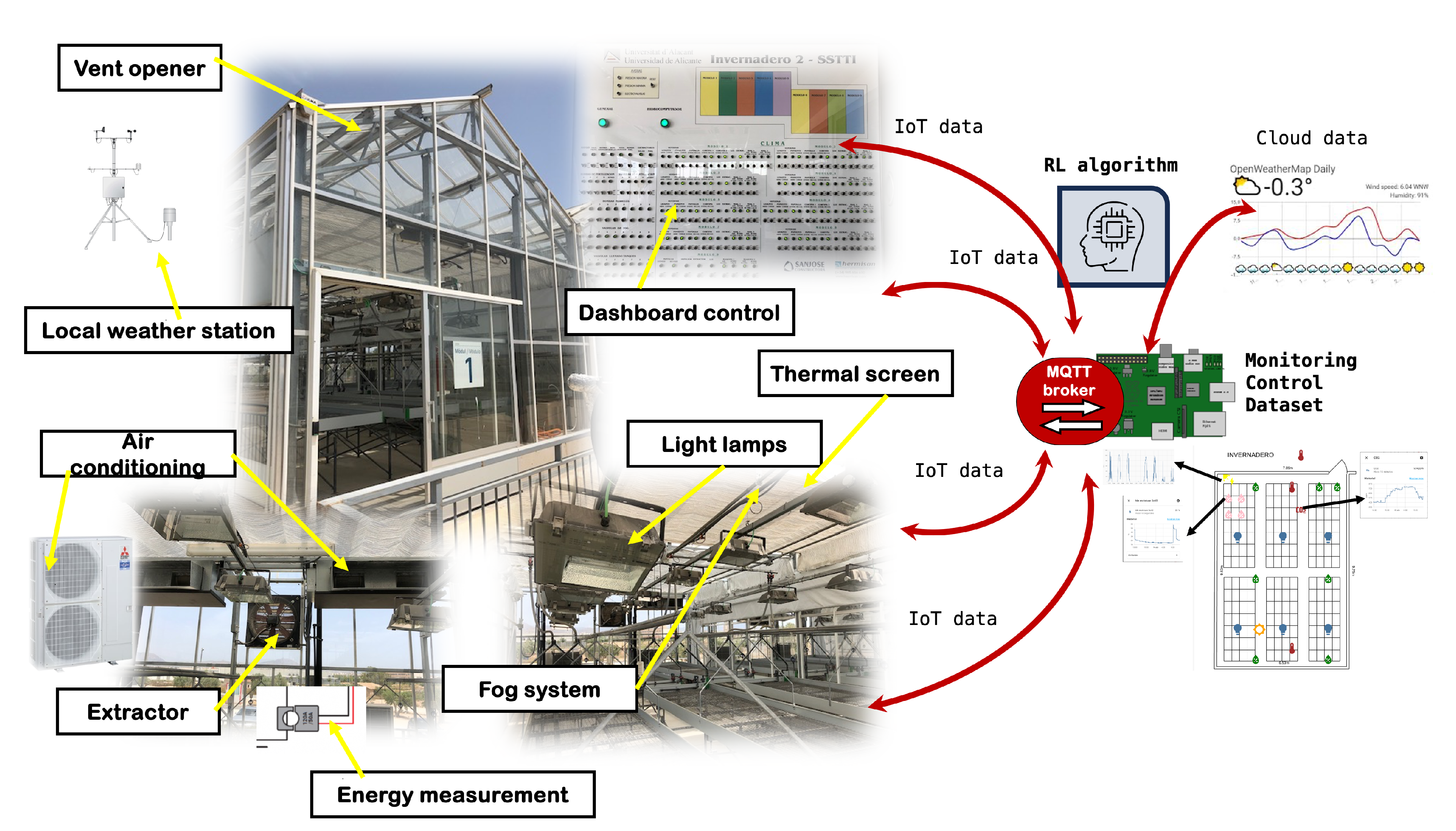

Figure 1 shows the application scenario of the RL paradigm.

The system must be able to collect relevant data, establish the necessary sensors and communication protocols, and manage them appropriately using the IOT paradigm.

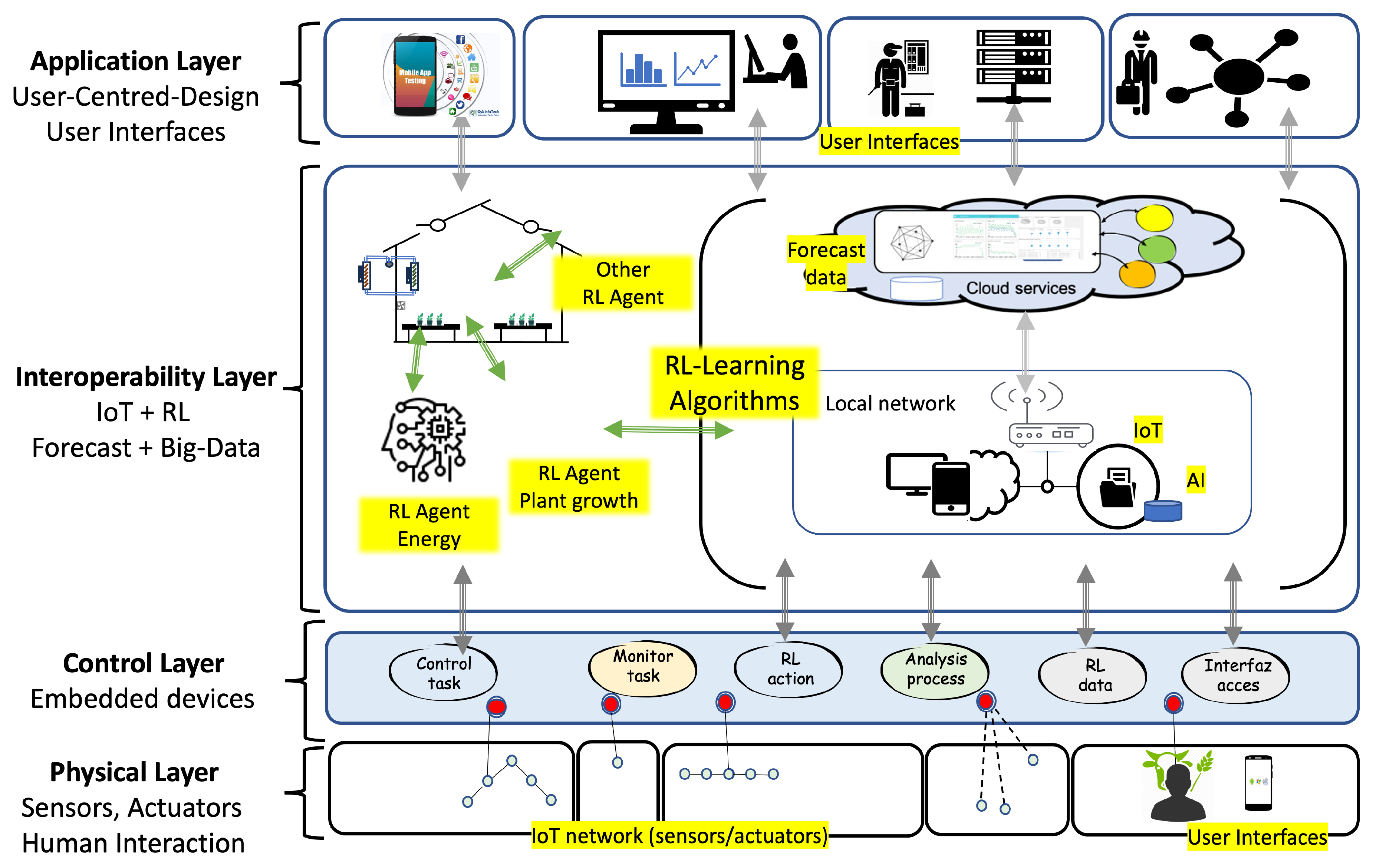

The proposed model uses a layered architecture (

Figure 2) to integrate the services and functionalities of the platform (IoT + RL). In an agricultural facility, whether newly constructed or already operational, monitoring and actuation devices are installed and interfaced with the processing and control layer. The following provides a detailed description of each layer:

In the physical layer, IoT sensors/actuators, human-machine interfaces (HMIs) and machine-to-machine interfaces (MMI) are installed. The farmer and technicians can act by entering data and requests. The processing layer filters data, executes control actions, and communicates with the upper layer.

The control layer embedded devices receive data from the upper layer to manage the different processes and perform control and maintenance actions. This layer must provide the necessary support to communicate the data to the sensor/actuators installed.

The interoperability layer integrates different RL agents connected to digital twin algorithms. The model proposes the development of RL agents to optimize the use of set-point values in various control loops within an automated facility. The RL agent modifies the set-point values to achieve one or more specified objectives, such as maintaining environmental conditions, improving electrical consumption, reducing water use, and optimizing renewable energy use. This process operates automatically and can be analyzed through an interface where users can test control strategies. This interface enables simulations on a digital twin model that replicates different states based on the knowledge acquired from the analyzed data obtained from IoT network sensors. In this layer, the deployment of RL agents, the development of digital twin functionalities, and the implementation of user interfaces are executed.

In the application layer, applications are designed and developed on different platforms (mobile phones, business networks, computers, etc.). In each of them, a relationship is defined between the user and the type of access allowed.

3.1. Development in an Automated Greenhouse

Greenhouses provide a controlled environment for plant cultivation, allowing for improved growth and productivity compared to traditional open-field agriculture. However, managing a greenhouse efficiently involves complex decision-making processes to balance resource usage, such as water and energy, with the optimal growing conditions for plants. Traditional management methods often rely on predefined schedules and heuristics, which may not adapt well to dynamic environmental conditions and changing plant needs.

Reinforcement learning (RL) offers a promising solution to this challenge by enabling systems to learn optimal strategies through interactions with the environment. RL algorithms can adapt to changing conditions and learn from experience, making them well suited to the dynamic and complex nature of greenhouse management. By continuously adjusting actions based on observed outcomes, RL can optimize resource usage while maintaining or improving crop yields.

One of the scenarios where the model can be applied is in energy management. In the case of use of this work, the model is based on a reinforcement learning algorithm in greenhouse energy management. Specifically, the model employs a prediction model to forecast the greenhouse’s environmental conditions throughout the day. Based on these predictions, they implement a reinforcement learning (RL) algorithm that rewards minimal energy usage to regulate the greenhouse’s temperature. The algorithm refines the temperature control loop by optimizing the choice of the set-point input for the existing regulation loop. In essence, the algorithm adjusts the set point value to optimize energy consumption while maintaining the selected minimum and maximum temperature thresholds.

Incorporating climate predictions into the RL model can further enhance its performance by allowing anticipatory adjustments.

Reinforcement learning (RL) offers a promising approach to energy consumption by learning from the environment and making data-driven decisions. This study focuses on the Q-Learning algorithm, a model-free RL method, to determine the connection and disconnection of the air conditioning system.

3.2. Methodology

The proposed system uses the Q-Learning algorithm to make watering decisions based on real-time data from IoT sensors. The states, variables, actions, and reward function are designed to reflect the dynamics of the greenhouse environment. The methods are indicated in

Table 1

4. Phase 1. Control Stategies (set point in the environmental regulation loop)

Reinforcement Learning (RL) techniques can optimize air conditioning connection strategies by making decisions based on continuous feedback from plants and the environment. Various sensors (energy consumption, temperature, humidity, weather data, etc.), actuators, and a data processing unit must be used. In

Table 2 different strategies and their relationship with IoT technologies are shown.

The selection of the appropriate irrigation strategy for greenhouse cultivation depends on various factors, including crop type, environmental conditions, and resource availability. Strategies based on substrate moisture, evapotranspiration, and VPD offer customized approaches to optimize water use, improve crop performance, and promote sustainable agricultural practices.

5. Phase 2. IoT infrastructure

The IoT infrastructure is designed with the strategy of being interoperable with existing greenhouse subsystems: climate, lighting, irrigation, etc. To achieve this, it is designed and developed at two levels.

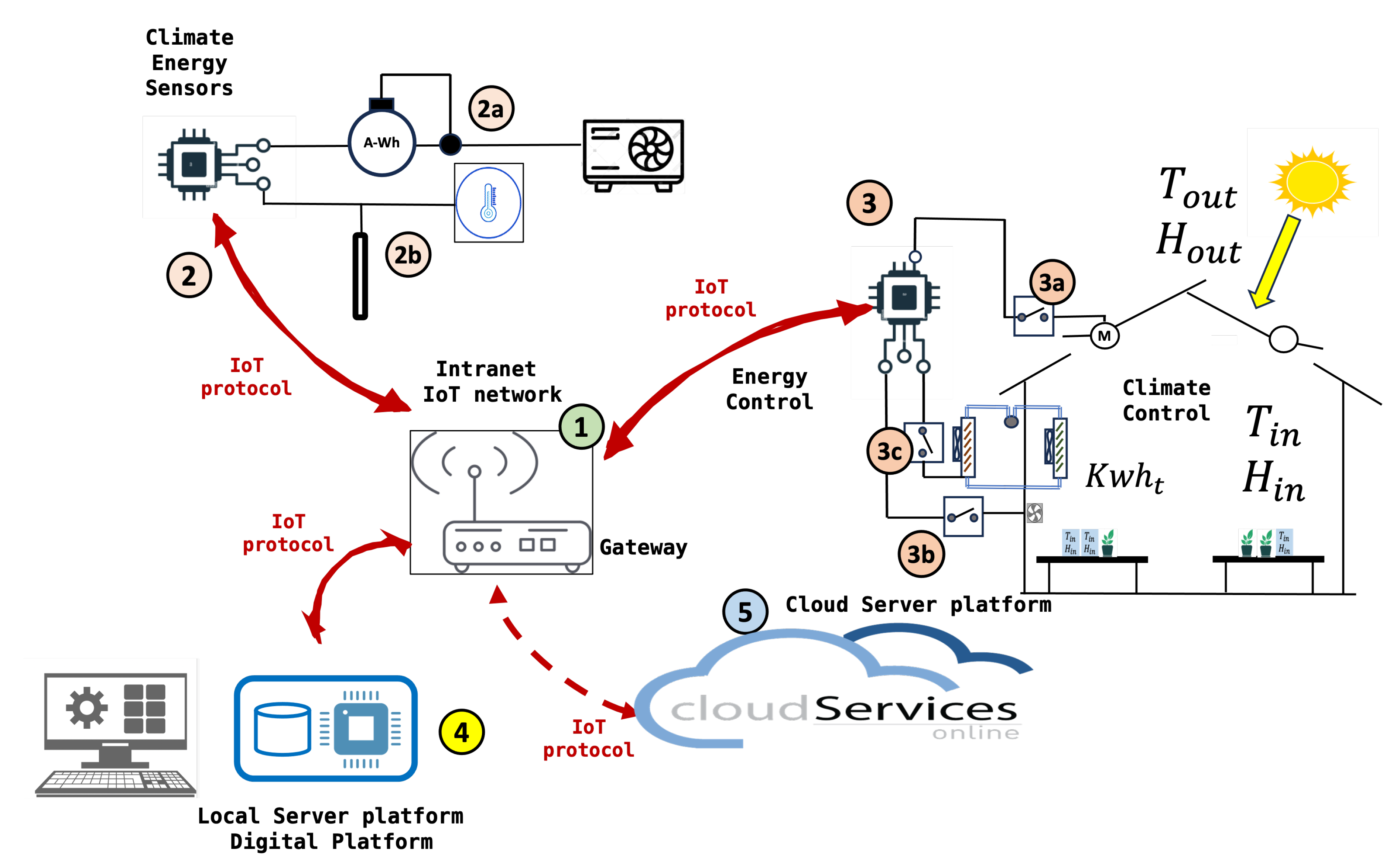

The

Figure 3 describes the Internet of Things (IoT) network. Here is the identification of the components according to the numbers in the image: The sequence of events and data sequence are listed below,

Climate energy data is captured and stored in the system.

Sensors collect environmental conditions.

The data is sent to the gateway, which then transmits it to the local server and cloud services.

The data is stored on the local server. The datasets are created with the main variables

Local and cloud services allow remote monitoring and control of the system.

The prediction of the environmental conditions in the next few hours is obtained

With the data and predictions the learning algorithm is executed

The algorithm proposes a modification of the set-point by increasing or decreasing its value, using the values of the reinforcements or Q values

Table 3.

Identification of components in the IoT network for a solar energy management system.

Table 3.

Identification of components in the IoT network for a solar energy management system.

| Number |

Component Description |

| 1 |

IoT Gateway or Router: This device acts as a central communication point between various sensors and devices and the cloud server. It uses IoT protocols to transmit data. |

| 2 |

Sensors and Meters: These devices collect data from different sources: |

| 2a |

Energy meter (A-Wh) that measures the amount of energy consumed. |

| 2b |

Temperature sensor (thermostat) that measures the ambient temperature. |

| 3 |

Solar Energy Controller: This component receives data from the sensors and manages the distribution of energy. |

| 3a |

Inverter that converts solar energy from direct current (DC) to alternating current (AC). |

| 3b |

Batteries for energy storage. |

| 3c |

Switches and fuses for protection. |

| 4 |

Local Server or Database: Stores and processes data locally. It is where all the data is collected for processing before being sent to the cloud. |

| 5 |

Cloud Services: The data is sent to the cloud for additional storage and analysis. Cloud services can provide interfaces to monitor and control the system. |

| M |

Monitoring Computer: Allows users to interact with the system, probably through a graphical interface for real-time monitoring and control of the system. |

6. Phase 3. Data set generation

Data sets generated from IoT sensors provide a rich source of information for decision makers. These data sets enable real-time monitoring and analysis, allowing for informed decision-making based on accurate and up-to-date information. In greenhouse facilities, IoT sensor traffic data can be used to optimize traffic flow, reduce congestion, and improve public transportation systems. Generating high-quality datasets from IoT sensor data requires addressing issues related to data quality and consistency. Sensors can produce noisy or incomplete data, which can affect the accuracy and reliability of the data sets. Implementing robust data pre-processing and cleaning techniques is essential to ensure the integrity of the data sets.

Temperature and Humidity Sensors: inside and outside the greenhouse. Weather forecast and energy consumption. The Methodology for Data Capture is:

Sensor Placement. Strategically place sensors to capture a representative sample of environmental conditions within the greenhouse. This includes placing sensors at various depths and locations throughout the greenhouse to monitor microclimates.

Data Logging and Transmission. Utilise data loggers and wireless networks to ensure continuous data capture and transmission. This includes setting up a reliable network infrastructure that can handle the data volume and frequency required for RL applications.

Data storage. Implement a centralized data storage solution, preferably cloud-based, to store the large volumes of data generated by the sensors. Ensure the storage system supports efficient data retrieval and processing.

Data prepossessing: cleaning, and normalisation. Address issues related to missing values, sensor malfunctions, and noise in the data. Techniques such as interpolation and filtering should be applied to ensure data quality and consistency. Normalise sensor data to a common scale to facilitate accurate analysis and model training. This step is crucial for integrating diverse data types into a cohesive dataset.

6.1. Materials and methods

Data capture was carried out on a hemp crop in a technical greenhouse (

Figure 4) with the following characteristics: Glass greenhouse of 50 m2 (5 x 10 m) each. All of them have an automatic climate and irrigation control system with the following equipment:

Humidifier with osmosis water mist.

Air conditioners to heat and cool the modules. Twin, Triple Mitsubishi PUHZ-P200YKA Three-phase Classic Inverter Nominal cooling capacity (Min. - Max.) kW 19.00 Nominal heat capacity (Min. - Max.) kW 22.40

Thermal shading screen.

Extractor fan and zenithal opening windows with anti-trip mesh.

Artificial light lamps to increase net assimilation.

Micro-sprinkler and drip or flood irrigation system.

Temperature and humidity probes.

Electrical, compressed air, mains water and osmosed water connections.

Embedded device (raspberrypi4) that deploys an intranet (WiFi, Bluetooth Low Energy) for communication, monitoring, and control.

Electric energy meter in three-phase and single-phase circuits (shelly 3 EM). This consumption meter communicates with the embedded system through the WiFi and IP protocol.

Communication to Web servers to obtain the temperature prediction in the greenhouse area.

This work develops and tests a low-cost sensor/actuator network platform, based on the Internet of Things, integrating machine-to-machine and human-machine interface protocols used in [

22]. The system integrated Internet of Things (IoT) paradigms, ubiquitous sensor networks, and edge computing to create a smart agricultural environment. Implementation (Figure ) included machine-to-machine and human-machine interface protocols to enable seamless communication and control processes considering a precision agriculture scenario.

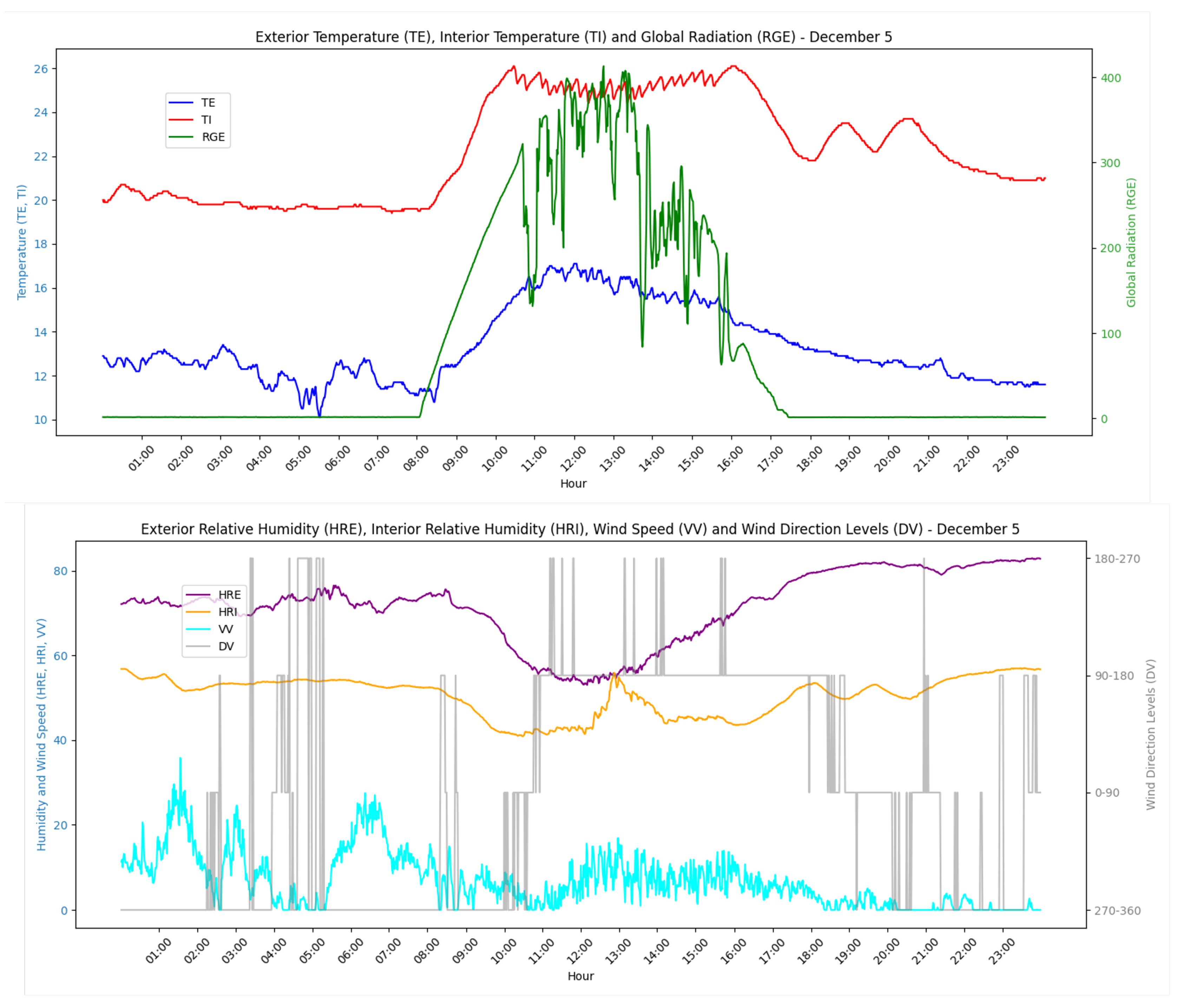

Figure 5.

Example of daily data obtained in the dataset. The data is obtained daily every minute and stored in a dataset to obtain temperature prediction models inside the greenhouse.

Figure 5.

Example of daily data obtained in the dataset. The data is obtained daily every minute and stored in a dataset to obtain temperature prediction models inside the greenhouse.

7. Phase 4. Digital model and RL algorithm

RL involves an agent learning to make sequential decisions through interaction with its environment to maximize cumulative rewards. In the context of greenhouse control, RL offers a promising framework to optimize energy use while maintaining the configured maximum and minimum temperature conditions. The theoretical model serves to support decision making at a given moment.The objective is to start from prior knowledge that allows the system to initiate decision making more effectively. From the initial configuration of the RL algorithm, the system will adapt to the specific conditions of the installation, optimizing control as feedback information is obtained on the evolution of the system, compared to its previous behavior. It is therefore about introducing a finer regulation that is capable of improving the existing one by adapting to the specific conditions of the installation.

7.1. Greenhouse model based in differential equations.

There is knowledge and mathematical models of the behavior of temperature in a greenhouse. These models provide a good starting point for defining reward policies and functions. For all these reasons, the RL model is based on the knowledge already acquired to, from there, introduce improvements and optimize current operation. The behavior of the temperature inside a greenhouse is influenced by several factors, including the external temperature, solar radiation, and the thermal dynamics of the greenhouse itself. Here, we present a mathematical model to simulate the dynamics of the greenhouse temperature.

The thermal model of a greenhouse when both heating and cooling are applied can be described by the following differential equation.

To discretize this equation for algorithmic application, we consider a time step

. The temperature change over the time step from

t to

can be approximated as:

Substituting this into the differential equation gives:

Solving for

, we get the following:

where:

is the heat capacity of the greenhouse (J/°C),

is the inside temperature of the greenhouse at time t (°C),

is the inside temperature of the greenhouse at time (°C),

is the outside temperature at time t (°C),

is the solar radiation entering the greenhouse at time t (W),

is the heating power applied to the greenhouse at time t (W),

is the cooling power applied to the greenhouse at time t (W),

U is the overall heat transfer coefficient (W/m°C),

A is the surface area of the greenhouse (m),

is the time step (s).

This discretized equation can be used to iteratively compute the inside temperature of the greenhouse in discrete time steps for the purpose of applying control algorithms. This model is simple and the results give us a first analysis of trends and future strategies. In the scenario of an RL algorithm, the actions taken depend on the value of the set temperature. The actions that can be taken are shown in the

Table 4.

7.2. Greenhouse model based in predictions

This model has two parts. In the first case, the behavior of the greenhouse temperature in the next moments of time is predicted on the basis of IoT data and the machine learning paradigm. Once the future value of the temperature inside the greenhouse is calculated, the best possible action for regulation is taken.

The interior temperature of a greenhouse based on external climatic conditions and historical can be obtained with several models, including linear regression, decision tree, gradient boost, random forest, and neural networks, can be compared to identify the most accurate and consistent predictive model.

The data set used consists of climatic data collected from a greenhouse over several months. The variables include:

TE: Exterior Temperature

HRE: Exterior Relative Humidity

RGE: Exterior Global Radiation

VV: Wind Speed

DV: Wind Direction

LL: Rainfall

TI: Interior Temperature

HRI: Interior Relative Humidity

The data is resampled to intervals that are configured according to the installation, and lag features are created to incorporate historical data into the prediction model.

The actions taken are shown in

Table 5.

7.3. Reinforcement Learning deployment

Reinforcement Learning (RL) is a subfield of machine learning where an agent learns to make decisions by interacting with an environment to maximize a cumulative reward. In the context of controlling the temperature of a greenhouse, an RL agent can learn when to turn the heater on or off to maintain the desired temperature and minimize energy consumption.

The RL model consists of the following elements:

State (s): Represents the current situation of the environment. In our case, the state can include the internal temperature of the greenhouse, the external temperature, and solar radiation.

Action (a): The decision taken by the agent in each state. In our case, actions are turning the heater on () or off ().

Reward (r): The feedback received by the agent after taking an action in a state. The reward can be a function of the internal temperature and energy consumption.

Policy (): The strategy followed by the agent to make decisions. The policy maps states to actions.

Value (): The expected value of the cumulative reward starting from state s following policy .

Q-Value (): The expected value of the cumulative reward starting from state s taking action a and following policy .

The goal of the RL agent is to learn an optimal policy that maximizes the cumulative reward. This is formalized in the optimal control problem in a Markov Decision Process (MDP).

8. Phase 5. Training

To start with prior knowledge and avoid the initial errors that RL introduces, actions, functions, and reward policies are analyzed first through simulation and then using real data obtained in the cultivation process. Firstly, an analysis is carried out using differential equations that model the climatic behavior in a greenhouse and then apply it with the data obtained in the greenhouse.

8.1. RL Algorithm application in greenhouse model based in differential equations model

The RL agent intervenes in the control process following the following steps:

Observe Current State: The agent observes the current state , which includes the internal temperature (), the external temperature (), and solar radiation ().

Select Action: Based on its policy , the agent chooses an action (turn the heater on or off).

Apply Action: The chosen action is applied to the environment.

Observe Reward and Next State: The agent receives a reward and observes the next state .

Update Policy: The agent updates its policy using the learning algorithm, such as Q-Learning.

By formulating temperature control as a sequential decision-making problem, RL algorithms can adaptively adjust based on real-time environmental data and set-point conditions. One crucial aspect in applying RL to temperature control is the choice of state representation, which captures relevant information about the environment for decision-making.

The process is based on the technician’s previous configuration and a theoretical model of temperature behavior on which the RL algorithm performs the calculations. This model can be based on energy balances or be a model obtained by studying the behavior of greenhouse conditions.

Selecting an appropriate state representation is essential to allow the RL agent to effectively learn and adapt its cotrol policies to achieve optimal performance under varying environmental conditions. Furthermore, careful selection of control actions is equally important, as it determines how the RL agent interacts with the environment. By choosing suitable control actions, such as adjusting the temperature set-poin, the RL agent can effectively optimize energy usage. Finally, algorithms are developed to optimize the reward functions associated with states and actions, ensuring that the RL agent learns to make decisions that lead to the most favorable outcomes. This approach allows the development of intelligent control systems capable of dynamically responding to changing environmental conditions and ambient conditions needs.

The Q learning algorithm has

five actions(

Table 4):

The

Table 6 describes examples of potential

reward functions applicable in RL algorithms.

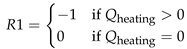

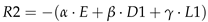

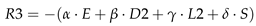

An appropriate RL algorithm, such as Q learning, deep Q network (DQN), or posterior policy optimization (PPO), should be chosen, based on the complexity of the task and the available computational resources, where:

, , and are weight coefficients that can be tuned.

E is the energy consumption penalty.

is the current inside temperature.

is the setpoint temperature.

where and are the maximum and minimum allowable temperatures, respectively.

where is the set-point temperature from the previous time step.

Analysis of the reward functions for greenhouse control

The reward function aims to balance the trade-offs between energy efficiency, temperature stability, and smooth set-point adjustments. By carefully tuning the weights

,

,

, and

, the reinforcement learning agent can learn an optimal strategy to control the greenhouse temperature. Three reward functions are indicated in

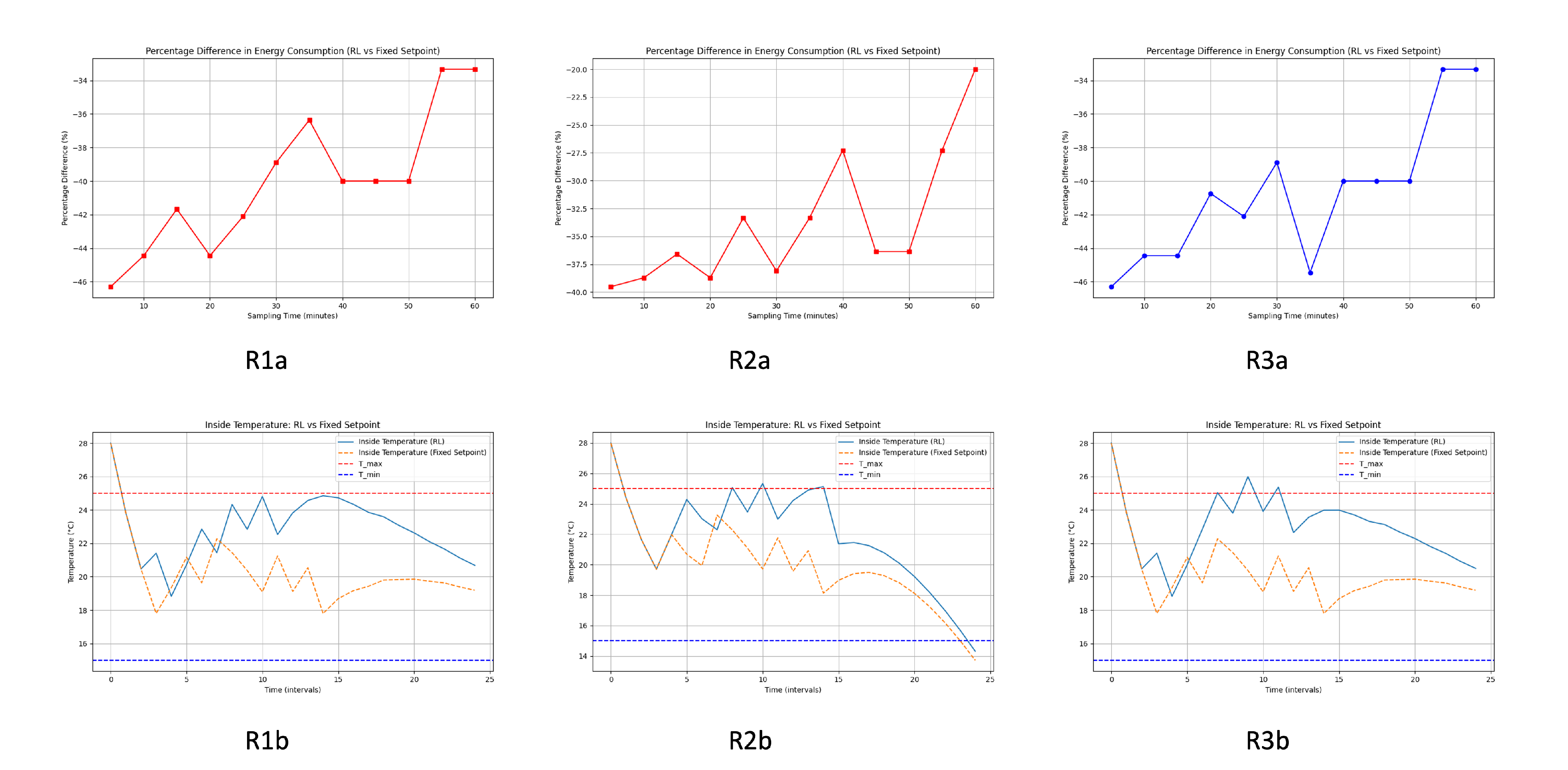

Table 6. In this section, we analyze the result obtained by applying each of them to the temperature evolution model in a greenhouse (

Figure 6). From the result obtained, the first conclusions will be drawn to obtain the best initial configuration of the RL algorithm that will act in the installation under real conditions. In this work, we compare the three strategies in the

Table 4. We also compare the control with RL and the results obtained with a classic control without RL. This analysis and comparison will result in the theoretical improvement of the algorithm compared to the current control and the best-reward policy. Once the theoretical analysis of the best reward function applicable to the theoretical model and greenhouse conditions has been carried out, the algorithm is implemented in the facility using real data and actions defined in the previous step. Comparison of energy consumption between the reinforcement learning (RL)-based control and a fixed set point control at different sampling times showed varied results. The following analysis examines the percentage difference in energy consumption between systems controlled by Reinforcement Learning (RL) and those with a fixed set point. The cooling and heating processes of the greenhouse have been analyzed. Each of the analyses provides a figure with six images with different comparisons. The six subplots in each provided figure are evaluated to determine which system is more energy efficient and effective in maintaining the desired temperature range.

1a) Cooling temperature control analysis using the differential equation model (Figure 7)

In R1a-R1b the RL system manages to maintain the inside temperature within the desired range more effectively than the fixed setpoint system. Temperature variations with RL control are less pronounced and stay closer to the set-point compared to the fixed set-point system. In R2a-R2b Similar to R1a-R1b, the RL control maintains a more stable temperature, with fewer and smaller deviations from the set point. The fixed set point system shows greater fluctuations and occasionally falls outside the desired temperature range. In R3a-R3b the graph reinforces the previous observations, where the RL system exhibits better control over the temperature, keeping it within the desired limits more consistently than the fixed set-point system. The fixed set point control shows larger temperature changes and less precision in maintaining the set point. R1a and R3a appear to perform the best, with higher and more consistent energy savings at different sampling times. The Top Left Subplot shows a slight edge in overall savings stability.

General Improvement with RL Control

Across all energy consumption graphs, the RL system consistently outperforms the fixed set point system in terms of energy savings. The improvement is particularly notable for shorter sampling times (5 to 20 minutes), where the savings are more substantial. Even at longer sampling intervals (up to 60 minutes), the RL system maintains a significant percentage of energy savings.

Temperature Control Effectiveness

The lower subplots demonstrate that the RL control system maintains the desired temperature range more effectively than the fixed set-point system. The RL system results in smaller deviations from the set-point, indicating better control and stability. The fixed set-point system shows larger temperature fluctuations and occasionally fails to keep the temperature within the desired range.

Conclusion in cooling process using the differential equation model

The analysis indicates that the RL control system is highly effective in reducing energy consumption compared to the fixed set-point system. Shorter sampling times yield the best results, with the RL system showing a 45% improvement in energy efficiency. Furthermore, the RL system demonstrates superior temperature control, maintaining the desired range more consistently and with fewer deviations. In general, the RL system is a preferable choice for optimizing energy use and temperature stability in greenhouse temperature control scenarios.

In the R1a-R1b subgraph shows a consistent improvement in energy consumption with the RL system as the sampling time increases. For sampling times between 5 and 10 minutes, the RL system shows significant energy savings of around -45%. As the sampling time increases, the savings decrease slightly but remain significant, ending around -35% at 60 minutes. In R2a-R2b exhibits a similar trend, with the RL system performing better than the fixed set-point system. The initial savings are around -40%, with fluctuations as the sampling time increases. The savings dip slightly around 30 to 40 minutes but show an overall improvement, ending around -20% for 60 minutes. In R3a-R3b The trend here is also consistent with the other graphs, showing that the RL system consistently saves energy compared to the fixed set-point system. The savings are initially around -45% for shorter sampling times. Despite fluctuations, the savings remain significant throughout, ending around -35% for 60 minutes.

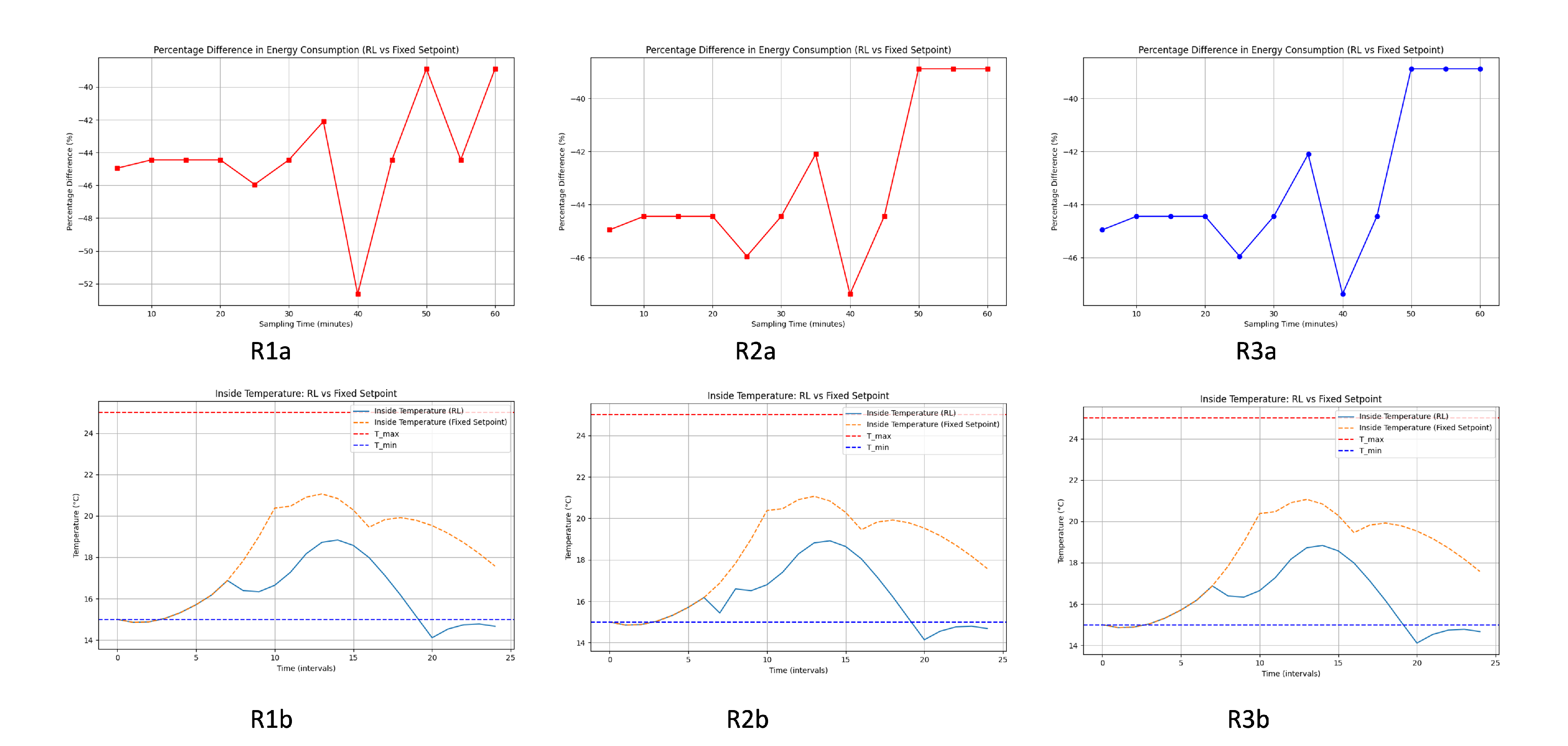

1b) Heating temperature control analysis using the differential equation model (Figure 8)

General Improvement with RL Control

Across all energy consumption graphs, the RL system consistently outperforms the fixed set point system in terms of energy savings. The improvement is particularly notable for shorter sampling times (5 to 20 minutes), where the savings are more substantial. Even at longer sampling intervals (up to 60 minutes), the RL system maintains a significant percentage of energy savings.

Temperature Control Effectiveness

The bottom subplots demonstrate that the RL control system maintains the desired temperature range more effectively than the fixed set point system. The RL system results in smaller deviations from the set point, indicating better control and stability. The fixed set point system shows larger temperature fluctuations and occasionally fails to keep the temperature within the desired range.

Conclusion in heating process using the differential equation model

The analysis indicates that the RL control system is highly effective in reducing energy consumption compared to the fixed set-point system. Shorter sampling times yield the best results, with the RL system showing a 45% improvement in energy efficiency. Furthermore, the RL system demonstrates superior temperature control, maintaining the desired range more consistently and with fewer deviations. In general, the RL system is a preferable choice for optimizing energy use and temperature stability in greenhouse temperature control scenarios.

8.2. RL greenhouse control based in temperature prediction model

The first goal is to design and implement a model that predicts indoor temperature (TI) based on various environmental variables. The model takes advantage of past data and predicted future values to make accurate forecasts.Once there is an estimated value of the temperature evolution inside the greenhouse, the RL algorithm is applied acting on the set-point, depending on the said evolution. From the previous study using the differential equations model, it was concluded that the reward function R1 is viable to display the algorithm. For all these reasons, in this analysis with data obtained in the greenhouse we are going to use this reward function.

Prediction model

The model predicts the indoor temperature (TI) using the following variables:

External Temperature (TE)

External Relative Humidity (HRE)

Wind Direction (DV)

Wind Speed (VV)

External Global Radiation (RGE)

Internal Relative Humidity (HRI)

The prediction is based on the past values (lags) of these variables for the previous 60 minutes and the predicted external temperatures for the next 60 minutes.

We created lagged characteristics for the last 60 minutes and leading characteristics for the future external temperature (TE). This allows the model to capture temporal dependencies. The data set is divided into training and testing sets. The features are standardized to have a mean of 0 and a standard deviation of 1. We use a Linear Regression model to predict the indoor temperature.

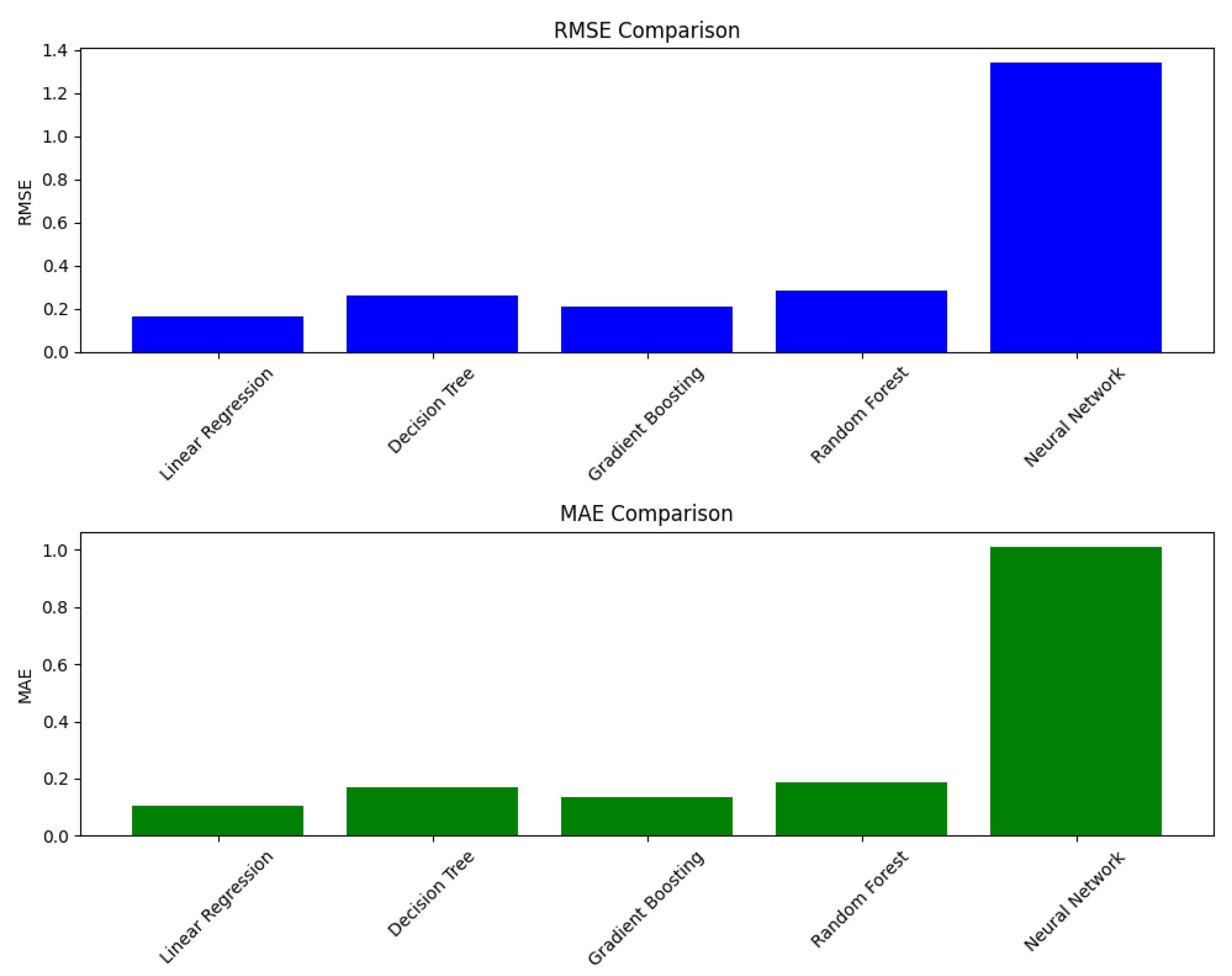

For predicting the interior temperature of the greenhouse, Random Forest and Linear Regression models are the most reliable based on their lower RMSE and MAE values. The Neural Network model’s high error rates suggest that it may not be suitable for this particular prediction task without further tuning or perhaps a different architecture or feature set. Linear regression is chosen for its simplicity and interpretability.The model’s performance is evaluated using the Root Mean Squared Error (RMSE) and Mean Absolute Error (MAE) on both the training and testing datasets.

The

Figure 9 compares the performance of different machine learning models based on two metrics: Root Mean Squared Error (RMSE) and Mean Absolute Error (MAE). Data were captured from November 2023 to May 2024.

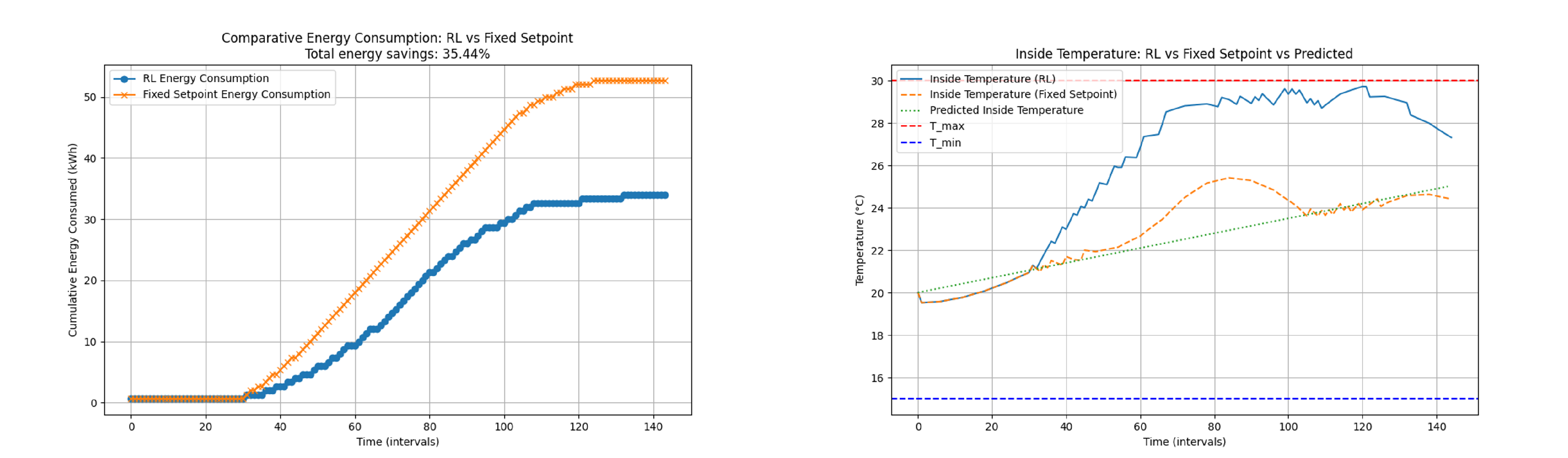

2a) Cooling control analysis using the temperature prediction model (Figure 10 )

The RL agent was trained to control the greenhouse temperature. The performance of the RL-based control was compared to that of a fixed set point control. The total energy consumption and the inside temperature regulation were evaluated.

The actions selected depend on the predicted temperature. In this way, it does not depend on a value set in the set point, but on variable values that depend on the behavior of the greenhouse.

Figure 10 shows the regulation of inside temperature using RL control, fixed setpoint control, and predicted inside temperature. The RL control maintains the temperature closer to the upper limit (Tmax) compared to the fixed set-point control. This dynamic adjustment helps minimize energy consumption while ensuring that the temperature stays within the desired range. The predicted temperature provides a reference for the RL control actions. The cumulative energy consumption graph (the graph on the right) demonstrates that the RL-based control performs better than the fixed set point control, achieving a total energy savings of 35.44%. This significant reduction in energy usage highlights the efficiency of the RL algorithm in optimizing the control strategy. The graph on the right reveals that the RL control effectively maintains the temperature within the desired range (between T_min and T_max). The RL control shows a more dynamic response compared to the fixed set-point control, which can lead to more efficient heating. The predicted temperature aligns well with the actual inside temperature regulated by the RL control, indicating accurate predictions and effective control actions. The RL-based temperature control (in the cooling phase) method shows significant advantages over fixed set point control in terms of energy savings and effective temperature regulation. The ability of RL to adaptively adjust the set point temperature based on predicted inside temperatures leads to optimized energy consumption and better maintenance of the desired temperature range.

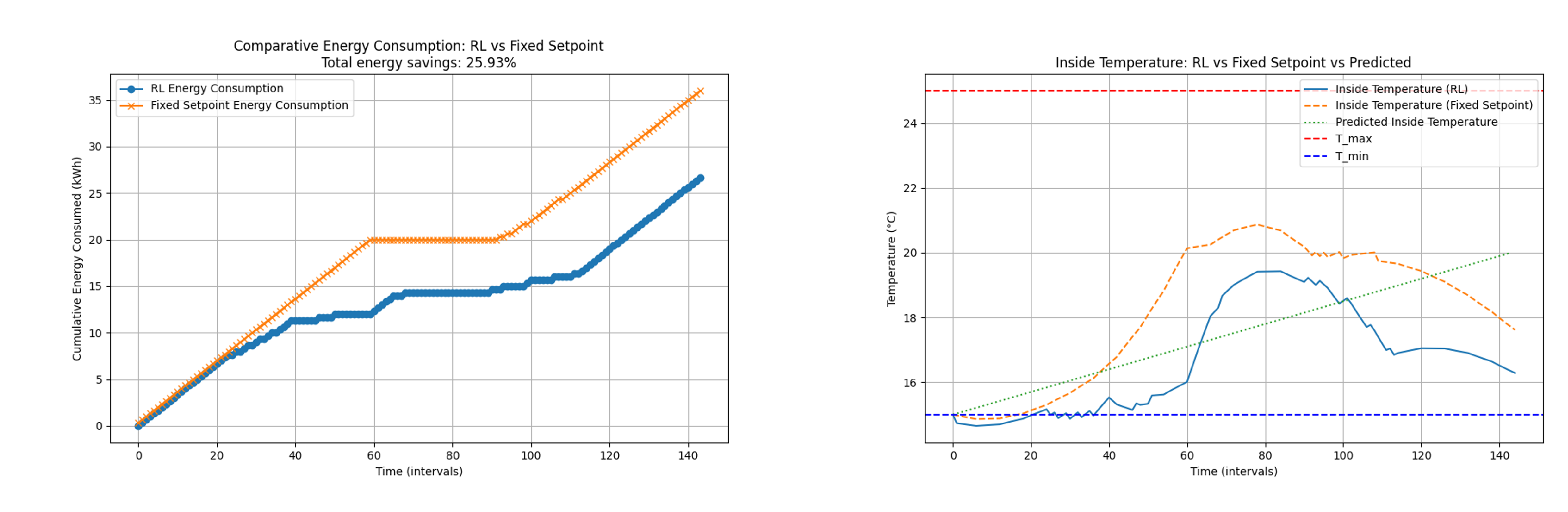

2b) Heating control using the temperature prediction model (Figure 11)

Figure 11 compares the cumulative energy consumption between the RL-based control and the fixed set-point control. The RL control demonstrates significant energy savings, with a total reduction of 25.93% compared to the fixed set-point control. This indicates the effectiveness of the RL algorithm in optimizing energy usage while maintaining the desired temperature.

The second figure in the composite image shows the regulation of the inside temperature using RL control, fixed set-point control, and the predicted inside temperature. The RL control maintains the temperature closer to the upper limit () compared to the fixed setpoint control. This dynamic adjustment helps minimize energy consumption while ensuring that the temperature stays within the desired range. The predicted temperature provides a reference for the RL control actions.

The cumulative energy consumption graph demonstrates that the RL-based control performs better than the fixed set point control, achieving a total energy savings of 25.93%. This significant reduction in energy usage highlights the efficiency of the RL algorithm in optimizing the control strategy.

The inside temperature regulation graph reveals that the RL control effectively maintains the temperature within the desired range (between and ). The RL control shows a more dynamic response compared to the fixed set point control, which can lead to more efficient heating. The predicted temperature aligns well with the actual inside temperature regulated by the RL control, indicating accurate predictions and effective control actions.

The RL-based temperature control method shows significant advantages over the fixed set point control in terms of energy savings and effective temperature regulation. The ability of RL to adaptively adjust the setpoint temperature based on predicted inside temperatures leads to optimized energy consumption and better maintenance of the desired temperature range.

Table 7.

Actions taken by RL algorithm in heating case using temperature (TI) prediction. The control values are limited by and

Table 7.

Actions taken by RL algorithm in heating case using temperature (TI) prediction. The control values are limited by and

| Action 1 |

=

|

| Action 2 |

=

|

| Action 3 |

=

|

| Action 4 |

=

|

| Action 5 |

=

|

This Q-learning algorithm optimizes (in the heating phase) the greenhouse set-point temperature by selecting from five actions to adjust the set-point. The reward function penalizes heating usage, encouraging the algorithm to find a set-point that minimizes energy consumption while maintaining the desired temperature range.

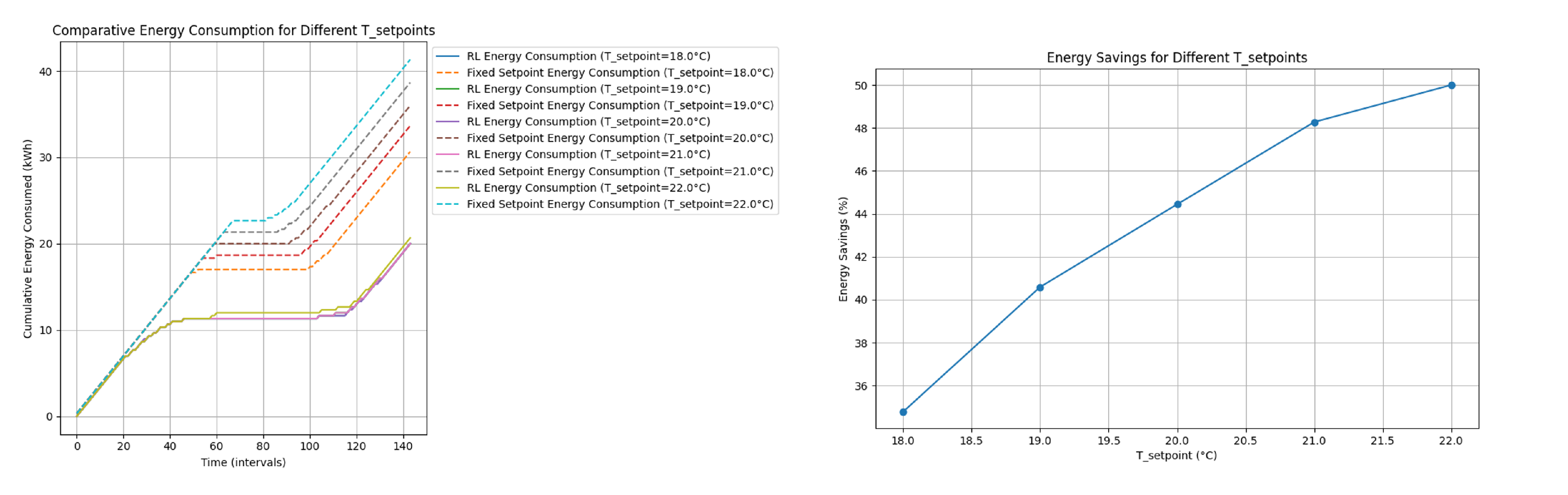

Analysis of Energy Consumption and Savings for different

The graph on the left of

Figure 12 illustrates the cumulative energy consumption over time for different set point temperatures (

). The graph compares the energy consumption between Reinforcement Learning (RL) based control and a fixed set-point control for various setpoints.

Right Graph: Energy Savings for Different

The graph on the right of

Figure 12 shows the percentage of energy savings achieved by using the RL control compared to the fixed set point control in different

values.

Key Observations:

The analysis of the graphs reveals that the RL-based control offers substantial energy savings compared to the fixed set-point control across various set points. The energy savings are more pronounced at higher set points, indicating the effectiveness of RL control in optimizing energy consumption while maintaining desired temperature conditions in the greenhouse. This makes RL control a promising approach for energy-efficient temperature regulation.

9. Conclusion

The integration of Internet of Things (IoT) protocols and Reinforcement Learning (RL) methodologies has been shown to be effective in managing and optimizing greenhouse operations for industrial hemp cultivation. This combination not only enhances operational efficiency, but also maintains selected temperatures and optimizes energy consumption more effectively than classical control methods. By reducing the need for constant human intervention, this technological integration minimizes labor costs and increases scalability for larger agricultural enterprises.

The RL based control system shows significant energy savings while maintaining the desired temperature ranges, outperforming traditional fixed set-point control systems. Specifically, the study shows energy savings of up to 45% during cooling processes and 25.93% during heating processes. In addition, this new control approach simplifies the workload of technicians by eliminating the need for complex analyzes to achieve the same results, allowing them to focus on higher-level oversight and maintenance tasks.

Integration with IoT plays a crucial role in this setup, enabling real-time data acquisition and seamless communication between various greenhouse subsystems. IoT devices collect and transmit environmental data that RL algorithms use to make informed dynamic adjustments to greenhouse conditions. This IoT integration ensures that the RL model can adapt to changing conditions promptly and accurately, thus optimizing resource use and improving overall system responsiveness.

RL algorithms are capable of adaptively adjusting set-point temperatures based on real-time data and predictions, leading to optimized energy consumption and better maintenance of desired environmental conditions. The study validates the practical implementation of RL models in automated greenhouses in the real world, showcasing their ability to scale and adapt to different types of crops and environmental conditions. These conclusions highlight the potential of combining IoT and RL technologies to improve the efficiency, scalability, and sustainability of greenhouse operations, particularly for industrial hemp cultivation.

Author Contributions

Conceptualisation, J.M.C.-Z., F.J.F.-P.; methodology, S.P.-P., J.M.C.-Z., F.J.F.-P., J.A.B.-C and M.P-H; software, S.P.-P., J.A.B-C and M.P.-H; validation, S.P.-P., J.M.C.-Z., F.J.F.-P. and J.A.B-C.; formal analysis, S.P.-P., J.M.C.-Z., F.J.F.-P.,M.P-H and J.A.B-C.; investigation, S.P.-P., J.M.C.-Z. and M.P.-H; resources, J.M.C.-Z. and F.J.F.-P.; data analysis, S.P.-P. and J.M.C.-Z.; writing—original draft preparation, S.P.-P. and M.P.-H; writing—review and editing, J.M.C.-Z., J.F.-P. and J.A.B.-C; visualization, J.M.C.-Z. and F.J.F-P.; supervision, J.M.C.-Z. and F.J.F.-P; project administration, J.M.C.-Z. and S.P.-P; funding acquisition, J.M.C.-Z. and F.J.F.-P. All authors have read and agreed to the published version of the manuscript.

Funding

This study is part of the AGROALNEXT program (AGROALNEXT/2022/048) and was supported by MCIN with funding from the European Union NextGenerationEU (PRTR-C17.I1) and the Generalitat Valenciana, and the Valencian Innovation Agency (AVI) under scientific innovation unit (UCIE Ars Innovatio) of the University of Alicante

Abbreviations

The following abbreviations are used in this manuscript:

| MDPI |

Multidisciplinary Digital Publishing Institute |

| DOAJ |

Directory of open access journals |

| TLA |

Three letter acronym |

| LD |

linear dichroism |

References

- Kiumarsi, B.; Vamvoudakis, K.; Modares, H.; Lewis, F. Optimal and Autonomous Control Using Reinforcement Learning: A Survey. IEEE Transactions on Neural Networks and Learning Systems 2018. [Google Scholar] [CrossRef] [PubMed]

- Perera, A.T.D.; Kamalaruban, P. Applications of reinforcement learning in energy systems. Renewable & Sustainable Energy Reviews 2021. [Google Scholar] [CrossRef]

- Wang, Y.; Velswamy, K.; Huang, B. A Long-Short Term Memory Recurrent Neural Network Based Reinforcement Learning Controller for Office Heating Ventilation and Air Conditioning Systems 2017. 2017. [Google Scholar] [CrossRef]

- Kazmi, H.; Mehmood, F.; Lodeweyckx, S.; Driesen, J. Deep Reinforcement Learning based Optimal Control of Hot Water Systems. Energy 2018. [Google Scholar] [CrossRef]

- Ruelens, F.; Claessens, B.; Quaiyum, S.; Schutter, B.D.; Babuska, R.; Belmans, R. Reinforcement Learning Applied to an Electric Water Heater: From Theory to Practice. IEEE Transactions on Smart Grid 2018. [Google Scholar] [CrossRef]

- Zhang, Z.; Chong, A.; Pan, Y.; Zhang, C.; Lam, K.P. Whole building energy model for HVAC optimal control: A practical framework based on deep reinforcement learning. Energy and Buildings 2019. [Google Scholar] [CrossRef]

- Mason, K.; Grijalva, S. A review of reinforcement learning for autonomous building energy management. Computers & Electrical Engineering 2019. [Google Scholar] [CrossRef]

- Hosseinloo, A.H.; Ryzhov, A.; Bischi, A.; Ouerdane, H.; Turitsyn, K.; Dahleh, M.A. Data-driven control of micro-climate in buildings: An event-triggered reinforcement learning approach. Applied Energy 2020. [Google Scholar] [CrossRef]

- Sun, Q.; Wang, X.; Liu, Z.; Mirsaeidi, S.; He, J.; Pei, W. Multi-agent energy management optimization for integrated energy systems under the energy and carbon co-trading market 2022. 2022. [Google Scholar] [CrossRef]

- al Ani, O.; Das, S. Reinforcement Learning: Theory and Applications in HEMS. Energies 2022. [Google Scholar] [CrossRef]

- Fu, Q.; Han, Z.; Chen, J.; Lu, Y.; Wu, H.; Wang, Y.; Fu, Q.; Han, Z.; Chen, J.; Lu, Y.; Wu, H.; Wang, Y. Applications of reinforcement learning for building energy efficiency control: A review 2022. 2022. [Google Scholar] [CrossRef]

- Mauree, D.; Naboni, E.; Coccolo, S.; Perera, A.T.D.; Nik, V.M.; Scartezzini, J.L. A review of assessment methods for the urban environment and its energy sustainability to guarantee climate adaptation of future cities. Renewable & Sustainable Energy Reviews 2019. [Google Scholar] [CrossRef]

- Kazmi, H.; Suykens, J.A.K.; Balint, A.; Driesen, J. Multi-agent reinforcement learning for modeling and control of thermostatically controlled loads. Applied Energy 2019. [Google Scholar] [CrossRef]

- Chen, L.; Xu, L.; Wei, R.; Chen, L.; Xu, L.; Wei, R. Energy-Saving Control Algorithm of Venlo Greenhouse Skylight and Wet Curtain Fan Based on Reinforcement Learning with Soft Action Mask 2023. 2023. [Google Scholar] [CrossRef]

- Ruelens, F.; Iacovella, S.; Claessens, B.; Belmans, R. Learning Agent for a Heat-Pump Thermostat with a Set-Back Strategy Using Model-Free Reinforcement Learning. Energies 2015. [Google Scholar] [CrossRef]

- Vázquez-Canteli, J.R.; Ulyanin, S.; Kämpf, J.H.; Nagy, Z. Fusing TensorFlow with building energy simulation for intelligent energy management in smart cities. Sustainable Cities and Society 2019. [Google Scholar] [CrossRef]

- Sutton, R.; Barto, A. Reinforcement Learning: An Introduction. IEEE Transactions on Neural Networks 2005. [Google Scholar] [CrossRef]

- Peters, M.; Ketter, W.; Saar-Tsechansky, M.; Collins, J. A reinforcement learning approach to autonomous decision-making in smart electricity markets. Machine Learning 2013. [Google Scholar] [CrossRef]

- Kar, S.; Moura, J.M.F.; Poor, H.V. QD -Learning: A Collaborative Distributed Strategy for Multi-Agent Reinforcement Learning Through Consensus+Innovations. IEEE Transactions on Signal Processing 2013. [Google Scholar] [CrossRef]

- Sierla, S.; Ihasalo, H.; Vyatkin, V. A Review of Reinforcement Learning Applications to Control of Heating, Ventilation and Air Conditioning Systems. Energies 2022. [Google Scholar] [CrossRef]

- Vázquez-Canteli, J.R.; Nagy, Z. Reinforcement learning for demand response: A review of algorithms and modeling techniques. Applied Energy 2019. [Google Scholar] [CrossRef]

- Ferrández-Pastor, F.J.; García-Chamizo, J.M.; Nieto-Hidalgo, M.; Mora-Pascual, J.; Mora-Martínez, J. Developing Ubiquitous Sensor Network Platform Using Internet of Things: Application in Precision Agriculture. Sensors 2016, 16. [Google Scholar] [CrossRef] [PubMed]

|

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content. |

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).