Submitted: 18 July 2024

Posted: 19 July 2024


Abstract

Keywords:

1. Introduction

2. Materials and Methods

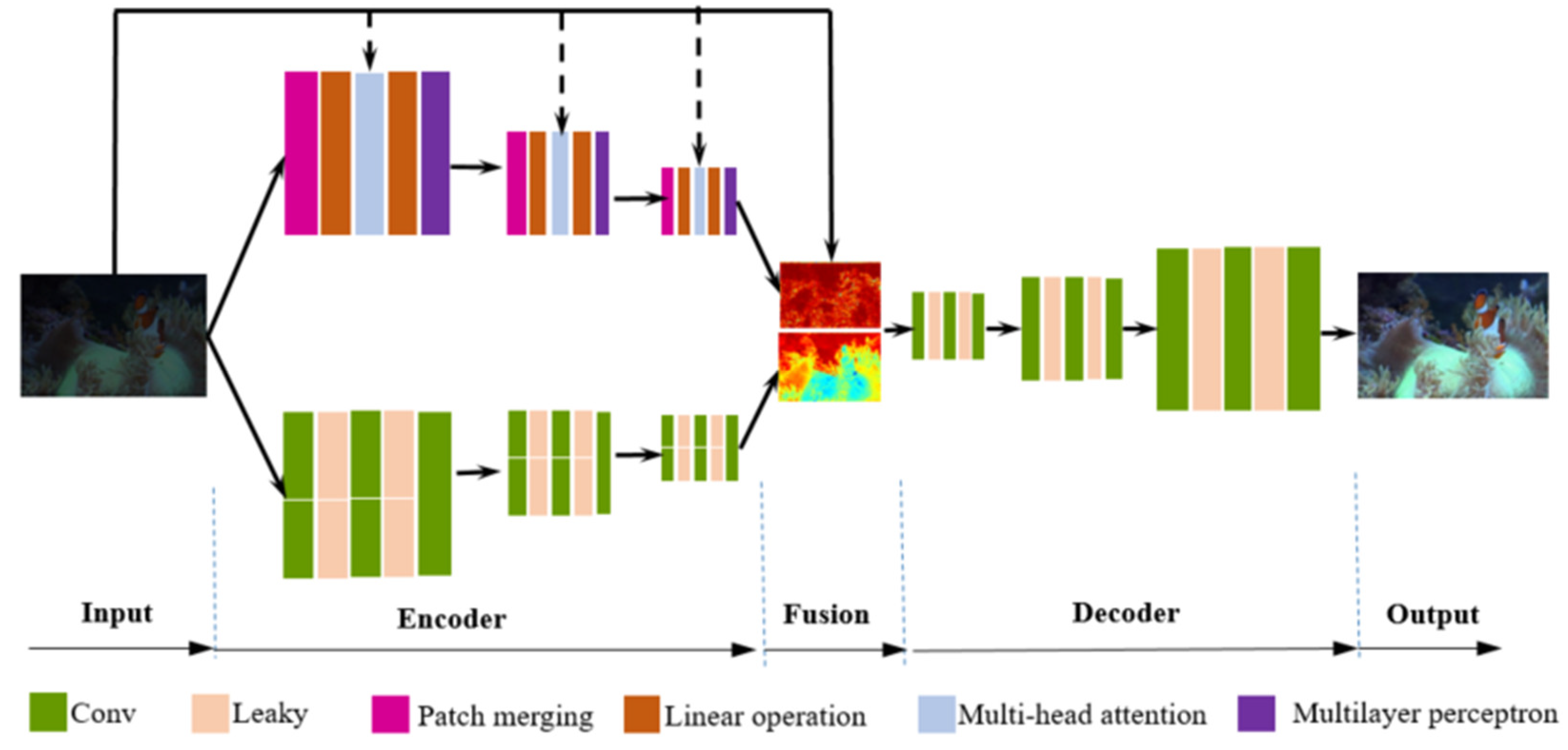

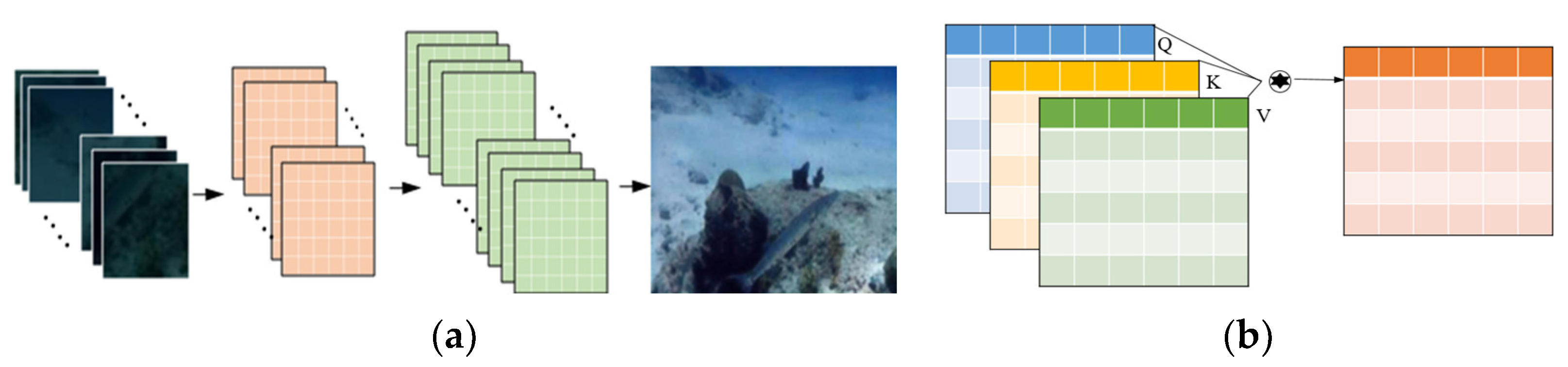

2.1. Two-Branch Feature Extraction Network

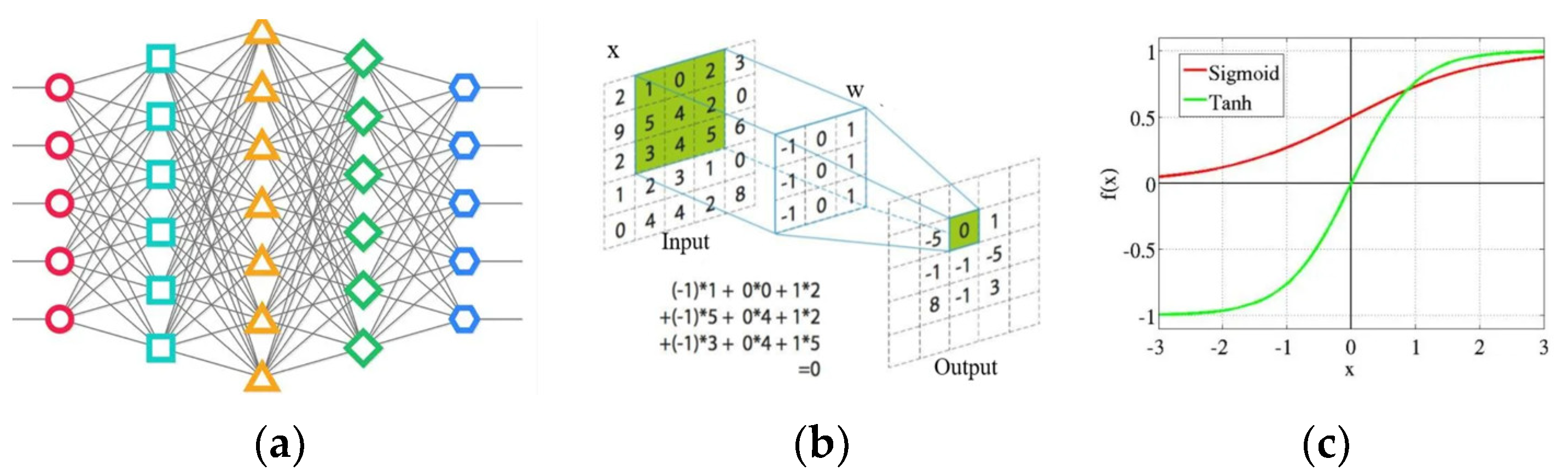

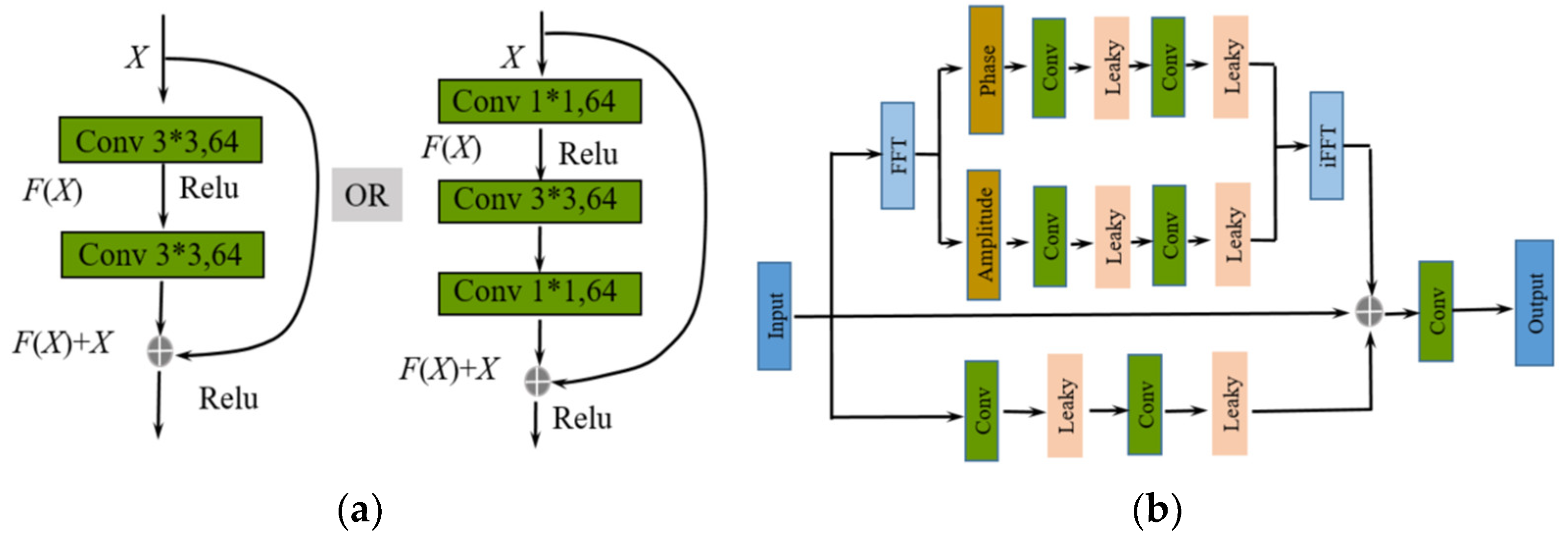

2.2. Implementation of the CNN Branch

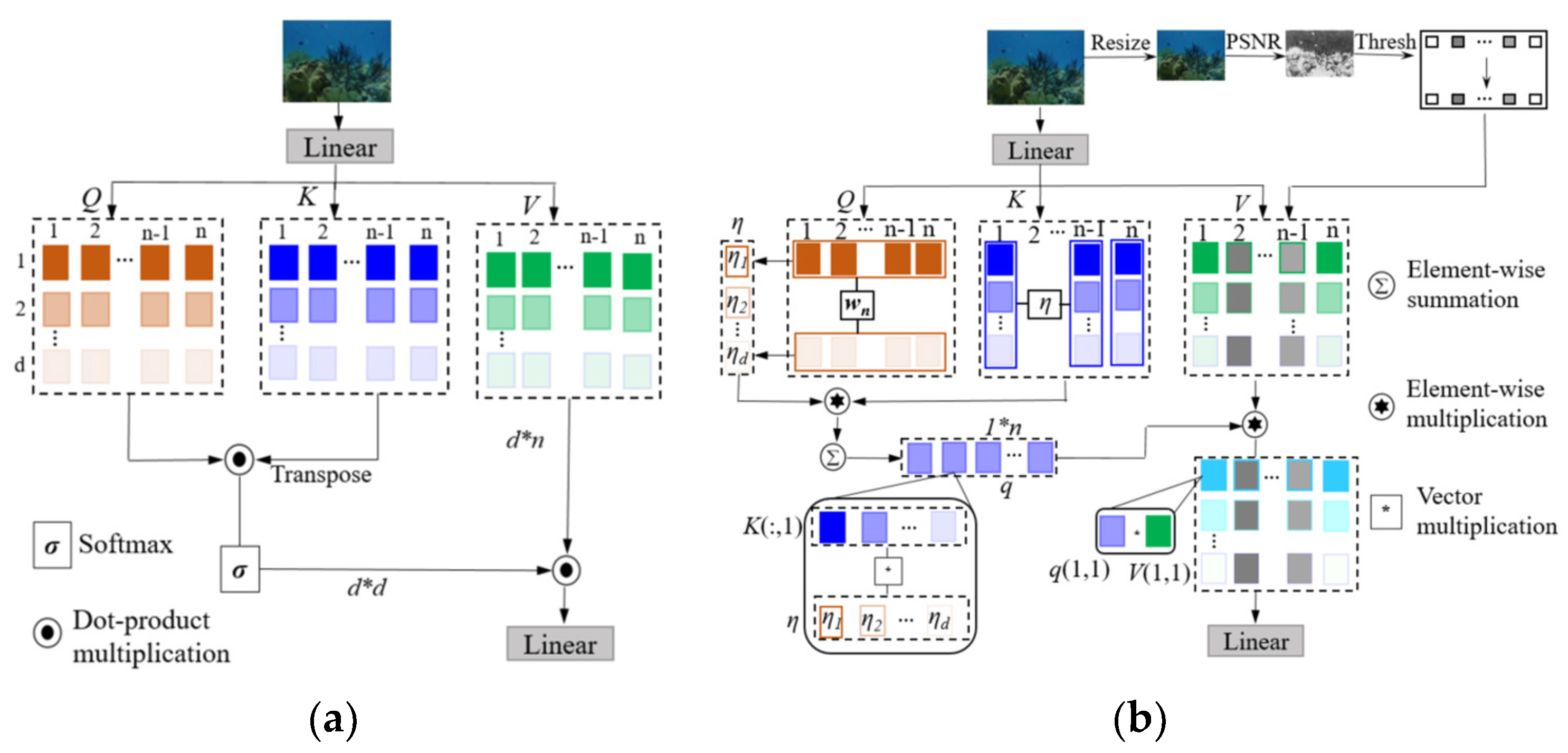

2.3. Implementation of the Transformer Branch

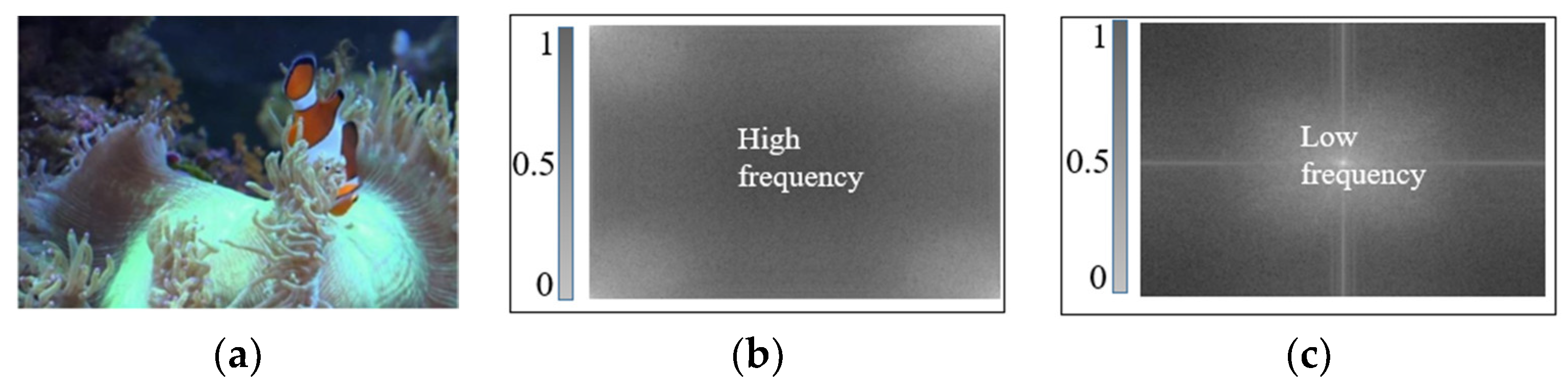

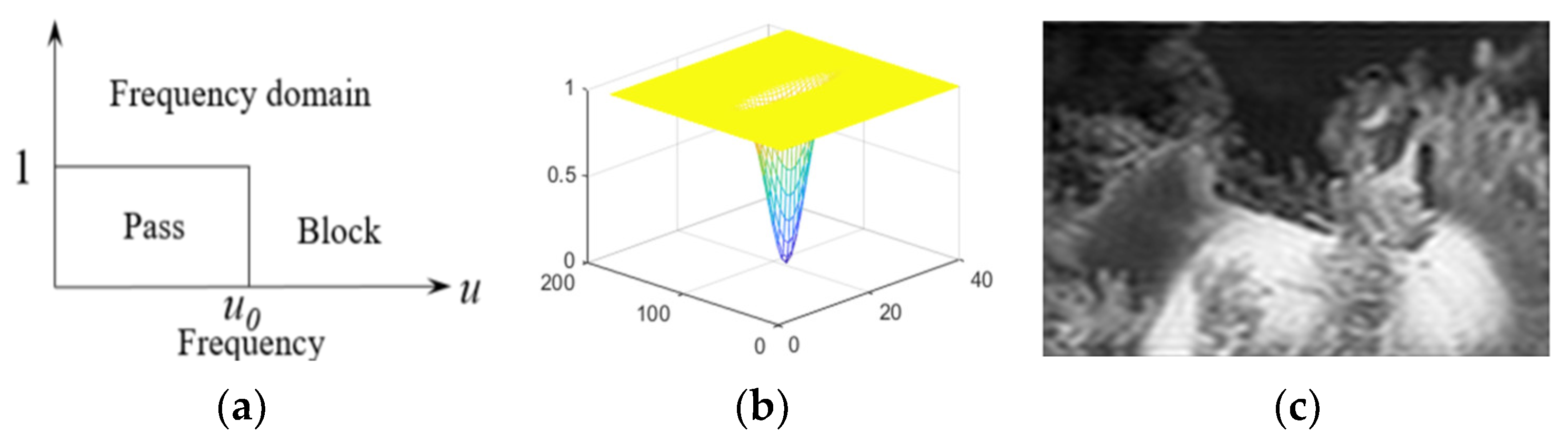

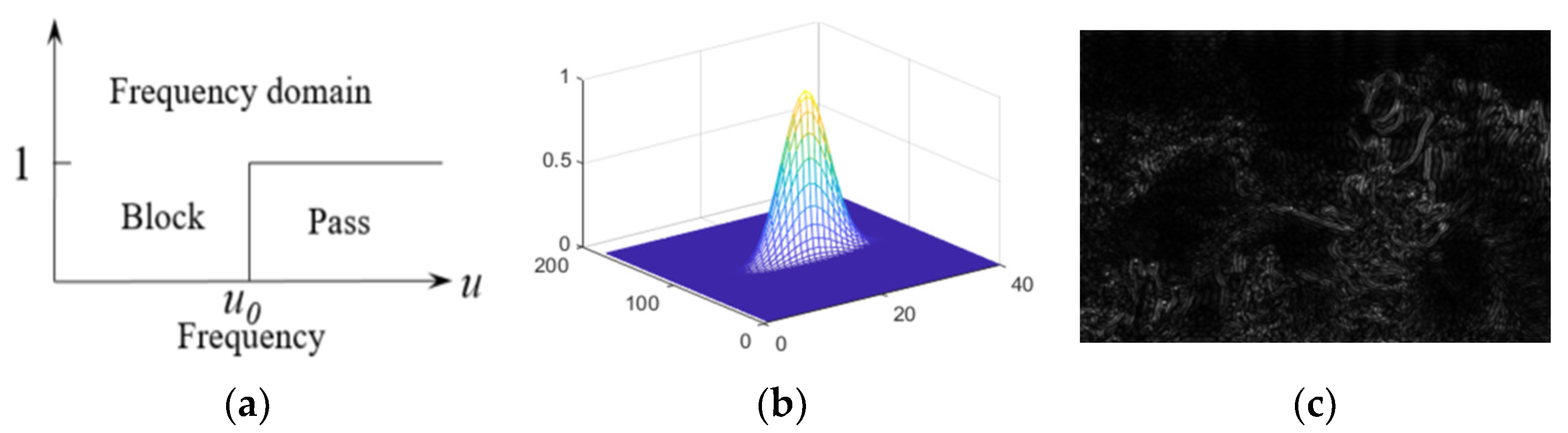

2.4. Fusion Attention Based on High-Pass and Low-Pass Filters
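Section 2.4 names a fusion attention built on high-pass and low-pass filters, and the ablation table contrasts additive fusion with Fourier fusion. The sketch below, under stated assumptions, splits a (C, H, W) feature map into low- and high-frequency bands with an ideal circular mask in the 2-D FFT domain and then fuses two branch outputs either additively or band by band. The cutoff `radius`, the helper names, and the choice of taking low frequencies from the Transformer branch and high frequencies from the CNN branch are all hypothetical; the paper's actual filter design and attention weighting are not reproduced here.

```python
from __future__ import annotations

import numpy as np

AXES = (-2, -1)  # spatial axes (H, W) of a (C, H, W) feature map


def frequency_masks(h: int, w: int, radius: float) -> tuple[np.ndarray, np.ndarray]:
    """Ideal circular low-pass mask for a centred (fftshift-ed) spectrum,
    plus its complement as the high-pass mask. `radius` is in frequency bins."""
    yy, xx = np.meshgrid(np.arange(h) - h // 2, np.arange(w) - w // 2, indexing="ij")
    low = (np.sqrt(yy**2 + xx**2) <= radius).astype(np.float64)
    return low, 1.0 - low


def _inverse(spec: np.ndarray) -> np.ndarray:
    """Undo the centring shift and return the real part of the inverse 2-D FFT."""
    return np.fft.ifft2(np.fft.ifftshift(spec, axes=AXES), axes=AXES).real


def split_bands(x: np.ndarray, radius: float) -> tuple[np.ndarray, np.ndarray]:
    """Split a (C, H, W) feature map into low- and high-frequency parts.
    The two masks sum to one, so low + high reconstructs x."""
    _, h, w = x.shape
    low_mask, high_mask = frequency_masks(h, w, radius)
    spec = np.fft.fftshift(np.fft.fft2(x, axes=AXES), axes=AXES)
    return _inverse(spec * low_mask), _inverse(spec * high_mask)


def additive_fusion(cnn_feat: np.ndarray, trans_feat: np.ndarray) -> np.ndarray:
    """Baseline: element-wise sum of the two branch outputs."""
    return cnn_feat + trans_feat


def fourier_fusion(cnn_feat: np.ndarray, trans_feat: np.ndarray, radius: float = 4.0) -> np.ndarray:
    """Hypothetical band-wise fusion: global low frequencies from the Transformer
    branch, local high frequencies (edges, texture) from the CNN branch."""
    trans_low, _ = split_bands(trans_feat, radius)
    _, cnn_high = split_bands(cnn_feat, radius)
    return trans_low + cnn_high
```

Both fusion functions return a map with the same (C, H, W) shape as their inputs. An ideal hard mask is the simplest choice but introduces ringing near edges; a Gaussian mask is a common smoother alternative.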

3. Experimental Validation

3.1. Dataset and Experimental Design

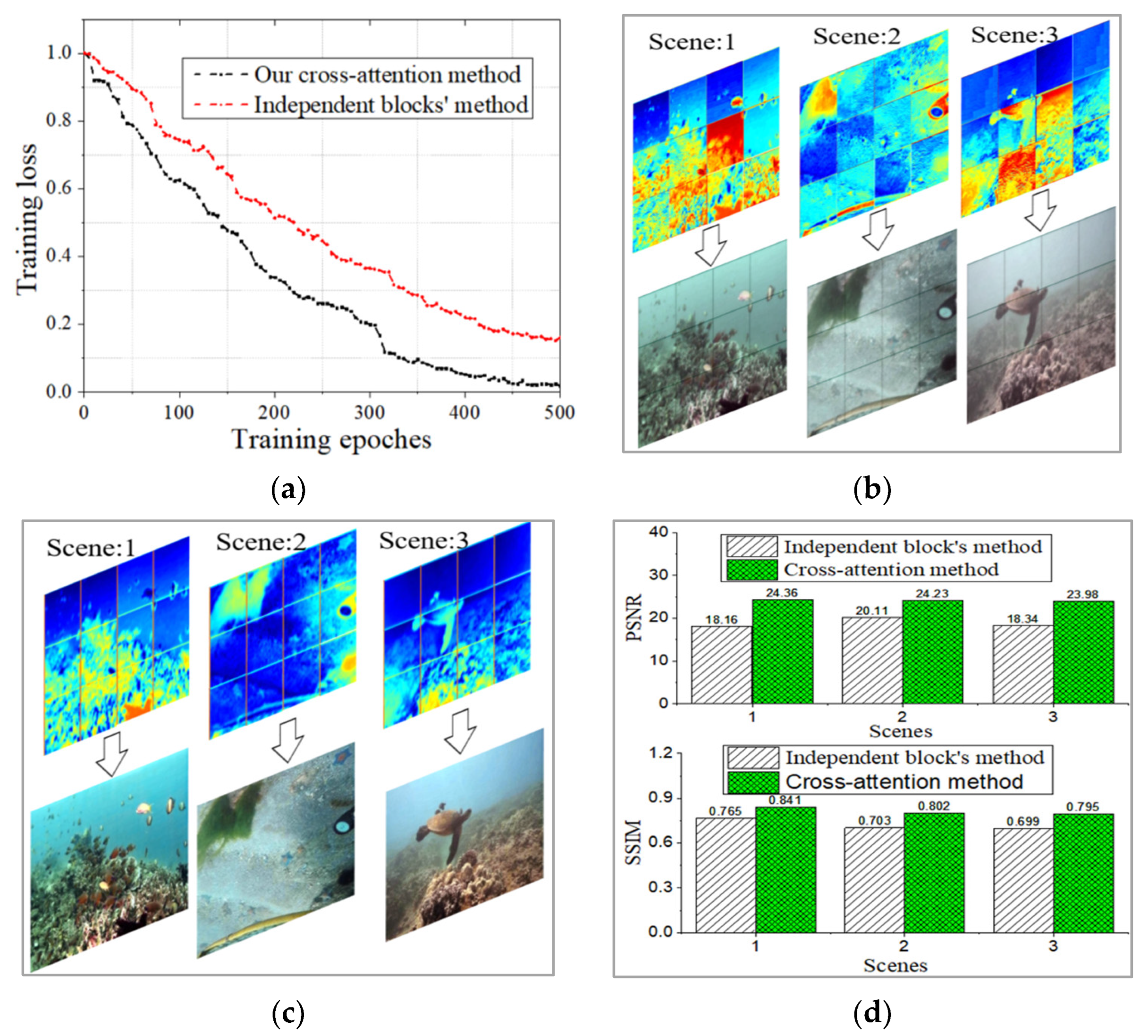

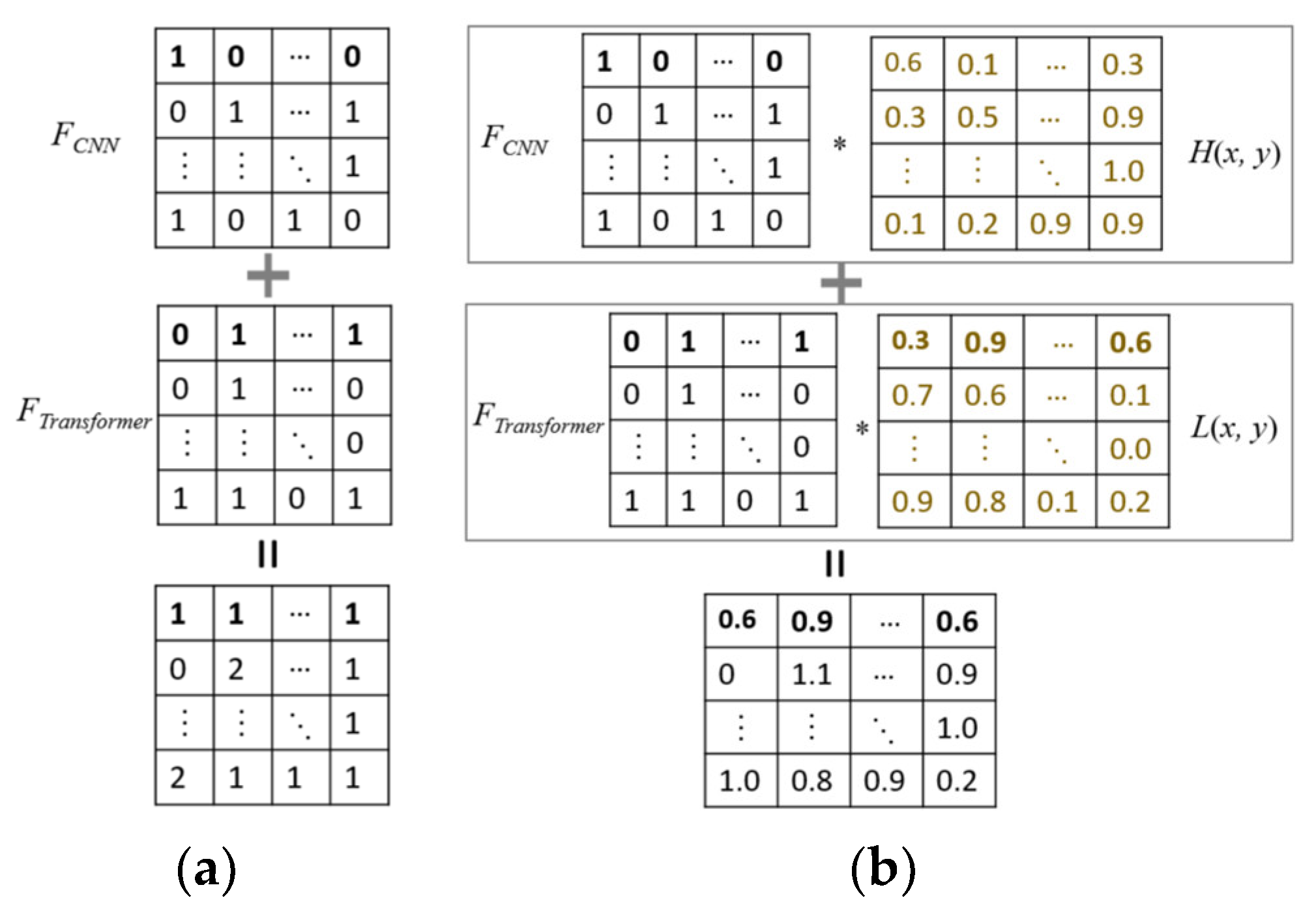

3.2. Ablation Study

3.3. Feature Visualization Process

3.4. Comparison with Current Methods

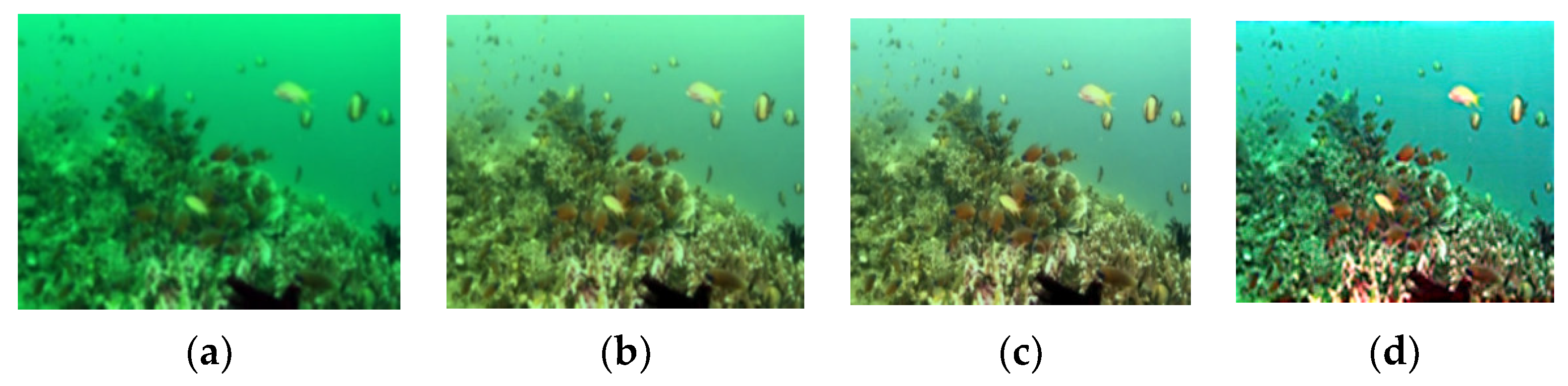

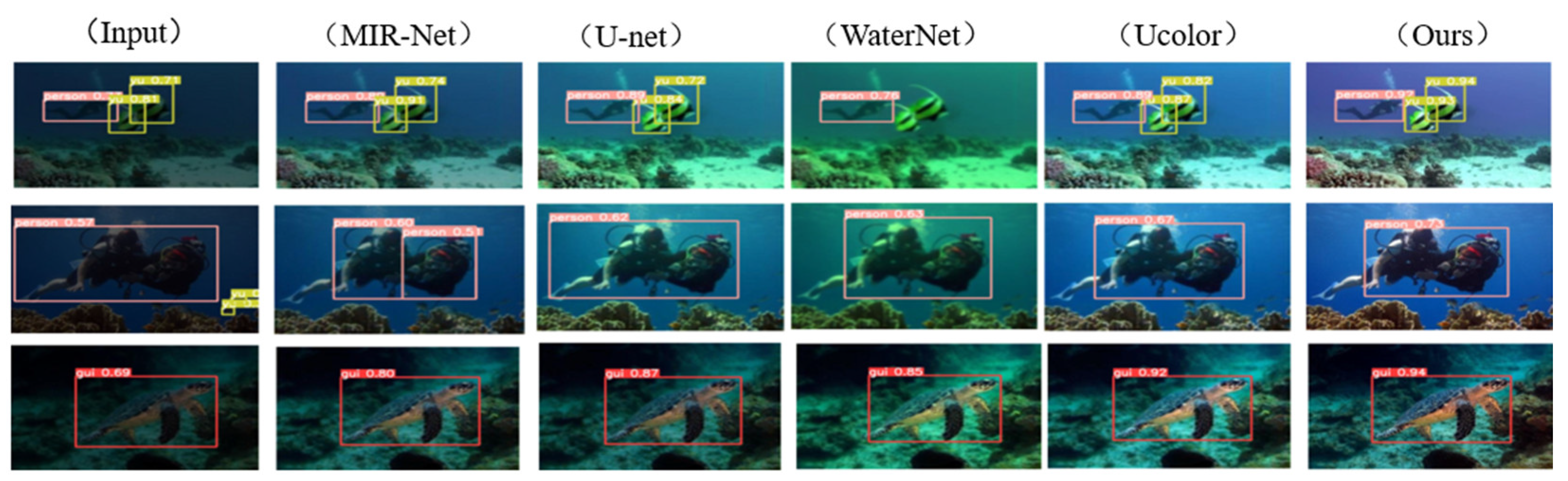

3.4.1. Qualitative Analysis

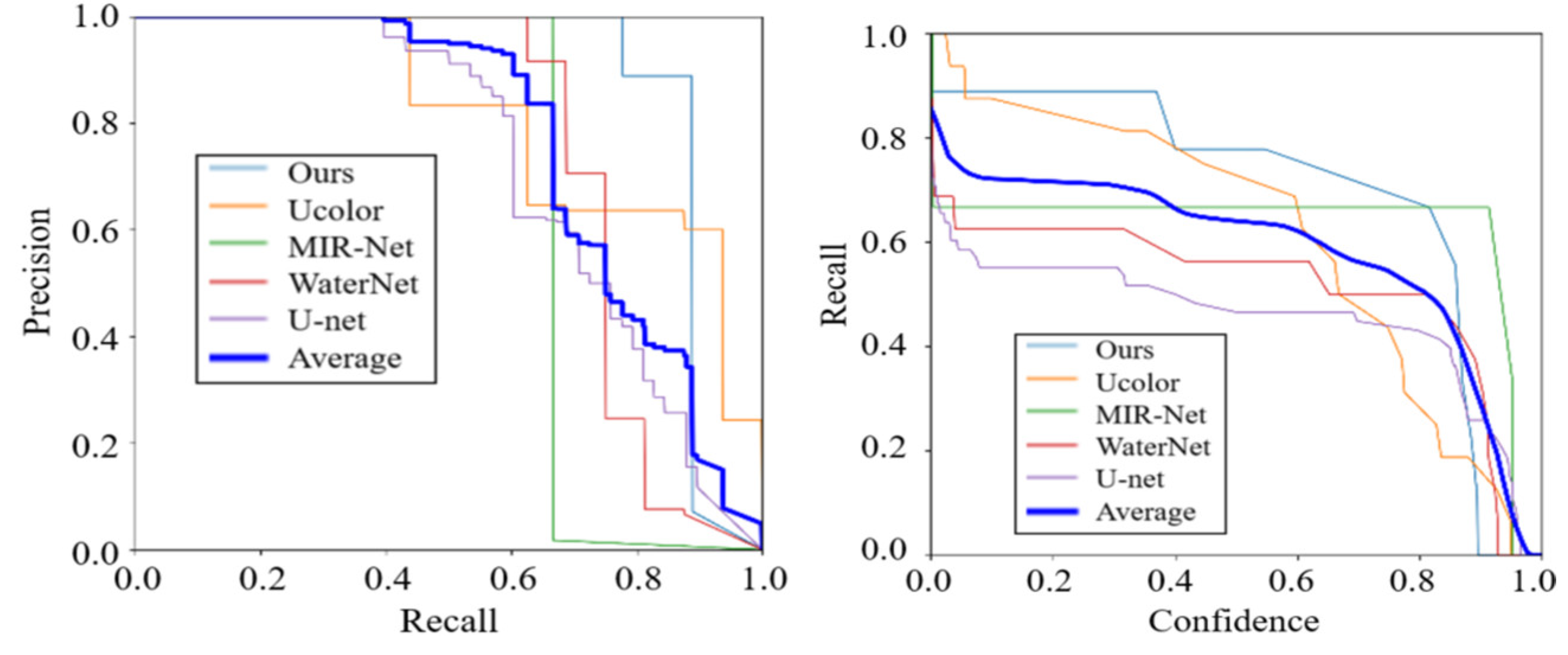

3.4.2. Quantitative Analysis

3.5. Comparison on Detection Tasks

4. Conclusion

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Zhang, W.; Liu, W.; Li, L. Underwater Single-Image Restoration with Transmission Estimation Using Color Constancy. Journal of Marine Science and Engineering. 2022, 10(3): 430-445.

- Chiang, J.; Chen, Y. Underwater Image Enhancement by Wavelength Compensation and Dehazing. IEEE Transactions on Image Processing. 2012, 21(4): 1756-1769.

- Yang, H.; Tian, F.; Qi, Q.; Wu, Q.M.J.; Li, K. Underwater image enhancement with latent consistency learning-based color transfer. IET Image Processing. 2022, 16(6): 1594-1512.

- Ding, C.; Dong, L.; Xu, W. Review of histogram equalization technique for image enhancement. Computer Engineering and Applications. 2017, 53(23): 12-17.

- Zhou, J.; Wei, X.; Shi, J.; Chu, W.; Zhang, W. Underwater image enhancement method with light scattering characteristics. Computers and Electrical Engineering. 2022, 100(1): 898-915.

- Peng, Y.; Zhao, X.; Cosman, P. Single underwater image enhancement using depth estimation based on blurriness. IEEE International Conference on Image Processing (ICIP). 2015, 4952-4956.

- Song, W.; Wang, Y.; Huang, D. A rapid scene depth estimation model based on underwater light attenuation prior for underwater image restoration. Proceedings of 2018 Advances in Multimedia Information Processing. 2018, 678-688.

- Cheng, C.; Zhang, H.; Li, G. Overview of Underwater Image Enhancement and Restoration Methods. International Conference on CYBER Technology in Automation, Control, and Intelligent Systems (CYBER). 2022, 520-525.

- Drews, P.; Nascimento, E.; Campos, M. Underwater depth estimation and image restoration based on single images. IEEE Computer Graphics and Applications. 2016, 36(2): 24-35.

- Li, J.; Hou, G.; Wang, G. Underwater image restoration using oblique gradient operator and light attenuation prior. Multimedia Tools and Applications. 2023, 82: 6625-6645.

- Ma, Z.; Oh, C. A wavelet-based dual-stream network for underwater image enhancement. IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). 2022, 2769-2773.

- Zhang, Y.; Jiang, Q.; Liu, P.; Gao, S.; Pan, X.; Zhang, C. Underwater Image Enhancement Using Deep Transfer Learning Based on a Color Restoration Model. IEEE Journal of Oceanic Engineering. 2023, 48(2): 489-514.

- Wang, K.; Hu, Y.; Chen, J.; Wu, X.; Zhao, X.; Li, Y. Underwater Image Restoration Based on a Parallel Convolutional Neural Network. Remote Sensing. 2019, 11: 1591-1612.

- Ueki, Y.; Ikehara, M. Underwater Image Enhancement with Multi-Scale Residual Attention Network. International Conference on Visual Communications and Image Processing (VCIP), Munich, Germany. 2021, 1-5.

- Xing, Z.; Cai, M.; Li, J. Improved Shallow-UWnet for Underwater Image Enhancement. International Conference on Unmanned Systems (ICUS). 2022, 1191-1196.

- Li, C.; Anwar, S.; Porikli, F. Underwater scene prior inspired deep underwater image and video enhancement. Pattern Recognition. 2020, 98-110.

- Ueki, Y.; Ikehara, M. Underwater Image Enhancement with Multi-Scale Residual Attention Network. International Conference on Visual Communications and Image Processing (VCIP), Munich, Germany. 2021, 1-5.

- Wang, H.; Yang, M.; Yin, G.; Dong, J. Self-Adversarial Generative Adversarial Network for Underwater Image Enhancement. IEEE Journal of Oceanic Engineering. 2024, 49(1): 237-248.

- Wang, Y.; J, M.; Chen, J.; Wu, J. A Novel Generative Adversarial Network for Underwater Image Enhancement. International Conference on Intelligent Autonomous Systems (ICoIAS), Dalian, China. 2022, 84-89.

- Fabbri, C.; Islam, M.J.; Sattar, J. Enhancing underwater imagery using generative adversarial networks. IEEE International Conference on Robotics and Automation (ICRA), Brisbane, Australia. 2018, 7159-7165.

- Balakrishnan, G.; Zhao, A.; Dalca, A.V.; Durand, F.; Guttag, J. Synthesizing images of humans in unseen poses. IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Salt Lake City, UT, USA. 2018, 8340-8348.

- Hu, X.; Naiel, M.A.; Wong, A.; Lamm, M.; Fieguth, P. RUNet: A robust UNet architecture for image super-resolution. IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, CA, USA. 2019, 505-507.

- Wu, J.; Liu, X.; Lu, Q. FW-GAN: Underwater image enhancement using generative adversarial network with multi-scale fusion. Signal Processing: Image Communication. 2022, 109: 1-12.

- Terayama, K.; Shin, K. Integration of sonar and optical camera images using deep neural network for fish monitoring. Aquacultural Engineering. 2019, 86: 1-7.

- Zhang, T.; Johnson-Roberson, M. Beyond NeRF Underwater: Learning Neural Reflectance Fields for True Color Correction of Marine Imagery. IEEE Robotics and Automation Letters. 2023, 8(2): 6467-6474.

- Liu, Z.; Lin, Y.; Cao, Y. Swin Transformer: Hierarchical vision transformer using shifted windows. IEEE/CVF International Conference on Computer Vision (ICCV), Piscataway. 2021, 9992-10002.

- Kovács, L.; Csépányi-Fürjes, L.; Tewabe, W. Transformer Models in Natural Language Processing. International Conference Interdisciplinarity in Engineering. 2023, Lecture Notes in Networks and Systems, vol. 929-945.

- Liu, C.; Wang, G.; Zhang, C.; Patimisco, P.; Cui, R.; Feng, C. End-to-end methane gas detection algorithm based on transformer and multi-layer perceptron. Optics Express. 2024, 32(1): 987-1002.

- Zamir, S.; Arora, A.; Khan, S. Restormer: Efficient transformer for high-resolution image restoration. IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Piscataway: IEEE Press. 2022, 5718-5729.

- Song, Y.; He, Z.; Qian, H.; Du, X. Vision Transformers for Single Image Dehazing. IEEE Transactions on Image Processing. 2023, 32: 1927-1941.

- Berman, D.; Levy, D.; Avidan, S.; Treibitz, T. Underwater single image color restoration using haze-lines and a new quantitative dataset. IEEE Transactions on Pattern Analysis and Machine Intelligence. 2021, 43(8): 2822-2837.

- Aggarwal, C.C. Neural Networks and Deep Learning. Springer. 2018.

- Lu, Z.; Jiang, X.; Kot, A. Deep Coupled ResNet for Low-Resolution Face Recognition. IEEE Signal Processing Letters. 2018, 25(4): 526-530.

- Huang, J.; Liu, Y.; Zhao, F.; Yan, K. Deep Fourier-Based Exposure Correction Network with Spatial-Frequency Interaction. European Conference on Computer Vision (ECCV), Springer. 2022, 163-180.

- Zhou, J.; Ni, J.; Rao, Y. Block-Based Convolutional Neural Network for Image Forgery Detection. Lecture Notes in Computer Science. 2017, vol. 10431.

- Zou, B.J.; Guo, Y.D.; He, Q.; et al. 3D Filtering by Block Matching and Convolutional Neural Network for Image Denoising. Journal of Computer Science and Technology. 2018, 33: 838-848.

- Abbas Shahri, A.; Maghsoudi Moud, F. Landslide susceptibility mapping using hybridized block modular intelligence model. Bulletin of Engineering Geology and the Environment. 2021, 80: 267-284.

- Liu, Q.; Su, Y.; Xu, P. Implementation of Artificial Intelligence Anime Stylization System Based on PyTorch. Annual International Conference on Network and Information Systems for Computers (ICNISC). 2023, 84-87.

- Peng, L.; Zhu, C.; Bian, L. U-Shape Transformer for Underwater Image Enhancement. IEEE Transactions on Image Processing. 2023, 32: 3066-3079.

- Li, C.; et al. An Underwater Image Enhancement Benchmark Dataset and Beyond. IEEE Transactions on Image Processing. 2020, 29: 4376-4389.

- Basha, C.; Pravallika, B.; Shankar, E. An Efficient Face Mask Detector with PyTorch and Deep Learning. EAI Endorsed Transactions on Pervasive Health and Technology. 2021, 7(25): 167843.

- Li, W.; Li, S.; Liu, R. Channel Shuffle Reconstruction Network for Image Compressive Sensing. IEEE International Conference on Image Processing (ICIP), Abu Dhabi, United Arab Emirates. 2020, 2880-2884.

- Zhang, Y; Liu Y, Li. Salt and pepper noise removal in surveillance video based on low-rank matrix recovery. Computational Visual Media. 2015, 1(1): 59-68.

- Yao, J.; Liu, G. Improved SSIM image quality assessment of contrast distortion based on the contrast sensitivity characteristics of human visual system. IET Image Processing. 2018, 12(6): 872-879.

- Liu, R.; Jiang, Z.; Yang, S.; Fan, X. Twin Adversarial Contrastive Learning for Underwater Image Enhancement and Beyond. IEEE Transactions on Image Processing. 2022, 31: 4922-4936.

- Zamir, S.W.; Arora, A.; Khan, S.; Hayat, M. Learning enriched features for real image restoration and enhancement. IEEE Transactions on Pattern Analysis and Machine Intelligence. 2020, 45(2): 1934-1948.

- Ronneberger, O.; Fischer, P.; Brox, T. U-net: Convolutional network for biomedical image segmentation. Medical Image Computing and Computer-Assisted Intervention (MICCAI). 2015, 234-241.

- Tan, L.; Huang, T.; Wu, L. Comparison of RetinaNet, SSD, and YOLO v3 for real-time pill identification. BMC Medical Informatics and Decision Making. 2021, 324-337.

- Liu, X.; Chen, G.; Sun, X.; Knoll, A. Ground Moving Vehicle Detection and Movement Tracking Based On the Neuromorphic Vision Sensor. IEEE Internet of Things Journal. 2020, 7(9): 9026-9039.

- Liu, X.Y.; Yang, Z.; Hou, J.; Huang, W. Dynamic Scene’s Laser Localization by NeuroIV-based Moving Objects Detection and LIDAR Points Evaluation. IEEE Transactions on Geoscience and Remote Sensing. 2022.

| Parameters | Computation load | Computation load summation |
|---|---|---|
| d*n | 3d*n | |
| q | d*n | |
| d*n | | |

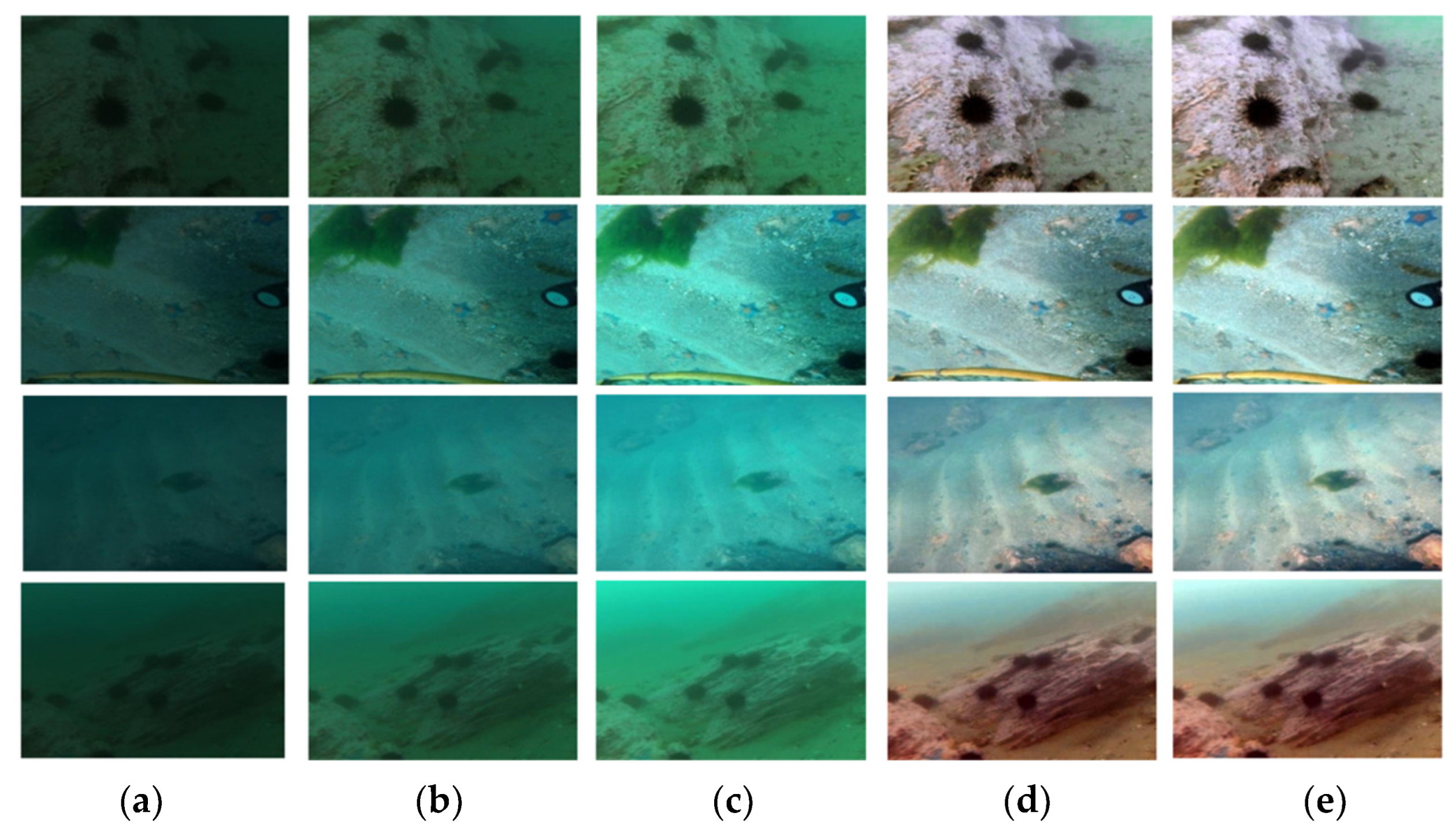

| CNN | Fourier | Transformer | SNR attention | Additive fusion | Fourier fusion | LSUI PSNR (dB) | LSUI SSIM | UIEB PSNR (dB) | UIEB SSIM |
|---|---|---|---|---|---|---|---|---|---|

| ✓ | 15.22 | 0.47 | 13.03 | 0.42 | |||||

| ✓ | ✓ | 18.82 | 0.64 | 16.77 | 0.60 | ||||

| ✓ | ✓ | ✓ | ✓ | 24.83 | 0.79 | 21.70 | 0.70 | ||

| ✓ | ✓ | ✓ | ✓ | ✓ | 24.42 | 0.75 | 21.56 | 0.69 | |

| ✓ | ✓ | ✓ | ✓ | ✓ | 26.53 | 0.83 | 23.85 | 0.78 | |
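The tables report PSNR and SSIM. PSNR between a ground-truth image and an enhanced image is 10·log10(MAX² / MSE), in dB, so higher is better. A minimal NumPy sketch follows, assuming 8-bit images (MAX = 255); the data range, color space, and any resizing used in the paper's evaluation are not stated here, and the function name `psnr` is illustrative. scikit-image also ships `skimage.metrics.peak_signal_noise_ratio` and `skimage.metrics.structural_similarity` for these two metrics.

```python
import numpy as np


def psnr(reference: np.ndarray, restored: np.ndarray, max_value: float = 255.0) -> float:
    """Peak signal-to-noise ratio in dB: 10 * log10(MAX^2 / MSE).
    Assumes both images share the same shape and value range [0, max_value]."""
    # Cast before subtracting so uint8 inputs cannot wrap around.
    ref = reference.astype(np.float64)
    out = restored.astype(np.float64)
    mse = np.mean((ref - out) ** 2)
    if mse == 0:
        return float("inf")  # identical images
    return float(10.0 * np.log10(max_value**2 / mse))
```

For example, two 8-bit images that differ by exactly 1 at every pixel have MSE = 1 and a PSNR of about 48.13 dB.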

| Backbone | Attention | Latency (ms) |
|---|---|---|
| Transformer | Dot-product Transformer | 2.5 |
| Transformer | Element-wise Transformer | 2.1 |
| Transformer + CNN | Dot-product Transformer | 3.0 |
| Transformer + CNN | Element-wise Transformer | 2.6 |
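The latency table compares dot-product and element-wise attention, but this section does not define the element-wise variant. The sketch below contrasts standard scaled dot-product attention with AFT-simple from the Attention Free Transformer (Zhai et al., 2021), swapped in here purely as an assumed stand-in for an element-wise attention; it is not the authors' module, and all function names are hypothetical. What it illustrates is structural: for n tokens of width d, the dot-product core builds an n×n score matrix, while the element-wise core only touches n×d arrays, which is in line with the lower latencies listed for the element-wise rows.

```python
from __future__ import annotations

import numpy as np


def softmax(x: np.ndarray, axis: int) -> np.ndarray:
    """Numerically stable softmax along `axis`."""
    z = x - x.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)


def dot_product_attention(q: np.ndarray, k: np.ndarray, v: np.ndarray) -> np.ndarray:
    """Scaled dot-product attention for q, k, v of shape (n, d).
    Materialises an (n, n) score matrix."""
    scores = q @ k.T / np.sqrt(q.shape[-1])  # (n, n)
    return softmax(scores, axis=-1) @ v       # (n, d)


def elementwise_attention(q: np.ndarray, k: np.ndarray, v: np.ndarray) -> np.ndarray:
    """AFT-simple, used as an assumed stand-in for element-wise attention:
    sigmoid(q) gates a per-channel, softmax-weighted sum of v over the tokens.
    Only (n, d) arrays are formed."""
    weights = softmax(k, axis=0)              # (n, d): softmax over tokens, per channel
    context = np.sum(weights * v, axis=0)     # (d,): one global context vector
    gate = 1.0 / (1.0 + np.exp(-q))           # (n, d): sigmoid gate
    return gate * context                     # broadcast to (n, d)


def core_multiply_counts(n: int, d: int) -> dict[str, int]:
    """Multiplications in the two attention cores, ignoring projections,
    softmax, exponentials and the 1/sqrt(d) scaling."""
    return {
        "dot_product": 2 * n * n * d,  # q @ k.T and probs @ v
        "elementwise": 2 * n * d,      # weights * v and gate * context
    }
```

For n = 1024 tokens of width 64, the dot-product core needs about 134 million multiplications against about 131 thousand for the element-wise core. Measured latency differences, such as those in the table, are typically far smaller than this ratio, because projections, convolutions, and memory traffic also contribute.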

| Methods | LSUI PSNR (dB) | LSUI SSIM | UIEB PSNR (dB) | UIEB SSIM |
|---|---|---|---|---|
| CNN [16] | 15.28 | 0.50 | 13.68 | 0.48 |
| MIR-Net [40] | 18.80 | 0.66 | 16.78 | 0.63 |
| U-net [41] | 19.45 | 0.78 | 17.46 | 0.76 |
| WaterNet [43] | 19.62 | 0.80 | 19.27 | 0.83 |
| Ucolor [44] | 21.62 | 0.84 | 20.67 | 0.81 |
| Transformer [26] | 22.83 | 0.79 | 21.70 | 0.70 |
| Ours | 24.49 | 0.85 | 22.79 | 0.81 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).