Submitted:

16 July 2024

Posted:

17 July 2024

Abstract

Keywords:

1. Introduction

2. Related Work

3. Methodologies

3.1. Notations

3.2. Data Representation and Embedding

- Embedding column names and column values: First, we embed the column names and values of the table. The column name embedding gives the model the semantics of each field, while the column value embedding maps the specific value of each field to a vector in a high-dimensional space. These embeddings can be produced with a pre-trained embedding model such as Word2Vec or GloVe, or by training a small embedding network specifically for this task.

- Serialization: Each row of data is serialized by concatenating its column name embeddings with the corresponding column value embeddings. Specifically, the serialization of each data point is given by Equation 1.

- Vector sequence processing: The resulting serialized vectors can then be fed directly into the large language model. Since these models are often designed to work with sequential data, they can naturally process this form of structured data.
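The three steps above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: `embed_token` is a hypothetical stand-in that derives a deterministic pseudo-random vector from a hash of the string, where a real pipeline would look up a pre-trained Word2Vec or GloVe vector or use a learned embedding network. The dimension `EMBED_DIM`, the helper `serialize_row`, and the example row are likewise assumptions made for illustration.

```python
import hashlib

import numpy as np

EMBED_DIM = 8  # assumed embedding size, chosen only for illustration


def embed_token(text: str, dim: int = EMBED_DIM) -> np.ndarray:
    """Hypothetical stand-in for a pre-trained embedding lookup.

    Maps a string to a deterministic pseudo-random vector seeded by its hash.
    """
    digest = hashlib.sha256(text.encode("utf-8")).digest()
    seed = int.from_bytes(digest[:8], "little")
    rng = np.random.default_rng(seed)
    return rng.standard_normal(dim)


def serialize_row(row: dict) -> np.ndarray:
    """Concatenate [name embedding ; value embedding] for each column.

    Returns an array of shape (num_columns, 2 * EMBED_DIM): one vector per
    column, forming the sequence that would be passed to the language model.
    """
    vectors = [
        np.concatenate([embed_token(name), embed_token(str(value))])
        for name, value in row.items()
    ]
    return np.stack(vectors)


row = {"age": 42, "city": "Basel", "income": 58000}  # made-up example row
sequence = serialize_row(row)
print(sequence.shape)  # (3, 16)
```

Each column contributes one vector of length `2 * EMBED_DIM`, so a row with three columns becomes a sequence of three vectors. Keeping the name and value halves side by side lets a downstream model tell which field a value belongs to.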

3.3. Self-Attention Mechanism Based on Transformer
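The notation table of this paper lists query, key, and value matrices, the dimension of the key vector, and a softmax function, which matches the standard scaled dot-product attention of the Transformer. The sketch below illustrates that standard formulation only; it is an assumption that the paper uses it unchanged. The projection matrices `W_q`, `W_k`, `W_v`, the function names, and the input shapes are hypothetical and chosen for illustration.

```python
import numpy as np


def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    """Numerically stable softmax along the given axis."""
    shifted = x - x.max(axis=axis, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=axis, keepdims=True)


def self_attention(X, W_q, W_k, W_v):
    """Single-head scaled dot-product self-attention.

    X has shape (sequence_length, model_dim). Returns the attended
    output and the attention weights.
    """
    Q = X @ W_q  # query matrix
    K = X @ W_k  # key matrix
    V = X @ W_v  # value matrix
    d_k = K.shape[-1]  # dimension of the key vectors
    scores = Q @ K.T / np.sqrt(d_k)  # similarity of each query to each key
    weights = softmax(scores, axis=-1)  # each row sums to 1
    return weights @ V, weights


rng = np.random.default_rng(0)
X = rng.standard_normal((3, 16))  # e.g. three serialized column vectors
W_q, W_k, W_v = (rng.standard_normal((16, 4)) for _ in range(3))
output, weights = self_attention(X, W_q, W_k, W_v)
print(output.shape, weights.shape)  # (3, 4) (3, 3)
```

Dividing the scores by the square root of the key dimension keeps the softmax inputs in a moderate range as the key dimension grows.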

4. Experiments

4.1. Experimental Setups

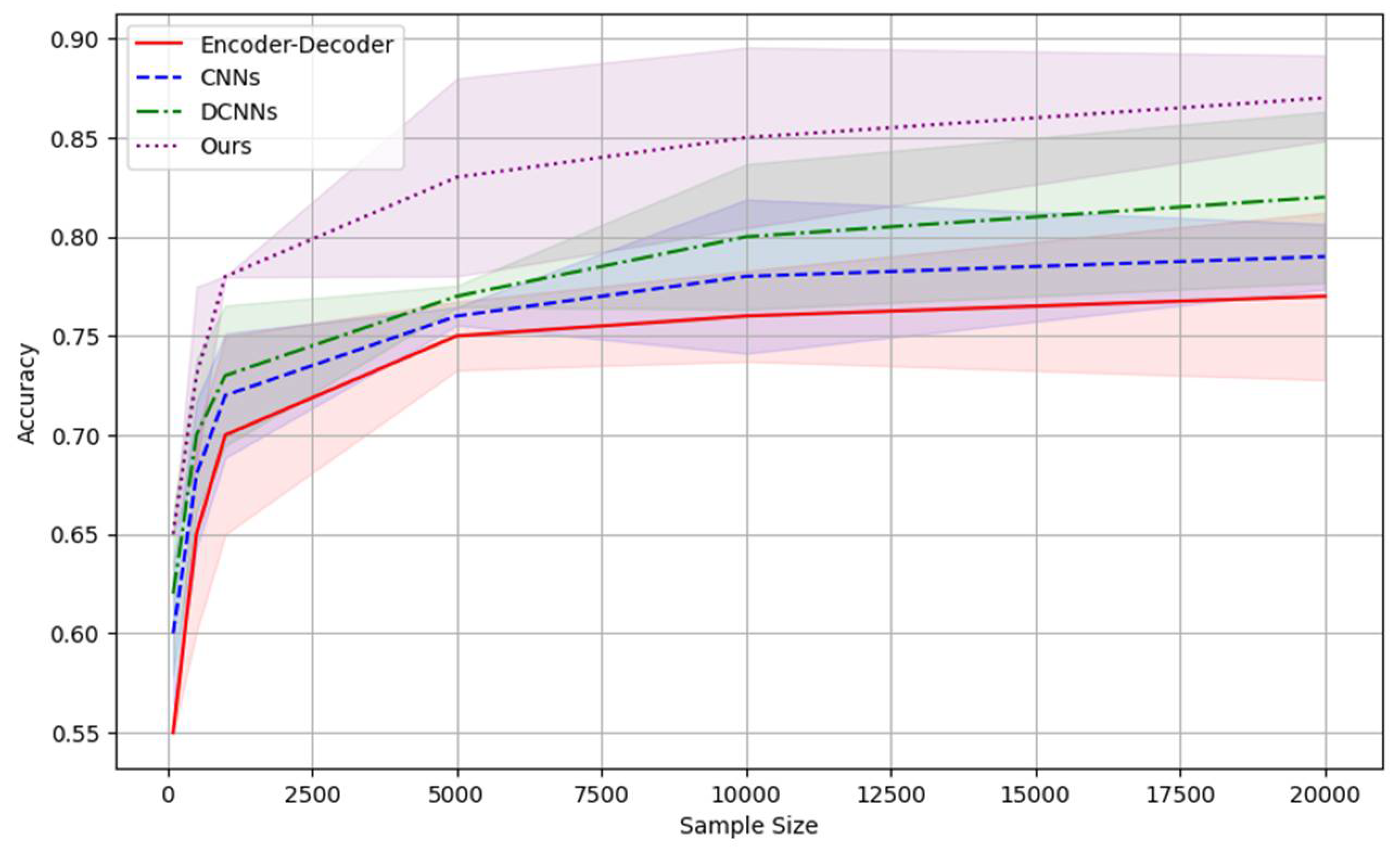

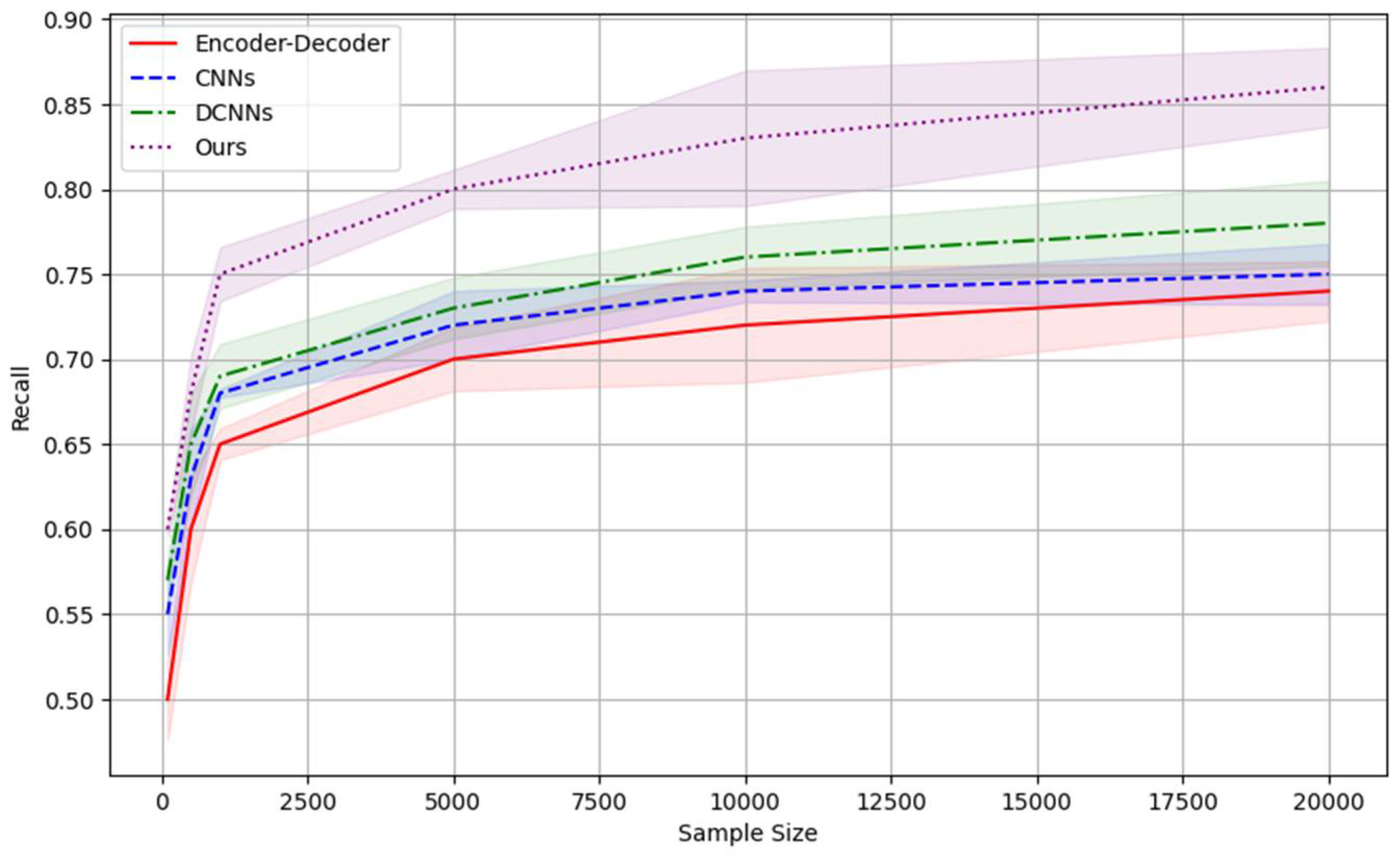

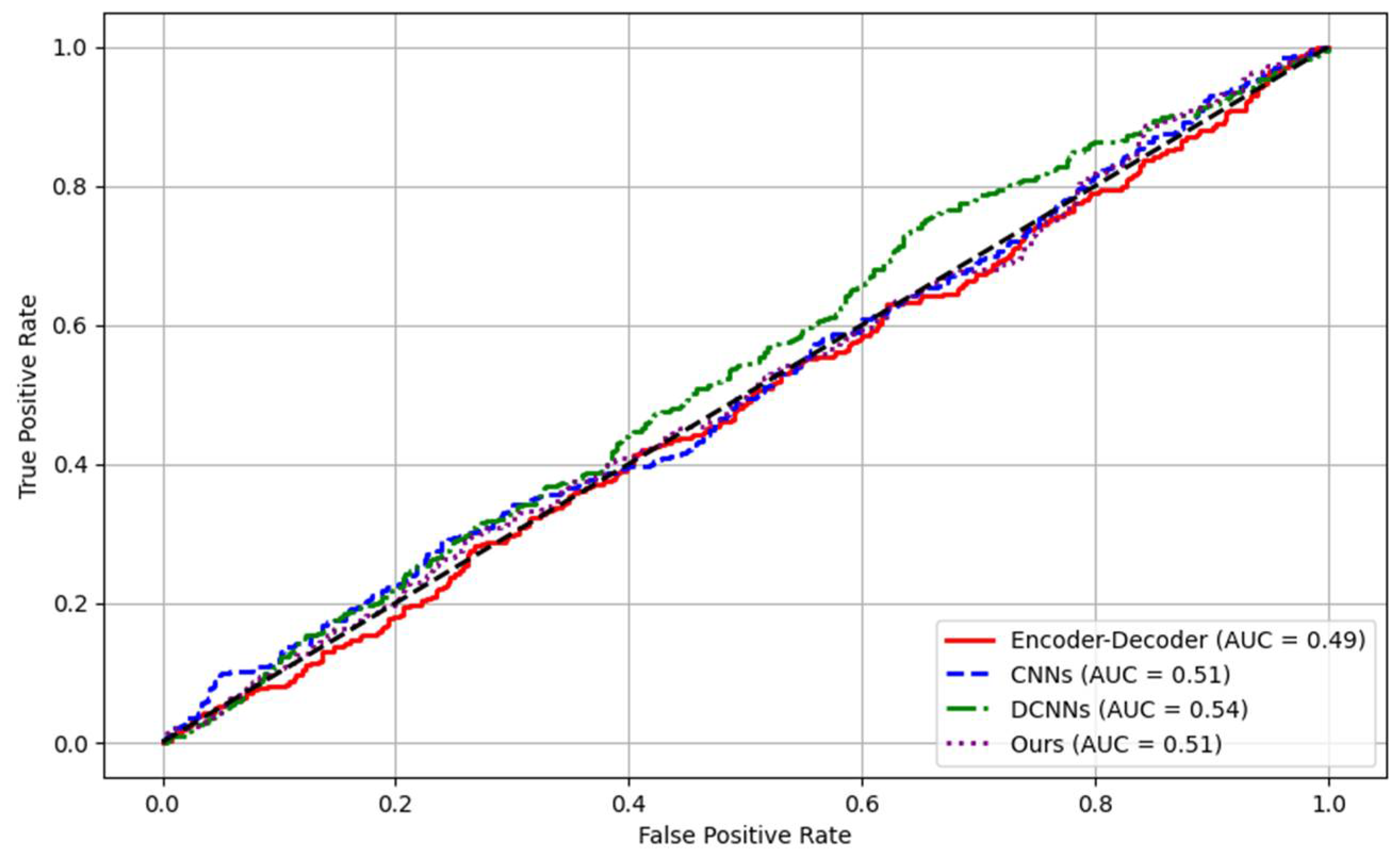

4.2. Experimental Analysis

5. Conclusion

| Symbols | Definition |
| --- | --- |
| | Column name embedding |
| | Specific value of each field |
| | Concatenation operation |
| | Query matrix |
| | Key matrix |
| | Value matrix |
| | Similarity of the query to each key |
| | Dimension of the key vector |
| | Softmax function |
| | Cross-entropy loss function |
| and | Hyper-parameters |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).