Submitted: 16 July 2024

Posted: 16 July 2024


Abstract

Keywords:

1. Introduction

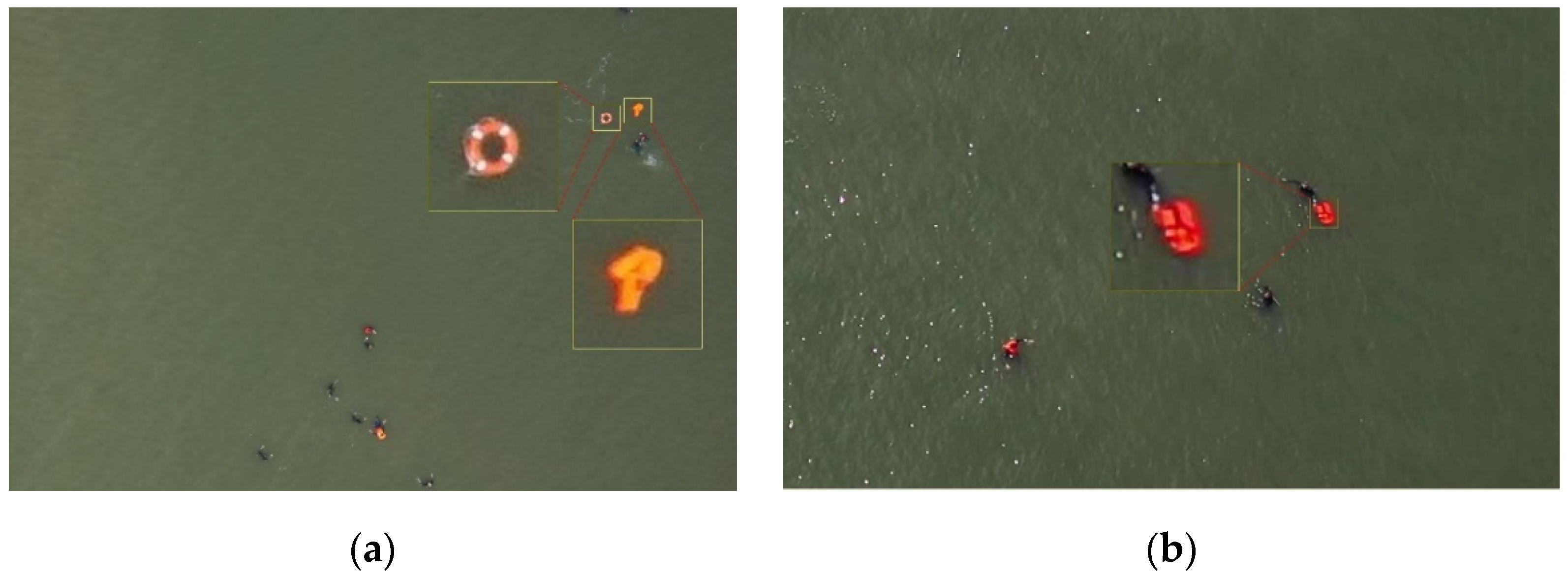

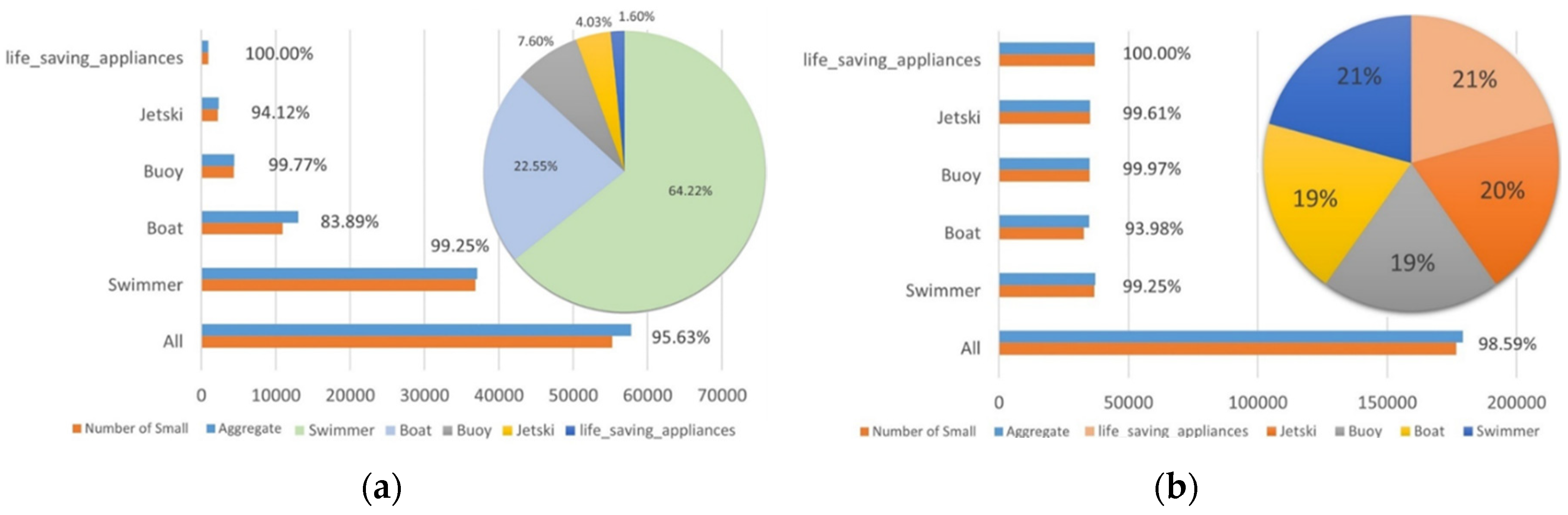

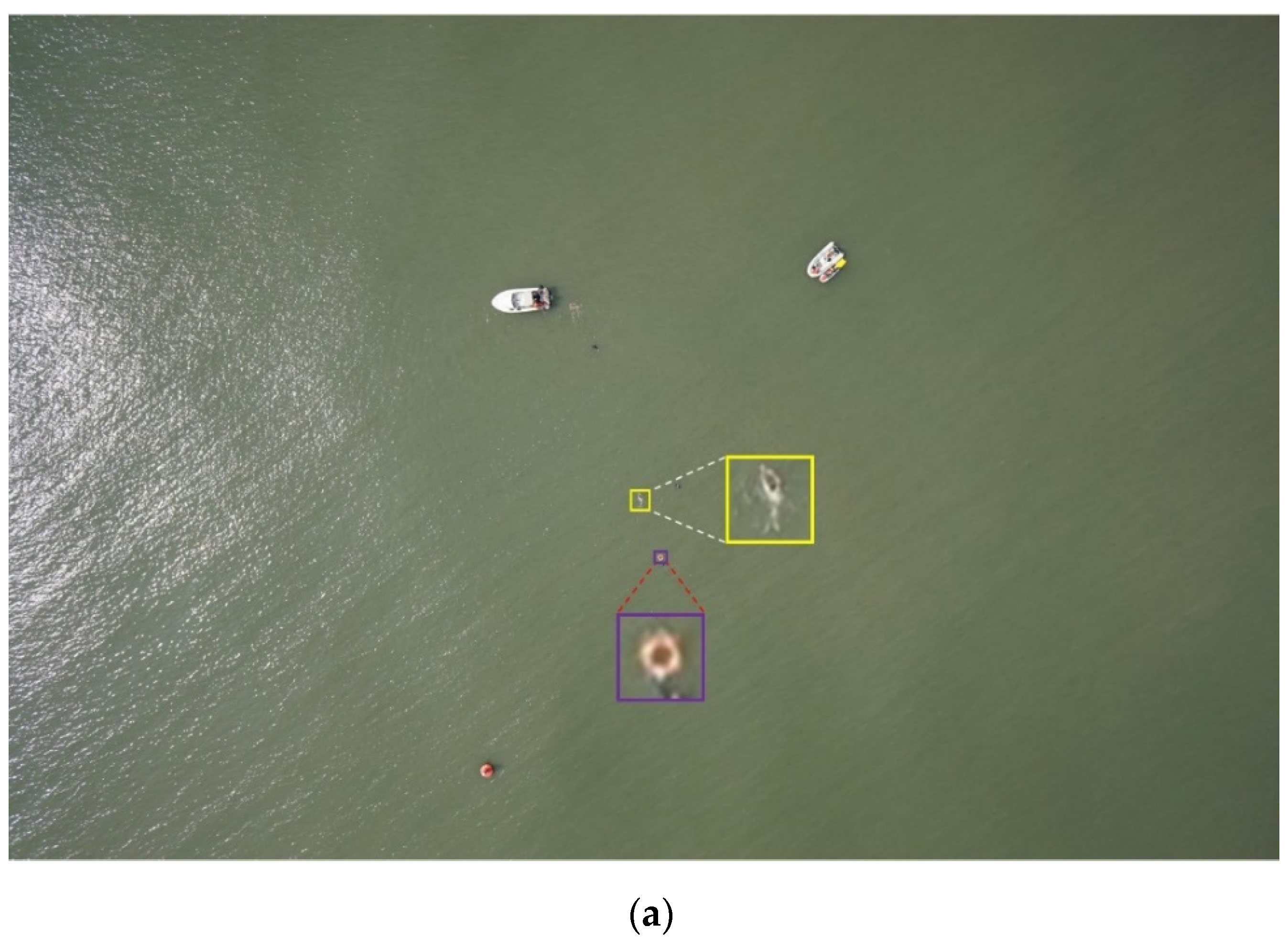

- The paper introduces a new data augmentation algorithm called SOM, which aims to expand the number of objects in specific categories without adding actual objects. This algorithm ensures that the characteristics of the added objects remain consistent with the original ones. Experiments demonstrate that this method enhances dataset balance and improves the model's accuracy and generalization capabilities.

- Lightweight design of the backbone network:


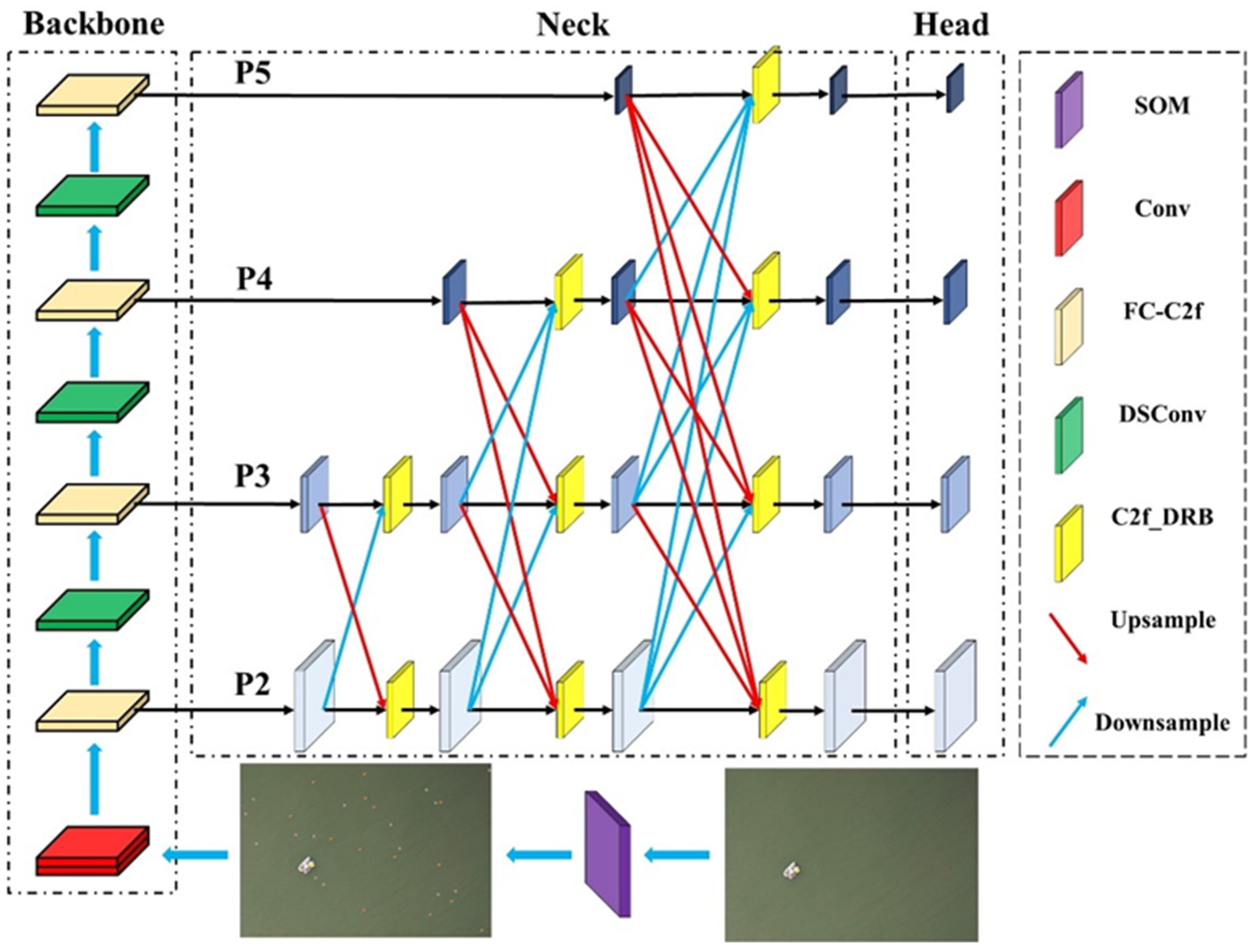

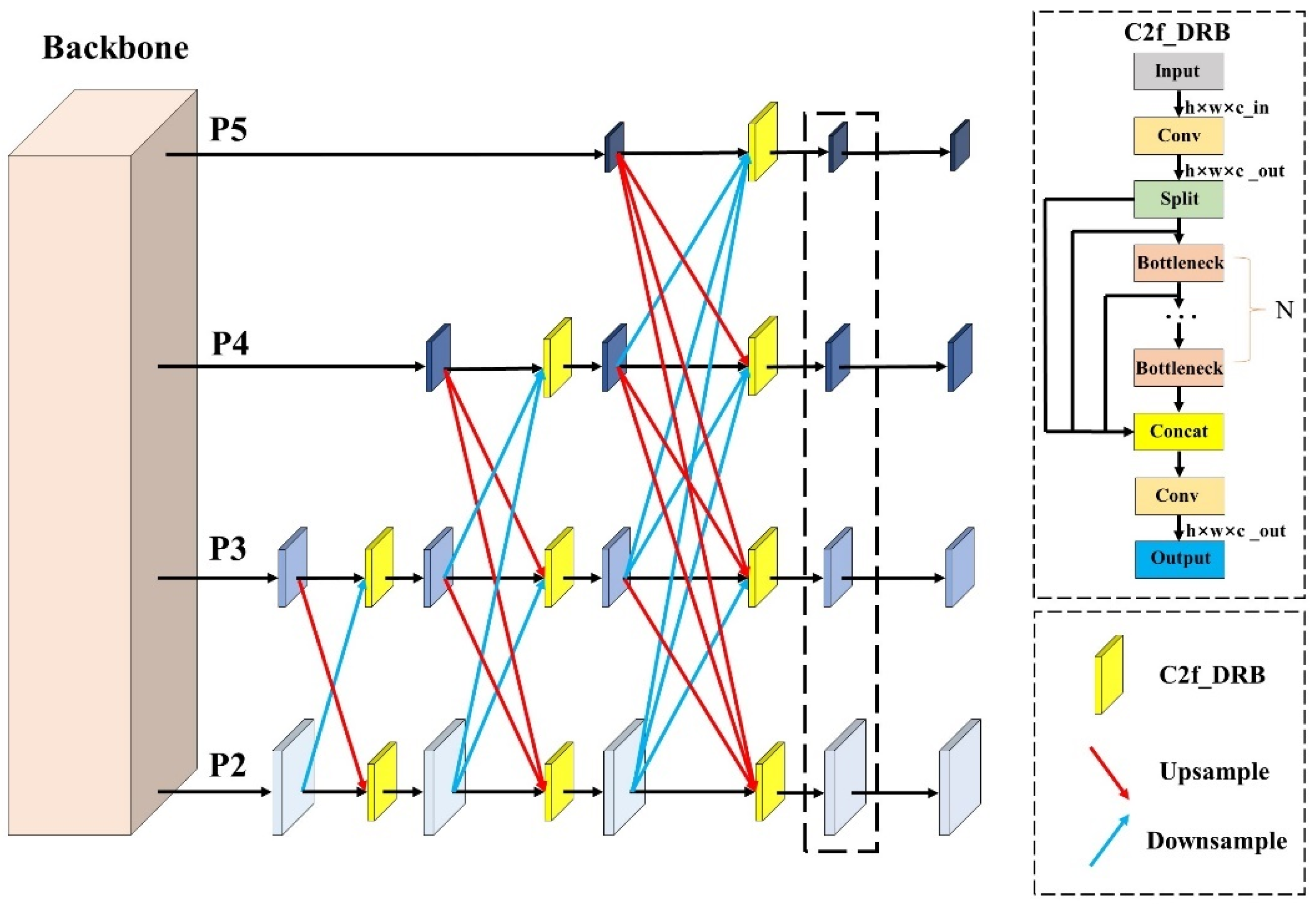

- The paper designs a new multiscale fusion network, LMFN, to address the accuracy issues in multiscale object recognition.

2. Related Work

2.1. Data Augmentation

2.2. Lightweight Methods for Object Detection Networks Based on Deep Learning

2.3. Multiscale Feature Fusion

3. Materials and Methods

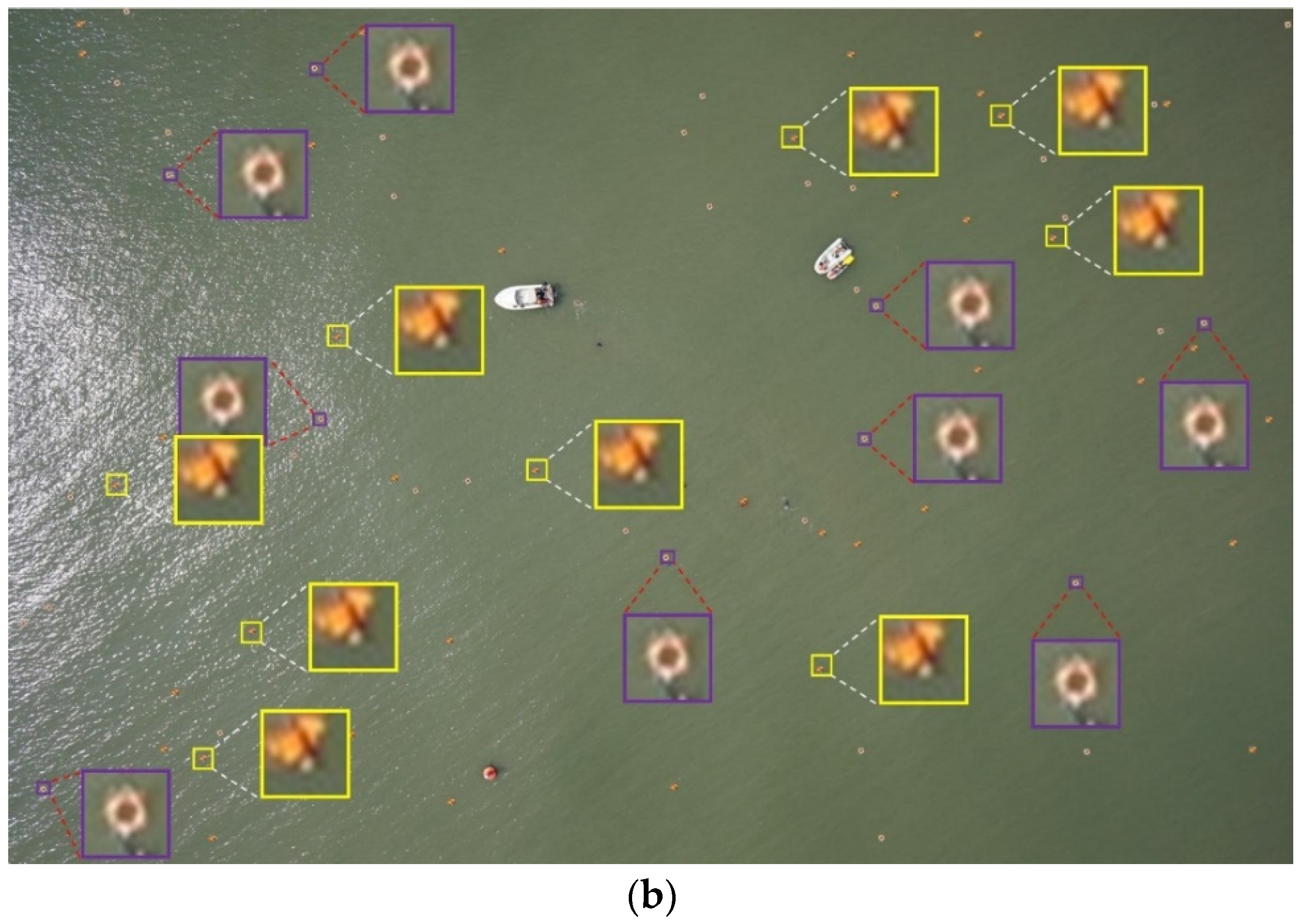

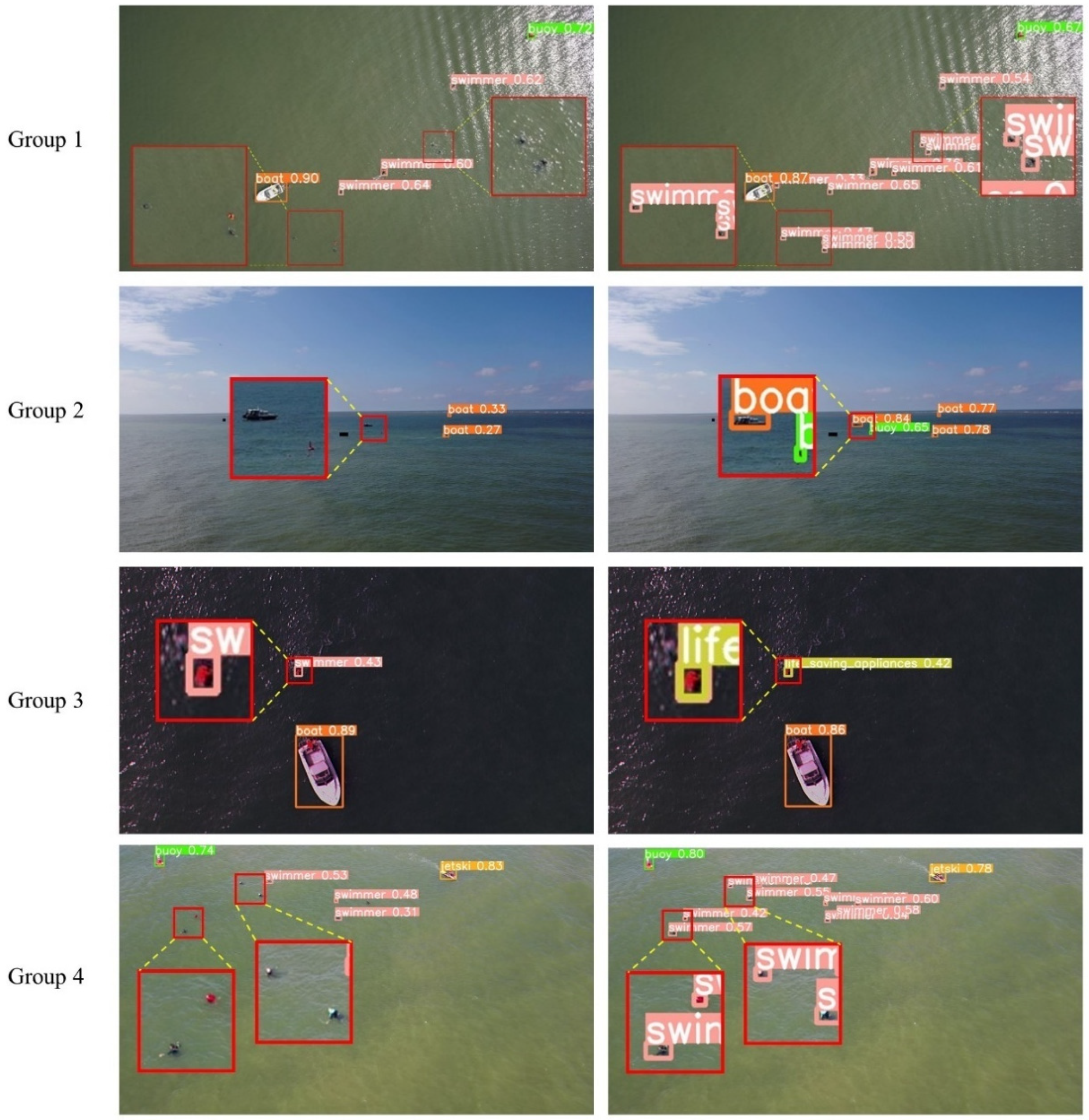

3.1. Data Augmentation

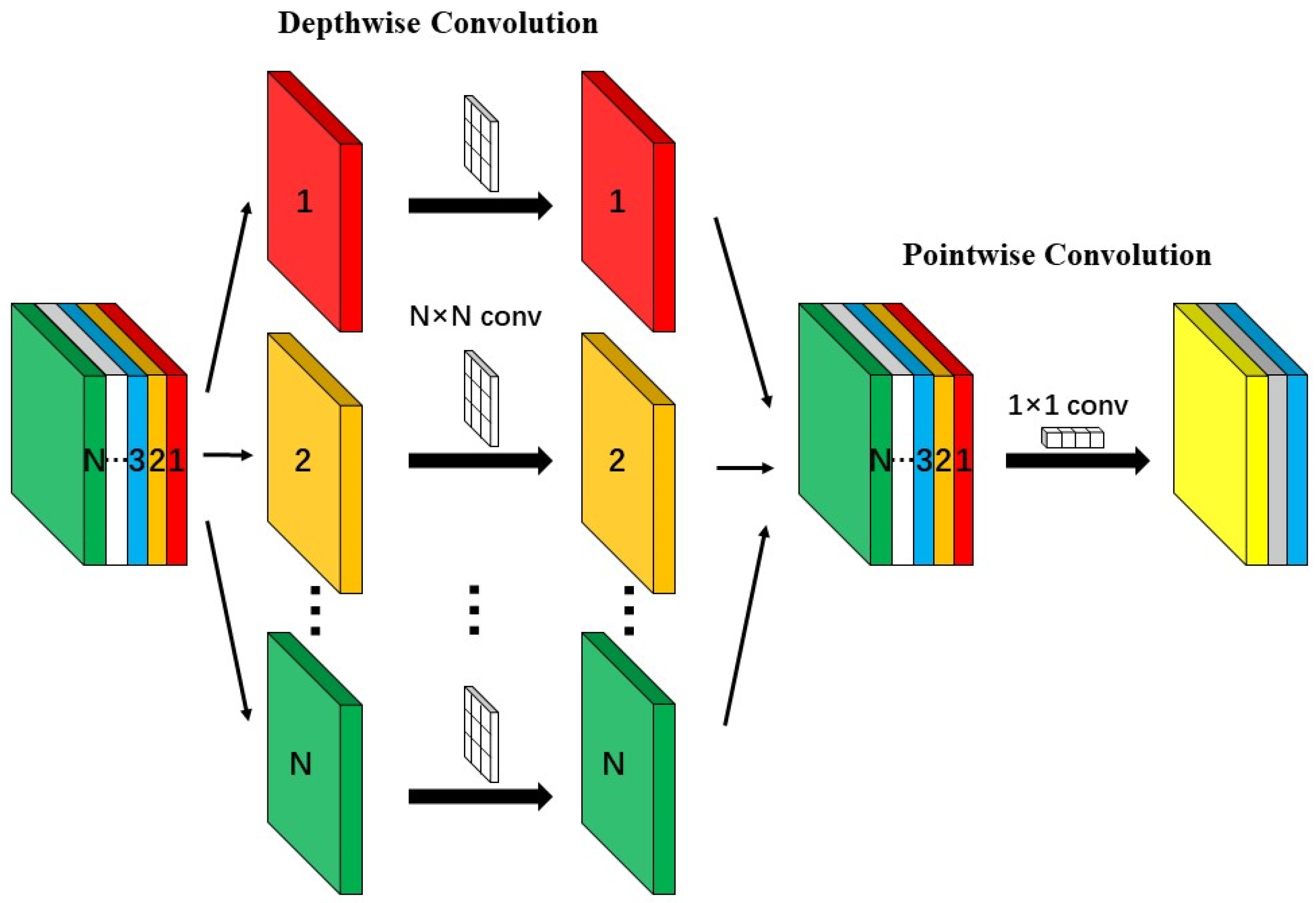
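The contribution list describes SOM as increasing the number of objects in specific categories while keeping the added objects consistent with the originals; the exact procedure is not spelled out in this text. Purely as an illustration, the sketch below uses plain copy-paste oversampling, a related and widely used technique, and should not be read as the authors' SOM algorithm. The function name `paste_minority_copies`, the nested-list image layout, and every parameter are assumptions made for this example.

```python
import random


def _overlaps(a, b):
    """True if boxes a and b, given as (x1, y1, x2, y2), share any area."""
    return a[0] < b[2] and b[0] < a[2] and a[1] < b[3] and b[1] < a[3]


def paste_minority_copies(image, boxes, labels, target_class,
                          copies=1, rng=None, max_tries=20):
    """Duplicate existing objects of `target_class` inside the same image.

    Hypothetical helper (not the paper's SOM). `image` is a list of pixel
    rows, `boxes` are integer (x1, y1, x2, y2) tuples inside the image, and
    `labels` are class ids. Pixels are copied unchanged, so every pasted
    object looks exactly like its source object.
    """
    rng = rng or random.Random()
    height, width = len(image), len(image[0])
    out = [list(row) for row in image]  # leave the input image untouched
    new_boxes, new_labels = list(boxes), list(labels)

    for (x1, y1, x2, y2), label in zip(boxes, labels):
        bw, bh = x2 - x1, y2 - y1
        if label != target_class or bw <= 0 or bh <= 0:
            continue
        patch = [row[x1:x2] for row in image[y1:y2]]
        for _ in range(copies):
            for _attempt in range(max_tries):
                nx = rng.randint(0, width - bw)
                ny = rng.randint(0, height - bh)
                candidate = (nx, ny, nx + bw, ny + bh)
                # Skip positions that would cover an existing annotation.
                if any(_overlaps(candidate, b) for b in new_boxes):
                    continue
                for dy, patch_row in enumerate(patch):
                    out[ny + dy][nx:nx + bw] = patch_row
                new_boxes.append(candidate)
                new_labels.append(label)
                break
    return out, new_boxes, new_labels
```

The image is kept as nested lists only to keep the sketch dependency-free; the same slicing logic carries over to array libraries. If no free position is found within `max_tries`, that copy is silently skipped.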

3.2. Depthwise Separable Convolution

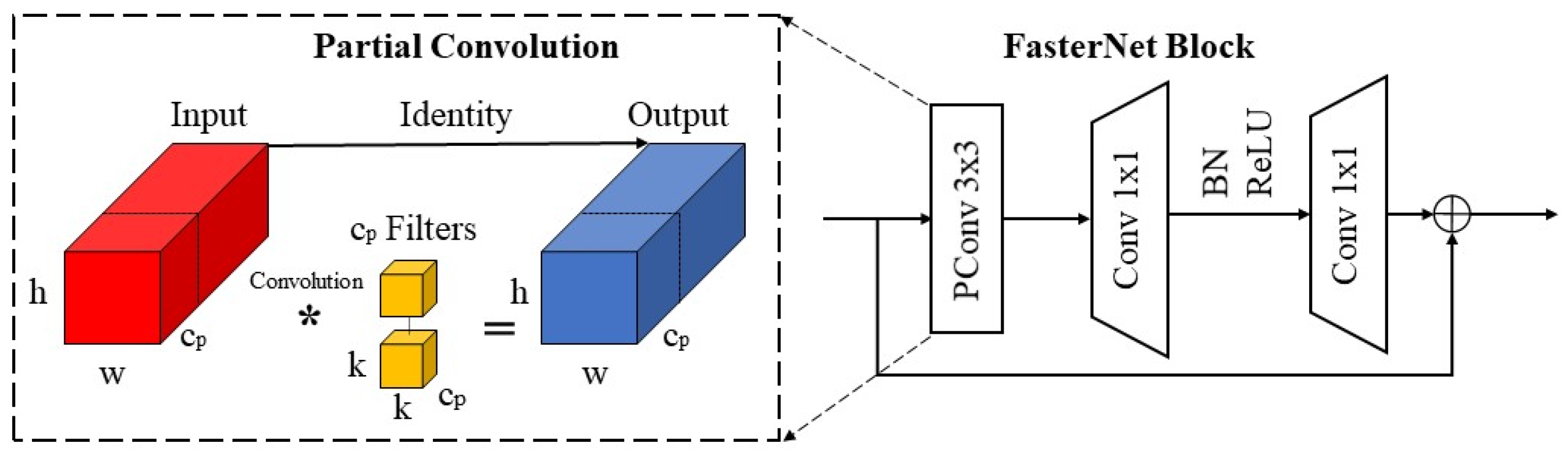

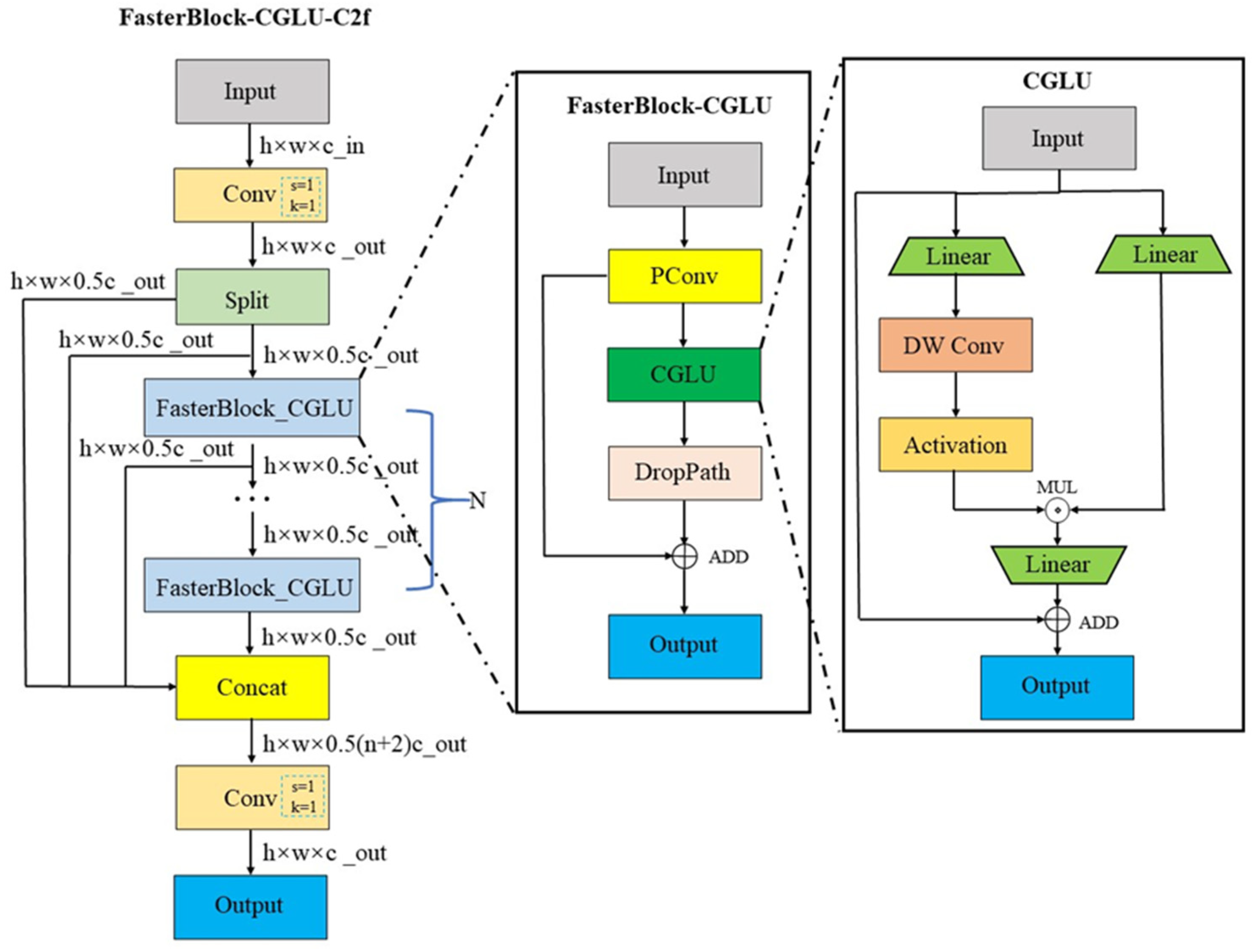

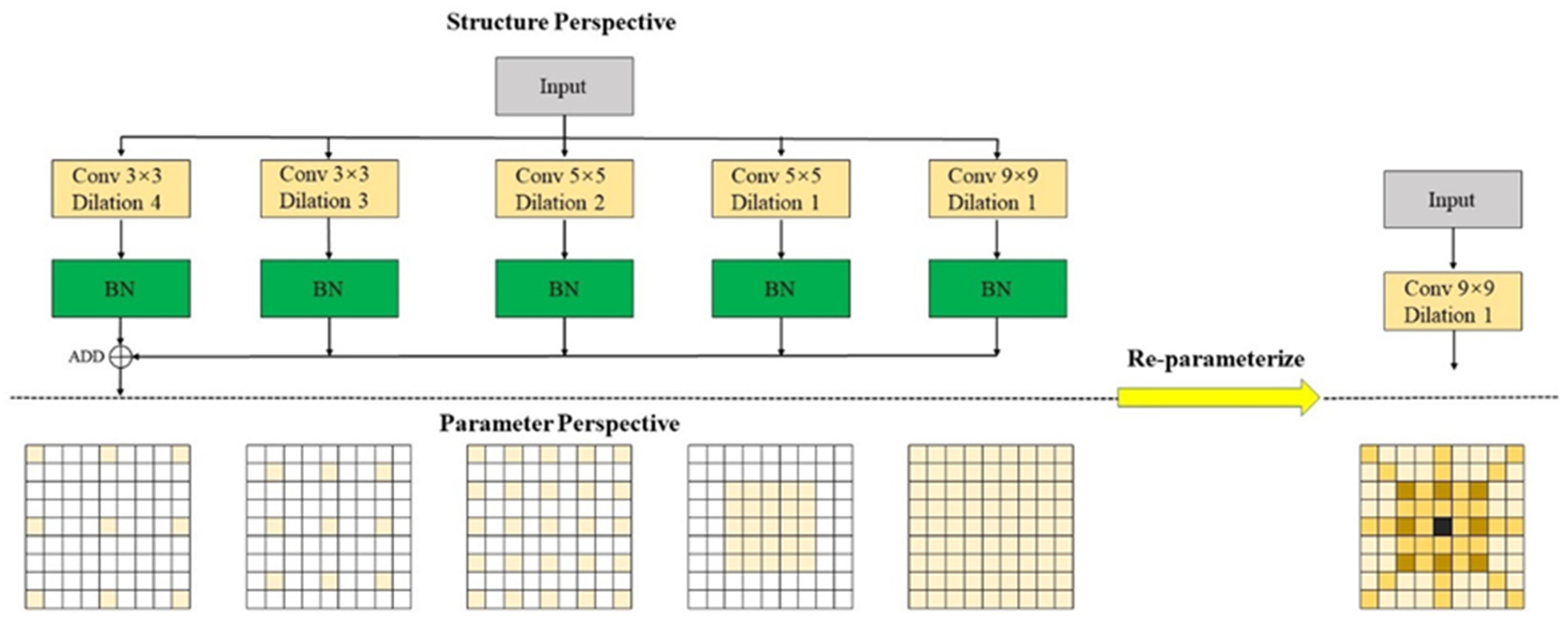
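To make the parameter savings of a depthwise separable convolution concrete, the following sketch counts weights for a standard convolution and for its depthwise-plus-pointwise factorisation. It assumes square kernels, no bias terms, and no normalisation layers; the channel sizes are arbitrary example values, not taken from the paper's network.

```python
def conv_params(c_in, c_out, k):
    """Weights in a standard k x k convolution (bias omitted)."""
    return k * k * c_in * c_out


def dsconv_params(c_in, c_out, k):
    """Weights in a depthwise separable convolution (bias omitted)."""
    depthwise = k * k * c_in   # one k x k filter per input channel
    pointwise = c_in * c_out   # 1 x 1 convolution that mixes channels
    return depthwise + pointwise


standard = conv_params(128, 256, 3)     # 294912
separable = dsconv_params(128, 256, 3)  # 33920
ratio = separable / standard            # equals 1/c_out + 1/k**2, about 0.115
```

For these example sizes the factorised layer needs roughly 11.5% of the weights of the standard layer, which matches the closed-form ratio 1/c_out + 1/k².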

3.3. Improved C2f Module Based on Convolutional Gated Linear Unit and Faster Block

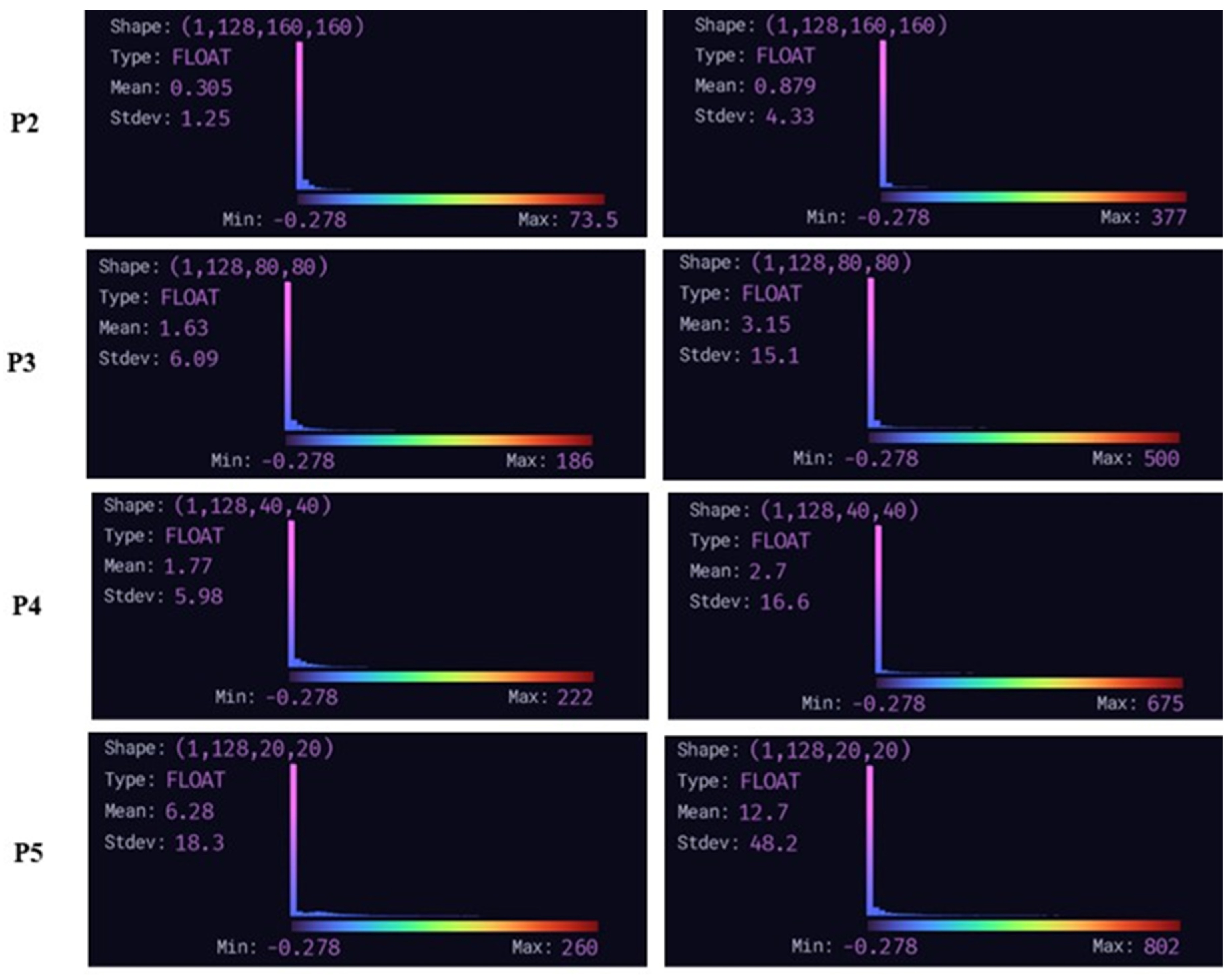
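The gating idea behind a gated linear unit can be shown in a few lines. The sketch below implements the basic GLU of Dauphin et al. (listed in the references), where one half of the features is multiplied by the sigmoid of the other half. It is a minimal illustration on plain Python lists; the convolutional variant used in the improved C2f module is not reproduced here, and the function names are assumptions for this example.

```python
import math


def sigmoid(z):
    """Logistic function, mapping any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def glu(features):
    """Basic gated linear unit: first half = values, second half = gates."""
    if len(features) % 2:
        raise ValueError("GLU needs an even number of features")
    half = len(features) // 2
    values, gates = features[:half], features[half:]
    # Each value is scaled by a gate in (0, 1) computed from its partner.
    return [v * sigmoid(g) for v, g in zip(values, gates)]


print(glu([2.0, -4.0, 0.0, 0.0]))  # [1.0, -2.0]
```

A gate input of 0 halves the value, a large positive gate passes it almost unchanged, and a large negative gate suppresses it.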

3.4. Lightweight Multiscale Feature Fusion Network

4. Experiments and Analysis

4.1. Dataset, Experimental Environment, and Parameter Settings

4.2. Experimental Metrics

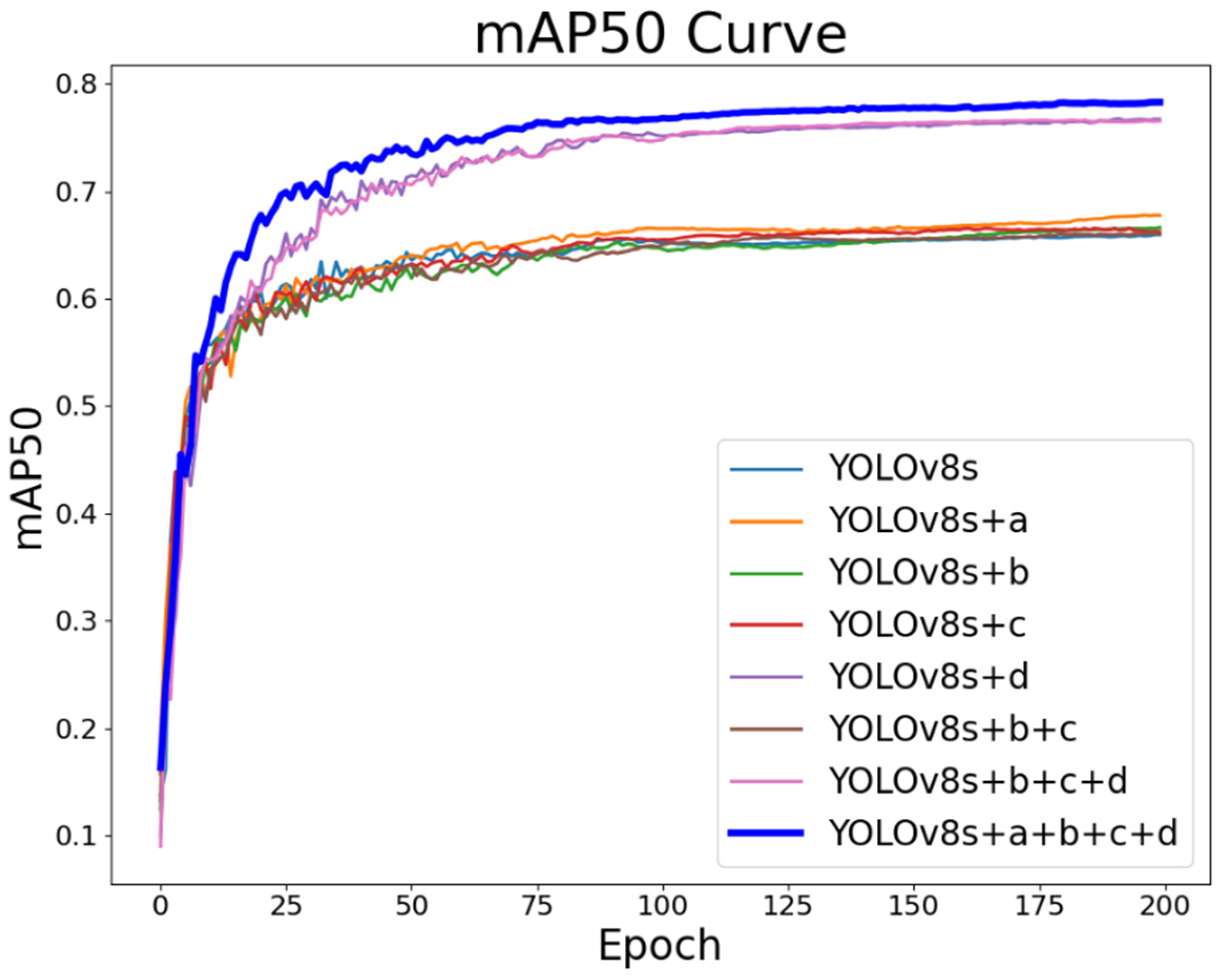
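The result tables report precision (P), recall (R), and mAP at an IoU threshold of 0.5. As a hedged illustration of the standard definitions (the paper's own evaluation code is not shown in this text), the sketch below computes box IoU, precision and recall from match counts, and the mean of per-class AP values. The helper names are assumptions; the AP values in the last lines are the baseline per-class mAP figures from the per-class results table.

```python
def iou(a, b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = ((a[2] - a[0]) * (a[3] - a[1])
             + (b[2] - b[0]) * (b[3] - b[1]) - inter)
    return inter / union if union > 0 else 0.0


def precision_recall(tp, fp, fn):
    """Precision = TP / (TP + FP), recall = TP / (TP + FN)."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def mean_ap(per_class_ap):
    """mAP is the unweighted mean of the per-class AP values."""
    return sum(per_class_ap) / len(per_class_ap)


# Baseline per-class values: swimmer, boat, jetski, life_saving_appliances, buoy
baseline_ap50 = [69.6, 91.6, 83.7, 28.2, 57.2]
print(round(mean_ap(baseline_ap50), 1))  # 66.1
```

The printed value agrees with the "All" row of the per-class table, which suggests that the overall mAP there is the unweighted class mean.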

4.3. Ablation Experiments

4.4. Comparative Experiment

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Yang, T.; Jiang, Z.; Sun, R.; Cheng, N.; Feng, H. Maritime Search and Rescue Based on Group Mobile Computing for Unmanned Aerial Vehicles and Unmanned Surface Vehicles. IEEE Trans. Ind. Inform. 2020, 16, 7700–7708. [CrossRef]

- Tang, G.; Ni, J.; Zhao, Y.; Gu, Y.; Cao, W. A Survey of Object Detection for UAVs Based on Deep Learning. Remote Sens. 2024, 16, 149. [CrossRef]

- Bouguettaya, A.; Zarzour, H.; Kechida, A.; Taberkit, A.M. Deep Learning Techniques to Classify Agricultural Crops through UAV Imagery: A Review. Neural Comput. Appl. 2022, 34, 9511–9536. [CrossRef]

- Zhao, C.; Liu, R.W.; Qu, J.; Gao, R. Deep Learning-Based Object Detection in Maritime Unmanned Aerial Vehicle Imagery: Review and Experimental Comparisons. Eng. Appl. Artif. Intell. 2024, 128, 107513. [CrossRef]

- Yang, Z.; Yin, Y.; Jing, Q.; Shao, Z. A High-Precision Detection Model of Small Objects in Maritime UAV Perspective Based on Improved YOLOv5. J. Mar. Sci. Eng. 2023, 11, 1680. [CrossRef]

- Lin, T.-Y.; Maire, M.; Belongie, S.; Hays, J.; Perona, P.; Ramanan, D.; Dollár, P.; Zitnick, C.L. Microsoft COCO: Common Objects in Context. In Proceedings of the Computer Vision – ECCV 2014; Fleet, D., Pajdla, T., Schiele, B., Tuytelaars, T., Eds.; Springer International Publishing: Cham, 2014; pp. 740–755.

- Zhao, Y.; Lv, W.; Xu, S.; Wei, J.; Wang, G.; Dang, Q.; Liu, Y.; Chen, J. DETRs Beat YOLOs on Real-Time Object Detection 2024.

- Wang, C.-Y.; Yeh, I.-H.; Liao, H.-Y.M. YOLOv9: Learning What You Want to Learn Using Programmable Gradient Information 2024.

- Wang, A.; Chen, H.; Liu, L.; Chen, K.; Lin, Z.; Han, J.; Ding, G. YOLOv10: Real-Time End-to-End Object Detection 2024.

- Chen, G.; Pei, G.; Tang, Y.; Chen, T.; Tang, Z. A Novel Multi-Sample Data Augmentation Method for Oriented Object Detection in Remote Sensing Images. In Proceedings of the 2022 IEEE 24th International Workshop on Multimedia Signal Processing (MMSP); September 2022; pp. 1–7.

- Zhang, Q.; Meng, Z.; Zhao, Z.; Su, F. GSLD: A Global Scanner with Local Discriminator Network for Fast Detection of Sparse Plasma Cell in Immunohistochemistry. In Proceedings of the 2021 IEEE International Conference on Image Processing (ICIP); September 2021; pp. 86–90.

- Ghiasi, G.; Cui, Y.; Srinivas, A.; Qian, R.; Lin, T.-Y.; Cubuk, E.D.; Le, Q.V.; Zoph, B. Simple Copy-Paste Is a Strong Data Augmentation Method for Instance Segmentation 2021.

- Iandola, F.N.; Han, S.; Moskewicz, M.W.; Ashraf, K.; Dally, W.J.; Keutzer, K. SqueezeNet: AlexNet-Level Accuracy with 50x Fewer Parameters and <0.5MB Model Size 2016.

- Howard, A.G.; Zhu, M.; Chen, B.; Kalenichenko, D.; Wang, W.; Weyand, T.; Andreetto, M.; Adam, H. MobileNets: Efficient Convolutional Neural Networks for Mobile Vision Applications 2017.

- Gholami, A.; Kwon, K.; Wu, B.; Tai, Z.; Yue, X.; Jin, P.; Zhao, S.; Keutzer, K. SqueezeNext: Hardware-Aware Neural Network Design 2018.

- Sandler, M.; Howard, A.; Zhu, M.; Zhmoginov, A.; Chen, L.-C. MobileNetV2: Inverted Residuals and Linear Bottlenecks 2019.

- Qin, D.; Leichner, C.; Delakis, M.; Fornoni, M.; Luo, S.; Yang, F.; Wang, W.; Banbury, C.; Ye, C.; Akin, B.; et al. MobileNetV4 -- Universal Models for the Mobile Ecosystem 2024.

- Howard, A.; Sandler, M.; Chu, G.; Chen, L.-C.; Chen, B.; Tan, M.; Wang, W.; Zhu, Y.; Pang, R.; Vasudevan, V.; et al. Searching for MobileNetV3 2019.

- Zhang, J.; Chen, Z.; Yan, G.; Wang, Y.; Hu, B. Faster and Lightweight: An Improved YOLOv5 Object Detector for Remote Sensing Images. Remote Sens. 2023, 15, 4974. [CrossRef]

- Gong, W. Lightweight Object Detection: A Study Based on YOLOv7 Integrated with ShuffleNetv2 and Vision Transformer 2024.

- Wang, J.; Chen, K.; Xu, R.; Liu, Z.; Loy, C.C.; Lin, D. CARAFE: Content-Aware ReAssembly of FEatures. In Proceedings of the 2019 IEEE/CVF International Conference on Computer Vision (ICCV); October 2019; pp. 3007–3016.

- Lin, T.-Y.; Dollár, P.; Girshick, R.; He, K.; Hariharan, B.; Belongie, S. Feature Pyramid Networks for Object Detection 2017.

- Liu, S.; Qi, L.; Qin, H.; Shi, J.; Jia, J. Path Aggregation Network for Instance Segmentation. In Proceedings of the 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition; June 2018; pp. 8759–8768.

- Xu, X.; Jiang, Y.; Chen, W.; Huang, Y.; Zhang, Y.; Sun, X. DAMO-YOLO: A Report on Real-Time Object Detection Design 2023.

- Tan, M.; Pang, R.; Le, Q.V. EfficientDet: Scalable and Efficient Object Detection 2020.

- Li, K.; Geng, Q.; Wan, M.; Cao, X.; Zhou, Z. Context and Spatial Feature Calibration for Real-Time Semantic Segmentation. IEEE Trans. Image Process. 2023, 32, 5465–5477. [CrossRef]

- Kisantal, M.; Wojna, Z.; Murawski, J.; Naruniec, J.; Cho, K. Augmentation for Small Object Detection 2019.

- Guo, Y.; Li, Y.; Feris, R.; Wang, L.; Rosing, T. Depthwise Convolution Is All You Need for Learning Multiple Visual Domains. Available online: https://arxiv.org/abs/1902.00927v2 (accessed on 16 May 2024).

- Chen, J.; Kao, S.; He, H.; Zhuo, W.; Wen, S.; Lee, C.-H.; Chan, S.-H.G. Run, Don’t Walk: Chasing Higher FLOPS for Faster Neural Networks 2023.

- Ma, N.; Zhang, X.; Zheng, H.-T.; Sun, J. ShuffleNet V2: Practical Guidelines for Efficient CNN Architecture Design. In Proceedings of the Computer Vision – ECCV 2018; Ferrari, V., Hebert, M., Sminchisescu, C., Weiss, Y., Eds.; Springer International Publishing: Cham, 2018; pp. 122–138.

- Han, K.; Wang, Y.; Tian, Q.; Guo, J.; Xu, C.; Xu, C. GhostNet: More Features From Cheap Operations. In Proceedings of the 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); June 2020; pp. 1577–1586.

- Dauphin, Y.N.; Fan, A.; Auli, M.; Grangier, D. Language Modeling with Gated Convolutional Networks 2017.

- Shi, D. TransNeXt: Robust Foveal Visual Perception for Vision Transformers 2024.

- Yang, G.; Lei, J.; Zhu, Z.; Cheng, S.; Feng, Z.; Liang, R. AFPN: Asymptotic Feature Pyramid Network for Object Detection 2023.

- Ding, X.; Zhang, X.; Zhou, Y.; Han, J.; Ding, G.; Sun, J. Scaling Up Your Kernels to 31x31: Revisiting Large Kernel Design in CNNs 2022.

- Luo, W.; Li, Y.; Urtasun, R.; Zemel, R. Understanding the Effective Receptive Field in Deep Convolutional Neural Networks 2017.

- Ding, X.; Zhang, Y.; Ge, Y.; Zhao, S.; Song, L.; Yue, X.; Shan, Y. UniRepLKNet: A Universal Perception Large-Kernel ConvNet for Audio, Video, Point Cloud, Time-Series and Image Recognition 2024.

- Xu, J.; Fan, X.; Jian, H.; Xu, C.; Bei, W.; Ge, Q.; Zhao, T. YoloOW: A Spatial Scale Adaptive Real-Time Object Detection Neural Network for Open Water Search and Rescue From UAV Aerial Imagery. IEEE Trans. Geosci. Remote Sens. 2024, 62, 1–15. [CrossRef]

| Classes | P (%) | R (%) | mAP (%) | P with SOM (%) | R with SOM (%) | mAP with SOM (%) |
|---|---|---|---|---|---|---|
| swimmer | 78.7 | 66.5 | 69.6 | 80.1(+1.4) | 64.8(-1.7) | 70.3(+0.7) |
| boat | 89.8 | 86 | 91.6 | 89.9(+0.1) | 87.4(+1.4) | 91.2(-0.4) |
| jetski | 76.8 | 82.2 | 83.7 | 86.3(+9.5) | 82.5(+0.3) | 84.6(+0.9) |
| life_saving_appliances | 78.2 | 14.5 | 28.2 | 81(+2.8) | 25.5(+11) | 35.4(+7.2) |
| buoy | 77.7 | 50.5 | 57.2 | 88.5(+10.8) | 51.6(+1.1) | 61.4(+4.2) |
| All | 80.2 | 59.9 | 66.1 | 85.2(+5) | 62.4(+2.5) | 68.6(+2.5) |

| Algorithms | P (%) | R (%) | mAP50 (val, %) | Params (M) | FLOPs (G) | Speed RTX4090 b16 (ms) |
|---|---|---|---|---|---|---|
| YOLOv8s | 80.2 | 59.9 | 66.1 | 11.14 | 28.7 | 2.6 |
| YOLOv8s+DSConv | 79.8(-0.4) | 60.5(+0.6) | 66.6(+0.5) | 9.59(-1.55) | 25.7(-3) | 1.2(-1.4) |

| Algorithms | FLOPs (G) | Pre-Process (ms) | Inference (ms) | NMS (ms) |
|---|---|---|---|---|
| YOLOv8s | 28.8 | 0.2 | 1.6 | 0.8 |
| YOLOv8s+HGNetV2 | 23.3 | 0.2 | 1.6 | 0.7 |

| Algorithms | P (%) | R (%) | mAP50 (val, %) | Params (M) | FLOPs (G) | Speed RTX4090 b16 (ms) |
|---|---|---|---|---|---|---|
| YOLOv8s | 80.2 | 59.9 | 66.1 | 11.14 | 28.7 | 2.6 |
| YOLOv8s+FB-C2f | 80.4(+0.2) | 61.2(+1.3) | 66.1(+0) | 9.69(-1.45) | 24.4(-4.3) | 2.2(-0.4) |
| YOLOv8s+FC-C2f | 82.8(+2.6) | 59.5(-0.4) | 66.5(+0.4) | 9.48(-1.66) | 23.8(-4.9) | 1.4(-1.2) |

| Algorithms | P (%) | R (%) | mAP50 (val, %) | Params (M) | FLOPs (G) | Speed RTX4090 b16 (ms) |
|---|---|---|---|---|---|---|
| YOLOv8s | 80.2 | 59.9 | 66.1 | 11.14 | 28.7 | 2.6 |
| AFPN | 83.2(+3.0) | 71.4(+11.5) | 76.3(+10.2) | 8.76(-2.38) | 38.9(+10.2) | 3.1(+0.5) |
| AFPN_C2f | 84.5(+4.3) | 69.6(+9.7) | 76.0(+9.9) | 7.09(-4.05) | 34.2(+5.5) | 2.8(+0.2) |
| LMFN | 86.8(+6.6) | 70.5(+10.6) | 76.7(+10.6) | 6.86(-4.28) | 31.1(+2.4) | 2.3(-0.3) |

| Experimental Environment | Parameter/Version |
|---|---|
| Operating System | Ubuntu 20.04 |
| GPU | NVIDIA GeForce RTX 4090 |
| CPU | Intel(R) Xeon(R) Gold 6430 |
| CUDA | 11.3 |
| PyTorch | 1.10.0 |
| Python | 3.8 |

| Parameter | Setup |
|---|---|
| Image size | 640×640 |
| Momentum | 0.937 |
| Batch size | 16 |
| Epochs | 200 |
| Initial learning rate | 0.01 |
| Final learning rate | 0.0001 |
| Weight decay | 0.0005 |
| Warmup epochs | 3 |
| IoU | 0.7 |
| Close Mosaic | 10 |
| Optimizer | SGD |
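The hyperparameters in the table above can be collected into a training configuration. The sketch below assumes an Ultralytics-style trainer, which the paper does not explicitly confirm; in that convention the final learning rate is passed as a fraction `lrf` of the initial rate `lr0`, so a final rate of 0.0001 with an initial rate of 0.01 corresponds to `lrf = 0.01`. The function name `build_train_args` is hypothetical.

```python
def build_train_args(initial_lr=0.01, final_lr=0.0001):
    """Map the table's settings onto Ultralytics-style argument names.

    Assumption: the trainer expects `lrf` as final_lr / initial_lr rather
    than as an absolute learning rate.
    """
    return {
        "imgsz": 640,            # Image size 640x640
        "epochs": 200,
        "batch": 16,
        "optimizer": "SGD",
        "lr0": initial_lr,       # initial learning rate
        "lrf": final_lr / initial_lr,
        "momentum": 0.937,
        "weight_decay": 0.0005,
        "warmup_epochs": 3,
        "iou": 0.7,              # IoU threshold used for NMS
        "close_mosaic": 10,      # disable mosaic for the last 10 epochs
    }


train_args = build_train_args()
```

With the `ultralytics` package installed, these arguments could be passed as `YOLO("yolov8s.yaml").train(data="dataset.yaml", **train_args)`, where `dataset.yaml` is a placeholder for the dataset description file.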

| Class | Algorithms |

P (%) |

R (%) |

mAP50val (%) |

Params (M) |

FLOPs (G) |

Speed RTX4090 b16 (ms) |

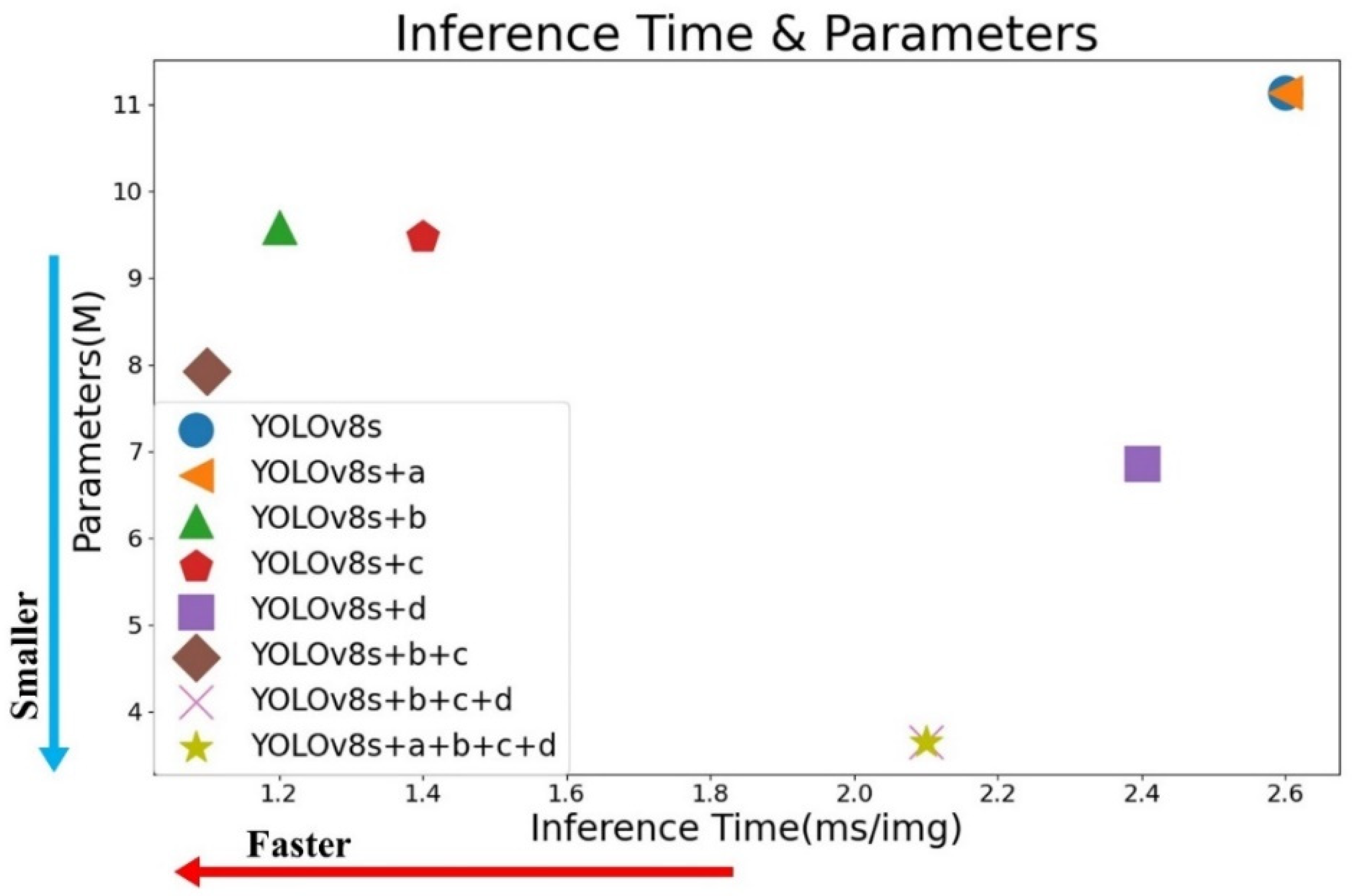

| 1 | YOLOv8s | 80.2 | 59.9 | 66.1 | 11.14 | 28.7 | 2.6 |

| 2 | a | 84.1 | 62.2 | 67.7 | 11.14 | 28.7 | 2.6 |

| 3 | b | 79.8 | 60.5 | 66.6 | 9.59 | 25.7 | 1.2 |

| 4 | c | 82.8 | 59.5 | 66.5 | 9.48 | 23.8 | 1.4 |

| 5 | d | 86.8 | 70.5 | 76.7 | 6.86 | 31.1 | 2.4 |

| 6 | b+c | 81 | 59.4 | 66.1 | 7.93 | 20.9 | 1.1 |

| 7 | b+c+d | 82.6 | 71.3 | 76.6 | 3.65 | 23.3 | 2.1 |

| 8 | a+b+c+d(our) | 85.5 | 71.6 | 78.3 | 3.64 | 22.9 | 2.1 |

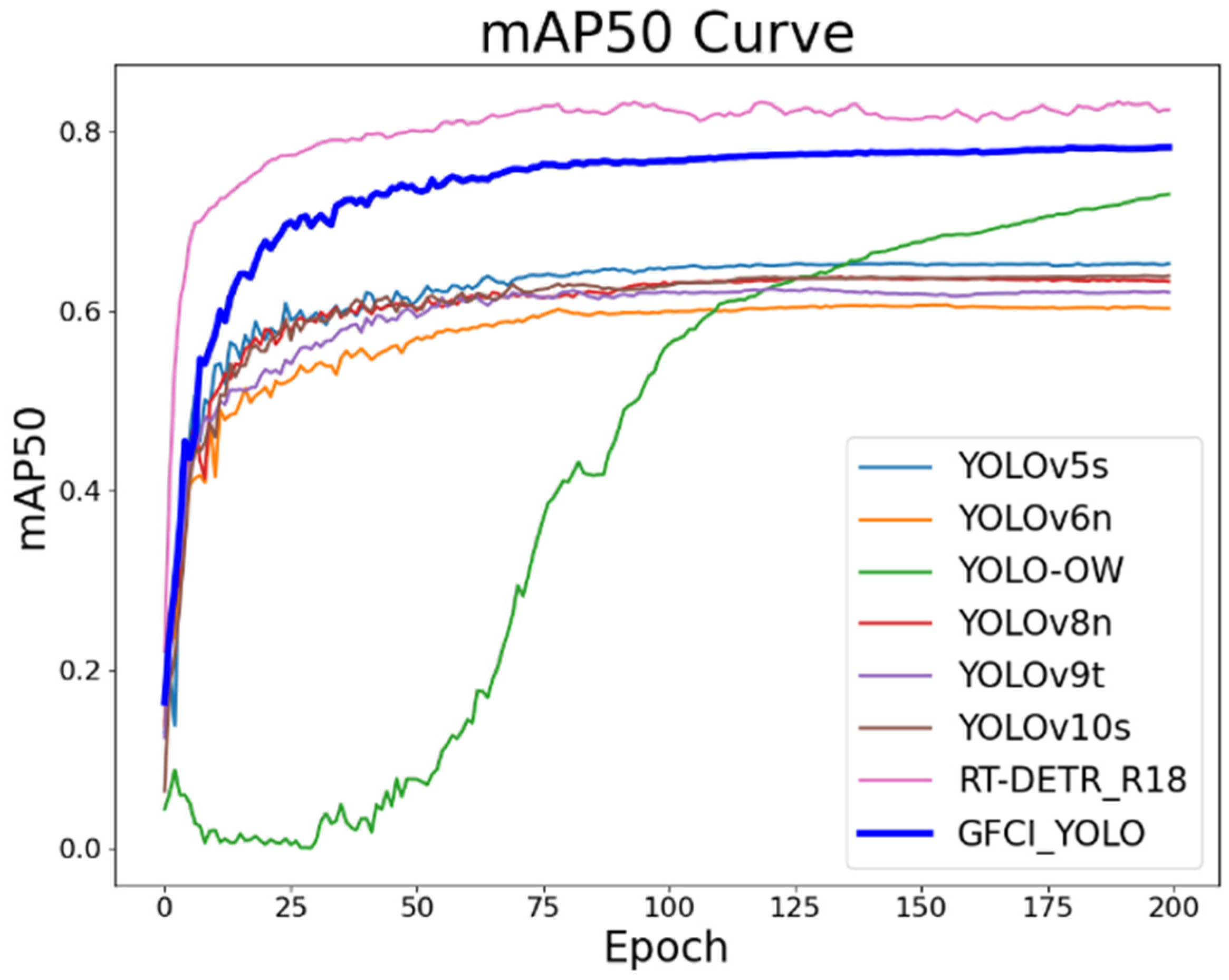

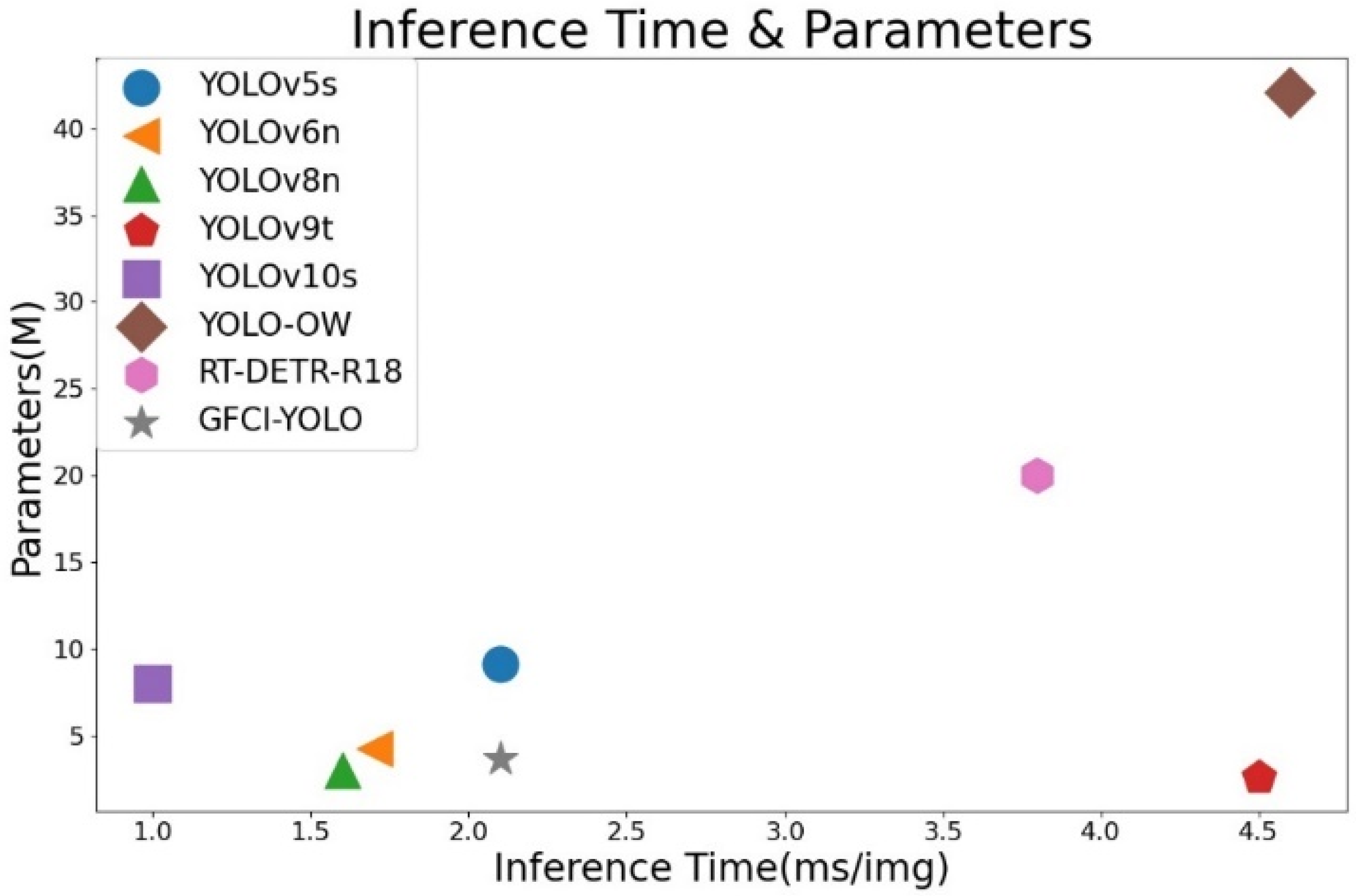

| Class | Algorithms | P (%) | R (%) | mAP50 (val, %) | Params (M) | FLOPs (G) | Speed RTX4090 b16 (ms) |
|---|---|---|---|---|---|---|---|
| 1 | YOLOv5s | 82.7 | 57.9 | 65.4 | 9.11 | 23.8 | 2.1 |
| 2 | YOLOv6n | 79.5 | 57.7 | 60.6 | 4.23 | 11.8 | 1.7 |
| 3 | YOLOv8n | 79.0 | 58.8 | 63.6 | 3.0 | 8.1 | 1.6 |
| 4 | YOLOv9t | 74.1 | 58.5 | 62.3 | 2.62 | 10.7 | 4.5 |
| 5 | YOLOv10s | 82.3 | 59.3 | 63.8 | 8.04 | 24.5 | 1.0 |
| 6 | YOLO-OW | 82.4 | 76.2 | 73.1 | 42.1 | 94.8 | 4.6 |
| 7 | RT-DETR-R18 | 88.4 | 82.6 | 83.6 | 20.0 | 57.0 | 3.8 |
| 8 | GFLM-YOLO (ours) | 85.5 | 71.6 | 78.3 | 3.64 | 22.9 | 2.1 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).