Submitted: 11 July 2024

Posted: 12 July 2024


Abstract

Keywords:

1. Introduction

2. Materials and Methods

2.1. Models

2.2. Data

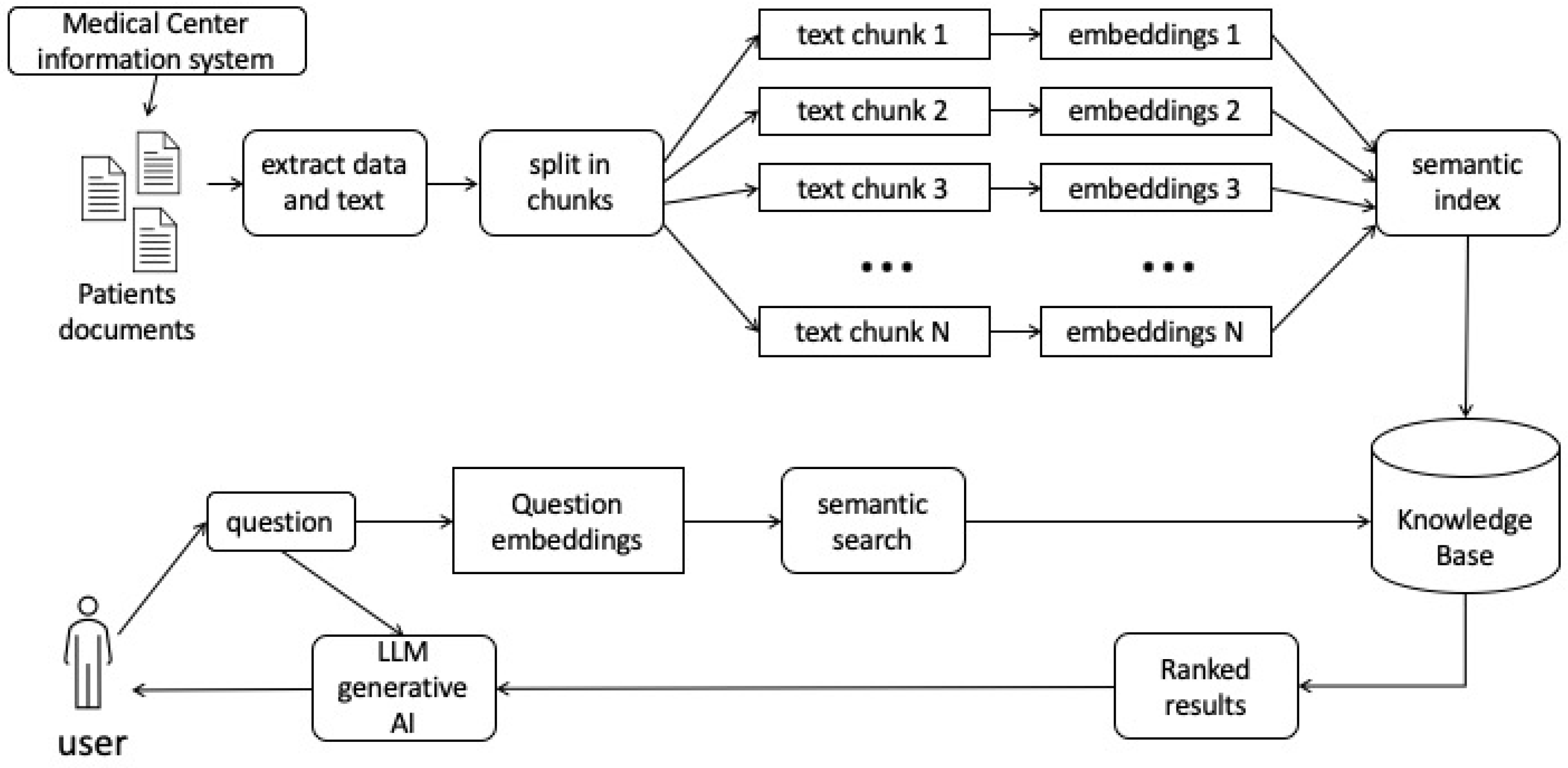

2.3. Retrieval Augmented Generation (RAG)

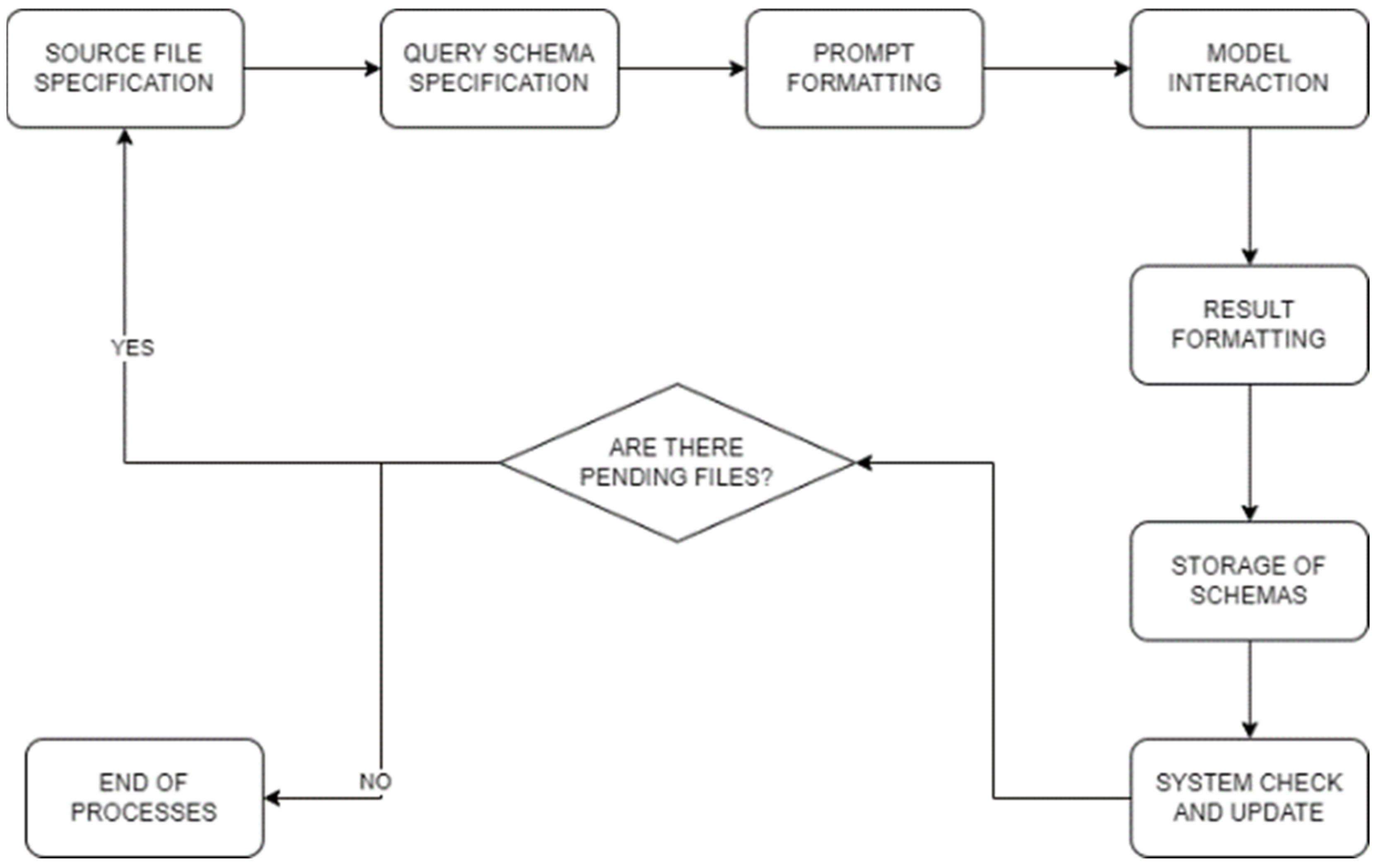

- Source File (PDF) Specification: We load the PDF files with the PyPDFLoader class (a LangChain document loader built on the pypdf package), run from Python in a Jupyter notebook. The loader reads each file in a predefined directory that holds a curated selection of clinical documents from several categories and extracts its text, so that the content of every document can then be processed by the corresponding large language model (LLM).

- Query Schema Creation: We define a structured query that produces a JSON-style schema covering the key patient attributes: name, age, diagnostic tests, diagnosis, and medication. Every response must adhere to this schema. The schema is defined as a Python class in the Jupyter notebook, which keeps data extraction and subsequent processing consistent.

- Prompt Formatting: Before the prompt is sent to the LLM, we format it to match the schema defined with Pydantic. Pydantic, a Python library for data validation, is used in the notebook so that the response from the LLM conforms to the predefined schema.

- Model Interaction: The formatted prompt is serialized and sent to the LLM from Python, through either the OpenAI API or the LlamaCpp library, depending on the model. The LLM, running within the Jupyter notebook, retrieves embeddings and relevant data and applies the predefined processing rules of the data models. The output of this interaction is written to a .bin file that stores the embeddings.

- Result Formatting: The results of the prompt are not handled as plain text; they are converted to JSON. This structured representation is clearer, easier to interpret, and simpler to process and analyze in the subsequent Python steps.

- Storage of Schemas: After JSON formatting, the structured results are stored in an array that collects the schemas generated from the different prompts. This array is the input to the subsequent processing stages and also serves as a resource for further system development and data analysis.

- System Check and Update: The system checks whether the local FAISS database, which is stored as a set of files, is present in the file system. If the database file is found, the new texts derived from the JSON results above are added to the existing database. If it is not found, a new FAISS database is created using the embeddings obtained from the LLM currently in use; once it exists, the initial texts are added to it, establishing the base dataset for the rest of the system. Keeping the texts in JSON format at this stage also makes them easier to interpret in later processing.
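The schema, prompt-formatting, result-formatting, and storage steps above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: it uses standard-library dataclasses as a stand-in for the Pydantic model so that it runs without extra dependencies, the class `PatientRecord`, the helpers `build_prompt`, `parse_response`, and `fake_llm`, and the sample report are all hypothetical, and the real model call (OpenAI API or LlamaCpp), PDF loading, and the FAISS store are left out.

```python
import json
from dataclasses import asdict, dataclass, fields
from typing import List, Optional


@dataclass
class PatientRecord:
    """Hypothetical schema; the fields mirror the attributes named in the text."""
    name: Optional[str] = None
    age: Optional[int] = None
    diagnostic_tests: Optional[List[str]] = None
    diagnosis: Optional[str] = None
    medication: Optional[List[str]] = None


def build_prompt(report_text: str) -> str:
    """Embed the expected JSON keys in the prompt so the model answers in that shape."""
    template = json.dumps({f.name: None for f in fields(PatientRecord)}, indent=2)
    return (
        "Extract the following fields from the clinical report. "
        "Answer with a single JSON object using exactly these keys; "
        "use null when a value is not stated.\n"
        f"{template}\n\nReport:\n{report_text}"
    )


def parse_response(raw: str) -> PatientRecord:
    """Parse the model's reply as JSON and reject keys outside the schema."""
    data = json.loads(raw)
    allowed = {f.name for f in fields(PatientRecord)}
    unknown = set(data) - allowed
    if unknown:
        raise ValueError(f"unexpected keys: {sorted(unknown)}")
    return PatientRecord(**data)


def fake_llm(prompt: str) -> str:
    """Placeholder for the real model call; returns an invented reply."""
    return json.dumps({
        "name": "Jane Doe",
        "age": 54,
        "diagnostic_tests": ["chest X-ray"],
        "diagnosis": "pneumonia",
        "medication": ["amoxicillin"],
    })


# The array that accumulates one structured result per prompt.
records = []
prompt = build_prompt("Jane Doe, 54. Chest X-ray shows pneumonia; started amoxicillin.")
records.append(asdict(parse_response(fake_llm(prompt))))
print(json.dumps(records, indent=2))
```

With Pydantic, the dataclass would become a `BaseModel` subclass and the manual key check in `parse_response` would be replaced by the library's own validation.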

2.4. Evaluation

- True Positives (TP): Instances where data is correctly extracted from the report.

- False Positives (FP): Instances where data is extracted or associated erroneously.

- True Negatives (TN): Accurate identifications of the absence of data.

- False Negatives (FN): Instances where data that exists in the report is reported as absent or is not correctly interpreted.

| | PREDICTION + | PREDICTION - |
|---|---|---|
| REAL CLASS + | TP | FN |
| REAL CLASS - | FP | TN |
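One way to tally the four outcomes defined above is to compare each extracted field with a manually annotated reference. The sketch below is illustrative only: the functions `classify` and `confusion_counts` and the sample values are hypothetical, and counting a value that is present but wrong as a false positive is an assumption, since the definitions above leave that case open.

```python
from collections import Counter
from typing import Dict, Optional


def classify(expected: Optional[str], extracted: Optional[str]) -> str:
    """Label one field as TP, FP, TN, or FN."""
    if expected is None:
        # Nothing to extract: correct if the model also reports nothing.
        return "TN" if extracted is None else "FP"
    if extracted is None:
        # Data exists in the report but the model missed it.
        return "FN"
    # Assumption: a present but wrong value counts as a false positive.
    return "TP" if extracted == expected else "FP"


def confusion_counts(
    truth: Dict[str, Optional[str]], output: Dict[str, Optional[str]]
) -> Counter:
    """Count outcomes over every field of the reference annotation."""
    return Counter(classify(truth[key], output.get(key)) for key in truth)


# Invented example: one field per outcome.
truth = {"name": "Jane Doe", "age": "54", "diagnosis": "pneumonia", "medication": None}
output = {"name": "Jane Doe", "age": None, "diagnosis": "bronchitis", "medication": None}
counts = confusion_counts(truth, output)
print(dict(counts))
```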

- Accuracy (A): Accuracy assesses the overall correctness of predictions by considering both true positives and true negatives. It is the ratio of the sum of true positives and true negatives to the total number of instances.

- Precision (P): Precision is the ratio of true positives to the sum of true positives and false positives. It indicates how many of the extracted items are actually correct and relevant to the task.

- Recall (R): Recall is the ratio of true positives to the sum of true positives and false negatives. It gauges the system’s ability to retrieve all pertinent information. In our context, high recall is crucial because it reduces the chance of missing relevant clinical data.

- F1 Score (F1): The F1 score is the harmonic mean of precision and recall, a balanced measure that accounts for both false positives and false negatives. It is particularly valuable when positive and negative instances are unevenly distributed, because it limits the influence of class imbalance on the evaluation. Together with precision and recall, the F1 score provides an assessment of both the accuracy and the completeness of the data extraction across the categories of the JSON structure.
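Written out in terms of the confusion-matrix counts, the four metrics described above take their standard forms:

$$
A = \frac{TP + TN}{TP + TN + FP + FN}, \qquad
P = \frac{TP}{TP + FP}, \qquad
R = \frac{TP}{TP + FN}, \qquad
F1 = \frac{2\,P\,R}{P + R}
$$

As a worked example, a precision of 0.78 and a recall of 1 (the values reported for OpenAI 3.5) give

$$
F1 = \frac{2 \times 0.78 \times 1}{0.78 + 1} = \frac{1.56}{1.78} \approx 0.88 .
$$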

3. Results

4. Discussion

5. Conclusions

Author Contributions

Conflicts of Interest


| Model | Accuracy | Recall | Precision | F1 |
|---|---|---|---|---|
| OpenAI 3.5 | 0.78 | 1 | 0.78 | 0.88 |
| Llama 2 | 0.63 | 0.97 | 0.64 | 0.78 |
| Vicuna | 0.63 | 0.97 | 0.64 | 0.78 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).