This section covers some of the progress made in XAI security, including ideas for building more secure XAI systems and explicit vulnerabilities of existing models.

3.1. Six Ws

Vigano and Magazzeni introduced the idea of explainable security (XSec). The approach is fairly involved because it spans a number of different stakeholders. They elaborate on six questions and review how to secure systems. The six questions are:

1. Who gives and receives the explanation?
2. What is explained?
3. When is an explanation given?
4. Where is the explanation given?
5. Why is XSec needed?
6. How to explain security?

We will now elaborate on what the individual questions signify.

3.1.1. Who Gives and Receives the Explanation?

The roles considered in XSec include the designer of the system; the user, assumed to be an innocent non-expert who can make mistakes that leave the system vulnerable; the attacker, who tries to exploit the weak points of the system; the analyst, who analyzes and tests the system; and finally the defender, who tries to defend the system. Each of these roles involves someone receiving or providing explanations.

3.1.2. What is Explained?

The type of explanation that is relevant depends on the stakeholder. Developers need detailed desiderata from the client so that they can realize the system in a secure and satisfactory way. Users need explanations that establish their trust in the system and help them understand how it can be used. Analysts require the system's specifications so that they can create models to analyze. Defenders need knowledge of the vulnerabilities and related attacks, and finally attackers need visible vulnerabilities which they can exploit.

3.1.3. Where?

The authors consider four main cases that fall under this question.

1. Explanations are provided to the users as part of the security policy.
2. Explanations are detached from the system and made available elsewhere.
3. Users interact with a service backed by an expert system which provides explanations.
4. The best option, in the authors' view, is a 'security-explaining-carrying-system', although a considerable amount of work is required to ensure its safety.

3.1.4. When?

The authors describe when explanations of security are required: when the system is designed, implemented, deployed, used, analyzed, attacked, defended, modified and possibly even decommissioned. Explanations are therefore needed not only at runtime but also when the system is designed.

3.1.5. Why?

With the exception of attackers, all the roles want the system to be secure. Explanations increase trust, confidence, transparency, usability, concrete usage, accountability, verifiability and testability.

3.1.6. How?

Depending on the intended audience, explanations can be provided in several ways: in natural language, using informal but structured spoken language; in graphical languages, such as explanation trees, attack trees, attack-defense trees, attack graphs, attack patterns and message-sequence charts; in formal languages, which include proofs and plans; or through a gamification process in which users learn how to use the system.

3.2. Taxonomy of XAI and Black Box Attacks

Kuppa and Le-Khac presented a taxonomy of XAI in the security domain. They further propose a novel black box attack that targets the consistency, correctness and confidence of gradient-based XAI methods.

3.2.1. Taxonomy

The authors divide the explainability space for the security domain into three main parts: X-PLAIN, XSP-PLAIN and XT-PLAIN. We now briefly discuss each of them.

1. X-PLAIN concerns the explanations provided for the predictions given by the model. This includes static and interactive changes in explanations, local/global explanations, in-model/post-hoc explanations, surrogate models and visualizations of a model.
2. XSP-PLAIN covers confidential information such as features which must be protected, integrity properties of the data and the model, and privacy properties of the data and the model.
3. XT-PLAIN deals with the threat models considered. This includes correctness, consistency, transferability, confidence, fairness and privacy.

3.2.2. Proposed Black Box Attack

Consider a neural network $f: \mathbb{R}^d \to \mathbb{R}^k$ which takes $d$ features from the input and classifies them into $k$ categories, and an explanation map $h: \mathbb{R}^d \to \mathbb{R}^d$ which assigns each feature a value denoting its importance. Let $h^t \in \mathbb{R}^d$ be a target explanation and $x \in \mathbb{R}^d$ an input. An I-attack attacks only the interpreter: we need a manipulated input $x_{adv}$ whose explanation is very close to the target explanation while the output of the classifier remains approximately the same. In other words, $h(x_{adv}) \approx h^t$ and $f(x_{adv}) \approx f(x)$. In a CI-attack, both the model and the interpreter are attacked, so that $h(x_{adv}) \approx h^t$ and $f(x_{adv}) \neq f(x)$. In both cases the perturbation must be small. A few more constraints apply: the model is a black box, the perturbation must be sparse, and the perturbed input must be interpretable, meaning that the instance must stay close to the distribution of the data used to train the model.
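The two attack goals can be read as simple success predicates. The following is a minimal sketch, assuming a classifier `f` that returns class scores and an explanation map `h`; the toy weight matrix `W`, the gradient-times-input explanation and the tolerance `tol` are hypothetical stand-ins chosen only for illustration, and the sparsity and interpretability constraints are not checked here.

```python
import numpy as np

# Hypothetical toy classifier: k = 2 class scores from d = 3 features.
W = np.array([[1.0, 0.0, 1.0],
              [0.0, 1.0, 0.0]])

def f(x):
    """Class scores of the toy model."""
    return W @ x

def h(x):
    """Toy explanation map: gradient * input for the predicted class."""
    return W[np.argmax(f(x))] * x

def is_i_attack(x, x_adv, h_target, tol=1e-6):
    """I-attack: explanation matches the target, model output is unchanged."""
    expl_close = np.linalg.norm(h(x_adv) - h_target) <= tol
    out_same = np.linalg.norm(f(x_adv) - f(x)) <= tol
    return bool(expl_close and out_same)

def is_ci_attack(x, x_adv, h_target, tol=1e-6):
    """CI-attack: explanation matches the target, predicted class changes."""
    expl_close = np.linalg.norm(h(x_adv) - h_target) <= tol
    label_changed = np.argmax(f(x_adv)) != np.argmax(f(x))
    return bool(expl_close and label_changed)

x = np.array([2.0, 1.0, 0.0])        # original input, predicted class 0
x_i = np.array([0.0, 1.0, 2.0])      # same scores, different explanation
x_ci = np.array([0.0, 3.0, 2.0])     # predicted class flips to 1

i_ok = is_i_attack(x, x_i, h_target=np.array([0.0, 0.0, 2.0]))
ci_ok = is_ci_attack(x, x_ci, h_target=np.array([0.0, 3.0, 0.0]))
```

In a real attack the perturbation would also have to be small and sparse; the toy inputs above ignore this so that the two predicates are easy to verify by hand.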

Considering all these constraints, the attack is presented in two steps. The first step collects n data points by querying the model as a black box and trains a surrogate model on these data and the outputs returned by the model. A problem here is that the attacker does not know the distribution of the data used to train the model. To deal with this, a Manifold Approximation Algorithm is run on the n data points, which yields the best piecewise spherical manifold (or subspace) together with a projection map p onto that space such that the mean squared error is minimized. This step helps divide the data points into various data distributions. Explanation distributions can be obtained in a similar way. The second step induces minor distortions in the input distribution to move it across the decision boundary from a to i, where a is the natural sample distribution and i represents the target distribution.
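The surrogate-training part of the first step can be sketched as follows. This is an illustration under stated assumptions, not the authors' implementation: the `black_box` function with its hidden linear rule is hypothetical, the surrogate is a plain logistic regression fitted by gradient descent, and the Manifold Approximation Algorithm is not reproduced.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical black box: the attacker may call it but not inspect it.
_secret_w = np.array([1.5, -2.0, 0.5])

def black_box(X):
    """Return hard 0/1 labels for each row of X."""
    return (X @ _secret_w > 0).astype(float)

# Collect n query points and the labels the black box returns for them.
n, d = 500, 3
X = rng.normal(size=(n, d))
y = black_box(X)

# Fit a logistic-regression surrogate on (X, y) by gradient descent.
w = np.zeros(d)
for _ in range(500):
    p = 1.0 / (1.0 + np.exp(-(X @ w)))   # surrogate probabilities
    w -= 0.5 * X.T @ (p - y) / n         # gradient of the mean log-loss

# Measure how often surrogate and black box agree on fresh queries.
X_test = rng.normal(size=(1000, d))
surrogate_labels = (X_test @ w > 0).astype(float)
agreement = float(np.mean(surrogate_labels == black_box(X_test)))
```

Once the surrogate agrees with the black box on most queries, gradient-based attacks can be run against the surrogate instead of the inaccessible model.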

3.3. Interpretation of Neural Networks is Fragile

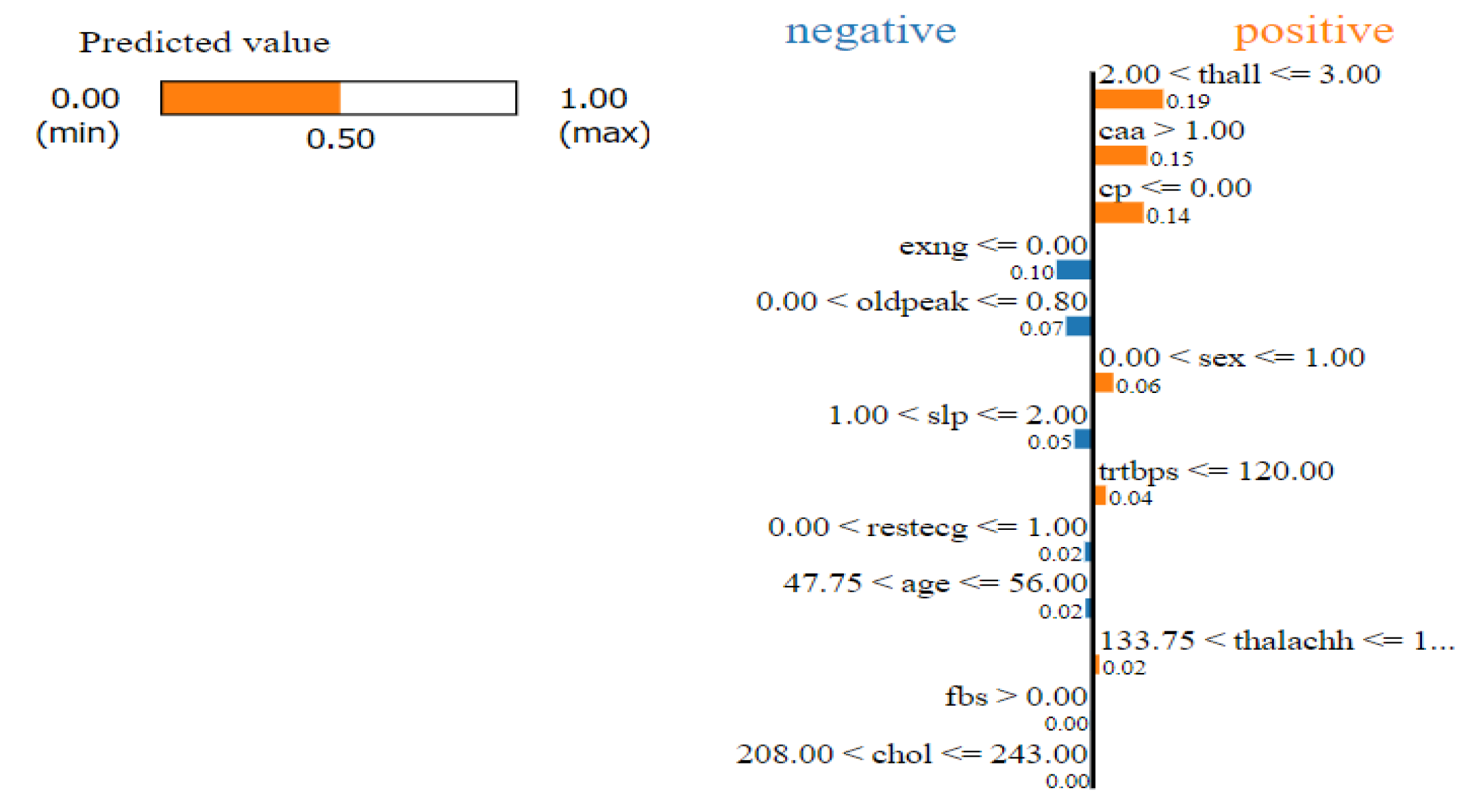

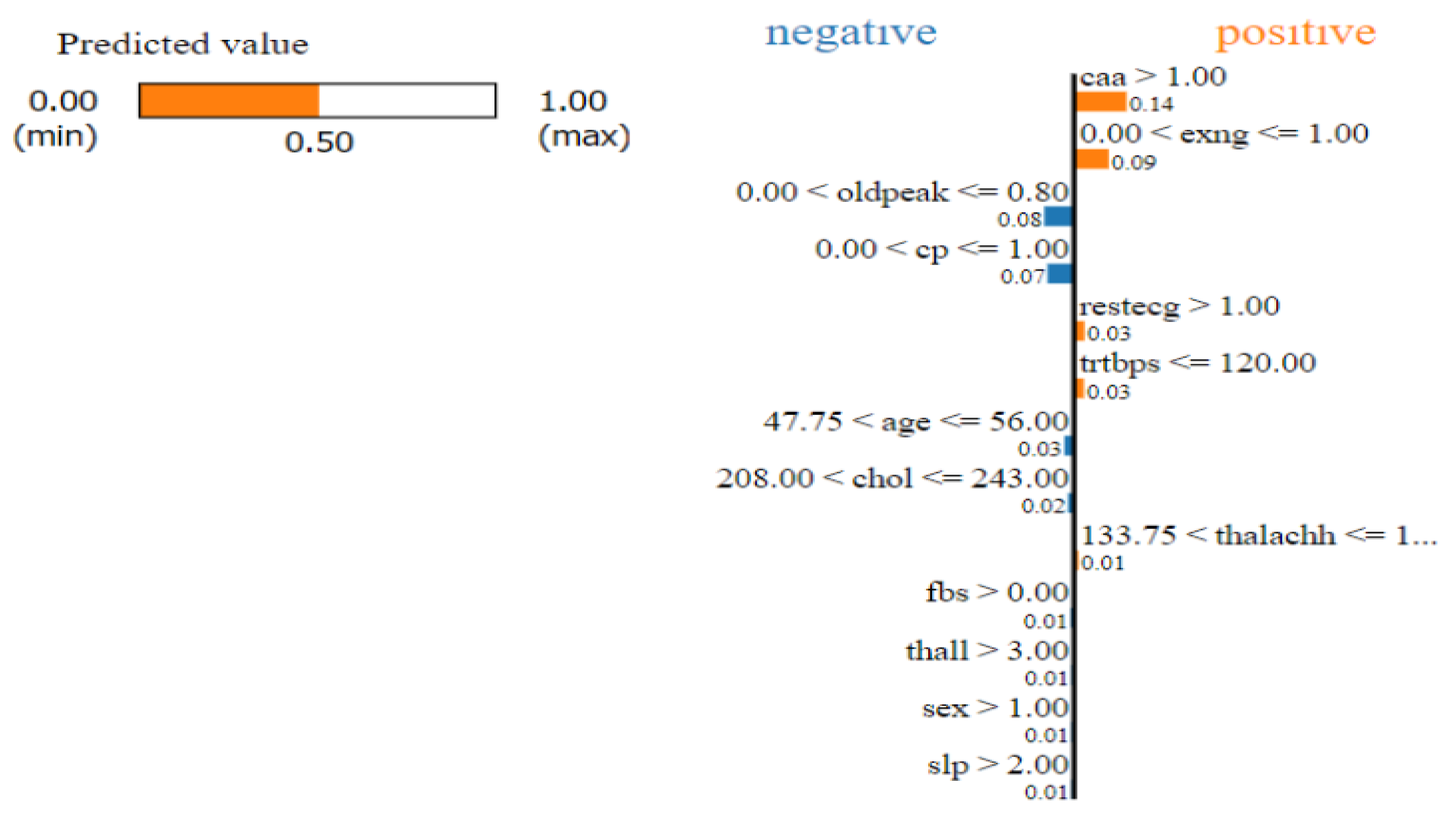

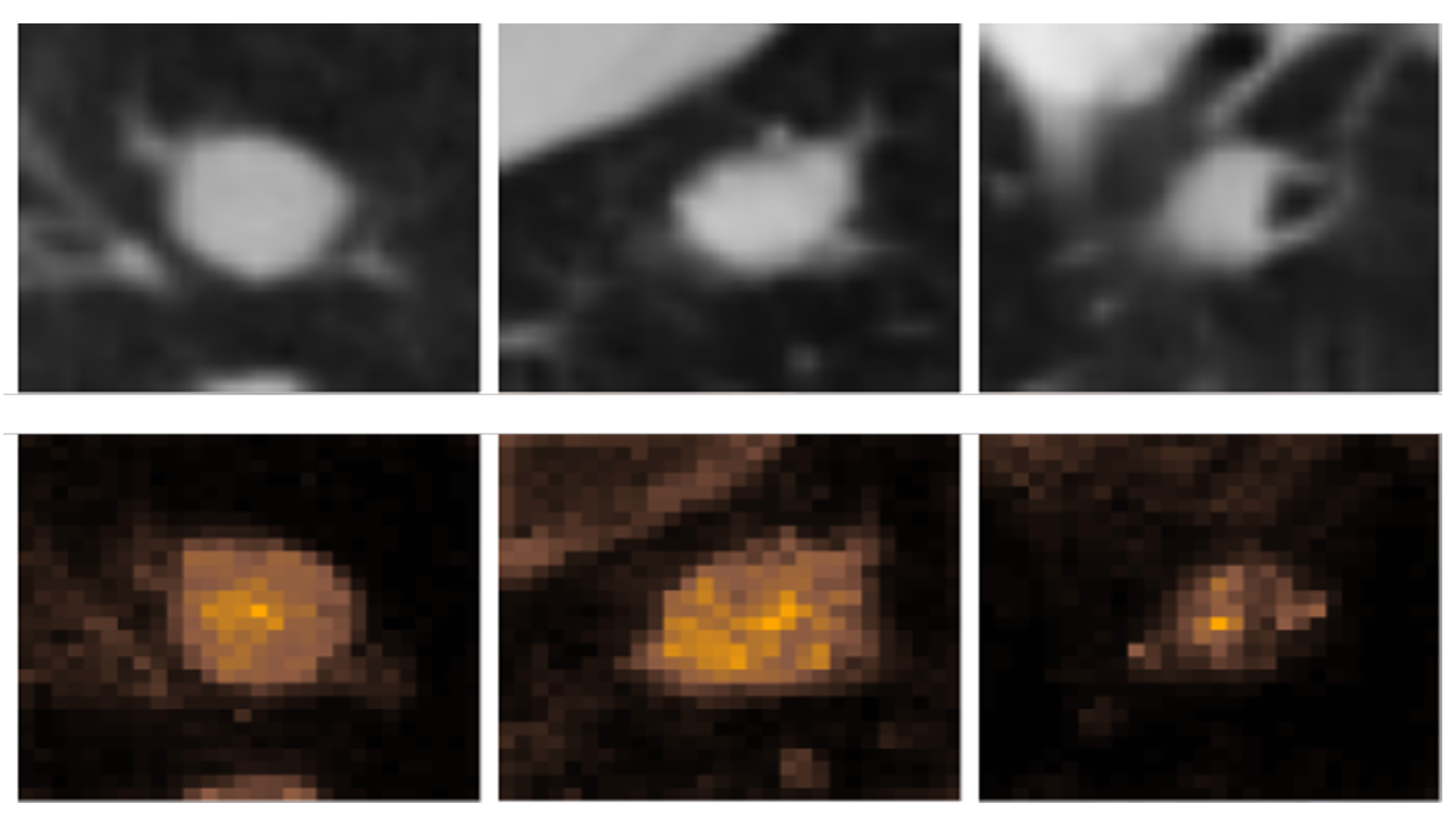

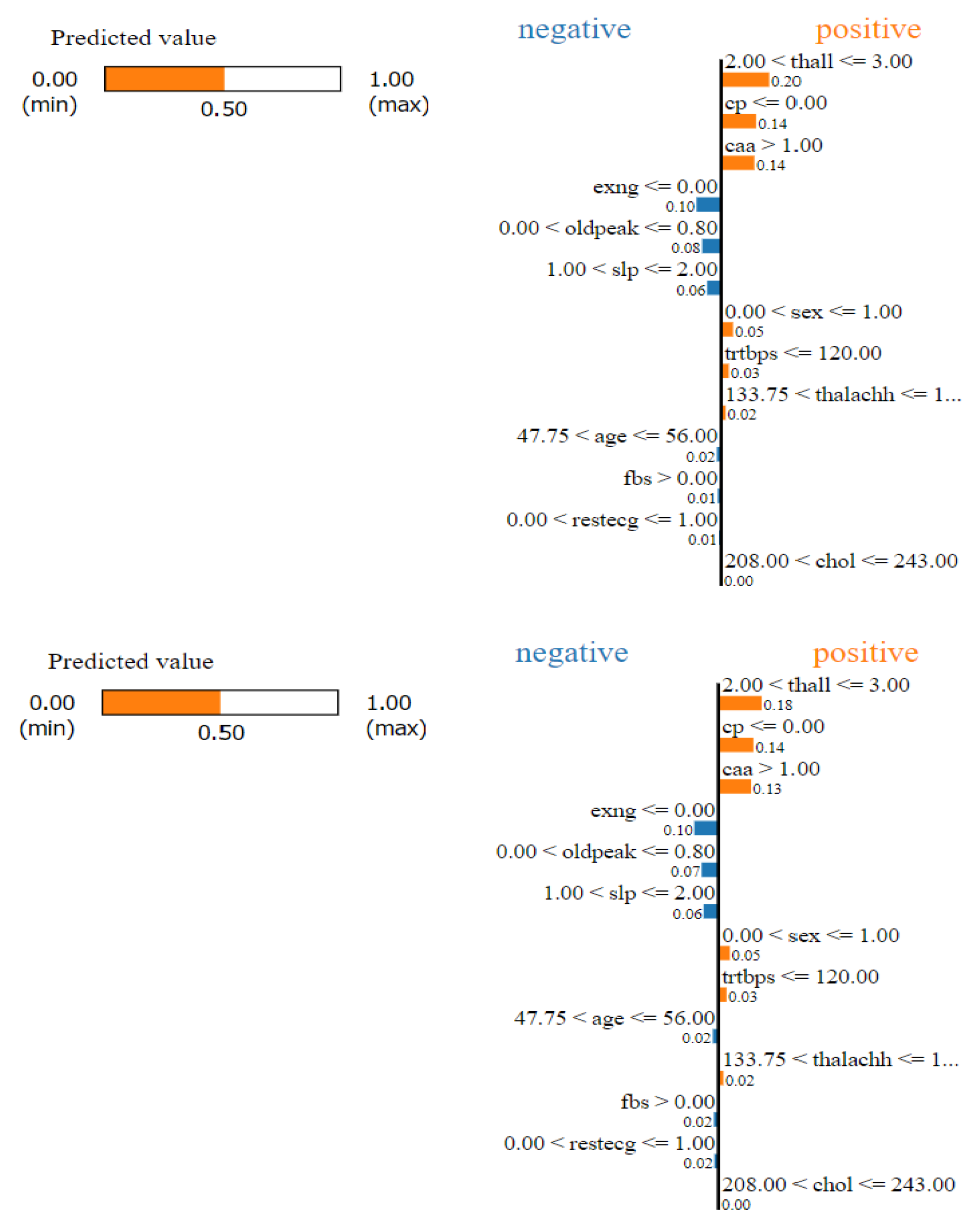

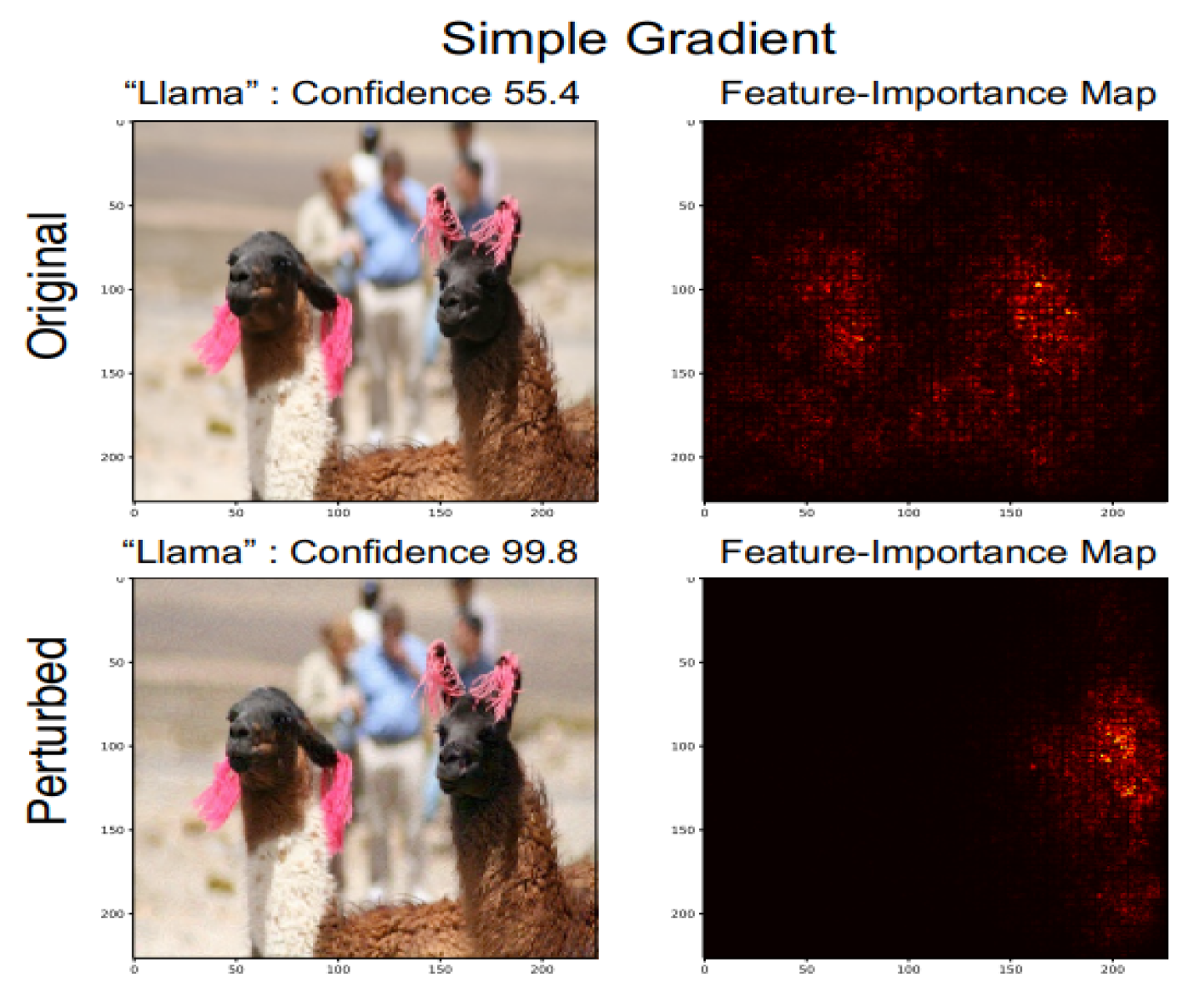

The authors of [4] introduce adversarial perturbations to neural network interpretation. They call the interpretation of a neural network fragile if seemingly indistinguishable images with the same label are given different interpretations (see Figure 5). In this section we describe how the perturbations are made.

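Consider a neural network $N$, a test data point $x_t$ and the interpretation $I(x_t; N)$. The goal is to perturb the data so that the change is imperceptible but the interpretation changes. Formally, this can be written as

$$\arg\max_{\delta} \; D\big(I(x_t; N),\, I(x_t + \delta; N)\big) \quad \text{subject to} \quad \|\delta\|_\infty \le \epsilon, \quad \text{Prediction}(x_t + \delta; N) = \text{Prediction}(x_t; N),$$

where $D$ measures the dissimilarity between two interpretations and $\epsilon$ bounds the size of the perturbation $\delta$.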

The authors of [4] discuss three kinds of perturbations.

The first is a random sign perturbation, in which each pixel is perturbed by $\pm\epsilon$ for a small perturbation size $\epsilon$. Taking this as a baseline, the authors compare it with two adversarial attacks: iterative attacks against feature importance methods and a gradient sign attack against influence functions.
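The random baseline is simple enough to write down directly. A minimal sketch, assuming pixel values in [0, 1] and a hypothetical budget of 8/255 (neither value is taken from the source):

```python
import numpy as np

def random_sign_perturbation(image, eps, rng):
    """Shift every pixel by +eps or -eps at random, then clip to [0, 1]."""
    signs = rng.choice([-1.0, 1.0], size=image.shape)
    return np.clip(image + eps * signs, 0.0, 1.0)

rng = np.random.default_rng(0)
image = rng.uniform(0.2, 0.8, size=(4, 4))   # toy image, far from 0 and 1
eps = 8 / 255                                # assumed perturbation size
perturbed = random_sign_perturbation(image, eps, rng)
```

Because this perturbation ignores the model and the interpreter entirely, it measures how much an interpretation changes under noise of the same size as the adversarial attacks.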

The former attacks feature importance methods by taking a series of steps in the direction that maximizes a differentiable dissimilarity function between the interpretations of the original and the perturbed input. It comes in three variants. The first perturbs the feature importance map by decreasing the importance of the most important features. The second, for visual data, creates the maximum spatial displacement of the center of mass of the feature importance map. The third increases the concentration of feature importance scores in some predefined region.
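The iterative scheme can be sketched on a toy model. Everything concrete below is an assumption made for illustration: the score function `sum(tanh(w * x))`, its normalized absolute gradient as the importance map, the finite-difference gradient, and the step sizes. The sketch implements the first variant (lowering the importance of the originally top-ranked features) and shows the center-of-mass computation used by the second; it does not check that the model's prediction stays unchanged.

```python
import numpy as np

w = np.array([3.0, 2.0, 1.0, 0.5])   # hypothetical toy model weights

def saliency(x):
    """Normalized |d score / d x| for the toy score sum(tanh(w * x))."""
    g = np.abs(w * (1.0 - np.tanh(w * x) ** 2))
    return g / g.sum()

def topk_dissimilarity(x, top_idx):
    """Grows as the originally top-ranked features lose importance."""
    return -saliency(x)[top_idx].sum()

def center_of_mass(importance_map):
    """Importance-weighted mean (row, col) coordinate of a 2-D map."""
    rows, cols = np.indices(importance_map.shape)
    total = importance_map.sum()
    return np.array([(rows * importance_map).sum(),
                     (cols * importance_map).sum()]) / total

def numerical_grad(fn, x, step=1e-5):
    """Central finite-difference gradient of a scalar function."""
    grad = np.zeros_like(x)
    for i in range(x.size):
        e = np.zeros_like(x)
        e[i] = step
        grad[i] = (fn(x + e) - fn(x - e)) / (2 * step)
    return grad

def iterative_attack(x0, top_idx, eps=0.1, alpha=0.01, steps=50):
    """Signed gradient ascent on the dissimilarity, kept within eps of x0."""
    x = x0.copy()
    for _ in range(steps):
        g = numerical_grad(lambda z: topk_dissimilarity(z, top_idx), x)
        x = np.clip(x + alpha * np.sign(g), x0 - eps, x0 + eps)
    return x

x0 = np.array([0.1, 0.1, 0.1, 0.1])
top_idx = np.argsort(saliency(x0))[-2:]      # two most important features
x_adv = iterative_attack(x0, top_idx)
share_before = saliency(x0)[top_idx].sum()
share_after = saliency(x_adv)[top_idx].sum()
```

In this toy setting the share of importance held by the two originally dominant features drops after the attack, while every coordinate of `x_adv` stays within `eps` of `x0`.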

The latter attack does not rely on an iterative approach. The authors linearize the equation for the influence function around the current inputs and parameters; constraining the $\ell_\infty$ norm of the perturbation to $\epsilon$ then gives an optimal single-step perturbation,

$$\delta = \epsilon \, \text{sign}\big(\nabla_{x_t} I(z_t, z_i)\big),$$

where $I(z_t, z_i)$ denotes the influence of training point $z_i$ on the test point $z_t = (x_t, y_t)$.

The attack then applies a negative sign to this perturbation in order to decrease the influence of the three training images that were most influential for the original test image.
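The single-step form follows from a standard fact: a linear function of the perturbation, under an $\ell_\infty$ budget, is maximized by moving every coordinate by the full budget in the direction of the gradient's sign. A minimal sketch, where the gradient vector `grad` and the budget `eps` are hypothetical numbers rather than values computed from a real influence function:

```python
import numpy as np

def gradient_sign_step(grad, eps, decrease=False):
    """Single-step perturbation eps * sign(grad); negated to decrease."""
    step = eps * np.sign(grad)
    return -step if decrease else step

# Hypothetical gradient of an influence score w.r.t. the test input.
grad = np.array([0.3, -1.2, 0.0, 2.0])
eps = 0.05

# Negative sign: push the influence of the chosen training point down.
delta = gradient_sign_step(grad, eps, decrease=True)

# First-order change in influence predicted by the linearization.
first_order_change = float(grad @ delta)
```

Under the linearization, no other perturbation with the same $\ell_\infty$ norm lowers the influence further, since the predicted change equals `-eps` times the $\ell_1$ norm of the gradient.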