Submitted: 08 July 2024

Posted: 09 July 2024


Abstract

Keywords:

1. Introduction

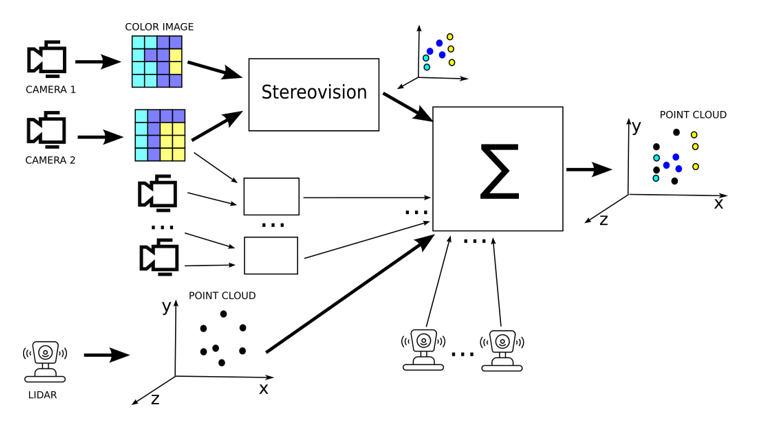

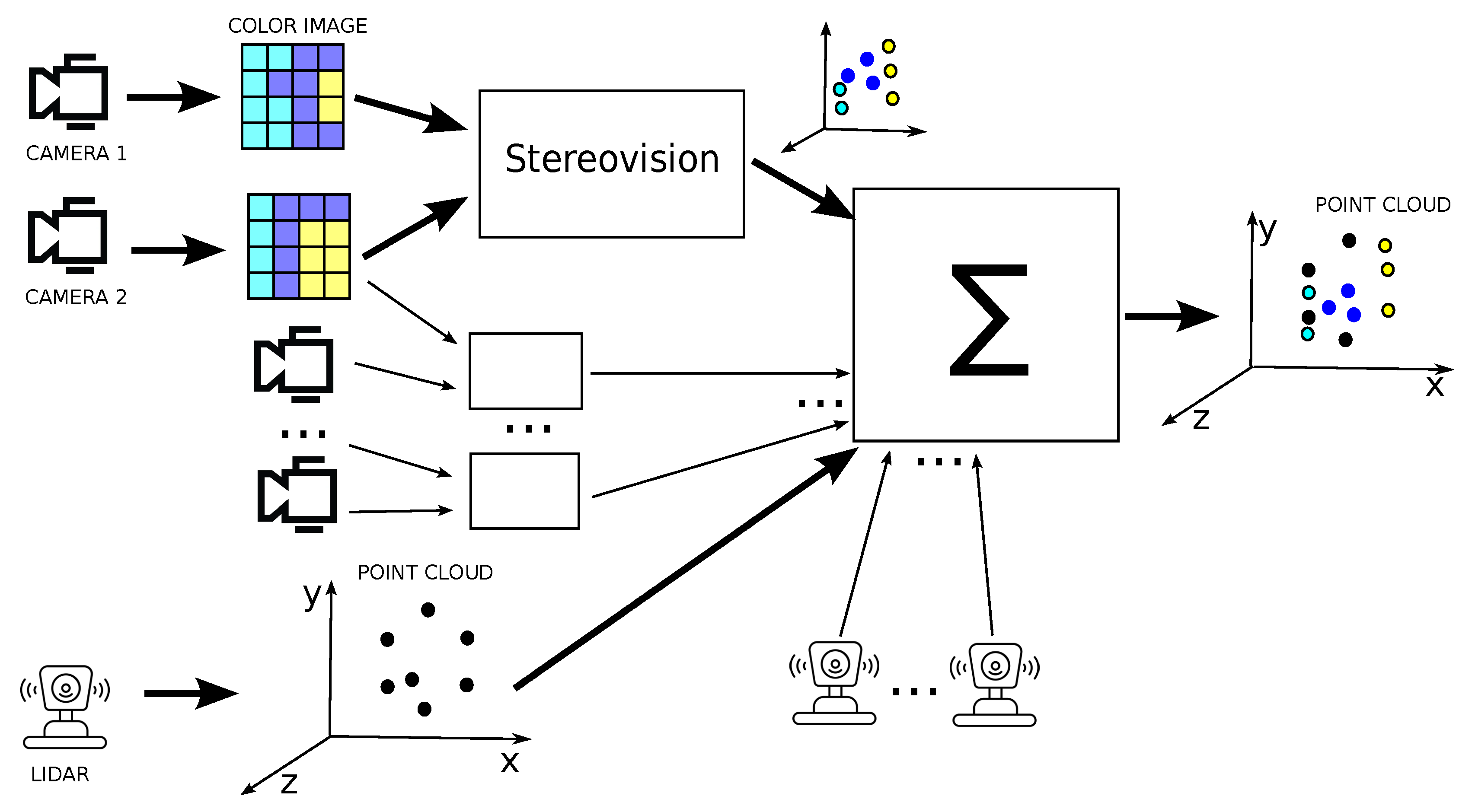

- point cloud densification – creation of point clouds based on pairs of stereovision images and camera calibration data, then combining the point clouds;

- colouring of lidar point clouds using colours from camera images;

- projection of 3D lidar data onto 2D, then fusion of the resulting 2D images.

- Point Cloud Library (PCL) [12] - a popular, free and open-source library for point cloud processing. Its functionality is focused on laser scanner data, although it also contains modules for processing stereo vision data. PCL is a C++ library; unofficial Python bindings are also available, e.g., [13], which expose part of its functionality to Python.

- OpenCV [14] - one of the most popular open-source libraries for processing images and extracting information from them. It also includes algorithms for estimating the shapes of objects in two and three dimensions from images taken by one or more cameras, algorithms for computing disparity maps from stereovision image pairs, and algorithms for 3D scene reconstruction. OpenCV is a C++ library with Python bindings.
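The colouring approach listed above (assigning camera colours to lidar points) can be illustrated with a pinhole-camera projection. This is a minimal sketch, not the authors' method: it assumes the lidar points have already been transformed into the camera frame using the lidar-camera extrinsic calibration (not shown), that lens distortion has been removed, and that the intrinsics are given as `fx`, `fy`, `cx`, `cy`. The function name `colour_points` is hypothetical.

```python
from typing import List, Optional, Sequence, Tuple

Color = Tuple[int, int, int]


def colour_points(
    points_cam: Sequence[Tuple[float, float, float]],
    image: List[List[Color]],
    fx: float, fy: float, cx: float, cy: float,
) -> List[Optional[Color]]:
    """Give each 3D point (already in the camera frame) the colour of the pixel it projects to.

    Assumption: undistorted pinhole camera with focal lengths fx, fy and principal point (cx, cy),
    all in pixels. Points that cannot be seen get None.
    """
    height, width = len(image), len(image[0])
    colours: List[Optional[Color]] = []
    for x, y, z in points_cam:
        if z <= 0:  # point lies behind the camera
            colours.append(None)
            continue
        u = int(round(fx * x / z + cx))  # image column
        v = int(round(fy * y / z + cy))  # image row
        if 0 <= u < width and 0 <= v < height:
            colours.append(image[v][u])
        else:
            colours.append(None)  # projects outside the image
    return colours
```

For example, with a 3×3 image and `fx = fy = 1`, `cx = cy = 1`, the point `(0, 0, 1)` projects onto the centre pixel `image[1][1]`. A real pipeline would also handle occlusion (several lidar points projecting to the same pixel), which this sketch ignores.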

2. Materials and Methods

2.1. New Algorithm for Stereovision

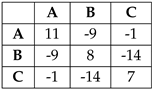

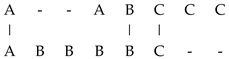

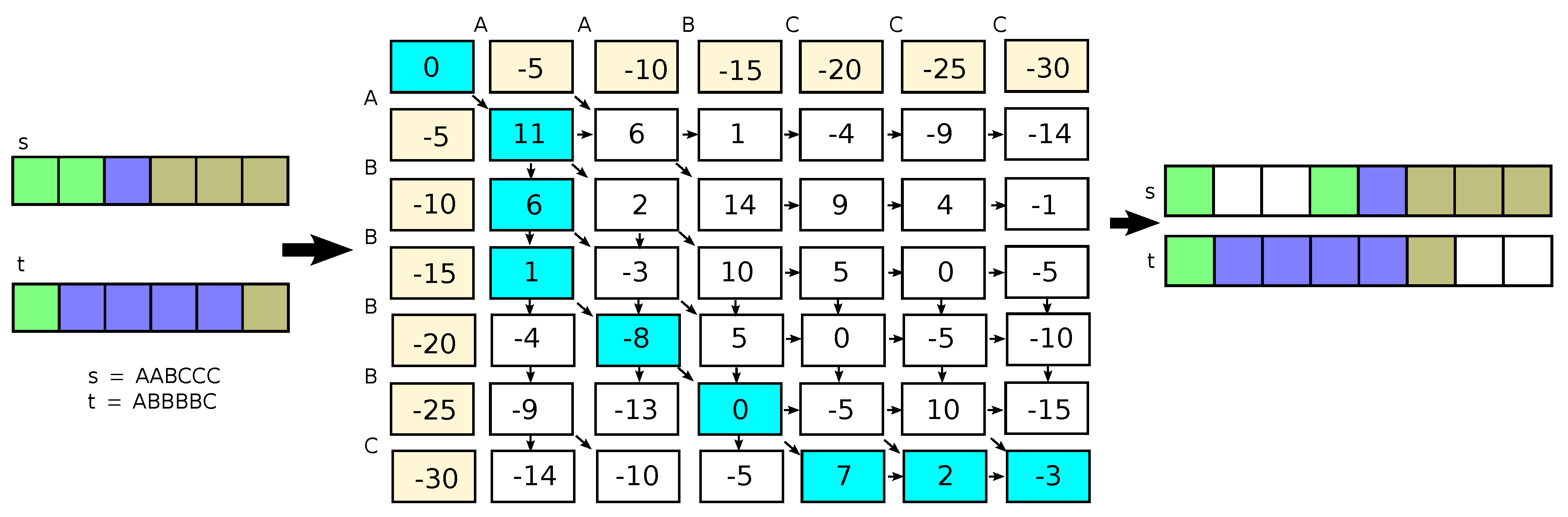

, and the penalthy , the results is

, and the penalthy , the results is  or matched pixels are . The from Equation (3), and results are depicted in Figure 2.

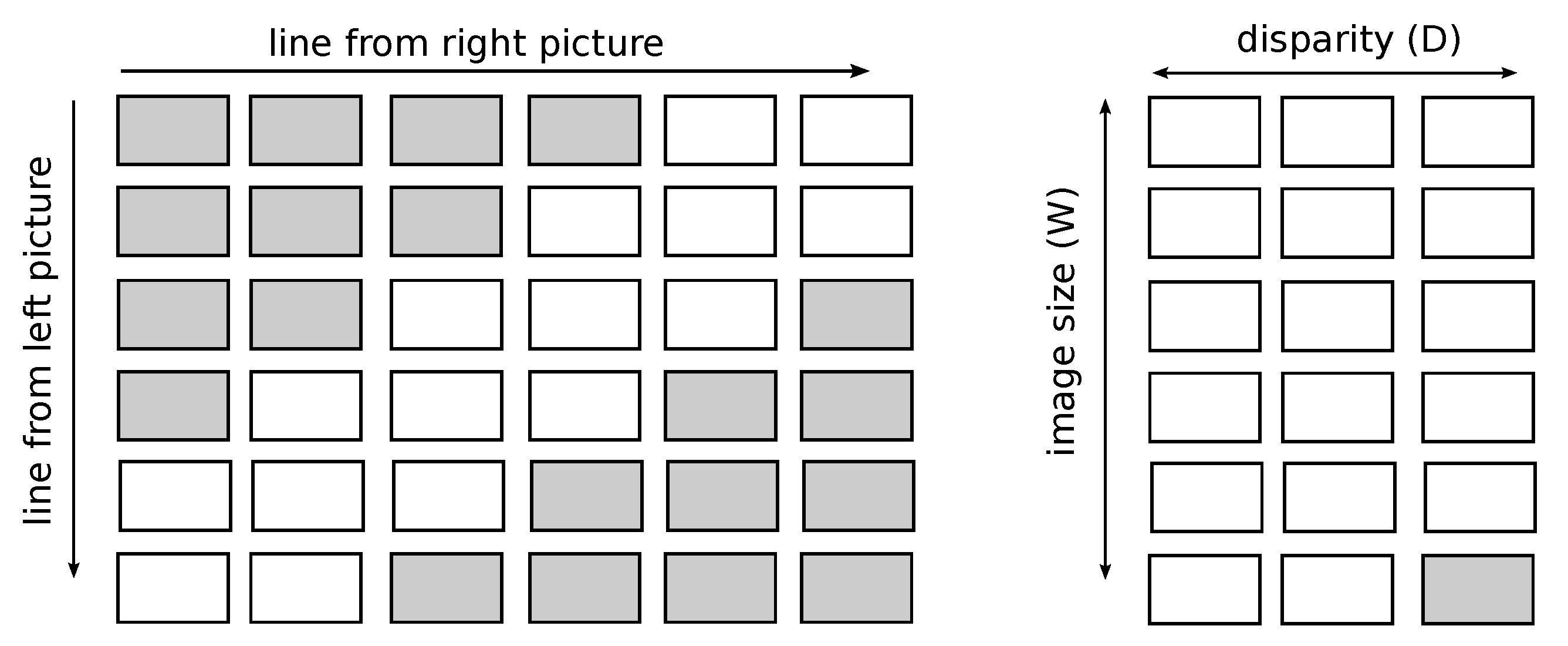

or matched pixels are . The from Equation (3), and results are depicted in Figure 2.2.1.1. Disparity Map Calculation Based on Matching

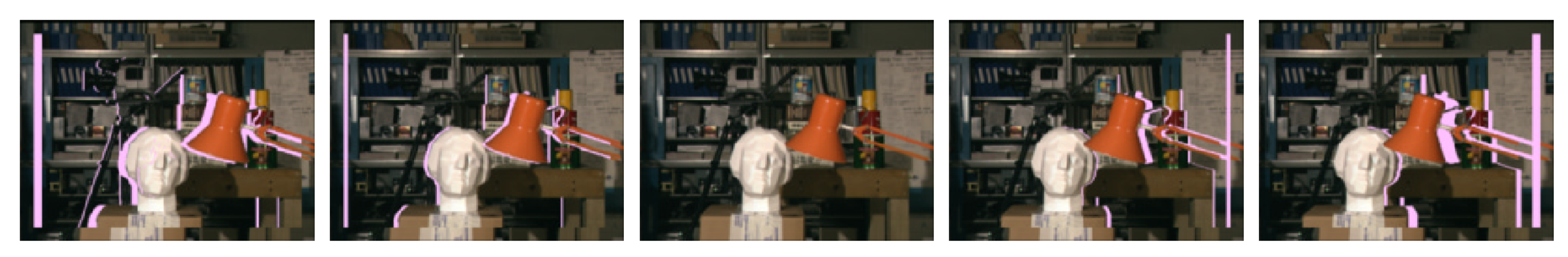
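Matching based on a penalty for unmatched pixels can be sketched as a Needleman-Wunsch global alignment [10] of corresponding rows of a rectified image pair. This is a minimal sketch under stated assumptions, not the authors' implementation: the similarity score (negative absolute intensity difference), the gap penalty `gap`, and the function name `match_scanline` are hypothetical choices for illustration. The disparity of a matched pixel is its column in the left row minus the column of its partner in the right row; `None` marks left pixels left unmatched (for example, occluded ones).

```python
from typing import List, Optional


def match_scanline(left: List[int], right: List[int], gap: float = 10.0) -> List[Optional[int]]:
    """Align two rectified scanlines globally and return a disparity per left pixel.

    Assumed scoring: matching pixels a and b scores -|a - b|; leaving a pixel unmatched costs `gap`.
    """
    n, m = len(left), len(right)
    # score[i][j] = best score for aligning left[:i] with right[:j]
    score = [[0.0] * (m + 1) for _ in range(n + 1)]
    for i in range(1, n + 1):
        score[i][0] = -gap * i
    for j in range(1, m + 1):
        score[0][j] = -gap * j
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            diag = score[i - 1][j - 1] - abs(left[i - 1] - right[j - 1])  # match pixels
            up = score[i - 1][j] - gap     # left pixel unmatched
            side = score[i][j - 1] - gap   # right pixel unmatched
            score[i][j] = max(diag, up, side)

    # Trace an optimal alignment back from the bottom-right cell
    disparity: List[Optional[int]] = [None] * n
    i, j = n, m
    while i > 0 and j > 0:
        if score[i][j] == score[i - 1][j - 1] - abs(left[i - 1] - right[j - 1]):
            disparity[i - 1] = (i - 1) - (j - 1)  # x_left - x_right
            i, j = i - 1, j - 1
        elif score[i][j] == score[i - 1][j] - gap:
            i -= 1
        else:
            j -= 1
    return disparity
```

For instance, if a bright feature at columns 2-3 of the left row appears one column further left in the right row, the alignment gives those pixels a disparity of 1. A full disparity map would run this for every row of the image pair.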

2.1.2. Improving the Quality of Matching through Edge Detection

2.1.3. Performance Improvement Reducing Length of Matched Sequences
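Why shorter matched sequences help follows from the cost of dynamic-programming alignment. Assuming rows are matched with a Needleman-Wunsch-style algorithm (cf. [10]), aligning two rows of width W fills roughly a W × W table. If, as a hypothetical illustration, each row is split into k segments of length W/k that are aligned independently (which forbids matches from crossing segment boundaries), the work per row pair becomes:

$$
C_{\text{full}} \approx W^{2}, \qquad
C_{\text{split}} \approx k\left(\frac{W}{k}\right)^{2} = \frac{W^{2}}{k}.
$$

For example, with W = 1000 and k = 10, the table shrinks from about 1,000,000 to about 100,000 cells per row pair, a tenfold reduction.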

2.1.4. Parallel Algorithm for 2D Images Matching
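In a rectified pair, corresponding points lie on the same image row, so each pair of rows can be matched independently; this makes row-level parallelism a natural decomposition. The sketch below assumes that decomposition and uses Python's `concurrent.futures`; the names `match_rows_parallel` and the toy matcher `peak_shift` in the usage note are hypothetical, not the authors' implementation.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Sequence, TypeVar

Row = Sequence[int]
T = TypeVar("T")


def match_rows_parallel(
    left: Sequence[Row],
    right: Sequence[Row],
    match_row: Callable[[Row, Row], T],
    workers: int = 4,
) -> List[T]:
    """Apply match_row to every pair of corresponding rows concurrently.

    Each task needs only its own two rows, so no synchronisation between tasks is required.
    Executor.map returns results in input order, so result k belongs to row k.
    """
    if len(left) != len(right):
        raise ValueError("images must have the same number of rows")
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(match_row, left, right))
```

Usage with a toy matcher that returns the shift of each row's brightest pixel:

```python
def peak_shift(l, r):
    return l.index(max(l)) - r.index(max(r))

match_rows_parallel([[0, 5, 0, 0], [0, 0, 7, 0]], [[5, 0, 0, 0], [0, 7, 0, 0]], peak_shift)
```

In the standard CPython build, threads do not execute pure-Python code in parallel, so for a CPU-bound pure-Python matcher `ProcessPoolExecutor` (same `map` interface, module-level functions required) is the more appropriate choice; threads pay off when the per-row matcher runs in native code that releases the GIL.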

3. Results

3.1. Datasets

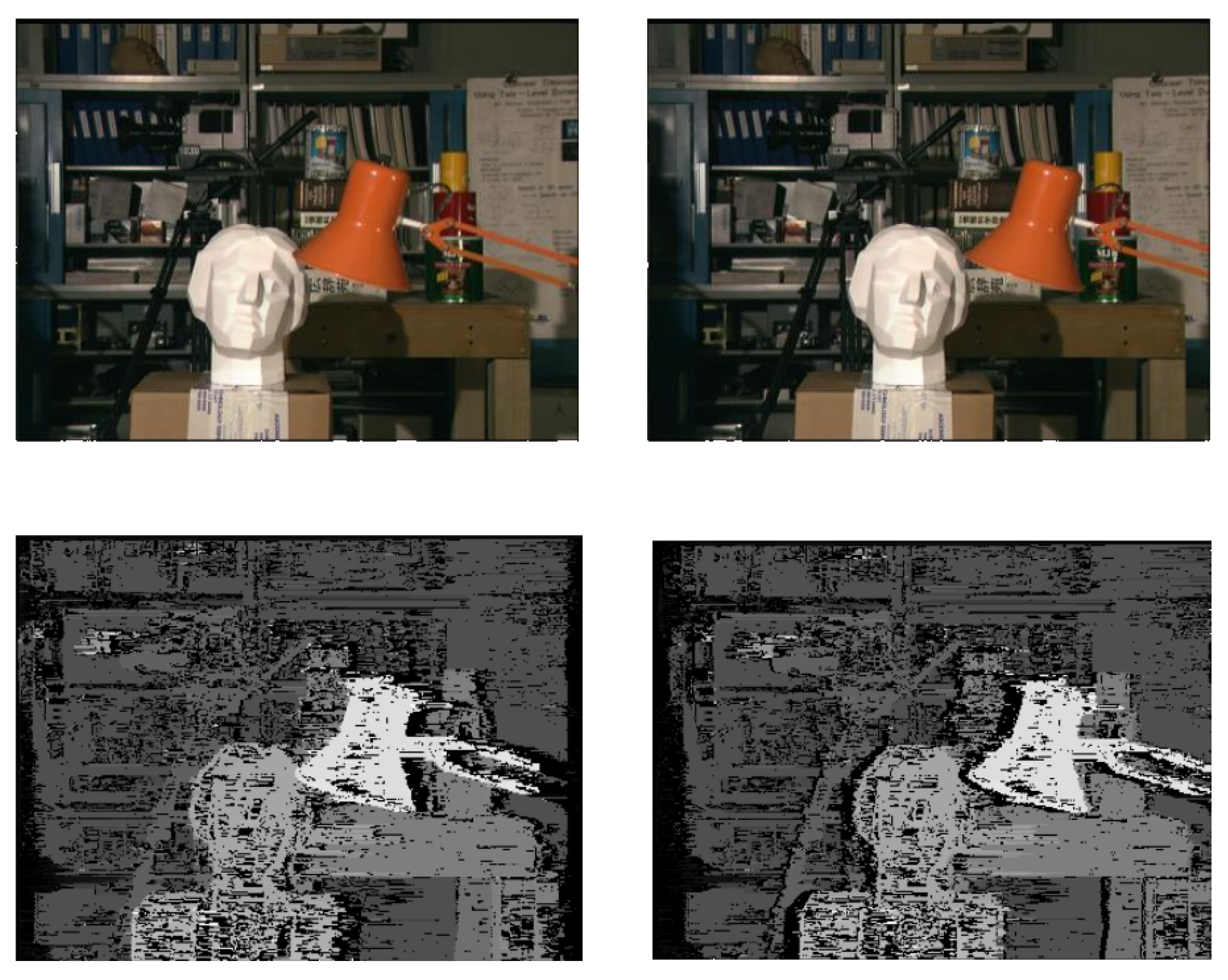

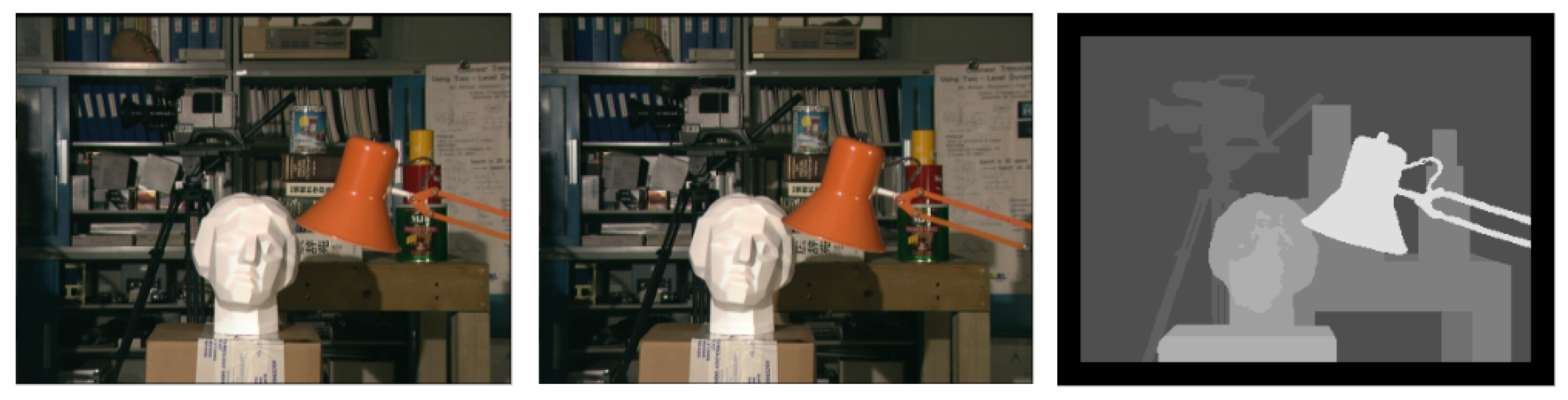

3.1.1. University of Tsukuba ‘Head and Lamp’

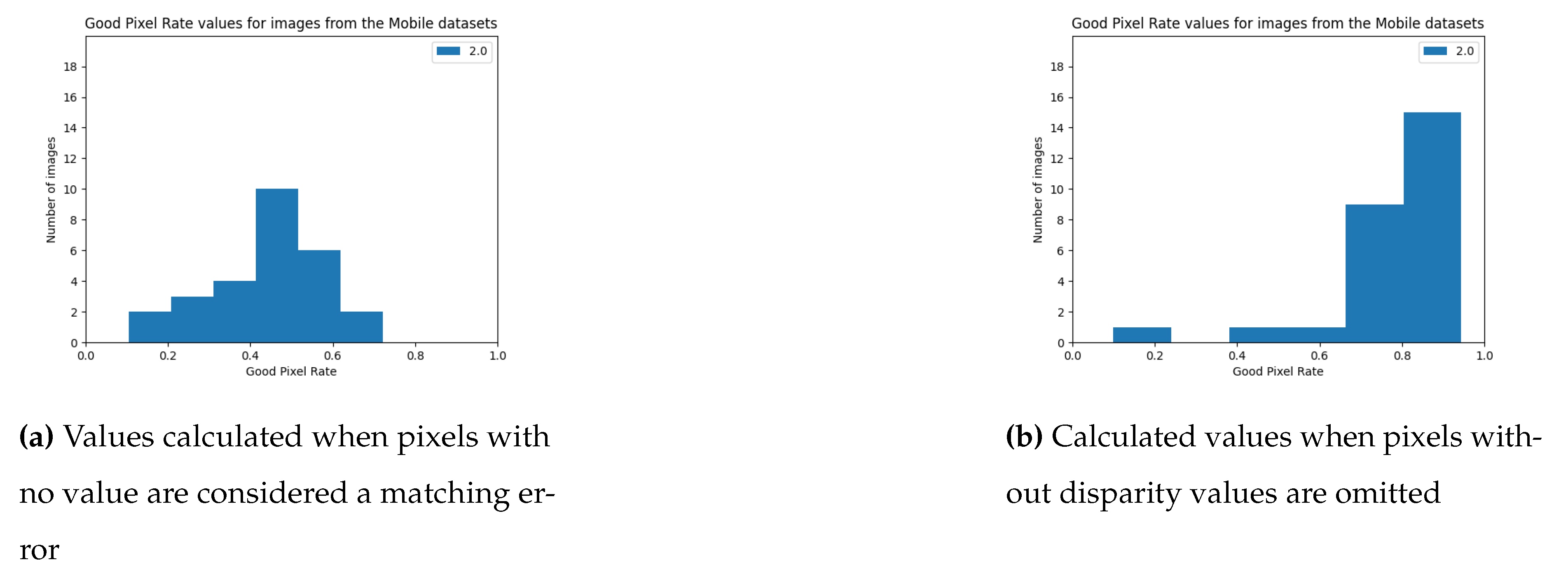

3.1.2. Middlebury 2021 Mobile Datasets

3.1.3. KITTI

3.2. Quality Evaluation Method

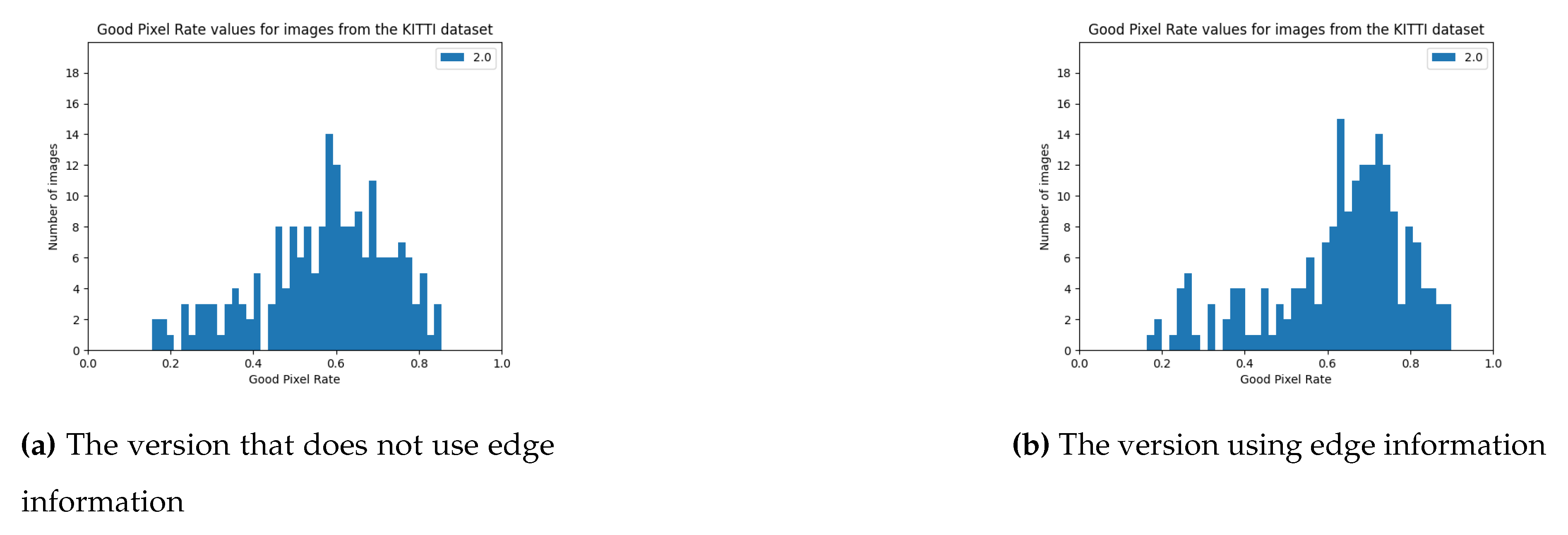

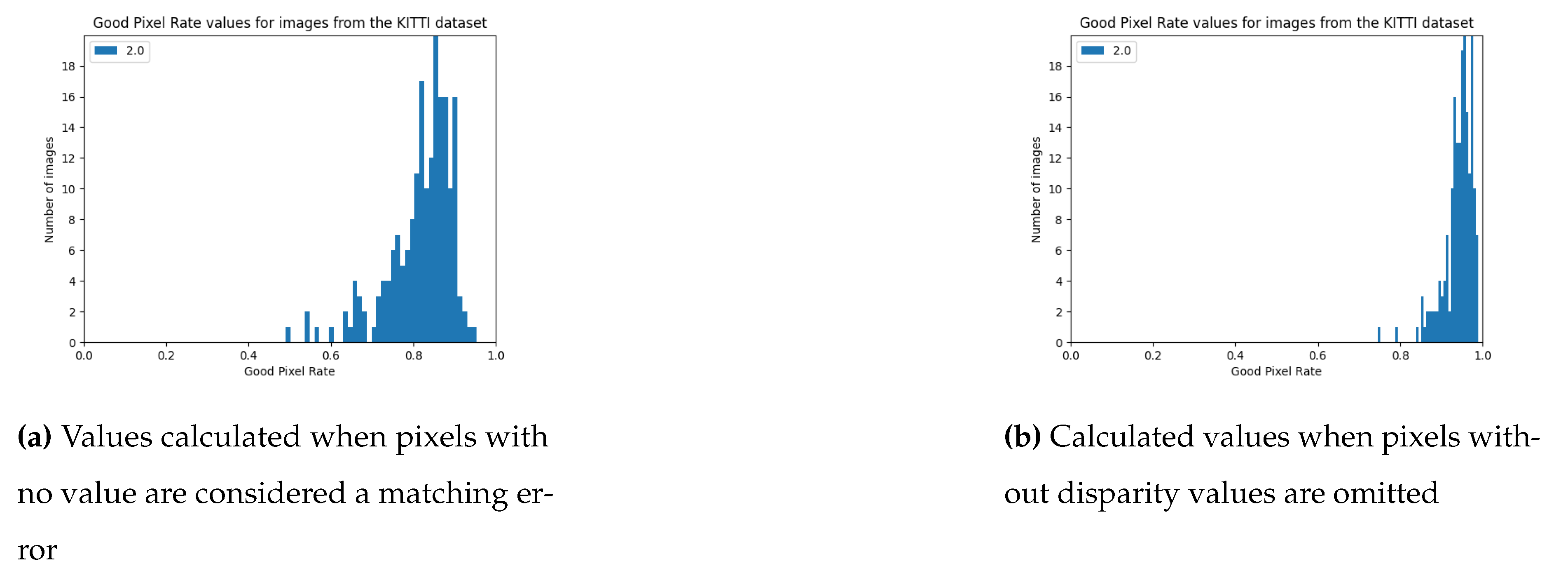

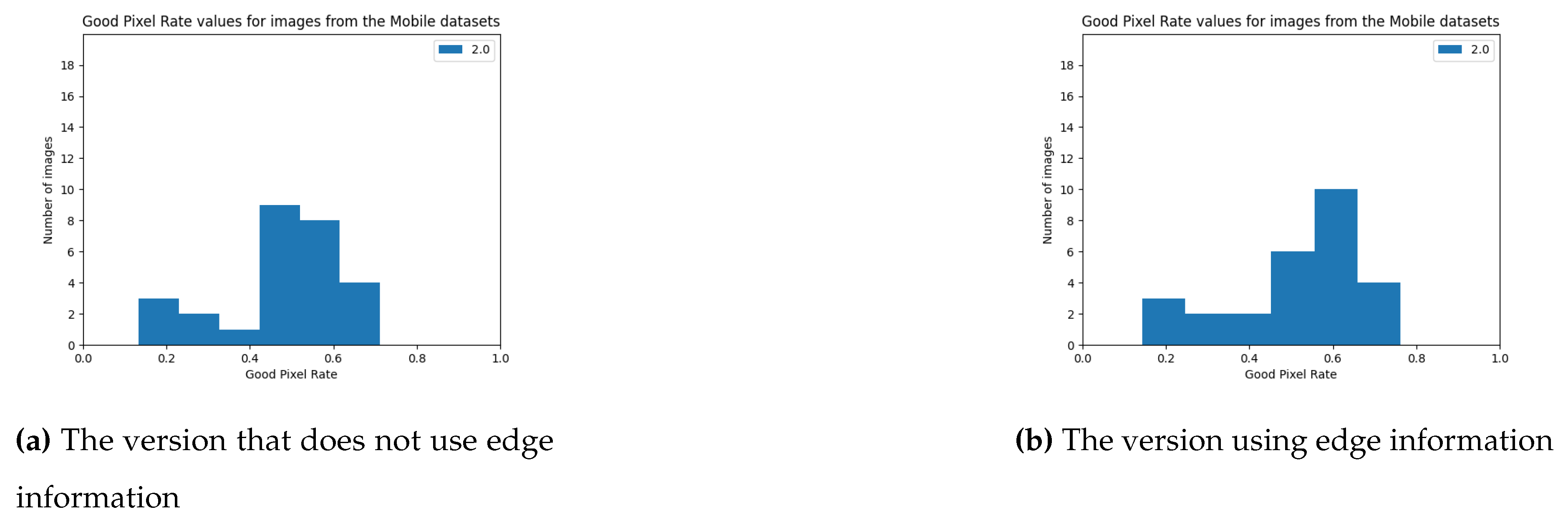
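One possible way to compare an estimated disparity map with ground truth, in the spirit of the bad-pixel criterion of Scharstein and Szeliski [15], is to report the mean absolute disparity error together with the fraction of pixels whose error stays within a few thresholds. This is a sketch under assumptions: the thresholds (1, 2 and 3 pixels), the key names and the function name `disparity_errors` are hypothetical, and the option `valid_only` only illustrates how pixels without an estimate can either be counted as errors or excluded from the evaluation.

```python
from typing import Dict, Optional, Sequence


def disparity_errors(
    estimated: Sequence[Optional[float]],
    ground_truth: Sequence[Optional[float]],
    thresholds: Sequence[float] = (1.0, 2.0, 3.0),
    valid_only: bool = False,
) -> Dict[str, float]:
    """Compare a flattened disparity map with flattened ground truth.

    Pixels without ground truth (None) are always skipped. Pixels without an estimate (None)
    are skipped when valid_only=True and counted as wrong otherwise. The mean absolute error
    is taken over pixels that have both an estimate and ground truth.
    """
    abs_errors = []
    evaluated = 0
    within = [0] * len(thresholds)
    for est, gt in zip(estimated, ground_truth):
        if gt is None:
            continue
        if est is None:
            if not valid_only:
                evaluated += 1  # missing estimate counts as a failure
            continue
        evaluated += 1
        err = abs(est - gt)
        abs_errors.append(err)
        for k, t in enumerate(thresholds):
            if err <= t:
                within[k] += 1
    result = {"mae": sum(abs_errors) / len(abs_errors) if abs_errors else float("nan")}
    for t, count in zip(thresholds, within):
        result[f"within_{t:g}px"] = count / evaluated if evaluated else float("nan")
    return result
```

With this definition the mean error does not depend on `valid_only`, while the threshold fractions do, because only the denominator changes.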

3.3. Quality Tests

3.4. Method of Performance Evaluation

3.5. Performance Tests

4. Discussion

5. Summary

- creation of point clouds based on pairs of stereovision images and camera calibration data,

- combining several point clouds together,

- colouring of lidar point clouds based on camera images,
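Creating a point cloud from a stereo pair and calibration data rests on standard rectified-stereo geometry: depth is inversely proportional to disparity, Z = fB/d, and the other coordinates follow from the pinhole model, X = (u − c_x)Z/f and Y = (v − c_y)Z/f. A minimal sketch, assuming rectified images, a single focal length `f` in pixels (square pixels), a baseline `baseline` in metres and a principal point (`cx`, `cy`); the function name `disparity_to_points` is hypothetical.

```python
from typing import List, Optional, Sequence, Tuple

Point = Tuple[float, float, float]


def disparity_to_points(
    disparity: Sequence[Sequence[Optional[float]]],
    f: float, baseline: float, cx: float, cy: float,
) -> List[Point]:
    """Back-project a disparity map into 3D points in the left-camera frame.

    Z = f * B / d, X = (u - cx) * Z / f, Y = (v - cy) * Z / f,
    where (u, v) is the pixel column and row. With f in pixels and B in metres,
    the coordinates come out in metres.
    """
    points: List[Point] = []
    for v, row in enumerate(disparity):
        for u, d in enumerate(row):
            if d is None or d <= 0:  # unmatched pixel, or point at infinity
                continue
            z = f * baseline / d
            points.append(((u - cx) * z / f, (v - cy) * z / f, z))
    return points
```

For example, with f = 100 px and B = 0.5 m, a disparity of 10 px corresponds to a depth of 5 m and a disparity of 5 px to 10 m, which shows why depth resolution degrades quickly for distant points.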

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Shaukat, A.; Blacker, P.C.; Spiteri, C.; Gao, Y. Towards camera-LIDAR fusion-based terrain modelling for planetary surfaces: Review and analysis. Sensors 2016, 16, 1952.

- Ounoughi, C.; Yahia, S.B. Data fusion for ITS: A systematic literature review. Information Fusion 2023, 89, 267–291.

- Kolar, P.; Benavidez, P.; Jamshidi, M. Survey of datafusion techniques for laser and vision based sensor integration for autonomous navigation. Sensors 2020, 20, 2180.

- Singh, S.; Singh, H.; Bueno, G.; Deniz, O.; Singh, S.; Monga, H.; Hrisheekesha, P.; Pedraza, A. A review of image fusion: Methods, applications and performance metrics. Digital Signal Processing 2023, 104020.

- Lin, X.; Zhang, J. Segmentation-based filtering of airborne LiDAR point clouds by progressive densification of terrain segments. Remote Sensing 2014, 6, 1294–1326.

- Li, X.; Liu, C.; Wang, Z.; Xie, X.; Li, D.; Xu, L. Airborne LiDAR: state-of-the-art of system design, technology and application. Measurement Science and Technology 2020, 32, 032002.

- Heyduk, A. Metody stereowizyjne w analizie składu ziarnowego [Stereovision methods in particle size analysis]. Systemy Wspomagania w Inżynierii Produkcji 2017, 6, iss. 2.

- Lazaros, N.; Sirakoulis, G.C.; Gasteratos, A. Review of stereo vision algorithms: from software to hardware. International Journal of Optomechatronics 2008, 2, 435–462.

- McCarl, B.A.; Spreen, T.H. Applied mathematical programming using algebraic systems. Cambridge, MA, 1997.

- Needleman, S.B.; Wunsch, C.D. A general method applicable to the search for similarities in the amino acid sequence of two proteins. Journal of Molecular Biology 1970, 48, 443–453.

- Tippetts, B.; Lee, D.J.; Lillywhite, K.; Archibald, J. Review of stereo vision algorithms and their suitability for resource-limited systems. Journal of Real-Time Image Processing 2016, 11, 5–25.

- Rusu, R.B.; Cousins, S. 3D is here: Point Cloud Library (PCL). IEEE International Conference on Robotics and Automation (ICRA); IEEE: Shanghai, China, 2011.

- Stowers, J. Python Bindings to the Point Cloud Library. Last accessed 22.01.2024: https://strawlab.github.io/python-pcl/.

- Bradski, G. The OpenCV Library. Dr. Dobb’s Journal: Software Tools for the Professional Programmer 2000, 25, 120–123.

- Scharstein, D.; Szeliski, R. A taxonomy and evaluation of dense two-frame stereo correspondence algorithms. International Journal of Computer Vision 2002, 47, 7–42.

- Harris, C.; Stephens, M. A combined corner and edge detector. Alvey Vision Conference, Manchester, UK, 1988, Vol. 15, pp. 10–5244.

- Nakamura, Y.; Matsuura, T.; Satoh, K.; Ohta, Y. Occlusion detectable stereo-occlusion patterns in camera matrix. Proceedings CVPR IEEE Computer Society Conference on Computer Vision and Pattern Recognition. IEEE, 1996, pp. 371–378.

- Scharstein, D. Middlebury stereo page. Last accessed 04.01.2024: http://vision.middlebury.edu/stereo/data, 2001.

- Geiger, A. KITTI dataset page. Last accessed 09.01.2024: https://www.cvlibs.net/datasets/kitti/, 2012.

- Scharstein, D.; Hirschmüller, H.; Kitajima, Y.; Krathwohl, G.; Nešić, N.; Wang, X.; Westling, P. High-resolution stereo datasets with subpixel-accurate ground truth. Pattern Recognition: 36th German Conference, GCPR 2014, Münster, Germany, September 2-5, 2014, Proceedings 36. Springer, 2014, pp. 31–42.

- Geiger, A.; Lenz, P.; Urtasun, R. Are we ready for autonomous driving? The KITTI vision benchmark suite. 2012 IEEE Conference on Computer Vision and Pattern Recognition. IEEE, 2012, pp. 3354–3361.

- Hirschmüller, H. Stereo processing by semiglobal matching and mutual information. IEEE Transactions on Pattern Analysis and Machine Intelligence 2007, 30, 328–341.

- Cignoni, P.; Callieri, M.; Corsini, M.; Dellepiane, M.; Ganovelli, F.; Ranzuglia, G. MeshLab: an Open-Source Mesh Processing Tool. Eurographics Italian Chapter Conference; Scarano, V.; Chiara, R.D.; Erra, U., Eds. The Eurographics Association, 2008.

- OpenPCDet Development Team. OpenPCDet: An Open-source Toolbox for 3D Object Detection from Point Clouds. https://github.com/open-mmlab/OpenPCDet, 2020.

| Algorithm | | | | |
|---|---|---|---|---|
| Stereo PCD (with edges) | 16.49 ± 12.71 | 0.51 ± 0.16 | 0.63 ± 0.16 | 0.72 ± 0.16 |
| Stereo PCD (without edges) | 17.12 ± 10.07 | 0.45 ± 0.16 | 0.57 ± 0.16 | 0.67 ± 0.15 |
| OpenCV SGBM (all) | 3.31 ± 2.56 | 0.75 ± 0.09 | 0.81 ± 0.08 | 0.84 ± 0.07 |
| OpenCV SGBM (valid) | 3.31 ± 2.56 | 0.86 ± 0.06 | 0.94 ± 0.04 | 0.94 ± 0.02 |

| Algorithm | | | | |
|---|---|---|---|---|
| Stereo PCD (with edges) | 22.94 ± 11.40 | 0.38 ± 0.13 | 0.54 ± 0.14 | 0.65 ± 0.14 |
| Stereo PCD (without edges) | 24.79 ± 12.74 | 0.34 ± 0.12 | 0.49 ± 0.13 | 0.61 ± 0.13 |
| OpenCV SGBM (all) | 13.54 ± 6.02 | 0.36 ± 0.12 | 0.45 ± 0.13 | 0.50 ± 0.13 |
| OpenCV SGBM (valid) | 13.54 ± 6.02 | 0.63 ± 0.12 | 0.80 ± 0.10 | 0.89 ± 0.06 |

| Dataset | Image resolution | Algorithm | Time |
|---|---|---|---|
| Head and lamp | | Stereo PCD (with edges) | 0.03 s |
| Head and lamp | | Stereo PCD (without edges) | 0.02 s |
| Head and lamp | | OpenCV SGBM | 0.03 s |
| KITTI | | Stereo PCD (with edges) | 0.17 s |
| KITTI | | Stereo PCD (without edges) | 0.15 s |
| KITTI | | OpenCV SGBM | 0.20 s |
| Mobile dataset | | Stereo PCD (with edges) | 0.74 s |
| Mobile dataset | | Stereo PCD (without edges) | 0.51 s |
| Mobile dataset | | OpenCV SGBM | 1.22 s |
| Mobile dataset | | Stereo PCD (with edges) | 0.86 s |
| Mobile dataset | | Stereo PCD (without edges) | 0.58 s |
| Mobile dataset | | OpenCV SGBM | 1.72 s |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).