I. INTRODUCTION

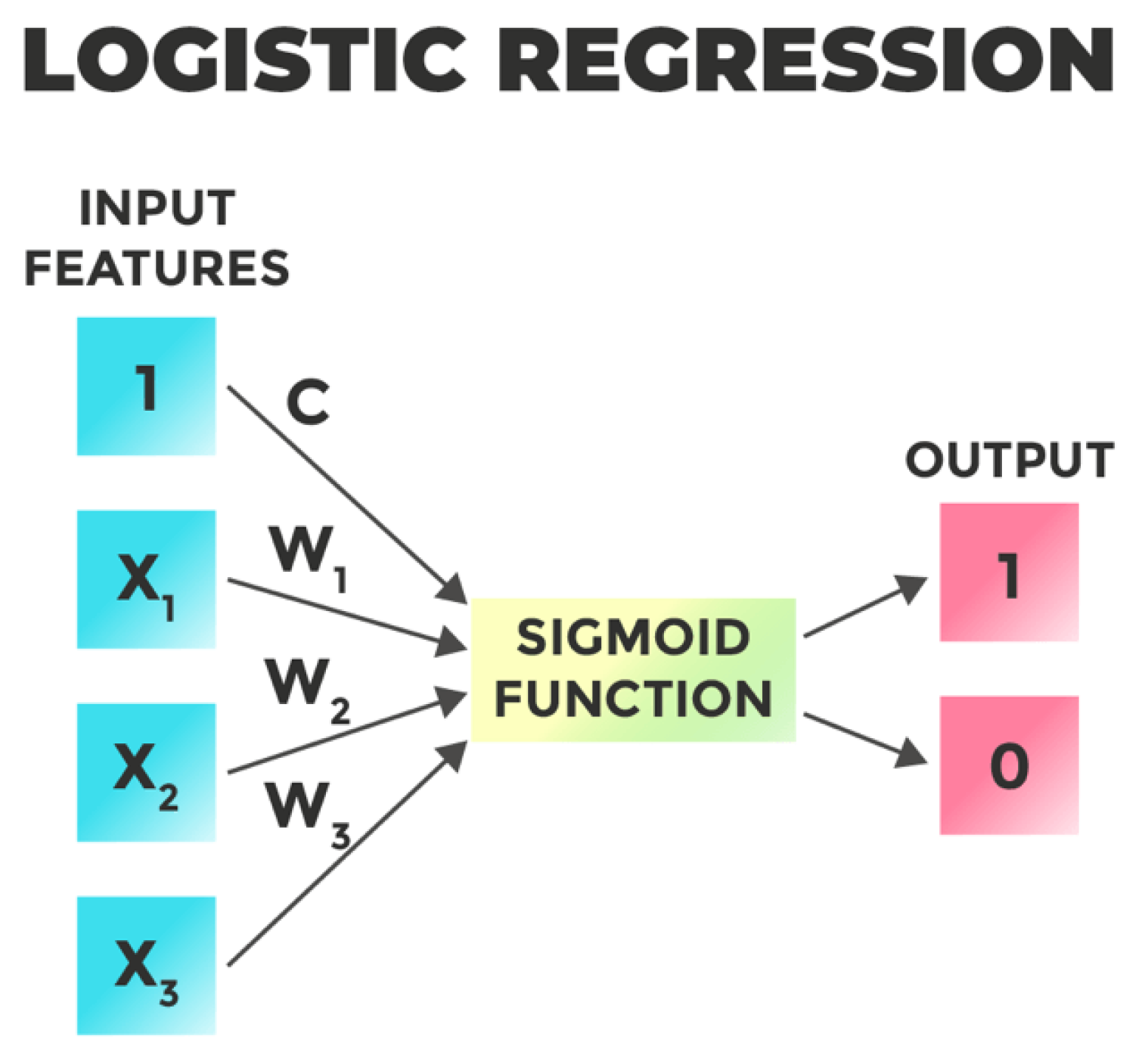

The fusion of technology with finance (Fintech) has reshaped the traditional financial landscape, offering streamlined processes, improved customer experiences, and data-driven decision-making. Machine Learning (ML) algorithms play a pivotal role in Fintech, supporting predictive analytics, risk assessment, fraud detection, and tailored financial services. Logistic Regression, a fundamental ML method, holds promise for modeling complex financial data and extracting valuable insights.

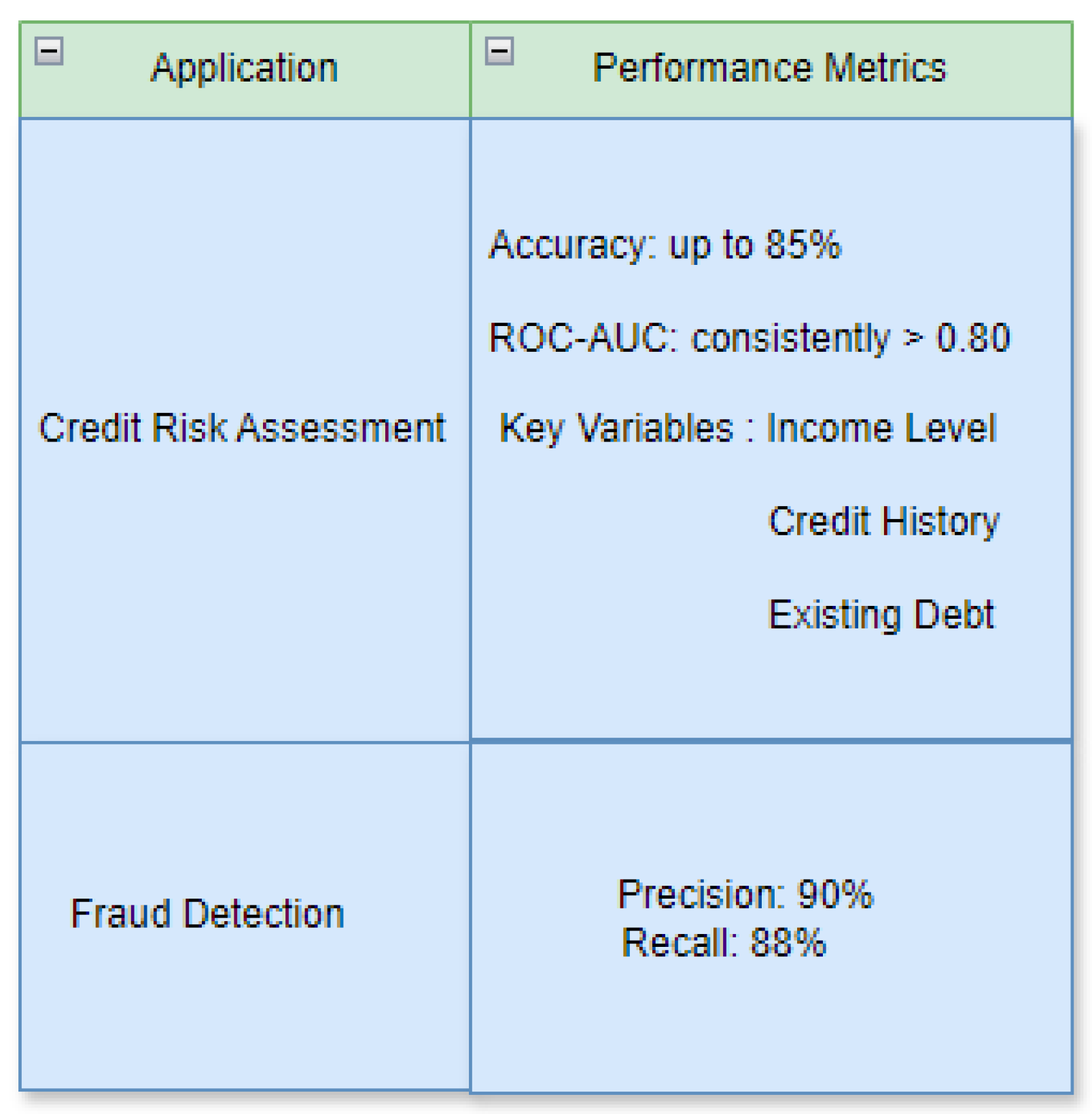

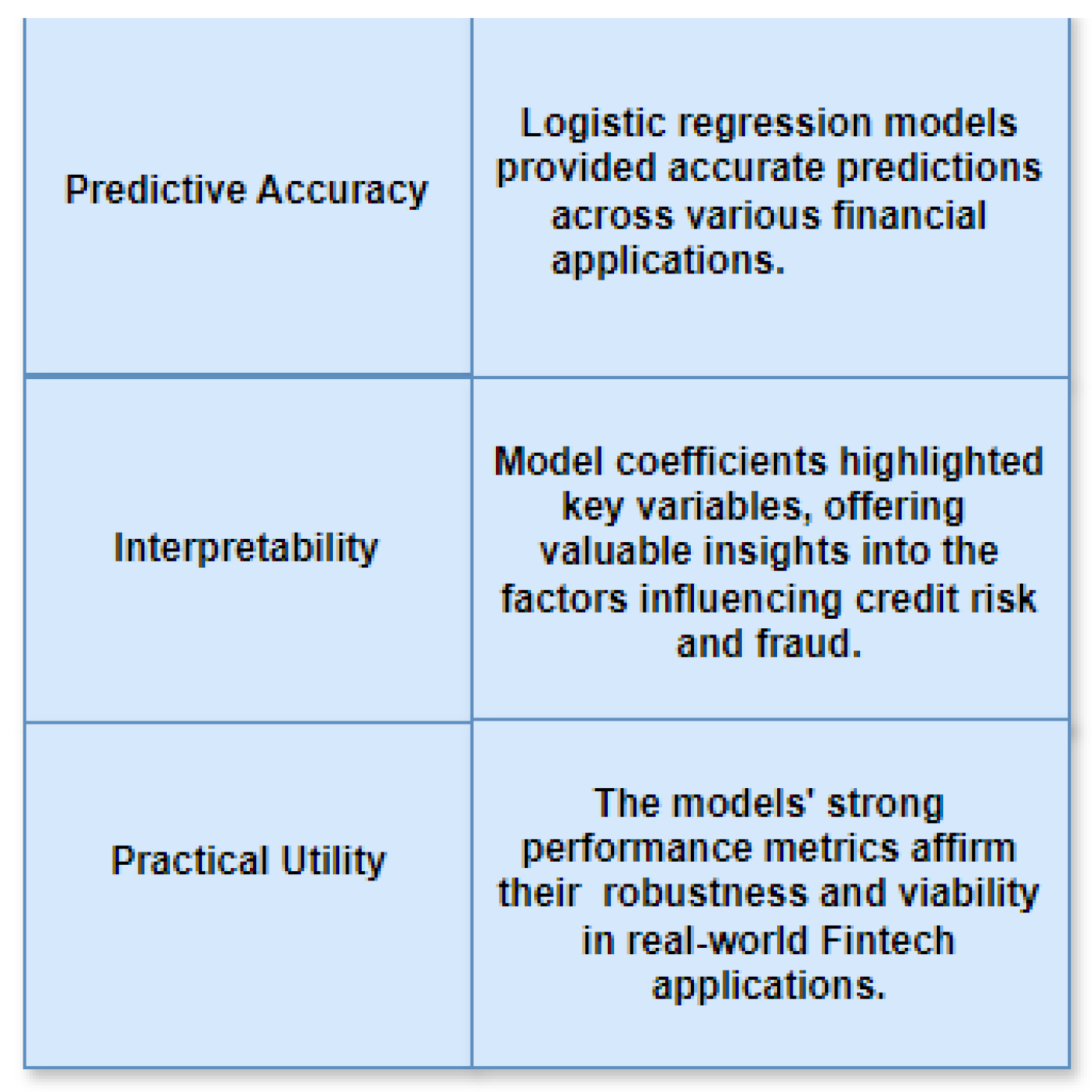

Numerous studies have explored ML applications in Fintech, including algorithmic trading and customer segmentation. Logistic Regression is valued for its simplicity, interpretability, and efficiency, and it is widely used in financial modeling because it predicts binary outcomes as probabilities. Past research has reported its efficacy in credit risk assessment, fraud detection, and loan default prediction, underlining its relevance to Fintech applications. In this study, real-world financial datasets are used to validate Logistic Regression models and to explore their practical implications across diverse Fintech applications.

Despite these advantages, comprehensive studies that focus specifically on Logistic Regression in Fintech remain scarce. Although the method is acknowledged for its interpretability and simplicity, its potential for addressing complex financial challenges in this domain remains underexplored.

This study aims to fill this gap by exploring the potential of Logistic Regression in tackling critical challenges within the Fintech domain. The primary objectives include:

1. Evaluating Logistic Regression's effectiveness in predicting financial outcomes such as creditworthiness, default risk, and fraud.

2. Investigating innovative approaches to enhance Logistic Regression models' performance through feature engineering, ensemble techniques, and model optimization.

3. Analyzing the interpretability of Logistic Regression models and their ability to offer actionable insights for financial institutions and stakeholders.

II. METHODOLOGY

1. Data Gathering and Preprocessing:

Data Collection: Obtain financial datasets from reputable sources such as banking institutions, credit bureaus, or publicly accessible repositories.

Data Cleansing: Handle missing values, remove duplicate records, and resolve inconsistencies to preserve data integrity.
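The cleansing step can be sketched as follows. This is a minimal illustration, not the procedure used in the study: the record layout, the field names, and the choice of median imputation are all assumptions, since the text does not specify how missing values are handled.

```python
from statistics import median

def clean_records(records, numeric_fields):
    """Drop exact duplicate rows, then fill missing numeric values with the column median."""
    # Remove exact duplicates while preserving the original order.
    seen = set()
    unique = []
    for row in records:
        key = tuple(sorted(row.items()))
        if key not in seen:
            seen.add(key)
            unique.append(dict(row))

    # Impute each missing value (None) with the median of the observed values.
    # Median imputation is an assumed choice; it requires at least one observed value.
    for field in numeric_fields:
        observed = [r[field] for r in unique if r[field] is not None]
        fill = median(observed)
        for r in unique:
            if r[field] is None:
                r[field] = fill
    return unique

# Hypothetical loan records; field names are illustrative only.
raw = [
    {"id": 1, "income": 52000, "debt": 8000},
    {"id": 2, "income": None, "debt": 12000},
    {"id": 1, "income": 52000, "debt": 8000},  # exact duplicate of the first row
    {"id": 3, "income": 61000, "debt": 4000},
]
cleaned = clean_records(raw, ["income", "debt"])
```

In a full pipeline the imputation value would be computed on the training subset only, so that no information from validation or test data leaks into preprocessing.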

Feature Engineering: Create new features or transform existing ones to capture information relevant to the prediction task.
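As a hedged illustration of this step, the sketch below derives one ratio feature and one transformed feature from a single record. The field names `income` and `debt` and the two derived features are hypothetical examples, not features reported in this study.

```python
import math

def add_engineered_features(row):
    """Return a copy of the row with two derived features added."""
    out = dict(row)
    # Ratio feature: debt relative to income (guarding against zero income).
    out["debt_to_income"] = row["debt"] / row["income"] if row["income"] else 0.0
    # Log transform compresses the long right tail typical of income data.
    out["log_income"] = math.log1p(row["income"])
    return out

example = add_engineered_features({"income": 50000, "debt": 10000})
```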

Standardization: Scale numerical features to a common range so that features with large magnitudes do not dominate the regularization penalty and so that training converges reliably.
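One common way to implement this step is z-score scaling, sketched below under the assumption that the scaling statistics are estimated on the training data and then reused for any later data. The text does not say whether z-scores or min-max scaling were used, so treat the choice as illustrative.

```python
from statistics import mean, pstdev

def fit_scaler(column):
    """Compute the mean and standard deviation from training values only."""
    mu = mean(column)
    sigma = pstdev(column)
    return mu, (sigma if sigma > 0 else 1.0)  # avoid division by zero for constant columns

def transform(column, mu, sigma):
    """Apply z-score scaling: (x - mean) / std."""
    return [(x - mu) / sigma for x in column]

# Hypothetical income values, for illustration only.
train_income = [40000, 50000, 60000]
mu, sigma = fit_scaler(train_income)
scaled_train = transform(train_income, mu, sigma)
scaled_new = transform([55000], mu, sigma)  # new data reuses the training statistics
```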

2. Model Construction and Assessment:

Dataset Partitioning: Split the cleaned dataset into training, validation, and test subsets, typically in a 70-15-15 ratio.
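A 70-15-15 partition can be sketched as below. The function name, the fixed seed, and the plain random shuffle are assumptions made for illustration.

```python
import random

def split_dataset(rows, train_frac=0.70, val_frac=0.15, seed=42):
    """Shuffle rows and split them into training, validation, and test subsets."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split reproducible
    n_train = round(len(shuffled) * train_frac)
    n_val = round(len(shuffled) * val_frac)
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]  # the remainder becomes the test set
    return train, val, test

train, val, test = split_dataset(range(100))
```

For heavily imbalanced targets such as fraud labels, a stratified split that preserves the class ratio in each subset may be preferable to the plain shuffle shown here.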

Logistic Regression Model Training: Train logistic regression models on the training set, setting hyperparameters such as the regularization strength.
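To make the training step concrete, the following dependency-free sketch fits an L2-regularized logistic regression by batch gradient descent. The optimizer, learning rate, epoch count, and toy data are assumptions chosen for readability; in practice an established library implementation would normally be used.

```python
import math

def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)

def train_logistic(X, y, l2=0.01, lr=0.1, epochs=2000):
    """Fit weights and bias by batch gradient descent on the L2-penalized log loss."""
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * d, 0.0
        for xi, yi in zip(X, y):
            err = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) - yi
            for j in range(d):
                grad_w[j] += err * xi[j]
            grad_b += err
        # Average the gradient and add the L2 penalty term (the bias is not penalized).
        w = [wj - lr * (gj / n + l2 * wj) for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b

def predict_proba(X, w, b):
    """Return the predicted probability of the positive class for each row."""
    return [sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) for xi in X]

# Toy, already-standardized data: the positive class (1) is more likely at higher values.
X = [[-2.0], [-1.0], [-0.5], [0.5], [1.0], [2.0]]
y = [0, 0, 0, 1, 1, 1]
w, b = train_logistic(X, y)
probs = predict_proba(X, w, b)
```

The `l2` argument plays the role of the regularization strength: larger values shrink the weights toward zero more aggressively.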

Model Evaluation: Assess the trained models on the validation set using metrics including accuracy, precision, recall, F1-score, and ROC curve analysis with the area under the curve (AUC).
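The listed metrics can be computed directly from true labels and predicted probabilities, as in this sketch. The 0.5 decision threshold and the example values are assumptions. AUC is computed here through its rank interpretation: the probability that a randomly chosen positive case scores higher than a randomly chosen negative one.

```python
def classification_metrics(y_true, y_prob, threshold=0.5):
    """Accuracy, precision, recall, and F1 at a given probability threshold."""
    y_pred = [1 if p >= threshold else 0 for p in y_prob]
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "accuracy": (tp + tn) / len(y_true),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }

def roc_auc(y_true, y_prob):
    """AUC via pairwise comparison of positive and negative scores (ties count half)."""
    pos = [p for t, p in zip(y_true, y_prob) if t == 1]
    neg = [p for t, p in zip(y_true, y_prob) if t == 0]
    wins = sum(1.0 if p > q else 0.5 if p == q else 0.0 for p in pos for q in neg)
    return wins / (len(pos) * len(neg))

# Illustrative labels and scores, not results from this study.
y_true = [0, 0, 1, 1, 0, 1]
y_prob = [0.10, 0.40, 0.35, 0.80, 0.70, 0.90]
metrics = classification_metrics(y_true, y_prob)
auc = roc_auc(y_true, y_prob)
```

The pairwise AUC is quadratic in the number of samples, which is fine for a sketch but slow on large validation sets.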

Hyperparameter Optimization: Tune hyperparameters such as the regularization strength using techniques such as cross-validation to improve generalization performance.
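A minimal sketch of cross-validated tuning is shown below, assuming a small grid of L2 strengths, five folds, and validation log loss as the selection criterion. None of these settings come from the text. The compact trainer and the synthetic data exist only to keep the example self-contained.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z)) if z >= 0 else math.exp(z) / (1.0 + math.exp(z))

def fit(X, y, l2, lr=0.1, epochs=200):
    """Compact L2-regularized logistic regression trained by batch gradient descent."""
    n = len(X)
    w, b = [0.0] * len(X[0]), 0.0
    for _ in range(epochs):
        errs = [sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) - yi
                for xi, yi in zip(X, y)]
        w = [wj - lr * (sum(e * xi[j] for e, xi in zip(errs, X)) / n + l2 * wj)
             for j, wj in enumerate(w)]
        b -= lr * sum(errs) / n
    return w, b

def log_loss(X, y, w, b, eps=1e-12):
    """Mean negative log-likelihood, with probabilities clipped away from 0 and 1."""
    total = 0.0
    for xi, yi in zip(X, y):
        p = min(max(sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b), eps), 1 - eps)
        total -= yi * math.log(p) + (1 - yi) * math.log(1 - p)
    return total / len(X)

def cross_validate(X, y, l2, k=5, seed=0):
    """Mean held-out log loss over k folds for one regularization strength."""
    idx = list(range(len(X)))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]
    losses = []
    for fold in folds:
        held_out = set(fold)
        tr = [i for i in idx if i not in held_out]
        w, b = fit([X[i] for i in tr], [y[i] for i in tr], l2)
        losses.append(log_loss([X[i] for i in fold], [y[i] for i in fold], w, b))
    return sum(losses) / k

# Synthetic data (illustrative only): one informative feature and one noise feature.
rng = random.Random(1)
X = [[rng.gauss(0, 1), rng.gauss(0, 1)] for _ in range(60)]
y = [1 if x[0] + 0.5 * rng.gauss(0, 1) > 0 else 0 for x in X]

grid = [0.001, 0.01, 0.1, 1.0]
cv_scores = {l2: cross_validate(X, y, l2) for l2 in grid}
best_l2 = min(cv_scores, key=cv_scores.get)  # lowest mean held-out log loss
```

Cross-validation here runs on the training portion only; the test subset stays untouched until the final assessment.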

3. Feature Selection and Analysis:

Feature Relevance: Examine the logistic regression model's coefficients to understand each feature's contribution to predicting the target variable. Coefficient magnitudes are directly comparable only when the features share a common scale.

Variable Selection: Use methods such as forward or backward selection to identify the subset of features that contributes most to model performance.
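Forward selection can be sketched as a greedy loop around a scoring function. In the sketch below, `toy_score` is a hypothetical stand-in. In practice it would refit the logistic regression on the candidate feature subset and return a validation metric such as AUC. The feature names and the `min_gain` stopping rule are likewise assumptions.

```python
def forward_selection(candidates, score_fn, min_gain=1e-3):
    """Greedily add the feature that most improves score_fn until no feature helps enough."""
    selected = []
    best_score = score_fn(selected)
    remaining = list(candidates)
    while remaining:
        trial_scores = {f: score_fn(selected + [f]) for f in remaining}
        best_feature = max(trial_scores, key=trial_scores.get)
        if trial_scores[best_feature] - best_score < min_gain:
            break  # no remaining feature yields a meaningful improvement
        selected.append(best_feature)
        remaining.remove(best_feature)
        best_score = trial_scores[best_feature]
    return selected, best_score

# Stand-in scorer (hypothetical): fixed additive gains instead of a refitted model.
def toy_score(features):
    gains = {"income": 0.10, "debt_to_income": 0.15, "account_age": 0.05, "zip_digit": 0.0}
    return 0.5 + sum(gains[f] for f in features)

selected, score = forward_selection(
    ["income", "debt_to_income", "account_age", "zip_digit"], toy_score
)
```

Backward selection follows the same pattern in reverse: start from all features and repeatedly drop the one whose removal hurts the score least.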

Model Interpretation: Interpret the logistic regression coefficients, for example as changes in the log-odds of the outcome, to extract actionable insights and understand how individual features influence predicted outcomes.
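Because a logistic regression coefficient is the change in log-odds per unit increase in a feature, exponentiating it gives an odds ratio, which is often easier to communicate. The sketch below ranks features by absolute coefficient and converts each coefficient to an odds ratio. The coefficient values and feature names are hypothetical, and the features are assumed to be standardized, so "one unit" means one standard deviation.

```python
import math

def odds_ratios(coefficients):
    """Convert log-odds coefficients to odds ratios via exp(beta)."""
    return {name: math.exp(beta) for name, beta in coefficients.items()}

def rank_by_magnitude(coefficients):
    """Order features by absolute coefficient size (meaningful only on a common scale)."""
    return sorted(coefficients, key=lambda name: abs(coefficients[name]), reverse=True)

# Hypothetical fitted coefficients on standardized features (illustrative values only).
coefs = {"debt_to_income": 0.80, "account_age": -0.35, "num_accounts": 0.05}
ratios = odds_ratios(coefs)
ranking = rank_by_magnitude(coefs)

for name in ranking:
    direction = "raises" if ratios[name] > 1 else "lowers"
    change = abs(ratios[name] - 1)
    print(f"A one-SD increase in {name} {direction} the odds of default by {change:.0%}")
```

An odds ratio above 1 indicates that the feature increases the odds of the positive outcome, and a ratio below 1 indicates that it decreases them.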

4. Implementation and Oversight:

Model Deployment: Integrate the trained logistic regression model into operational environments, embedding it within Fintech applications or frameworks.

Real-time Prediction: Expose the model through APIs or services so that it can score newly acquired data with low latency.
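A prediction service of this kind might look like the sketch below, which uses only Python's standard `http.server` module. The model parameters, feature names, port, and JSON payload shape are all hypothetical. A production deployment would typically use a hardened web framework with authentication and input validation.

```python
import json
import math
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical parameters exported from a trained model (illustrative values only).
MODEL = {"bias": -1.0, "weights": {"debt_to_income": 2.0, "late_payments": 0.5}}

def score_request(body: bytes) -> dict:
    """Parse a JSON payload of feature values and return the predicted probability."""
    features = json.loads(body)
    z = MODEL["bias"] + sum(w * float(features[name]) for name, w in MODEL["weights"].items())
    return {"probability": 1.0 / (1.0 + math.exp(-z))}

class PredictHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        try:
            payload = json.dumps(score_request(self.rfile.read(length))).encode()
            status = 200
        except (KeyError, ValueError, TypeError):
            # Malformed JSON or a missing/non-numeric feature yields a client error.
            payload = json.dumps({"error": "invalid or incomplete feature payload"}).encode()
            status = 400
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.end_headers()
        self.wfile.write(payload)

def serve(port=8000):
    """Start a blocking development server; not hardened for production use."""
    HTTPServer(("127.0.0.1", port), PredictHandler).serve_forever()

# Direct call to the scoring function, without starting the server.
result = score_request(b'{"debt_to_income": 0.5, "late_payments": 0}')
```

Keeping the scoring logic in a plain function, separate from the HTTP handler, lets it be tested without starting a server.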

Performance Monitoring and Maintenance: Continuously monitor the model's performance and periodically recalibrate it to ensure sustained effectiveness and adaptability to evolving data patterns.
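One simple way to operationalize such monitoring is to track accuracy over a rolling window of recent labelled predictions and flag the model when it falls below a baseline. The sketch below assumes this approach. The class name, window size, and tolerance are illustrative choices, not settings described in the text.

```python
from collections import deque

class PerformanceMonitor:
    """Track accuracy over the most recent labelled predictions and flag degradation."""

    def __init__(self, baseline_accuracy, window=500, tolerance=0.05):
        self.baseline = baseline_accuracy
        self.tolerance = tolerance
        self.outcomes = deque(maxlen=window)  # the oldest outcomes drop out automatically

    def record(self, predicted_label, actual_label):
        self.outcomes.append(1 if predicted_label == actual_label else 0)

    def rolling_accuracy(self):
        return sum(self.outcomes) / len(self.outcomes) if self.outcomes else None

    def needs_retraining(self):
        # Only raise the flag once the window is full, to avoid reacting to a handful of cases.
        return (len(self.outcomes) == self.outcomes.maxlen
                and self.rolling_accuracy() < self.baseline - self.tolerance)

# Illustrative stream: 7 correct predictions followed by 3 incorrect ones.
monitor = PerformanceMonitor(baseline_accuracy=0.90, window=10, tolerance=0.05)
for predicted, actual in [(1, 1)] * 7 + [(1, 0)] * 3:
    monitor.record(predicted, actual)
retrain = monitor.needs_retraining()
```

In financial settings true labels such as loan defaults often arrive with a delay, so a monitor like this would usually be complemented by checks on the input data distribution.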