Submitted:

24 May 2024

Posted:

27 May 2024


Abstract

Keywords:

1. Introduction

- RQ1: How effective is a genetic algorithm at selecting features that improve clustering performance?

- RQ2: Which clustering algorithm is most effective when applied to the selected NPHA dataset?

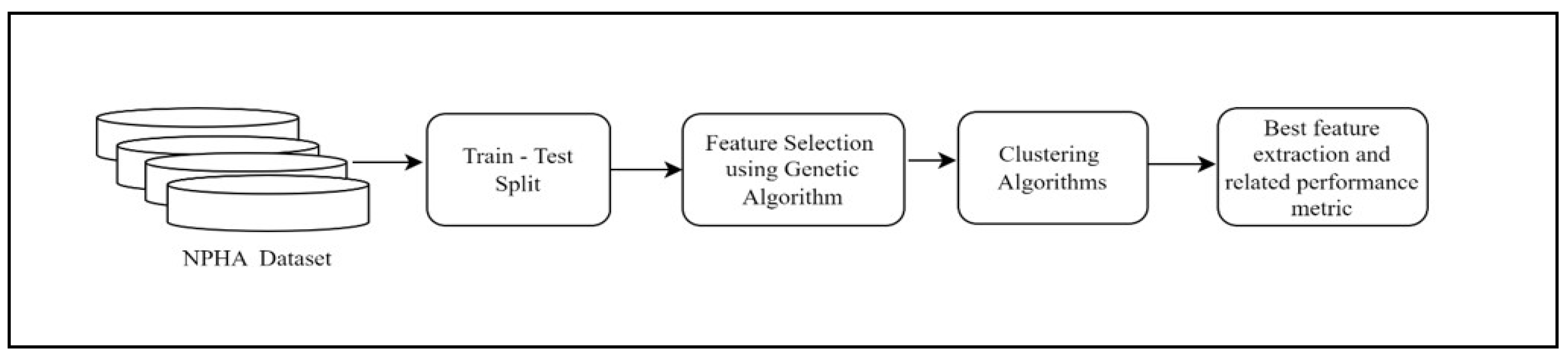

- RQ3: Does the iterative process of selection, crossover, and mutation in a genetic algorithm improve clustering performance over successive generations?

- The impact of the feature selection technique (FST) on the NPHA dataset is comprehensively evaluated.

- By simulating the principles of natural evolution, the genetic algorithm used in this study optimizes feature selection for clustering. Clustering performance improves because the algorithm identifies the most relevant subset of features in the dataset.

- Models that integrate the genetic algorithm (GA) with clustering algorithms are proposed to improve clustering performance metrics.

- To verify the findings empirically, the GA was combined with several clustering algorithms: KMeans++, DBSCAN, BIRCH, and Agglomerative.
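
The selection, crossover, and mutation loop named in the research questions can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the function `run_ga`, its default parameters, tournament selection, single-point crossover, and the stand-in `toy_fitness` are all hypothetical. In a real run the fitness function would cluster the data restricted to the selected features and return a score such as the silhouette score.

```python
import random


def run_ga(n_features, fitness, pop_size=20, generations=8,
           crossover_rate=0.8, mutation_rate=0.05, seed=0):
    """Evolve binary feature masks; returns (best_mask, best_score, history)."""
    rng = random.Random(seed)

    def repair(mask):
        # A mask that selects no features cannot be clustered, so switch one on.
        if not any(mask):
            mask[rng.randrange(n_features)] = 1
        return mask

    def tournament(scored, k=3):
        # Selection: the fittest of k randomly drawn individuals becomes a parent.
        return max(rng.sample(scored, k), key=lambda pair: pair[0])[1]

    population = [repair([rng.randint(0, 1) for _ in range(n_features)])
                  for _ in range(pop_size)]
    best_score, best_mask, history = float("-inf"), None, []

    for _ in range(generations):
        scored = [(fitness(mask), mask) for mask in population]
        gen_score, gen_mask = max(scored, key=lambda pair: pair[0])
        history.append(gen_score)
        if gen_score > best_score:
            best_score, best_mask = gen_score, gen_mask[:]

        children = []
        while len(children) < pop_size:
            a, b = tournament(scored), tournament(scored)
            if rng.random() < crossover_rate:
                cut = rng.randrange(1, n_features)  # single-point crossover
                child = a[:cut] + b[cut:]
            else:
                child = a[:]
            # Mutation: flip each bit independently with a small probability.
            child = [bit ^ 1 if rng.random() < mutation_rate else bit
                     for bit in child]
            children.append(repair(child))
        population = children

    return best_mask, best_score, history


def toy_fitness(mask):
    """Stand-in objective (assumption): rewards features 0, 3, 5, penalizes the rest."""
    informative = (0, 3, 5)
    hits = sum(mask[i] for i in informative)
    extras = sum(mask) - hits
    return hits - 0.5 * extras


best_mask, best_score, history = run_ga(14, toy_fitness)
print(best_mask, best_score, history)
```

Because the loop above replaces the whole population each generation and keeps no elite, the best score per generation in `history` is not guaranteed to increase monotonically; only the running best returned in `best_score` is.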

2. Materials and Methods

2.1. Selecting Features Using a Genetic Algorithm

2.2. Genetic Algorithm

2.3. Clustering/Cluster Analysis

2.4. Clustering Algorithms

2.4.1. KMeans/KMeans++

2.4.2. Density-Based Spatial Clustering of Applications with Noise (DBSCAN)

2.4.3. Balanced Iterative Reducing and Clustering using Hierarchies (BIRCH)

2.4.4. Agglomerative

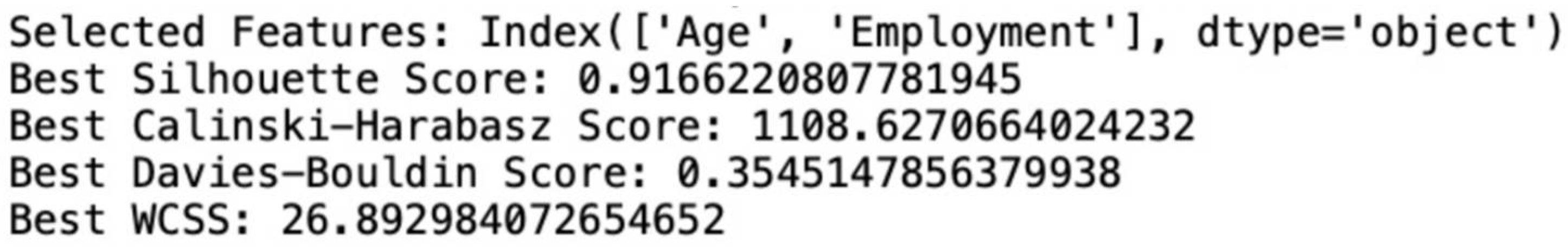

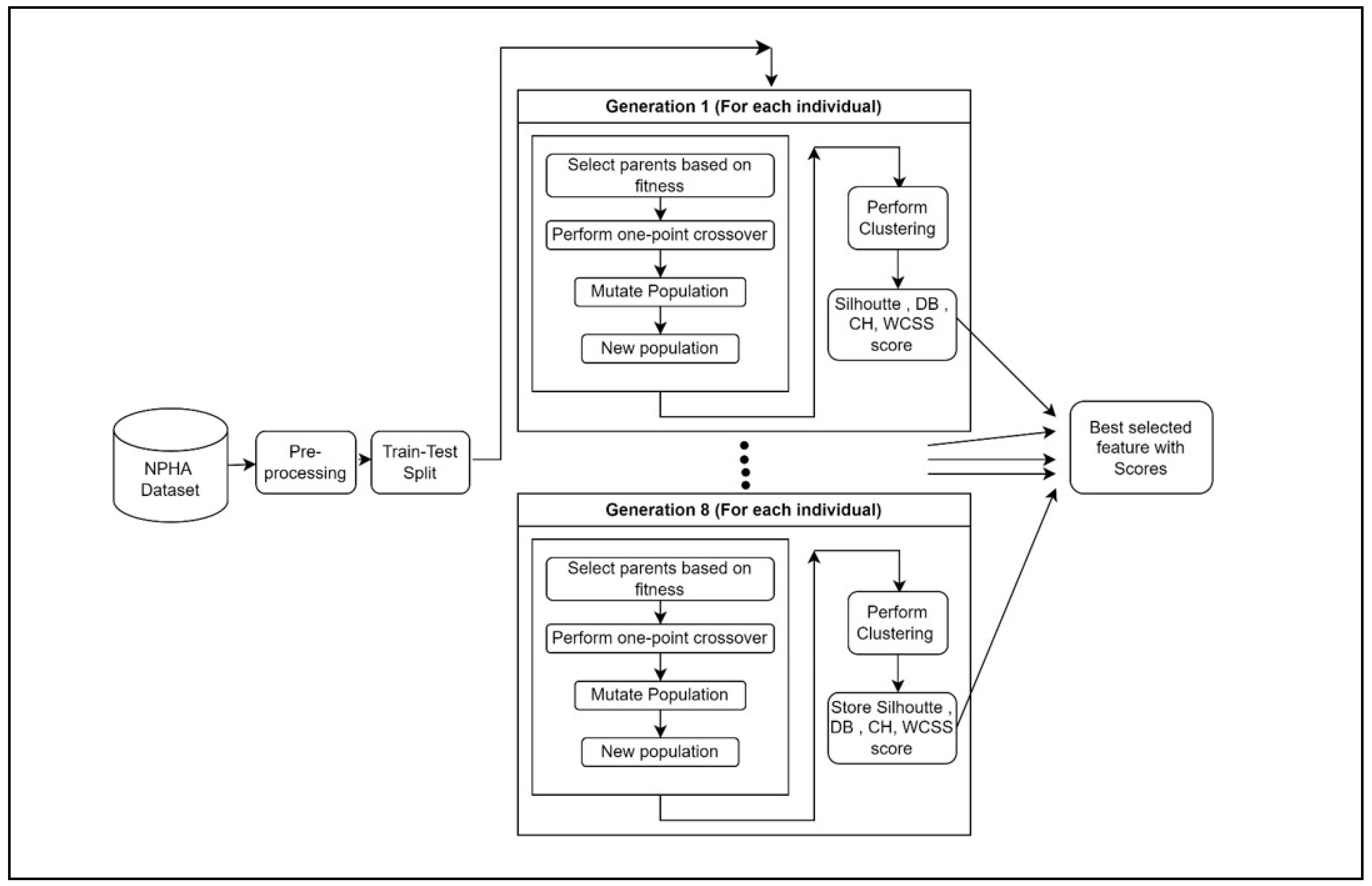

3. Workflow

4. Results

4.1. Performance Evaluation Parameters

4.2. Dataset

4.3. Implementation Details

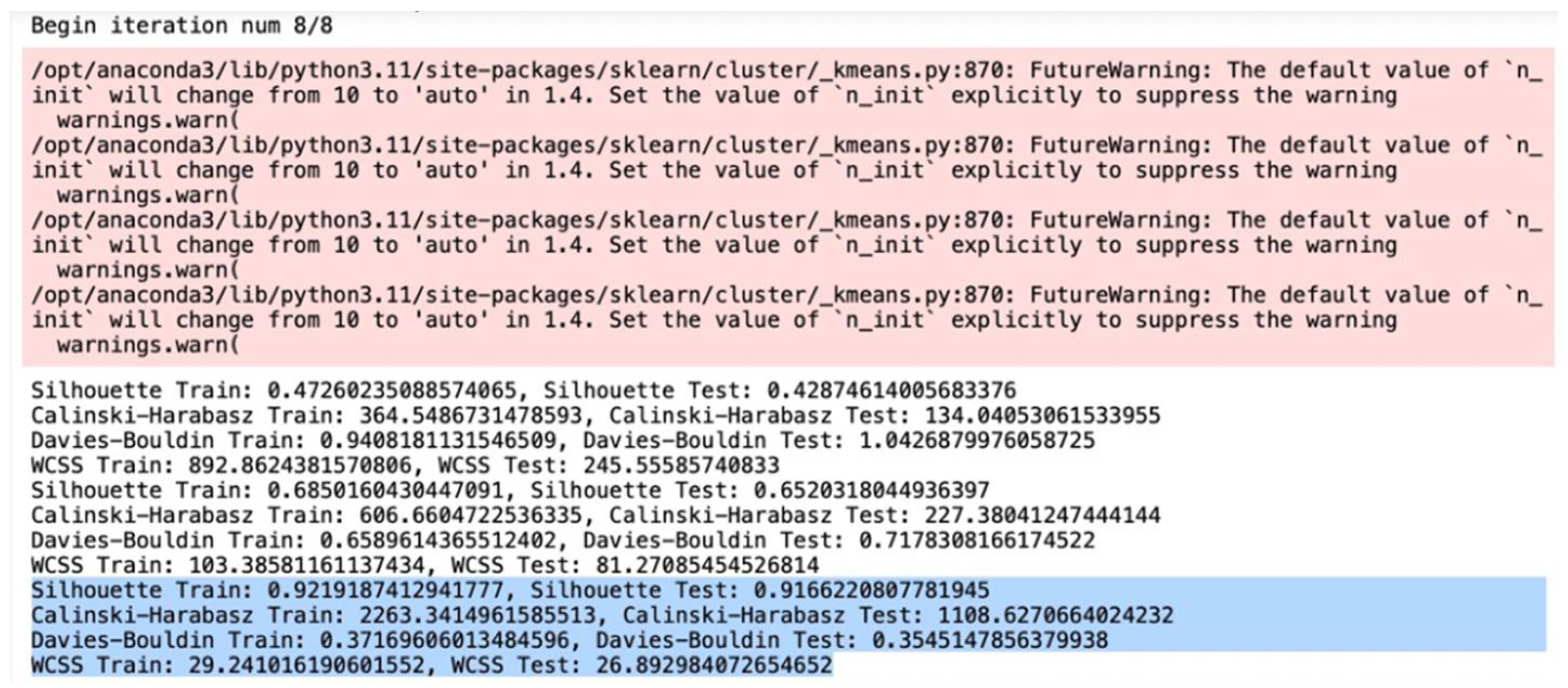

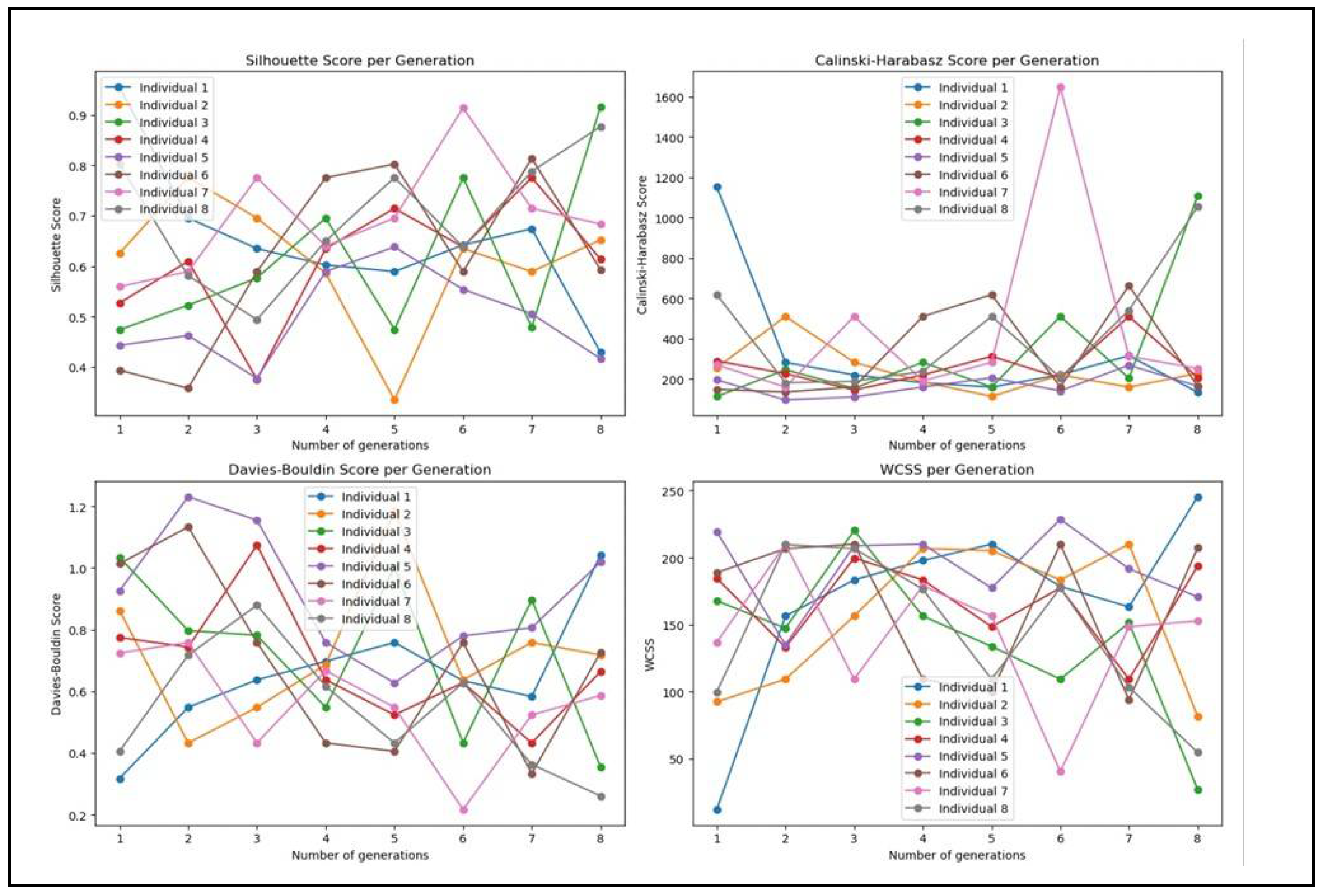

4.4. Analysis

5. Discussion

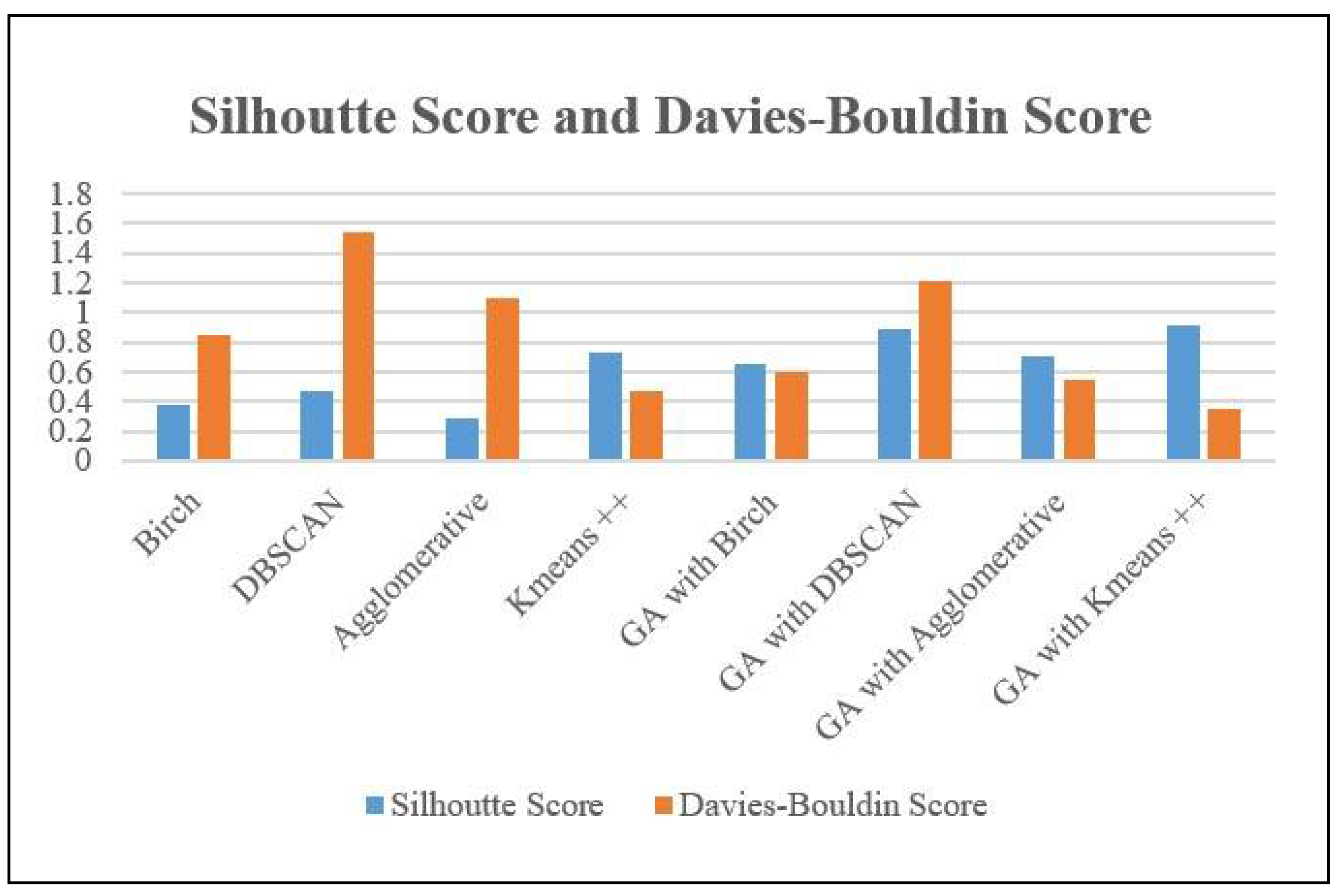

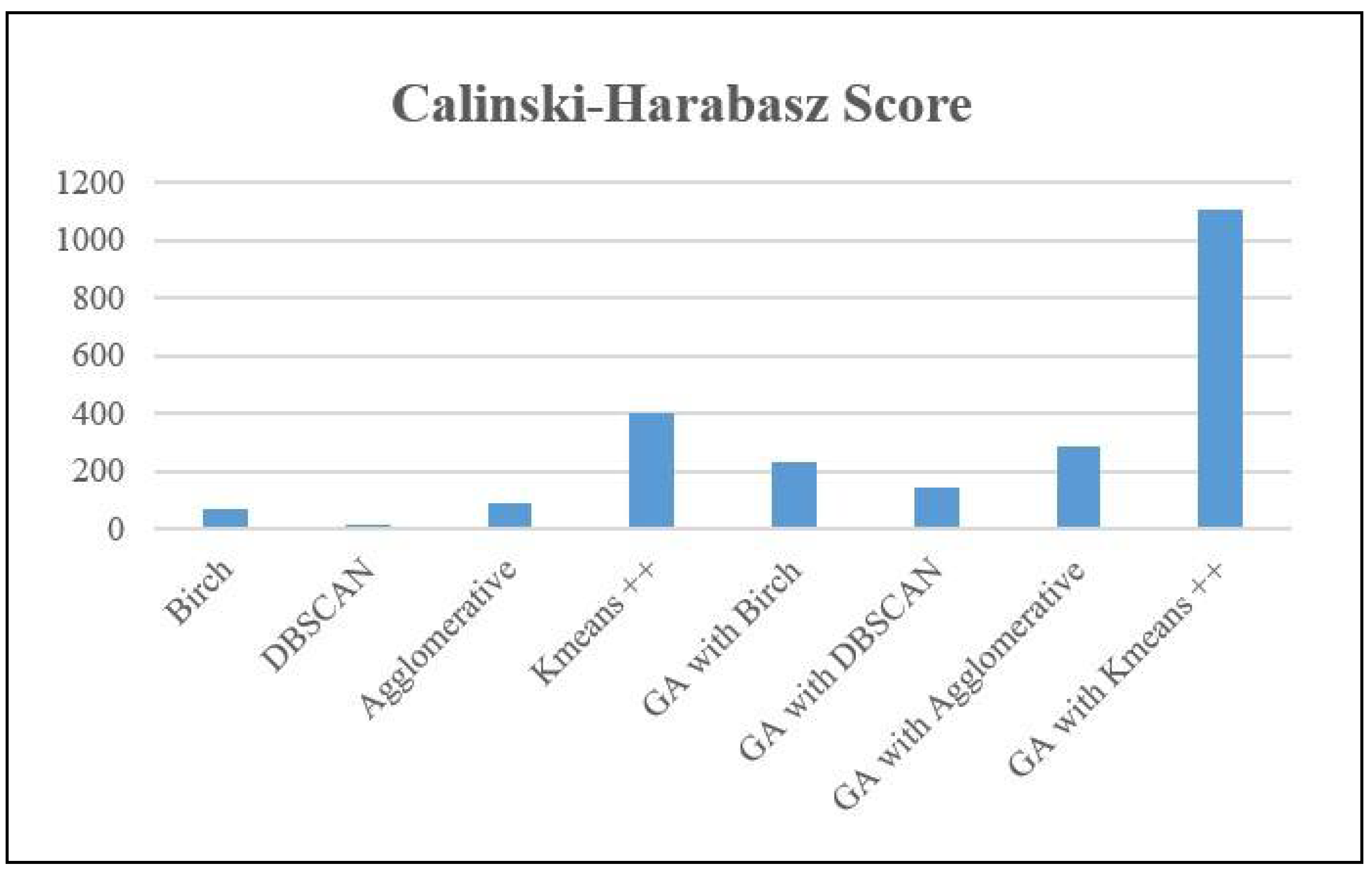

5.1. GA-KMeans++ vs. Other GA-Based Clustering Algorithms

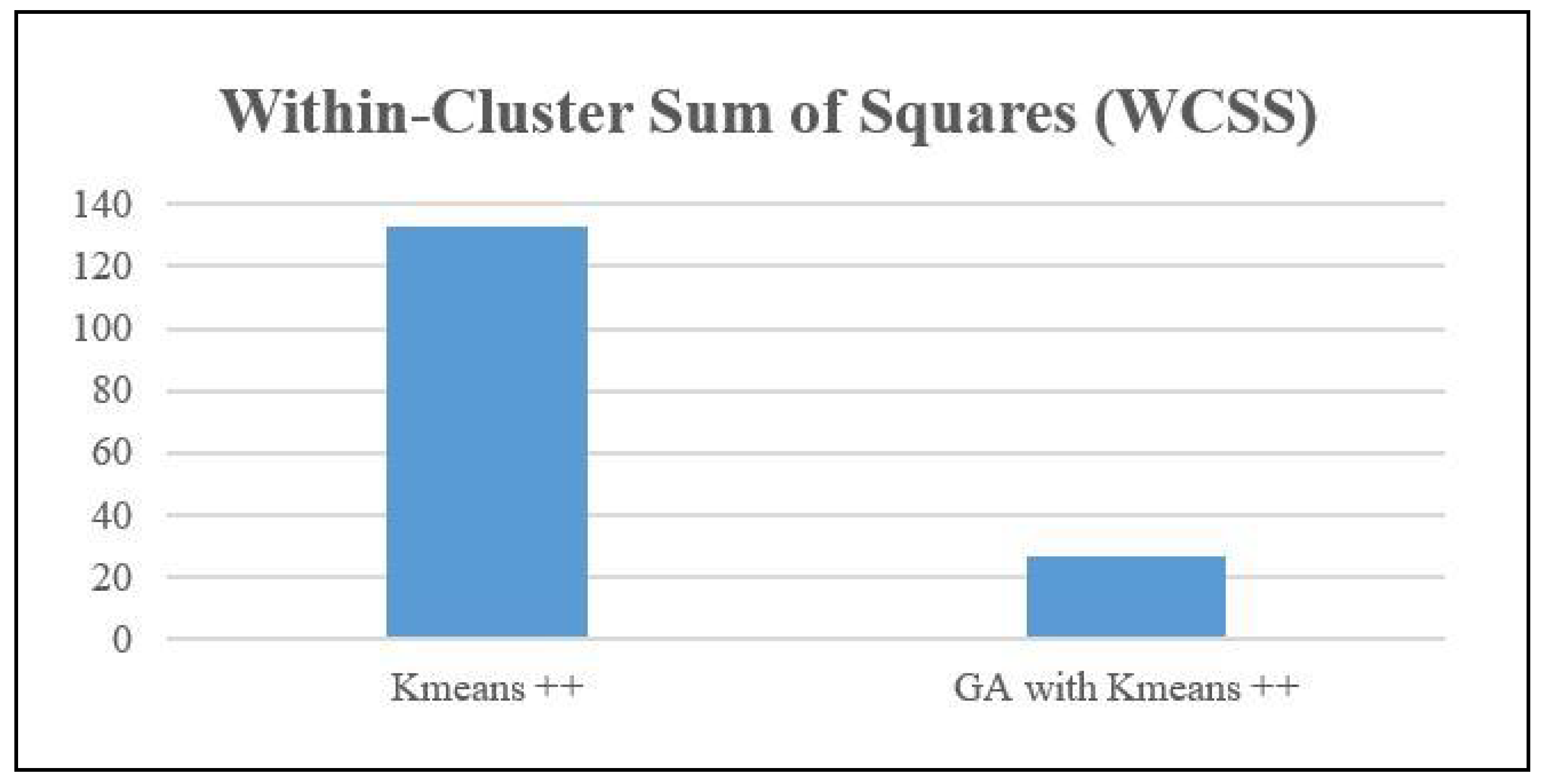

5.2. KMeans++ vs. GA-KMeans++

5.3. Features Selected by Best Performing Algorithms

6. Conclusions

- Q1: When genetic algorithms are used to select features, which clustering performance metric best indicates a successful clustering outcome, and therefore the parameters most relevant to healthy aging?

- Q2: How does the feature selection technique contribute to enhancing performance parameters?

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Appendix A

| Generation | Silhouette Score | Calinski-Harabasz Score | Davies-Bouldin Score |
|---|---|---|---|
| 1 | 0.548247203311971 | 248.77568949138 | 0.786258940306788 |
| 2 | 0.731523855693821 | 288.905157972548 | 0.647245020588084 |
| 3 | 0.889043941142484 | 815.375939811214 | 0.517261849266876 |
| 4 | 0.700623714255857 | 355.963046132945 | 0.51808134263016 |
| 5 | 0.676145002974023 | 277.21061465924 | 0.768662005029266 |
| 6 | 0.532169727001773 | 286.523711996311 | 0.764614500356365 |
| 7 | 0.701599126939112 | 312.072096751344 | 0.628716460051172 |
| 8 | 0.916622080778195 | 1108.62706640242 | 0.354514785637994 |
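
The per-generation scores in Appendix A can be checked with a few lines of Python. The sketch below copies the table values rounded to four (or two) decimals and asks which generation is best under each metric, using the standard convention that higher silhouette and Calinski-Harabasz scores and a lower Davies-Bouldin score indicate better clustering. The variable names are illustrative only.

```python
# (generation, silhouette, calinski_harabasz, davies_bouldin), rounded from Appendix A
scores = [
    (1, 0.5482, 248.78, 0.7863),
    (2, 0.7315, 288.91, 0.6472),
    (3, 0.8890, 815.38, 0.5173),
    (4, 0.7006, 355.96, 0.5181),
    (5, 0.6761, 277.21, 0.7687),
    (6, 0.5322, 286.52, 0.7646),
    (7, 0.7016, 312.07, 0.6287),
    (8, 0.9166, 1108.63, 0.3545),
]

# Best generation under each metric.
best_silhouette = max(scores, key=lambda row: row[1])[0]
best_calinski = max(scores, key=lambda row: row[2])[0]
best_davies = min(scores, key=lambda row: row[3])[0]

# Does the silhouette score rise steadily from one generation to the next?
silhouettes = [row[1] for row in scores]
monotone = all(a <= b for a, b in zip(silhouettes, silhouettes[1:]))

print(best_silhouette, best_calinski, best_davies, monotone)  # 8 8 8 False
```

All three metrics agree on generation 8, but the trajectory is not monotone: generation 3 scores well and generations 4 to 7 fall back before the final improvement.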


| Features | Type | Description |
|---|---|---|
| Age | Categorical | The patient's age group = { 1: 50-64, 2: 65-80 } |
| Physical Health | Categorical | A self-assessment of the patient's physical well-being = { -1: Refused, 1: Excellent, 2: Very Good, 3: Good, 4: Fair, 5: Poor } |
| Mental Health | Categorical | A self-evaluation of the patient's mental or psychological health = { -1: Refused, 1: Excellent, 2: Very Good, 3: Good, 4: Fair, 5: Poor } |
| Dental Health | Categorical | A self-assessment of the patient's oral or dental health = { -1: Refused, 1: Excellent, 2: Very Good, 3: Good, 4: Fair, 5: Poor } |
| Employment | Categorical | The patient's employment status or work-related information = { -1: Refused, 1: Working full-time, 2: Working part-time, 3: Retired, 4: Not working at this time } |
| Stress Keeps Patient from Sleeping | Categorical | Whether stress affects the patient's ability to sleep = { 0: No, 1: Yes } |
| Medication Keeps Patient from Sleeping | Categorical | Whether medication impacts the patient's sleep = { 0: No, 1: Yes } |
| Pain Keeps Patient from Sleeping | Categorical | Whether physical pain disturbs the patient's sleep = { 0: No, 1: Yes } |
| Bathroom Needs Keeps Patient from Sleeping | Categorical | Whether the need to use the bathroom affects the patient's sleep = { 0: No, 1: Yes } |
| Unknown Keeps Patient from Sleeping | Categorical | Unidentified factors affecting the patient's sleep = { 0: No, 1: Yes } |
| Trouble sleeping | Categorical | General issues or difficulties the patient faces with sleeping = { 0: No, 1: Yes } |
| Prescription Sleep Medication | Categorical | Information about any sleep medication prescribed to the patient = { -1: Refused, 1: Use regularly, 2: Use occasionally, 3: Do not use } |
| Race | Categorical | The patient's racial or ethnic background = { -2: Not asked, -1: Refused, 1: White, Non-Hispanic; 2: Black, Non-Hispanic; 3: Other, Non-Hispanic; 4: Hispanic; 5: 2+ Races, Non-Hispanic } |
| Gender | Categorical | The gender identity of the patient = { -2: Not asked, -1: Refused, 1: Male, 2: Female } |
| Number of Doctors Visited (Target variable) | Categorical | The total count of different doctors the patient has seen = { 1: 0-1 doctors, 2: 2-3 doctors, 3: 4 or more doctors } |
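
One common way to represent a candidate feature subset in a genetic algorithm is a binary mask with one bit per feature. The sketch below assumes the 14 non-target features are taken in the order of the table above and that the target variable is left out of the mask; the helper `decode` and the example mask are hypothetical and do not reproduce the subset reported in the paper.

```python
# The 14 candidate features, in table order (target variable excluded by assumption).
FEATURES = [
    "Age",
    "Physical Health",
    "Mental Health",
    "Dental Health",
    "Employment",
    "Stress Keeps Patient from Sleeping",
    "Medication Keeps Patient from Sleeping",
    "Pain Keeps Patient from Sleeping",
    "Bathroom Needs Keeps Patient from Sleeping",
    "Unknown Keeps Patient from Sleeping",
    "Trouble sleeping",
    "Prescription Sleep Medication",
    "Race",
    "Gender",
]


def decode(mask):
    """Map a binary mask (1 = keep, 0 = drop) to the selected feature names."""
    if len(mask) != len(FEATURES):
        raise ValueError(f"expected {len(FEATURES)} bits, got {len(mask)}")
    return [name for name, bit in zip(FEATURES, mask) if bit]


# Hypothetical chromosome, for illustration only.
example_mask = [1, 0, 0, 1, 0, 0, 0, 1, 0, 0, 1, 0, 0, 0]
selected = decode(example_mask)
print(selected)
# ['Age', 'Dental Health', 'Pain Keeps Patient from Sleeping', 'Trouble sleeping']
```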

| Model (NPHA Dataset) | Silhouette Score | Davies-Bouldin Score | Calinski-Harabasz Score |
|---|---|---|---|
| BIRCH | 0.3816 | 0.8433 | 68.67 |
| DBSCAN | 0.4653 | 1.544 | 14.78 |
| Agglomerative | 0.2867 | 1.0995 | 90.7 |
| KMeans++ | 0.7284 | 0.474 | 397.46 |
| GA with BIRCH | 0.6497 | 0.6024 | 229.007 |
| GA with DBSCAN | 0.8844 | 1.2082 | 140.69 |
| GA with Agglomerative | 0.7044 | 0.546 | 283.24 |
| GA with KMeans++ | 0.9166 | 0.35451 | 1108.62 |
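
To make the comparison between each clustering algorithm and its GA-assisted counterpart concrete, the relative change in each metric can be computed directly from the table. This is a small arithmetic sketch; the dictionary layout and the helper `pct_change` are illustrative. A positive change is an improvement for the silhouette and Calinski-Harabasz scores, while a negative change is an improvement for the Davies-Bouldin score.

```python
# Values copied from the results table:
# (silhouette, davies_bouldin, calinski_harabasz)
baseline = {
    "BIRCH": (0.3816, 0.8433, 68.67),
    "DBSCAN": (0.4653, 1.544, 14.78),
    "Agglomerative": (0.2867, 1.0995, 90.7),
    "KMeans++": (0.7284, 0.474, 397.46),
}
with_ga = {
    "BIRCH": (0.6497, 0.6024, 229.007),
    "DBSCAN": (0.8844, 1.2082, 140.69),
    "Agglomerative": (0.7044, 0.546, 283.24),
    "KMeans++": (0.9166, 0.35451, 1108.62),
}


def pct_change(before, after):
    """Relative change from `before` to `after`, in percent."""
    return 100.0 * (after - before) / before


changes = {
    model: tuple(pct_change(b, a) for b, a in zip(baseline[model], with_ga[model]))
    for model in baseline
}

for model, (sil, db, ch) in changes.items():
    print(f"{model:14s} silhouette {sil:+7.1f}%  DB {db:+7.1f}%  CH {ch:+7.1f}%")
```

For every algorithm in the table, the GA-assisted variant moves all three metrics in the favorable direction.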

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).