Submitted: 05 May 2024

Posted: 06 May 2024


Abstract

Keywords:

1. Introduction

2. Literature Review

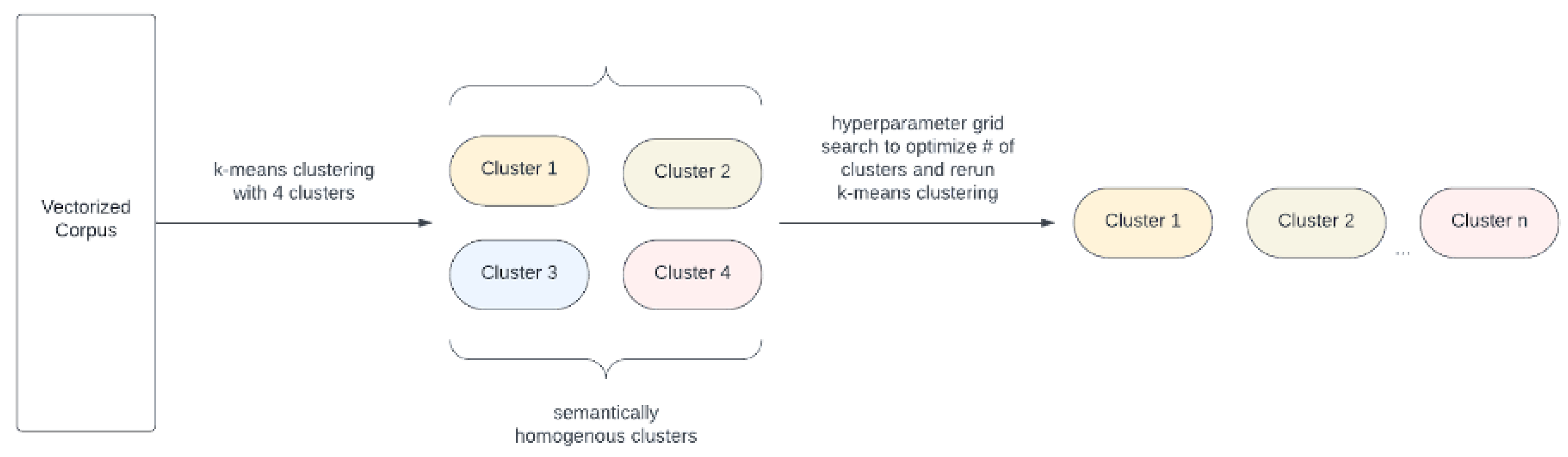

2.1. Cluster 1: Types and Examples of Bias in the Realm of LLMs

2.2. Cluster 2: Bias in the Application of LLMs in Restricted Industries

2.3. Cluster 3: Dataset Bias in the Realm of LLMs

2.4. Cluster 4: Inherent Bias in LLM Architectures

2.5. Cluster 5: Addressing and Remediating Bias

3. Approach

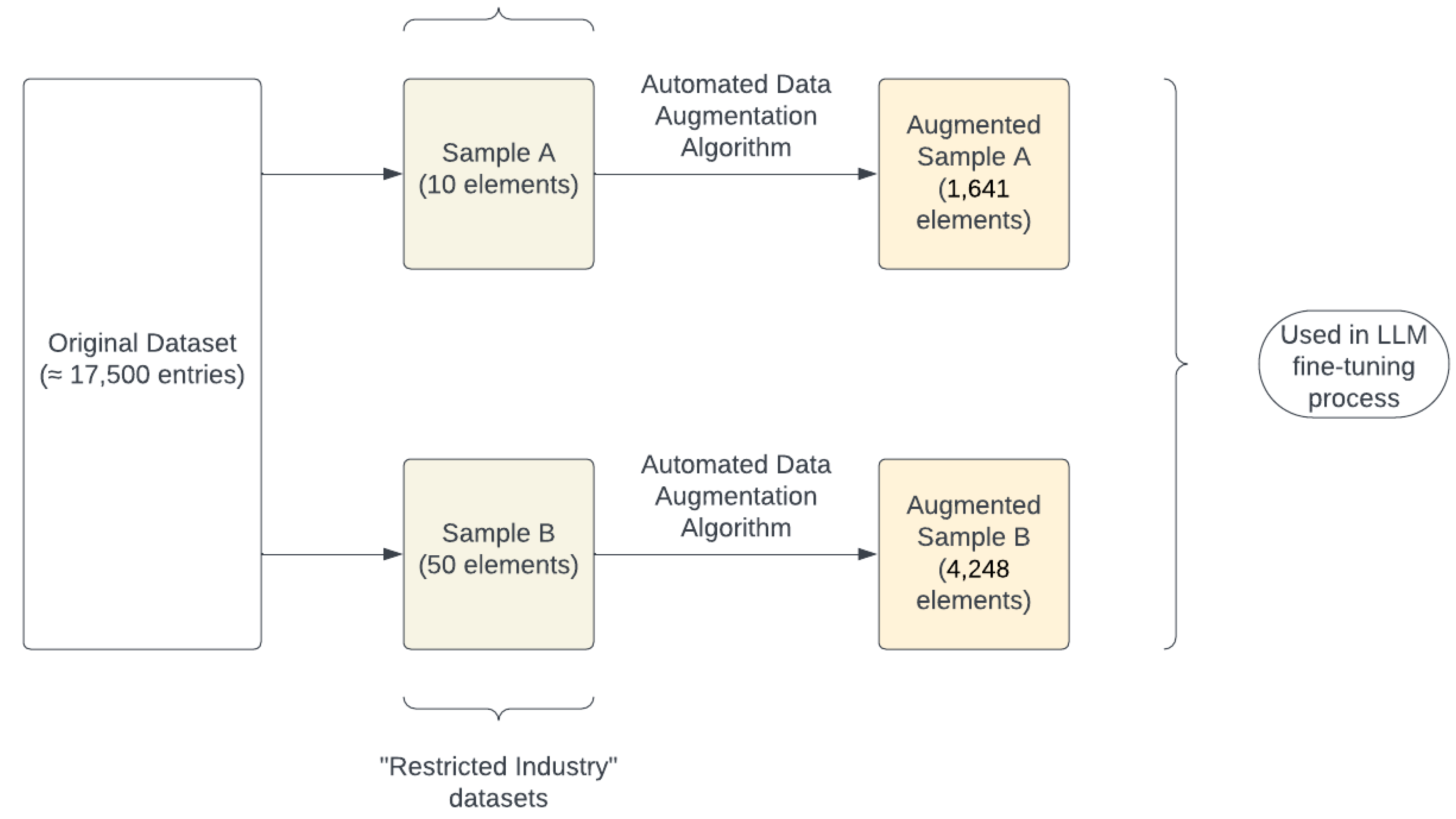

3.1. Dataset Augmentation

3.2. LLM Bias Classification

3.3. Dataset Bias Classification

4. Materials, Methods, and Results

- LLM A was fine-tuned on a subsection of the original dataset containing the same number of samples as augmented Sample A.

- LLM B was fine-tuned on a subsection of the original dataset containing the same number of samples as augmented Sample B.

- LLM C was fine-tuned on augmented Sample A.

- LLM D was fine-tuned on augmented Sample B.
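The four fine-tuning conditions listed above can be sketched as a small data-preparation step. This is a minimal sketch under stated assumptions, not the authors' pipeline: the text does not say how each subsection of the original dataset was selected, so uniform random sampling is assumed here, and the names `size_matched_subset`, `build_training_sets`, and the `seed` parameter are hypothetical.

```python
import random


def size_matched_subset(original, target_size, seed=0):
    """Draw a random subsection of `original` containing `target_size` samples.

    Assumption: the subsection is sampled uniformly without replacement;
    the source does not state the selection procedure.
    """
    if target_size > len(original):
        raise ValueError("target_size exceeds the size of the original dataset")
    rng = random.Random(seed)
    return rng.sample(original, target_size)


def build_training_sets(original, augmented_a, augmented_b, seed=0):
    """Return the training data for each of the four fine-tuned LLMs."""
    return {
        # Baselines: original data, size-matched to the augmented samples.
        "LLM A": size_matched_subset(original, len(augmented_a), seed),
        "LLM B": size_matched_subset(original, len(augmented_b), seed + 1),
        # Treatments: the augmented samples themselves.
        "LLM C": list(augmented_a),
        "LLM D": list(augmented_b),
    }
```

Matching the sample counts keeps the comparison between A and C (and between B and D) from being confounded by training-set size, so differences in the reported metrics can be attributed more plausibly to the augmentation.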

5. Discussion

6. Limitations and Further Research

7. Conclusions

Author Contributions

Funding

Data Availability Statement


| Dataset | db-index |
|---|---|
| Sample A | 0.56 |
| Sample A (augmented) | 0.49 |
| Sample B | 0.71 |
| Sample B (augmented) | 0.65 |

| LLM | Perplexity | Stereotype score | mb-index | Normalized mb-index |
|---|---|---|---|---|
| A* | 6.4660 | 0.55 | 2.16 x | 1 |
| B** | 6.2920 | 0.52 | 7.65 x | 0.15 |
| C*** | 4.9290 | 0.45 | 1.36 x | 0.51 |
| D**** | 4.9290 | 0.45 | 5.24 x | 0 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).