Submitted: 12 April 2024

Posted: 02 May 2024


Abstract

Keywords:

1. Introduction

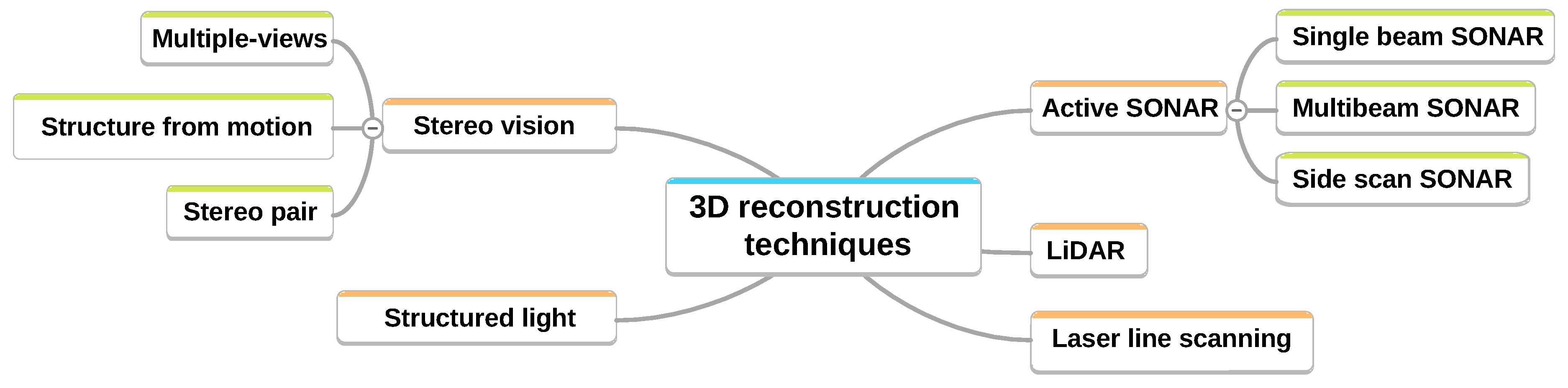

2. Main Methods

- active sensors: these sensors physically interact with the environment by emitting a signal or wave, such as sound waves in the case of sonars or light waves in the case of lidars.

- passive sensors: these sensors do not modify the environment, and the most commonly used ones for 3D reconstruction are camera-based.

- multi-view triangulation involves taking multiple views of the same scene. Knowing the disparities between the viewpoints, it is possible to project the points of the scene onto the different image planes, and thus to triangulate them to obtain a depth map. This technique is based on the epipolar geometry explained in [10], with two possible approaches:

  - a binocular stereo system in which the relative positions of the two cameras are known. The system can be made active by adding a structured light projector that casts discriminant points or features onto the observed environment, which facilitates the matching of points between the two images for triangulation.

  - Structure from Motion (SfM), which takes a sequence of images of an object or scene, typically from a single camera. To obtain a metric reconstruction, the camera trajectory must be known in order to resolve the scale ambiguity.

- structured light triangulation relies on an emitter-receiver system. The emitter is a light source (laser or not) that projects a distinctive pattern onto a scene observed by the receiver, a camera. Using the known positions of the camera and the projector, it is possible to triangulate the discriminant points that the light adds to the scene captured by the camera [11].
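For the binocular case listed above, the link between disparity and depth can be illustrated with a minimal sketch. It assumes an idealised, rectified stereo pair (parallel optical axes, identical intrinsics, no distortion); the function name and the numeric values are hypothetical and are not taken from the paper.

```python
def triangulate_rectified(u_left, v_left, u_right, focal_px, cx, cy, baseline_m):
    """Return (X, Y, Z) in the left-camera frame for one rectified stereo match.

    Assumption: both cameras share the focal length `focal_px` (pixels) and the
    principal point (cx, cy), and are separated by `baseline_m` along the x axis.
    """
    disparity = u_left - u_right  # horizontal shift between the two views, in pixels
    if disparity <= 0:
        raise ValueError("disparity must be positive for a point in front of both cameras")
    z = focal_px * baseline_m / disparity  # depth from similar triangles
    x = (u_left - cx) * z / focal_px       # back-project the left pixel at that depth
    y = (v_left - cy) * z / focal_px
    return (x, y, z)


# Hypothetical match: 20 px of disparity with a 10 cm baseline.
point = triangulate_rectified(420.0, 240.0, 400.0,
                              focal_px=800.0, cx=320.0, cy=240.0, baseline_m=0.1)
```

The smaller the disparity, the larger the depth and the more sensitive it becomes to matching errors, which is one reason adding projected features to ease matching is attractive.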

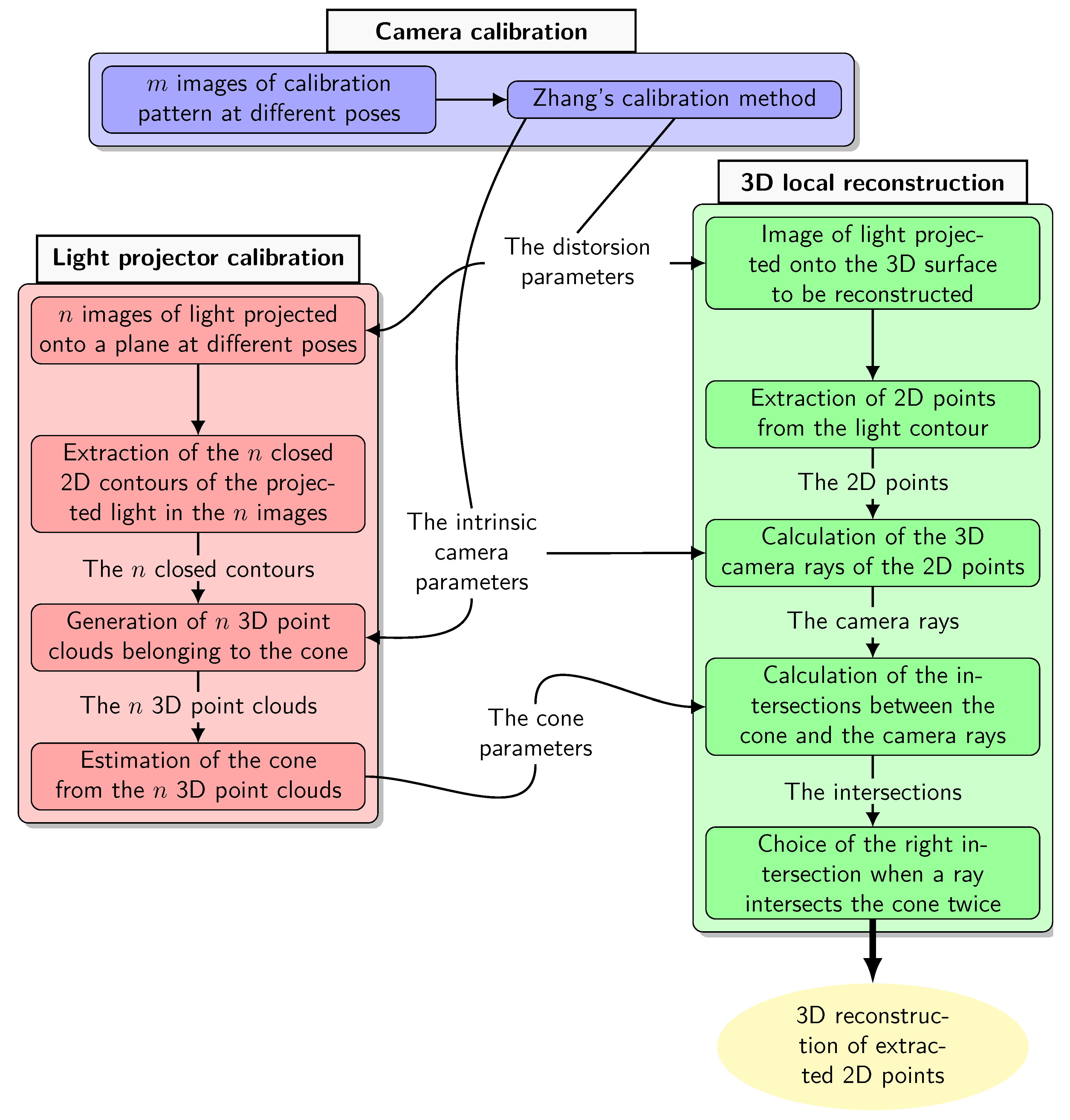

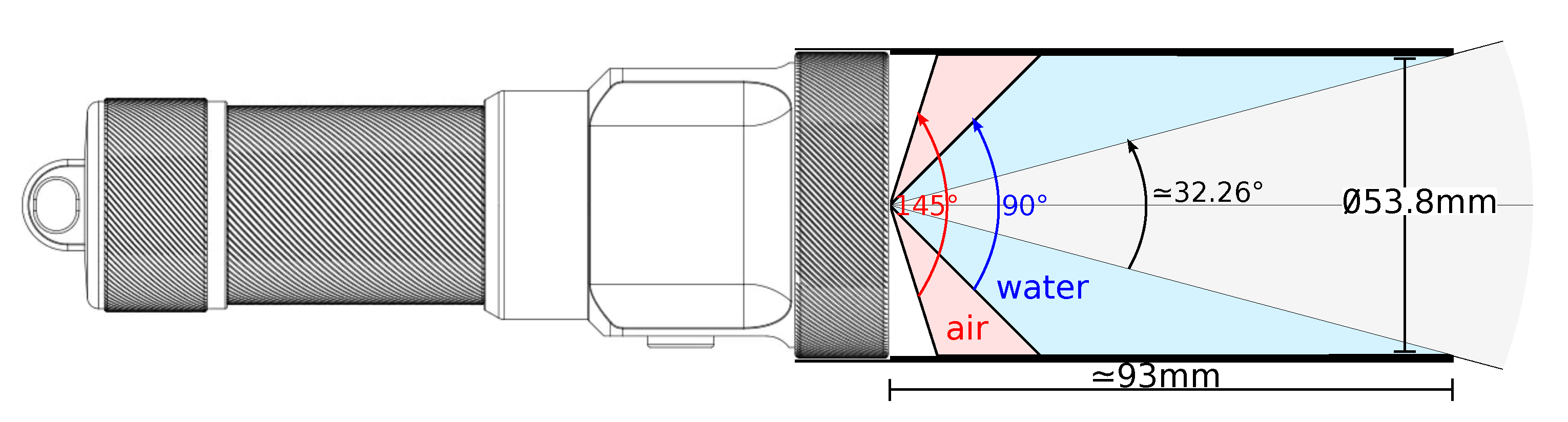

3. Materials and Methods

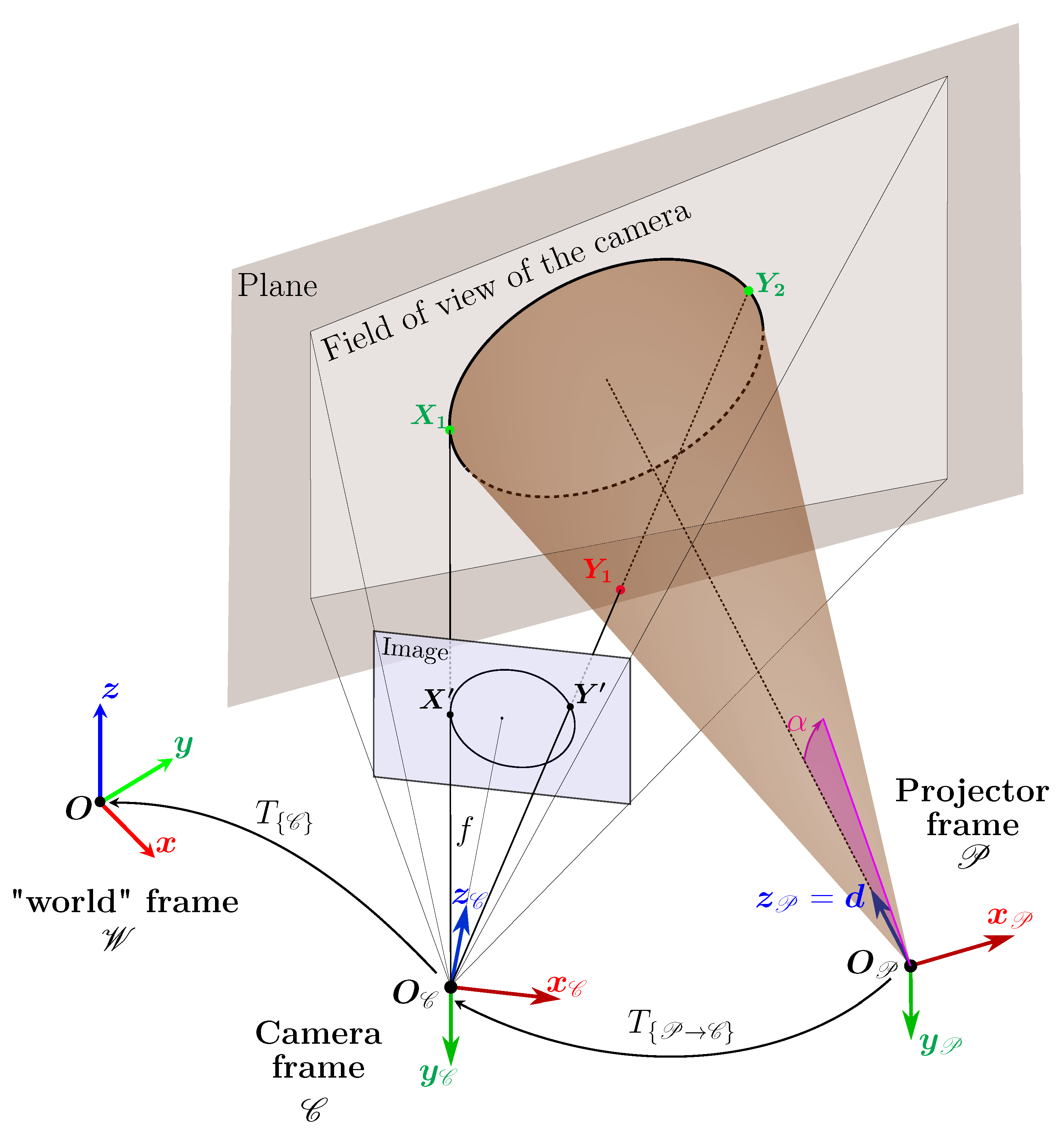

- calibration of the camera is used to estimate its intrinsic parameters, including radial distortion,

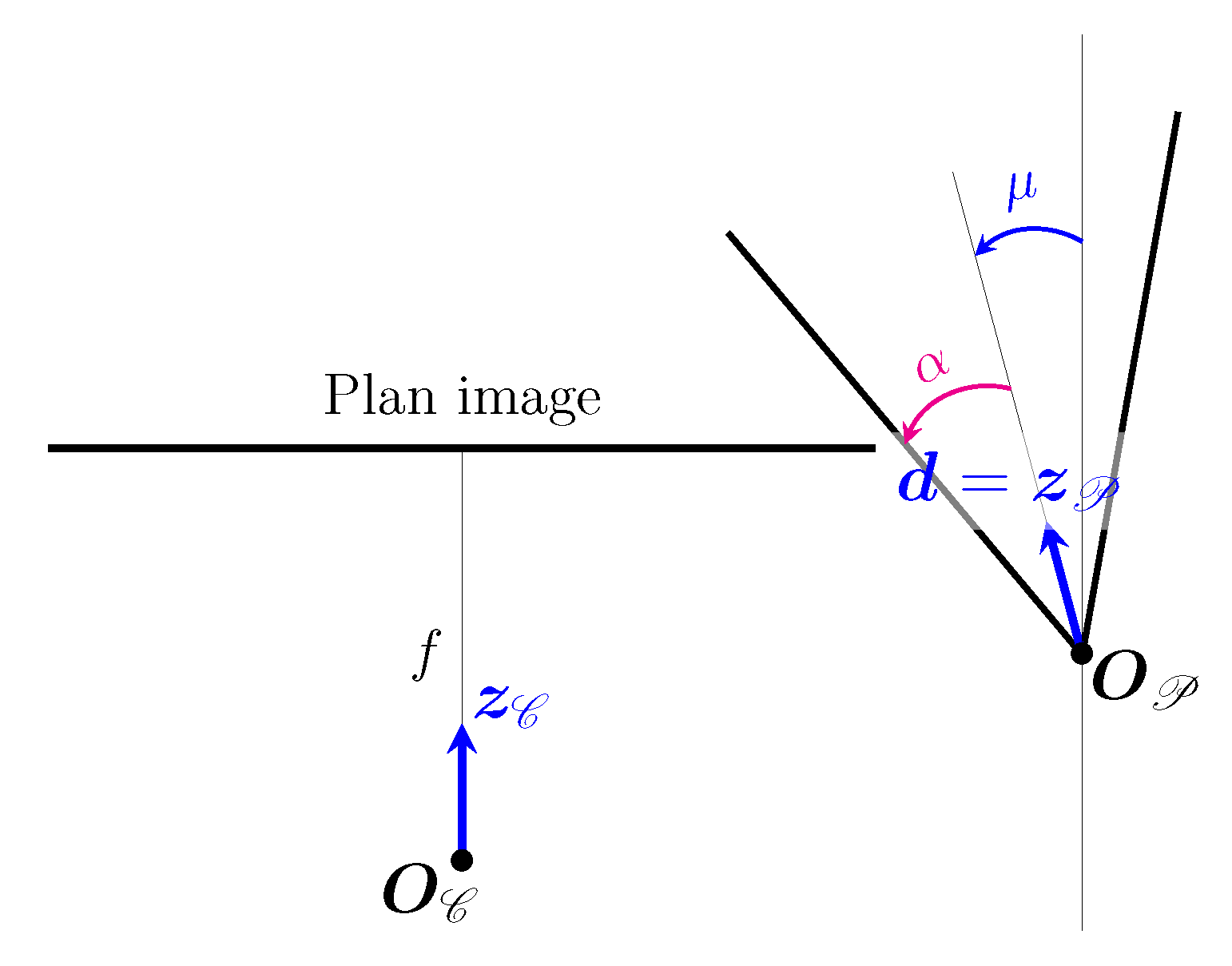

- calibration of the projector consists of estimating the geometric parameters of the cone (vertex, direction, half-angle of opening),

- local 3D reconstruction based on the camera/projector triangulation, leading to a 3D point cloud expressed in the camera frame.

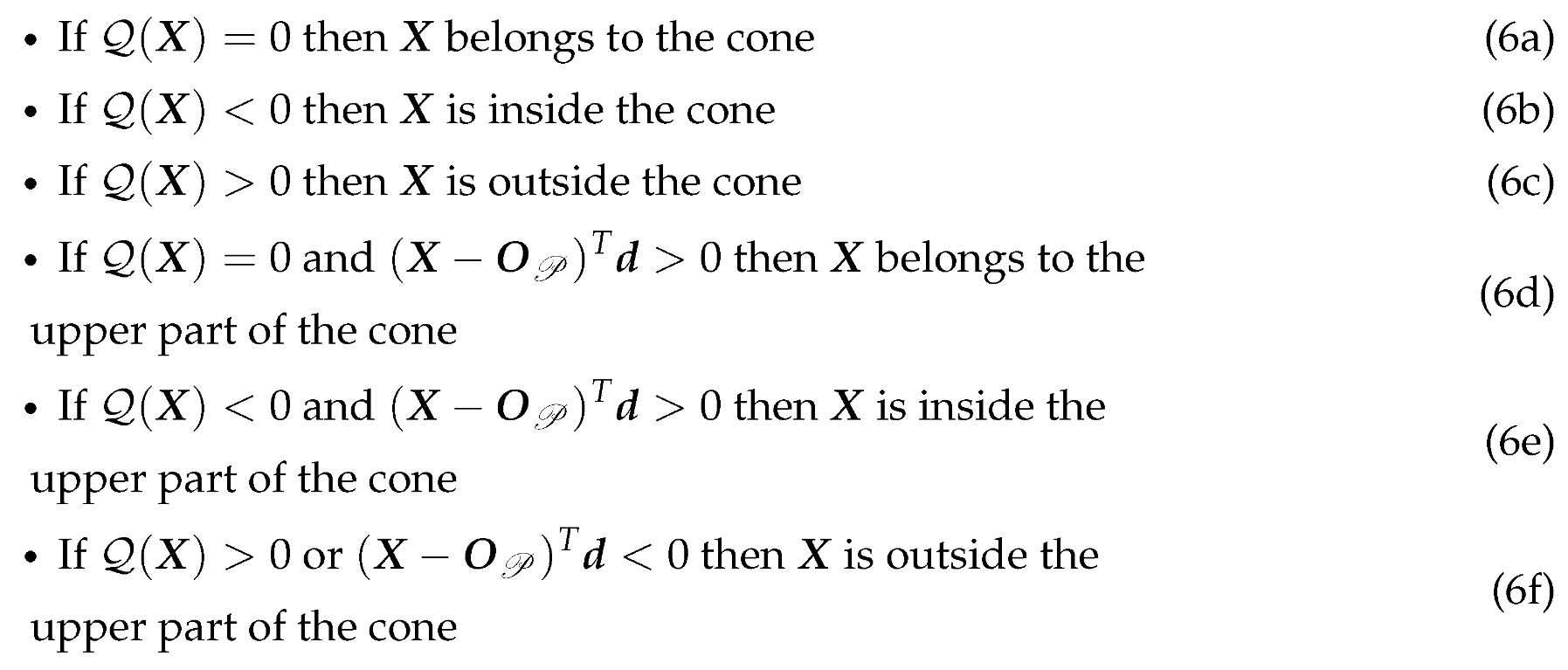

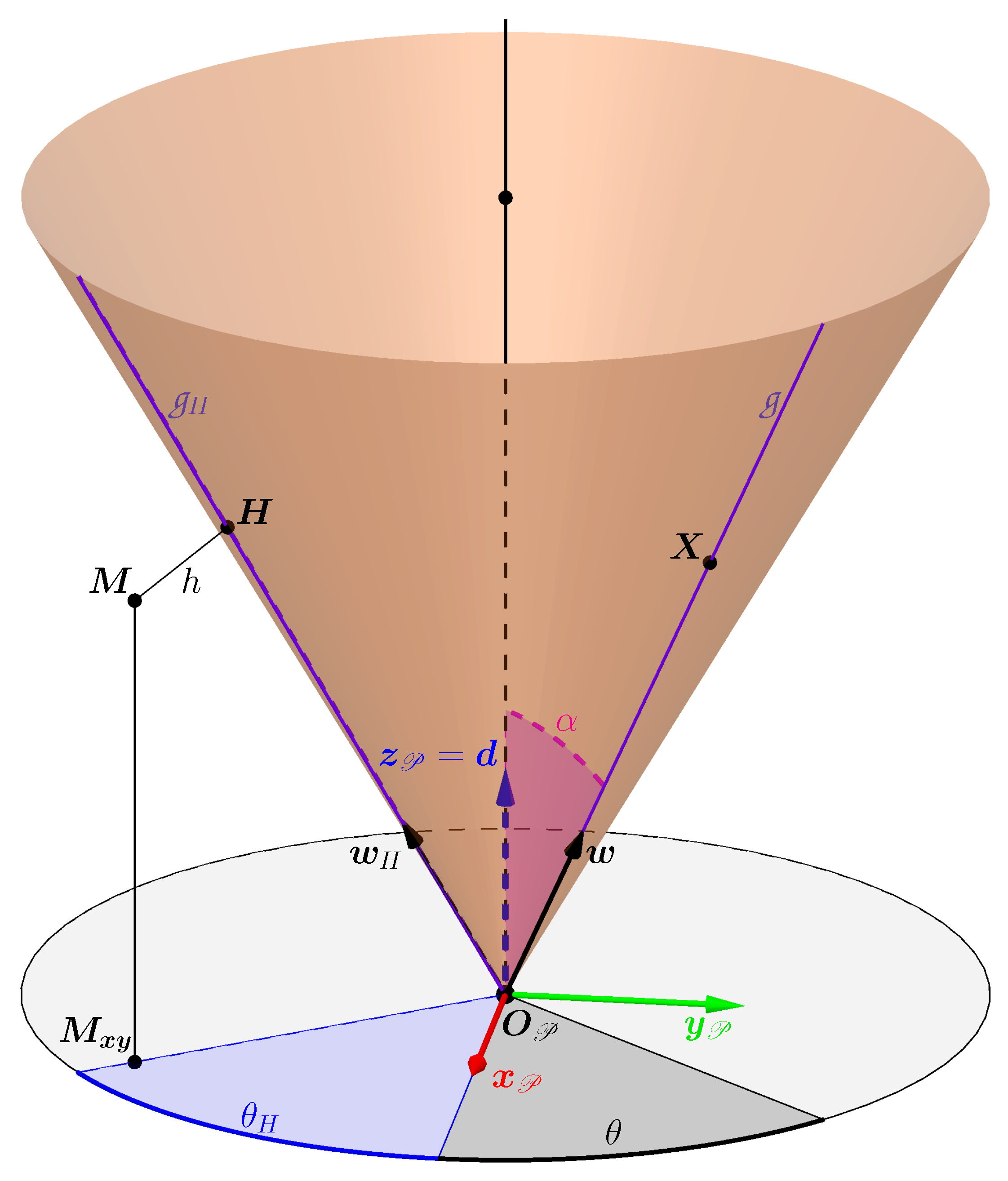

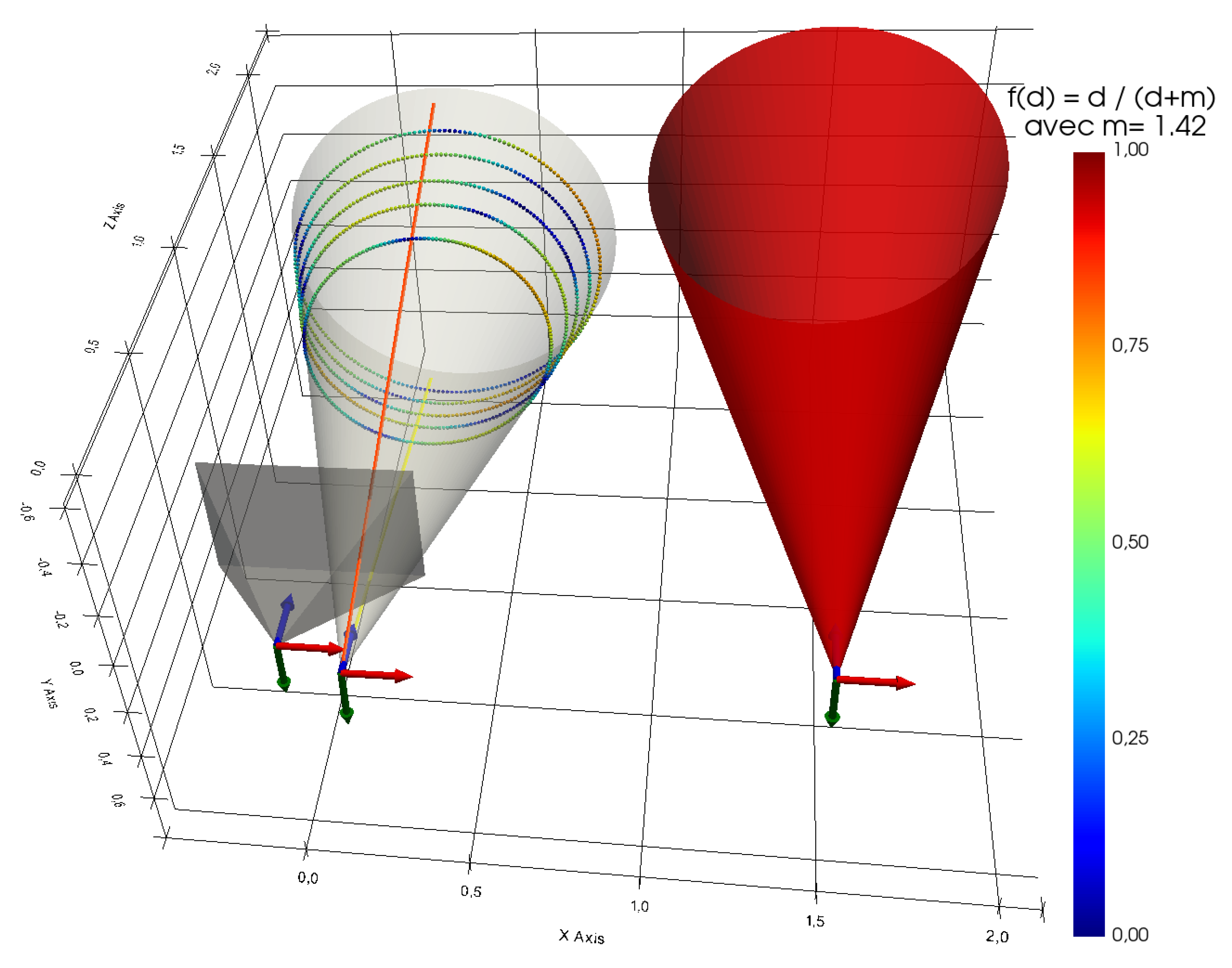

3.1. Cone Parameters

3.1.1. Cone of Revolution

- a point belonging to the cone is a point which belongs to the surface of the cone,

- a point inside the cone is a point which belongs to the solid bounded by the surface of the cone,

- a point outside the cone is a point which does not belong to the solid bounded by the surface of the cone.
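The three cases above can be sketched as a simple classification test. This is an illustration under stated assumptions: the cone is taken as a single nappe opening from its vertex along its direction vector, and the function name, argument names and tolerance are hypothetical.

```python
import math


def classify_point(point, vertex, direction, half_angle, tol=1e-9):
    """Return "on", "inside" or "outside" for a 3D point relative to a cone.

    Assumption: single-nappe cone of revolution with apex `vertex`, axis
    `direction` (any non-zero vector) and opening half-angle in radians.
    """
    norm_d = math.sqrt(sum(c * c for c in direction))
    d = [c / norm_d for c in direction]
    w = [p - v for p, v in zip(point, vertex)]   # vector from the vertex to the point
    norm_w = math.sqrt(sum(c * c for c in w))
    if norm_w <= tol:
        return "on"                              # the vertex itself lies on the surface
    axial = sum(a * b for a, b in zip(w, d))     # component of w along the axis
    # f > 0: angle between w and the axis is smaller than the half-angle.
    f = axial - norm_w * math.cos(half_angle)
    if abs(f) <= tol * max(1.0, norm_w):
        return "on"
    return "inside" if f > 0 else "outside"


# Hypothetical cone: apex at the origin, axis along z, 45 degree half-angle.
apex, axis, alpha = (0.0, 0.0, 0.0), (0.0, 0.0, 1.0), math.radians(45.0)
labels = [classify_point(p, apex, axis, alpha)
          for p in [(0.0, 0.0, 1.0), (1.0, 0.0, 1.0), (2.0, 0.0, 1.0)]]
```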

3.1.2. Orthogonal Distance

3.1.3. Quadratic Form of the Cone

3.2. Projector Calibration

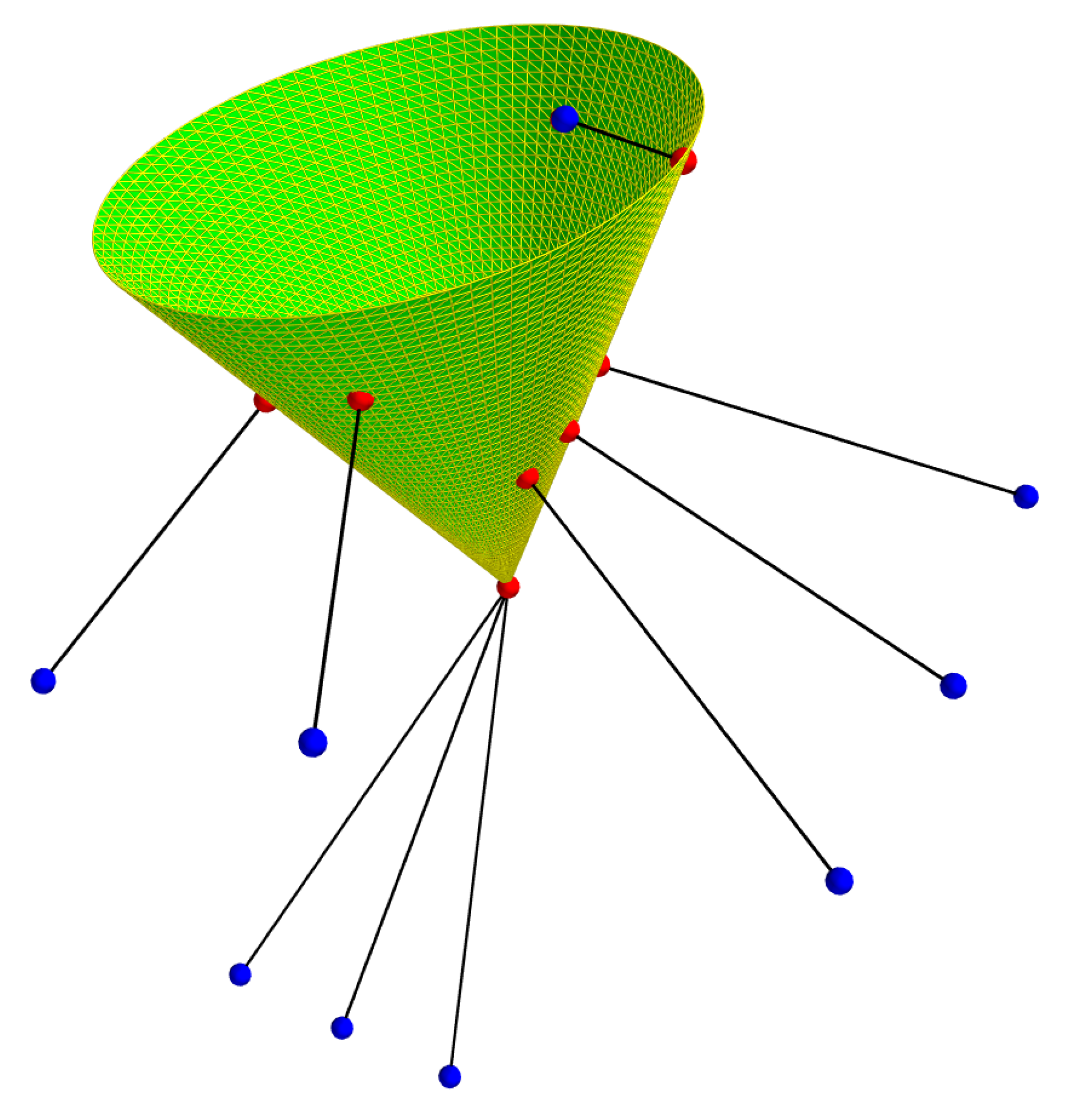

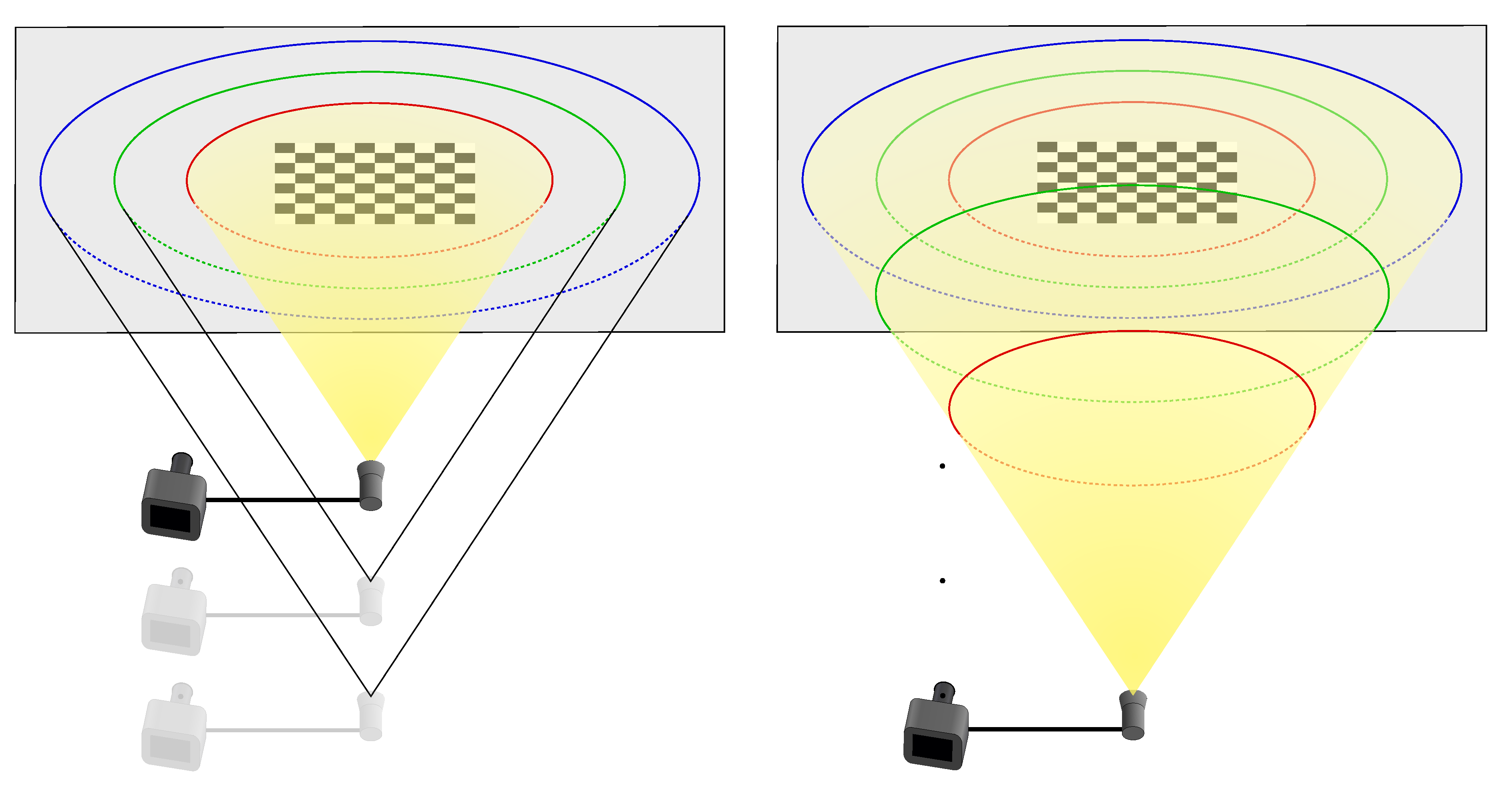

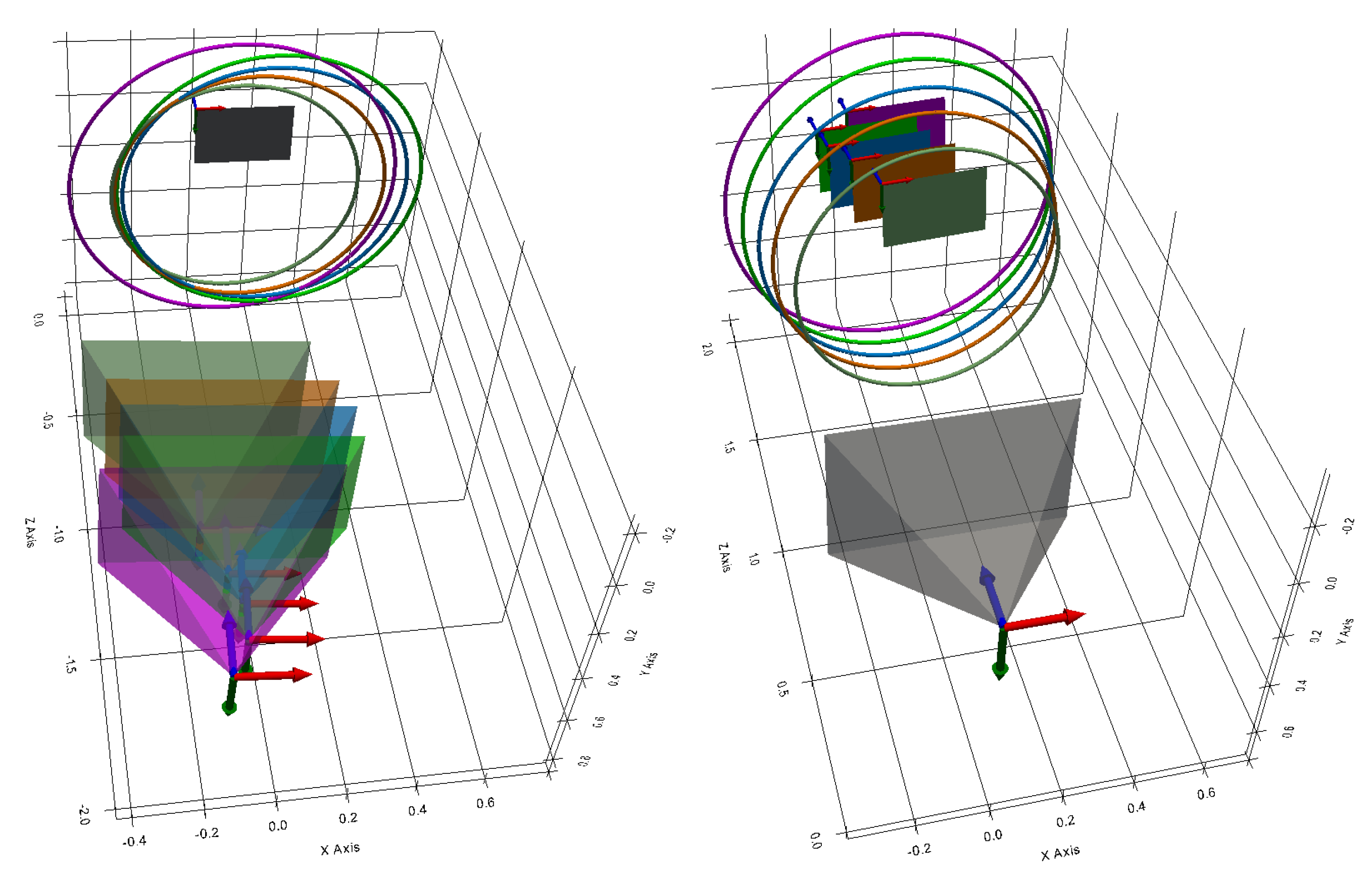

3.2.1. 3D Point Generation for Cone Estimation

3.2.2. Cone Estimation

- the three coordinates of its vertex,

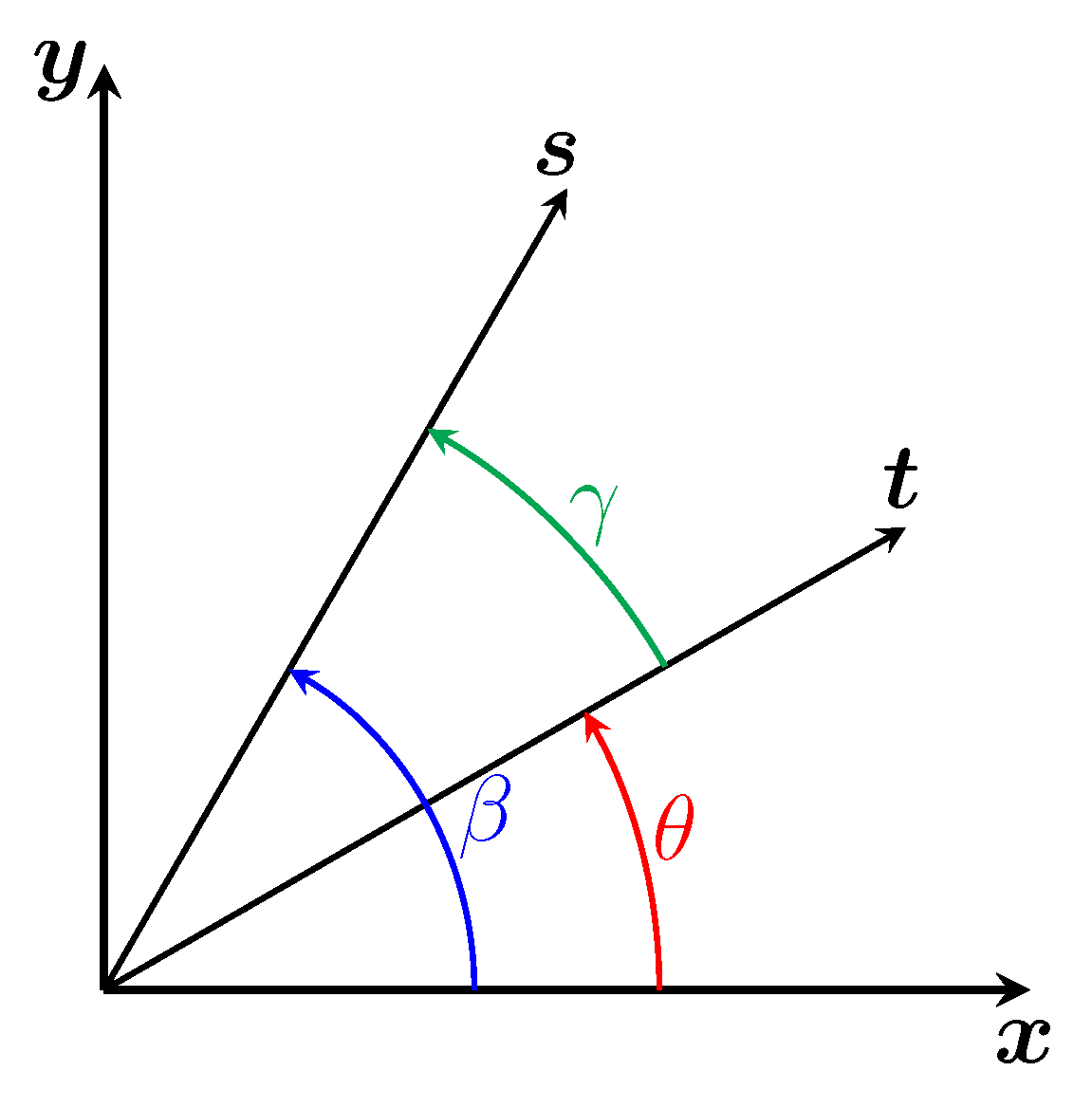

- the two angles, yaw and pitch in the ZYX Euler convention, of its direction vector (the roll angle is not needed since a cone is symmetric about its axis),

- its opening half-angle.
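The six parameters listed above can be unpacked into a vertex, a unit direction vector and a half-angle, as in the sketch below. It assumes that the direction is obtained by rotating the x axis with Rz(yaw)·Ry(pitch), which is one common reading of the ZYX convention and is consistent with roll having no effect; the reference axis actually used by the authors is not recoverable from this text, and all names are hypothetical.

```python
import math


def cone_direction(yaw, pitch):
    """Unit direction obtained by applying Rz(yaw) * Ry(pitch) to the x axis.

    Assumption: the x axis is the reference axis, so a roll about it
    would leave the direction unchanged.
    """
    return (math.cos(yaw) * math.cos(pitch),
            math.sin(yaw) * math.cos(pitch),
            -math.sin(pitch))


def cone_from_parameters(params):
    """Split a 6-vector [vx, vy, vz, yaw, pitch, half_angle] into cone geometry."""
    vx, vy, vz, yaw, pitch, half_angle = params
    return {
        "vertex": (vx, vy, vz),
        "direction": cone_direction(yaw, pitch),
        "half_angle": half_angle,
    }


# Hypothetical parameter vector, angles in radians.
cone = cone_from_parameters([0.1, 0.2, 0.3, math.pi / 2, 0.0, 0.25])
```

Using two angles instead of the three components of a vector keeps the direction on the unit sphere by construction, so a minimiser does not need an extra normalisation constraint.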

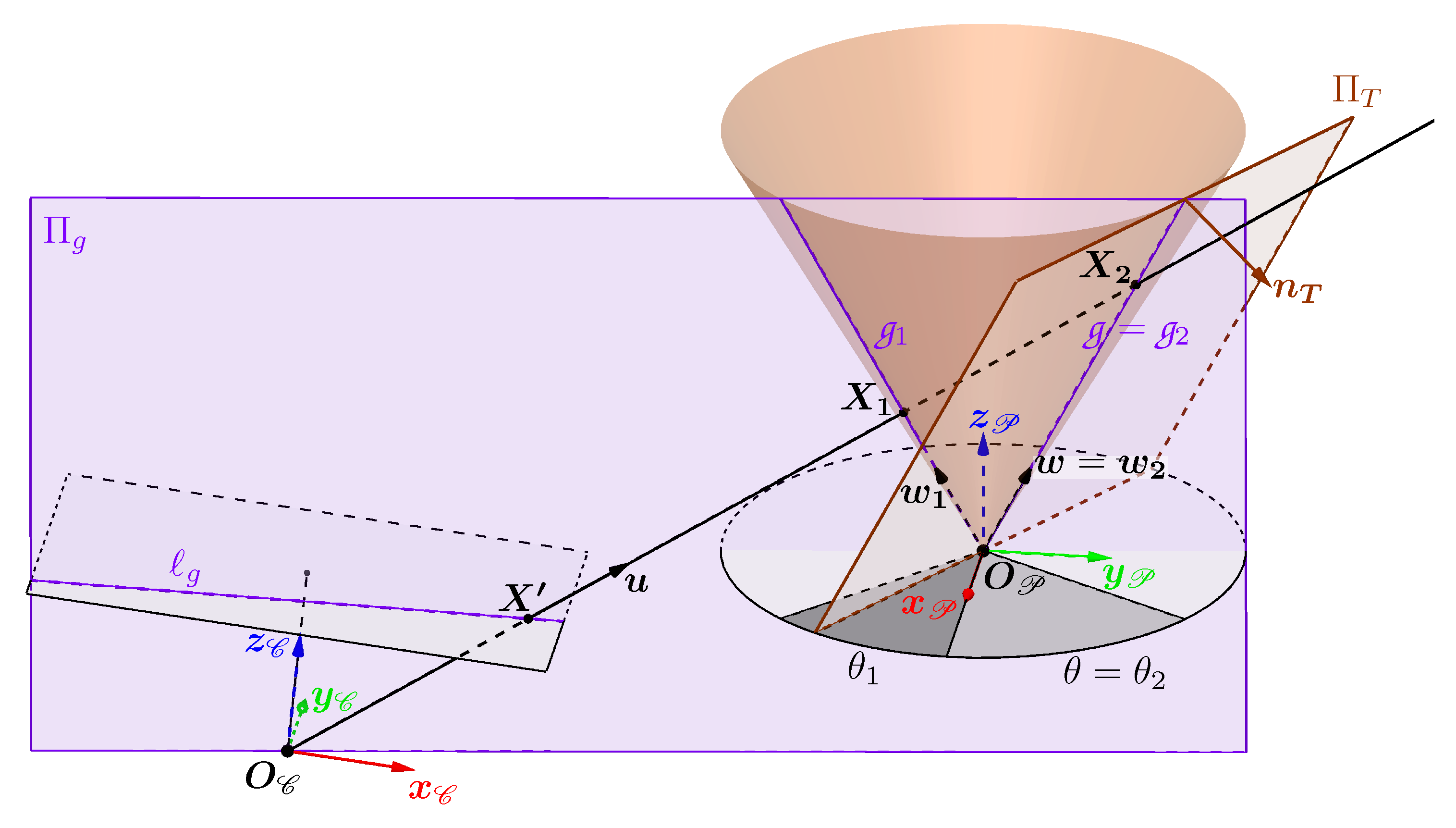

3.3. Intersection with a Ray

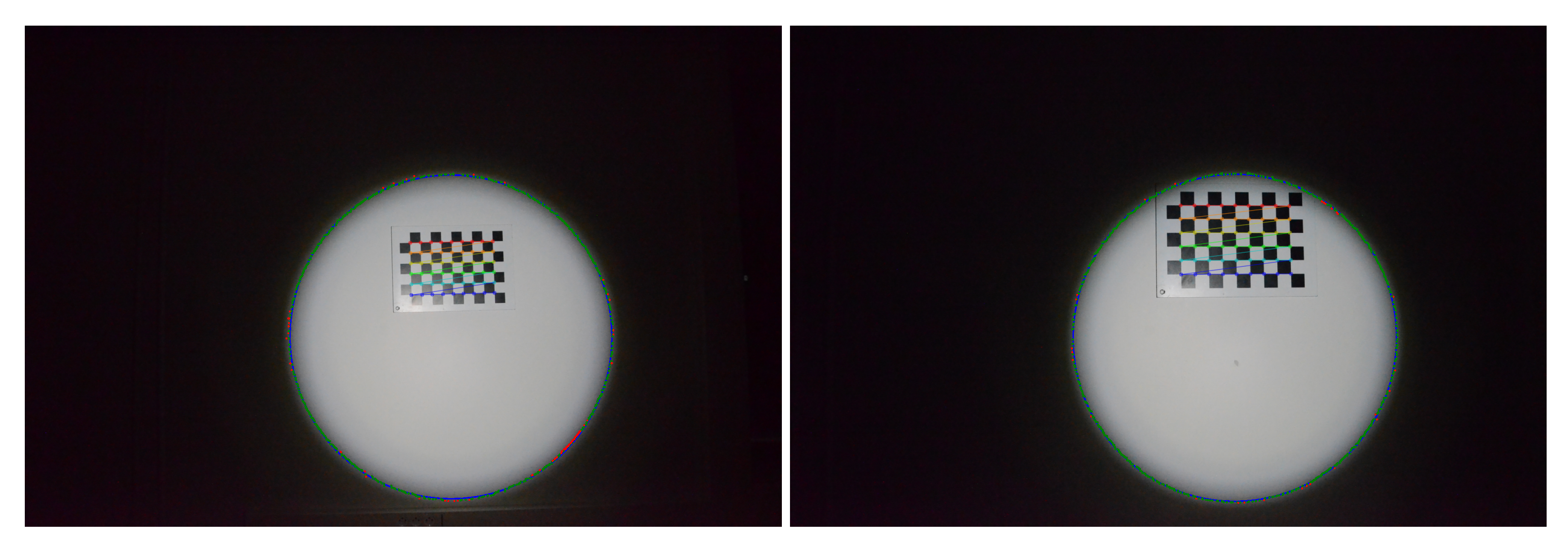

3.4. Projection of the Generatrices of the Cone in the Image

3.4.1. Calculating the Projections of the Generatrices of the Cone

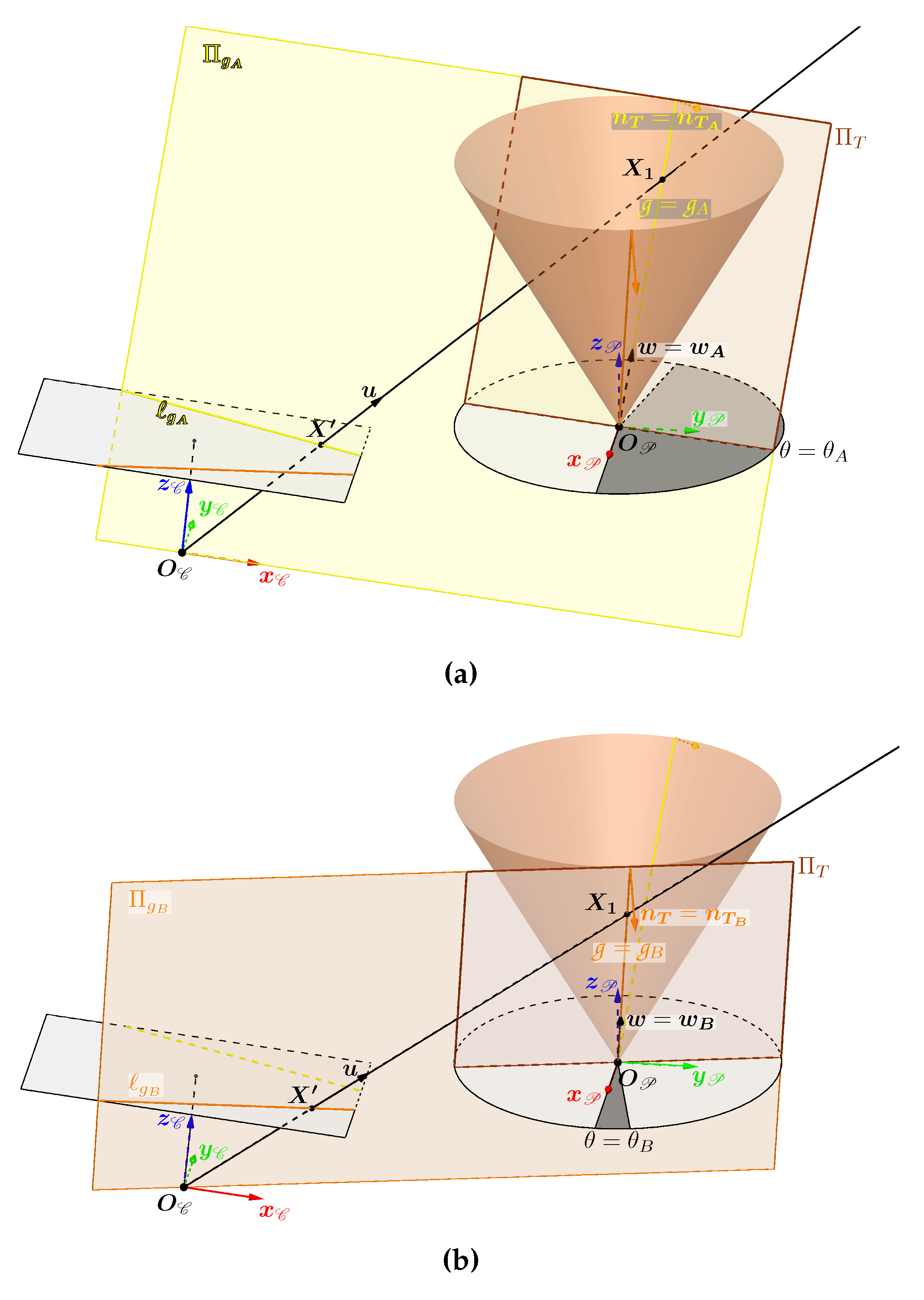

Observation When Is Not Tangent to the Cone

Calculating the Two Special Generatrices When Is Tangent to the Cone

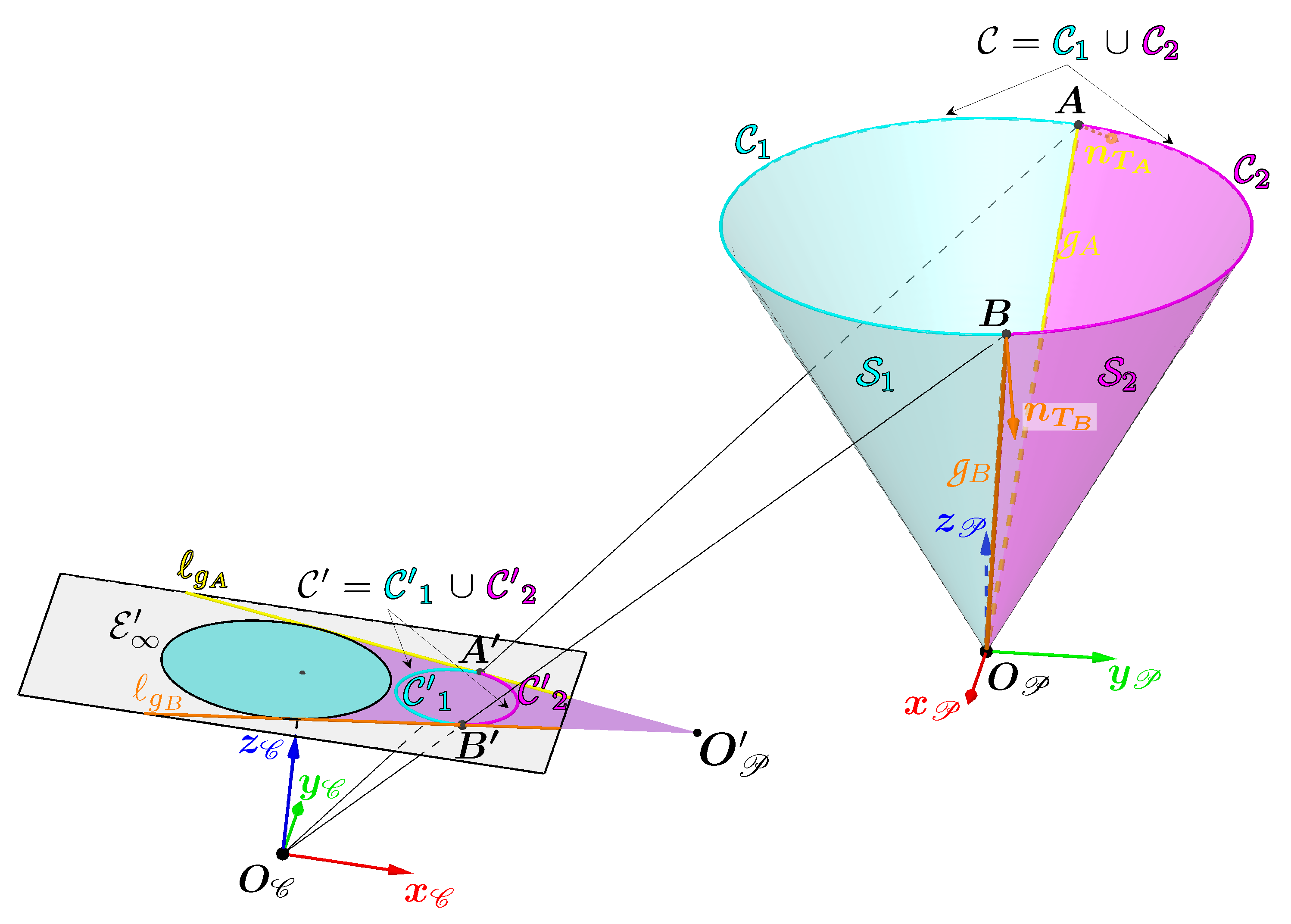

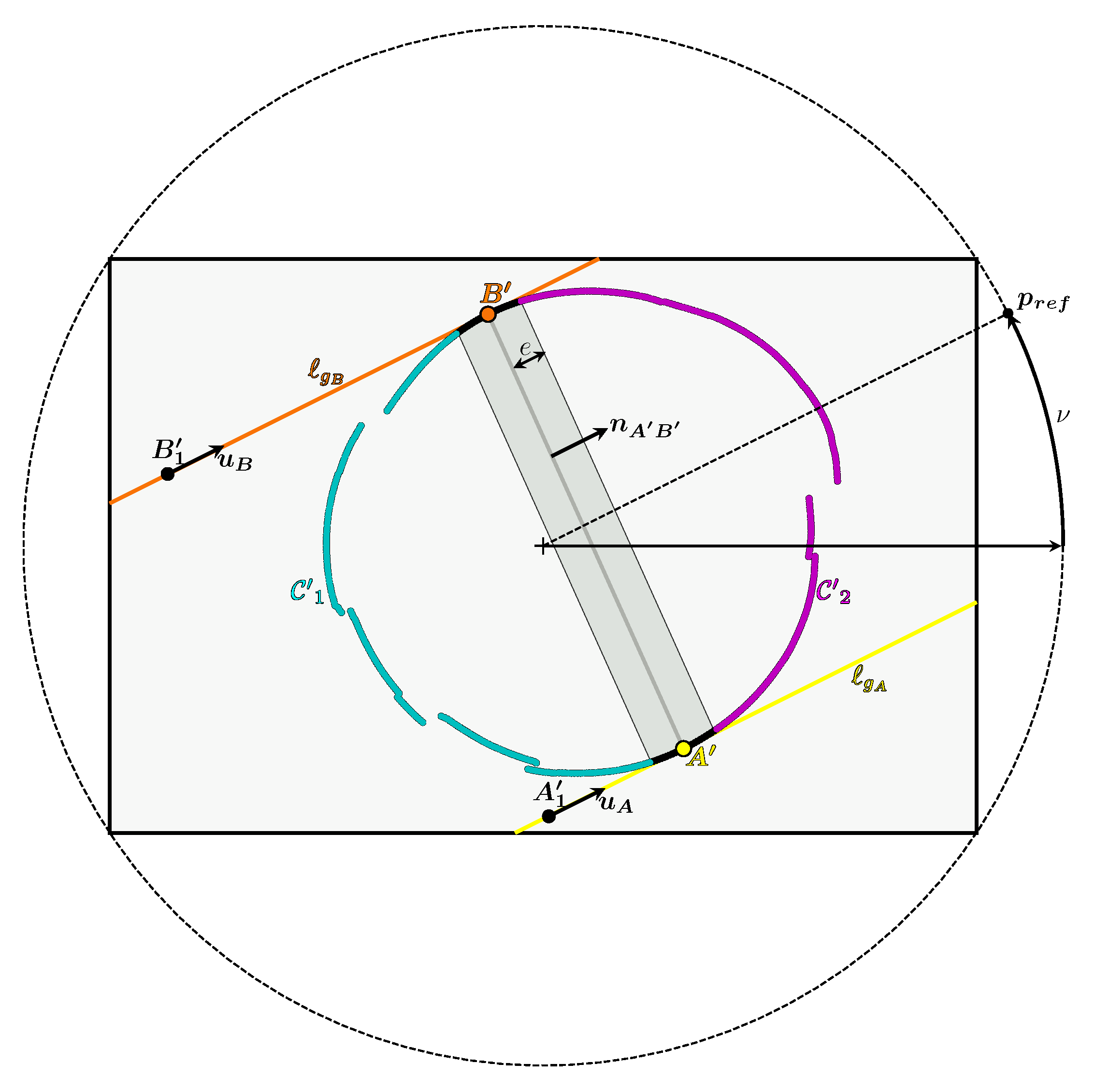

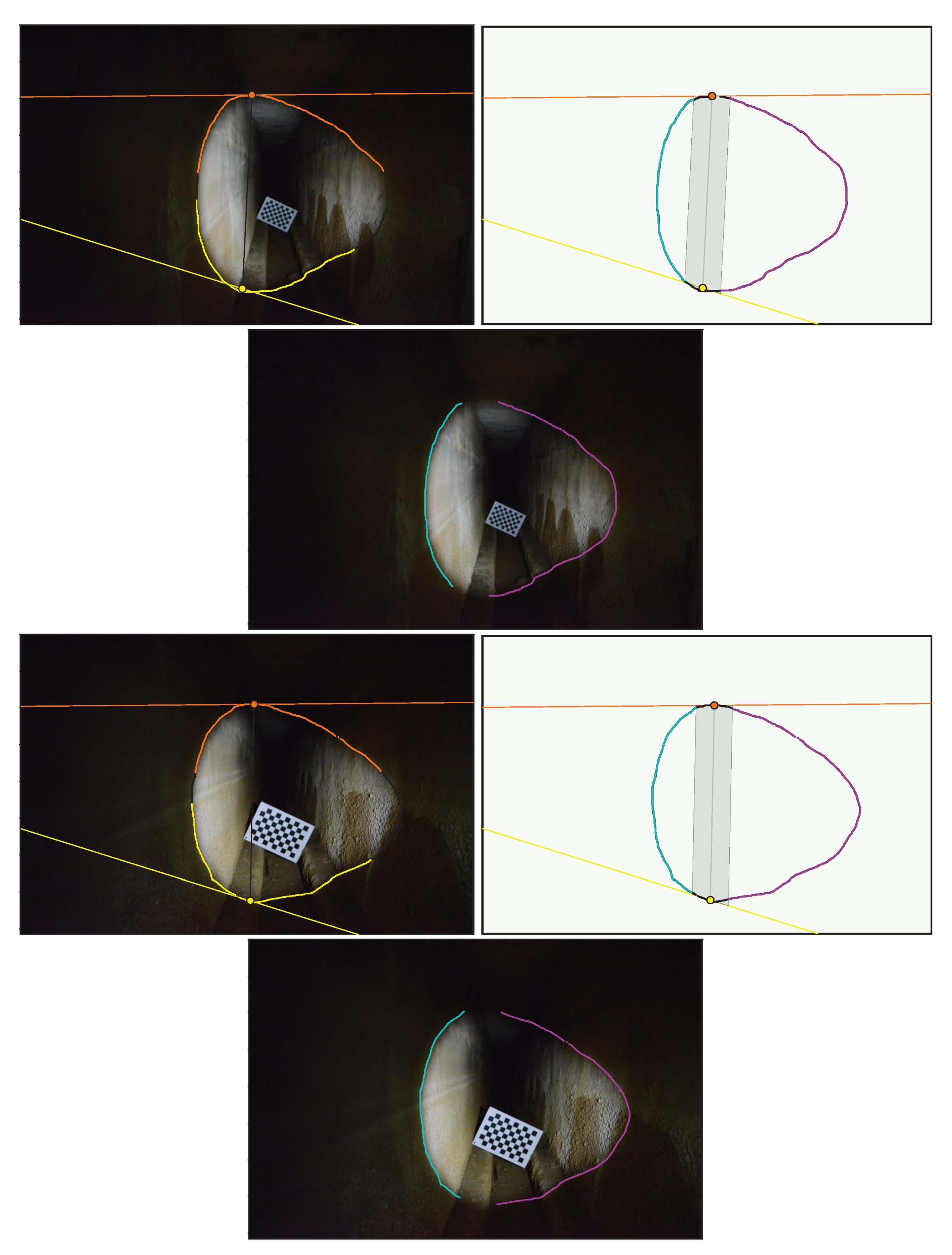

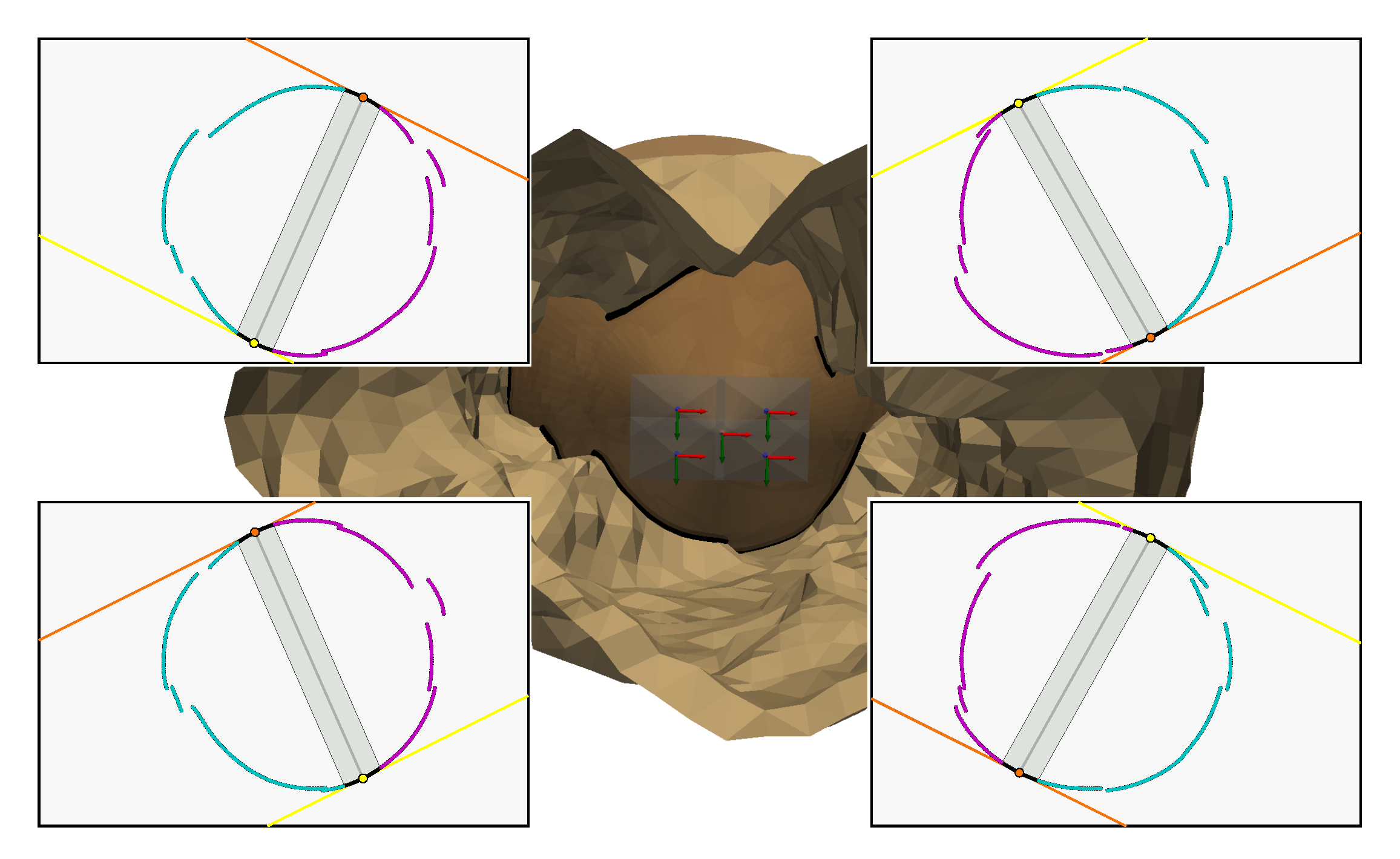

Splitting the Cone into Two Areas Using the Two Special Generatrices

- the lines and .

- the ellipse which is the set of vanishing points of the generatrices. These points define a first bound on all the projections of the generatrices.

- the projection of the vertex into the image plane, named . It defines a second bound on all the projections of the generatrices.
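Both bounds can be computed with an ordinary pinhole projection, since the vanishing point of a 3D line is the projection of its direction vector. The sketch below assumes an undistorted pinhole camera, a cone expressed in the camera frame, and hypothetical intrinsics and cone values; none of the names come from the paper.

```python
import math


def project(K, X):
    """Pinhole projection of a 3D point or direction X (camera frame) with intrinsics K."""
    h = [sum(K[i][j] * X[j] for j in range(3)) for i in range(3)]
    if abs(h[2]) < 1e-12:
        raise ValueError("the projection lies at infinity in the image")
    return (h[0] / h[2], h[1] / h[2])


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1], a[2] * b[0] - a[0] * b[2], a[0] * b[1] - a[1] * b[0])


def normalise(v):
    n = math.sqrt(sum(c * c for c in v))
    return tuple(c / n for c in v)


def generatrix_direction(axis, half_angle, theta):
    """Direction of the generatrix at angle theta around the cone axis."""
    d = normalise(axis)
    helper = (1.0, 0.0, 0.0) if abs(d[0]) < 0.9 else (0.0, 1.0, 0.0)
    e1 = normalise(cross(d, helper))  # two unit vectors orthogonal to the axis
    e2 = cross(d, e1)
    ca, sa = math.cos(half_angle), math.sin(half_angle)
    return tuple(ca * d[i] + sa * (math.cos(theta) * e1[i] + math.sin(theta) * e2[i])
                 for i in range(3))


# Hypothetical intrinsics and cone (axis along the optical axis).
K = [[800.0, 0.0, 320.0], [0.0, 800.0, 240.0], [0.0, 0.0, 1.0]]
vertex_px = project(K, (0.1, 0.0, 0.5))          # second bound: image of the vertex
vanishing_pts = [project(K, generatrix_direction((0.0, 0.0, 1.0), 0.2, 2 * math.pi * k / 8))
                 for k in range(8)]              # first bound: samples of the ellipse
```

In this particular configuration the cone axis coincides with the optical axis, so the ellipse of vanishing points degenerates into a circle centred on the principal point.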

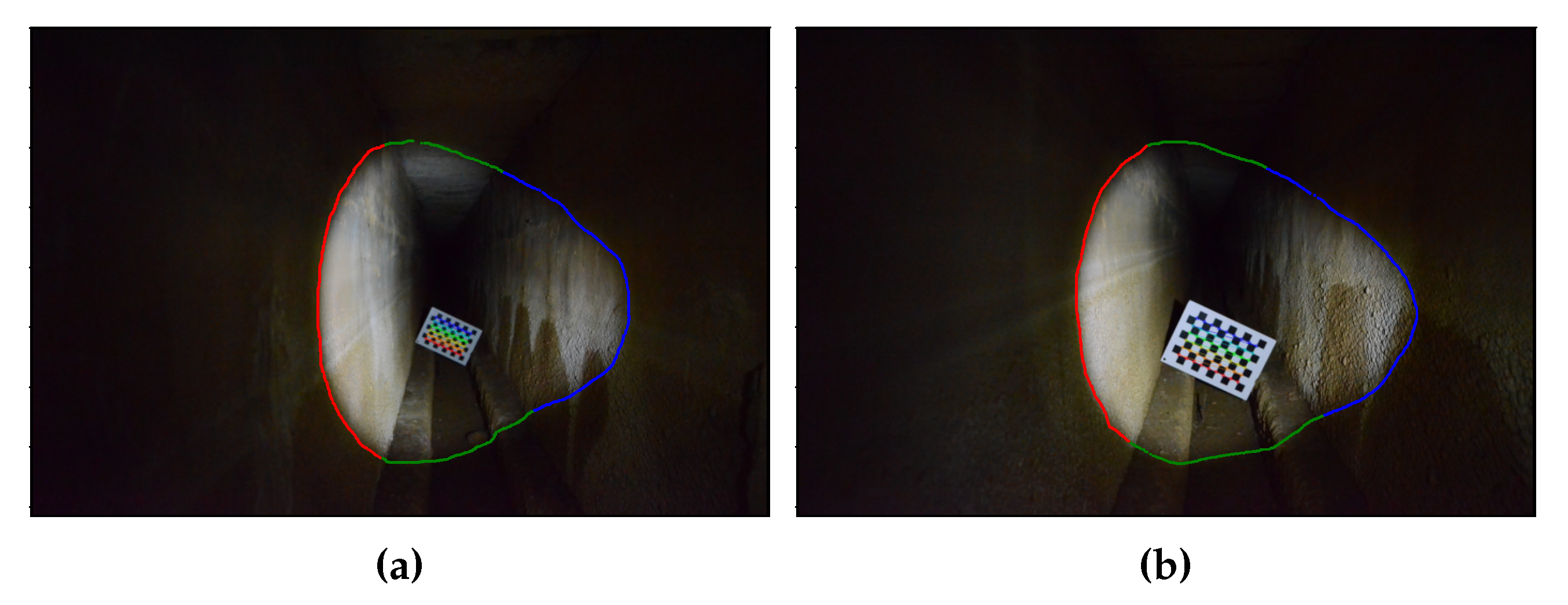

- Any ray associated with a point in the cyan area has as its unique intersection with the cone a 3D point belonging to the surface .

- Any ray associated with a point in the magenta area has two intersections with the cone, the first of which belongs to the surface and the second to the surface . These two intersections are superimposed if the point in the magenta area belongs to the line or to the line .

- Any ray associated with a point outside the magenta area and the cyan area does not have any intersection with the cone.
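The three situations above (one, two or no intersection) correspond to the admissible roots of a quadratic in the ray parameter. The following sketch assumes a ray starting at the camera centre (the origin of the camera frame) and a single-nappe cone expressed in that frame; it is an illustration of the standard ray-cone test, not a transcription of the authors' derivation, and all names and values are hypothetical.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def ray_cone_intersections(ray_dir, vertex, axis, half_angle, eps=1e-12):
    """Intersections of the ray X = t * ray_dir (t > 0) with a single-nappe cone.

    Returns the 3D points sorted by increasing distance from the camera centre.
    """
    n = math.sqrt(dot(axis, axis))
    d = [c / n for c in axis]
    w = [-v for v in vertex]                 # camera centre minus cone vertex
    c2 = math.cos(half_angle) ** 2
    rd, wd = dot(ray_dir, d), dot(w, d)
    # Coefficients of a*t^2 + b*t + c = 0 from ((X - V).d)^2 = cos^2(alpha) |X - V|^2
    a = rd * rd - c2 * dot(ray_dir, ray_dir)
    b = 2.0 * (rd * wd - c2 * dot(ray_dir, w))
    c = wd * wd - c2 * dot(w, w)
    if abs(a) < eps:                         # ray parallel to a generatrix
        roots = [] if abs(b) < eps else [-c / b]
    else:
        disc = b * b - 4.0 * a * c
        if disc < 0.0:
            roots = []
        else:
            s = math.sqrt(disc)
            roots = [(-b - s) / (2.0 * a), (-b + s) / (2.0 * a)]
    points = []
    for t in sorted(set(roots)):
        # Keep points in front of the camera and on the nappe facing the axis direction.
        if t > eps and wd + t * rd >= 0.0:
            points.append(tuple(t * r for r in ray_dir))
    return points


alpha = math.radians(30.0)
one = ray_cone_intersections((0.0, 0.0, 1.0), (0.2, 0.0, 0.0), (0.0, 0.0, 1.0), alpha)
two = ray_cone_intersections((1.0, 0.0, 1.0), (1.0, 0.0, 0.0), (0.0, 0.0, 1.0), alpha)
none = ray_cone_intersections((0.0, 0.0, -1.0), (1.0, 0.0, 0.0), (0.0, 0.0, 1.0), alpha)
```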

3.5. Intersection Selection and Triangulation

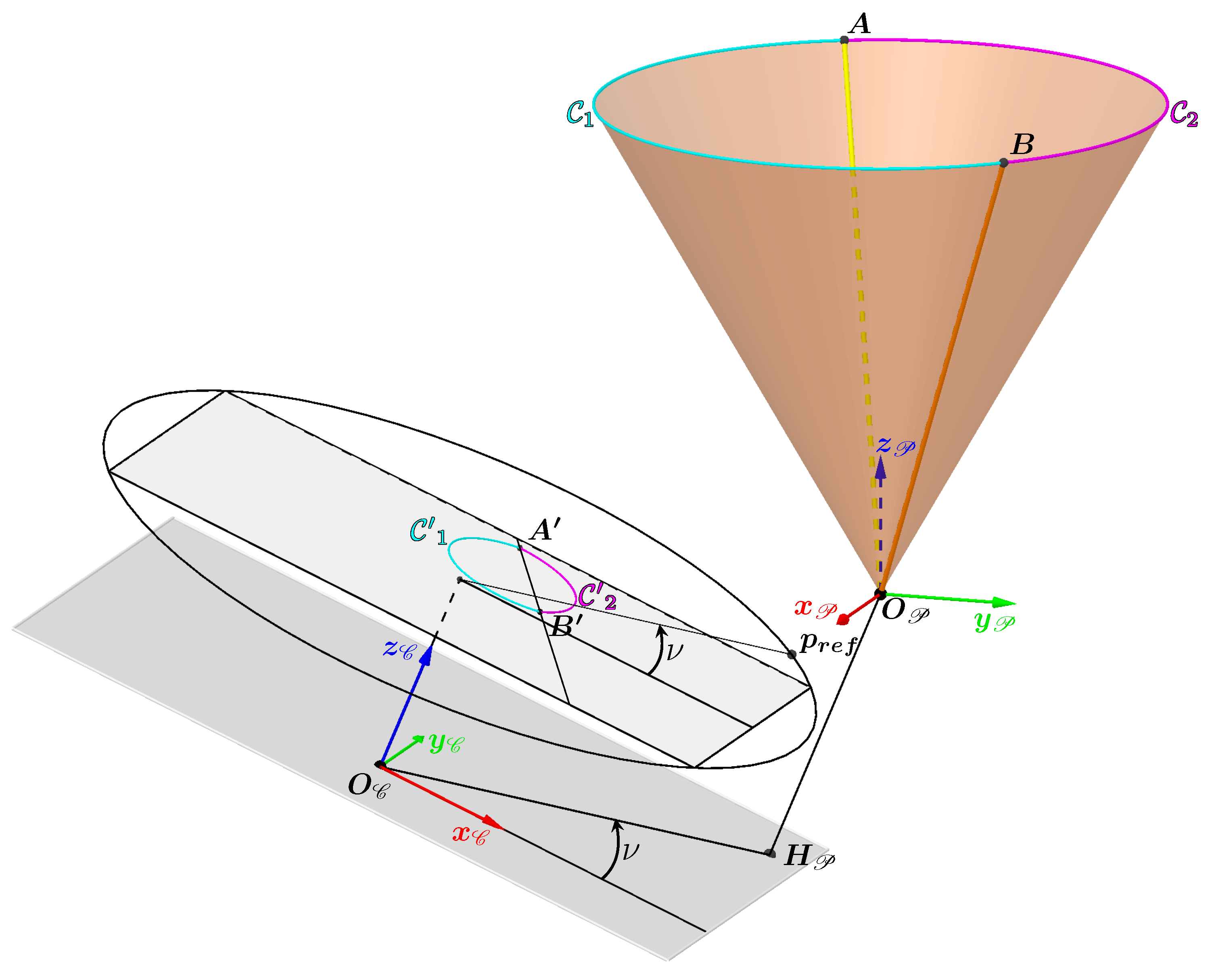

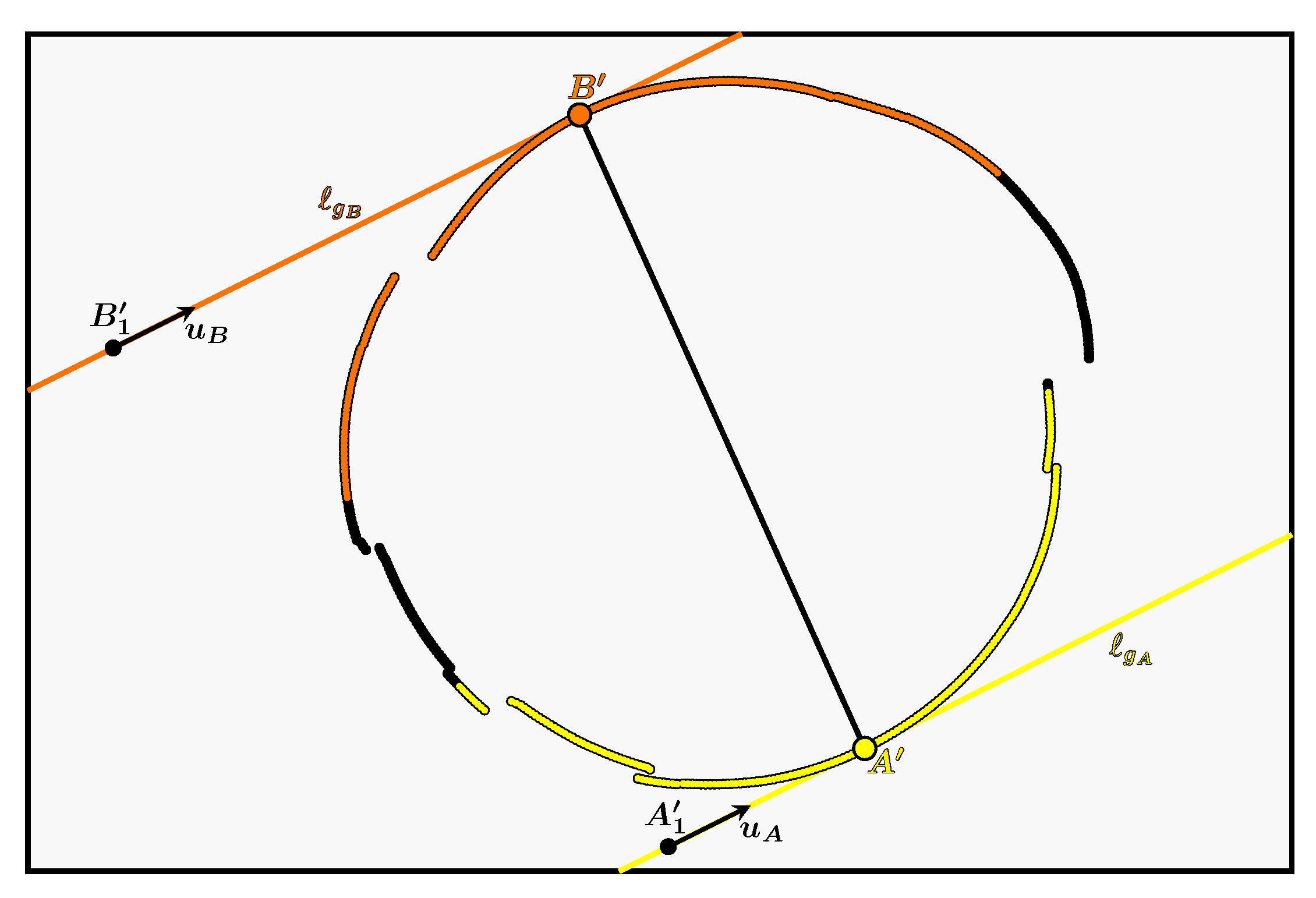

- is the closed 2D curve corresponding to the contour in the image with a 2D point on the curve.

- is our circular 3D curve corresponding to the contour of the light in the scene, with a 3D point on the curve which has as its projection. The point therefore corresponds to the correct intersection between the cone and the camera ray associated with .

- The areas containing the first and second intersections with the cone are delimited by the two generatrices and which divide the cone into two surfaces and . They also divide into two curves, one in cyan called and the other in magenta called .

- and are respectively the two intersections of and with the curve and are thus the only two 3D points common to the curves and .

- The cyan curve named and the magenta curve named are respectively the projections of the curves and .

- and are the projections of and and are therefore the only two points common to the curves and . They belong to both the curve and the lines and . So, in theory, they correspond to the two unique points of tangency of the lines and with .

- the curve is discrete,

- the light contours are extracted imperfectly,

- the camera calibration and the cone estimation have uncertainties, so the lines and also have uncertainties.

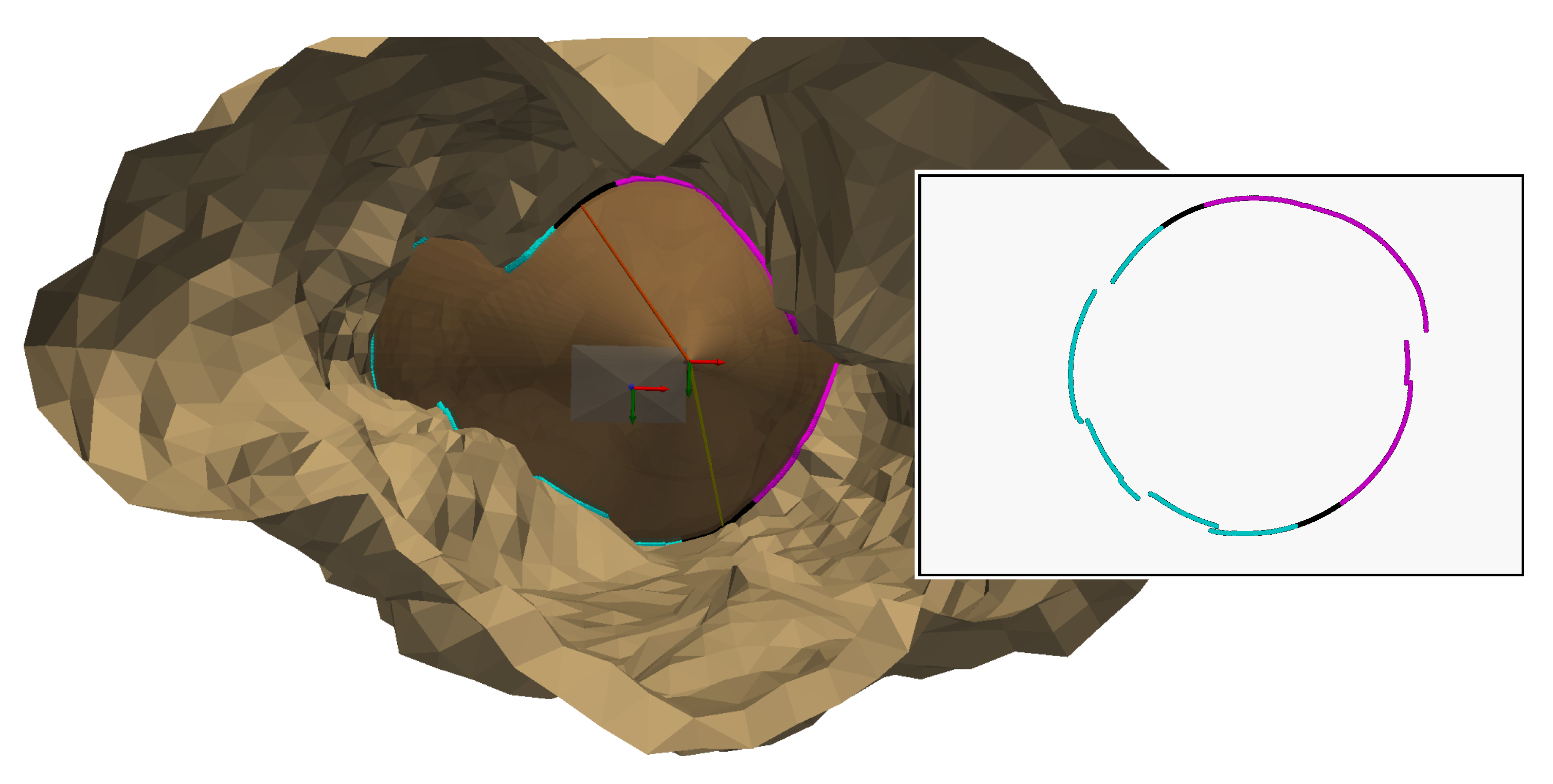

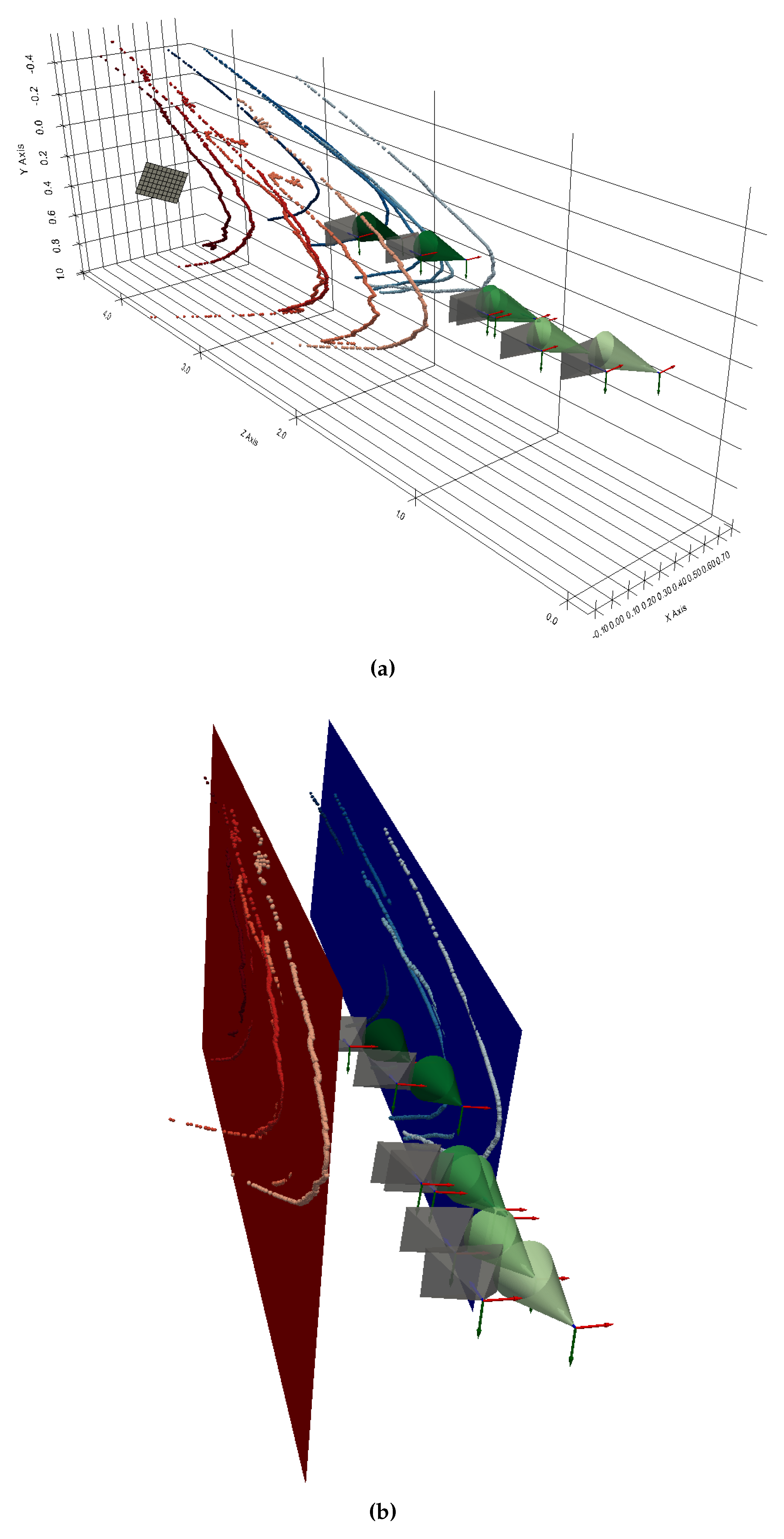

3.6. Test of the Method in Simulation

Contour Simulation

Calculating the Generatrices and the Lines Containing Their Projection

Determining the Points and

- are the x% of the points on the curve closest to the line .

- is a function whose aim is to reduce the impact of the points far from the line in minimisation.
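The two ingredients above can be sketched as follows. The robust function used by the authors is not recoverable from this text, so the Huber loss is swapped in purely as a stand-in; the line representation (a, b, c) with ax + by + c = 0, the helper names and the sample values are likewise hypothetical.

```python
import math


def point_line_distance(p, line):
    """Distance from the 2D point p to the line a*x + b*y + c = 0."""
    a, b, c = line
    return abs(a * p[0] + b * p[1] + c) / math.hypot(a, b)


def closest_fraction(points, line, fraction):
    """Keep the given fraction (0 < fraction <= 1) of the points closest to the line."""
    ranked = sorted(points, key=lambda p: point_line_distance(p, line))
    keep = max(1, math.ceil(fraction * len(points)))
    return ranked[:keep]


def huber(residual, delta):
    """Huber loss: quadratic near zero, linear beyond delta (stand-in robust function)."""
    r = abs(residual)
    if r <= delta:
        return 0.5 * r * r
    return delta * (r - 0.5 * delta)


def robust_cost(points, line, delta):
    """Sum of robust losses of the point-to-line distances."""
    return sum(huber(point_line_distance(p, line), delta) for p in points)


# Hypothetical contour samples and the line y = 0.
contour = [(0.0, 0.0), (0.0, 1.0), (0.0, 2.0), (0.0, 3.0)]
selected = closest_fraction(contour, (0.0, 1.0, 0.0), 0.5)
cost = robust_cost(selected, (0.0, 1.0, 0.0), delta=0.5)
```

Restricting the cost to the closest points and flattening the loss for large distances both serve the same purpose: contour points that cannot be near the tangency have little influence on the minimisation.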

Separation of the Curve for Intersection Selection

3D Reconstruction

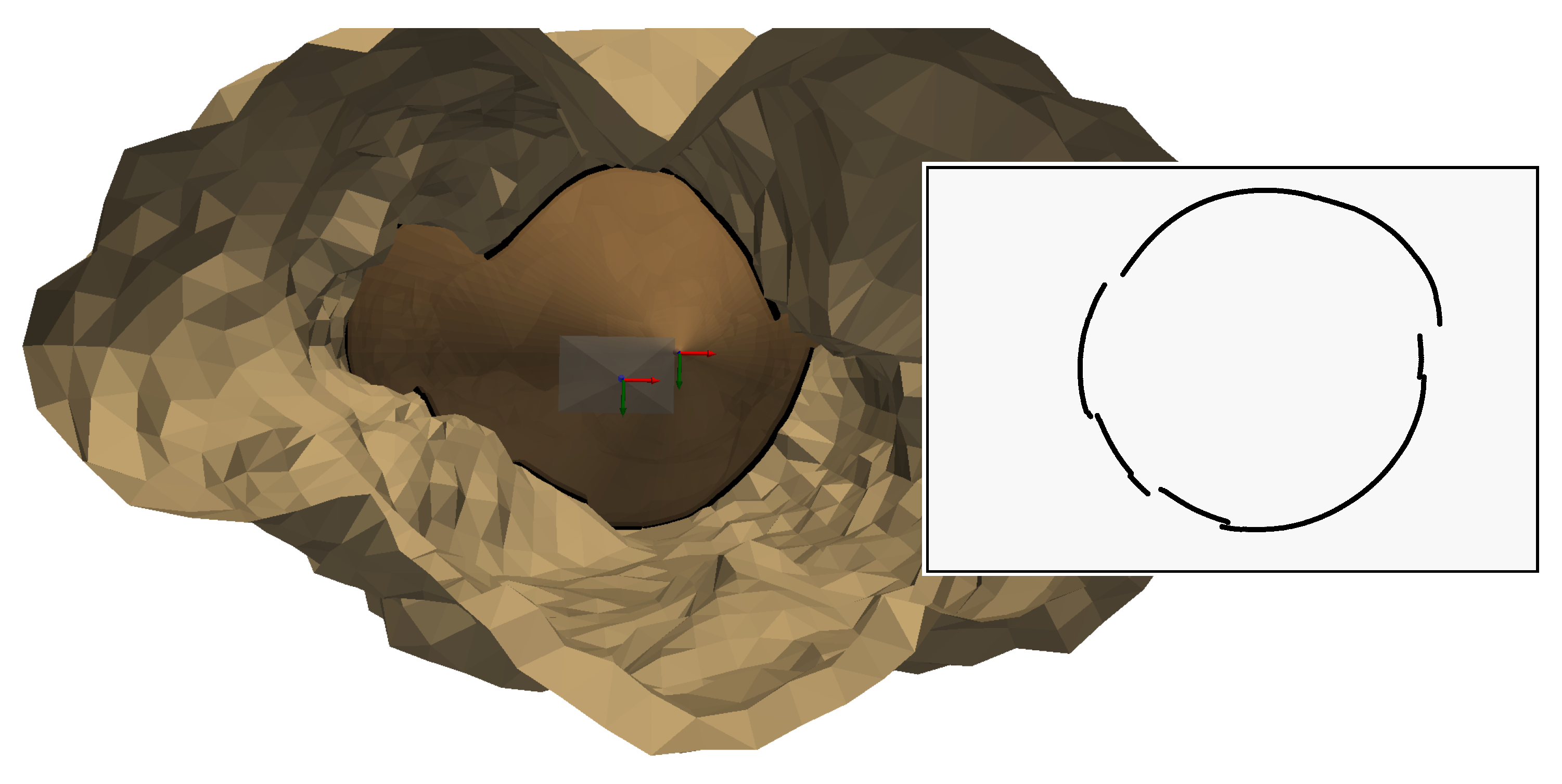

4. Experiments in a Waterless Aqueduct

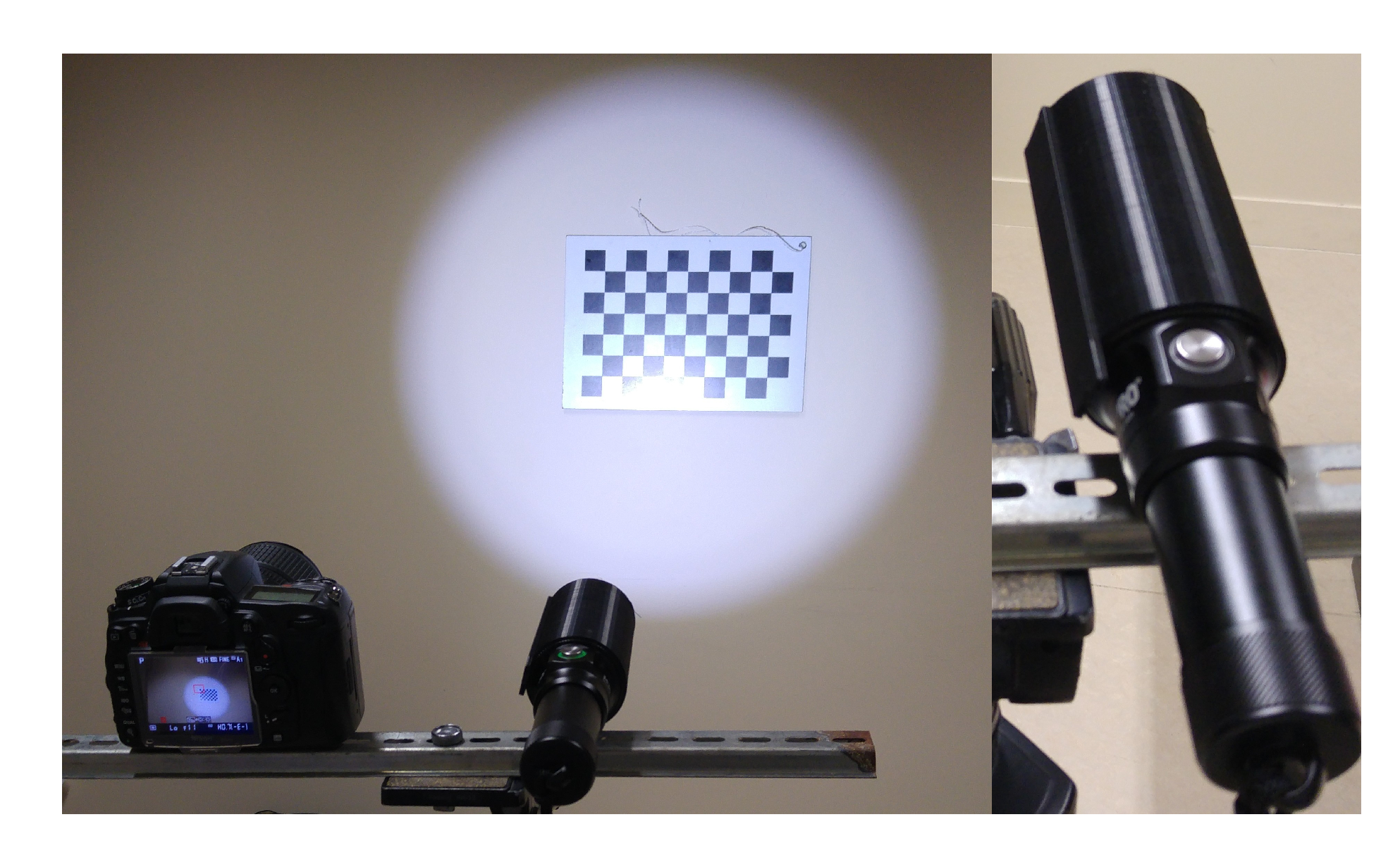

4.1. Our System

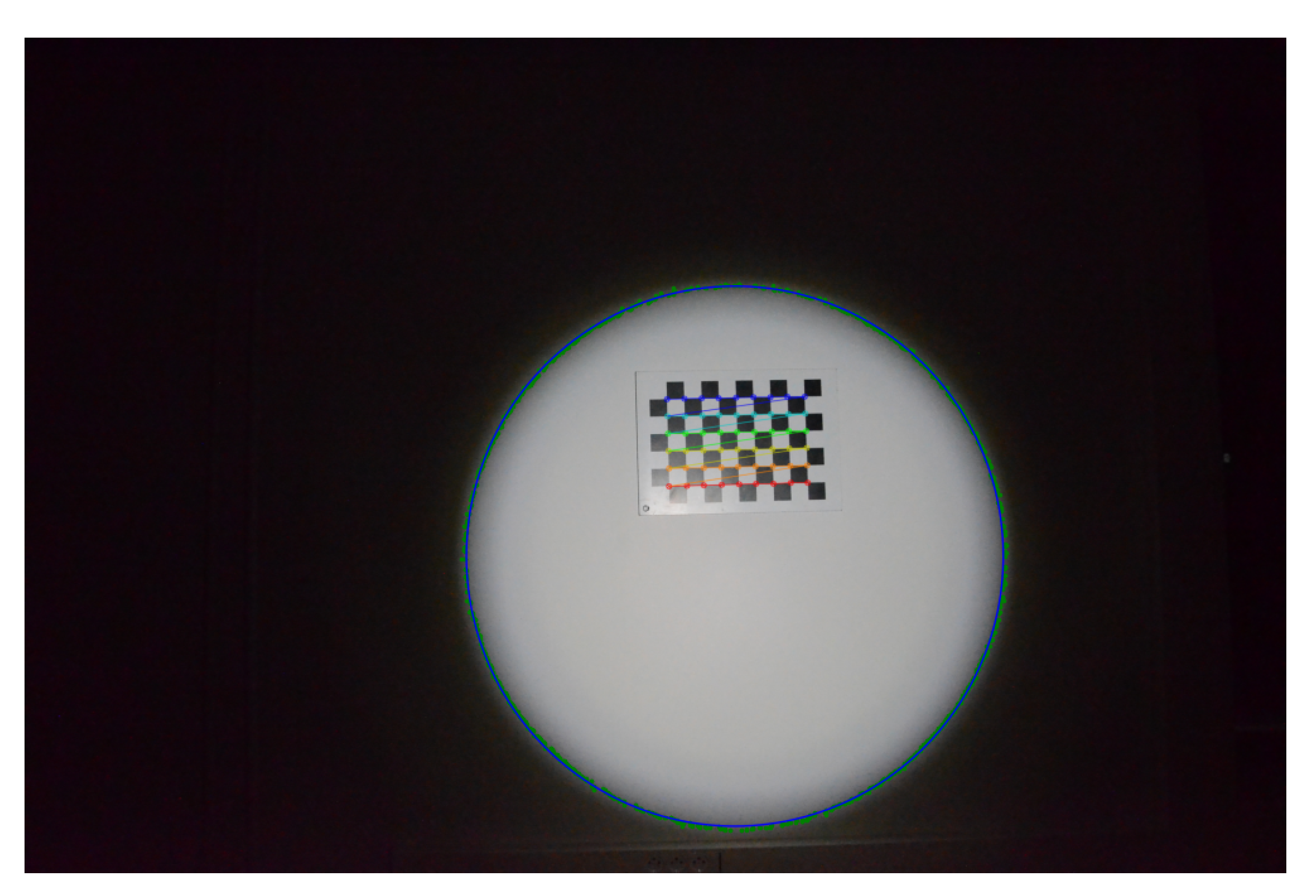

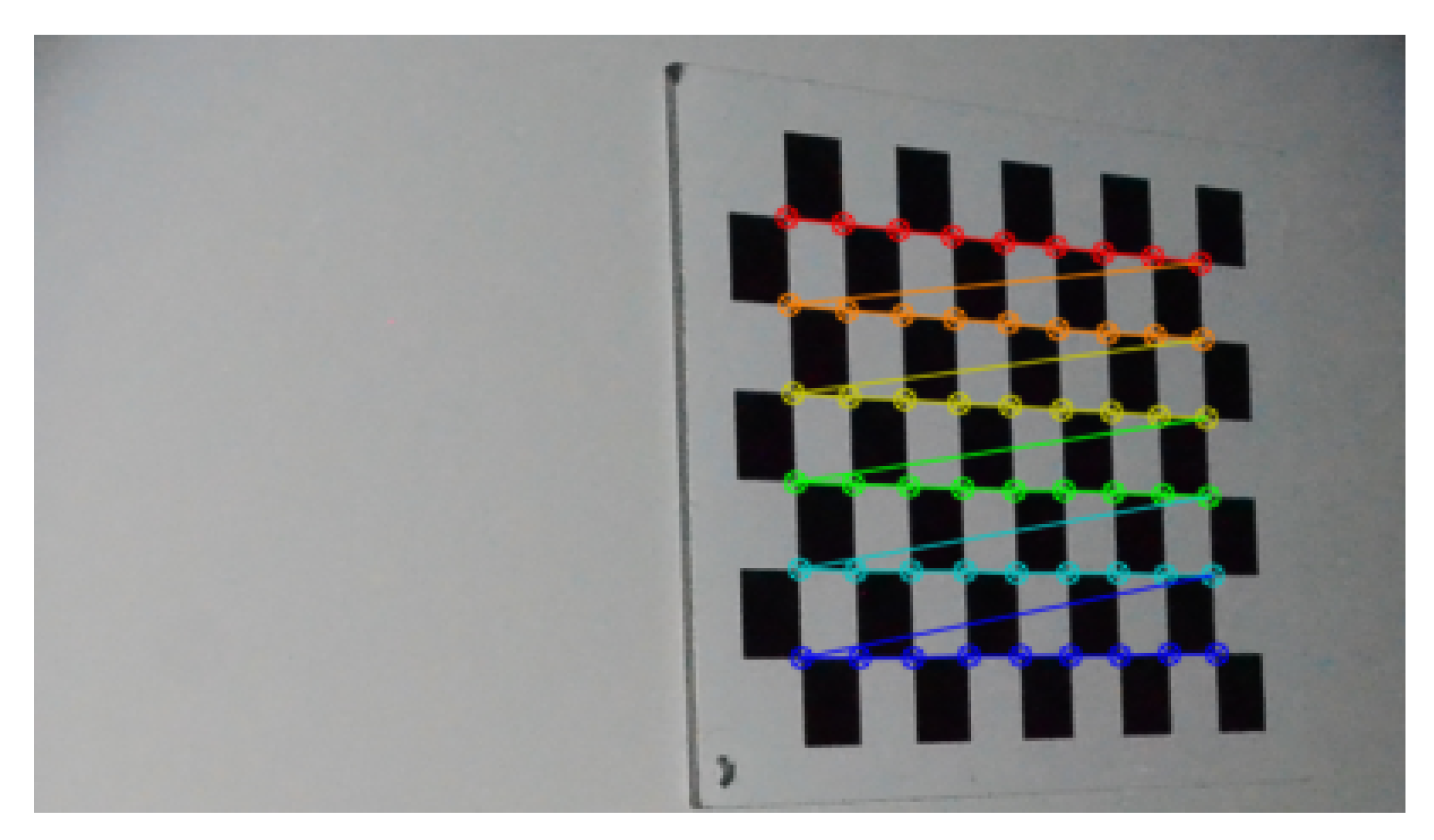

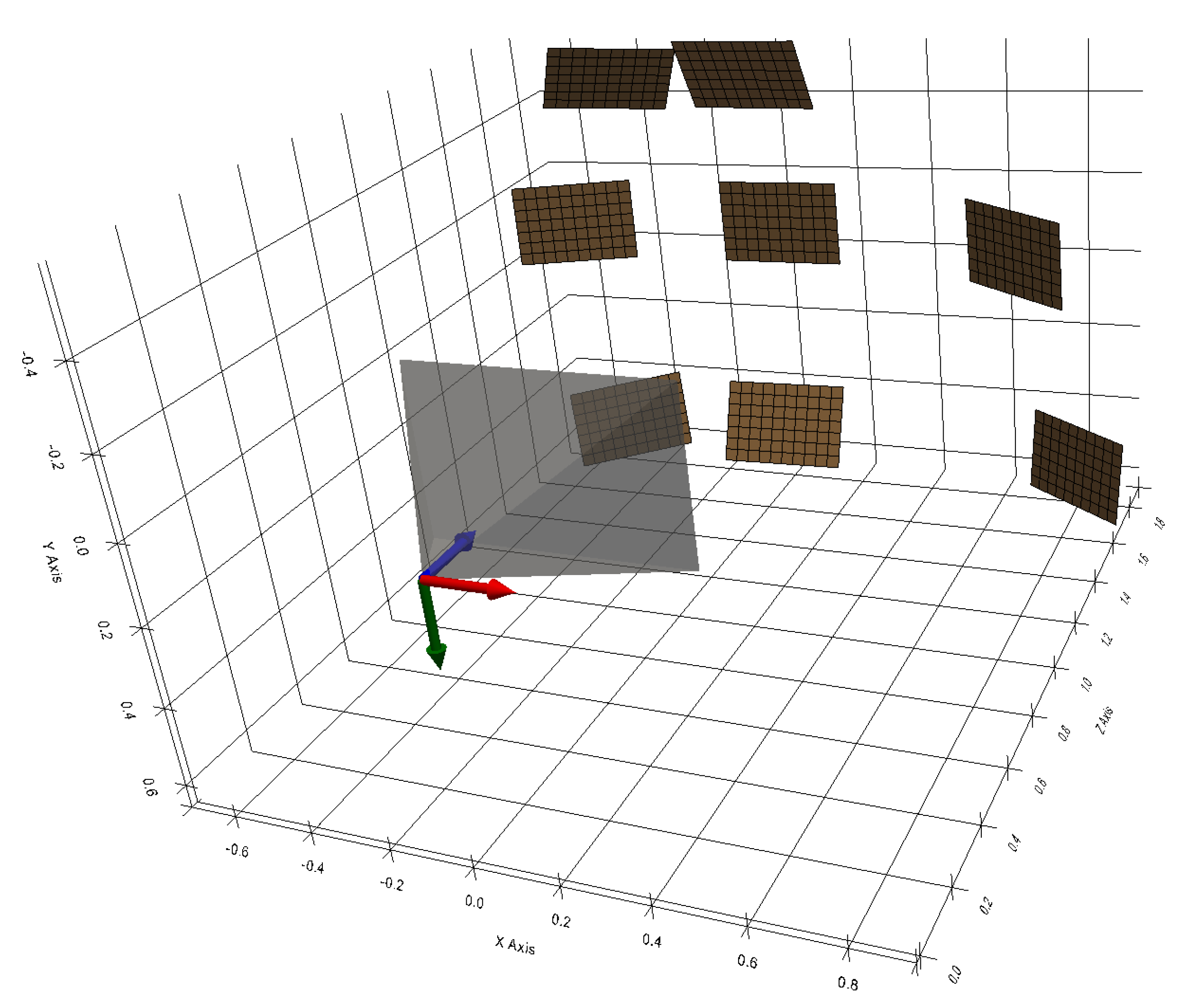

4.2. Camera Calibration Results

- the intrinsic matrix K obtained is :

- the resulting focal length is 18.2 mm,

4.3. Projector Calibration Results

4.4. 3D Results

- the 3D points resulting from the left part (respectively the right part) of the contour belong to the same plane, so they are expected to be coplanar,

- if we estimate one plane from the left 3D points and another from the right 3D points, the two planes should be parallel and separated by a distance of approximately 62 cm.
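Both checks reduce to point-to-plane distances and to the angle between two plane normals. The sketch below assumes the planes have already been estimated and are given as a normal vector and an offset (n·X + d = 0); the plane fitting itself is not shown, and the function names and sample values are hypothetical. The statistics mirror the columns used in the result tables (max, min, median, mean, RMS).

```python
import math
import statistics


def point_plane_distance(p, normal, offset):
    """Unsigned distance from the 3D point p to the plane normal . X + offset = 0."""
    n = math.sqrt(sum(c * c for c in normal))
    return abs(sum(a * b for a, b in zip(normal, p)) + offset) / n


def distance_stats(points, normal, offset):
    """Max, min, median, mean and RMS of the point-to-plane distances."""
    dists = [point_plane_distance(p, normal, offset) for p in points]
    return {
        "max": max(dists),
        "min": min(dists),
        "med": statistics.median(dists),
        "mean": statistics.mean(dists),
        "rms": math.sqrt(sum(x * x for x in dists) / len(dists)),
    }


def angle_between_planes(n1, n2):
    """Angle in radians between two planes, insensitive to the sign of the normals."""
    dot = abs(sum(a * b for a, b in zip(n1, n2)))
    norms = math.sqrt(sum(a * a for a in n1)) * math.sqrt(sum(b * b for b in n2))
    return math.acos(min(1.0, dot / norms))


# Hypothetical data: points at heights 3 and 4 above the plane z = 0.
stats = distance_stats([(0.0, 0.0, 3.0), (1.0, 2.0, 4.0)], (0.0, 0.0, 1.0), 0.0)
angle = angle_between_planes((0.0, 0.0, 1.0), (0.0, 0.0, -2.0))
```

Applied to the left points against the right plane (and vice versa), the mean or median distance gives an estimate of the separation between the two planes, while the angle quantifies how far they are from being parallel.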

5. Discussion

6. Conclusions

References

1. Gleick, P.H. Water in crisis. Pacific Institute for Studies in Dev., Environment & Security. Stockholm Env. Institute, Oxford Univ. Press. 473p 1993, 9, 1051–0761.

2. Massot-Campos, M.; Oliver-Codina, G. Optical sensors and methods for underwater 3D reconstruction. Sensors 2015, 15, 31525–31557.

3. Hu, K.; Wang, T.; Shen, C.; Weng, C.; Zhou, F.; Xia, M.; Weng, L. Overview of Underwater 3D Reconstruction Technology Based on Optical Images. Journal of Marine Science and Engineering, 11, 949.

4. Snyder, J. Doppler Velocity Log (DVL) navigation for observation-class ROVs. In Proceedings of the OCEANS 2010 MTS/IEEE SEATTLE; IEEE, 2010; pp. 1–9.

5. Lee, C.M.; Lee, P.M.; Hong, S.W.; Kim, S.M.; Seong, W.; et al. Underwater navigation system based on inertial sensor and doppler velocity log using indirect feedback kalman filter. International Journal of Offshore and Polar Engineering 2005, 15.

6. Brown, C.J.; Blondel, P. Developments in the application of multibeam sonar backscatter for seafloor habitat mapping. Applied Acoustics 2009, 70, 1242–1247.

7. Gary, M.; Fairfield, N.; Stone, W.C.; Wettergreen, D.; Kantor, G.; Sharp, J.M., Jr. 3D mapping and characterization of Sistema Zacatón from DEPTHX (DEep Phreatic THermal eXplorer). In Sinkholes and the Engineering and Environmental Impacts of Karst; 2008; pp. 202–212.

8. Kantor, G.; Fairfield, N.; Jonak, D.; Wettergreen, D. Experiments in navigation and mapping with a hovering AUV. In Proceedings of the Field and Service Robotics; Springer, 2008; pp. 115–124.

9. Mallios, A.; Ridao, P.; Ribas, D.; Carreras, M.; Camilli, R. Toward autonomous exploration in confined underwater environments. Journal of Field Robotics 2016, 33, 994–1012.

10. Hartley, R.I.; Sturm, P. Triangulation. Computer Vision and Image Understanding 1997, 68, 146–157.

11. Massot-Campos, M.; Oliver-Codina, G. One-shot underwater 3D reconstruction. In Proceedings of the 2014 IEEE Emerging Technology and Factory Automation (ETFA); IEEE, 2014; pp. 1–4.

12. Meline, A.; Triboulet, J.; Jouvencel, B. Comparative study of two 3D reconstruction methods for underwater archaeology. In Proceedings of the 2012 IEEE/RSJ International Conference on Intelligent Robots and Systems; IEEE, 2012; pp. 740–745.

13. Onmek, Y.; Triboulet, J.; Druon, S.; Meline, A.; Jouvencel, B. Evaluation of underwater 3D reconstruction methods for Archaeological Objects: Case study of Anchor at Mediterranean Sea. In Proceedings of the 2017 3rd International Conference on Control, Automation and Robotics (ICCAR); IEEE, 2017; pp. 394–398.

14. Brandou, V.; Allais, A.G.; Perrier, M.; Malis, E.; Rives, P.; Sarrazin, J.; Sarradin, P.M. 3D reconstruction of natural underwater scenes using the stereovision system IRIS. In Proceedings of the OCEANS 2007-Europe; IEEE, 2007; pp. 1–6.

15. Beall, C.; Lawrence, B.J.; Ila, V.; Dellaert, F. 3D reconstruction of underwater structures. In Proceedings of the 2010 IEEE/RSJ International Conference on Intelligent Robots and Systems; IEEE, 2010; pp. 4418–4423.

16. Zhou, Y.; Li, Q.; Ye, Q.; Yu, D.; Yu, Z.; Liu, Y. A binocular vision-based underwater object size measurement paradigm: Calibration-Detection-Measurement (C-D-M). Measurement, 216, 112997.

17. Weidner, N.; Rahman, S.; Li, A.Q.; Rekleitis, I. Underwater cave mapping using stereo vision. In Proceedings of the 2017 IEEE International Conference on Robotics and Automation (ICRA); IEEE, 2017; pp. 5709–5715.

18. Rahman, S.; Li, A.Q.; Rekleitis, I. Svin2: an underwater slam system using sonar, visual, inertial, and depth sensor. In Proceedings of the 2019 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS); IEEE, 2019; pp. 1861–1868.

19. Wu, X.; Wang, J.; Li, J.; Zhang, X. Retrieval of siltation 3D properties in artificially created water conveyance tunnels using image-based 3D reconstruction. Measurement, 211, 112586.

20. Fan, J.; Wang, X.; Zhou, C.; Ou, Y.; Jing, F.; Hou, Z. Development, Calibration, and Image Processing of Underwater Structured Light Vision System: A Survey. IEEE Transactions on Instrumentation and Measurement, 72, 1–18.

21. Wang, W.; Joshi, B.; Burgdorfer, N.; Batsos, K.; Li, A.Q.; Mordohai, P.; Rekleitis, I. Real-Time Dense 3D Mapping of Underwater Environments. Technical report, arXiv, 2023. arXiv:2304.02704 [cs].

22. Bräuer-Burchardt, C.; Munkelt, C.; Bleier, M.; Heinze, M.; Gebhart, I.; Kühmstedt, P.; Notni, G. Underwater 3D Scanning System for Cultural Heritage Documentation. Remote Sensing, 15, 1864.

23. Yu, B.; Tibbetts, R.; Barua, T.; Morales, A.; Rekleitis, I.; Islam, M.J. Weakly Supervised Caveline Detection For AUV Navigation Inside Underwater Caves.

24. Hu, C.; Zhu, S.; Song, W. Real-time Underwater 3D Reconstruction Based on Monocular Image. In Proceedings of the 2022 IEEE International Conference on Robotics and Biomimetics (ROBIO); 2022; pp. 1239–1244.

25. Marchand, E.; Uchiyama, H.; Spindler, F. Pose estimation for augmented reality: a hands-on survey. IEEE Transactions on Visualization and Computer Graphics 2015, 22, 2633–2651.

26. Massone, Q.; Druon, S.; Triboulet, J. An original 3D reconstruction method using a conical light and a camera in underwater caves. In Proceedings of the 4th International Conference on Control and Computer Vision, 2021; pp. 126–134.

27. Massone, Q.; Druon, S.; Breux, Y.; Triboulet, J. Contour-based approach for 3D mapping of underwater galleries. In Proceedings of the Global Oceans 2020: Singapore–US Gulf Coast; IEEE, 2020; pp. 1–6.

28. Halır, R.; Flusser, J. Numerically stable direct least squares fitting of ellipses. In Proceedings of the 6th International Conference in Central Europe on Computer Graphics and Visualization (WSCG); Citeseer, 1998; Vol. 98, pp. 125–132.

29. Moré, J.J. The Levenberg-Marquardt algorithm: implementation and theory. In Numerical Analysis; Springer, 1978; pp. 105–116.

30. Zhang, Z. A flexible new technique for camera calibration. IEEE Transactions on Pattern Analysis and Machine Intelligence 2000, 22, 1330–1334.

31. Fischler, M.A.; Bolles, R.C. Random sample consensus: a paradigm for model fitting with applications to image analysis and automated cartography. Communications of the ACM 1981, 24, 381–395.

| Estimated values | Measured values | Relative deviation from measurement |
|---|---|---|
| 14.79° | 16.13° | -1.34° (-8%) |
| 0.210 m | 0.212 m | -0.002 m (-0.9%) |

| Dist. (mm) | Max. | Min. | Med. | Mean | RMS |
|---|---|---|---|---|---|
| Section 0 | 4.2 | 0.0 | 1.4 | 1.5 | 2.0 |
| Section 1 | 3.3 | 0.0 | 1.5 | 1.5 | 1.8 |
| Section 2 | 4.1 | 0.0 | 1.4 | 1.6 | 2.0 |
| Section 3 | 3.4 | 0.0 | 1.6 | 1.6 | 1.8 |
| Section 4 | 3.6 | 0.0 | 1.3 | 1.5 | 1.8 |
| All | 4.2 | 0.0 | 1.4 | 1.5 | 1.9 |

| Dist. (mm) | Max. | Min. | Med. | Mean | RMS |
|---|---|---|---|---|---|
| Section 0 | 94.0 | 0.4 | 28.5 | 27.7 | 32.6 |
| Section 1 | 149.6 | 0.0 | 23.6 | 30.9 | 42.8 |
| Section 2 | 226.1 | 0.1 | 18.6 | 33.6 | 51.3 |
| Section 3 | 179.3 | 0.1 | 18.6 | 30.0 | 44.7 |
| Section 4 | 114.3 | 0.1 | 19.8 | 23.0 | 29.1 |
| All | 226.1 | 0.0 | 20.6 | 29.0 | 40.9 |

| Dist. (mm) | Max. | Min. | Med. | Mean | RMS |
|---|---|---|---|---|---|
| Left 3D points to left plane | 220.5 | 0.0 | 11.0 | 13.9 | 19.9 |
| Right 3D points to right plane | 113.9 | 0.0 | 20.4 | 24.1 | 30.5 |
| Left 3D points to right plane | 778.4 | 492.1 | 581.9 | 584.9 | 585.2 |
| Right 3D points to left plane | 691.1 | 476.9 | 585.2 | 583.6 | 584.4 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).