Submitted: 23 April 2024

Posted: 25 April 2024

Abstract

Keywords:

1. Introduction

2. Related Work

2.1. Sequence Deep Learning Based Text Classification Methods

2.2. Graph Neural Network Based Text Classification Methods

3. Method

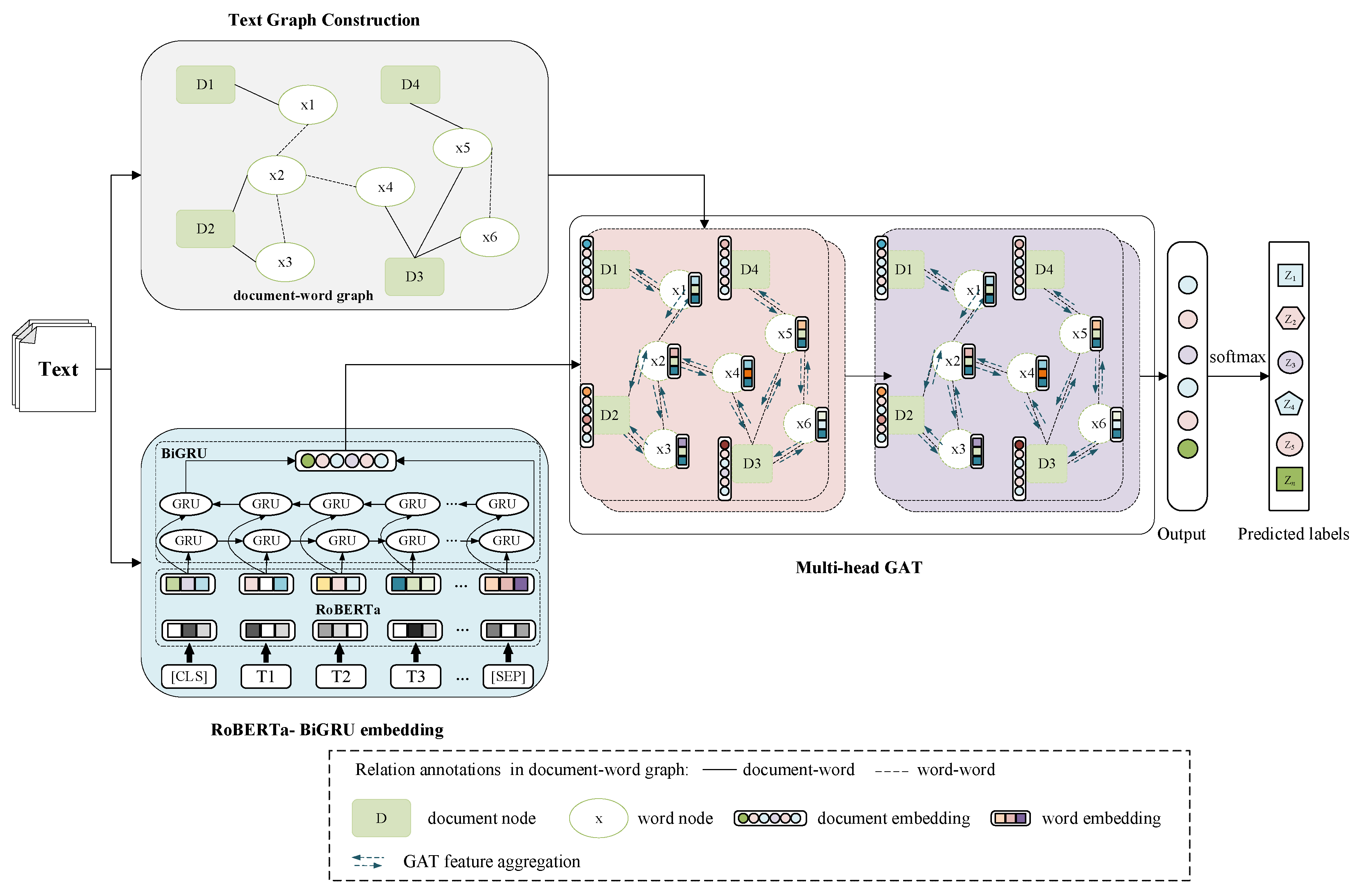

3.1. Model Architecture

3.2. Text Graph Construction

3.3. RoBERTa-BiGRU Embedding

3.4. Multi-Head GAT Model

4. Experiments

4.1. Datasets

4.2. Implementation Details

4.3. Experimental Metrics

4.4. Experimental Results and Analysis

4.4.1. Accuracy of Different Algorithms

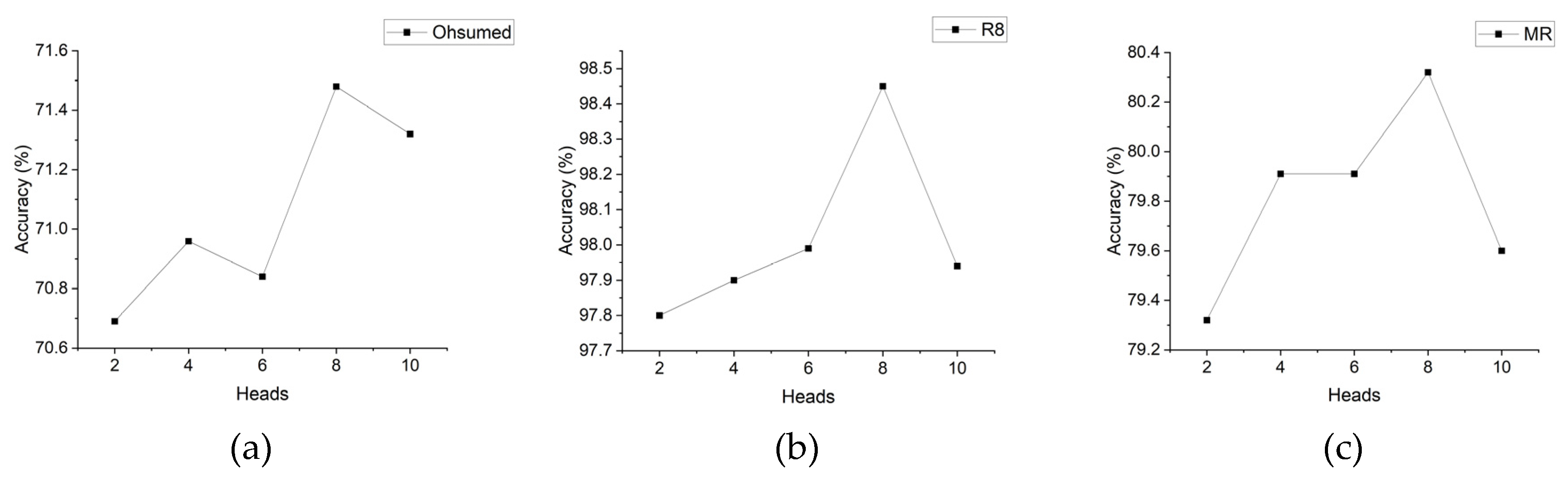

4.4.2. Comparison of the Accuracy of Models with Different Head Sizes

5. Conclusion

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

| Dataset | # Docs | # Training | # Test | # Classes |
|---|---|---|---|---|
| Ohsumed | 7,400 | 3,357 | 4,043 | 23 |
| R8 | 7,674 | 5,485 | 2,189 | 8 |
| MR | 10,662 | 7,108 | 3,554 | 2 |
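
The split sizes in the dataset table can be sanity-checked with a few lines of Python. The sketch below is illustrative only: the `DATASETS` dictionary and the `train_fraction` helper are hypothetical names introduced here, and the numbers are copied from the table rather than loaded from the actual corpora.

```python
# Figures copied from the dataset table: (docs, train, test, classes).
# DATASETS and train_fraction are illustrative names, not part of the paper.
DATASETS = {
    "Ohsumed": (7400, 3357, 4043, 23),
    "R8": (7674, 5485, 2189, 8),
    "MR": (10662, 7108, 3554, 2),
}


def train_fraction(name: str) -> float:
    """Return the share of documents assigned to the training set."""
    docs, train, test, _classes = DATASETS[name]
    # The training and test sets should partition the corpus exactly.
    if train + test != docs:
        raise ValueError(f"{name}: {train} + {test} != {docs}")
    return train / docs


if __name__ == "__main__":
    for name in DATASETS:
        print(f"{name}: {train_fraction(name):.1%} of documents used for training")
```

The check passes for all three rows. It also shows that Ohsumed is the only corpus with fewer training than test documents.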

| Model | Ohsumed | R8 | MR |
|---|---|---|---|
| FastText | 57.70 | 96.13 | 75.14 |
| PV-DBOW | 46.65 | 85.87 | 61.09 |
| CNN | 58.44 | 95.71 | 77.75 |
| BiLSTM | 49.27 | 96.31 | 77.68 |
| TextGCN | 68.36 | 97.07 | 76.74 |
| SGC | 68.53 | 97.23 | 75.91 |
| Graph-CNN | 63.86 | 96.99 | 77.22 |
| TextING | 70.42 | 98.04 | 79.82 |
| TensorGCN | 70.11 | 98.04 | 77.91 |
| RB-GAT | 71.48 | 98.45 | 80.32 |

| Model | Ohsumed | R8 | MR |
|---|---|---|---|
| FastText | 54.88 | 90.64 | 76.22 |
| PV-DBOW | 43.07 | 81.31 | 57.81 |
| CNN | 53.16 | 88.76 | 75.60 |
| BiLSTM | 48.66 | 88.55 | 75.26 |
| TextGCN | 61.45 | 92.88 | 75.58 |
| SGC | 65.34 | 93.50 | 71.90 |
| Graph-CNN | 59.49 | 92.90 | 74.04 |
| TextING | 66.51 | 93.85 | 75.41 |
| TensorGCN | 66.78 | 93.99 | 73.52 |
| RB-GAT | 67.90 | 94.84 | 76.17 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).