Submitted:

18 March 2024

Posted:

20 March 2024

Abstract

Keywords:

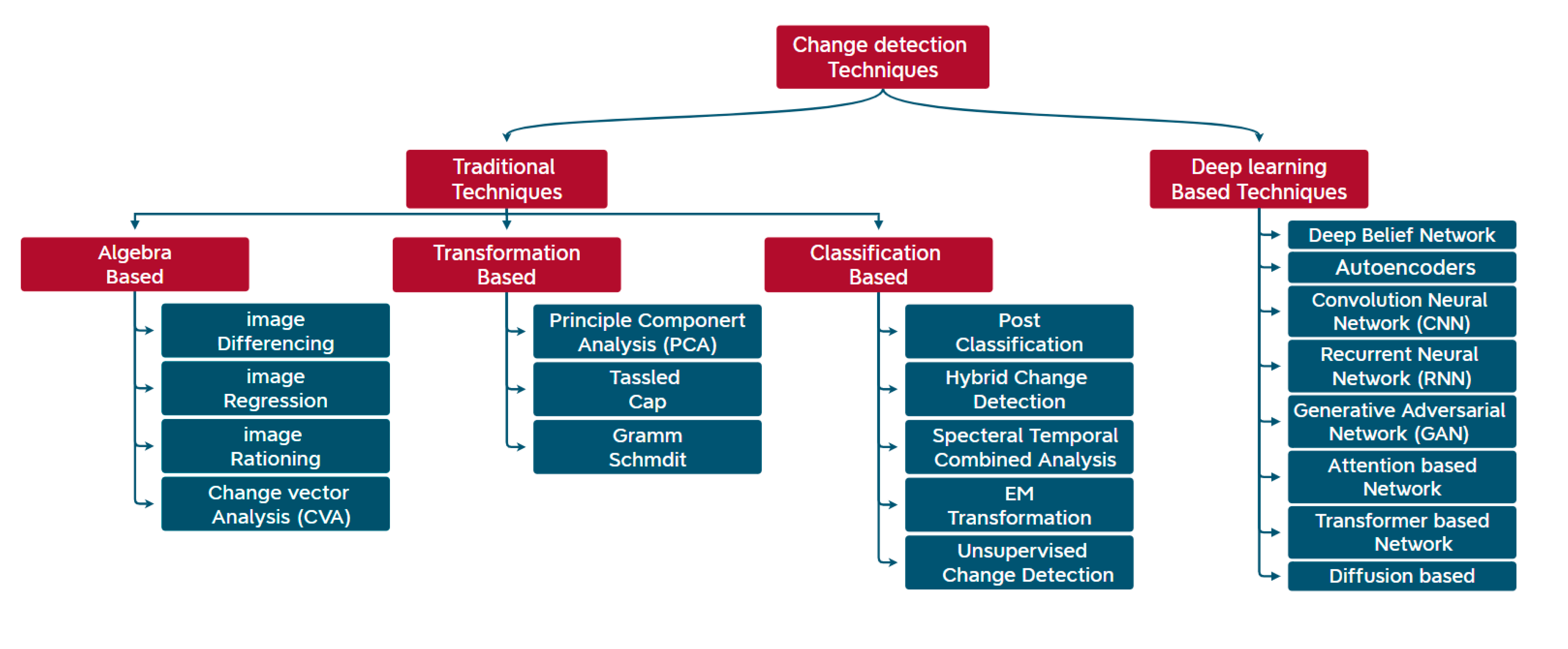

1. Introduction

2. Background and Literature Review

2.1. Deep Learning for Change Detection

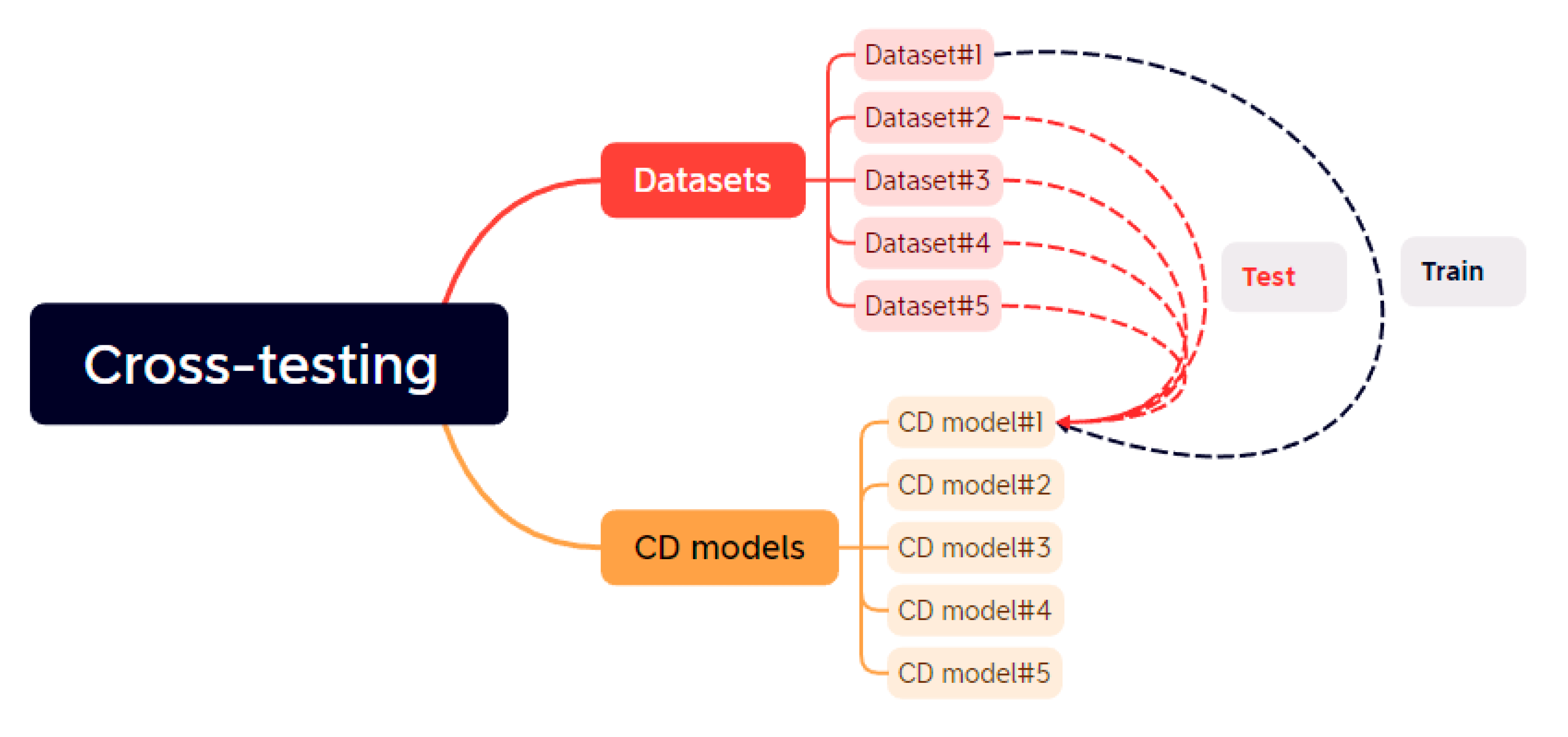

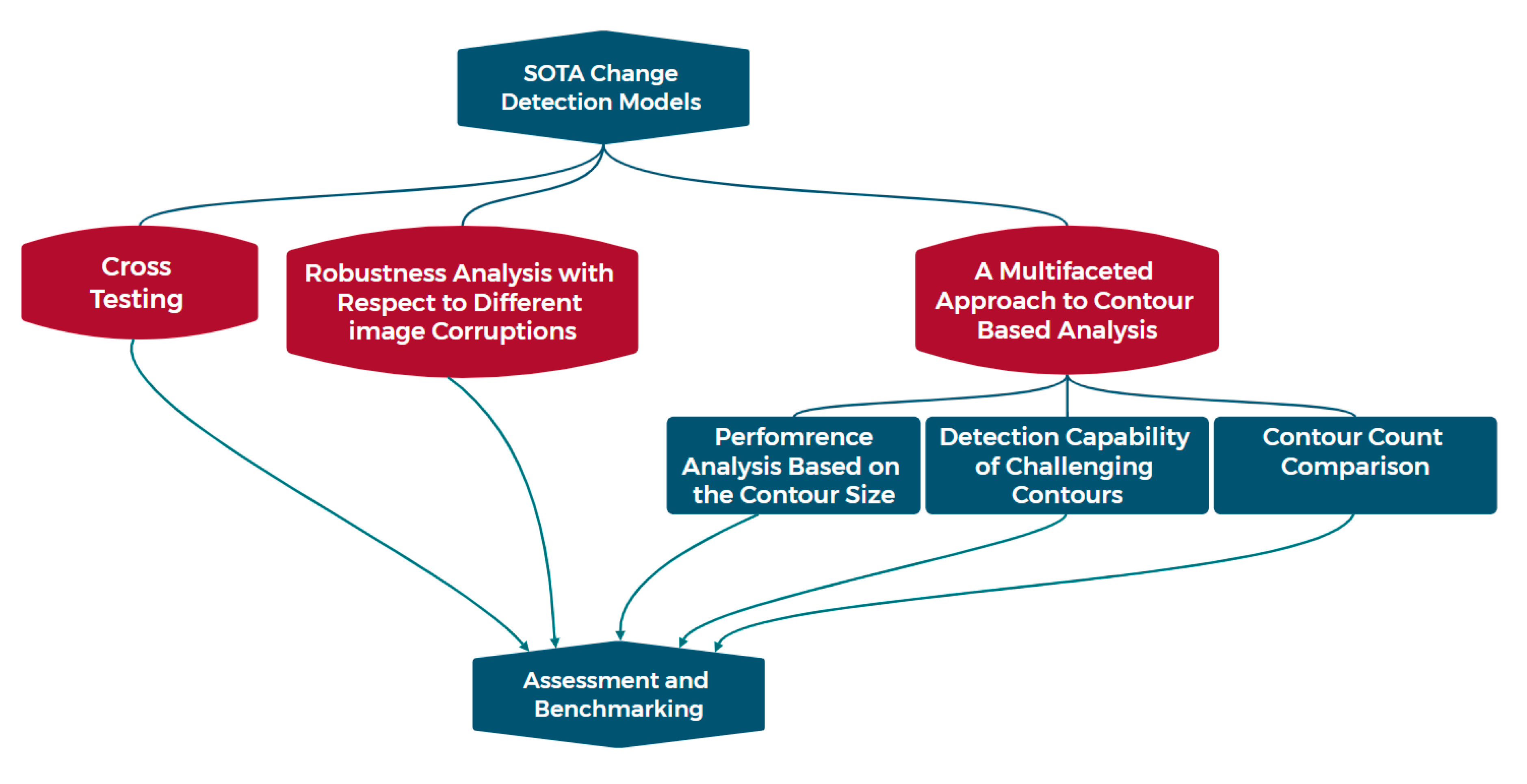

3. Cross-Testing and Robustness Analysis of Discrete-Point Models Framework

3.1. The proposed Framework’s Significance

3.2. Cross-Testing Pipeline

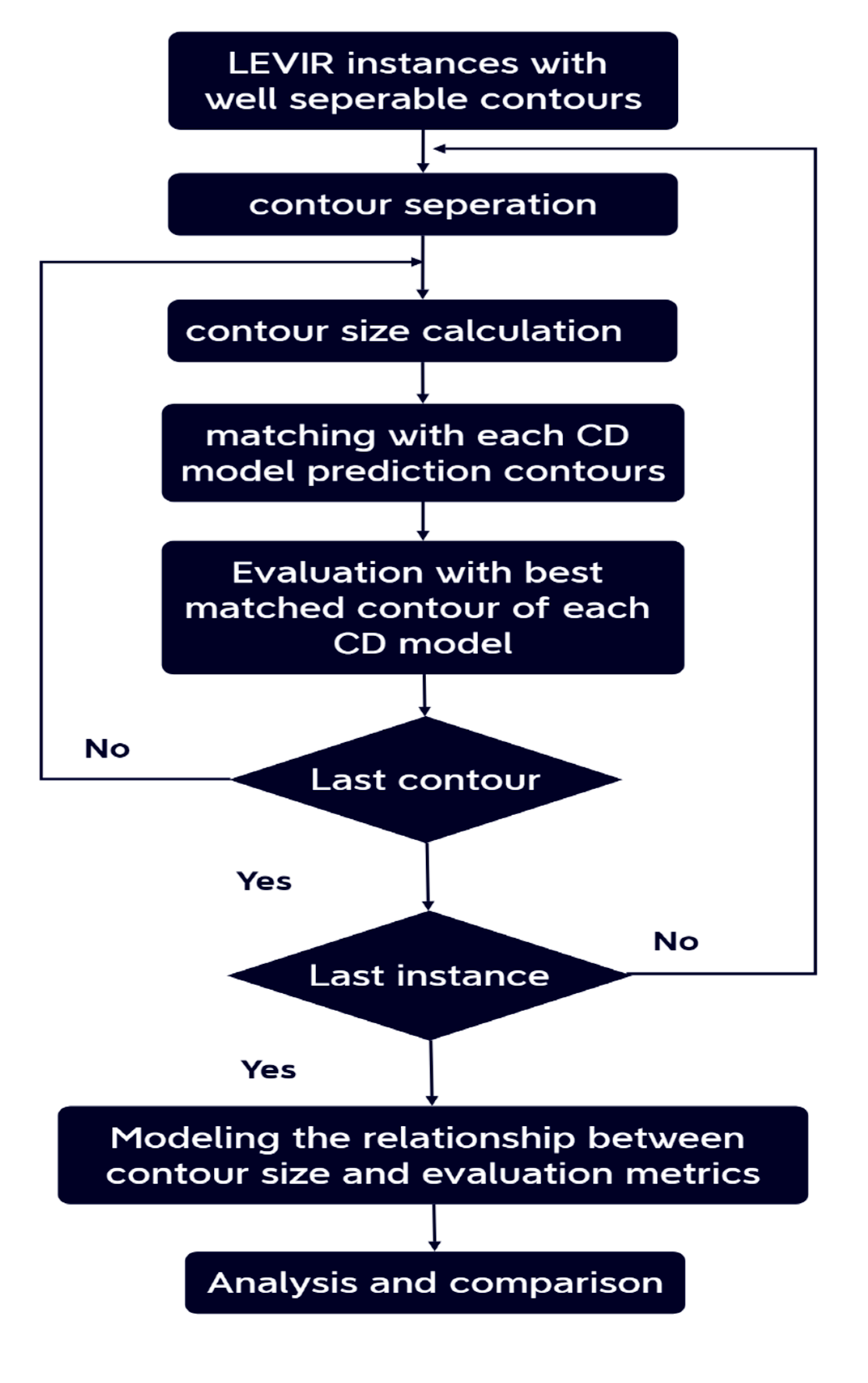

3.3. A Multifaceted Approach to Contour-Based Analysis

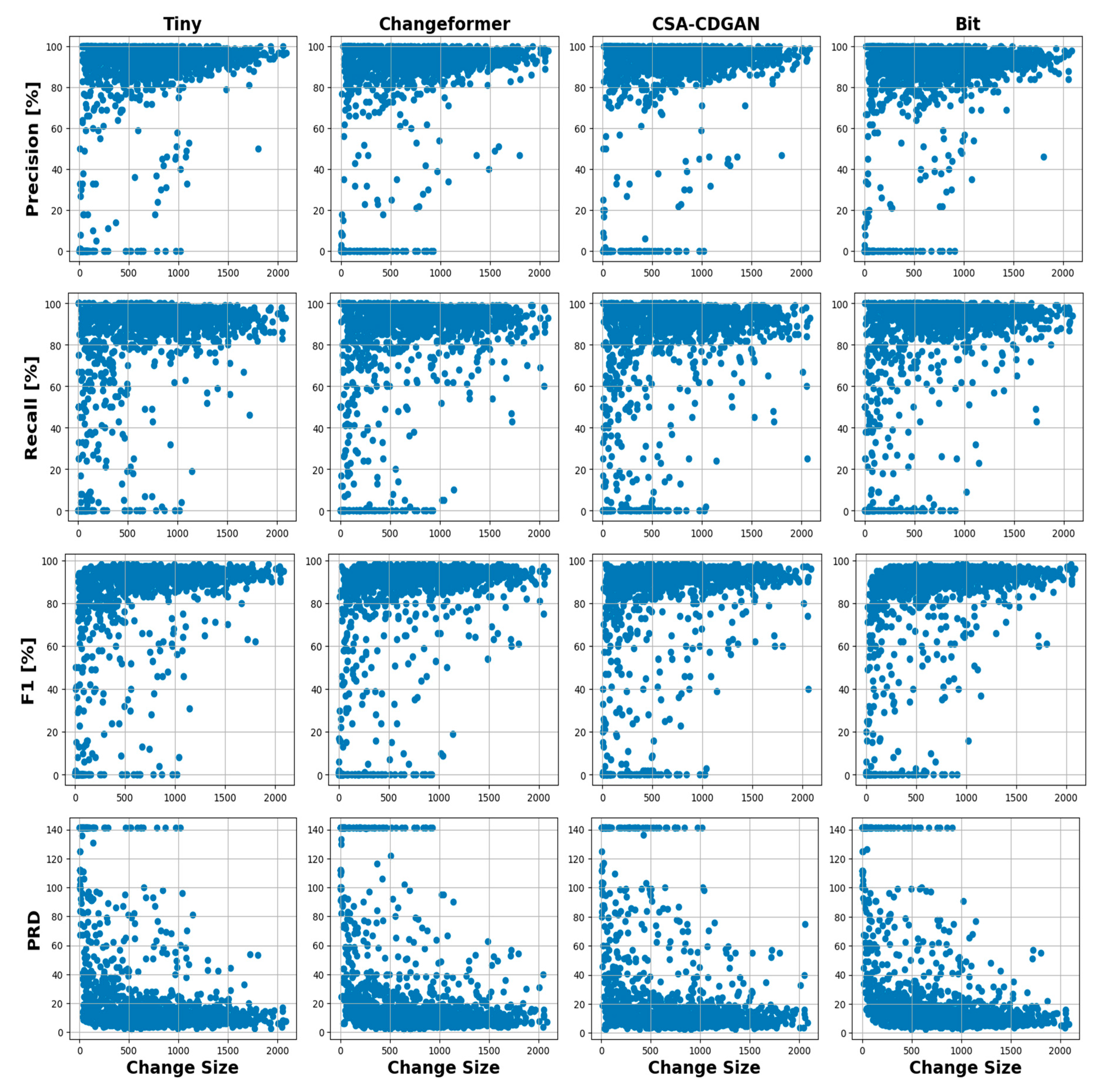

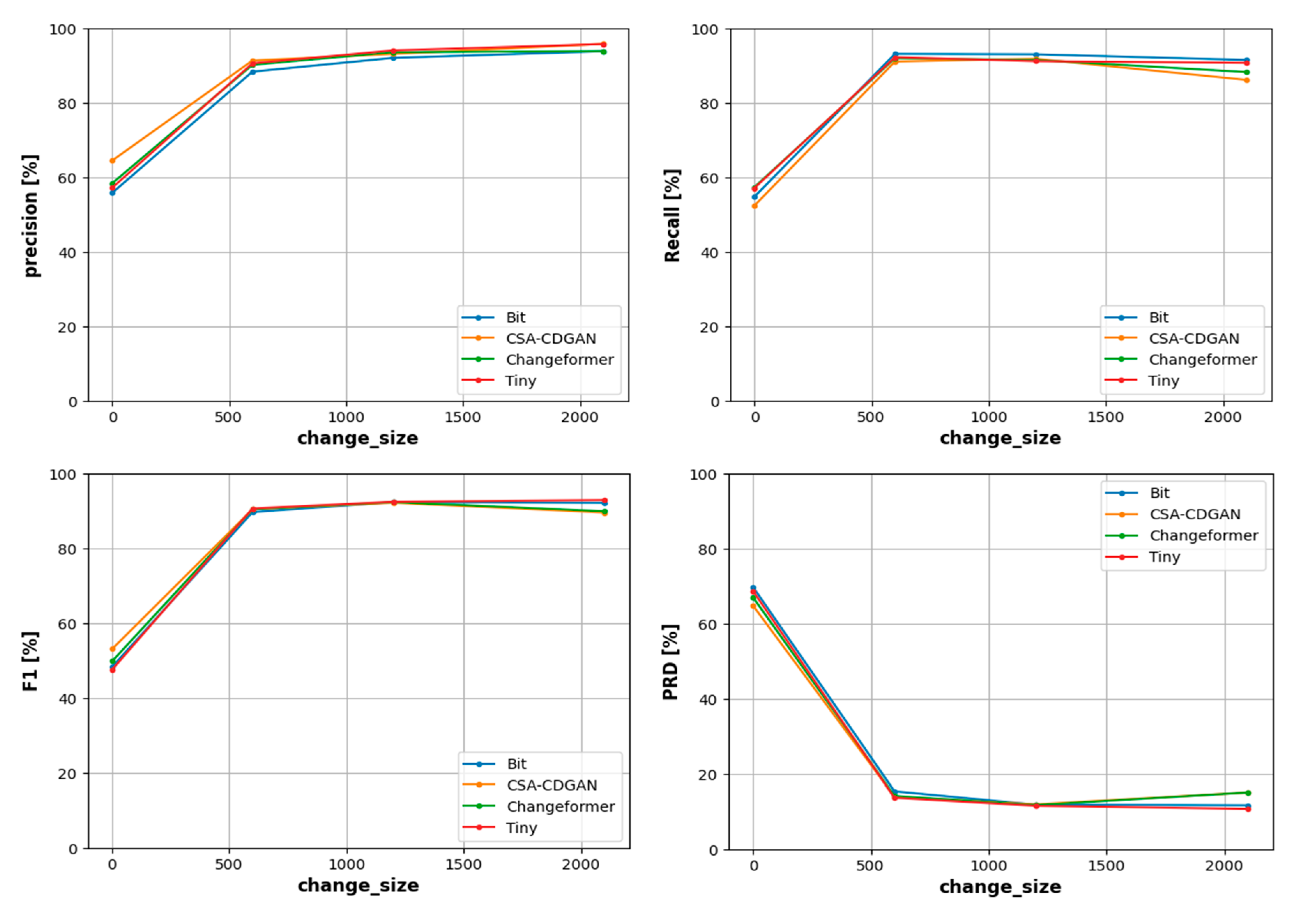

- Performance sensitivity analysis based on the change size

- Detection capability of challenging contours

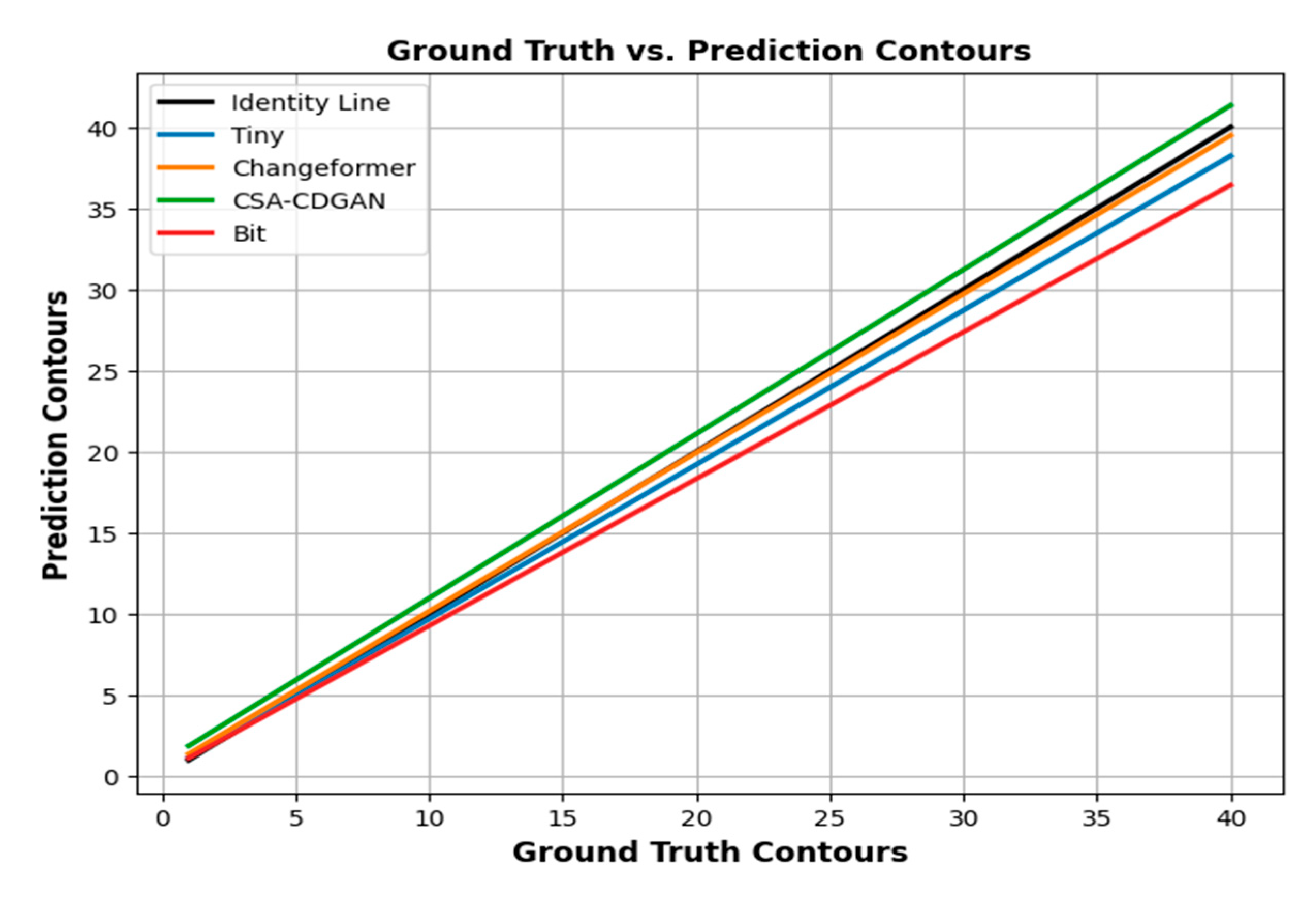

- Contour Count Comparison

- When a model concatenates contours, it treats closely connected or neighboring building outlines as a single contour. This behavior can be indicative of the model’s tendency to merge adjacent changes that share similar properties or are part of the same structural unit.

- Contour count comparison may show that the model generates a smaller number of contours compared to ground truth when changes are closely related. This suggests that the model has a propensity to merge contours that are spatially connected.

- Conversely, when a model divides contours, it identifies subtle differences within closely situated building contours, which can lead to multiple smaller contours where the ground truth may have a single larger contour.

- In this case, contour count comparison may reveal that the model produces more contours than the ground truth for changes that are in close proximity or have intricate shapes. This indicates that the model dissects building outlines into multiple smaller components.
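The merge and split behaviours described above can be illustrated with a minimal sketch. It assumes binary change masks given as lists of 0/1 rows and uses 8-connected foreground regions as a stand-in for contours. The helper names `count_regions` and `compare_counts` are hypothetical and are not taken from the paper.

```python
from collections import deque


def count_regions(mask):
    """Count 8-connected foreground regions in a binary mask (rows of 0/1)."""
    rows, cols = len(mask), len(mask[0])
    seen = [[False] * cols for _ in range(rows)]
    count = 0
    for r in range(rows):
        for c in range(cols):
            if not mask[r][c] or seen[r][c]:
                continue
            # New region found: flood-fill it so it is counted only once.
            count += 1
            seen[r][c] = True
            queue = deque([(r, c)])
            while queue:
                y, x = queue.popleft()
                for dy in (-1, 0, 1):
                    for dx in (-1, 0, 1):
                        ny, nx = y + dy, x + dx
                        if (0 <= ny < rows and 0 <= nx < cols
                                and mask[ny][nx] and not seen[ny][nx]):
                            seen[ny][nx] = True
                            queue.append((ny, nx))
    return count


def compare_counts(pred, truth):
    """Return (predicted count, ground-truth count, tendency label)."""
    p, t = count_regions(pred), count_regions(truth)
    if p < t:
        tendency = "merges"   # fewer regions than ground truth
    elif p > t:
        tendency = "splits"   # more regions than ground truth
    else:
        tendency = "matches"
    return p, t, tendency


# Toy ground truth: two buildings separated by a one-pixel gap.
truth = [[1, 1, 0, 1, 1],
         [1, 1, 0, 1, 1],
         [1, 1, 0, 1, 1]]
# Prediction that bridges the gap (concatenation).
merged = [[1, 1, 0, 1, 1],
          [1, 1, 1, 1, 1],
          [1, 1, 0, 1, 1]]
# Prediction that breaks the right-hand building in two (division).
split = [[1, 1, 0, 1, 1],
         [1, 1, 0, 0, 0],
         [1, 1, 0, 1, 1]]

print(compare_counts(merged, truth))
print(compare_counts(split, truth))
```

A count alone does not say which contours were merged or divided, so in practice it would likely be read alongside the per-contour overlap.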

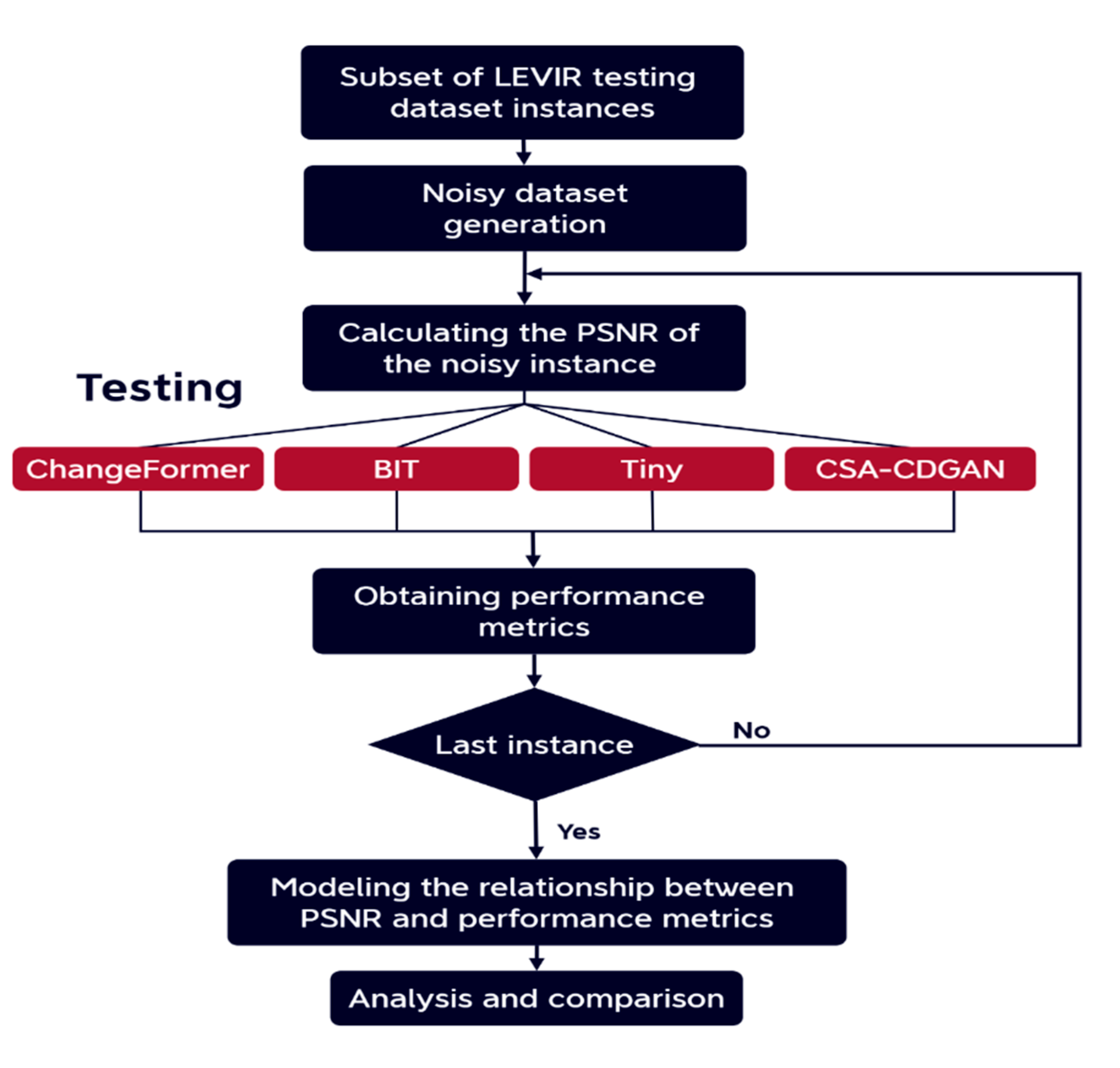

- Robustness Analysis with Respect to Different Image Corruptions

4. Materials and Methods

4.1. Data Collection: Datasets

- LEVIR-CD [43]: a large-scale dataset widely used in the CD field. It consists of 637 sets of Google Earth images with a resolution of 0.5 m/pixel and a size of 1024 × 1024 pixels. Each set contains the images before and after the building changes and a corresponding label. The original images were divided into non-overlapping 256 × 256 patches, giving 7120 training images, 1024 validation images, and 2048 test images.

- WHU-CD [44]: a public building CD dataset consisting of one pair of aerial images of size 32507 × 15354 pixels with a resolution of 0.075 m/pixel. The images were cropped into non-overlapping 256 × 256 patches, giving 6096 training images, 762 validation images, and 762 test images.

- S2Looking [45]: a building change detection dataset of large-scale side-looking satellite images captured at varying off-nadir angles. It consists of 5,000 bi-temporal image pairs of rural areas around the world, each 1024 × 1024 pixels with a resolution of 0.5 to 0.8 m/pixel, and more than 65,920 annotated change instances.

- CDD [46]: a widely used change detection dataset containing 11 pairs of remote sensing images obtained from Google Earth in different seasons, with a spatial resolution ranging from 3 to 100 cm per pixel. Cropping the original image pairs into 256 × 256 patches generates 10,000 image pairs for training, 3,000 for validation, and 3,000 for testing.

- Cropland Change Detection (CLCD) Dataset [47]: consists of 600 pairs of 512 × 512 bi-temporal images collected by Gaofen-2 in Guangdong Province, China, with a spatial resolution of 0.5 to 2 m. Each sample is composed of two 512 × 512 images and a corresponding binary label of cropland change. The images were cropped into non-overlapping 256 × 256 patches.
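The patch counts quoted above can be checked with a little arithmetic. The sketch below is an illustration only: `tile_count` is a hypothetical helper, and the `pad` option (padding edge patches instead of discarding them) is an assumption, since the text does not say how image borders were handled.

```python
def tile_count(height, width, tile=256, pad=False):
    """Number of non-overlapping tile x tile patches in a height x width image.

    pad=False drops incomplete edge patches (floor division);
    pad=True keeps them, as if the image were padded (ceiling division).
    """
    if pad:
        return -(-height // tile) * -(-width // tile)
    return (height // tile) * (width // tile)


# LEVIR-CD: 637 images of 1024 x 1024 give 16 patches each.
levir_patches = 637 * tile_count(1024, 1024)
print(levir_patches, 7120 + 1024 + 2048)

# WHU-CD: one 32507 x 15354 image pair, with and without edge padding.
whu_cropped = tile_count(15354, 32507)
whu_padded = tile_count(15354, 32507, pad=True)
print(whu_cropped, whu_padded, 6096 + 762 + 762)
```

For LEVIR-CD the product equals the sum of the reported splits (10192). For WHU-CD the reported splits sum to 7620, which matches the padded count rather than the cropped one, so edge patches appear to have been kept.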

4.2. State-of-the-Art (SOTA) CD Models

- BIT [48]: a transformer-based feature fusion method that combines a CNN with a transformer encoder-decoder structure, which allows it to capture meaningful global contextual relationships over time and space.

- SNUNet [49]: a multilevel feature concatenation method that combines NestedUNet with a Siamese network. It also uses an Ensemble Channel Attention Module for deep supervision.

- Changeformer [50]: a transformer-based change detection method that uses a hierarchically structured transformer encoder and a multilayer perceptron (MLP) decoder in a Siamese network architecture to efficiently render the multiscale long-range details required for accurate CD.

- Tiny [51]: a lightweight and effective CD model that uses a Siamese U-Net to exploit low-level features in a globally temporal and locally spatial way. It adopts a space-semantic attention mechanism called the Mix and Attention Mask Block (MAMB).

- CSA-CDGAN [52]: a CD network that uses a Generative Adversarial Network to detect changes and a channel self-attention module to improve the network’s performance.

4.3. Cross-Testing Pipeline

4.4. A Multifaceted Approach to Contour-Based Analysis

4.4.1. Performance Sensitivity Analysis Based on the Changed Contour Size

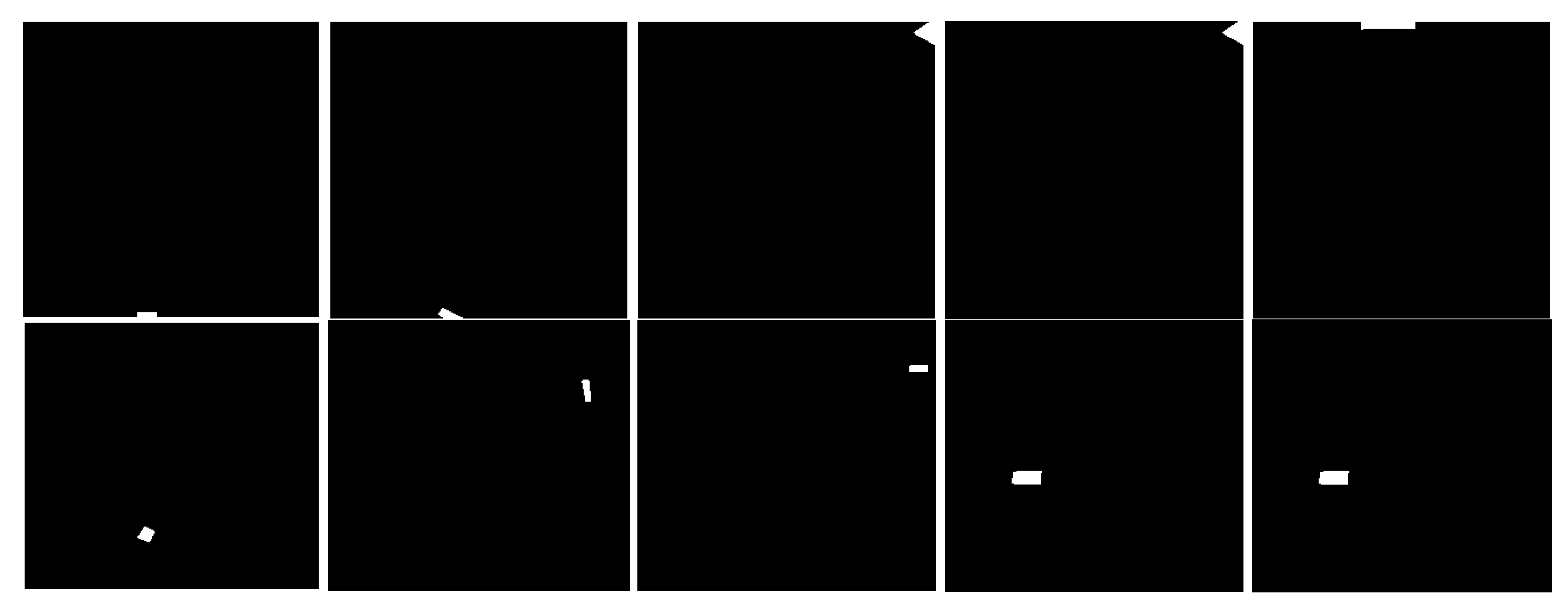

4.4.2. Detection Capability of the Challenging Contours

4.4.3. Contour Count Comparison

4.5. Robustness Analysis with Respect to Different Image Corruptions

- Gaussian Noise: Gaussian Noise is a type of statistical noise characterized by a probability density function following the Gaussian distribution with a mean (μ) of 0 and a standard deviation (σ) that controls the amount of noise. It can arise due to various factors, including limitations in sensors, electrical interference, or atmospheric conditions.

- Salt and Pepper Noise: Salt and Pepper Noise, also known as Impulse Noise, arises from abrupt and sharp disruptions in the image signal. It appears in the image as random occurrences of white and black pixels. This type of noise is commonly attributed to malfunctioning image sensors.

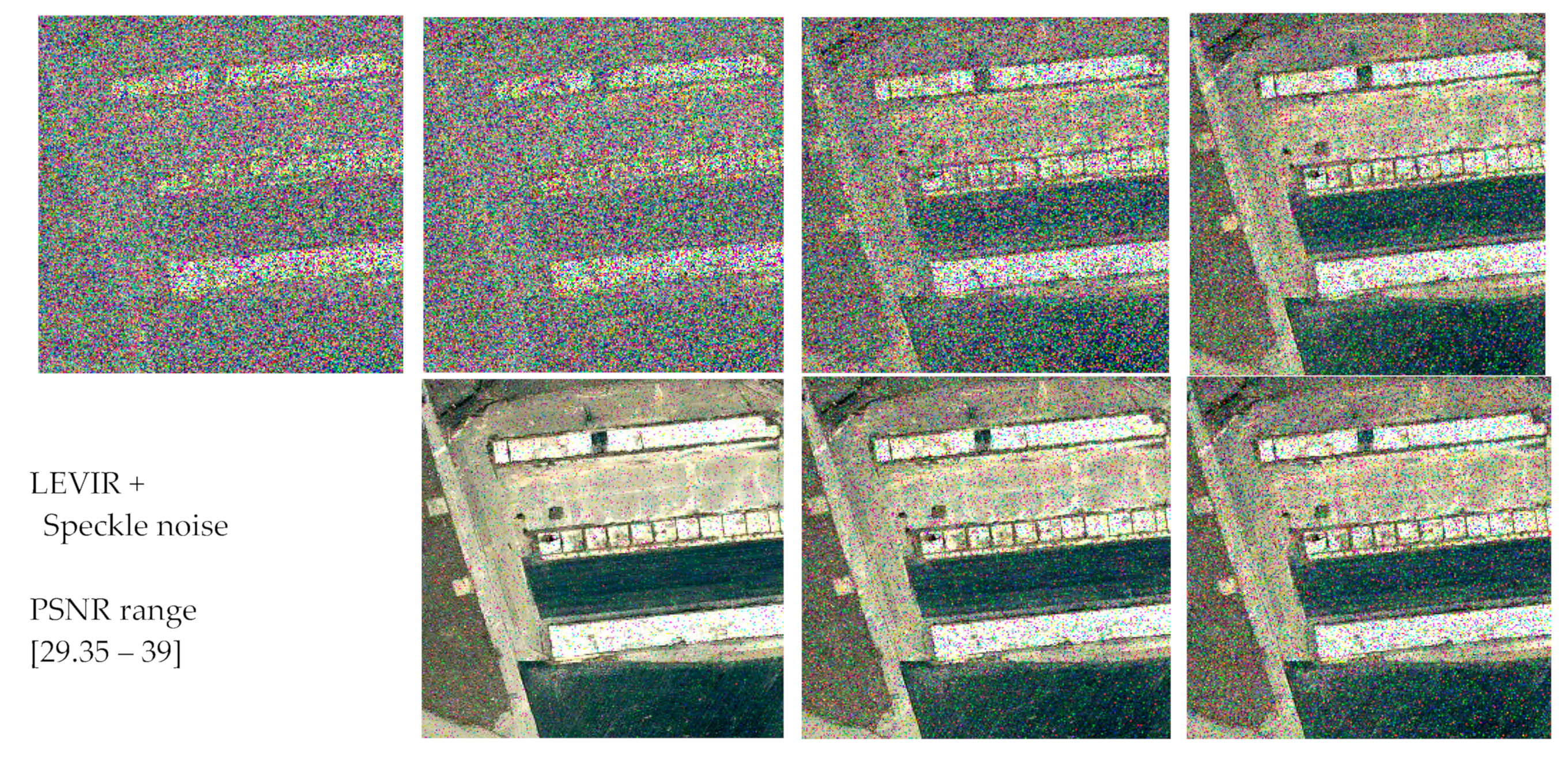

- Speckle Noise: Speckle noise, also known as multiplicative noise, is a prevalent artifact that can impair the interpretation of remote-sensing images. Its presence can substantially compromise the quality and precision of the images, making the identification and analysis of crucial features and structures challenging.

- Peak Signal-to-Noise Ratio (PSNR): PSNR is a widely used metric for evaluating the quality of a corrupted or distorted image relative to the original image. It quantifies the ratio between the maximum possible power of a signal and the power of the noise or distortion present in that signal. Given an input image I and its corresponding noisy image H, the PSNR between the two images is

  $$\mathrm{PSNR} = 10 \log_{10}\left(\frac{\mathrm{MAX}^2}{\mathrm{MSE}}\right)$$

  where MAX is the maximum possible pixel value in the image (e.g., 255 for an 8-bit grayscale image) and MSE is the mean squared error between the original image I and the noisy image H, calculated as the average of the squared differences between the pixel values of the two images. Higher PSNR values indicate lower levels of distortion or noise, while lower PSNR values indicate higher levels.
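The three corruptions and the PSNR measure can be sketched in a few lines of plain Python. This is a hedged illustration, not the paper's implementation. It assumes 8-bit grayscale images stored as lists of rows. The parameters `sigma` and `amount` are hypothetical names, and the speckle model (pixel multiplied by one plus zero-mean Gaussian noise) is one common formulation chosen here for simplicity.

```python
import math
import random


def clip(value):
    """Round to the nearest integer and clamp to the 8-bit range [0, 255]."""
    return max(0, min(255, int(round(value))))


def add_gaussian(img, sigma, rng):
    """Additive zero-mean Gaussian noise with standard deviation sigma."""
    return [[clip(p + rng.gauss(0.0, sigma)) for p in row] for row in img]


def add_salt_pepper(img, amount, rng):
    """Set a fraction `amount` of pixels to black (0) or white (255)."""
    out = []
    for row in img:
        new_row = []
        for p in row:
            r = rng.random()
            if r < amount / 2:
                new_row.append(0)      # pepper
            elif r < amount:
                new_row.append(255)    # salt
            else:
                new_row.append(p)
        out.append(new_row)
    return out


def add_speckle(img, sigma, rng):
    """Multiplicative noise: each pixel is scaled by (1 + Gaussian noise)."""
    return [[clip(p * (1.0 + rng.gauss(0.0, sigma))) for p in row] for row in img]


def psnr(original, noisy, max_value=255.0):
    """PSNR in dB: 10 * log10(MAX^2 / MSE); infinite for identical images."""
    n = sum(len(row) for row in original)
    mse = sum((a - b) ** 2
              for row_o, row_n in zip(original, noisy)
              for a, b in zip(row_o, row_n)) / n
    if mse == 0:
        return math.inf
    return 10.0 * math.log10(max_value ** 2 / mse)


rng = random.Random(0)
image = [[128] * 32 for _ in range(32)]   # flat mid-gray test image
mild = add_gaussian(image, 5.0, rng)
heavy = add_gaussian(image, 50.0, rng)
print(psnr(image, mild), psnr(image, heavy))
```

Sweeping the noise parameter and recording the PSNR of each corrupted image is one way to place the test images on a PSNR scale.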

5. Results and Discussion

5.1. Performance Metrics

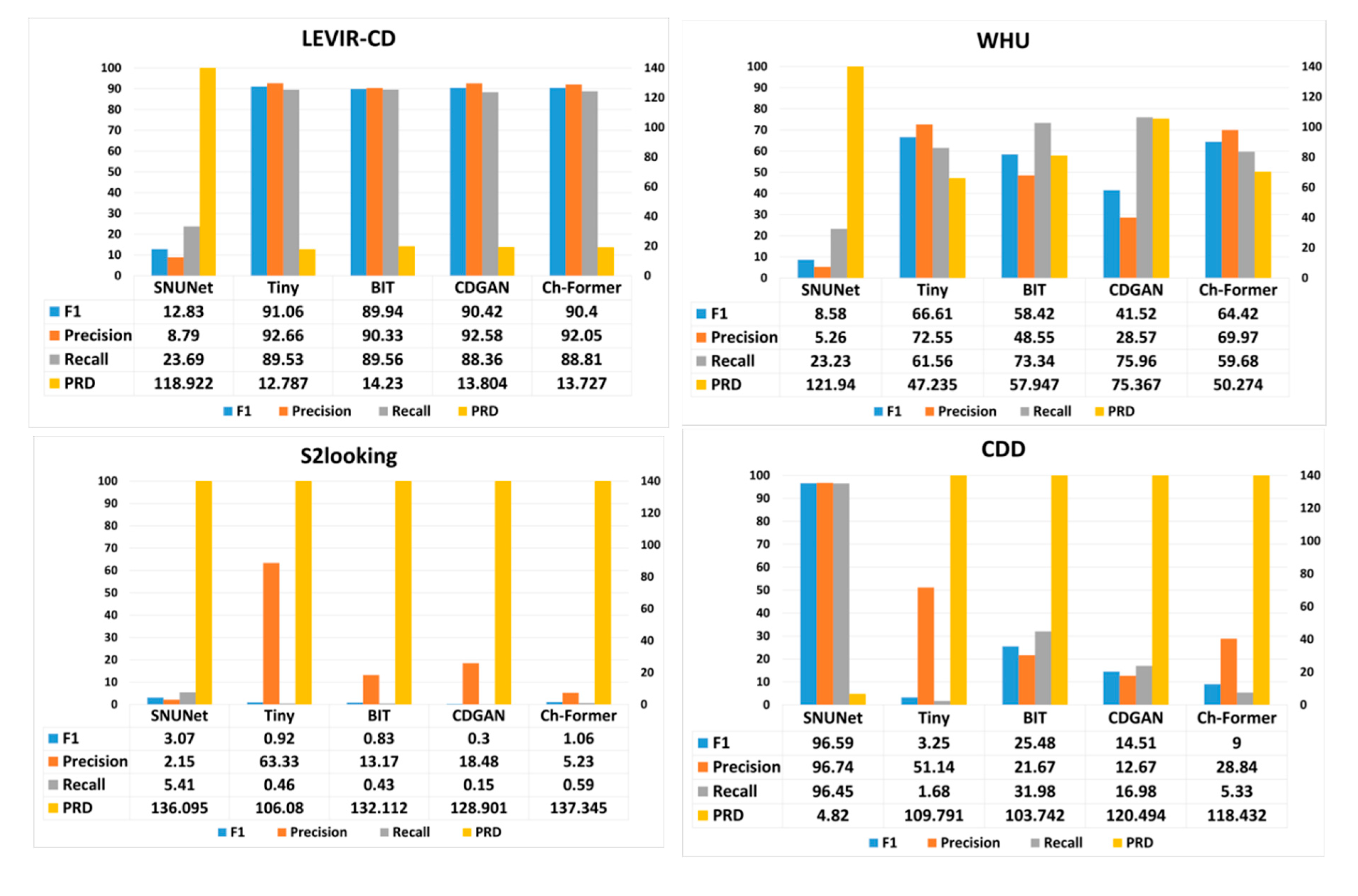

5.2. Cross-Testing Results

- LEVIR-CD:

- Tiny model performs exceptionally well with the highest F1 score and Precision, indicating its effectiveness in identifying true changes with few false positives on this dataset.

- BIT and Ch-Former display competitive performance with relatively high Recall scores, suggesting they are good at identifying most actual changes, but possibly at the cost of more false positives, as indicated by lower Precision compared to Tiny.

- SNUNet and CDGAN lag behind the others, with CDGAN showing the lowest F1 score and Precision.

- PRD is lowest for Ch-Former, indicating a closer proximity to ideal Precision and Recall values.

- WHU:

- Here, the models generally show lower performance compared to LEVIR-CD.

- Tiny again leads in F1 and Precision, but its Recall is surpassed by BIT, indicating BIT is better at capturing more true positives in this dataset.

- SNUNet struggles significantly across all metrics, highlighting its inability to generalize to this dataset effectively.

- CDGAN exhibits the lowest Precision, indicating a high rate of false positives.

- S2Looking:

- This dataset poses a challenge to all models with drastically lower performance metrics across the board.

- Tiny manages the highest F1 score and Precision, albeit these scores are very low, showing that it still performs best among the models but is not particularly effective for this dataset.

- The Recall metric is extremely low for all models, especially for CDGAN, suggesting that all models fail to detect the majority of true changes in this challenging dataset.

- PRD values are significantly higher for all models, with Ch-Former having the highest, indicating a substantial deviation from ideal performance.

- CDD:

- SNUNet excels remarkably in this context, showing near-perfect Precision and Recall, which implies it can detect almost all true changes with very few false positives.

- The other models exhibit drastically lower F1 scores and Precision, with Tiny and BIT also having low Recall.

- The PRD scores for these models are notably higher than SNUNet’s, highlighting their poorer performance on the CDD dataset.

-

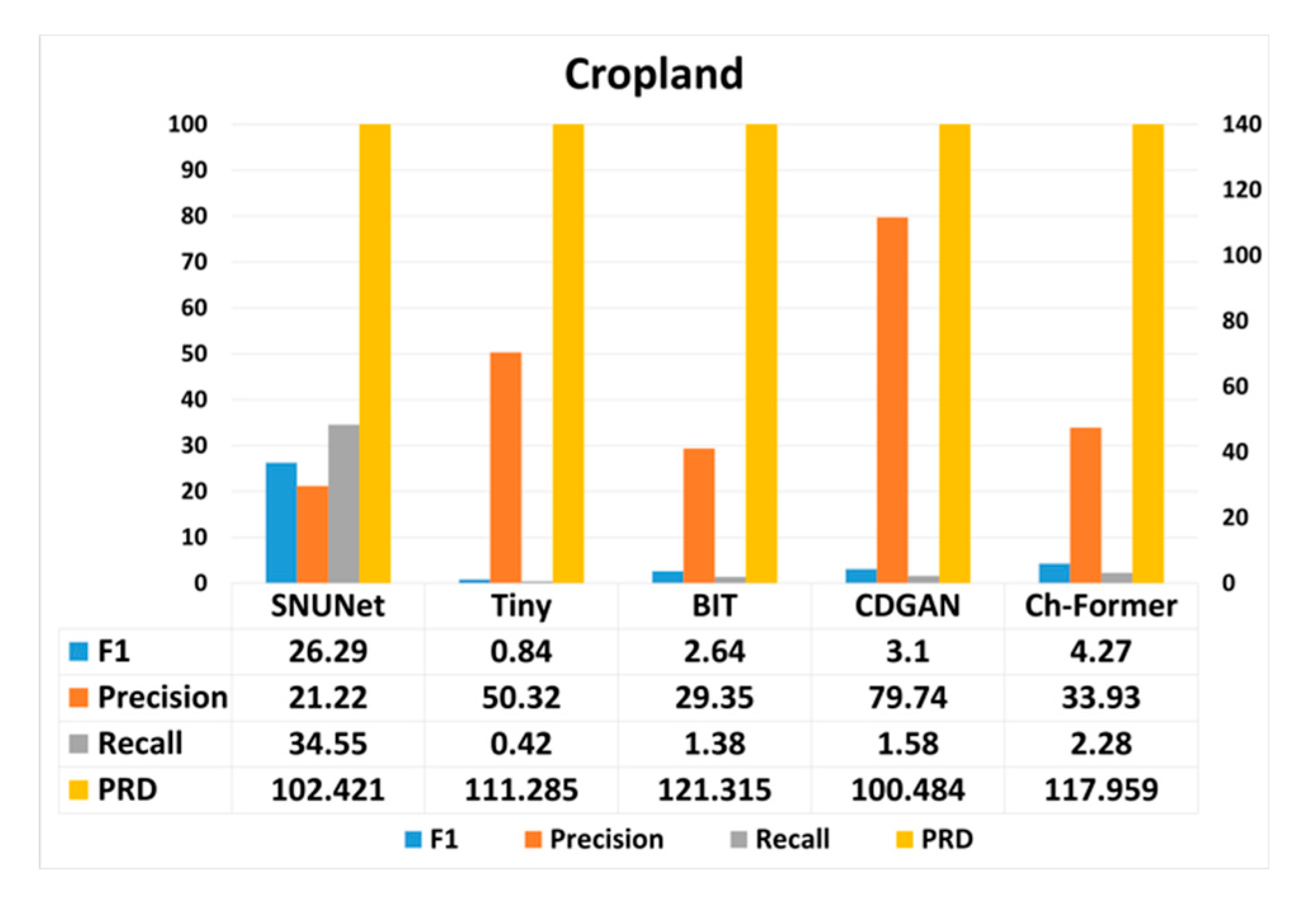

Cropland:

- All models demonstrate a decrease in performance compared to the CDD dataset, with no single model standing out significantly.

- SNUNet shows the highest F1 score and Recall but has lower Precision than CDGAN and Ch-Former.

- Tiny and BIT have very low F1 scores and Precision, indicating a struggle to correctly identify changes in this dataset.

- PRD values are high for all models, especially for Ch-Former, signaling a larger gap from optimal performance.

5.2. Contour-Based Analysis Results

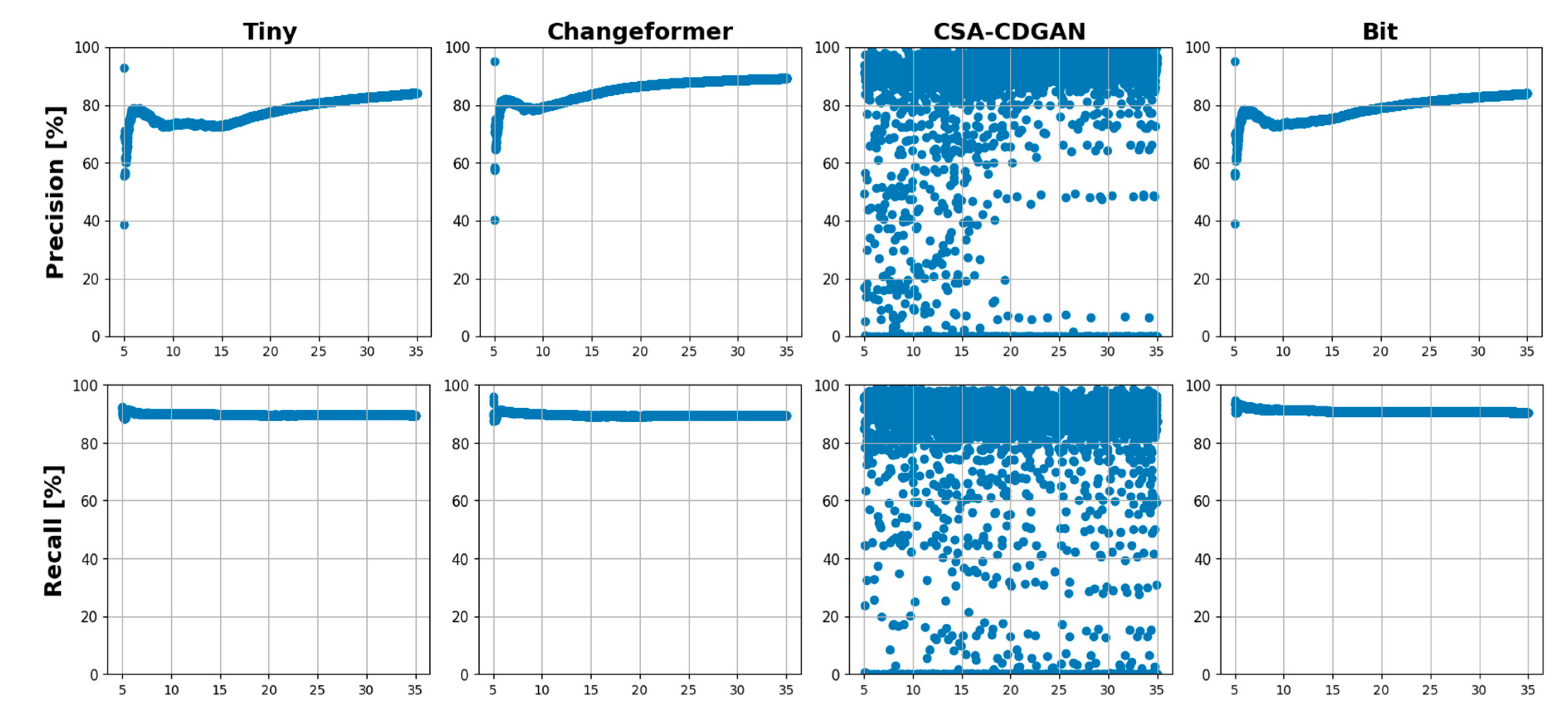

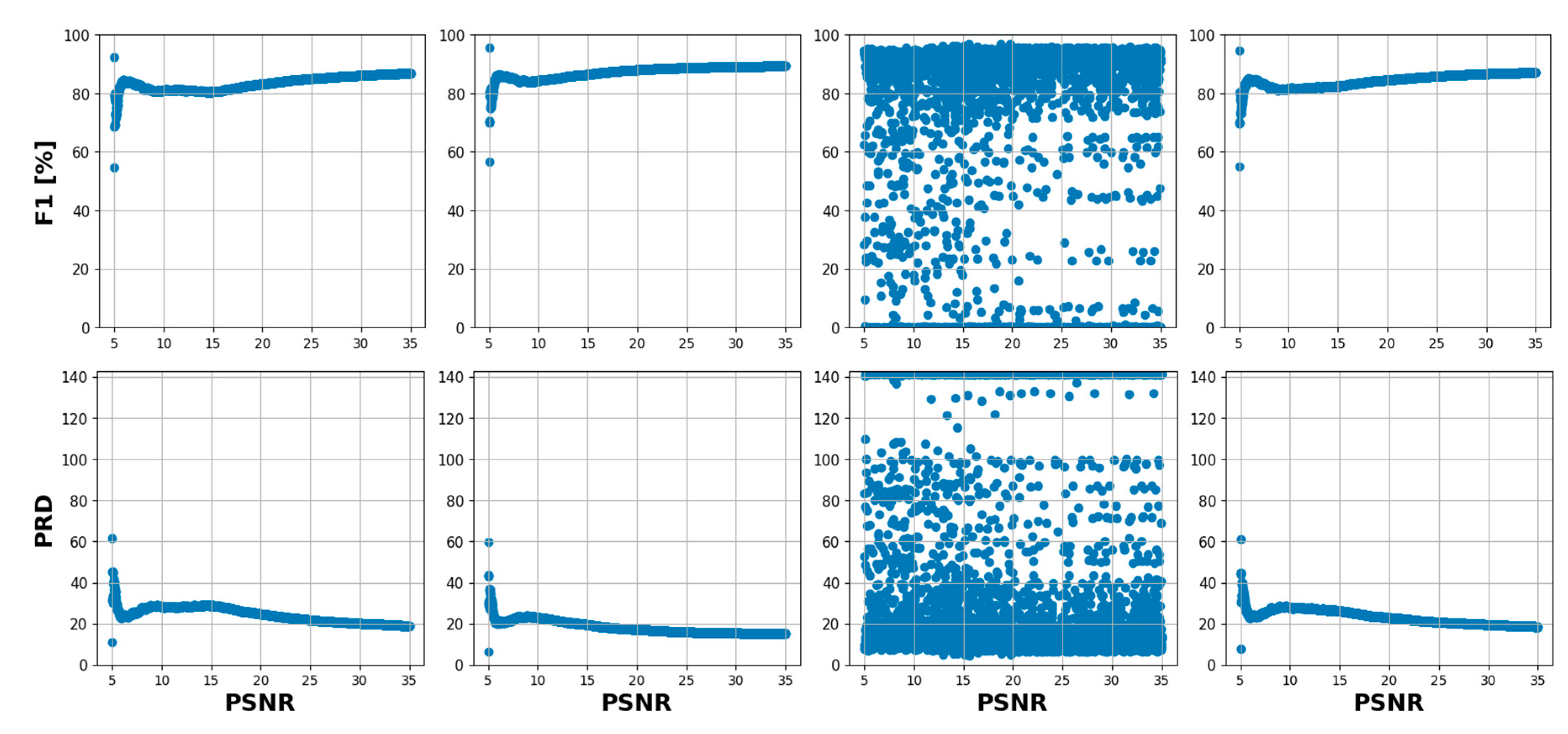

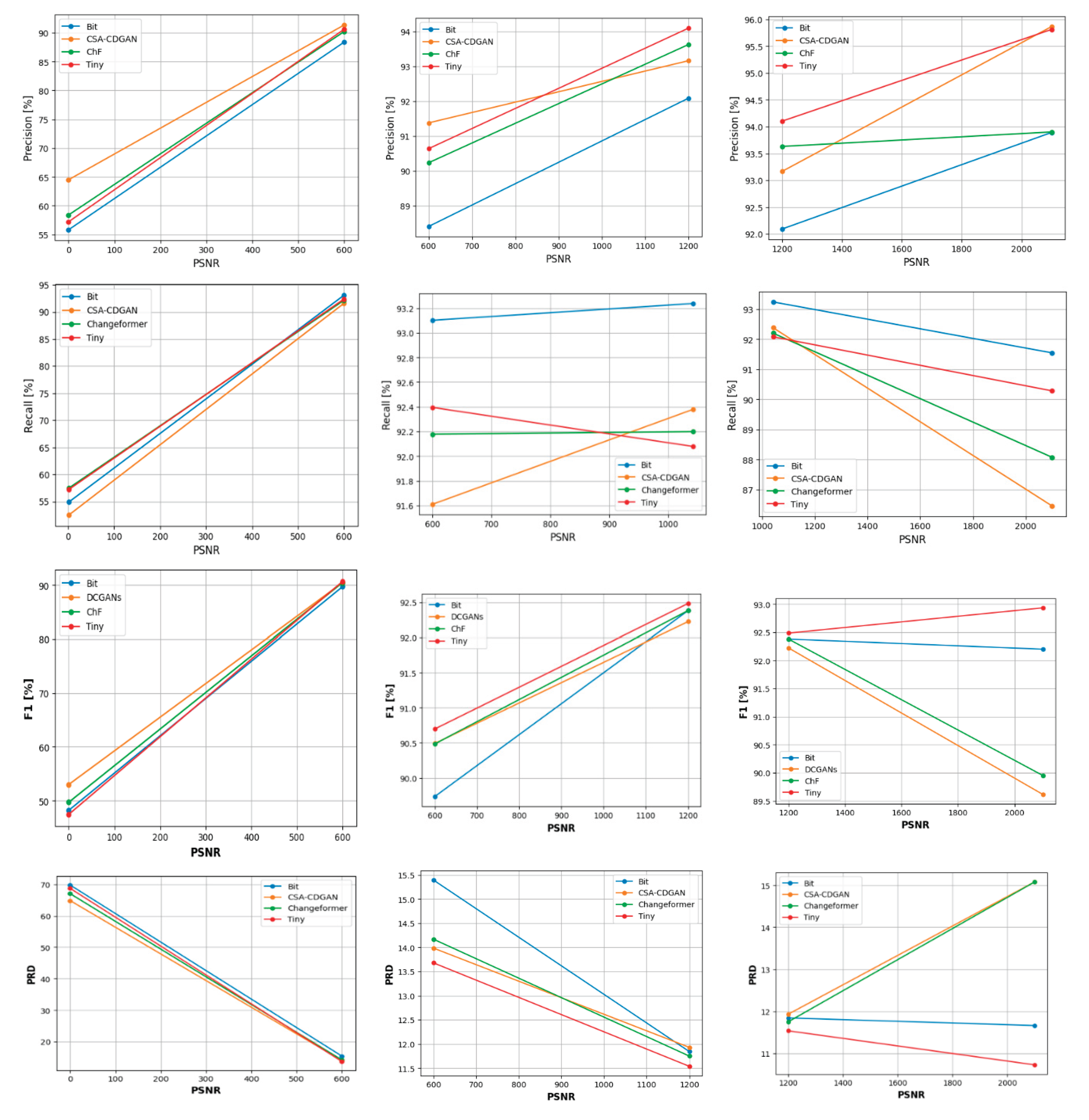

5.2.1. Performance Sensitivity Analysis Based on the Changed Contour Size

6. Analysis and Comparison

6.1. Detection Capability of Challenging Contours Results

6.2. Contour Count Comparison

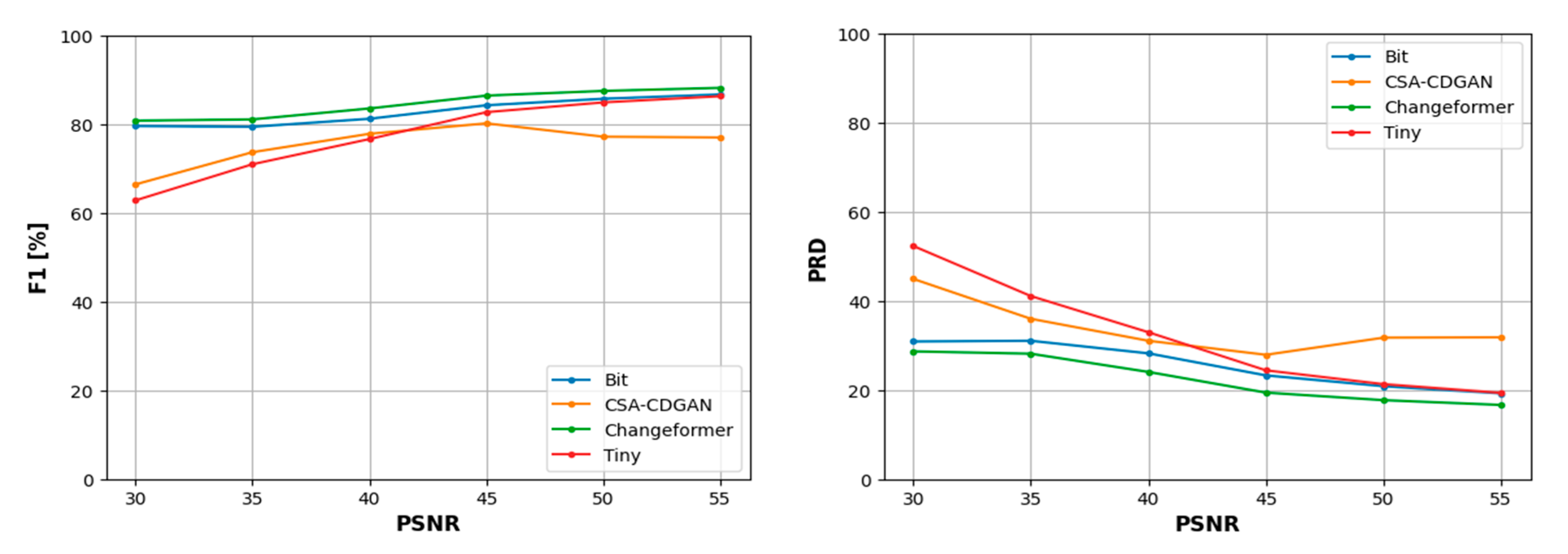

6.3. Robustness Analysis with Respect to Different Image Corruptions Results

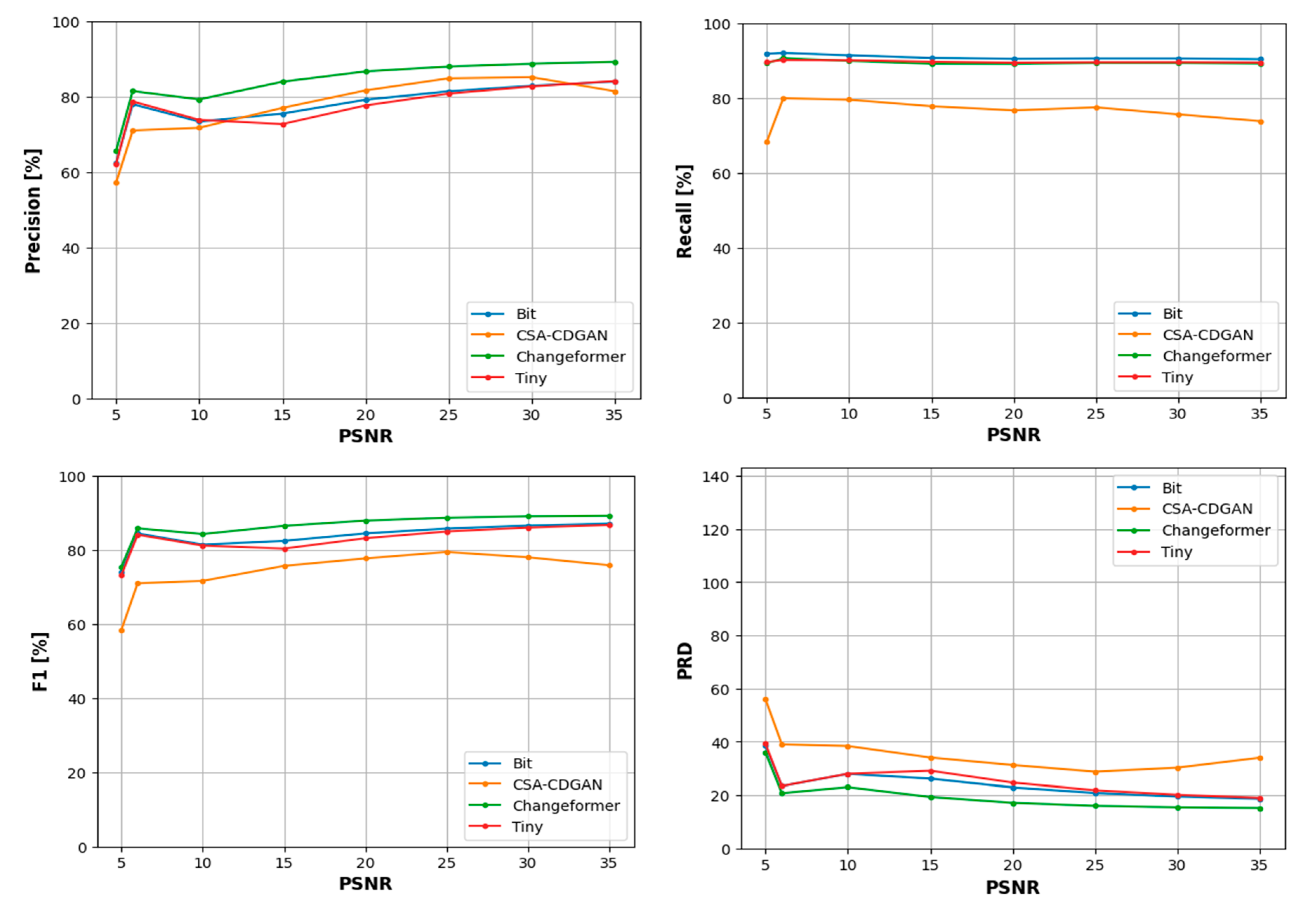

6.3.1. LEVIR with Gaussian Noise Dataset

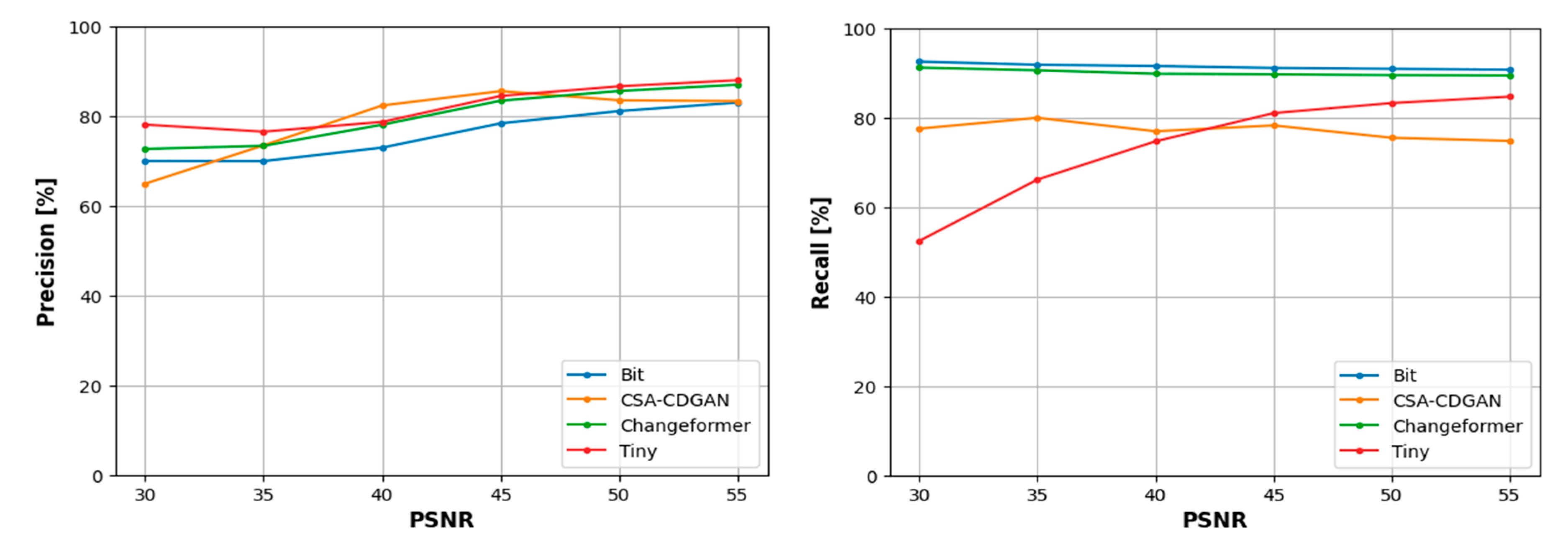

6.3.2. LEVIR with Salt and Pepper Noise Dataset

- F1 Score: The harmonic mean of precision and recall. It is useful when the goal is to balance detecting as many positives as possible (high recall) against keeping those detections accurate (high precision).

- Precision: The share of positive predictions that are correct. High precision means that the model returned substantially more relevant results than irrelevant ones.

- Recall: The share of actual positives that the model finds. High recall means that the model returned most of the relevant results.

- Precision-Recall Distance (PRD): The Euclidean distance from a model's (precision, recall) point to the ideal point (100, 100) in the precision-recall plane, i.e., PRD = sqrt((100 − Precision)² + (100 − Recall)²). Lower values are better.
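The four metrics can be computed from pixel-level counts as in the following sketch. It assumes true positives, false positives, and false negatives have already been counted for the change class. The function name `cd_metrics` and the convention of returning 0 when a denominator is empty are illustrative choices.

```python
import math


def cd_metrics(tp, fp, fn):
    """Precision, recall, F1 (all in percent) and PRD from pixel counts."""
    precision = 100.0 * tp / (tp + fp) if tp + fp else 0.0
    recall = 100.0 * tp / (tp + fn) if tp + fn else 0.0
    total = precision + recall
    f1 = 2.0 * precision * recall / total if total else 0.0
    # Euclidean distance to the ideal point (100, 100); lower is better.
    prd = math.hypot(100.0 - precision, 100.0 - recall)
    return {"precision": precision, "recall": recall, "f1": f1, "prd": prd}


scores = cd_metrics(tp=80, fp=20, fn=20)
print(scores)
```

With 80 true positives, 20 false positives, and 20 false negatives, precision, recall, and F1 are all 80, and the PRD is sqrt(20² + 20²) ≈ 28.28. Values averaged over many images or noise levels need not satisfy these identities exactly.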

| CD model | F1 | Precision | Recall | PRD |
|---|---|---|---|---|
| Tiny | 78.08 | 82.2 | 74.75 | 31.04 |
| Changeformer | 84.76 | 80.29 | 90.02 | 22.27 |
| CSA-CDGAN | 75.73 | 79.37 | 77.03 | 33.57 |
| BIT | 82.91 | 76.01 | 91.45 | 25.55 |

- For F1 and PRD scores: Changeformer consistently outperforms the other models, suggesting that it maintains a good balance between precision and recall and is close to the ideal values even as noise increases.

- In terms of precision: Tiny excels in the lower noise levels (higher PSNR values), but its performance varies more than CSA-CDGAN at higher noise levels. CSA-CDGAN tends to maintain more consistent precision across a wider range of PSNR values.

- Regarding recall: BIT outperforms the other models significantly across most of the PSNR range, indicating its robustness in detecting relevant changes even in noisier images.

6.3.3. LEVIR with Speckle Noise Dataset

| CD model | F1 | Precision | Recall | PRD |
|---|---|---|---|---|
| Tiny | -10.71 | -25.21 | 6.71 | 16.99 |
| Changeformer | -9.82 | -24.04 | 5.72 | 15.58 |
| CSA-CDGAN | -6.33 | -13.7 | 6.54 | 7.02 |
| BIT | -10.54 | -23.65 | 6.5 | 16.91 |

- At PSNRs between 29.35 and 29.5 dB, amidst the highest noise intensity, CSA-CDGAN leads with superior F1 scores, suggesting robustness against noise, while Tiny is close behind. BIT lags with the lowest scores.

- Within the PSNR range of 29.5 to 30 dB, Tiny excels, indicating its effectiveness in moderately noisy environments. BIT and Changeformer offer competitive performance, and CSA-CDGAN falls behind.

- For PSNRs from 30 to 31 dB, Changeformer rises to the top, surpassing all models, which indicates its efficient handling of this specific noise level. BIT and Tiny remain close contenders.

- When PSNRs span 31 to 39 dB, CSA-CDGAN consistently achieves the highest F1 scores, denoting its capacity to maintain change detection accuracy over a wide noise range. Changeformer also shows robust performance, while Tiny trails with lower F1 scores.

- In the highest noise bracket (29.35 - 29.5 dB), CSA-CDGAN’s precision is unrivaled, suggesting it’s less prone to false positives under severe noise conditions. Changeformer follows, and BIT exhibits the least precision.

- At PSNRs of 29.5 to 30 dB, Changeformer leads in precision, implying its discernment between noise and actual changes is optimal. Tiny is slightly behind, and CSA-CDGAN has the lowest precision, indicating more false positives.

- From PSNRs of 30 to 39 dB, CSA-CDGAN remains the precision leader, demonstrating its consistent ability to accurately detect changes across varying levels of noise, followed by Changeformer. Tiny and BIT show less precision, suggesting a higher rate of false positives.

- In the range of 29.35 to 29.5 dB, Tiny and Changeformer exhibit the best recall, indicating their strength in identifying all relevant changes. BIT shows the lowest recall, potentially missing some true changes.

- For PSNRs between 29.5 and 30 dB, Tiny tops the recall metric, Changeformer and BIT are close, and CSA-CDGAN has the lowest recall, indicating missed detections of actual changes.

- Between 30 and 31 dB, Changeformer outperforms in recall, suggesting it misses fewer actual changes at this noise level. BIT and Tiny are comparable, while CSA-CDGAN has the lowest recall.

- From 31 to 39 dB, CSA-CDGAN surpasses others in recall, showing its consistent detection of changes across this noise spectrum. Changeformer follows, with Tiny showing the lowest recall, suggesting possible overlooked changes.

- At PSNRs of 29.35 to 29.5 dB, CSA-CDGAN showcases the best PRD, indicating a closer approximation to the ideal (100,100) point. Changeformer and Tiny have similar PRDs, while BIT has the highest PRD, suggesting a greater deviation from the ideal values.

- Between 29.5 and 30 dB, Changeformer and Tiny show advantageous PRDs, while CSA-CDGAN ranks lowest, indicating a larger deviation from ideal performance.

- In the PSNR span of 30 to 31 dB, Changeformer demonstrates an excellent PRD, implying a strong balance between precision and recall. BIT and CSA-CDGAN have similar PRDs, and Tiny has the highest PRD.

- For PSNRs from 31 to 39 dB, CSA-CDGAN achieves the best PRD, with Changeformer trailing closely. Tiny has the highest PRD, suggesting its performance is furthest from the ideal.

7. Conclusion

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgements

Conflicts of Interest

Appendix A

- The Changeformer model generally shows a robust performance across all metrics, maintaining a high F1 score even as PSNR varies, suggesting that it achieves a good balance between precision and recall across different levels of image quality.

- The Tiny model seems to excel in the precision metric at high PSNR levels, indicating its effectiveness in correctly labeling changed pixels in higher-quality images.

- The CSA-CDGAN model demonstrates varying performance, with notable dips in certain PSNR ranges, implying sensitivity to image quality for maintaining detection accuracy.

- The BIT model shows a consistent recall across most PSNR levels but with variations in precision, which may suggest it is better at identifying all relevant changes at the cost of including more false positives.

References

- A. Asokan and J. Anitha, “Change detection techniques for remote sensing applications: a survey,” Earth Science Informatics. Springer Verlag, 2019. [CrossRef]

- Institute of Electrical and Electronics Engineers and IEEE Geoscience and Remote Sensing Society, 2010 IEEE International Geoscience & Remote Sensing Symposium: proceedings, July 25-30, 2010, Honolulu, Hawaii, U.S.A. IEEE, 2010.

- H. Chen and Z. Shi, “A spatial-temporal attention-based method and a new dataset for remote sensing image change detection,” Remote Sens (Basel), vol. 12, no. 10, May 2020. [CrossRef]

- A. M. El-Zeiny and H. A. Effat, “Environmental monitoring of spatiotemporal change in land use/land cover and its impact on land surface temperature in El-Fayoum governorate, Egypt,” Remote Sens Appl, vol. 8, pp. 266–277, Nov. 2017. [CrossRef]

- P. P. de Bem, O. A. de Carvalho, R. F. Guimarães, and R. A. T. Gomes, “Change detection of deforestation in the brazilian amazon using landsat data and convolutional neural networks,” Remote Sens (Basel), vol. 12, no. 6, Mar. 2020. [CrossRef]

- L. Ke, Y. Lin, Z. Zeng, L. Zhang, and L. Meng, “Adaptive Change Detection with Significance Test,” IEEE Access, vol. 6, pp. 27442–27450, Feb. 2018. [CrossRef]

- L. T. Luppino, F. M. Bianchi, G. Moser, and S. N. Anfinsen, “Unsupervised Image Regression for Heterogeneous Change Detection,” Sep. 2019. [CrossRef]

- S. Liu, L. Bruzzone, F. Bovolo, M. Zanetti, and P. Du, “Sequential spectral change vector analysis for iteratively discovering and detecting multiple changes in hyperspectral images,” IEEE Transactions on Geoscience and Remote Sensing, vol. 53, no. 8, pp. 4363–4378, Aug. 2015. [CrossRef]

- R. D. Johnson and E. S. Kasischke, “Change vector analysis: A technique for the multispectral monitoring of land cover and condition,” Int J Remote Sens, vol. 19, no. 3, pp. 411–426, 1998. [CrossRef]

- T. Celik, “Unsupervised change detection in satellite images using principal component analysis and κ-means clustering,” IEEE Geoscience and Remote Sensing Letters, vol. 6, no. 4, pp. 772–776, Oct. 2009. [CrossRef]

- Massarelli, “Fast detection of significantly transformed areas due to illegal waste burial with a procedure applicable to landsat images,” Int J Remote Sens, vol. 39, no. 3, pp. 754–769, Feb. 2018. [CrossRef]

- R. Vázquez-Jiménez, R. Romero-Calcerrada, C. J. Novillo, R. N. Ramos-Bernal, and P. Arrogante-Funes, “Applying the chi-square transformation and automatic secant thresholding to Landsat imagery as unsupervised change detection methods,” J Appl Remote Sens, vol. 11, no. 1, p. 016016, Feb. 2017. [CrossRef]

- R. A. A. Raja, V. Anand, A. S. Kumar, S. Maithani, and V. A. Kumar, “Wavelet Based Post Classification Change Detection Technique for Urban Growth Monitoring,” Journal of the Indian Society of Remote Sensing, vol. 41, no. 1, pp. 35–43, Mar. 2013. [CrossRef]

- S. S. Luque, “Evaluating temporal changes using Multi-Spectral Scanner and Thematic Mapper data on the landscape of a natural reserve: the New Jersey Pine Barrens, a case study,” 2000. [Online]. Available: http://www.tandf.co.uk/journals.

- S. J. Kristof, D. K. Scholz, P. E. Anuta, and S. A. Momin, “R. A. WEISMILLER Change Detection in Coastal Zone Environments* Four techniques were used to analyze Landsat MSS temporal data in order to detect areas of change of the Matagorda Bay region of Texas.

- J. Prendes, M. Chabert, F. Pascal, A. Giros, and J. Y. Tourneret, “A new multivariate statistical model for change detection in images acquired by homogeneous and heterogeneous sensors,” IEEE Transactions on Image Processing, vol. 24, no. 3, pp. 799–812, Mar. 2015. [CrossRef]

- E. Kalinicheva, Di. Ienco, J. Sublime, and M. Trocan, “Unsupervised Change Detection Analysis in Satellite Image Time Series Using Deep Learning Combined with Graph-Based Approaches,” IEEE J Sel Top Appl Earth Obs Remote Sens, vol. 13, pp. 1450–1466, 2020. [CrossRef]

- S. De, D. Pirrone, F. Bovolo, L. Bruzzone, and A. Bhattacharya, “A NOVEL CHANGE DETECTION FRAMEWORK BASED ON DEEP LEARNING FOR THE ANALYSIS OF MULTI-TEMPORAL POLARIMETRIC SAR IMAGES.

- IEEE Computational Intelligence Society, International Neural Network Society, Institute of Electrical and Electronics Engineers, and B. C.) IEEE World Congress on Computational Intelligence (2016: Vancouver, 2016 International Joint Conference on Neural Networks (IJCNN): 24–29 July 2016, Vancouver, Canada.

- G. Liu, L. Li, L. Jiao, Y. Dong, and X. Li, “Stacked Fisher autoencoder for SAR change detection,” Pattern Recognit, vol. 96, Dec. 2019. [CrossRef]

- J. Fan, K. Lin, and M. Han, “A Novel Joint Change Detection Approach Based on Weight-Clustering Sparse Autoencoders,” IEEE J Sel Top Appl Earth Obs Remote Sens, vol. 12, no. 2, pp. 685–699, Feb. 2019. [CrossRef]

- J. Liu, M. Gong, K. Qin, and P. Zhang, “A Deep Convolutional Coupling Network for Change Detection Based on Heterogeneous Optical and Radar Images,” IEEE Trans Neural Netw Learn Syst, vol. 29, no. 3, pp. 545–559, Mar. 2018. [CrossRef]

- R. C. Daudt, B. Le Saux, and A. Boulch, “Fully Convolutional Siamese Networks for Change Detection,” Oct. 2018, [Online]. Available: http://arxiv.org/abs/1810.08462.

- M. Gong, J. Zhao, J. Liu, Q. Miao, and L. Jiao, “Change Detection in Synthetic Aperture Radar Images Based on Deep Neural Networks,” IEEE Trans Neural Netw Learn Syst, vol. 27, no. 1, pp. 125–138, Jan. 2016. [CrossRef]

- K. L. de Jong and A. S. Bosman, “Unsupervised Change Detection in Satellite Images Using Convolutional Neural Networks,” Dec. 2018, [Online]. Available: http://arxiv.org/abs/1812.05815.

- H. Chen, C. Wu, B. Du, L. Zhang, and L. Wang, “Change Detection in Multisource VHR Images via Deep Siamese Convolutional Multiple-Layers Recurrent Neural Network,” IEEE Transactions on Geoscience and Remote Sensing, vol. 58, no. 4, pp. 2848–2864, Apr. 2020. [CrossRef]

- H. Lyu, H. Lu, and L. Mou, “Learning a transferable change rule from a recurrent neural network for land cover change detection,” Remote Sens (Basel), vol. 8, no. 6, 2016. [CrossRef]

- Institute of Electrical and Electronics Engineers and IEEE Geoscience and Remote Sensing Society, 2019 IEEE International Geoscience & Remote Sensing Symposium: proceedings: July 28–August 2, 2019, Yokohama, Japan.

- Hou, Q., Liu, H. Wang, and Y. Wang, “From W-Net to CDGAN: Bi-temporal Change Detection via Deep Learning Techniques,” Mar. 2020. [CrossRef]

- Ren, X., Wang, J. Gao, and H. Chen, “Unsupervised Change Detection in Satellite Images with Generative Adversarial Network,” Sep. 2020. [CrossRef]

- M. A. Lebedev, Y. V. Vizilter, O. V. Vygolov, V. A. Knyaz, and A. Y. Rubis, “Change detection in remote sensing images using conditional adversarial networks,” in International Archives of the Photogrammetry, Remote Sensing and Spatial Information Sciences - ISPRS Archives, International Society for Photogrammetry and Remote Sensing, 18, pp. 565–571. 20 May. [CrossRef]

- Chen, M. Jiang, S. Du, B. Xu, and J. Wang, “ADS-Net:An Attention-Based deeply supervised network for remote sensing image change detection,” International Journal of Applied Earth Observation and Geoinformation, vol. 101, Sep. 2021. [CrossRef]

- J. Chen et al., “DASNet: Dual Attentive Fully Convolutional Siamese Networks for Change Detection in High-Resolution Satellite Images,” IEEE J Sel Top Appl Earth Obs Remote Sens, vol. 14, pp. 1194–1206, 2021. [CrossRef]

- H. Jiang, X. Hu, K. Li, J. Zhang, J. Gong, and M. Zhang, “PGA-SiamNet: Pyramid feature-based attention-guided siamese network for remote sensing orthoimagery building change detection,” Remote Sens (Basel), vol. 12, no. 3, Feb. 2020. [CrossRef]

- Z. Wang, F. Jiang, T. Liu, F. Xie, and P. Li, “Attention-based spatial and spectral network with PCA-guided self-supervised feature extraction for change detection in hyperspectral images,” Remote Sens (Basel), vol. 13, no. 23, Dec. 2021. [CrossRef]

- H. Chen, Z. Qi, and Z. Shi, “Remote Sensing Image Change Detection with Transformers,” Feb. 2021. [CrossRef]

- T. Yan, Z. Wan, and P. Zhang, “Fully Transformer Network for Change Detection of Remote Sensing Images,” Oct. 2022, [Online]. Available: http://arxiv.org/abs/2210. 0075.

- Q. Ke and P. Zhang, “Hybrid-TransCD: A Hybrid Transformer Remote Sensing Image Change Detection Network via Token Aggregation,” ISPRS Int J Geoinf, vol. 11, no. 4, Apr. 2022. [CrossRef]

- T. Lei et al., “Lightweight Structure-aware Transformer Network for VHR Remote Sensing Image Change Detection,” Jun. 2023, [Online]. Available: http://arxiv.org/abs/2306. 0198.

- X. Zhang, S. Tian, G. Wang, H. Zhou, and L. Jiao, “DiffUCD:Unsupervised Hyperspectral Image Change Detection with Semantic Correlation Diffusion Model,” 23, [Online]. Available: http://arxiv.org/abs/2305. 20 May 1241.

- W. G. C. Bandara, N. G. Nair, and V. M. Patel, “DDPM-CD: Remote Sensing Change Detection using Denoising Diffusion Probabilistic Models,” Jun. 2022, [Online]. Available: http://arxiv.org/abs/2206. 1189.

- Y. Wen, X. Ma, X. Zhang, and M.-O. Pun, “GCD-DDPM: A Generative Change Detection Model Based on Difference-Feature Guided DDPM,” Jun. 2023, [Online]. Available: http://arxiv.org/abs/2306. 0342.

- H. Chen and Z. Shi, “A spatial-temporal attention-based method and a new dataset for remote sensing image change detection,” Remote Sens (Basel), vol. 12, no. 10, May 2020. [CrossRef]

- S. Ji, S. Wei, and M. Lu, “Fully Convolutional Networks for Multisource Building Extraction from an Open Aerial and Satellite Imagery Data Set,” IEEE Transactions on Geoscience and Remote Sensing, vol. 57, no. 1, pp. 574–586, Jan. 2019. [CrossRef]

- L. Shen et al., “S2Looking: A Satellite Side-Looking Dataset for Building Change Detection,” Jul. 2021. [CrossRef]

- M. A. Lebedev, Y. V. Vizilter, O. V. Vygolov, V. A. Knyaz, and A. Y. Rubis, “Change detection in remote sensing images using conditional adversarial networks,” in International Archives of the Photogrammetry, Remote Sensing and Spatial Information Sciences - ISPRS Archives, International Society for Photogrammetry and Remote Sensing, 18, pp. 565–571. 20 May. [CrossRef]

- M. Liu, Z. Chai, H. Deng, and R. Liu, “A CNN-Transformer Network With Multiscale Context Aggregation for Fine-Grained Cropland Change Detection,” IEEE J Sel Top Appl Earth Obs Remote Sens, vol. 15, pp. 4297–4306, 2022. [CrossRef]

- H. Chen, Z. Qi, and Z. Shi, “Remote Sensing Image Change Detection with Transformers,” Feb. 2021. [CrossRef]

- S. Fang, K. Li, J. Shao, and Z. Li, “SNUNet-CD: A Densely Connected Siamese Network for Change Detection of VHR Images,” IEEE Geoscience and Remote Sensing Letters, vol. 19, 2022. [CrossRef]

- W. G. C. Bandara and V. M. Patel, “A Transformer-Based Siamese Network for Change Detection,” Jan. 2022, [Online]. Available: http://arxiv.org/abs/2201. 0129.

- Codegoni, G., Lombardi, and A. Ferrari, “TINYCD: A (Not So) Deep Learning Model For Change Detection,” Jul. 2022, [Online]. Available: http://arxiv.org/abs/2207. 1315.

- Z. Wang, Y. Zhang, L. Luo, and N. Wang, “CSA-CDGAN: channel self-attention-based generative adversarial network for change detection of remote sensing images,” Neural Comput Appl, vol. 34, no. 24, pp. 21999–22013, Dec. 2022. [CrossRef]

- Cheng, G., Huang, Y., Li, X., Lyu, S., Xu, Z., Zhao, Q. and Xiang, S., 2023. Change detection methods for remote sensing in the last decade: A comprehensive review. arXiv preprint arXiv:2305.05813.

- Parelius, Eleonora Jonasova. "A review of deep-learning methods for change detection in multispectral remote sensing images." Remote Sensing 15, no. 8 (2023): 2092.

- Barkur, Rahasya, Devishi Suresh, Shyam Lal, C. Sudhakar Reddy, and P. G. Diwakar. "Rscdnet: A robust deep learning architecture for change detection from bi-temporal high resolution remote sensing images." IEEE Transactions on Emerging Topics in Computational Intelligence 7, no. 2 (2022): 537-551.

- Paul, Josephina. "Change Detection by Deep Learning Models." In 2022 IEEE International Women in Engineering (WIE) Conference on Electrical and Computer Engineering (WIECON-ECE), pp. 323-326. IEEE, 2022.

- Walatkiewicz, Jonathan, and Omar Darwish. "A Survey on Drone Cybersecurity and the Application of Machine Learning on Threat Emergence." In International Conference on Advances in Computing Research, pp. 523-532. Cham: Springer Nature Switzerland, 2023.

- Abdelsalam, Emad, Omar Darwish, Ola Karajeh, Fares Almomani, Dirar Darweesh, Sanad Kiswani, Abdullah Omar, and Malek Alkisrawi. "A classifier to detect best mode for Solar Chimney Power Plant system." Renewable Energy 197 (2022): 244-256.

- Darwish, Omar, Ala Al-Fuqaha, Muhammad Anan, and Nidal Nasser. "The role of hierarchical entropy analysis in the detection and time-scale determination of covert timing channels." In 2015 International Wireless Communications and Mobile Computing Conference (IWCMC), pp. 153-159. IEEE, 2015.

- Alshattnawi, Sawsan, and Anas MR AlSobeh. "A cloud-based IoT smart water distribution framework utilising BIP component: Jordan as a model." International Journal of Cloud Computing 13, no. 1 (2024): 25-41.

- AlSobeh, Anas. "OSM: Leveraging Model Checking for Observing Dynamic behaviors in Aspect-Oriented Applications." Online Journal of Communication and Media Technologies, 13, no. 4. (2023) pp. 1-18. Cham: Springer Nature Switzerland, 2023.

- Alsobeh, Anas, and Amani Shatnawi. "Integrating data-driven security, model checking, and self-adaptation for IoT systems using BIP components: A conceptual proposal model." In International Conference on Advances in Computing Research, pp. 533-549. Cham: Springer Nature Switzerland, 2023.

- Alsobeh, Anas MR, Aws Abed Al Raheem Magableh, and Emad M. AlSukhni. "Runtime reusable weaving model for cloud services using aspect-oriented programming: the security-related aspect." International Journal of Web Services Research (IJWSR) 15, no. 1 (2018): 71-88.

| Parameter | Count | Mean | Std | Min | 25% | 50% | 75% | Max |
|---|---|---|---|---|---|---|---|---|
| Size of contour (pixels) | 1682 | 769 | 435 | 2 | 479 | 756 | 1039 | 2086 |

| Changed Contour Size | Number of Pixels |
|---|---|
| Small | 0 - 600 |
| Medium | 600 - 1200 |
| Large | 1200 - 2100 |

| Noisy dataset | Number of instances | Range of PSNR (dB) |
|---|---|---|
| Gaussian LEVIR | 2919 | 5 – 35 |
| Salt-and-pepper LEVIR | 1849 | 30 – 55 |
| Speckle LEVIR | 6179 | 30 – 35 |

| CD model | F1 | Precision | Recall | PRD |
|---|---|---|---|---|
| Tiny | 85.17 | 89.06 | 82.79 | 20.67 |
| Changeformer | 85.36 | 90.18 | 82.54 | 20.28 |
| CSA-CDGAN | 78.45 | 84.00 | 76.29 | 30.30 |
| BIT | 85.14 | 87.17 | 84.06 | 20.78 |

| CD model | F1 | Precision | Recall | PRD |
|---|---|---|---|---|
| Tiny | 83.3 | 77.82 | 89.76 | 24.5 |
| Changeformer | 87.25 | 85.21 | 89.5 | 18.28 |
| CSA-CDGAN | 76.53 | 79.96 | 77.67 | 32.59 |
| BIT | 84.27 | 78.67 | 90.86 | 23.28 |

| CD model | F1 | Precision | Recall | PRD |
|---|---|---|---|---|
| Tiny | -1.87 | -11.24 | 6.97 | 3.83 |
| Changeformer | 1.89 | -4.97 | 6.96 | -2 |
| CSA-CDGAN | -1.92 | -4.04 | 1.38 | 2.29 |
| BIT | -0.87 | -8.5 | 6.8 | 2.5 |

| CD model | F1 | Precision | Recall | PRD |
|---|---|---|---|---|
| Tiny | -7.09 | -6.86 | -8.04 | 10.37 |
| Changeformer | -0.6 | -9.89 | 7.48 | 1.99 |
| CSA-CDGAN | -2.72 | -4.63 | 0.74 | 3.27 |
| BIT | -2.23 | -11.16 | 7.39 | 4.77 |

| CD model | F1 | Precision | Recall | PRD |
|---|---|---|---|---|
| Tiny | 74.46 | 63.85 | 89.5 | 37.66 |
| Changeformer | 75.54 | 66.14 | 88.26 | 35.86 |
| CSA-CDGAN | 72.12 | 70.3 | 82.83 | 37.32 |
| BIT | 74.6 | 63.52 | 90.56 | 37.69 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).