Submitted: 11 March 2024

Posted: 13 March 2024


Abstract

Keywords:

1. Introduction

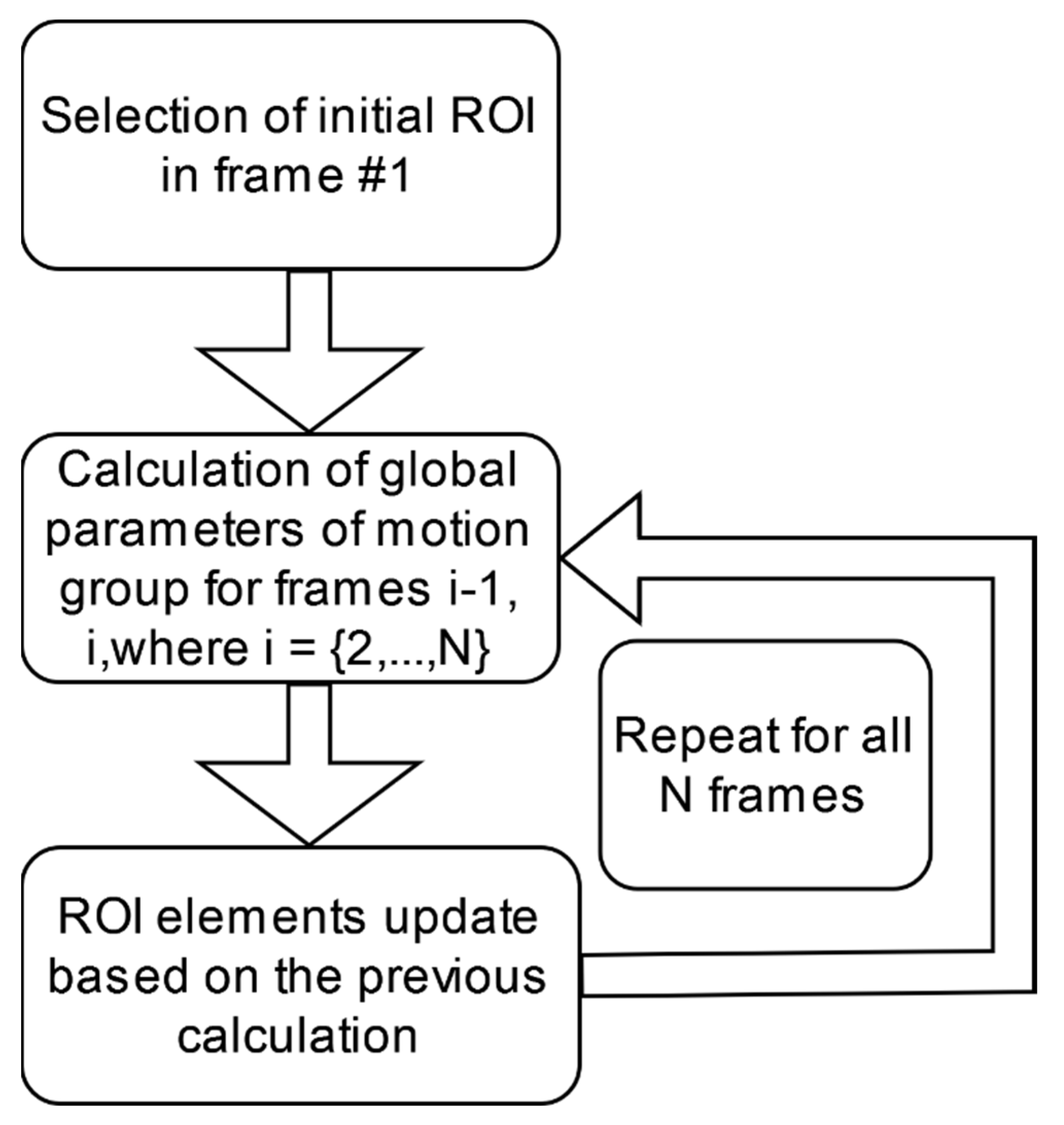

2. Methods

Optic Flow Reconstruction Problem

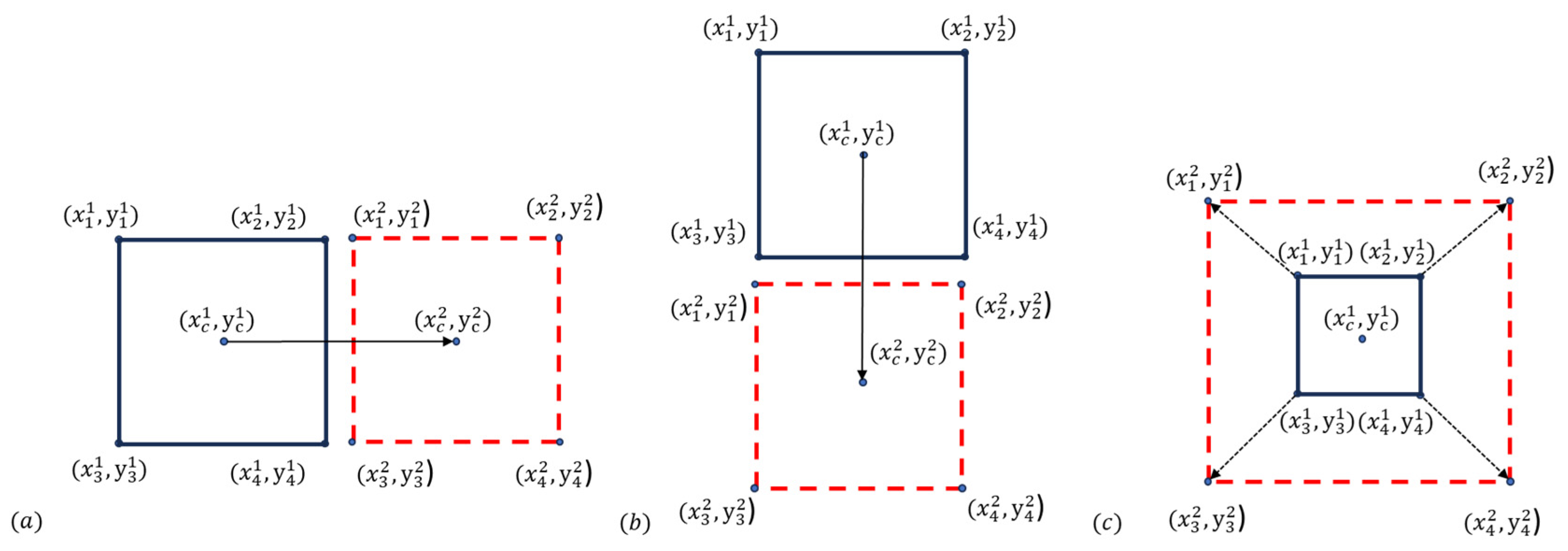

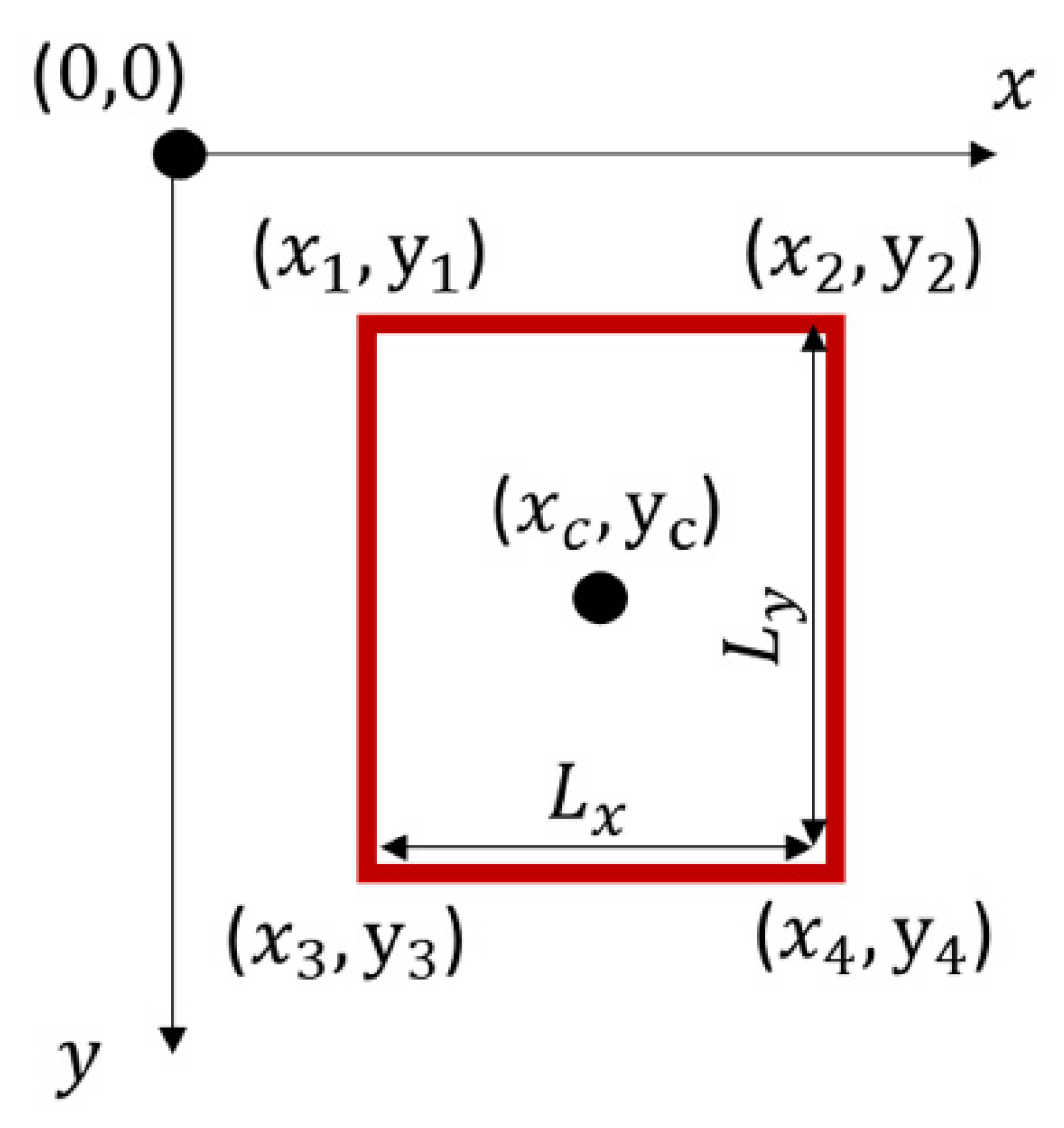

Region of Interest (ROI) Transformations

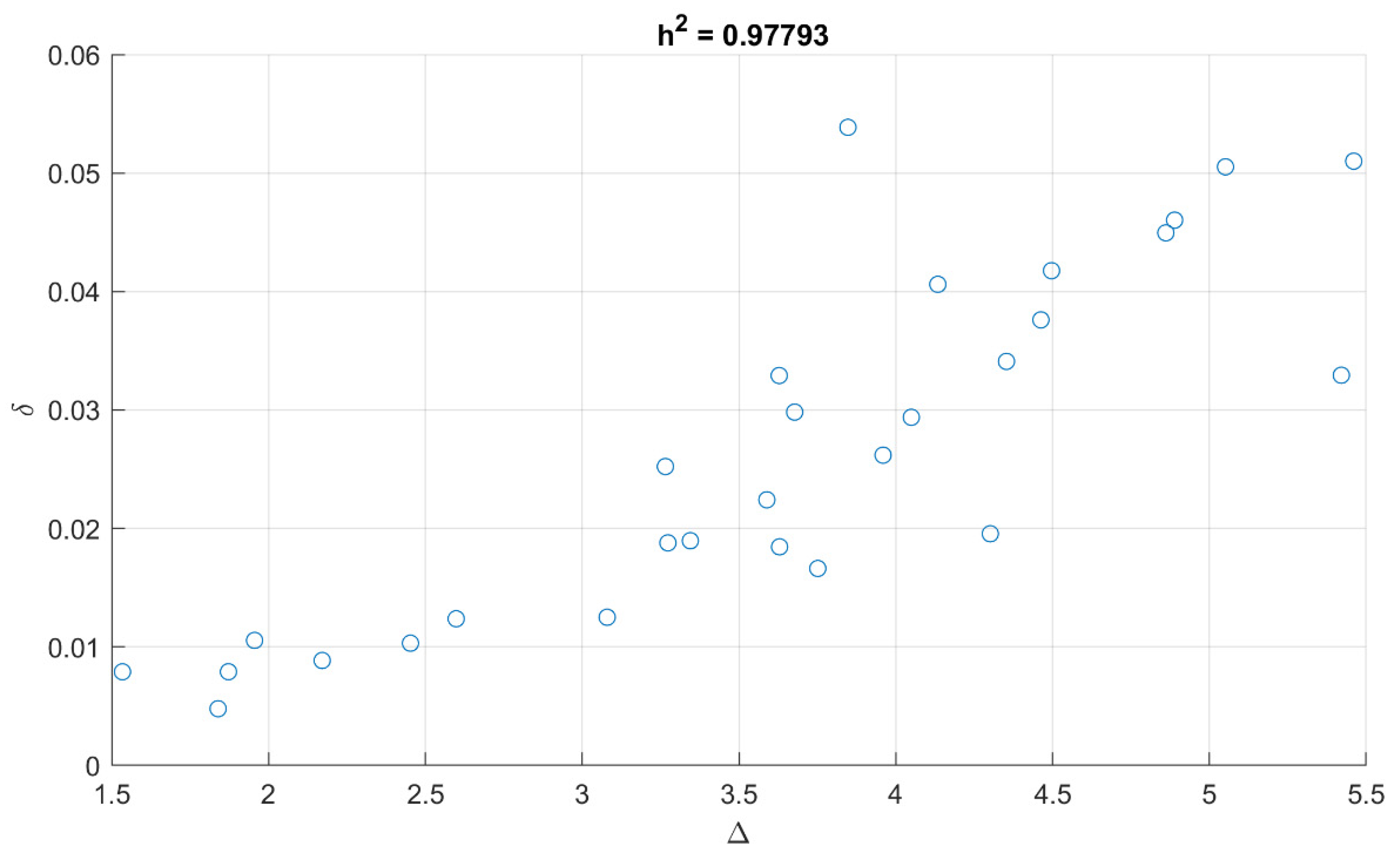

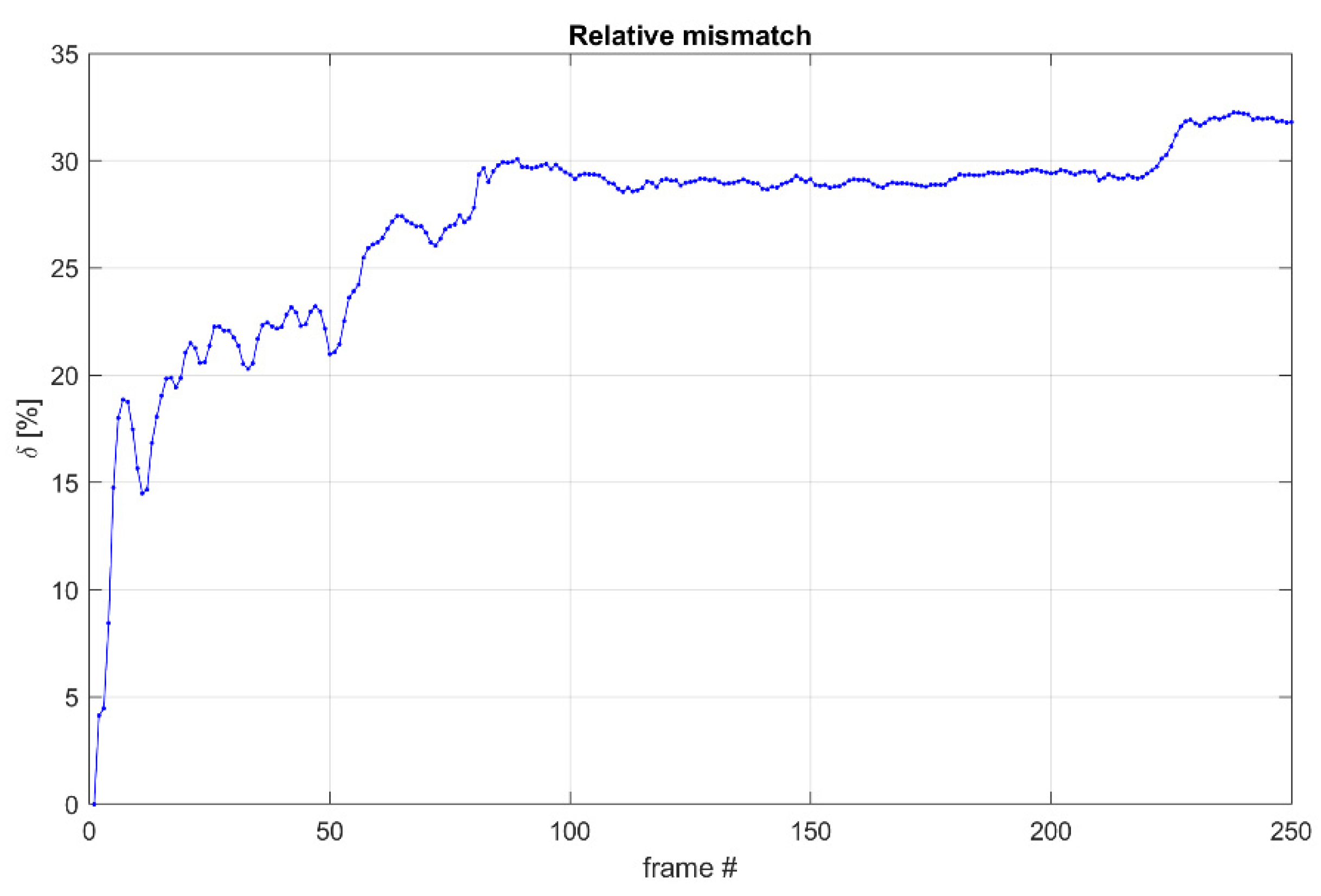

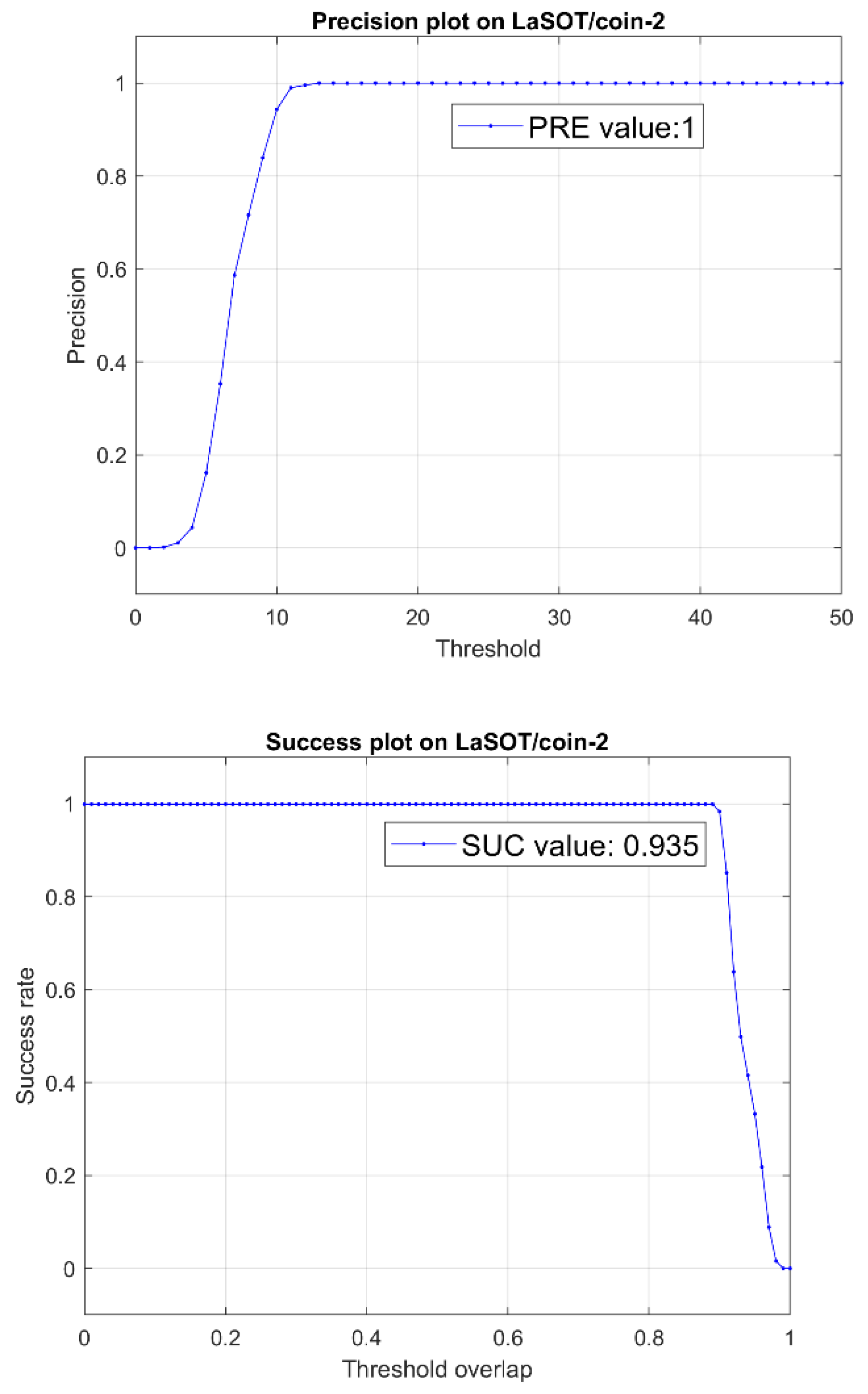

Evaluation of the ROI Tracking Performance

3. Results

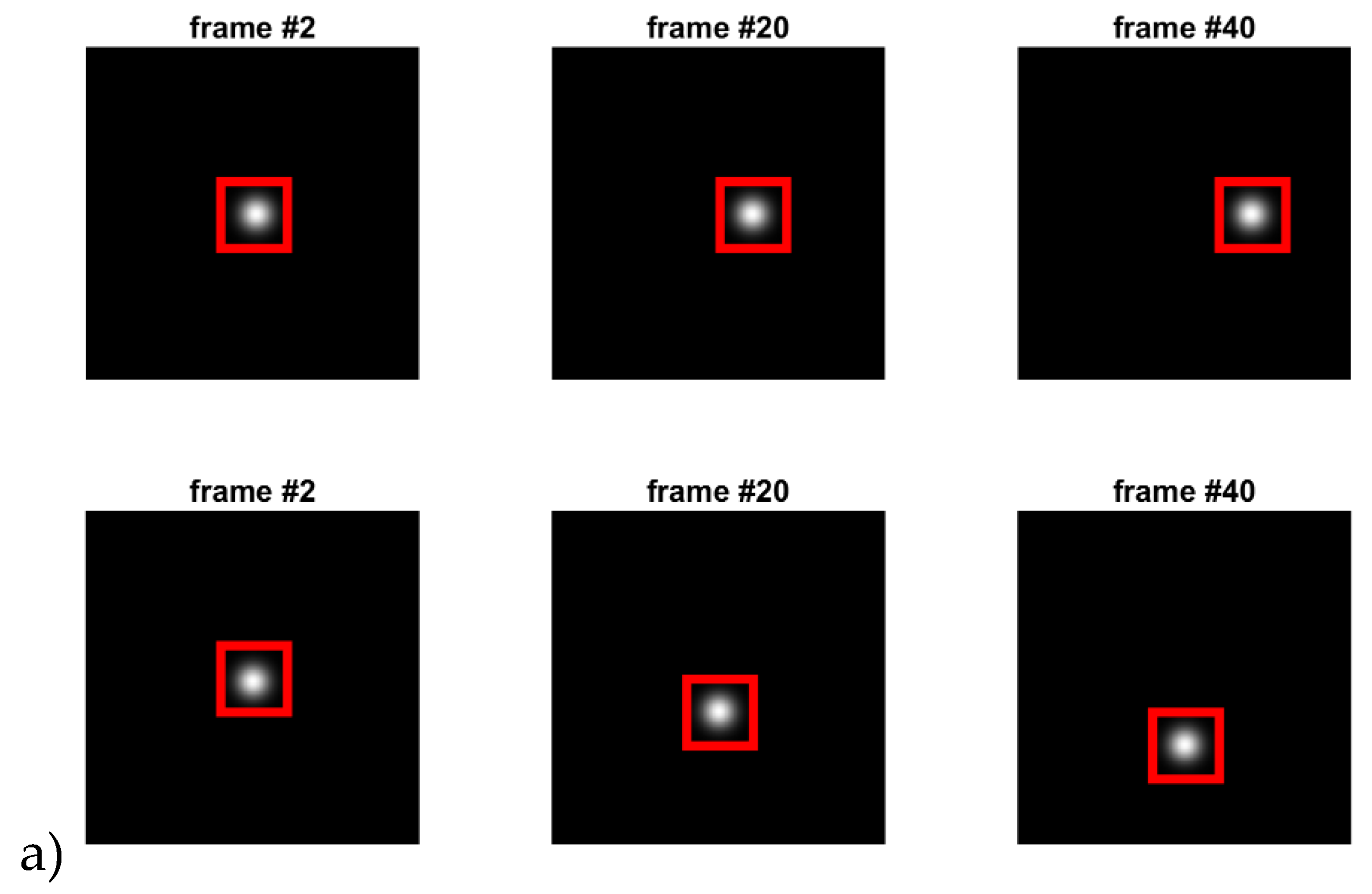

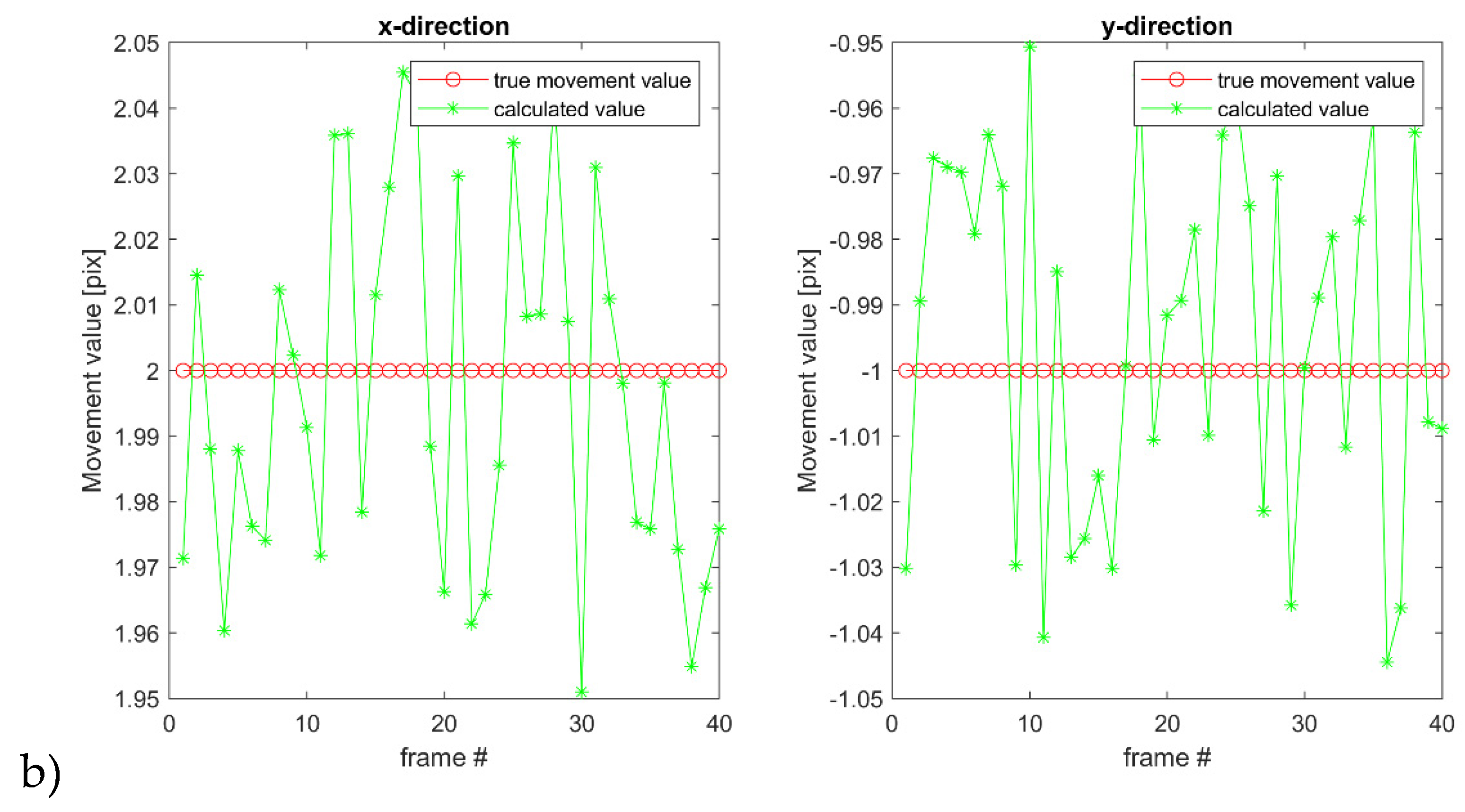

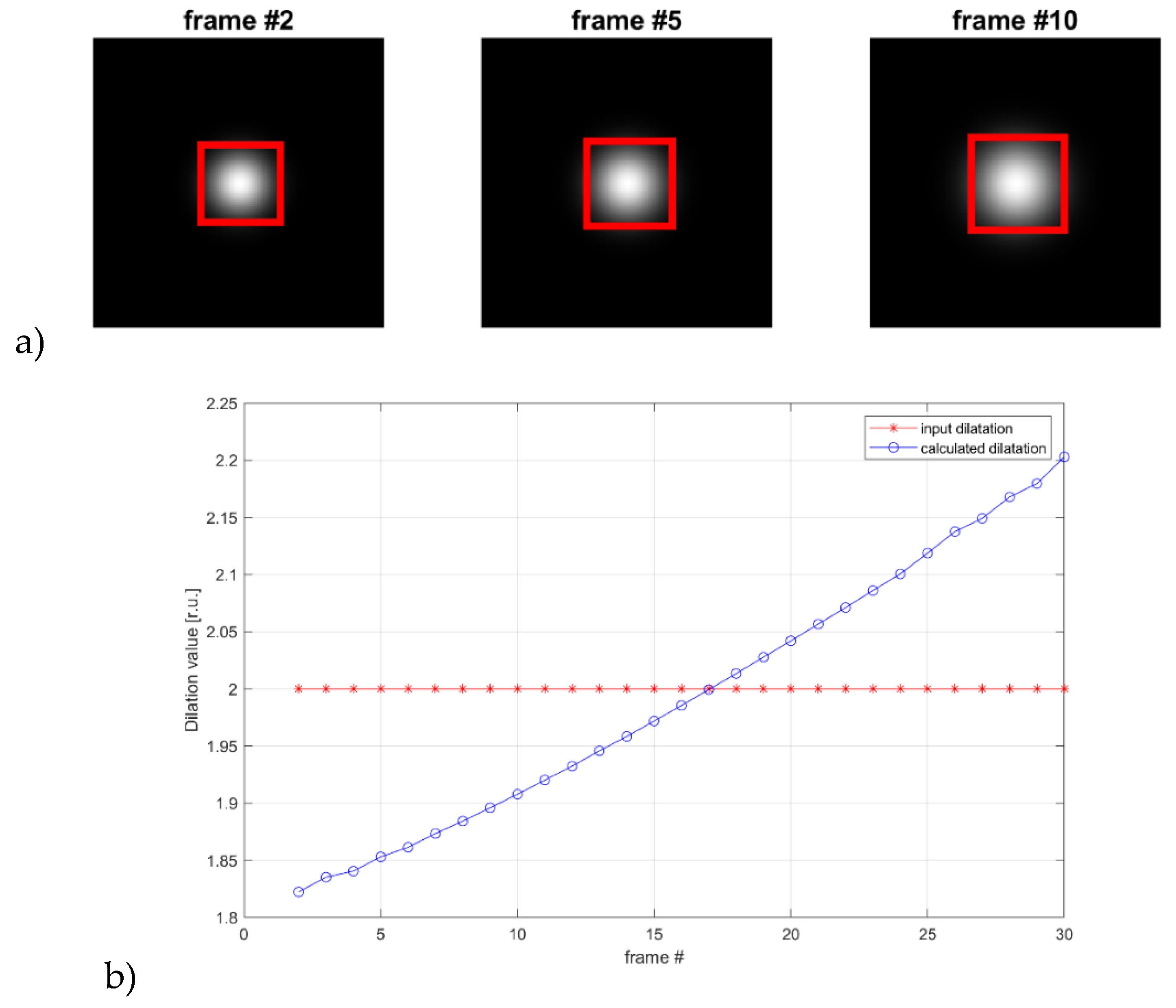

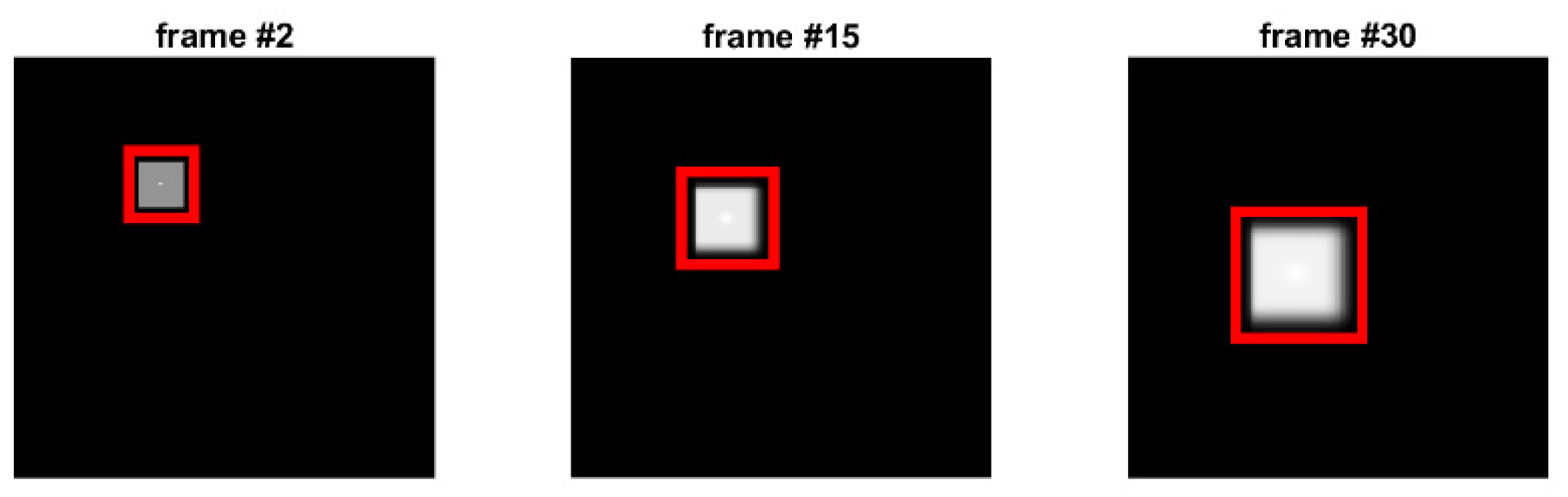

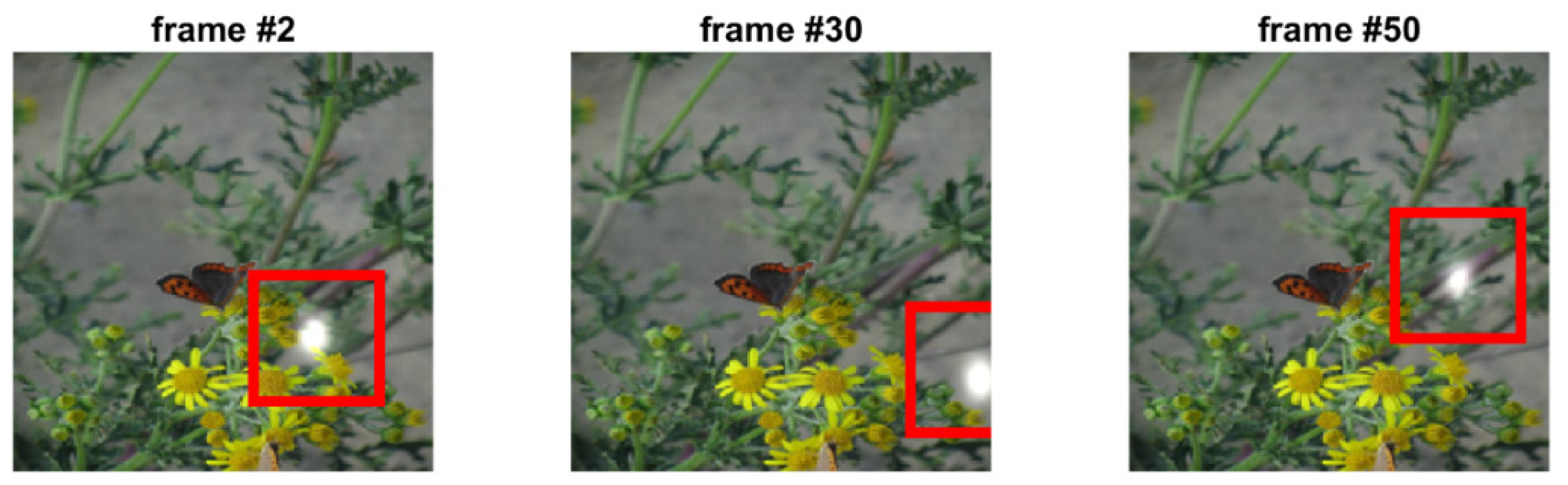

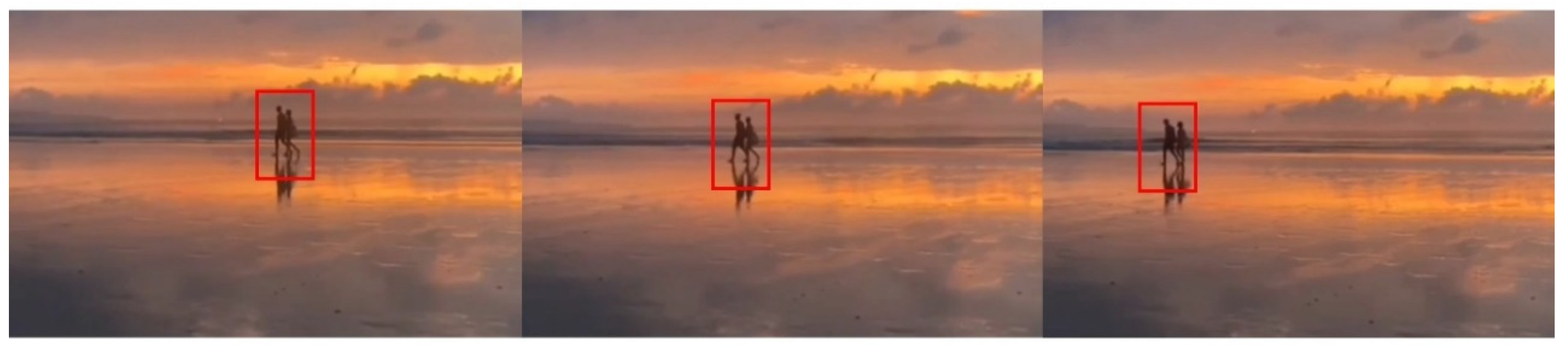

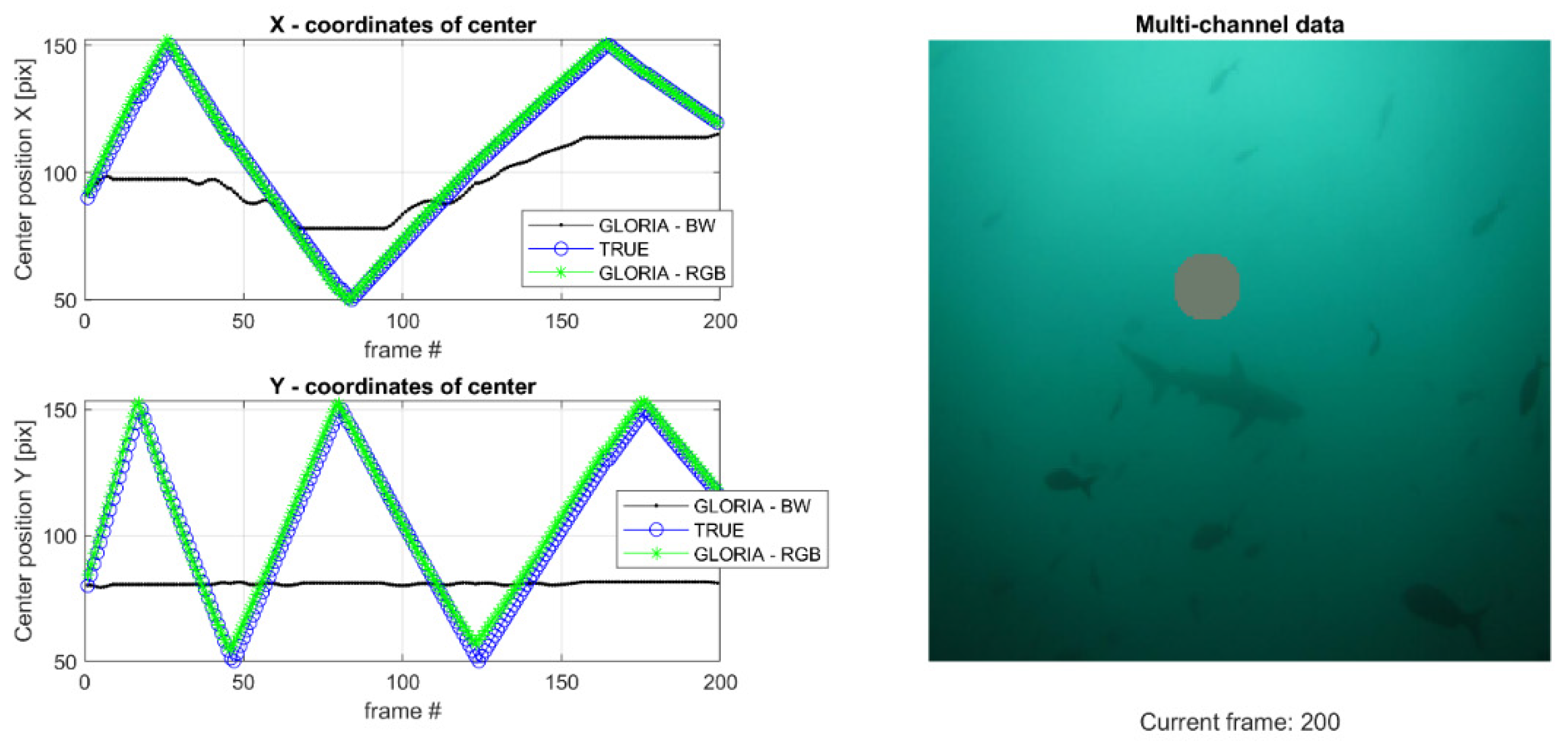

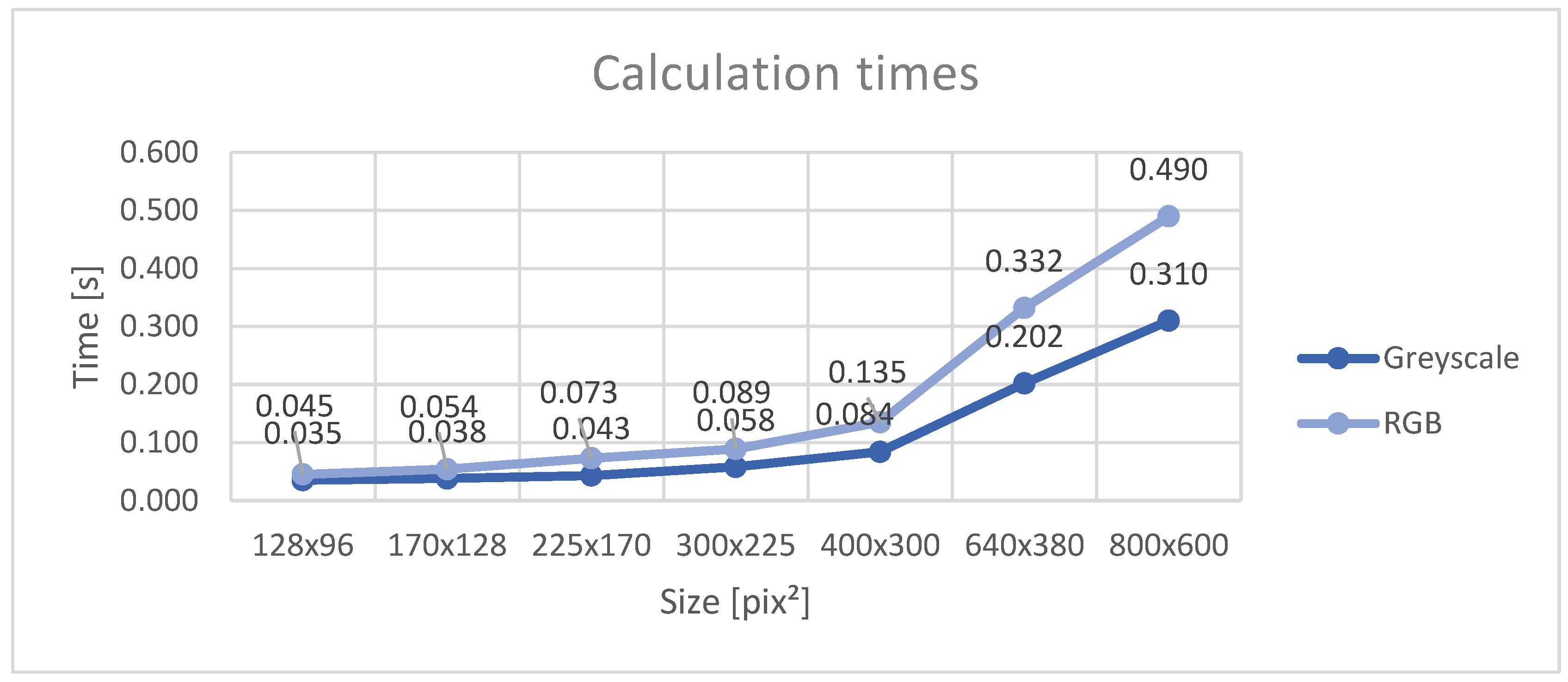

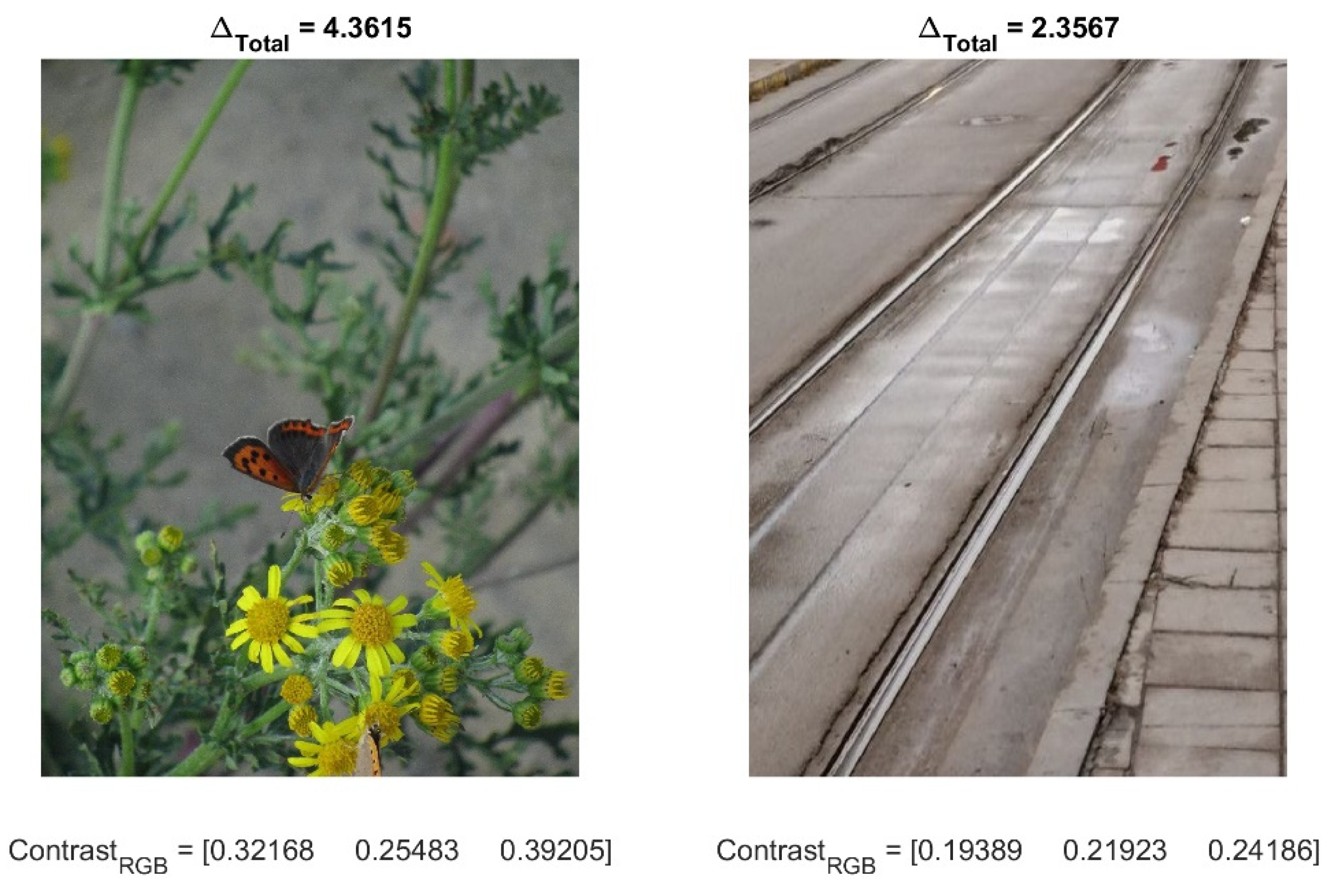

Tracking Capabilities

- Generate an initial image; in our case, a Gaussian spot with a given starting size and coordinates on a homogeneous background

- Specify the coordinates and size of the first region of interest, R1

- Transform the initial image with any number of the basic movement generators described in Figure 1 to obtain an image sequence

- Using the GLORIA algorithm, calculate the transformation parameters

- Update the ROI according to Eq. (7)

- Compare the properties of the regions of interest (coordinates and size)
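The steps above can be sketched in a few lines of Python. This is only an illustrative outline under stated assumptions: the GLORIA algorithm and Eq. (7) are not reproduced here, so `estimate_motion` is a stand-in that recovers translation and dilation from image moments, and `update_roi` is a hypothetical update rule (shift the center, scale the half-size). All function names, the frame size, and the motion values are invented for the example, and only two of the movement generators (translation and dilation) are used.

```python
import numpy as np

def gaussian_spot(shape, center, sigma):
    """Gaussian spot on a homogeneous (zero) background."""
    rows, cols = np.indices(shape)
    r0, c0 = center
    return np.exp(-((rows - r0) ** 2 + (cols - c0) ** 2) / (2.0 * sigma ** 2))

def spot_moments(image):
    """Intensity-weighted centroid and per-axis standard deviation."""
    rows, cols = np.indices(image.shape)
    total = image.sum()
    r0 = (rows * image).sum() / total
    c0 = (cols * image).sum() / total
    var = (((rows - r0) ** 2 + (cols - c0) ** 2) * image).sum() / total
    return np.array([r0, c0]), np.sqrt(var / 2.0)

def estimate_motion(prev, curr):
    """Stand-in estimator (NOT GLORIA): translation and dilation from moments."""
    c_prev, s_prev = spot_moments(prev)
    c_curr, s_curr = spot_moments(curr)
    return c_curr - c_prev, s_curr / s_prev

def update_roi(roi, shift, scale):
    """Hypothetical ROI update (not Eq. (7)): shift the center, scale the half-size."""
    center, half_size = roi
    return center + shift, half_size * scale

# Steps 1 and 3: initial image and a sequence with known translation and dilation.
shape = (128, 128)
true_centers = [(40.0, 40.0), (44.0, 46.0), (48.0, 52.0), (52.0, 58.0)]
true_sigmas = [5.0, 5.5, 6.0, 6.5]
frames = [gaussian_spot(shape, c, s) for c, s in zip(true_centers, true_sigmas)]

# Step 2: first region of interest R1 (center and half-size of three sigma).
roi = (np.array(true_centers[0]), 3.0 * true_sigmas[0])

# Steps 4 and 5: estimate the frame-to-frame parameters and update the ROI.
for prev, curr in zip(frames[:-1], frames[1:]):
    shift, scale = estimate_motion(prev, curr)
    roi = update_roi(roi, shift, scale)

# Step 6: compare the tracked ROI with the ROI implied by the known motion.
center_error = np.linalg.norm(roi[0] - np.array(true_centers[-1]))
size_error = abs(roi[1] - 3.0 * true_sigmas[-1])
print(f"center error: {center_error:.2e}, size error: {size_error:.2e}")
```

Because the test sequence is generated from known transformations, the final comparison measures only the estimator and the update rule; a different parameter estimator could be substituted in `estimate_motion` without changing the rest of the loop.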

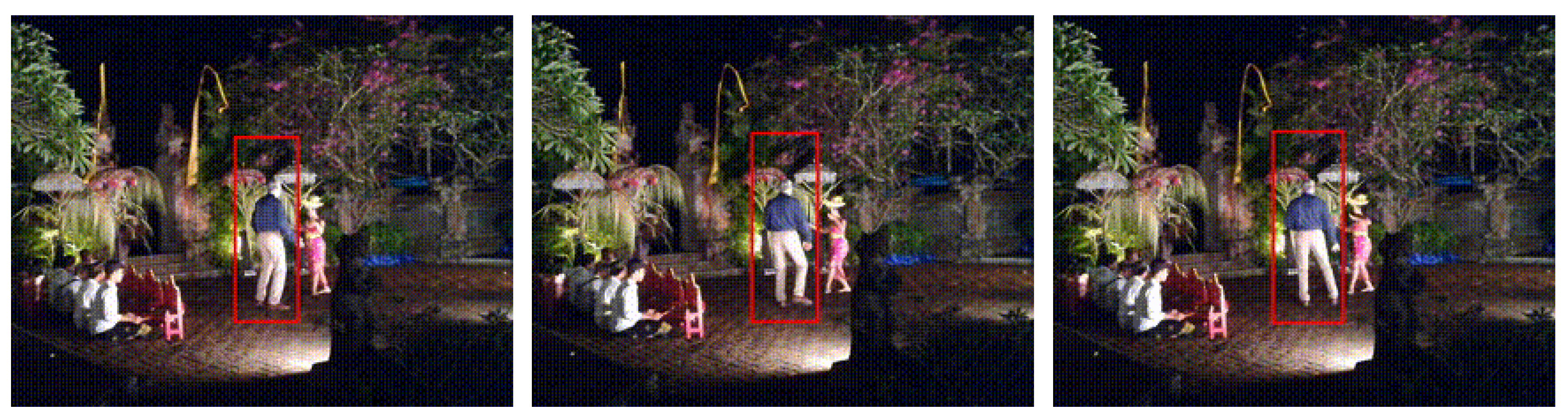

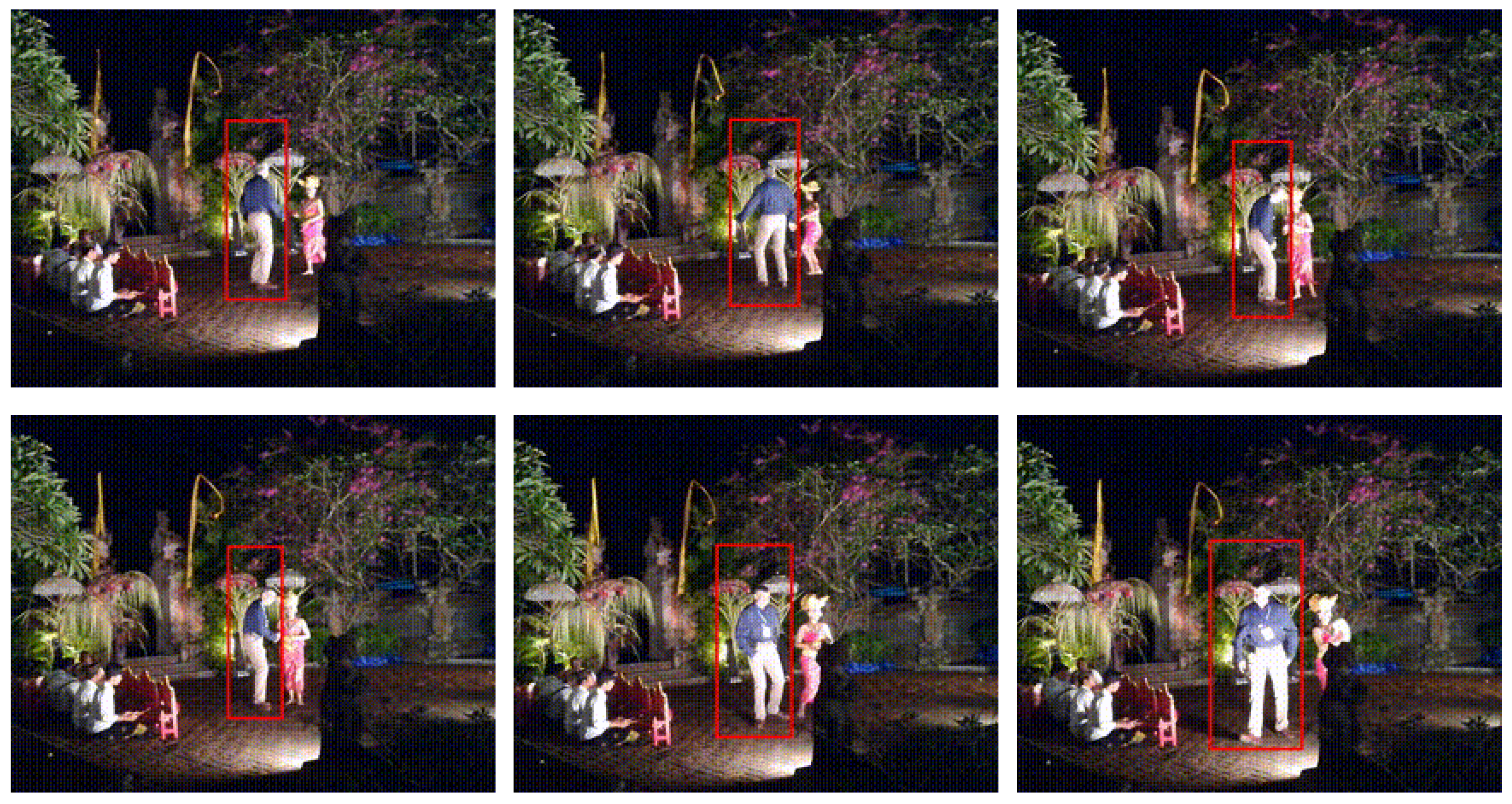

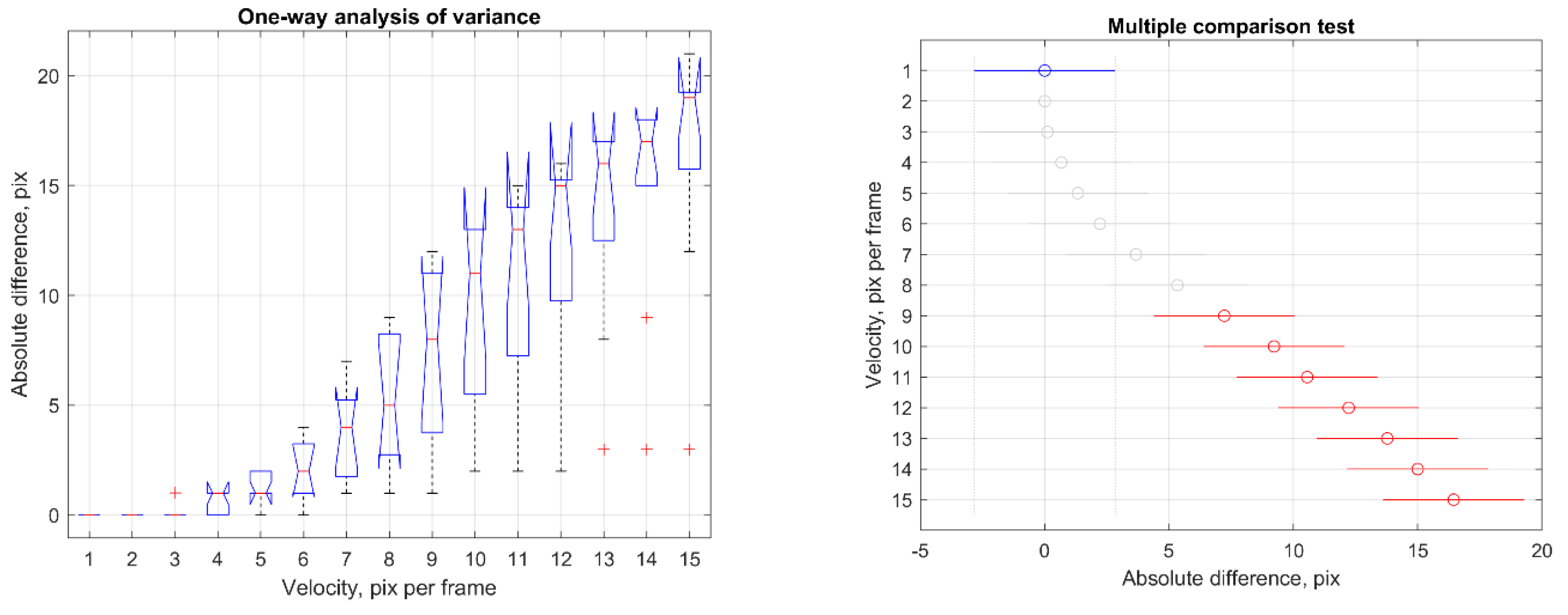

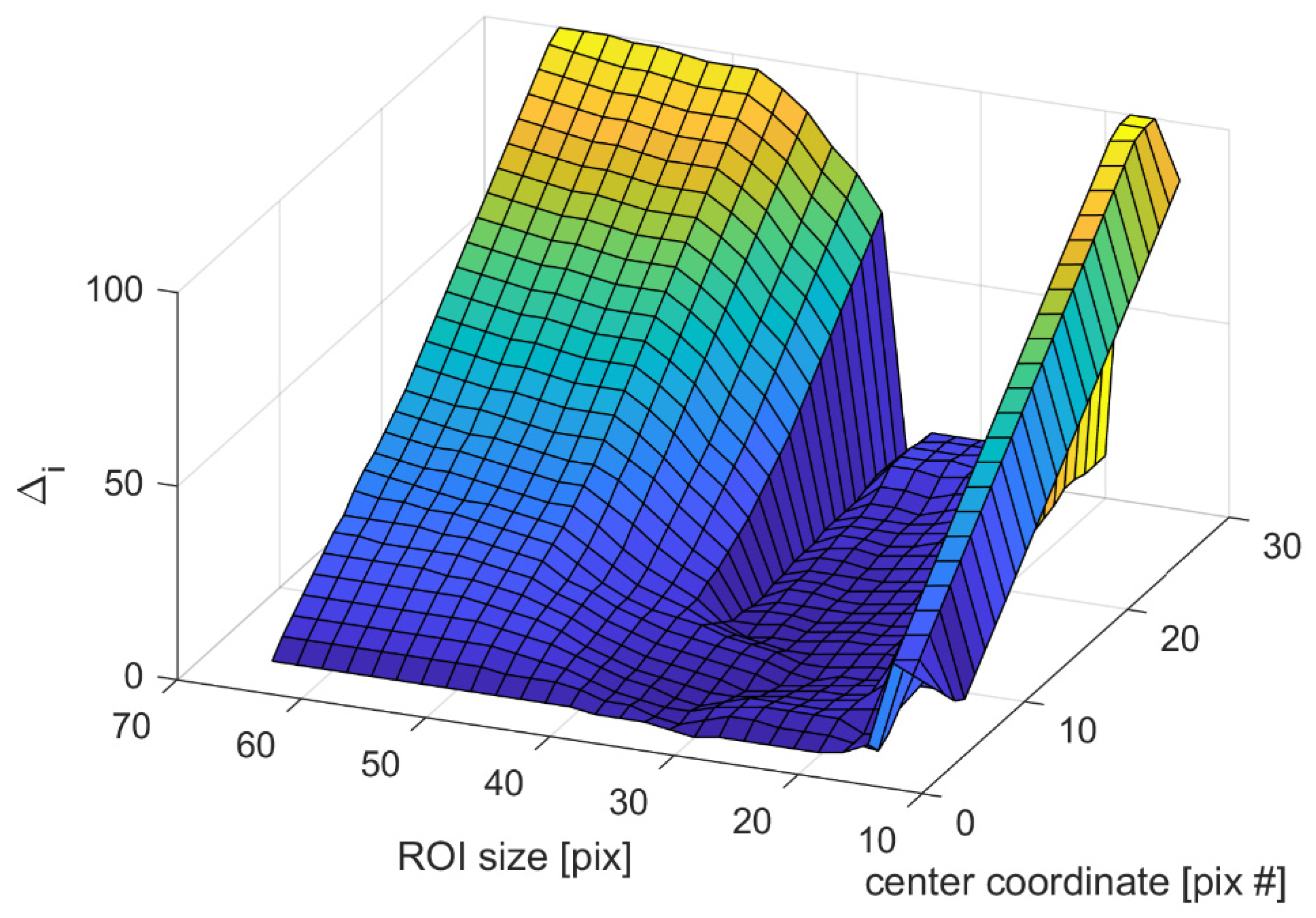

Tracking Limitations

4. Summary and Discussion

Funding

Acknowledgments

Conflicts of Interest


Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).