Submitted:

28 February 2024

Posted:

29 February 2024


Abstract

Keywords:

1. Introduction

1.1. Personalization in healthcare

- (1)

- The increasing availability of data: LLMs are trained on massive datasets of biological and medical texts. The healthcare industry is generating more data than ever before, which is making it possible to train LLMs that can be used to improve patient care.

- (2)

- The decreasing cost of computation: The cost of computing power has been decreasing steadily for many years. This has made it possible to train and deploy LLMs at scale.

- (3)

- The increasing demand for personalized healthcare services: Patients are increasingly demanding personalized healthcare that is tailored to their individual needs. LLMs can be used to generate personalized treatment plans and recommendations, which can help to improve patient outcomes.

- (4)

- The potential to improve efficiency and accuracy: LLMs can be used to automate a variety of tasks in healthcare, such as scheduling appointments and generating reports. This can free up healthcare professionals to focus on providing care to patients. LLMs can also be used to improve the accuracy of diagnosis and treatment.

- Diagnosis and Treatment: LLMs are valuable for analyzing patient data to identify potential health issues, generate treatment plans, and recommend medications.

- Research: LLMs play a role in analyzing extensive datasets of medical research papers, aiding researchers in identifying new trends and gaining insights.

- Education: LLMs can create personalized learning experiences for healthcare professionals and develop educational resources for patients and their families.

- Administrative Tasks: LLMs offer automation capabilities for diverse administrative tasks in healthcare, such as appointment scheduling.

1.2. Advantages of large language models in healthcare AI

- (1)

- Improved Diagnosis and Treatment: LLMs excel in analyzing patient data to identify potential health issues, generate treatment plans, and recommend medications. This enhances the accuracy and efficiency of healthcare delivery.

- (2)

- Support for Research: LLMs contribute to the analysis of extensive datasets of medical research papers, enabling researchers to identify emerging trends and insights. This, in turn, can lead to the development of new treatments and cures.

- (3)

- Personalized Learning Experiences: LLMs can craft personalized learning experiences for healthcare professionals, aiding them in staying abreast of the latest medical knowledge and best practices.

- (4)

- Automated Administrative Tasks: LLMs are adept at automating various administrative tasks in healthcare, including appointment scheduling and report generation. This automation liberates healthcare professionals to concentrate on delivering care to patients.

- (1)

- Collaborative filtering is the most widely used and mature technique. It compares the actions of multiple users to generate personalized suggestions. A typical example appears on e-commerce sites: “Customers who bought this item also bought...”.

- (2)

- Content-based filtering recommends items that are similar to other items preferred by the specific user. It relies on the characteristics of the items themselves, so its recommendations are likely to be highly relevant to a user’s interests (De Croon et al. 2021). This makes content-based filtering especially valuable for application domains with large libraries of a single type of content, such as MedlinePlus’ curated consumer health information (Bocanegra et al. 2017).

- (3)

- Knowledge-based filtering incorporates knowledge through logical inference. It uses explicit knowledge about an item, user preferences, and other recommendation criteria. Knowledge acquisition can also be dynamic, relying on user feedback.

- (4)

- LLM-based filtering relies on prompting and neuro-symbolic reasoning, and is the focus of this chapter.
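To make the first technique in the list above concrete, the following is a minimal sketch of co-occurrence-based collaborative filtering in the “also bought” style. It is an illustration only: the function names `build_cooccurrence` and `also_bought`, and the interaction histories, are hypothetical and do not come from the chapter.

```python
from collections import Counter, defaultdict
from itertools import permutations


def build_cooccurrence(histories):
    """Count how often each ordered pair of items appears in the same user's history."""
    co = defaultdict(Counter)
    for items in histories.values():
        for a, b in permutations(set(items), 2):
            co[a][b] += 1
    return co


def also_bought(co, item, k=2):
    """Return up to k items that most often co-occur with `item` (ties broken by name)."""
    ranked = sorted(co[item].items(), key=lambda kv: (-kv[1], kv[0]))
    return [name for name, _ in ranked[:k]]


# Hypothetical interaction histories (user -> items viewed), invented for illustration.
histories = {
    "u1": ["diabetes_diet", "insulin_basics", "foot_care"],
    "u2": ["diabetes_diet", "insulin_basics"],
    "u3": ["diabetes_diet", "exercise_plan"],
}
co = build_cooccurrence(histories)
print(also_bought(co, "diabetes_diet"))  # ['insulin_basics', 'exercise_plan']
```

The suggestions depend only on the behavior of other users, not on item content, which is the property that distinguishes collaborative from content-based filtering.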

1.3. Contribution

- (1)

- The chapter introduces a neuro-symbolic architecture for personalizing Large Language Models (LLMs). In the realm of prompting techniques, we advance towards meta-prompting, illustrating how these meta-prompts construct user personalization profiles, subsequently applying them during search operations to yield personalized outcomes.

- (2)

- We advocate incorporating abductive reasoning to infer the most suitable answer for a user from that user’s personalization profile. Abduction runs in parallel with fine-tuning to iteratively improve the personalization.

- (3)

- After scrutinizing existing personalization architectures, we identify those most compatible with LLM integration. Health personalization recommendation methods undergo a comparative analysis, with the selection of the most effective LLM-based approach.

- (4)

- Our exploration concludes with a practical exercise in fine-tuning an LLM on treatment recommendation data.

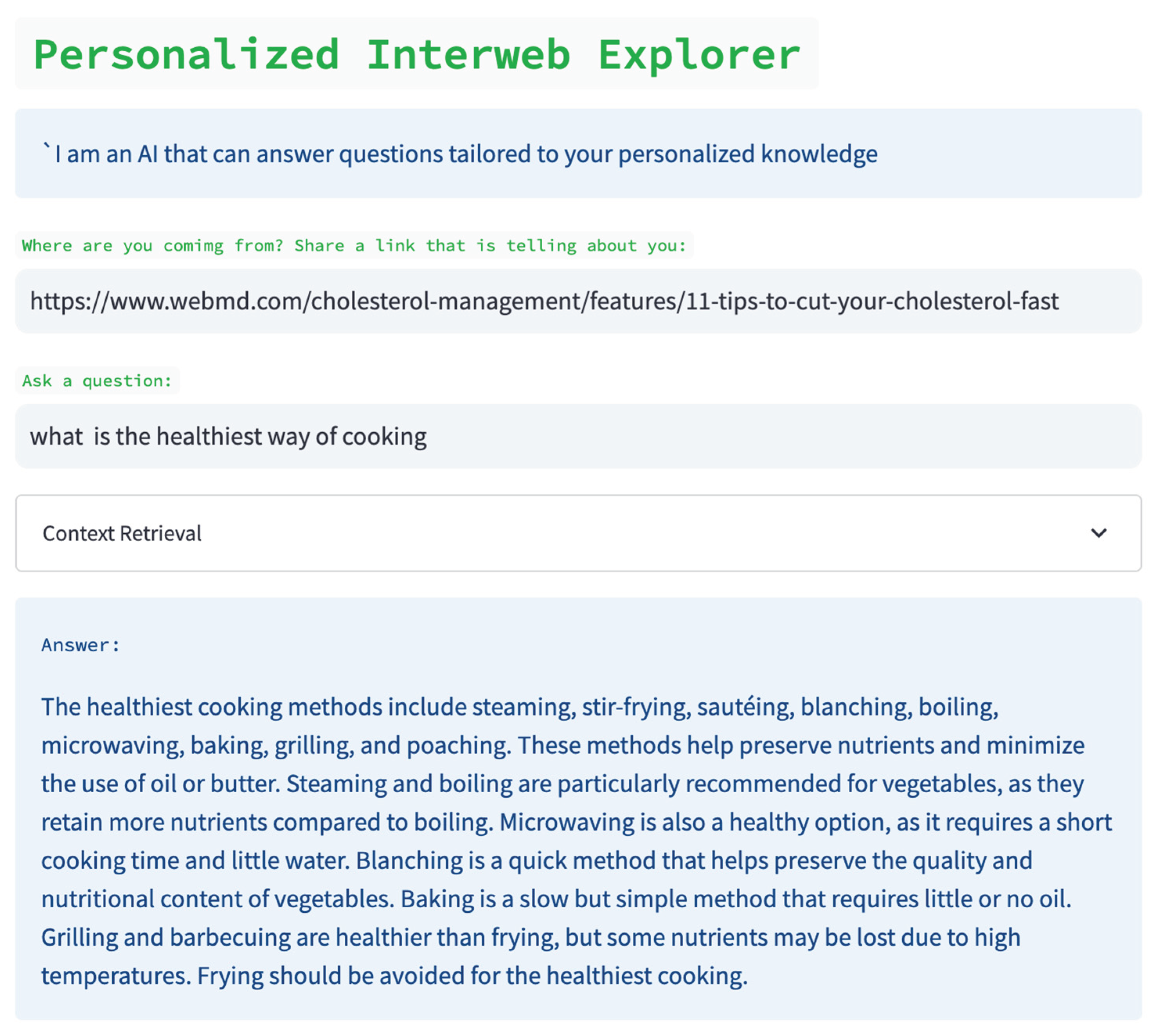

2. Personalization scenarios

- 1)

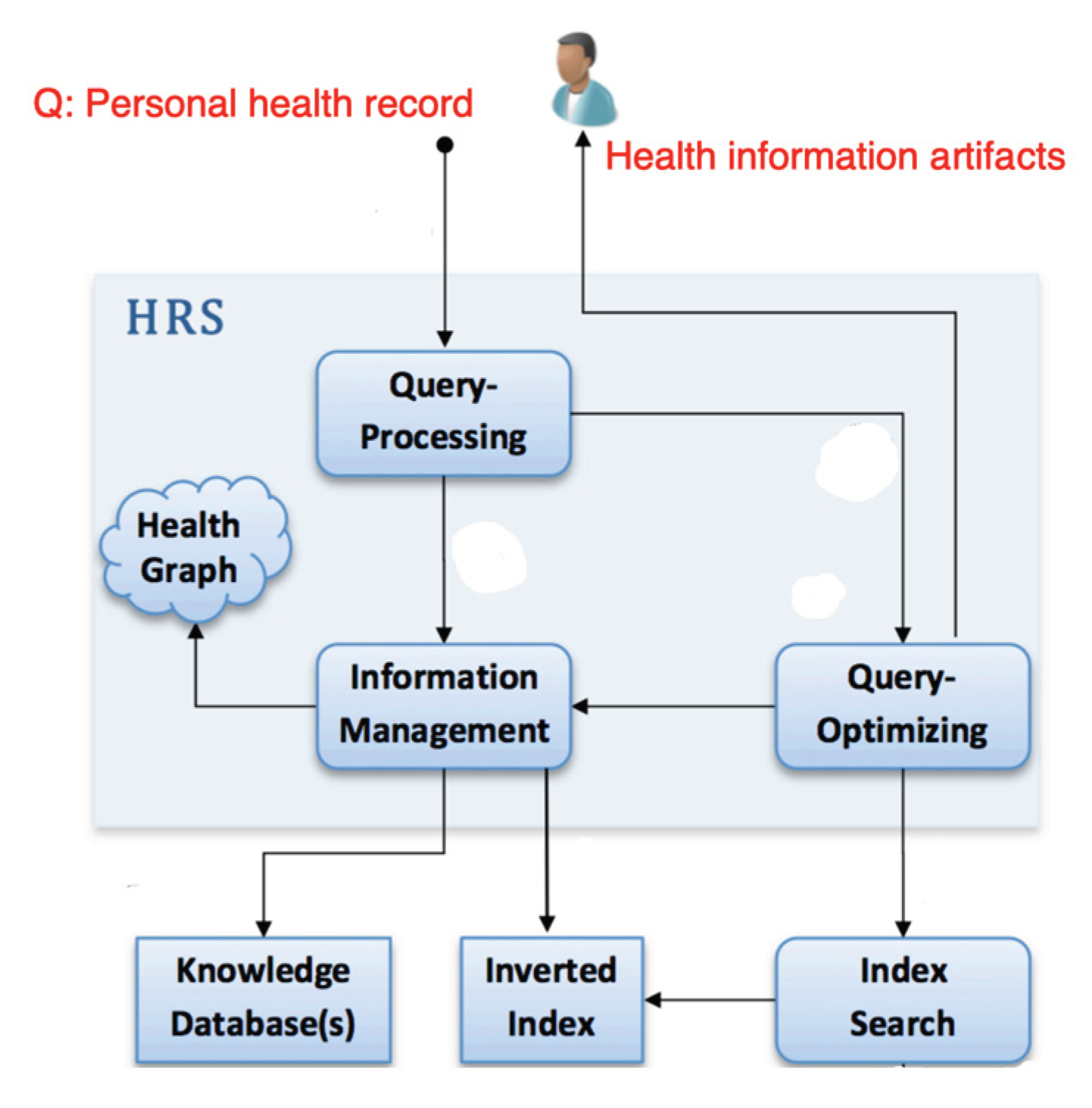

- Search engine. Search engines are widely employed to provide external knowledge to LLMs, reducing LLMs’ memory burden and alleviating the occurrence of hallucinations in LLMs’ responses.

- 2)

- Recommendation engine. Some works have attempted to alleviate the memory burden of LLMs by equipping them with a recommendation engine as a tool, enabling LLMs to offer recommendations grounded on the item corpus.

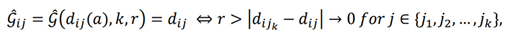

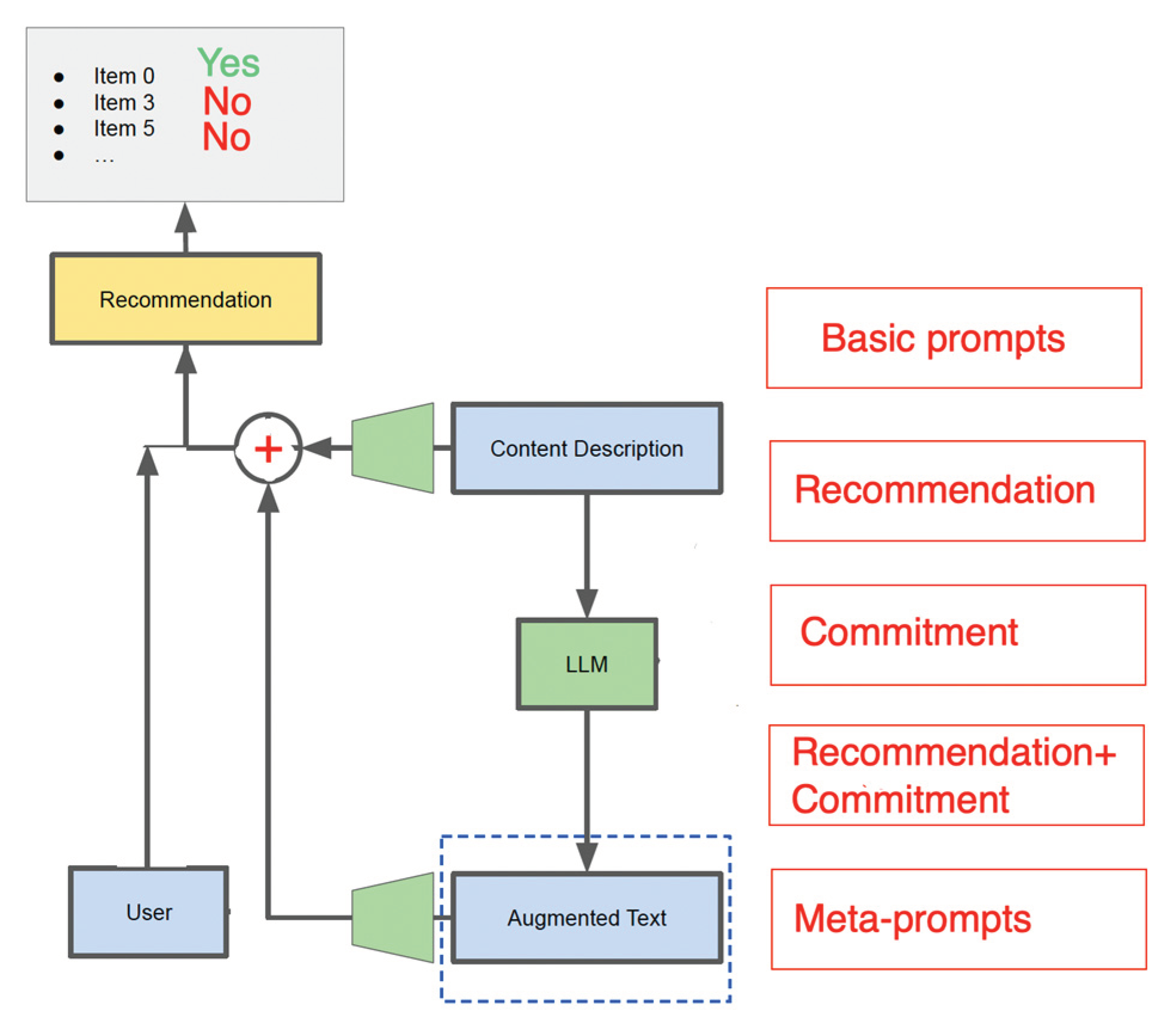

- (1)

- Abductive reasoning, which requires an LLM to explain why a certain item is recommended, given the Personalization User Profile;

- (2)

- Object-level Prompt, which is formulated as an exact task of providing an answer for a certain category / specialty of the user;

- (3)

- Meta-level Prompt, which is an abstract request, such as finding commonalities in user documents and extracting them.

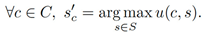

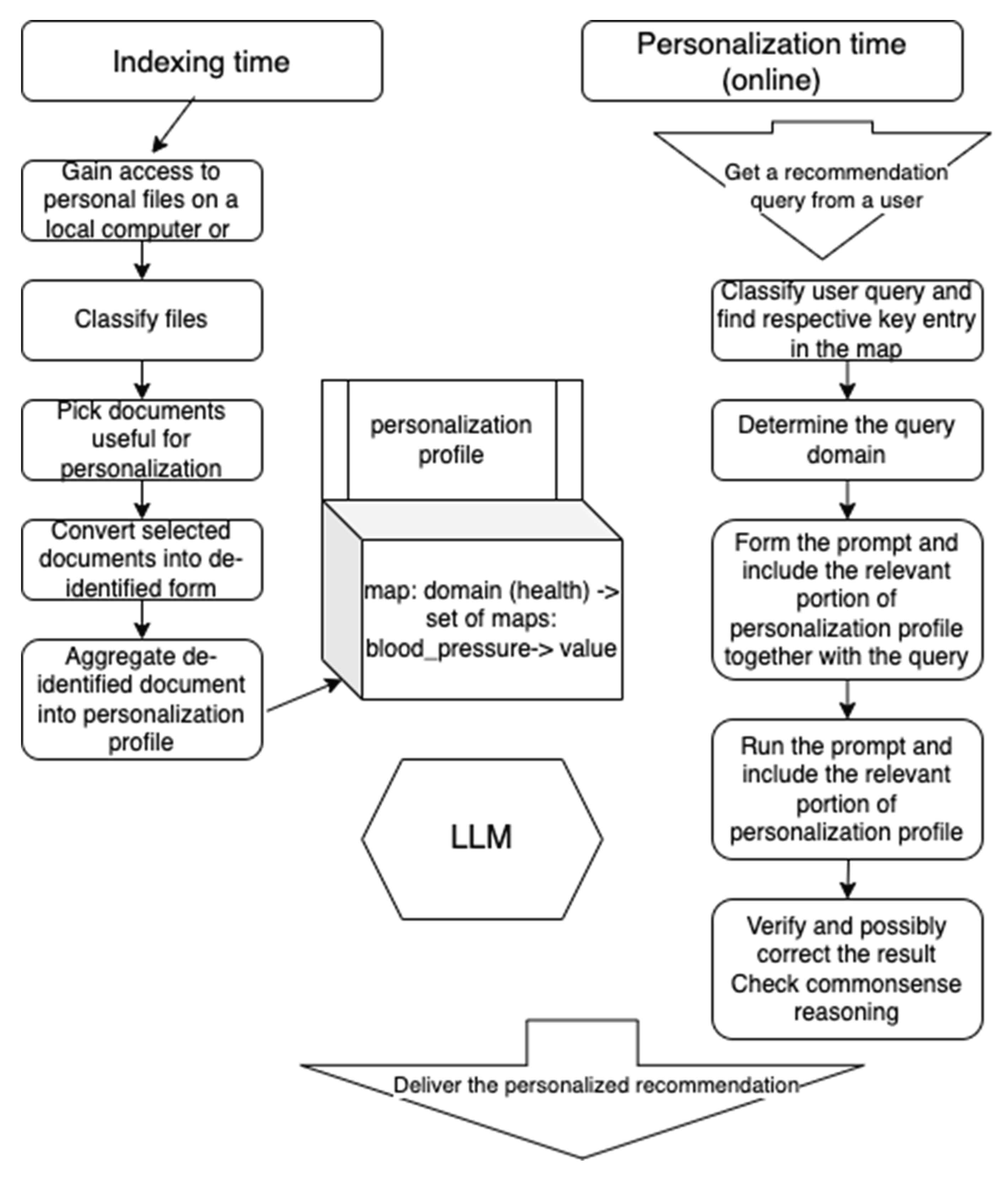

2.1. Problem formulation

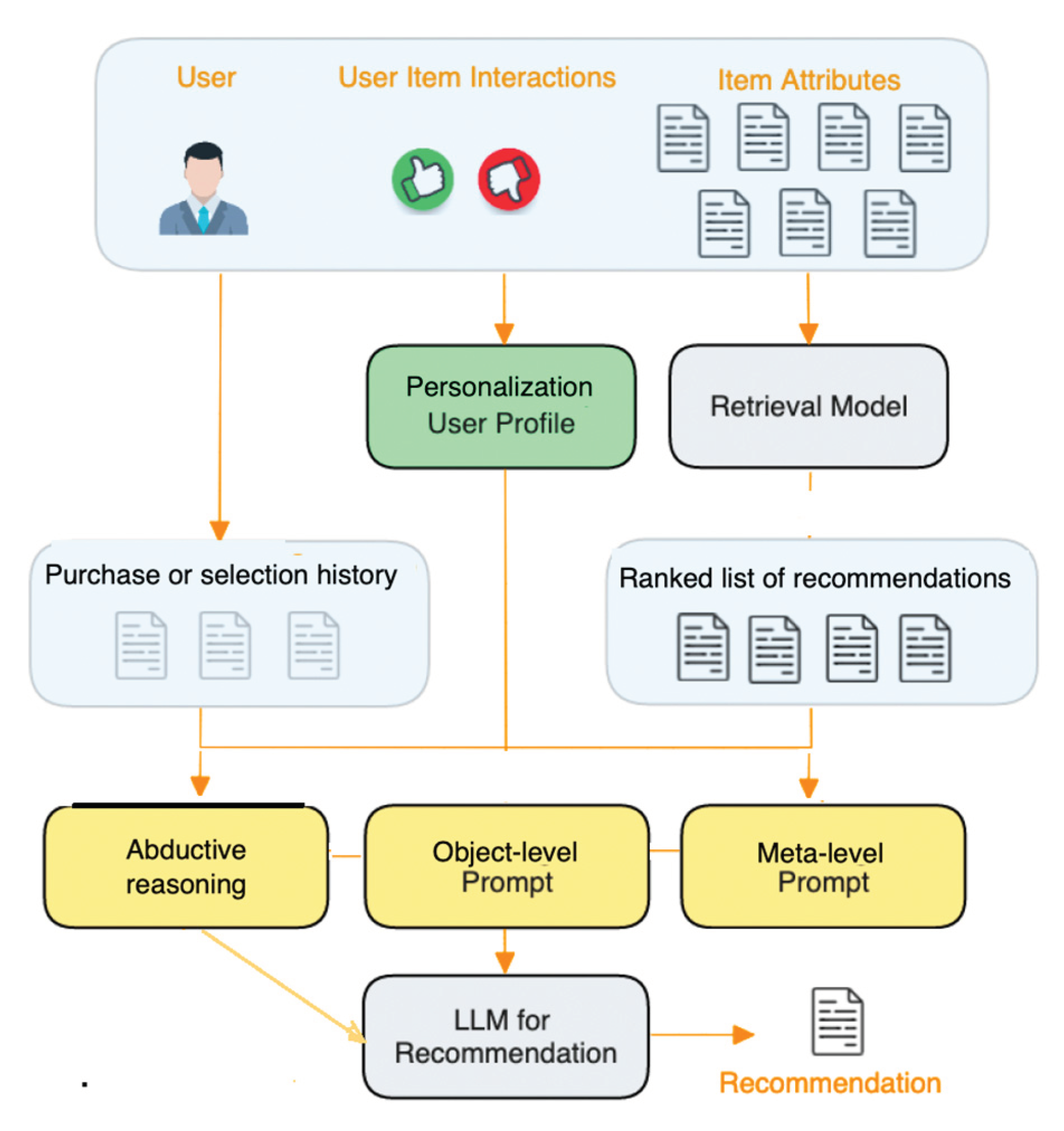

- (1)

- a query construction component that transforms the input ti into a query q for retrieval from the user’s personalization profile;

- (2)

- a retrieval component IR(q; Pu) that accepts a query q and a user profile Pu, and retrieves the profile entries deemed most relevant; and

- (3)

- a prompt construction component p that assembles a prompt for user u based on input ti and the obtained entries.
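The three components above can be sketched as a small pipeline. This is a minimal sketch under stated assumptions, not the chapter’s implementation: the query is taken to be the input itself, retrieval ranks profile entries by plain word overlap (a stand-in for whatever retriever is actually used), and the names `build_query`, `retrieve`, `build_prompt`, and the sample profile are hypothetical.

```python
import re


def tokenize(text):
    """Lowercase the text and split it into a set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def build_query(t_i):
    """Query construction: in this sketch the query q is simply the input t_i."""
    return t_i


def retrieve(q, profile, k=2):
    """IR(q; P_u): rank profile entries by word overlap with q and keep the top k."""
    q_tokens = tokenize(q)
    scored = [(len(q_tokens & tokenize(e)), idx, e) for idx, e in enumerate(profile)]
    scored.sort(key=lambda s: (-s[0], s[1]))
    return [entry for score, _, entry in scored[:k] if score > 0]


def build_prompt(t_i, entries):
    """Prompt construction: prepend the retrieved profile entries to the input."""
    context = "\n".join(f"- {e}" for e in entries)
    return f"User profile entries:\n{context}\n\nRequest: {t_i}"


# Hypothetical user profile P_u, invented for illustration.
profile_u = [
    "Diagnosed with type 2 diabetes in 2019",
    "Prefers vegetarian meals",
    "Allergic to penicillin",
]
t_i = "Suggest vegetarian meals suitable for diabetes"
entries = retrieve(build_query(t_i), profile_u)
prompt = build_prompt(t_i, entries)
print(prompt)
```

The resulting prompt would then be passed to the LLM. Keeping the three steps as separate functions allows the retriever to be replaced without touching the prompt template.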

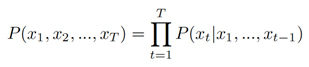

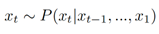

3. Transformer-based personalization

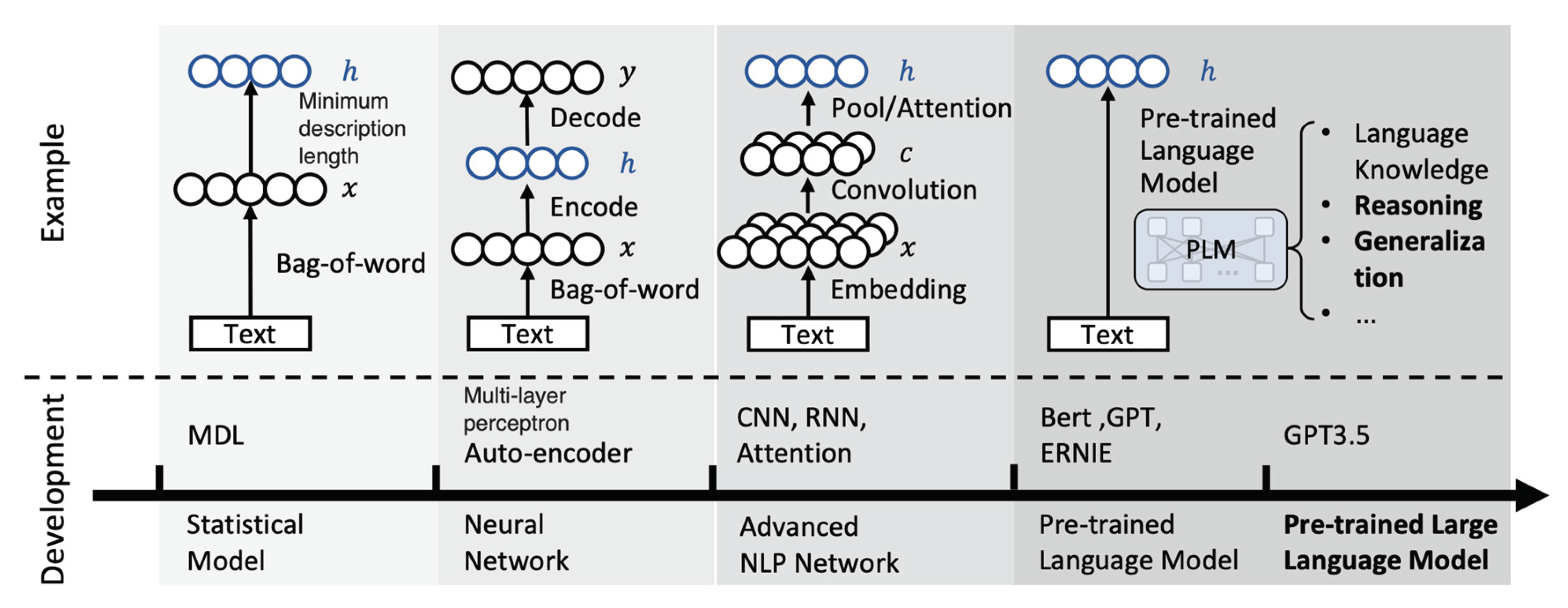

3.1. History of recommendation development

- (1)

- MDL (Minimum Description Length): The concept of recommendation systems can be traced back to the late 20th century, with early models like MDL. These systems focused on simplicity and used algorithms to minimize the description length of the recommendation, laying the foundation for future developments.

- (2)

- MLP (Multilayer Perceptron): As neural networks gained prominence in the late 20th century, MLPs became a notable recommendation technology. These multilayered artificial neural networks were used for collaborative filtering and content-based recommendations.

- (3)

- BERT (Bidirectional Encoder Representations from Transformers): In 2018, BERT, a transformer-based model, revolutionized natural language processing. BERT excelled at understanding context and semantics in textual data, enhancing recommendation systems’ ability to grasp user preferences and deliver more accurate suggestions.

- (4)

- GPT (Generative Pre-trained Transformer) Series: GPT models, developed by OpenAI, represent a breakthrough in recommendation technology. Starting with GPT-1 and progressing to GPT-3.5, these models are pre-trained on massive datasets, enabling them to generate human-like text and understand context, making them applicable to a wide range of natural language processing tasks, including advanced recommendation systems.

4. Offline and online functionality

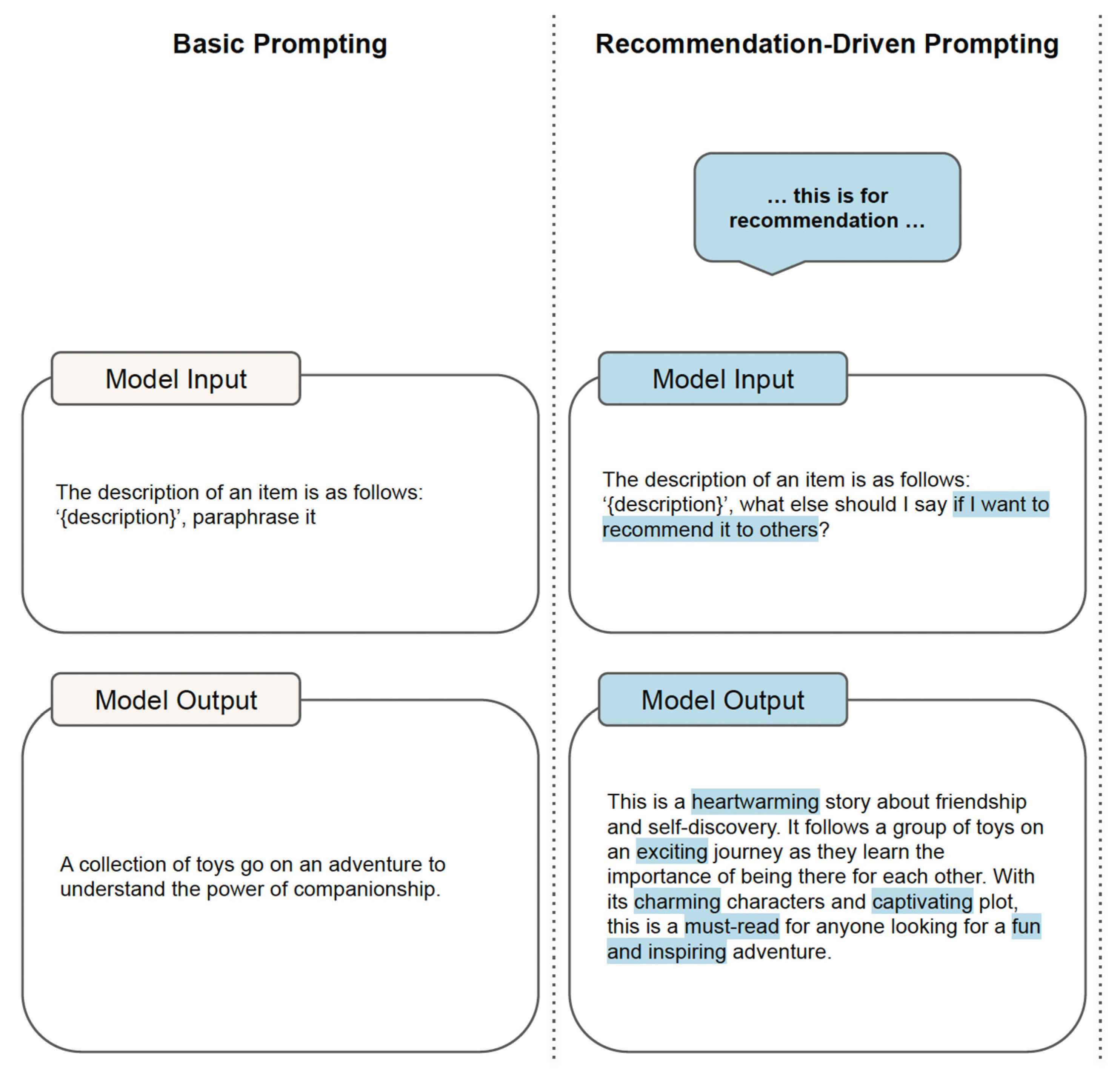

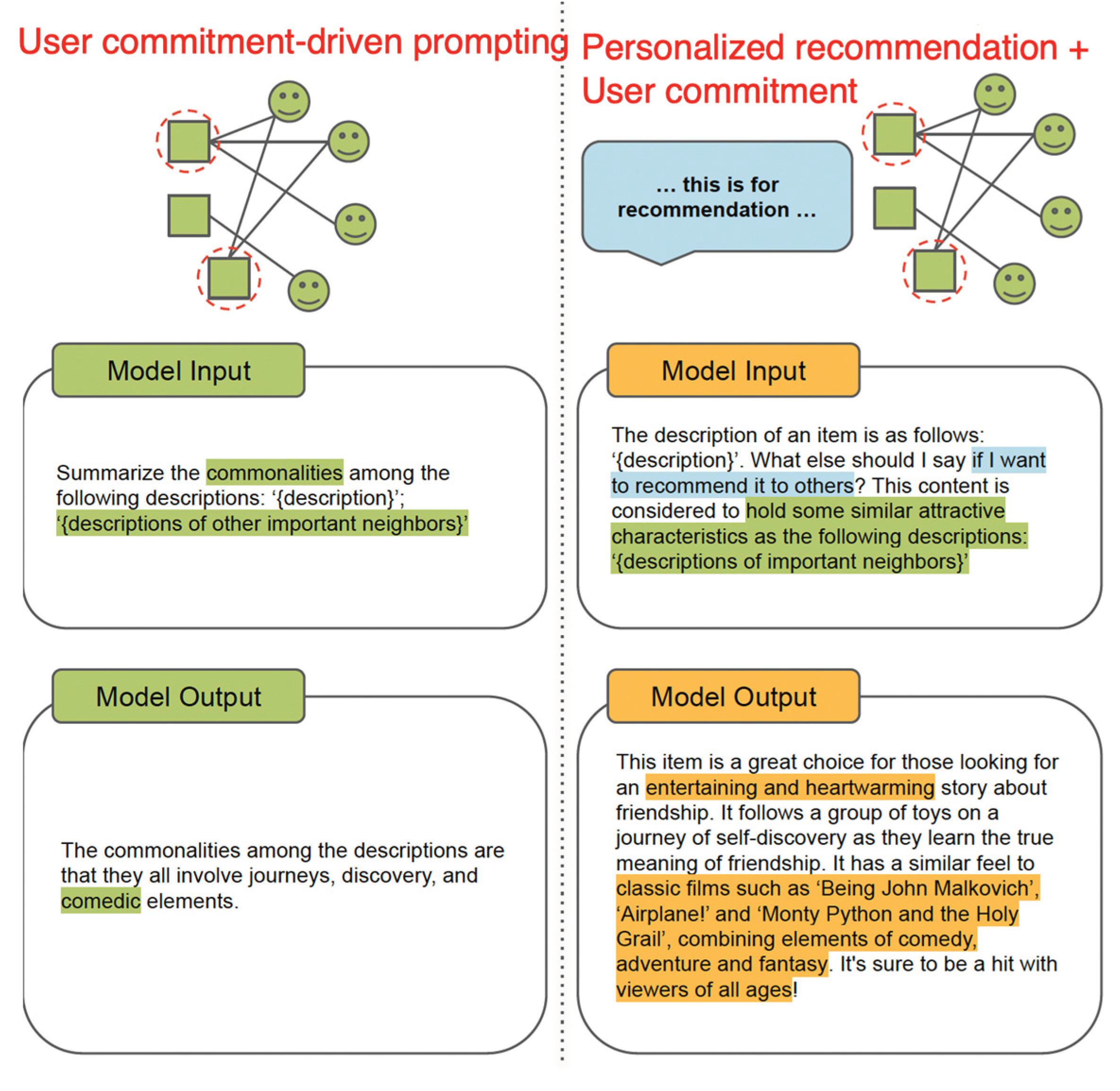

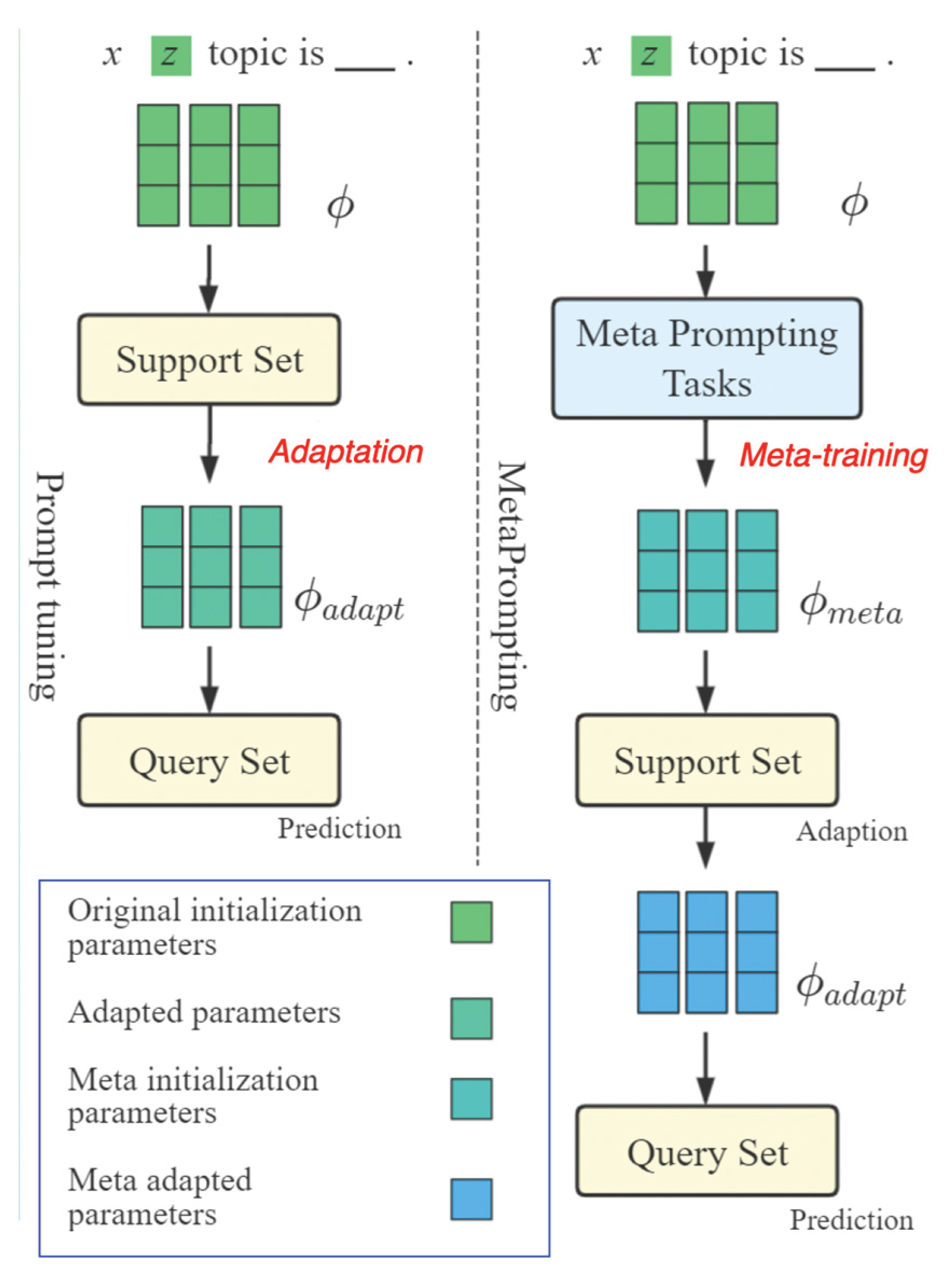

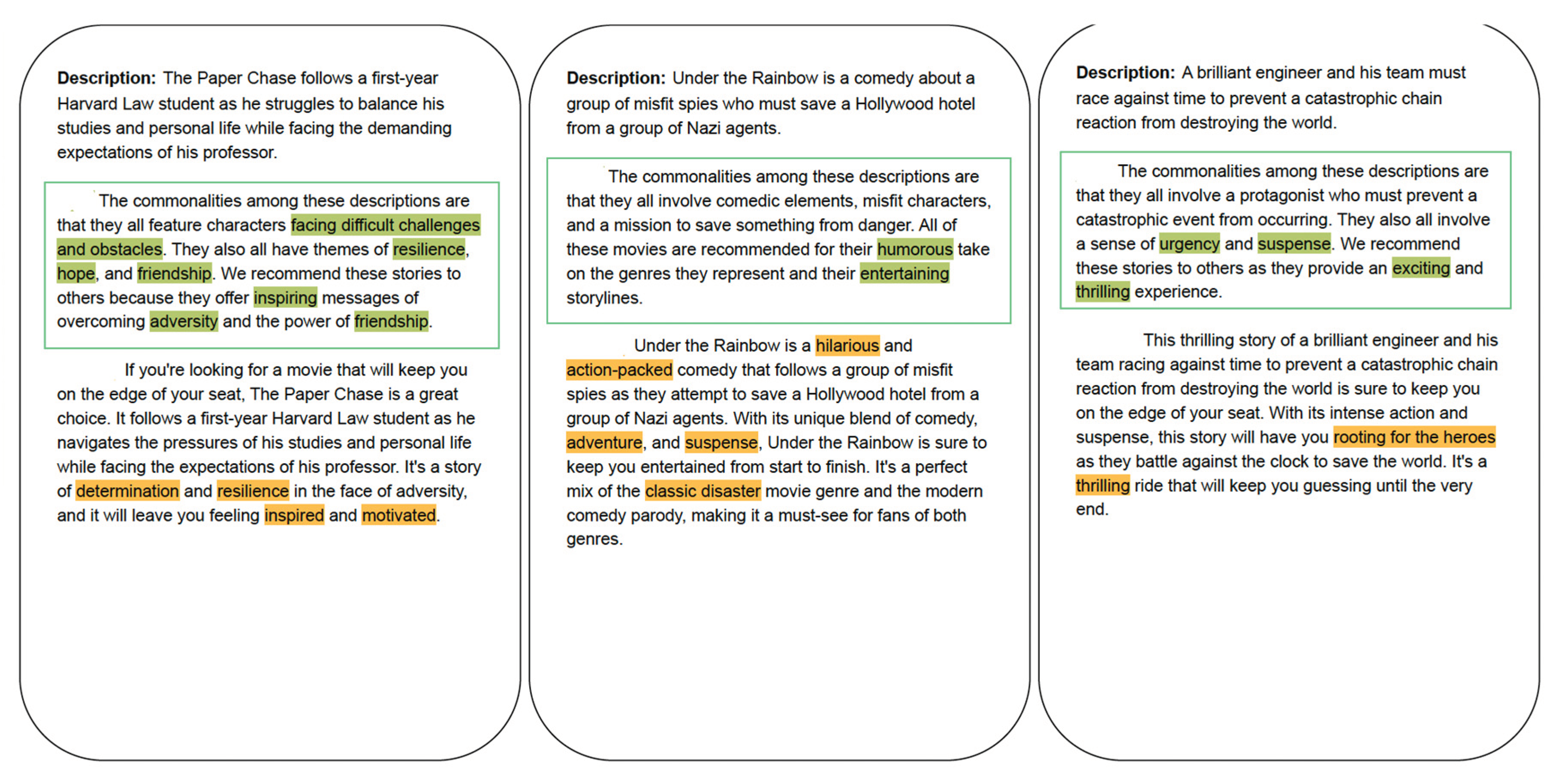

5. LLM Personalization via meta-prompting

- (1)

- Enhanced Context Precision: Explicitly stating that the generated content description is intended for content recommendation provides models with a clearer understanding of the task. This additional context enables models to align their responses more closely with the purpose of generating content descriptions for recommendation purposes.

- (2)

- Guided Generation for Recommendation: The specific instruction serves as a guiding cue for models, directing their attention towards generating content descriptions better suited for recommendation scenarios. The mention of “content recommendation” likely prompts the Large Language Model (LLM) to focus on key features, relevant details, and aspects of the content that are more instrumental in guiding users toward their preferred choices.

- (3)

- Heightened Accuracy of Recommended Entities: The instruction assists the LLM in producing content descriptions tailored to the requirements of content recommendation. This alignment with the recommendation task results in more relevant and informative descriptions, as the LLM is primed to emphasize aspects crucial for users seeking recommendations.

A meta-prompt for building a personalized user profile

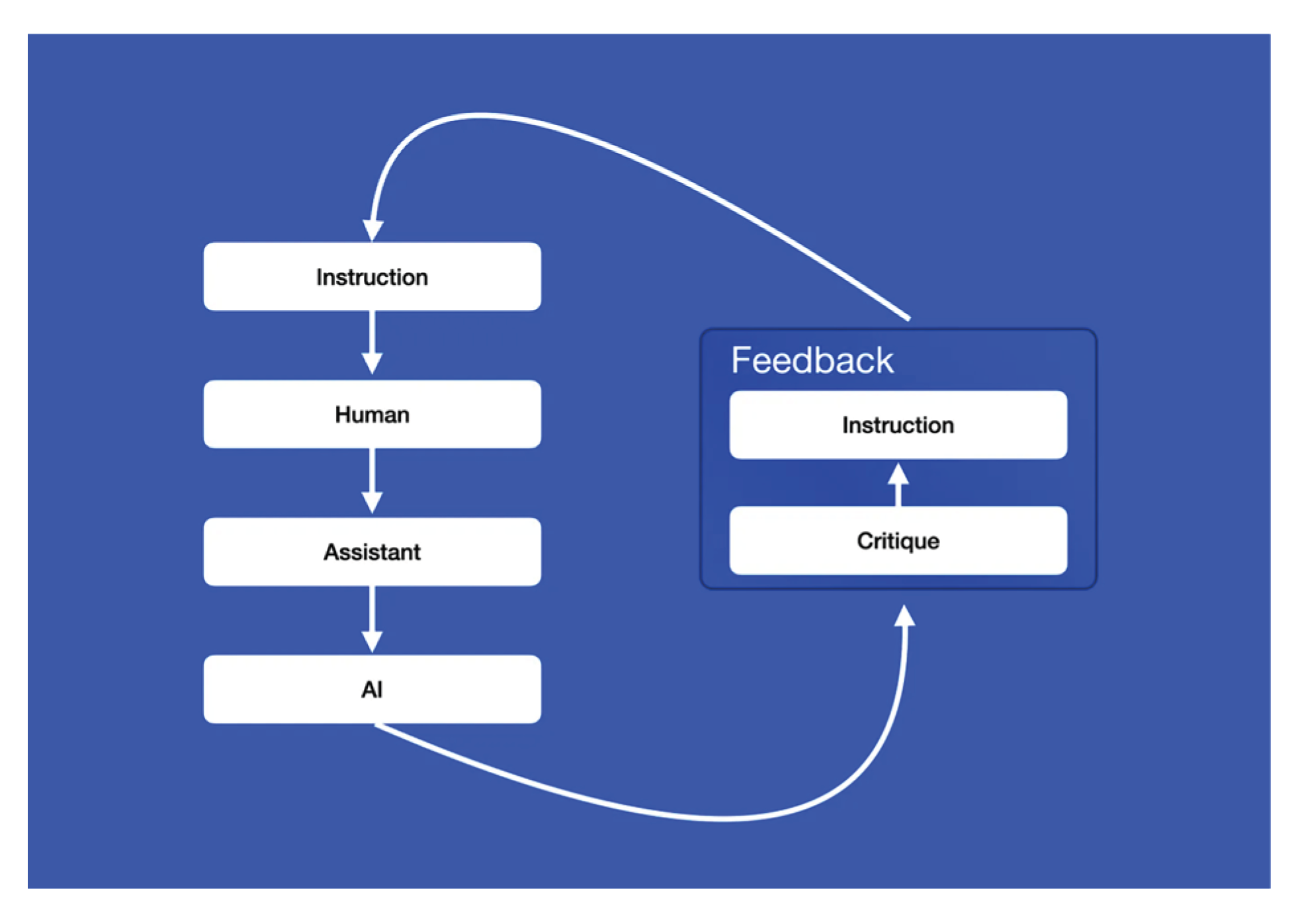

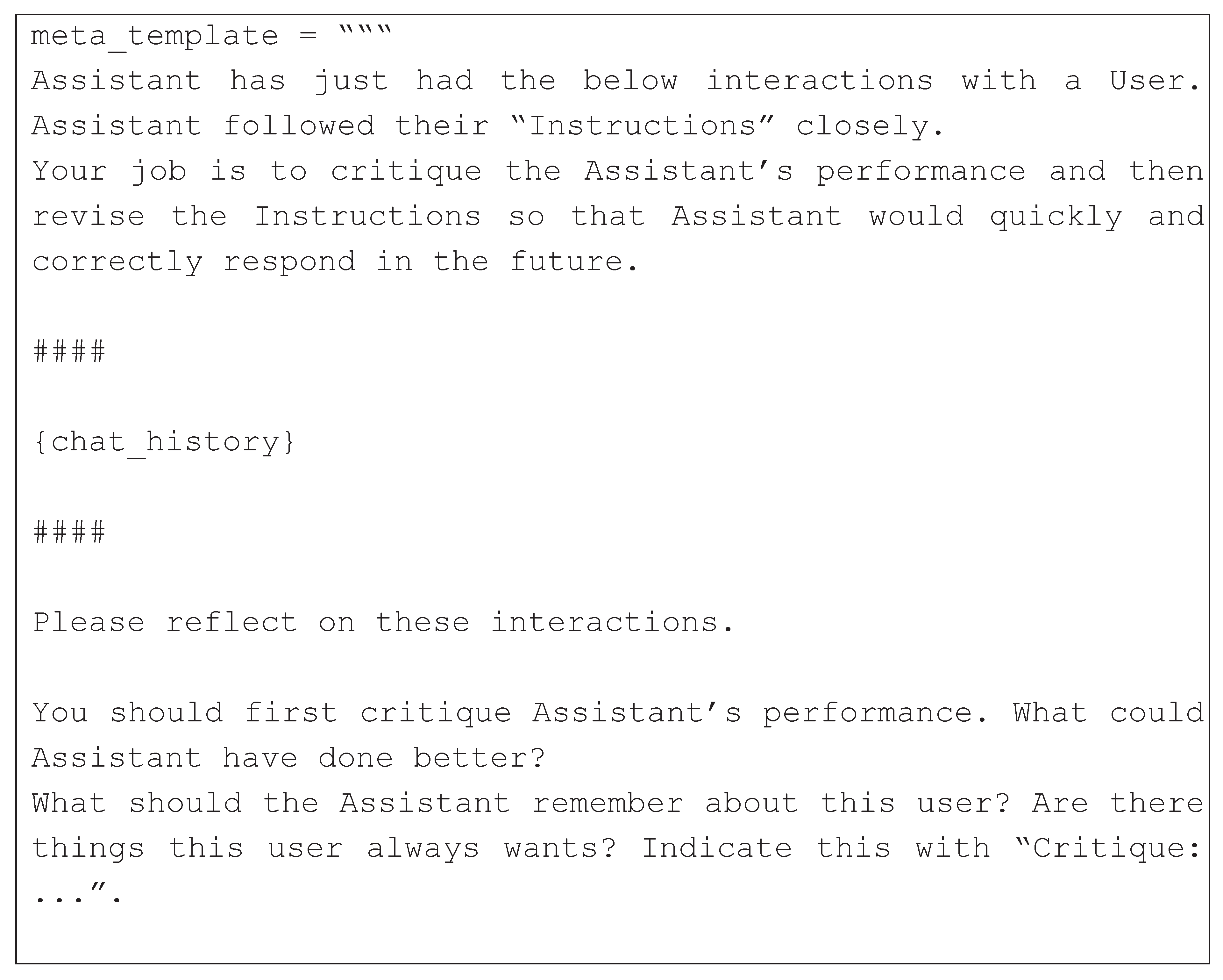

5.1. Designing meta-prompts

- (1)

- Engage in conversation with a user, who may provide requests, instructions, or feedback.

- (2)

- At the end of an episode, generate self-criticism and a new instruction using the meta-prompt.
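The two steps above can be sketched as a loop around a generic text-completion function. This is a minimal sketch, assuming the model is available as a callable `llm(prompt) -> str`. The meta-prompt wording, the `Instructions:` line convention, and the `stub_llm` stand-in are assumptions made for illustration, not the prompts used in the chapter.

```python
META_PROMPT = (
    "The assistant followed these instructions:\n{instructions}\n\n"
    "Conversation:\n{history}\n\n"
    "Criticize the assistant's performance, then write improved instructions "
    "on a final line starting with 'Instructions:'."
)


def run_episode(llm, instructions, user_turns):
    """Step 1: converse with the user for one episode and return the transcript."""
    history = []
    for turn in user_turns:
        history.append(f"User: {turn}")
        reply = llm(f"{instructions}\n" + "\n".join(history) + "\nAssistant:")
        history.append(f"Assistant: {reply}")
    return history


def revise_instructions(llm, instructions, history):
    """Step 2: apply the meta-prompt to obtain self-criticism and new instructions."""
    critique = llm(META_PROMPT.format(instructions=instructions, history="\n".join(history)))
    for line in reversed(critique.splitlines()):
        if line.startswith("Instructions:"):
            return line[len("Instructions:"):].strip()
    return instructions  # keep the old instructions if no new ones were produced


def stub_llm(prompt):
    """Stand-in for a real model call, used only so the sketch runs offline."""
    if prompt.startswith("The assistant followed"):
        return ("Critique: the answers were too generic.\n"
                "Instructions: Ask about dietary restrictions first.")
    return "Here is a general suggestion."


instructions = "Be a helpful health assistant."
history = run_episode(stub_llm, instructions, ["What should I eat?", "That is too generic."])
instructions = revise_instructions(stub_llm, instructions, history)
print(instructions)  # Ask about dietary restrictions first.
```

In practice, the revised instructions would seed the next episode, so that the instructions improve over repeated interactions with the same user.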

5.2. Automated learning of meta-prompts

5.3. Prompting evaluation architecture

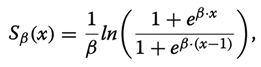

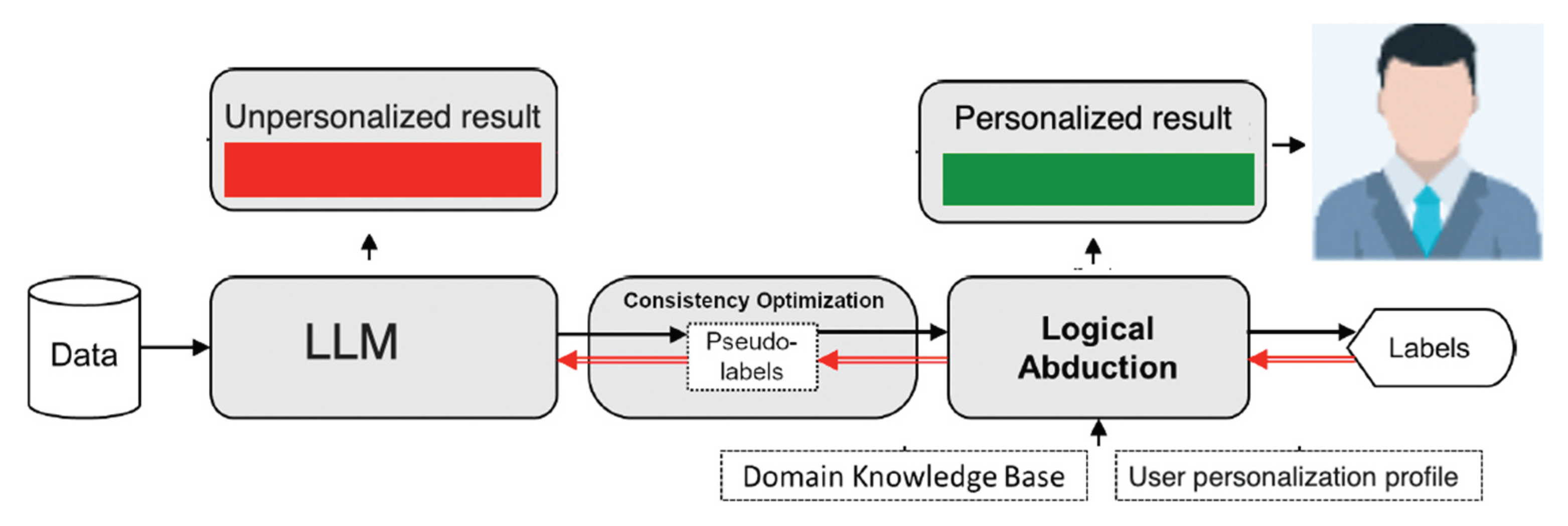

6. LLM Personalization via abduction

- (1)

- the ML model has no ground truth for the primitive logic facts to train on;

- (2)

- without accurate primitive logic facts, the reasoning model can hardly deduce the correct output or learn the right logical theory.

- (1)

- p: X → P is a mapping from the feature space to primitive symbols, i.e., it is a perception model formulated as a conventional machine learning model;

- (2)

- ΔC is a set of first-order logical clauses that define the target concept C with B, which is called knowledge model.
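The interplay of the perception model p and the knowledge model can be illustrated with a toy abduction step. This is a minimal sketch under strong assumptions: the knowledge model is a single hard-coded rule (the concept holds exactly when both primitive facts hold), the perception model is represented only by its output probabilities, and abduction is done by brute-force enumeration. The symbols `fever` and `cough` and all numbers are invented for illustration.

```python
from itertools import product


def concept_holds(facts):
    """Toy knowledge model: the target concept holds iff both primitive facts hold."""
    return facts["fever"] and facts["cough"]


def abduce(perception_probs, observed_concept):
    """Return the most probable primitive facts consistent with the observed concept.

    perception_probs maps each primitive symbol to the perception model's
    estimated probability that the symbol is true.
    """
    symbols = sorted(perception_probs)
    best, best_score = None, -1.0
    for values in product([True, False], repeat=len(symbols)):
        facts = dict(zip(symbols, values))
        if concept_holds(facts) != observed_concept:
            continue  # inconsistent with the knowledge model, so discard
        score = 1.0
        for s in symbols:
            p = perception_probs[s]
            score *= p if facts[s] else 1.0 - p
        if score > best_score:
            best, best_score = facts, score
    return best


probs = {"fever": 0.9, "cough": 0.4}
print(abduce(probs, observed_concept=True))   # {'cough': True, 'fever': True}
print(abduce(probs, observed_concept=False))  # {'cough': False, 'fever': True}
```

The abduced facts can serve as pseudo-labels for retraining the perception model, which addresses the missing ground truth for primitive facts. Enumeration is exponential in the number of symbols, so it is workable only for toy cases like this one.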

7. Evaluation

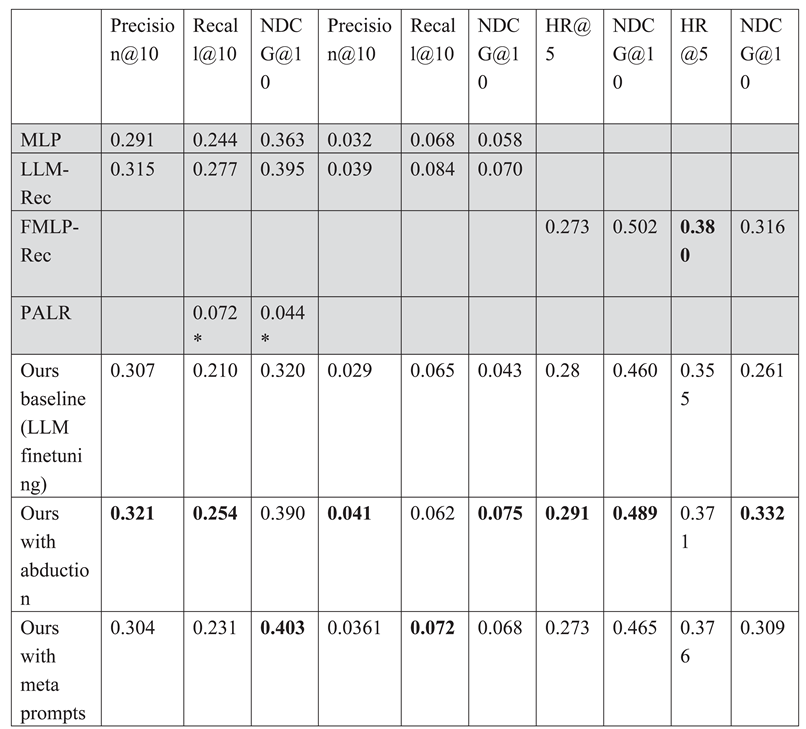

- (1)

- Our baseline with LLM fine-tuning; its implementation in the health domain is available at (Galitsky-github 2024);

- (2)

- Baseline extended with abduction;

- (3)

- Baseline extended with meta prompts.

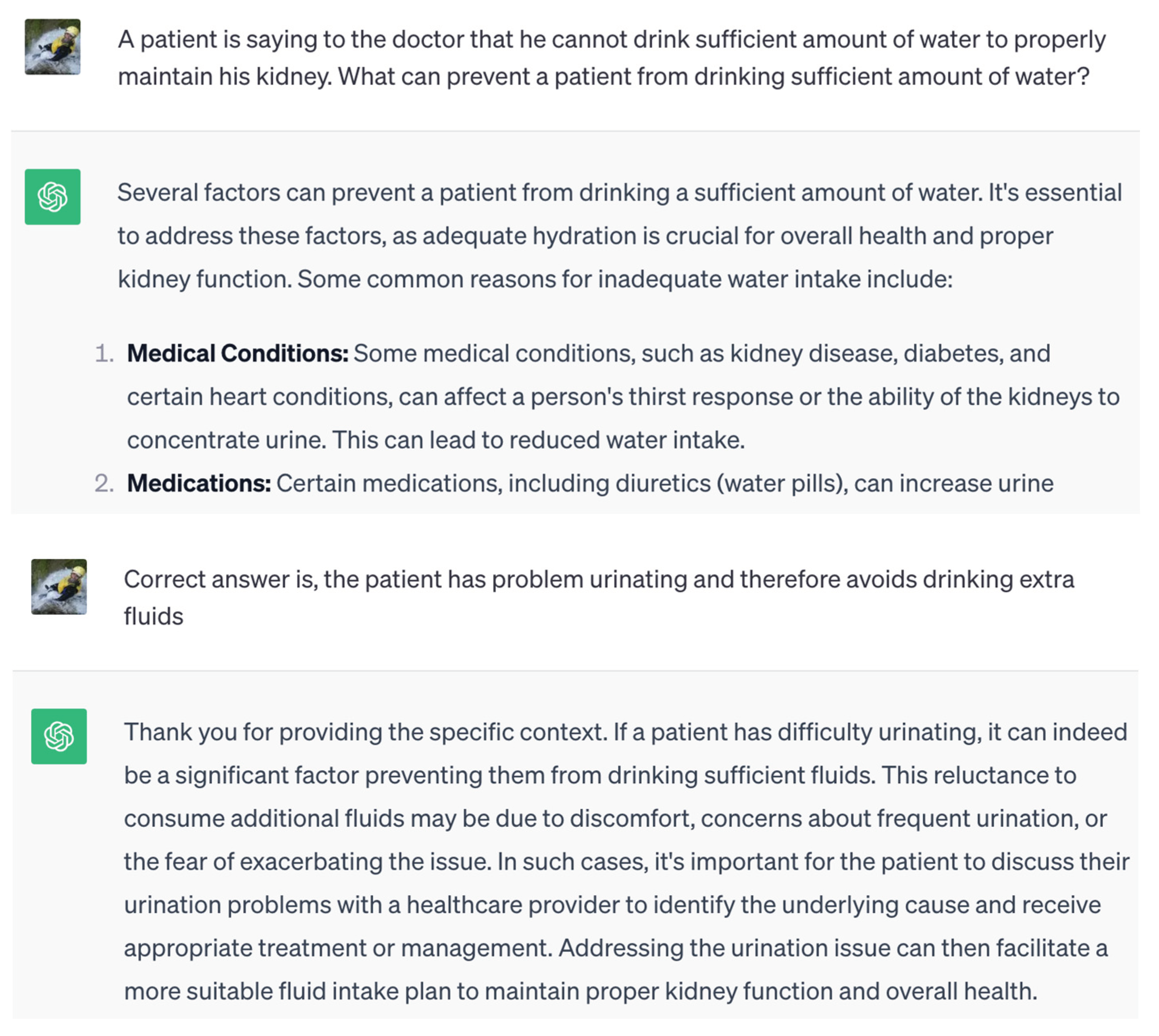

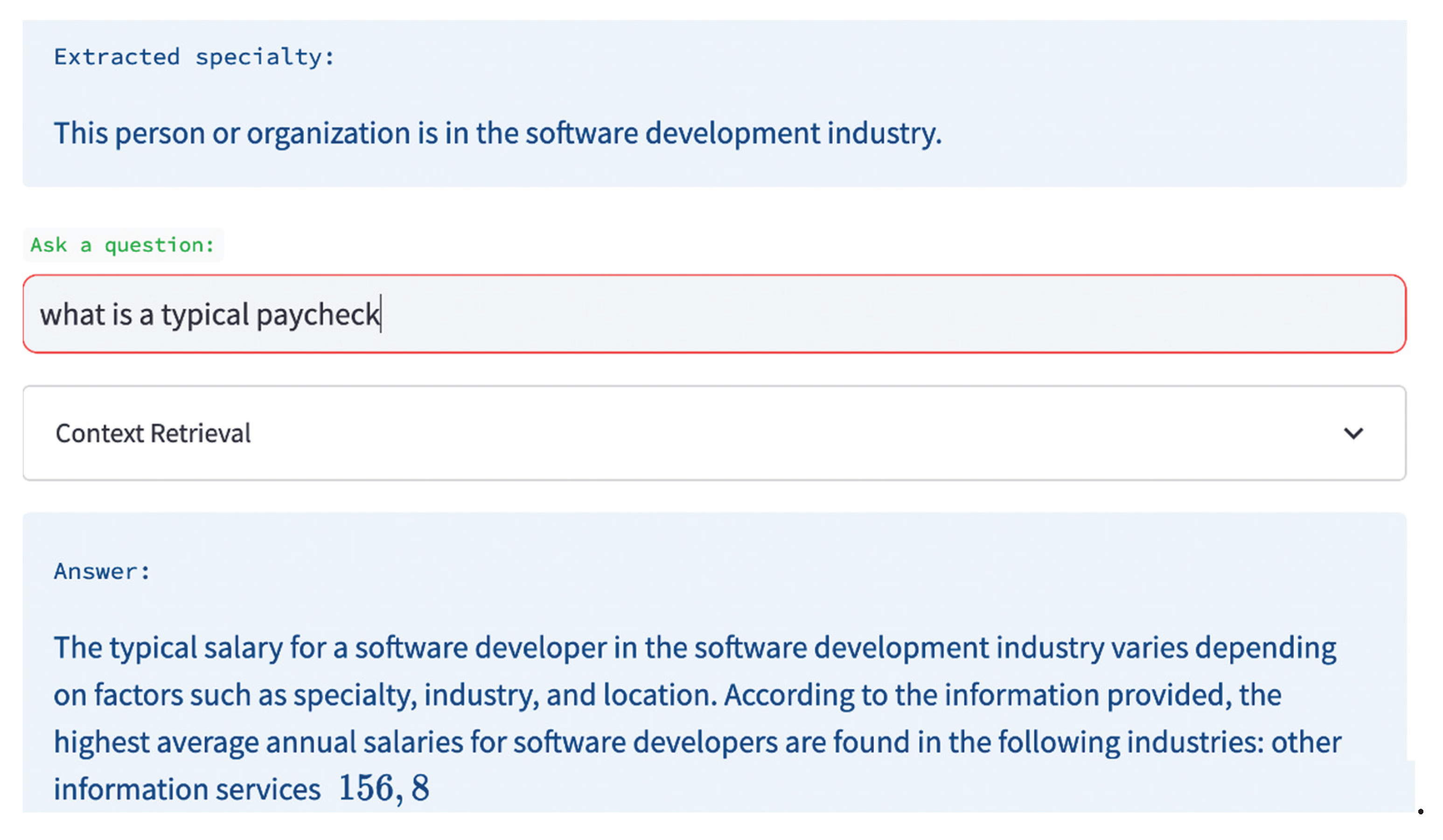

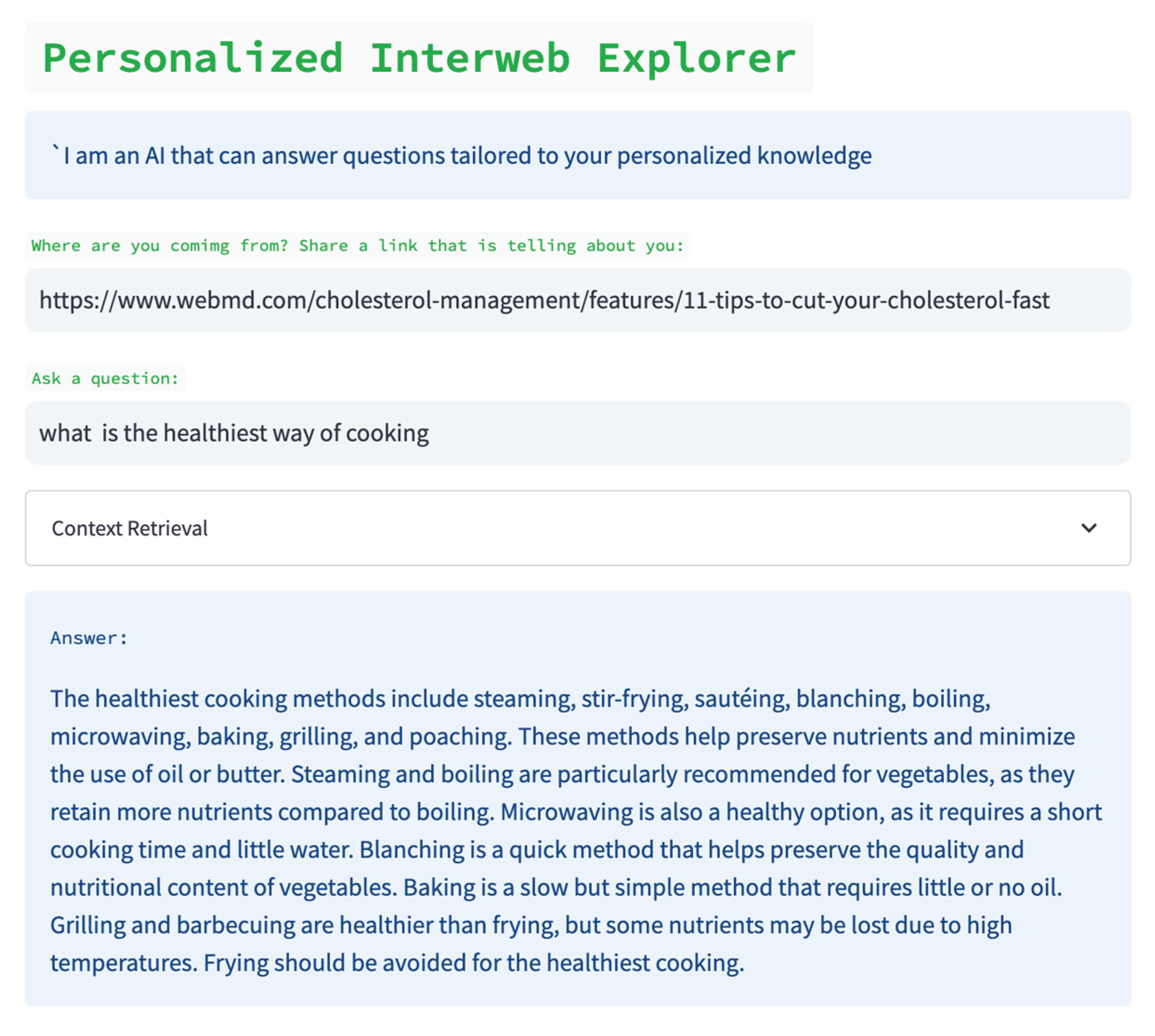

7.1. Sample sessions

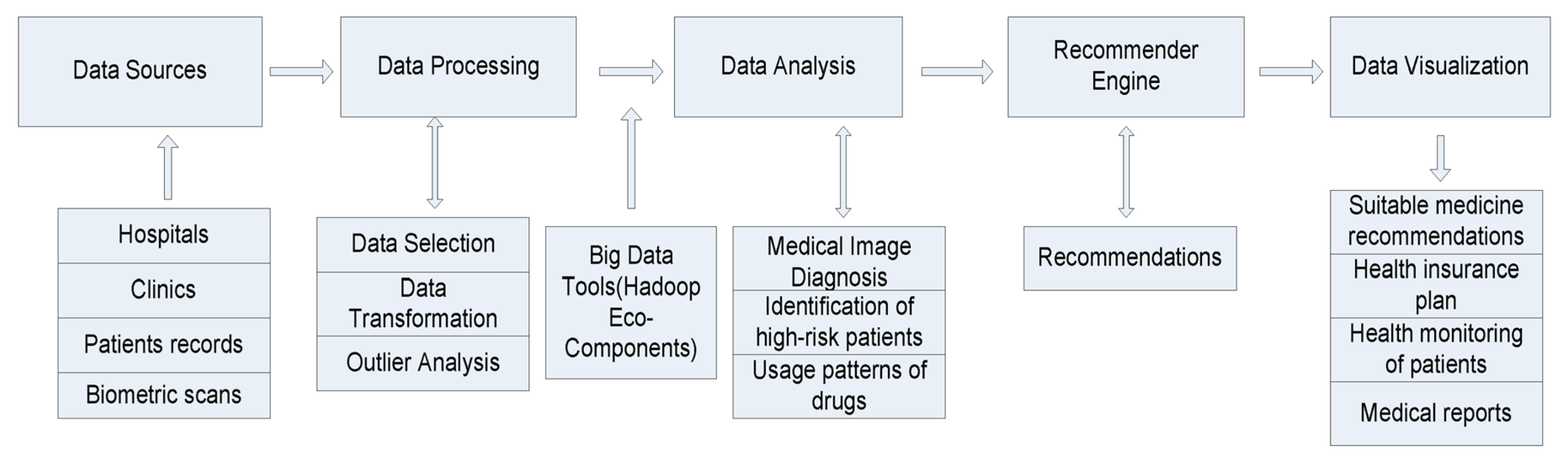

8. Personalized Recommendation in Health

- (1)

- volume (referring to the sheer amount of data generated by organizations or individuals, originating from both internal and external sources), velocity (indicating the rapid rate at which data is created, captured, and shared),

- (2)

- variety (encompassing data from diverse sources in varying formats), and

- (3)

- veracity (concerning the accuracy and consistency of obtained data), all of which play a crucial role in the healthcare domain.

8.1. Recommendation requirements

I25.1 - Atherosclerotic heart disease of native coronary artery:

8.2. Sources and Features of Recommendation

- (1)

- Credible and authoritative websites, guidelines, and books: websites approved by healthcare professional societies such as the “American Urology Society”, and guidelines published by professional associations such as the “European Society of Sports Medicine”.

- (2)

- Domain experts: the information source is created by researchers in the medical field or by clinicians.

- (3)

- Similar patients: recommended items extracted from patient databases (e.g., a database of smoking students who had succeeded in managing smoking), from social networking sites via crowdsourcing, or from patients with similar health problems.

- (4)

- Government databases, such as the United States Department of Agriculture food composition guidelines, dietary information from a food nutrition database of the Food and Drug Administration, Nutrition Analysis, and resources from other government health agencies or public health organizations.

- (5)

- A range of other information resources, including online health communities, online registered doctors, and psychotherapy approaches.

- (1)

- Personalized Disease-Related Information or Patient Educational Material: This aligns with the Pew Internet and American Life Project’s findings. Supplying patients with disease-specific information is integral to healthcare services, providing insights into symptoms, diagnostic tests, treatment options, side effects, and the skills needed for self-management and informed decision-making.

- (2)

- Personalized Dietary Information: A healthy diet is crucial for preventing noncommunicable diseases like diabetes, cardiovascular diseases, cancer, and other conditions associated with obesity. HRS can propose food options tailored to individual health goals, aiding users in cultivating healthy eating behaviors through actionable recommendations.

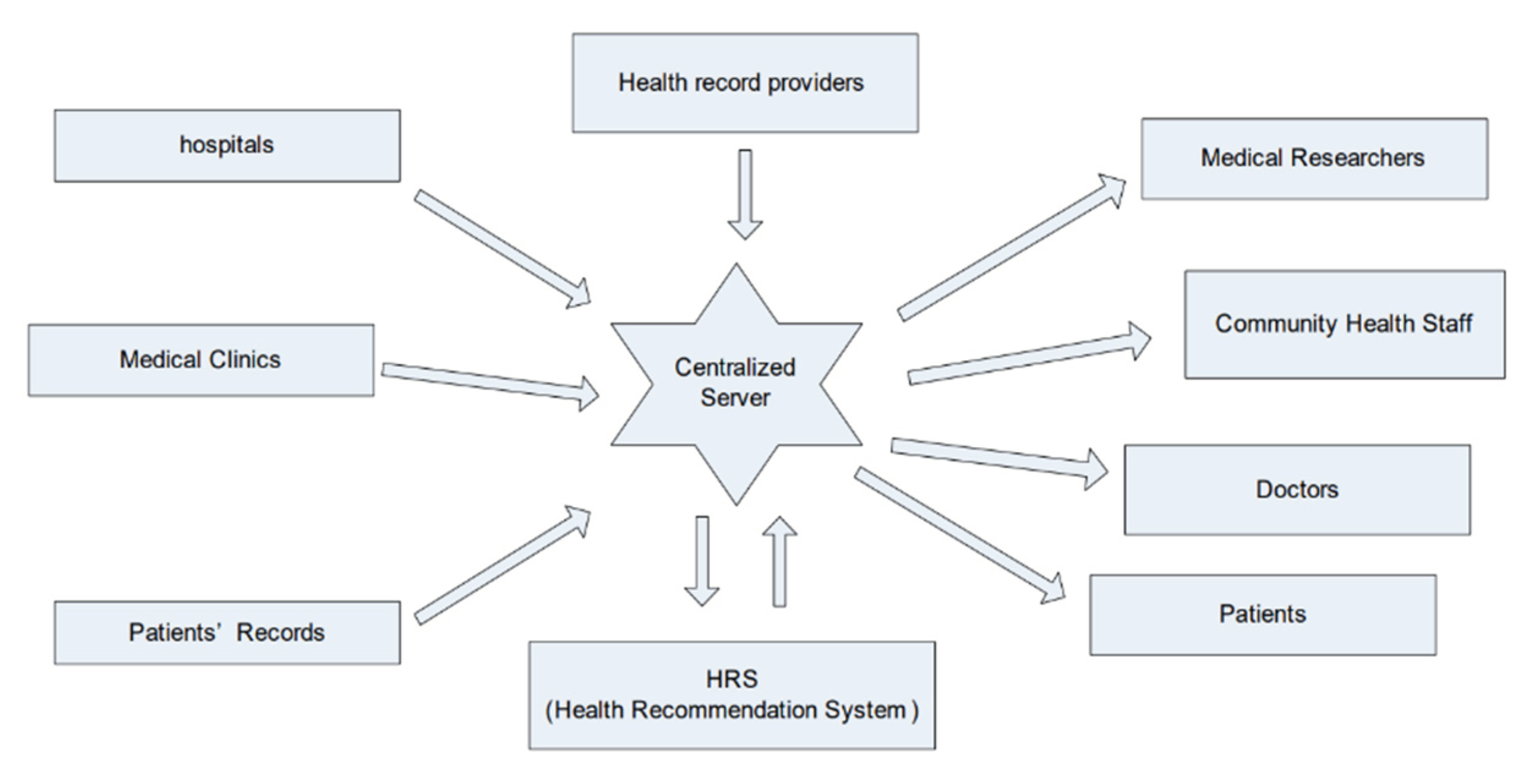

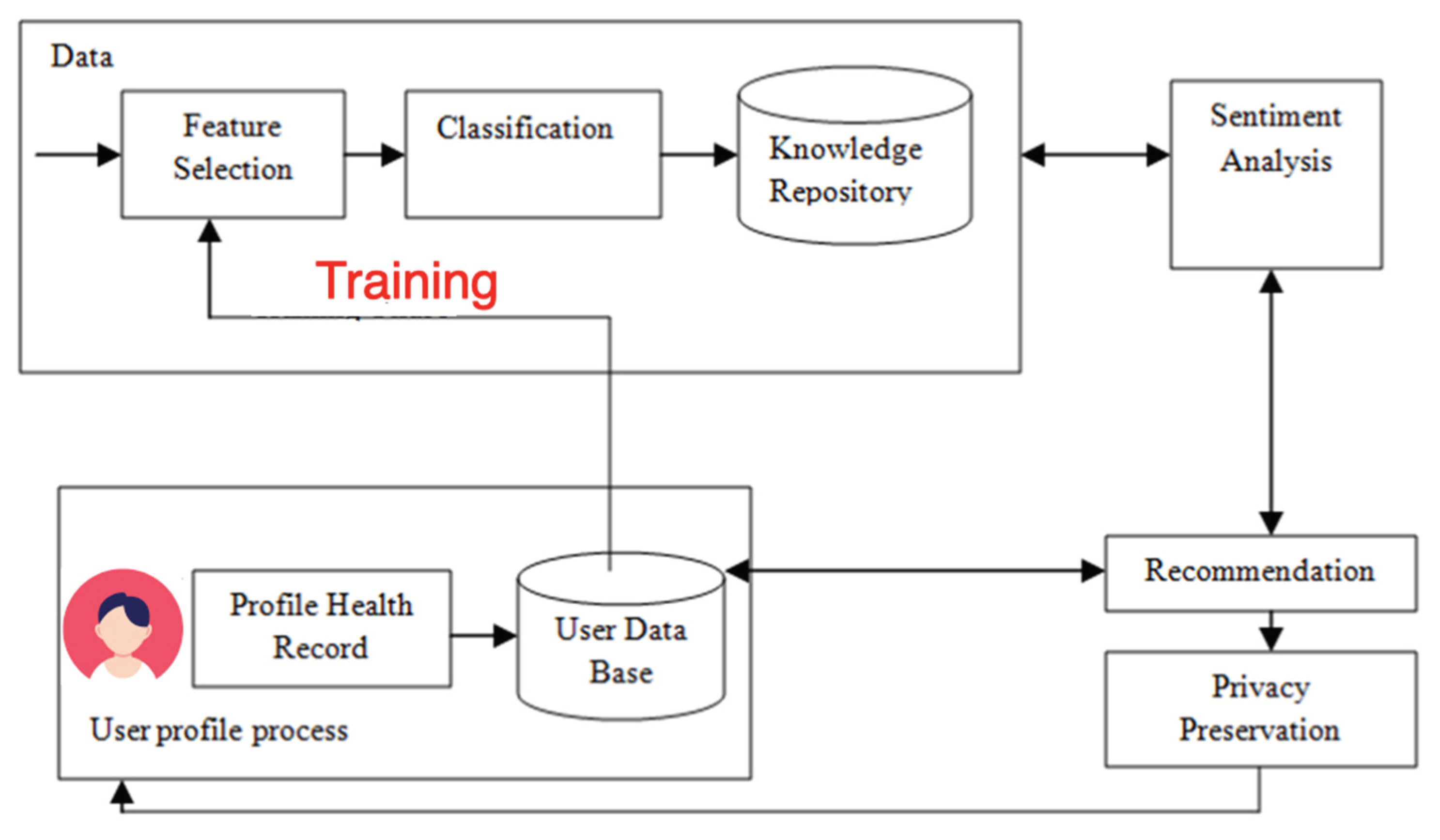

8.3. HRS Architectures

- (1)

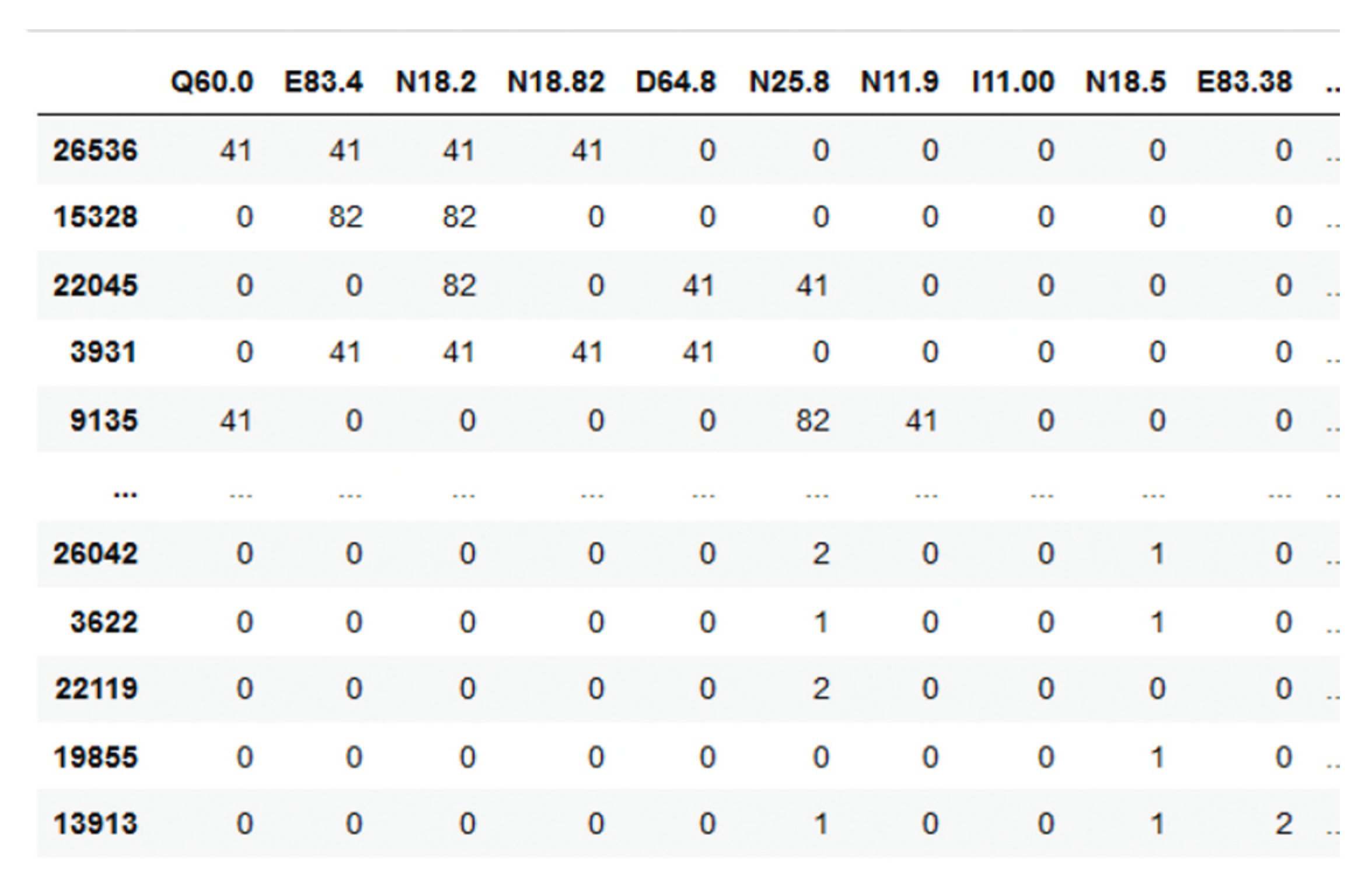

- Synthesize the patient’s information and store the result with the relevant patient parameters: age, gender, patient identification (ID), and ICD codes.

- (2)

- Cluster ICDs and treatment keys (TKs). This step is required to reduce the dimensionality of both parameters (each has a high number of items) and to predict the TK group number from patient parameters, including ICD groups.

- (3)

- Train a deep learning model, validate it, and export it to the medical/hospital documentation and information system.

- (4)

- Introduce a user interface for the recommender system, using the trained deep learning model based on the medical information system’s data to recommend the treatment keys. As part of the combination of medical/hospital documentation and information recommender system, this is the process where the physician accepts or discards the recommended TK.
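The data flow of the four steps above can be illustrated with a deliberately simplified sketch. Two substitutions are made and should be read as assumptions: ICD codes are grouped by their three-character ICD-10 category instead of being clustered, and a frequency count replaces the deep learning model. The TK group names, patient records, and function names are hypothetical.

```python
from collections import Counter, defaultdict


def icd_group(code):
    """Map an ICD-10 code to its three-character category, e.g. 'I25.1' -> 'I25'."""
    return code.split(".")[0][:3]


def fit(records):
    """Count, for every ICD group, how often each treatment-key (TK) group was used."""
    counts = defaultdict(Counter)
    for rec in records:
        for code in rec["icds"]:
            counts[icd_group(code)][rec["tk_group"]] += 1
    return counts


def recommend(counts, icds):
    """Recommend the TK group with the most votes across the patient's ICD groups."""
    votes = Counter()
    for code in icds:
        votes.update(counts.get(icd_group(code), {}))
    if not votes:
        return None  # no evidence for these diagnoses
    return min(votes, key=lambda tk: (-votes[tk], tk))


# Hypothetical stored patient records (step 1), invented for illustration.
records = [
    {"patient_id": 1, "age": 67, "gender": "m", "icds": ["I25.1", "E11.9"], "tk_group": "TK-cardio"},
    {"patient_id": 2, "age": 59, "gender": "f", "icds": ["I25.1"], "tk_group": "TK-cardio"},
    {"patient_id": 3, "age": 45, "gender": "f", "icds": ["E11.9"], "tk_group": "TK-endo"},
]
model = fit(records)
print(recommend(model, ["I25.9"]))  # TK-cardio
```

In the final step, the output of `recommend` would be shown to the physician as a suggestion to accept or discard, not applied automatically.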

8.4. Neuro-symbolic recommender

8.5. Graph embedding

- (1)

- medical conditions after admission

- (2)

- surgical procedures

- (3)

- obstetric procedures

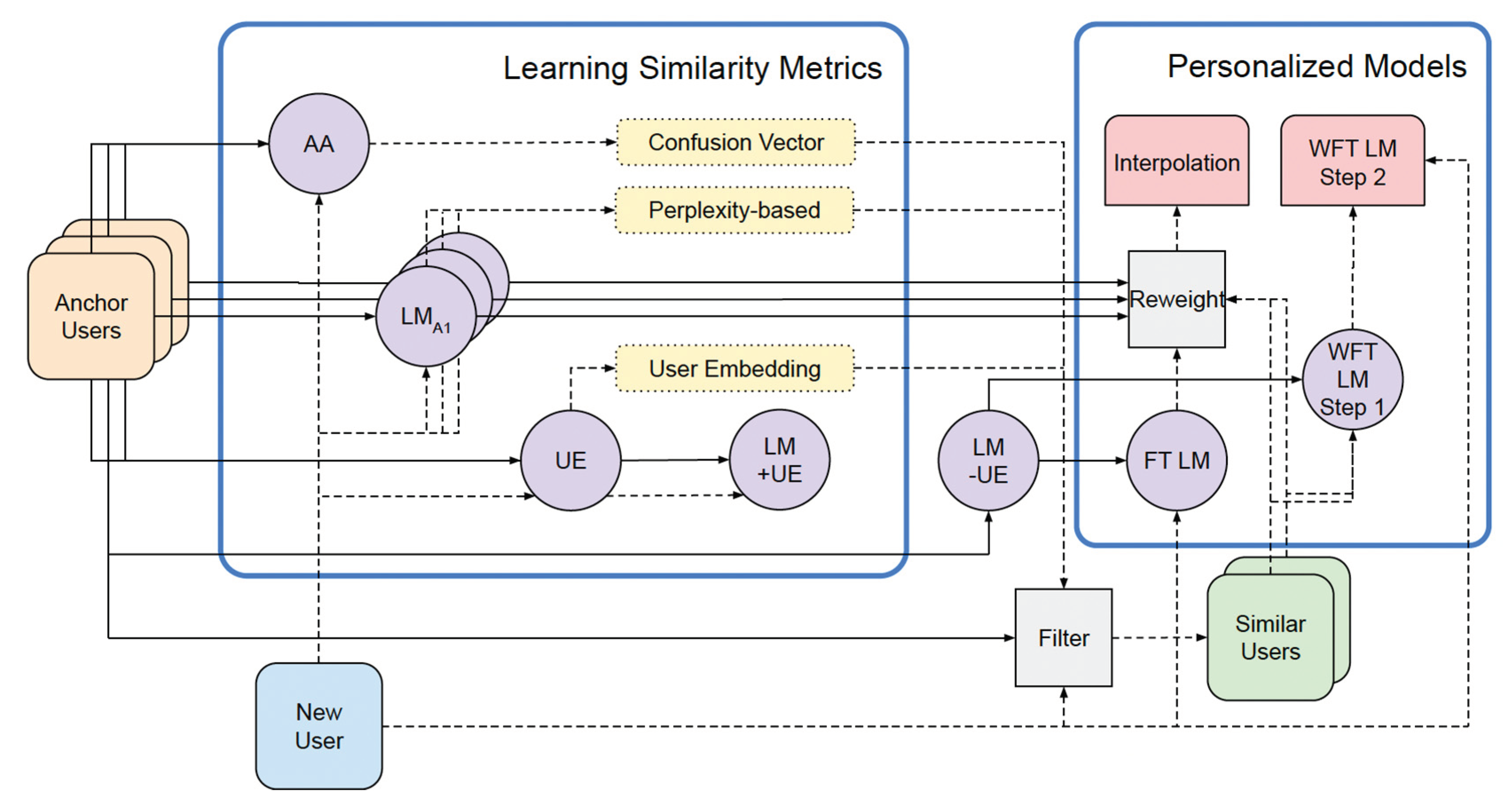

8.6. Computing similarity between patients

- (1)

- patient encounter representation learning, and

- (2)

- patient encounter similarity learning.
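The two stages above can be illustrated with a simple non-learned baseline. This is a minimal sketch, assuming an encounter is described by a list of diagnosis codes: the representation is a bag-of-codes count vector and the similarity is cosine similarity. Both are stand-ins for the learned representation and similarity models that these stages refer to, and the sample encounters are invented.

```python
import math
from collections import Counter


def represent(encounter_codes):
    """Representation stage: turn an encounter's codes into a sparse count vector."""
    return Counter(encounter_codes)


def cosine(u, v):
    """Similarity stage: cosine similarity between two sparse count vectors."""
    dot = sum(u[k] * v[k] for k in u.keys() & v.keys())
    norm_u = math.sqrt(sum(x * x for x in u.values()))
    norm_v = math.sqrt(sum(x * x for x in v.values()))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


# Hypothetical encounters described by ICD-10 codes, invented for illustration.
a = represent(["I25.1", "E11.9", "I10"])
b = represent(["I25.1", "I10"])
c = represent(["O80"])
print(round(cosine(a, b), 3))  # 0.816
print(cosine(a, c))            # 0.0
```

Separating the two functions mirrors the two-stage design: either stage can be replaced by a learned model without changing the other.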

9. Deployment of LLM Personalization

9.1. Service-based architecture for personalization

- (1)

- Interface,

- (2)

- Orchestrator, and

- (3)

- External Sources.

9.2. Decision Support

10. Discussion and Conclusions

- (1)

- engage with the user via interactions;

- (2)

- present outputs in a verbalized and explainable way;

- (3)

- receive feedback from the user;

- (4)

- make adjustments based on that feedback, rather than passively performing search;

- (5)

- help recommendation proactively figure out the user’s needs and seek out the user’s preferred items.

- (1)

- take notes of users’ important information in memory,

- (2)

- make personalized plans based on memorized information when new demands are raised,

- (3)

- execute plans by leveraging tools like search engines and recommendation systems.

- (1)

- Personalization requires an understanding of user preference, which is domain-specific knowledge rather than the commonsense knowledge learned by LLMs;

- (2)

- It is unclear how to effectively and efficiently adapt LLMs for personalized services;

- (3)

- LLMs could memorize user’s confidential information while providing personalized services;

- (4)

- There is a risk that most of the recommendations are similarity-based (i.e., items that are semantically similar to the items purchased so far), although with extensive training data on user purchase history this issue could be ameliorated via the “collaborative filtering” behavior that this method could mimic;

- (5)

- Also, since there is no weighting assigned to various actions or events a user undertakes, from exploration to the eventual purchase of an item, our reliance is solely on the LLM’s prediction of the most probable next token(s) for recommendations. We are unable to consider factors such as items bookmarked, items observed for a duration, items added to the cart, and similar user interactions.

- Babylon Health: A UK-based company, Babylon Health utilizes LLMs to deliver virtual healthcare services through its app. Patients can engage in conversations with doctors or nurses assisted by LLMs. The LLM aids in diagnosing conditions, recommending treatments, and providing answers to inquiries.

- InteliHealth: Operating in the United States, InteliHealth employs LLMs to craft personalized health plans. Through the InteliHealth website, patients respond to questions about their health and lifestyle, and the LLM generates a tailored plan encompassing recommendations for diet, exercise, and medication.

- Google Health: Google Health, functioning as a personal health record (PHR), integrates LLMs to assist patients in managing their health. Users can track their medical history, medications, and symptoms using Google Health. The LLM then generates reports and insights to enhance patients’ understanding of their health.

11. Hands-on

References

- Abbasian M, Iman Azimi, Amir M. Rahmani, Ramesh Jain (2023) Conversational Health Agents: A Personalized LLM-Powered Agent Framework. https://aps.arxiv.org/abs/2310.02374.

- Aboufoul M (2023) Using Large Language Models as Recommendation Systems. A review of recent research and a custom implementation. https://towardsdatascience.com/using-large-language-models-as-recommendation-systems-49e8aeeff29b.

- Bamman D, Chris Dyer, and Noah A. Smith. (2014) Distributed representations of geographically situated language. In Proceedings of the 52nd Annual Meeting of the Association for Computational Linguistics (Volume 2: Short Papers).

- Beckers health (2023) https://www.beckershospitalreview.com/healthcare-information-technology/google-receives-more-than-1-billion-health-questions-every-day.html (accessed on 6 February 2021).

- Bocanegra CL, Sevillano JL, Rizo C, Civit A, Fernandez-Luque L. HealthRecSys: a semantic content-based recommender system to complement health videos. BMC Med Inform Decis Mak. 2017 May 15;17(1):63.

- Cai Y, Yu F, Kumar M, Gladney R, Mostafa J. Health Recommender Systems Development, Usage, and Evaluation from 2010 to 2022: A Scoping Review. Int J Environ Res Public Health. 2022 Nov 16;19(22):15115.

- Carter, E.L.; Nunlee-Bland, G.; Callender, C. 2011. A Patient-Centric, Provider-Assisted Diabetes Telehealth Self-Management Intervention for Urban Minorities. Perspect. Health Inf. Manag.

- Chen, J., Liu, Z., Huang, X., Wu, C., Liu, Q., Jiang, G., Pu, Y., Lei, Y., Chen, X., Wang, X., Lian, D., & Chen, E. (2023). When Large Language Models Meet Personalization: Perspectives of Challenges and Opportunities. ArXiv, abs/2307.16376.

- Colab 2023 https://colab.research.google.com/github/Mohammadhia/t5_p5_recommendation_system/blob/main/T5P5_Recommendation_System.ipynb.

- Dai, W., Xu, Q., Yu, Y., & Zhou, Z. (2019). Bridging Machine Learning and Logical Reasoning by Abductive Learning. Neural Information Processing Systems.

- De Croon R, Van Houdt L, Htun NN, Štiglic G, Vanden Abeele V, Verbert K. Health Recommender Systems: Systematic Review. J Med Internet Res. 2021 Jun 29;23(6):e18035.

- Denecker, M. and Kakas, A.C. (2002). “Abduction in Logic Programming”. In Kakas, A.C.; Sadri, F. (eds.). Computational Logic: Logic Programming and Beyond: Essays in Honour of Robert A. Kowalski. Lecture Notes in Computer Science. Vol. 2407. Springer. pp. 402–437.

- Di Palma D, Giovanni Maria Biancofiore, Vito Walter Anelli, Fedelucio Narducci, Tommaso Di Noia, Eugenio Di Sciascio (2023) Evaluating ChatGPT as a Recommender System: A Rigorous Approach. https://arxiv.org/abs/2309.03613.

- Ficler, Jessica, and Yoav Goldberg. “Controlling linguistic style aspects in neural language generation.” arXiv preprint arXiv:1707.02633 (2017).

- Flek L 2020. Returning the N to NLP: Towards contextually personalized classification models. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics, pages 7828–7838.

- Friedman L, S. Ahuja, D. Allen, T. Tan, H. Sidahmed, C. Long, J. Xie, G. Schubiner, A. Patel, H. Lara et al., “Leveraging large language models in conversational recommender systems,” arXiv preprint arXiv:2305.07961, 2023.

- Galitsky B -github (2024) Treatment Recommendation Personalization System. https://github.com/bgalitsky/LLM-personalization/blob/main/Treatment_Recommendation_Personalization_System.ipynb.

- Galitsky B (2002) Designing the personalized agent for the virtual mental world. AAAI FSS-2002 Symposium on Personalized agents, 21-29.

- Galitsky B (2005) A Personalized Assistant for Customer Complaints Management Systems. AAAI Spring Symposium: Persistent Assistants: Living and Working with AI, 84-89.

- Galitsky B (2016) Providing personalized recommendation for attending events based on individual interest profiles. Artif. Intell. Res. 5 (1), 1-13.

- Galitsky B (2017) Content inversion for user searches and product recommendations systems and methods US Patent 9,336,297.

- Galitsky B (2021) Recommendation by Joining a Human Conversation. In book: Artificial Intelligence for Customer Relationship Management: Solving Customer Problems, Springer, Cham.

- Galitsky B, EW McKenna (2017) Sentiment extraction from consumer reviews for providing product recommendations. US Patent 9,646,078.

- Galitsky B, Kovalerchuk B (2014) Improving web search relevance with learning structure of domain concepts. In: Clusters, Orders, and Trees: Methods and Applications: In Honor of Boris Mirkin’s 70th Birthday. 341-376. Springer New York.

- Galitsky B (2023) Generating recommendations by using communicative discourse trees of conversations. US Patent 11,599,731.

- Gao T, Adam Fisch, and Danqi Chen. 2021. Making pre-trained language models better few-shot learners. In Proc. of ACL-IJCNLP, pages 3816–3830.

- Gao Y, T. Sheng, Y. Xiang, Y. Xiong, H. Wang, and J. Zhang, “Chat-rec: Towards interactive and explainable LLMs-augmented recommender system,” arXiv preprint arXiv:2303.14524, 2023.

- Garimella A, Carmen Banea, and Rada Mihalcea. 2017. Demographic-aware word associations. In Proceedings of the 2017 Conference on Empirical Methods in Natural Language Processing.

- Geng S, Shuchang Liu, Zuohui Fu, Yingqiang Ge, and Yongfeng Zhang. 2022. Recommendation as Language Processing (RLP): A Unified Pretrain, Personalized Prompt & Predict Paradigm (P5). In Proceedings of the 16th ACM Conference on Recommender Systems (RecSys ’22). Association for Computing Machinery, New York, NY, USA, 299–315.

- Goodman N (2023) Meta-Prompt: A Simple Self-Improving Language Agent. https://noahgoodman.substack.com/p/meta-prompt-a-simple-self-improving.

- Greylink C (2023) LLMs and meta-prompting. https://cobusgreyling.medium.com/meta-prompt-60d4925b4347. Last downloaded Nov 22, 2023.

- Gu Y, Xuebing Yang, Lei Tian, Hongyu Yang, Jicheng Lv, Chao Yang, Jinwei Wang, Jianing Xi, Guilan Kong, Wensheng Zhang. 2022 Structure-aware siamese graph neural networks for encounter-level patient similarity learning, Journal of Biomedical Informatics, Volume 127.

- He, Z., Zhouhang Xie, Rahul Jha, Harald Steck, Dawen Liang, Yesu Feng, Bodhisattwa Prasad Majumder, Nathan Kallus, and Julian McAuley. 2023. Large Language Models as Zero-Shot Conversational Recommenders. In Proceedings of the 32nd ACM International Conference on Information and Knowledge Management (CIKM ’23), October 21–25, 2023, Birmingham, United Kingdom.

- Hou Y (2023) Awesome LLM-powered agent. https://github.com/hyp1231/awesome-llm-powered-agent.

- Hou Y, Hongyuan Dong, Xinghao Wang, Bohan Li, and Wanxiang Che. 2022. MetaPrompting: Learning to Learn Better Prompts. In Proceedings of the 29th International Conference on Computational Linguistics, pages 3251–3262.

- Kakas AC, R. A. Kowalski, and F. Toni. Abductive logic programming. Journal of Logic Computation, 2(6):719–770, 1992.

- Khaleel I., Wimmer B.C., Peterson G.M., Zaidi S.T.R., Roehrer E., Cummings E., Lee K. Health Information Overload among Health Consumers: A Scoping Review. Patient Educ. Couns. 2020;103:15–32.

- Kirk HR, Bertie Vidgen, Paul Röttger, and Scott A Hale. 2023. Personalisation within bounds: A risk taxonomy and policy framework for the alignment of large language models with personalised feedback. arXiv preprint arXiv:2303.05453.

- Lewis, Robert, Ferguson, Craig, Wilks, Chelsey, Jones, Noah and Picard, Rosalind. 2022. Can a Recommender System Support Treatment Personalisation in Digital Mental Health Therapy? A Quantitative Feasibility Assessment Using Data from a Behavioural Activation Therapy App.

- Li, Xiang Lisa and Percy Liang. 2021. Prefix-tuning: Optimizing continuous prompts for generation. In Proc. of ACL-IJCNLP, pages 4582–4597.

- Lyu H, Song Jiang, Hanqing Zeng, Qifan Wang, Si Zhang, Ren Chen, Chris Leung, Jiajie Tang, Yinglong Xia, and Jiebo Luo (2023) LLM-Rec: Personalized Recommendation via Prompting Large Language Models. arXiv:2307.15780.

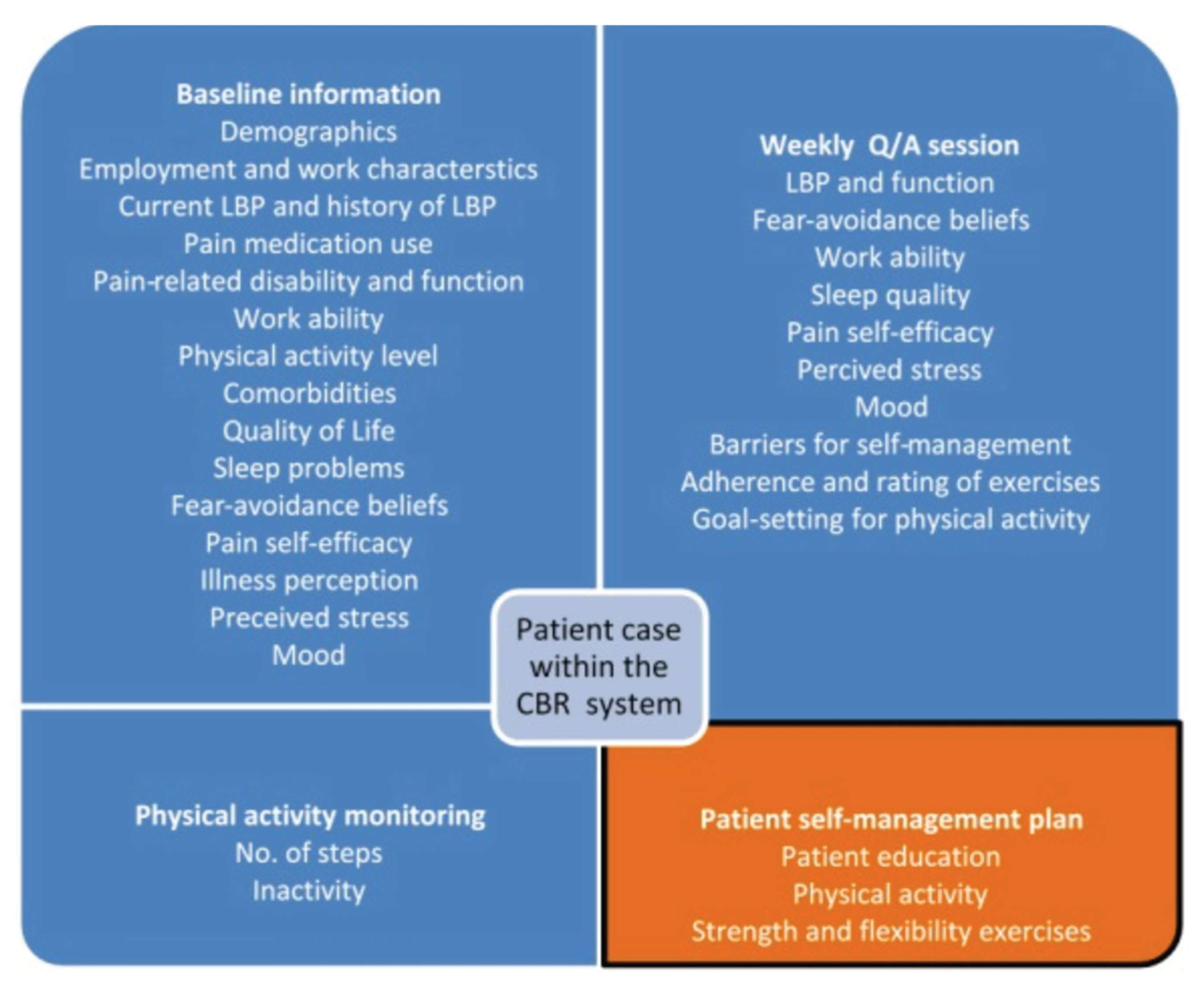

- Mork PJ, Bach K; selfBACK Consortium. A Decision Support System to Enhance Self-Management of Low Back Pain: Protocol for the selfBACK Project. JMIR Res Protoc. 2018 Jul 20;7(7):e167.

- Neisser, U. (1976). Cognition and reality. San Francisco, CA: W. H. Freeman and Company.

- Nickel, M., and Kiela, D. (2017). Poincaré Embeddings for Learning Hierarchical Representations. arXiv:1705.08039 [cs, stat].

- Ochoa JGD, Mustafa FE. Graph neural network modelling as a potentially effective method for predicting and analyzing procedures based on patients’ diagnoses. Artif Intell Med. 2022 Sep;131:102359.

- Ochoa, J.G.D., Csiszár, O. & Schimper, T. Medical recommender systems based on continuous-valued logic and multi-criteria decision operators, using interpretable neural networks. BMC Med Inform Decis Mak 21, 186 (2021).

- Pew Research Center 2021 https://www.pewresearch.org/internet/2005/05/17/health-information-online/ (accessed on 6 February 2021).

- Rocheteau, E., Tong, C., Veličković, P., Lane, N., and Liò, P. (2021). Predicting Patient Outcomes with Graph Representation Learning. arXiv:2101.03940 [cs].

- Sahoo, A.K.; Pradhan, C.; Barik, R.K.; Dubey, H. DeepReco: Deep Learning Based Health Recommender System Using Collaborative Filtering. Computation 2019, 7, 25.

- Salemi A, S Mysore, M Bendersky, H Zamani (2023) LaMP: When Large Language Models Meet Personalization. arXiv:2304.11406.

- Skopyk Khrystyna, Artem Chernodub and Vipul Raheja (2022) Personalizing Large Language Models.

- Sun Y, Zhou J, Ji M, Pei L, Wang Z. Development and Evaluation of Health Recommender Systems: Systematic Scoping Review and Evidence Mapping. J Med Internet Res. 2023.

- Syed, Bakhtiyar, et al. “Adapting language models for non-parallel author-stylized rewriting.” Proceedings of the AAAI Conference on Artificial Intelligence. Vol. 34. No. 05. 2020.

- Wang A, Amanpreet Singh, Julian Michael, Felix Hill, Omer Levy, and Samuel Bowman. 2018. GLUE: A multi-task benchmark and analysis platform for natural language understanding. In Proceedings of the 2018 EMNLP Workshop BlackboxNLP: Analyzing and Interpreting Neural Networks for NLP, pages 353–355, Brussels, Belgium. Association for Computational Linguistics.

- Wei Y, Xiang Wang, Liqiang Nie, Xiangnan He, Richang Hong, and Tat-Seng Chua (2019). MMGCN: Multi-modal Graph Convolution Network for Personalized Recommendation of Micro-video. In ACM MM ’19, Nice, France, Oct. 21-25, 2019.

- Welch C, Jonathan K. Kummerfeld, Veronica Perez-Rosas, and Rada Mihalcea. 2020. Compositional demographic word embeddings. In Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing.

- Welch, Charles & Gu, Chenxi & Kummerfeld, Jonathan & Pérez-Rosas, Verónica & Mihalcea, Rada. (2022). Leveraging Similar Users for Personalized Language Modeling with Limited Data. ACL, 1742-1752.

- Wiesner M, Pfeifer D. Health recommender systems: concepts, requirements, technical basics and challenges. Int J Environ Res Public Health. 2014 Mar 3;11(3):2580-607.

- Xue, Gui-Rong & Han, Jie & Yu, Yong & Yang, Qiang (2009) User language model for collaborative personalized search. ACM Trans. Inf. Syst. 27.

- Yang F, Zheng Chen, Ziyan Jiang, Eunah Cho, Xiaojiang Huang, Yanbin Lu (2023) Palr: Personalization aware LLMs for recommendation. arXiv preprint arXiv:2305.07622.

- Yang, Diyi, and Lucie Flek. (2021) Towards User-Centric Text-to-Text Generation: A Survey. International Conference on Text, Speech, and Dialogue. Springer, Cham, 2021.

- Zhou K, Hui Yu, Wayne Xin Zhao, and Ji-Rong Wen. 2022. Filter-enhanced MLP is All You Need for Sequential Recommendation. In Proceedings of the ACM Web Conference 2022 (WWW ‘22). Association for Computing Machinery, New York, NY, USA, 2388–2399.

| User Attribute | Features |
|---|---|
| Demographics | name, gender, age, body mass index, location, average charge, ethnicity, education level, marital status, blood type, weight, height, hip, waist, occupation, address, and income |
| Health status | symptoms, complications, treatments, health checks, diagnosis, diseases, severity, physiological, capability, depression, sleep, and pregnancy |
| Laboratory test | blood glucose, total cholesterol, triglyceride, high/low density lipoprotein, uric acid, blood pressure, hypertrophy, carotid artery plaque, carotid femoral pulse wave velocity, ankle brachial index, and heart rate |
| Disease history | family history, allergy, and smoking or alcohol history |
| Medication | routine medicine that patient took |
| Food intake | food types, food size, food names and ingredients, and dietary record |
| Physical activity | activity level (high, moderate, low), type of activity, duration, past activity, intensity, and frequency |
| Explicit data (information preference provided by the user) | studies used the direct input preference from patients to generate recommendations OR utilized user ratings |
| Implicit data | generated from users’ interactions with the system (e.g., navigation or interaction logs) |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).