Submitted: 27 February 2024

Posted: 27 February 2024


Abstract

Keywords:

1. Introduction

2. Methods

2.1. Materials

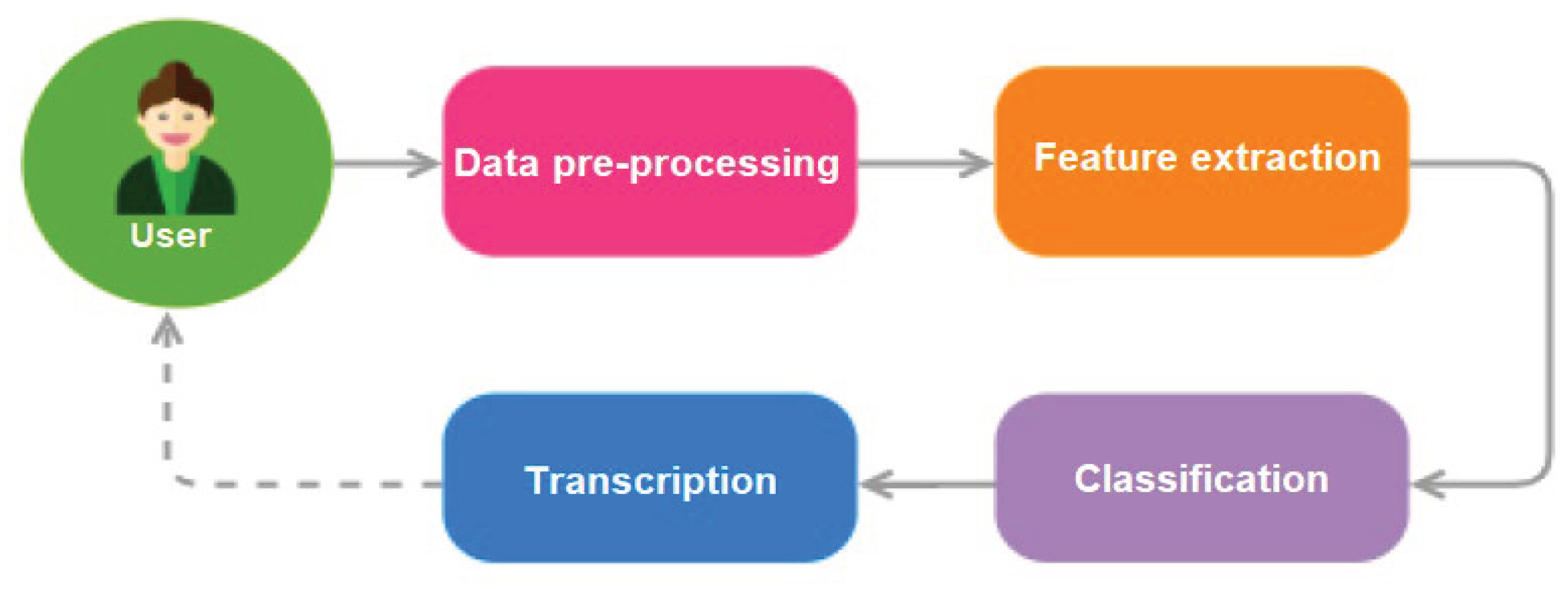

2.1.1. Data Preprocessing

2.1.2. Dataset

2.1.3. Archetypal Selection and Training

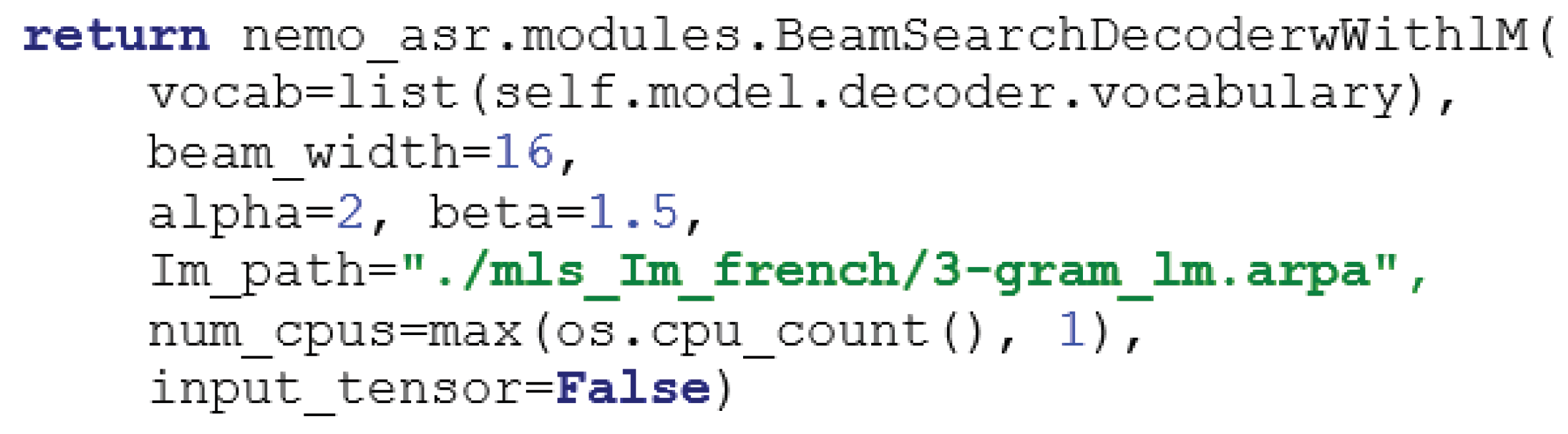

2.1.4. Language Modeling

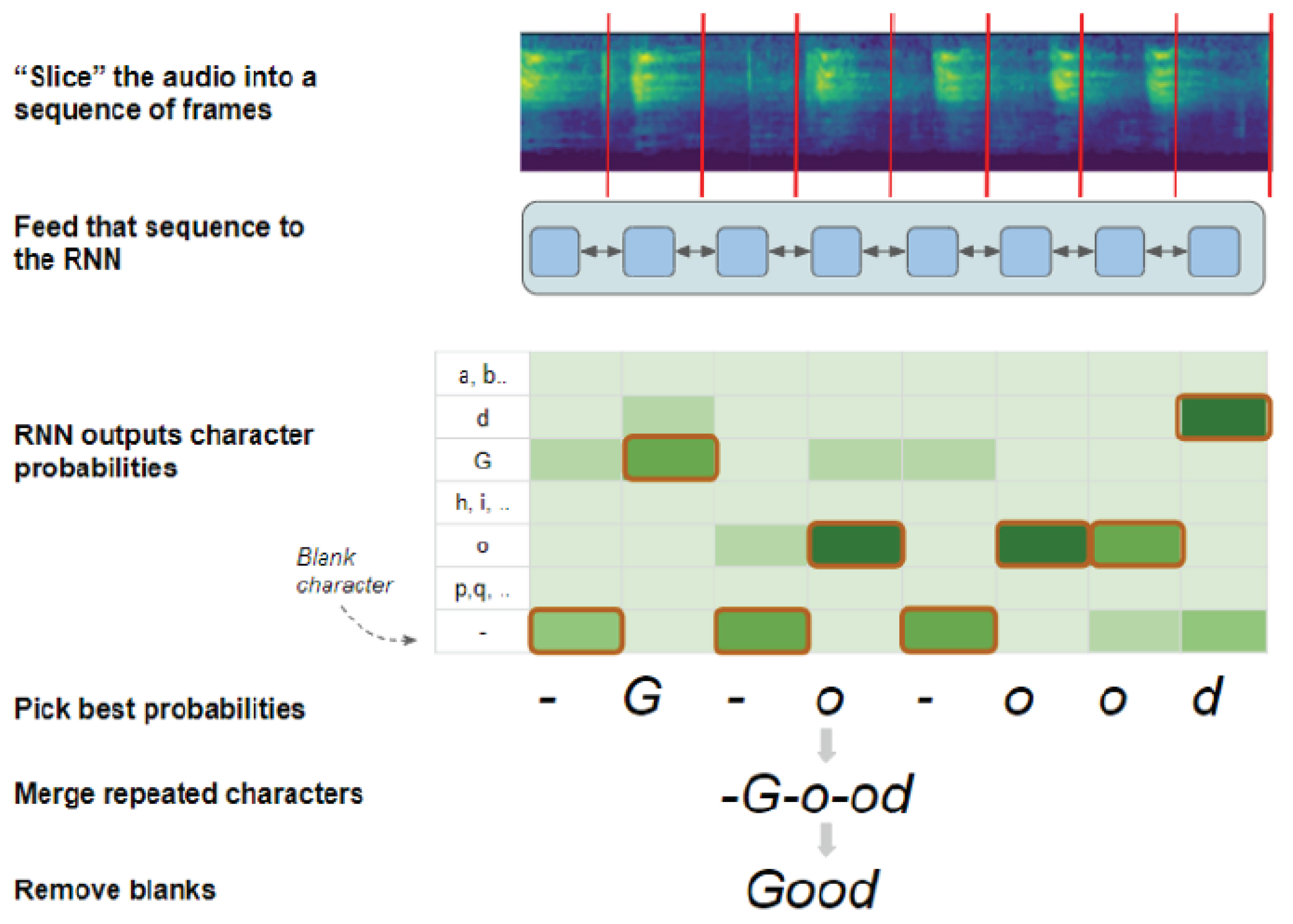

2.1.5. Model Architecture

2.2. Tasks and design

2.2.1. Conceptual Foundation

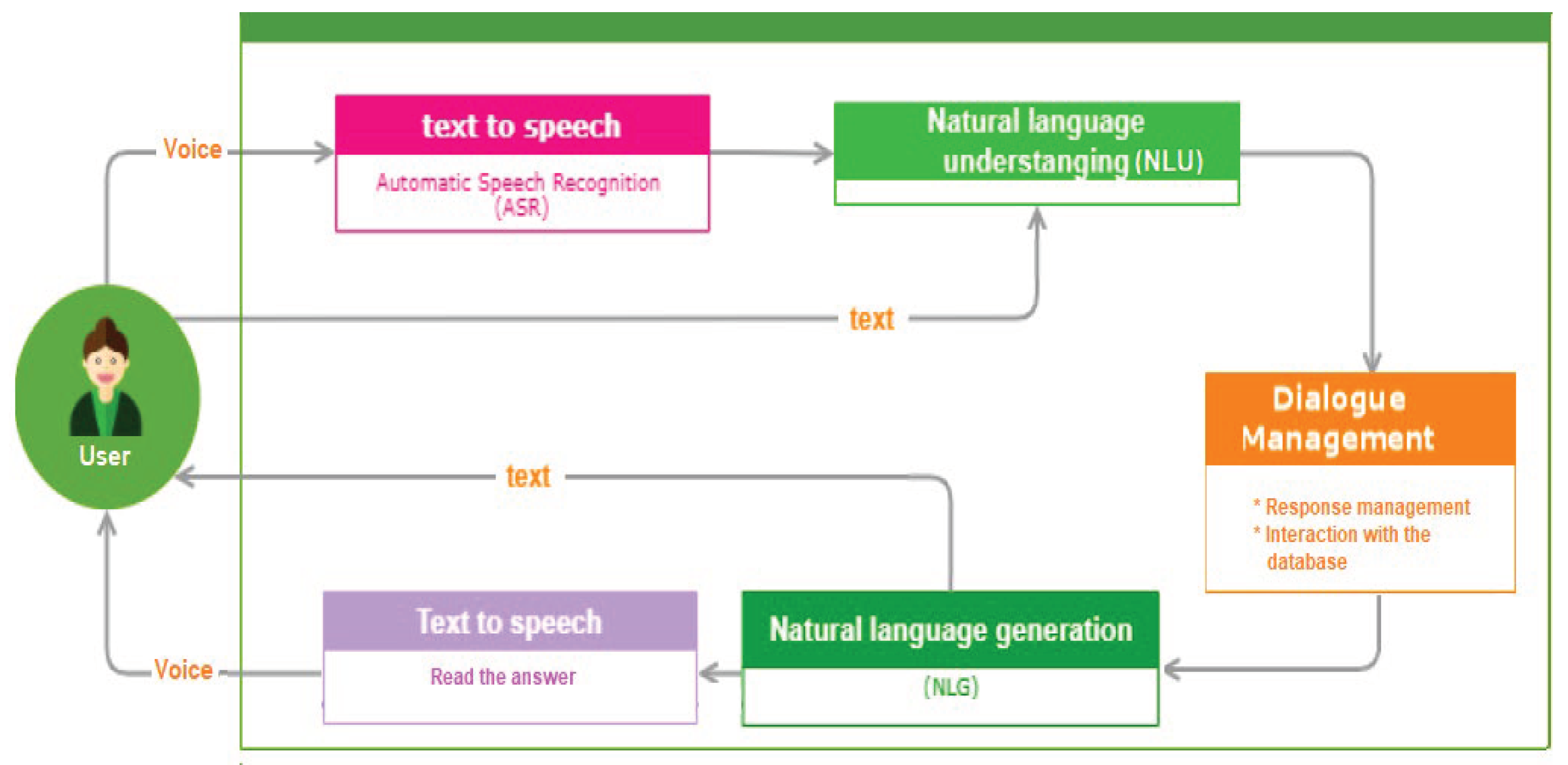

- Natural Language Understanding (NLU): This component of NLP converts user input into a structured representation that algorithms can process, identifying the intent and the entities expressed in a given text [26].

- Natural Language Generation (NLG): In contrast to NLU, NLG produces coherent natural-language responses based on what the system has understood [27].
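To make the NLU/NLG split concrete, the sketch below maps a French utterance to a structured parse (NLU) and then turns that parse back into a reply (NLG). It is a minimal illustration under stated assumptions, not the system described in this paper: the keyword lists, the regular expression for the `time` entity, and the response templates are hypothetical stand-ins for a trained model. Only the intent names (`greet`, `prendreRdv`, `out_of_scope`) and the `time` entity come from the intent table. The dictionary layout (`intent` with `name` and `confidence`; `entities` with `entity`, `value`, `start`, `end`) follows the general shape of Rasa's parse output.

```python
import re

# Hypothetical keyword rules standing in for a trained intent classifier (NLU).
INTENT_KEYWORDS = {
    "greet": ["salut", "bonjour"],
    "prendreRdv": ["rendez-vous", "rdv"],
}

# Hypothetical pattern for the "time" entity; covers only two relative dates.
TIME_PATTERN = re.compile(r"\b(demain|aujourd'hui)\b")

# Hypothetical response templates (NLG), in French like the assistant's users.
TEMPLATES = {
    "greet": "Bonjour ! Comment puis-je vous aider ?",  # "Hello! How can I help you?"
    "prendreRdv": "Très bien, je note un rendez-vous pour {time}.",  # "Noted: an appointment for {time}."
    "ask_time": "Pour quelle date souhaitez-vous le rendez-vous ?",  # "For which date?"
    "fallback": "Désolé, je n'ai pas compris.",  # "Sorry, I did not understand."
}


def understand(text: str) -> dict:
    """NLU: convert free text into a structured parse (intent + entities)."""
    lowered = text.lower()
    intent = {"name": "out_of_scope", "confidence": 0.0}
    for name, keywords in INTENT_KEYWORDS.items():
        if any(keyword in lowered for keyword in keywords):
            # Rule matches get a fixed confidence; a trained model would estimate it.
            intent = {"name": name, "confidence": 1.0}
            break
    entities = [
        {"entity": "time", "value": m.group(1), "start": m.start(1), "end": m.end(1)}
        for m in TIME_PATTERN.finditer(lowered)
    ]
    return {"text": text, "intent": intent, "entities": entities}


def generate(parse: dict) -> str:
    """NLG: turn the structured parse into a natural-language reply."""
    name = parse["intent"]["name"]
    times = [e["value"] for e in parse["entities"] if e["entity"] == "time"]
    if name == "prendreRdv":
        # Fill the template if a date was extracted, otherwise ask for one.
        return TEMPLATES["prendreRdv"].format(time=times[0]) if times else TEMPLATES["ask_time"]
    if name == "greet":
        return TEMPLATES["greet"]
    return TEMPLATES["fallback"]


parse = understand("Je voudrais un rendez-vous demain")
print(parse["intent"]["name"], [e["value"] for e in parse["entities"]])
print(generate(parse))
```

The separation matters because each half can be replaced independently: a trained classifier can take over `understand` without touching the templates, and richer generation can replace `generate` without retraining the NLU model.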

2.2.2. User Interaction Dynamics

2.2.3. Rasa Architectural Components

- NLU Pipeline: responsible for intent classification, entity extraction, and response selection [34]. It passes user inputs through a trained model to recognize the user's intent.

- Dialogue Management: determines the next action in the conversation based on the current context [35].

- Tracker Stores, Event Brokers, Model Storage, and Lock Stores: the tracker store persists conversation histories, the event broker forwards conversation events to external services, model storage holds the trained models, and the lock store ensures that messages belonging to the same conversation are processed in order.

- domain.yml: a central configuration file that defines all the elements that the assistant can understand and produce. It includes:

    - Responses: The set of utterances the assistant can use in response to user inputs.
    - Intents: The classifications of user inputs that help the assistant interpret the user's intentions.
    - Slots: Variables that store information throughout the conversation, maintaining context and state.
    - Entities: Information extracted from user inputs that can be used to personalize interactions.
    - Forms & Actions: These enable the assistant to perform tasks and carry out dynamic conversations based on the dialogue flow.

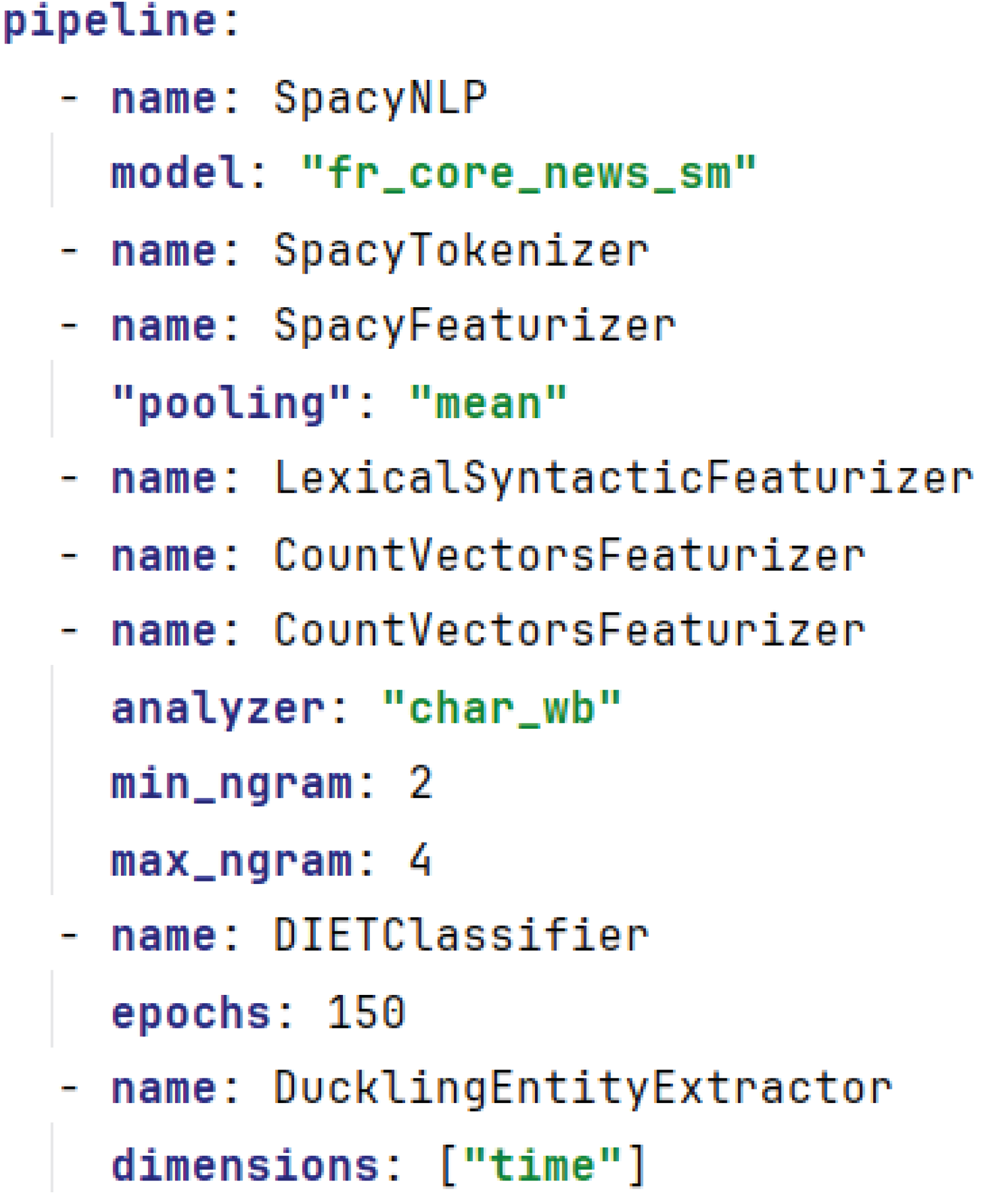

- config.yml: specifies the machine learning configuration, namely the NLU pipeline components and the dialogue policies used to train the natural language understanding and dialogue management models.

- data directory: contains the training data from which the assistant learns its language understanding and dialogue management: nlu.yml for intent and entity examples, stories.yml for conversational paths, and rules.yml for rules, i.e., short conversation patterns that should always follow the same path.
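How `domain.yml` ties slots, responses, and forms together can be sketched in a few lines. The example below models a trimmed-down domain as a Python dictionary whose keys mirror the sections listed above, and implements the basic form loop: ask for the first required slot that is still empty, and submit once all are filled. The `rdv_form` form, the `motif` slot, the prompt texts, and the `action_submit_rdv_form` action are hypothetical; only the `time` entity and the `greet`/`prendreRdv` intents come from the intent table, and the `utter_ask_<slot>` naming follows Rasa's convention for slot prompts. Mapping the `time` entity directly onto a slot of the same name is likewise an assumption.

```python
from typing import Optional

# Hypothetical, trimmed-down domain; keys mirror the domain.yml sections above.
# Slot types and slot mappings are omitted for brevity.
DOMAIN = {
    "intents": ["greet", "prendreRdv"],
    "entities": ["time"],
    "slots": ["time", "motif"],  # "motif" (reason for the visit) is hypothetical
    "forms": {"rdv_form": {"required_slots": ["time", "motif"]}},
    "responses": {
        # "For which date would you like the appointment?"
        "utter_ask_time": ["Pour quelle date souhaitez-vous le rendez-vous ?"],
        # "What is the reason for your appointment?"
        "utter_ask_motif": ["Quel est le motif de votre rendez-vous ?"],
    },
}


def fill_slots(slots: dict[str, Optional[str]], entities: list[dict]) -> dict[str, Optional[str]]:
    """Copy extracted entity values into slots of the same name (assumed mapping)."""
    updated = dict(slots)
    for ent in entities:
        if ent["entity"] in DOMAIN["slots"]:
            updated[ent["entity"]] = ent["value"]
    return updated


def next_form_action(form_name: str, slots: dict[str, Optional[str]]) -> str:
    """Form loop: ask for the first empty required slot, or submit once all are set."""
    for slot in DOMAIN["forms"][form_name]["required_slots"]:
        if slots.get(slot) is None:
            prompt = f"utter_ask_{slot}"  # Rasa-style prompt naming: utter_ask_<slot>
            if prompt not in DOMAIN["responses"]:
                raise KeyError(f"no response defined for {prompt}")
            return prompt
    return f"action_submit_{form_name}"  # hypothetical submit action


slots: dict[str, Optional[str]] = {"time": None, "motif": None}
print(next_form_action("rdv_form", slots))  # utter_ask_time
slots = fill_slots(slots, [{"entity": "time", "value": "demain"}])
print(next_form_action("rdv_form", slots))  # utter_ask_motif
slots["motif"] = "consultation"
print(next_form_action("rdv_form", slots))  # action_submit_rdv_form
```

In Rasa itself this loop is driven by the form and slot mappings declared in the domain; the sketch only mirrors the control flow, which is why a missing `utter_ask_<slot>` response surfaces as a configuration error rather than a runtime surprise.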

2.2.4. Data Preparation and Model Implementation

- a) Conversational Design and Objective Identification

- b) Data Acquisition and Conversation Simulation

- c) NLU Pipeline and Language Model Choices

- d) Text Tokenization and Featurization

- e) Part-of-Speech Tagging and Intention Classification

- f) Dialogue Management

- g) Forms in Conversations
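Step d) turns raw text into numeric features before any classifier sees it. As a minimal sketch, assuming a simple regular-expression tokenizer and bag-of-words counts (similar in spirit to Rasa's `WhitespaceTokenizer` and `CountVectorsFeaturizer` components; the pipeline actually used in this work may differ), tokenization and featurization can look like this. The function names and the example sentences are hypothetical.

```python
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase, then split on runs of characters that are neither word characters nor hyphens."""
    return [token for token in re.split(r"[^\w-]+", text.lower()) if token]


def build_vocabulary(corpus: list[str]) -> dict[str, int]:
    """Assign each distinct token a column index, in order of first appearance."""
    vocab: dict[str, int] = {}
    for sentence in corpus:
        for token in tokenize(sentence):
            vocab.setdefault(token, len(vocab))
    return vocab


def featurize(text: str, vocab: dict[str, int]) -> list[int]:
    """Bag-of-words count vector; tokens missing from the vocabulary are ignored."""
    vector = [0] * len(vocab)
    for token, count in Counter(tokenize(text)).items():
        if token in vocab:
            vector[vocab[token]] = count
    return vector


# Hypothetical training sentences for the prendreRdv and annulerRdv intents.
corpus = ["Je veux un rendez-vous", "Je veux annuler mon rendez-vous"]
vocab = build_vocabulary(corpus)
print(vocab)
print(featurize("je veux je veux annuler", vocab))  # [2, 2, 0, 0, 1, 0]
```

Keeping the hyphen inside tokens preserves French compounds such as "rendez-vous" as a single feature, while apostrophes still split elided forms such as "j'ai" into "j" and "ai". The resulting vectors are what an intent classifier in step e) would consume.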

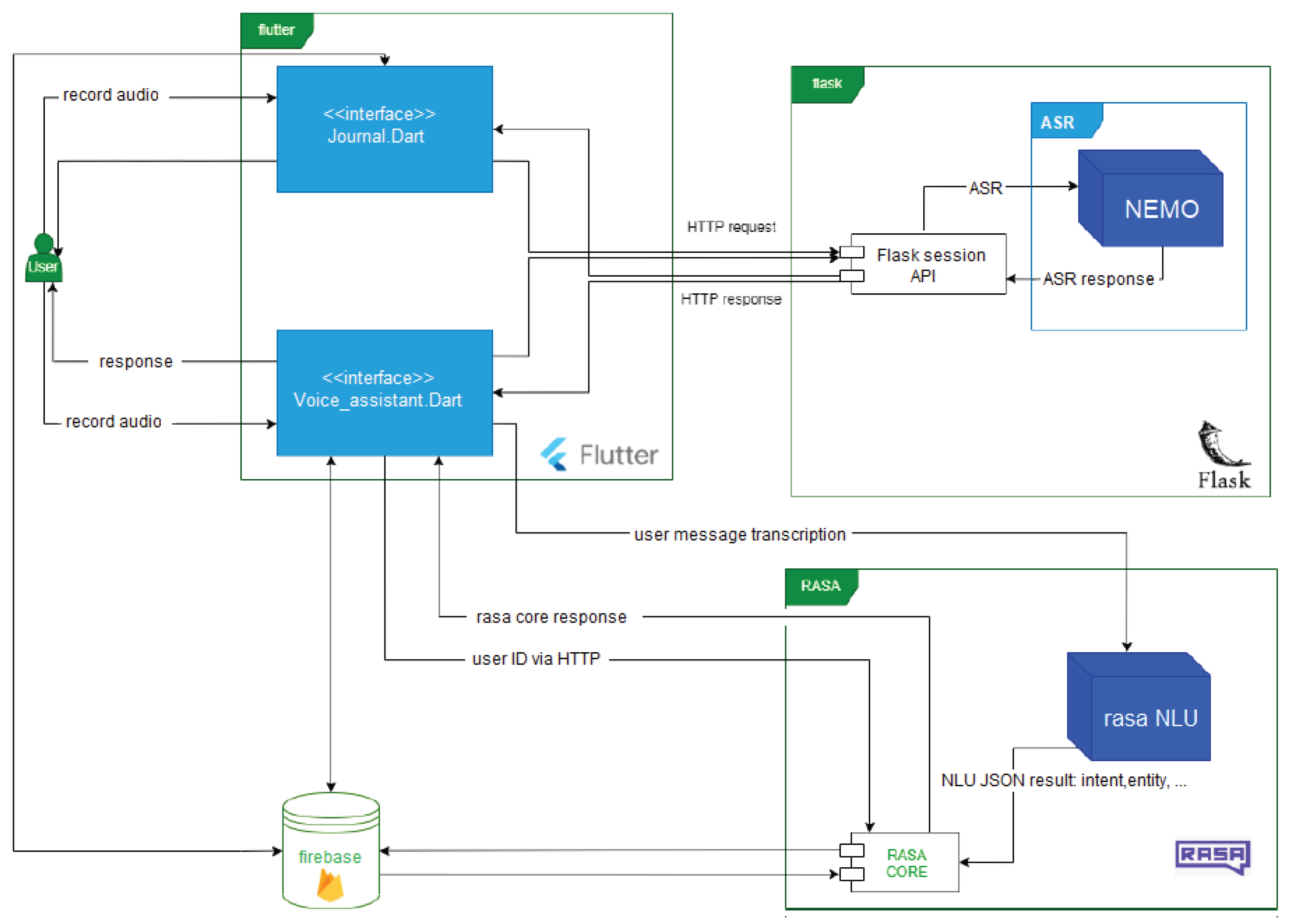

2.2.5. Data Management and System Architecture

2.3. Analysis

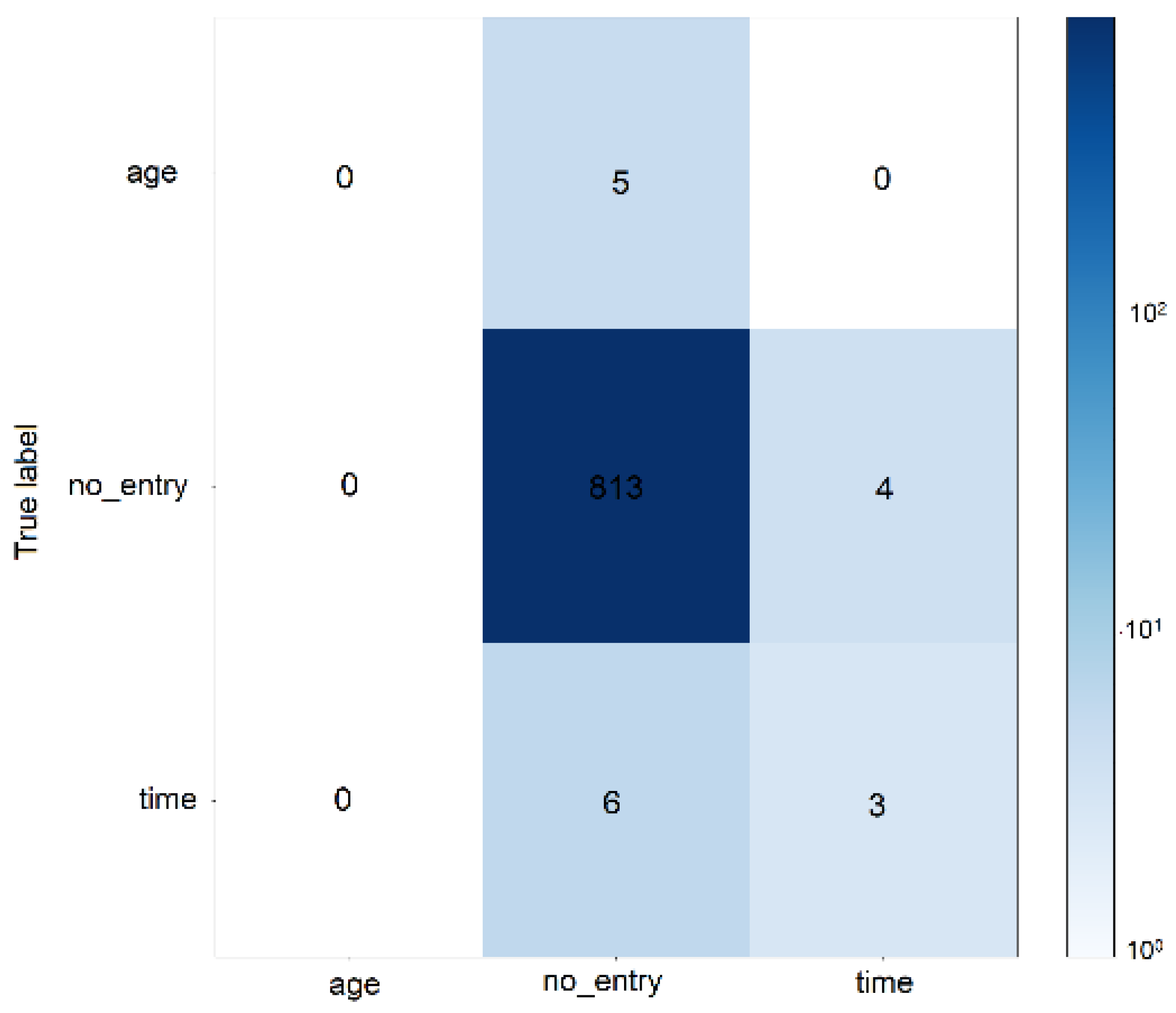

2.3.1. Evaluation of ASR performance

2.3.2. NLP System Evaluation

2.3.3. Deployment and Database Integration

2.3.4. Ethical Considerations and Data Privacy

3. Results

3.1. ASR System Performance

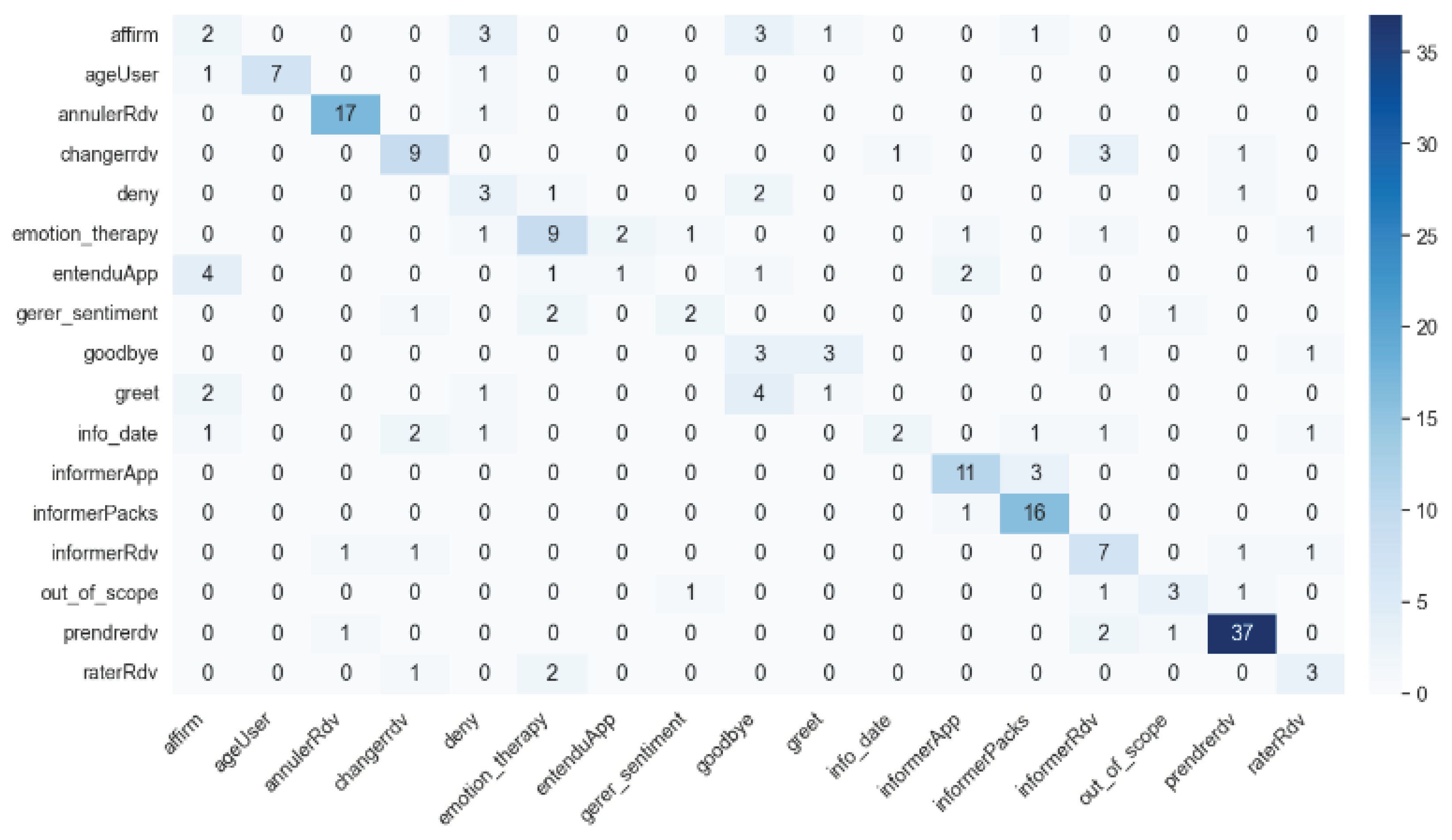

3.2. NLP System Evaluation

3.3. Error Analysis and Model Confidence

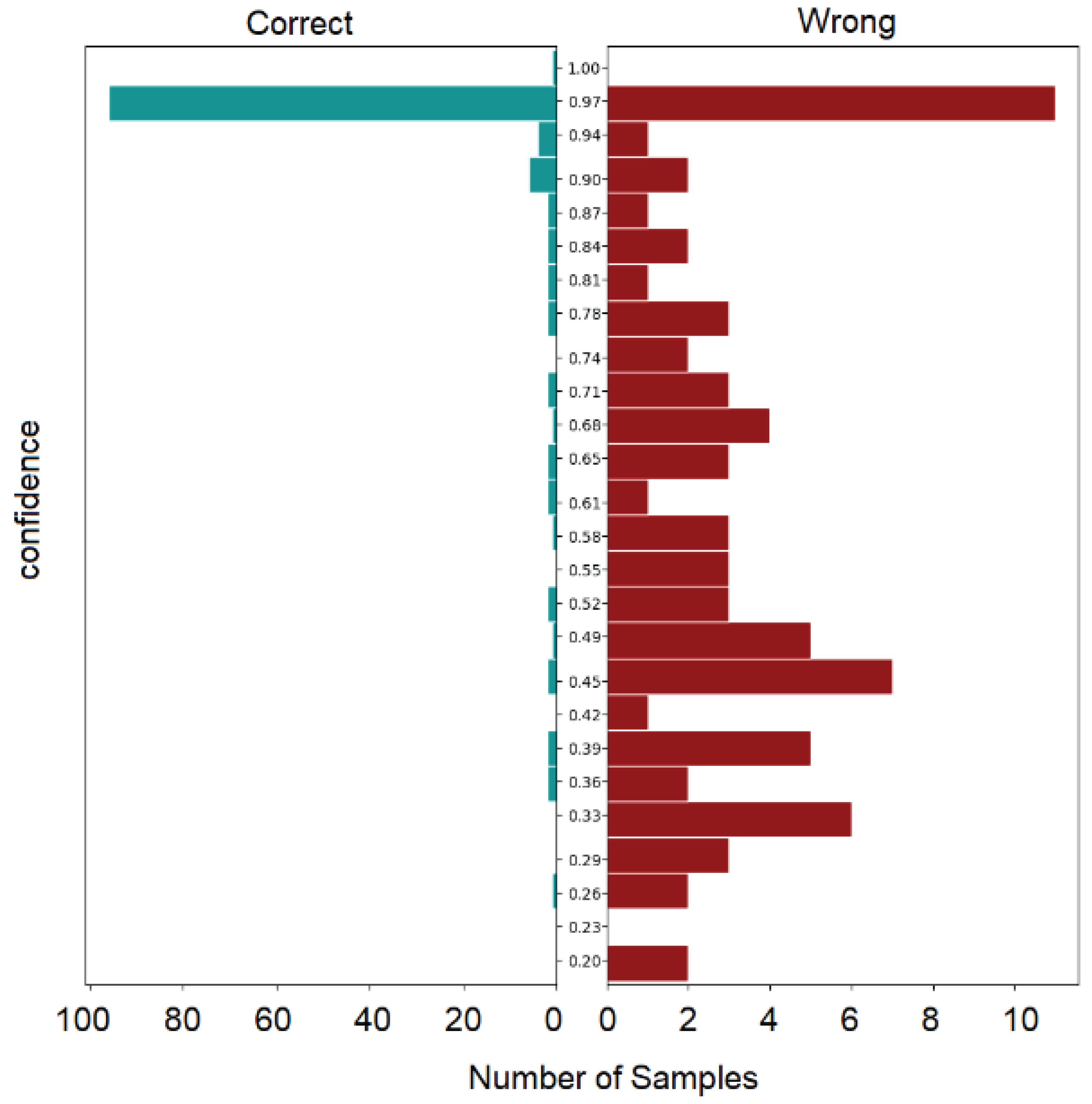

- Model Performance: The model's confidence scores span a wide range, from high confidence, such as 0.995 for correctly predicting "salut" ("hi") as "greet", to moderate confidence, such as 0.827 for classifying "je veux que la date soit demain" ("I want the date to be tomorrow") as "changerRdv". This range reflects how certain the model is about different predictions.

- Accuracy and Misclassification: The model also misclassifies some intents outright. For example, "je refuse" ("I refuse") is misclassified as "emotion_therapy" with a confidence score of 0.328. Although the low score shows the model was uncertain, the prediction is still wrong, which highlights a clear area for improvement.

- Confidence Score Distribution: The distribution of confidence scores indicates how certain the model is overall. Scores concentrated near the top of the range would indicate a high degree of certainty, whereas widely dispersed scores may signal the need for further model calibration.
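One standard way to check whether confidence scores are well calibrated is the expected calibration error (ECE): predictions are grouped into equal-width confidence bins, and the gap between average confidence and accuracy in each bin is averaged, weighted by the number of predictions in the bin. The sketch below is illustrative only. The bin count of 10 is an assumption, and the example reuses the three scores quoted above, treating the "salut" and "changerRdv" predictions as correct and the "je refuse" prediction as incorrect (the correctness of the "changerRdv" prediction is an assumption). Three predictions are far too few for a meaningful estimate; in practice the function would be applied to the full test set.

```python
from typing import Sequence


def expected_calibration_error(
    confidences: Sequence[float], correct: Sequence[bool], n_bins: int = 10
) -> float:
    """Bin-size-weighted mean gap between accuracy and confidence (equal-width bins)."""
    if len(confidences) != len(correct) or not confidences:
        raise ValueError("need non-empty sequences of equal length")
    bins: list[list[tuple[float, bool]]] = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        # Equal-width bins over [0, 1]; a confidence of exactly 1.0 falls in the last bin.
        index = min(int(conf * n_bins), n_bins - 1)
        bins[index].append((conf, ok))
    total = len(confidences)
    ece = 0.0
    for members in bins:
        if not members:
            continue  # empty bins contribute nothing
        avg_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        ece += len(members) / total * abs(accuracy - avg_conf)
    return ece


# Scores quoted in the error analysis; correctness of the changerRdv case is assumed.
scores = [0.995, 0.827, 0.328]
is_correct = [True, True, False]
print(round(expected_calibration_error(scores, is_correct), 4))  # 0.1687
```

An ECE near 0 means confidence tracks accuracy; here the large contribution comes from the wrong "je refuse" prediction, whose 0.328 confidence still overstates a 0% accuracy in its bin.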

3.4. System Integration and Deployment

- User Interaction: The application’s conversational agent has successfully assisted users in managing appointments, navigating the platform, and utilizing the diary feature through voice commands. This has been particularly beneficial for users with varying levels of technical proficiency.

- Continuous Improvement: Regular user feedback and system interaction logs are critical for ongoing refinement, ensuring the system remains responsive to user needs and operational demands.

4. Discussion

5. Conclusions

Author Contributions

References

1. Jelinek, F. Statistical Methods for Speech Recognition; MIT Press. Available online: https://mitpress.mit.edu/9780262546607/statistical-methods-for-speech-recognition/ (accessed on 9 November 2023).

2. Hinton, G.; Deng, L.; Yu, D.; Dahl, G.E.; Mohamed, A.-R.; Jaitly, N.; Senior, A.; Vanhoucke, V.; Nguyen, P.; Sainath, T.N.; et al. Deep neural networks for acoustic modeling in speech recognition: The shared views of four research groups. IEEE Signal Processing Magazine 2012, 29, 82–97.

3. Amodei, D.; et al. Deep Speech 2: End-to-end speech recognition in English and Mandarin. In Proceedings of the International Conference on Machine Learning; PMLR, 2016; pp. 173–182. Available online: http://proceedings.mlr.press/v48/amodei16.html (accessed on 1 November 2023).

4. Shawar, B.A.; Atwell, E. Chatbots: Are they really useful? Journal for Language Technology and Computational Linguistics 2007, 22, 29–49.

5. Smith, A.C.; Thomas, E.; Snoswell, C.L.; Haydon, H.; Mehrotra, A.; Clemensen, J.; Caffery, L.J. Telehealth for global emergencies: Implications for coronavirus disease 2019 (COVID-19). J Telemed Telecare 2020, 26, 309–313.

6. Greenhalgh, T.; Wherton, J.; Shaw, S.; Morrison, C. Video consultations for covid-19. BMJ 2020, 368. Available online: https://www.bmj.com/content/368/bmj.m998 (accessed on 1 November 2023).

7. Mann, D.M.; Chen, J.; Chunara, R.; Testa, P.A.; Nov, O. COVID-19 transforms health care through telemedicine: Evidence from the field. Journal of the American Medical Informatics Association 2020, 27, 1132–1135.

8. Maier, A.; Haderlein, T.; Eysholdt, U.; Rosanowski, F.; Batliner, A.; Schuster, M.; Nöth, E. PEAKS – A system for the automatic evaluation of voice and speech disorders. Speech Communication 2009, 51, 425–437.

9. Bickmore, T.W.; Pfeifer, L.M.; Paasche-Orlow, M.K. Using computer agents to explain medical documents to patients with low health literacy. Patient Education and Counseling 2009, 75, 315–320.

10. Turakhia, M.P.; Desai, M.; Hedlin, H.; Rajmane, A.; Talati, N.; Ferris, T.; Desai, S.; Nag, D.; Patel, M.; Kowey, P.; et al. Rationale and design of a large-scale, app-based study to identify cardiac arrhythmias using a smartwatch: The Apple Heart Study. American Heart Journal 2019, 207, 66–75.

11. Woebot. Available online: https://woebothealth. (accessed on 10 January 2024).

12. Vaidyam, A.N.; Wisniewski, H.; Halamka, J.D.; Keshavan, M.S.; Torous, J.B. Chatbots and conversational agents in mental health: A review of the psychiatric landscape. Can J Psychiatry 2019, 64, 456–464.

13. Inkster, B.; Sarda, S.; Subramanian, V. An empathy-driven, conversational artificial intelligence agent (Wysa) for digital mental well-being: Real-world data evaluation mixed-methods study. JMIR mHealth and uHealth 2018, 6, e12106.

14. Schachter, S.; Singer, J. Cognitive, social, and physiological determinants of emotional state. Psychological Review 1962, 69, 379.

15. Lucas, G.M.; Gratch, J.; King, A.; Morency, L.-P. It’s only a computer: Virtual humans increase willingness to disclose. Computers in Human Behavior 2014, 37, 94–100.

16. Bickmore, T.W.; Picard, R.W. Establishing and maintaining long-term human-computer relationships. ACM Trans. Comput.-Hum. Interact. 2005, 12, 293–327.

17. Aubourg, T.; Demongeot, J.; Renard, F.; Provost, H.; Vuillerme, N. Association between social asymmetry and depression in older adults: A phone Call Detail Records analysis. Scientific Reports 2019, 9, 13524.

18. Davis, S.; Mermelstein, P. Comparison of parametric representations for monosyllabic word recognition in continuously spoken sentences. IEEE Transactions on Acoustics, Speech, and Signal Processing 1980, 28, 357–366.

19. van den Oord, A.; et al. WaveNet: A generative model for raw audio. arXiv 2016, arXiv:1609.03499. Available online: http://arxiv.org/abs/1609.03499 (accessed on 1 November 2023).

20. Panayotov, V.; Chen, G.; Povey, D.; Khudanpur, S. Librispeech: An ASR corpus based on public domain audio books. In Proceedings of the 2015 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP); IEEE, 2015; pp. 5206–5210. Available online: https://ieeexplore.ieee.org/abstract/document/7178964/ (accessed on 1 November 2023).

21. Kuchaiev, O.; et al. NeMo: A toolkit for building AI applications using Neural Modules. arXiv 2019, arXiv:1909.09577. Available online: http://arxiv.org/abs/1909.09577 (accessed on 1 November 2023).

22. Graves, A.; Fernández, S.; Gomez, F.; Schmidhuber, J. Connectionist temporal classification: Labelling unsegmented sequence data with recurrent neural networks. In Proceedings of the 23rd International Conference on Machine Learning (ICML ’06); ACM Press: Pittsburgh, PA, USA, 2006; pp. 369–376.

23. Heafield, K. KenLM: Faster and smaller language model queries. In Proceedings of the Sixth Workshop on Statistical Machine Translation; 2011; pp. 187–197. Available online: https://aclanthology.org/W11-2123.pdf (accessed on 1 November 2023).

24. Chen, S.F.; Goodman, J. An empirical study of smoothing techniques for language modeling. Computer Speech & Language 1999, 13, 359–394.

25. Ardila, R.; et al. Common Voice: A massively-multilingual speech corpus. arXiv 2020, arXiv:1912.06670. Available online: http://arxiv.org/abs/1912.06670 (accessed on 1 November 2023).

26. Hirschberg, J.; Manning, C.D. Advances in natural language processing. Science 2015, 349, 261–266.

27. Reiter, E.; Dale, R. Building applied natural language generation systems. Natural Language Engineering 1997, 3, 57–87.

28. Dhiman, D.B. Artificial intelligence and voice assistant in media studies: A critical review. Available at SSRN 4250795, 2022. Available online: https://papers.ssrn.com/sol3/papers.cfm?abstract_id=4250795 (accessed on 2 November 2023).

29. Dinesh, R.S.; Surendran, R.; Kathirvelan, D.; Logesh, V. Artificial intelligence based vision and voice assistant. In Proceedings of the 2022 International Conference on Electronics and Renewable Systems (ICEARS); IEEE, 2022; pp. 1478–1483. Available online: https://ieeexplore.ieee.org/abstract/document/9751819/ (accessed on 2 November 2023).

30. Gupta, J.N.; Forgionne, G.A.; Mora, M. Intelligent Decision-Making Support Systems: Foundations, Applications and Challenges; 2007.

31. Kadali, B.; Prasad, N.; Kudav, P.; Deshpande, M. Home automation using chatbot and voice assistant. In ITM Web of Conferences; EDP Sciences, 2020; p. 01002. Available online: https://www.itm-conferences.org/articles/itmconf/abs/2020/02/itmconf_icacc2020_01002/itmconf_icacc2020_01002.html (accessed on 2 November 2023).

32. Patel, D.; Msosa, Y.J.; Wang, T.; Mustafa, O.G.; Gee, S.; Williams, J.; Roberts, A.; Dobson, R.J.; Gaughran, F. An implementation framework and a feasibility evaluation of a clinical decision support system for diabetes management in secondary mental healthcare using CogStack. BMC Med Inform Decis Mak 2022, 22, 100.

33. Chen, H.; Liu, X.; Yin, D.; Tang, J. A survey on dialogue systems: Recent advances and new frontiers. SIGKDD Explor. Newsl. 2017, 19, 25–35.

34. Vaswani, A.; et al. Attention is all you need. In Advances in Neural Information Processing Systems; 2017; Volume 30. Available online: https://proceedings.neurips.cc/paper/7181-attention-is-all (accessed on 2 November 2023).

35. Serban, I.; Sordoni, A.; Bengio, Y.; Courville, A.; Pineau, J. Building end-to-end dialogue systems using generative hierarchical neural network models. In Proceedings of the AAAI Conference on Artificial Intelligence; 2016. Available online: https://ojs.aaai.org/index.php/AAAI/article/view/9883 (accessed on 2 November 2023).

36. Cassell, J.; Bickmore, T.; Billinghurst, M.; Campbell, L.; Chang, K.; Vilhjálmsson, H.; Yan, H. Embodiment in conversational interfaces: Rea. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems: The CHI is the Limit (CHI ’99); ACM Press: Pittsburgh, PA, USA, 1999; pp. 520–527.

37. McTear, M.F. Spoken dialogue technology: Enabling the conversational user interface. ACM Comput. Surv. 2002, 34, 90–169.

38. Delorme, J.; Charvet, V.; Wartelle, M.; Lion, F.; Thuillier, B.; Mercier, S.; Soria, J.-C.; Azoulay, M.; Besse, B.; Massard, C.; et al. Natural language processing for patient selection in phase I or II oncology clinical trials. JCO Clinical Cancer Informatics 2021, 709–718.

39. Vincent, M.; Douillet, M.; Lerner, I.; Neuraz, A.; Burgun, A.; Garcelon, N. Using deep learning to improve phenotyping from clinical reports. Stud Health Technol Inform 2022, 290, 282–286.

40. Honnibal, M.; Montani, I. spaCy 2: Natural language understanding with Bloom embeddings, convolutional neural networks and incremental parsing. To appear 2017, 7, 411–420.

41. Bird, S.; Klein, E.; Loper, E. Natural Language Processing with Python; O’Reilly Media. Available online: https://www.oreilly.com/library/view/natural-language-processing/9780596803346/ (accessed on 9 November 2023).

42. Bocklisch, T.; Faulkner, J.; Pawlowski, N.; Nichol, A. Rasa: Open source language understanding and dialogue management. arXiv 2017. Available online: http://arxiv.org/abs/1712. (accessed on 2 November 2023).

43. Morris, A.C.; Maier, V.; Green, P. From WER and RIL to MER and WIL: Improved evaluation measures for connected speech recognition. In Proceedings of the Eighth International Conference on Spoken Language Processing; 2004.

44. Grinberg, M. Flask Web Development: Developing Web Applications with Python; O’Reilly Media, Inc., 2018.

| Intent | Definition | Entities | Training Examples |
|---|---|---|---|
| goodbye | User wishes to say farewell | - | 8 |
| greet | User greets the assistant | - | 8 |
| affirm | User confirms something | - | 9 |
| deny | User refuses or denies something | - | 4 |
| informApp | User seeks information about the application | - | 14 |
| informPacks | User inquires about the application’s packages | - | 17 |
| bot_challenge | User asks whether they are speaking to a bot or a human | - | 3 |
| prendreRdv | User requests an appointment | time | 41 |
| changerRdv | User asks to change their appointment | time | 14 |
| annulerRdv | User asks to cancel their appointment | - | 18 |
| raterRdv | User missed their appointment | - | 6 |
| informerRdv | User inquires about confirmed appointments | - | 11 |
| info_date | User asks for the date of the appointment | time | 9 |
| IdK | User responds with "I don’t know" | - | 3 |
| ageUser | User provides their age | age | 9 |
| raisonEmotion | User gives the reason for an undesirable emotion | - | 4 |
| entenduApp | User says how they heard about the service | - | 9 |
| emotion_therapy | User explains why they need therapy | - | 16 |
| gerer_sentiment | User describes how they manage their feelings | - | 6 |
| out_of_scope | Input that the assistant does not initially cover | - | 6 |

| Intent/Entity | Precision | Recall | F1-Score | Support | Confused With |
|---|---|---|---|---|---|
| time | 42.86% | 33.33% | 37.50% | 9 | - |
| age | 0.00% | 0.00% | 0.00% | 5 | - |
| gerer_sentiment | 50.00% | 33.33% | 40.00% | 6 | emotion_therapy (2), out_of_scope (1) |
| informerRdv | 43.75% | 63.64% | 51.85% | 11 | raterRdv (1), annulerRdv (1) |
| prendreRdv | 90.24% | 90.24% | 90.24% | 41 | informerRdv (2), out_of_scope (1) |
| emotion_therapy | 60.00% | 56.25% | 58.06% | 16 | entenduApp (2), raterRdv (1) |
| goodbye | 23.08% | 37.50% | 28.57% | 8 | greet (3), informerRdv (1) |
| raterRdv | 42.86% | 50.00% | 46.15% | 6 | emotion_therapy (2), changerRdv (1) |
| greet | 20.00% | 12.50% | 15.38% | 8 | goodbye (4), affirm (2) |
| deny | 27.27% | 42.86% | 33.33% | 7 | goodbye (2), emotion_therapy (1) |
| info_date | 66.67% | 22.22% | 33.33% | 9 | changerRdv (2), raterRdv (1) |
| informPacks | 76.19% | 94.12% | 84.21% | 17 | informApp (1) |
| affirm | 20.00% | 20.00% | 20.00% | 10 | goodbye (3), deny (3) |
| informApp | 73.33% | 78.57% | 75.86% | 14 | informPacks (3) |
| out_of_scope | 60.00% | 50.00% | 54.55% | 6 | informerRdv (1), prendreRdv (1) |
| annulerRdv | 89.47% | 94.44% | 91.89% | 18 | deny (1) |
| entenduApp | 33.33% | 11.11% | 16.67% | 9 | affirm (4), informApp (2), emotion_therapy (1) |
| changerRdv | 64.29% | 64.29% | 64.29% | 14 | informerRdv (3), info_date (1) |
| ageUser | 100.00% | 77.78% | 87.50% | 9 | affirm (1), deny (1) |
| Overall | 64.24% | 63.64% | 62.75% | 209 | - |
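The Overall row can be cross-checked against the per-intent rows. It is consistent with a support-weighted average of precision, recall, and F1 over the 17 intent rows, with the `time` and `age` entity rows excluded (the intent supports alone sum to 209). The sketch below recomputes those averages from the percentages as printed; because each per-row value is already rounded to two decimals, the results agree with the Overall row only to within a few hundredths of a percentage point. It also checks that each F1-Score is the harmonic mean 2PR/(P + R) of the row's precision and recall. The helper names are illustrative.

```python
# Intent rows of the evaluation table: (name, precision %, recall %, F1 %, support).
# The time and age entity rows are left out of the averages.
INTENT_ROWS = [
    ("gerer_sentiment", 50.00, 33.33, 40.00, 6),
    ("informerRdv", 43.75, 63.64, 51.85, 11),
    ("prendreRdv", 90.24, 90.24, 90.24, 41),
    ("emotion_therapy", 60.00, 56.25, 58.06, 16),
    ("goodbye", 23.08, 37.50, 28.57, 8),
    ("raterRdv", 42.86, 50.00, 46.15, 6),
    ("greet", 20.00, 12.50, 15.38, 8),
    ("deny", 27.27, 42.86, 33.33, 7),
    ("info_date", 66.67, 22.22, 33.33, 9),
    ("informPacks", 76.19, 94.12, 84.21, 17),
    ("affirm", 20.00, 20.00, 20.00, 10),
    ("informApp", 73.33, 78.57, 75.86, 14),
    ("out_of_scope", 60.00, 50.00, 54.55, 6),
    ("annulerRdv", 89.47, 94.44, 91.89, 18),
    ("entenduApp", 33.33, 11.11, 16.67, 9),
    ("changerRdv", 64.29, 64.29, 64.29, 14),
    ("ageUser", 100.00, 77.78, 87.50, 9),
]


def f1(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall; defined as 0 when both are 0."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def weighted_average(rows: list[tuple], column: int) -> float:
    """Support-weighted mean of one metric column (1 = precision, 2 = recall, 3 = F1)."""
    total_support = sum(row[4] for row in rows)
    return sum(row[column] * row[4] for row in rows) / total_support


print(sum(row[4] for row in INTENT_ROWS))  # 209
for label, column in (("precision", 1), ("recall", 2), ("F1", 3)):
    print(label, round(weighted_average(INTENT_ROWS, column), 3))

# Largest gap between a printed F1 and 2PR/(P + R) recomputed from printed P and R.
print(round(max(abs(f1(p, r) - f) for _, p, r, f, _ in INTENT_ROWS), 4))
```

A support-weighted average lets the large classes dominate: prendreRdv alone contributes 41 of the 209 test examples, so the Overall scores sit well above the scores of small, weak classes such as greet, affirm, and entenduApp.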

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).