3.2. Performance Comparison in Real-World Environments

To compare the accuracy of the DL models in real-world environments, we evaluated them on video footage. RGB videos recorded at a resolution of 1280×720 and 30 FPS were used as input. The selected videos included complex postures, scenes with objects resembling human figures, footage shot from a distance, and varying lighting conditions.

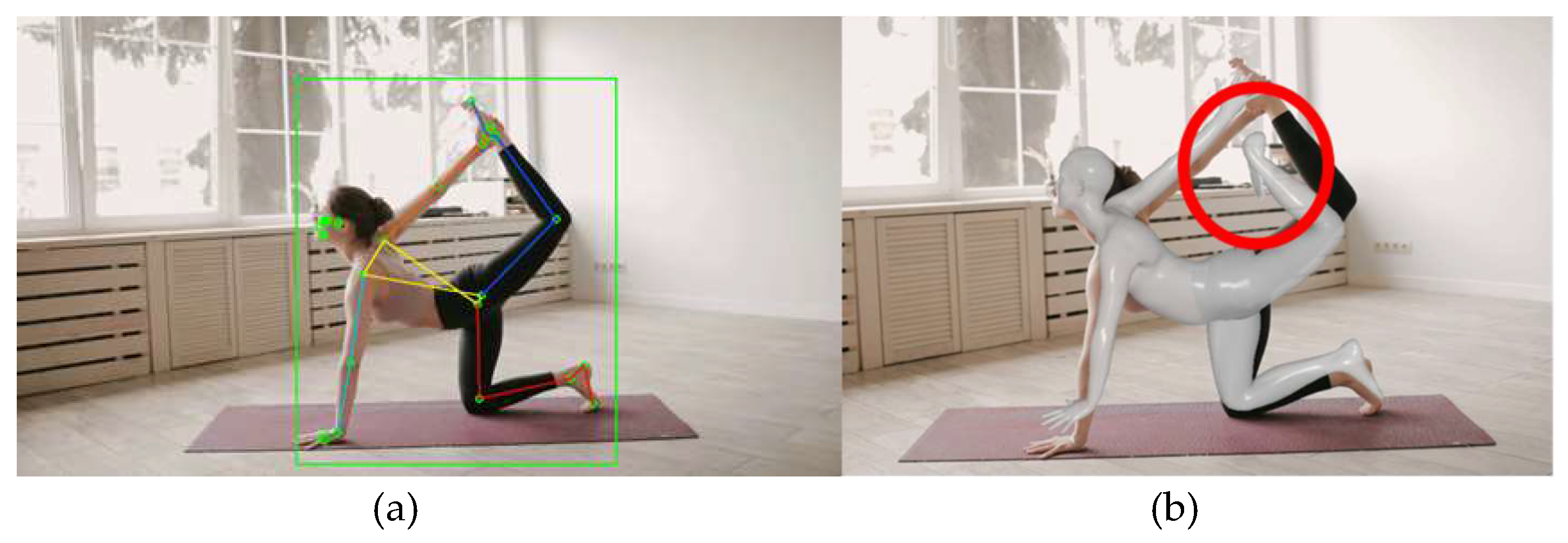

Figure 5 shows the first video, which features a complex yoga pose with intertwined joints. In Figure 5(a), the skeleton model estimated by MPP is overlaid, with right-side joints shown in shades of red and left-side joints in shades of blue; the region recognized as the person is marked with a green bounding box. Even from a single frame, it can be seen that the model correctly detects the left leg bent upward, which demonstrates the effectiveness of MPP in recognizing complex poses.

Figure 5(b) shows the estimation results of HybrIK, with the estimated SMPL model overlaid on the image. The algorithm estimates leg positions accurately within a certain range of angles, but its accuracy decreases as pose complexity increases. Its accuracy is also lower for extremities such as the feet. These observations highlight the difficulty HybrIK has with complex poses and with extremity joints.

Figure 5. Estimation results of a yoga pose: (a) MPP; (b) HybrIK.

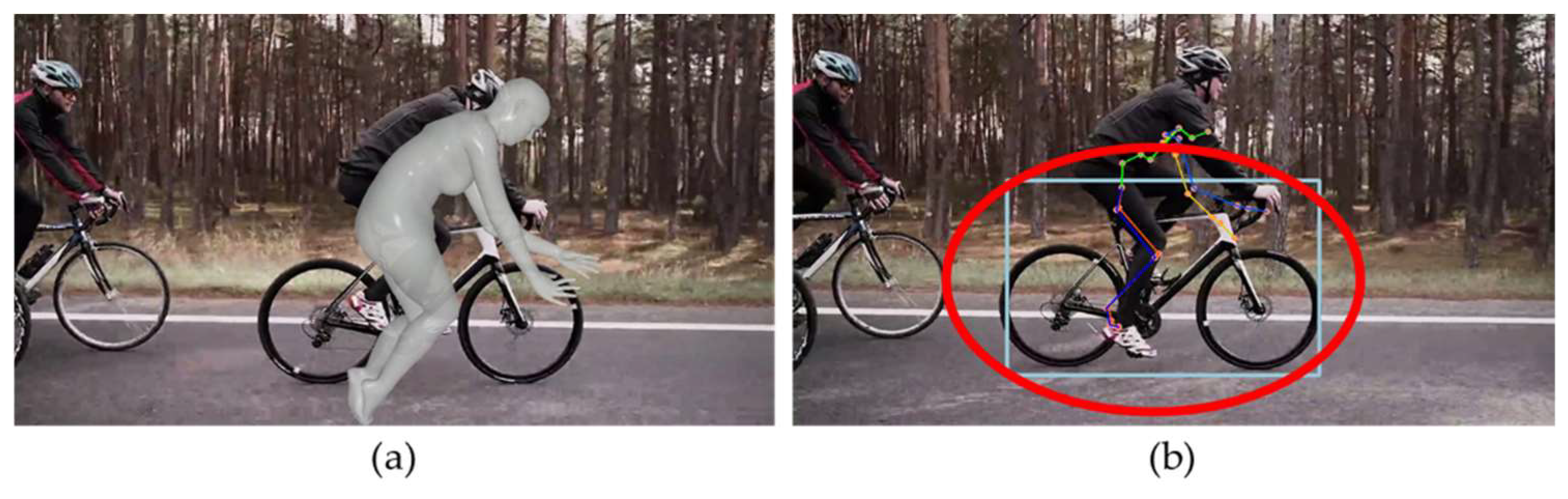

Next, we compare the estimation results for a person riding a bicycle.

Figure 6 shows a person cycling, filmed from the side; the scene contains occluded regions where some joints are hidden, as well as external objects. As shown in Figure 6(a), MPP accurately identifies the person's joints without mistaking the bicycle for a human figure. However, it occasionally produces inaccurate estimates in the occluded regions, as shown in Figure 6(b).

Figure 7 displays the estimation results of HybrIK for the same scene, in which HybrIK produces inaccurate estimates in all frames. Figure 7(a) shows that the estimated SMPL model deviates substantially from the target person. As Figure 7(b) shows, this is caused by an erroneous person-recognition region: HybrIK mistakenly identifies the bicycle as a person and attempts to estimate its 3D posture. This highlights a limitation of HybrIK in distinguishing human figures from inanimate objects in complex scenes.

The third video was shot from a distance and features a person in a pitching stance. Because of the camera angle, some of the person's joints are self-occluded. Figure 8(a) and Figure 8(b) present the estimation results of MPP and HybrIK, respectively. Both algorithms produce accurate estimates in this scenario, indicating that they can handle distance and partial occlusion when capturing the posture of a person performing a specific activity such as pitching.

The final case involves a video with varying light intensity due to shadows. In real-world environments, shadows occur due to sunlight or artificial lighting, and people often wear clothing with various patterns. This can lead to frequent and abrupt changes in the colors of RGB images.

Figure 9 presents the estimation results of MPP, which can estimate poses even when the person is seen from behind. However, left/right coordinate inversions occur frequently as the lighting changes.

Figure 10 displays the estimation results of HybrIK. Like MPP, HybrIK accurately estimates the posture of a person seen from behind. It is also more robust to changes in light intensity than MPP, providing more stable estimates under varying lighting. This suggests that HybrIK has a degree of resilience to changes in environmental lighting, which is important for practical applications in diverse real-world scenarios.

In summary, in real-world environments MPP is capable of real-time analysis and achieves high person-recognition accuracy through its method of finding regions of interest based on facial landmarks. In addition, because its dataset includes individual fitness data, it performs well on complex poses. However, MPP is sensitive to changes in light intensity and to clothing patterns in RGB images, and its depth estimates are somewhat inaccurate.

HybrIK, on the other hand, being based on the SMPL model, performs relatively better in depth estimation. In real-world settings, however, it is less accurate in person recognition and in estimating complex joint positions. Finally, neither of the open-source DL models provides 3D joint angles. Moving beyond person recognition from 2D images toward recognizing and predicting 3D human actions requires accurate estimation based on joint angles. Therefore, to improve HPE accuracy and applicability, this study further investigates the removal and correction of anomalies in the DL model outputs, together with a method that finds the angle of each joint with reference to a 3D humanoid model using an optimization method.

3.3. Improving Pose Recognition Performance

3.3.1. Improving Human Recognition Accuracy

To enhance the accuracy of DL models, improving human recognition performance is a prerequisite. In real-world environments, video data often contains multiple people and objects resembling human figures. Thus, for 3D HPE technology focusing on a single person to be effectively utilized in real environments, it is crucial to continuously recognize an individual in the video.

The comparative study revealed instances in which non-human objects were mistakenly recognized as humans. Originally, HybrIK used the fasterrcnn_resnet50_fpn algorithm [16] provided by PyTorch for fast object detection, and the detected region of interest was then input into the HybrIK model. However, this method simply selects the object with the highest recognition score and largest recognized area in the image, without restricting detection to human figures. As a result, non-human objects such as bicycles can be misidentified as humans, leading to inaccurate estimates by HybrIK. In this paper, to improve recognition accuracy, the fasterrcnn_resnet50_fpn model trained on the COCO dataset was applied to HybrIK, using the 2017 version of COCO [17]. Of the 91 categories defined in the dataset, 11 are omitted, so classification is performed over a total of 80 object categories, excluding the background. This enhancement refines the object detection process to focus specifically on human figures, reducing the likelihood of misidentifying non-human objects as people.

First, the target person for analysis is identified in the first frame. The object detector predicts far more bounding boxes (BBs) than there are actual objects. Therefore, to adopt the most accurate BB for human recognition, the following steps are taken to eliminate unnecessary BBs:

1. Remove all BBs whose confidence scores are below a certain threshold.
2. Remove all remaining BBs that are not classified as humans.
3. From the remaining BBs, keep only the one with the largest area and discard the rest.

If no BB in the first frame has a confidence score above the threshold, the threshold is lowered and the process is repeated. This ensures that the most probable human figure is selected for analysis, improving the accuracy of subsequent pose estimation.
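The first-frame selection steps above can be sketched as follows. This is a minimal sketch, not the authors' code: it assumes detections in the format returned by torchvision's Faster R-CNN models (boxes as `[x1, y1, x2, y2]`, integer labels, confidence scores), where label `1` is "person" in the COCO label map. The function name and the initial threshold, step, and floor values are hypothetical, and the retry is triggered whenever no person box survives the filters.

```python
import numpy as np

PERSON_LABEL = 1  # COCO "person" index in torchvision's detection label map (assumed output format)


def select_initial_target(boxes, labels, scores, thresh=0.9, step=0.1, min_thresh=0.1):
    """Pick the target person in the first frame (hypothetical helper).

    boxes: (K, 4) array of [x1, y1, x2, y2]; labels, scores: (K,) arrays.
    Returns the index of the chosen box, or None if no person is found.
    """
    boxes, labels, scores = map(np.asarray, (boxes, labels, scores))
    while thresh >= min_thresh:
        # Steps 1 and 2: drop low-confidence boxes and non-person boxes.
        keep = (scores >= thresh) & (labels == PERSON_LABEL)
        if keep.any():
            idx = np.flatnonzero(keep)
            widths = boxes[idx, 2] - boxes[idx, 0]
            heights = boxes[idx, 3] - boxes[idx, 1]
            # Step 3: keep only the largest remaining box.
            return int(idx[np.argmax(widths * heights)])
        thresh -= step  # nothing survived: lower the threshold and retry
    return None
```

In the demo-style call `select_initial_target(boxes, labels, scores)`, a high-scoring bicycle box is ignored because of the label filter, which is exactly the failure mode observed in Figure 7.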

Once the subject for analysis has been determined, information from the previous frame is used to keep recognizing the same target in subsequent frames. The process is as follows:

1. In the current frame, remove all BBs that are not classified as humans.
2. Calculate the Intersection over Union (IoU) between the BB recognized in the previous frame and each BB in the current frame. IoU is a common measure in object detection for assessing the similarity between two sets. It is the ratio of the intersection area of the recognized regions in the current ($B_t$) and previous ($B_{t-1}$) frames to their union area:

   $$\mathrm{IoU} = \frac{|B_t \cap B_{t-1}|}{|B_t \cup B_{t-1}|}$$

3. Finally, adopt the BB with the highest sum of confidence score and IoU score.

This approach ensures continuous and accurate tracking of the target across frames, leveraging the similarity of detected regions between consecutive frames and the reliability of the detection.
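The frame-to-frame tracking rule can be sketched in the same way. Again this is an illustrative sketch under the assumption of torchvision-style detections (label `1` = person); `iou` and `track_target` are hypothetical helper names, and boxes are axis-aligned `[x1, y1, x2, y2]` rectangles.

```python
PERSON_LABEL = 1  # assumed: COCO "person" index in torchvision's label map


def iou(a, b):
    """Intersection over Union of two [x1, y1, x2, y2] boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def track_target(prev_box, boxes, labels, scores):
    """Return the index of the current-frame box that continues the target, or None."""
    best_idx, best_val = None, float("-inf")
    for k, (box, label, score) in enumerate(zip(boxes, labels, scores)):
        if label != PERSON_LABEL:  # step 1: keep only person boxes
            continue
        # Steps 2 and 3: rank by confidence score + IoU with the previous target box.
        val = float(score) + iou(prev_box, box)
        if val > best_val:
            best_idx, best_val = k, val
    return best_idx
```

Adding the IoU term lets a slightly less confident box that overlaps the previous target win over a confident box elsewhere in the frame, which keeps the tracker locked on one person when several are visible.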

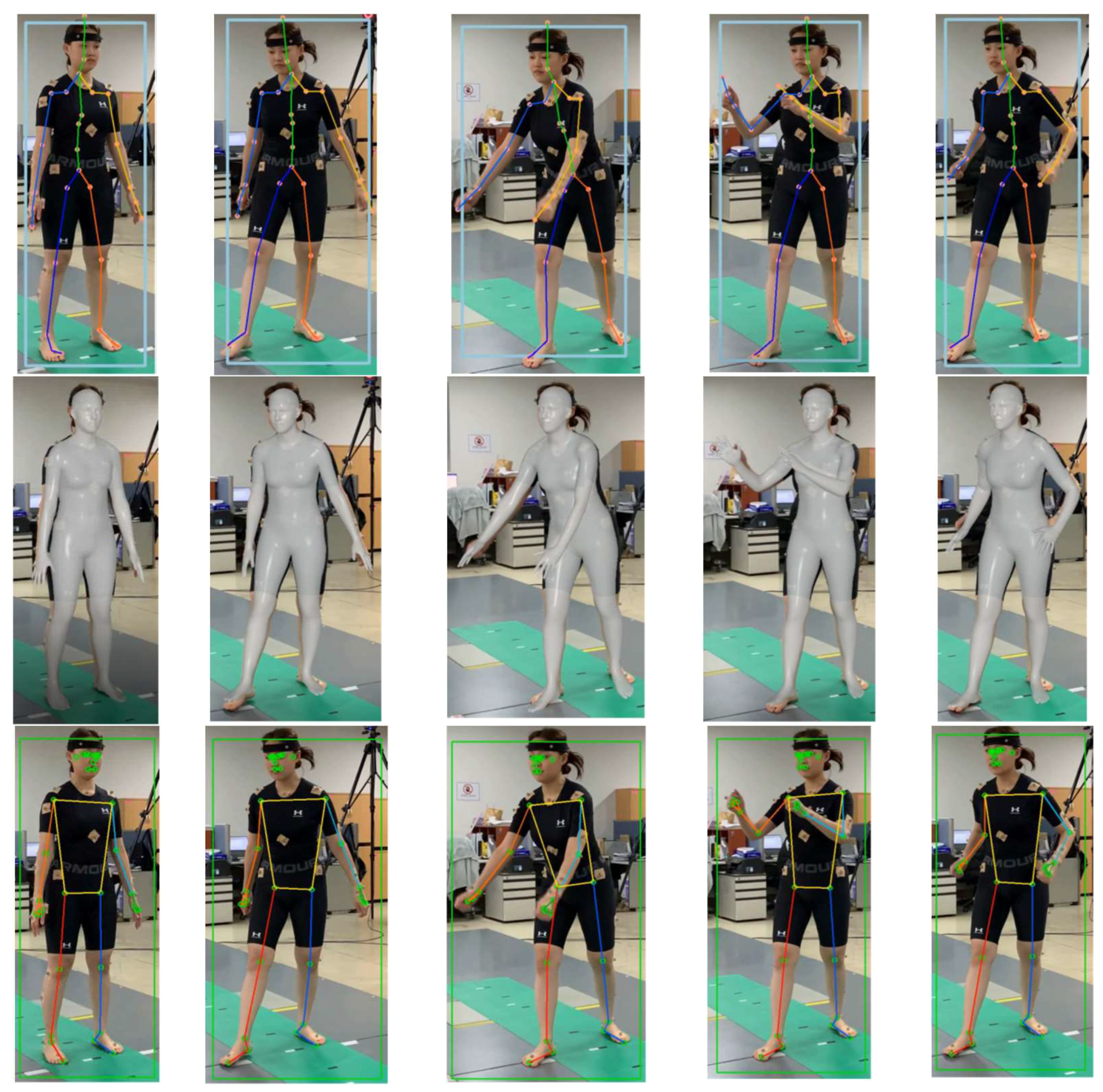

Figure 11 illustrates the results of applying the proposed human recognition algorithm to the HybrIK model, which shows an improvement in the accuracy of human pose estimation compared to previous results.

3.3.2. Detection of Outliers

In real-world environments, the problems commonly encountered in the recognized human model can be categorized as jitter, switching, and mis-detection [5]. Detecting and correcting these outliers is therefore essential. In this study, a 3D joint coordinate correction step is performed to address these typical shortcomings of DL-based human pose estimation algorithms and to improve accuracy.

When movements are captured with a single monocular camera, occlusion occurs because of the fixed field of view. Occlusion can be categorized as self-occlusion, where some joints are hidden by the person's own body, and external occlusion, where joints are hidden by external objects. Capturing the same pose from different camera positions results in different joints being hidden. In occluded regions, the DL model often produces low-confidence estimates.

Another challenging factor in pose estimation from RGB images is variation in lighting intensity. Irregular changes in lighting can lead to left/right inversion or sudden coordinate distortion. In this research, inaccurate estimates in occluded regions, which commonly occur in DL models, and left/right switching are defined as outliers and are detected and corrected. Mis-detections in occluded regions mostly involve the end joints.

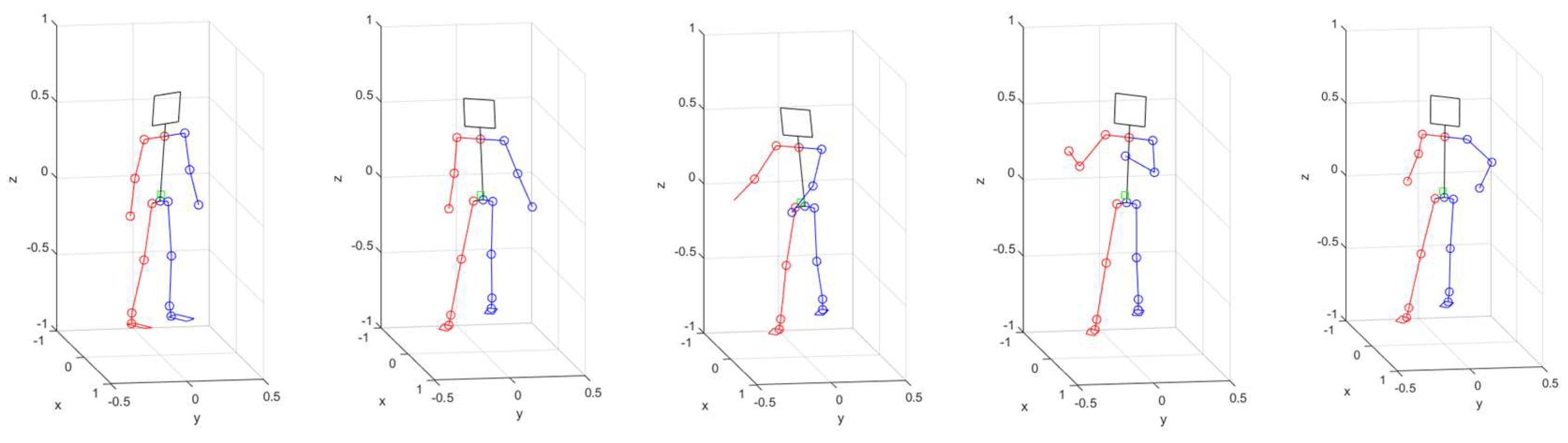

When the left and right sides are switched, the body shape is mirrored, typically about the center of the pelvis or about the joints at the centers of the shoulders and pelvis.

Figure 12 illustrates the 3D coordinate trajectory of the right shoulder in the case of switching, with the segments affected by outliers marked in red. Since this occurs across the entire body, all joints need to be adjusted in such cases.

In this study, outliers are detected from changes in the lengths of 10 major links: the shoulder and pelvis links and the left and right thighs, shins, upper arms, and lower arms. Each link length is calculated as the Euclidean distance between two joints in a 3D pixel coordinate system. Because link lengths in the pixel frame vary with the distance from the camera, we normalize them using the average heights reported by Size Korea [18], which are 160 cm for women and 175 cm for men.
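The link-length computation can be sketched as below. The joint names and indices are hypothetical (the real indices depend on the MPP or HybrIK output format), and the normalization rule is an assumption: the text does not specify how the subject's height is measured in pixel units, so `est_height` is taken as a given input (for example, a per-frame head-to-ankle distance) and lengths are rescaled so that this height maps to the Size Korea average.

```python
import numpy as np

# Hypothetical joint indices; adapt to the actual MPP/HybrIK joint order.
JOINTS = {"l_shoulder": 0, "r_shoulder": 1, "l_elbow": 2, "r_elbow": 3,
          "l_wrist": 4, "r_wrist": 5, "l_hip": 6, "r_hip": 7,
          "l_knee": 8, "r_knee": 9, "l_ankle": 10, "r_ankle": 11}

# The 10 links named in the text: shoulder, pelvis, and left/right thigh, shin, upper arm, lower arm.
LINKS = [("l_shoulder", "r_shoulder"), ("l_hip", "r_hip"),
         ("l_hip", "l_knee"), ("r_hip", "r_knee"),
         ("l_knee", "l_ankle"), ("r_knee", "r_ankle"),
         ("l_shoulder", "l_elbow"), ("r_shoulder", "r_elbow"),
         ("l_elbow", "l_wrist"), ("r_elbow", "r_wrist")]


def link_lengths(joints):
    """joints: (T, J, 3) coordinates per frame -> (T, 10) Euclidean link lengths."""
    joints = np.asarray(joints, dtype=float)
    a = np.array([JOINTS[p] for p, _ in LINKS])
    b = np.array([JOINTS[c] for _, c in LINKS])
    return np.linalg.norm(joints[:, a] - joints[:, b], axis=-1)


def normalize_lengths(lengths, est_height, ref_height_cm=175.0):
    """Rescale so the subject's estimated height (same units as the joints) maps to ref_height_cm.

    Assumption: est_height is a scalar or one value per frame; use 160.0 for women.
    """
    est_height = np.asarray(est_height, dtype=float).reshape(-1, 1)
    return np.asarray(lengths, dtype=float) * ref_height_cm / est_height
```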

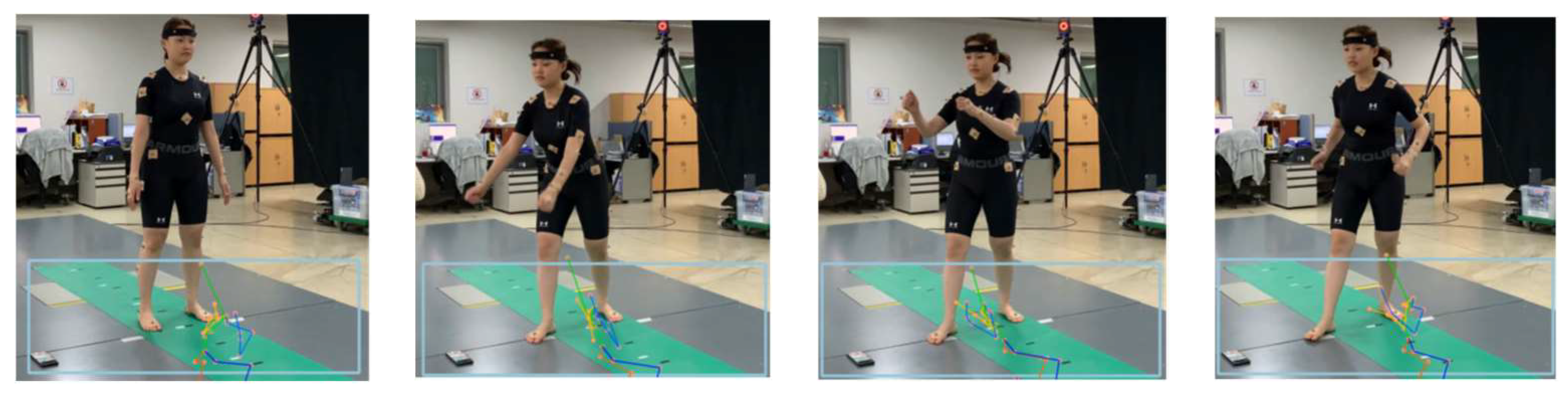

Figure 13 shows the frame-by-frame changes in the lengths of the 10 links during the walking motion shown in Figure 12. When a person performs dynamic motions, the link lengths vary linearly. The proposed algorithm therefore differentiates the length changes per frame and detects non-linear segments.

Figure 14 presents the frame-wise derivatives of the 10 link lengths shown in Figure 13; the red areas indicate left/right inversions, and the blue areas indicate partial joint mis-detections.
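The derivative-based detection can be sketched as follows. The text does not state how the differentiated lengths are thresholded or how abrupt changes are grouped into segments, so this sketch makes two labeled assumptions: a fixed jump threshold `jump_thresh` (hypothetical), and a toggle rule in which each abrupt jump either enters or leaves an abnormal segment. It also assumes the first frame is normal.

```python
import numpy as np


def detect_outlier_frames(lengths, jump_thresh):
    """Flag frames lying inside abnormal link-length segments (illustrative heuristic).

    lengths: (T, L) normalized link lengths per frame. Returns a (T,) boolean mask.
    """
    lengths = np.asarray(lengths, dtype=float)
    # Frame-to-frame derivative of every link length: shape (T-1, L).
    d = np.abs(np.diff(lengths, axis=0))
    # A transition t-1 -> t is a "jump" if any link length changes abruptly.
    jumps = np.any(d > jump_thresh, axis=1)
    mask = np.zeros(len(lengths), dtype=bool)
    inside = False  # assumption: frame 0 is a normal frame
    for t in range(1, len(lengths)):
        if jumps[t - 1]:
            inside = not inside  # entering or leaving an abnormal segment
        mask[t] = inside
    return mask
```

The toggle rule suits step-like errors such as left/right switching, which produce one jump into the wrong state and one jump back out. A missed return jump would leave the rest of the clip flagged, so a practical version would need a safeguard for that case.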

3.3.3. Outlier Correction

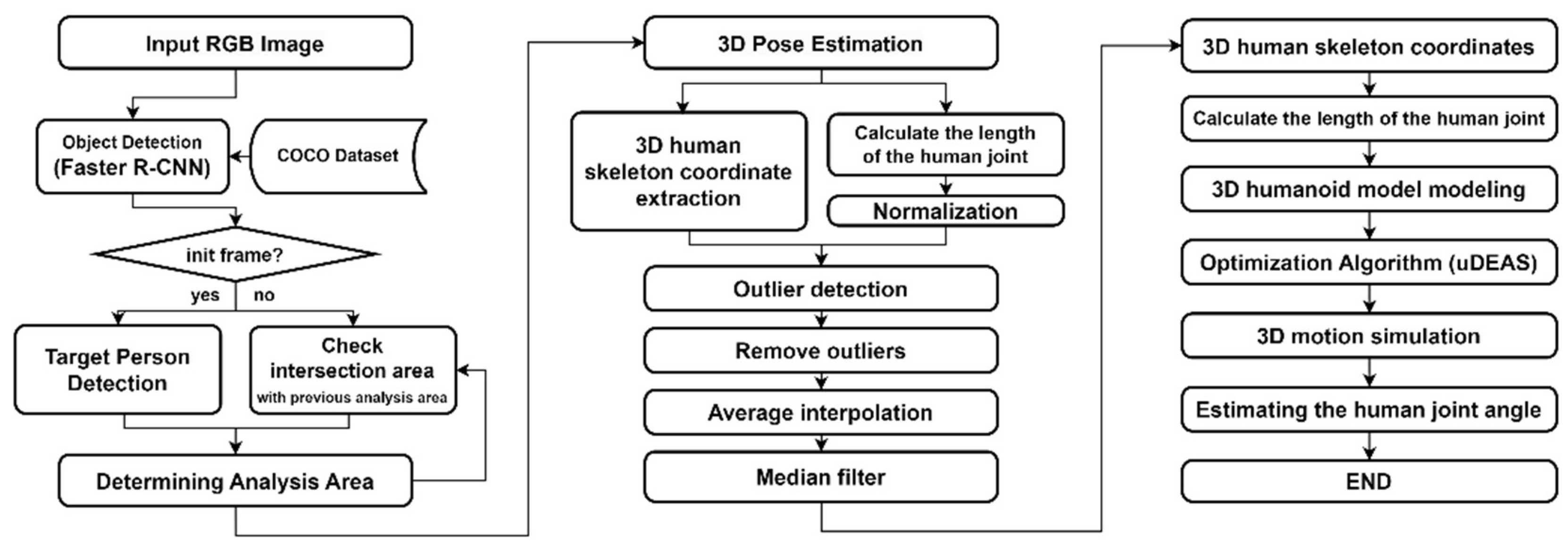

In this study, we propose an outlier detection and correction method based on the lengths of human body links; its structure is shown in Figure 15.

Figure 16 illustrates the outlier-correction process for the 3D coordinates of the right shoulder. First, the variations in the lengths of the major links are analyzed. Figure 16(a) shows the length variations of the right shoulder used to detect outliers; segments in which outliers are detected are marked in red. In Figure 16(b) and 16(c), the solid lines represent the corrected data after outlier removal, and the red dashed lines represent the original data. In Figure 16(b), the removed segments are filled by mean interpolation, in which the average of the frames immediately before and after each outlier segment is used. In Figure 16(c), a median filter is applied to smooth the corrected trajectories, which reduces the frame-to-frame errors of the DL model.
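The two correction steps can be sketched with NumPy as below. This is an illustration, not the authors' implementation: each outlier run is filled with the mean of the nearest valid frame before and after it, and the text does not say how runs at the very start or end of a clip are handled, so copying the single available neighbor there is an assumption. The kernel size of the median filter is also a hypothetical choice.

```python
import numpy as np


def mean_interpolate(traj, outlier_mask):
    """Fill each run of outlier frames with the mean of the valid frames around it.

    traj: (T,) or (T, D) trajectory; outlier_mask: (T,) booleans.
    """
    out = np.array(traj, dtype=float, copy=True)
    mask = np.asarray(outlier_mask, dtype=bool)
    T = len(out)
    t = 0
    while t < T:
        if not mask[t]:
            t += 1
            continue
        start = t
        while t < T and mask[t]:
            t += 1
        end = t  # the outlier run covers frames [start, end)
        before = out[start - 1] if start > 0 else None
        after = out[end] if end < T else None
        if before is not None and after is not None:
            fill = (before + after) / 2.0
        elif before is not None:  # run reaches the end of the clip (assumed handling)
            fill = before
        elif after is not None:   # run starts at the first frame (assumed handling)
            fill = after
        else:
            continue  # every frame is an outlier; nothing to interpolate from
        out[start:end] = fill
    return out


def median_filter(traj, kernel=5):
    """Sliding median along time (axis 0) with edge padding; kernel must be odd."""
    if kernel % 2 == 0:
        raise ValueError("kernel must be odd")
    x = np.asarray(traj, dtype=float)
    half = kernel // 2
    pad = [(half, half)] + [(0, 0)] * (x.ndim - 1)
    xp = np.pad(x, pad, mode="edge")
    return np.array([np.median(xp[t:t + kernel], axis=0) for t in range(len(x))])
```

For a 3D joint trajectory of shape `(T, 3)`, `median_filter(mean_interpolate(traj, mask))` applies the two steps in the order used in Figure 16.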

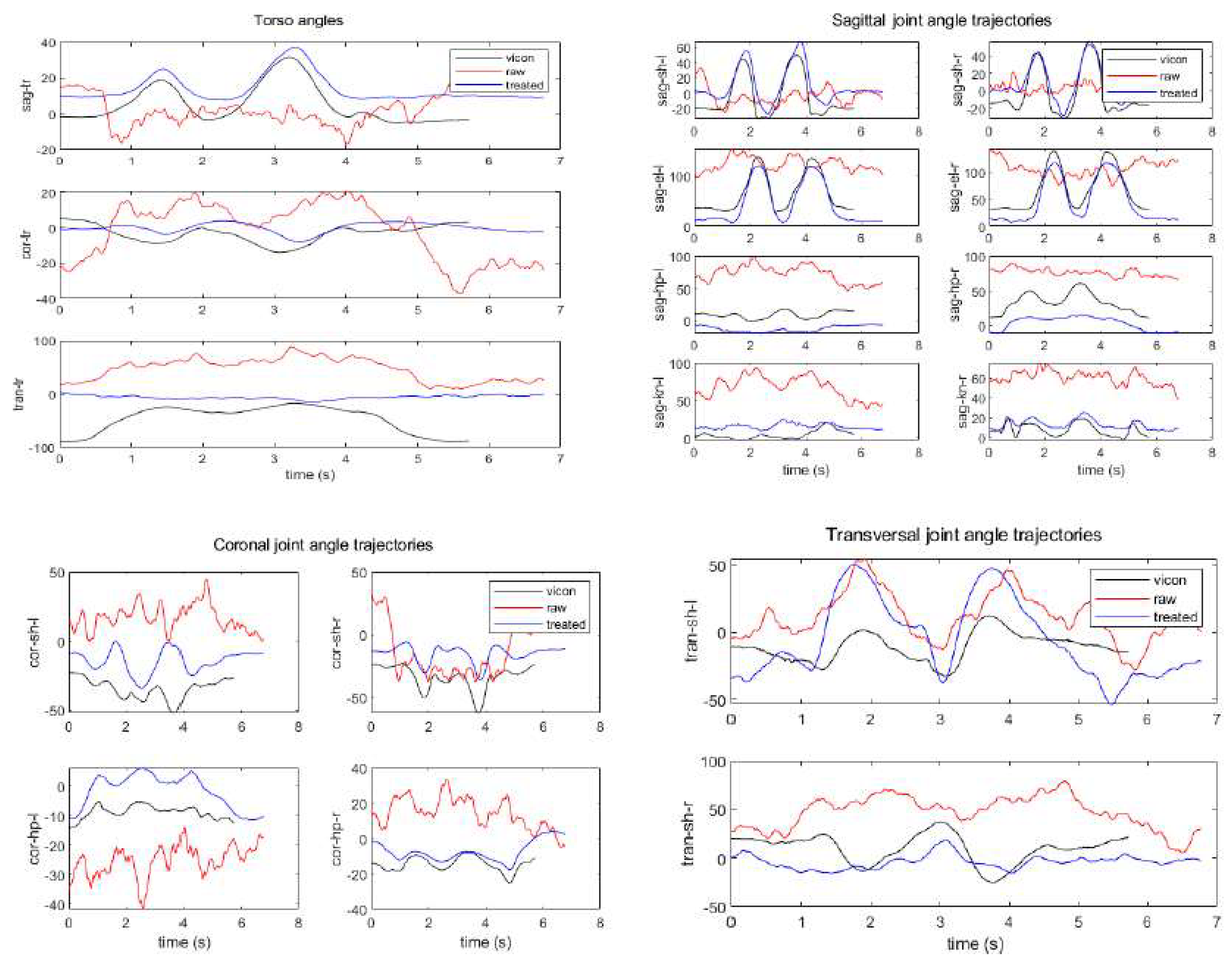

3.3.4. 3D Pose Estimation Based on Humanoid Model

To estimate the joint angle trajectories of human motion, a 3D humanoid robot model and an optimization algorithm are applied to the joint coordinate trajectories corrected by the data-processing algorithms described above. The univariate Dynamic Encoding Algorithm for Searches (uDEAS) is chosen as the optimization method because of its high speed and accuracy, as demonstrated in previous work [8].

uDEAS is a global optimization method that combines local and global search schemes by encoding real numbers as binary matrices. In the local search, a session consisting of a single bisectional search (BSS) followed by multiple unidirectional searches (UDS) is executed for each row. BSS appends a new bit at the rightmost position, and UDS increments or decrements each binary row (the encoded representation of each variable) in the direction determined by the BSS result. For the global search, uDEAS restarts the local search from random binary matrices; among the local minima found so far, the one with the lowest cost is selected as the global minimum.
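A simplified sketch of the session structure described above is shown below; it is not the reference implementation from [8]. Each variable is stored as an integer `r` whose binary row has `m` bits and is decoded as `lo + (hi - lo) * r / (2**m - 1)`. Appending a 0 or a 1 to the row gives two neighboring candidates slightly below and above the current value, so the better child fixes the UDS direction. The initial bit length, maximum bit length, number of restarts, and seed are assumed values.

```python
import random


def decode(value, n_bits, lo, hi):
    """Map an n_bits-long binary row, stored as an integer, to a real number in [lo, hi]."""
    return lo + (hi - lo) * value / (2 ** n_bits - 1)


def udeas_sketch(cost, bounds, init_bits=2, max_bits=20, restarts=5, seed=0):
    """Simplified uDEAS-style minimizer: per-row BSS + UDS sessions with random restarts."""
    rng = random.Random(seed)
    n = len(bounds)
    best_x, best_f = None, float("inf")

    def to_real(rows, lengths):
        return [decode(r, m, lo, hi) for r, m, (lo, hi) in zip(rows, lengths, bounds)]

    for _ in range(restarts):  # global search: restart from a random binary matrix
        rows = [rng.randrange(2 ** init_bits) for _ in range(n)]
        lengths = [init_bits] * n
        while lengths[0] < max_bits:  # one session per pass over the rows
            for i in range(n):  # univariate: optimize one row at a time
                # BSS: append a 0 and a 1 to row i and keep the better child.
                lengths[i] += 1
                rows[i] *= 2
                f0 = cost(to_real(rows, lengths))
                rows[i] += 1
                f1 = cost(to_real(rows, lengths))
                if f0 <= f1:
                    rows[i] -= 1
                    step, f_cur = -1, f0  # 0-child better: search downward
                else:
                    step, f_cur = 1, f1   # 1-child better: search upward
                # UDS: move row i by +/-1 in the BSS direction while the cost improves.
                top = 2 ** lengths[i] - 1
                while 0 <= rows[i] + step <= top:
                    rows[i] += step
                    f_new = cost(to_real(rows, lengths))
                    if f_new < f_cur:
                        f_cur = f_new
                    else:
                        rows[i] -= step  # undo the non-improving move
                        break
        x = to_real(rows, lengths)
        f = cost(x)
        if f < best_f:  # keep the best local minimum across restarts
            best_x, best_f = x, f
    return best_x, best_f
```

For example, `udeas_sketch(lambda v: (v[0] - 0.3) ** 2 + (v[1] + 1.2) ** 2, [(-2, 2), (-2, 2)])` converges to approximately `[0.3, -1.2]`. In the pose-estimation setting, the cost would be the model-fitting error and the bounds would be the joint-angle and size-factor ranges.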

In the present work, a candidate set of joint angle variables is fed into the humanoid model during optimization, and the model simulates a 3D pose. The objective is to find the joint angle values that minimize the Euclidean distance between each simulated joint and the corresponding measured joint.

Compared with the recent model in [8], the humanoid model has been extended to a total of 26 DoF by adding transversal shoulder joints and a coronal neck joint, as shown in Figure 17. In the figure, the variables shaded in orange represent the 17 joint angles used for HPE, and three additional variables are body angles related to the relative camera view angle; the joint angles are distinguished by whether they rotate on the sagittal, coronal, or transversal plane. To estimate arbitrary poses at any distance from the camera, a size factor that multiplies each link length is also necessary: as the camera moves away from the person, the size factor decreases, and vice versa. Therefore, the complete optimization vector for pose estimation consists of 21 variables: the 17 joint angles, the 3 body angles, and the size factor.

The cost function minimized by uDEAS is the Mean Per Joint Position Error (MPJPE) between the estimated 3D model and the fitted one, calculated as the mean Euclidean distance between the 3D joint coordinates estimated by MPP or HybrIK, $\mathbf{p}_j$, and those fitted by the 3D humanoid model in Figure 17, $\hat{\mathbf{p}}_j$:

$$\mathrm{MPJPE} = \frac{1}{N}\sum_{j=1}^{N}\left\lVert \mathbf{p}_j - \hat{\mathbf{p}}_j \right\rVert_2$$

where $N$ is the number of joints. When the two models overlap exactly, this value becomes zero.
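The MPJPE cost for a single frame can be written in a few lines of NumPy. This is a minimal sketch assuming both joint sets are given as `(N, 3)` arrays in the same coordinate frame and joint order; the function name is illustrative.

```python
import numpy as np


def mpjpe(estimated, fitted):
    """Mean Euclidean distance between corresponding joints; both arrays are (N, 3)."""
    estimated = np.asarray(estimated, dtype=float)
    fitted = np.asarray(fitted, dtype=float)
    if estimated.shape != fitted.shape:
        raise ValueError("joint arrays must have the same shape")
    return float(np.mean(np.linalg.norm(estimated - fitted, axis=-1)))
```

Inside the fitting loop, the optimizer would minimize a function such as `lambda v: mpjpe(estimated, humanoid_joints(v))`, where `humanoid_joints` is a hypothetical forward-kinematics routine that maps the 21-variable vector to the humanoid model's joint coordinates.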