Submitted: 15 January 2024

Posted: 15 January 2024


Abstract

Keywords:

1. Introduction

2. Literature Review

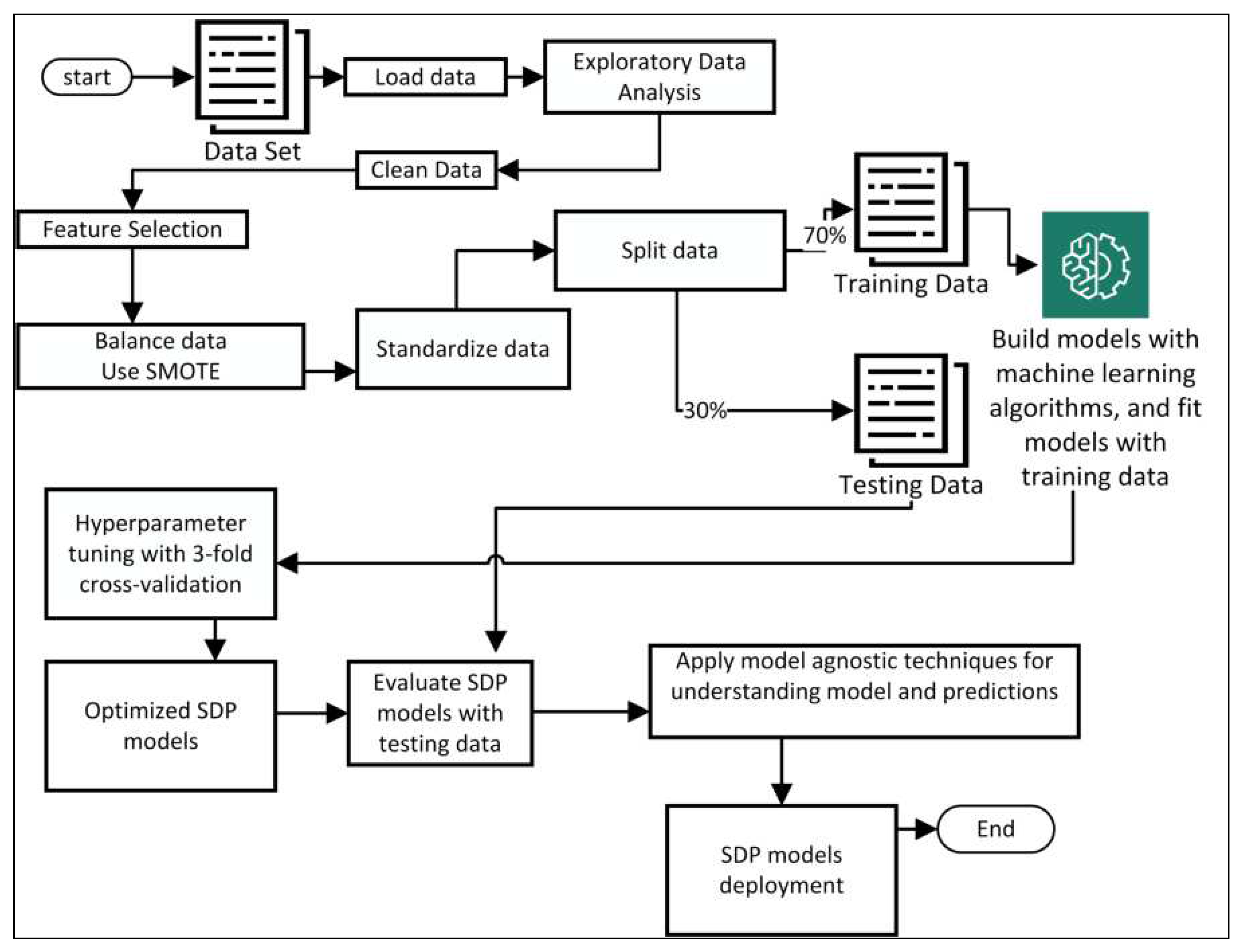

3. Methodology

3.1. Data Collection

3.2. Feature Selection and Preprocessing Steps

| Criterion | Data Cleaning Activity | Explanation |
|---|---|---|
| 1 | Cases with missing values | Instances containing one or more missing values were dropped from the dataset. |
| 2 | Cases with implausible and conflicting feature values | Instances that violated data integrity constraints were removed. The following conditions were applied: a) the total line count of code (loc) is not an integer; b) the program’s cyclomatic complexity is greater than the total number of operators plus 1 [38]; c) Halstead’s sum of total operators and operands is 0. |
| 3 | Outlier removal | Outliers are values that lie below the lower fence (Q1 - 1.5 × IQR) or above the upper fence (Q3 + 1.5 × IQR), where Q1 and Q3 are the first and third quartiles and IQR = Q3 - Q1. Records containing such values were dropped. |
| 4 | Removal of duplicates | Duplicate observations were removed from the dataset. |
| 5 | Removal of highly correlated features, except for module size and effort metrics | The correlation between independent features was calculated, and features with a correlation above 0.70 were removed in the first approach demonstrated in this study. |
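Cleaning criteria 1 to 4 can be sketched as plain filters. This is a minimal illustration, not the authors' pipeline: it assumes each module is a Python dict keyed by the NASA attribute names used in this paper (`loc`, `v(g)`, `n`, `total_Op`, ...), applies the IQR fences separately to each listed feature, and uses linear quartile interpolation (`method="inclusive"`); the helper names are hypothetical.

```python
import math
from statistics import quantiles


def has_missing(rec):
    """Criterion 1: the record has at least one missing (None or NaN) value."""
    return any(v is None or (isinstance(v, float) and math.isnan(v)) for v in rec.values())


def violates_integrity(rec):
    """Criterion 2: the three integrity checks listed in the table."""
    loc_not_integer = rec["loc"] != int(rec["loc"])          # a) non-integer line count
    complexity_too_high = rec["v(g)"] > rec["total_Op"] + 1  # b) v(g) > total operators + 1
    no_tokens = rec["n"] == 0                                # c) operators + operands == 0
    return loc_not_integer or complexity_too_high or no_tokens


def iqr_bounds(values, k=1.5):
    """Tukey fences (Q1 - k*IQR, Q3 + k*IQR) for one feature."""
    q1, _, q3 = quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    return q1 - k * iqr, q3 + k * iqr


def remove_outliers(records, features):
    """Criterion 3: drop records with any listed feature outside its fences."""
    bounds = {f: iqr_bounds([r[f] for r in records]) for f in features}
    return [r for r in records
            if all(lo <= r[f] <= hi for f, (lo, hi) in bounds.items())]


def remove_duplicates(records):
    """Criterion 4: keep only the first occurrence of each identical record."""
    seen, unique = set(), []
    for r in records:
        key = tuple(sorted(r.items()))  # hashable, order-independent fingerprint
        if key not in seen:
            seen.add(key)
            unique.append(r)
    return unique


def clean(records, features):
    """Apply criteria 1-4 in the order given in the table."""
    records = [r for r in records if not has_missing(r)]
    records = [r for r in records if not violates_integrity(r)]
    records = remove_outliers(records, features)
    return remove_duplicates(records)
```

Because duplicates are removed last, as in the table, repeated rows still influence the quartiles; swapping steps 3 and 4 would change the fences slightly.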

3.3. Applied Machine Learning Algorithms

- Support vector machine (SVM)

- K-Nearest-Neighbors (KNN)

- Random Forest Classifier (RF)

- Artificial Neural Network (ANN)
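Of the classifiers listed above, KNN [40] is simple enough to sketch directly. The following is a from-scratch illustration of the majority-vote rule under assumed settings (Euclidean distance, `k=3`); it is not the configuration used in this study, where a library implementation such as scikit-learn [37] would normally be used.

```python
import math
from collections import Counter


def euclidean(a, b):
    """Straight-line distance between two feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def knn_predict(train_X, train_y, query, k=3):
    """Label `query` by majority vote among its k nearest training points."""
    # Sort training indices by distance to the query and keep the k closest.
    nearest = sorted(range(len(train_X)), key=lambda i: euclidean(train_X[i], query))[:k]
    votes = Counter(train_y[i] for i in nearest)
    # most_common(1) returns [(label, count)]; ties go to the label counted first.
    return votes.most_common(1)[0][0]
```

Since the rule is distance-based, metrics on very different scales (for example `loc` versus Halstead effort `e`) would normally be standardized first so that no single feature dominates the distance.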

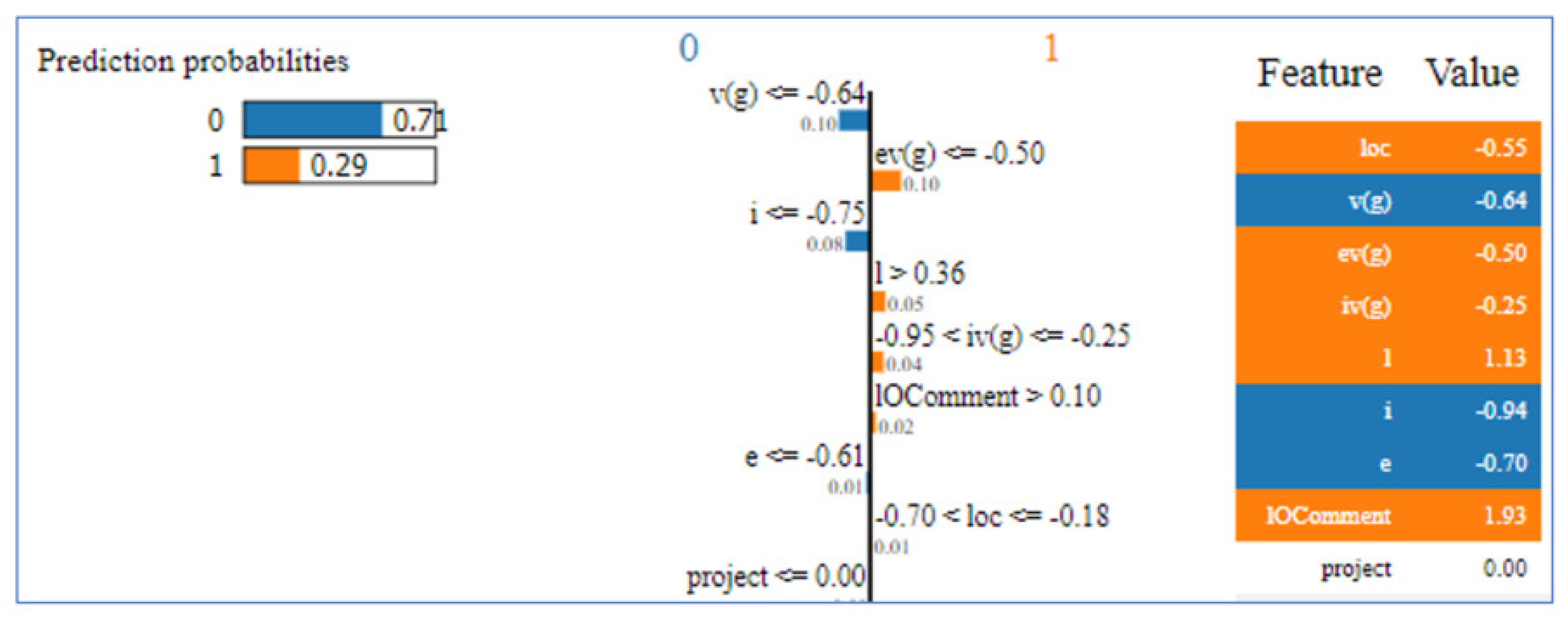

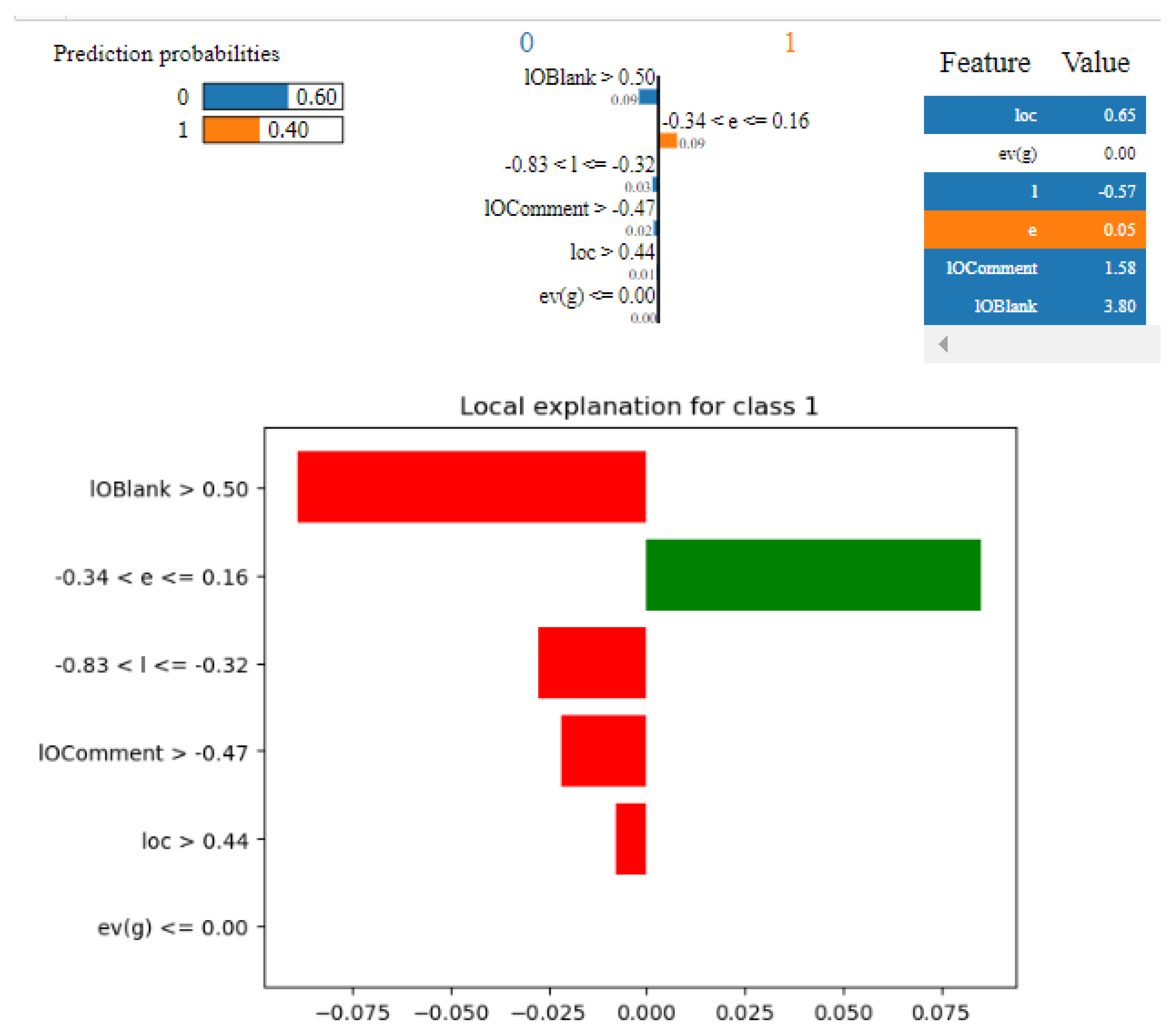

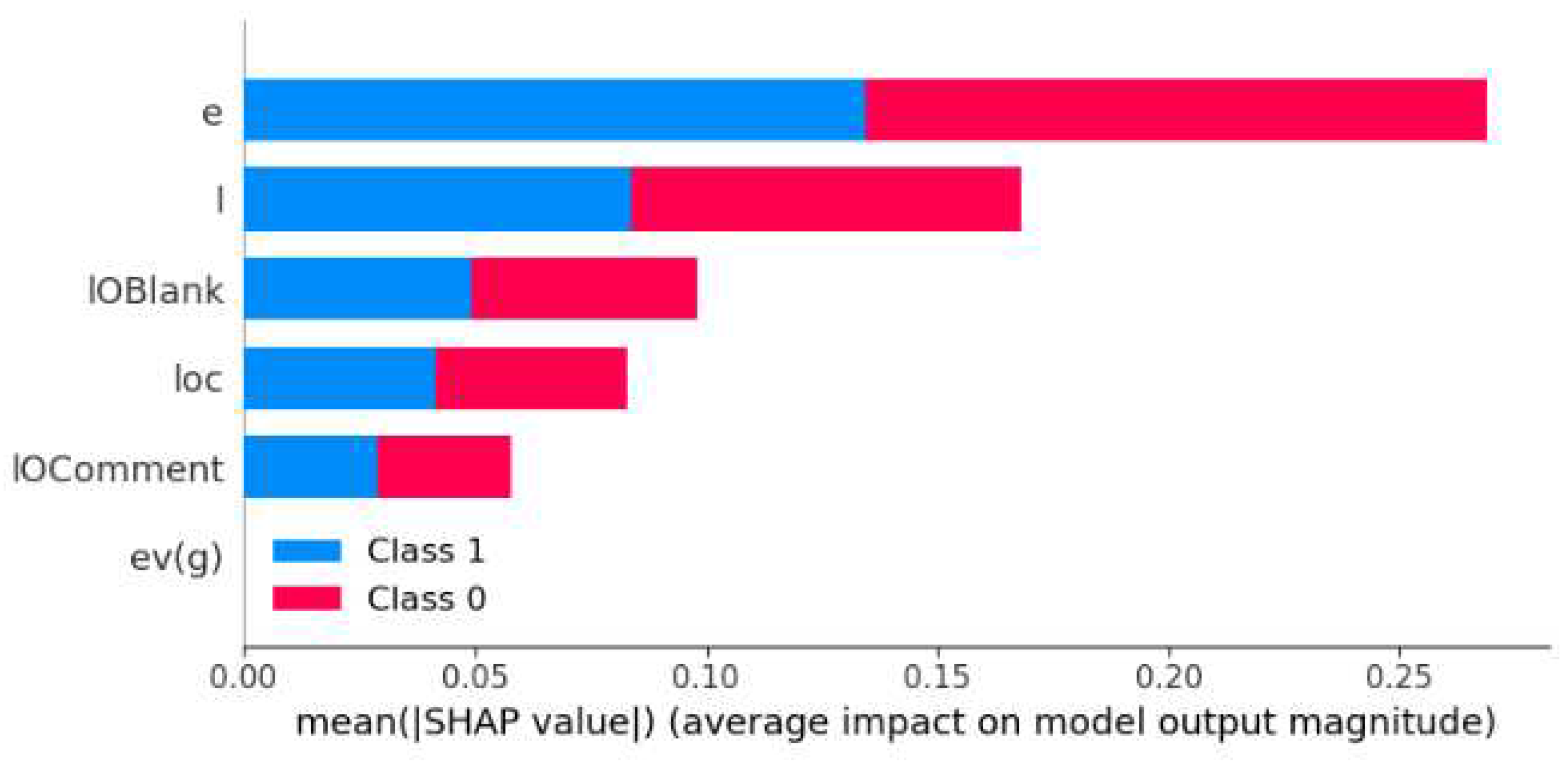

- LIME (Local Interpretable Model-agnostic Explanations)
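The core idea of LIME [46] can be sketched as follows: perturb the instance, query the black-box model, weight each perturbation by its proximity to the instance, and fit a weighted linear surrogate whose coefficients act as the local explanation. This is a simplified sketch, not the `lime` package: it assumes Gaussian perturbations on continuous features, an exponential kernel with an assumed width of 0.75·sqrt(p), and plain weighted least squares instead of the package's discretization and regularized fit.

```python
import math
import random


def solve(a, b):
    """Solve the square system a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(a[i]) + [b[i]] for i in range(n)]  # augmented matrix [a | b]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def lime_explain(predict, x, num_samples=500, scale=1.0, kernel_width=None, seed=0):
    """Return (intercept, coefficients) of a locally weighted linear
    surrogate of `predict` around instance `x`."""
    rng = random.Random(seed)
    p = len(x)
    if kernel_width is None:
        kernel_width = 0.75 * math.sqrt(p)  # assumed heuristic width
    dim = p + 1  # intercept plus one coefficient per feature
    xtwx = [[0.0] * dim for _ in range(dim)]
    xtwy = [0.0] * dim
    for _ in range(num_samples):
        # Perturb every feature with Gaussian noise around x.
        z = [xi + rng.gauss(0.0, scale) for xi in x]
        # Exponential kernel: perturbations close to x get larger weights.
        d2 = sum((zi - xi) ** 2 for zi, xi in zip(z, x))
        w = math.exp(-d2 / kernel_width ** 2)
        row = [1.0] + z
        y = predict(z)
        # Accumulate the weighted normal equations (X^T W X) beta = X^T W y.
        for i in range(dim):
            xtwy[i] += w * row[i] * y
            for j in range(dim):
                xtwx[i][j] += w * row[i] * row[j]
    beta = solve(xtwx, xtwy)
    return beta[0], beta[1:]
```

For a defect classifier, `predict` would return the predicted probability of the defective class, and the sign and size of each coefficient indicate how that metric pushes this particular module's prediction.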

3.4. Evaluation Strategy

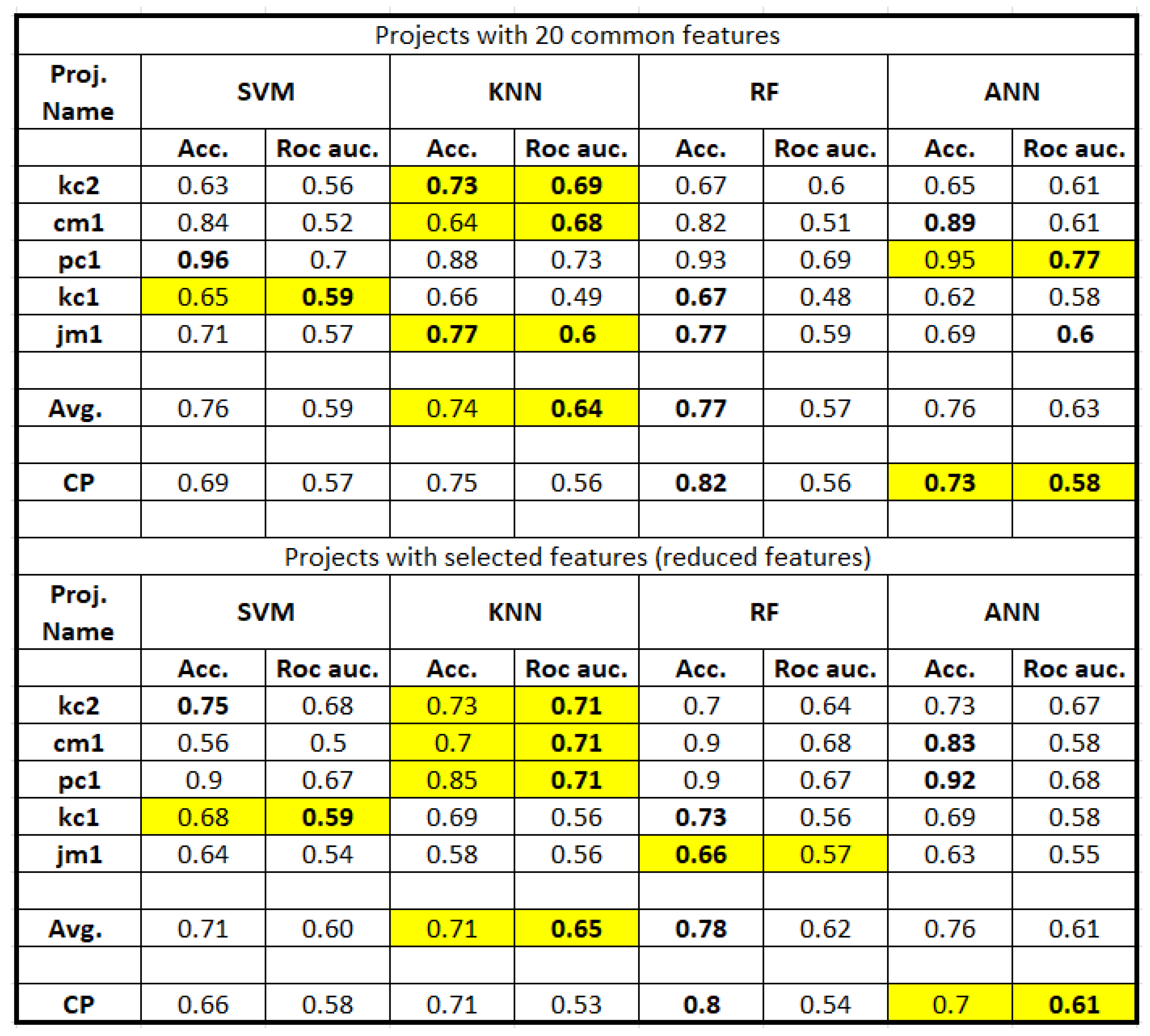

4. Results

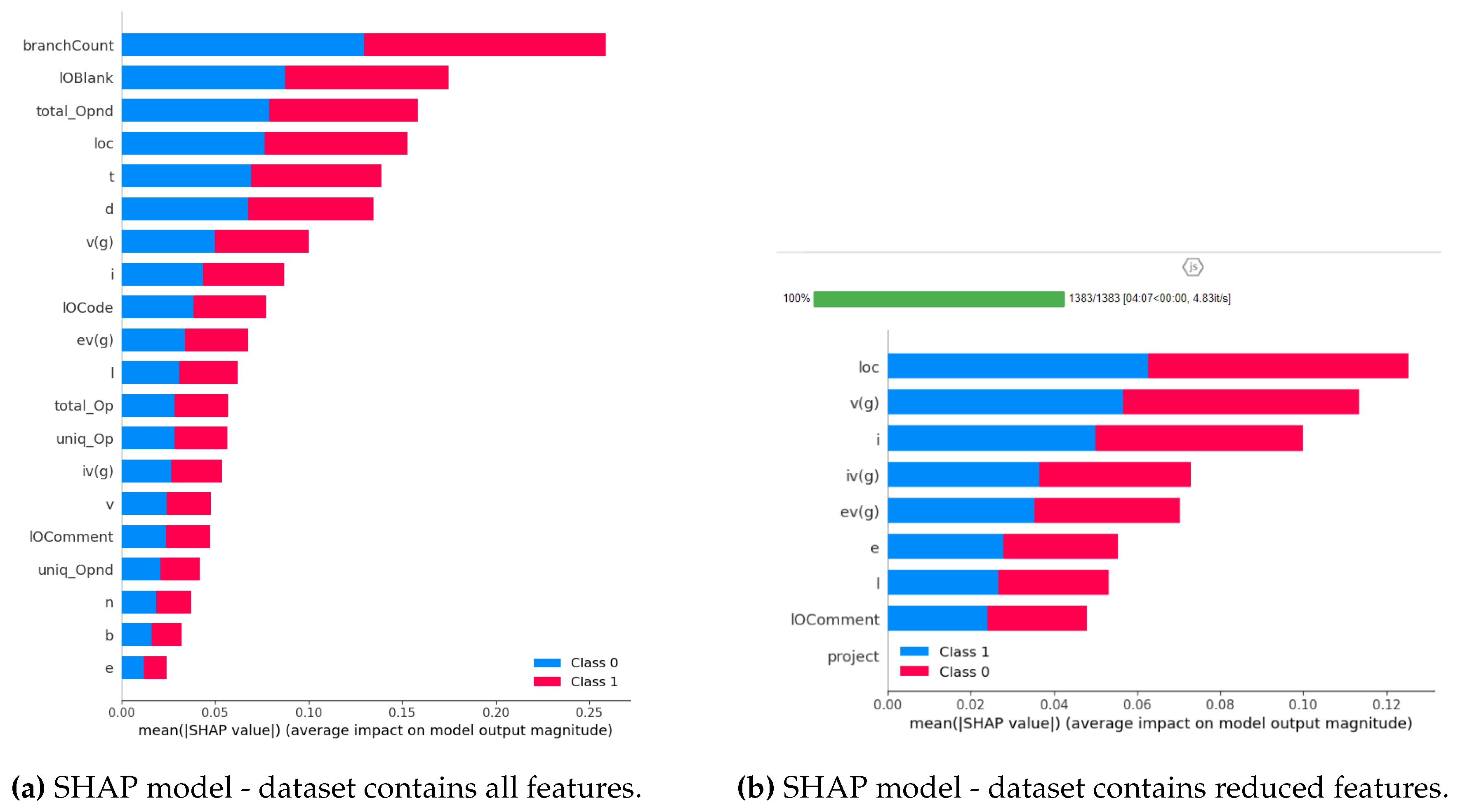

5. Analysis and Discussion

6. Threats to Validity

7. Conclusion and Future Work

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Meaning |
|---|---|
| MDPI | Multidisciplinary Digital Publishing Institute |
| LIME | Local Interpretable Model-Agnostic Explanations |
| SHAP | SHapley Additive Explanations |
| SDP | Software Defect Prediction |

References

1. Punitha, K.; Chitra, S. Software defect prediction using software metrics - A survey. 2013 International Conference on Information Communication and Embedded Systems (ICICES). IEEE, 2013, pp. 555–558.

2. Shepperd, M.; Song, Q.; Sun, Z.; Mair, C. Data quality: Some comments on the NASA software defect datasets. IEEE Transactions on Software Engineering 2013, 39, 1208–1215.

3. Li, Z.; Jing, X.Y.; Zhu, X. Progress on approaches to software defect prediction. IET Software 2018, 12, 161–175.

4. He, P.; Li, B.; Liu, X.; Chen, J.; Ma, Y. An empirical study on software defect prediction with a simplified metric set. Information and Software Technology 2015, 59, 170–190.

5. Balogun, A.O.; Basri, S.; Mahamad, S.; Abdulkadir, S.J.; Capretz, L.F.; Imam, A.A.; Almomani, M.A.; Adeyemo, V.E.; Kumar, G. Empirical analysis of rank aggregation-based multi-filter feature selection methods in software defect prediction. Electronics 2021, 10, 179.

6. Ghotra, B.; McIntosh, S.; Hassan, A.E. A large-scale study of the impact of feature selection techniques on defect classification models. 2017 IEEE/ACM 14th International Conference on Mining Software Repositories (MSR). IEEE, 2017, pp. 146–157.

7. Haldar, S.; Capretz, L.F. Explainable Software Defect Prediction from Cross Company Project Metrics using Machine Learning. 2023 7th International Conference on Intelligent Computing and Control Systems (ICICCS). IEEE, 2023, pp. 150–157.

8. Aleem, S.; Capretz, L.; Ahmed, F. Benchmarking Machine Learning Techniques for Software Defect Detection. International Journal of Software Engineering & Applications 2015, 6, 11–23.

9. Aydin, Z.B.G.; Samli, R. Performance Evaluation of Some Machine Learning Algorithms in NASA Defect Prediction Data Sets. 2020 5th International Conference on Computer Science and Engineering (UBMK). IEEE, 2020, pp. 1–3.

10. Menzies, T.; Greenwald, J.; Frank, A. Data mining static code attributes to learn defect predictors. IEEE Transactions on Software Engineering 2007, 33, 2–13.

11. Nassif, A.B.; Ho, D.; Capretz, L.F. Regression model for software effort estimation based on the use case point method. 2011 International Conference on Computer and Software Modeling, 2011, Vol. 14, pp. 106–110.

12. Goyal, S. Effective software defect prediction using support vector machines (SVMs). International Journal of System Assurance Engineering and Management 2022, 13, 681–696.

13. Ryu, D.; Jang, J.I.; Baik, J. A hybrid instance selection using nearest-neighbor for cross-project defect prediction. Journal of Computer Science and Technology 2015, 30, 969–980.

14. Thapa, S.; Alsadoon, A.; Prasad, P.; Al-Dala’in, T.; Rashid, T.A. Software Defect Prediction Using Atomic Rule Mining and Random Forest. 2020 5th International Conference on Innovative Technologies in Intelligent Systems and Industrial Applications (CITISIA), 2020, pp. 1–8.

15. Jayanthi, R.; Florence, L. Software defect prediction techniques using metrics based on neural network classifier. Cluster Computing 2019, 22, 77–88.

16. Fan, G.; Diao, X.; Yu, H.; Yang, K.; Chen, L.; et al. Software defect prediction via attention-based recurrent neural network. Scientific Programming 2019, 2019, 1–14.

17. Tang, Y.; Dai, Q.; Yang, M.; Du, T.; Chen, L. Software defect prediction ensemble learning algorithm based on adaptive variable sparrow search algorithm. International Journal of Machine Learning and Cybernetics 2023, pp. 1–21.

18. Balasubramaniam, S.; Gollagi, S.G. Software defect prediction via optimal trained convolutional neural network. Advances in Engineering Software 2022, 169, 103138.

19. Bai, J.; Jia, J.; Capretz, L.F. A three-stage transfer learning framework for multi-source cross-project software defect prediction. Information and Software Technology 2022, 150, 106985.

20. Cao, Q.; Sun, Q.; Cao, Q.; Tan, H. Software defect prediction via transfer learning based neural network. 2015 First International Conference on Reliability Systems Engineering (ICRSE). IEEE, 2015, pp. 1–10.

21. Joon, A.; Kumar Tyagi, R.; Kumar, K. Noise Filtering and Imbalance Class Distribution Removal for Optimizing Software Fault Prediction using Best Software Metrics Suite. 2020 5th International Conference on Communication and Electronics Systems (ICCES), 2020, pp. 1381–1389.

22. Aggarwal, C.C. An introduction to outlier analysis; Springer, 2017.

23. Chawla, N.V.; Bowyer, K.W.; Hall, L.O.; Kegelmeyer, W.P. SMOTE: Synthetic minority over-sampling technique. Journal of Artificial Intelligence Research 2002, 16, 321–357.

24. Balogun, A.; Basri, S.; Jadid Abdulkadir, S.; Adeyemo, V.; Abubakar Imam, A.; Bajeh, A. Software defect prediction: Analysis of class imbalance and performance stability. Journal of Engineering Science and Technology 2019, 14, 3294–3308.

25. Pelayo, L.; Dick, S. Applying novel resampling strategies to software defect prediction. NAFIPS 2007 - 2007 Annual Meeting of the North American Fuzzy Information Processing Society. IEEE, 2007, pp. 69–72.

26. Dipa, W.A.; Sunindyo, W.D. Software Defect Prediction Using SMOTE and Artificial Neural Network. 2021 International Conference on Data and Software Engineering (ICoDSE), 2021, pp. 1–4.

27. Yedida, R.; Menzies, T. On the value of oversampling for deep learning in software defect prediction. IEEE Transactions on Software Engineering 2021, 48, 3103–3116.

28. Chen, D.; Chen, X.; Li, H.; Xie, J.; Mu, Y. DeepCPDP: Deep learning based cross-project defect prediction. IEEE Access 2019, 7, 184832–184848.

29. Altland, H.W. Regression analysis: statistical modeling of a response variable, 1999.

30. Yang, X.; Wen, W. Ridge and Lasso Regression Models for Cross-Version Defect Prediction. IEEE Transactions on Reliability 2018, 67, 885–896.

31. Gezici, B.; Tarhan, A.K. Explainable AI for Software Defect Prediction with Gradient Boosting Classifier. 2022 7th International Conference on Computer Science and Engineering (UBMK). IEEE, 2022, pp. 1–6.

32. Jiarpakdee, J.; Tantithamthavorn, C.K.; Grundy, J. Practitioners’ Perceptions of the Goals and Visual Explanations of Defect Prediction Models. 2021 IEEE/ACM 18th International Conference on Mining Software Repositories (MSR), 2021, pp. 432–443.

33. Sayyad Shirabad, J.; Menzies, T.J. The PROMISE Repository of Software Engineering Databases. School of Information Technology and Engineering 2005.

34. Thant, M.W.; Aung, N.T.T. Software defect prediction using hybrid approach. 2019 International Conference on Advanced Information Technologies (ICAIT). IEEE, 2019, pp. 262–267.

35. Rajnish, K.; Bhattacharjee, V.; Chandrabanshi, V. Applying Cognitive and Neural Network Approach over Control Flow Graph for Software Defect Prediction. 2021 Thirteenth International Conference on Contemporary Computing (IC3-2021), 2021, pp. 13–17.

36. Jindal, R.; Malhotra, R.; Jain, A. Software defect prediction using neural networks. Proceedings of 3rd International Conference on Reliability, Infocom Technologies and Optimization. IEEE, 2014, pp. 1–6.

37. Pedregosa, F.; Varoquaux, G.; Gramfort, A.; Michel, V.; Thirion, B.; Grisel, O.; Blondel, M.; Prettenhofer, P.; Weiss, R.; Dubourg, V.; et al. Scikit-learn: Machine learning in Python. Journal of Machine Learning Research 2011, 12, 2825–2830.

38. Gray, D.; Bowes, D.; Davey, N.; Sun, Y.; Christianson, B. The misuse of the NASA metrics data program data sets for automated software defect prediction. 15th Annual Conference on Evaluation & Assessment in Software Engineering (EASE 2011), 2011, pp. 96–103.

39. Shan, C.; Chen, B.; Hu, C.; Xue, J.; Li, N. Software defect prediction model based on LLE and SVM. IET Conference Publications 2014, 2014.

40. Cover, T.; Hart, P. Nearest neighbor pattern classification. IEEE Transactions on Information Theory 1967, 13, 21–27.

41. Nasser, A.B.; Ghanem, W.; Abdul-Qawy, A.S.H.; Ali, M.A.H.; Saad, A.M.; Ghaleb, S.A.A.; Alduais, N. A Robust Tuned K-Nearest Neighbours Classifier for Software Defect Prediction. Proceedings of the 2nd International Conference on Emerging Technologies and Intelligent Systems; Al-Sharafi, M.A., Al-Emran, M., Al-Kabi, M.N., Shaalan, K., Eds.; Springer International Publishing: Cham, 2023; pp. 181–193.

42. Breiman, L. Random forests. Machine Learning 2001, 45, 5–32.

43. Soe, Y.N.; Santosa, P.I.; Hartanto, R. Software defect prediction using random forest algorithm. 2018 12th South East Asian Technical University Consortium (SEATUC). IEEE, 2018, Vol. 1, pp. 1–5.

44. Biecek, P.; Burzykowski, T. Local interpretable model-agnostic explanations (LIME). In Explanatory Model Analysis; Chapman and Hall/CRC: New York, NY, USA, 2021; pp. 107–123.

45. Lundberg, S.M.; Lee, S.I. A unified approach to interpreting model predictions. Advances in Neural Information Processing Systems 2017, 30.

46. Ribeiro, M.T.; Singh, S.; Guestrin, C. "Why should I trust you?" Explaining the predictions of any classifier. Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, 2016, pp. 1135–1144.

47. Esteves, G.; Figueiredo, E.; Veloso, A.; Viggiato, M.; Ziviani, N. Understanding machine learning software defect predictions. Automated Software Engineering 2020, 27, 369–392.

| File name | No. of observations | Programming language | Predictors | Defect ratio |
|---|---|---|---|---|
| cm1 | 498 | C | 21 | 9.8% |
| kc2 | 512 | C++ | 21 | 28.0% |
| pc1 | 1109 | C | 21 | 7.3% |
| kc1 | 2109 | C++ | 21 | 26.0% |
| jm1 | 10885 | C | 21 | 19.3% |

| Feature name | Description | Type |
|---|---|---|
| loc | McCabe’s line count of code | float64 |
| l | Halstead program length | float64 |
| e | Halstead effort | float64 |
| lOComment | Halstead’s count of lines of comments | float64 |
| v(g) | McCabe’s cyclomatic complexity | float64 |
| ev(g) | McCabe’s essential complexity | float64 |
| iv(g) | McCabe’s design complexity | float64 |
| n | Halstead total operators + operands | float64 |
| v | Halstead volume | float64 |
| d | Halstead program difficulty | float64 |
| i | Halstead intelligence | float64 |
| b | Halstead’s estimate of delivered bugs | float64 |
| t | Halstead’s time estimator | float64 |
| lOCode | Halstead’s line count | float64 |
| lOBlank | Halstead’s count of blank lines | float64 |
| locCodeAndComment | Count of lines containing both code and comments | float64 |
| uniq_Op | Unique operators | float64 |
| uniq_Opnd | Unique operands | float64 |
| total_Op | Total operators | float64 |
| total_Opnd | Total operands | float64 |
| branchCount | Total branches in program | float64 |
| Defects | {false, true}: non-defective/defective module | Category |
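Several Halstead features in the table are derived from the four base counts `uniq_Op` (η1), `uniq_Opnd` (η2), `total_Op` (N1) and `total_Opnd` (N2). The sketch below uses the textbook Halstead definitions; it is an assumption that the NASA tooling used exactly these variants (for instance, delivered bugs is sometimes computed as E^(2/3)/3000 rather than V/3000), so the values may not match the dataset columns bit for bit.

```python
import math


def halstead(uniq_op, uniq_opnd, total_op, total_opnd):
    """Textbook Halstead measures from the four base counts (assumed variants)."""
    vocabulary = uniq_op + uniq_opnd                       # eta = eta1 + eta2
    length = total_op + total_opnd                         # N = N1 + N2 (cf. column "n")
    volume = length * math.log2(vocabulary)                # V = N * log2(eta) (cf. "v")
    difficulty = (uniq_op / 2) * (total_opnd / uniq_opnd)  # D = (eta1 / 2) * (N2 / eta2) (cf. "d")
    effort = difficulty * volume                           # E = D * V (cf. "e")
    return {
        "length": length,
        "volume": volume,
        "difficulty": difficulty,
        "level": 1 / difficulty,            # program level L = 1 / D
        "intelligence": volume / difficulty,  # intelligence content I = V / D (cf. "i")
        "effort": effort,
        "time": effort / 18,                # seconds, Stroud number 18 (cf. "t")
        "bugs": volume / 3000,              # delivered bugs B = V / 3000 (cf. "b")
    }
```

For example, a module with 4 unique operators, 4 unique operands, 8 operator occurrences and 8 operand occurrences has N = 16 and V = 16 · log2(8) = 48.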

| Project name | Features | Total samples | Cleaned samples | Regular | SMOTE |
|---|---|---|---|---|---|
| kc2 | 5 | 522 | 198 | 30, 108 | 108, 108 |
| cm1 | 7 | 498 | 289 | 20, 182 | 182, 182 |
| pc1 | 7 | 1109 | 601 | 23, 397 | 23, 397 |
| kc1 | 6 | 2109 | 718 | 116, 386 | 386, 386 |
| jm1 | 5 | 10885 | 4574 | 509, 2692 | 2692, 2692 |
| CP | 9 | 15123 | 4608 | 491, 2734 | 2734, 2734 |
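The SMOTE column reflects oversampling of the minority class following Chawla et al. [23]: each synthetic sample is placed at a random point on the segment between a minority sample and one of its k nearest minority-class neighbours. The sketch below is a simplified illustration, not the implementation used in this study; it picks base samples at random rather than cycling through every minority sample, and the default `k=5` and helper names are assumptions.

```python
import math
import random


def smote(minority, n_synthetic, k=5, seed=0):
    """Create n_synthetic points by interpolating between a random minority
    sample and one of its k nearest minority-class neighbours."""
    if len(minority) < 2:
        raise ValueError("SMOTE needs at least two minority samples")
    rng = random.Random(seed)
    k = min(k, len(minority) - 1)  # at most len - 1 neighbours exist
    synthetic = []
    for _ in range(n_synthetic):
        i = rng.randrange(len(minority))
        base = minority[i]
        others = [m for j, m in enumerate(minority) if j != i]
        neighbours = sorted(others, key=lambda m: math.dist(base, m))[:k]
        neighbour = rng.choice(neighbours)
        gap = rng.random()  # uniform in [0, 1)
        # The new point lies on the segment from base towards neighbour.
        synthetic.append([b + gap * (nb - b) for b, nb in zip(base, neighbour)])
    return synthetic


def balance(minority, majority_size, k=5, seed=0):
    """Oversample the minority class until it matches majority_size."""
    return minority + smote(minority, majority_size - len(minority), k, seed)
```

Reading the kc2 row as 30 defective and 108 non-defective modules, `balance(defective_rows, 108)` would add 78 synthetic defective modules to reach the 108, 108 split shown in the SMOTE column; `defective_rows` is a hypothetical name for the minority-class feature vectors.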

| File Name | Selected Features |
|---|---|
| kc2 | loc, ev(g), l, e, lOComment |
| cm1 | loc, ev(g), l, e, lOCode, lOComment, lOBlank |
| pc1 | loc, v(g), ev(g), l, e, lOComment, lOBlank |
| kc1 | loc, ev(g), l, e, lOComment, lOBlank |
| jm1 | loc, l, i, e, lOComment |
| Cross Project | loc, v(g), ev(g), iv(g), l, i, e, lOComment, project |
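The selected feature sets follow criterion 5 of the cleaning table: features correlated above 0.70 are dropped, while module size (`loc`) and effort (`e`) are always retained. One possible greedy implementation is sketched below. The use of absolute Pearson correlation, the order in which features are visited, and the choice of `("loc", "e")` as the protected set are assumptions for illustration; the paper does not specify them.

```python
import math


def pearson(xs, ys):
    """Pearson correlation; returns 0.0 if either column is constant."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy / math.sqrt(sxx * syy)


def drop_correlated(columns, threshold=0.70, protected=("loc", "e")):
    """Greedy filter over a {feature: values} mapping. Protected features are
    kept unconditionally; any other feature is kept only if its |r| with every
    already-kept feature is at most `threshold`."""
    order = [f for f in protected if f in columns] + [f for f in columns if f not in protected]
    kept = []
    for f in order:
        if f in protected or all(abs(pearson(columns[f], columns[g])) <= threshold for g in kept):
            kept.append(f)
    return kept
```

Visiting the protected features first means any feature strongly correlated with `loc` or `e` is the one removed, which matches the table's exception for module size and effort metrics.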

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).