Submitted: 25 December 2023

Posted: 26 December 2023

Abstract

Keywords:

1. Introduction

- An automated framework to identify salient entities in prompts and collect corresponding factual data from validated online sources in real-time

- Optimized prompt annotation techniques that seamlessly integrate such data to provide relevant context to models

- A first demonstration that factual grounding through dynamic, entity-focused data injection significantly improves LLM accuracy while reducing content hallucination
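
The first two contributions can be illustrated with a minimal sketch. It is not the authors' implementation: the in-memory `FACT_STORE` stands in for validated online sources, and the names `find_entities` and `annotate_prompt`, as well as the "Context / Question" prompt layout, are assumptions made for illustration only.

```python
import re
from typing import Callable, Dict, List, Optional

# Hypothetical stand-in for validated online sources; a real system would
# query live services instead of a fixed dictionary.
FACT_STORE: Dict[str, str] = {
    "BIG-bench": "BIG-bench is a collaborative benchmark of over 200 tasks.",
    "Alpaca": "Alpaca is an instruction-following language model.",
}


def find_entities(prompt: str, known: Dict[str, str]) -> List[str]:
    """Return the known entity names found in the prompt, in order of appearance."""
    hits = []
    for name in known:
        # Match the name only when it is not embedded in a longer word.
        match = re.search(r"(?<!\w)" + re.escape(name) + r"(?!\w)", prompt)
        if match:
            hits.append((match.start(), name))
    return [name for _, name in sorted(hits)]


def annotate_prompt(
    prompt: str,
    lookup: Callable[[str], Optional[str]] = FACT_STORE.get,
) -> str:
    """Prepend facts about detected entities; return the prompt unchanged if none apply."""
    entities = find_entities(prompt, FACT_STORE)
    facts = [fact for fact in (lookup(e) for e in entities) if fact]
    if not facts:
        return prompt
    context = "\n".join(f"- {fact}" for fact in facts)
    return f"Context:\n{context}\n\nQuestion: {prompt}"


if __name__ == "__main__":
    print(annotate_prompt("How does Alpaca perform on BIG-bench?"))
```

Passing `lookup` as a parameter keeps the retrieval step swappable, so the dictionary could be replaced by a call to a live source without touching the annotation logic.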

2. Related Work

2.1. Knowledge Injection in LLMs

2.2. Mitigating Content Hallucination in LLMs

3. Methodology

3.1. Architectural Modifications to Alpaca

3.2. Data Collection

- Comprehensiveness: BIG-bench encompasses over 200 diverse tasks, ensuring wide-ranging linguistic and cognitive evaluation.

- Innovative Challenges: It is designed to test the frontiers of language models, venturing beyond traditional benchmarks.

- Continuous Evolution: New tasks are added on a rolling basis, contributing to an ever-expanding suite of assessments.

- Collaborative Platform: BIG-bench encourages contributions from researchers, reflecting a collective effort to improve language models.

- Accessibility: With public repositories and leaderboards, it provides an open and transparent platform for model comparison.

3.3. Annotation Process

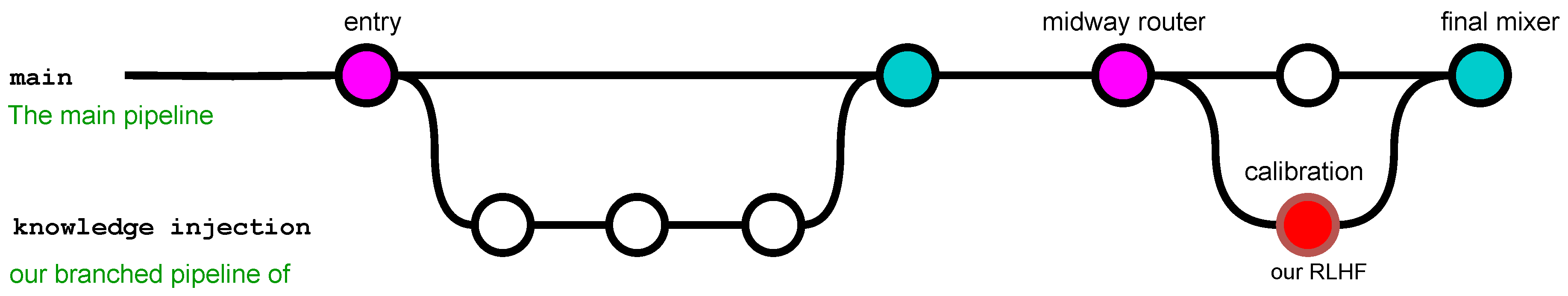

- Real-time data identified for annotation is intercepted and rerouted to a specialized enhancement module within the RLHF stage.

- This module appends metadata that adds contextual relevance to the data and keeps it compatible with Alpaca’s existing knowledge base.

- Enhanced data is then merged back into the main information flow, ready for prompt integration.

- After Alpaca generates its initial output, a post-generation calibration step within the RLHF stage refines the content, applying reinforcement learning from human feedback to improve its accuracy and relevance.

- The calibrated content is finally integrated into the output, combining pre-trained knowledge with the enhanced real-time data.
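
The flow described in the steps above can be sketched as a small pipeline. This is a hedged illustration, not the paper's code: `Record`, `enhance`, `merge_into_prompt`, and `run_pipeline` are hypothetical names, the metadata fields (`source`, `retrieved_at`) are assumed, and the `generate` and `calibrate` callables are placeholders for the model call and the RLHF-based calibration, neither of which is implemented here.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, Dict, List


@dataclass
class Record:
    """One piece of real-time data plus the metadata attached to it."""
    text: str
    metadata: Dict[str, str] = field(default_factory=dict)


def enhance(record: Record, source: str) -> Record:
    """Append metadata (hypothetical fields) to an intercepted record."""
    meta = dict(
        record.metadata,
        source=source,
        retrieved_at=datetime.now(timezone.utc).isoformat(),
    )
    return Record(record.text, meta)


def merge_into_prompt(prompt: str, records: List[Record]) -> str:
    """Merge enhanced records back into the flow, ahead of the user prompt."""
    lines = [f"[{r.metadata.get('source', 'unknown')}] {r.text}" for r in records]
    return "\n".join(lines + [prompt])


def run_pipeline(
    prompt: str,
    raw_data: List[str],
    generate: Callable[[str], str],
    calibrate: Callable[[str], str],
    source: str = "demo-feed",
) -> str:
    """Intercept, enhance, merge, generate, then calibrate the draft output."""
    enhanced = [enhance(Record(text), source) for text in raw_data]
    draft = generate(merge_into_prompt(prompt, enhanced))
    return calibrate(draft)


if __name__ == "__main__":
    # Stub callables: echo the prompt as the "model" and trim whitespace
    # as the "calibration" step.
    print(run_pipeline("What changed today?", ["Example fact."], lambda p: p, str.strip))
```

Keeping generation and calibration as injected callables mirrors the separation between the initial output and the post-generation refinement described above.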

3.4. Model Integration

4. Experiment and Results

4.1. Experimental Setup

4.2. Results

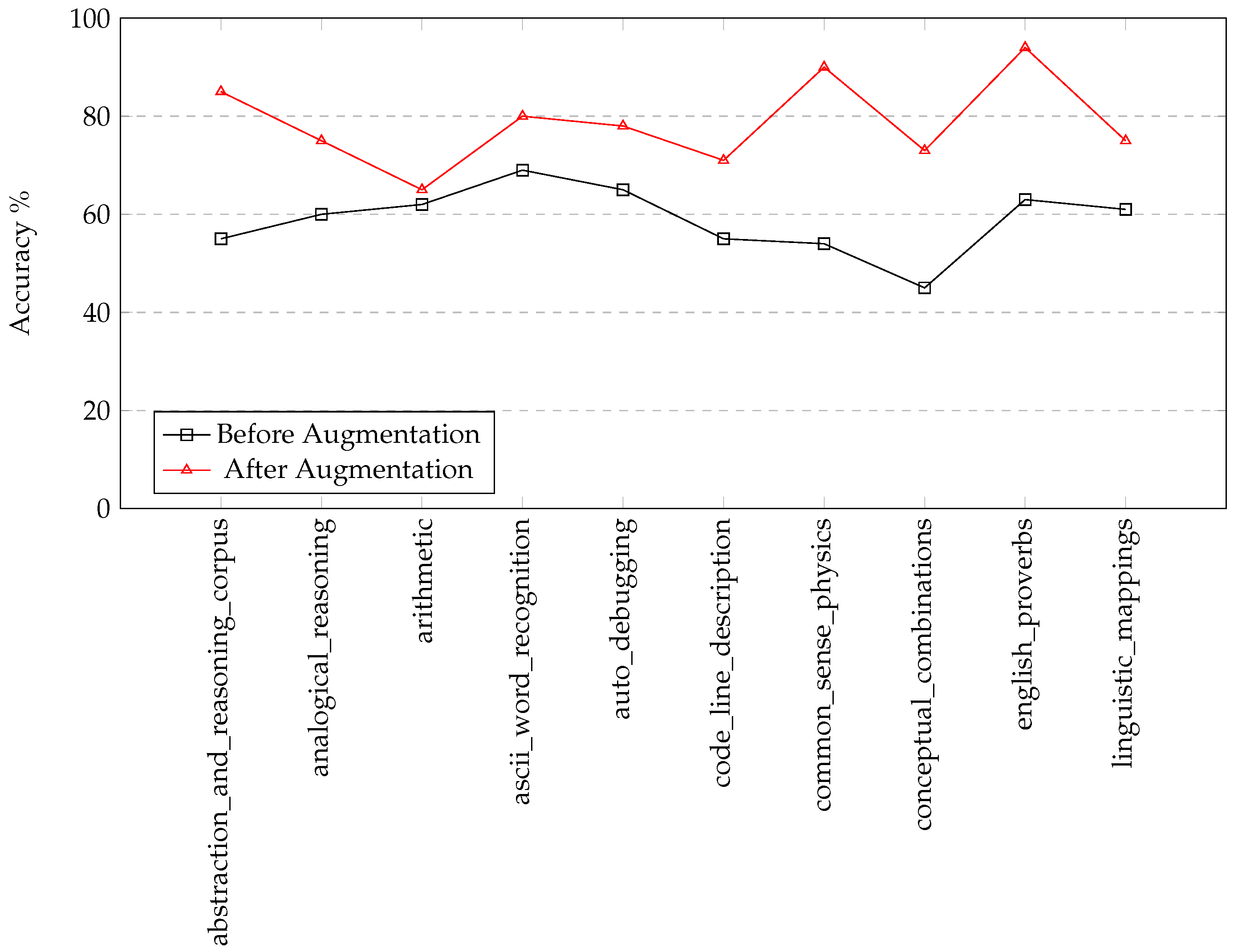

4.2.1. Accuracy Improvement

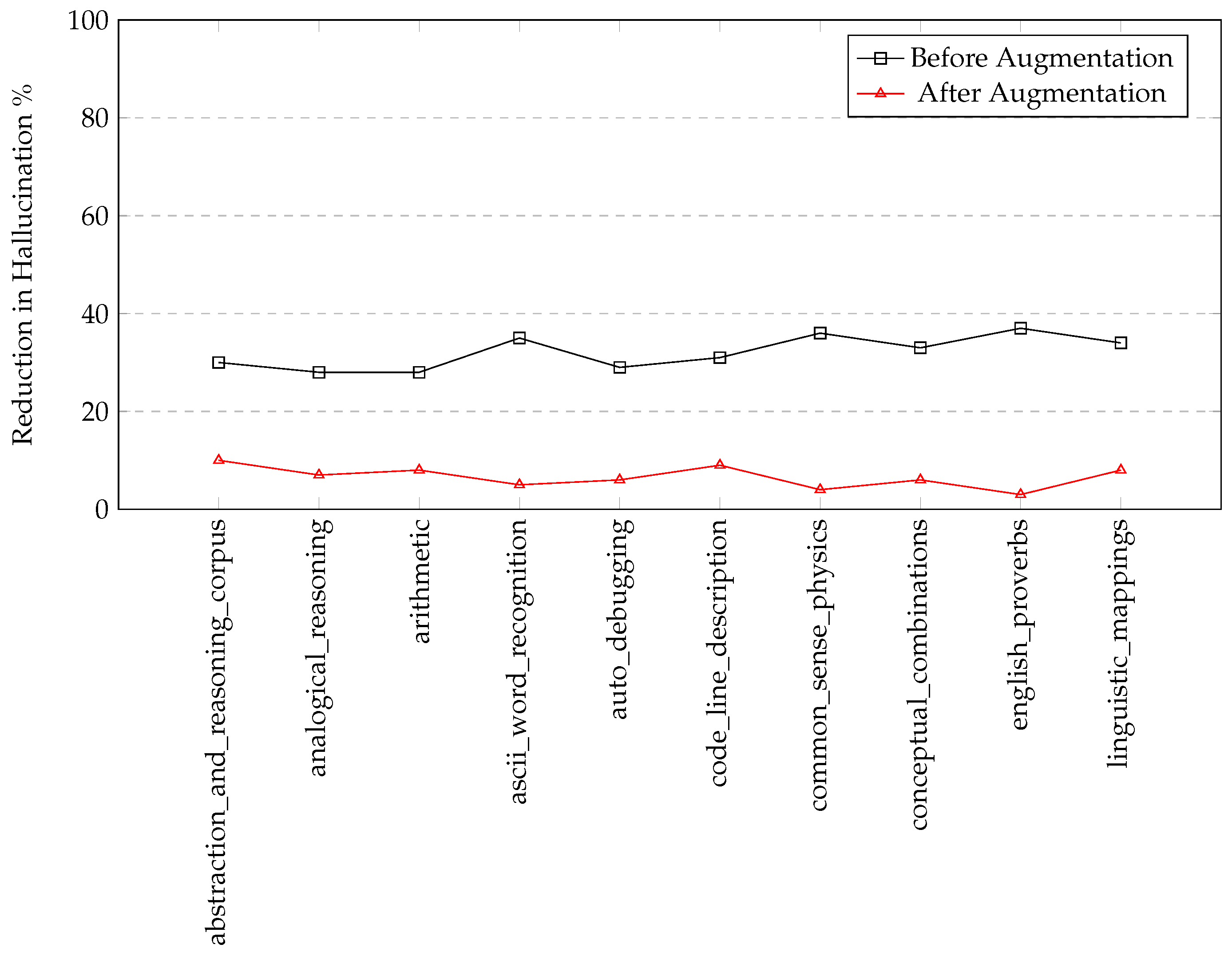

4.2.2. Reduction in Content Hallucination

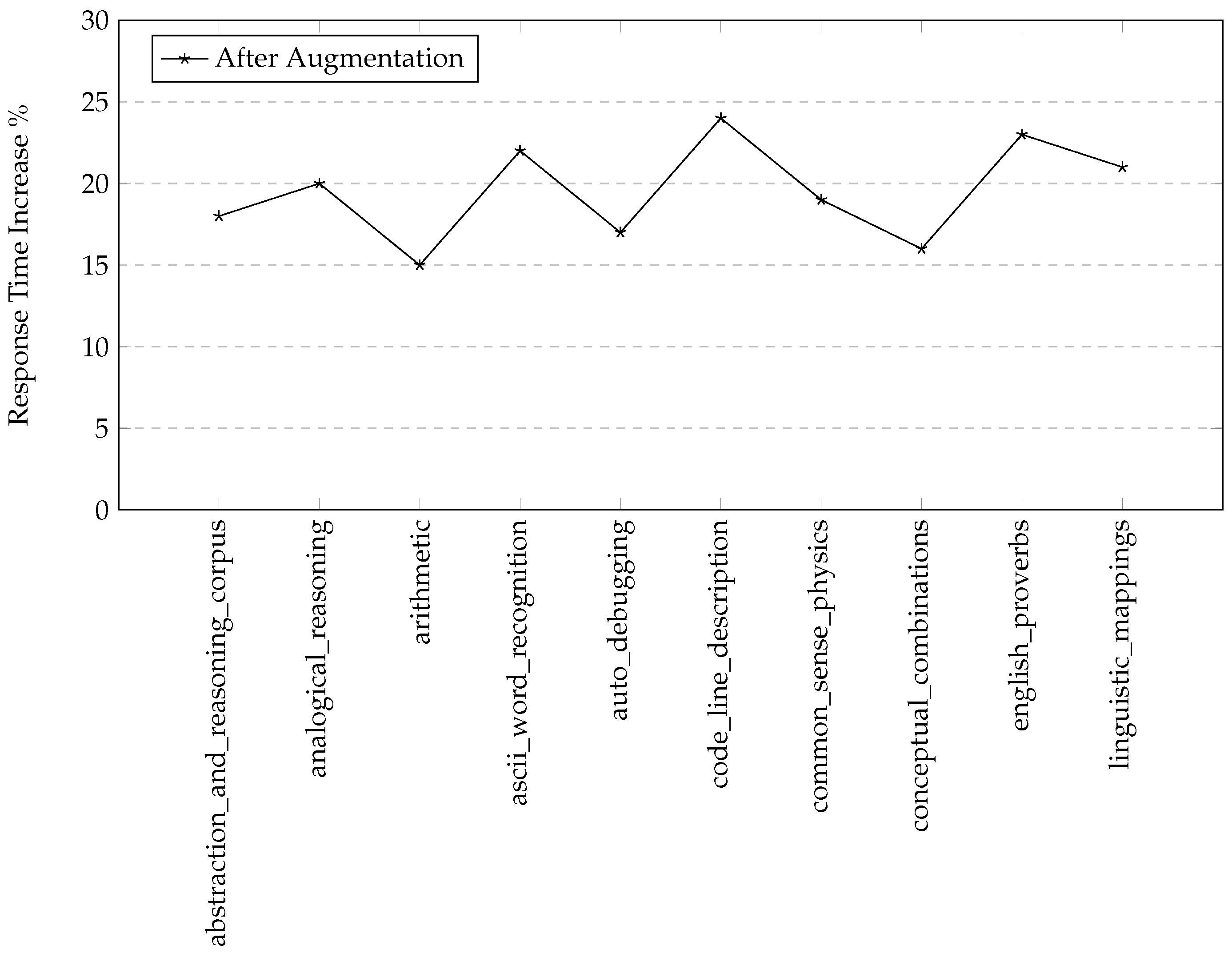

4.2.3. Response Time Efficiency

5. Discussion

5.1. Broader Implications of Dynamic Data Injection

5.2. Evaluating Trade-offs in Enhanced LLMs

5.3. Ethical Dimensions and Model Responsibility

5.4. Prospective Avenues for Dynamic Data Integration

6. Conclusion and Future Work

Conflicts of Interest

| Challenge | Solution |
|---|---|
| Data Format Discrepancy | Developed converters for uniform data representation |
| Synchronization Issues | Implemented real-time data queue management systems |
| Latency Minimization | Optimized algorithms for rapid data processing and integration |
| Module Interfacing | Established protocols for module communication and data exchange |
| Performance Preservation | Continuous testing and tuning to align with model benchmarks |
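
Two of the solutions in the table above, format converters and real-time queue management, can be sketched as follows. This is an assumption-laden illustration rather than the authors' design: `to_uniform`, `FreshnessQueue`, the accepted input formats, and the drop-oldest policy are all hypothetical choices.

```python
import json
from collections import deque
from typing import Any, Deque, Dict, List


def to_uniform(payload: Any) -> Dict[str, str]:
    """Convert a dict, a JSON object string, or a 'k=v;k=v' string to a flat str -> str dict."""
    if isinstance(payload, dict):
        data = payload
    elif isinstance(payload, str):
        try:
            data = json.loads(payload)
            if not isinstance(data, dict):
                raise ValueError("JSON payload is not an object")
        except ValueError:
            # Fall back to a simple 'key=value;key=value' format.
            data = dict(
                part.split("=", 1) for part in payload.split(";") if "=" in part
            )
    else:
        raise TypeError(f"unsupported payload type: {type(payload).__name__}")
    return {str(k).strip().lower(): str(v).strip() for k, v in data.items()}


class FreshnessQueue:
    """Bounded queue that keeps only the most recent records (assumed policy)."""

    def __init__(self, maxlen: int) -> None:
        self._items: Deque[Dict[str, str]] = deque(maxlen=maxlen)

    def push(self, payload: Any) -> None:
        # Normalize on entry so consumers only ever see the uniform format.
        self._items.append(to_uniform(payload))

    def drain(self) -> List[Dict[str, str]]:
        """Return all queued records, oldest first, and empty the queue."""
        items = list(self._items)
        self._items.clear()
        return items


if __name__ == "__main__":
    queue = FreshnessQueue(maxlen=2)
    queue.push({"Entity": "Alpaca"})
    queue.push('{"entity": "BIG-bench"}')
    queue.push("entity=GPU; vram=24GB")
    print(queue.drain())
```

A bounded `deque` discards the oldest record once `maxlen` is reached, which is one simple way to keep stale data from accumulating when the producer outpaces the consumer.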

| Component | Specification |
|---|---|
| Operating System | Ubuntu 22.04.3 LTS |
| Graphics Card | NVIDIA GeForce RTX 4090 Gaming GPU with 24GB GDDR6X VRAM |
| Memory | 64GB DDR5 |
| CPU | AMD Ryzen 9 7900X |
| Storage | 2TB SSD |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).