5. Experimental Results

To validate that augmenting a training set with vectors distilled from it by the PCA method improves classification statistics, we conducted experiments applying NN, SVM and LR classifiers to four databases of feature vectors: skin lesion SL [25, 26]; diabetes D [27]; heart disease HD [28]; breast cancer BC [29]. For this purpose, we designed different setups for the training datasets, as described in Section 4.2. The classification results are presented in tables throughout this section. In the reporting tables, we show two types of accuracy for each experiment. The first percentage is the model’s accuracy for a single iteration, while the second is the mean accuracy over 10 experiments of the Monte Carlo cross-validation approach. Bold percentages in all the tables indicate the best results. We present the results for each dataset in three separate tables, according to the classification metrics mentioned in Section 4.5.
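The two reported accuracies (single iteration and mean over 10 Monte Carlo runs) can be illustrated with a minimal sketch. It is not the implementation used in the experiments: the function names, the nearest-centroid stand-in classifier and the synthetic data are assumptions made only for illustration, and the split draws 90% of the P and 90% of the N samples at random, as in the setups described in this section.

```python
import random
from statistics import mean


def stratified_split(labels, train_fraction, rng):
    """Randomly assign train_fraction of each class to training, the rest to testing."""
    train, test = [], []
    for cls in sorted(set(labels)):
        idx = [i for i, y in enumerate(labels) if y == cls]
        rng.shuffle(idx)
        cut = round(train_fraction * len(idx))
        train += idx[:cut]
        test += idx[cut:]
    return train, test


def monte_carlo_cv(features, labels, fit, predict, runs=10, train_fraction=0.9, seed=0):
    """Return (accuracy of the first run, mean accuracy over all runs)."""
    rng = random.Random(seed)
    accuracies = []
    for _ in range(runs):
        train, test = stratified_split(labels, train_fraction, rng)
        model = fit([features[i] for i in train], [labels[i] for i in train])
        hits = sum(predict(model, features[i]) == labels[i] for i in test)
        accuracies.append(hits / len(test))
    return accuracies[0], mean(accuracies)


# Hypothetical stand-in classifier (nearest class centroid); the paper uses NN, SVM and LR.
def fit_centroids(X, y):
    centroids = {}
    for cls in set(y):
        rows = [x for x, label in zip(X, y) if label == cls]
        centroids[cls] = [mean(col) for col in zip(*rows)]
    return centroids


def predict_centroid(model, x):
    return min(model, key=lambda cls: sum((a - b) ** 2 for a, b in zip(x, model[cls])))


# Synthetic two-class data, for illustration only (not one of the four databases).
data_rng = random.Random(1)
X = [[data_rng.gauss(0, 1), data_rng.gauss(0, 1)] for _ in range(50)]
X += [[data_rng.gauss(3, 1), data_rng.gauss(3, 1)] for _ in range(30)]
y = [0] * 50 + [1] * 30

single, average = monte_carlo_cv(X, y, fit_centroids, predict_centroid)
print(f"single-run accuracy: {single:.2%}, mean over 10 runs: {average:.2%}")
```

Fixing the seed makes the ten random splits reproducible, so that the single-run figure and the ten-run mean can be regenerated together.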

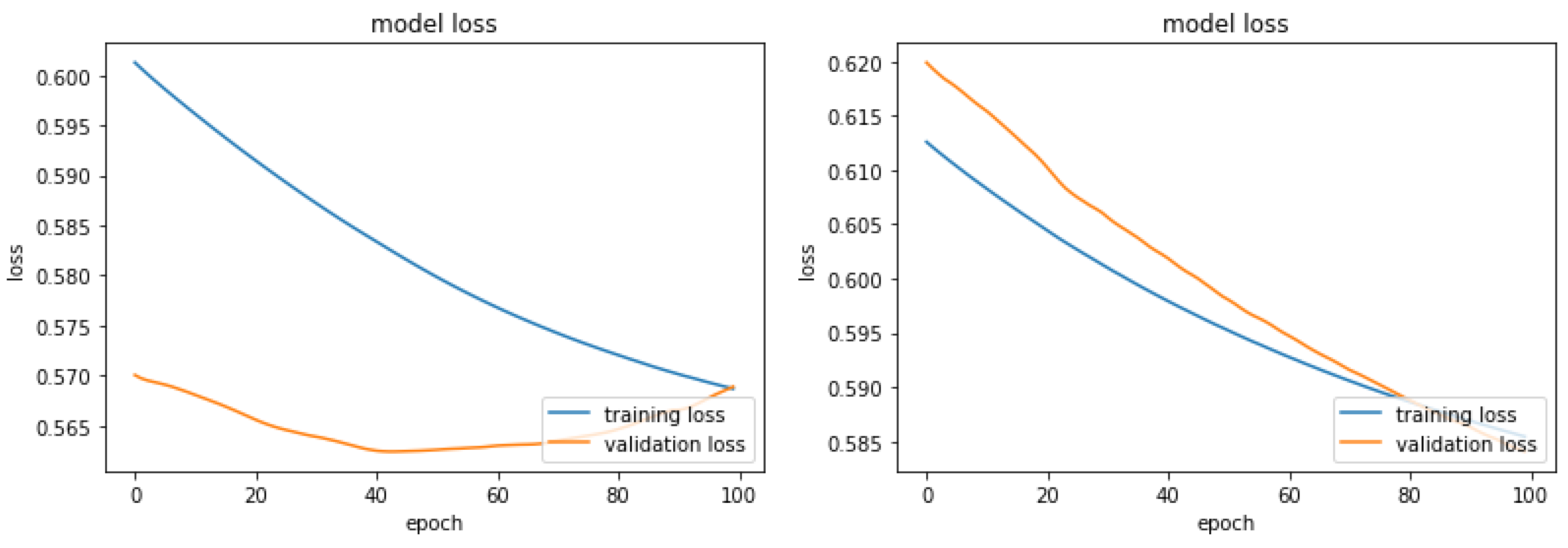

Figure 1 shows the curves of the loss function for the NN model on the SL data. One may observe that the loss curves for the training processes with the original dataset and with Augmented Dataset 1 resemble each other. On the other hand, the loss curves for the two validation processes are very different. The validation curve for the NN trained with the original vectors is convex from below, which suggests possible divergence after 100 epochs. In contrast, the validation curve for the NN trained with Augmented Dataset 1 decreases steadily, which suggests that classification would improve if more than 100 epochs were conducted.
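The qualitative reading of the two validation curves can be made explicit with a small check: locate the epoch of minimum validation loss and ask whether it falls within the last few epochs. The helper below is a hypothetical sketch, and the two curves are synthetic shapes chosen to mimic a convex curve and a steadily decreasing one; they are not the measured losses from Figure 1.

```python
def validation_trend(val_losses, tail=10):
    """Return (epoch of minimum loss, True if the minimum lies in the last `tail` epochs)."""
    best_epoch = min(range(len(val_losses)), key=val_losses.__getitem__)
    still_improving = best_epoch >= len(val_losses) - tail
    return best_epoch, still_improving


# Synthetic curves over 100 epochs (assumed shapes, for illustration only).
u_shaped = [(epoch - 60) ** 2 / 3600 + 0.4 for epoch in range(100)]
decreasing = [1.0 / (1 + 0.05 * epoch) for epoch in range(100)]

print(validation_trend(u_shaped))    # minimum well before the end: loss is rising again
print(validation_trend(decreasing))  # minimum at the last epoch: more epochs may help
```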

In Table 1, we present the comparison of the classification statistics of the different classifiers for the SL dataset and its augmentations mentioned in Section 4.2. The total number of samples in the original dataset is 162, and we split it 90% vs 10% for training and testing. Table 1 shows that the mean accuracy of the LR increased significantly, from 71% to 82%, for Augmented Dataset 2. The failure of the remaining classifiers to improve may be due to the fact that the total sample size is too small. In some instances the Sensitivity has increased, which indicates that the augmentation improved the model’s ability to detect malignant samples. The Specificity of the LR model increased by 5%, which indicates a better ability of the model to detect benign lesions when Augmented Dataset 1 is used. Also, the Sensitivity of the NN classifier doubled for Augmented Dataset 1, while the SVML increased the same statistic by 5% when trained with Augmented Dataset 2. Furthermore, the results show that the augmented training data balances the Sensitivity/Specificity ratio, which indicates that the classifiers are balanced in their recognition of TP and TN samples. With the original data, in contrast, the ability of the NN and the LR to recognize benign samples is twice as high as their ability to recognize malignant samples.

Note that the SL dataset is small, having only 168 samples. We continue the experimental validation with relatively larger databases of feature vectors: diabetes D, which contains 768 feature vectors of dimension 8, with P/N ratio 268/500 [27]; heart disease HD, which contains 1025 feature vectors of dimension 13, with P/N ratio 499/526 [28]; breast cancer BC, which contains 569 feature vectors of dimension 30, with P/N ratio 212/347 [29].

The results of classifying the D data with the four classifiers are presented in Table 2, Table 3 and Table 4. We apply the NN, the LR and the SVM with linear and degree-2 polynomial kernels, and denote the latter two classifiers by SVML and SVMP, respectively. The classifiers are trained with Original, Original +

-PCA-d and Original +

-PCA-d datasets, which are described in Section 4.2. One may notice that training with the augmented data improved the classification accuracy compared with training with the original data. For the NN and SVMP, the highest increase came in the 10-fold results (the right column in Table 2) with the single augmentation by 101 distilled vectors. For the LR, the highest results come with the double augmentation of

and

distilled vectors, while for the SVML the highest outcome is obtained with

.

On the other hand, the Sensitivity and Specificity outcomes show high values for the former statistic and values half as large for the latter. This indicates that the augmentations did not balance the classification models as they did in the case of the SL dataset in Table 1.

As mentioned above, we also conducted distillation-augmentation experiments using the heart disease HD [28] dataset. Recall that it contains 1025 feature vectors of dimension 13. Of these, 499 are positive (P) vectors and 526 are negative (N). The former indicate heart disease, while the latter indicate healthy samples. The four classifiers are again trained with the following types of sets: Original, Original +

-PCA-d and Original +

-PCA-d. The number

denotes the number of vectors distilled from the Original training vectors, while

denotes the number of vectors distilled from the already distilled

vectors. The experimental results, with 90% of the P and 90% of the N samples randomly selected for training, are shown in Table 5, Table 6 and Table 7. One can see there that the average accuracy increased for all classifiers when they were trained with the augmented set Original +

-PCA-d, which contains double distillation. The Sensitivity increased only for the SVMP, because for the other classifiers it is already at its maximum with the Original training data. The Specificity increased for all classifiers (significantly for LR, SVML and SVMP) when trained with augmented sets that contain single and double distilled data. Another important result obtained with the HD dataset and the distillation-augmentations used is that all classifiers are balanced with respect to the Sensitivity/Specificity ratio.

Using the heart disease HD [28] dataset, we conducted a second set of experiments in which the share of randomly selected original vectors used for training was decreased to 70%. The testing vectors are now the remaining 30% of the feature vectors. All other steps, such as distillations and augmentations, are the same as in the case of 90% of the original feature vectors selected for training. The classification results obtained with the four chosen classifiers are shown in Table 8, Table 9 and Table 10.

A study of the results in the above tables shows that selecting 70% of the Original HD feature vectors for training, distillation and augmentation preserves the same trends of increasing classification statistics as in the case of the 90% selection. Moreover, one may observe that with the 70:30 split the Specificity for all classifiers increased when trained with the Original+151-PCA-d dataset. In summary, augmenting the original training set with vectors distilled from it increases the classification statistics and makes the classifiers balanced. The only difference is that the classification statistics for the 70% split are lower than those for the 90% split.

We conducted the final set of experiments with the BC dataset, which contains 569 feature vectors of dimension 30, with P/N ratio 212/347 [29]. For training, we randomly selected, ten times, 90% of the P and 90% of the N samples. Each time, from every selection of training samples, we distilled 101, 151 and 201 vectors with the same dimension as the original training vectors. From these distilled sets we then distilled 51, 77 and 101 vectors, respectively, as a second distillation. The experimental results with the Original and the augmented training sets Original +

-PCA-d and Original +

-PCA-d are reported in Table 11, Table 12 and Table 13. Recall that with

we denote a set of vectors distilled during the first distillation, while

denotes the set of vectors from the second distillation.

A study of the results shows a significant increase in the classification accuracy of the NN for the augmented set Original+101-PCA-d and of the SVML classifier for the augmented set Original+151-PCA-d. As for the LR and SVMP, their highest results with the latter augmented set are the same as the classification accuracy with the Original training dataset. The reason is that the accuracies with the latter dataset are already high, leaving no room for further increase. The same observation and conclusion hold for the Specificity of the NN and the Sensitivities of the LR and SVMP classifiers. The last two statistics exhibit a significant increase for the remaining classifiers when trained with augmented datasets. Moreover, the Sensitivity/Specificity ratio is well balanced, leading to the highest Balanced Accuracy = (Sensitivity + Specificity)/2.
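For concreteness, the statistics discussed in this section can be computed from true and predicted labels as sketched below. The sketch assumes the standard definitions Sensitivity = TP/(TP + FN) and Specificity = TN/(TN + FP), which we take to match those of Section 4.5; the function name and the example labels are hypothetical and are not taken from any of the reported tables.

```python
def classification_statistics(y_true, y_pred, positive=1):
    """Accuracy, Sensitivity, Specificity and Balanced Accuracy for binary labels.

    Assumes both classes occur in y_true; otherwise a division by zero is raised.
    """
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    tn = sum(t != positive and p != positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    sensitivity = tp / (tp + fn)  # share of positive (P) samples recognized
    specificity = tn / (tn + fp)  # share of negative (N) samples recognized
    return {
        "accuracy": (tp + tn) / len(pairs),
        "sensitivity": sensitivity,
        "specificity": specificity,
        "balanced_accuracy": (sensitivity + specificity) / 2,
    }


# Hypothetical labels: 4 positive and 6 negative test samples.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 0, 0, 0, 0, 0, 1]
stats = classification_statistics(y_true, y_pred)
print(stats)
```

A classifier is balanced in the sense used above when its Sensitivity and Specificity are close to each other, in which case the Balanced Accuracy stays close to both.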