III. Methodology

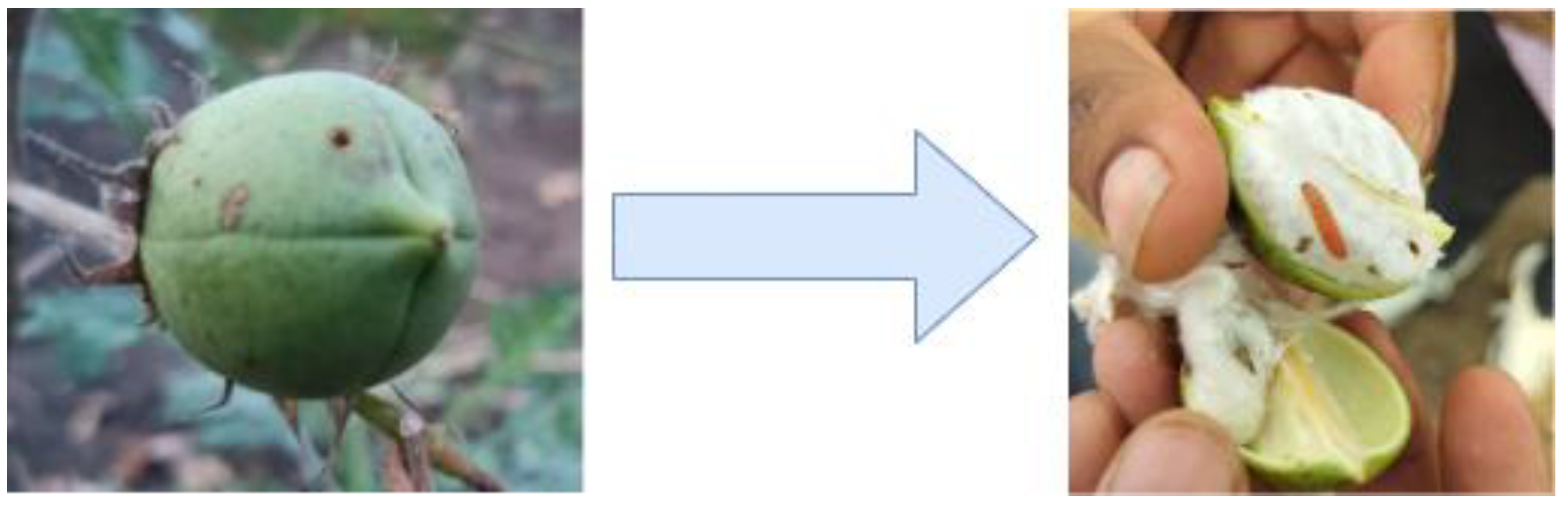

We collected our own dataset from two fields: an organic field and a BT cotton field. The organic field contained many cotton bolls infected with pink bollworm, because it was an experimental field in which cotton was grown naturally, with irrigation water only and no pesticides.

Figure 2.

The Overview of Proposed Methodology.


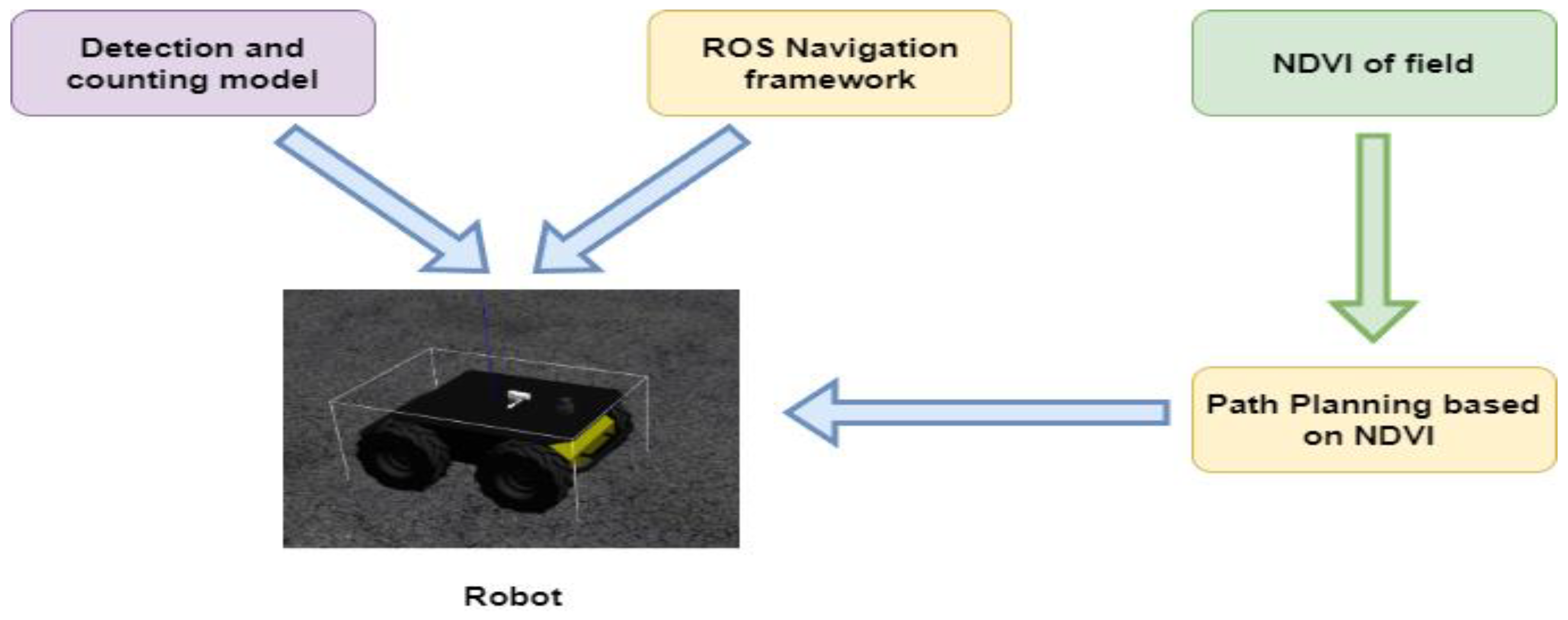

The proposed methodology begins with the development of a deep learning model for detection and counting. This requires a carefully labeled dataset, which we prepared using Roboflow. The labeled dataset is then used to train YOLOv8, a state-of-the-art object detection model. The trained model is integrated into an autonomous Clearpath Husky robot, which can identify and count instances of red disease, pink bollworm, and the other annotated classes in cotton fields. Real-time detection is integrated with the ROS Navigation framework to improve the robot's overall functioning. The result is a customized pipeline that improves both the robot's perception and its navigation, supporting a comprehensive and effective approach to agricultural monitoring and management.

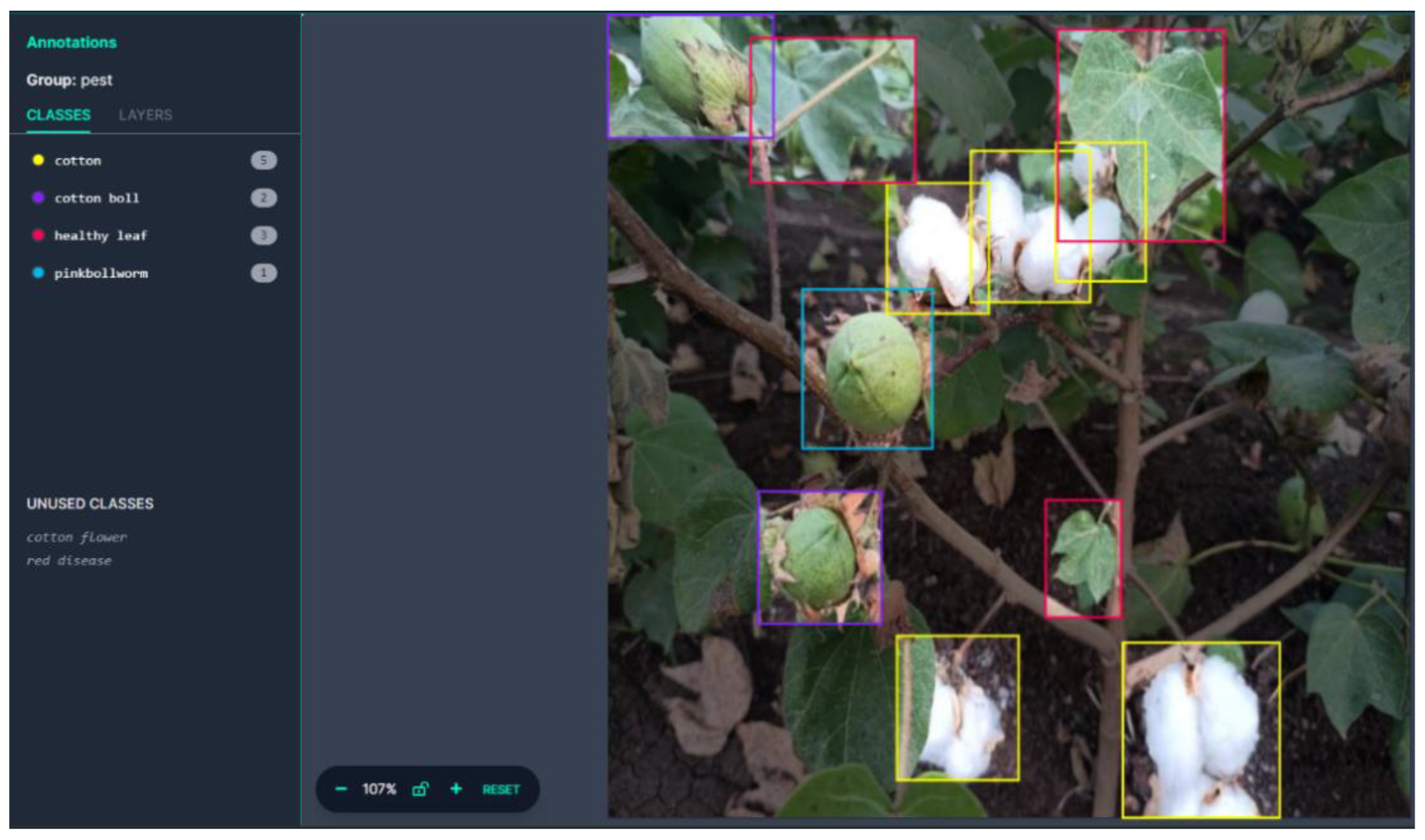

The annotations use six classes:

["cotton flower", "cotton boll", "cotton", "pinkbollworm", "healthy leaf", "red disease"]

Figure 3.

Labelling of dataset using Roboflow.


We labelled cotton bolls with holes in them as pink bollworm because the larvae live and grow inside the boll and emerge from it only in cool conditions. The tiny hole left on the boll during the first phase grows larger as the pest matures. The remaining classes are labelled in the standard way.
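As an illustration of what a single annotation might look like once exported, the sketch below converts a pixel-space bounding box into a normalized YOLO-style label line. The export format, the helper name `to_yolo_label`, and the class ordering (copied from the class list in this section) are assumptions for illustration, not details confirmed by the text.

```python
# Class order is assumed to follow the annotation list given in this section.
CLASSES = ["cotton flower", "cotton boll", "cotton",
           "pinkbollworm", "healthy leaf", "red disease"]


def to_yolo_label(class_name, x_min, y_min, x_max, y_max, img_w=640, img_h=640):
    """Return 'class_id x_center y_center width height', normalized to [0, 1]."""
    class_id = CLASSES.index(class_name)
    x_c = (x_min + x_max) / 2 / img_w
    y_c = (y_min + y_max) / 2 / img_h
    w = (x_max - x_min) / img_w
    h = (y_max - y_min) / img_h
    return f"{class_id} {x_c:.6f} {y_c:.6f} {w:.6f} {h:.6f}"


# A boll with a visible hole, boxed in the lower half of a 640 x 640 image.
print(to_yolo_label("pinkbollworm", 160, 320, 480, 640))
# → 3 0.500000 0.750000 0.500000 0.500000
```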

A. Datasets

We gathered our data with RGB cameras in two types of fields: BT cotton fields, which were sprayed with pesticides, and organic cotton fields, which were not. The dataset consists of over a thousand RGB images showing three phases of cotton (flower, cotton boll, and matured cotton), healthy and red-disease-infested leaves, and cotton bolls infected with pink bollworm.

Table 1.

Dataset Details.


| Property | Value |
| --- | --- |
| Train Images | 1006 |
| Valid Images | 34 |
| Test Images | 15 |
| Dimensions | 640 x 640 |
| Augmentation | Horizontal Flip |
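The horizontal-flip augmentation listed in Table 1 has to mirror the bounding boxes along with the pixels. The following is a minimal sketch of that idea, assuming normalized YOLO-style boxes of the form (class_id, x_center, y_center, width, height); the function names are hypothetical and the actual augmentation was presumably applied by the labeling platform.

```python
def hflip_image(image):
    """Mirror a row-major image (a list of pixel rows) left to right."""
    return [row[::-1] for row in image]


def hflip_boxes(boxes):
    """Mirror normalized boxes: only the x centre changes (x -> 1 - x)."""
    return [(c, 1.0 - x, y, w, h) for (c, x, y, w, h) in boxes]


image = [[1, 2, 3],
         [4, 5, 6]]
boxes = [(3, 0.25, 0.5, 0.1, 0.2)]  # one box left of centre

flipped_image = hflip_image(image)   # [[3, 2, 1], [6, 5, 4]]
flipped_boxes = hflip_boxes(boxes)   # [(3, 0.75, 0.5, 0.1, 0.2)]
```

Width, height, and the vertical centre are unchanged, which is why a horizontal flip is a cheap, label-preserving augmentation.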

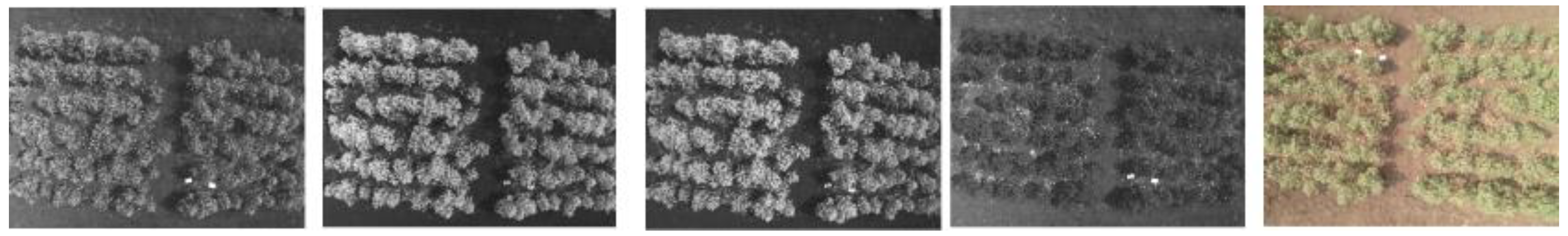

The Normalized Difference Vegetation Index (NDVI) is calculated from images of a target area captured at several wavelengths. We therefore collected data using a drone-mounted Parrot Sequoia multispectral camera, which records the target fields in the green, red, red edge, and near-infrared (NIR) bands.

Figure 4.

Images collected by drone mounted multispectral camera.


B. Deep Learning Model Architecture

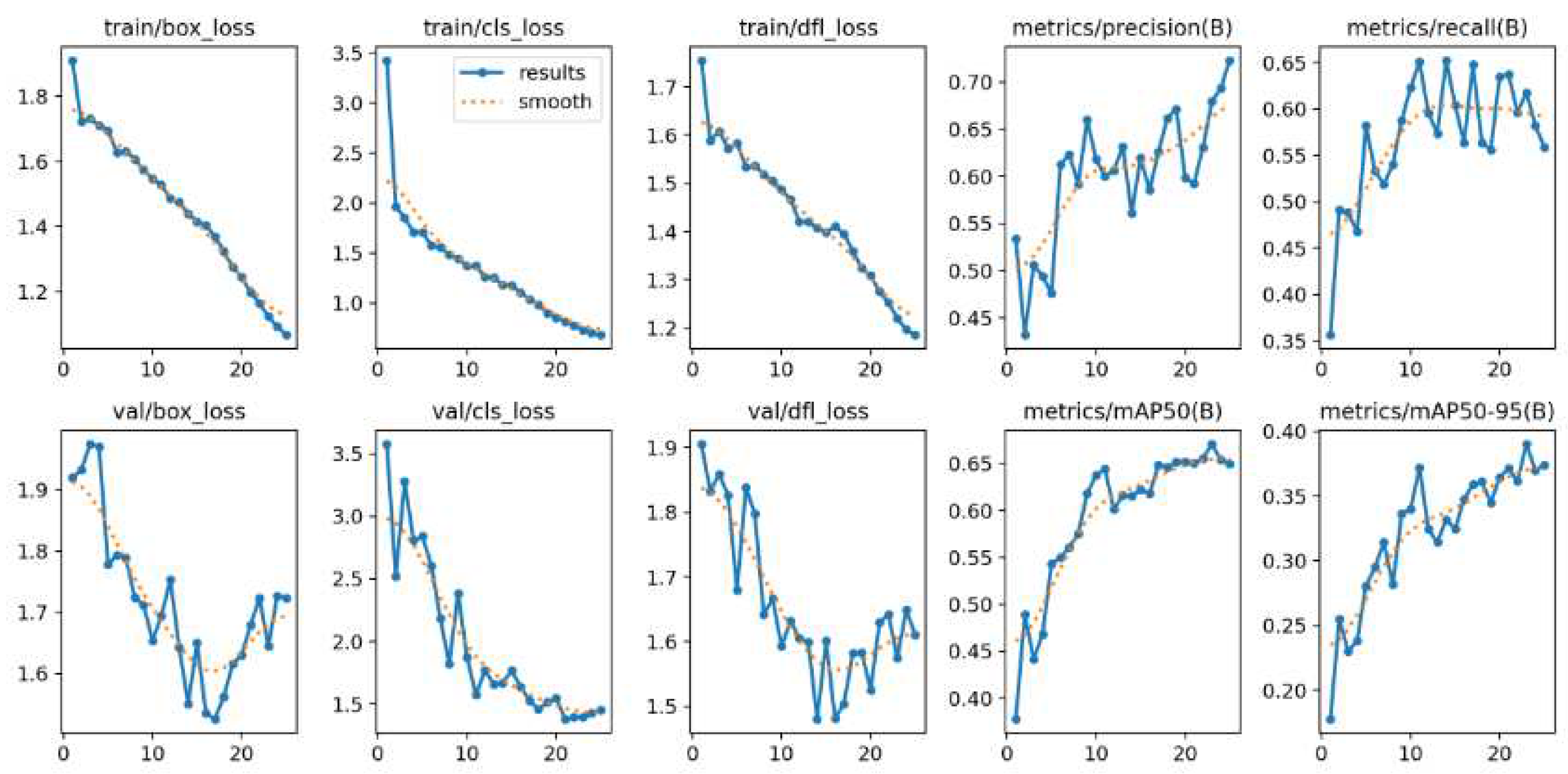

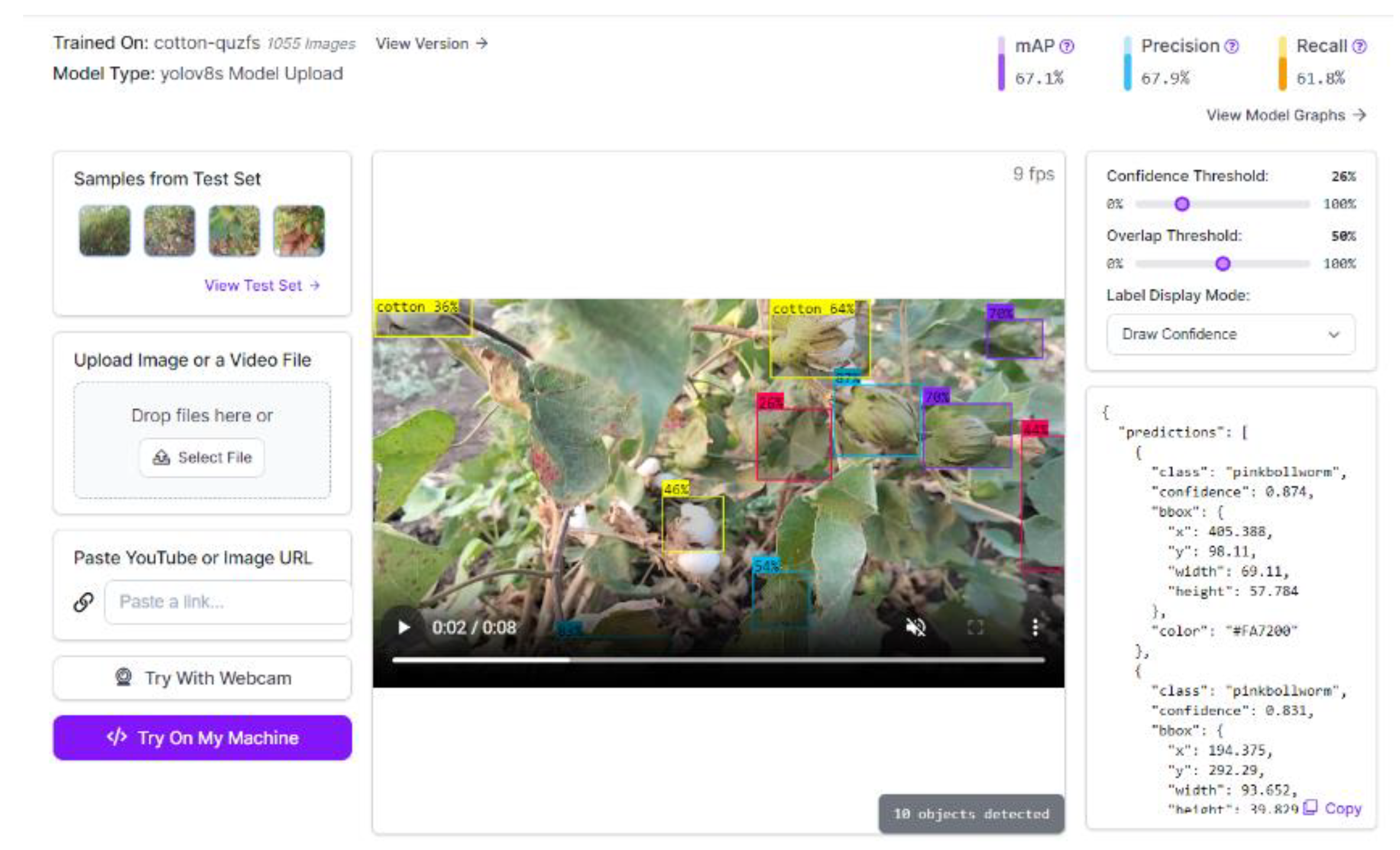

The dataset was labeled, and the model tested, on the Roboflow platform. The YOLOv8-S architecture was trained for 25 epochs. To improve the accuracy and dependability of the counts, supervision was also used to count and track the detected objects.
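A training run of this kind could be sketched with the `ultralytics` Python package as shown below. Only the model variant (YOLOv8-S), the 25 epochs, and the 640 x 640 image size come from the text; the dataset file `data.yaml`, the starting weights `yolov8s.pt`, and the function name are assumptions, and the authors' exact settings are not stated.

```python
# Settings taken from the text: 25 epochs, 640 x 640 images.
# The dataset description file name is an assumption.
TRAIN_CONFIG = {
    "data": "data.yaml",  # assumed path to the exported dataset description
    "epochs": 25,
    "imgsz": 640,
}


def train_yolov8s(weights="yolov8s.pt", config=TRAIN_CONFIG):
    """Fine-tune YOLOv8-S on the labeled dataset (needs the ultralytics package)."""
    # Imported here so the configuration can be inspected without the dependency.
    from ultralytics import YOLO

    model = YOLO(weights)          # load the small YOLOv8 variant
    return model.train(**config)   # run training with the settings above
```

Calling `train_yolov8s()` starts the run; other hyperparameters are left at the library defaults in this sketch.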

Figure 5.

Counting of the Object in the frame.
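Per-frame counting can be illustrated with a few lines of plain Python. This is a simplified sketch, not the authors' pipeline: it assumes detections arrive as (class name, confidence) pairs, uses an assumed confidence threshold of 0.5, and leaves out tracking across frames.

```python
from collections import Counter


def count_detections(detections, conf_threshold=0.5):
    """Count detections per class, skipping those below the confidence threshold.

    `detections` is a list of (class_name, confidence) pairs for one frame.
    """
    return Counter(name for name, conf in detections if conf >= conf_threshold)


# Hypothetical detections for a single frame.
frame = [("cotton boll", 0.91), ("cotton boll", 0.42),
         ("pinkbollworm", 0.77), ("red disease", 0.65)]
counts = count_detections(frame)
print(dict(counts))
# → {'cotton boll': 1, 'pinkbollworm': 1, 'red disease': 1}
```

Counting over a whole video additionally requires a tracker, so that an object seen in consecutive frames is counted once.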


We selected YOLOv8-S from the available YOLOv8 variants. YOLOv8-S uses a backbone network as its feature extractor; the backbone is responsible for extracting hierarchical features from the input image. YOLOv8-S often uses the CSPDarknet53 [9] backbone architecture, which includes CSP (Cross Stage Partial) connections to improve information flow. The model may also include a neck that combines features from several scales; PANet (Path Aggregation Network) [10] is frequently employed for this purpose in YOLOv8 variants. The detection head estimates bounding boxes, class probabilities, and confidence scores. YOLOv8-S uses a modified version of the YOLO head, which makes these predictions at multiple scales.

Training the model uses targets such as bounding box regression, class prediction, and objectness prediction. To introduce non-linearity, YOLOv8-S usually uses Leaky ReLU (Rectified Linear Unit) [11] activation functions. Given an input image, YOLOv8-S generates bounding boxes with corresponding class probabilities and confidence scores; non-maximum suppression (NMS) is then applied to refine and filter the final set of predictions. YOLOv8-S is trained on labeled datasets using methods such as backpropagation and stochastic gradient descent (SGD). Because of its high computational efficiency, this model can be deployed effectively on resource-constrained devices.
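The NMS step mentioned above can be sketched as a greedy procedure: keep the highest-scoring box, discard any remaining box that overlaps a kept box too strongly, and repeat. The version below is a class-agnostic illustration in plain Python with an assumed IoU threshold of 0.5; detection libraries ship their own optimized implementations, usually applied per class.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def nms(boxes, scores, iou_threshold=0.5):
    """Greedy NMS: return indices of the kept boxes, highest score first."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        # Keep a box only if it does not overlap any already-kept box too much.
        if all(iou(boxes[i], boxes[j]) <= iou_threshold for j in keep):
            keep.append(i)
    return keep


boxes = [(0, 0, 10, 10), (1, 1, 11, 11), (20, 20, 30, 30)]
scores = [0.9, 0.8, 0.7]
kept = nms(boxes, scores)  # the second box overlaps the first and is dropped
```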

C. Autonomous Robot

The autonomous Clearpath Husky is a robust and adaptable unmanned ground vehicle suited to a range of robotic applications, including data collection, mapping, and exploration in difficult terrain. It is equipped with several sensors, such as a RealSense camera and a LiDAR, which allow it to perceive and navigate its environment. It is built to interface with the Robot Operating System (ROS), so ROS packages and libraries can be used to develop and deploy sophisticated robotic applications. Its sturdy hardware, ROS interoperability, and autonomous capabilities make the Clearpath Husky a dependable and flexible autonomous platform.

To ensure compatibility with ROS Noetic, we assembled and configured the Clearpath Husky hardware and attached a LiDAR sensor for autonomous driving. We integrated the sensors into the ROS Noetic framework by configuring their drivers and verifying compatibility with ROS Noetic nodes. We created an environment map using the GMapping mapping solution, which is compatible with ROS Noetic, and then used the AMCL (Adaptive Monte Carlo Localization) module from ROS Noetic to implement localization [12]. A pretrained object-avoidance model and the ROS navigation stack for ROS Noetic were used for path planning. Before deploying the robot, we tested it in a controlled environment and verified and refined the algorithms using Gazebo simulation tools.
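With a map and localization in place, a navigation goal is commonly sent to the ROS navigation stack through the `move_base` action interface. The sketch below assumes that interface, a running ROS Noetic navigation stack, and a `map` frame; the function names are hypothetical and the text does not describe how the authors issued goals.

```python
import math


def yaw_to_quaternion(yaw):
    """Convert a planar heading in radians to an (x, y, z, w) quaternion."""
    return (0.0, 0.0, math.sin(yaw / 2.0), math.cos(yaw / 2.0))


def send_goal(x, y, yaw, frame_id="map"):
    """Send one goal to move_base and block until the robot finishes.

    Requires ROS Noetic; rospy.init_node() must have been called already.
    """
    # ROS imports are local so the helper above can be used without ROS.
    import actionlib
    import rospy
    from move_base_msgs.msg import MoveBaseAction, MoveBaseGoal

    client = actionlib.SimpleActionClient("move_base", MoveBaseAction)
    client.wait_for_server()

    goal = MoveBaseGoal()
    goal.target_pose.header.frame_id = frame_id
    goal.target_pose.header.stamp = rospy.Time.now()
    goal.target_pose.pose.position.x = x
    goal.target_pose.pose.position.y = y
    qx, qy, qz, qw = yaw_to_quaternion(yaw)
    goal.target_pose.pose.orientation.x = qx
    goal.target_pose.pose.orientation.y = qy
    goal.target_pose.pose.orientation.z = qz
    goal.target_pose.pose.orientation.w = qw

    client.send_goal(goal)
    client.wait_for_result()
    return client.get_state()
```

In this arrangement AMCL supplies the pose estimate in the map frame, while the planners inside the navigation stack handle the actual path and velocity commands.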

Figure 6.

Setup for detection and counting with depth camera.


To calculate the NDVI, drones fitted with multispectral cameras photograph the cotton fields. This data is incorporated into the robot's navigation system so that it can make well-informed decisions. Our object detection model is used to detect and count cases of disease and pink bollworm infestation affecting the cotton crop, and it is interfaced with the ROS architecture. After testing in simulation, the self-navigating Husky robot is deployed in actual cotton fields, where it independently traverses the field and gathers information while monitoring crop conditions. The data collected during deployment includes environmental information (obtained by mapping), detection outcomes, and NDVI maps. This information is then analyzed to help assess crop health and other issues.

D. NDVI and Path Planning

The Normalized Difference Vegetation Index (NDVI) is a remote sensing index that indicates the density and overall health of vegetation. It is computed as the difference between the near-infrared (NIR) and visible red reflectances of the vegetation, normalized by dividing by their sum. To calculate the NDVI, drones captured multispectral images of the whole field from a height of around 25 meters.
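The index follows the standard definition NDVI = (NIR - Red) / (NIR + Red), which yields values between -1 and 1. The sketch below applies it pixel by pixel to two small reflectance grids; the function names and the choice to return 0 where both bands are zero are assumptions made for this illustration.

```python
def ndvi(nir, red, eps=1e-9):
    """NDVI for one pixel: (NIR - Red) / (NIR + Red)."""
    denom = nir + red
    if abs(denom) < eps:
        return 0.0  # assumed convention for pixels with no signal in either band
    return (nir - red) / denom


def ndvi_map(nir_band, red_band):
    """Apply NDVI element-wise to two equally sized row-major grids."""
    return [[ndvi(n, r) for n, r in zip(nir_row, red_row)]
            for nir_row, red_row in zip(nir_band, red_band)]


# Hypothetical reflectances: dense vegetation reflects strongly in NIR.
nir_band = [[0.6, 0.5], [0.3, 0.0]]
red_band = [[0.2, 0.5], [0.3, 0.0]]
result = ndvi_map(nir_band, red_band)  # first pixel is about 0.5, the rest are 0.0
```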

Figure 7.

NDVI of the Cotton Field we used for testing.


Path planning for the Husky robot depends on the NDVI, which is computed using the Raster Calculator in QGIS. To ensure accurate detection and counting, the robot's speed is limited to less than 0.5 m/s. For large fields, an effective route can be designed that gives priority to areas with healthy crops, followed by areas with moderately healthy crops. This allows the robot to detect and count in healthy crop regions first, initially bypassing unhealthy areas. The robot then goes back and carefully checks any areas it skipped, so that the whole field is evaluated systematically and thoroughly. This path planning approach makes efficient use of the robot's operating time while still covering locations that may have problems, improving the effectiveness of agricultural monitoring.
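The ordering described above can be sketched as a sort over NDVI-tagged waypoints combined with a speed limit. The NDVI thresholds (0.6 and 0.3), the waypoint format, and the function names are assumptions for illustration; the text gives only the 0.5 m/s limit and the healthy-first priority.

```python
MAX_SPEED = 0.5  # m/s, upper bound taken from the text


def clamp_speed(v, limit=MAX_SPEED):
    """Limit a commanded linear speed to the range [-limit, limit]."""
    return max(-limit, min(limit, v))


def health_rank(ndvi, healthy=0.6, moderate=0.3):
    """0 = healthy, 1 = moderately healthy, 2 = unhealthy (assumed thresholds)."""
    if ndvi >= healthy:
        return 0
    if ndvi >= moderate:
        return 1
    return 2


def order_waypoints(waypoints):
    """Sort (x, y, ndvi) waypoints: healthy first, unhealthy last.

    The sort is stable, so waypoints in the same tier keep their original order.
    """
    return sorted(waypoints, key=lambda wp: health_rank(wp[2]))


waypoints = [(0, 0, 0.2), (1, 0, 0.7), (2, 0, 0.4), (3, 0, 0.8)]
route = order_waypoints(waypoints)
# route == [(1, 0, 0.7), (3, 0, 0.8), (2, 0, 0.4), (0, 0, 0.2)]
```

A real planner would also weigh travel distance between tiers; this sketch shows only the priority ordering.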