Submitted:

22 November 2023

Posted:

24 November 2023

Abstract

Keywords:

1. Introduction

- To analyze the bias in the Strategic Subject List dataset with respect to age and race.

- To implement and evaluate the effectiveness of bias mitigation strategies in enhancing the fairness of predictive policing algorithms.

2. Related Works

2.1. Fairness Strategies in Policing Algorithms

2.2. Sensitive Features in Policing Algorithms

3. Methods

3.1. Dataset

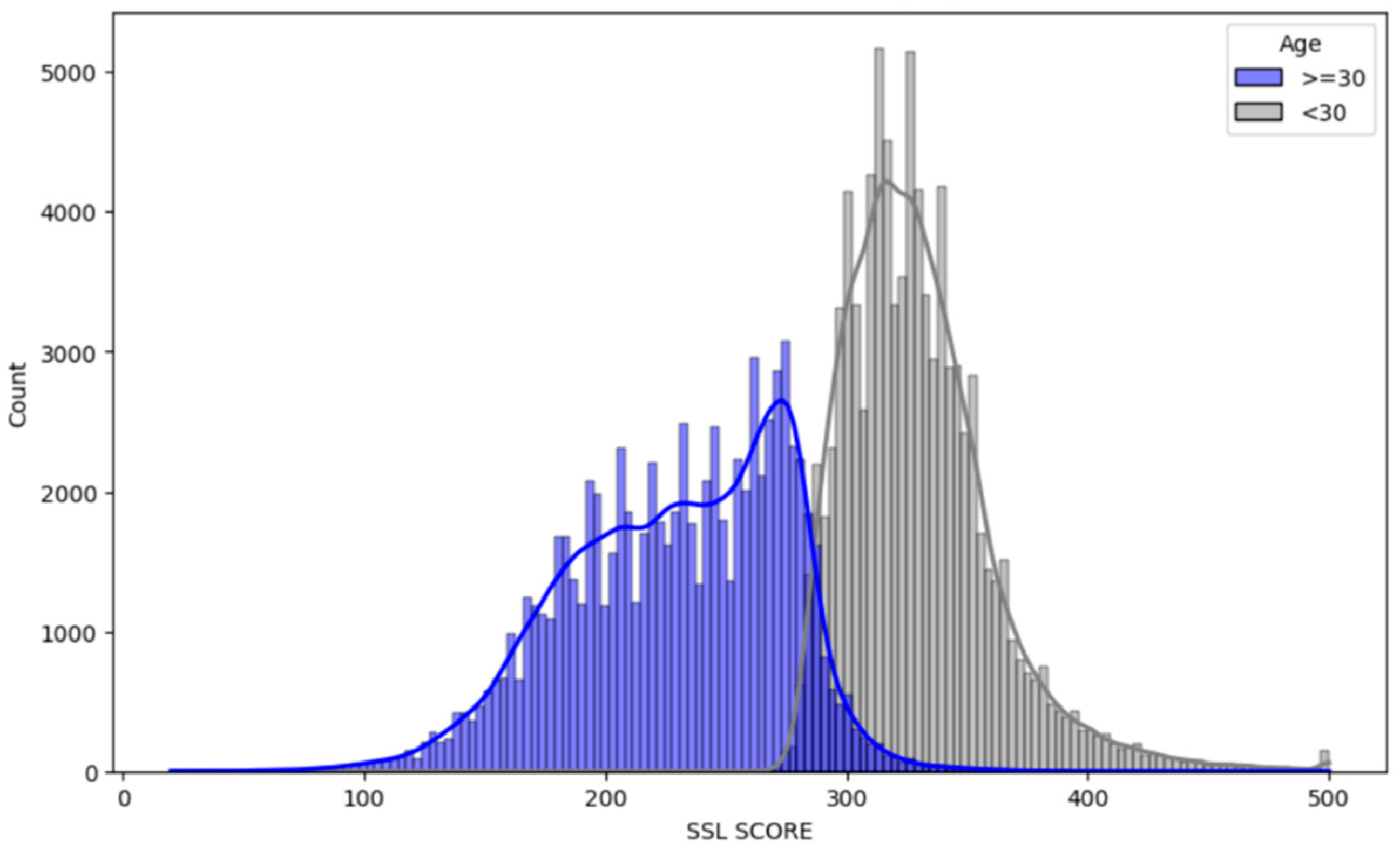

- The feature ‘AGE AT LATEST ARREST’ originally contained age ranges. We simplified this by categorizing individuals into two groups: those under 30 years old (‘less than 20’ and ‘20-30’) were encoded as 1 and those 30 years or older were encoded as 0.

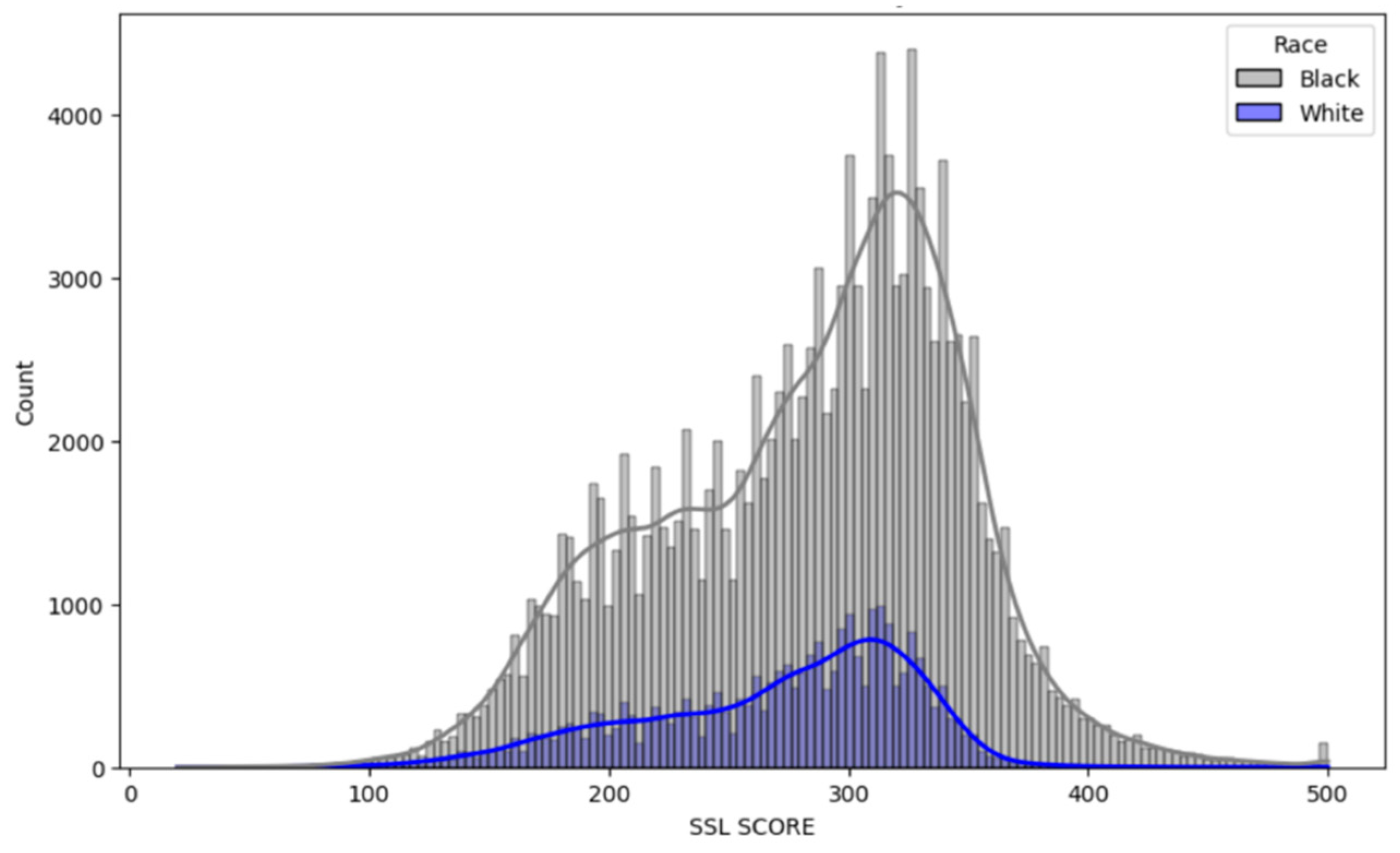

- For the ‘RACE CODE CD’ feature, we retained only two categories for simplicity and relevance to the study’s focus: ‘BLK’ (Black) and ‘WHI’ (White), encoded as 1 and 0, respectively. Rows of data that did not fall into these two categories were dropped.

- The ‘WEAPON I’ and ‘DRUG I’ features were binary encoded as 1 (True) and 0 (False).

- For the target variable, SSL SCORE, scores above 250 were encoded as 1, indicating high risk, while scores of 250 and below were encoded as 0, indicating low risk, in line with the threshold reported by Posadas (2017).
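The encoding steps listed above can be sketched in a few lines of plain Python. This is a minimal illustration rather than the authors' preprocessing code: the output keys (`age_under_30`, `race_black`, `weapon`, `drug`, `high_risk`) are hypothetical names, and the set of raw values treated as "True" for the two flag columns is an assumption that should be checked against the actual dataset.

```python
from typing import Optional

# Age-range labels quoted in the text; every other label is treated as "30 or older".
UNDER_30_LABELS = {"less than 20", "20-30"}
RACE_CODES = {"BLK": 1, "WHI": 0}
# Assumption: the raw flag columns hold strings such as "Yes" or "Y".
TRUTHY_FLAGS = {"yes", "y", "true", "1"}
HIGH_RISK_THRESHOLD = 250


def encode_row(row: dict) -> Optional[dict]:
    """Return the binary-encoded row, or None if the race code is not BLK/WHI."""
    race = RACE_CODES.get(row["RACE CODE CD"])
    if race is None:
        return None  # rows outside the two retained categories are dropped
    return {
        "age_under_30": int(row["AGE AT LATEST ARREST"] in UNDER_30_LABELS),
        "race_black": race,
        "weapon": int(str(row["WEAPON I"]).strip().lower() in TRUTHY_FLAGS),
        "drug": int(str(row["DRUG I"]).strip().lower() in TRUTHY_FLAGS),
        # Scores strictly above 250 are high risk; 250 and below are low risk.
        "high_risk": int(row["SSL SCORE"] > HIGH_RISK_THRESHOLD),
    }


def encode_dataset(rows: list) -> list:
    """Encode every row and discard those that encode_row rejects."""
    encoded = (encode_row(r) for r in rows)
    return [r for r in encoded if r is not None]
```

Calling `encode_dataset` on a list of dictionaries keyed by the original column names returns only the Black and White subjects, each reduced to five binary fields.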

3.2. Fairness Metrics

- Demographic Parity: This metric measures whether the probability of a positive outcome (e.g., being classified as high risk) is the same across different groups. In other words, demographic parity is achieved if each group has an equal chance of receiving the positive outcome, regardless of group membership.

- Equality of Opportunity: This metric specifically focuses on the true positive rate, ensuring that all groups have an equal chance of being correctly identified for the positive outcome when they qualify for it. It is a more nuanced metric that considers the accuracy of the positive predictions for each group, thereby ensuring fairness in the model’s sensitivity.

- Average Odds Difference: This metric evaluates the average difference in the false positive rates and true positive rates between groups. It combines aspects of both false positives (instances wrongly classified as positive) and true positives (correctly classified positive instances), providing a comprehensive view of the algorithm’s performance across different groups. A value closer to zero indicates a fairer algorithm, as it suggests minimal disparity in both false and true positive rates across groups.
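To make the three definitions above concrete, the following sketch computes each metric as a signed gap between two groups encoded 1 and 0. It assumes binary labels and predictions and uses one common convention (group 1 minus group 0, with the average odds difference taken as the mean of the false positive rate gap and the true positive rate gap). The function names are illustrative, and the exact formulas used to produce the values reported in this paper may differ, for example by taking absolute values.

```python
def _rate(preds: list) -> float:
    """Share of positive predictions; 0.0 for an empty list."""
    return sum(preds) / len(preds) if preds else 0.0


def group_rates(y_true, y_pred, group, g):
    """Return (selection rate, TPR, FPR) for the members of group g."""
    rows = [(t, p) for t, p, s in zip(y_true, y_pred, group) if s == g]
    selection = _rate([p for _, p in rows])
    tpr = _rate([p for t, p in rows if t == 1])  # positives correctly flagged
    fpr = _rate([p for t, p in rows if t == 0])  # negatives wrongly flagged
    return selection, tpr, fpr


def fairness_gaps(y_true, y_pred, group):
    """Signed gaps (group 1 minus group 0) for the three metrics."""
    sel1, tpr1, fpr1 = group_rates(y_true, y_pred, group, 1)
    sel0, tpr0, fpr0 = group_rates(y_true, y_pred, group, 0)
    return {
        "demographic_parity": sel1 - sel0,
        "equality_of_opportunity": tpr1 - tpr0,
        "average_odds_difference": 0.5 * ((fpr1 - fpr0) + (tpr1 - tpr0)),
    }
```

A gap of zero on all three entries would mean the two groups are selected, correctly flagged, and wrongly flagged at identical rates.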

3.3. Research Objective 1: Bias Analysis

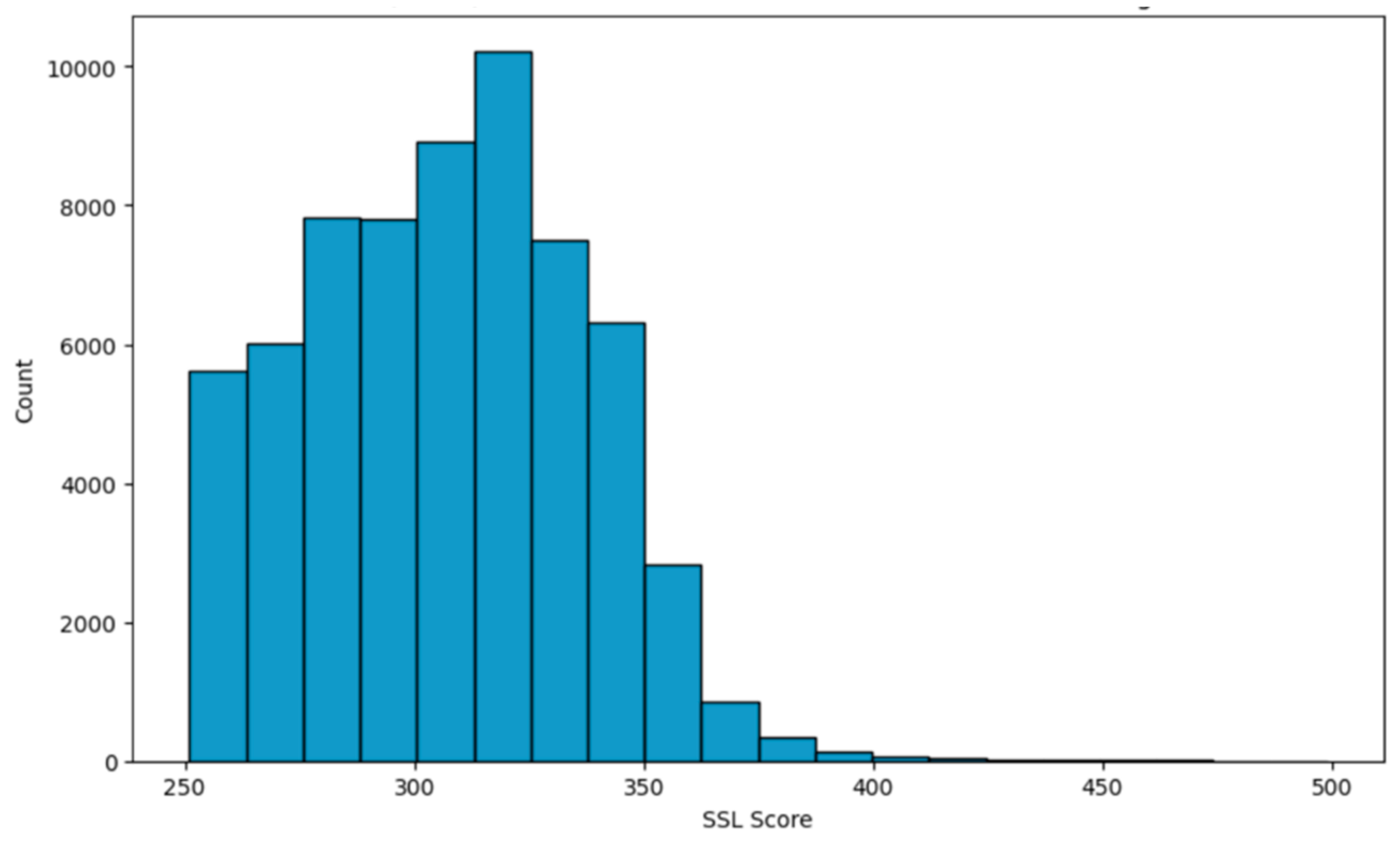

- First, we assessed the distribution of SSL scores across the demographic categories, i.e., age, gender, and race, using the histogram plots shown in Figure 3, Figure 4 and Figure 5.

- Second, we examined a specific subset of the dataset: individuals with SSL scores over 250, and therefore classified as “High Risk”, despite having no prior arrests for violent offenses, no prior arrests for narcotic offenses, and no history of being a victim of a shooting incident.
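The subset described in the second step can be extracted with a simple filter, after which the share of each demographic group within it can be compared. The sketch below is an illustration only: it assumes the three count columns are numeric with 0 meaning "none", and the helper names `unexplained_high_risk` and `group_shares` are hypothetical.

```python
from collections import Counter

HIGH_RISK_THRESHOLD = 250


def unexplained_high_risk(rows):
    """Rows scored above the threshold with no violent arrests,
    no narcotic arrests and no shooting victimization."""
    return [
        r for r in rows
        if r["SSL SCORE"] > HIGH_RISK_THRESHOLD
        and r["ARRESTS VIOLENT OFFENSES"] == 0
        and r["NARCOTIC ARRESTS"] == 0
        and r["VICTIM SHOOTING INCIDENTS"] == 0
    ]


def group_shares(rows, column):
    """Fraction of rows falling in each category of the given column."""
    counts = Counter(r[column] for r in rows)
    total = sum(counts.values())
    return {k: v / total for k, v in counts.items()} if total else {}
```

For example, `group_shares(unexplained_high_risk(rows), "RACE CODE CD")` gives the racial composition of the subset, which can then be set against the composition of the full dataset.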

3.4. Research Objective 2: Bias Mitigation

- a. No prior arrest for violent offenses.

- b. No prior arrest for narcotic offenses.

- c. Never been a victim of shooting incidents.

4. Results and Discussion

4.1. Research Objective 1: Bias Analysis

4.2. Research Objective 2: Bias Mitigation

5. Conclusion

Funding

Data Availability Statement

Conflicts of Interest

References

- Asif, M., Shahzad, M., Awan, M. U., & Akdogan, H. (2018). Developing a structured framework for measuring police efficiency. International Journal of Quality & Reliability Management, 35(10), 2119-2135. [CrossRef]

- Berk, R. A., Kuchibhotla, A. K., & Tchetgen Tchetgen, E. (2021). Improving fairness in criminal justice algorithmic risk assessments using optimal transport and conformal prediction sets. Sociological Methods & Research, 00491241231155883. [CrossRef]

- Downey, A., Islam, S. R., & Sarker, M. K. (2023, May). Evaluating Fairness in Predictive Policing Using Domain Knowledge. In The International FLAIRS Conference Proceedings (Vol. 36).

- El Baroudy, J. (2023). Autonomous Weapon Systems: Attributing the Corporate Accountability. Access to Just. E. Eur., 222. [CrossRef]

- Hung, T. W., & Yen, C. P. (2023). Predictive policing and algorithmic fairness. Synthese, 201(6), 206. [CrossRef]

- Idowu, J., & Almasoud, A. (2023). Uncertainty in AI: Evaluating Deep Neural Networks on Out-of-Distribution Images. arXiv preprint arXiv:2309.01850. [CrossRef]

- Ingram, E., Gursoy, F., & Kakadiaris, I. A. (2022, December). Accuracy, Fairness, and Interpretability of Machine Learning Criminal Recidivism Models. In 2022 IEEE/ACM International Conference on Big Data Computing, Applications and Technologies (BDCAT) (pp. 233-241). IEEE.

- Jain, B., Huber, M., Fegaras, L., & Elmasri, R. A. (2019, June). Singular race models: addressing bias and accuracy in predicting prisoner recidivism. In Proceedings of the 12th ACM International Conference on PErvasive Technologies Related to Assistive Environments (pp. 599-607).

- Khademi, A., & Honavar, V. (2020, April). Algorithmic bias in recidivism prediction: A causal perspective (student abstract). In Proceedings of the AAAI Conference on Artificial Intelligence (Vol. 34, No. 10, pp. 13839-13840).

- Kyriakakis, J. (2021). Corporations, Accountability and International Criminal Law: Industry and Atrocity. Edward Elgar Publishing.

- Mohler, G., Raje, R., Carter, J., Valasik, M., & Brantingham, J. (2018, October). A penalized likelihood method for balancing accuracy and fairness in predictive policing. In 2018 IEEE International Conference on Systems, Man, and Cybernetics (SMC) (pp. 2454-2459). IEEE.

- Montana, E., Nagin, D. S., Neil, R., & Sampson, R. J. (2023). Cohort bias in predictive risk assessments of future criminal justice system involvement. Proceedings of the National Academy of Sciences, 120(23), e2301990120. [CrossRef]

- Pastaltzidis, I., Dimitriou, N., Quezada-Tavarez, K., Aidinlis, S., Marquenie, T., Gurzawska, A., & Tzovaras, D. (2022, June). Data augmentation for fairness-aware machine learning: Preventing algorithmic bias in law enforcement systems. In Proceedings of the 2022 ACM Conference on Fairness, Accountability, and Transparency (pp. 2302-2314).

- Posadas, B. (2017, June 26). How strategic is Chicago’s “Strategic Subjects List”? Upturn investigates. Medium. Retrieved October 6, 2022. Available online: https://medium.com/equal-future/how-strategic-is-chicagos-strategic-subjects-list-upturn-investigates-9e5b4b235a7c.

- Rodolfa, K. T., Salomon, E., Haynes, L., Mendieta, I. H., Larson, J., & Ghani, R. (2020, January). Case study: predictive fairness to reduce misdemeanor recidivism through social service interventions. In Proceedings of the 2020 Conference on Fairness, Accountability, and Transparency (pp. 142-153).

- Saleem, F., & Malik, M. I. (2022). Safety management and safety performance nexus: role of safety consciousness, safety climate, and responsible leadership. International journal of environmental research and public health, 19(20), 13686. [CrossRef]

- Somalwar, A., Bansal, C., Lintu, N., Shah, R., & Mui, P. (2021, October). AI For Bias Detection: Investigating the Existence of Racial Bias in Police Killings. In 2021 IEEE MIT Undergraduate Research Technology Conference (URTC) (pp. 1-5). IEEE.

- Udoh, E. S. (2020, September). Is the data fair? An assessment of the data quality of algorithmic policing systems. In Proceedings of the 13th International Conference on Theory and Practice of Electronic Governance (pp. 1-7).

- Urcuqui, C., Moreno, J., Montenegro, C., Riascos, A., & Dulce, M. (2020, November). Accuracy and fairness in a conditional generative adversarial model of crime prediction. In 2020 7th International Conference on Behavioural and Social Computing (BESC) (pp. 1-6). IEEE.

- Van Berkel, N., Goncalves, J., Hettiachchi, D., Wijenayake, S., Kelly, R. M., & Kostakos, V. (2019). Crowdsourcing perceptions of fair predictors for machine learning: A recidivism case study. Proceedings of the ACM on Human-Computer Interaction, 3(CSCW), 1-21. [CrossRef]

| Paper | Sensitive Features | | | |

|---|---|---|---|---|

| | Sex | Race | Status* | Age |

| Downey et al. (2023) | ✓ | ✓ | ✓ | |

| Berk et al. (2021) | ✓ | |||

| Pastaltzidis et al. (2022) | ✓ | |||

| Udoh (2020) | ✓ | ✓ | ✓ | |

| Montana et al. (2023) | ✓ | |||

| Mohler et al. (2018) | ✓ | |||

| Somalwar et al. (2021) | ✓ | |||

| Urcuqui et al. (2020) | ✓ | |||

| Van Berkel et al. (2019) | ✓ | ✓ | ✓ | |

| Jain et al. (2019) | ✓ | |||

| Khademi & Honavar (2020) | ✓ | |||

| Ingram et al. (2022) | ✓ | ✓ | ||

| Rodolfa et al. (2020) | ✓ | |||

| Feature | Description |

|---|---|

| AGE AT LATEST ARREST | The individual’s age at the time of their most recent arrest. |

| VICTIM SHOOTING INCIDENTS | The number of times an individual has been the victim of a shooting. |

| VICTIM BATTERY OR ASSAULT | The number of times an individual has been the victim of an aggravated battery or aggravated assault. |

| ARRESTS VIOLENT OFFENSES | The number of times the individual has been arrested for a violent offense. |

| GANG AFFILIATION | Indicator if an individual has been confirmed to be a member of a criminal street gang. |

| NARCOTIC ARRESTS | The number of times the individual has been arrested for a narcotics offense. |

| TREND IN CRIMINAL ACTIVITY | The trend of an individual’s recent criminal activity. |

| UUW ARRESTS | The number of times the individual has been arrested for Unlawful Use of Weapons. |

| WEAPON I | Is ‘Yes’ if at least one Weapon (UUW) Arrest in past 10 years. |

| DRUG I | Is ‘Yes’ if at least one Drug Arrest in past 10 years. |

| LATITUDE | Latitude of Centroid of Census Tract of Arrest for the Subject’s latest arrest record. |

| LONGITUDE | Longitude of Centroid of Census Tract of Arrest for the Subject’s latest arrest record. |

| Sensitive Features | Demographic Parity | Equality of Opportunity | Average Odds Difference |

|---|---|---|---|

| Race | 0.09923 | 0.08356 | 0.07679 |

| Age | 0.8517 | 0.7616 | 0.3349 |

| Sensitive Features | Demographic Parity | Equality of Opportunity | Average Odds Difference |

|---|---|---|---|

| Race | 0.1170 | 0.08768 | 0.03523 |

| Age | 0.3128 | 0.1521 | 0.02024 |

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).