Submitted:

28 October 2023

Posted:

30 October 2023


Abstract

Keywords:

1. Introduction


- We addressed problems specific to constructing knowledge graphs in the education domain and developed a complete pipeline covering knowledge point extraction and relation extraction. We also proposed a hierarchical relation extraction method based on knowledge graphs and pretrained models, which serves as a foundation for constructing subject knowledge graphs.


- We built a dataset for educational disciplines that covers the teaching resources of more than 30 courses, such as “Advanced Mathematics”, “Robotics”, and “Robot Programming and Application”. Teachers and students of the college labeled the data carefully, yielding a high-quality original dataset.

2. Related Work

2.1. Entity Extraction

2.2. Relation Extraction

3. Method

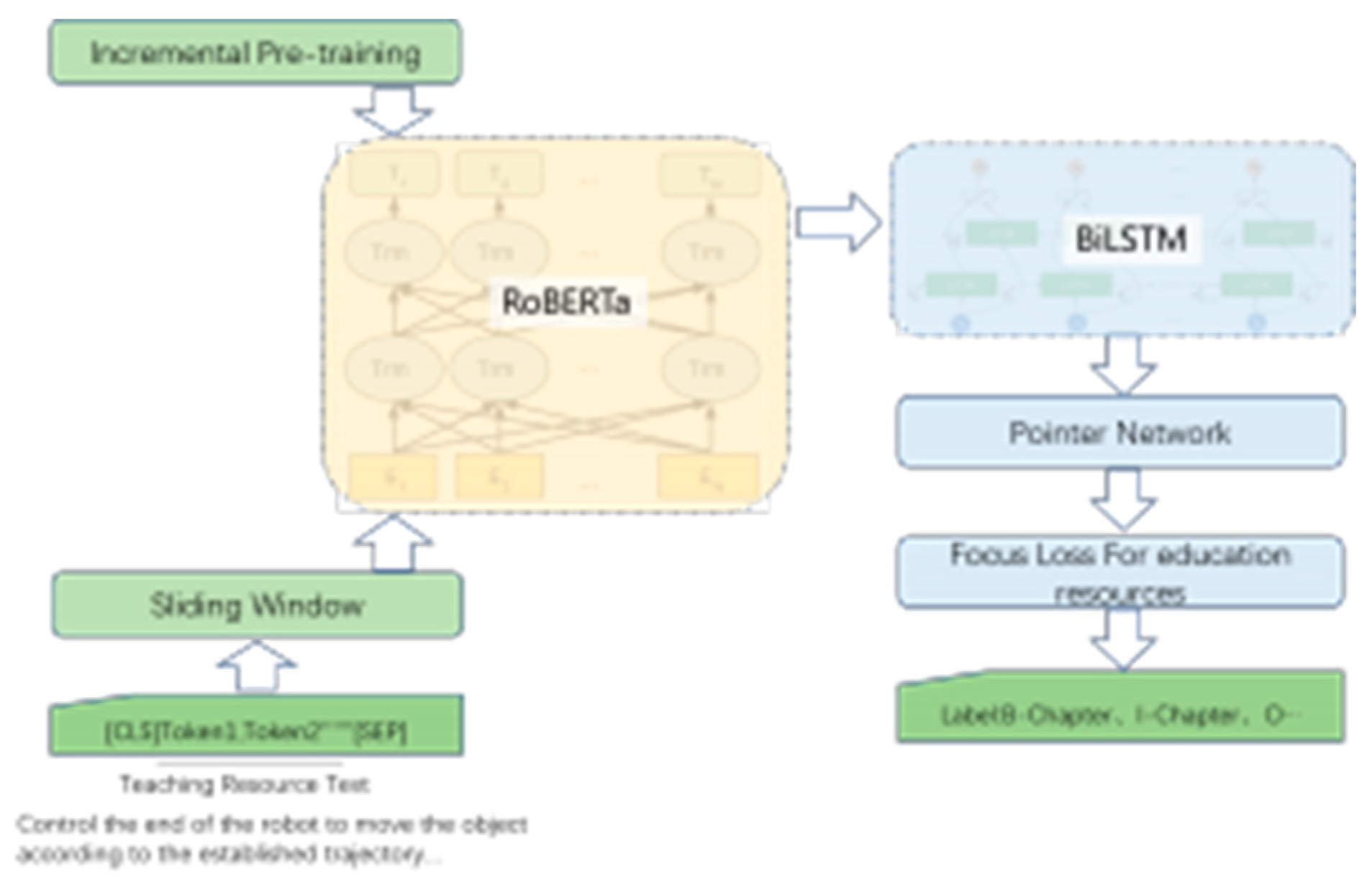

3.1. Entity Extraction

3.1.1. Incremental Pre-training

| Explanation | Sample |
|---|---|
| Sentence | 控制机器人末端以一定的夹持力按既定轨迹移动物体 (Control the robot's end effector to move an object along a given trajectory with a certain gripping force) |
| Word segmentation | 控制 机器人 末端 以 一定 的 夹持力 按 既定 轨迹 移动 物体 |
| Random masking | [Mask]制机器人末端以一定的[Mask]持力按既定轨迹[Mask]动物体 |
| Whole word masking | 控制机器人末端以一定的[Mask][Mask][Mask]按既定轨迹移动物体 |
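The contrast between the two masking strategies in the table can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names, the 15% masking ratio, and the fixed random seed are hypothetical choices, not values taken from the paper, and the word segmentation is assumed to be given.

```python
import random

MASK = "[Mask]"

def random_masking(words, ratio, rng):
    """Mask individual characters, ignoring word boundaries."""
    chars = [ch for word in words for ch in word]
    n_mask = max(1, round(len(chars) * ratio))
    picked = set(rng.sample(range(len(chars)), n_mask))
    return "".join(MASK if i in picked else ch for i, ch in enumerate(chars))

def whole_word_masking(words, ratio, rng):
    """Mask every character of each selected word, so a word is never split."""
    n_mask = max(1, round(len(words) * ratio))
    picked = set(rng.sample(range(len(words)), n_mask))
    return "".join(MASK * len(w) if i in picked else w for i, w in enumerate(words))

# Segmented sentence from the table above.
words = ["控制", "机器人", "末端", "以", "一定", "的",
         "夹持力", "按", "既定", "轨迹", "移动", "物体"]

rng = random.Random(0)  # fixed seed (assumption) so runs are repeatable
print(random_masking(words, 0.15, rng))
print(whole_word_masking(words, 0.15, rng))
```

With whole word masking, a domain term such as 夹持力 is either fully visible or fully hidden, so the model has to predict the complete term from context rather than complete a partially visible word.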

3.1.2. Focal Loss for Education Resources

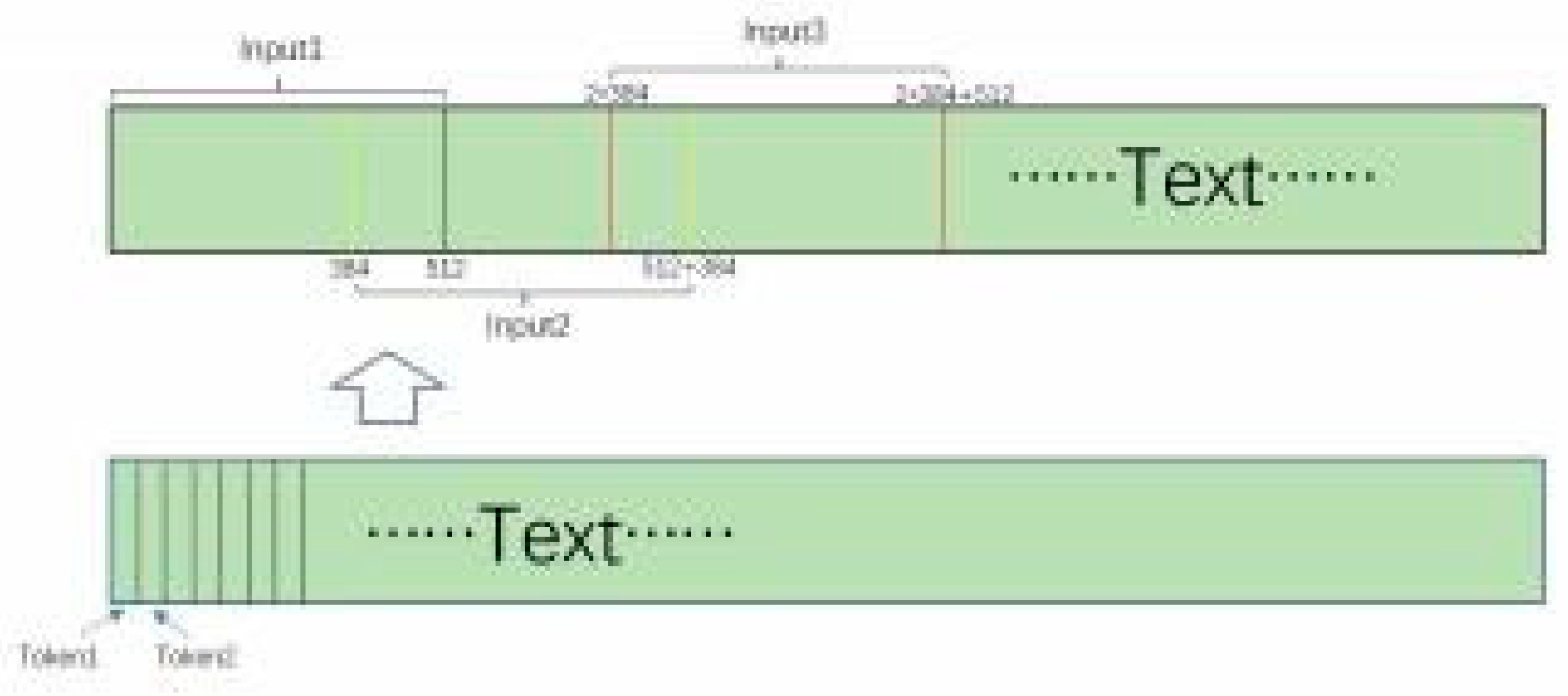

3.1.3. Sliding Window

3.2. Relation Extraction

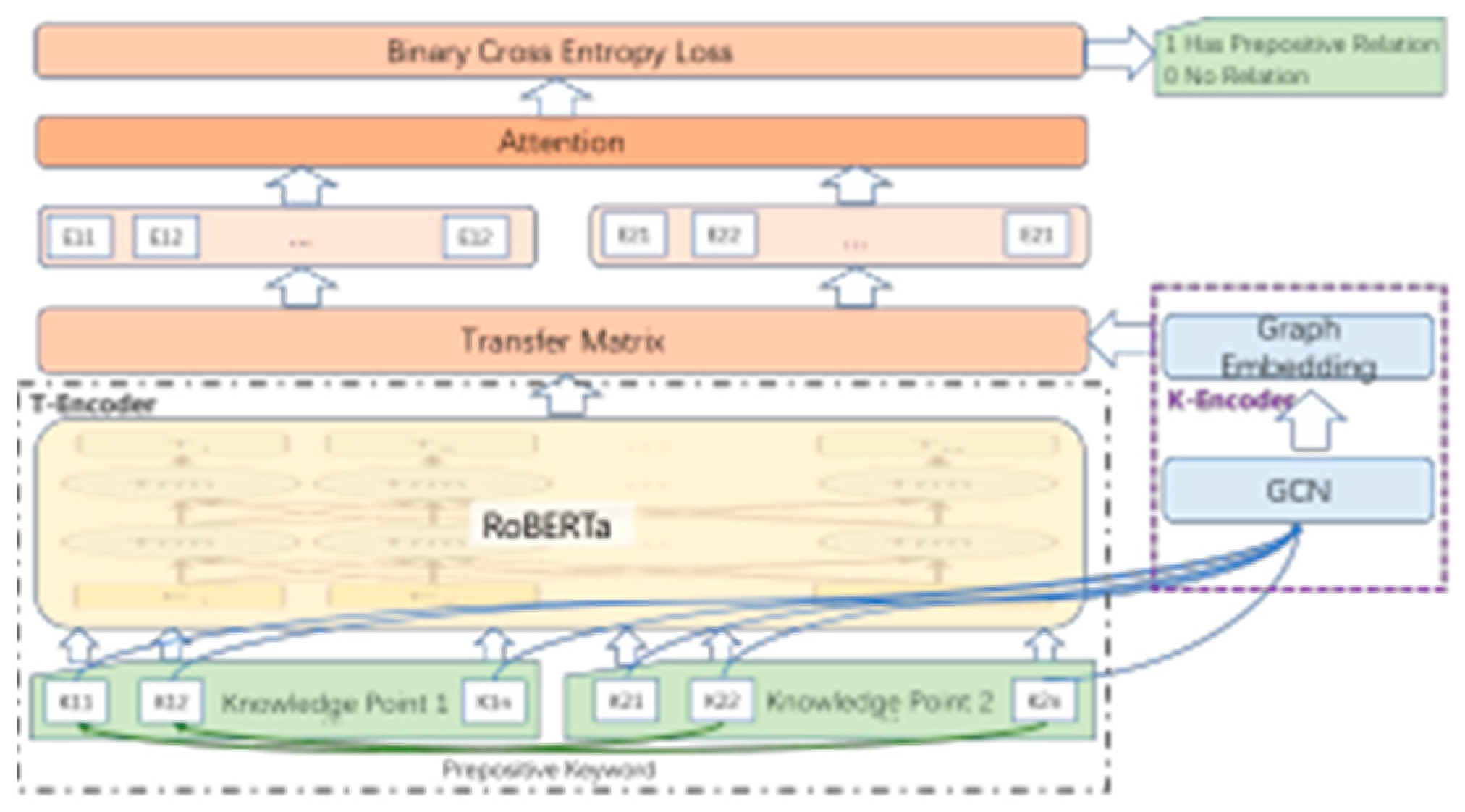

3.2.1. Prerequisite Relation Extraction Method

3.2.2. Model Architecture

3.3. Data Augmentation

3.4. Fast Gradient Method (FGM)

- For each input x, run the forward pass to compute the loss and backpropagate to obtain the gradient.

- Compute the perturbation r_adv from the gradient of the embedding matrix and add it to the current embedding, which is equivalent to forming the adversarial sample x + r_adv.

- Compute the forward loss of x + r_adv, backpropagate to obtain the adversarial gradient, and add it to the gradient from the first step.

- Restore the embedding to its value from the first step.

- Update the parameters using the accumulated gradient from the third step.
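The five steps above can be sketched on a toy model. The following is a minimal illustration, not the paper's implementation: it assumes a single embedding vector fed to a linear classifier with logistic loss, an L2-normalized perturbation r_adv = ε · g / ‖g‖, and hypothetical values for `epsilon` and `lr`. All function and variable names are introduced here for illustration only.

```python
import math

def loss_and_grads(emb, w, y):
    """Logistic loss of a linear classifier on one embedding, plus gradients."""
    z = sum(e * wi for e, wi in zip(emb, w))
    p = 1.0 / (1.0 + math.exp(-z))
    loss = -(y * math.log(p) + (1 - y) * math.log(1 - p))
    d = p - y                           # derivative of the loss w.r.t. z
    grad_emb = [d * wi for wi in w]     # gradient w.r.t. the embedding
    grad_w = [d * e for e in emb]       # gradient w.r.t. the classifier weights
    return loss, grad_emb, grad_w

def fgm_step(emb, w, y, epsilon=1.0, lr=0.1):
    # Step 1: forward loss and gradients on the clean input.
    loss, g_emb, g_w = loss_and_grads(emb, w, y)

    # Step 2: build r_adv from the embedding gradient and perturb the embedding.
    norm = math.sqrt(sum(g * g for g in g_emb))
    r_adv = [epsilon * g / norm for g in g_emb] if norm > 0 else [0.0] * len(emb)
    backup = list(emb)
    emb_adv = [e + r for e, r in zip(emb, r_adv)]

    # Step 3: forward loss on x + r_adv; accumulate the adversarial gradients.
    adv_loss, g_emb_adv, g_w_adv = loss_and_grads(emb_adv, w, y)
    g_emb = [a + b for a, b in zip(g_emb, g_emb_adv)]
    g_w = [a + b for a, b in zip(g_w, g_w_adv)]

    # Step 4: restore the embedding to its clean value.
    emb = backup

    # Step 5: update the parameters with the accumulated gradients.
    new_emb = [e - lr * g for e, g in zip(emb, g_emb)]
    new_w = [wi - lr * g for wi, g in zip(w, g_w)]
    return new_emb, new_w, loss, adv_loss

new_emb, new_w, clean_loss, adv_loss = fgm_step([0.5, -0.2], [0.3, 0.8], y=1)
print(clean_loss, adv_loss)
```

Because r_adv points along the gradient of the loss with respect to the embedding, the adversarial loss is higher than the clean loss, and training on both pushes the model to stay correct in a small neighborhood of each embedding.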

4. Experimental Data

4.1. Label Definition

4.2. Ontology Construction

4.3. Data Annotation

5. Experimental Results Analysis

5.1. Knowledge Point Extraction

| Type | Category | Name | Abbreviation | Example |
|---|---|---|---|---|
| Entity | First-level knowledge point | Chapter | CHP | Chapter Four Sensors |
| Entity | Second-level knowledge point | Section | SET | 4.1 Classification of sensors |
| Entity | Third-level knowledge point | Subsection | SST | 4.1.1 Built-in sensors |
| Entity | Finest-grained knowledge point | Keyword | KEW | Amount of displacement, Linearity |
| Relation | Hypernym relationship | Contain | COT | <Chapter Four Sensors, 4.1 Classification of sensors> |
| Relation | Prerequisite relationship | Preposition | PPS | <4.1 Classification of sensors, 5.2 Vision system> |
| Relation | Similar relationship | Similarity | SML | <9.1 Control technology, 9.3 Feedback Control> |

| Label | Train | Val | Test |
|---|---|---|---|
| Chapter | 35980 | 10280 | 5140 |
| Section | 33858 | 9674 | 4837 |
| Subsection | 22998 | 6571 | 3286 |
| Keyword | 313969 | 89541 | 44770 |
| Prerequisite relation | 171256 | 48930 | 24465 |

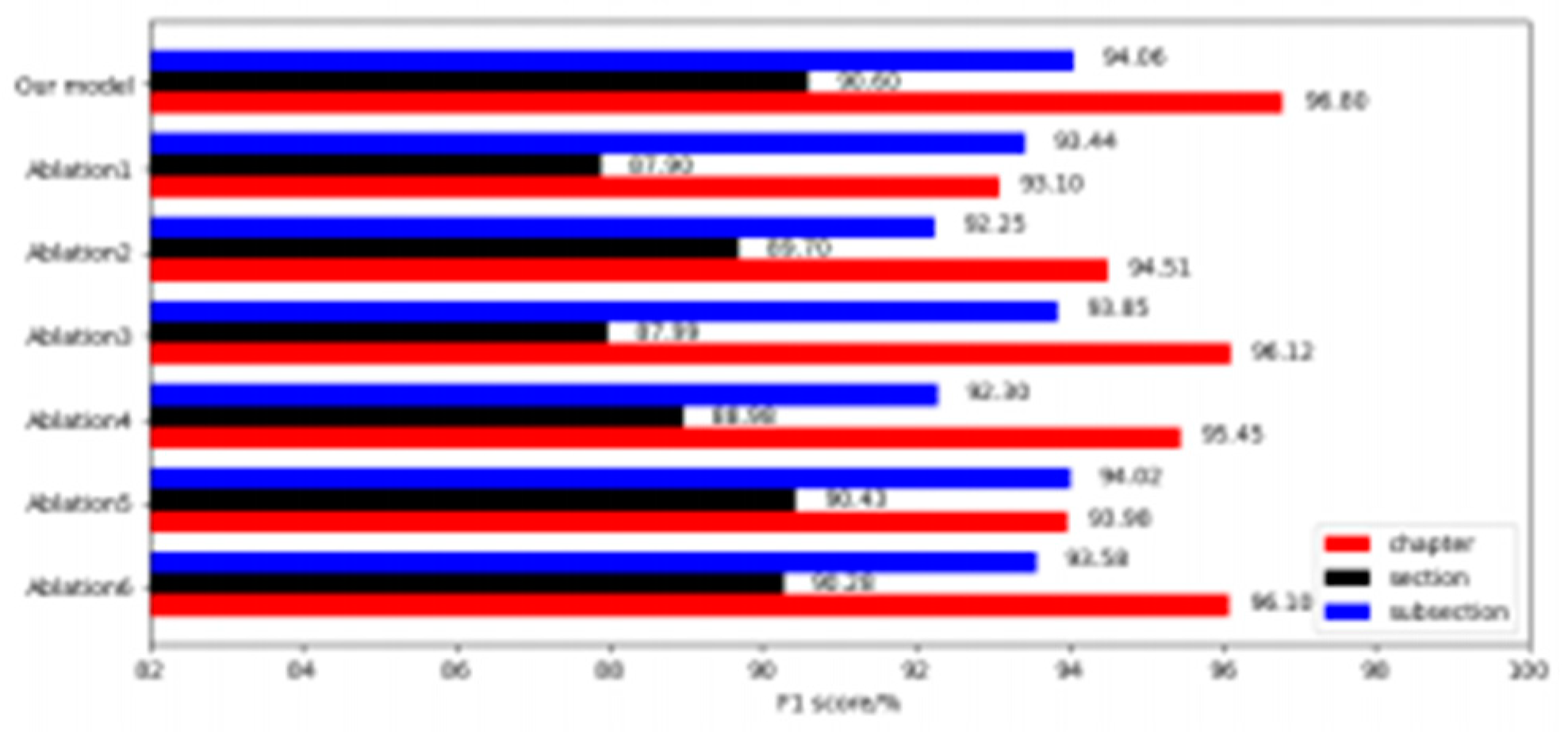

- Ablating the focal loss for education resources;

- Ablating incremental pre-training;

- Ablating the sliding window;

- Ablating data augmentation;

- Ablating FGM;

- Ablating mixed precision training.

5.2. Relation Extraction

| Hyperparameters | Textbook-NER | Textbook-KP-RE |
|---|---|---|
| Training epochs | 5 | 8 |
| Batch size | 32 | 32 |
| Learning rate | 5e-5 | 5e-5 |
| Loss function | FLFer | Binary Cross Entropy Loss |
| α_chapter | 0.8 | / |
| α_section | 1.1 | / |
| α_subsection | 1.2 | / |
| γ | 0.9 | / |
| Max length | 512 | 512 |
| Dropout | 0.1 | 0.15 |
| Mixed precision | FP16 & FP32 | FP32 |
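To show how the per-class α values and γ in the table might enter the loss, here is a small sketch that assumes the standard focal loss form FL(p_t) = −α_t (1 − p_t)^γ log(p_t). The exact definition of FLFer is not reproduced in this text, so the formula, the function name, and the default α of 1.0 for labels without a listed weight are all assumptions.

```python
import math

# Per-class weights and focusing parameter from the Textbook-NER column.
ALPHA = {"chapter": 0.8, "section": 1.1, "subsection": 1.2}
GAMMA = 0.9

def focal_loss(p_true, label, alpha=ALPHA, gamma=GAMMA, default_alpha=1.0):
    """Standard focal loss for one token.

    p_true is the predicted probability of the correct label. Labels with
    no listed alpha fall back to default_alpha (an assumption).
    """
    a = alpha.get(label, default_alpha)
    return -a * (1.0 - p_true) ** gamma * math.log(p_true)

# A confident prediction contributes far less than an uncertain one.
print(focal_loss(0.9, "chapter"), focal_loss(0.5, "chapter"))
# At equal confidence, a larger alpha increases that class's contribution.
print(focal_loss(0.5, "section"), focal_loss(0.5, "subsection"))
```

Under this form, the factor (1 − p_t)^γ down-weights tokens the model already classifies confidently, while α_t rescales each class, which is one common way to compensate for label imbalance.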

| T-Encoder | K-Encoder | Precision | Recall | F1 score |
|---|---|---|---|---|
| BiLSTM | / | 84.41 | 82.14 | 83.26 |
| BiLSTM | TransE | 86.43 | 85.15 | 85.78 |
| BERT-base-chinese | / | 89.53 | 88.89 | 89.2 |
| BERT-base-chinese | TransE | 89.23 | 89.01 | 89.12 |
| BERT-base-chinese | GCN | 91.32 | 91.1 | 91.2 |
| RoBERTa-chinese | / | 90.2 | 90.34 | 90.27 |
| RoBERTa-chinese | TransE | 92.58 | 91.89 | 92.23 |
| RoBERTa-chinese | GCN | 92.68 | 92.01 | 92.34 |
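The F1 score in these tables is the harmonic mean of precision and recall, F1 = 2PR / (P + R). A short sketch (the helper name `f1_score` is introduced here for illustration) applies the formula to the first row of the table above:

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall (both on the same scale)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)

# BiLSTM row without a K-Encoder: precision 84.41, recall 82.14.
print(round(f1_score(84.41, 82.14), 2))
```

Because the harmonic mean is pulled toward the smaller of the two values, a model cannot reach a high F1 by trading recall for precision or the reverse.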

| Semantic Label | Precision | Recall | F1 score |
|---|---|---|---|
| Description | 84.17 | 82.22 | 83.18 |
| KP-Name&Description | 83.51 | 83.03 | 83.27 |
| Keyword | 89.44 | 91.19 | 90.31 |
| KP-Name&Keyword | 92.68 | 91.67 | 92.34 |

6. Conclusions


Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).