Methods

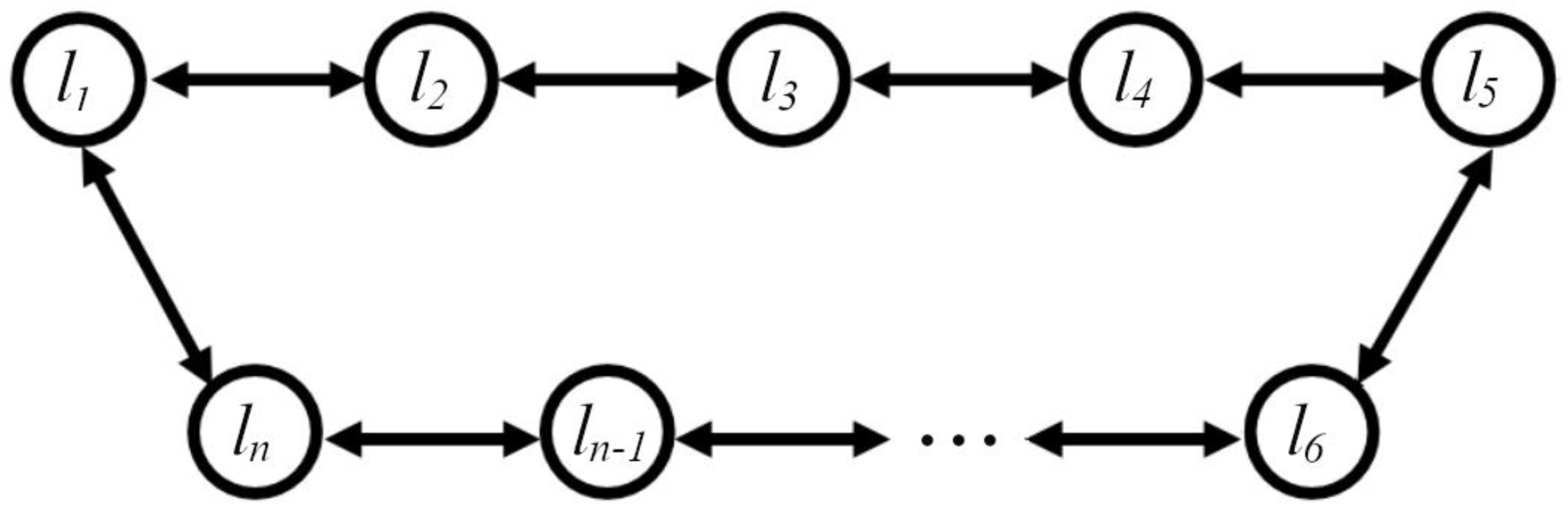

In this work, we studied a chain of locally coupled logistic oscillators, the structure of which is shown in

Figure 8.

Logistic equation as a map of a nonlinear operator

The logistic equation can be written as a map of a vector

to a vector

by means of a nonlinear operator

The nonlinear operator

acting on the vector

is defined as follows:

The notation ○ in the formula stands for the Hadamard product (also known as the elementwise product, entrywise product, or Schur product) and is a binary operation that takes two matrices of equal dimensions and returns a matrix of the multiplied corresponding elements.

The symbol

denotes matrix multiplication, where the matrix

is a circulant matrix of dimension

, each row of which contains exactly three non-zero elements. Each subsequent row of the matrix is obtained by cyclically shifting the elements of the previous row to the right:

Here is the length of the chain of coupled logistic oscillators, is the exchange value between neighboring oscillators, is the Malthusian coefficient of the logistic map . The parameters of the logistic equation enter only the matrix .

The logistic map at given parameters

and under given initial conditions

can be considered as a method of simple iteration of the solution of a nonlinear equation of the form:

In equation (2),

P is the degree of the nonlinear operator and

X is the solution of equation (2). In the method of simple iterations, we will say that the solution of equation (2) is found if, starting from some

N1, the following condition is satisfied:

where

. In practice, it is convenient to use the value of the residual difference norm at successive iteration steps to search for a solution simultaneously with the search for the period

P:

where

N2 is an arbitrary number.

The exact solution of equation (2) with period

P is obtained if the following conditions are satisfied:

where

P is the index of the first zero of vector

zi. Obviously, along with (3), the conditions for all points with period

P are satisfied:

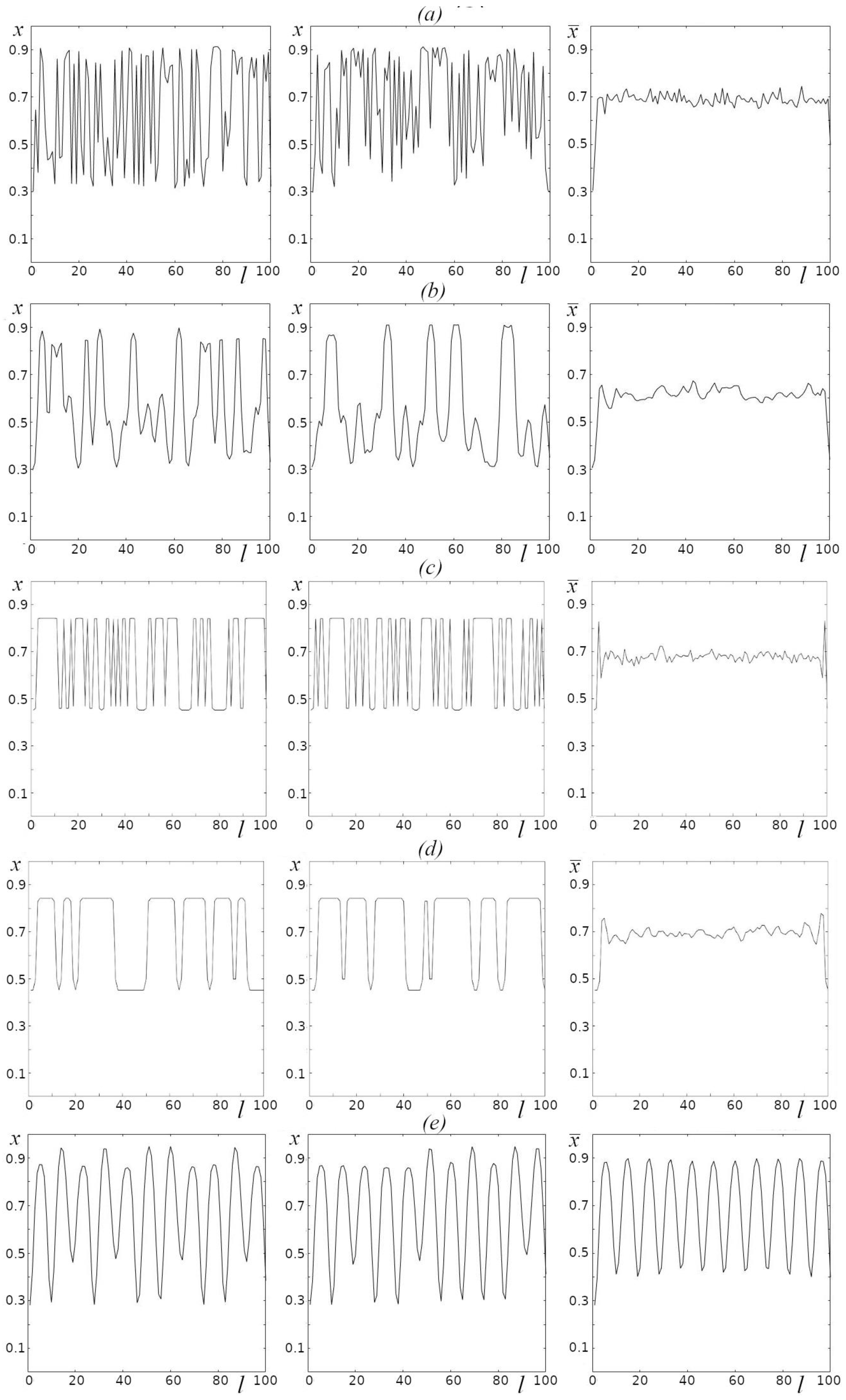

For nonlinear equation (2), depending on parameters

r, D, many various solutions with different periods can be obtained depending on the initial conditions. In addition to exact solutions with condition (3), there may exist reasonably stable approximate solutions, for which the following inequalities are satisfied:

It is important to note here that conditions (4) must be satisfied simultaneously for all points with period P, since there can be a situation where the fulfillment of (4) for k=1 does not guarantee the fulfillment of condition (4) for k=10. In the process of iterations, modes with close frequencies may be present in the system, which will lead to beats modulating the minima additionally with a rather low frequency equal to the difference of frequencies of the two modes.

For some initial conditions, there can be no solutions in the exact sense of (3) even for relaxed criterion (4). At the same time, we cannot claim that solutions with these initial conditions do not exist because the method of simple iterations has its limitations (it may simply fail to converge to a solution). However, by applying this method to all random initial conditions for a point in the area of parametersrand D, we make the same methodological error, which enables us to draw general conclusions on the existence of exact solutions in the parametric pointr, D.

In order to understand the structure and behavior of solutions in some point of parametersrand D, it is necessary to obtain solutions for a whole set of initial conditions by the method of simple iterations using relaxed criterion (4) for given value . Calculations for each point r, D are performed using the algorithm below.

Algorithm for finding solutions for a set of initial conditions

The general idea of the algorithm is to simultaneously iterate several vectors , while taking out of iterations those for which condition (4) is satisfied. Formally, this is possible by substituting vector with rectangular matrix B of dimension of the form in equation (1).

From the formal point of view, substitution of matrixBfor vector in equation (1) does not result in any computational advantages; however, the use of modern libraries accounting for multicore, multithreading, the presence of computational pipelines and a large amount of cache memory of modern processors, multiplication of discharged matrix A by the full matrix is much more preferable than sequential multiplication ofAby the number of vectors equal to the number of columns in the full matrix.

The algorithm consists of a number of steps, with the help of which, from the initial random matrixB, we obtain a matrix, several (or all) columns of which are solutions, and the other (if any remain) are not solutions. It is important that further iterations over this matrix do not change the picture. Considering the latter, we will refer to the results of the algorithm as generalized solutions. They may include both solutions proper (condition (3)) and approximate solutions with given accuracy ((4)).

A step of the algorithm includes sequential application of some (N1) “blind” iterations, where we care not about the intermediate results but only about matrix , and then some number (N2) of “measuring” iterations, where the set of all intermediate matrices is preserved: .

Having a set of intermediate matrices, we calculate the matrix of residuals according to the following formula:

For set criterion we check condition (4) for all rows of matrixZ. Each row corresponds to one initial condition (column of matrix B). We separate the convergent solutions, write them down separately, and remove them from the matrixB. This reduces the number of its columns.

We proceed to the next step (iteration) of the algorithm, where matrix B reduced at the previous iteration is used as the initial matrix. Exit from the iteration loop occurs either when matrixBbecomes empty (all columns converged to an exact or approximate solution) or when the iterative process stagnates. To check for stagnation, prior to the iterations we pre-fill the auxiliary arrayCZof lengthNCZ, equal to the allowed number of stagnating iterations, with units. At each iterative step of the algorithm we assign to the first element of this array the number of columns for which condition (4) is satisfied, and then we cyclically shift the elements of this array to the right by one step. Then we check the sum of its elements. If the sum is zero, we exit the operations and terminate the algorithm; if not, we continue the iterations. If we exit by stagnation, we simply add the columns that did not converge to the solution to those that we previously separated from matrixBas converging to the solution. Note once again that we refer to all the columns of matrix B obtained at the output of the algorithm as generalized solutions.

Once the computations are complete for all initial conditions, we can estimate the probability of obtaining solution

Ps with given accuracy

for a point in the parametric space

r, Dfor random initial conditions:

where

is the number of columns of matrix

B, for which condition (4) is satisfied.

It should be noted that for a given point in the parametric spacer, D, if in the set of generalized solutions there exist solutions for which condition (4) is satisfied, the remaining solutions will be similar in some sense. At a minimum, they will have periodic residuals (5). For the general analysis of the residuals generated by matrix of generalized solutionsB, it is convenient to introduce the relative spectral entropy for all rows of the matrix of residuals (5). Next, we outline the general scheme for calculating spectral entropy and then write out formulas for the relative spectral entropy of the residuals.

Spectral entropy of an arbitrary valid signal

The spectral entropy of the arbitrary signal

of the length

normalized to the unit of the power spectrum, is an estimate of the entropy by Shannon’s formula. There are many ways to estimate the signal power spectrum, here is the simplest one. A biased signal, the mean of which is not zero, has a singularity at the zero frequency of the spectrum, which will interfere with the analysis. In order to remove the constant component of the signal, we remove the linear trend from it, subtract from the signal the values that were obtained from the linear regression, which is built on the points

. Then we perform the Fourier transform of the signal. Let the signal without trend form a column

Y, then the sought Fourier image

is a linear transform with the Fourier matrix

F:

The elements of the Fourier matrix are given by the following expression

The normalized power spectrum

S(k)of the valid signal is given by the following expression:

For a valid signal, the square of the modulus of its Fourier image is symmetric about the center, so the informative part of the spectrum lies in the region from

1 to

. The spectral entropy of signal

after

finding its power spectrum

is

calculated using Shannon's formula:

The argument in square brackets emphasizes that the spectral entropy E is a functional of the signal Y.

Relative spectral entropy of residuals (temporal entropy)

To estimate the degree of periodicity of the solutions, it is sufficient to calculate the spectral entropy of the residuals (5). For convenience in comparing values, it makes sense to divide the entropy value by its maximum value, which it takes for a constant power spectrum

S=2/n:

The relative spectral entropy of the residuals or temporal entropy in the adopted notations will be written as:

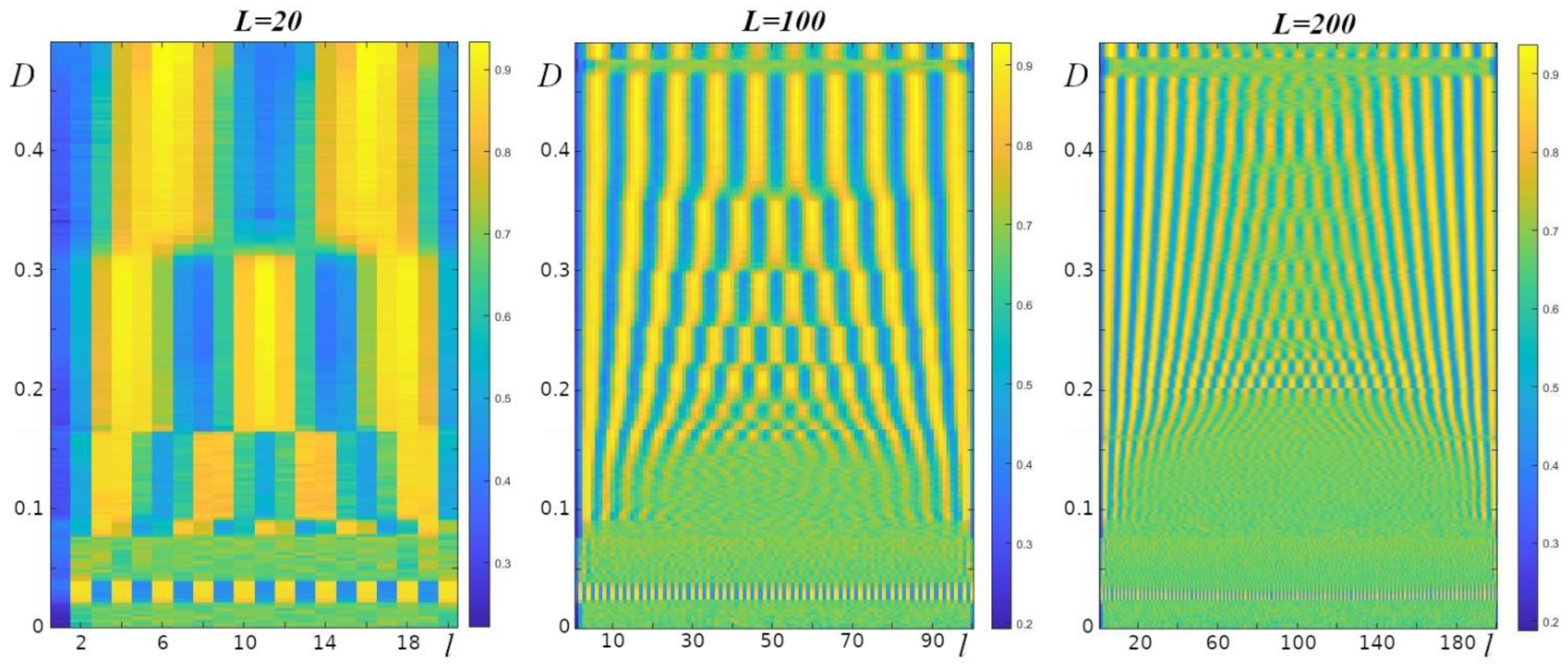

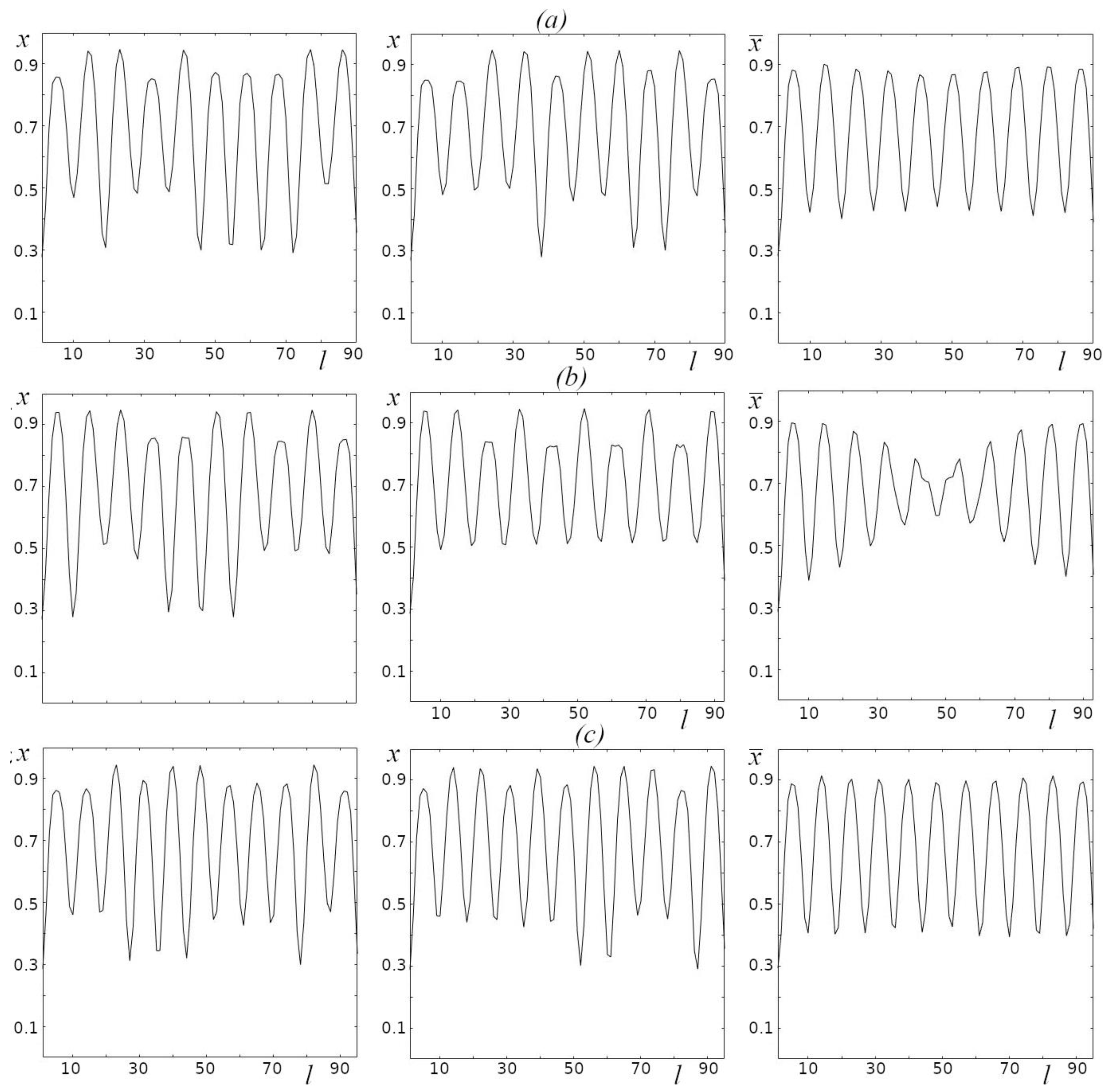

In the case when the signal is periodic in time , if not, is close to 1. Choosing some boundary value of the relative entropy, say 0.3, we can estimate the probability that at a given point in the parametric spacer, Dthe relative temporal entropy will be less than the boundary value. To do this, we need to count the number of cases where and divide it by the total number of trialsM.

Relative spectral entropy of solutions (spatial entropy)

In addition to periodicity of solutions in time (iterations), there may be periodicity of solutions in “space” (periodic variation

along sites). In complete analogy to the previous statement, we can estimate the spatial entropy for each solution

in the solution matrix

B. The only difference from time entropy would be the doubling of the length of the original function, so that entropy estimates can be made for both short chains and chains with an odd number of links. This can be achieved by docking two identical solutions together, thereby doubling the length of the analyzed signal. In this case, the power spectrum will be defined at

Lpoints. The formula of spatial entropy is as follows:

as for the temporal entropy, we can estimate the probability

that at a given point of the parametric space

r, Dthe relative spatial entropy will be less than the boundary value. It is equal to the number of cases when

, divided by the number of trials

M.

Mean value of generalized solutions, entropy of the mean solution

An important quantity characterizing the structure formation at the parametric point

r, Dcould be the average over all columns of the matrix of generalized solutions value

B, which we denote as

. Direct computation will show us a close to straight line, since all generalized solutions have an arbitrary random phase. However, a feature of (1) with a circulant matrix

Ais the invariance of the generalized solutions with respect to an arbitrary cyclic shift of the elements of the generalized solutions

. We will take advantage of this and before averaging the generalized solutions we perform a cyclic shift so that the minimum value is in the first element of the vector:

. In this case, all generalized solutions appear to be in the same phase, and their average will be an informative quantity. An additional numerical characteristic of orderliness can be the spatial entropy:

Funding: The reported study was funded by the Institute of Theoretical and Experimental Biophysics of the Russian Academy of Sciences (state assignment № 075-01025-23-01) and by the Institute of Mathematical Problems of Biology, Keldysh Institute of Applied Mathematics of the Russian Academy of Sciences (state assignment 0017-2019-0009).