Submitted: 23 August 2023

Posted: 24 August 2023


Abstract

Keywords:

1. Introduction

- (1)

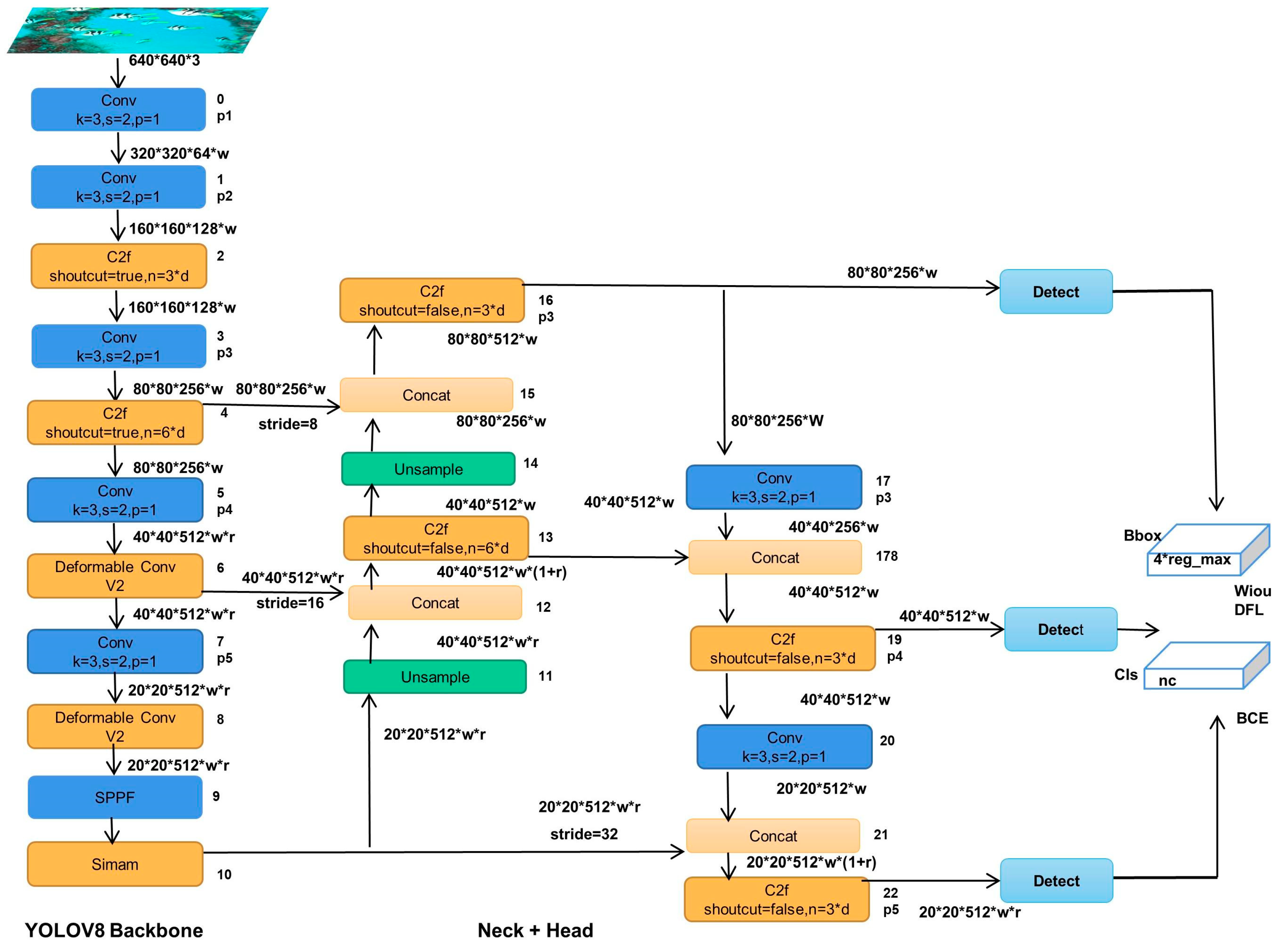

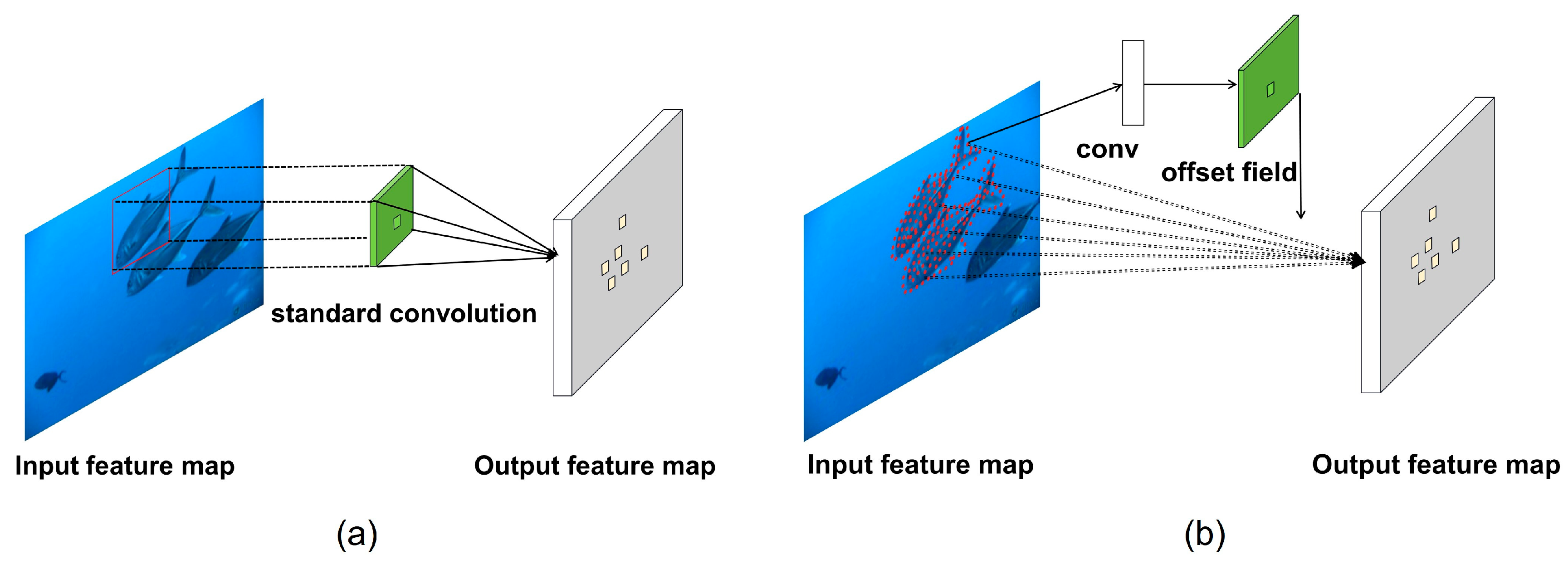

- we replace some C2f modules in the backbone feature extraction network of YOLOv8n with deformable convolutional v2 modules, allowing for better adaptation to object deformations and enabling more targeted convolutional operations.

- (2)

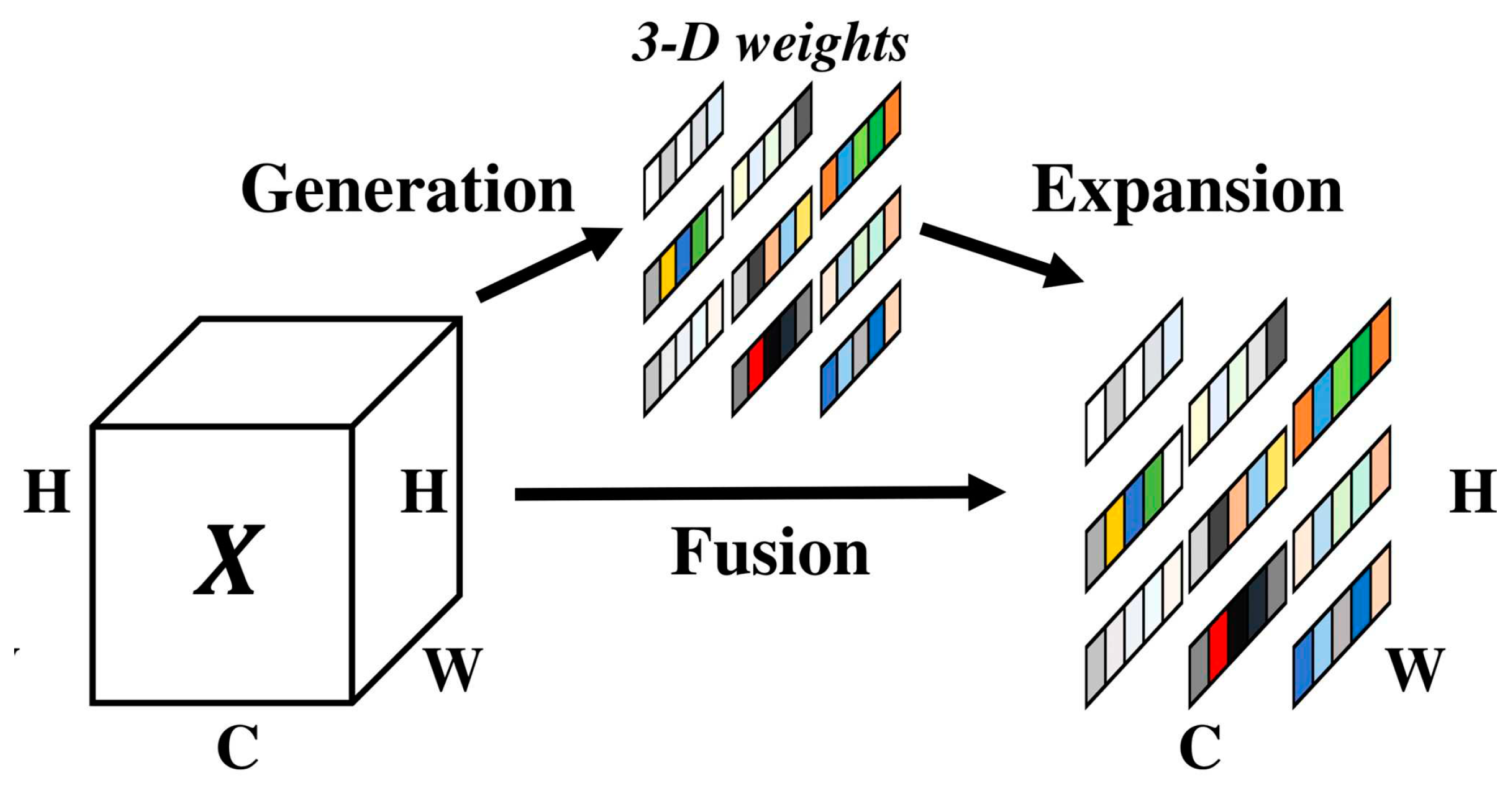

- we introduce an attention mechanism (SimAm) to the network structure, which does not introduce external parameters but assigns a 3D attention weight to the feature map.

- (3)

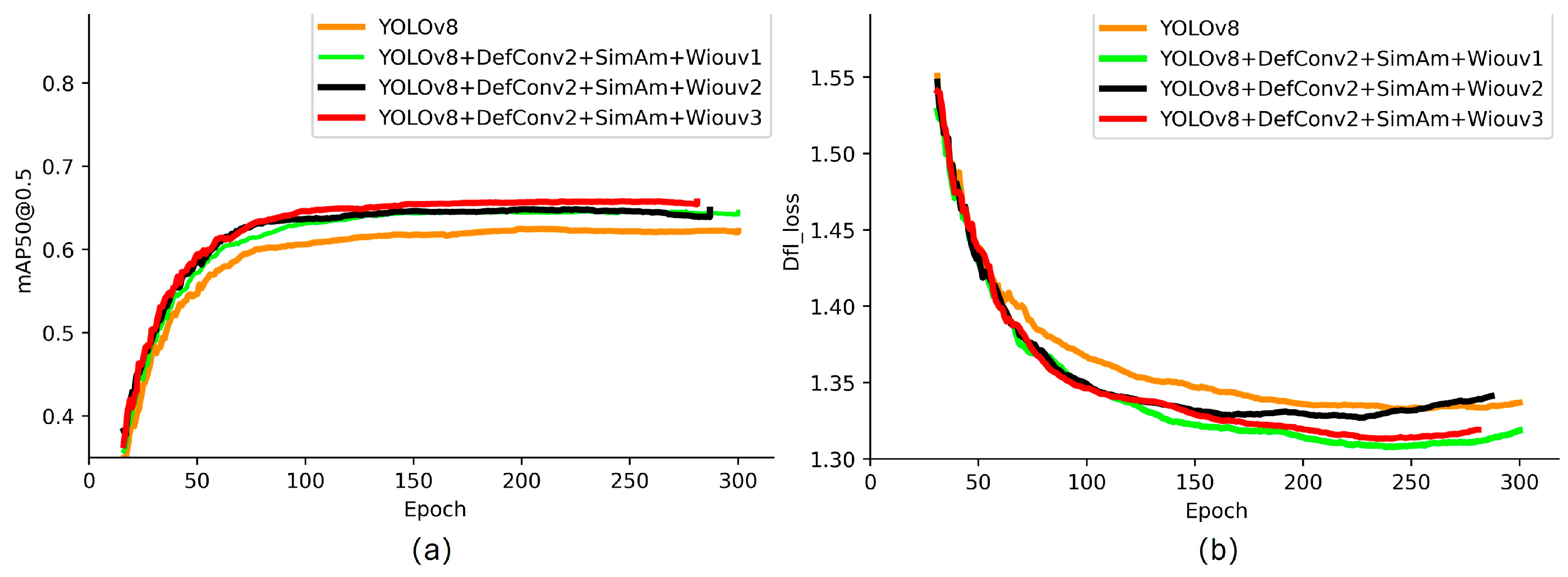

- we address an issue with the traditional loss function, where inconsistencies between the direction of the prediction box and the real box can cause fluctuations in the position of the prediction box during training, leading to slower convergence and decreased prediction accuracy. To overcome this, we propose the use of the Wiouv3 loss function to further optimize the network structure.

2. Related Work

2.1. Object Detection Algorithm

2.2. Fusion of deformable convolutional feature extraction network
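
As a rough illustration of the deformable convolution v2 idea named in contribution (1), the sketch below evaluates one output location of a modulated deformable 3×3 convolution on a single-channel feature map: each kernel tap samples the input at its regular grid position plus a learned fractional offset, and the sampled value is scaled by a learned modulation scalar. The function names (`bilinear_sample`, `modulated_deform_conv_at`) are hypothetical, the offsets and masks are passed in by hand rather than predicted by a separate convolution branch, and this is not the authors' implementation.

```python
import math

def bilinear_sample(feature, y, x):
    """Sample a 2D feature map at a fractional (y, x); positions outside count as 0."""
    h, w = len(feature), len(feature[0])
    y0, x0 = math.floor(y), math.floor(x)
    value = 0.0
    for yi in (y0, y0 + 1):
        for xi in (x0, x0 + 1):
            if 0 <= yi < h and 0 <= xi < w:
                # Bilinear weight shrinks linearly with distance to the neighbour.
                weight = (1 - abs(y - yi)) * (1 - abs(x - xi))
                value += weight * feature[yi][xi]
    return value

# Regular 3x3 sampling grid p_k around the output location.
GRID_3X3 = [(dy, dx) for dy in (-1, 0, 1) for dx in (-1, 0, 1)]

def modulated_deform_conv_at(feature, weights, offsets, masks, cy, cx):
    """Compute sum_k w_k * x(p + p_k + dp_k) * dm_k at output location p = (cy, cx).

    weights: 9 kernel weights w_k
    offsets: 9 (dy, dx) fractional offsets dp_k (assumed given, normally learned)
    masks:   9 modulation scalars dm_k in [0, 1] (assumed given, normally learned)
    """
    total = 0.0
    for (dy, dx), w_k, (off_y, off_x), m_k in zip(GRID_3X3, weights, offsets, masks):
        sampled = bilinear_sample(feature, cy + dy + off_y, cx + dx + off_x)
        total += w_k * sampled * m_k
    return total

feature = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0], [7.0, 8.0, 9.0]]
ones = [1.0] * 9
zero_offsets = [(0.0, 0.0)] * 9
# With zero offsets and unit masks the result equals an ordinary 3x3 convolution.
plain = modulated_deform_conv_at(feature, ones, zero_offsets, ones, 1, 1)  # 45.0
```

With all offsets at zero and all masks at one, the operation reduces to a standard convolution, which is a convenient sanity check before offsets are learned.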

2.3. Simple and efficient parameter-free attention mechanism
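
To make the "parameter-free 3D attention weight" of contribution (2) concrete, here is a minimal pure-Python sketch of the SimAM weighting as it is commonly implemented: within each channel, every element receives a weight that grows with its squared deviation from the channel mean, normalised by the channel variance. The function names and the default `e_lambda=1e-4` are assumptions for illustration; the feature map is a plain nested list (C × H × W) rather than a tensor, and each channel is assumed to hold at least two elements.

```python
import math

def simam_weights(channel, e_lambda=1e-4):
    """Return one attention weight per element of a single H x W channel."""
    values = [v for row in channel for v in row]
    n = len(values) - 1  # number of "other" neurons in the channel
    mean = sum(values) / len(values)
    sq_dev = [[(v - mean) ** 2 for v in row] for row in channel]
    variance = sum(d for row in sq_dev for d in row) / n
    weights = []
    for row in sq_dev:
        # Inverse energy term followed by a sigmoid, with no learnable parameters.
        energies = [d / (4.0 * (variance + e_lambda)) + 0.5 for d in row]
        weights.append([1.0 / (1.0 + math.exp(-e)) for e in energies])
    return weights

def simam(feature_map, e_lambda=1e-4):
    """Apply the weights channel by channel to a C x H x W nested list."""
    out = []
    for channel in feature_map:
        weights = simam_weights(channel, e_lambda)
        out.append([[v * w for v, w in zip(row, w_row)]
                    for row, w_row in zip(channel, weights)])
    return out

# The element that stands out from its channel receives the largest weight.
w = simam_weights([[0.0, 0.0], [0.0, 4.0]])
```

Because the weight is computed per element across all channels and spatial positions, the result has the same C × H × W shape as the input, which is what "3D attention weight" refers to.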

2.4. Loss function with dynamic focusing mechanism

2.4.1. WIoU v1

2.4.2. WIoU v2

2.4.3. WIoU v3
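
The relationship between WIoU v1 and WIoU v3 can be sketched for a single pair of boxes as follows. This assumes the usual Wise-IoU formulation: v1 scales the IoU loss by a distance-based factor computed from the box centres and the smallest enclosing box, and v3 additionally scales v1 by a non-monotonic focusing coefficient driven by the outlier degree `beta` (the box's IoU loss divided by a running mean of the IoU loss). The function names, the `(x1, y1, x2, y2)` box format, and the defaults `alpha=1.9`, `delta=3.0` are assumptions for illustration; the running mean is supplied by the caller, boxes are assumed to have positive area, and the gradient detaching used in training is omitted because no autograd is involved here.

```python
import math

def iou(box_a, box_b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

def wiou_v1(pred, target):
    """Return (WIoU v1 loss, plain IoU loss) for one predicted/target box pair."""
    l_iou = 1.0 - iou(pred, target)
    cx_p, cy_p = (pred[0] + pred[2]) / 2, (pred[1] + pred[3]) / 2
    cx_t, cy_t = (target[0] + target[2]) / 2, (target[1] + target[3]) / 2
    # Width and height of the smallest box enclosing both boxes.
    w_g = max(pred[2], target[2]) - min(pred[0], target[0])
    h_g = max(pred[3], target[3]) - min(pred[1], target[1])
    r_wiou = math.exp(((cx_p - cx_t) ** 2 + (cy_p - cy_t) ** 2) / (w_g ** 2 + h_g ** 2))
    return r_wiou * l_iou, l_iou

def wiou_v3(pred, target, mean_l_iou, alpha=1.9, delta=3.0):
    """Scale WIoU v1 by the non-monotonic focusing coefficient r = beta / (delta * alpha**(beta - delta))."""
    loss_v1, l_iou = wiou_v1(pred, target)
    beta = l_iou / mean_l_iou  # outlier degree relative to the running mean
    r = beta / (delta * alpha ** (beta - delta))
    return r * loss_v1

pred, target = (0.0, 0.0, 2.0, 2.0), (1.0, 1.0, 3.0, 3.0)
loss_v1, l_iou = wiou_v1(pred, target)
loss_v3 = wiou_v3(pred, target, mean_l_iou=l_iou)  # beta = 1 for this box
```

Under these assumptions, a box whose outlier degree equals `delta` gets a coefficient of exactly 1, so its v3 loss equals its v1 loss; boxes of very low or very high quality are weighted less strongly than boxes near the peak of the coefficient.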

3. Experiments

3.1. Underwater target detection dataset

3.2. Experimental configuration and environment

3.3. Model evaluation metrics
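
The result tables report recall, mAP@0.5, and mAP@0.5:0.95. As a hedged illustration of how such numbers are typically obtained, the sketch below computes average precision (AP) for one class from detections that have already been matched against ground truth at a fixed IoU threshold. It uses all-point interpolation in the Pascal VOC style; the evaluation toolkit actually used for the reported results is not shown in this section and may interpolate differently. The function name and input format are assumptions. mAP@0.5 would then be the mean of AP over classes at an IoU threshold of 0.5, and mAP@0.5:0.95 the further mean over thresholds from 0.5 to 0.95 in steps of 0.05.

```python
def average_precision(detections, num_ground_truth):
    """Area under the interpolated precision-recall curve for one class.

    detections: list of (confidence, is_true_positive) pairs, where the
                true-positive flag was decided beforehand at a fixed IoU threshold
    num_ground_truth: number of ground-truth boxes of this class
    """
    if num_ground_truth == 0:
        return 0.0
    ranked = sorted(detections, key=lambda d: d[0], reverse=True)
    tp = fp = 0
    recalls, precisions = [], []
    for _, is_tp in ranked:
        if is_tp:
            tp += 1
        else:
            fp += 1
        recalls.append(tp / num_ground_truth)
        precisions.append(tp / (tp + fp))
    # Pad the curve, then make precision monotonically non-increasing from the right.
    mrec = [0.0] + recalls + [1.0]
    mpre = [0.0] + precisions + [0.0]
    for i in range(len(mpre) - 2, -1, -1):
        mpre[i] = max(mpre[i], mpre[i + 1])
    # Sum the rectangles wherever recall increases.
    return sum((mrec[i] - mrec[i - 1]) * mpre[i] for i in range(1, len(mrec)))

# Two ground-truth boxes; the ranked detections are TP, FP, TP.
ap = average_precision([(0.9, True), (0.8, False), (0.7, True)], num_ground_truth=2)
# ap is approximately 0.833
```

The final recall value in the loop (true positives divided by ground-truth boxes) corresponds to the Recall column when evaluated at the chosen confidence cut-off.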

4. Analysis and discussion of experimental results

4.1. Comparison of experimental results of different models

4.2. Comparison of ablation experiments

4.3. Pascal VOC dataset experimental results

5. Conclusion

Author Contributions

Data Availability Statement

Conflicts of Interest


| Environment | Version or Model Number |
|---|---|
| Operating System | Ubuntu 18.04 |
| CUDA Version | 11.3 |
| CPU | Intel(R) Xeon(R) CPU E5-2620 v4 |
| GPU | NVIDIA GeForce 1080 Ti × 4 |
| RAM | 126 GB |
| Python version | Python 3.8 |
| Deep learning framework | PyTorch 1.12.0 |

| Model | Backbone | FLOPs/G | Params/M | mAP@0.5 | mAP@0.5:0.95 |
|---|---|---|---|---|---|
| DAMO-YOLO | CSP-Darknet | 18.1 | 8.5 | 72.5 | 37.2 |
| YOLOX | Darknet53 | 26.8 | 9.0 | 81.45 | 42.7 |
| YOLOv7 | E-ELAN | 105.2 | 37.2 | 83.5 | 46.3 |
| YOLOv8n | Darknet53 | 3.0 | 8.2 | 88.6 | 51.8 |
| YOLOv8n (Ours) | Darknet53 (Ours) | 3.13 | 7.7 | 91.8 | 55.9 |

| Model | FLOPs/G | Params/M | Average detection time/ms | Recall | mAP@0.5 | mAP@0.5:0.95 |
|---|---|---|---|---|---|---|
| YOLOv8n | 3.0 | 8.2 | 5 | 84.8 | 88.6 | 51.8 |
| YOLOv8n+DefConv2 | 3.13 | 7.7 | 7.4 | 86.1 | 91.0 | 54 |
| YOLOv8n+SimAM | 3.0 | 8.2 | 10.1 | 87.5 | 90.2 | 55.2 |
| YOLOv8n+WIoUv3 | 3.0 | 8.2 | 5.4 | 85.9 | 91.6 | 53.8 |
| YOLOv8n+DefConv2+SimAM | 3.13 | 7.7 | 10.6 | 80.4 | 91.6 | 53.5 |
| YOLOv8n+DefConv2+WIoUv3 | 3.13 | 7.7 | 8.1 | 85.8 | 91.5 | 54.3 |
| YOLOv8n+SimAM+WIoUv3 | 3.0 | 8.2 | 5 | 81.4 | 88.5 | 54.8 |
| YOLOv8n+DefConv2+SimAM+WIoUv3 | 3.13 | 7.7 | 8.7 | 85.1 | 91.8 | 55.9 |

| Base model | DefConv2 | SimAM | WIoUv1 | WIoUv2 | WIoUv3 | Average detection time/ms | mAP@0.5 | mAP@0.5:0.95 |
|---|---|---|---|---|---|---|---|---|
| YOLOv8n | √ | √ | √ | | | 8.73 | 90.94 | 55.8 |
| YOLOv8n | √ | √ | | √ | | 10.6 | 91.01 | 55.3 |
| YOLOv8n | √ | √ | | | √ | 8.7 | 91.8 | 55.9 |

| Dataset | Model | FLOPs/G | Params/M | Recall | mAP@0.5 | mAP@0.5:0.95 |
|---|---|---|---|---|---|---|
| Pascal VOC 2012 | YOLOv8n | 3.0 | 8.2 | 55.1 | 62.2 | 45.9 |
| Pascal VOC 2012 | YOLOv8n+DefConv2 | 3.13 | 7.8 | 56.3 | 64.7 | 48 |
| Pascal VOC 2012 | YOLOv8n+SimAM | 3.0 | 8.2 | 58.3 | 64.1 | 47.5 |
| Pascal VOC 2012 | YOLOv8n+WIoUv1 | 3.0 | 8.2 | 55.2 | 63.3 | 46.5 |
| Pascal VOC 2012 | YOLOv8n+WIoUv2 | 3.0 | 8.2 | 56.8 | 63.9 | 46.7 |
| Pascal VOC 2012 | YOLOv8n+WIoUv3 | 3.0 | 8.2 | 55.5 | 63.5 | 46.5 |
| Pascal VOC 2012 | YOLOv8n+DefConv2+SimAM | 3.13 | 7.8 | 55.8 | 64.4 | 48.2 |
| Pascal VOC 2012 | YOLOv8n+DefConv2+WIoUv1 | 3.13 | 7.8 | 58.6 | 65.4 | 48.4 |
| Pascal VOC 2012 | YOLOv8n+DefConv2+WIoUv2 | 3.13 | 7.8 | 56.9 | 65.1 | 48.1 |
| Pascal VOC 2012 | YOLOv8n+DefConv2+WIoUv3 | 3.13 | 7.8 | 57.8 | 64.9 | 47.6 |
| Pascal VOC 2012 | YOLOv8n+SimAM+WIoUv1 | 3.0 | 8.2 | 57 | 63.8 | 46.8 |
| Pascal VOC 2012 | YOLOv8n+SimAM+WIoUv2 | 3.0 | 8.2 | 53.6 | 62.8 | 45.6 |
| Pascal VOC 2012 | YOLOv8n+SimAM+WIoUv3 | 3.0 | 8.2 | 54.5 | 64.2 | 46.4 |
| Pascal VOC 2012 | YOLOv8n+DefConv2+SimAM+WIoUv1 | 3.13 | 7.8 | 56.5 | 64.5 | 47.7 |
| Pascal VOC 2012 | YOLOv8n+DefConv2+SimAM+WIoUv2 | 3.13 | 7.8 | 59.8 | 64.7 | 47.3 |
| Pascal VOC 2012 | YOLOv8n+DefConv2+SimAM+WIoUv3 | 3.13 | 7.8 | 59.5 | 65.7 | 48.3 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).