Submitted: 05 January 2023

Posted: 06 January 2023


Abstract

Keywords:

1. Introduction

2. Background & Related Work

3. Experiment Setting

3.1. Optimizer

- $t$: time step

- $w_t$: weight/parameter at the given time step

- Learning rate

- $\nabla_w L$: gradient of the loss function $L$ to be minimized, with respect to $w$
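As a minimal sketch of how these quantities fit together, plain gradient descent moves the weight against the gradient, scaled by the learning rate. The snippet below applies that update to a hypothetical one-dimensional quadratic loss, `L(w) = 0.5 * a * w**2`, chosen only for illustration; it is not one of the models used in this study, and the names `gradient_descent`, `a`, `w0`, and `lr` are assumptions introduced here.

```python
def gradient_descent(lr, a=1.0, w0=1.0, steps=50):
    """Run plain gradient descent on the toy loss L(w) = 0.5 * a * w**2."""
    w = w0
    for _ in range(steps):
        grad = a * w          # dL/dw for the toy quadratic loss
        w = w - lr * grad     # w_{t+1} = w_t - lr * grad
    return w


small = gradient_descent(lr=0.1)   # shrinks toward the minimum at w = 0
large = gradient_descent(lr=2.5)   # overshoots on every step and grows
print(abs(small) < 1e-2, abs(large) > 1.0)
```

For this toy loss each step multiplies `w` by `(1 - lr * a)`, so the iteration converges only when `0 < lr < 2 / a`. This is the simplest setting in which a learning rate that is too high makes training diverge.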

3.1.1. SGD

3.1.2. Adagrad

3.1.3. RMSProp

3.1.4. Adadelta

- The continual decay of learning rates throughout training

- The need for a manually selected global learning rate
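These two points are the drawbacks of Adagrad that Adadelta was designed to address (Zeiler, 2012). The following is a minimal sketch of the Adadelta update as described in that paper, written for a single scalar parameter and a toy quadratic loss. The function name `adadelta_minimize`, the defaults `rho=0.95` and `eps=1e-6`, and the toy loss are assumptions made for illustration, not the configuration used in this study.

```python
import math


def adadelta_minimize(grad_fn, w0, rho=0.95, eps=1e-6, steps=500):
    """Minimize a scalar function with the Adadelta rule (Zeiler, 2012)."""
    w = w0
    avg_sq_grad = 0.0    # decaying average of squared gradients, E[g^2]
    avg_sq_delta = 0.0   # decaying average of squared updates, E[dw^2]
    for _ in range(steps):
        g = grad_fn(w)
        # A decaying average (not a running sum) keeps the step from shrinking forever.
        avg_sq_grad = rho * avg_sq_grad + (1.0 - rho) * g * g
        # The step is a ratio of two RMS terms; no global learning rate appears.
        delta = -math.sqrt(avg_sq_delta + eps) / math.sqrt(avg_sq_grad + eps) * g
        avg_sq_delta = rho * avg_sq_delta + (1.0 - rho) * delta * delta
        w = w + delta
    return w


# Toy loss L(w) = 0.5 * w**2, so the gradient is simply w.
w_final = adadelta_minimize(lambda w: w, w0=1.0)
print(w_final)
```

Library implementations may still expose a learning-rate argument that scales `delta`; this sketch leaves that factor out, as the original formulation does.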

3.1.5. Adam

3.1.6. Adamax

3.1.7. Nadam

3.2. Datasets

3.2.1. MNIST

3.2.2. CIFAR10

4. Results and Discussion

- Algorithms that are heavily affected by the learning rate: changing it produces a significant change in accuracy and loss on both the training and the test data.

- Algorithms for which the learning rate has little to no effect on the output when the model is trained for a large number of epochs.

- Algorithms that are hard to place in either of the two groups above, because their behavior is not consistent across datasets, across the full range of epochs, or across different values of the learning rate (LR).

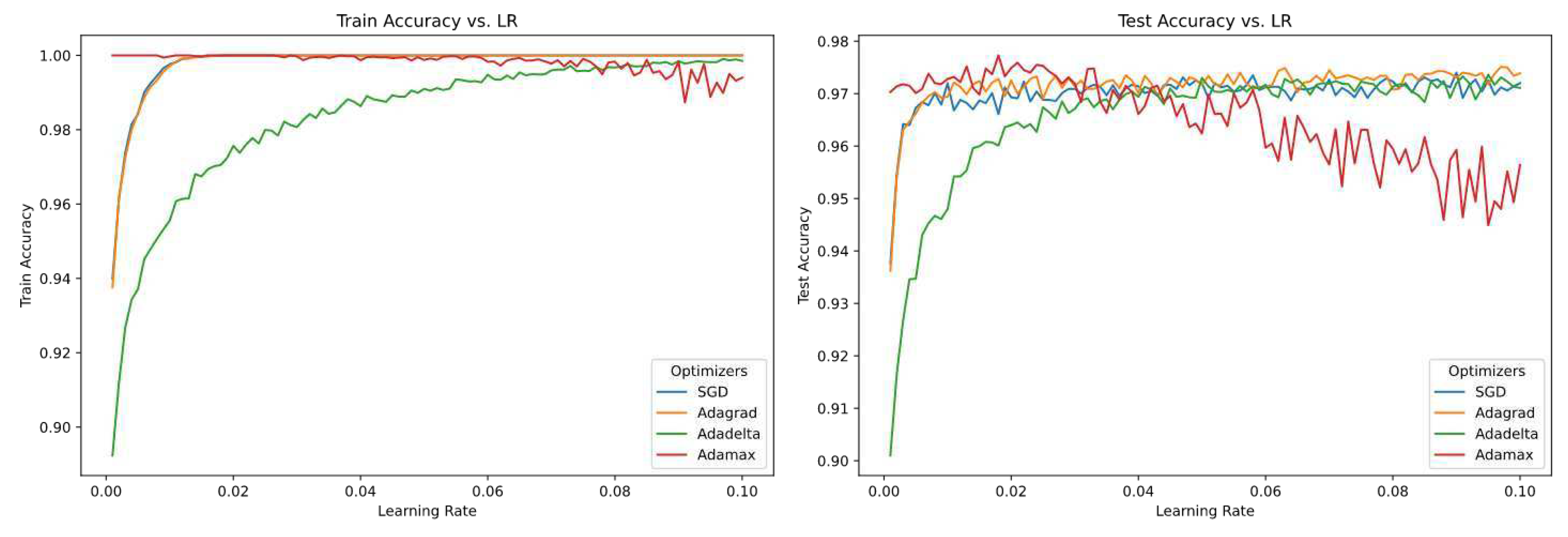

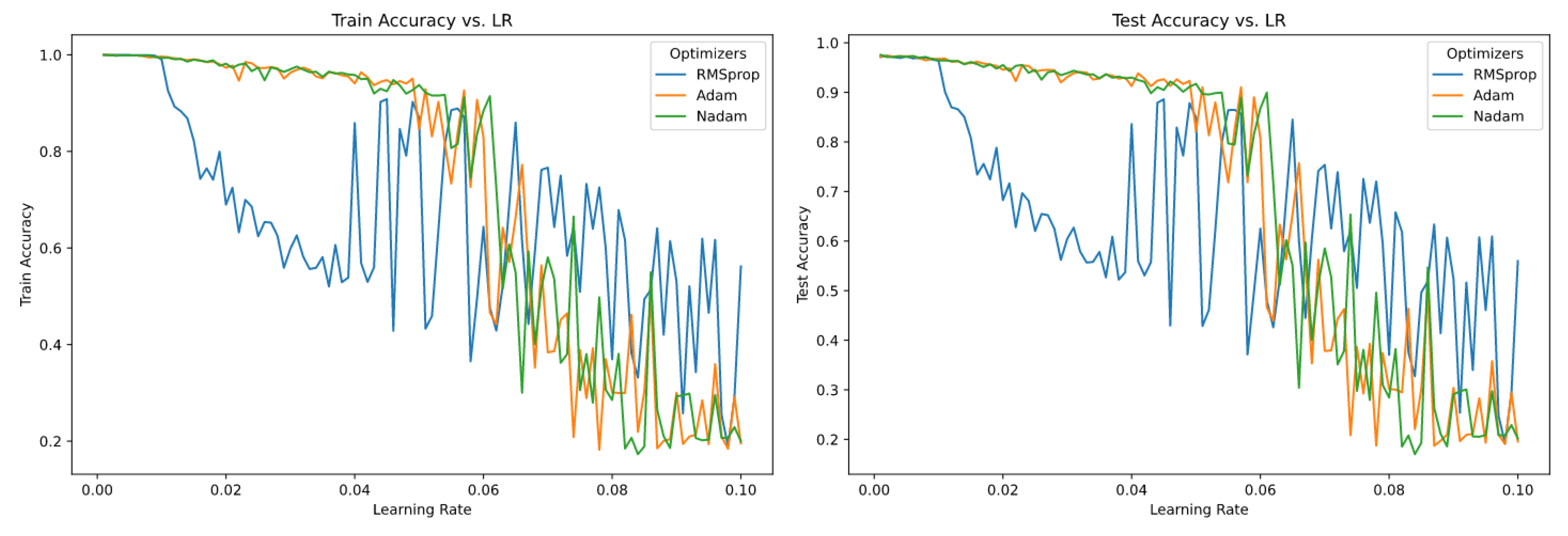

4.1. MNIST

4.1.1. Visualization

4.1.2. Optimizers

- SGD: Varying the learning rate across different numbers of epochs has no effect on training or validation accuracy.

- RMSProp: With RMSProp, the training and validation accuracy for the different learning rates converge after a few iterations. For higher learning rates, however, the accuracy tends to decrease over time and diverges. This pattern is consistent across different epoch counts, so RMSProp appears to be highly LR-sensitive on the MNIST dataset.

- Adam: Adam behaves similarly to RMSProp and is sensitive to the learning rate only at higher LR values. For those higher values, the accuracy does not converge to the same point. For the remaining LR values, it converges to a common point with higher accuracy on both training and validation data.

- Adagrad: Adagrad is completely insensitive to the learning rate over a range of epochs. On both training and validation data it performs similarly for every LR and converges to a common point with higher accuracy.

- Adadelta: Adadelta is among the optimizers that are not sensitive to the learning rate, but the accuracies for the different LRs do not all converge to the same point. The deviation is considerably larger than for the other LR-insensitive optimizers, and higher LR values give higher accuracy than lower ones. Because of this property, I would place this optimizer in a separate group, in which changing the LR has a moderate effect on training and validation accuracy and one end of the LR range (higher or lower) consistently outperforms the other.

- Adamax: For this optimizer, the learning rate has little effect on accuracy. It nevertheless follows a pattern similar to Adadelta, in that the curves for higher LR values diverge slightly from those for the other LR values across all epochs.

- Nadam: Nadam gives very good results for lower LR values, but for higher LR values its performance decreases and diverges. Nadam is therefore sensitive to higher learning rates.

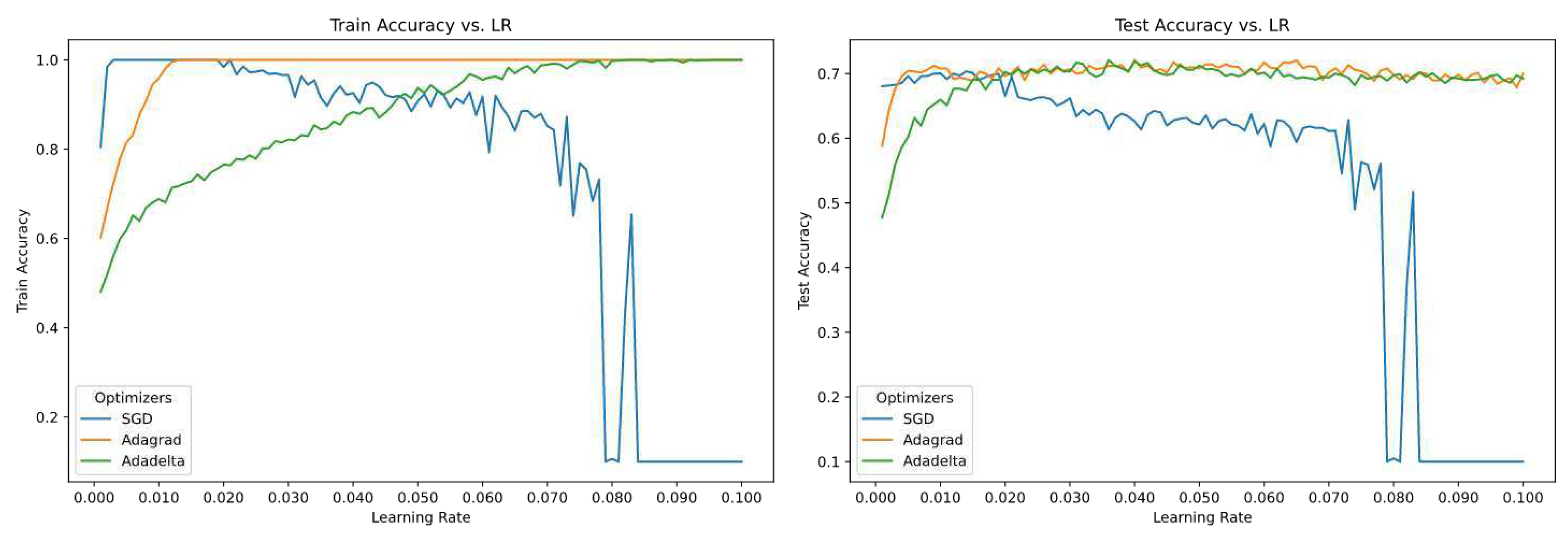

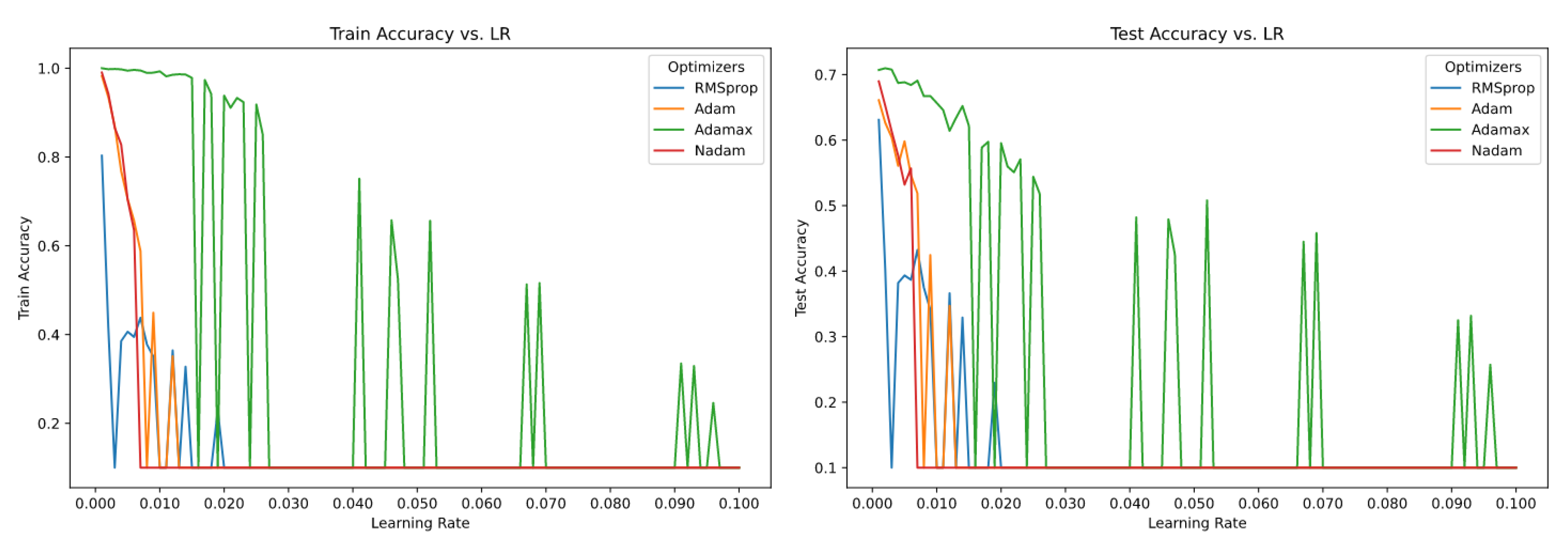

4.2. CIFAR-10

4.2.1. Visualization

4.2.2. Optimizers

- SGD: On the CIFAR10 dataset, varying the learning rate has a moderate effect on the accuracy of SGD. Lower learning rates converge to a similar accuracy at higher epochs, followed by a sudden drop in accuracy at around epoch 130.

- RMSProp: RMSProp is highly sensitive to the learning rate across all epochs. Models with higher learning rates have the lowest accuracy, while lower learning rates yield better performance.

- Adam: Adam performs similarly to RMSProp. Models with lower learning rates achieve better accuracy than models with higher learning rates. Here, the models with higher learning rates do not converge to a similar accuracy even at higher epoch counts.

- Adagrad: This optimizer is highly sensitive to the learning rate. Surprisingly, models with higher learning rates achieve better accuracy than models with lower learning rates. In terms of convergence, the accuracies for the different learning rates never converged to a similar value over the given epochs.

- Adadelta: Models with higher learning rates perform better than models with lower learning rates. The optimizer is highly sensitive to the learning rate across multiple epoch values, and the gap between the curves for different learning rates remains constant.

- Adamax: The optimizer performs best when the learning rate is small. The higher the learning rate, the lower the accuracy, and the model also tends to diverge. Models with lower learning rates show no sensitivity over multiple epochs.

- Nadam: This optimizer performs similarly to Adamax.

5. Conclusion

6. Future Work

Supplementary Materials

Acknowledgments

Conflicts of Interest

References

- Bengio, Y. Practical recommendations for gradient-based training of deep architectures. arXiv 2012, arXiv:1206.5533.

- Bishop, C.M. Neural Networks for Pattern Recognition. p. 251.

- Goodfellow, I. Deep Learning. The MIT Press.

- Duchi, J.; Hazan, E.; Singer, Y. Adaptive Subgradient Methods for Online Learning and Stochastic Optimization. p. 39.

- Zeiler, M.D. ADADELTA: An Adaptive Learning Rate Method. arXiv 2012, arXiv:1212.5701.

- Kingma, D.P.; Ba, J. Adam: A Method for Stochastic Optimization. arXiv 2017, arXiv:1412.6980.

- Dozat, T. Incorporating Nesterov Momentum into Adam. p. 6.

Model: "sequential"

| Layer (type) | Output Shape | Param # |
|---|---|---|
| flatten_3 (Flatten) | (None, 784) | 0 |
| dense_9 (Dense) | (None, 64) | 50240 |
| dense_10 (Dense) | (None, 64) | 4160 |
| dense_11 (Dense) | (None, 10) | 650 |

Total params: 55,050

Trainable params: 55,050

Non-trainable params: 0
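The parameter counts in this summary can be checked by hand: a fully connected layer has one weight per input-unit pair plus one bias per unit. The short sketch below reproduces the arithmetic. The helper name `dense_params` is introduced here for illustration, and the layer sizes are read directly from the summary above.

```python
def dense_params(n_inputs, n_units):
    """Weights (n_inputs * n_units) plus one bias per unit."""
    return (n_inputs + 1) * n_units


# Flattened input of 784 values, followed by the three Dense layers.
layer_sizes = [784, 64, 64, 10]
per_layer = [dense_params(i, o) for i, o in zip(layer_sizes, layer_sizes[1:])]
print(per_layer, sum(per_layer))  # [50240, 4160, 650] 55050
```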

Model: "sequential"

| Layer (type) | Output Shape | Param # |
|---|---|---|
| conv2d_43 (Conv2D) | (None, 30, 30, 32) | 896 |
| max_pooling2d_26 (MaxPooling) | (None, 15, 15, 32) | 0 |
| conv2d_44 (Conv2D) | (None, 13, 13, 64) | 18496 |
| max_pooling2d_27 (MaxPooling) | (None, 6, 6, 64) | 0 |
| conv2d_45 (Conv2D) | (None, 4, 4, 64) | 36928 |
| flatten_13 (Flatten) | (None, 1024) | 0 |
| dense_26 (Dense) | (None, 64) | 65600 |
| dense_27 (Dense) | (None, 10) | 650 |

Total params: 122,570

Trainable params: 122,570

Non-trainable params: 0
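The output shapes and parameter counts of this convolutional model can be reproduced with a few lines of arithmetic. The sketch below assumes a 32x32 RGB input, 3x3 kernels with stride 1 and no padding, and 2x2 max pooling. None of these settings is stated in the summary; they are inferred here because they are consistent with every shape and count in it. The helper names are likewise introduced for illustration.

```python
def conv2d_params(kernel, c_in, c_out):
    """kernel*kernel*c_in weights per filter, plus one bias per filter."""
    return (kernel * kernel * c_in + 1) * c_out


def dense_params(n_inputs, n_units):
    """Weights (n_inputs * n_units) plus one bias per unit."""
    return (n_inputs + 1) * n_units


size, channels = 32, 3            # assumed input: 32x32 RGB image
shapes, params = [], []
for c_out, pooled in [(32, True), (64, True), (64, False)]:
    params.append(conv2d_params(3, channels, c_out))
    size, channels = size - 3 + 1, c_out     # 3x3 convolution, no padding, stride 1
    shapes.append((size, size, channels))
    if pooled:
        size //= 2                            # 2x2 max pooling
        shapes.append((size, size, channels))

flat = size * size * channels                 # input width of the first Dense layer
params += [dense_params(flat, 64), dense_params(64, 10)]
print(shapes, flat, params, sum(params))
```

Under these assumptions the computed shapes, the flattened size of 1024, and the total of 122,570 parameters all match the summary.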

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).