4. Results

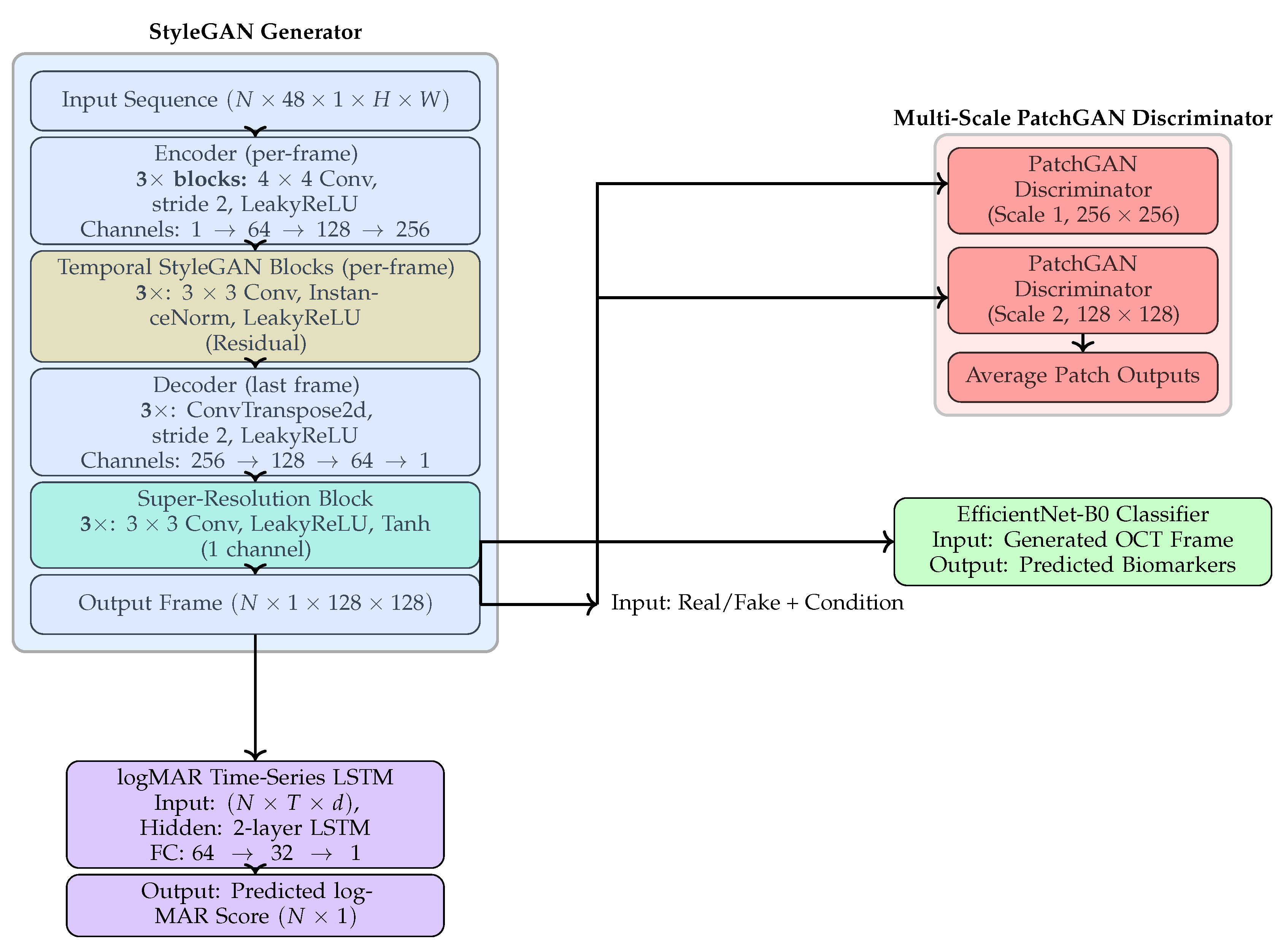

This section comprehensively evaluates the proposed multimodal forecasting framework across anatomical, functional, and clinical dimensions. Experiments were performed on longitudinal OCT sequences with corresponding visual acuity (logMAR) measurements. The objective was to assess the model’s capability to forecast future retinal morphology and functional trends from prior visits.

Results are organized into five parts: (1) qualitative visualization of OCT forecasts; (2) quantitative analysis of logMAR trend prediction using the Winner–Stabilizer–Loser framework; (3) model comparison and ablation analysis of generators, discriminators, and loss functions; (4) multimodal biomarker classification from predicted OCTs; and (5) benchmarking against prior longitudinal forecasting methods.

Overall, the proposed model demonstrates three key properties:

High anatomical fidelity, with predicted OCT frames achieving SSIM up to 0.93 and PSNR exceeding 38 dB,

Clinically consistent functional forecasting, with logMAR errors (MAE = 0.052) well within the accepted clinical tolerance,

Trend-awareness, as the model accurately identifies whether a patient’s visual function is improving, stable, or declining across visits.

These results collectively establish that the proposed multimodal GAN learns both spatial structure and temporal dynamics, producing anatomically realistic and clinically interpretable forecasts suitable for longitudinal disease monitoring.

4.1. Qualitative OCT Forecasting Performance

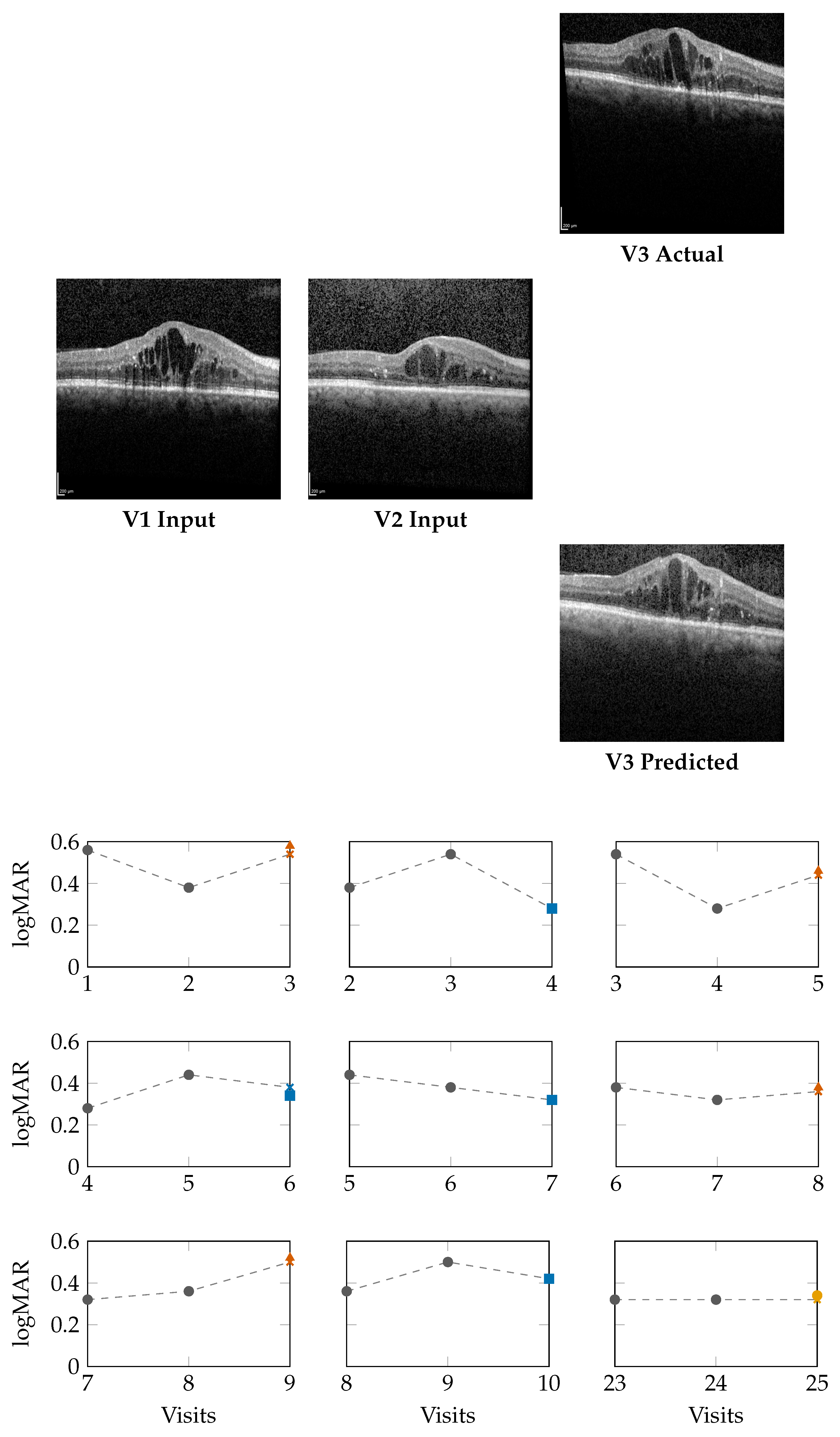

Figure 2 (top) and Figure 3 (top) present qualitative OCT forecasting results for two representative patients (IDs 232 and 234). In both examples, the model receives two consecutive OCT scans (V1 and V2) and predicts the subsequent visit (V3). The predicted frames exhibit high visual fidelity and strong anatomical consistency with the ground truth. Specifically, the forecasts demonstrate:

Preservation of retinal layer continuity, particularly around the foveal pit and outer retinal boundaries,

Accurate modeling of macular thickness and edema progression, critical biomarkers for diabetic macular edema (DME) and age-related macular degeneration (AMD),

Retention of fine microstructural features, facilitated by the super-resolution and StyleGAN-inspired upsampling mechanisms in the generator network.

These results indicate that the proposed model learns spatio-temporal correlations in retinal morphology, capturing both local structural changes and global thickness variations across visits. Subtle pathological signatures, such as retinal bulges, localized depressions, and intra-retinal cystic spaces, are preserved in the predicted frames, which suggests that the model generalizes across disease stages and supports its potential application in longitudinal disease monitoring.

4.2. Forecasting Functional Outcome (logMAR)

The lower panels of

Figure 2 and

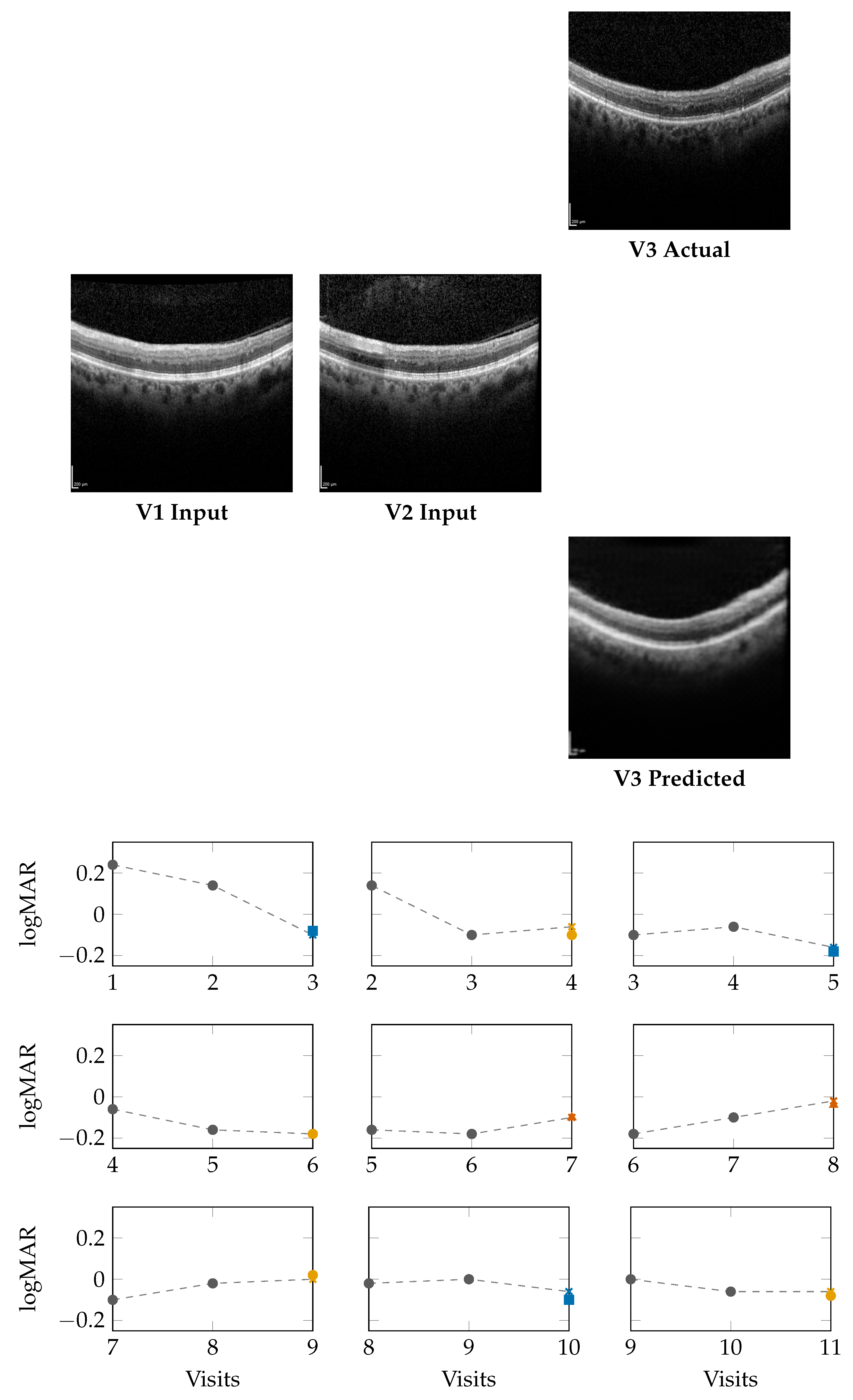

Figure 3 illustrate

sliding-window forecasts of logMAR trajectories for Patients 232 and 234. Each subplot represents a temporal triplet

, where:

gray circles indicate ground-truth logMAR values at and ,

Colored markers show the model’s forecast at using a colorblind-safe palette,

Cross-shaped “×” markers indicate the ground-truth logMAR at , colored with the same class color for direct comparison.
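The sliding-window construction described above can be sketched as follows. This is a minimal illustration, not the authors' data pipeline: the function name `sliding_windows`, the parameter `n_inputs`, and the logMAR values are hypothetical.

```python
from typing import List, Sequence, Tuple


def sliding_windows(
    values: Sequence[float], n_inputs: int = 2
) -> List[Tuple[Tuple[float, ...], float]]:
    """Split a visit-ordered series into (input visits, target visit) windows."""
    if n_inputs < 1:
        raise ValueError("n_inputs must be at least 1")
    windows = []
    # Each window uses n_inputs consecutive visits to forecast the next one.
    for start in range(len(values) - n_inputs):
        inputs = tuple(values[start:start + n_inputs])
        target = values[start + n_inputs]
        windows.append((inputs, target))
    return windows


# Hypothetical logMAR series for five visits (illustrative values only).
series = [0.40, 0.54, 0.28, 0.30, 0.32]
for inputs, target in sliding_windows(series):
    print(inputs, "->", target)
```

With two input visits, a series of N visits yields N - 2 windows, so each visit from the third onward is forecast exactly once.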

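Predicted outcomes are categorized using the deviation $\Delta = \hat{y}_t - y_{t-1}$ between the predicted logMAR $\hat{y}_t$ and the ground-truth logMAR $y_{t-1}$ at the previous visit, given a tolerance threshold $\tau$:

Winner ($\Delta < -\tau$): predicted improvement in visual acuity (lower logMAR),

Stabilizer ($|\Delta| \le \tau$): predicted stable visual acuity,

Loser ($\Delta > \tau$): predicted deterioration (higher logMAR).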
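The three-way rule above can be written as a short function. This is a sketch under stated assumptions: the name `classify_trend`, the parameter name `tau`, the treatment of the boundary case as a Stabilizer, and the threshold value 0.05 used in the example are illustrative choices, not values confirmed by the text.

```python
def classify_trend(predicted: float, previous: float, tau: float) -> str:
    """Label a forecast by its deviation from the previous visit's logMAR."""
    if tau < 0:
        raise ValueError("tau must be non-negative")
    delta = predicted - previous
    if delta < -tau:
        return "Winner"      # lower logMAR: predicted improvement
    if delta > tau:
        return "Loser"       # higher logMAR: predicted deterioration
    return "Stabilizer"      # change stays within the tolerance band


# A drop from 0.54 to a predicted 0.28 is labeled as an improvement.
print(classify_trend(predicted=0.28, previous=0.54, tau=0.05))
```

Because lower logMAR means better acuity, the sign convention is inverted relative to most scores: a negative deviation is the favorable direction.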

Patient 232 (Figure 2): Across nine temporal windows, the model successfully tracks logMAR evolution and the overall disease trend.

In the V1,V2 → V3 window, the model slightly overestimates the true logMAR (0.58 vs. 0.54), but it still correctly assigns the Loser class.

In the V2,V3 → V4 window, the prediction matches the ground truth (0.28), indicating a Winner.

In later visits, e.g., V5,V6 → V7, the prediction (0.32) equals GT (0.32), representing a Stabilizer.

This consistency across sequential visits highlights that the model not only produces accurate logMAR values but also respects the directionality of visual function changes, correctly identifying improvement, stability, or decline phases.

Patient 234 (Figure 3): For this patient, logMAR values fall within the negative range (reflecting higher visual acuity). The model effectively captures improvement and stabilization trends:

In the early V1, V2 → V3 window, the forecast closely matches the ground truth, denoting a Winner.

In V2, V3 → V4, the model output stays within the tolerance threshold of the ground truth, resulting in a Stabilizer.

In later windows (e.g., V8, V9 → V10), the prediction again stays close to the ground truth, falling into the Stabilizer range.

Across visits, predictions remain well within the clinical tolerance threshold, demonstrating robustness even when logMAR reversals or subtle fluctuations occur.

Summary: The results demonstrate that the model does not merely perform pointwise regression but learns the underlying temporal behavior of visual function. The Winner–Stabilizer–Loser classification framework provides an interpretable means to assess prediction reliability and trend consistency:

Winners correspond to predicted improvements, highlighting recovery tendencies,

Stabilizers dominate across visits, showing that the model recognizes periods of disease stability over time,

Losers appear infrequently and usually represent small deviations within the noise margin of measurement error.

By correctly identifying the temporal direction of change, the model demonstrates strong potential for trend-aware visual prognosis, enabling longitudinal interpretation of patient recovery or decline.

4.3. Winner–Stabilizer–Loser Classification Across Thresholds

Table 3 presents the distribution of Winner, Stabilizer, and Loser outcomes for four patients (IDs 201, 203, 232, and 234) across a range of tolerance thresholds $\tau$. Lower $\tau$ values represent strict thresholds (minor deviations are penalized), while higher $\tau$ values allow greater clinical tolerance.

Patient 201: Shows balanced outcomes at a strict threshold but converges to entirely stable predictions as the tolerance threshold increases, with no Losers remaining.

Patient 232: Initially sensitive to small fluctuations (13 Losers at a strict threshold), yet transitions fully to Stabilizers at larger thresholds, demonstrating consistency under realistic tolerances.

Patient 234: Exhibits steady improvement; Losers diminish progressively, and all predictions become Stabilizers at larger thresholds.

Patient 236: Displays early variability but stabilizes completely at larger thresholds, mirroring trends in Patient 232.

This trend-based interpretation highlights that the model adapts well across patients and tolerance levels, consistently maintaining temporal coherence in visual function forecasting.
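The effect of the tolerance threshold on the class distribution can be illustrated with a small sweep. The sketch below is hypothetical throughout: the function name `count_outcomes`, the deviation values, and the three thresholds are invented for illustration and do not reproduce Table 3.

```python
from collections import Counter
from typing import Dict, Iterable


def count_outcomes(deltas: Iterable[float], tau: float) -> Dict[str, int]:
    """Count Winner/Stabilizer/Loser labels for a given tolerance threshold."""
    counts = Counter({"Winner": 0, "Stabilizer": 0, "Loser": 0})
    for delta in deltas:
        if delta < -tau:
            counts["Winner"] += 1
        elif delta > tau:
            counts["Loser"] += 1
        else:
            counts["Stabilizer"] += 1
    return dict(counts)


# Hypothetical deviations (prediction minus previous-visit logMAR).
deltas = [-0.12, -0.03, 0.01, 0.04, 0.09]
for tau in (0.02, 0.05, 0.15):
    print(tau, count_outcomes(deltas, tau))
```

As the threshold grows, deviations that were labeled Winner or Loser fall inside the tolerance band, so the counts necessarily drift toward Stabilizer. Convergence to all-Stabilizer at large thresholds is therefore expected behavior of the rule itself and is most informative at the smaller thresholds.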

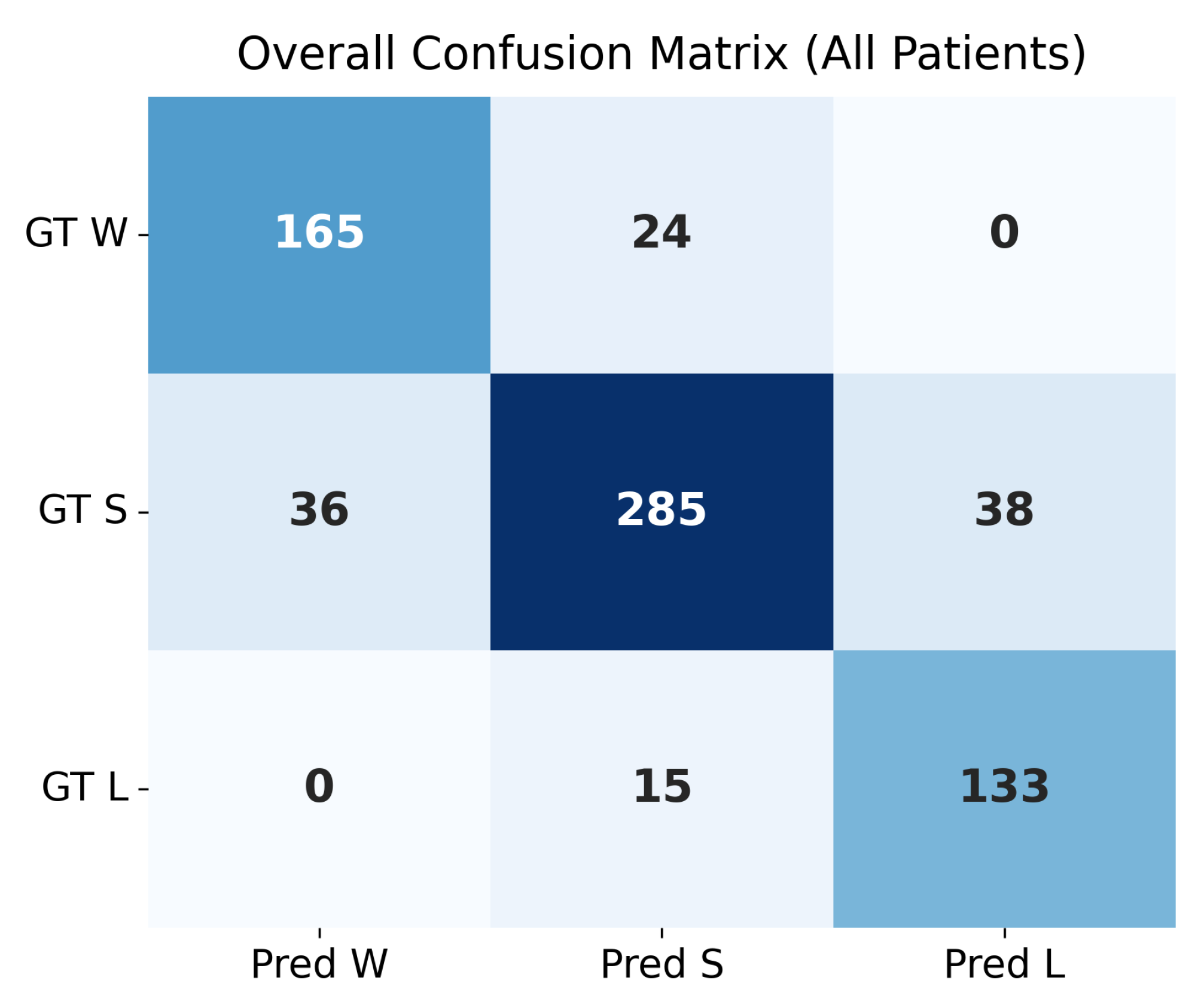

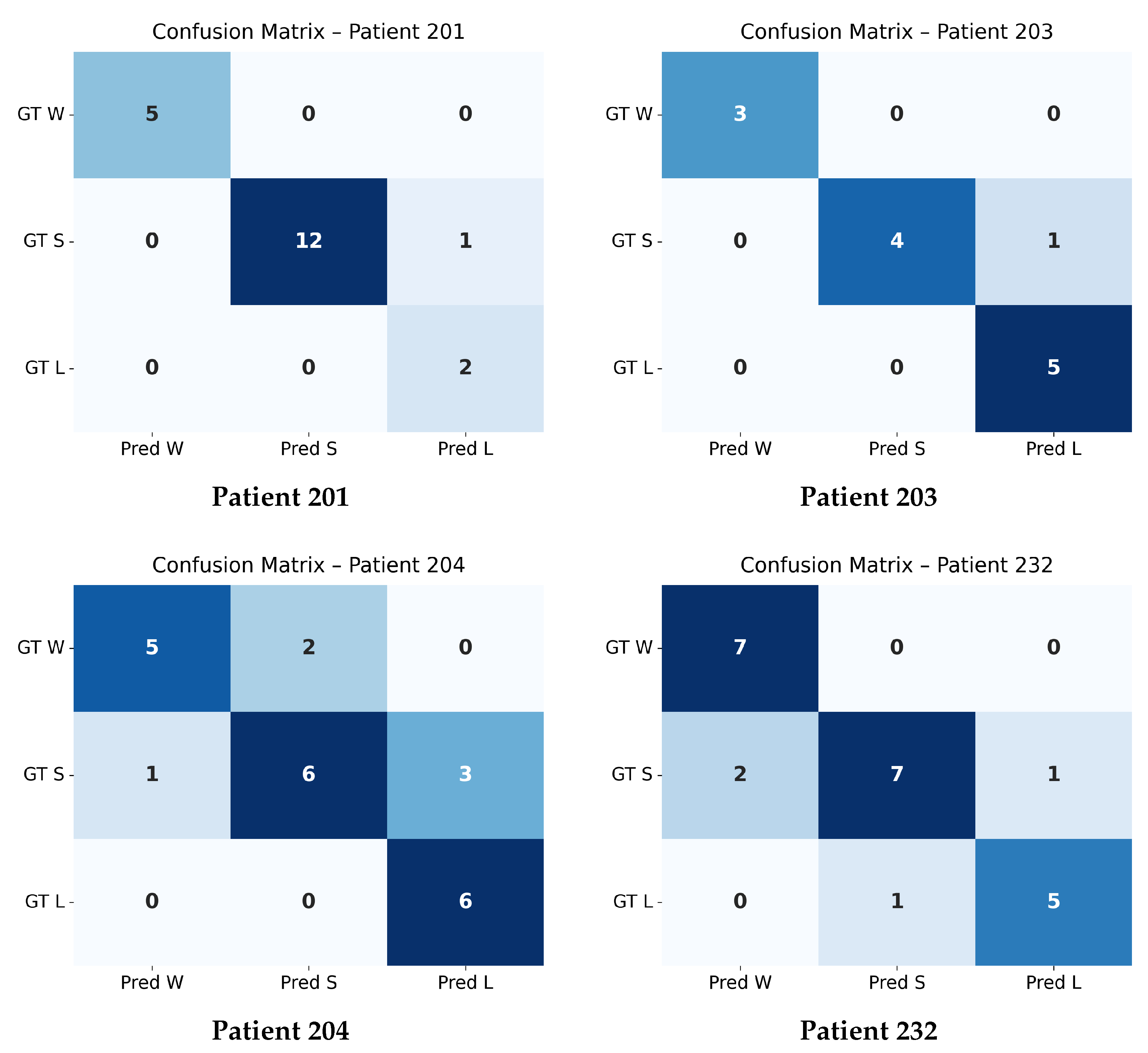

4.4. Confusion Matrix Evaluation at a Fixed Threshold

The confusion matrices shown in Figure 4 and Figure 5 summarize the agreement between the model’s predicted trajectory classes and the ground-truth delta-based labels for each patient. With $\Delta$ denoting the change in logMAR relative to the previous visit and $\tau$ the tolerance threshold, each matrix compares three clinically relevant progression categories:

Winner (W): Improvement beyond the negative threshold ($\Delta < -\tau$),

Stabilizer (S): Minimal change within the threshold range ($|\Delta| \le \tau$),

Loser (L): Worsening beyond the positive threshold ($\Delta > +\tau$).

Correct predictions appear along the diagonal of each matrix, whereas off-diagonal values represent misclassifications. A higher concentration of values along the diagonal therefore indicates stronger predictive accuracy. The overall confusion matrix exhibits a clear diagonal dominance, demonstrating that the model reliably differentiates between improving (W), stable (S), and worsening (L) trajectories across all patients.

Individual patient confusion matrices reveal patient-specific performance patterns. Patients 201, 203, and 232 show strong diagonal structures with minimal misclassification, indicating highly predictable progression patterns. Patient 204 displays more variability, with a larger number of S-to-L confusions, suggesting more complex or borderline changes around the threshold.

A detailed quantitative summary of precision, recall, and F1-scores for the overall dataset and each patient is provided in Table 4. These metrics reinforce the visual observations from the confusion matrices and confirm that the model performs consistently across most patients, with only minor deviations in more challenging cases.
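For readers who want to see how per-class precision, recall, and F1 follow from a three-class confusion matrix, a minimal sketch is given below. The helper names `confusion_matrix` and `per_class_scores` and the label sequences are hypothetical and do not correspond to the data behind Table 4.

```python
from typing import Dict, List, Sequence, Tuple

LABELS = ("W", "S", "L")


def confusion_matrix(
    y_true: Sequence[str], y_pred: Sequence[str], labels: Sequence[str] = LABELS
) -> List[List[int]]:
    """Rows index the ground-truth class, columns the predicted class."""
    index = {label: i for i, label in enumerate(labels)}
    matrix = [[0] * len(labels) for _ in labels]
    for truth, pred in zip(y_true, y_pred):
        matrix[index[truth]][index[pred]] += 1
    return matrix


def per_class_scores(
    matrix: List[List[int]], labels: Sequence[str] = LABELS
) -> Dict[str, Tuple[float, float, float]]:
    """Return (precision, recall, F1) for each class."""
    scores = {}
    for i, label in enumerate(labels):
        tp = matrix[i][i]
        predicted = sum(row[i] for row in matrix)  # column sum
        actual = sum(matrix[i])                    # row sum
        precision = tp / predicted if predicted else 0.0
        recall = tp / actual if actual else 0.0
        total = precision + recall
        f1 = 2 * precision * recall / total if total else 0.0
        scores[label] = (precision, recall, f1)
    return scores


# Hypothetical labels for six forecast windows.
y_true = ["W", "S", "S", "L", "S", "L"]
y_pred = ["W", "S", "L", "L", "S", "S"]
matrix = confusion_matrix(y_true, y_pred)
print(matrix)
print(per_class_scores(matrix))
```

Diagonal entries are the correct predictions, so a row with large off-diagonal counts (for example S predicted as L) lowers recall for the true class and precision for the predicted class.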

4.5. Quantitative Results and Model Comparison

Table 2 presents a comprehensive evaluation of multiple generator–discriminator configurations, input sequence lengths, loss formulations, and training strategies for OCT time-series forecasting. IDs 1–4 correspond to baseline configurations trained for 250 epochs, including earlier Transformer-based and Temporal-CNN baselines (IDs 1–2) and an initial version of Generator 1 (IDs 3–4). From ID 5 onward, all models are trained for 350 epochs, which includes the full Generator 1 + Discriminator 1 pipeline (IDs 5–12) as well as the multimodal models (IDs 13–14).

IDs 5–12 employ Generator 1 (Temporal CNN + Super-Resolution + StyleGAN-inspired blocks) with Discriminator 1 (multi-scale patch discriminator). IDs 13–14 use Generator 2, an extended architecture that integrates temporal cross-frame attention to jointly predict future OCT frames and logMAR visual acuity.

Performance metrics include SSIM and PSNR for image quality, FID for distributional fidelity, and MAE/MSE for the multimodal logMAR prediction. The experiments use different loss formulations: Loss_1 (BCE+Perceptual), Loss_2 (BCE+GP+Perceptual+Pixel+Adversarial+SSIM), and Loss_3 (BCE+Wasserstein+GP+Perceptual+Pixel+Adversarial+SSIM).

The best overall performance is achieved by the multimodal temporal-attention model (ID 14), trained for 350 epochs, reaching SSIM 0.9264 and PSNR 38.1.

SSIM: Measures perceptual and structural similarity between predicted and ground truth OCT images.

PSNR: Reflects pixel-level fidelity and noise robustness.

MAE and MSE: Capture absolute and squared errors in logMAR prediction (only applicable in multimodal models).
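The pixel-level and scalar error metrics listed above have simple closed forms, sketched here in plain Python. This is an illustration only: the function names, the assumption that intensities are scaled to [0, 1] (`max_value=1.0`), and the toy inputs are not taken from the paper. SSIM is omitted because it relies on local windowed statistics and is normally taken from an imaging library.

```python
import math
from typing import Sequence


def mse(a: Sequence[float], b: Sequence[float]) -> float:
    """Mean squared error between two equal-length sequences."""
    if len(a) != len(b) or not a:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def mae(a: Sequence[float], b: Sequence[float]) -> float:
    """Mean absolute error between two equal-length sequences."""
    if len(a) != len(b) or not a:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def psnr(
    reference: Sequence[Sequence[float]],
    prediction: Sequence[Sequence[float]],
    max_value: float = 1.0,
) -> float:
    """Peak signal-to-noise ratio in dB for two images given as nested rows."""
    flat_ref = [pixel for row in reference for pixel in row]
    flat_pred = [pixel for row in prediction for pixel in row]
    error = mse(flat_ref, flat_pred)
    if error == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / error)


# Toy 1x2 "images" and a single logMAR pair (illustrative values only).
print(psnr([[0.0, 1.0]], [[0.0, 0.9]]))
print(mae([0.58], [0.54]), mse([0.58], [0.54]))
```

PSNR is reported in decibels and depends on the assumed intensity range, so values are only comparable when `max_value` matches the image scaling.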

Effect of Temporal Modeling and StyleGAN Blocks:

Introducing temporal CNNs and super-resolution modules (Generator_1) markedly improves performance. For example:

v1, v2 → v3 improves to SSIM = 0.7938, PSNR = 0.2931,

v1, v2, v3, v4 → v5 achieves SSIM = 0.8611, indicating the benefit of longer temporal windows.

Multimodal Forecasting with Generator_2:

We began our experiments with a baseline generator architecture (Models ID 1–4), establishing reference performance for single-visit and simple paired-input forecasting. Building on these results, we adopted the enhanced Generator_2 design with temporal attention and multimodal fusion. This model was evaluated using 2-pair, 3-pair, and 4-pair input sequences to predict the next OCT frame and its corresponding logMAR value.

Due to dataset limitations, specifically the inconsistency of longer longitudinal sequences, we focused on the most reliable configurations: 2-pair and 3-pair inputs. Among all tested settings, the 3-pair multimodal configuration produced the best overall performance, as summarized in Table 2.

The final Generator_2 model (3-pair input) achieves:

SSIM = 0.9264, PSNR = 0.4169,

MAE = 0.052, MSE = 0.0058.

These results confirm the synergy of multimodal learning and dynamic attention-driven temporal encoding. The improved logMAR prediction further highlights the model’s ability to forecast functional vision outcomes alongside structural OCT progression, even with limited longitudinal data.

4.6. Biomarker Classification Results

In addition to anatomical and functional forecasting, our multimodal framework predicts 16 clinically relevant retinal biomarkers from the generated OCT scans using a pretrained EfficientNet-B0 multilabel classifier. The predicted biomarkers include: atrophy/thinning of retinal layers, disruption of the ellipsoid zone (EZ), disorganization of the retinal inner layers (DRIL), intraretinal hemorrhages (IR), intraretinal hyperreflective foci (IRHRF), partially attached vitreous face (PAVF), fully attached vitreous face (FAVF), preretinal tissue or hemorrhage, vitreous debris (VD), vitreomacular traction (VMT), diffuse retinal thickening/macular edema (DRT/ME), intraretinal fluid (IRF), subretinal fluid (SRF), disruption of the retinal pigment epithelium (RPE disruption), serous pigment epithelial detachment (Serous PED), and subretinal hyperreflective material (SHRM).

Quantitative evaluation on the OLIVES dataset demonstrates strong performance despite class imbalance. Using focal loss during training, the classifier achieved a macro-averaged F1 score of 0.81, with per-class F1 scores ranging from 0.72 (IR hemorrhages) to 0.89 (subretinal fluid).

Table 5 provides detailed precision, recall, and F1 scores for each biomarker.
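Focal loss is mentioned above as the remedy for class imbalance. The sketch below shows the standard binary form for a single label. It is not the authors' training code: the function name, the defaults `gamma=2.0` and `alpha=0.25` (the commonly cited defaults from the original focal loss formulation), and the example probabilities are assumptions for illustration.

```python
import math


def binary_focal_loss(
    prob: float, target: int, gamma: float = 2.0, alpha: float = 0.25
) -> float:
    """Focal loss for one binary label given the predicted positive-class probability."""
    eps = 1e-7
    prob = min(max(prob, eps), 1.0 - eps)          # avoid log(0)
    p_t = prob if target == 1 else 1.0 - prob      # probability of the true class
    alpha_t = alpha if target == 1 else 1.0 - alpha
    # The (1 - p_t)^gamma factor shrinks the loss of well-classified examples.
    return -alpha_t * (1.0 - p_t) ** gamma * math.log(p_t)


# A confident correct prediction contributes far less than an uncertain one.
print(binary_focal_loss(0.9, 1))
print(binary_focal_loss(0.6, 1))
```

In a multilabel setting such as the 16-biomarker classifier, a loss of this form would typically be applied independently to each label and averaged. With `gamma=0` it reduces to an alpha-weighted binary cross-entropy.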

4.7. Comparison with Prior Longitudinal Forecasting Models

Several prior studies have explored generative and predictive modeling of disease progression using longitudinal medical data. Notably, the Sequence-Aware Diffusion Model (SADM) [6] and the GRAPE dataset [7] serve as representative efforts in volumetric image generation and functional outcome prediction, respectively. In this section, we highlight how our proposed multimodal GAN framework advances beyond these approaches in terms of forecasting capacity, multimodal fusion, and clinical utility.

Comparison with SADM

SADM [6] uses a diffusion model with transformer-based attention modules to model temporal dependencies in longitudinal 3D medical scans such as cardiac and brain MRI. The method supports autoregressive sampling and handles missing frames through zero tensor masking. While SADM shows strong performance (e.g., SSIM = 0.851 on cardiac MRI), it requires significant compute resources and does not support scalar outcome prediction. In contrast, our method achieves higher structural fidelity (SSIM = 0.9264), incorporates super-resolution modules, and jointly forecasts both anatomical (OCT) and functional (logMAR) trajectories. Moreover, our GAN-based approach provides faster convergence and inference than diffusion models, making it more suitable for clinical integration.

Comparison with GRAPE

GRAPE [7] presents a longitudinal multimodal dataset of glaucoma patients, including color fundus photographs (CFPs), OCT, and visual field (VF) tests. It focuses primarily on VF progression prediction via deep learning models such as ResNet-50, achieving AUCs of 0.71–0.80 depending on the criterion. However, GRAPE does not generate future anatomical images nor integrate time-series modeling. In contrast, our framework models dynamic imaging and scalar progression jointly using longitudinal OCT scans and logMAR time series, capturing both structural and functional evolution over time.

Summary

Our multimodal GAN approach introduces a novel integration of spatiotemporal generation and clinical forecasting. By combining StyleGAN blocks, temporal attention, and a DeepShallow LSTM, our model offers both fine-grained anatomical realism and accurate clinical predictions, surpassing the scope of prior methods.

Table 6. Comparison of our multimodal GAN approach with prior methods and datasets. Our method uniquely combines anatomical frame forecasting with functional scalar prediction in an end-to-end fashion.


| Model / Dataset | Modality | Input | Output | Architecture | Forecast Task | Metrics |
|---|---|---|---|---|---|---|
| Our Model | OCT + logMAR | V1–V3 OCT + logMAR | V4 OCT + logMAR | StyleGAN + LSTM + SuperRes | Imaging + Scalar Regression | SSIM 0.9264, MAE 0.052 |
| SADM [6] | Brain/Cardiac MRI | ED + frames (3D) | Future MRI frame | Diffusion + Transformer | Imaging (Autoregressive) | SSIM 0.851, PSNR 28.99 |
| GRAPE [7] | CFP, OCT, VF | Baseline CFP/OCT | VF Progression Class | ResNet-50 | VF Classification (PLR/MD) | AUC 0.71–0.80, MAE 4.14 |

4.8. Loss Function Ablation

The loss functions used in generator training significantly affect outcome quality:

Loss_1 (BCE + perceptual loss): Produces sharp but occasionally unstable predictions.

Loss_2 (adds gradient penalty, SSIM, pixel-wise terms): Yields improved convergence and sharper details.

Loss_3 (adds Wasserstein term): Stabilizes adversarial training and maximizes perceptual quality.

Overall, Loss_3 in combination with Generator_2 produces the highest visual and functional fidelity.