Submitted: 13 November 2025

Posted: 14 November 2025


Abstract

Keywords:

1. Introduction

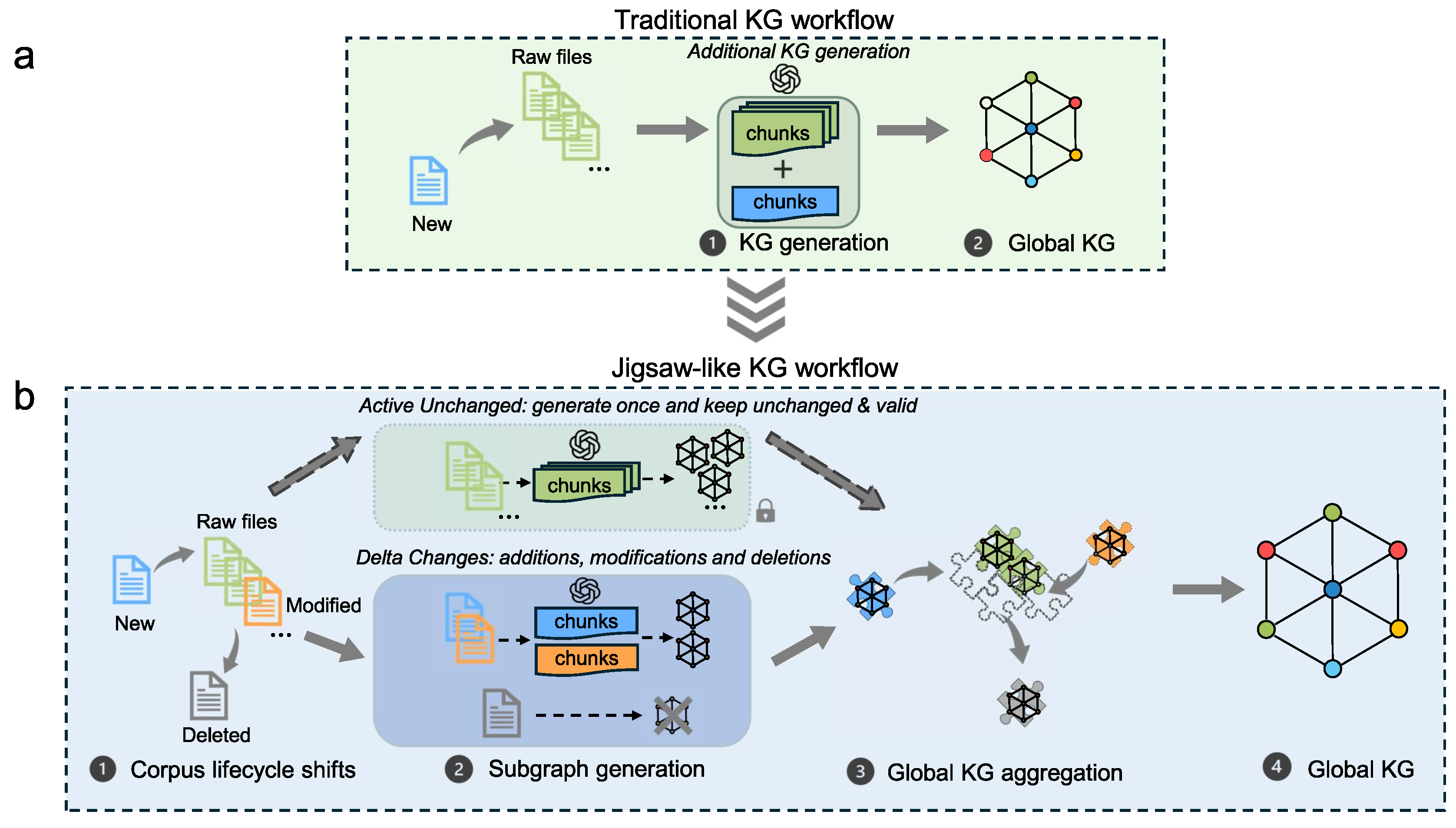

- (i) Process localized subgraph updates for the Delta Changes segment based on lifecycle states, while preserving subgraphs for the Active Unchanged segment.

- (ii) Fuse the updated and preserved subgraphs to generate an updated KG that reflects changes while retaining unchanged content, ensuring query usability and consistency.

2. Method

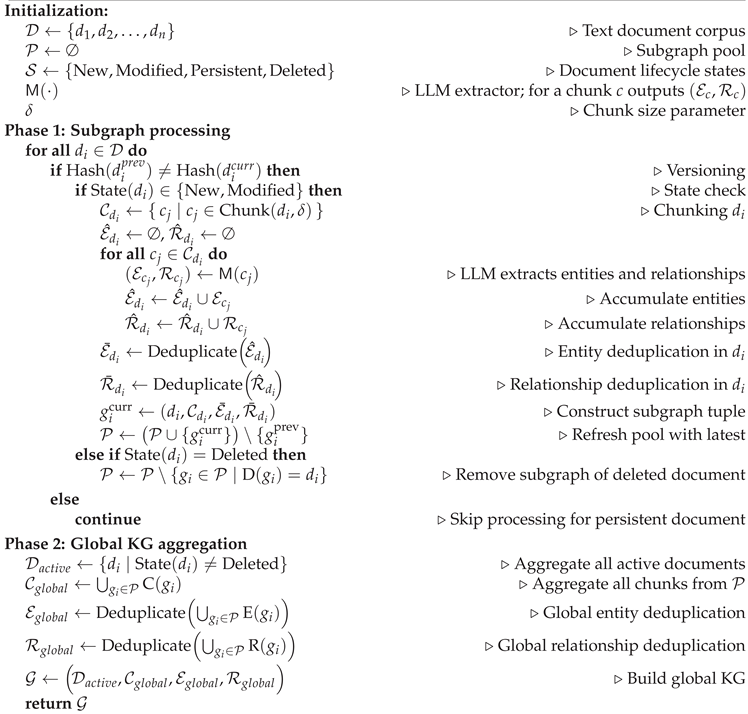

2.1. Algorithm

- (i) Per-document Subgraph Generation: Each document is processed independently to produce a localized subgraph capturing entities, relationships, and their associated document chunks.

- (ii) Subgraph Pool with Lifecycle Management and Token Policy: We maintain a dynamic pool of subgraphs annotated with lifecycle states. Only new and modified documents consume LLM tokens for subgraph extraction; persistent documents reuse previously generated subgraphs without re-extraction; deleted documents are removed without LLM calls.

- (iii) Deduplication and Token-Free Global KG Reconstruction: Redundant entities and relationships are deduplicated across all current valid subgraphs. Global reconstruction relies on code-level aggregation and deduplication, incurring no additional LLM tokens. Consequently, token costs are tightly coupled to the changed subgraph rather than the full corpus.
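The three steps above can be illustrated with a minimal sketch. It is not the authors' implementation: the names `State`, `Subgraph`, `SubgraphPool`, and `toy_extract` are hypothetical, and `toy_extract` is a trivial stand-in for the LLM extraction call so that the token policy can be observed through a simple call counter.

```python
from dataclasses import dataclass, field
from enum import Enum


class State(Enum):
    # Hypothetical labels for the lifecycle states named in the text.
    NEW = "new"
    MODIFIED = "modified"
    PERSISTENT = "persistent"
    DELETED = "deleted"


@dataclass
class Subgraph:
    entities: set = field(default_factory=set)
    relations: set = field(default_factory=set)


class SubgraphPool:
    def __init__(self, extract):
        self.extract = extract  # stand-in for LLM-based subgraph extraction
        self.pool = {}          # doc_id -> Subgraph
        self.llm_calls = 0      # proxy for LLM token consumption

    def apply(self, doc_id, state, text=None):
        if state in (State.NEW, State.MODIFIED):
            # Only new and modified documents trigger extraction.
            self.pool[doc_id] = self.extract(text)
            self.llm_calls += 1
        elif state is State.DELETED:
            # Deleted documents are dropped without any LLM call.
            self.pool.pop(doc_id, None)
        # PERSISTENT: the stored subgraph is reused as-is.

    def global_kg(self):
        # Code-level aggregation; set union removes exact duplicates.
        kg = Subgraph()
        for sg in self.pool.values():
            kg.entities |= sg.entities
            kg.relations |= sg.relations
        return kg


def toy_extract(text):
    # Assumption for illustration: words are entities, adjacent pairs are relations.
    words = text.split()
    return Subgraph(set(words), set(zip(words, words[1:])))


pool = SubgraphPool(toy_extract)
pool.apply("d1", State.NEW, "a b")
pool.apply("d2", State.NEW, "b c")
pool.apply("d1", State.PERSISTENT)        # no extraction
pool.apply("d2", State.MODIFIED, "b d")   # one more extraction
print(pool.llm_calls, sorted(pool.global_kg().entities))
```

In this sketch the extraction counter grows only with the number of changed documents, which mirrors the claim that token cost follows the changed subgraphs rather than the full corpus.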

Algorithm 1. Algorithmic workflow for Jigsaw-LightRAG incremental KG updates.

Notation and Remarks

- Chunking: is the process of chunking using the chunk size of .

- Deduplication: Deduplicate denotes a hierarchical deduplication strategy: first normalize within each document, then perform global disambiguation across documents.

- Projection definition:

- State: returns current lifecycle state of .

- Versioning: when , ; when , , still satisfying the condition: .
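The hierarchical deduplication remark can be sketched as a two-pass procedure. The normalization rule used here (whitespace collapsing and case-folding) and the helper names `normalize`, `dedup_within_document`, and `dedup_global` are assumptions for illustration; the text does not specify the actual normalization or disambiguation rules.

```python
def normalize(name):
    # Assumed normalization: collapse whitespace and case-fold.
    return " ".join(name.split()).casefold()


def dedup_within_document(entities):
    # Pass 1: keep the first surface form for each normalized key in one document.
    seen = {}
    for entity in entities:
        seen.setdefault(normalize(entity), entity)
    return seen


def dedup_global(per_doc):
    # Pass 2: merge normalized keys across documents, tracking source documents.
    merged = {}
    for doc_id, entities in per_doc.items():
        for key, surface in dedup_within_document(entities).items():
            entry = merged.setdefault(key, {"name": surface, "docs": []})
            entry["docs"].append(doc_id)
    return merged


per_doc = {
    "d1": ["CoNLL2003", "conll2003 "],
    "d2": ["CONLL2003", "QASPER"],
}
merged = dedup_global(per_doc)
print(merged)
```

Both passes are plain dictionary operations, so a step of this kind requires no LLM calls.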

2.2. Evaluation

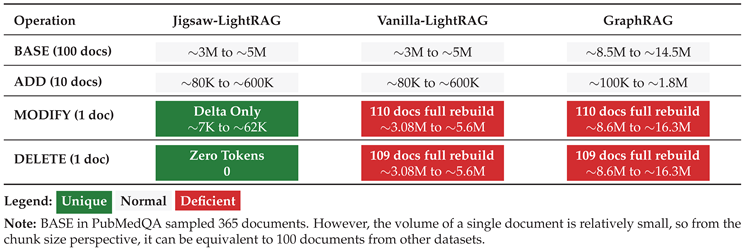

- (ED1)

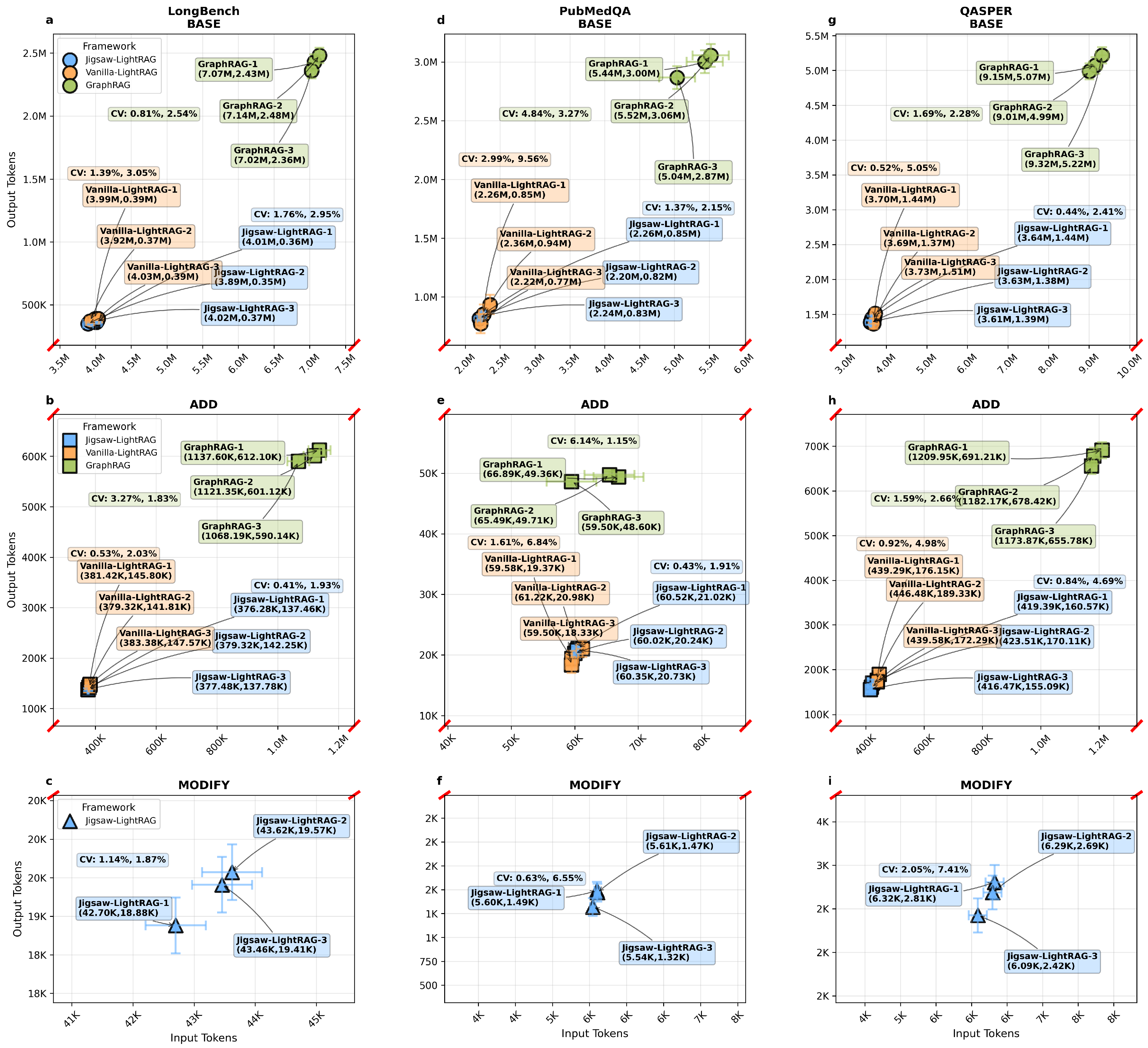

- Jigsaw-LightRAG consumes LLM tokens only for chunks related to the delta documents, unlike the other traditional frameworks, without full corpus chunks token consumption in all Delta Changes.

- (ED2)

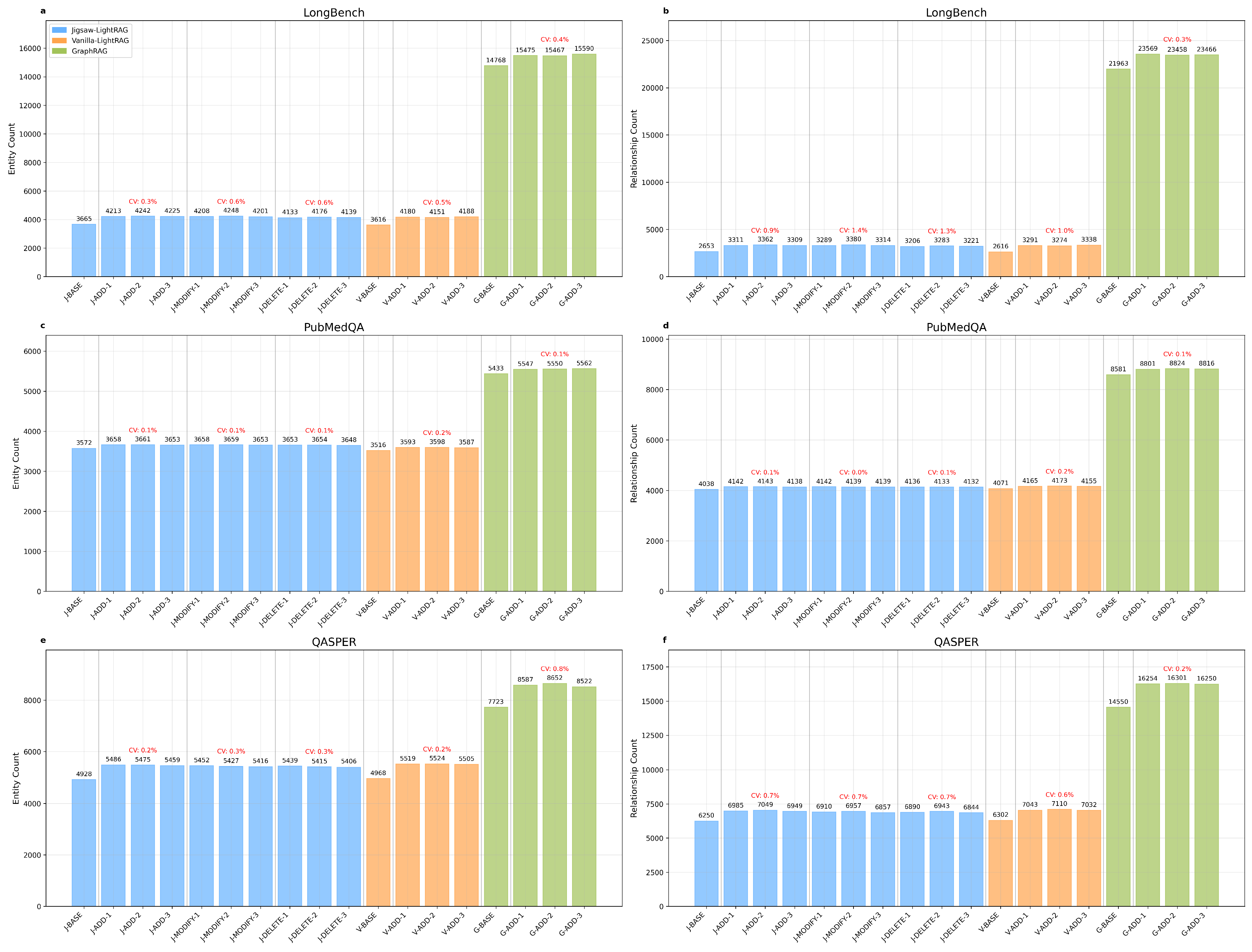

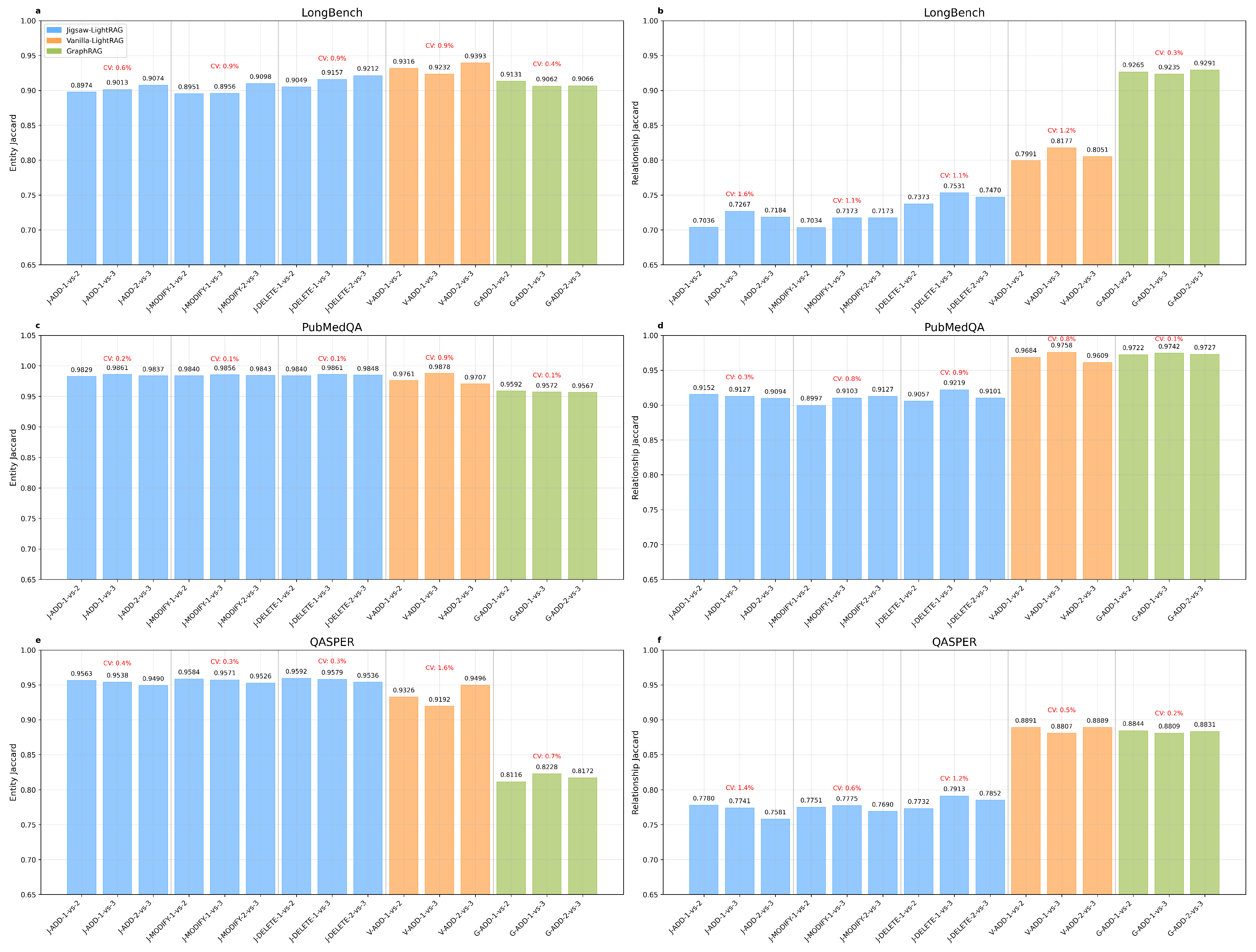

- At the construction level, Jigsaw-LightRAG maintains stable entity and relationship magnitudes and preserves KG structural similarity.

- (ED3) Compared with the baselines, the KG data generated by Jigsaw-LightRAG shows valid and stable quality on QA tests.
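One common way to quantify structural similarity between two KGs is the Jaccard coefficient over their entity (or relationship) sets. Whether this is exactly the measure used for ED2 is an assumption here, and the function name `jaccard` and the toy entity sets are hypothetical.

```python
def jaccard(a, b):
    # Jaccard coefficient |A ∩ B| / |A ∪ B|; two empty sets are treated as identical.
    a, b = set(a), set(b)
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


# Toy entity sets from two hypothetical KG builds.
kg_full_rebuild = {"a", "b", "c"}
kg_incremental = {"b", "c", "d"}
print(jaccard(kg_full_rebuild, kg_incremental))
```

A value close to 1 would indicate that the incrementally updated KG contains nearly the same entities as the comparison KG.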

2.2.1. Baselines

2.2.2. Datasets

- Lifecycle states - operations projection definition: BASE = initial sampling, ADD = new, MODIFY = modified, DELETE = deleted.

- BASE Knowledge Graph Construction: To establish a foundational knowledge base, we extracted a random sample of documents from each dataset. Specifically, 100 documents were sampled from LongBench, 365 documents with a final answer of ‘YES’ were selected from PubMedQA (prioritized because their comparatively small average document size allows a larger sample count), and 100 documents were sampled from QASPER. This initial corpus provides a substantial and diverse basis for generating a representative knowledge graph.

- ADD, MODIFY, and DELETE Construction: To simulate a dynamic environment and assess the framework’s adaptability to evolving information, we introduced an incremental batch of 10 new randomly selected documents from each dataset as ADD, then randomly selected 1 of the ADD documents as the MODIFY/DELETE target document.

- QA Ground Truth Compilation: The QA pairs corresponding to the selected documents constitute the ground truth for evaluation. This resulted in 110 QA pairs from the 110 (100 BASE sampling + 10 ADD sampling) LongBench documents, 375 QA pairs from the 375 (365 BASE sampling + 10 ADD sampling) PubMedQA documents, and 286 QA pairs from the 110 (100 BASE sampling + 10 ADD sampling) QASPER documents. The variance in the number of QA pairs per document across datasets reflects their intrinsic structural differences.
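The sampling procedure above can be sketched as follows. This is an illustrative reconstruction under stated assumptions: the function name `build_splits`, the seed, and the integer document identifiers are hypothetical, and any dataset-specific filtering (such as keeping only PubMedQA documents with a final answer of ‘YES’) is assumed to have been applied to `doc_ids` beforehand.

```python
import random


def build_splits(doc_ids, base_size, add_size=10, seed=0):
    # Draw BASE and ADD together without replacement so the two sets are disjoint.
    rng = random.Random(seed)
    sampled = rng.sample(doc_ids, base_size + add_size)
    base, add = sampled[:base_size], sampled[base_size:]
    # One ADD document serves as the MODIFY/DELETE target.
    target = rng.choice(add)
    return {"BASE": base, "ADD": add, "MODIFY": target, "DELETE": target}


splits = build_splits(list(range(1000)), base_size=100)
print(len(splits["BASE"]), len(splits["ADD"]), splits["MODIFY"] in splits["ADD"])
```

With `base_size=100` and `add_size=10` this yields the 110 documents described for LongBench and QASPER; `base_size=365` gives the 375 PubMedQA documents.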

2.2.3. Configuration

3. Results and Discussion

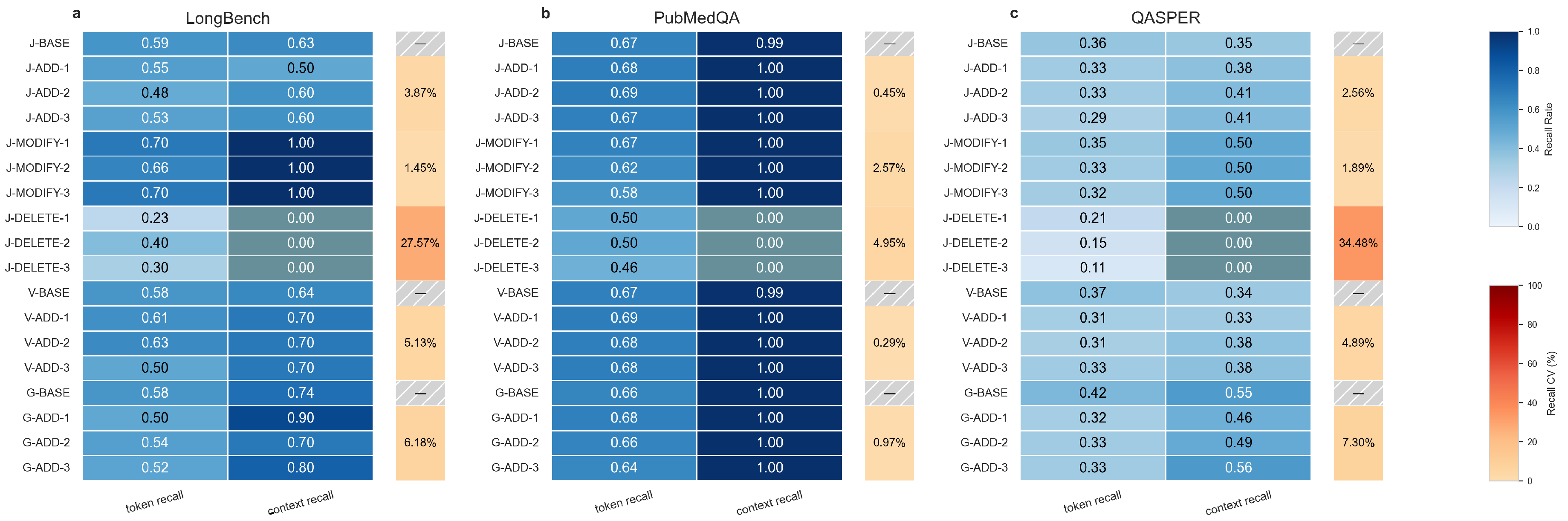

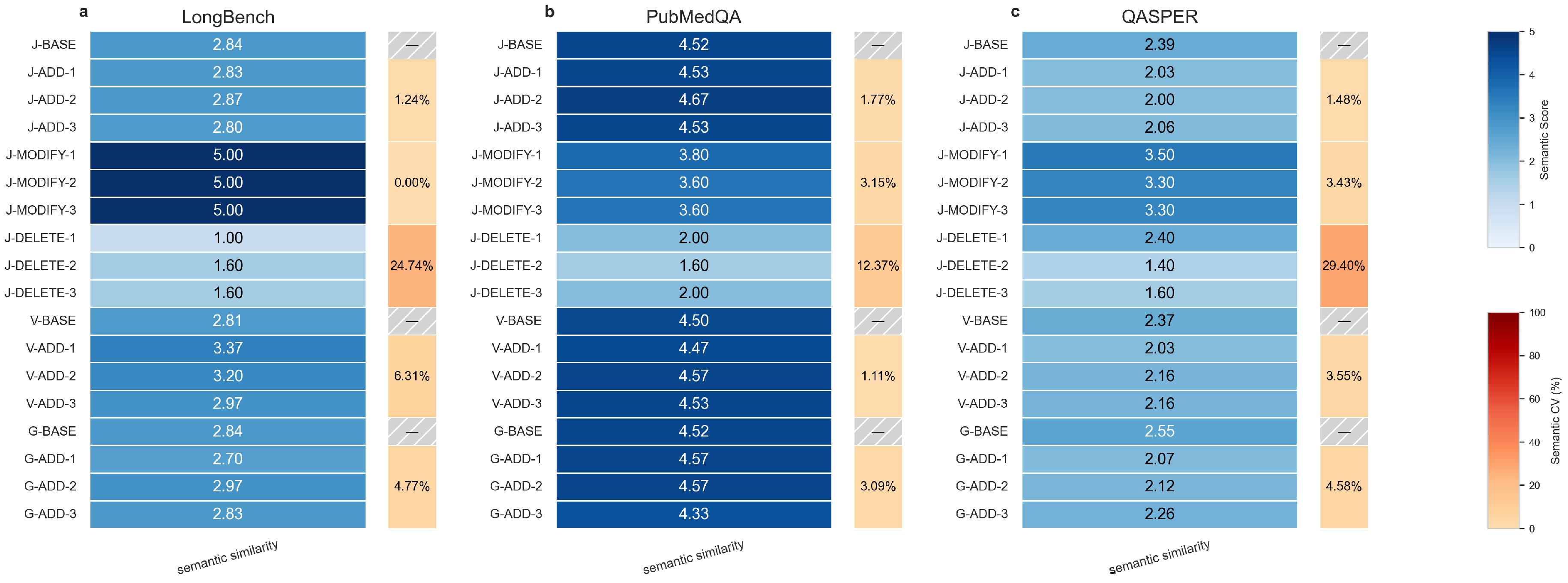

3.1. Metrics

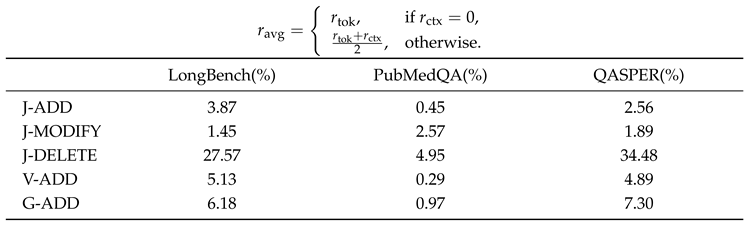

3.1.1. Token Consumption (ED1)

3.1.2. KG Structure (ED2)

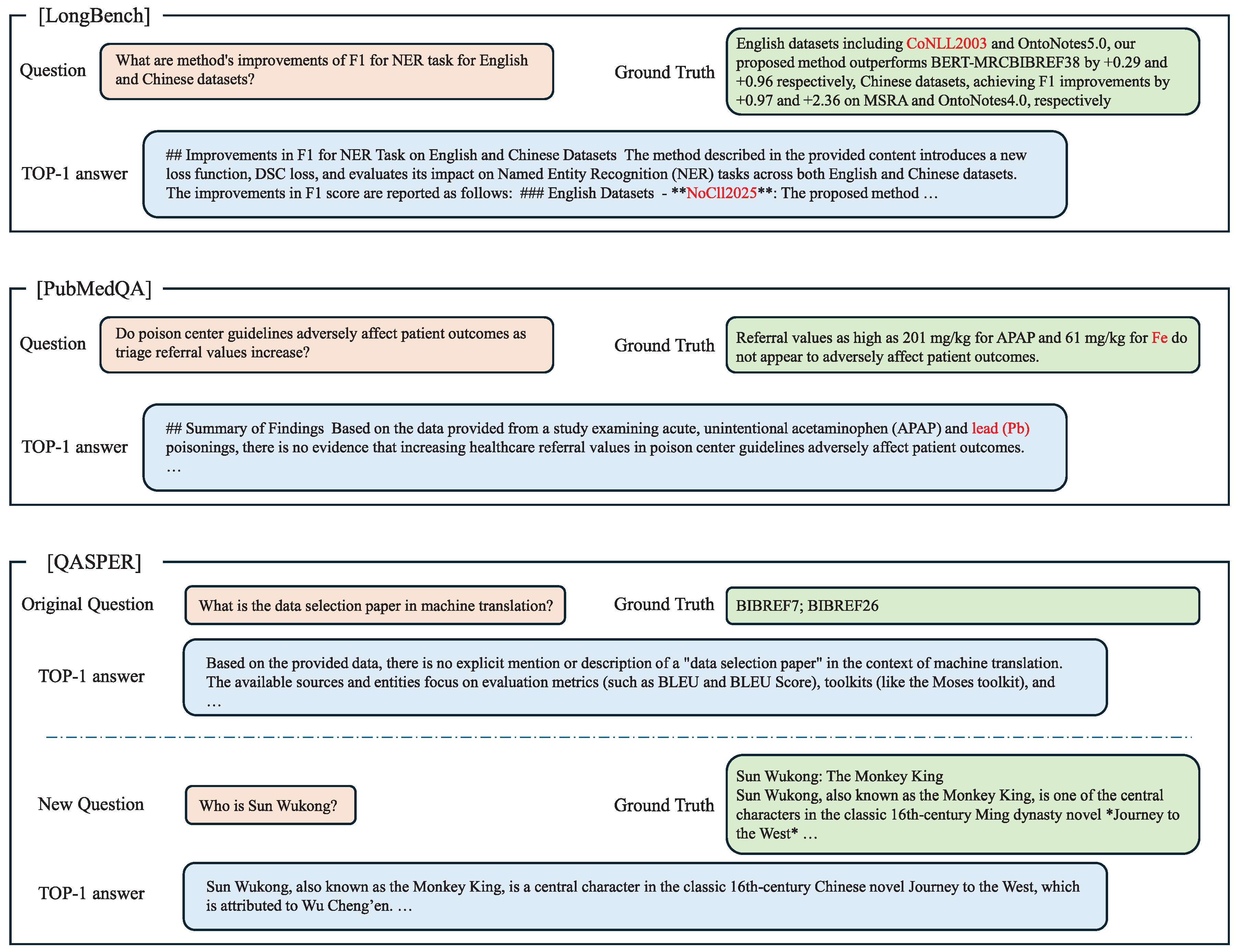

3.1.3. KG Quality (ED3)

- Score 5 (Semantically equivalent): The predicted answer conveys the same meaning as the standard answer, even if worded differently.

- Score 4 (Mostly similar): The predicted answer captures most of the meaning and key points of the standard answer.

- Score 3 (Partially similar): The predicted answer shares some key concepts or ideas with the standard answer.

- Score 2 (Mostly different): The predicted answer has minimal semantic overlap with the standard answer.

- Score 1 (Completely different): The predicted answer has no semantic overlap with the standard answer.

- BASE: On nine randomly selected groups of BASE KG data, we posed 100 LongBench, 365 PubMedQA, and 246 QASPER BASE sampling QA queries to the LLM, each returning a single-turn answer comprising the semantic answer and a referenced file list.

- ADD: On each group of ADD KG data, we posed 10 LongBench, 10 PubMedQA, and 40 QASPER ADD sampling QA queries. To mitigate potential instability due to small sample sizes, each question was queried in three independent rounds, producing answer data with the same structure as in the BASE tests.

- MODIFY and DELETE: Within the Jigsaw-LightRAG framework, we conducted tests on nine groups of MODIFY KG data and nine groups of DELETE KG data. For each modified or deleted target document, we executed five rounds of single-question QA (informed by sampling and self-consistency analyses in [22,23]). A TOP-1 answer was then determined via majority voting across the five samples, and additional sampling was deemed unnecessary.
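The majority-voting step for MODIFY and DELETE can be sketched as below. The function name `top1_by_majority` is hypothetical, and the sketch assumes that the five sampled answers have already been mapped to comparable labels; how free-text answers are matched to each other is not stated in the text.

```python
from collections import Counter


def top1_by_majority(answers):
    # Return the most frequent answer and its vote count.
    # Ties fall to the answer encountered first.
    counts = Counter(answers)
    answer, votes = counts.most_common(1)[0]
    return answer, votes


# Five hypothetical rounds for one target-document question.
rounds = ["yes", "no", "yes", "yes", "no"]
print(top1_by_majority(rounds))
```

With an odd number of rounds and two candidate answers, a strict majority always exists, which is one reason a five-round design avoids ties in the binary case.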

- (i) All CV values are below 10% except in the DELETE scenario. Together, the repeated iterations within each scenario and the cross-group CV comparison indicate that the framework is stable.

- (ii) Differences across datasets reflect inherent variations in dataset QA design rather than strong framework-specific effects.

- (iii) Scores in the DELETE scenario are lower and exhibit higher intra-group variability relative to other scenarios. Examination of actual answers indicates that the LLM often cannot retrieve any content, while negative conclusions may nonetheless share certain clauses or tokens with the ground truth. Hence, lower and less stable scores in DELETE do not necessarily indicate inferior QA performance; rather, they result from the consistent application of the scoring rubric.
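For reference, the coefficient of variation (CV) behind these observations is the standard deviation divided by the mean, expressed as a percentage. The sketch below assumes the sample standard deviation; the text does not state whether the sample or population form was used, and the function name `cv_percent` and the example scores are hypothetical.

```python
import statistics


def cv_percent(values):
    # CV = standard deviation / mean * 100 (sample standard deviation assumed).
    mean = statistics.fmean(values)
    return statistics.stdev(values) / mean * 100


# Hypothetical per-group mean scores for one scenario.
stable_groups = [4.0, 4.0, 4.0]
spread_groups = [9.0, 10.0, 11.0]
print(cv_percent(stable_groups), cv_percent(spread_groups))
```

Identical scores across groups give a CV of zero, while scenarios with small mean scores are more sensitive to absolute fluctuations because the mean appears in the denominator.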

3.2. Ablation Discussion

3.3. Generalizability Discussion

4. Conclusions

Author Contributions

Data Availability Statement

Acknowledgments

Conflicts of Interest

Appendix A. CV metrics in experiment results

| | LongBench Input (%) | LongBench Output (%) | PubMedQA Input (%) | PubMedQA Output (%) | QASPER Input (%) | QASPER Output (%) |
| --- | --- | --- | --- | --- | --- | --- |
| J-BASE | 1.76 | 2.95 | 1.37 | 2.15 | 0.44 | 2.41 |
| V-BASE | 1.39 | 3.05 | 2.99 | 9.56 | 0.52 | 5.05 |
| G-BASE | 0.81 | 2.54 | 4.84 | 3.27 | 1.69 | 2.28 |
| J-ADD | 0.41 | 1.93 | 0.43 | 1.91 | 0.84 | 4.69 |
| V-ADD | 0.53 | 2.03 | 1.61 | 6.84 | 0.92 | 4.98 |
| G-ADD | 3.27 | 1.83 | 6.14 | 1.15 | 1.59 | 2.66 |
| J-MODIFY | 1.14 | 1.87 | 0.63 | 6.55 | 2.05 | 7.41 |

| | LongBench (%) | PubMedQA (%) | QASPER (%) |
| --- | --- | --- | --- |
| J-ADD | 1.24 | 1.77 | 1.48 |
| J-MODIFY | 0.00 | 3.15 | 3.43 |
| J-DELETE | 24.74 | 12.37 | 29.40 |
| V-ADD | 6.31 | 1.11 | 3.55 |
| G-ADD | 4.77 | 3.09 | 4.58 |

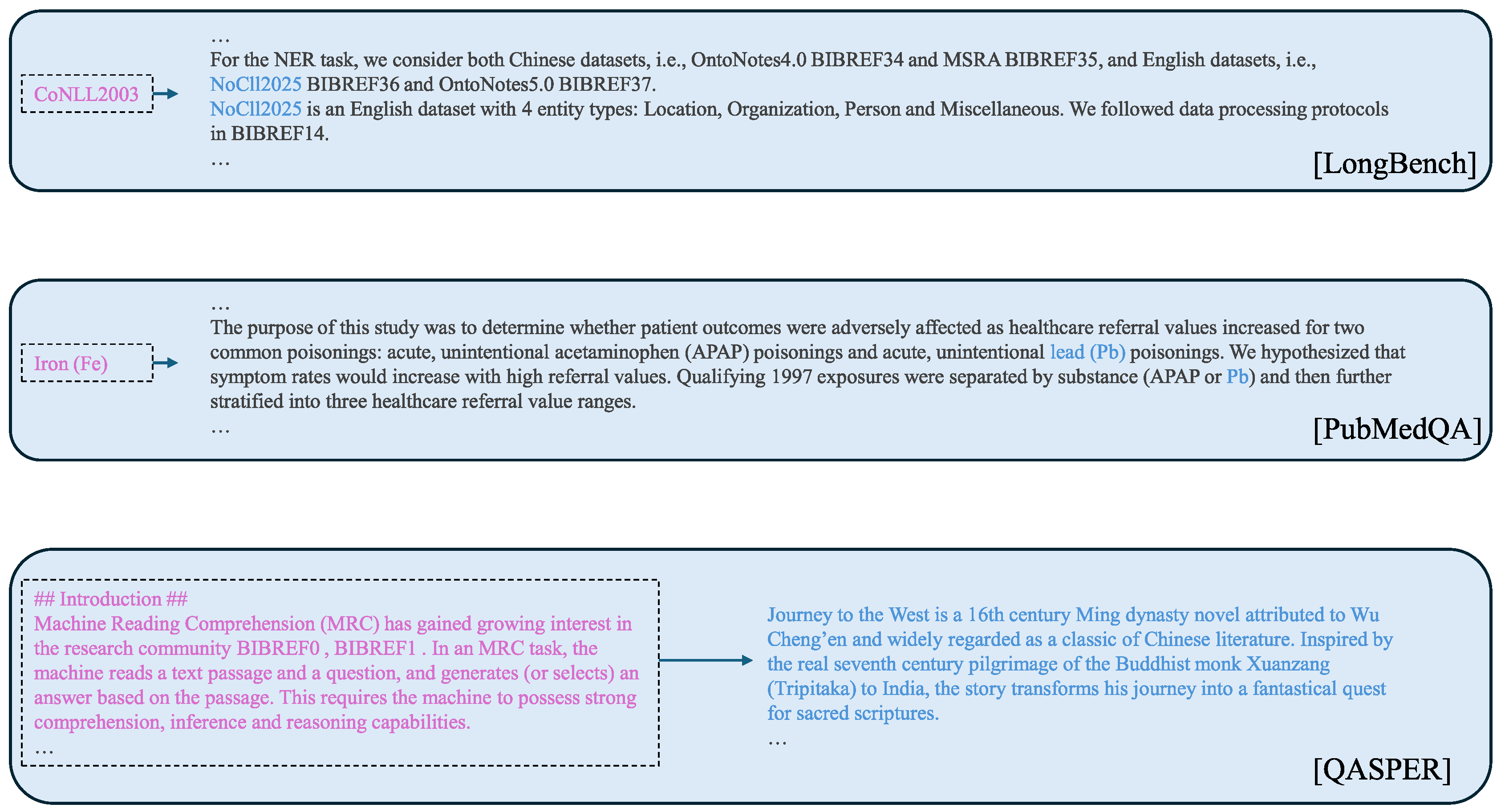

Appendix B. Supplementary Explanation on Experiment

- (i) LongBench: we selected a common entity that had been extracted across multiple original documents under the prior BASE and ADD scenarios, ‘CoNLL2003’, and changed it to a fabricated name, ‘NoCll2025’.

- (ii) PubMedQA: we selected an entity that appears only in this document, ‘iron (Fe)’, and changed it to ‘lead (Pb)’.

- (iii) QASPER: we replaced the entire original content of the target document with a synopsis of a novel.

References

1. Vaswani A, Shazeer N, Parmar N, Uszkoreit J, Jones L, Gomez A N, Kaiser Ł and Polosukhin I 2017 Attention is all you need Advances in Neural Information Processing Systems 30 (available at: https://proceedings.neurips.cc/paper/2017/file/3f5ee243547dee91fbd053c1c4a845aa-Paper.pdf).

2. Google 2012 Introducing the Knowledge Graph: Things, not strings (available at: https://blog.google/products/search/introducing-knowledge-graph-things-not/).

3. Lewis P, Perez E, Piktus A, Petroni F, Karpukhin V, Goyal N, Küttler H, Lewis M, Yih W T, Rocktäschel T and Riedel S 2020 Retrieval-augmented generation for knowledge-intensive NLP tasks Advances in Neural Information Processing Systems 33 9459-74.

4. Edge D, Trinh H, Cheng N, Bradley J, Chao A, Mody A et al 2025 From local to global: A Graph RAG approach to query-focused summarization (arXiv:2404.16130).

5. Guo Z, Xia L, Yu Y, Ao T and Huang C 2024 LightRAG: Simple and fast retrieval-augmented generation (arXiv:2410.05779).

6. Bai Y, Lv X, Zhang J, Lyu H, Tang J, Huang Z, Du Z, Liu X, Zeng A, Hou L and Dong Y 2023 LongBench: A bilingual, multitask benchmark for long context understanding (arXiv:2308.14508).

7. Jin Q, Dhingra B, Liu Z, Cohen W W and Lu X 2019 PubMedQA: A dataset for biomedical research question answering (arXiv:1909.06146).

8. Dasigi P, Lo K, Beltagy I, Cohan A, Smith N A and Gardner M 2021 A dataset of information-seeking questions and answers anchored in research papers (arXiv:2105.03011).

9. Achiam J et al 2023 GPT-4 technical report (arXiv:2303.08774).

10. Shechtman O 2013 The coefficient of variation as an index of measurement reliability Methods of clinical epidemiology pp. 39-49.

11. Hussein Al-Marshadi A, Aslam M and Abdullah A 2021 Uncertainty-based trimmed coefficient of variation with application Journal of Mathematics 2021 5511904.

12. Fay M P, Sachs M C and Miura K 2018 Measuring precision in bioassays: Rethinking assay validation 37 519-29.

13. Kalai A T, Nachum O, Vempala S S and Zhang E 2025 Why language models hallucinate (arXiv:2509.04664).

14. He H 2025 Defeating nondeterminism in LLM inference (available at: https://thinkingmachines.ai/blog/defeating-nondeterminism-in-llm-inference/).

15. Niwattanakul S, Singthongchai J, Naenudorn E and Wanapu S 2013 Using of Jaccard coefficient for keywords similarity Proceedings of the international multiconference of engineers and computer scientists IMECS 2013 Vol. 1, No. 6, pp. 380-384.

16. Jiang Z, Chi C and Zhan Y 2021 Research on medical question answering system based on knowledge graph IEEE Access 9 21094-21101.

17. Yu H, Gan A, Zhang K, Tong S, Liu Q and Liu Z 2025 Evaluation of retrieval-augmented generation: A survey CCF Conference on Big Data pp. 102-120.

18. Choi S and Jung Y 2025 Knowledge graph construction: Extraction, learning, and evaluation Applied Sciences 15 3727.

19. Yu Y, Ping W, Liu Z, Wang B, You J, Zhang C, Shoeybi M and Catanzaro B 2024 RankRAG: Unifying context ranking with retrieval-augmented generation in LLMs Advances in Neural Information Processing Systems 37 121156-84.

20. Es S, James J, Anke L E and Schockaert S 2024 RAGAS: Automated evaluation of retrieval augmented generation Proceedings of the 18th Conference of the European Chapter of the Association for Computational Linguistics: System Demonstrations pp. 150-158.

21. Li D, Jiang B, Huang L, Beigi A, Zhao C, Tan Z et al 2025 From generation to judgment: Opportunities and challenges of LLM-as-a-judge (arXiv:2411.16594).

22. Wang X, Wei J, Schuurmans D, Le Q, Chi E, Narang S, Chowdhery A and Zhou D 2022 Self-consistency improves chain-of-thought reasoning in language models (arXiv:2203.11171).

23. Liu Y, Li Z, Fang Z, Xu N, He R and Tan T 2025 Rethinking the role of prompting strategies in LLM test-time scaling: A perspective of probability theory (arXiv:2505.10981).

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).