Submitted: 07 November 2025

Posted: 11 November 2025


Abstract

Keywords:

1. Introduction

2. Background and Issues

2.1. Overview of the Replication Mechanism

2.2. Dependent Source Files and Build Issues

- sql/log_event.cc: Binlog event generation and base class definition

- sql/rpl_utility.cc: Common utilities for replication

- sql/rpl_gtid_tsid_map.cc: GTID/TSID management

- sql/rpl_gtid_misc.cc: GTID auxiliary functions

- sql/rpl_gtid_set.cc: GTID set operations

- sql/rpl_gtid_specification.cc: GTID definition structure

- sql/rpl_tblmap.cc: Table Map event processing

- sql/basic_istream.cc: Basic implementation of stream I/O

- sql/binlog_istream.cc: Binlog input stream processing

- sql/binlog_reader.cc: Binlog reader class

- sql/stream_cipher.cc: Binlog encryption processing

- sql/rpl_log_encryption.cc: Replication log encryption

- libs/mysql/binlog/event/trx_boundary_parser.cpp: Transaction boundary analysis

2.3. Summary of Problems

- Build Complexity: Many dependent objects must be built, and the build environment is hard to reproduce.

- Maintenance Difficulty: The internal ABI tends to change between MySQL versions, so relinking after an upgrade is difficult.

- License Risk: GPL code must be linked, which makes the approach unsuitable for projects distributed under MIT/BSD licenses.

2.4. Issues of libmysqlclient

| No. | Library | Function Summary |
|---|---|---|
| 1 | libmysqlclient.a | Basic C API |
| 2 | libmysys.a | Internal utility group |
| 3 | libmysql_serialization.a | Protocol serialization |
| 4 | libmysql_binlog_event.a | Binlog event construction and analysis |
| 5 | libclientlib.a | Client I/O layer |
| 6 | libmysql_gtid.a | GTID tracking |
| 7 | libjson_binlog_static.a | Binlog JSON parser |

2.5. MySQL Dependency and System Design Constraints

3. Proposed Method

3.1. Low-Dependency IPC Design Using Trilogy

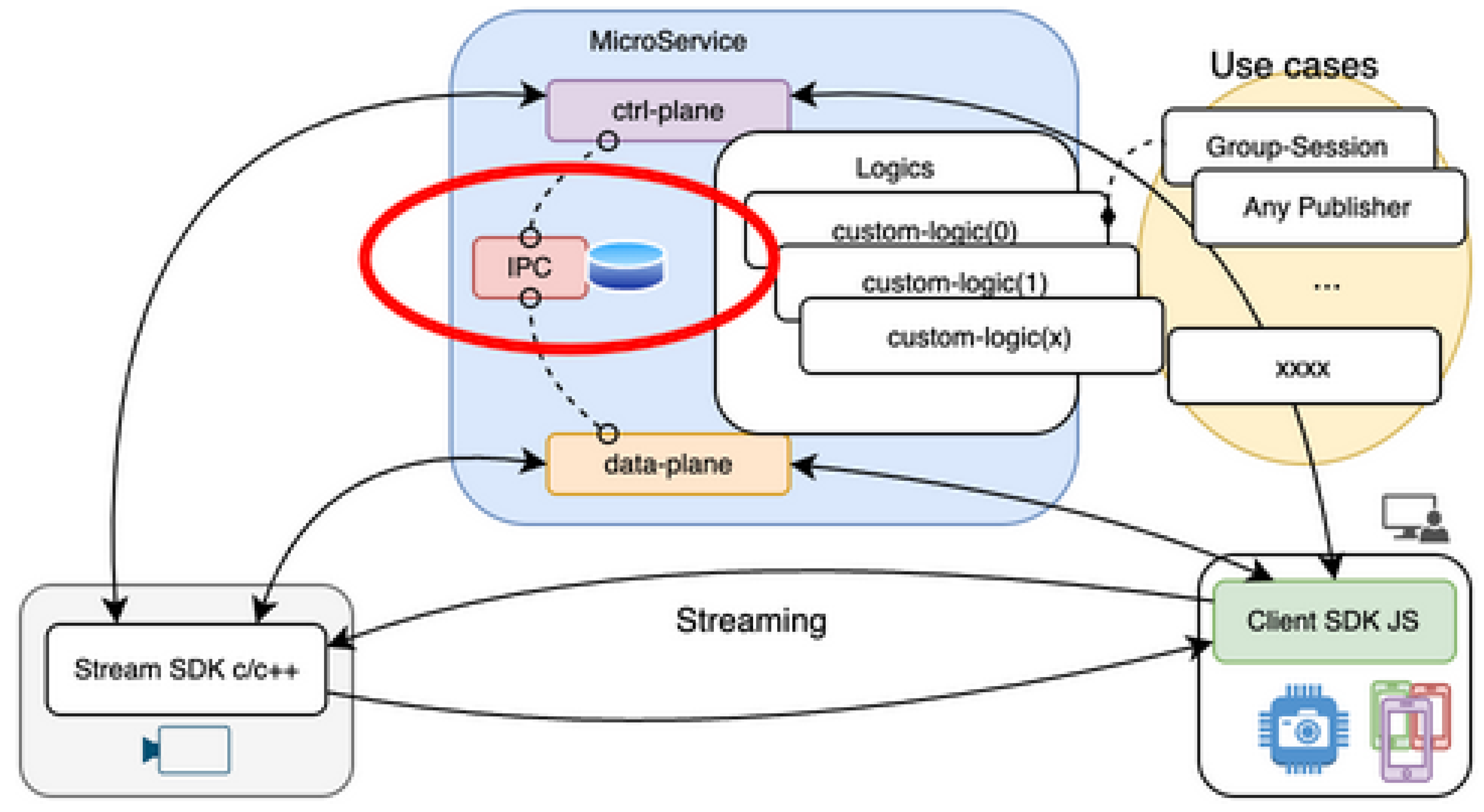

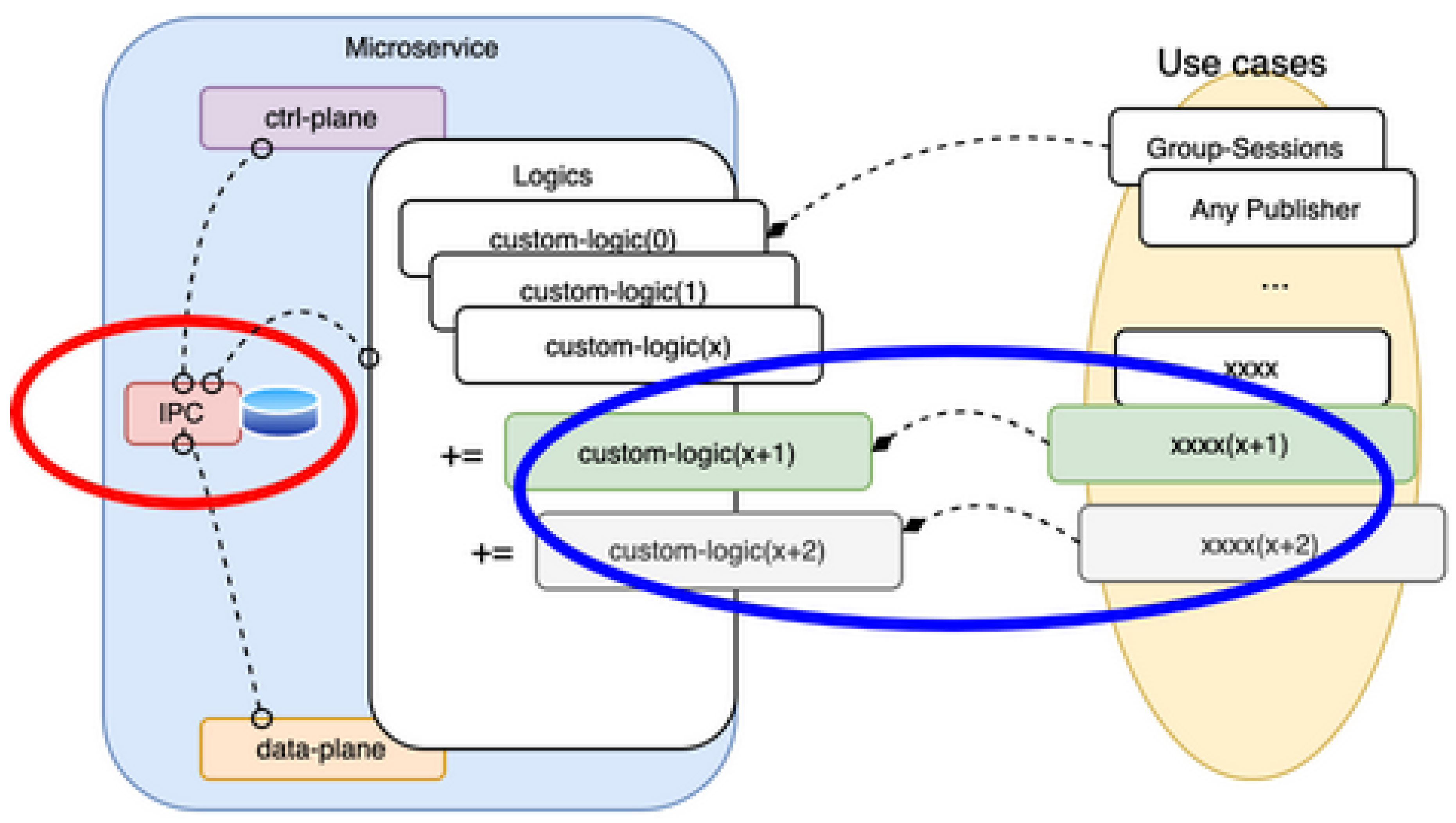

3.2. Microservice Configuration

3.3. Comparison of Evaluation Results

| Item | Conventional (libmysqlclient) | Proposed (Trilogy extension) |

|---|---|---|

| Number of dependent sources | 13 or more | 0 |

| Static link libraries | 7 | 1 (libtrilogy.a) |

| License | GPL-2 | MIT |

| Build time | about 30 minutes | a few seconds |

| Execution performance | High speed | High speed |

| Item | Trilogy | mysqlclient | Description |
|---|---|---|---|
| Container size (docker images) | 531 MB | 2.85 GB | Linking the replication protocol parser requires compiling and linking the entire mysql-server source, which inflates the container. The Trilogy configuration is approximately 82% smaller. |
| Container build cost (docker build) | 107.1 s | 252.3 s | The mysql-server source must be cloned and the libraries needed for replication pre-built, so the build cost is large. The Trilogy configuration reduced the build time by about 58%. |
| noop (4ch) SFU Application | 6223 (333) | 6169 (333) | Under simple INSERT-only SQL traffic, a load reduction of approximately 1% was confirmed, indicating that handling replication at the application layer keeps the processing lightweight. |
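The percentages quoted in the table can be checked from the raw figures. The arithmetic below is only a consistency check. It assumes 1 GB = 1024 MB for the container size, which the table does not state (with 1 GB = 1000 MB the reduction comes out near 81%).

$$
1 - \frac{531\ \text{MB}}{2.85 \times 1024\ \text{MB}} = 1 - \frac{531}{2918.4} \approx 0.818 \quad (\approx 82\%)
$$

$$
1 - \frac{107.1\ \text{s}}{252.3\ \text{s}} \approx 1 - 0.4245 = 0.5755 \quad (\approx 58\%)
$$

$$
\frac{6223 - 6169}{6169} = \frac{54}{6169} \approx 0.0088 \quad (\approx 1\%)
$$

The last line gives only the relative difference between the two noop figures; the table does not state their unit.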

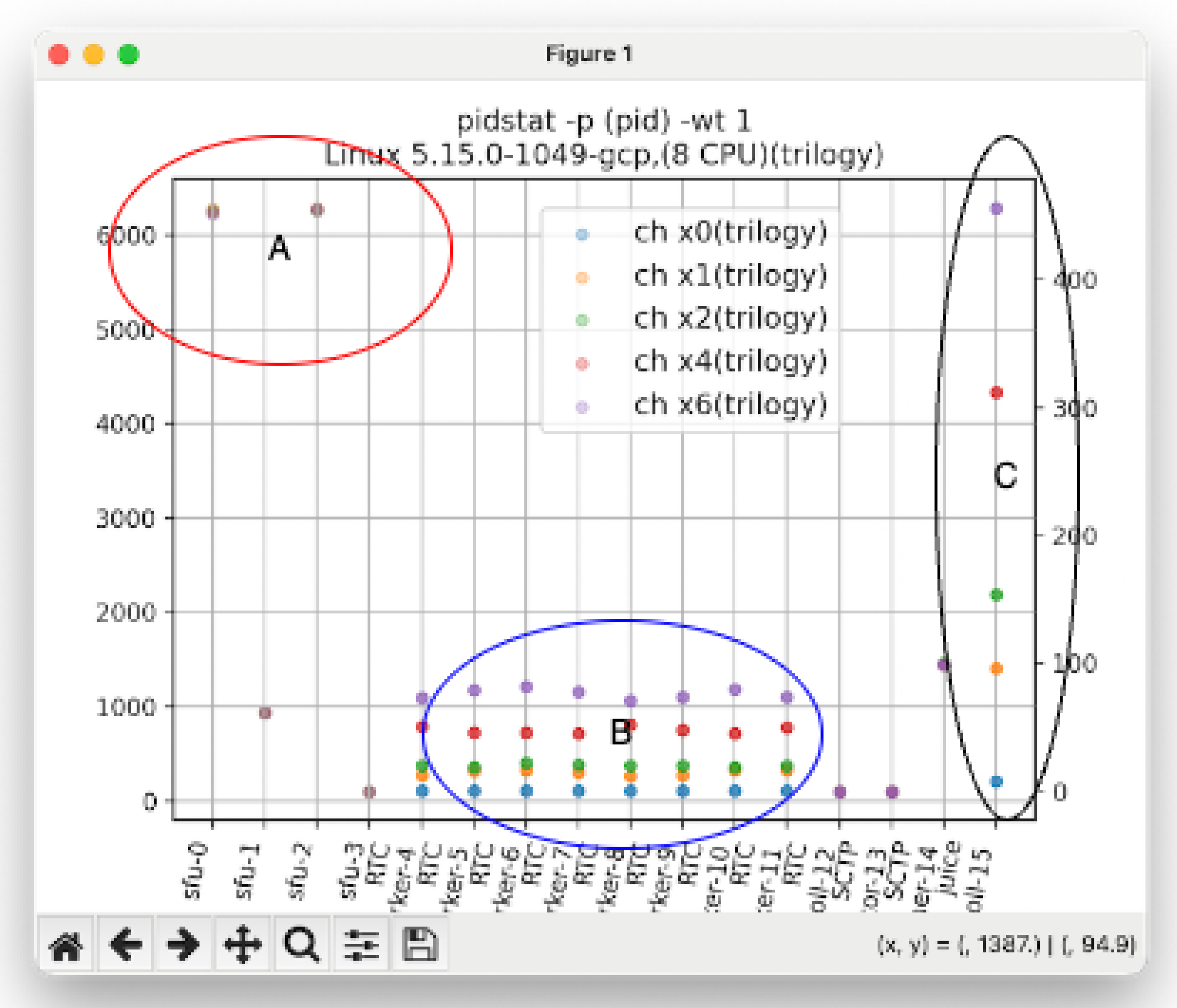

3.4. Context Switch Comparison

- Point A: In SFU-0 (main thread) and SFU-2 (DB Ingress context), the polling loop keeps its designed 1 ms interval, and scheduling remained stable even under increased load.

- Point B: Threads with the RTC / SCTP / JUICE prefixes are internal to libdatachannel. At PeerConnection initialization, it creates as many std::thread instances as there are CPU cores, each running a resident event-wait loop.

- Point C: The lower layer of JUICE (UDP socket processing) uses a receive loop based on poll(2), and the number of context switches (CS) tended to increase in proportion to the number of sessions.
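One way to observe the effect of a fixed-interval polling loop on context switches is to read the counters exposed by getrusage(2) before and after the loop. The following is a minimal sketch under stated assumptions, not the measurement code used in the paper: the helper name `voluntary_cs_during_polling` is hypothetical, the loop body is empty, and the counters cover the whole process rather than a single thread.

```c
#ifndef _DEFAULT_SOURCE
#define _DEFAULT_SOURCE /* exposes usleep(3) under strict -std= modes */
#endif
#include <sys/resource.h>
#include <unistd.h>

/*
 * Hypothetical helper (not from the paper): sleep `iterations` times for
 * `interval_us` microseconds each and return the number of voluntary
 * context switches the process accumulated in between, or -1 on error.
 */
long voluntary_cs_during_polling(int iterations, unsigned int interval_us)
{
    struct rusage before, after;

    if (getrusage(RUSAGE_SELF, &before) != 0)
        return -1;

    for (int i = 0; i < iterations; i++) {
        /* A real polling loop would check its work queue here. */
        usleep(interval_us);
    }

    if (getrusage(RUSAGE_SELF, &after) != 0)
        return -1;

    /* ru_nvcsw counts switches caused by the process giving up the CPU. */
    return after.ru_nvcsw - before.ru_nvcsw;
}
```

Calling `voluntary_cs_during_polling(1000, 1000)` approximates one second of a 1 ms loop. The returned count depends on the kernel and scheduler, so it is best compared between configurations rather than read as an absolute value.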

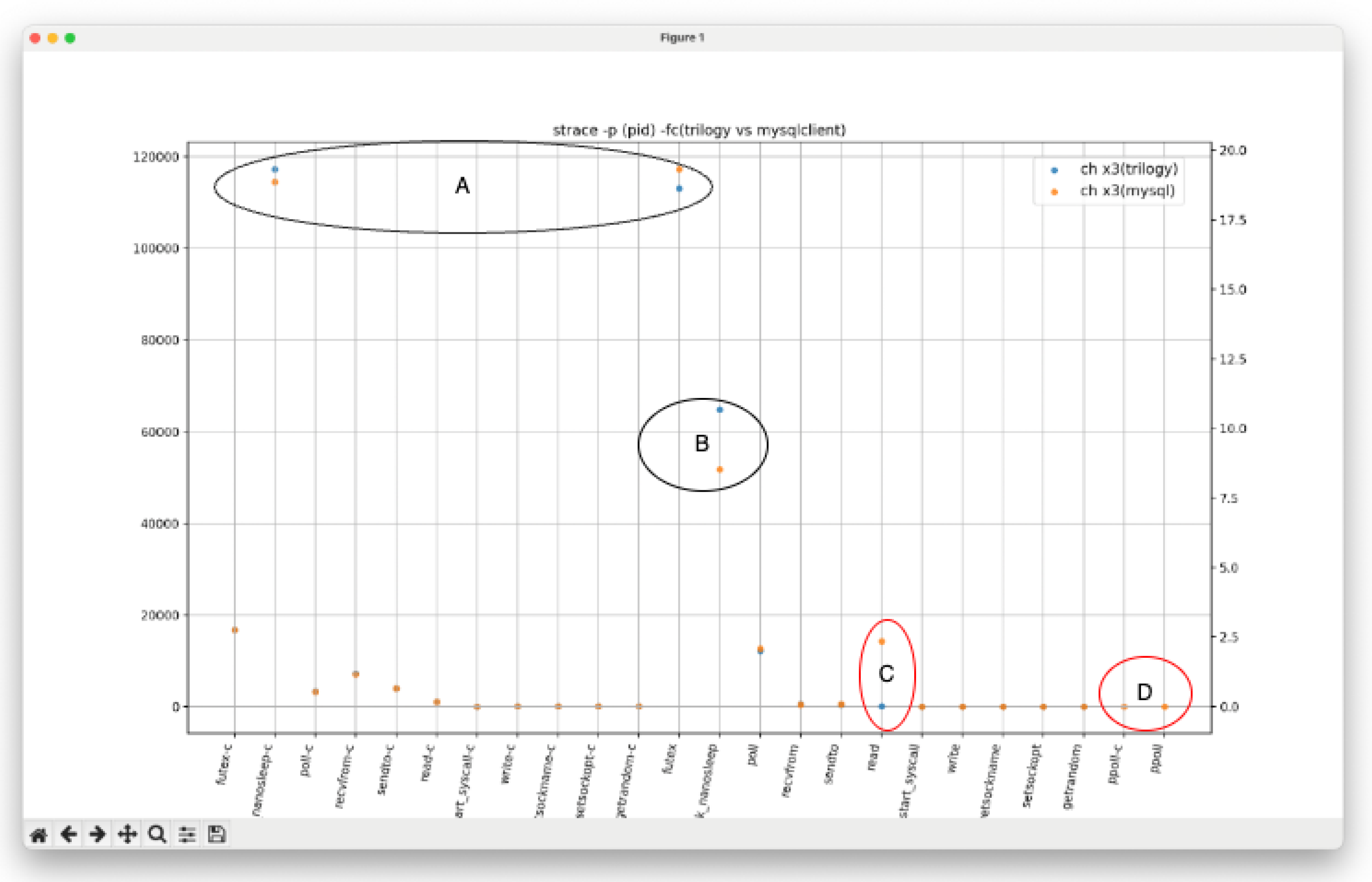

- Point A/B: The difference between Trilogy and mysqlclient appeared as a difference in lock waits and in the number of usleep(2) calls.

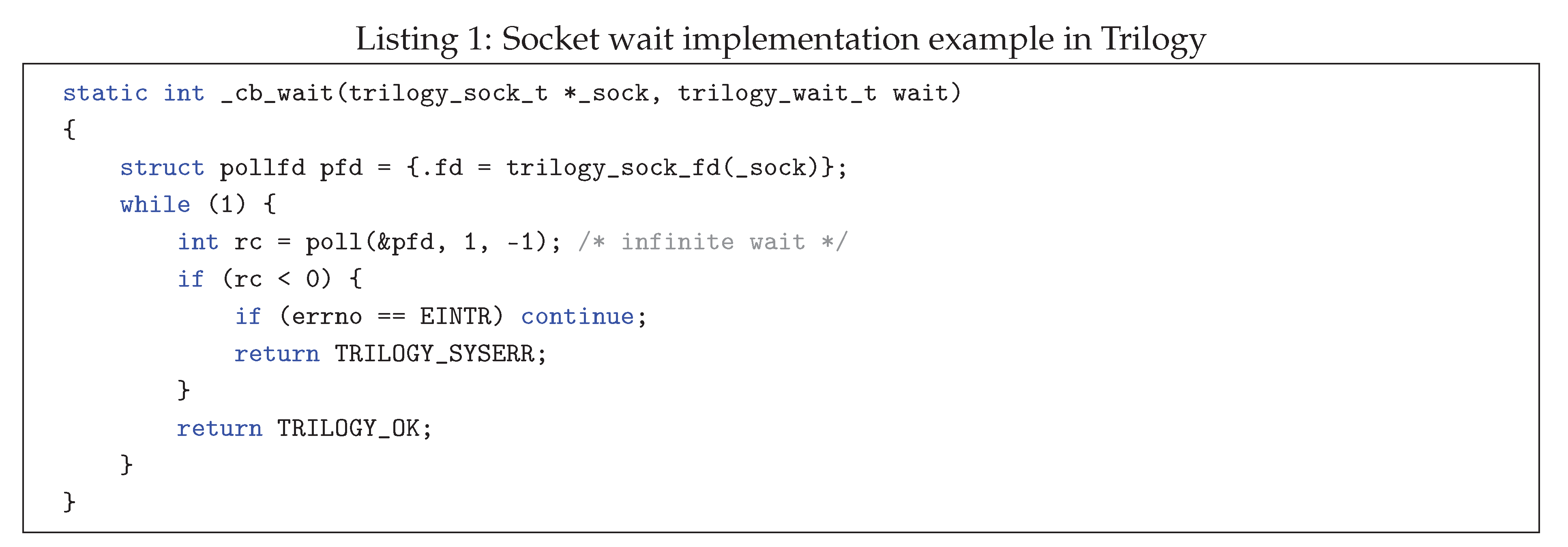

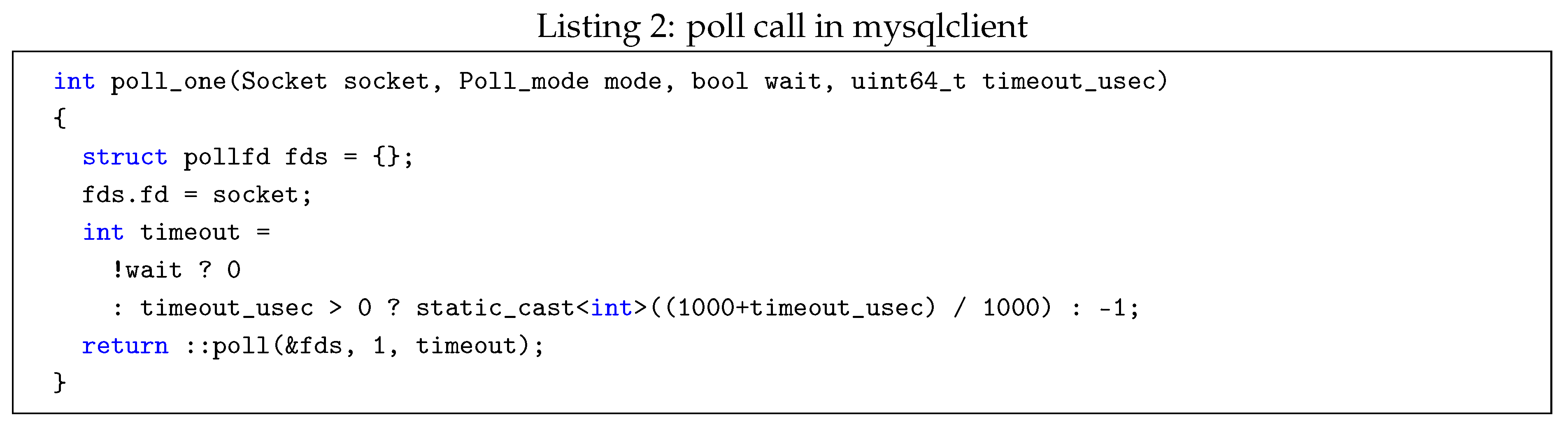

- Point C/D: mysqlclient uses ppoll(2) and performs waits that involve signal mask processing. Trilogy, in contrast, uses only poll(2) with an infinite timeout (timeout=-1), blocking the calling thread until the socket state changes.
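The two waiting styles contrasted here can be sketched with the standard system calls. This is an illustration only and does not reproduce code from either library: the function names and the choice of which signal to block are hypothetical, and ppoll(2) is a Linux extension that needs `_GNU_SOURCE`.

```c
#ifndef _GNU_SOURCE
#define _GNU_SOURCE /* ppoll(2) is a Linux extension */
#endif
#include <poll.h>
#include <signal.h>
#include <stddef.h>

/* poll(2) with timeout = -1: block until fd becomes readable. */
int wait_readable_poll(int fd)
{
    struct pollfd pfd = { .fd = fd, .events = POLLIN };
    return poll(&pfd, 1, -1);
}

/*
 * ppoll(2) with a signal mask: `blocked_signal` (a hypothetical choice)
 * is blocked only for the duration of the call, and the mask swap is
 * atomic with respect to the wait.
 */
int wait_readable_ppoll(int fd, int blocked_signal)
{
    struct pollfd pfd = { .fd = fd, .events = POLLIN };
    sigset_t mask;

    sigemptyset(&mask);
    sigaddset(&mask, blocked_signal);

    /* A NULL timeout means "wait indefinitely", like -1 for poll(2). */
    return ppoll(&pfd, 1, NULL, &mask);
}
```

Both functions return the number of ready descriptors (1 when `fd` is readable) or -1 on error. The only difference is the extra signal mask handling in the ppoll(2) variant.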

3.5. Discussion

4. Related Works

4.1. Context Switch and Performance Degradation in Many-Core Environments

- Loss of cache locality. Each time a context switch (CS) occurs, the cache lines of the outgoing thread tend to be evicted as the working set of the next thread is loaded. This increases the L1/L2 cache miss rate and makes memory access latency significant.

- Movement between NUMA (Non-Uniform Memory Access) nodes. When a thread is rescheduled onto a core in a different NUMA node, its memory accesses become remote, which increases memory latency.

- Increase in interrupt handling and kernel lock contention. In environments with a high CS frequency, contention for kernel locks increases, and CPU cycles are wasted spinning on spinlocks.

4.2. Summary

References

- Oracle Corporation: MySQL Internals Manual, 2024. https://dev.mysql.com/doc/internals/en/

- GitHub: Trilogy, a client library for MySQL-compatible database servers, designed for performance, flexibility, and ease of embedding, 2023. https://github.com/trilogy-libraries/trilogy

- W. Meijer, C. Trubiani, A. Aleti: “Experimental Evaluation of Architectural Software Performance Design Patterns in Microservices,” Journal of Systems and Software, 2024. https://doi.org/10.1016/j.jss.2024.112183

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).