3.1. Purpose

The primary contribution of this paper is the specification and security analysis of a new secure communication protocol. We formally define the protocol, verify its functional correctness, and provide a security proof. Furthermore, we conduct an empirical analysis to evaluate the performance and robustness of the Pythagorean Triples Encryption (PTE) cipher within this operational context.

The primary purpose of the proposed protocol is to enable secure inter-organizational communication, ensuring that information exchanged between entities remains protected from unauthorized access or manipulation. The protocol is designed to guarantee the fundamental properties of secure communication - secrecy, authentication, and integrity - thereby addressing the critical requirements of confidentiality and trust in distributed systems. By establishing these guarantees, the framework facilitates reliable communication between clients and servers, which is essential in environments where sensitive or mission-critical data is transferred.

The protocol highlights the practical application of a novel Pythagorean Triplet-based encryption algorithm proposed in [9, 10]. It serves as a foundation for exploring alternative mathematical structures in secure protocol design. An additional property of the proposed protocol is its ease of configuration on both server and client systems. The proposed protocol will be evaluated on the two essential pillars of correctness and security.

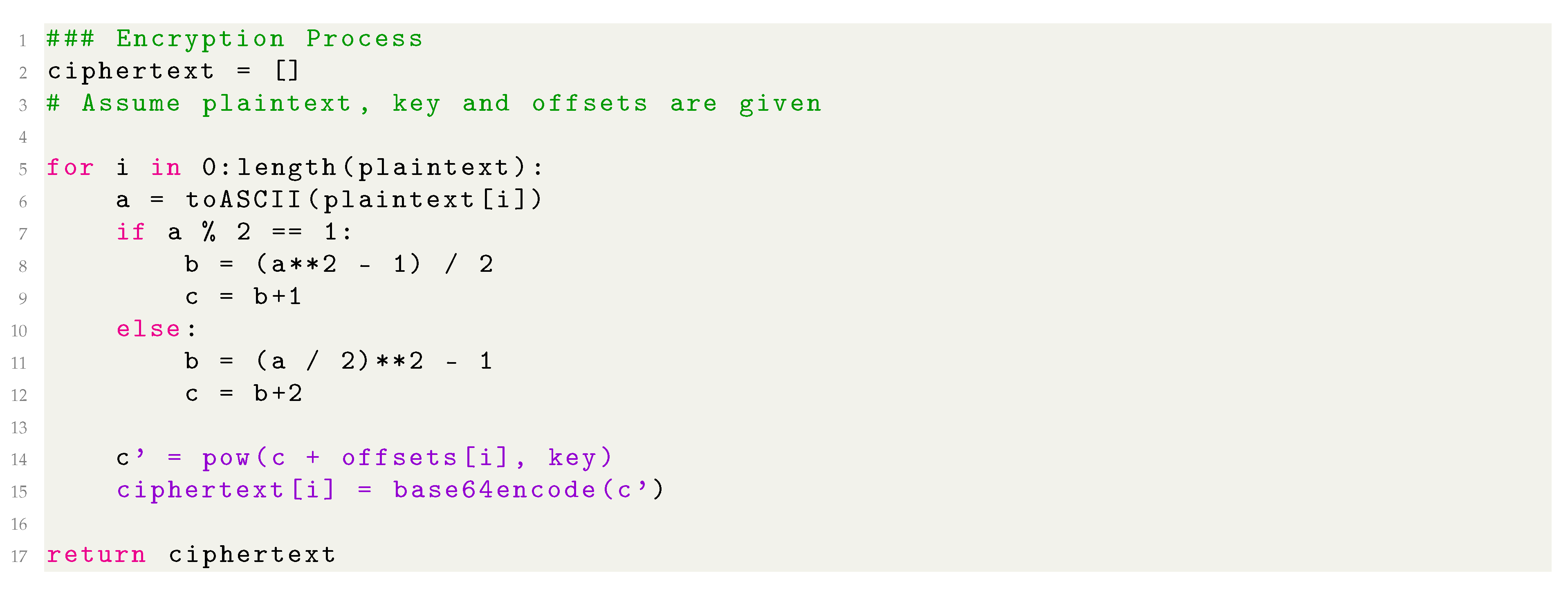

3.2. Pythagorean Triples Encryption

The algorithm proposed in Section 2.11 has the following characteristics:

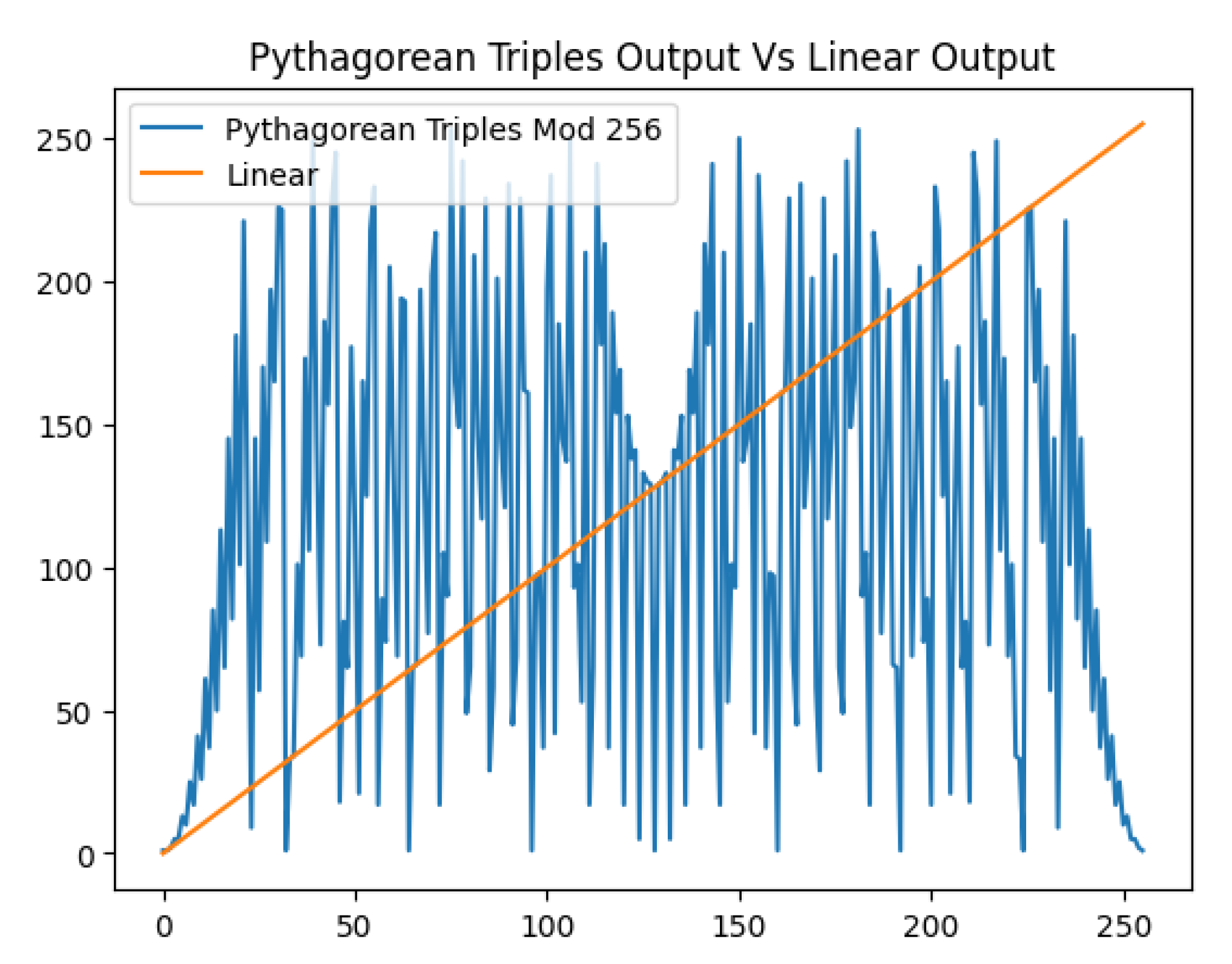

Non-Linearity: The derivation of the Pythagorean Triplet and the exponentiation by the key are non-linear operations and can hinder linear cryptanalysis.

Inherent Support For IVs: The algorithm inherently supports Initialization Vectors (IVs) through its use of offsets. Moreover, performing the addition before the exponentiation guarantees non-linearity with respect to the offsets/IV.

Output Differs From Input: Both inputs to the system, the key and the plaintext, can be represented as 8-bit numbers. However, due to the exponentiation, the output is not bounded by the maximum 8-bit value of 255, which causes representation problems.

Implementation Discrepancies: Due to the exponentiation, even bounded inputs can produce very large values. In some cases, decoding these large numbers failed, causing decryption to fail and affecting the functional accuracy of the algorithm.
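The representation problem can be made concrete with a short Python sketch. It assumes, purely for illustration, that an 8-bit value is raised to an 8-bit exponent; this is a simplification and not the exact PTE formula of Section 2.11. The hypothetical helper `max_power_bits` reports how many bits the largest such result needs.

```python
def max_power_bits(bits: int = 8) -> int:
    """Bit length of the largest x**k with x and k drawn from [0, 2**bits)."""
    top = (1 << bits) - 1
    # For bases >= 1, x**k grows with both x and k, so the extreme case is
    # the largest base raised to the largest exponent.
    return (top ** top).bit_length()


# Both inputs fit in 8 bits, yet the largest result needs about two thousand bits.
print(max_power_bits(8))
```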

In light of the above characteristics, a formal specification for encryption is needed: one that retains the inherent support for salting [42] and non-linearity, but that also guarantees an 8-bit unsigned integer output for every 8-bit unsigned integer input, i.e., the size of the output equals that of the input.

A suitable solution to the above problem is to adapt the Pythagorean Triple Encryption algorithm for use within an addition-rotation-XOR (ARX) Feistel cipher structure [43, 44]. This adaptation not only addresses all of the above requirements but also improves security in the following respects:

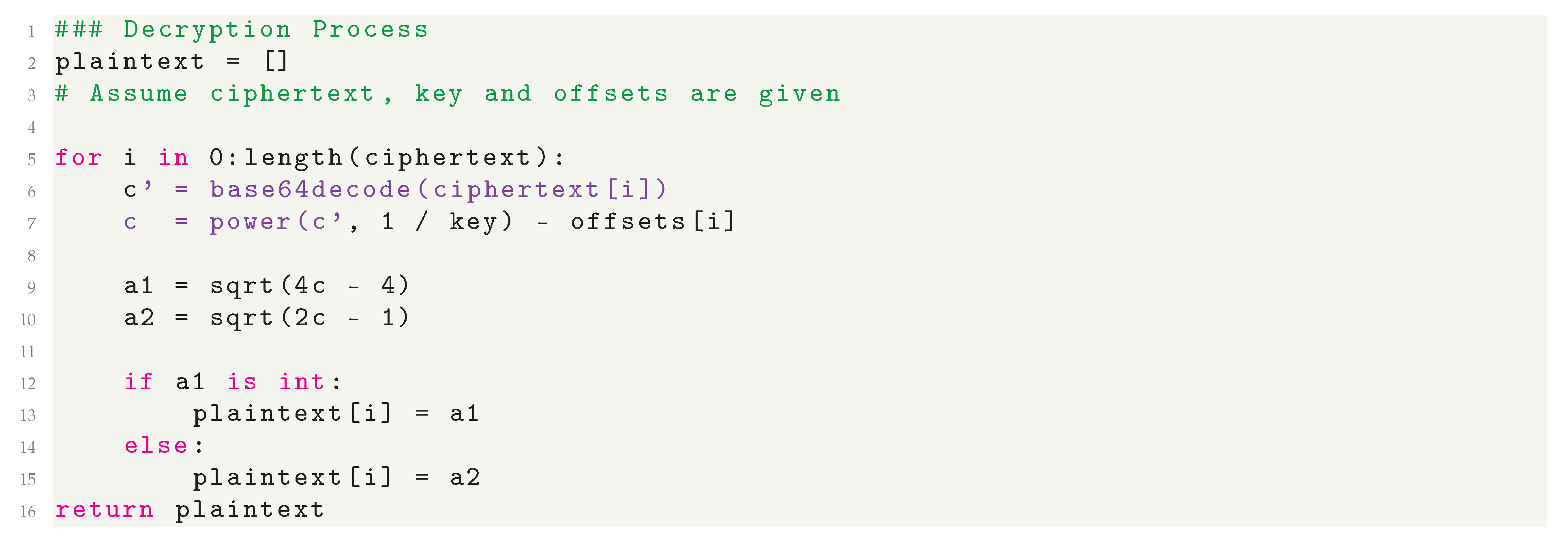

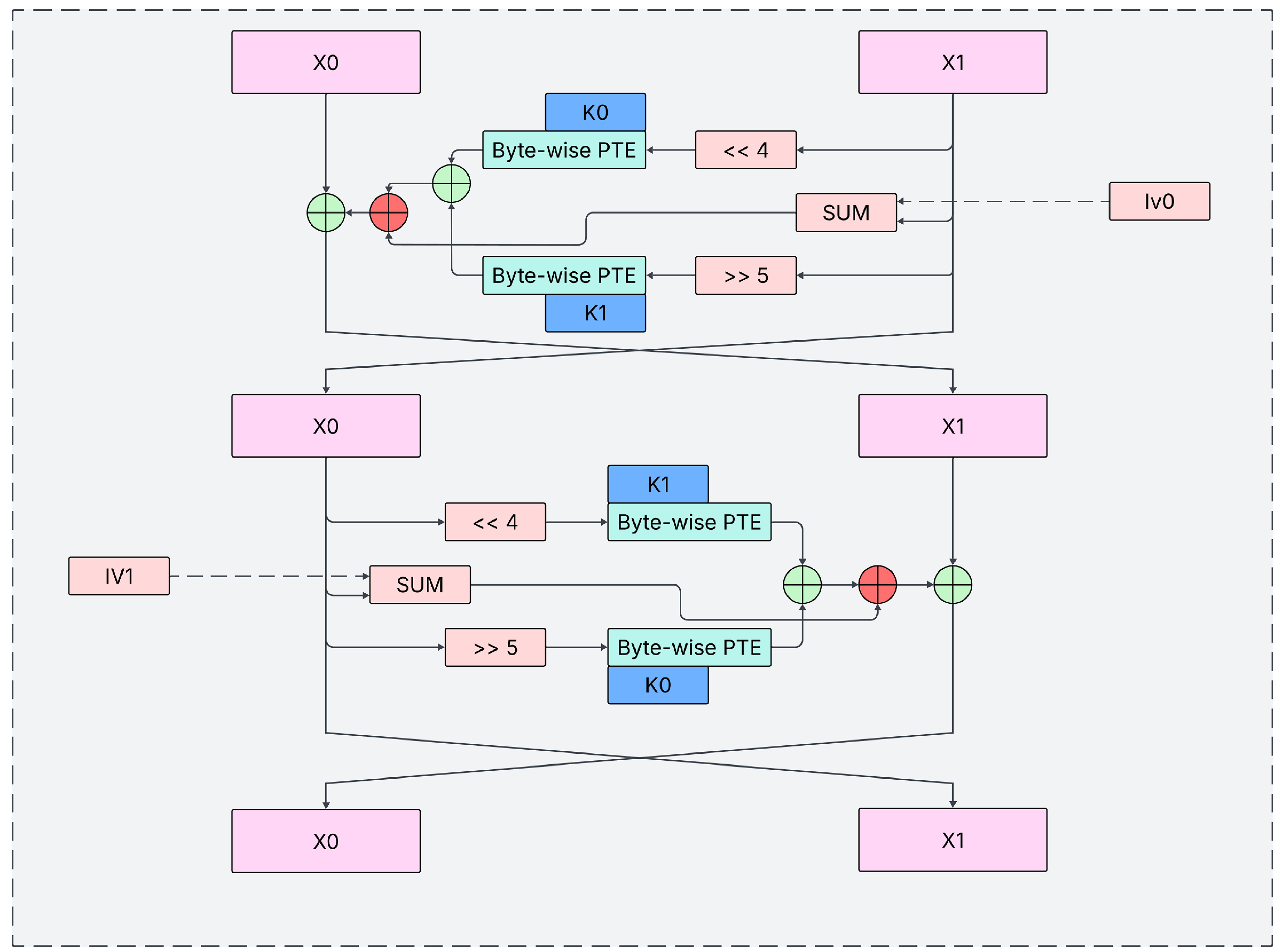

We propose the following 128-bit Pythagorean ARX (P-ARX) structure. It is inspired by TEA [46], a lightweight cipher with a 64-bit block and a 128-bit key; our design extends the block size to 128 bits for enhanced security.

As can be seen in Figure 2, the structure consists of simple operations such as rotation and XOR, together with the focus of this paper, Pythagorean Triplet Encryption.

One half of the block is branched into three branches, two of which are non-linear; the branches are XORed back into one, and the result is XORed with the other half. In the first branch, the half is rotated by 4 bits to the left and encrypted byte-wise with the f function using the first half of the key. The second branch is similar, except that the half is rotated by 5 bits to the right and encrypted with the second half of the key. The third branch incorporates the IV. This half "round" of encryption is then repeated on the other half of the block with the key halves swapped, resulting in one full round of encryption. As with TEA, we suggest 32 full encryption rounds for the best security-performance tradeoff. The f function is defined as follows:

Note that the hypotenuse term in f denotes the hypotenuse of the Pythagorean Triplet of a and c, which can be calculated using the formulae in Section 2.11. Also note that the f function has two important attributes: not only is it bounded to the range [0, 255], but it also constitutes a non-linear substitution, which is crucial for confusion and, together with the rotations, diffusion in modern encryption algorithms.

Thus, one full encryption round is defined as:

Where the byte-wise operator denotes byte-wise application of f as described above, and the IV term denotes half of the initialization vector. Note that XOR takes precedence over addition in the above equation; parentheses were omitted for compatibility with both single- and double-column templates.

Also note that the key and block halves in the round equation are 64-bit blocks of the original key and ciphertext, whereas the f function is defined on 8-bit blocks; hence the need for byte-wise application. The red o-plus symbols denote addition modulo 2^n, where n is the size of the operands in bits, which is another non-linear operation.
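The half-round structure described above can be sketched in Python. This is a minimal sketch under explicit assumptions, not the reference implementation: the 128-bit block and key are each split into two 64-bit halves, the three branches are combined by XOR as the prose describes, each 64-bit IV half is mixed in by addition modulo 2^64, and `toy_f` is a hypothetical placeholder for f (loosely modeled on the hypotenuse m^2 + n^2 of Euclid's formula), since the exact f function is not reproduced here. All function names are illustrative.

```python
MASK64 = (1 << 64) - 1


def rotl64(x: int, r: int) -> int:
    """Rotate a 64-bit word left by r bits."""
    return ((x << r) | (x >> (64 - r))) & MASK64


def rotr64(x: int, r: int) -> int:
    """Rotate a 64-bit word right by r bits."""
    return ((x >> r) | (x << (64 - r))) & MASK64


def toy_f(byte: int, key_byte: int) -> int:
    """Hypothetical stand-in for f: hypotenuse m^2 + n^2 with m = s + 1, n = s, mod 256."""
    s = byte + key_byte  # 9-bit sum of a data byte and a key byte
    return ((s + 1) ** 2 + s ** 2) & 0xFF


def f_bytewise(x: int, key: int) -> int:
    """Apply toy_f to each of the 8 bytes of x with the matching byte of key."""
    out = 0
    for i in range(8):
        shift = 8 * i
        out |= toy_f((x >> shift) & 0xFF, (key >> shift) & 0xFF) << shift
    return out


def branch(x: int, k_a: int, k_b: int, iv_half: int) -> int:
    """Combine the three branches computed from one half of the block."""
    b1 = f_bytewise(rotl64(x, 4), k_a)  # non-linear branch, rotated left by 4
    b2 = f_bytewise(rotr64(x, 5), k_b)  # non-linear branch, rotated right by 5
    b3 = (x + iv_half) & MASK64         # IV branch, addition modulo 2^64
    return b1 ^ b2 ^ b3


def encrypt_block(left, right, k0, k1, iv0, iv1, rounds=32):
    for _ in range(rounds):
        left ^= branch(right, k0, k1, iv0)  # first half-round
        right ^= branch(left, k1, k0, iv1)  # second half-round, swapped key halves
    return left, right


def decrypt_block(left, right, k0, k1, iv0, iv1, rounds=32):
    for _ in range(rounds):
        right ^= branch(left, k1, k0, iv1)  # undo the second half-round
        left ^= branch(right, k0, k1, iv0)  # undo the first half-round
    return left, right


# Usage: a round trip with arbitrary 64-bit halves.
plain = (0x0123456789ABCDEF, 0xFEDCBA9876543210)
key = (0x0F1E2D3C4B5A6978, 0x8796A5B4C3D2E1F0)
iv = (0x1111111111111111, 0x2222222222222222)
cipher = encrypt_block(*plain, *key, *iv)
assert decrypt_block(*cipher, *key, *iv) == plain
```

Because decryption only replays the half-rounds in reverse order, the round trip succeeds for any choice of f; this is the Feistel property that makes the byte-wise substitution free to be non-invertible.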

3.4. Protocol Overview

To ensure that all security objectives are met, the protocol divides the client-server connection into two distinct phases: a Handshake, which establishes an authenticated and encrypted channel, and Data Transfer, which conveys the application data securely and reliably [28, 36, 37]. Additionally, depending on client and server needs, a shorter exchange can take place at the end of the transaction to generate a cryptographically valid summary of the transaction for irrefutability.

3.4.1. Handshake

The handshake is a four-message exchange that negotiates the cryptographic parameters for the secure session, such as encryption keys, Diffie-Hellman public values, and random numbers used once (nonces), and authenticates the server and, optionally, the client.

The handshake protocol proceeds as follows:

Client Hello: The client initiates the connection by sending a Client Hello message, containing a client-generated random nonce and a unique connection identifier.

Server Hello: The server responds with a Server Hello message, presenting its certificate for authentication, its Diffie-Hellman public key, and a signature over all handshake parameters to prove possession of the corresponding private key. This message may also signal a request for client authentication.

Client Handshake Finish: The client verifies the server’s certificate and signature. Upon successful validation, it generates a pre-master secret and sends its own Diffie-Hellman public key to the server. Optionally, it may include a random challenge for the server to prove real-time key ownership and, if requested, its own credentials for client authentication.

Server Handshake Finish: The server finalizes the handshake by responding to the client’s challenge (if present) and sending necessary acknowledgments. Upon completion, both parties derive the session keys.
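The four-message order above can be enforced with a small state tracker. The following Python sketch is illustrative only: the message type names and the `HandshakeTracker` class are hypothetical, and any deviation from the expected order raises an exception, in line with the terminate-on-error policy of Section 3.7.

```python
HANDSHAKE_ORDER = (
    ("client", "client_hello"),
    ("server", "server_hello"),
    ("client", "client_handshake_finish"),
    ("server", "server_handshake_finish"),
)


class HandshakeError(Exception):
    """Raised on any out-of-order message; the connection is then terminated."""


class HandshakeTracker:
    """Tracks progress through the four-message handshake."""

    def __init__(self) -> None:
        self.step = 0

    @property
    def complete(self) -> bool:
        return self.step == len(HANDSHAKE_ORDER)

    def accept(self, sender: str, message_type: str) -> None:
        if self.complete:
            raise HandshakeError("handshake already finished")
        expected = HANDSHAKE_ORDER[self.step]
        if (sender, message_type) != expected:
            raise HandshakeError(f"expected {expected}, got {(sender, message_type)}")
        self.step += 1


tracker = HandshakeTracker()
for sender, message_type in HANDSHAKE_ORDER:
    tracker.accept(sender, message_type)
assert tracker.complete  # both parties may now derive the session keys
```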

3.4.2. Secure Data Transfer

Following a successful handshake, the data transfer phase commences. The underlying secure channel supports full- or half-duplex communication, as dictated by the configuration of the transport-layer socket. This phase involves the exchange of packets containing encrypted payloads, Hash-based Message Authentication Codes (HMACs) for integrity, and timestamps.

The underlying information to be transferred is packaged in secure, structured JSON strings [39]. The package starts with the Global ID for identification. The message itself is then encoded to a string using Base64 encoding [40] and protected by an HMAC computed with a key securely derived from the pre-master secret. As a principle, the HMAC should cover the message, the Global ID and the timestamp in order to mitigate replay attacks. Note that the client and server randoms are omitted from the HMAC input because they already determine the encryption and MAC keys; a message replayed into another session therefore produces a different HMAC value, which enables the detection of replay attacks.
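The packet layout described above can be sketched as follows. This is a minimal sketch under stated assumptions: the JSON field names, the 32-byte tag, the 8-byte little-endian timestamp and the length prefix on the message are hypothetical choices, and the MAC is computed with keyed BLAKE2b (the MAC algorithm named in Section 3.5.1) through Python's `hashlib`. Encryption of the payload is out of scope, so `ciphertext` is taken as given.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional

TAG_SIZE = 32  # bytes; assumed BLAKE2b digest size


def compute_tag(mac_key: bytes, message: bytes, global_id: str, timestamp: int) -> bytes:
    """Keyed BLAKE2b over message, Global ID and timestamp (hypothetical layout)."""
    h = hashlib.blake2b(key=mac_key, digest_size=TAG_SIZE)
    h.update(len(message).to_bytes(8, "little"))  # fixes the message boundary
    h.update(message)
    h.update(global_id.encode("utf-8"))
    h.update(timestamp.to_bytes(8, "little"))
    return h.digest()


def build_packet(mac_key: bytes, ciphertext: bytes, global_id: str,
                 timestamp: Optional[int] = None) -> str:
    if timestamp is None:
        timestamp = int(time.time())
    tag = compute_tag(mac_key, ciphertext, global_id, timestamp)
    return json.dumps({
        "global_id": global_id,
        "message": base64.b64encode(ciphertext).decode("ascii"),
        "timestamp": timestamp,
        "hmac": base64.b64encode(tag).decode("ascii"),
    })


def open_packet(mac_key: bytes, packet: str) -> bytes:
    """Verify the tag and return the still-encrypted payload, or raise ValueError."""
    obj = json.loads(packet)
    ciphertext = base64.b64decode(obj["message"], validate=True)
    tag = base64.b64decode(obj["hmac"], validate=True)
    expected = compute_tag(mac_key, ciphertext, obj["global_id"], obj["timestamp"])
    if not hmac.compare_digest(tag, expected):  # constant-time comparison
        raise ValueError("integrity check failed; terminate the connection")
    return ciphertext
```

The length prefix is a design choice made here so that the boundary between the message and the Global ID cannot be shifted by an attacker; a fixed-length Global ID would serve the same purpose.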

3.4.3. Bi-Consensual Termination Stage

Secure connection termination is critical to prevent replay, spoofing, and denial-of-service attacks. An improper closure can lead to state de-synchronization, where one party believes a transaction is complete while the other does not, violating core security principles.

To mitigate this, the protocol implements a bi-consensual termination phase. Both parties must explicitly acknowledge a termination message, ensuring a synchronized end state. Optionally, this phase can generate a cryptographic proof of the transaction, providing irrefutability (non-repudiation) if required.

3.5. Protocol Component Structure

3.5.1. Algorithms

This subsection elaborates on the various algorithms used in the proposed protocol and how they relate to the fields proposed in Section 3.4.

For digital signatures, the well-established RSA-PSS [53] was chosen with a key size of 2048 bits, mainly for its ease of use in cryptographic implementations, with SHA-256 as the most versatile hash choice for digital signatures. For secure key exchange, the Diffie-Hellman algorithm was chosen, generating 2048-bit private keys, for the same reason as RSA-PSS. The certificates use the popular X.509 format [28]. For calculating MACs, the keyed BLAKE2b algorithm was chosen, as it turns integrity checking into a single-hash operation, compared with the two nested hash computations of HMAC. For encryption, the focus of this paper, the Pythagorean Triples Encryption ARX implementation described in Section 3.2 was chosen.

To adhere to stricter cryptographic standards, recommended practices such as salting in its various forms were incorporated. As stated in Section 3.2, Pythagorean Triples Encryption (PTE) supports a 128-bit IV field. BLAKE2b supports secure keyed hashing and salting by design. RSA-PSS [53] incorporates random padding by definition. Diffie-Hellman is used in such a way that fresh random keys are generated for every session.
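BLAKE2b's salting can be illustrated with Python's standard `hashlib`, whose `blake2b` constructor accepts a salt of up to 16 bytes and an optional key of up to 64 bytes. The sketch below, built around the hypothetical helper `salted_digest`, shows that identical input hashed under two random salts yields different digests, which is the property salting contributes; the 32-byte digest size is an assumption.

```python
import hashlib
import secrets


def salted_digest(data: bytes, salt: bytes, key: bytes = b"") -> bytes:
    """BLAKE2b digest with an explicit salt (<= 16 bytes) and optional key (<= 64 bytes)."""
    return hashlib.blake2b(data, digest_size=32, key=key, salt=salt).digest()


salt_a = secrets.token_bytes(16)
salt_b = secrets.token_bytes(16)
same_input = b"identical transcript bytes"

# Same salt, same digest: the construction is deterministic given the salt.
assert salted_digest(same_input, salt_a) == salted_digest(same_input, salt_a)
# Different salts: the digests differ, except with negligible probability.
print(salted_digest(same_input, salt_a) != salted_digest(same_input, salt_b))
```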

Every message can be seen as a collection of key-value pairs, making JSON a natural choice for structuring the messages internally. JSON's specification is well defined [54] and features a rather simple, context-free grammar that can be used to determine in finite time whether a string is valid JSON [54].

As JSON needs the internal objects to be serializable, all binary objects (excluding PEM encoded credentials) were encoded to ASCII strings using Base64 encoding for transmission and can be decoded easily at the receiver. This choice is favorable as it allows propagation of the messages in the protocol through older infrastructure which only supports ASCII.
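The Base64-in-JSON convention can be sketched with Python's standard `json` and `base64` modules. In this illustrative sketch, the helpers `to_wire` and `from_wire` and the field names are hypothetical; text values such as PEM-encoded certificates pass through unchanged, while `bytes` values are Base64-encoded. Because `json.dumps` escapes non-ASCII characters by default, the resulting string is pure ASCII.

```python
import base64
import json


def to_wire(fields: dict) -> str:
    """Serialize a message, Base64-encoding every bytes value."""
    encoded = {
        name: base64.b64encode(value).decode("ascii") if isinstance(value, bytes) else value
        for name, value in fields.items()
    }
    return json.dumps(encoded)


def from_wire(text: str, binary_fields: set) -> dict:
    """Parse a message and decode the fields known to carry binary data."""
    message = json.loads(text)
    for name in binary_fields:
        message[name] = base64.b64decode(message[name], validate=True)
    return message


hello = {"global_id": "conn-42", "client_random": bytes(range(32))}
wire = to_wire(hello)
assert wire.isascii()
assert from_wire(wire, {"client_random"}) == hello
```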

3.5.2. Message And Connection Parameters

As stated above, all binary objects that are not PEM-encoded strings should be Base64-encoded to ASCII strings and attached to the message. Two fields deserve particular attention: "Client Authentication" and "Challenge For Client", both Base64-encoded bytes, which indicate to the client that the server needs to authenticate it as per server policy. In addition, the symmetric and asymmetric "Challenge" fields may seem unique to this protocol.

If this flag is raised, the server provides a challenge in the server hello for the client to sign. The client should sign it with its private key and return the signature alongside its CA-signed certificate (see Table 2 and Table 3).

Server authentication is non-optional: the server provides its certificate and signature in the server hello itself, the latter acting as proof of access to the server's private key. However, to increase security, another round of challenges is proposed. The client should generate a random 128-byte string and send it to the server in the client handshake finish message. The server should sign the challenge with its private key and also encrypt it symmetrically using the pre-master key. The client should check both results; if both match expectations, the client has verified the identity of the server. Note that a nearly mirrored process can take place to authenticate the client if server policy dictates. Details about key derivation follow.

The protocol defines the following crucial variables and credentials for the communication:

Server Signature: The server signature should take into account parameters chosen by both client and server for increased security. Therefore, the server should sign the concatenation of the following variables encoded as bytes: client random, global ID, server random, Diffie-Hellman parameters, server Diffie-Hellman public key, server certificate and the timestamp. Note that the timestamp is converted to bytes in little-endian format and should match the timestamp sent in the server hello.

Client Signature: Similarly, the client should sign, with its private key, the concatenation of: client random, global ID, server random, challenge sent by the server, client certificate and the timestamp. The same notes as above apply here.
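The two signature inputs can be assembled as plain byte concatenations. The Python sketch below follows the field order listed above; the function names are hypothetical, and the 8-byte width of the little-endian timestamp is an assumption, since only the byte order is specified. The resulting bytes would then be signed with RSA-PSS and SHA-256, which is not shown here.

```python
TIMESTAMP_BYTES = 8  # assumed width; the text fixes only the little-endian order


def _timestamp_bytes(timestamp: int) -> bytes:
    return timestamp.to_bytes(TIMESTAMP_BYTES, "little")


def server_signature_input(client_random: bytes, global_id: bytes, server_random: bytes,
                           dh_parameters: bytes, server_dh_public: bytes,
                           server_certificate: bytes, timestamp: int) -> bytes:
    """Bytes covered by the server signature, in the order given in the text."""
    return b"".join([client_random, global_id, server_random, dh_parameters,
                     server_dh_public, server_certificate, _timestamp_bytes(timestamp)])


def client_signature_input(client_random: bytes, global_id: bytes, server_random: bytes,
                           server_challenge: bytes, client_certificate: bytes,
                           timestamp: int) -> bytes:
    """Bytes covered by the optional client signature."""
    return b"".join([client_random, global_id, server_random, server_challenge,
                     client_certificate, _timestamp_bytes(timestamp)])
```

Plain concatenation is unambiguous only when every field has a fixed or otherwise recoverable length; variable-length fields such as certificates may warrant length prefixes in an implementation.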

Pre-Master Secret: The pre-master secret is the direct result of the Diffie-Hellman key exchange.


Pre-Master Key: Even though the pre-master secret is ephemerally generated, to maintain consistency and incorporate the randoms and the timestamp, the actual key should be generated in the following way:

Where k is the pre-master secret and the remaining inputs are the client random, global ID and server random, respectively.


Encryption Key: Should be generated in the following way:

Where k is the pre-master key.


HMAC Key: Should be generated in the following way:

Where k is the pre-master key.


Initialization Vectors: Draw 8 pseudo-random bytes in the following way:

Where k is the pre-master key.

Note that the choice of 8-byte IVs (and 8-byte counters) differs from most other counter modes. The PTE algorithm is still under development, and details such as modes of operation will be developed in more depth in later research.

Read-Write Counters: To facilitate correct encryption and hash-based MACs, a connection has two 64-bit (8-byte) counters. The counters are named intuitively after the purpose they fulfill: server_read_client_write and client_read_server_write. This nomenclature was chosen because when the server sends a message and the client reads it (or vice versa), the same counter is incremented on both sides, making the process somewhat fool-proof to implement.
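The counter naming can be sketched as a small Python class. In this hypothetical `DirectionalCounters` sketch, the counters start at 0, the current value is used before incrementing, and it is encoded as 8 big-endian bytes; all three are assumptions, as the text fixes only the names and the 64-bit size. Exhausting a counter raises an error, which under Section 3.7 would terminate the connection.

```python
COUNTER_LIMIT = 1 << 64  # 64-bit counters


class DirectionalCounters:
    """Per-connection counters named after the direction they track."""

    def __init__(self, role: str):
        if role not in ("client", "server"):
            raise ValueError("role must be 'client' or 'server'")
        self.role = role
        self.values = {"server_read_client_write": 0, "client_read_server_write": 0}

    def _counter_name(self, sending: bool) -> str:
        # The client writes (and the server reads) on server_read_client_write;
        # the server writes (and the client reads) on client_read_server_write.
        client_is_writer = (self.role == "client") == sending
        return "server_read_client_write" if client_is_writer else "client_read_server_write"

    def next(self, sending: bool) -> bytes:
        """Return the current counter as 8 bytes, then increment it."""
        name = self._counter_name(sending)
        value = self.values[name]
        if value >= COUNTER_LIMIT:
            raise OverflowError("counter exhausted; the connection must be terminated")
        self.values[name] = value + 1
        return value.to_bytes(8, "big")


client, server = DirectionalCounters("client"), DirectionalCounters("server")
for _ in range(3):
    # Client writes, server reads: both sides advance the same counter.
    assert client.next(sending=True) == server.next(sending=False)
assert server.next(sending=True) == client.next(sending=False) == bytes(8)
```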

3.6. Protocol Implementation

3.6.1. Client/Server Behavior For Transfer Of Short Data

This subsection defines the steps that the client and server must complete after a successful handshake in order to securely send one block of data, limited to 19600 bytes. Immediately after establishing the pre-master secret, both client and server should derive the two separate keys for encryption and integrity checking, as discussed in Section 3.5.2.

The sender should adhere to the following steps:

Note that if the whole message fits within the maximum size, the last-message flag should always be 1.

3.6.2. Client/Server Behavior For Transfer Of Lengthy Data

All messages in a secure protocol should have a maximum size. This prevents attacks known as "data bombs": attacks that exploit compression, sending small payloads that decompress into very large files and render the server unusable. Even though the proposed protocol does not feature compression, an attacker could replace a short but correct message with a large but corrupted one, increasing memory usage at the victim; in extreme cases, this can open an opportunity for denial-of-service attacks, even if the attacker has no cryptographic influence.

It was thus decided to incorporate message limits in the proposed protocol. The maximum length of any payload is m = 19600 bytes. Therefore, if the application layer sends a message longer than m bytes, the protocol should split it into blocks of m bytes and increment the counter for each block, raising the last-block flag only on the final block.

The receiving side should process each block exactly as stated in Section 3.6.1. However, while the last-block flag is 0, it should append every incoming block to a buffer, supplying the application layer with the whole buffer after processing the last block.
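The splitting and reassembly rules can be sketched in Python. This is an illustrative sketch: `split_into_blocks` and `Reassembler` are hypothetical names, m = 19600 is taken from the per-block limit of Section 3.6.1, and sending an empty message as a single empty final block is an assumption. Per-block encryption, MAC computation and counter increments are left to the caller.

```python
from typing import Iterator, Optional, Tuple

MAX_PAYLOAD = 19600  # m, the per-block limit from Section 3.6.1


def split_into_blocks(data: bytes, m: int = MAX_PAYLOAD) -> Iterator[Tuple[bytes, int]]:
    """Yield (block, last_flag) pairs; last_flag is 1 only on the final block."""
    if not data:
        yield b"", 1  # assumption: an empty message is one empty, final block
        return
    for start in range(0, len(data), m):
        yield data[start:start + m], int(start + m >= len(data))


class Reassembler:
    """Receiver-side buffer that releases a message once its last block arrives."""

    def __init__(self) -> None:
        self._buffer = bytearray()

    def feed(self, block: bytes, last_flag: int) -> Optional[bytes]:
        if len(block) > MAX_PAYLOAD:
            raise ValueError("block exceeds m bytes; terminate the connection")
        self._buffer += block
        if last_flag == 1:
            message = bytes(self._buffer)
            self._buffer.clear()
            return message
        return None


data = bytes(range(256)) * 200  # 51200 bytes
blocks = list(split_into_blocks(data))
assert [len(block) for block, _ in blocks] == [19600, 19600, 12000]
assert [flag for _, flag in blocks] == [0, 0, 1]
```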

3.6.3. Client/Server Behavior For Termination Of Connection

When the client wants to terminate the connection because the transaction is complete, it should send the termination message specified in Table 6. On receiving this message, the server should immediately flush its send buffer while updating its summary. The server should then sign the final summary (see Section 3.6.5) with its private key, send it to the client, and close the connection from its side.

The client should verify the signature using the server's public key. If the signature matches the client-side summary, the client can mark the transaction as successful and relay the success, including the signature provided by the server, to the application layer. Any other outcome that does not consist of a valid signature on this specific message should be relayed as a failure, and the client should discard all the messages it has stored, starting a new transaction if needed.

If implemented correctly, including buffering messages before the final verification, the protocol can guarantee irrefutability even if the server tries to manipulate the information on its side. If, in the future, a question about the existence of the conversation arises, the client can produce the messages sent to and from it, recompute the final summary, and check it against the signature provided by the server (see Section 3.6.5). Note that the three summarizers used for this purpose are unkeyed, which removes the need to store cryptographic keys and thus does not endanger the guarantee of backward secrecy. To mitigate the determinism of the resulting digests, incorporating the salt functionality provided by BLAKE2b is strongly recommended.

3.6.4. Operational Auxiliary Requirements

The protocol is implemented using Python’s low-level Socket API over TCP. While TCP guarantees chronological order, it does not preserve message boundaries, risking message coalescence. To solve this, the standard practice of prefixing each JSON-formatted message with its length is adopted. This ensures reliable parsing without compromising the protocol’s security or full-duplex design. This is considered an implementation detail separate from the protocol’s theoretical security.
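The length-prefix framing can be sketched with Python's standard `socket` and `struct` modules. This is a minimal sketch: the 4-byte big-endian prefix, the default limit and the helper names are assumptions, not details fixed by the protocol. The receiver checks the declared length against a limit before reading the body, which is also where the 6000-byte handshake limit of Section 3.8.1 could be enforced.

```python
import socket
import struct

HEADER = struct.Struct("!I")  # assumed 4-byte big-endian length prefix
DEFAULT_LIMIT = 1 << 20       # hypothetical default upper bound per message


def send_message(sock: socket.socket, payload: bytes) -> None:
    """Prefix the payload with its length and send it in full."""
    sock.sendall(HEADER.pack(len(payload)) + payload)


def _recv_exact(sock: socket.socket, n: int) -> bytes:
    """Read exactly n bytes, since recv() may return fewer."""
    chunks = []
    while n > 0:
        chunk = sock.recv(n)
        if not chunk:
            raise ConnectionError("peer closed the connection mid-message")
        chunks.append(chunk)
        n -= len(chunk)
    return b"".join(chunks)


def recv_message(sock: socket.socket, limit: int = DEFAULT_LIMIT) -> bytes:
    """Read one framed message, rejecting oversized declared lengths."""
    (length,) = HEADER.unpack(_recv_exact(sock, HEADER.size))
    if length > limit:
        raise ValueError("declared length exceeds limit; terminate the connection")
    return _recv_exact(sock, length)


left, right = socket.socketpair()
try:
    send_message(left, b'{"type": "client_hello"}')
    send_message(left, b'{"type": "ping"}')
    # Two back-to-back sends are recovered as two separate messages.
    assert recv_message(right) == b'{"type": "client_hello"}'
    assert recv_message(right) == b'{"type": "ping"}'
finally:
    left.close()
    right.close()
```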

3.6.5. Cryptographic Hashing and Message Digests

Secure protocols commonly employ cryptographic hash functions not only for data protection but also to verify protocol state integrity. For instance, TLS uses a hash of the handshake transcript to ensure that parameter negotiation has not been altered by a Man-in-the-Middle (MITM) adversary [28].

The proposed protocol utilizes cryptographic hashing in five distinct roles throughout a connection’s lifetime. A hash function is abstracted as a "summarizer" object with two methods: update(), which processes input bytes to update an internal state, and digest(), which finalizes and returns the hash value.
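The summarizer abstraction maps directly onto Python's `hashlib` objects, which already expose `update()` and `digest()`. The thin wrapper below is a hypothetical sketch: it uses unkeyed BLAKE2b with an optional salt and a 32-byte digest, both assumptions. Note that in `hashlib`, calling `digest()` does not consume the running state, so a summarizer can be inspected and then keep absorbing messages.

```python
import hashlib


class Summarizer:
    """Incremental, unkeyed hash over a stream of protocol messages."""

    def __init__(self, salt: bytes = b""):
        self._state = hashlib.blake2b(digest_size=32, salt=salt)

    def update(self, data: bytes) -> None:
        self._state.update(data)

    def digest(self) -> bytes:
        return self._state.digest()


# One summarizer per transcript, as in the non-repudiation role.
transcripts = {name: Summarizer() for name in ("handshake", "client_write", "server_write")}
transcripts["handshake"].update(b"client hello")
transcripts["handshake"].update(b"server hello")
# Incremental updates equal a one-shot hash of the concatenated messages.
assert transcripts["handshake"].digest() == hashlib.blake2b(
    b"client hello" + b"server hello", digest_size=32).digest()
```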

The five applications are as follows:

Server Authentication: The server signs a hash of the connection parameters with its private key. This non-optional step, detailed in Section 3.4.1, proves that the server controls the private key corresponding to its certificate. Verification failure results in connection termination.

Optional Client Challenge: The client may optionally request the server to sign a challenge, combined with the connection parameters (Section 3.4.1).

Client Authentication (Policy-Dependent): If mandated by server policy, the client must sign the connection parameters, often including a server-provided challenge (Section 3.4.1).

Data Integrity (HMAC): All data blocks transferred post-handshake are accompanied by a Hash-based Message Authentication Code (HMAC), a keyed cryptographic digest ensuring integrity and authenticity.

Non-Repudiation Digest: To provide irrefutability (Section 3.4.3 and Section 3.6.3), three non-keyed hash computations are maintained:

Handshake Transcript: All messages exchanged during the handshake.

Client-Write Transcript: All messages sent by the client after the handshake.

Server-Write Transcript: All messages sent by the server after the handshake.

A final, composite digest for non-repudiation is computed as:

This digest is used during the termination phase, as elaborated in Section 3.6.3, to cryptographically bind the entire session.

3.7. Error Characterization and Handling

This subsection characterizes errors that may occur during both normal operation and under adversarial conditions. It enumerates the probable causes for these errors and specifies the protocol’s mandated response actions.

As established in previous sections, a secure connection consists of three phases: the handshake, encrypted data transfer, and consensual termination. During development, it was observed that when both communicating parties cooperate, the probability of an error is negligible. However, in the presence of an active adversary attempting to compromise the communication, distinct and detectable errors manifest, particularly within cryptographic credentials. This behavior is a desirable property of a robust security protocol.

Based on these observations, the protocol mandates that upon the detection of any error, the connection must be terminated immediately without attempting to categorize its severity. This design decision is supported by the reliability guarantees of the underlying TCP/IP network layer [55], which ensures the integrity of data delivery between cooperating endpoints. Consequently, any error that occurs is, with high probability, an indication of adversarial interference, necessitating immediate connection termination to preserve security.

The protocol integrates multiple subsystems and algorithms, including JSON [54], Base64 encoding [40], socket communication, and Message Authentication Codes (MACs), each representing a potential point of failure or ambiguity during message processing, especially under attack. The following error categories are defined:

Parsing Errors: Occur when the incoming message violates the structural syntax of JSON, rendering it invalid.

Credential Mismatch: Occurs when cryptographic credentials, such as HMACs, digital signatures, or challenge values, fail to validate against the current connection state.

Malformed Message Errors: Occur when an incoming message is syntactically valid JSON but contains semantic malformations. These include key-value mismatches or internal Base64 decoding errors. The implementation requires messages to contain exactly the set of keys defined in the specification. This strictness enhances security by preventing attackers from introducing extraneous data that could potentially aid in forgery attacks. The presence of undefined keys or values constitutes a malformed message and results in connection termination.

Message Withholding: Occurs when an adversary intentionally withholds crucial messages (e.g., termination messages), potentially causing state desynchronization between the client and server.

Network Layer Errors: The protocol operates over TCP/IP, implemented via Python’s socket API. While TCP provides reliability, connectivity issues can manifest as exceptions raised by the socket module, such as ConnectionRefusedError, ConnectionResetError, ConnectionAbortedError, or TimeoutError. As security is paramount, any network-layer error results in the immediate termination of the secure connection.
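The parsing and malformed-message rules above can be sketched as one strict parser. In this illustrative Python sketch, `parse_strict`, `ProtocolError` and `must_terminate` are hypothetical names, and the expected and binary key sets are supplied by the caller (the binary keys must be a subset of the expected keys). The network exceptions listed above are Python built-ins, all subclasses of `OSError`.

```python
import base64
import binascii
import json

# Built-in socket-level exceptions named above (all are OSError subclasses).
NETWORK_ERRORS = (ConnectionRefusedError, ConnectionResetError,
                  ConnectionAbortedError, TimeoutError)


class ProtocolError(Exception):
    """Parsing, malformed-message or credential error; always fatal."""


def parse_strict(raw: bytes, expected_keys: frozenset, binary_keys: frozenset) -> dict:
    """Parse one message, enforcing the exact key set and strict Base64 fields."""
    try:
        message = json.loads(raw)
    except (json.JSONDecodeError, UnicodeDecodeError) as exc:
        raise ProtocolError("parsing error") from exc
    if not isinstance(message, dict) or set(message) != expected_keys:
        raise ProtocolError("malformed message: key set differs from specification")
    for key in binary_keys:
        value = message[key]
        if not isinstance(value, str):
            raise ProtocolError(f"malformed message: {key} is not a string")
        try:
            message[key] = base64.b64decode(value, validate=True)
        except binascii.Error as exc:
            raise ProtocolError(f"malformed message: bad Base64 in {key}") from exc
    return message


def must_terminate(exc: BaseException) -> bool:
    """Every protocol or network-layer error ends the connection, without grading severity."""
    return isinstance(exc, (ProtocolError,) + NETWORK_ERRORS)
```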

The protocol requires bi-consensual termination. The initiating party must supply an error code within the termination message. A code of 0 (sent only by the client to indicate a normal end of transaction) triggers the standard signature process outlined in Section 3.6.3. Any non-zero error code indicates a critical fault, prompting immediate termination after a simple acknowledgment exchange.

Table 7 delineates the potential termination causes, their corresponding error codes, and the required response from all parties. For production environments, it may be more secure to use a generic non-zero code to avoid leaking diagnostic information to a potential attacker.

3.8. Analysis Of Correctness

All algorithms in this protocol are well defined, tested and refined, with the exception of Pythagorean Triples Encryption, which is being tested through this protocol. Hence, only an academic analysis is presented, supported by evidence wherever possible.

3.8.1. Data Encoding And Structuring Schemes

The protocol relies on two fundamental encoding standards to structure and transmit data: JSON for serialization and Base64 for binary-to-text encoding. A functional analysis confirms their suitability for this domain.

The primary role of JSON, as defined in RFC 8259 [39], is to serialize structured objects, composed of fundamental data types, into a text-based format for storage or transmission. This serialized data can later be deserialized, often on a different machine, to reconstruct the original object. Academically, the JSON specification is regarded as exceptionally well-defined, utilizing an unambiguous context-free grammar. This precision allows a parser to definitively determine whether a given string is valid JSON and to identify the location of any errors, a level of diagnostic clarity not always available in alternatives like YAML [56]. This contribution is two-fold: it provides a clear structure for protocol packets and enforces rules that prevent ambiguity, which is critical for security-prioritized applications.

However, a key implementational caveat of JSON in security contexts is the risk of denial-of-service (DoS) attacks via maliciously crafted input. To mitigate this, the protocol enforces a strict 6000-byte limit on every handshake message; any message exceeding it causes the connection to be terminated. This limit is derived from the typical sizes of the handshake components, namely a certificate (about 2000 bytes), a Diffie-Hellman public key (about 600 bytes), exchange parameters (about 1000 bytes), and a signature (about 300 bytes), which sum to approximately 4000 bytes. A 50 percent safety factor is applied to this sum to arrive at the final, conservative limit of 6000 bytes.
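The size budget can be written out explicitly; S (the estimated handshake message size) and L_max (the enforced limit) are symbols introduced here for illustration only:

```latex
\begin{aligned}
S &\approx 2000 + 600 + 1000 + 300 = 3900 \approx 4000\ \text{bytes},\\
L_{\max} &= 1.5 \times 4000 = 6000\ \text{bytes}.
\end{aligned}
```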

Since JSON is limited to fundamental types, a mechanism for encoding binary data is required. Base64 encoding fulfills this role by converting byte-strings into ASCII character strings, which can be safely embedded in JSON fields and decoded after transfer. Although JSON supports UTF-8, Base64 was selected for its ubiquity on the internet and its robustness in ensuring binary data can traverse older, ASCII-restrictive systems without corruption.

It is noted that while complex extensions like JSON Schema exist, the protocol’s use of both JSON and Base64 is basic, minimizing the likelihood of complications or vulnerabilities arising from more advanced features of these specifications.

3.8.2. Output Structure And Cryptographic Hashing

The structure adheres to modern layered cryptographic paradigms, supporting clear separation between encryption, MAC authentication, and digital signature layers. This modularity aligns with structural principles in secure protocol design, and enables independent key management for each layer, reducing key reuse risks and improving resistance to compromise.

In the proposed protocol, the pre-master key and the encryption and HMAC keys are derived from the pre-master secret using a cryptographic one-way function. As a result, compromising a derived key does not reveal the secret it was derived from, so a breach in a lower layer does not propagate to upper layers and compromise the entire connection.

3.8.3. Functional Analysis of PKI Components

The proposed system integrates classical PKI components, including certificate authorities (CAs), digital certificates, public/private key pairs, and a revocation mechanism into a lightweight layered encryption model. These elements are not implemented as infrastructure-level services but as logic components in the protocol flow, allowing flexible emulation of PKI functionality without dependency on external trust anchors. The design supports session-based key exchange, ephemeral keys, and signer authentication using modular primitives.

3.9. Analysis Of Security

3.9.1. Secure Design Philosophy

From a systemic standpoint, the protocol achieves layered security through sequential application of symmetric encryption, MAC authentication, and digital signatures. Each layer targets a distinct threat vector: ciphertext confidentiality, message integrity, and sender authenticity, respectively. Key separation, session isolation, and optional IV configuration further enhance security posture. While formal reductions are not included, the architecture draws from secure design patterns used in TLS-like protocols, offering a robust structure with practical safeguards against known cryptographic attacks.

3.9.2. Security Aspects of Encryption Based on Pythagorean Triples

The proposed encryption algorithm offers practical security properties rooted in its structure. First, functional correctness has been empirically validated by testing over one million random (plaintext, key) pairs, for which decryption consistently yielded the original plaintext. This is consistent with the invertibility that the Feistel structure guarantees by construction, independently of the round function, across the full ASCII space. Furthermore, all operations are performed modulo 256 at the byte level, ensuring bounded output and compatibility with standard systems without overflow or data corruption.

The substitution function f, constructed using Pythagorean Triplet logic and enhanced via modular arithmetic and XOR operations, introduces strong non-linearity and high diffusion, which are critical traits for resisting linear and differential cryptanalysis. Additionally, the substitution table demonstrates a low collision rate over printable ASCII characters, particularly when used in combination with initialization vectors or session-specific keys. These properties collectively prevent predictable ciphertext patterns and enhance entropy.

Finally, the algorithm’s layered architecture supports modular integration of encryption, authentication (MAC), and digital signature functionalities. This separation of concerns allows for independent key management, reduces key reuse risks, and improves resilience against compromise by isolating cryptographic responsibilities across layers. Although formal proofs like IND-CPA security are not provided here, the design reflects established principles in secure symmetric cipher construction and offers robustness suitable for practical deployments.

3.9.3. Statistical Cryptanalysis of Pythagorean Triples Encryption

The block cipher proposed in Section 3.2 was subjected to basic statistical and cryptanalytic tests. A full cryptanalysis will be performed in later research.

A series of basic tests was conducted for an initial analysis of the algorithm, mostly based on the NIST statistical test suite [57]:

Mono-bit Frequency Test: To check if there exists a bias between the number of ones and zeros in ciphertexts.

Runs Test: To test for (joint) correlation between consecutive bits of ciphertexts.

Autocorrelation Test: To check for correlation between bits of the same ciphertext (up to 20 lags).

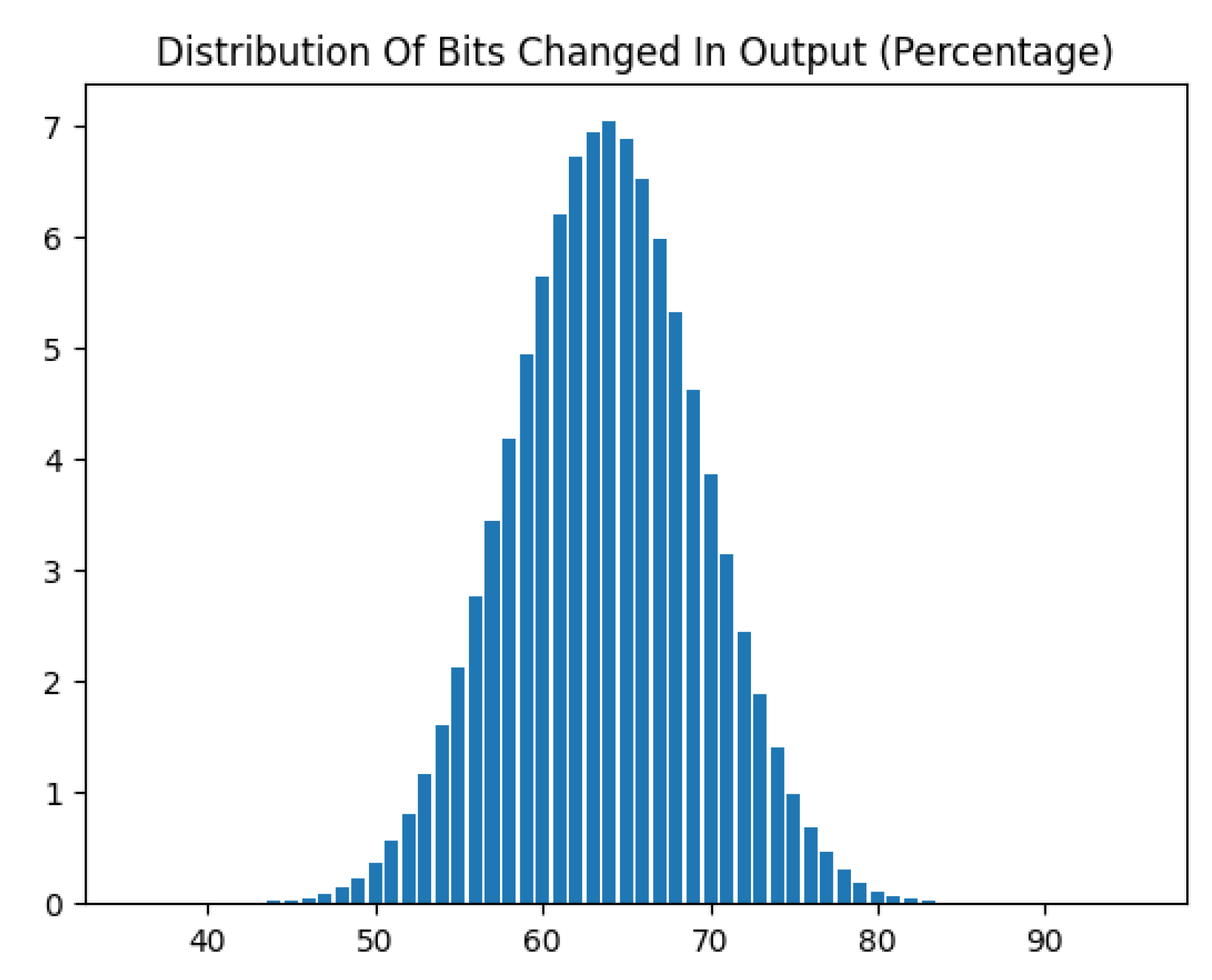

Avalanche Distribution Test: To check if there exist bits in the ciphertext which do not have a 50% probability of changing for a change of one bit in the plaintext or key. Also to model the number of bits changed in ciphertext as a result of one bit changed in any input.

Linear Approximation Table (LAT): As the round function's non-linear component can be seen as a substitution, a linear approximation table of the substitution was computed to check for linear bias between bytes.

Note that a traditional LAT assumes S-boxes with 8-bit inputs (256 entries). Our cipher can be modeled as using a 9-bit input space, produced by summing an 8-bit data byte and an 8-bit key byte, which requires analyzing 512 input values instead of 256. The adaptations consisted of extending the input masks to cover the 9-bit space and extending the test space to the corresponding input/output mask combinations; all other aspects of the analysis remained unchanged.
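To make the tests above concrete, the following is a minimal sketch of two of them plus a single LAT entry over a 9-bit input space. The monobit and runs P-values follow the formulas of NIST SP 800-22; `lat_entry` counts agreements between input-mask and output-mask parities over all 9-bit inputs, as the text describes (even though a sum of two bytes only reaches 0 to 510). The `sbox` argument is a hypothetical placeholder for the PTE substitution, which is not reproduced here, and all function names are illustrative rather than the authors' test harness.

```python
import math
from typing import Callable, Sequence


def monobit_p_value(bits: Sequence[int]) -> float:
    """NIST SP 800-22 Frequency (Monobit) Test; p >= 0.01 is the usual pass threshold."""
    n = len(bits)
    s_n = sum(2 * b - 1 for b in bits)  # map 0 -> -1 and 1 -> +1, then sum
    s_obs = abs(s_n) / math.sqrt(n)
    return math.erfc(s_obs / math.sqrt(2))


def runs_p_value(bits: Sequence[int]) -> float:
    """NIST SP 800-22 Runs Test; returns 0.0 when the frequency prerequisite fails."""
    n = len(bits)
    pi = sum(bits) / n  # proportion of ones
    if abs(pi - 0.5) >= 2 / math.sqrt(n):
        return 0.0
    v_obs = 1 + sum(bits[k] != bits[k + 1] for k in range(n - 1))  # number of runs
    numerator = abs(v_obs - 2 * n * pi * (1 - pi))
    denominator = 2 * math.sqrt(2 * n) * pi * (1 - pi)
    return math.erfc(numerator / denominator)


def parity(x: int) -> int:
    """XOR of all bits of a non-negative integer."""
    return bin(x).count("1") & 1


def lat_entry(sbox: Callable[[int], int], in_mask: int, out_mask: int,
              in_bits: int = 9) -> int:
    """Signed LAT entry: #{x : parity(a & x) == parity(b & S(x))} - 2^(in_bits - 1)."""
    size = 1 << in_bits  # 512 inputs for the 9-bit model
    matches = sum(
        parity(in_mask & x) == parity(out_mask & sbox(x)) for x in range(size)
    )
    return matches - size // 2
```

For the ciphertext tests, `bits` would be the concatenated ciphertext bits; for the LAT, looping `lat_entry` over all non-zero 9-bit input masks and 8-bit output masks yields the full table, whose largest absolute entry measures the worst-case linear bias.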

3.9.4. Analysis Of Other Cryptographic Primitives in the Protocol

Beyond the core PTE encryption scheme, the protocol incorporates SHA-256 hashing for message integrity, HMAC for message authentication (combined with PTE encryption when authenticated encryption is needed), and optional digital signatures using RSA or ECDSA depending on the deployment context. Initialization vectors (IVs) are either random or derived via key derivation functions (KDFs) when session state is available. These primitives were chosen for their standardization, platform compatibility, and fit with the lightweight arithmetic of the PTE core. This modularity allows the protocol to degrade gracefully in scenarios with partial trust or minimal key distribution.
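As an illustration of how HMAC can provide integrity on top of encryption, the sketch below authenticates each frame in an encrypt-then-MAC fashion and rejects non-increasing counters. It assumes HMAC-SHA-256 and a hypothetical frame layout (8-byte big-endian counter, ciphertext, 32-byte tag); the names `seal` and `open_frame` are illustrative, and the ciphertext is treated as opaque bytes because the PTE encryption itself is not reproduced here.

```python
import hashlib
import hmac
import struct
from typing import Tuple

TAG_LEN = 32  # HMAC-SHA-256 output size in bytes


def seal(mac_key: bytes, counter: int, ciphertext: bytes) -> bytes:
    """Hypothetical frame: 8-byte counter || ciphertext || HMAC tag over both."""
    header = struct.pack(">Q", counter)
    tag = hmac.new(mac_key, header + ciphertext, hashlib.sha256).digest()
    return header + ciphertext + tag


def open_frame(mac_key: bytes, frame: bytes, last_counter: int) -> Tuple[int, bytes]:
    """Verify the tag first, then reject replayed or out-of-order counters."""
    if len(frame) < 8 + TAG_LEN:
        raise ValueError("frame too short")
    header, ciphertext, tag = frame[:8], frame[8:-TAG_LEN], frame[-TAG_LEN:]
    expected = hmac.new(mac_key, header + ciphertext, hashlib.sha256).digest()
    if not hmac.compare_digest(tag, expected):  # constant-time comparison
        raise ValueError("integrity check failed")
    (counter,) = struct.unpack(">Q", header)
    if counter <= last_counter:
        raise ValueError("replayed or out-of-order frame")
    return counter, ciphertext
```

Verifying the tag before parsing the counter means a forged frame is rejected without its contents ever being trusted.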

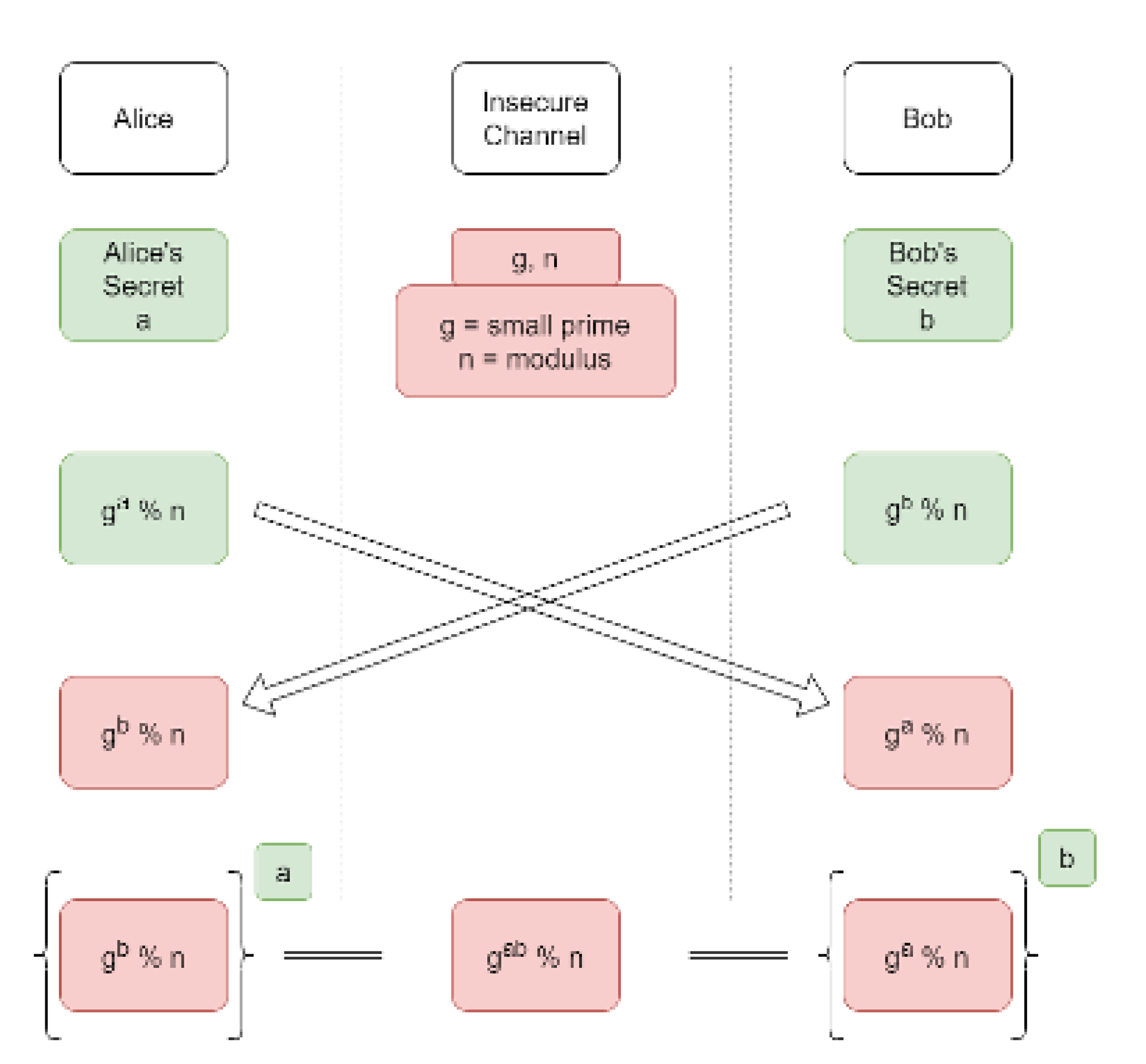

The protocol employs two fundamental cryptographic primitives: the Diffie-Hellman key exchange for establishing a shared secret and the RSA-PSS algorithm for digital signatures.

The Diffie-Hellman key exchange [21] enables two parties to jointly generate a shared secret over an insecure channel without any prior shared knowledge. Its security rests on the computational difficulty of the Discrete Logarithm Problem (DLP). Both parties agree on public parameters, a prime modulus $p$ and a generator $g$; each then chooses a private exponent ($a$ or $b$) and computes the corresponding public key ($A = g^a \bmod p$ or $B = g^b \bmod p$). After the public keys are exchanged, each party combines its own private key with the other’s public key, and both obtain the same shared secret $s = B^a \bmod p = A^b \bmod p = g^{ab} \bmod p$. A passive adversary observing $A$ and $B$ cannot feasibly compute $s$. This shared secret forms the basis for deriving symmetric session keys for encryption and authentication, and it provides forward secrecy when ephemeral key pairs are used.
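A minimal Python sketch of the exchange described above, assuming the classic textbook parameters $p = 23$, $g = 5$. These are far too small for real use; a deployment would use a standardized group of 2048 bits or more. The function names `dh_keypair` and `dh_shared` are hypothetical and not part of the protocol specification.

```python
import secrets
from typing import Tuple

# Toy textbook parameters: illustration only, trivially breakable.
P = 23  # prime modulus p
G = 5   # generator g


def dh_keypair(p: int = P, g: int = G) -> Tuple[int, int]:
    """Return (private exponent, public key g^private mod p)."""
    while True:
        private = secrets.randbelow(p - 2) + 1  # private exponent in [1, p - 2]
        public = pow(g, private, p)
        if 1 < public < p - 1:  # avoid the degenerate values 1 and p - 1
            return private, public


def dh_shared(own_private: int, peer_public: int, p: int = P) -> int:
    """Combine our private exponent with the peer's public key."""
    if not 1 < peer_public < p - 1:
        raise ValueError("invalid peer public key")
    return pow(peer_public, own_private, p)
```

With fixed private exponents $a = 6$ and $b = 15$, the public keys are $A = 8$ and $B = 19$, and both sides compute the shared secret $s = 2$.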

RSA-PSS (RSA with the Probabilistic Signature Scheme) [53] is used for digital signatures to provide authentication and non-repudiation. It improves on older, deterministic RSA signing by incorporating a probabilistic padding scheme: before signing, the message is hashed and then encoded together with a random salt value. This ensures that two signatures of the same message are different, which protects against certain attacks on deterministic signatures. The recipient verifies the signature with the signer’s public key by recovering the encoded message and checking that its padding structure and embedded hash are consistent with the received message. RSA-PSS is provably secure under the RSA assumption (in the random oracle model) and is considered the modern standard for RSA-based signatures.
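The probabilistic padding step described above can be sketched as follows. This is a minimal illustration of the EMSA-PSS encoding and verification operations from RFC 8017 (Sections 9.1.1 and 9.1.2), assuming SHA-256, MGF1 with SHA-256, and a 32-byte salt; the section does not state the protocol’s actual parameters. It deliberately omits the RSA private-key operation that turns the encoded message into a signature, and it is not a substitute for a vetted cryptographic library.

```python
import hashlib
import os

H_LEN = 32  # SHA-256 output length in bytes


def mgf1(seed: bytes, length: int) -> bytes:
    """MGF1 mask generation function with SHA-256 (RFC 8017, Appendix B.2.1)."""
    out = b""
    counter = 0
    while len(out) < length:
        out += hashlib.sha256(seed + counter.to_bytes(4, "big")).digest()
        counter += 1
    return out[:length]


def pss_encode(message: bytes, em_bits: int, salt_len: int = H_LEN) -> bytes:
    """EMSA-PSS encoding; a fresh random salt makes each encoding different."""
    em_len = (em_bits + 7) // 8
    if em_len < H_LEN + salt_len + 2:
        raise ValueError("encoding error: em_bits too small")
    m_hash = hashlib.sha256(message).digest()
    salt = os.urandom(salt_len)
    h = hashlib.sha256(b"\x00" * 8 + m_hash + salt).digest()
    db = b"\x00" * (em_len - salt_len - H_LEN - 2) + b"\x01" + salt
    masked_db = bytearray(x ^ y for x, y in zip(db, mgf1(h, em_len - H_LEN - 1)))
    masked_db[0] &= 0xFF >> (8 * em_len - em_bits)  # clear unused leading bits
    return bytes(masked_db) + h + b"\xbc"


def pss_verify(message: bytes, em: bytes, em_bits: int, salt_len: int = H_LEN) -> bool:
    """EMSA-PSS verification: unmask, recover the salt, recompute the hash."""
    em_len = (em_bits + 7) // 8
    if len(em) != em_len or em_len < H_LEN + salt_len + 2 or em[-1] != 0xBC:
        return False
    masked_db, h = em[: em_len - H_LEN - 1], em[em_len - H_LEN - 1 : -1]
    unused_bits = 8 * em_len - em_bits
    if masked_db[0] & (0xFF << (8 - unused_bits)) & 0xFF:
        return False  # leading bits that must be zero are set
    db = bytearray(x ^ y for x, y in zip(masked_db, mgf1(h, em_len - H_LEN - 1)))
    db[0] &= 0xFF >> unused_bits
    ps_len = em_len - H_LEN - salt_len - 2
    if any(db[:ps_len]) or db[ps_len] != 0x01:
        return False
    salt = bytes(db[len(db) - salt_len:])
    m_hash = hashlib.sha256(message).digest()
    return hashlib.sha256(b"\x00" * 8 + m_hash + salt).digest() == h
```

For a 2048-bit modulus, `em_bits` would be 2047; encoding the same message twice yields different outputs, yet both verify.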

The RSA Problem asks, given a modulus $n = pq$, a public exponent $e$, and a value $c \equiv m^e \pmod{n}$, to recover $m$. The RSA Assumption states that for all efficient (probabilistic polynomial-time) algorithms, the probability of solving the RSA Problem is negligible. In simpler terms, it is assumed that no practical algorithm can efficiently compute $e$-th roots modulo $n$ when $n$ is the product of two large, secret primes.

This assumption is foundational because the most straightforward way to solve the RSA Problem, finding $m$ as the $e$-th root of $c$ modulo $n$, is to factor the modulus: knowing $p$ and $q$ yields $\varphi(n) = (p-1)(q-1)$ and hence the private exponent $d \equiv e^{-1} \pmod{\varphi(n)}$, with $m \equiv c^d \pmod{n}$. Solving the RSA Problem is therefore no harder than factoring, and it is believed to be computationally equivalent to it. Since the Integer Factorization Problem is considered computationally intractable for sufficiently large primes, the security of RSA rests on this well-studied hard problem [12].
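The link between factoring and the RSA Problem can be seen in a textbook example with tiny primes ($p = 61$, $q = 53$, $e = 17$). This is unpadded textbook RSA at an insecure size, shown only to illustrate that whoever knows the factorization can compute $e$-th roots; it is not how RSA-PSS is used in the protocol.

```python
# Textbook RSA with tiny primes: illustration only (no padding, insecure sizes).
p, q = 61, 53
n = p * q                # public modulus
e = 17                   # public exponent
phi = (p - 1) * (q - 1)  # computable only by someone who knows p and q
d = pow(e, -1, phi)      # private exponent, e * d == 1 (mod phi); Python 3.8+

m = 65
c = pow(m, e, n)          # RSA Problem instance: given (n, e, c), find m
recovered = pow(c, d, n)  # the factorization makes the e-th root easy to compute
```

Here $n = 3233$, $d = 2753$, $c = 2790$, and `recovered` equals the original $m = 65$. Without $p$ and $q$, an attacker facing a 2048-bit modulus has no known efficient way to obtain $d$.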

Therefore, the statement that "RSA-PSS is provably secure under the RSA assumption" [53] means that forging a signature can be shown to be as difficult as solving the RSA Problem: any adversary capable of creating a valid forgery could be used to construct an algorithm that solves the RSA Problem, contradicting the RSA assumption.

3.9.5. Correctness Of The Feistel Structure

We formally demonstrate that our Feistel-based cipher correctly decrypts to the original plaintext for all keys in the key space. The core insight, established by Feistel [44], is that the structure is inherently reversible regardless of the round function $F$. For each round $i$ with left and right halves $L_i$ and $R_i$ and round key $K_i$, encryption computes:

$$L_{i+1} = R_i, \qquad R_{i+1} = L_i \oplus F(R_i, K_i)$$

Decryption reverses this process using the same round keys in reverse order:

$$R_i = L_{i+1}, \qquad L_i = R_{i+1} \oplus F(L_{i+1}, K_i)$$

Because decryption only re-evaluates $F$ on $L_{i+1} = R_i$, a value it already knows, $F$ itself never needs to be inverted. Since all operations, including our modular arithmetic within the cipher’s domain, are well-defined and deterministic, composing encryption with decryption yields the identity function and restores the original plaintext.
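The round equations above can be checked with a short sketch. It assumes 32-bit halves, XOR as the combining operation, and a hypothetical SHA-256-based round function `toy_round_function`; this is not the PTE round function, only a stand-in to show that the Feistel skeleton inverts itself for any $F$.

```python
import hashlib
from typing import Callable, List, Tuple

RoundFn = Callable[[int, int], int]


def toy_round_function(half: int, key: int) -> int:
    """HYPOTHETICAL 32-bit round function (SHA-256 based); NOT the PTE round function."""
    digest = hashlib.sha256(half.to_bytes(4, "big") + key.to_bytes(4, "big")).digest()
    return int.from_bytes(digest[:4], "big")


def feistel_encrypt(left: int, right: int, round_keys: List[int],
                    f: RoundFn) -> Tuple[int, int]:
    for k in round_keys:
        # L_{i+1} = R_i,  R_{i+1} = L_i XOR F(R_i, K_i)
        left, right = right, left ^ f(right, k)
    return left, right


def feistel_decrypt(left: int, right: int, round_keys: List[int],
                    f: RoundFn) -> Tuple[int, int]:
    for k in reversed(round_keys):
        # R_i = L_{i+1},  L_i = R_{i+1} XOR F(L_{i+1}, K_i)
        left, right = right ^ f(left, k), left
    return left, right
```

Replacing the round function with a lossy one such as `lambda x, k: x & k` still round-trips correctly, which illustrates that $F$ need not be invertible.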

This theoretical guarantee is maintained in practice through an implementation that preserves the Feistel invariants. Luby and Rackoff [45] further established that a Feistel construction with at least three rounds of independent pseudorandom round functions yields a pseudorandom permutation, which supports our architectural choice. Our empirical validation in Section 4.1 confirms this theoretical correctness across extensive test vectors.

3.9.6. Known Attack-Wise Analysis Of Security

Impersonation/Spoofing: Mitigated by certificate verification, challenge-response handshake stages, and integrity-protected summary fields.

Harvest and Decrypt: Mitigated by incorporating three random values and a timestamp.

MITM Attacks: Mitigated by certificate validation, secure key establishment, encryption, and integrity checking.

Secure Connection Stripping: Mitigated by non-negotiable, mandatory authentication, encryption, and integrity.

Connection Downgrade: Mitigated by using a single, fixed protocol version.

Parameter Downgrade: Mitigated by standardizing cryptographic strengths at configuration time.

Certificate Spoofing: Mitigated by terminating the connection if certificate details do not match strict standards, assuming a secure CA.

Lower-Level MITM (ARP/DNS): Mitigated by the attacker’s inability to complete the real-time cryptographic challenge.

Certificate-Related Attacks: Mitigated by the assumed security of the Certificate Authority (CA).

Replay Attacks: Mitigated in the handshake by timestamps and summary fields, and in data transfer by counters and HMACs.

Key Exchange/Negotiation Attacks: Mitigated by unified minimum parameter strengths (configuration dependent) and prioritizing security over flexibility by preventing re-negotiation.

Isolation Attacks: Mitigated by securely deriving all session keys (encryption, HMAC) from the pre-master secret.
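Deriving independent keys for each purpose from one pre-master secret, as the isolation item above describes, can be sketched with HKDF (RFC 5869) over HMAC-SHA-256. The section does not name the KDF the protocol uses, so HKDF is a swapped-in, well-known technique here, and the salt input and the per-key labels (`b"client encryption"` and so on) are hypothetical.

```python
import hashlib
import hmac
from typing import Dict

HASH_LEN = 32  # SHA-256 output size in bytes


def hkdf_extract(salt: bytes, ikm: bytes) -> bytes:
    """HKDF-Extract: PRK = HMAC(salt, IKM); an empty salt becomes HashLen zero bytes."""
    return hmac.new(salt or b"\x00" * HASH_LEN, ikm, hashlib.sha256).digest()


def hkdf_expand(prk: bytes, info: bytes, length: int) -> bytes:
    """HKDF-Expand: T(i) = HMAC(PRK, T(i-1) || info || i), truncated to length."""
    if length > 255 * HASH_LEN:
        raise ValueError("requested length too large")
    okm, block, counter = b"", b"", 1
    while len(okm) < length:
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


def derive_session_keys(pre_master_secret: bytes, salt: bytes) -> Dict[str, bytes]:
    """Hypothetical labels: one distinct key per direction and purpose."""
    prk = hkdf_extract(salt, pre_master_secret)
    labels = {
        "client_enc": b"client encryption",
        "server_enc": b"server encryption",
        "client_mac": b"client hmac",
        "server_mac": b"server hmac",
    }
    return {name: hkdf_expand(prk, label, 32) for name, label in labels.items()}
```

Because each key is bound to a distinct `info` label, compromising one derived key does not reveal the others or the pre-master secret.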

3.9.7. Risk Analysis Table

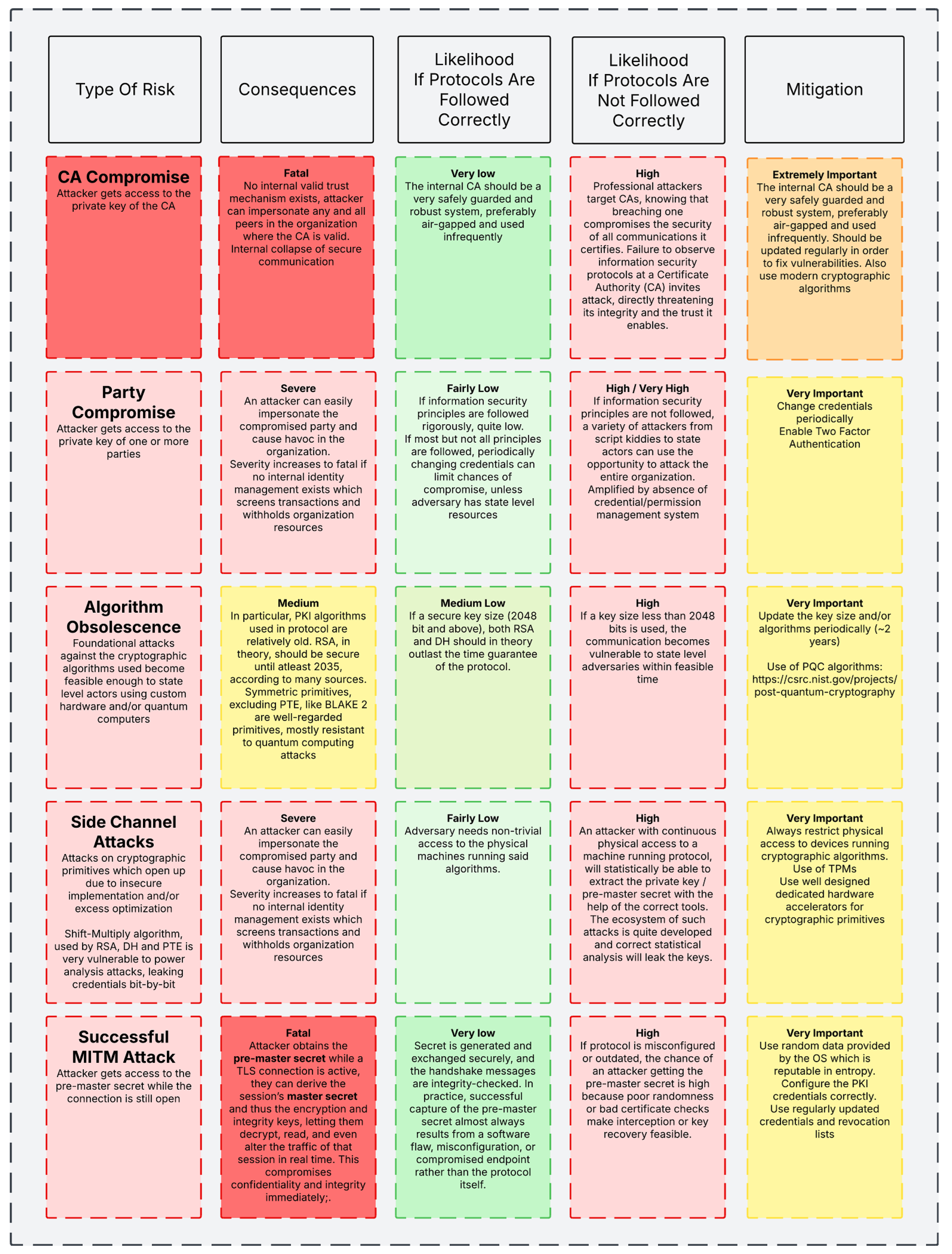

Figure 3 displays the potential risks associated with using our protocol, categorized by their likelihood of occurrence.