Submitted: 25 September 2025

Posted: 26 September 2025

Abstract

Keywords:

1. Introduction

2. Related Work

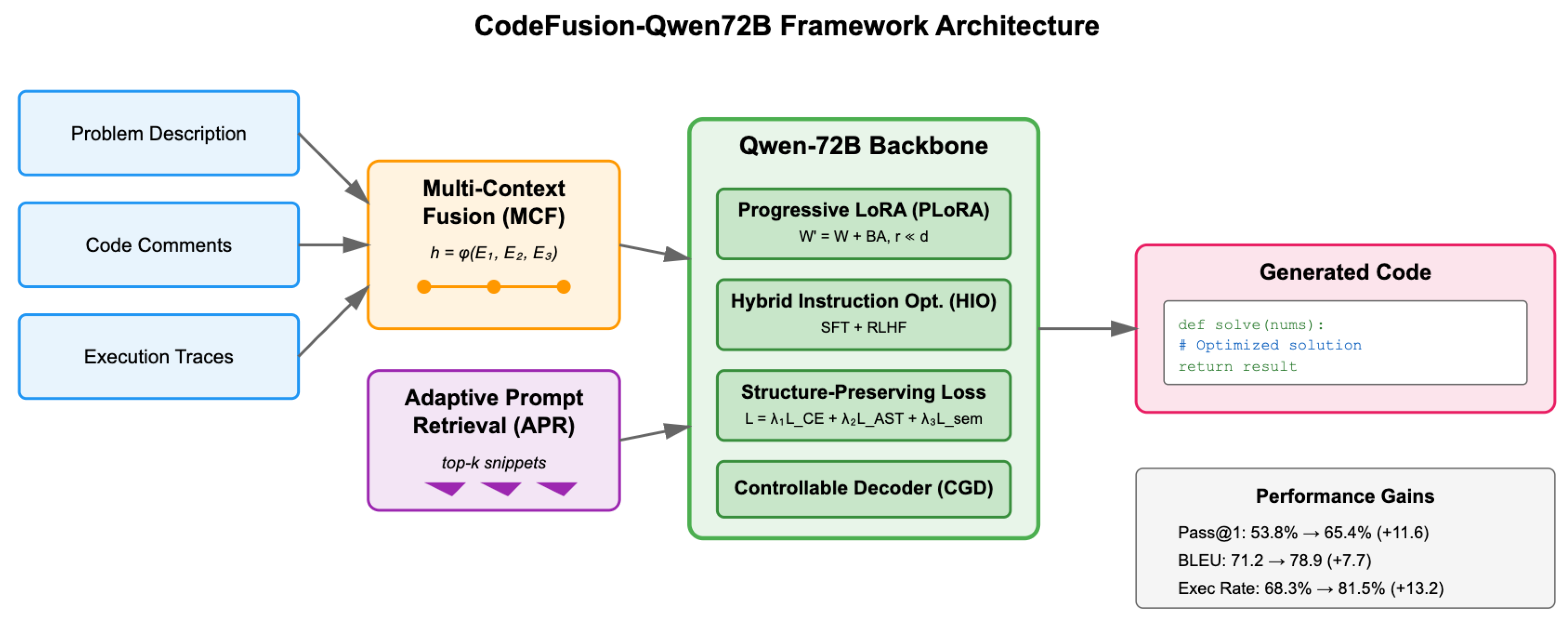

3. Methodology

4. Algorithm and Model

4.1. Qwen-72B Backbone Architecture

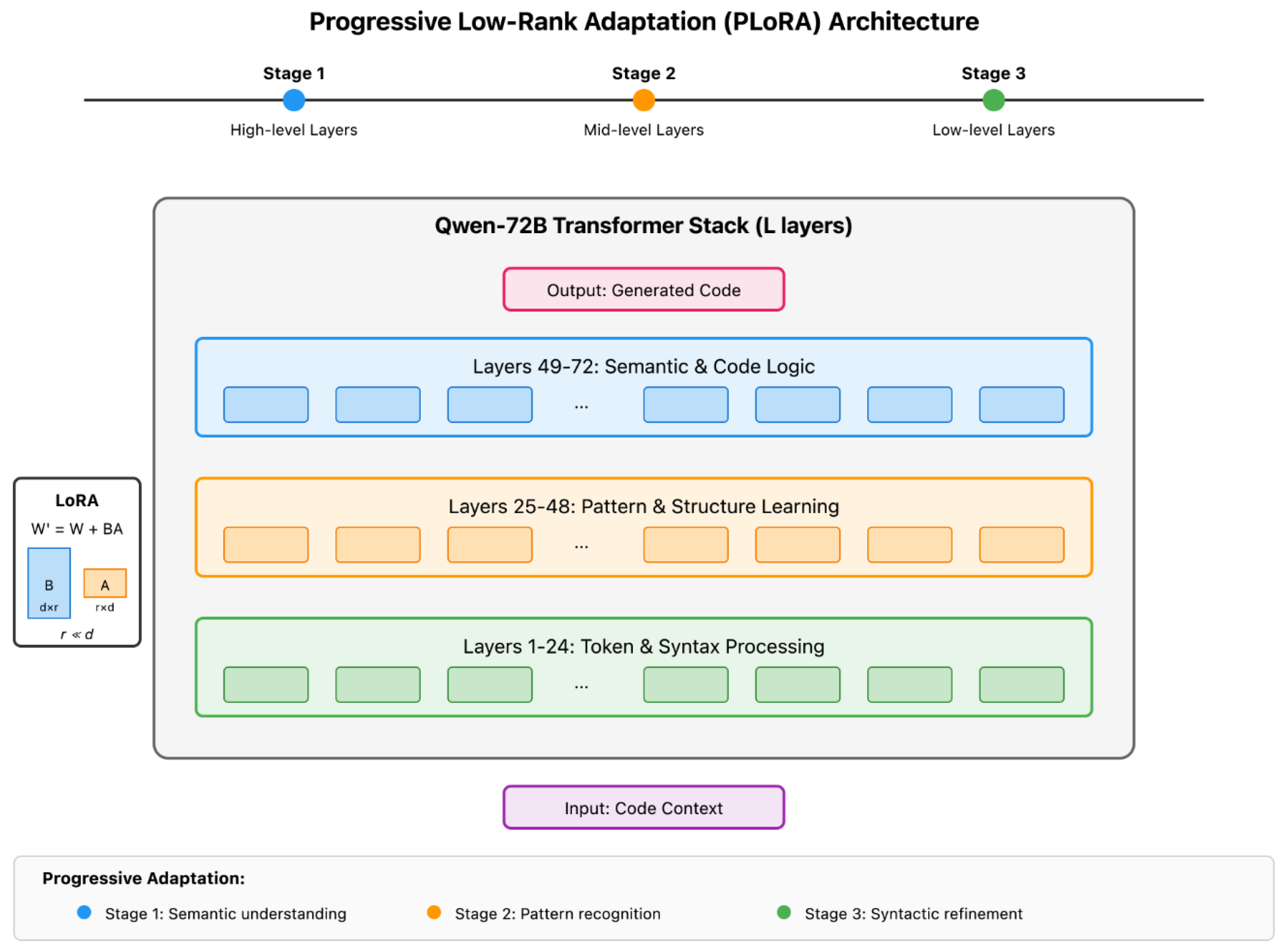

4.2. Progressive Low-Rank Adaptation (PLoRA)

4.3. Hybrid Instruction Optimization (HIO)

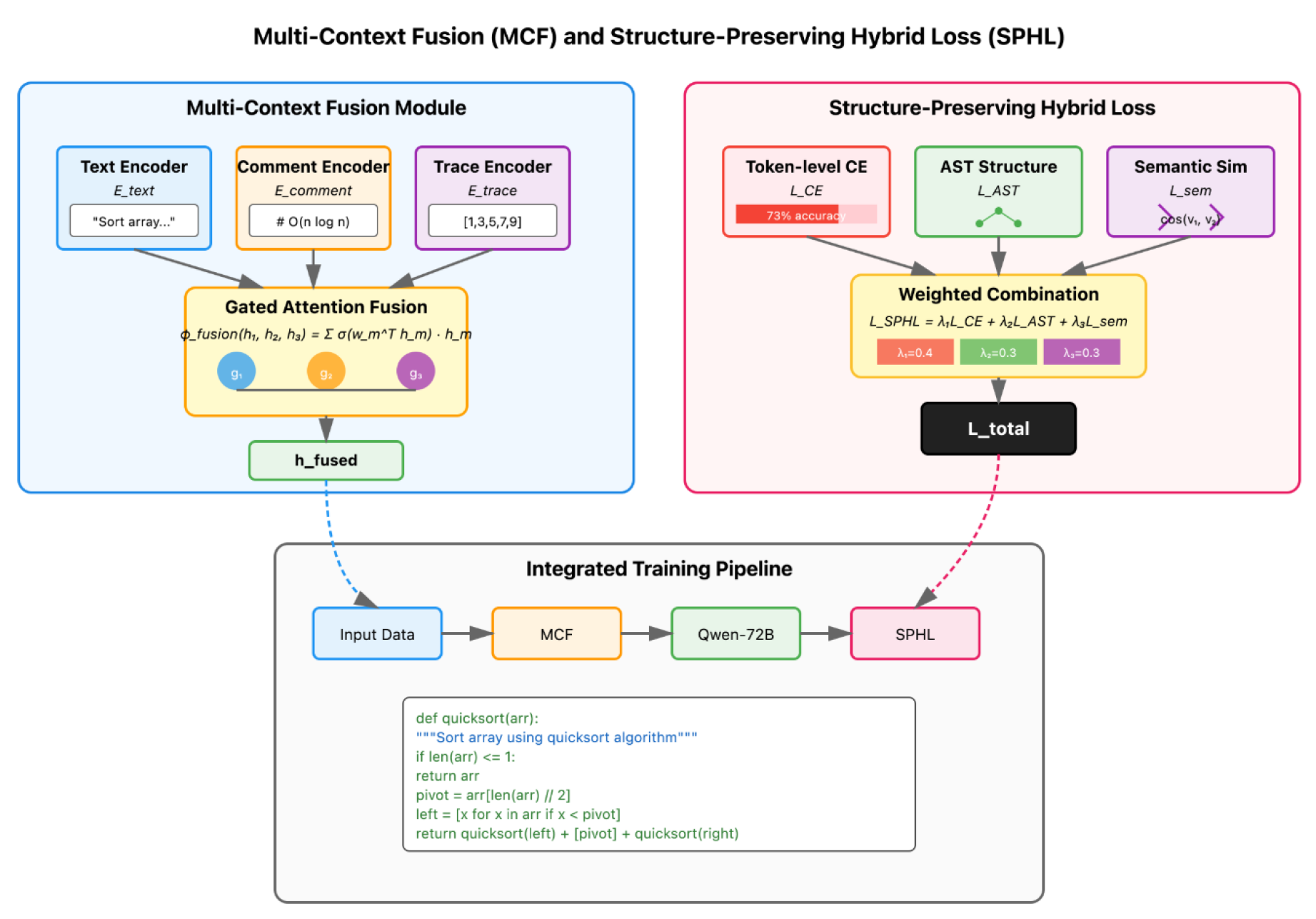

4.4. Multi-Context Fusion (MCF)

4.5. Structure-Preserving Hybrid Loss (SPHL)

4.6. Controllable Generation Decoder (CGD)

4.7. Adaptive Prompt Retrieval (APR)

5. Data Preprocessing

5.1. Code Deduplication and Normalization

5.2. Syntactic Validation and Semantic Tagging

6. Prompt Engineering Techniques

6.1. Retrieval-Augmented Prompt Construction

6.2. Progressive Prompt Structuring

- Present a high-level task description.

- Include a partial code skeleton or function signature.

- Gradually add constraints, examples, and edge cases.
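The three steps above can be sketched as a small prompt builder. This is only an illustrative sketch, not the paper's implementation: the `PromptSpec` container, the `build_stages` function, the section labels, and the Fibonacci task are all hypothetical names chosen for the example. Each stage reuses the previous prompt as a prefix and appends one more block of detail.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical container for the pieces of a progressive prompt."""
    task: str        # high-level task description
    skeleton: str    # partial code skeleton or function signature
    constraints: list[str] = field(default_factory=list)
    examples: list[str] = field(default_factory=list)
    edge_cases: list[str] = field(default_factory=list)


def build_stages(spec: PromptSpec) -> list[str]:
    """Return one prompt per stage; each stage extends the previous one."""
    sections = [f"Task:\n{spec.task}"]
    stages = ["\n\n".join(sections)]          # stage 1: task description only

    sections.append(f"Skeleton:\n{spec.skeleton}")
    stages.append("\n\n".join(sections))      # stage 2: task + skeleton

    # Later stages: add constraints, then examples, then edge cases.
    extras = (
        ("Constraints", spec.constraints),
        ("Examples", spec.examples),
        ("Edge cases", spec.edge_cases),
    )
    for title, items in extras:
        if not items:
            continue  # skip empty groups instead of emitting a bare label
        bullets = "\n".join(f"- {item}" for item in items)
        sections.append(f"{title}:\n{bullets}")
        stages.append("\n\n".join(sections))
    return stages


# Assumed toy task, used only to show the shape of the output.
spec = PromptSpec(
    task="Write a function that returns the n-th Fibonacci number.",
    skeleton="def fib(n: int) -> int:\n    ...",
    constraints=["Run in O(n) time."],
    examples=["fib(5) == 5"],
    edge_cases=["fib(0) == 0"],
)

for number, prompt in enumerate(build_stages(spec), start=1):
    print(f"--- stage {number} ---\n{prompt}\n")
```

Keeping every stage a strict extension of the previous one makes it easy to compare model outputs across stages, since the only thing that changes is the newly added block.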

7. Evaluation Metrics

7.1. Pass@1 Accuracy

7.2. BLEU Score

7.3. Code Execution Success Rate (CESR)

7.4. Abstract Syntax Tree Similarity (ASTSim)

7.5. Semantic Similarity (SemSim)

7.6. Code Readability Score (CRS)

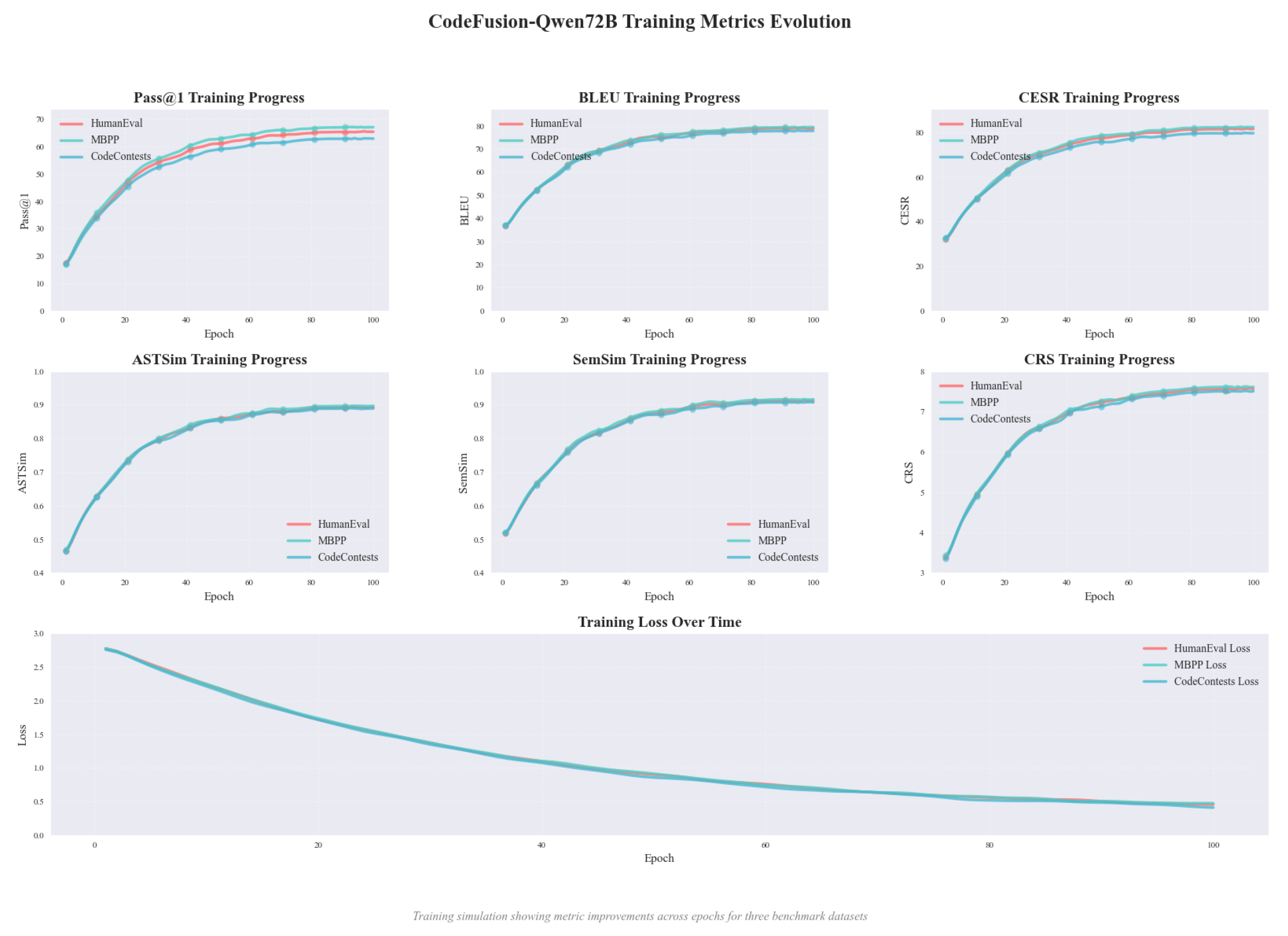

8. Experiment Results

8.1. Experimental Setup

8.2. Overall and Ablation Results

9. Conclusion

References

- Tipirneni, S.; Zhu, M.; Reddy, C.K. StructCoder: Structure-aware transformer for code generation. ACM Transactions on Knowledge Discovery from Data 2024, 18, 1–20.

- Sirbu, A.G.; Czibula, G. Automatic code generation based on Abstract Syntax-based encoding. Application on malware detection code generation based on MITRE ATT&CK techniques. Expert Systems with Applications 2025, 264, 125821.

- Gong, L.; Elhoushi, M.; Cheung, A. AST-T5: Structure-aware pretraining for code generation and understanding. arXiv preprint arXiv:2401.03003 2024.

- Wang, S.; Yu, L.; Li, J. LoRA-GA: Low-rank adaptation with gradient approximation. Advances in Neural Information Processing Systems 2024, 37, 54905–54931.

- Hayou, S.; Ghosh, N.; Yu, B. LoRA+: Efficient low rank adaptation of large models. arXiv preprint arXiv:2402.12354 2024.

- Hounie, I.; Kanatsoulis, C.; Tandon, A.; Ribeiro, A. LoRTA: Low Rank Tensor Adaptation of Large Language Models. arXiv preprint arXiv:2410.04060 2024.

- Zhang, R.; Qiang, R.; Somayajula, S.A.; Xie, P. AutoLoRA: Automatically tuning matrix ranks in low-rank adaptation based on meta learning. arXiv preprint arXiv:2403.09113 2024.

- Chen, N.; Sun, Q.; Wang, J.; Li, X.; Gao, M. Pass-Tuning: Towards structure-aware parameter-efficient tuning for code representation learning. In Proceedings of the Findings of the Association for Computational Linguistics: EMNLP 2023, 2023, pp. 577–591.

- Li, P.; Sun, T.; Tang, Q.; Yan, H.; Wu, Y.; Huang, X.; Qiu, X. CodeIE: Large code generation models are better few-shot information extractors. arXiv preprint arXiv:2305.05711 2023.

- Guan, S. Predicting Medical Claim Denial Using Logistic Regression and Decision Tree Algorithm. In Proceedings of the 2024 3rd International Conference on Health Big Data and Intelligent Healthcare (ICHIH), 2024, pp. 7–10.

| Model / Variant | HumanEval Pass@1 | HumanEval BLEU | HumanEval CESR | HumanEval ASTSim | HumanEval SemSim | HumanEval CRS | MBPP Pass@1 | MBPP BLEU | MBPP CESR | MBPP ASTSim | MBPP SemSim | MBPP CRS | CodeContests Pass@1 | CodeContests BLEU | CodeContests CESR | CodeContests ASTSim | CodeContests SemSim | CodeContests CRS |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Qwen-72B Full FT | 53.8 | 71.2 | 68.3 | 0.841 | 0.872 | 6.92 | 55.1 | 72.0 | 69.5 | 0.846 | 0.874 | 6.95 | 50.7 | 70.4 | 66.2 | 0.832 | 0.865 | 6.88 |
| Qwen-72B LoRA | 57.4 | 73.9 | 72.1 | 0.856 | 0.884 | 7.05 | 59.2 | 74.6 | 73.0 | 0.861 | 0.888 | 7.09 | 53.5 | 72.9 | 69.4 | 0.848 | 0.873 | 7.01 |
| CodeGen-16B | 49.6 | 69.4 | 65.0 | 0.823 | 0.861 | 6.78 | 51.0 | 70.1 | 66.1 | 0.828 | 0.863 | 6.80 | 48.4 | 68.7 | 64.3 | 0.819 | 0.856 | 6.75 |
| CodeFusion-Qwen72B | 65.4 | 78.9 | 81.5 | 0.893 | 0.912 | 7.56 | 67.1 | 79.4 | 82.3 | 0.897 | 0.916 | 7.61 | 62.9 | 77.8 | 79.6 | 0.889 | 0.907 | 7.50 |
| w/o MCF | 60.8 | 76.2 | 77.1 | 0.875 | 0.901 | 7.42 | 62.5 | 76.8 | 78.0 | 0.879 | 0.903 | 7.45 | 57.6 | 75.4 | 75.9 | 0.870 | 0.895 | 7.38 |
| w/o SPHL | 61.3 | 76.8 | 77.5 | 0.871 | 0.898 | 7.39 | 62.9 | 77.0 | 78.2 | 0.874 | 0.900 | 7.42 | 58.0 | 75.9 | 76.1 | 0.868 | 0.892 | 7.36 |
| w/o PLoRA | 62.5 | 77.5 | 78.4 | 0.881 | 0.906 | 7.44 | 63.8 | 78.1 | 79.0 | 0.885 | 0.908 | 7.46 | 59.1 | 76.7 | 77.2 | 0.877 | 0.898 | 7.40 |
| w/o APR | 63.2 | 78.0 | 79.0 | 0.884 | 0.908 | 7.48 | 64.5 | 78.5 | 79.6 | 0.888 | 0.910 | 7.50 | 59.7 | 77.2 | 77.8 | 0.880 | 0.900 | 7.43 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).