Submitted: 16 August 2025

Posted: 18 August 2025

Abstract

Keywords:

1. Introduction

- A biometric classifier for user identification,

- A language classifier to distinguish between native and non-native auditory stimuli, and

- A device classifier for identifying the auditory delivery modality.

- We design and implement TriNet-MTL, a unified deep neural framework that infers biometric identity and cognitive state simultaneously from auditory-evoked EEG.

- We demonstrate that multi-task learning enhances the performance of all tasks compared to single-task baselines, through shared representational learning.

- We validate the proposed model on a publicly available auditory EEG dataset, showing that the system achieves high classification accuracy on all three tasks, including 93.9% on 20-class biometric identification.

- We highlight the interplay between identity and cognition in EEG signals, revealing that auditory language and stimulus modality contribute meaningful variance that can be effectively leveraged in a multi-task context.

2. Literature Review

3. Methodology

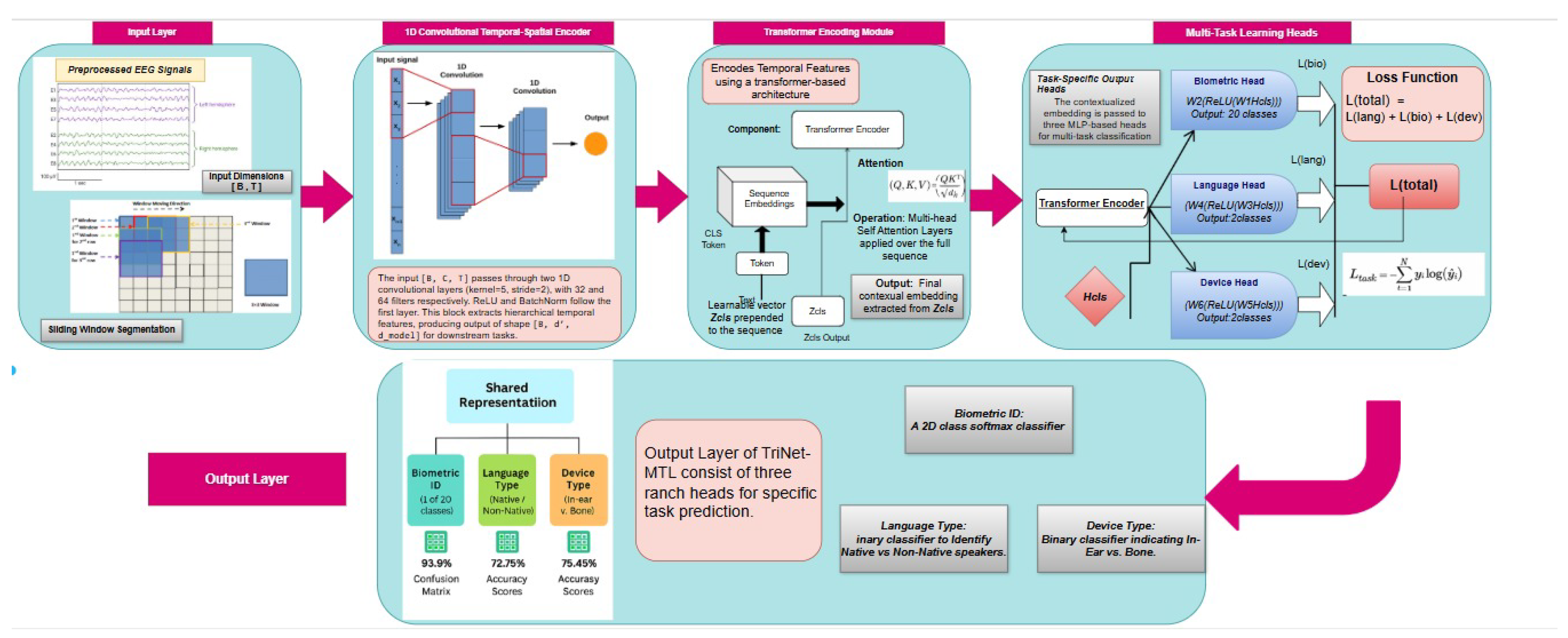

3.1. TriNet-MTL Model Architecture

- Temporal feature extraction via convolutional layers,

- Global sequence modeling using transformer-based encoders,

- Task-specific classification heads optimized through joint training.
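The three stages listed above can be written compactly as a forward pass. This is a notational sketch only: the symbols $X$, $f_{\text{conv}}$, $f_{\text{enc}}$, $W_t$, $b_t$ and the mean-pooling step over time are assumptions introduced for illustration, not details confirmed by the text.

```latex
\begin{aligned}
Z &= f_{\text{conv}}(X), & X &\in \mathbb{R}^{C \times T} \\
H &= f_{\text{enc}}(Z), & H &\in \mathbb{R}^{T' \times d} \\
\bar{h} &= \frac{1}{T'} \sum_{i=1}^{T'} H_i \\
\hat{y}^{(t)} &= \operatorname{softmax}\!\left(W_t \bar{h} + b_t\right), & t &\in \{\text{bio}, \text{lang}, \text{dev}\}
\end{aligned}
```

Here $C$ is the number of EEG channels, $T$ the number of samples in a window, $T'$ the sequence length after convolution, and $d$ the encoder width. Only $W_t$ and $b_t$ are specific to a task; everything before them is shared.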

3.1.1. Temporal Feature Extraction

3.1.2. Transformer-Based Temporal Modeling

3.1.3. Task-Specific Classification Heads

3.2. Multi-Task Optimization
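A common way to train several classification heads jointly is to minimize a weighted sum of per-task cross-entropy losses. The sketch below illustrates that idea in plain Python for a single EEG window. The function names, the equal default weights, and the example logits are all assumptions made for illustration; they are not taken from the TriNet-MTL implementation.

```python
import math

def cross_entropy(logits, target):
    """Softmax cross-entropy for one sample, computed stably from raw logits."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[target]

def joint_loss(task_logits, task_targets, weights=None):
    """Weighted sum of per-task losses; equal weights of 1.0 are an assumption."""
    weights = weights or {task: 1.0 for task in task_logits}
    return sum(
        weights[task] * cross_entropy(task_logits[task], task_targets[task])
        for task in task_logits
    )

# Hypothetical, untrained outputs for one window: 20 subjects, 2 languages, 2 devices.
logits = {"bio": [0.0] * 20, "lang": [0.0, 0.0], "dev": [0.0, 0.0]}
targets = {"bio": 3, "lang": 1, "dev": 0}
loss = joint_loss(logits, targets)  # equals ln(20) + ln(2) + ln(2) for uniform logits
```

Because all three terms are computed from the same shared representation, a single backward pass through this sum updates the shared layers with gradients from every task.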

3.3. Training Configuration

4. Experiments

4.1. Experimental Setup

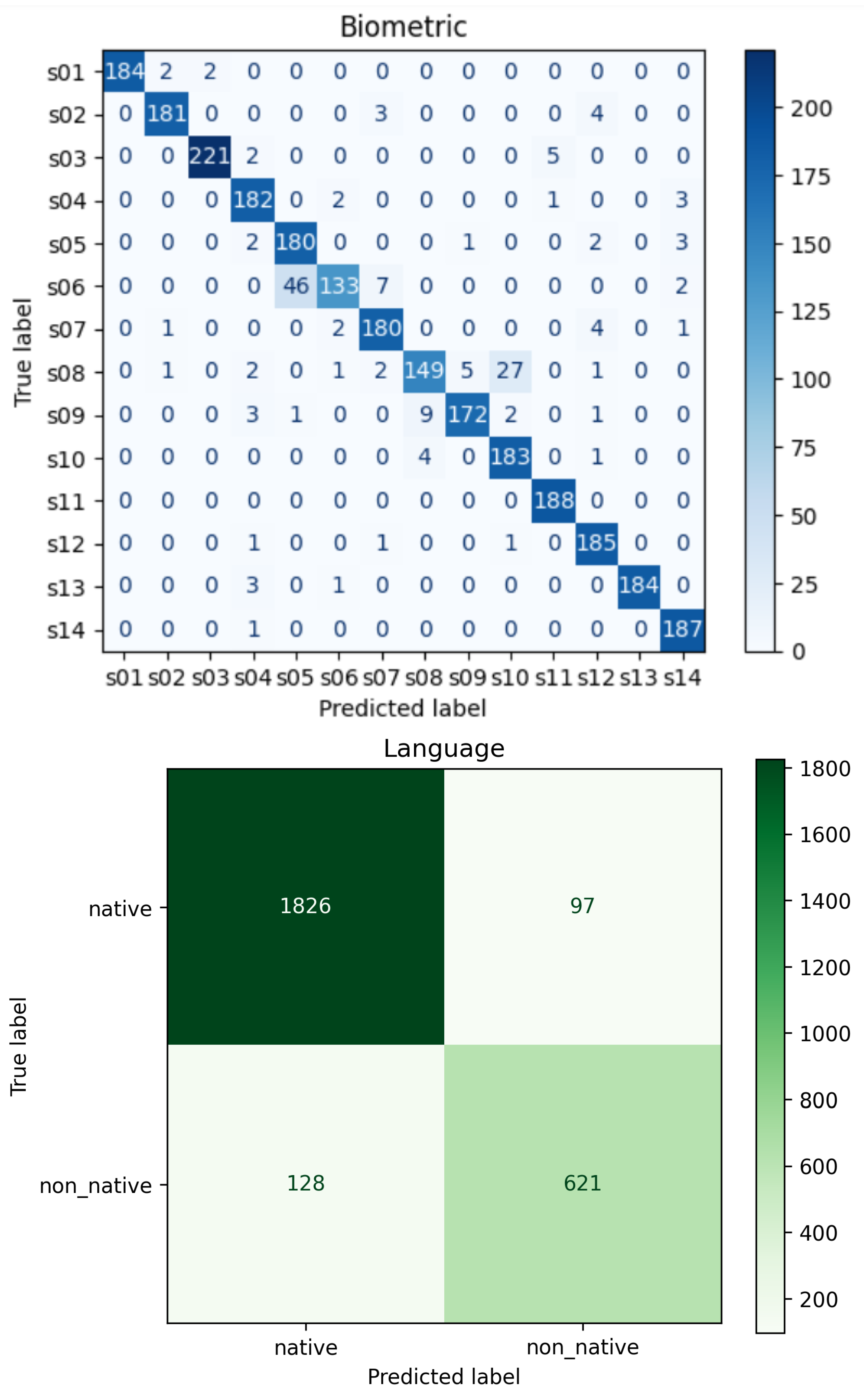

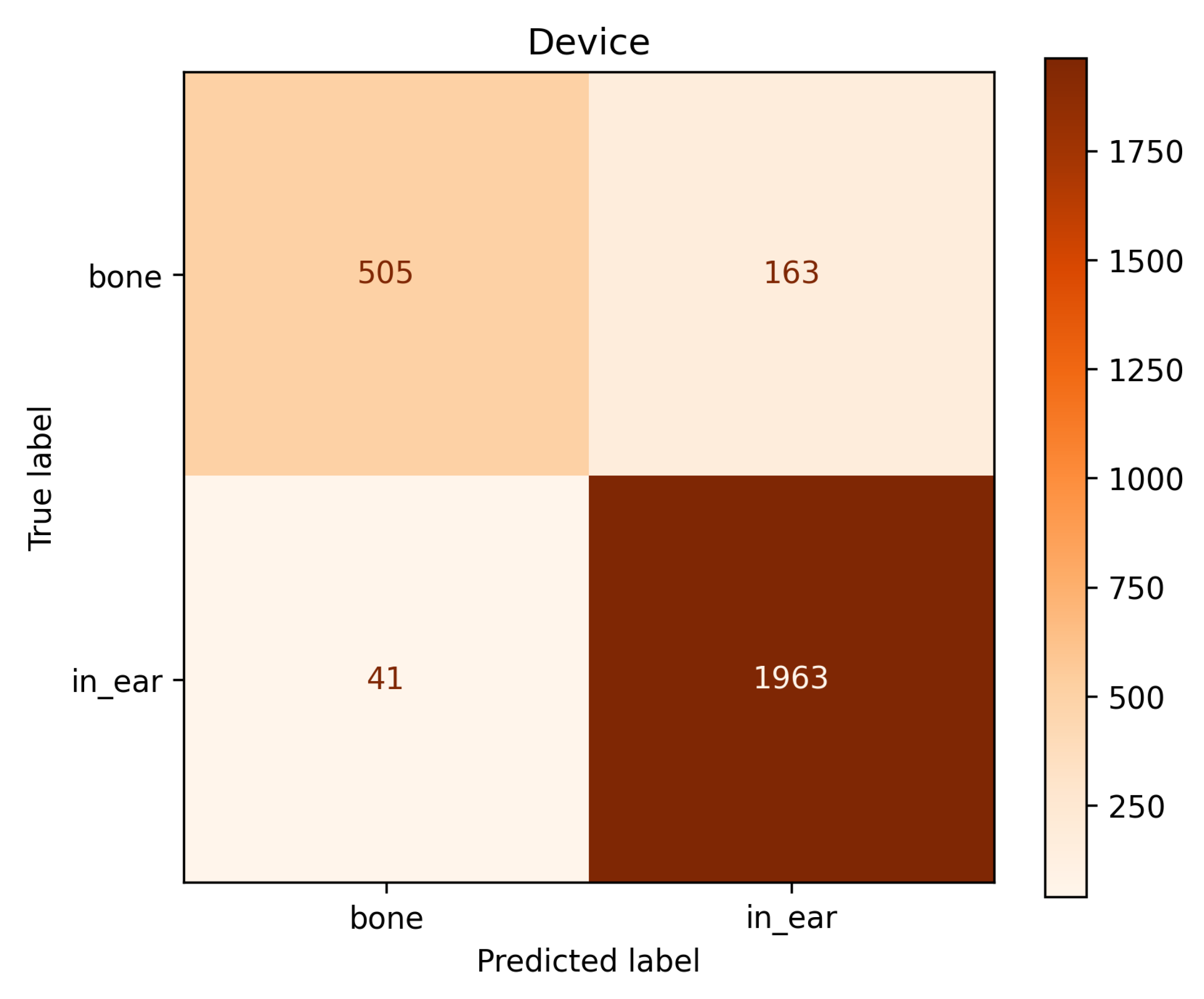

- Biometric identification (20-class classification),

- Stimulus language classification (binary),

- Device modality classification (binary).
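To put the reported accuracies in context, it helps to know what random guessing would achieve on each task. The short sketch below derives those chance levels from the class counts listed above, under the assumption (not stated in the text) that classes are balanced.

```python
# Number of classes per task, as listed above.
NUM_CLASSES = {"biometric": 20, "language": 2, "device": 2}

def chance_accuracy(num_classes):
    """Accuracy (%) of uniform random guessing, assuming balanced classes."""
    return 100.0 / num_classes

chance = {task: chance_accuracy(k) for task, k in NUM_CLASSES.items()}
for task, pct in chance.items():
    print(f"{task}: {pct:.1f}% chance level")
```

Under that assumption the biometric task has a 5% chance level, while the two binary tasks start from 50%, so equal accuracies on different tasks do not reflect equal difficulty.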

4.2. Results and Comparative Analysis

- Single-Task Transformers (STT): Independent transformers for each task.

- Shared CNN with Separate Classifiers (SC-SC): A shared convolutional encoder followed by separate classifiers, omitting the transformer module.

| Model | Biometric (%) | Language (%) | Device (%) | Average (%) |
|---|---|---|---|---|
| STT | 89.5 | 82.1 | 85.3 | 85.6 |
| SC-SC | 87.2 | 80.9 | 83.5 | 83.9 |
| TriNet-MTL (Proposed) | 93.9 | 91.6 | 92.4 | 92.6 |
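The average column in the comparison table is consistent with an unweighted mean of the three task accuracies. The sketch below reproduces it from the per-task values in the table and computes the gap between TriNet-MTL and each baseline; the variable and function names are illustrative.

```python
# Per-task accuracies (%) copied from the comparison table: (bio, lang, dev).
results = {
    "STT": (89.5, 82.1, 85.3),
    "SC-SC": (87.2, 80.9, 83.5),
    "TriNet-MTL": (93.9, 91.6, 92.4),
}

def mean_accuracy(accs):
    """Unweighted mean of the task accuracies, rounded to one decimal place."""
    return round(sum(accs) / len(accs), 1)

averages = {model: mean_accuracy(a) for model, a in results.items()}

# Gain of the proposed model over each baseline, in percentage points.
gains = {
    model: round(averages["TriNet-MTL"] - averages[model], 1)
    for model in ("STT", "SC-SC")
}
```

On these figures the proposed model leads STT by 7.0 percentage points and SC-SC by 8.7 percentage points on average.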

4.3. Ablation Study

- Without Transformer Encoder: Only convolutional layers and task heads.

- Without Convolutional Encoder: Transformer operating directly on raw EEG windows.

| Variant | Biometric (%) | Language (%) | Device (%) | Average (%) |
|---|---|---|---|---|
| Without Transformer | 88.2 | 80.1 | 82.6 | 83.6 |
| Without Conv Encoder | 84.7 | 76.9 | 79.2 | 80.3 |
| Full TriNet-MTL | 93.9 | 91.6 | 92.4 | 92.6 |
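One way to read the ablation table is as the accuracy lost on each task when a component is removed. The sketch below computes those drops from the table values; the dictionary keys and the helper name are illustrative.

```python
# Accuracies (%) copied from the ablation table.
full = {"bio": 93.9, "lang": 91.6, "dev": 92.4}
ablations = {
    "without_transformer": {"bio": 88.2, "lang": 80.1, "dev": 82.6},
    "without_conv_encoder": {"bio": 84.7, "lang": 76.9, "dev": 79.2},
}

def accuracy_drop(full_model, variant):
    """Percentage points lost per task relative to the full model."""
    return {task: round(full_model[task] - variant[task], 1) for task in full_model}

drops = {name: accuracy_drop(full, v) for name, v in ablations.items()}
for name, per_task in drops.items():
    print(name, per_task)
```

In both ablations the language task loses the most accuracy and the biometric task the least, and removing the convolutional encoder costs more than removing the transformer on every task.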

5. Conclusions

Funding

Acknowledgments


| Task | Accuracy (%) |
|---|---|
| Biometric Identification | 93.9 |
| Stimulus Language Classification | 91.6 |
| Device Modality Classification | 92.4 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).