Submitted: 08 August 2025

Posted: 11 August 2025

Abstract

Keywords:

1. Introduction

1.1. Research Questions (RQs)

- RQ-1. How is the status of AI models in this field now influenced by historical advancements and theoretical bases in sentiment analysis and cross-lingual transfer learning?

- RQ-2. What are the main obstacles and recent advances in the use of AI models for sentiment analysis using cross-lingual transfer learning approaches?

- RQ-3. What are the implications and uses of cross-lingual sentiment analysis in various fields, and how can AI-powered methodologies influence sentiment interpretation in multilingual environments?

1.2. Research Objectives

- To thoroughly examine the problems and current advances in the usage of AI models for sentiment analysis using cross-lingual transfer learning methodologies.

- To examine the current challenges encountered and creative solutions produced in the application of AI models for sentiment analysis across many languages.

- To give context and explanations for the theoretical basis and historical evolution of sentiment analysis and cross-lingual transfer learning.

- To help readers comprehend the historical backdrop and fundamental concepts that have affected the evolution of sentiment analysis and cross-lingual transfer learning, emphasizing their importance in AI research.

- To investigate the larger implications and uses of cross-lingual sentiment analysis across diverse domains, emphasizing the importance of AI-driven methodologies in a globally linked digital world.

- Comprehensive Analysis: The study analyzes in detail the difficulties and recent advancements in applying artificial intelligence models to sentiment analysis with cross-lingual transfer learning approaches.

- Contextualization and Theoretical Foundations: By providing contextual background and explanation, this study helps readers understand the theoretical foundations and historical development of sentiment analysis and cross-lingual transfer learning, as well as the importance of AI models in these fields.

- Latest Developments and Approaches: This research contributes to the body of knowledge on state-of-the-art methods in sentiment analysis and cross-lingual transfer learning by investigating the most recent developments and innovative approaches in these domains.

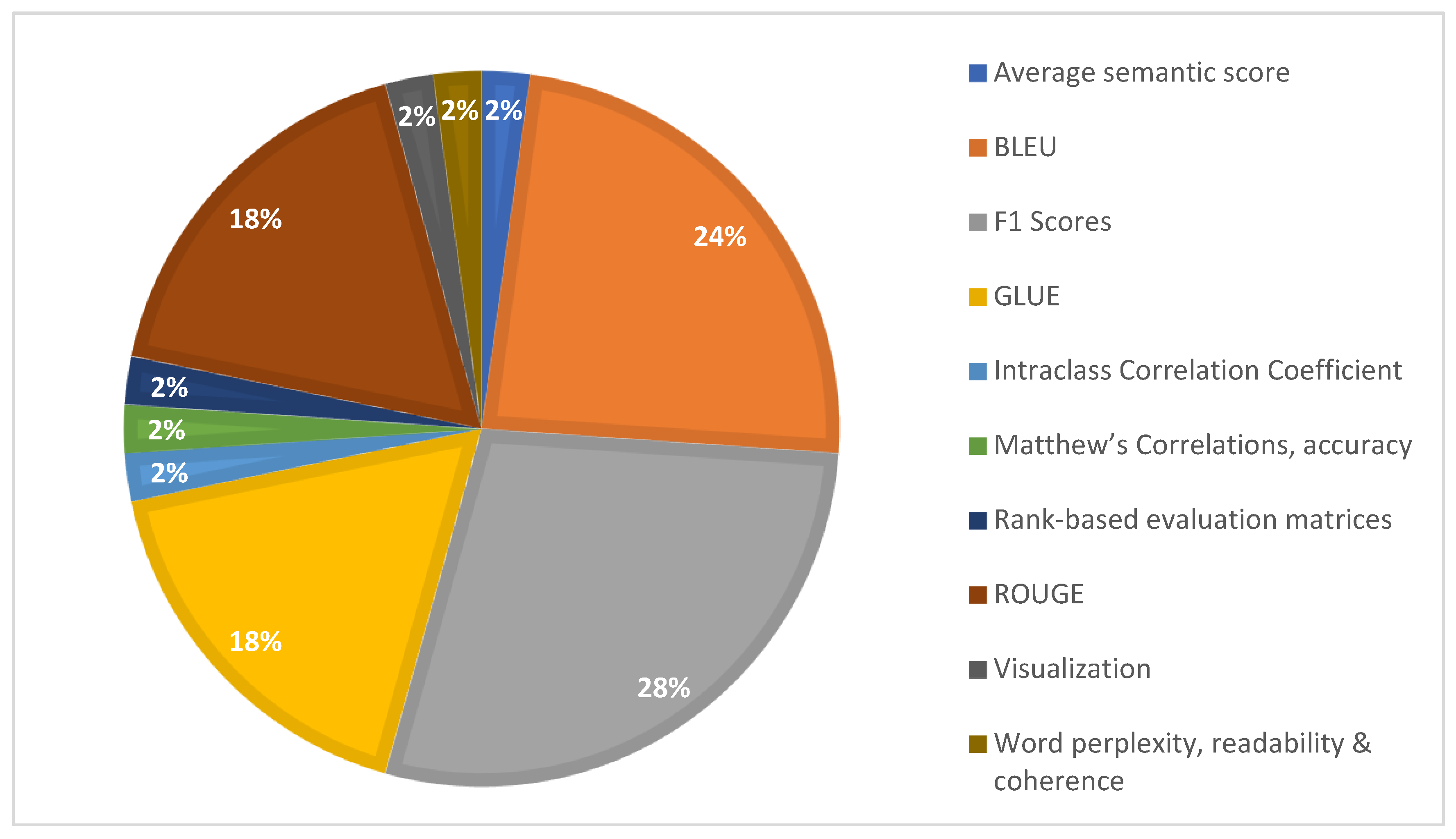

- Analysis of Research Methodologies and Evaluation Standards: This work analyzes research methodologies and develops evaluation standards. It points out emerging patterns and enduring problems in sentiment analysis and cross-lingual transfer learning studies and offers insight into the methodological strategies employed in these fields.

- Consequences in Different Domains: By exploring the implications of cross-lingual sentiment analysis across various fields, the study clarifies the broader applications of AI-driven techniques for interpreting sentiments expressed in multilingual environments and highlights the significance of this field in the globally interconnected digital world.

1.3. Motivation and Significance

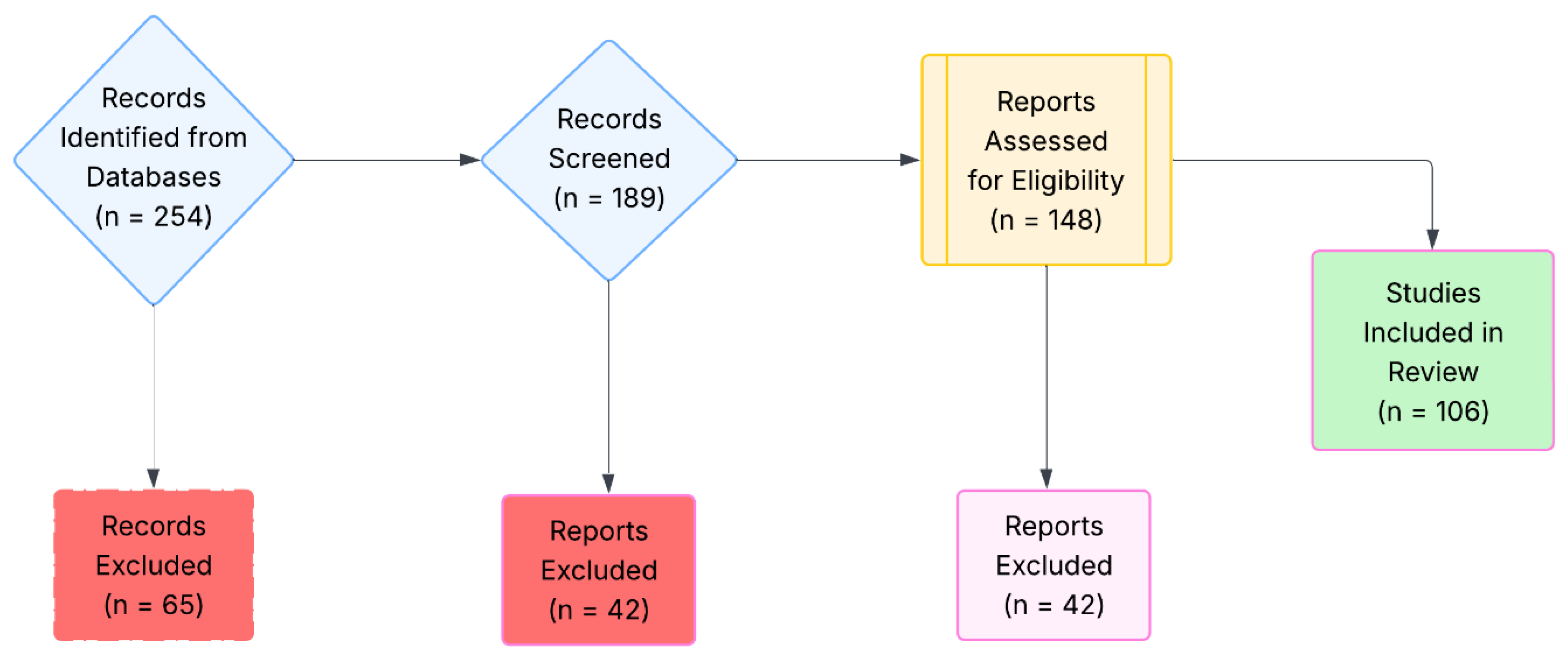

2. Review Methodology

- RQ-1. “What are the key stages in the evolution of AI models used for cross-lingual sentiment analysis, and how have changes in model architectures and evaluation metrics shaped the field over time?”

3. Background and Related Work

3.1. Sentiment Analysis

3.2. Cross-Lingual Transfer Learning

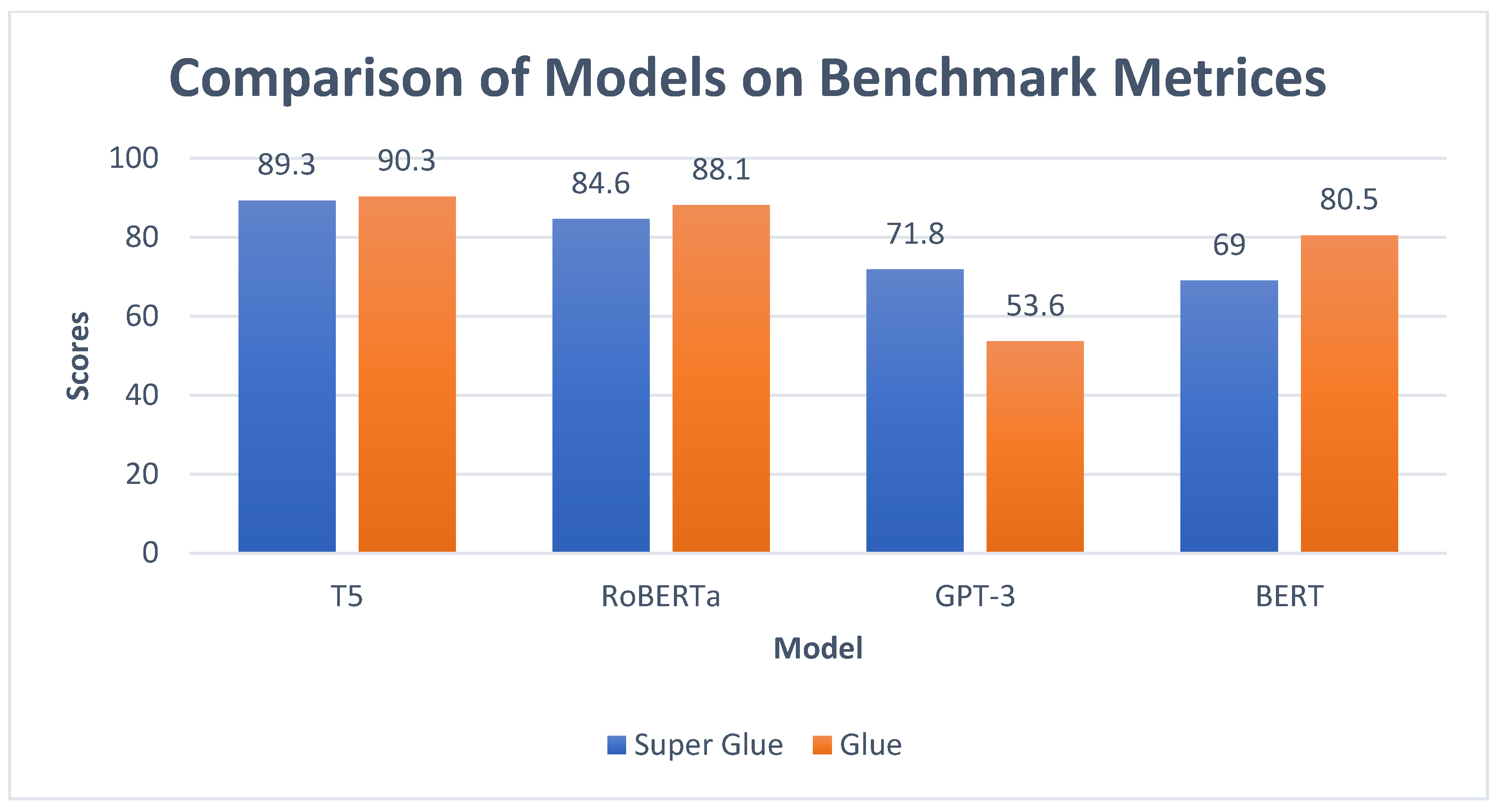

3.3. Artificial Intelligence

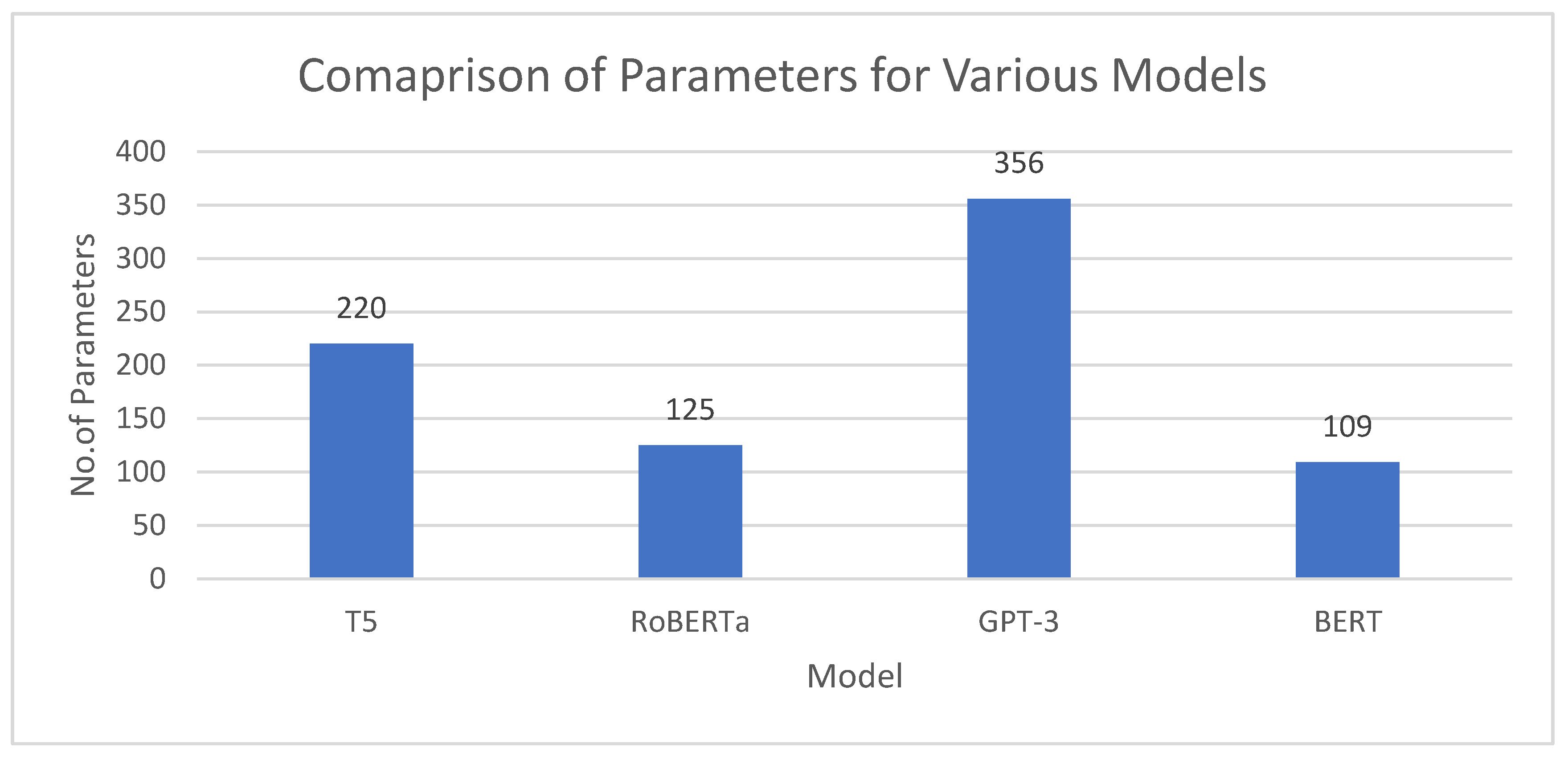

3.3.1. The Generative Pre-Trained Transformer 3 (GPT-3)

3.3.2. GPT-2

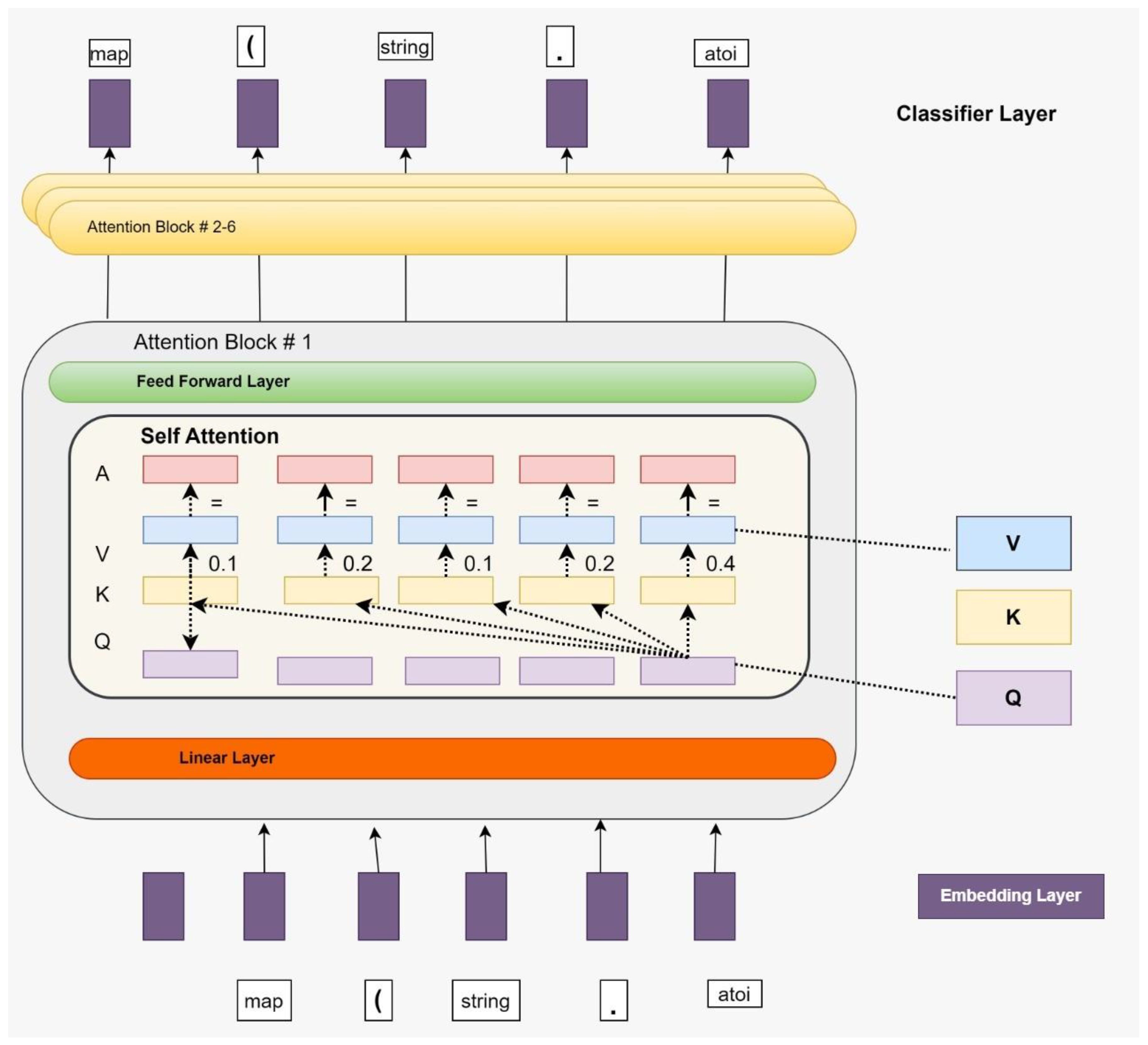

3.3.3. Bidirectional Encoder Representations from Transformers (BERT)

3.3.4. XLNet

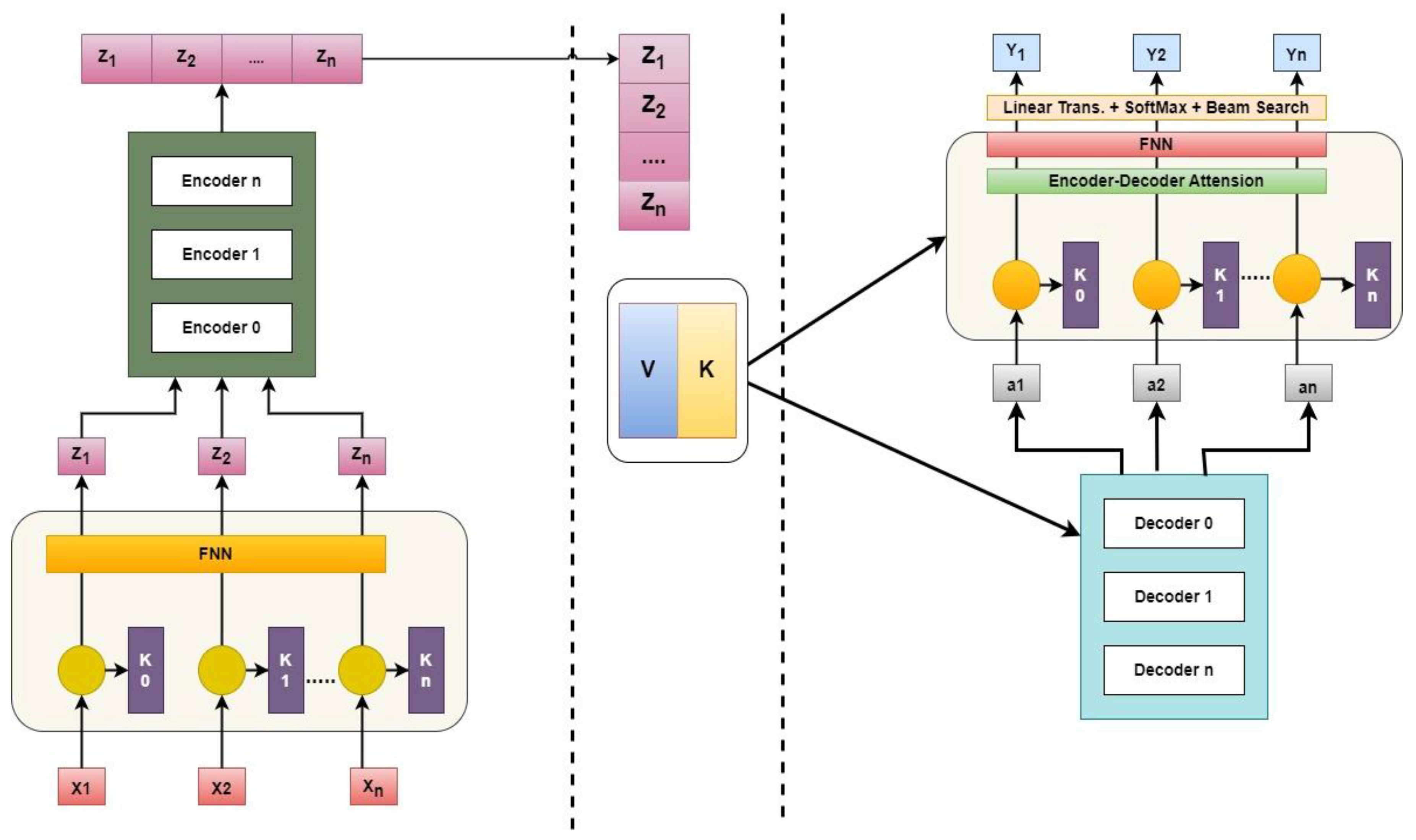

3.3.5. Transformers

3.4. Discussion

- RQ-2. “How is the status of AI models in this field now influenced by historical advancements and theoretical bases in sentiment analysis and cross-lingual transfer learning?”

4. Foundations of Cross-Lingual Transfer Learning

- RQ-3. “What are the main obstacles and recent advances in the use of AI models for sentiment analysis using cross-lingual transfer learning approaches?”

5. State-of-the-Art Models and Techniques

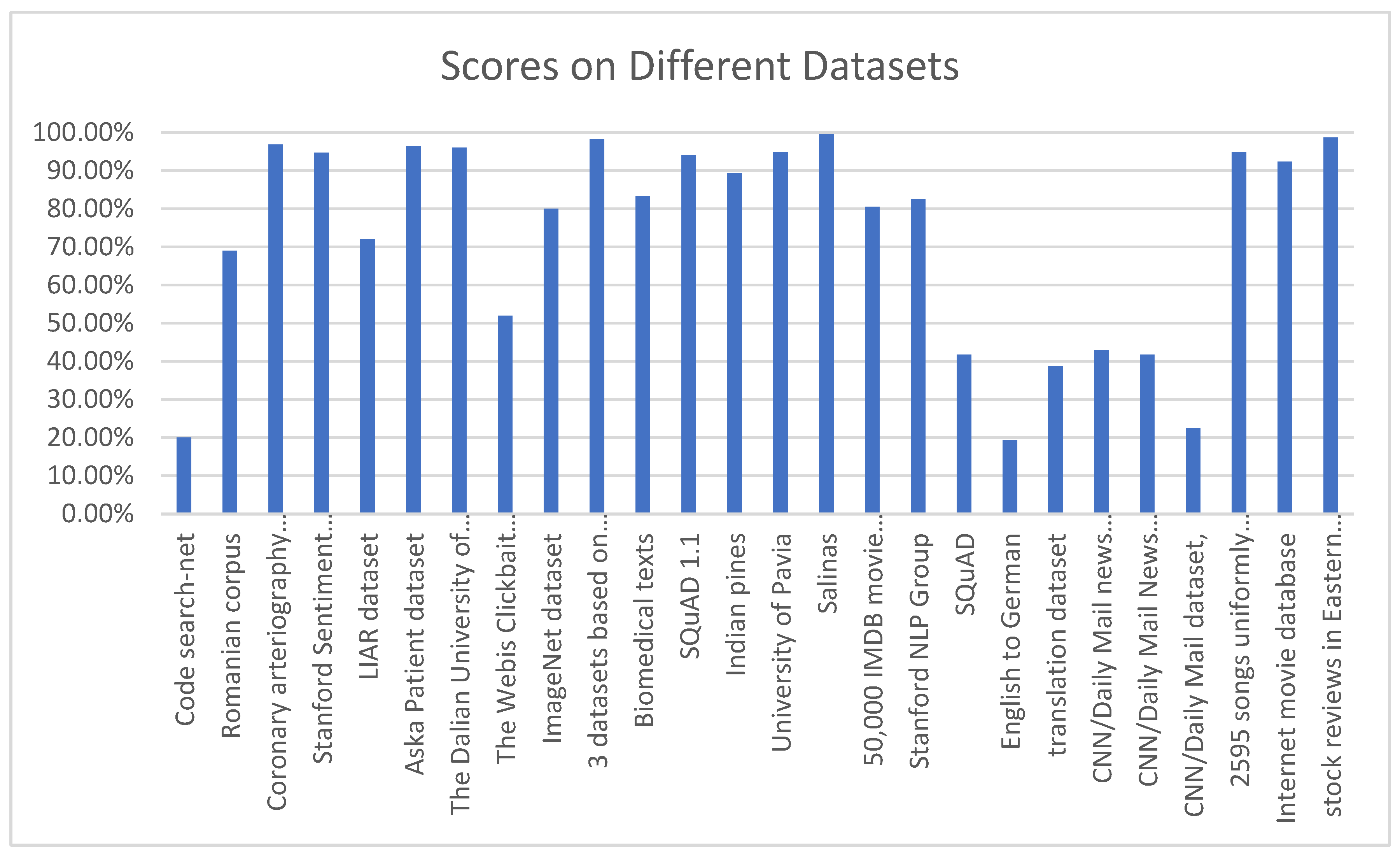

| Publications | Model | Technique | Dataset | Evaluation Metric | Results |
|---|---|---|---|---|---|
| [46] | BERT | NLP | Stanford Sentiment Treebank (SST) | F1 score | 94.7% |
| [57] | BERT | Syntax-infused Transformer, syntax-infused BERT | WMT ’14 EN-DE dataset | BLEU, GLUE benchmark | BERT performs better |
| [66] | BERT | SentiWordNet, LR, and LSTM | 50,000 IMDB movie reviews | GLUE | 80.5% |
| [53] | Transformer | Hierarchical graph-based transformer | 3 benchmark datasets | Rank-based evaluation metrics | Outperforms other deep learning models |
| [27] | Transformer with Bi-LSTM (TRANS-BLSTM) | BERT, bidirectional LSTM, RNN, and transformer | SQuAD 1.1, GLUE | F1 score | 94.01% |
| [69] | BERT | LASO (Listen Attentively, and Spell Once) | AISHELL-1 and AISHELL-2 | Visualization | Speedup of about 50× and competitive performance |
| [70] | BERT | BERT-base, BERT-mix-up | GLUE benchmark | Matthews correlation, accuracy | BERT-mix-up outperforms |
| [24] | XLNet | FNN (feed-forward neural network) | LIAR dataset | F1 score, precision, recall | 72% |
| [51] | XLNet | Bidirectional Long Short-Term Memory (Bi-LSTM) | AskaPatient dataset | F-measure | 96.4% |
| [71] | XLNet | Smooth algorithm | Webis Clickbait Corpus (Webis-Clickbait-17) | F1 score | 52% |
| [43] | GPT-2 | RNN, GPT-2 | 13,850 entities of post-reply pairs | Word perplexity, readability, and coherence | GPT-2 outperforms RNN |
| [26] | GPT-2 | RoGPT2 | Romanian corpus | BLEU | F0.5: 69.01 |
| [72] | GPT-2 | NLP | Biomedical texts | F1 score | 3.43 |
| [73] | GPT-2 | SP-GPT2, GPT2-SL, and GPT2-WL | Luc-Bat dataset | Average semantic score | SP-GPT2 outperforms |
| [74] | XLNet | EXLNet-BG-Att-CNN | The Dalian University of Technology | F1 score, precision, recall | 96.05% |
| [40] | GPT-3 | Talking child avatar | Interview data | Intraclass correlation coefficient | GPT-3 outperforms |
| [75] | GPT-3 | Codex | CodeSearchNet | BLEU | 20.6 |
| [76] | GPT-2 | Variational RNN | 3 datasets based on BiLSTM and DistilBERT and a combination of them | Precision, recall, F1 score | 98.3% |
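The table above reports results mostly as F1 score, precision, recall, and Matthews correlation. As a reminder of how these figures relate to one another, the following is a minimal sketch for the binary case. The function name `binary_metrics` and the toy labels are assumptions made for illustration; they are not taken from any of the surveyed papers.

```python
import math


def binary_metrics(y_true, y_pred):
    """Precision, recall, F1, and Matthews correlation for 0/1 labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # true positives
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false positives
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # false negatives
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # true negatives

    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    # F1 is the harmonic mean of precision and recall.
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    # Matthews correlation uses all four cells of the confusion matrix.
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return {"precision": precision, "recall": recall, "f1": f1, "mcc": mcc}


# Toy labels (made up for illustration): 1 = positive, 0 = negative.
gold = [1, 1, 1, 0, 0, 0]
pred = [1, 1, 0, 1, 0, 0]
scores = binary_metrics(gold, pred)
print(scores)
```

Because F1 ignores true negatives while Matthews correlation does not, the two can rank models differently on imbalanced sentiment data, which is one reason scores from different rows of the table are not directly comparable.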

| Publication | Technique | Contribution |
|---|---|---|
| [101] | GloVe | The embedding is pre-trained by incorporating statistical word co-occurrence data. |
| [102] | SENTIX | A sentiment-aware language model (SENTIX) was pre-trained with four distinct pretraining objectives to extract and consolidate domain-invariant sentiment information. |
| [103] | FastText | Subword information is incorporated during pretraining to enhance the performance of word embeddings. |
| [104] | SOWE | The word embedding is pre-trained using a sentiment lexicon specific to the financial domain. |
| [105] | AEN-BERT | An attentional encoder network is proposed to enhance the alignment between the target and context words. |
| [106] | BERT | BERT is fine-tuned to improve its performance on emotion detection. |
| [107] | BERT-emotion | An ensemble of BERT with bi-LSTM and capsule models is proposed to improve emotion detection. |
| [108] | BERT-Pair-QA/NLI | An auxiliary sentence is constructed to convert ABSA (Aspect-Based Sentiment Analysis) into a sentence-pair classification task. |
| [109] | BERT-PT | BERT was fine-tuned using domain-specific review data and machine reading comprehension data. |
| [110] | DialogueGCN | Graph embedding techniques are used to address the challenges of context propagation in RNNs. |
| [111] | DialogueRNN | The emotional dynamics associated with a given speech are analyzed and modeled, with a focus on the emotional dynamics of each utterance. |
| [112] | GCN | A dependency tree is used to exploit structural syntactic information. |
| [113] | HAGAN | Ensemble BERT is combined with hierarchical attention generative adversarial networks (GANs) to distill sentiment-distinct yet domain-indistinguishable representations. |
| [114] | mBERT | BERT was pre-trained on the Wikipedia editions of 104 languages as the foundational corpus. |
| [115] | mBERT-SL | The prediction output of mBERT is used as supplementary input for fine-tuning a multilingual model. |
| [116] | XLM | Cross-lingual generalization is improved by combining supervised cross-lingual and unsupervised monolingual pretraining objectives. |
| [117] | BAT | Adversarial training is applied during fine-tuning to improve the performance of BERT. |
| [118] | BERT | Inter-modality and intra-modality attention mechanisms are proposed to capture incongruent information more effectively. |
| [119] | BERT | The integration of image and text representations was improved with a bridge layer and a 2D-intra-attention layer. |
| [80] | BERT-ADA | BERT was fine-tuned after post-training and further enhanced by incorporating more data from several domains. |
| [120] | BERT-DAAT | After pretraining, BERT underwent post-training in which target-domain masked language model tasks and a domain-distinguishing task were used to extract domain-specific features. |
| [121] | CM-BERT | Masked multimodal attention was used to adjust the word embeddings by incorporating non-verbal cues. |
| [122] | HFM | A hierarchical fusion approach is proposed to reconstruct the features across three modalities. |
| [98] | ICCN | The outer-product pair was used in conjunction with deep canonical correlation analysis to enhance the fusion of multimodal data. |
| [123] | MAG-BERT | A multimodal adaptation gate (MAG) is proposed to improve the incorporation of multimodal information into a pre-trained model during fine-tuning. |
| [124] | MISA | Modality-invariant and modality-specific mechanisms were employed to improve multimodal fusion. |
| [125] | Multitask training-BERT | The performance of a multitask learning framework is significantly affected when learning is asynchronous across subtasks. |
| [126] | SDGCN-BERT | A graph convolutional network is introduced to capture the sentiment interdependencies among multiple aspects. |
| [127] | WTN | An ensemble BERT model is combined with a Wasserstein distance module to improve the quality of domain-invariant features. |
| [128] | BERT | An ensemble BERT model is combined with a contrastive learning framework to improve the representation for domain adaptation. |
| [129] | CG-BERT | Context-aware information was used to improve the identification of the target-aspect pair. |
| [130] | CL-BERT | Contrastive learning was incorporated into BERT to exploit emotion polarity and patterns. |
| [131] | CLIM | Contrastive learning was integrated into BERT to better capture domain-invariant and task-discriminative features. |
| [132] | Knowledge-enabled BERT | External sentiment domain knowledge was incorporated into the BERT model. |
| [133] | P-SUM | Two simple modules, parallel aggregation and hierarchical aggregation, are proposed to exploit the latent capabilities of the hidden layers in BERT. |
| [134] | SpanEmo | Emotion classification is framed as a span-prediction problem. |
| [135] | SSNM | The interpretability of CDSA was improved by using both pivot and non-pivot information. |
| [136] | BERT-AAD | Catastrophic forgetting was addressed by combining adversarial adaptation with distillation. |
| [137] | BertMasker | Domain-invariant features are learned by identifying domain-related tokens and masking them. |
| [138] | BiERU | The proposed emotional recurrent unit (ERU) aims to improve the extraction of contextual compositional and emotional features in conversational emotion detection. |
| [139] | PD-RGAT | The phrase dependency graph was used in the Graph Convolutional Network (GCN) to exploit both short and long dependencies among words. |
| [140] | – | Sarcasm detection was performed by directly fine-tuning several pre-trained models. |
| [141] | – | Discussed the taxonomy of sentiment analysis in detail and proposed a mechanism for the Bombay Stock Exchange, targeting SENSEX and NIFTY data for classification. |
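The BERT-Pair-QA/NLI row above describes turning aspect-based sentiment analysis into sentence-pair classification by constructing an auxiliary sentence. The sketch below shows only that data-preparation idea. The function name `build_sentence_pairs`, the question template, and the example review are assumptions chosen for illustration and should not be read as the exact format used in [108].

```python
def build_sentence_pairs(review, aspects,
                         template="what do you think of the {aspect} ?"):
    """Pair a review with one auxiliary question per aspect.

    Each (review, question) pair can then be passed to any sentence-pair
    classifier, which predicts the polarity for that single aspect.
    The template is a hypothetical example, not a fixed standard.
    """
    return [(review, template.format(aspect=aspect)) for aspect in aspects]


review = "The battery lasts long but the screen is dim."
pairs = build_sentence_pairs(review, ["battery", "screen"])
for sentence_a, sentence_b in pairs:
    print(sentence_a, "|", sentence_b)
```

One review with two aspects therefore yields two classification instances, so a single sentence can receive a positive label for one aspect and a negative label for another.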

| Publications | Model | Characteristics | Datasets | Languages |
|---|---|---|---|---|
| [142] | Density-driven projection | Cross-lingual clusters are obtained and lexical information is transferred using translation dictionaries produced from parallel corpora. | Google universal treebank; Wikipedia data; Bible data; Europarl | MS-en, MS-de, MS-es, MS-fr, MS-it, MS-pt, MS-sv |
| [143] | RBST | The language disparity is represented as a static transfer vector between the source language and the target language for each polarity. | Amazon product reviews; 5 million Chinese posts from Weibo | en-ch |
| [144] | Adversarial Deep Averaging Network (ADAN) | The proposed language-adversarial training technique consists of three components: a feature extractor, a language discriminator, and a sentiment classifier. | Yelp reviews; Chinese hotel reviews; BBN Arabic Sentiment Analysis dataset | ch-en, en-ar |
| [145] | Attention-based CNN model with adversarial cross-lingual learning | To address the limited personal data in the Sina Weibo and Twitter datasets, language-independent features are extracted. | Twitter | en-ch (Twitter), en-ch (Weibo) |
| [146] | Bilingual-SGNS | The SGNS algorithm generates word embedding vectors for two languages, which are then aligned to a shared space. This alignment enables fine-grained aspect-level sentiment analysis. | ABSA dataset in Hindi; English dataset of SemEval-2014 | en-hi |
| [147] | BLSE | A compact bilingual dictionary and annotated sentiment data from the source language are used to derive a bilingual translation matrix that incorporates sentiment information. | OpeNER; MultiBooked dataset | en-es, en-ca, en-eu |
| [148] | DC-CNN | Latent sentiment information is incorporated into Cross-Lingual Word Embeddings (CLWE) using an annotated bilingual parallel corpus. | SST; TA; AC; SE16-T5; AFF | en-es, en-nl, en-ru, en-de, en-cs, en-it, en-fr, en-ja |
| [149] | VecMap | The unsupervised model VecMap can be used to generate the initial solution, eliminating the reliance on small seed dictionaries. | Public English-Italian dataset; Europarl; OPUS | en-it, en-de, en-fi, en-es |
| [150] | Ermes | Emojis serve as an additional source of sentiment supervision for learning sentiment-aware word representations. | Amazon review dataset; Tweets | en-ja, en-fr, en-de |
| [151] | SVM; SNN; BiLSTM | The word order of the target language is modified to improve short-text sentiment analysis. | OpeNER corpora; Catalan MultiBooked dataset | en-es, en-ca |
| [152] | Language Invariant Sentiment Analyzer (LISA) | Adversarial training across several resource-rich languages is used to transfer sentiment information to a low-resource language. | Amazon Review Dataset; Sentiraama Dataset | en-de, en-fr, en-jp |
| [153] | NBLR+POSwemb; LSTM | Generative Adversarial Networks (GANs) are used for the initial pre-training of word embeddings, followed by fine-tuning. | OpeNER; NLP&CC 2013 CLSC | en-es, en-ca, en-eu |
| [154] | TL-AAE-BiGRU | Automatic Alignment Extraction (AAE) is used to acquire bilingual parallel text and then align both languages within a shared vector space through a linear translation matrix. | Product comments on Amazon | en-ch, en-de |
| [155] | Conditional Language Adversarial Network (CLAN) | The model is improved with conditional adversarial training, in which both the language model and the discriminator are trained to maximize their performance. | Webis-CLS-10 dataset | en-de, en-fr, en-jp |
| [33] | ATTM | The training samples are further refined. | COAE2014 | ch-de, ch-en, ch-fr, ch-es |
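Several rows in the table above (for example BLSE and TL-AAE-BiGRU) rely on a linear translation matrix that maps source-language word vectors into a shared space. The following is a minimal sketch of that general idea, assuming a tiny seed dictionary of 2-dimensional vectors and a plain least-squares objective fitted by gradient descent. The function names, the toy vectors, and the learning-rate settings are illustrative assumptions; none of the surveyed systems is reproduced here, and a practical implementation would normally use a linear-algebra library.

```python
def translate(vec, matrix):
    """Map a source-language row vector into the target space: vec @ matrix."""
    return [sum(vec[i] * matrix[i][j] for i in range(len(vec)))
            for j in range(len(matrix[0]))]


def fit_translation_matrix(src_vecs, tgt_vecs, lr=0.1, steps=500):
    """Fit W minimizing 0.5 * sum ||x W - y||^2 over seed-dictionary pairs."""
    dim_s, dim_t = len(src_vecs[0]), len(tgt_vecs[0])
    w = [[0.0] * dim_t for _ in range(dim_s)]
    for _ in range(steps):
        grad = [[0.0] * dim_t for _ in range(dim_s)]
        for x, y in zip(src_vecs, tgt_vecs):
            err = [p - t for p, t in zip(translate(x, w), y)]
            for i in range(dim_s):
                for j in range(dim_t):
                    grad[i][j] += x[i] * err[j]  # gradient of the squared error
        for i in range(dim_s):
            for j in range(dim_t):
                w[i][j] -= lr * grad[i][j]
    return w


# Hypothetical seed dictionary: each source vector paired with its translation.
# The target space here is the source space rotated by 90 degrees.
source = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
target = [[0.0, 1.0], [-1.0, 0.0], [-1.0, 1.0]]

w = fit_translation_matrix(source, target)
mapped = translate([2.0, 3.0], w)  # a word that is not in the seed dictionary
print([round(v, 3) for v in mapped])
```

Once the matrix is fitted on the seed pairs, any source-language vector can be projected into the target space, which is what lets a sentiment classifier trained in one language be applied to another.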

5.1. Strengths and Limitations of Existing Techniques

5.1.1. Strengths

5.1.2. Limitations

6. Limitations and Challenges

6.1. Zero-Shot Learning

6.2. Data Augmentation

6.3. Language Divergence

6.4. Domain Adaptation

6.5. Cultural Effects

- RQ-4. “What are the implications and uses of cross-lingual sentiment analysis in various fields, and how can AI-powered methodologies influence sentiment interpretation in multilingual environments?”

7. Impacts of Cross-Lingual Sentiment Analysis

7.1. Customer Review Analysis

7.2. Social Media Monitoring

7.3. Public Sentiments Analysis

7.4. Healthcare Domain

7.5. Financial News Analysis

7.6. Online Platforms Marketing Analysis

8. Open Research Questions in Cross-Lingual Sentiment Analysis

- Data Scarcity in Low-Resource Languages: Many models perform poorly because of a lack of labeled data, especially for morphologically rich or minority languages.

- Domain Adaptability: Cross-domain transferability remains weak. Models trained on product reviews often fail in healthcare or disaster communication domains.

- Bias and Fairness: Biases inherent in training data can be amplified across languages. Few studies address fairness in multilingual AI systems.

- Explainability: Most models are black-box in nature, making it difficult to understand or debug misclassifications across languages.

- Evaluation Standards: The field lacks consistent benchmarks and multilingual datasets, complicating model comparison and progress tracking.
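The data-scarcity and evaluation-standards points above both suggest reporting scores separately for each language instead of a single pooled figure. The sketch below shows one way this could be done, assuming predictions are available as `(language, gold_label, predicted_label)` records. The function names and the toy records are hypothetical and are not drawn from any benchmark.

```python
from collections import defaultdict


def macro_f1(gold, pred):
    """Unweighted mean of per-label F1 over all labels seen in gold or pred."""
    labels = sorted(set(gold) | set(pred))
    scores = []
    for label in labels:
        tp = sum(1 for g, p in zip(gold, pred) if g == label and p == label)
        fp = sum(1 for g, p in zip(gold, pred) if g != label and p == label)
        fn = sum(1 for g, p in zip(gold, pred) if g == label and p != label)
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores) if scores else 0.0


def per_language_macro_f1(records):
    """Group (language, gold, pred) records and score each language alone."""
    by_lang = defaultdict(lambda: ([], []))
    for lang, gold, pred in records:
        by_lang[lang][0].append(gold)
        by_lang[lang][1].append(pred)
    return {lang: macro_f1(g, p) for lang, (g, p) in by_lang.items()}


# Toy records (made up): a high-resource and a low-resource language.
records = [
    ("en", "pos", "pos"), ("en", "neg", "neg"),
    ("en", "pos", "pos"), ("en", "neg", "neg"),
    ("hi", "pos", "pos"), ("hi", "neg", "pos"),
    ("hi", "pos", "pos"), ("hi", "neg", "neg"),
]
report = per_language_macro_f1(records)
print(report)
```

A pooled score over these eight toy records would hide the fact that all of the errors fall on one language, which is the kind of gap a per-language breakdown is meant to expose.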

9. Future Directions and Opportunities

9.1. Model Compression for Resource-Limited Environments

9.2. Ethical and Equitable AI in Multilingual Contexts

9.3. Practical Implementations and Case Analyses

9.4. Multimodal Sentiment Analysis

9.5. Creation of the Benchmark Dataset

10. Conclusions

References

- De Arriba, A.; Oriol, M.; Franch, X. Applying Transfer Learning to Sentiment Analysis in Social Media. Proc. IEEE Int. Conf. Requir. Eng. 2021, 2021, 342–348. [Google Scholar] [CrossRef]

- Agüero-Torales, M.M.; Abreu Salas, J.I.; López-Herrera, A.G. Deep learning and multilingual sentiment analysis on social media data: An overview. Appl. Soft Comput. 2021, 107, 107373. [Google Scholar] [CrossRef]

- Ostendorff, M.; Rehm, G. Efficient Language Model Training through Cross-Lingual and Progressive Transfer Learning. arXiv 2023, arXiv:2301.09626. [Google Scholar]

- Garner, B.; Thornton, C.; Luo Pawluk, A.; Mora Cortez, R.; Johnston, W.; Ayala, C. Utilizing text-mining to explore consumer happiness within tourism destinations. J. Bus. Res. 2022, 139, 1366–1377. [Google Scholar] [CrossRef]

- Larsen, A.B.L.; Sønderby, S.K.; Larochelle, H.; Winther, O. Autoencoding beyond pixels using a learned similarity metric. Proceedings of The 33rd International Conference on Machine Learning 2016, 48, 2341–2349. Available online: http://proceedings.mlr.press/v48/larsen16.html.

- Jusoh, S. A study on NLP applications and ambiguity problems. J. Theor. Appl. Inf. Technol. 2018, 96, 1486–1499. [Google Scholar]

- Singh, S.; Mahmood, A. The NLP Cookbook: Modern Recipes for Transformer Based Deep Learning Architectures. IEEE Access 2021, 9, 68675–68702. [Google Scholar] [CrossRef]

- Karita, S.; Wang, X.; Watanabe, S.; Yoshimura, T.; Zhang, W.; Chen, N.; Hayashi, T.; Hori, T.; Inaguma, H.; Jiang, Z.; et al. A Comparative Study on Transformer vs RNN in Speech Applications. In Proceedings of the 2019 IEEE Automatic Speech Recognition and Understanding Workshop (ASRU), Singapore, 14-18 December 2019; pp. 449–456. [Google Scholar] [CrossRef]

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention is all you need. Adv. Neural Inf. Process. Syst. 2017, 2017, 5999–6009. [Google Scholar]

- Zhao, L.; Li, L.; Zheng, X.; Zhang, J. A BERT based Sentiment Analysis and Key Entity Detection Approach for Online Financial Texts. In Proceedings of the 2021 IEEE 24th International Conference on Computer Supported Cooperative Work in Design (CSCWD), Dalian, China, 5-7 May 2021; pp. 1233–1238. [Google Scholar] [CrossRef]

- Devlin, J.; Chang, M.W.; Lee, K.; Toutanova, K. BERT: Pre-training of deep bidirectional transformers for language understanding. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Long and Short Papers); Association for Computational Linguistics, 2019; pp. 4171–4186. [Google Scholar] [CrossRef]

- Toppo, A.; Kumar, M. A Review of Generative Pretraining from Pixels. In Proceedings of the 2021 3rd International Conference on Advances in Computing, Communication Control and Networking (ICAC3N), Greater Noida, India, 17-18 December 2021; pp. 495–500. [Google Scholar] [CrossRef]

- Radford, A.; Wu, J.; Child, R.; Luan, D.; Amodei, D.; Sutskever, I. Language Models are Unsupervised Multitask Learners. OpenAI Blog 2019, 1, 9. [Google Scholar]

- Liu, Y.; Ott, M.; Goyal, N.; Du, J.; Joshi, M.; Chen, D.; Levy, O.; Lewis, M.; Zettlemoyer, L.; Stoyanov, V. RoBERTa: A Robustly Optimized BERT Pretraining Approach. arXiv 2019, arXiv:1907.11692. [Google Scholar]

- Raffel, C.; Shazeer, N.; Roberts, A.; Lee, K.; Narang, S.; Matena, M.; Zhou, Y.; Li, W.; Liu, P.J. Exploring the limits of transfer learning with a unified text-to-text transformer. J. Mach. Learn. Res. 2020, 21, 1–67. [Google Scholar]

- Wang, X.; Zhang, S.; Qing, Z.; Shao, Y.; Zuo, Z.; Gao, C.; Sang, N. OadTR: Online Action Detection with Transformers. In Proceedings of the 2021 IEEE/CVF International Conference on Computer Vision (ICCV), Montreal, QC, Canada, 10-17 October 2021; pp. 7545–7555. [Google Scholar] [CrossRef]

- Duan, H.K.; Vasarhelyi, M.A.; Codesso, M.; Alzamil, Z. Enhancing the government accounting information systems using social media information: An application of text mining and machine learning. Int. J. Account. Inf. Syst. 2023, 48, 100600. [Google Scholar] [CrossRef]

- Liang, B.; Su, H.; Gui, L.; Cambria, E.; Xu, R. Aspect-based sentiment analysis via affective knowledge enhanced graph convolutional networks. Knowledge-Based Syst. 2022, 235, 107643. [Google Scholar] [CrossRef]

- Prottasha, N.J.; Sami, A.A.; Kowsher, M.; Murad, S.A.; Bairagi, A.K.; Masud, M.; Baz, M. Transfer Learning for Sentiment Analysis Using BERT Based Supervised Fine-Tuning. Sensors 2022, 22, 1–19. [Google Scholar] [CrossRef]

- Deshpande, G.; Rokne, J. User Feedback from Tweets vs App Store Reviews: An Exploratory Study of Frequency, Timing and Content. In Proceedings of the 2018 5th International Workshop on Artificial Intelligence for Requirements Engineering (AIRE), Banff, AB, Canada, 21 August 2018; pp. 15–21. [Google Scholar] [CrossRef]

- Wu, S.; Liu, Y.; Zou, Z.; Weng, T.H. S_I_LSTM: stock price prediction based on multiple data sources and sentiment analysis. Conn. Sci. 2022, 34, 44–62. [Google Scholar] [CrossRef]

- Li, M.; Li, W.; Wang, F.; Jia, X.; Rui, G. Applying BERT to analyze investor sentiment in stock market. Neural Comput. Appl. 2021, 33, 4663–4676. [Google Scholar] [CrossRef]

- Kowsher, M.; Sami, A.A.; Prottasha, N.J.; Arefin, M.S.; Dhar, P.K.; Koshiba, T. Bangla-BERT: Transformer-Based Efficient Model for Transfer Learning and Language Understanding. IEEE Access 2022, 10, 91855–91870. [Google Scholar] [CrossRef]

- Kumar J, A.; Esther Trueman, T.; Cambria, E. Fake News Detection Using XLNet Fine-Tuning Model. In Proceedings of the 2021 International Conference on Computational Intelligence and Computing Applications (ICCICA), Nagpur, India, 26-27 November 2021. [Google Scholar] [CrossRef]

- Farahani, M.; Gharachorloo, M.; Farahani, M.; Manthouri, M. ParsBERT: Transformer-based Model for Persian Language Understanding. Neural Process. Lett. 2021, 53, 3831–3847. [Google Scholar] [CrossRef]

- Niculescu, M.A.; Ruseti, S.; Dascalu, M. RoGPT2: Romanian GPT2 for Text Generation. In Proceedings of the 2021 IEEE 33rd International Conference on Tools with Artificial Intelligence (ICTAI), Washington, DC, USA, 1-3 November 2021; pp. 1154–1161. [Google Scholar] [CrossRef]

- Huang, Z.; Xu, P.; Liang, D.; Mishra, A.; Xiang, B. TRANS-BLSTM: Transformer with Bidirectional LSTM for Language Understanding. arXiv 2020, arXiv:2003.07000. [Google Scholar]

- Beltagy, I.; Lo, K.; Cohan, A. SCIBERT: A pretrained language model for scientific text. In Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing and the 9th International Joint Conference on Natural Language Processing (EMNLP-IJCNLP); Association for Computational Linguistics, 2019. [Google Scholar] [CrossRef]

- Dabre, R.; Chu, C.; Kunchukuttan, A. A Survey of Multilingual Neural Machine Translation. ACM Comput. Surv. 2020, 53. [Google Scholar] [CrossRef]

- Kanclerz, K.; Milkowski, P.; Kocon, J. Cross-lingual deep neural transfer learning in sentiment analysis. Procedia Comput. Sci. 2020, 176, 128–137. [Google Scholar] [CrossRef]

- Boudad, N. Cross-Multilingual, Cross-Lingual and Monolingual Transfer Learning For Arabic Dialect Sentiment Classification. 2023; 1–10. [Google Scholar]

- Hettiarachchi, H.; Adedoyin-Olowe, M.; Bhogal, J.; Gaber, M.M. TTL: transformer-based two-phase transfer learning for cross-lingual news event detection. Int. J. Mach. Learn. Cybern. 2023, 14, 2739–2760. [Google Scholar] [CrossRef]

- Wang, D.; Wu, J.; Yang, J.; Jing, B.; Zhang, W.; He, X.; Zhang, H. Cross-Lingual Knowledge Transferring by Structural Correspondence and Space Transfer. IEEE Trans. Cybern. 2022, 52, 6555–6566. [Google Scholar] [CrossRef] [PubMed]

- Fuad, A.; Al-Yahya, M. Cross-Lingual Transfer Learning for Arabic Task-Oriented Dialogue Systems Using Multilingual Transformer Model mT5. Mathematics 2022, 10. [Google Scholar] [CrossRef]

- Kumar, A.; Albuquerque, V.H.C. Sentiment Analysis Using XLM-R Transformer and Zero-shot Transfer Learning on Resource-poor Indian Language. ACM Trans. Asian Low-Resource Lang. Inf. Process. 2021, 20, 90. [Google Scholar] [CrossRef]

- Zhang, L.; Fan, H.; Peng, C.; Rao, G.; Cong, Q. Sentiment analysis methods for HPV vaccines related tweets based on transfer learning. Healthcare 2020, 8, 307. [Google Scholar] [CrossRef]

- Ahmad, Z.; Jindal, R.; Ekbal, A.; Bhattacharyya, P. Borrow from rich cousin: transfer learning for emotion detection using cross lingual embedding. Expert Syst. Appl. 2020, 139, 112851. [Google Scholar] [CrossRef]

- Brown, T.B.; Mann, B.; Ryder, N.; Subbiah, M.; Kaplan, J.; Dhariwal, P.; Neelakantan, A.; Shyam, P.; Sastry, G.; Askell, A.; et al. Language Models are Few-Shot Learners. Adv. Neural Inf. Process. Syst. 2020, 2020, 1877–1901. [Google Scholar]

- Shihadeh, J.; Ackerman, M.; Troske, A.; Lawson, N.; Gonzalez, E. Brilliance Bias in GPT-3. In Proceedings of the 2022 IEEE Global Humanitarian Technology Conference (GHTC), Santa Clara, CA, USA, 8-11 September 2022; pp. 62–69. [Google Scholar] [CrossRef]

- Lammerse, M.; Hassan, S.Z.; Sabet, S.S.; Riegler, M.A.; Halvorsen, P. Human vs. GPT-3: The challenges of extracting emotions from child responses. In Proceedings of the 2022 14th International Conference on Quality of Multimedia Experience (QoMEX), Lippstadt, Germany, 5-7 September 2022. [Google Scholar] [CrossRef]

- Topal, M.O.; Bas, A.; van Heerden, I. Exploring Transformers in Natural Language Generation: GPT, BERT, and XLNet. arXiv 2021. [Google Scholar] [CrossRef]

- Lajko, M.; Csuvik, V.; Vidacs, L. Towards JavaScript program repair with Generative Pre-trained Transformer (GPT-2). In Proceedings of the ICSE '22: 44th International Conference on Software Engineering, Pittsburgh, PA, USA, 19 May 2022. [Google Scholar] [CrossRef]

- Li, C.; Xing, W. Natural Language Generation Using Deep Learning to Support MOOC Learners. Int. J. Artif. Intell. Educ. 2021, 31, 186–214. [Google Scholar] [CrossRef]

- Cui, Y.; Che, W.; Liu, T.; Qin, B.; Yang, Z. Pre-Training with Whole Word Masking for Chinese BERT. IEEE/ACM Trans. Audio Speech Lang. Process. 2021, 29, 3504–3514. [Google Scholar] [CrossRef]

- Wang, B.; Kuo, C.C.J. SBERT-WK: A Sentence Embedding Method by Dissecting BERT-Based Word Models. IEEE/ACM Trans. Audio Speech Lang. Process. 2020, 28, 2146–2157. [Google Scholar] [CrossRef]

- Munikar, M.; Shakya, S.; Shrestha, A. Fine-grained Sentiment Classification using BERT. In Proceedings of the 2019 Artificial Intelligence for Transforming Business and Society (AITB), Kathmandu, Nepal, 5 November 2019. [Google Scholar] [CrossRef]

- Yang, Z.; Dai, Z.; Yang, Y.; Carbonell, J.; Salakhutdinov, R.; Le, Q. V. XLNet: Generalized autoregressive pretraining for language understanding. Adv. Neural Inf. Process. Syst. 2019, 32. Available online: https://proceedings.neurips.cc/paper/2019/hash/dc6a7e655d7e5840e66733e9ee67cc69-Abstract.html.

- Dai, Z.; Yang, Z.; Yang, Y.; Carbonell, J.; Le, Q. V.; Salakhutdinov, R. Transformer-XL: Attentive language models beyond a fixed-length context. In Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics; Association for Computational Linguistics: Florence, Italy, 2019. [Google Scholar] [CrossRef]

- Arabadzhieva-Kalcheva, N.; Kovachev, I. Comparison of BERT and XLNet accuracy with classical methods and algorithms in text classification. In Proceedings of the 2021 International Conference on Biomedical Innovations and Applications (BIA), Varna, Bulgaria, 2-4 June 2022; pp. 74–76. [Google Scholar] [CrossRef]

- Habbat, N.; Anoun, H.; Hassouni, L. Combination of GRU and CNN deep learning models for sentiment analysis on French customer reviews using XLNet model. IEEE Eng. Manag. Rev. 2022, 1–9. [Google Scholar] [CrossRef]

- Sweidan, A.H.; El-Bendary, N.; Al-Feel, H. Sentence-Level Aspect-Based Sentiment Analysis for Classifying Adverse Drug Reactions (ADRs) Using Hybrid Ontology-XLNet Transfer Learning. IEEE Access 2021, 9, 90828–90846. [Google Scholar] [CrossRef]

- Alshahrani, A.; Ghaffari, M.; Amirizirtol, K.; Liu, X. Identifying Optimism and Pessimism in Twitter Messages Using XLNet and Deep Consensus. In Proceedings of the 2020 International Joint Conference on Neural Networks (IJCNN), Glasgow, UK, 19-24 July 2020. [Google Scholar] [CrossRef]

- Gong, J.; Ma, H.; Teng, Z.; Teng, Q.; Zhang, H.; Du, L.; Chen, S.; Bhuiyan, M.Z.A.; Li, J.; Liu, M. Hierarchical Graph Transformer-Based Deep Learning Model for Large-Scale Multi-Label Text Classification. IEEE Access 2020, 8, 30885–30896. [Google Scholar] [CrossRef]

- Kim, S.Y.; Ganesan, K.; Dickens, P.; Panda, S. Public sentiment toward solar energy—opinion mining of twitter using a transformer-based language model. Sustain. 2021, 13, 1–19. [Google Scholar] [CrossRef]

- Imamura, K.; Sumita, E. Recycling a pre-trained BERT encoder for neural machine translation. EMNLP-IJCNLP 2019 - Proc. 3rd Work. Neural Gener. Transl. 2019; 23–31. [Google Scholar] [CrossRef]

- Chan, Y.H.; Fan, Y.C. A recurrent BERT-based model for question generation. MRQA@EMNLP 2019 - Proc. 2nd Work. Mach. Read. Quest. Answering, 2019; 154–162. [Google Scholar] [CrossRef]

- Sundararaman, D.; Subramanian, V.; Wang, G.; Si, S.; Shen, D.; Wang, D.; Carin, L. Syntax-Infused Transformer and BERT models for Machine Translation and Natural Language Understanding. arXiv 2019, arXiv:1911.06156. [Google Scholar]

- Xenouleas, S.; Malakasiotis, P.; Apidianaki, M.; Androutsopoulos, I. SUM-QE: A BERT-based summary quality estimation model. EMNLP-IJCNLP 2019 - 2019 Conf. Empir. Methods Nat. Lang. Process. 9th Int. Jt. Conf. Nat. Lang. Process. Proc. Conf. 2019; 6005–6011. [Google Scholar] [CrossRef]

- Gillioz, A.; Casas, J.; Mugellini, E.; Khaled, O.A. Overview of the Transformer-based Models for NLP Tasks. Proc. 2020 Fed. Conf. Comput. Sci. Inf. Syst. FedCSIS 2020 2020, 21, 179–183. [Google Scholar] [CrossRef]

- Li, B.; Kong, Z.; Zhang, T.; Li, J.; Li, Z.; Liu, H.; Ding, C. Efficient transformer-based large scale language representations using hardware-friendly block structured pruning. Find. Assoc. Comput. Linguist. Find. ACL EMNLP 2020 2020, 3187–3199. [Google Scholar] [CrossRef]

- Shavrina, T.; Fenogenova, A.; Emelyanov, A.; Shevelev, D.; Artemova, E.; Malykh, V.; Mikhailov, V.; Tikhonova, M.; Chertok, A.; Evlampiev, A. RussianSuperGLUE: A Russian language understanding evaluation benchmark. EMNLP 2020 - 2020 Conf. Empir. Methods Nat. Lang. Process. Proc. Conf. 2020; 4717–4726. [Google Scholar] [CrossRef]

- Geerlings, C.; Meroño-Peñuela, A. Interacting with GPT-2 to Generate Controlled and Believable Musical Sequences in ABC Notation. Proc. 1st Work. NLP Music Audio, 2020; 49–53. Available online: https://www.aclweb.org/anthology/2020.nlp4musa-1.10.

- Guo, J.; Zhang, Z.; Xu, L.; Wei, H.R.; Chen, B.; Chen, E. Incorporating BERT into parallel sequence decoding with adapters. Adv. Neural Inf. Process. Syst. 2020, 33, 10843–10854. [Google Scholar]

- Ramina, M.; Darnay, N.; Ludbe, C.; Dhruv, A. Topic level summary generation using BERT induced Abstractive Summarization Model. Proc. Int. Conf. Intell. Comput. Control Syst. ICICCS 2020 2020, 747–752. [Google Scholar] [CrossRef]

- Savelieva, A.; Au-Yeung, B.; Ramani, V. Abstractive summarization of spoken and written instructions with BERT. CEUR Workshop Proc. 2020, 2666. [Google Scholar] [CrossRef]

- Alaparthi, S.; Mishra, M. Bidirectional Encoder Representations from Transformers (BERT): A sentiment analysis odyssey. arXiv 2020, arXiv:2007.01127. [Google Scholar]

- Aaditya, M.D.; Lal, D.M.; Singh, K.P.; Ojha, M. Layer Freezing for Regulating Fine-tuning in BERT for Extractive Text Summarization. PACIS, 2021; 182. Available online: https://scholar.archive.org/work/ezki3fvotva2bovduugg4mdlmq/access/wayback/.

- Agrawal, Y.; Shanker, R.G.R.; Alluri, V. Transformer-Based Approach Towards Music Emotion Recognition from Lyrics. Lect. Notes Comput. Sci. (including Subser. Lect. Notes Artif. Intell. Lect. Notes Bioinformatics), 2021; 12657, 167–175. [Google Scholar] [CrossRef]

- Bai, Y.; Yi, J.; Tao, J.; Tian, Z.; Wen, Z.; Zhang, S. Fast End-to-End Speech Recognition Via Non-Autoregressive Models and Cross-Modal Knowledge Transferring from BERT. IEEE/ACM Trans. Audio Speech Lang. Process. 2021, 29, 1897–1911. [Google Scholar] [CrossRef]

- Sun, L.; Xia, C.; Yin, W.; Liang, T.; Yu, P.S.; He, L. Mixup-Transformer: Dynamic Data Augmentation for NLP Tasks. COLING 2020 - 28th Int. Conf. Comput. Linguist. Proc. Conf. 2020; 3436–3440. [Google Scholar] [CrossRef]

- Rajapaksha, P.; Farahbakhsh, R.; Crespi, N. BERT, XLNet or RoBERTa: The Best Transfer Learning Model to Detect Clickbaits. IEEE Access 2021, 9, 154704–154716. [Google Scholar] [CrossRef]

- Schneider, E.T.R.; De Souza, J.V.A.; Gumiel, Y.B.; Moro, C.; Paraiso, E.C. A GPT-2 language model for biomedical texts in Portuguese. Proc. - IEEE Symp. Comput. Med. Syst. 2021; 2021, 474–479. [CrossRef]

- Nguyen, P.; Pham, H.; Bui, T.; Nguyen, T.; Luong, D. SP-GPT2: Semantics Improvement in Vietnamese Poetry Generation. Proc. - 20th IEEE Int. Conf. Mach. Learn. Appl. ICMLA 2021, 2021; 1576–1581. [Google Scholar] [CrossRef]

- Xu, Z.Z.; Liao, Y.K.; Zhan, S.Q. XLNet Parallel Hybrid Network Sentiment Analysis Based on Sentiment Word Augmentation. 2022 7th Int. Conf. Comput. Commun. Syst. ICCCS 2022 2022, 63–68. [CrossRef]

- Khan, J.Y.; Uddin, G. Automatic Code Documentation Generation Using GPT-3. arXiv, 2022. [Google Scholar] [CrossRef]

- Demirci, D.; Sahin, N.; Sirlancis, M.; Acarturk, C. Static Malware Detection Using Stacked BiLSTM and GPT-2. IEEE Access 2022, 10, 58488–58502. [CrossRef]

- Tu, L.; Qu, J.; Yavuz, S.; Joty, S.; Liu, W.; Xiong, C.; Zhou, Y. Efficiently Aligned Cross-Lingual Transfer Learning for Conversational Tasks using Prompt-Tuning. EACL 2024 - 18th Conf. Eur. Chapter Assoc. Comput. Linguist. Find. EACL 2024, 2024; 1278–1294. arXiv:2304.01295. [Google Scholar]

- Zhao, C.; Wu, M.; Yang, X.; Zhang, W.; Zhang, S.; Wang, S.; Li, D. A Systematic Review of Cross-Lingual Sentiment Analysis: Tasks, Strategies, and Prospects. ACM Comput. Surv. 2024, 56. [CrossRef]

- Gupta, M.; Agrawal, P. Compression of Deep Learning Models for Text: A Survey. ACM Trans. Knowl. Discov. Data 2022, 16, 1–55. [CrossRef]

- Rietzler, A.; Stabinger, S.; Opitz, P.; Engl, S. Adapt or get left behind: Domain adaptation through BERT language model finetuning for aspect-target sentiment classification. LREC 2020 - 12th International Conference on Language Resources and Evaluation, Conference Proceedings, 2020; 4933–4941.

- Goldfarb-Tarrant, S.; Ross, B.; Lopez, A. Cross-lingual Transfer Can Worsen Bias in Sentiment Analysis. EMNLP 2023 - 2023 Conf. Empir. Methods Nat. Lang. Process. Proc. 2023; 5691–5704. [Google Scholar] [CrossRef]

- Hameed, R.; Ahmadi, S.; Daneshfar, F. Transfer Learning for Low-Resource Sentiment Analysis. arXiv 2023, arXiv:2304.04703. [Google Scholar]

- Sun, J.; Ahn, H.; Park, C.Y.; Tsvetkov, Y.; Mortensen, D.R. Cross-cultural similarity features for cross-lingual transfer learning of pragmatically motivated tasks. EACL 2021 - 16th Conf. Eur. Chapter Assoc. Comput. Linguist. Proc. Conf. 2021; 2403–2414. [Google Scholar] [CrossRef]

- Ding, K.; Liu, W.; Fang, Y.; Mao, W.; Zhao, Z.; Zhu, T.; Liu, H.; Tian, R.; Chen, Y. A Simple and Effective Method to Improve Zero-Shot Cross-Lingual Transfer Learning. Proc. - Int. Conf. Comput. Linguist. COLING, 2022; 29, 4372–4380.

- Han, S. Cross-lingual Transfer Learning for Fake News Detector in a Low-Resource Language. arXiv, 2022; arXiv:2208.12482. [Google Scholar]

- Lee, C.-H.; Lee, H.-Y. Cross-Lingual Transfer Learning for Question Answering. arXiv, 2019; arXiv:1907.06042.

- Edmiston, D.; Keung, P.; Smith, N.A. Domain Mismatch Doesn’t Always Prevent Cross-Lingual Transfer Learning. 2022 Lang. Resour. Eval. Conf. LREC 2022, 2022; 892–899.

- Zhou, H.; Chen, L.; Shi, F.; Huang, D. Learning bilingual sentiment word embeddings for cross-language sentiment classification. In Proceedings of the 53rd Annual Meeting of the Association for Computational Linguistics and the 7th International Joint Conference on Natural Language Processing (Volume 1: Long Papers), Beijing, China, 2015; pp. 430–440.

- De Mulder, W.; Bethard, S.; Moens, M.F. A survey on the application of recurrent neural networks to statistical language modeling. Comput. Speech Lang. 2015, 30, 61–98. [CrossRef]

- Ranathunga, S.; Lee, E.-S.A.; Skenduli, M.P.; Shekhar, R.; Alam, M.; Kaur, R. Neural Machine Translation for Low-Resource Languages: A Survey. ACM Comput. Surv. 2022; 1–35. [Google Scholar] [CrossRef]

- S. Manchanda and G. Grunin, “Domain Informed Neural Machine Translation: Developing Translation Services for Healthcare Enterprise,” Proc. 22nd Annu. Conf. Eur. Assoc. Mach. Transl. EAMT 2020. 2020; 255–262. Available online: https://aclanthology.org/2020.eamt-1.27.

- Acheampong, F.A.; Nunoo-Mensah, H.; Chen, W. Transformer models for text-based emotion detection: a review of BERT-based approaches. Artif. Intell. Rev. 2021, 54, 5789–5829. [CrossRef]

- Babanejad, N.; Davoudi, H.; An, A.; Papagelis, M. Affective and Contextual Embedding for Sarcasm Detection. COLING 2020 - 28th International Conference on Computational Linguistics, Proceedings of the Conference, 2020; 225–243. [Google Scholar] [CrossRef]

- Taghizadeh, N.; Faili, H. Cross-lingual transfer learning for relation extraction using Universal Dependencies. Comput. Speech Lang. 2022, 71, 101265. [CrossRef]

- Rao, R.S.; Umarekar, A.; Pais, A.R. Application of word embedding and machine learning in detecting phishing websites. Telecommun. Syst. 2022, 79, 33–45. [CrossRef]

- Unanue, I.J.; Haffari, G.; Piccardi, M. T3L: Translate-and-Test Transfer Learning for Cross-Lingual Text Classification. Trans. Assoc. Comput. Linguist. 2023, 11, 1147–1161. [CrossRef]

- Yang, Z.; Dai, Z.; Yang, Y.; Carbonell, J.; Salakhutdinov, R.; Le, Q. V. XLNet: Generalized autoregressive pretraining for language understanding. Adv. Neural Inf. Process. Syst. 2019, 32. [Google Scholar]

- Sun, Z.; Sarma, P.K.; Sethares, W.A.; Liang, Y. Learning relationships between text, audio, and video via deep canonical correlation for multimodal language analysis. AAAI 2020 - 34th AAAI Conf. Artif. Intell. 2020, 34, 8992–8999. [Google Scholar] [CrossRef]

- Khurana, S.; Dawalatabad, N.; Laurent, A.; Vicente, L.; Gimeno, P.; Mingote, V.; Glass, J. Improved Cross-Lingual Transfer Learning For Automatic Speech Translation. arXiv, 2023; arXiv:2306.00789. [Google Scholar]

- Chaturvedi, I.; Cambria, E.; Welsch, R.E.; Herrera, F. Distinguishing between facts and opinions for sentiment analysis: Survey and challenges. Inf. Fusion 2018, 44, 65–77. [Google Scholar] [CrossRef]

- Pan, S.J.; Yang, Q. A Survey on Transfer Learning. IEEE Trans. Knowl. Data Eng. 2010, 22, 1345–1359. [Google Scholar]

- Zhou, J.; Tian, J.; Wang, R.; Wu, Y.; Xiao, W.; He, L. Sentix: a sentiment-aware pre-trained model for cross-domain sentiment analysis. In Proceedings of the 28th international conference on computational linguistics, Barcelona, Spain, 8-13 December 2020; pp. 568–579. [Google Scholar]

- Bojanowski, P.; Grave, E.; Joulin, A.; Mikolov, T. Enriching Word Vectors with Subword Information. Trans. Assoc. Comput. Linguist. 2017, 5, 135–146. [Google Scholar] [CrossRef]

- Li, Q.; Shah, S. Learning stock market sentiment lexicon and sentiment-oriented word vector from stocktwits. CoNLL 2017 - 21st Conference on Computational Natural Language Learning, Proceedings, 2017; 301–310. [Google Scholar] [CrossRef]

- Song, Y.; Wang, J.; Jiang, T.; Liu, Z.; Rao, Y. Targeted Sentiment Classification with Attentional Encoder Network. Lect. Notes Comput. Sci. (including Subser. Lect. Notes Artif. Intell. Lect. Notes Bioinformatics), 2019; 11730, 93–103. [Google Scholar] [CrossRef]

- Luo, L.; Wang, Y. EmotionX-HSU: Adopting Pre-trained BERT for Emotion Classification. arXiv 2019, arXiv:1907.09669. [Google Scholar]

- Vlad, G.-A.; Tanase, M.-A.; Onose, C.; Cercel, D.-C. Sentence-Level Propaganda Detection in News Articles with Transfer Learning and BERT-BiLSTM-Capsule Model. Proceedings of the second workshop on natural language processing for internet freedom: Censorship, Disinformation, and Propaganda, 2019; 148–154. [Google Scholar] [CrossRef]

- Sun, C.; Huang, L.; Qiu, X. Utilizing BERT for aspect-based sentiment analysis via constructing auxiliary sentence. arXiv 2019, arXiv:1903.09588. [Google Scholar]

- Xu, H.; Liu, B.; Shu, L.; Yu, P.S. BERT post-training for review reading comprehension and aspect-based sentiment analysis. NAACL HLT 2019 - 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies - Proceedings of the Conference, 2019. [Google Scholar]

- Ghosal, D.; Majumder, N.; Poria, S.; Chhaya, N.; Gelbukh, A. DialogueGCN: A graph convolutional neural network for emotion recognition in conversation. arXiv, 2019. [Google Scholar] [CrossRef]

- Majumder, N.; Poria, S.; Hazarika, D.; Mihalcea, R.; Gelbukh, A.; Cambria, E. DialogueRNN: An attentive RNN for emotion detection in conversations. 33rd AAAI Conference on Artificial Intelligence, AAAI 2019, 31st Innovative Applications of Artificial Intelligence Conference, IAAI 2019 and the 9th AAAI Symposium on Educational Advances in Artificial Intelligence, EAAI 2019, 2019; vol. 33, 6818–6825. [Google Scholar] [CrossRef]

- C. Zhang, Q. Li, and D. Song, “Aspect-based sentiment classification with aspect-specific graph convolutional networks,” EMNLP-IJCNLP 2019 - 2019 Conference on Empirical Methods in Natural Language Processing and 9th International Joint Conference on Natural Language Processing, Proceedings of the Conference. pp. 4568–4578, 2019. [CrossRef]

- Y. Zhang, D. Miao, and J. Wang, “Hierarchical Attention Generative Adversarial Networks for Cross-domain Sentiment Classification,” arXiv:1903.11334, 2019. [Online]. Available: http://arxiv.org/abs/1903.11334.

- J. Devlin, M. W. Chang, K. Lee, and K. Toutanova, BERT: Pre-training of deep bidirectional transformers for language understanding, 2019, vol. 1. arXiv:1810.04805.

- X. Dong and G. de Melo, “A robust self-learning framework for cross-lingual text classification,” EMNLP-IJCNLP 2019 - 2019 Conference on Empirical Methods in Natural Language Processing and 9th International Joint Conference on Natural Language Processing, Proceedings of the Conference. 2019; pp. 6306–6310. [CrossRef]

- A. Conneau and G. Lample, “Cross-lingual language model pretraining,” Advances in Neural Information Processing Systems, 2019, vol. 32.

- A. Karimi, L. Rossi, and A. Prati, “Adversarial training for aspect-based sentiment analysis with BERT,” Proceedings - International Conference on Pattern Recognition. 2020; pp. 8797–8803. [CrossRef]

- H. Pan, Z. Lin, P. Fu, Y. Qi, and W. Wang, “Modeling intra and inter-modality incongruity for multi-modal sarcasm detection,” Findings of the Association for Computational Linguistics: EMNLP 2020. 2020, pp. 1383–1392. [CrossRef]

- X. Wang, X. Sun, T. Yang, and H. Wang, “Building a Bridge: A Method for Image-Text Sarcasm Detection Without Pretraining on Image-Text Data,” In: Proceedings of the first international workshop on natural language processing beyond text. 2020, pp. 19–29. [CrossRef]

- C. Du, H. Sun, J. Wang, Q. Qi, and J. Liao, “Adversarial and domain-aware BERT for cross-domain sentiment analysis,” Proceedings of the Annual Meeting of the Association for Computational Linguistics. 2020, pp. 4019–4028. [CrossRef]

- K. Yang, H. Xu, and K. Gao, “CM-BERT: Cross-Modal BERT for Text-Audio Sentiment Analysis,” MM 2020 - Proceedings of the 28th ACM International Conference on Multimedia. 2020, pp. 521–528. [CrossRef]

- Y. Cai, H. Cai, and X. Wan, “Multi-modal sarcasm detection in Twitter with hierarchical fusion model,” ACL 2019 - 57th Annual Meeting of the Association for Computational Linguistics, Proceedings of the Conference. 2019, pp. 2506–2515. [CrossRef]

- W. Rahman et al., “Integrating multimodal information in large pretrained transformers,” Proceedings of the Annual Meeting of the Association for Computational Linguistics, 2020, vol. 2020. pp. 2359–2369. [CrossRef]

- D. Hazarika, R. Zimmermann, and S. Poria, “MISA: Modality-Invariant and -Specific Representations for Multimodal Sentiment Analysis,” MM 2020 - Proceedings of the 28th ACM International Conference on Multimedia. 2020, pp. 1122–1131. [CrossRef]

- W. Yu et al., “CH-SIMS: A Chinese multimodal sentiment analysis dataset with fine-grained annotations of modality,” Proceedings of the Annual Meeting of the Association for Computational Linguistics. pp. 3718–3727, 2020. [CrossRef]

- P. Zhao, L. Hou, and O. Wu, “Modeling sentiment dependencies with graph convolutional networks for aspect-level sentiment classification,” Knowledge-Based Syst., vol. 193, p. 105443, 2020. [CrossRef]

- Y. Du, M. He, L. Wang, and H. Zhang, “Wasserstein based transfer network for cross-domain sentiment classification,” Knowledge-Based Syst., vol. 204, p. 106162, 2020. [CrossRef]

- T. Shi, L. Li, P. Wang, and C. K. Reddy, “A Simple and Effective Self-Supervised Contrastive Learning Framework for Aspect Detection,” 35th AAAI Conference on Artificial Intelligence, AAAI 2021, vol. 15. pp. 13815–13824, 2021. [CrossRef]

- Z. Wu and D. C. Ong, “Context-Guided BERT for Targeted Aspect-Based Sentiment Analysis,” 35th AAAI Conference on Artificial Intelligence, AAAI 2021, vol. 16. pp. 14094–14102, 2021. [CrossRef]

- B. Liang et al., “Enhancing Aspect-Based Sentiment Analysis with Supervised Contrastive Learning,” International Conference on Information and Knowledge Management, Proceedings. pp. 3242–3247, 2021. [CrossRef]

- T. Li, X. Chen, S. Zhang, Z. Dong, and K. Keutzer, “Cross-Domain Sentiment Classification with Contrastive Learning and Mutual Information Maximization,” ICASSP, IEEE International Conference on Acoustics, Speech and Signal Processing - Proceedings, vol. 2021-June. pp. 8203–8207, 2021. [CrossRef]

- A. Zhao and Y. Yu, “Knowledge-enabled BERT for aspect-based sentiment analysis,” Knowledge-Based Systems, vol. 227. p. 107220, 2021. [CrossRef]

- A. Karimi, L. Rossi, and A. Prati, Improving BERT Performance for Aspect-Based Sentiment Analysis. 2021; arXiv:2010.11731.

- H. Alhuzali and S. Ananiadou, “SpanEmo: Casting multi-label emotion classification as span-prediction,” EACL 2021 - 16th Conference of the European Chapter of the Association for Computational Linguistics, Proceedings of the Conference. pp. 1573–1584, 2021. [CrossRef]

- Y. Fu and Y. Liu, “Cross-domain sentiment classification based on key pivot and non-pivot extraction,” Knowledge-Based Systems, vol. 228. 2021. [CrossRef]

- M. Ryu, G. Lee, and K. Lee, “Knowledge distillation for BERT unsupervised domain adaptation,” Knowledge and Information Systems, vol. 64, no. 11. pp. 3113–3128, 2022. [CrossRef]

- J. Yuan, Y. Zhao, and B. Qin, “Learning to share by masking the non-shared for multi-domain sentiment classification,” International Journal of Machine Learning and Cybernetics, vol. 13, no. 9. pp. 2711–2724, 2022. [CrossRef]

- W. Li, W. Shao, S. Ji, and E. Cambria, “BiERU: Bidirectional emotional recurrent unit for conversational sentiment analysis,” Neurocomputing, vol. 467, pp. 73–82, 2022. [CrossRef]

- H. Wu, Z. Zhang, S. Shi, Q. Wu, and H. Song, “Phrase dependency relational graph attention network for Aspect-based Sentiment Analysis,” Knowledge-Based Syst., vol. 236, p. 107736, 2022. [CrossRef]

- E. Wallace, M. Gardner, and S. Singh, “Interpreting Predictions of NLP Models,” EMNLP 2020 - Conference on Empirical Methods in Natural Language Processing, Tutorial Abstracts. pp. 20–23, 2020. [CrossRef]

- A. Bhardwaj, Y. Narayan, Vanraj, Pawan, and M. Dutta, “Sentiment Analysis for Indian Stock Market Prediction Using Sensex and Nifty,” Procedia Comput. Sci., 2015, vol. 70, pp. 85–91. [CrossRef]

- M. S. Rasooli and M. Collins, “Cross-Lingual Syntactic Transfer with Limited Resources,” Trans. Assoc. Comput. Linguist., vol. 5, pp. 279–293, 2017. [CrossRef]

- Q. Chen, C. Li, and W. Li, “Modeling language discrepancy for cross-lingual sentiment analysis,” Int. Conf. Inf. Knowl. Manag. Proc., vol. Part F1318, pp. 117–126, 2017. [CrossRef]

- X. Chen, Y. Sun, B. Athiwaratkun, C. Cardie, and K. Weinberger, “Adversarial Deep Averaging Networks for Cross-Lingual Sentiment Classification,” Trans. Assoc. Comput. Linguist., vol. 6, pp. 557–570, 2018. [CrossRef]

- W. Wang, S. Feng, W. Gao, D. Wang, and Y. Zhang, “Personalized microblog sentiment classification via adversarial cross-lingual multi-task learning,” Proc. 2018 Conf. Empir. Methods Nat. Lang. Process. EMNLP 2018, pp. 338–348, 2018. [CrossRef]

- E. Çano and M. Morisio, “A deep learning architecture for sentiment analysis,” ACM International Conference Proceeding Series. pp. 122–126, 2018. [CrossRef]

- J. Barnes, R. Klinger, and S. Schulte Im Walde, “Bilingual sentiment embeddings: Joint projection of sentiment across languages,” ACL 2018 - 56th Annu. Meet. Assoc. Comput. Linguist. Proc. Conf. (Long Pap.), vol. 1, pp. 2483–2493, 2018. [CrossRef]

- X. Dong and G. De Melo, “Cross-lingual propagation for deep sentiment analysis,” 32nd AAAI Conf. Artif. Intell. AAAI 2018, pp. 5771–5778, 2018. [CrossRef]

- M. Artetxe, G. Labaka, and E. Agirre, “A robust self-learning method for fully unsupervised cross-lingual mappings of word embeddings,” ACL 2018 - 56th Annu. Meet. Assoc. Comput. Linguist. Proc. Conf. (Long Pap.), vol. 1, pp. 789–798, 2018. [CrossRef]

- Z. Chen, X. Lu, S. Shen, Q. Mei, Z. Hu, and X. Liu, “Emoji-powered representation learning for cross-lingual sentiment classification,” Web Conf. 2019 - Proc. World Wide Web Conf. WWW 2019, pp. 251–262, 2019. [CrossRef]

- A. R. Atrio, T. Badia, and J. Barnes, “On the effect of word order on cross-lingual sentiment analysis,” Proces. del Leng. Nat., vol. 63, pp. 23–30, 2019. [CrossRef]

- J. Antony, A. Bhattacharya, J. Singh, and G. R. Mamidi, “Leveraging Multilingual Resources for Language Invariant Sentiment Analysis,” Proc. 22nd Annu. Conf. Eur. Assoc. Mach. Transl. EAMT 2020, pp. 71–80, 2020.

- C. Ma and W. Xu, “Unsupervised Bilingual Sentiment Word Embeddings for Cross-lingual Sentiment Classification,” ACM Int. Conf. Proceeding Ser., pp. 180–183, 2020. [CrossRef]

- J. Shen, X. Liao, and S. Lei, “Cross-lingual sentiment analysis via AAE and BiGRU,” Proc. 2020 Asia-Pacific Conf. Image Process. Electron. Comput. IPEC 2020, pp. 237–241, 2020. [CrossRef]

- S. H. Kandula and B. Min, “Improving Cross-Lingual Sentiment Analysis via Conditional Language Adversarial Nets,” SIGTYP 2021 - 3rd Work. Res. Comput. Typology Multiling. NLP, Proc. Work., pp. 32–37, 2021. [CrossRef]

- A. Ramesh et al., “Zero-Shot Text-to-Image Generation,” in Proceedings of the 38th International Conference on Machine Learning, Jul. 2021, pp. 8821–8831. Accessed: Oct. 15, 2022. [Online]. Available: https://proceedings.mlr.press/v139/ramesh21a.html.

- A. S. Cohen et al., “Natural Language Processing and Psychosis: On the Need for Comprehensive Psychometric Evaluation,” Schizophr. Bull., vol. 48, no. 5, pp. 939–948, 2022. [CrossRef]

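- J. Blitzer, M. Dredze, and F. Pereira, “Biographies, Bollywood, Boom-boxes and Blenders: Domain adaptation for sentiment classification,” Proceedings of the 45th Annual Meeting of the Association of Computational Linguistics, 2007.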

- T. Al-Moslmi, N. Omar, S. Abdullah, and M. Albared, “Approaches to Cross-Domain Sentiment Analysis: A Systematic Literature Review,” IEEE Access, vol. 5, pp. 16173–16192, 2017. [CrossRef]

- E. Cambria, Y. Li, F. Z. Xing, S. Poria, and K. Kwok, “SenticNet 6: Ensemble Application of Symbolic and Subsymbolic AI for Sentiment Analysis,” International Conference on Information and Knowledge Management, Proceedings. pp. 105–114, 2020. [CrossRef]

- K. K. Dashtipour et al., “Towards Cross-Lingual Audio-Visual Speech Enhancement,” pp. 30–32. 2024. [CrossRef]

- M. A. Ashraf, R. M. A. Nawab, and F. Nie, “Author profiling on bi-lingual tweets,” J. Intell. Fuzzy Syst., 2020, vol. 39, no. 2, pp. 2379–2389. [CrossRef]

- R. Gupta and M. Chen, “Sentiment Analysis for Stock Price Prediction,” Proceedings - 3rd International Conference on Multimedia Information Processing and Retrieval, MIPR 2020. pp. 213–218, 2020. [CrossRef]

- I. Fleury and E. Dowdy, “Social Media Monitoring of Students for Harm and Threat Prevention: Ethical Considerations for School Psychologists,” Contemp. Sch. Psychol., 2022, vol. 26, no. 3, pp. 299–308. [CrossRef]

- S. Mokhtari, K. K. Yen, and J. Liu, “Effectiveness of Artificial Intelligence in Stock Market Prediction based on Machine Learning,” Int. J. Comput. Appl. 2021; 183, 1–8. [CrossRef]

- M. G. Sousa, K. Sakiyama, L. D. S. Rodrigues, P. H. Moraes, E. R. Fernandes, and E. T. Matsubara, “BERT for stock market sentiment analysis,” Proceedings - International Conference on Tools with Artificial Intelligence, ICTAI, vol. 2019-Novem. pp. 1597–1601, 2019. [CrossRef]

- G. Zhang et al., “EFraudCom: An E-commerce Fraud Detection System via Competitive Graph Neural Networks,” ACM Trans. Inf. Syst. 2022; 40. [CrossRef]

| Criteria Type | Inclusion Criteria | Exclusion Criteria |
|---|---|---|
| Language | Articles published in English | Articles in other languages |
| Time Frame | Published between 2017–2024 | Published before 2017 |
| Relevance | Focused on sentiment analysis using cross-lingual or multilingual techniques | Monolingual sentiment analysis only |
| Model Type | Utilized AI, machine learning, deep learning, or transformer-based approaches | Studies not involving computational models |
| Peer Review | Published in peer-reviewed journals or conferences | Preprints, whitepapers, or non-peer-reviewed sources |
| Empirical Basis | Provided experiments, datasets, or comparative analysis | Conceptual, theoretical, or opinion-only papers |
| Accessibility | Full-text accessible for detailed review | Abstract-only access or unavailable full-text |
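
The criteria in the table above amount to a conjunction of simple per-paper checks. As a minimal sketch only (the `Study` record, its field names, and the helper functions are hypothetical illustrations, not part of the review protocol described in this paper), the screening step could be expressed as:

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Study:
    """Hypothetical record for one candidate paper; field names are illustrative."""
    title: str
    language: str
    year: int
    cross_lingual: bool             # cross-lingual or multilingual sentiment analysis
    uses_computational_model: bool  # AI / ML / deep learning / transformer-based
    peer_reviewed: bool
    has_empirical_results: bool     # experiments, datasets, or comparative analysis
    full_text_available: bool


def failed_criteria(study: Study) -> List[str]:
    """Return the names of the table rows whose inclusion criterion is not met."""
    checks = {
        "Language": study.language.lower() == "english",
        "Time Frame": 2017 <= study.year <= 2024,
        "Relevance": study.cross_lingual,
        "Model Type": study.uses_computational_model,
        "Peer Review": study.peer_reviewed,
        "Empirical Basis": study.has_empirical_results,
        "Accessibility": study.full_text_available,
    }
    return [name for name, passed in checks.items() if not passed]


def is_included(study: Study) -> bool:
    """A study is retained only if every inclusion criterion holds."""
    return not failed_criteria(study)


# Toy candidates (invented for illustration only).
kept = Study("Example A", "English", 2020, True, True, True, True, True)
dropped = Study("Example B", "English", 2015, True, True, False, True, True)

print(is_included(kept))
print(failed_criteria(dropped))
```

Returning the list of failed rows, rather than a bare boolean, makes it easy to log why a paper was excluded during screening.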

| Publication | Evaluation Metric | Dataset | Result |
|---|---|---|---|
| [55] | BLEU | Stanford NLP Group | Achieve higher results |
| [56] | BLEU-1, BLEU-2, BLEU-3, BLEU-4 | SQuAD | BLEU-4 shows more accurate results |
| [57] | BLEU | English to German translation dataset | Improvement of 0.7 |
| [58] | ROUGE | DUC-05, DUC-06 and DUC-07 | Achieve high results |
| [59] | GLUE | GLUE benchmark, SQuAD, RACE | GLUE outperforms |
| [27] | GLUE | GLUE, SQuAD | Achieve higher accuracy on both benchmarks |
| [60] | GLUE | GLUE | 5.0x accuracy |
| [61] | GLUE | RussianGLUE, SuperGLUE | Achieve high results |
| [62] | BLEU, ROUGE | A large dataset in ABC notation | Both models achieve the best results |
| [63] | BLEU | Benchmark datasets | 36.49/33.57 |
| [64] | ROUGE-1, ROUGE-2, ROUGE-L | CNN/Daily Mail News Dataset | 41.72, 19.39, 38.76 |
| [65] | ROUGE-1, ROUGE-L | CNN/Daily Mail dataset, wikiHow dataset, How2Dataset | 22.5, 20 |
| [66] | Accuracy, Precision, Recall, F1-score | Internet movie database | 92.31% |
| [67] | ROUGE-1, ROUGE-2, ROUGE-L | CNN/Daily Mail news highlights | 42.98, 20.12, 39.40 |
| [68] | Accuracy, Precision, Recall, F1-score | 2595 songs uniformly distributed | 94.78%, 94.77%, 94.75%, 94.77% |
| [22] | Accuracy, Recall, F1-score | Stock reviews in Eastern Stock Exchange | 97.35%, 98.43%, 98.69% |
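
Several rows above report accuracy, precision, recall, and F1-score. For reference, the following is a minimal sketch of how these four metrics are conventionally computed in the binary case. The function name and the toy labels are illustrative assumptions; the cited studies may use multi-class or averaged variants that are not reproduced here.

```python
from typing import Dict, Sequence


def classification_metrics(
    y_true: Sequence[int], y_pred: Sequence[int], positive: int = 1
) -> Dict[str, float]:
    """Compute accuracy, precision, recall and F1 for one positive class."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")

    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)

    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total if total else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    # F1 is the harmonic mean of precision and recall.
    f1 = (
        2 * precision * recall / (precision + recall)
        if (precision + recall)
        else 0.0
    )
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Toy labels (invented): 1 = positive sentiment, 0 = negative sentiment.
y_true = [1, 1, 1, 0, 0, 0, 1, 0]
y_pred = [1, 1, 0, 0, 0, 1, 1, 0]
metrics = classification_metrics(y_true, y_pred)
print(metrics)
```

Because accuracy can look strong on imbalanced sentiment data even when one class is poorly predicted, reporting precision, recall, and F1 alongside it, as several of the cited studies do, gives a fuller picture.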

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).