Submitted: 22 July 2025

Posted: 23 July 2025


Abstract

Keywords:

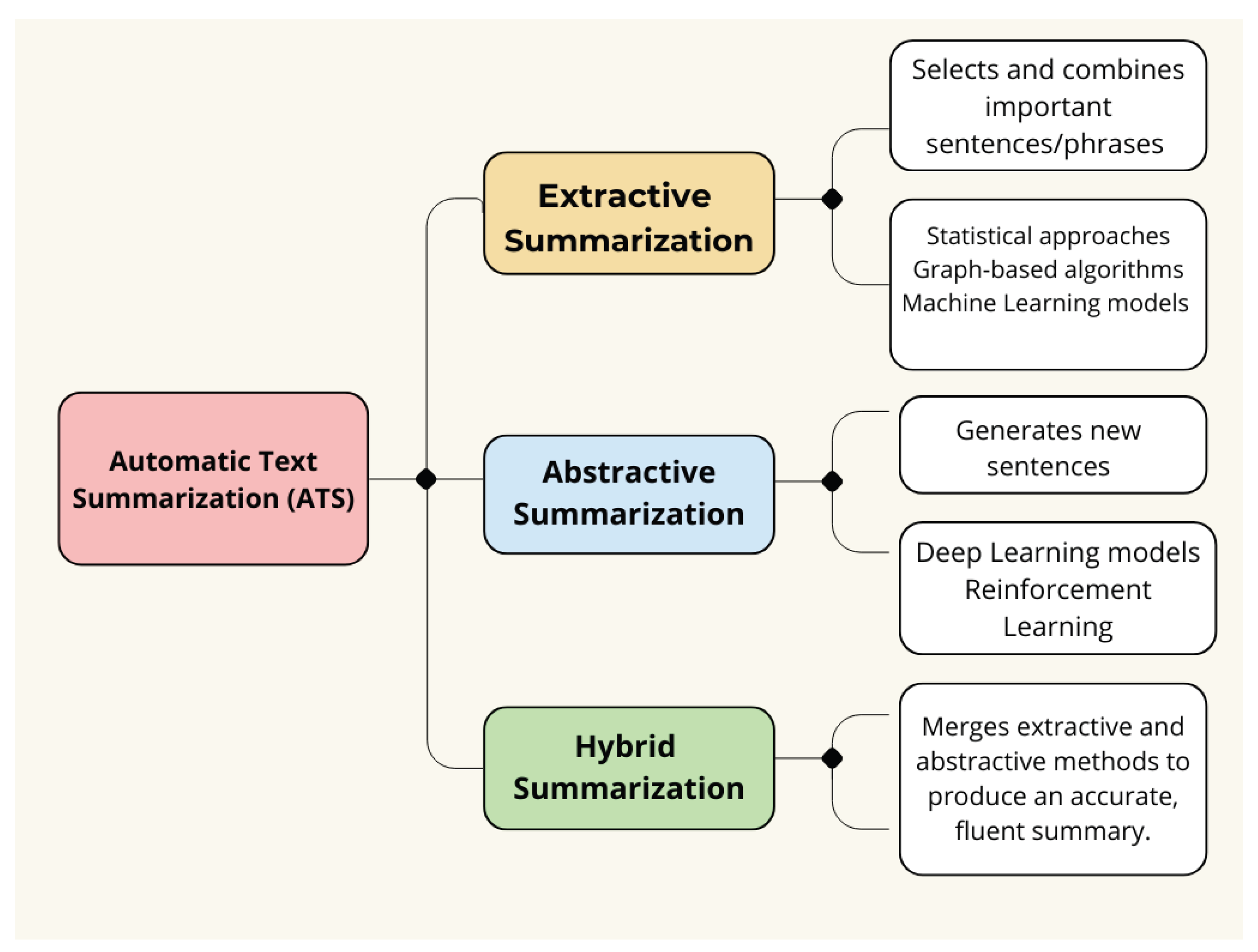

1. Introduction

- We establish the first multi-dataset evaluation framework for Urdu abstractive summarization, combining UrSum news, Fake News articles, and Urdu-Instruct headlines to enable robust cross-domain assessment.

- A novel text normalization pipeline addresses Urdu’s orthographic challenges through Unicode standardization and diacritic filtering, resolving critical issues in script variations that impair NLP performance.

- Our right-to-left (RTL) optimized architecture introduces direction-aware tokenization and embeddings that preserve Urdu’s native reading order in transformer models, an adaptation that matters for RTL languages in general.

- Comprehensive benchmarking reveals fine-tuned monolingual transformers (BERT-Urdu, BART-Urdu) outperform multilingual models (mT5) and classical approaches by 12-18% in ROUGE scores.

- The proposed hybrid training framework combines cross-entropy with ROUGE-based reinforcement, jointly improving summary quality while maintaining linguistic coherence.

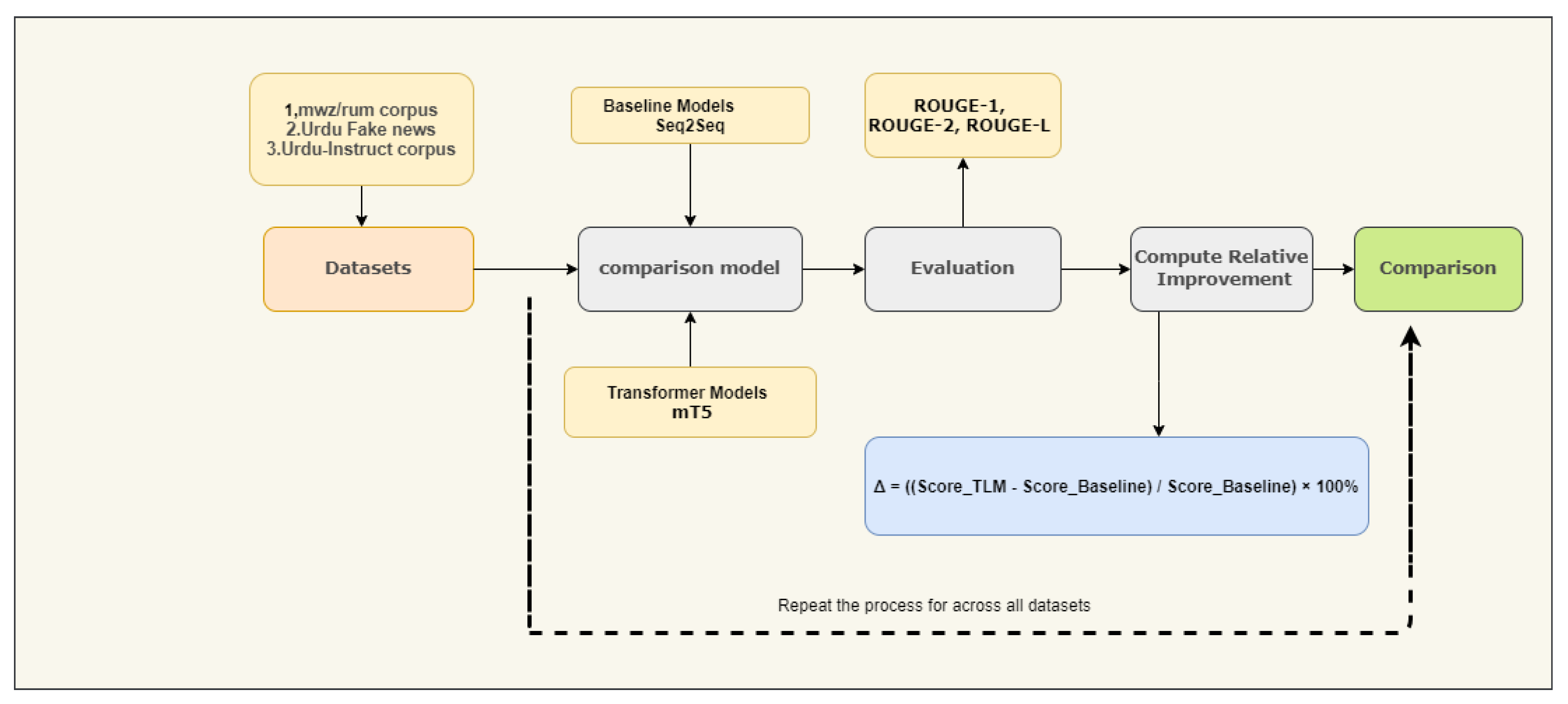

- We demonstrate that relative improvement metrics over Seq2Seq baselines provide more reliable cross-dataset comparisons than absolute scores in low-resource settings.

- Diagnostic analyses quantify the cumulative impact of Urdu-specific adaptations, offering practical guidelines for low-resource language NLP development.

2. Related Work

| Authors & Year | Language | Dataset | Challenges | Techniques | Evaluation Metrics | Advantage | Disadvantage |
|---|---|---|---|---|---|---|---|
| Shafiq et al. (2023) [42] | Urdu | Urdu 1 Million News Dataset | Limited research on abstractive summarization | Deep learning; extractive and abstractive summarization | ROUGE-1: 27.34, ROUGE-2: 7.10, ROUGE-L: 25.50 | Better performance than SVM and LR | Requires further research for effective summarization |
| Awais et al. (2024) [43] | Urdu | 2,067,784 Urdu articles and news items | Limited exploration of Urdu summarization | Abstractive: LSTM, GRU, Bi-LSTM, Bi-GRU, LSTM with attention, GRU with attention, GPT-3.5, BART | ROUGE-1: 46.7, ROUGE-2: 24.1 | Strong performance (ROUGE-L: 48.7); GRU with attention outperforms the other models in the study | Requires further investigation, especially for Urdu literature |
| Raza et al. (2024) [44] | Urdu | Labeled dataset of Urdu texts and summaries (Abstractive Urdu Text Summarization) | Limited research on Urdu abstractive summarization | Abstractive; supervised learning; Transformer encoder-decoder | ROUGE-1: 25.18; context-aware RoBERTa score | Introduces a novel evaluation metric; focuses on abstractive summarization | Needs larger datasets for broader applicability |
| A. Faheem et al. (2024) [46] | Urdu | UrduMASD | Low-resource language | Abstractive; multimodal; mT5, MLASK | ROUGE, BLEU | First comprehensive multimodal dataset designed specifically for Urdu | Requires specialized pretraining tailored to Urdu |
| M. Munaf et al. (2023) [47] | Urdu | 76.5k article-summary pairs | Low-resource language | Abstractive; Transformer, mT5, urT5 | ROUGE, BERTScore | Effective for low-resource summarization | Findings may not generalize beyond Urdu |
| Ali Raza et al. (2023) [48] | Urdu | Publicly available dataset | Comprehension of source text, grammar, semantics | Abstractive; Transformer-based encoder-decoder, beam search | ROUGE-1, ROUGE-2, ROUGE-L | High ROUGE scores; grammatically correct summaries | ROUGE-L score leaves room for improvement |
| Asif Raza et al. (2024) [45] | Urdu | Self-collected dataset of 50 articles | Limited research on Urdu abstractive summarization | Extractive methods (TF-IDF, sentence weight, word frequency), hybrid approach, BERT | Evaluated by Urdu professionals | Hybrid approach refines extractive summaries; potential for human-like summaries | Limited to single-document summarization; requires more vocabulary and synonyms |

Algorithm 1: Comprehensive Urdu Abstractive Text Summarization (ATS) Evaluation

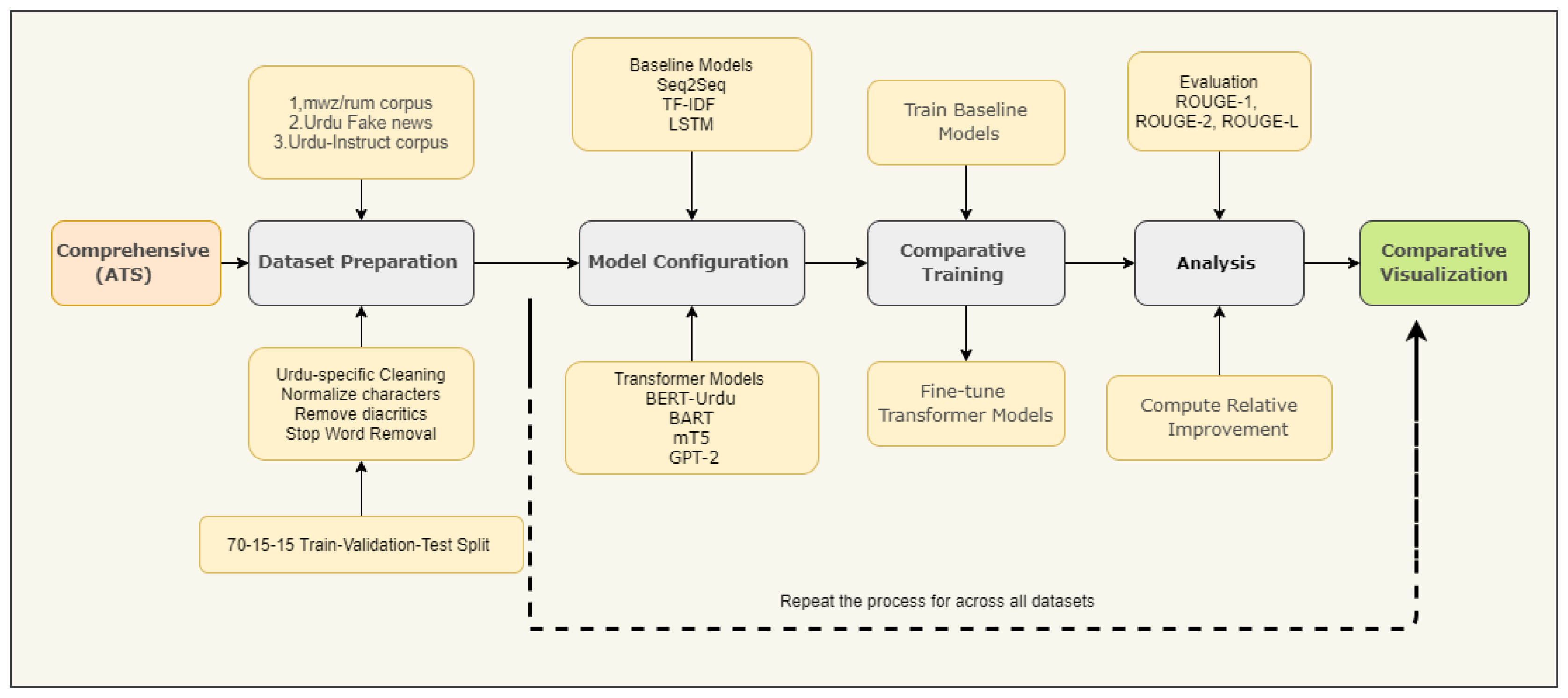

3. Materials and Methods

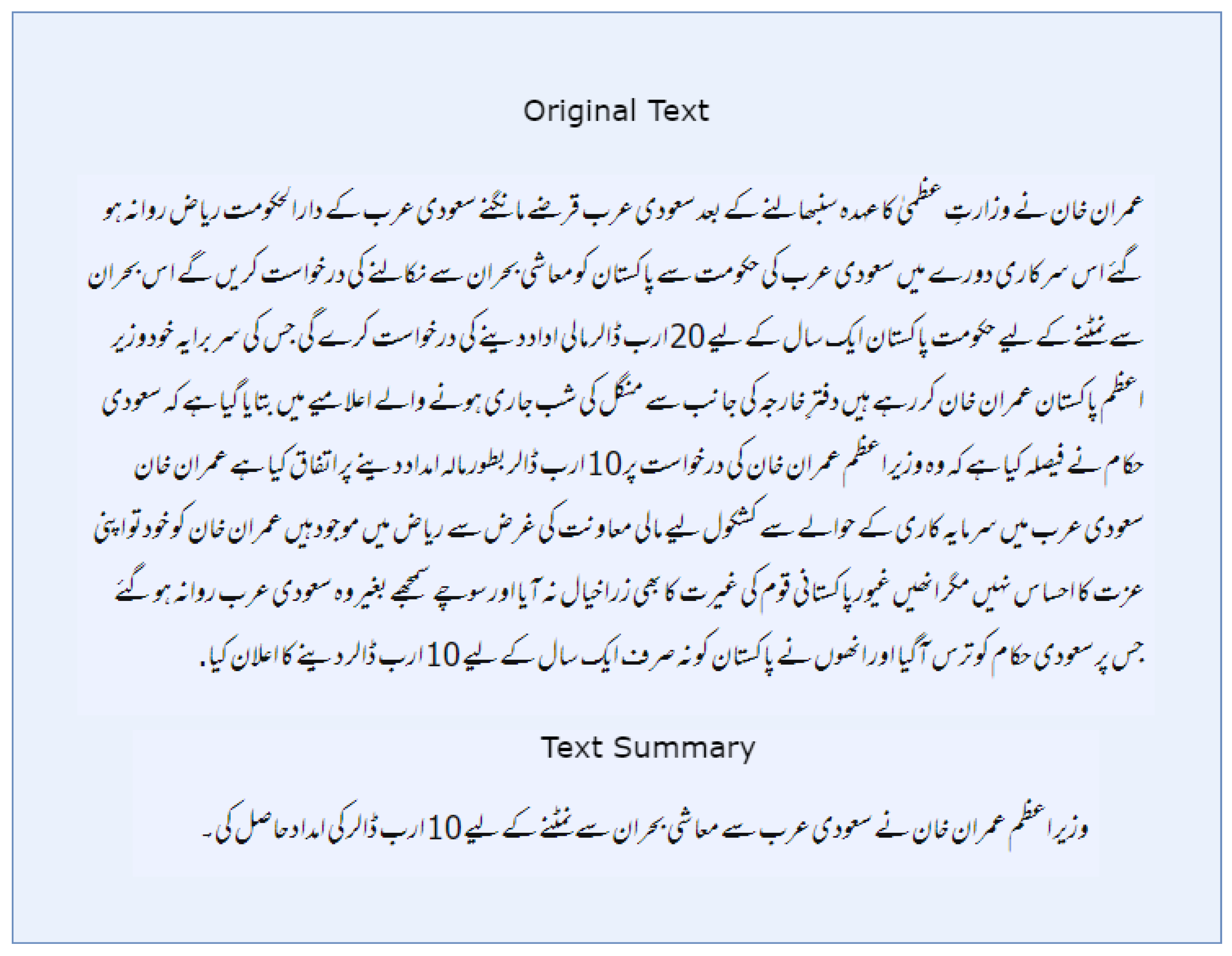

3.1. Preprocessing for Urdu Text Summarization

3.1.1. Unicode Normalization
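A minimal sketch of the Unicode standardization step named in the Introduction, assuming Python's standard `unicodedata` module: text is first put in NFC form, and Arabic code points that look like Urdu letters are then mapped onto their Urdu counterparts. The function name `normalize_urdu` and the three-entry mapping table are illustrative assumptions, not the authors' pipeline; libraries such as UrduHack ship a fuller set of normalization rules.

```python
import unicodedata

# Illustrative (assumed) mapping from Arabic code points to the Urdu letters
# that usually replace them; a production table would be larger.
ARABIC_TO_URDU = str.maketrans({
    "\u064A": "\u06CC",  # ARABIC LETTER YEH -> FARSI YEH (Urdu choti ye)
    "\u0643": "\u06A9",  # ARABIC LETTER KAF -> KEHEH (Urdu kaf)
    "\u0647": "\u06C1",  # ARABIC LETTER HEH -> HEH GOAL (Urdu gol he)
})


def normalize_urdu(text: str) -> str:
    """Compose characters, unify Arabic/Urdu letter variants, tidy whitespace."""
    # Compose first so sequences such as alef + madda (U+0627 U+0653) become
    # U+0622 and yeh + hamza becomes U+0626 before any letter is remapped.
    text = unicodedata.normalize("NFC", text)
    text = text.translate(ARABIC_TO_URDU)
    # Re-compose in case the remapping produced a decomposable sequence.
    text = unicodedata.normalize("NFC", text)
    # Collapse runs of spaces, tabs and newlines into single spaces.
    return " ".join(text.split())
```

Composing before remapping matters: mapping Arabic yeh first would turn a decomposed yeh + hamza into a sequence that no longer composes to U+0626.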

3.1.2. Diacritic Filtering
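Diacritic filtering can be sketched as a single regular-expression pass. The set of marks removed is an assumption: the pattern below drops the short-vowel marks U+064B to U+0652 (fathatan through sukun) and the superscript alef U+0670. It keeps hamza above (U+0654) and full letters such as noon ghunna (U+06BA), which the Discussion identifies as script features that need careful handling. The helper name is hypothetical.

```python
import re

# Assumed diacritic set: tanween, short vowels, shadda, sukun, superscript alef.
DIACRITICS = re.compile("[\u064B-\u0652\u0670]")


def strip_diacritics(text: str) -> str:
    """Remove optional vowel marks while leaving base letters untouched."""
    return DIACRITICS.sub("", text)
```

Applied after NFC normalization, composed letters such as U+0626 (yeh with hamza) are single code points and are unaffected by the filter.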

3.1.3. Chunk Construction (512-Token Limit)
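One common way to respect a 512-token input limit, assumed here rather than taken from the paper, is to split the token-ID sequence of a long article into overlapping windows. The helper `chunk_token_ids` and its `stride` overlap are hypothetical; in practice a few positions of the 512-token budget must also be reserved for special tokens such as `[CLS]` or `</s>`.

```python
from typing import List


def chunk_token_ids(ids: List[int], max_len: int = 512,
                    stride: int = 64) -> List[List[int]]:
    """Split token IDs into windows of at most max_len tokens.

    Consecutive windows share `stride` tokens, so text near a boundary
    appears with context in both neighbouring chunks.
    """
    if stride < 0 or max_len <= stride:
        raise ValueError("require 0 <= stride < max_len")
    if len(ids) <= max_len:
        return [list(ids)]
    step = max_len - stride
    chunks = []
    for start in range(0, len(ids), step):
        chunks.append(list(ids[start:start + max_len]))
        if start + max_len >= len(ids):
            break  # this window already reaches the end of the sequence
    return chunks
```

Hugging Face fast tokenizers offer comparable behavior through the `stride` and `return_overflowing_tokens` arguments of the tokenizer call.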

3.1.4. RTL-Aware Chunking
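One plausible reading of RTL-aware chunking, stated here as an assumption: Urdu text is stored in logical (reading) order, so the right-to-left direction only affects display, and chunk boundaries can be placed at Urdu sentence terminators (۔ U+06D4, ؟ U+061F) instead of at arbitrary token positions. The sketch greedily packs whole sentences into chunks under a token budget; `pack_sentences` and its whitespace token counter are hypothetical stand-ins for a real subword tokenizer.

```python
import re
from typing import Callable, List

# Split after the Urdu full stop (U+06D4), Arabic question mark (U+061F) or "!".
SENTENCE_END = re.compile(r"(?<=[\u06D4\u061F!])\s+")


def pack_sentences(text: str, max_tokens: int,
                   count_tokens: Callable[[str], int] = lambda s: len(s.split())
                   ) -> List[str]:
    """Greedily pack whole sentences, in logical order, into chunks.

    A single sentence longer than max_tokens is emitted on its own and
    would still need token-level windowing.
    """
    sentences = [s for s in SENTENCE_END.split(text.strip()) if s]
    chunks, current, used = [], [], 0
    for sentence in sentences:
        n = count_tokens(sentence)
        if current and used + n > max_tokens:
            chunks.append(" ".join(current))  # close the current chunk
            current, used = [], 0
        current.append(sentence)
        used += n
    if current:
        chunks.append(" ".join(current))
    return chunks
```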

3.1.5. Stratified Splitting
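Stratified splitting can be sketched in plain Python. The stratification label is an assumption (for example, the source dataset or the news domain of each article); each stratum is shuffled with a fixed seed and divided with the same train/validation/test ratios, so every split preserves the label proportions. The 80/10/10 ratios and the helper name are hypothetical.

```python
import random
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple


def stratified_split(items: Sequence, labels: Sequence[str],
                     ratios: Tuple[float, float, float] = (0.8, 0.1, 0.1),
                     seed: int = 42) -> Dict[str, List]:
    """Split items into train/validation/test, keeping label proportions."""
    if abs(sum(ratios) - 1.0) > 1e-9:
        raise ValueError("ratios must sum to 1")
    by_label = defaultdict(list)
    for item, label in zip(items, labels):
        by_label[label].append(item)
    rng = random.Random(seed)  # fixed seed for a reproducible split
    splits: Dict[str, List] = {"train": [], "validation": [], "test": []}
    for label in sorted(by_label):
        group = by_label[label]
        rng.shuffle(group)
        n_train = int(len(group) * ratios[0])
        n_val = int(len(group) * ratios[1])
        splits["train"].extend(group[:n_train])
        splits["validation"].extend(group[n_train:n_train + n_val])
        splits["test"].extend(group[n_train + n_val:])  # remainder goes to test
    return splits
```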

3.2. Urdu ATS Datasets

3.2.1. Urdu Summarization (mwz/ursum)

3.2.2. Urdu Fake News

3.2.3. AhmadMustafa/Urdu-Instruct-News-Article-Generation

3.2.4. Model Configuration

3.2.5. Urdu Tokenizers

3.2.6. RTL Embeddings

3.2.7. Length Constraints

3.2.8. Model Selection (Transformer and Baseline)

- BERT: BERT is based on the encoder of the original Transformer architecture. Its bidirectional attention mechanism represents each word using both the words that precede it and the words that follow it. BERT consists of stacked Transformer encoder layers, each with multi-head self-attention and a feedforward network. This bidirectional design lets BERT use a target word’s left and right context within a sentence to build a deeper understanding of the text. BERT is pre-trained with Masked Language Modeling (MLM) and Next Sentence Prediction (NSP). MLM randomly masks some of the tokens in a sentence and trains the model to predict them, which teaches it to use context. NSP trains the model to judge whether two sentences are consecutive, which helps in tasks such as question answering [24]. BERT’s encoder-only design is well suited to natural language understanding tasks such as named entity recognition and text classification.

- GPT-2: GPT-2 is built from the decoder portion of the Transformer architecture, which makes it a generative model that produces coherent text continuing a prompt. Whereas BERT uses bidirectional attention, GPT-2 uses unidirectional (causal) attention: each token can attend only to the tokens before it. This makes it well suited to autoregressive tasks, in which the model generates one token at a time conditioned on the tokens generated so far. GPT-2 stacks multiple decoder layers, each with masked self-attention and a feedforward network; by attending to previous tokens, these layers predict the next token in the sequence, allowing GPT-2 to generate human-like text efficiently. It is pre-trained with Causal Language Modeling (CLM), that is, next-word prediction. GPT-2 supports a context window of 1,024 tokens and is optimized for text generation tasks such as story writing, dialogue generation, and text completion [26].

- mT5: mT5 is the multilingual variant of T5, a transfer-learning model with both an encoder and a decoder, pre-trained on the mC4 corpus covering 101 languages, including Urdu. Its text-to-text framework casts NLP tasks such as classification, translation, summarization, and question answering as text generation. The encoder processes the input bidirectionally to capture context, while the decoder generates tokens autoregressively. T5 is pre-trained with a "span corruption" objective, in which spans of the input are masked and the model learns to reconstruct the omitted spans; this makes it applicable to a broad range of NLP tasks [27].

- BART: BART combines an encoder and a decoder, bringing together the strengths of BERT and GPT-2. Like BERT, its encoder is bidirectional, attending to both past and future tokens, which lets it capture the full context of the input text. Like GPT-2, its decoder is autoregressive (AR) and generates text from left to right, one token at a time. BART is pre-trained as a denoising autoencoder: the input sequence is corrupted (for instance, by shuffling or masking tokens) and the model learns to reconstruct the original text. Because the model fully encodes the input and produces fluent output, this training scheme enables BART to generate accurate and concise summaries. The combination of bidirectional understanding (in the encoder) and AR generation (in the decoder) makes BART versatile across text generation, machine translation, and summarization [25].


- Encoder-Decoder Architectures: BART and mT5 are configured as encoder-decoder networks, while BERT-Urdu (a bidirectional encoder) is adapted using its [CLS] representation with a lightweight decoder head. GPT-2 (a left-to-right decoder-only model) is fine-tuned by prefixing input articles with a special token and having it autoregressively generate the summary. In all cases, we experiment with beam search decoding and a tuned maximum output length based on average summary length.


- Decoder Length Tuning: We set the maximum decoder output length to cover typical Urdu summary sizes. Preliminary dataset analysis shows that summaries average 50–100 tokens, so decoder output is capped accordingly (for example, at 128 tokens for the baseline models). This prevents over-generation while allowing sufficient length. We also apply a length penalty and early stopping in beam search to discourage excessively short or repetitive outputs.

- Baseline Models: To establish a meaningful benchmark for assessing the effectiveness of transformer-based summarization models, we developed three traditional baseline models, each exemplifying a distinct category of summarization strategy: extractive, basic neural abstractive, and enhanced neural abstractive with memory features. These baselines are particularly informative for low-resource languages such as Urdu, where the lack of data may limit the effectiveness of large-scale pretrained models.

- TF-IDF Extractive Model: A non-neural approach to extractive summarization, the Term Frequency-Inverse Document Frequency (TF-IDF) model selects the most relevant sentences from the source text according to word significance. TF-IDF assigns higher scores to words that appear frequently in a particular document but rarely in the wider corpus. Sentences are ranked by the sum of their constituent words’ TF-IDF scores, and the highest-ranked sentences form the summary. Although it does not generate new sentences, TF-IDF serves as a strong lexical-matching benchmark. Its computational efficiency and language independence make it a useful control model for summarization tasks, particularly when measuring the performance gains brought by more complex neural architectures.

- Seq2Seq Model: The Seq2Seq (sequence-to-sequence) model is a fundamental neural architecture for abstractive summarization. It uses single-layer recurrent neural networks (RNNs) as the encoder and the decoder. The encoder reads the input Urdu text and encodes it into a fixed-size context vector, from which the decoder generates the summary one token at a time. To improve focus and relevance, we add Bahdanau attention, which lets the decoder attend to different segments of the input sequence at each generation step. This design captures relationships within sequences and allows the model to rephrase or reorder content, a key element of abstractive summarization. However, the basic Seq2Seq model struggles with long-range dependencies, so we use it as a lower-bound benchmark in our experiments.

- LSTM-Based Encoder–Decoder Model: To overcome the limitations of conventional RNNs, we developed a Bidirectional Long Short-Term Memory (Bi-LSTM) encoder paired with a unidirectional LSTM decoder. LSTM units are designed to mitigate the vanishing gradient problem, allowing better retention of long-term dependencies and contextual details. The bidirectional encoder processes the Urdu input text in both the forward and backward directions, capturing context from both sides of the sequence. The decoder then generates the summary using Bahdanau attention, which lets it focus selectively on different parts of the input text at each time step. This model balances computational efficiency and effectiveness, providing a more expressive baseline that can produce coherent and partly abstractive summaries while remaining trainable with modest computational resources.
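The TF-IDF baseline described above can be sketched in a few lines of Python. Two details are assumptions rather than the authors' exact setup: IDF is computed over the sentences of the input article (each sentence treated as a document), and term frequency is a word's count in the whole article. Each sentence is scored by summing the TF-IDF weights of its distinct words, the top `k` sentences are kept, and they are returned in their original order. The function name is hypothetical.

```python
import math
import re
from collections import Counter
from typing import List

# Sentence boundary: whitespace after "۔" (U+06D4), "؟" (U+061F) or "!".
SENTENCE_END = re.compile(r"(?<=[\u06D4\u061F!])\s+")


def tfidf_summary(text: str, k: int = 3) -> str:
    """Return the k highest-scoring sentences in their original order."""
    sentences = [s for s in SENTENCE_END.split(text.strip()) if s]
    # \w+ matches Urdu letters in Python 3; punctuation such as "۔" is dropped.
    tokens: List[List[str]] = [re.findall(r"\w+", s) for s in sentences]
    n = len(sentences)
    doc_tf = Counter(w for toks in tokens for w in toks)    # frequency in the article
    df = Counter(w for toks in tokens for w in set(toks))   # sentences containing word
    idf = {w: math.log(n / df[w]) for w in df}              # 0 if word is in every sentence
    scores = [sum(doc_tf[w] * idf[w] for w in set(toks)) for toks in tokens]
    # Rank by score (ties broken by position), then restore document order.
    top = sorted(sorted(range(n), key=lambda i: (-scores[i], i))[:k])
    return " ".join(sentences[i] for i in top)
```

Because the score is a plain sum, longer sentences are favored; dividing each score by the sentence length is a common variant.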

3.3. Training Configuration

Transformer Models (TLMs)

- Batch size: 16

- Learning rate:

- Maximum decoder length: Dynamically computed as the average target summary length ±15%

- Epochs: Up to 50, with early stopping

Baseline Models

- Batch size: 64

- Learning rate:

- Maximum decoder length: Fixed at 128 tokens

- Epochs: Up to 30, with early stopping
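The dynamic decoder length (average target summary length ±15%) and the beam-search controls mentioned under Decoder Length Tuning can be bundled into one dictionary of decoding settings. The sketch assumes a Hugging Face `generate` call as the consumer; `num_beams`, `min_length`, `max_length`, `length_penalty`, `early_stopping`, and `no_repeat_ngram_size` are standard `generate` arguments, but the values used here (4 beams, penalty 1.0, trigram blocking) and the helper name are illustrative, not the authors' settings.

```python
from statistics import mean
from typing import Dict, List, Union


def generation_kwargs(summary_lengths: List[int], margin: float = 0.15,
                      num_beams: int = 4, length_penalty: float = 1.0,
                      no_repeat_ngram_size: int = 3
                      ) -> Dict[str, Union[int, float, bool]]:
    """Build beam-search settings from the average reference summary length."""
    avg = mean(summary_lengths)
    return {
        # Average target length -15% / +15%, rounded to whole tokens.
        "min_length": max(1, round(avg * (1 - margin))),
        "max_length": round(avg * (1 + margin)),
        "num_beams": num_beams,
        "length_penalty": length_penalty,  # larger values favour longer beams
        "early_stopping": True,            # stop once num_beams hypotheses finish
        "no_repeat_ngram_size": no_repeat_ngram_size,  # block repeated n-grams
    }
```

With a fine-tuned model, the result would be passed as `model.generate(**inputs, **kwargs)`; for the baseline models the maximum length is instead fixed at 128 tokens.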

3.3.1. ROUGE-Augmented Loss
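The Introduction describes this objective as cross-entropy combined with ROUGE-based reinforcement, and the reference list includes Paulus et al. (2018). One plausible formulation, stated as an assumption rather than the authors' exact loss, is the self-critical mixed objective of Paulus et al.: a summary $y^{s}$ sampled from the model is rewarded relative to the greedy decode $\hat{y}$, with ROUGE-L as the reward $r(\cdot)$, $y^{*}$ the reference summary, $x$ the source article, and $\gamma \in [0, 1]$ a mixing weight whose value is not specified here.

$$
\begin{aligned}
\mathcal{L}_{\text{ml}} &= -\sum_{t=1}^{n^{*}} \log p\left(y^{*}_{t} \mid y^{*}_{1}, \ldots, y^{*}_{t-1}, x\right) \\
\mathcal{L}_{\text{rl}} &= \left(r(\hat{y}) - r(y^{s})\right) \sum_{t=1}^{n^{s}} \log p\left(y^{s}_{t} \mid y^{s}_{1}, \ldots, y^{s}_{t-1}, x\right) \\
\mathcal{L}_{\text{mixed}} &= \gamma\, \mathcal{L}_{\text{rl}} + (1 - \gamma)\, \mathcal{L}_{\text{ml}}
\end{aligned}
$$

Minimizing $\mathcal{L}_{\text{rl}}$ raises the likelihood of sampled summaries whose ROUGE-L exceeds that of the greedy output and lowers it otherwise, while $\mathcal{L}_{\text{ml}}$ keeps the model close to the reference wording and preserves fluency.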

3.3.2. Hyperparameter Tuning

- Hardware: Experiments were run on NVIDIA GPUs (for example, Tesla V100) with approximately 16–32 GB of GPU memory. Training time varied by model: around 1–2 hours for the LSTM and several hours for each Transformer.

- Implementation: The RNN baselines are implemented in PyTorch, and the Transformer models use the Hugging Face transformers library. We monitor validation ROUGE to select the best checkpoint.
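Checkpoint selection on validation ROUGE, together with the early stopping listed in the training configuration, can be sketched as a small tracker class. The class name, the patience of 3 epochs, and the `min_delta` threshold are illustrative assumptions, not values taken from the paper.

```python
class EarlyStopping:
    """Track validation ROUGE per epoch and signal when to stop (hypothetical helper)."""

    def __init__(self, patience: int = 3, min_delta: float = 0.0):
        self.patience = patience      # epochs without improvement tolerated
        self.min_delta = min_delta    # minimum gain that counts as improvement
        self.best = float("-inf")
        self.best_epoch = -1
        self.bad_epochs = 0

    def step(self, epoch: int, val_rouge: float) -> bool:
        """Record one epoch's score; return True when training should stop."""
        if val_rouge > self.best + self.min_delta:
            # New best score: this is the checkpoint that would be kept.
            self.best, self.best_epoch, self.bad_epochs = val_rouge, epoch, 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience
```

In a loop capped at 50 (Transformer) or 30 (baseline) epochs, `step` would be called after each validation pass, and the checkpoint saved at `best_epoch` would be used for testing.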

3.3.3. Evaluation Metrics

Algorithm 2: Evaluate Summarization Models

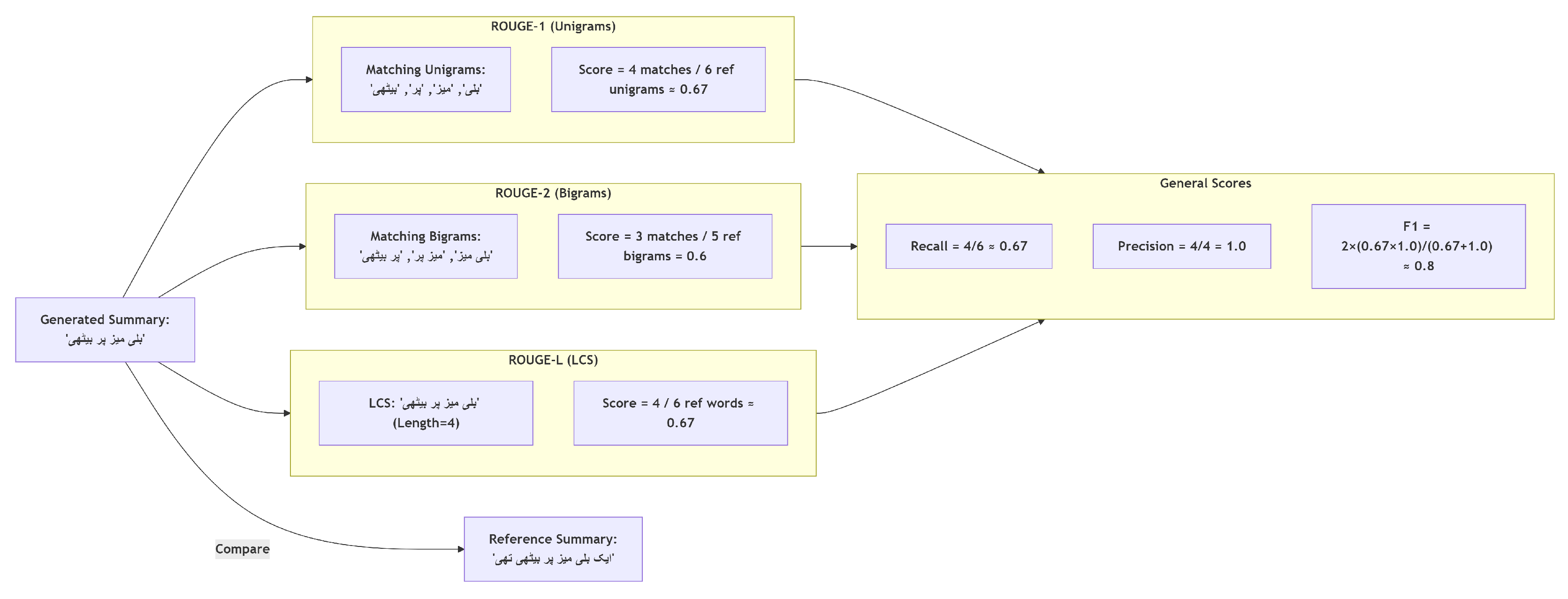

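1. ROUGE-1 (Unigram Overlap)

$$\text{ROUGE-1} = \frac{\sum_{S \in \{\text{Ref}\}} \sum_{\text{gram}_1 \in S} \text{Count}_{\text{match}}(\text{gram}_1)}{\sum_{S \in \{\text{Ref}\}} \sum_{\text{gram}_1 \in S} \text{Count}(\text{gram}_1)} \tag{2}$$

- $\text{Count}_{\text{match}}(\text{gram}_1)$: the number of unigrams (words) shared by the generated summary and the reference summaries (numerator of Equation 2).

- $\text{Count}(\text{gram}_1)$: the total number of unigrams (individual items of a sequence) in the reference summary.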

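2. ROUGE-2 (Bigram Overlap)

$$\text{ROUGE-2} = \frac{\sum_{S \in \{\text{Ref}\}} \sum_{\text{gram}_2 \in S} \text{Count}_{\text{match}}(\text{gram}_2)}{\sum_{S \in \{\text{Ref}\}} \sum_{\text{gram}_2 \in S} \text{Count}(\text{gram}_2)}$$

- $\text{Count}_{\text{match}}(\text{gram}_2)$: the number of bigrams shared by the generated summary and the reference summaries.

- $\text{Count}(\text{gram}_2)$: the total number of bigrams in the reference summary.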

3. ROUGE-L (Longest Common Subsequence)

$$\text{ROUGE-L} = \frac{\text{LCS}(X, Y)}{m}$$

- $\text{LCS}(X, Y)$: the length of the longest common subsequence between the reference summary $X$ and the generated summary $Y$.

- $m$: the total number of words in the reference summary $X$.
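The recall-oriented definitions of ROUGE-1, ROUGE-2, and ROUGE-L translate directly into code. The sketch computes clipped n-gram overlap and a dynamic-programming LCS and returns precision, recall, and F1 (recall matches the definitions above; the results tables report F1). Whitespace tokenization is an assumption; to our knowledge, the default tokenizer of the widely used `rouge-score` package keeps only Latin letters and digits, so Urdu evaluation needs a script-aware tokenizer. All function names are hypothetical.

```python
from collections import Counter
from typing import List, Sequence, Tuple

Scores = Tuple[float, float, float]  # (precision, recall, F1)


def _ngrams(tokens: List[str], n: int) -> Counter:
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def _prf(overlap: int, cand_total: int, ref_total: int) -> Scores:
    p = overlap / cand_total if cand_total else 0.0
    r = overlap / ref_total if ref_total else 0.0
    f = 2 * p * r / (p + r) if p + r else 0.0
    return p, r, f


def rouge_n(candidate: str, reference: str, n: int = 1) -> Scores:
    cand, ref = _ngrams(candidate.split(), n), _ngrams(reference.split(), n)
    overlap = sum((cand & ref).values())  # matches clipped to reference counts
    return _prf(overlap, sum(cand.values()), sum(ref.values()))


def lcs_length(a: Sequence, b: Sequence) -> int:
    """Length of the longest common subsequence, using O(len(b)) memory."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, 1):
            curr.append(prev[j - 1] + 1 if x == y else max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]


def rouge_l(candidate: str, reference: str) -> Scores:
    cand, ref = candidate.split(), reference.split()
    return _prf(lcs_length(cand, ref), len(cand), len(ref))
```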

3.4. Performance Comparison Metric
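The relative improvement reported in the comparison tables is consistent with $\Delta_{\text{rel}} = 100 \times (R_{\text{model}} - R_{\text{baseline}}) / R_{\text{baseline}}$, where $\Delta_{\text{rel}}$ is our notation. The short sketch below recomputes it from the Seq2Seq-with-attention and mT5 scores reported in the results (0.39/0.28/0.35 and 0.50/0.33/0.42); the function name is hypothetical.

```python
def relative_improvement(model_score: float, baseline_score: float) -> float:
    """Percentage gain of a model over a baseline score."""
    if baseline_score <= 0:
        raise ValueError("baseline score must be positive")
    return 100.0 * (model_score - baseline_score) / baseline_score


baseline = {"ROUGE-1": 0.39, "ROUGE-2": 0.28, "ROUGE-L": 0.35}  # Seq2Seq + attention
mt5 = {"ROUGE-1": 0.50, "ROUGE-2": 0.33, "ROUGE-L": 0.42}
gains = {m: round(relative_improvement(mt5[m], baseline[m]), 1) for m in baseline}
```

The rounded values reproduce the +28.2, +17.9, and +20.0 entries of the relative-improvement table.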

4. Results

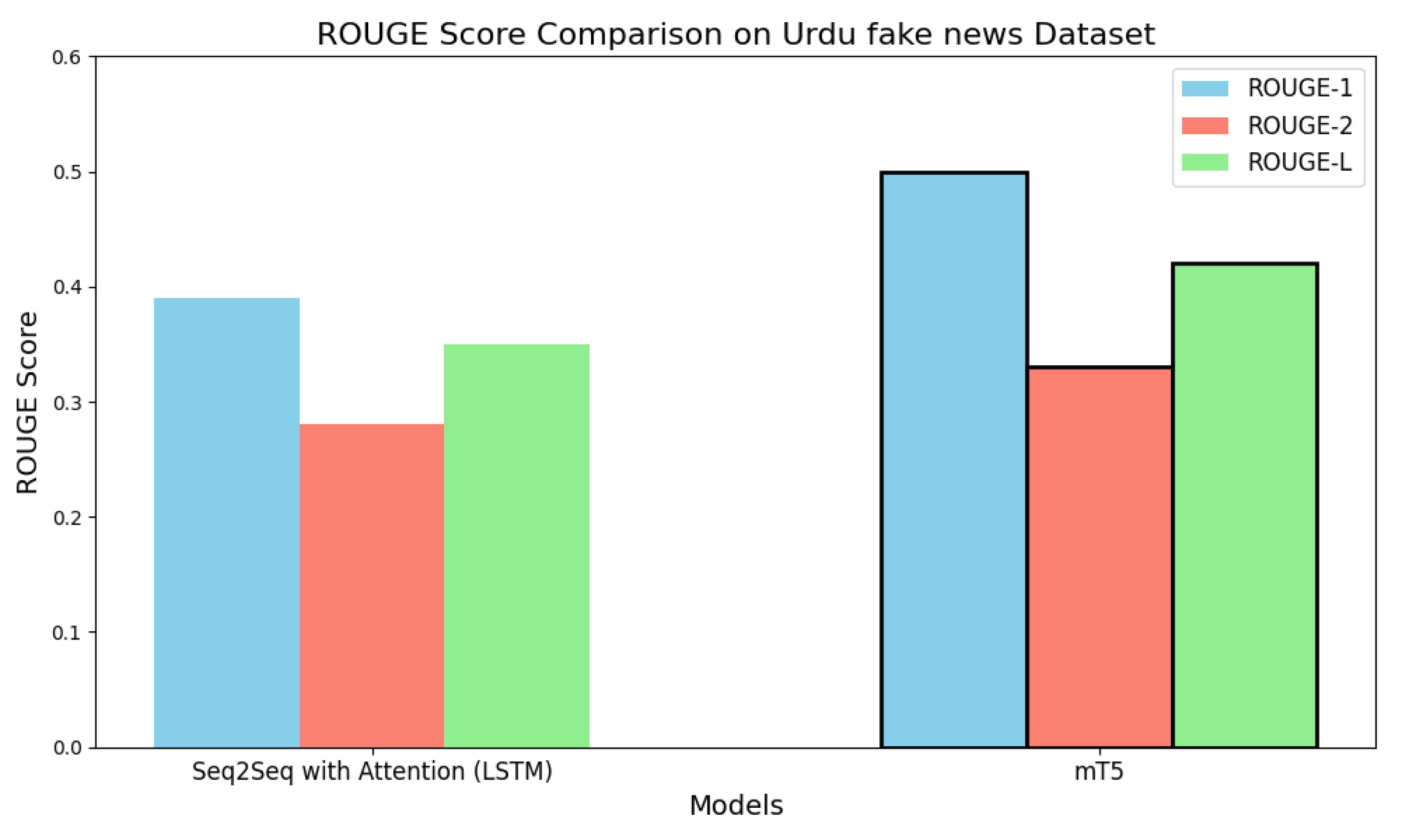

| Model | ROUGE-1 | ROUGE-2 | ROUGE-L |
|---|---|---|---|
| Seq2Seq with attention (LSTM) | 0.39 | 0.28 | 0.35 |
| mT5 | 0.50 | 0.33 | 0.42 |

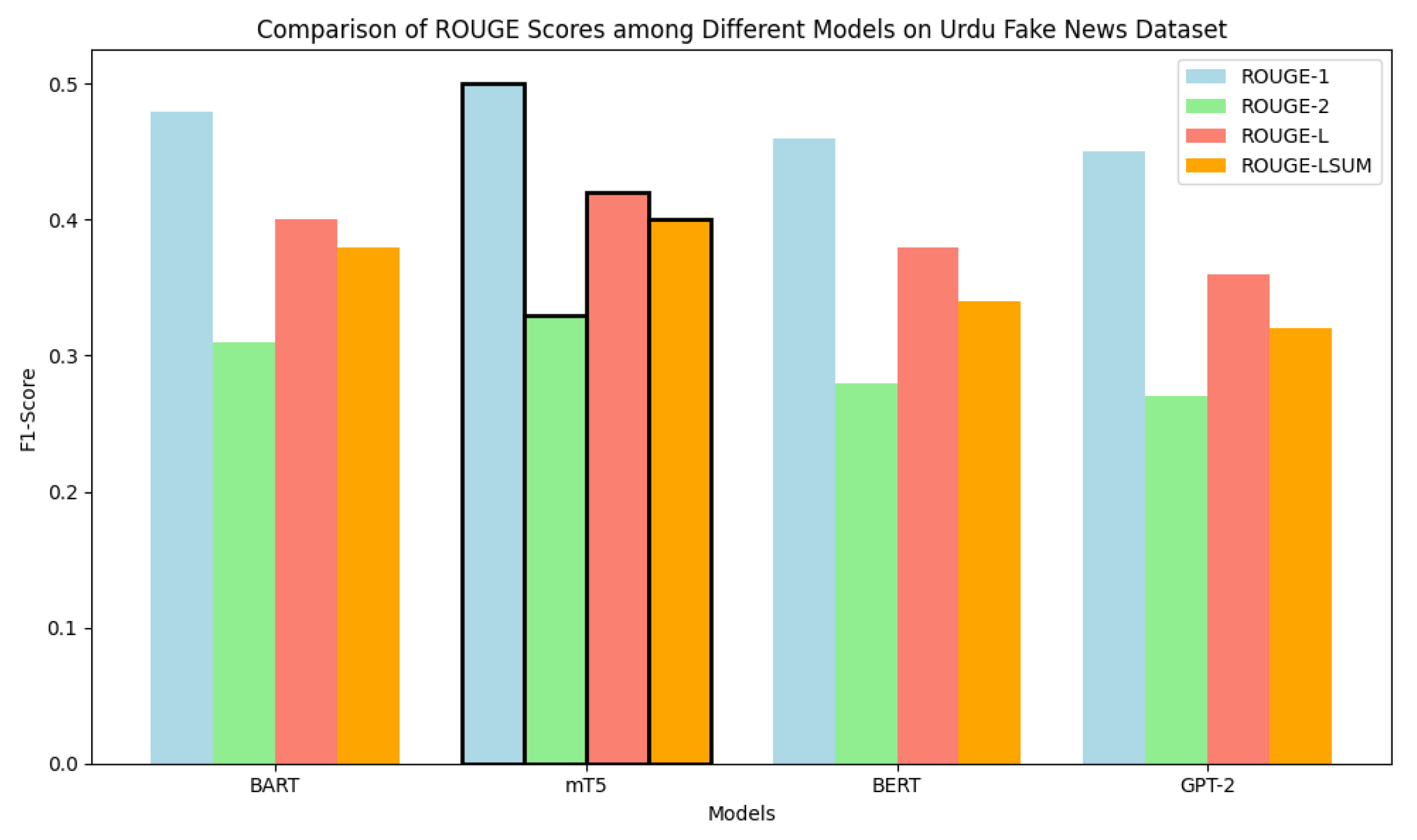

4.1. Performance Comparison on the Urdu Fake News Dataset

- ROUGE-1:

- ROUGE-2:

- ROUGE-L:

5. Discussion

- RTL-Aware Tokenization Enhances Context Understanding: Incorporating right-to-left (RTL) positional embeddings and appropriate script normalization (e.g., handling of Noon Ghunna and Hamza) allowed models to better align summaries with the inherent structure of Urdu text. This adaptation was particularly crucial for pre-trained models like BART and mT5, which were originally trained on left-to-right scripts.

- Idioms and Domain-Specific Language: Urdu news frequently mixes formal expressions with code-switched English loanwords. Some model errors involved incorrectly inflected transliterated English terms or over-reliance on common stock phrases. The TF-IDF baseline sometimes selects sentences that contain high-frequency words but are irrelevant. Transformer models usually generate more coherent Urdu summaries, but they occasionally repeat phrases or fabricate information absent from the source, a known issue in abstractive summarization.

- Effectiveness of Hybrid Loss Function: Our hybrid loss function, which combines cross-entropy with a ROUGE-L penalty, improved both the fluency and the relevance of generated summaries. Unlike purely likelihood-based training, it encourages summaries that retain key information from the source while remaining syntactically coherent.

- Training (Hyperparameters): We fine-tuned both models on the same Urdu dataset using optimized hyperparameters for the language. For example, we used a relatively small learning rate and moderate dropout for mT5 to preserve its pretrained knowledge, while training the Seq2Seq model longer to compensate for its smaller capacity. Recent multilingual summarization work similarly emphasizes fine-tuning mT5 for low-resource languages. In practice, mT5 required careful tuning but quickly learned to generate fluent summaries, whereas the Seq2Seq model often needed more epochs to approach its (lower) performance ceiling. The net effect is that mT5 capitalized on its pretrained weights and learned from the Urdu data more effectively than the baseline.

- Relative Improvement Metric Offers Clearer Insight: By computing relative improvements over baseline scores, we provide a normalized view of model gains. This is especially valuable when absolute ROUGE values are modest but the improvement is substantial. For example, the +28.2% gain of mT5 over the Seq2Seq baseline in ROUGE-1 (see Table 12) shows how much TLMs improve on classical architectures.

6. Conclusions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- H. Saggion and T. Poibeau, “Automatic text summarization: Past, present and future,” in Multi-Source, Multilingual Information Extraction and Summarization, Springer, Berlin/Heidelberg, Germany, 2013, pp. 3–21.

- Rahul, S. Rauniyar, and Monika, “A survey on deep learning based various methods analysis of text summarization,” in Proceedings of the 2020 International Conference on Inventive Computation Technologies (ICICT), Coimbatore, India, Feb. 2020, pp. 113–116.

- M. W. Bhatti and M. Aslam, “ISUTD: Intelligent system for Urdu text de-summarization,” in Proc. Int. Conf. Eng. Emerg. Technol. (ICEET), Feb. 2019, pp. 1–5.

- P. Verma, A. Verma, and S. Pal, “An approach for extractive text summarization using fuzzy evolutionary and clustering algorithms,” Appl. Soft Comput., vol. 120, May 2022, Art. no. 108670.

- H. N. Fejer and N. Omar, “Automatic Arabic text summarization using clustering and keyphrase extraction,” in Proc. 6th Int. Conf. Inf. Technol. Multimedia, Putrajaya, Malaysia, Nov. 2014, pp. 293–298.

- A. A. Syed, F. L. Gaol, and T. Matsuo, “A survey of the state-of-the-art models in neural abstractive text summarization,” IEEE Access, vol. 9, pp. 13248–13265, 2021.

- G. Siragusa and L. Robaldo, “Sentence Graph Attention For Content-Aware Summarization,” Appl. Sci., vol. 12, no. 10, Art. no. 10382, 2022.

- M. Allahyari, S. Pouriyeh, M. Assefi, S. Safaei, E. D. Trippe, J. B. Gutierrez, and K. Kochut, “Text summarization techniques: A brief survey,” arXiv, 2017, arXiv:1707.02268.

- R. Witte, R. Krestel, and S. Bergler, “Generating update summaries for DUC 2007,” in Proc. Document Understanding Conference, Rochester, NY, USA, Apr. 2007, pp. 1–5.

- T. Rahman, “Language policy and localization in Pakistan: Proposal for a paradigmatic shift,” in Proc. SCALLA Conf. Comput. Linguistics, vol. 99, 2004, pp. 1–19.

- A. Naseer and S. Hussain, “Supervised word sense disambiguation for Urdu using Bayesian classification,” Center for Research in Urdu Language Processing, Lahore, Pakistan, Tech. Rep., 2009.

- A. Daud, W. Khan, and D. Che, “Urdu language processing: A survey,” Artif. Intell. Rev., vol. 47, no. 3, pp. 279–311, Mar. 2017.

- L. Hou, P. Hu, and C. Bei, “Abstractive document summarization via neural model with joint attention,” in Proceedings of the National CCF Conference on Natural Language Processing and Chinese Computing, Dalian, China, Nov. 2017, pp. 329–338.

- S. Hochreiter and J. Schmidhuber, “Long short-term memory,” Neural Comput., vol. 9, no. 8, pp. 1735–1780, 1997.

- Q. Chen, X. Zhu, Z. Ling, S. Wei, and H. Jiang, “Distraction-based neural networks for document summarization,” arXiv, 2016, arXiv:1610.08462.

- J. Gu, Z. Lu, H. Li, and V. O. Li, “Incorporating copying mechanism in sequence-to-sequence learning,” arXiv, 2016, arXiv:1603.06393.

- B. Hu, Q. Chen, and F. Zhu, “LCSTS: A large scale Chinese short text summarization dataset,” in Proceedings of the 2015 Conference on Empirical Methods in Natural Language Processing, Lisbon, Portugal, Sep. 2015, pp. 1967–1972.

- A. Vaswani et al., “Attention is all you need,” in Proceedings of the Advances in Neural Information Processing Systems, Long Beach, CA, USA, Dec. 2017, pp. 5998–6008.

- W. S. El-Kassas, C. R. Salama, A. A. Rafea, and H. K. Mohamed, “Automatic text summarization: A comprehensive survey,” Expert Syst. Appl., vol. 165, p. 113679, 2021.

- M. Humayoun, R. Nawab, M. Uzair, S. Aslam, and O. Farzand, “Urdu summary corpus,” in Proc. 10th Int. Conf. Language Resour. Eval., 2016, pp. 796–800. [Online]. Available: https://aclanthology.org/L16-1128

- A. Nawaz, M. Bakhtyar, J. Baber, I. Ullah, W. Noor, and A. Basit, “Extractive text summarization models for Urdu language,” Inf. Process. Manage., vol. 57, no. 6, Nov. 2020, Art. no. 102383.

- M. Awais and R. M. A. Nawab, “Abstractive text summarization for the Urdu language: Data and methods,” IEEE Access, 2024.

- H. Raza and W. Shahzad, “End to end Urdu abstractive text summarization with dataset and improvement in evaluation metric,” IEEE Access, 2024.

- A. Raza, M. H. Soomro, I. Shahzad, and S. Batool, “Abstractive text summarization for Urdu language,” J. Comput. Biomed. Informatics, vol. 7, no. 2, 2024.

- M. Munaf, H. Afzal, K. Mahmood, and N. Iltaf, “Low resource summarization using pre-trained language models,” ACM Trans. Asian Low-Resour. Lang. Inf. Process., 2023.

- Y. Sunusi, N. Omar, and L. Q. Zakaria, “Exploring abstractive text summarization: Methods, dataset, evaluation, and emerging challenges,” 2023.

- A. Faheem, F. Ullah, M. S. Ayub, and A. Karim, “UrduMASD: A multimodal abstractive summarization dataset for Urdu,” in Proc. 2024 Joint Int. Conf. Comput. Linguistics, Lang. Resources Evaluation (LREC-COLING 2024), 2024, pp. 17245–17253.

- M. Barbella and G. T.-A., “ROUGE metric evaluation for text summarization techniques,” SSRN, [Online]. Available: https://papers.ssrn.com/sol3/papers.cfm?abstract_id=4120317. [Accessed: May 07, 2023].

- M. Al-Maleh and S. Desouki, “Arabic text summarization using deep learning approach,” J. Big Data, vol. 7, no. 1, p. 109, 2020.

- A. Vogel-Fernandez, P. Calleja, and M. Rico, “esT5s: A Spanish model for text summarization,” in Towards a Knowledge-Aware AI, IOS Press, 2022, pp. 184–190.

- R. Schubiger, “German summarization with large language models,” M.S. thesis, ETH Zurich, 2024.

- G. L. Garcia, P. H. Paiola, D. S. Jodas, L. A. Sugi, and J. P. Papa, “Text Summarization and Temporal Learning Models Applied to Portuguese Fake News Detection in a Novel Brazilian Corpus Dataset,” in Proceedings of the 16th International Conference on Computational Processing of Portuguese, 2024, pp. 86–96.

- C. Xiong, Z. Wang, L. Shen, and N. Deng, “TF-BiLSTMS2S: A Chinese Text Summarization Model,” in Advanced Information Networking and Applications: Proceedings of the 34th International Conference on Advanced Information Networking and Applications (AINA-2020), Springer International Publishing, 2020, pp. 240–249.

- Y. Nagai, T. Oka, and M. Komachi, “A Document-Level Text Simplification Dataset for Japanese,” in Proceedings of the 2024 Joint International Conference on Computational Linguistics, Language Resources and Evaluation (LREC-COLING 2024), 2024, pp. 459–476.

- F. Camastra and G. Razi, “Italian text categorization with lemmatization and support vector machines,” in Neural approaches to dynamics of signal exchanges, 2020, pp. 47–54.

- T. Vetriselvi and M. Mathur, “Text summarization and translation of summarized outcome in French,” in E3S Web of Conferences, vol. 399, p. 04002, EDP Sciences, 2023.

- V. Goloviznina and E. Kotelnikov, “Automatic summarization of Russian texts: Comparison of extractive and abstractive methods,” arXiv preprint arXiv:2206.09253, 2022.

- P. Janjanam and C. P. Reddy, “Text summarization: An essential study,” in 2019 International Conference on Computational Intelligence in Data Science (ICCIDS), Feb. 2019, pp. 1–6.

- A. Mustafa, “Urdu Instruct News Article Generation,” Hugging Face, 2023. [Online]. Available: https://huggingface.co/datasets/AhmadMustafa/Urdu-Instruct-News-Article-Generation. [Accessed: Oct. 18, 2024].

- mwz, “Ursum Dataset,” Hugging Face, 2022. [Online]. Available: https://huggingface.co/datasets/mwz/ursum. [Accessed: Oct. 18, 2024].

- Community Datasets, “Urdu Fake News Dataset,” Hugging Face, 2022. [Online]. Available: https://huggingface.co/datasets/community-datasets/urdu_fake_news. [Accessed: Oct. 18, 2024].

- N. Shafiq, I. Hamid, M. Asif, Q. Nawaz, H. Aljuaid, and H. Ali, “Abstractive text summarization of low-resourced languages using deep learning,” PeerJ Comput. Sci., vol. 9, Art. no. e1176, 2023.

- M. Awais and R. M. A. Nawab, “Abstractive text summarization for the Urdu language: Data and methods,” IEEE Access, 2024.

- H. Raza and W. Shahzad, “End to end Urdu abstractive text summarization with dataset and improvement in evaluation metric,” IEEE Access, 2024.

- A. Raza, M. H. Soomro, I. Shahzad, and S. Batool, “Abstractive text summarization for Urdu language,” J. Comput. Biomed. Informatics, vol. 7, no. 2, 2024.

- A. Faheem, F. Ullah, M. S. Ayub, and A. Karim, “UrduMASD: A multimodal abstractive summarization dataset for Urdu,” in Proceedings of the 2024 Joint International Conference on Computational Linguistics, Language Resources and Evaluation (LREC-COLING 2024), May 2024, pp. 17245–17253.

- M. Munaf, H. Afzal, K. Mahmood, and N. Iltaf, “Low resource summarization using pre-trained language models,” ACM Trans. Asian Low-Resour. Lang. Inf. Process., 2023.

- A. Raza, H. S. Raja, and U. Maratib, “Abstractive summary generation for the Urdu language,” arXiv preprint arXiv:2305.16195, 2023.

- J. M. Duarte and L. Berton, “A review of semi-supervised learning for text classification,” Artificial Intelligence Review, vol. 56, no. 9, pp. 9401–9469, 2023.

- M. A. Bashar, “A coherent knowledge-driven deep learning model for idiomatic-aware sentiment analysis of unstructured text using Bert transformer,” Doctoral dissertation, Universiti Teknologi MARA, 2023.

- A. Nawaz, M. Bakhtyar, J. Baber, I. Ullah, W. Noor, and A. Basit, “Extractive text summarization models for Urdu language,” Information Processing & Management, vol. 57, no. 6, p. 102383, 2020.

- A. Muhammad, N. Jazeb, A. M. Martinez-Enriquez, and A. Sikander, “EUTS: Extractive Urdu text summarizer,” in 2018 Seventeenth Mexican International Conference on Artificial Intelligence (MICAI), pp. 39–44, Oct. 2018.

- M. A. Saleem, J. Shuja, M. A. Humayun, S. B. Ahmed, and R. W. Ahmad, “Machine Learning Based Extractive Text Summarization Using Document Aware and Document Unaware Features,” in Intelligent Systems Modeling and Simulation III: Artificial Intelligence, Machine Learning, Intelligent Functions and Cyber Security, Cham: Springer Nature Switzerland, pp. 143–158, 2024.

- M. Humayoun and N. Akhtar, “CORPURES: Benchmark corpus for Urdu extractive summaries and experiments using supervised learning,” Intelligent Systems with Applications, vol. 16, p. 200129, 2022.

- D. Zegarra Rodríguez, O. Daniel Okey, S. S. Maidin, E. Umoren Udo, and J. H. Kleinschmidt, “Attentive transformer deep learning algorithm for intrusion detection on IoT systems using automatic explainable feature selection,” PLOS ONE, vol. 18, no. 10, Art. no. e0286652, 2023.

- L. N. Smith, “A disciplined approach to neural network hyper-parameters: Part 1–learning rate, batch size, momentum, and weight decay,” arXiv:1803.09820, 2018.

- R. Paulus, C. Xiong, and R. Socher, “A deep reinforced model for abstractive summarization,” arXiv:1705.04304, 2018.

- M. U. Syed, M. Junaid, and I. Mehmood, “UrduHack: NLP library for Urdu language,” 2020. [Online]. Available: https://urduhack.readthedocs.io/en/stable/reference/normalization.html

- S. Humsha, “Urdu Summarization Corpus (USCorpus),” 2021. [Online]. Available: https://github.com/humsha/USCorpus

- J. Ramos, “Using TF-IDF to determine word relevance in document queries,” in Proc. First Instructional Conf. Mach. Learn., 2003, pp. 29–48.

- R. Mihalcea and P. Tarau, “TextRank: Bringing order into text,” in Proc. 2004 Conf. Empirical Methods Natural Lang. Process., 2004, pp. 404–411. [Online]. Available: https://aclanthology.org/W04-3252

- S. Hochreiter and J. Schmidhuber, “Long short-term memory,” Neural Comput., vol. 9, no. 8, pp. 1735–1780, 1997.

- J. Devlin, M.-W. Chang, K. Lee, and K. Toutanova, “BERT: Pre-training of deep bidirectional transformers for language understanding,” in Proc. NAACL-HLT 2019, pp. 4171–4186.

- L. Xue, N. Constant, A. Roberts, M. Kale, R. Al-Rfou, A. Siddhant, A. Barua, and C. Raffel, “mT5: A massively multilingual pre-trained text-to-text transformer,” in Proc. 2021 Conf. North Amer. Chapter Assoc. Comput. Linguistics, 2021, pp. 483–498.

- M. Ul Hasan, A. Raza, and M. S. Rafi, “UrduBERT: A bidirectional transformer for Urdu language understanding,” ACM Trans. Asian Low-Resour. Lang. Inf. Process., vol. 21, no. 3, pp. 1–22, 2022.

- H. Sajjad, F. Dalvi, N. Durrani, and P. Nakov, “Poor man’s BERT: Smaller and faster transformer models,” in Proc. 58th Annu. Meeting Assoc. Comput. Linguistics, 2020, pp. 2083–2098.

- J. Tan, X. Wan, and J. Xiao, “Abstractive document summarization with a graph-based attentional neural model,” in Proc. 55th Annu. Meeting Assoc. Comput. Linguistics (Vol. 1: Long Papers), Vancouver, BC, Canada, Jul.–Aug. 2017, pp. 1171–1181.

- J. Gu, Z. Lu, H. Li, and V. O. Li, “Incorporating copying mechanism in sequence-to-sequence learning,” arXiv:1603.06393, 2016.

| Authors & Year | Language | Dataset | Challenges | Techniques | Evaluation Metrics | Advantage | Disadvantage |
|---|---|---|---|---|---|---|---|
| SB Ahmed et al. (2022) [49] | Urdu | Urdu dataset (53k) | Limited Urdu resources, cursive text recognition | Extractive; pre-trained BERT | ROUGE-L | Potential for improved accuracy with more data; leverages pre-trained models | Dependence on synthetic data quality; limited dataset size |
| JM Duarte et al. (2023) [50] | Urdu | Multiple (medical datasets, AG News, DBpedia, WebKB, TREC) | Data scarcity, imbalanced datasets, evaluation metric limitations | Extractive; SSL techniques, text representations, machine learning algorithms | ROUGE-1 | Comprehensive review; identifies research gaps | Limited depth on specific methods; potential bias in dataset selection |
| Ali Nawaz et al. (2022) [51] | Urdu | Publicly available dataset | Sentence weighting; lack of a publicly available extractive summarization framework | Extractive; LW (sentence weight, weighted term frequency) and GW (VSM) | F-score, accuracy | LW approaches achieve higher F-scores | VSM achieves lower accuracy than LW approaches |
| Muhammad et al. (2018) [44] | Urdu | Urdu text documents | Limited resources for feature extraction | Extractive; sentence weight algorithm, segmentation, tokenization, stop-word removal | ROUGE (unigram, bigram, trigram) | 67% accuracy at n-gram levels | Limited to extractive summarization |
| Saleem et al. (2024) [52] | Urdu | CORPURES dataset (100 documents) | Low-resource language | Extractive text summarization | ROUGE-2: 0.63 | High accuracy with a combination of features | Lower scores when all features are combined |
| M. Humayoun et al. (2022) [53] | Urdu | CORPURES (161 documents with extractive summaries) | Lack of standardized resources, especially for low-resource languages | Extractive summarization via supervised classifiers (Naive Bayes, Logistic Regression, MLP) | ROUGE-2 | Released the first Urdu extractive summary corpus | Limited corpus size |

| Dataset | Size | Domain | Availability | URL |
|---|---|---|---|---|
| Urdu-fake-news | 900 documents | 5 different news domains | Hugging Face | https://huggingface.co/datasets/community-datasets/urdu_fake_news |
| mwz/ursum (Multi-Domain Urdu Summarization) | 48,071 news articles | News, legal (collected from the BBC Urdu website) | Hugging Face | https://huggingface.co/datasets/mwz/ursum |
| Urdu-Instruct-News-Article-Generation (Ahmad Mustafa) | 7.5K articles | News (instruction-tuned) | Hugging Face | https://huggingface.co/datasets/AhmadMustafa/Urdu-Instruct-News-Article-Generation |

| Model | Batch Size | Learning Rate | Max Decoder Length | Epochs |
|---|---|---|---|---|
| Transformer-based TLMs | 16 | | Dynamic (avg. target length ±15%) | Up to 50 |
| Baseline seq2seq models | 64 | | Fixed (128 tokens) | Up to 30 |

| Model | ROUGE-1 | ROUGE-2 | ROUGE-L | ROUGE-LSUM (F1-Score) |
|---|---|---|---|---|
| BART | 0.48 | 0.31 | 0.40 | 0.38 |
| mT5 | 0.50 | 0.33 | 0.42 | 0.40 |
| BERT | 0.46 | 0.28 | 0.38 | 0.34 |
| GPT-2 | 0.45 | 0.27 | 0.36 | 0.32 |

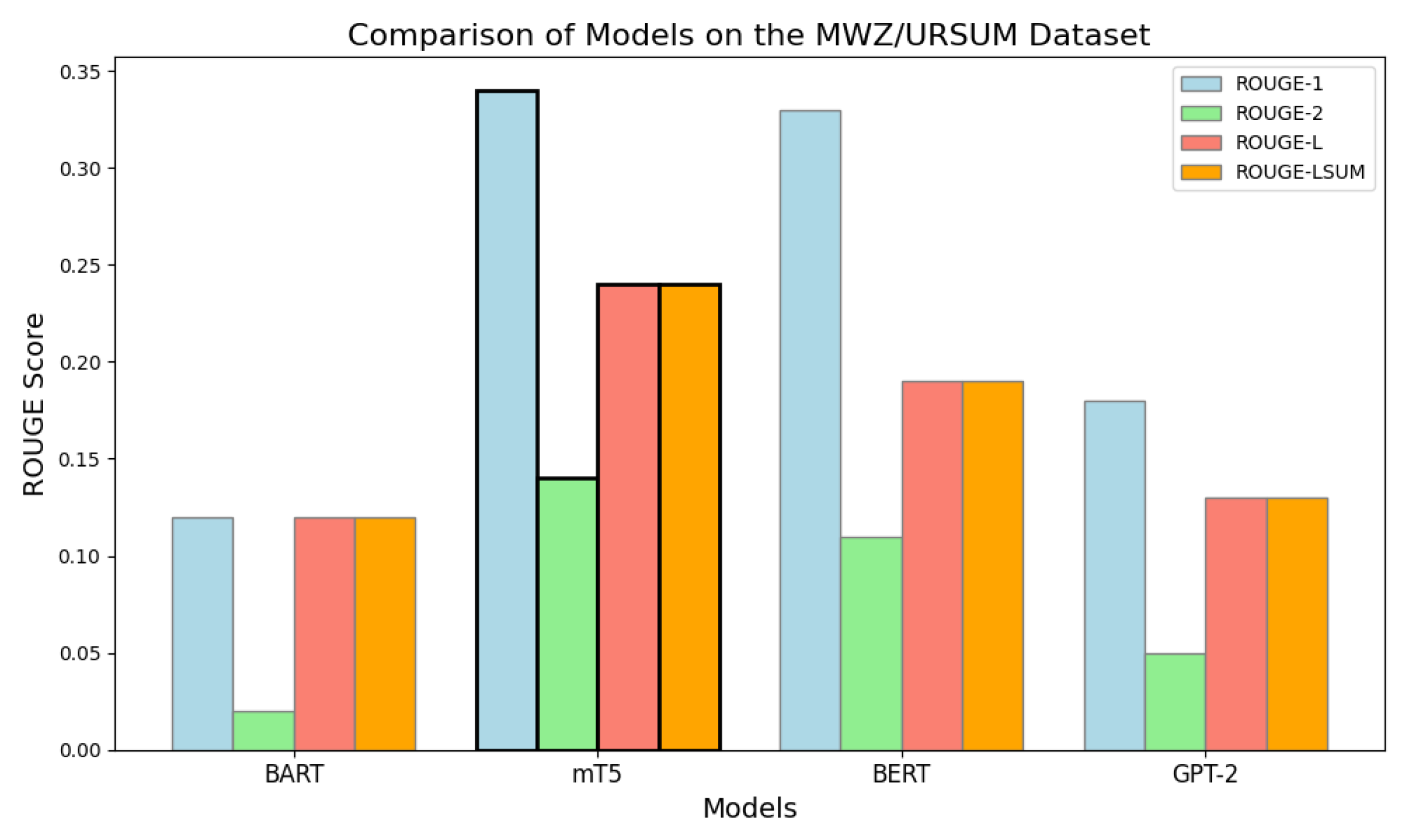

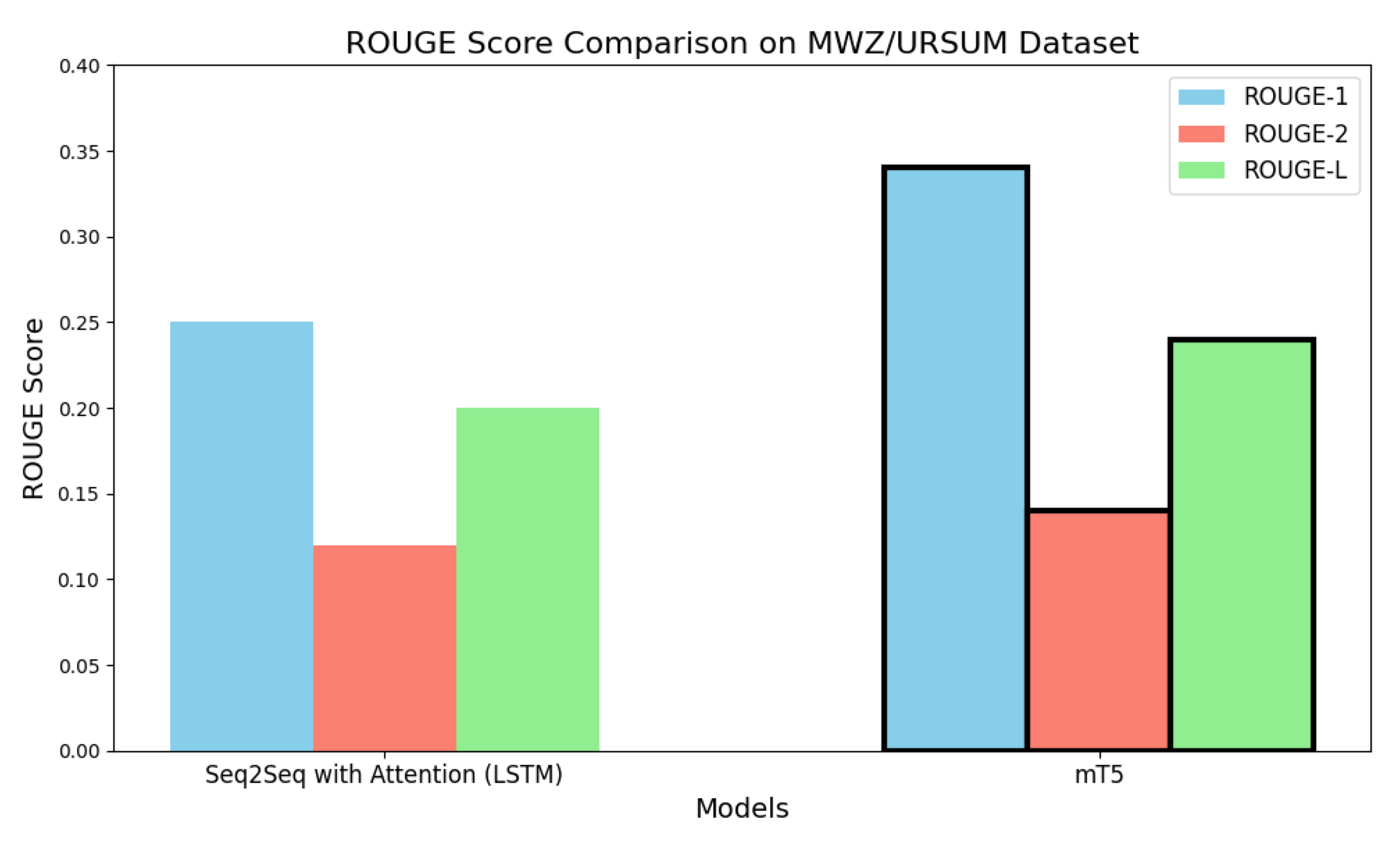

| Model | ROUGE-1 | ROUGE-2 | ROUGE-L | ROUGE-LSUM (F1-Score) |
|---|---|---|---|---|
| BART | 0.12 | 0.02 | 0.12 | 0.12 |
| mT5 | 0.34 | 0.14 | 0.24 | 0.24 |
| BERT | 0.33 | 0.11 | 0.19 | 0.19 |
| GPT-2 | 0.18 | 0.05 | 0.13 | 0.13 |

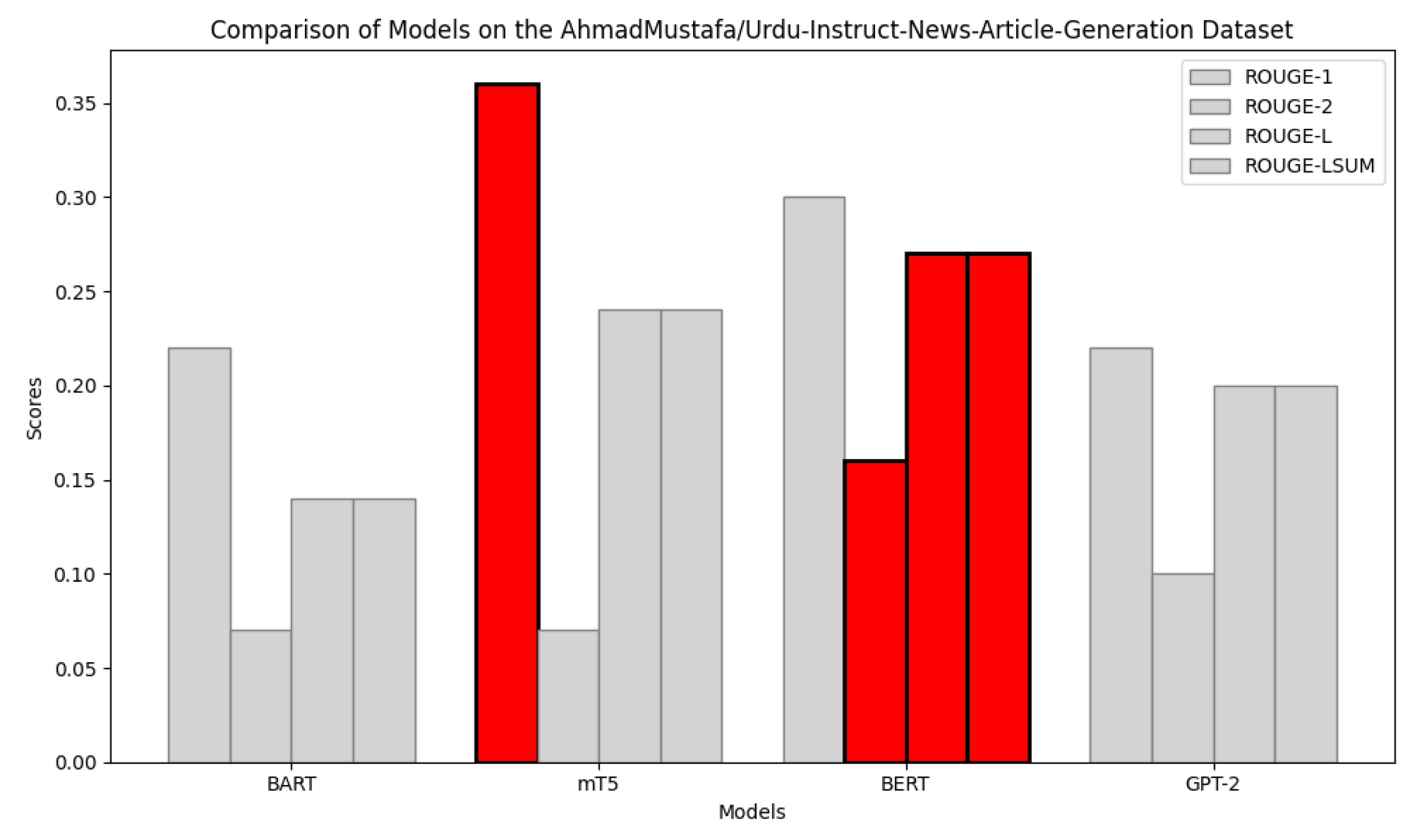

| Model | ROUGE-1 | ROUGE-2 | ROUGE-L | ROUGE-LSUM (F1-Score) |
|---|---|---|---|---|
| BART | 0.22 | 0.07 | 0.14 | 0.14 |
| mT5 | 0.36 | 0.07 | 0.24 | 0.24 |
| BERT | 0.30 | 0.16 | 0.27 | 0.27 |
| GPT-2 | 0.22 | 0.10 | 0.20 | 0.20 |

| Model | ROUGE-1 | ROUGE-2 | ROUGE-L |
|---|---|---|---|
| Seq2Seq with attention (LSTM) | 0.25 | 0.12 | 0.20 |
| mT5 | 0.34 | 0.14 | 0.24 |

| Model | ROUGE-1 | ROUGE-2 | ROUGE-L |
|---|---|---|---|
| Seq2Seq with attention (LSTM) | 0.39 | 0.28 | 0.35 |
| mT5 | 0.50 | 0.33 | 0.42 |
| Relative Improvement (%) | +28.2 | +17.9 | +20.0 |

| Model | ROUGE-1 | Rel. Impr. (%) | ROUGE-2 | Rel. Impr. (%) | ROUGE-L | Rel. Impr. (%) |
|---|---|---|---|---|---|---|
| Seq2Seq + attention | 0.39 | – | 0.28 | – | 0.35 | – |
| mT5 | 0.50 | +28.2 | 0.33 | +17.9 | 0.42 | +20.0 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).