Submitted: 17 July 2025

Posted: 18 July 2025

Abstract

Keywords:

1. Introduction

2. Methods

2.1. Data Exploration

2.2. Handling Missing Values

2.3. Feature Selection

2.4. Integration of Algorithms

3. Innovative Approaches for Data Exploration

4. Innovative Imputation Methods for Missing Data

5. Strategic Feature Selection Paradigms

6. Empirical Observations and Comparative Evaluation

6.1. Exploration Through Unsupervised Learning

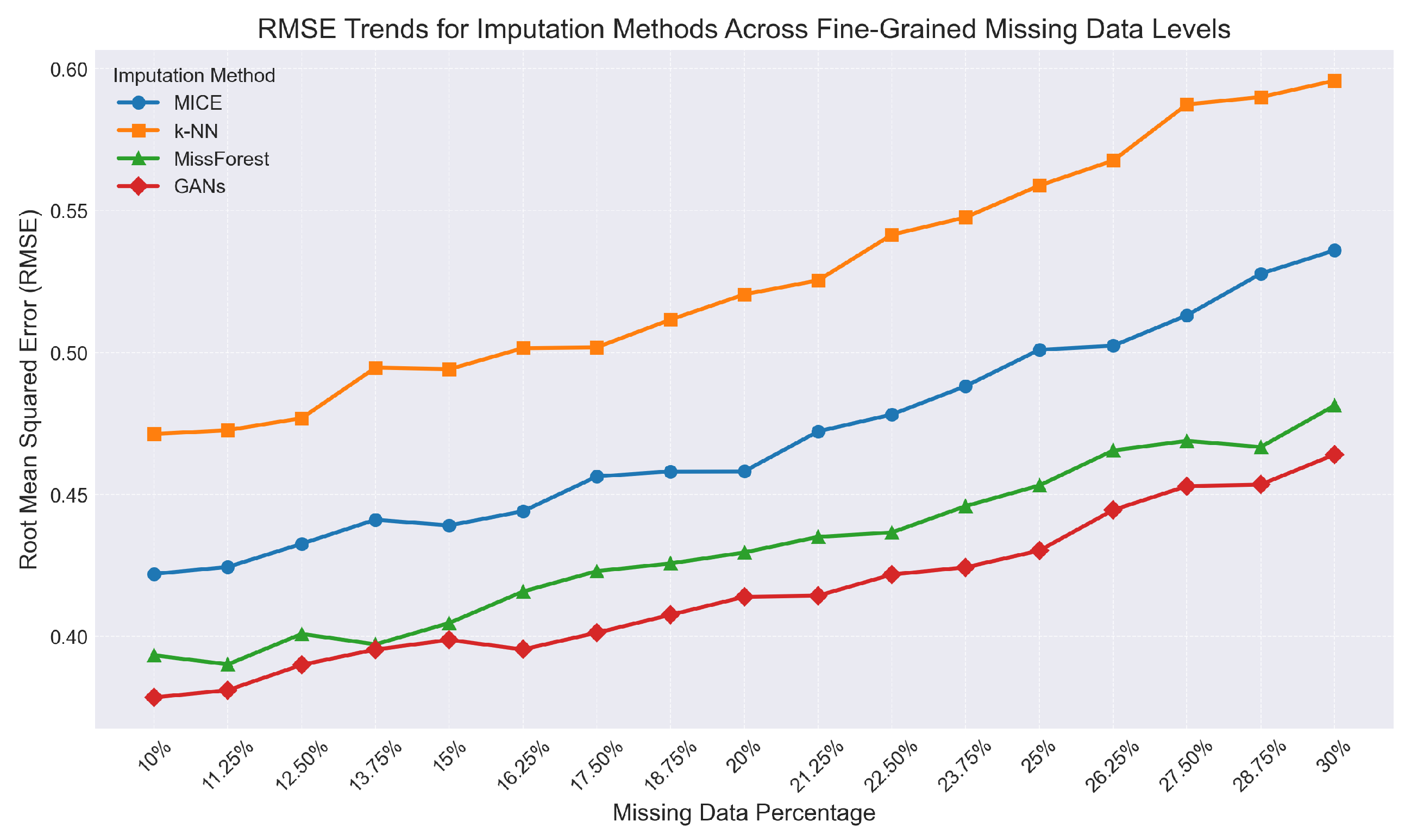

6.2. Balancing Computational Load and Accuracy in Missing Data Imputation

6.3. Optimizing Feature Selection: Precision Versus Efficiency

6.4. Integration of Empirical Insights

7. Discussion

7.1. Interpretation of Results

7.2. Limitations and Open Questions

7.3. Broader Implications for Big Data Analytics

References

- Carlo Batini and Monica Scannapieco. Data and Information Quality: Dimensions, Principles and Techniques, 2016.

- Erhard Rahm and Hong Hai Do. Data cleaning: Problems and current approaches. Bull. IEEE Comput. Soc. Tech. Comm. Data Eng. 2000, 23, 3–13.

- Charu C. Aggarwal. Data Mining: The Textbook. Springer, 2013.

- Richard L. Villars, Carl W. Olofson, and Matthew Eastwood. Big data: What it is and why you should care. White Paper, IDC 2011, 14, 1–14.

- Ian Jolliffe. Principal Component Analysis. Springer, 2011.

- Laurens van der Maaten and Geoffrey Hinton. Visualizing data using t-SNE. J. Mach. Learn. Res. 2008, 9, 2579–2605.

- Donald B. Rubin. Inference and missing data. Biometrika 1976, 63, 581–592.

- Olga Troyanskaya, Michael Cantor, Gavin Sherlock, Pat Brown, Trevor Hastie, Robert Tibshirani, David Botstein, and Russ B. Altman. Missing value estimation methods for DNA microarrays. Bioinformatics 2001, 17, 520–525.

- Stef van Buuren and Karin Groothuis-Oudshoorn. mice: Multivariate imputation by chained equations in R. J. Stat. Softw. 2010, 45, 1–68.

- Girish Chandrashekar and Ferat Sahin. A survey on feature selection methods. Comput. Electr. Eng. 2014, 40, 16–28.

- Robert Tibshirani. Regression shrinkage and selection via the lasso. J. R. Stat. Soc. Ser. B (Methodological) 1996, 58, 267–288.

- Arthur E. Hoerl and Robert W. Kennard. Ridge regression: Biased estimation for nonorthogonal problems. Technometrics 1970, 12, 55–67.

- Leo Breiman. Random forests. Mach. Learn. 2001, 45, 5–32.

- Jerome H. Friedman. Greedy function approximation: A gradient boosting machine. Ann. Stat. 2001, 29, 1189–1232.

- Ian Goodfellow, Yoshua Bengio, and Aaron Courville. Deep Learning. MIT Press, 2016.

- Geoffrey E. Hinton and Ruslan R. Salakhutdinov. Reducing the dimensionality of data with neural networks. Science 2006, 313, 504–507.

- Arimondo Scrivano. Adversarial attacks and mitigation strategies on WiFi networks. 2025.

- Arimondo Scrivano. Fraud detection pipeline using machine learning: Methods, applications, and future directions. 2025.

- Arimondo Scrivano. A comparative study of classical and post-quantum cryptographic algorithms in the era of quantum computing. Preprint. 2025.

- Arimondo Scrivano. Advances in indoor positioning systems: Integrating IoT and machine learning for enhanced accuracy. Preprint. 2025.

- Arimondo Scrivano. Cloud service architectures for Internet of Things (IoT) integration: Analyzing efficient cloud computing models and architectures tailored for IoT environments. Preprint. 2025.

- Anil K. Jain. Data clustering: 50 years beyond k-means. Pattern Recognit. Lett. 2010, 31, 651–666.

- Joshua B. Tenenbaum, Vin de Silva, and John C. Langford. A global geometric framework for nonlinear dimensionality reduction. Science 2000, 290, 2319–2323.

- Pieter Adriaans and Dolf Zantinge. Data Mining. Addison-Wesley Longman Publishing Co., Inc., 1996.

- Yehuda Koren, Robert Bell, and Chris Volinsky. Matrix factorization techniques for recommender systems. Computer 2009, 42, 30–37.

- Daniel J. Stekhoven and Peter Bühlmann. MissForest—non-parametric missing value imputation for mixed-type data. Bioinformatics 2012, 28, 112–118.

- Jinsung Yoon, James Jordon, and Mihaela van der Schaar. GAIN: Missing data imputation using generative adversarial nets. arXiv 2018, arXiv:1806.02920.

- Isabelle Guyon and André Elisseeff. An introduction to variable and feature selection. J. Mach. Learn. Res. 2003, 3, 1157–1182.

- Isabelle Guyon, Jason Weston, Stephen Barnhill, and Vladimir Vapnik. Gene selection for cancer classification using support vector machines. Mach. Learn. 2002, 46, 389–422.

- Miron B. Kursa and Witold R. Rudnicki. Feature selection with the Boruta package. J. Stat. Softw. 2010, 36, 1–13.

| Method | # Clusters | # Outliers | Complexity |
|---|---|---|---|
| K-means | 5 | 15 | |
| DBSCAN | 4 | 22 | |
| PCA+K-means | 6 | 18 | |
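
The cluster and outlier counts in the table above depend on how each method defines an outlier, which the table itself does not state. For DBSCAN, a common convention is to count noise points, meaning points that are neither core points nor within `eps` of one. The following is a minimal standard-library sketch of that convention on hypothetical toy data; the data, `eps`, and `min_pts` are illustrative assumptions and not the settings behind the reported figures.

```python
import math


def dbscan(points, eps, min_pts):
    """Label each point with a cluster id (0, 1, ...) or -1 for noise."""
    n = len(points)
    # eps-neighbourhood of every point (a point counts as its own neighbour).
    neighbours = [
        [j for j in range(n) if math.dist(points[i], points[j]) <= eps]
        for i in range(n)
    ]
    NOISE = -1
    labels = [None] * n  # None means "not visited yet"
    cluster_id = 0
    for i in range(n):
        if labels[i] is not None:
            continue
        if len(neighbours[i]) < min_pts:
            labels[i] = NOISE  # may still be claimed later as a border point
            continue
        # i is a core point: start a new cluster and grow it outward.
        labels[i] = cluster_id
        frontier = list(neighbours[i])
        while frontier:
            j = frontier.pop()
            if labels[j] == NOISE:
                labels[j] = cluster_id  # border point reached from a core point
            if labels[j] is not None:
                continue
            labels[j] = cluster_id
            if len(neighbours[j]) >= min_pts:
                frontier.extend(neighbours[j])  # j is also a core point
        cluster_id += 1
    return labels


# Hypothetical toy data: two dense 5x5 grids plus three isolated points.
blob_a = [(0.1 * x, 0.1 * y) for x in range(5) for y in range(5)]
blob_b = [(5 + 0.1 * x, 5 + 0.1 * y) for x in range(5) for y in range(5)]
isolated = [(10.0, 0.0), (0.0, 10.0), (-5.0, -5.0)]

labels = dbscan(blob_a + blob_b + isolated, eps=0.15, min_pts=4)
n_clusters = len({label for label in labels if label != -1})
n_outliers = labels.count(-1)
print(f"clusters={n_clusters} outliers={n_outliers}")
```

K-means has no built-in notion of noise, so an outlier count for it needs an extra rule (for example, a distance-to-centroid threshold); which rule was used for the K-means rows is not recoverable from the table.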

| Method | RMSE (10% missing) | RMSE (20% missing) | RMSE (30% missing) | Time (s) |
|---|---|---|---|---|
| MICE | 0.42 | 0.46 | 0.54 | 250 |
| k-NN | 0.47 | 0.52 | 0.60 | 200 |
| MissForest | 0.39 | 0.43 | 0.48 | 310 |
| GANs | 0.38 | 0.41 | 0.46 | 400 |
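
The table above reports imputation RMSE at three percentages, which most plausibly are missingness rates; the evaluation protocol itself is not shown. One common protocol is to hide a known fraction of cells in a complete dataset, impute them, and compute RMSE over the hidden cells only. The sketch below illustrates that protocol with a simple k-NN imputer and a column-mean baseline. The synthetic data, `k=5`, and all function names are assumptions made for illustration; the numbers it prints are unrelated to the reported results.

```python
import math
import random


def mask_cells(rows, rate, rng):
    """Copy `rows`, hide a fraction `rate` of cells as None, return copy and hidden positions."""
    cells = [(i, j) for i in range(len(rows)) for j in range(len(rows[0]))]
    hidden = rng.sample(cells, round(rate * len(cells)))
    masked = [list(row) for row in rows]
    for i, j in hidden:
        masked[i][j] = None
    return masked, hidden


def column_means(rows):
    """Mean of the observed (non-None) values in each column."""
    means = []
    for j in range(len(rows[0])):
        seen = [row[j] for row in rows if row[j] is not None]
        means.append(sum(seen) / len(seen))
    return means


def knn_impute(rows, k=5):
    """Fill each None with the column mean over the k nearest rows that observe that column."""
    means = column_means(rows)
    filled = [list(row) for row in rows]
    for i, row in enumerate(rows):
        for j, value in enumerate(row):
            if value is not None:
                continue
            candidates = []
            for other in rows:
                if other is row or other[j] is None:
                    continue
                shared = [(a - b) ** 2 for a, b in zip(row, other)
                          if a is not None and b is not None]
                if shared:
                    # Mean squared difference over features both rows observe.
                    candidates.append((sum(shared) / len(shared), other[j]))
            candidates.sort(key=lambda c: c[0])
            donors = [v for _, v in candidates[:k]]
            # Fall back to the column mean when no comparable row exists.
            filled[i][j] = sum(donors) / len(donors) if donors else means[j]
    return filled


def rmse(truth, filled, hidden):
    """Root-mean-square error over the hidden cells only."""
    return math.sqrt(sum((truth[i][j] - filled[i][j]) ** 2 for i, j in hidden) / len(hidden))


# Hypothetical complete dataset: four noisy linear functions of one latent value.
rng = random.Random(0)
data = []
for _ in range(200):
    z = rng.gauss(0, 1)
    data.append([c * z + rng.gauss(0, 0.2) for c in (1.0, 2.0, -1.0, 0.5)])

results = {}
for rate in (0.10, 0.20, 0.30):
    masked, hidden = mask_cells(data, rate, rng)
    means = column_means(masked)
    mean_filled = [[means[j] if v is None else v for j, v in enumerate(row)] for row in masked]
    results[rate] = (rmse(data, mean_filled, hidden), rmse(data, knn_impute(masked), hidden))
    print(f"rate={rate:.0%} mean-RMSE={results[rate][0]:.3f} kNN-RMSE={results[rate][1]:.3f}")
```

Scoring only the hidden cells keeps the metric comparable across methods, and the cells here are hidden completely at random; other missingness mechanisms would need a different masking step.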

| Method | # Features | Accuracy (%) | Time (s) | F1 |
|---|---|---|---|---|
| RFE (SVM) | 15 | 92.5 | 60 | 0.91 |
| LASSO | 12 | 91.8 | 45 | 0.90 |
| Boruta | 18 | 93.0 | 75 | 0.92 |
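
For the LASSO row in the table above, the feature count is usually the number of coefficients the L1 penalty leaves non-zero. The sketch below shows that mechanism with a plain cyclic coordinate-descent solver on hypothetical regression data. It is an illustration under stated assumptions: the data, `alpha=0.1`, and the absence of an intercept (the toy target has mean close to zero) are choices made here, and the table's own task, model, and tuning are not given in the source.

```python
import random


def soft_threshold(x, t):
    """Shrink x toward zero by t; values within [-t, t] become exactly 0."""
    if x > t:
        return x - t
    if x < -t:
        return x + t
    return 0.0


def lasso_coordinate_descent(X, y, alpha, n_sweeps=100):
    """Minimise (1 / 2n) * ||y - Xw||^2 + alpha * ||w||_1 by cyclic coordinate descent."""
    n, p = len(X), len(X[0])
    w = [0.0] * p
    residual = list(y)  # equals y - Xw while w is all zeros
    col_sq = [sum(row[j] ** 2 for row in X) / n for j in range(p)]
    for _ in range(n_sweeps):
        for j in range(p):
            if col_sq[j] == 0.0:
                continue
            # Covariance of feature j with the residual that excludes feature j.
            rho = sum(X[i][j] * (residual[i] + w[j] * X[i][j]) for i in range(n)) / n
            new_wj = soft_threshold(rho, alpha) / col_sq[j]
            delta = new_wj - w[j]
            if delta != 0.0:
                # Keep the residual in sync with the updated coefficient.
                for i in range(n):
                    residual[i] -= delta * X[i][j]
                w[j] = new_wj
    return w


# Hypothetical data: only features 0 and 1 influence the target.
rng = random.Random(0)
n_samples, n_features = 200, 6
X = [[rng.gauss(0, 1) for _ in range(n_features)] for _ in range(n_samples)]
y = [3.0 * row[0] - 2.0 * row[1] + rng.gauss(0, 0.1) for row in X]

weights = lasso_coordinate_descent(X, y, alpha=0.1)
selected = [j for j, wj in enumerate(weights) if wj != 0.0]
print("selected features:", selected)
```

Larger `alpha` values zero out more coefficients, which is the lever that trades feature count against accuracy. RFE and Boruta reach their subsets by different means (iterative elimination and shadow-feature comparison, respectively) and are not covered by this sketch.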

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).