Submitted: 04 June 2025

Posted: 06 June 2025


Abstract

Keywords:

1. Introduction

2. Related Work

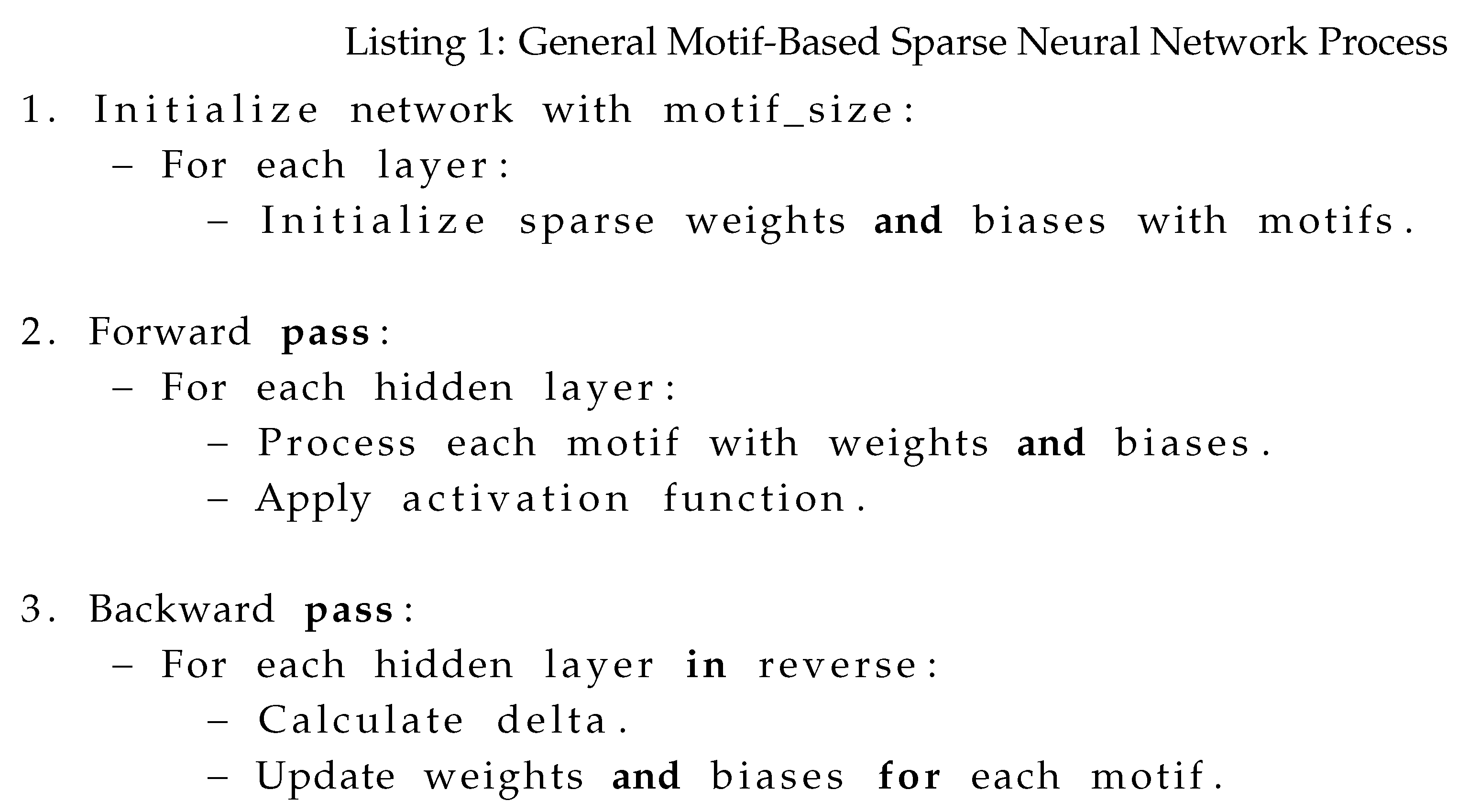

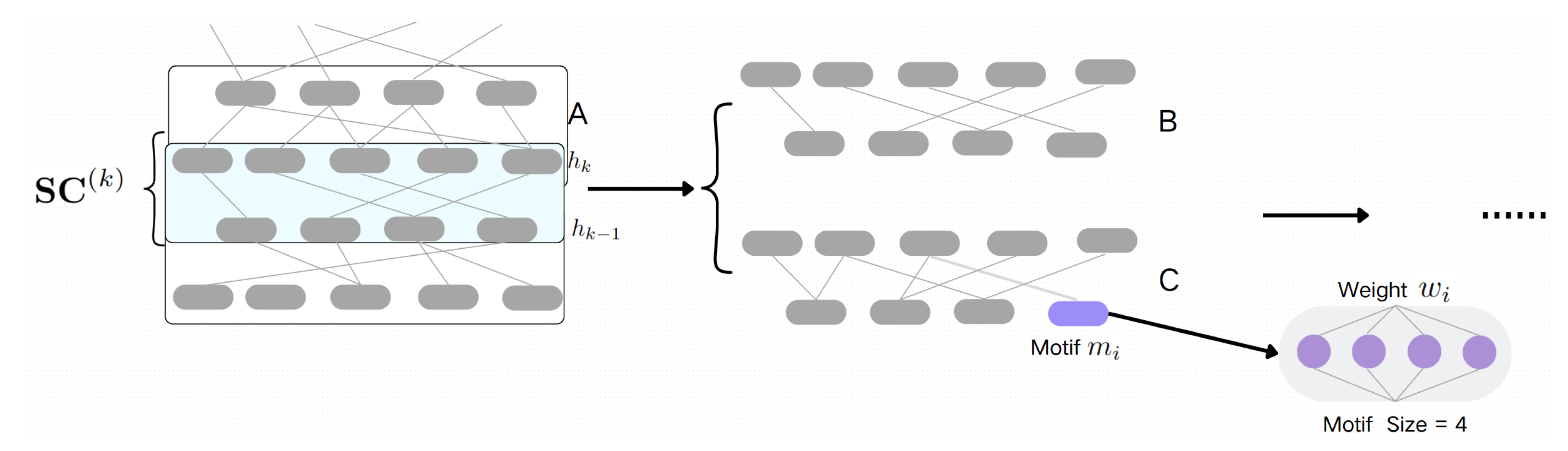

3. Methodology

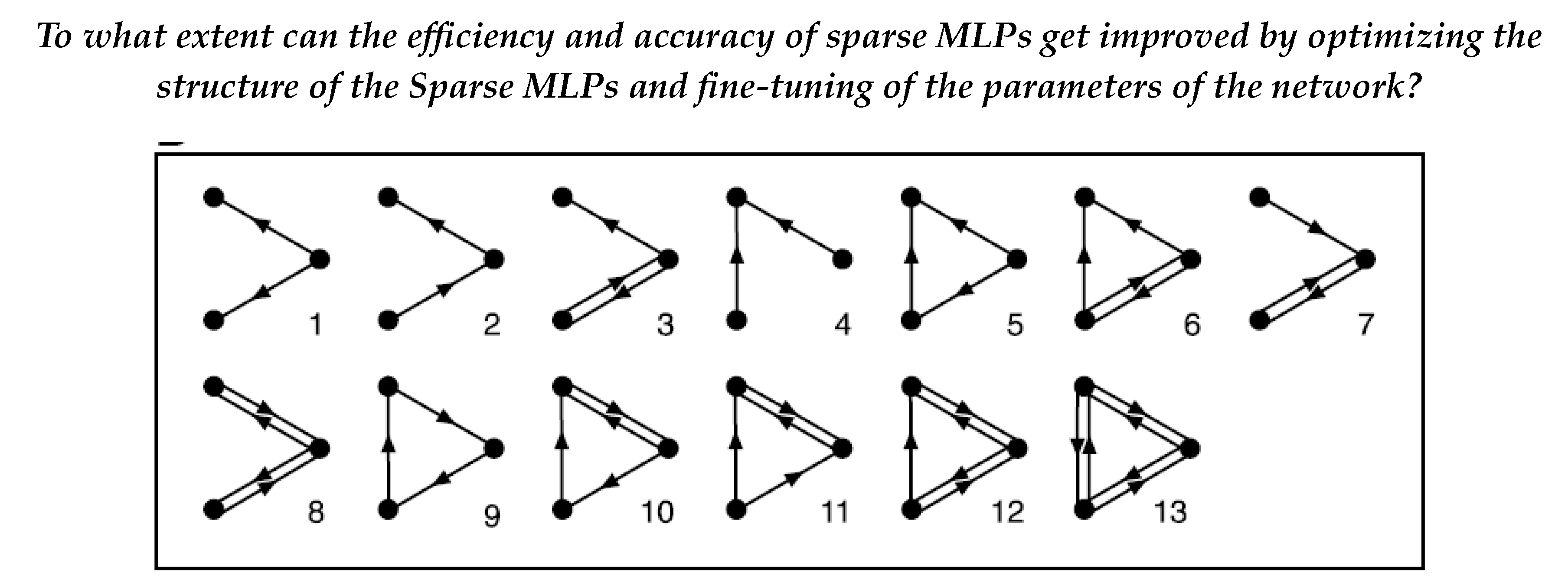

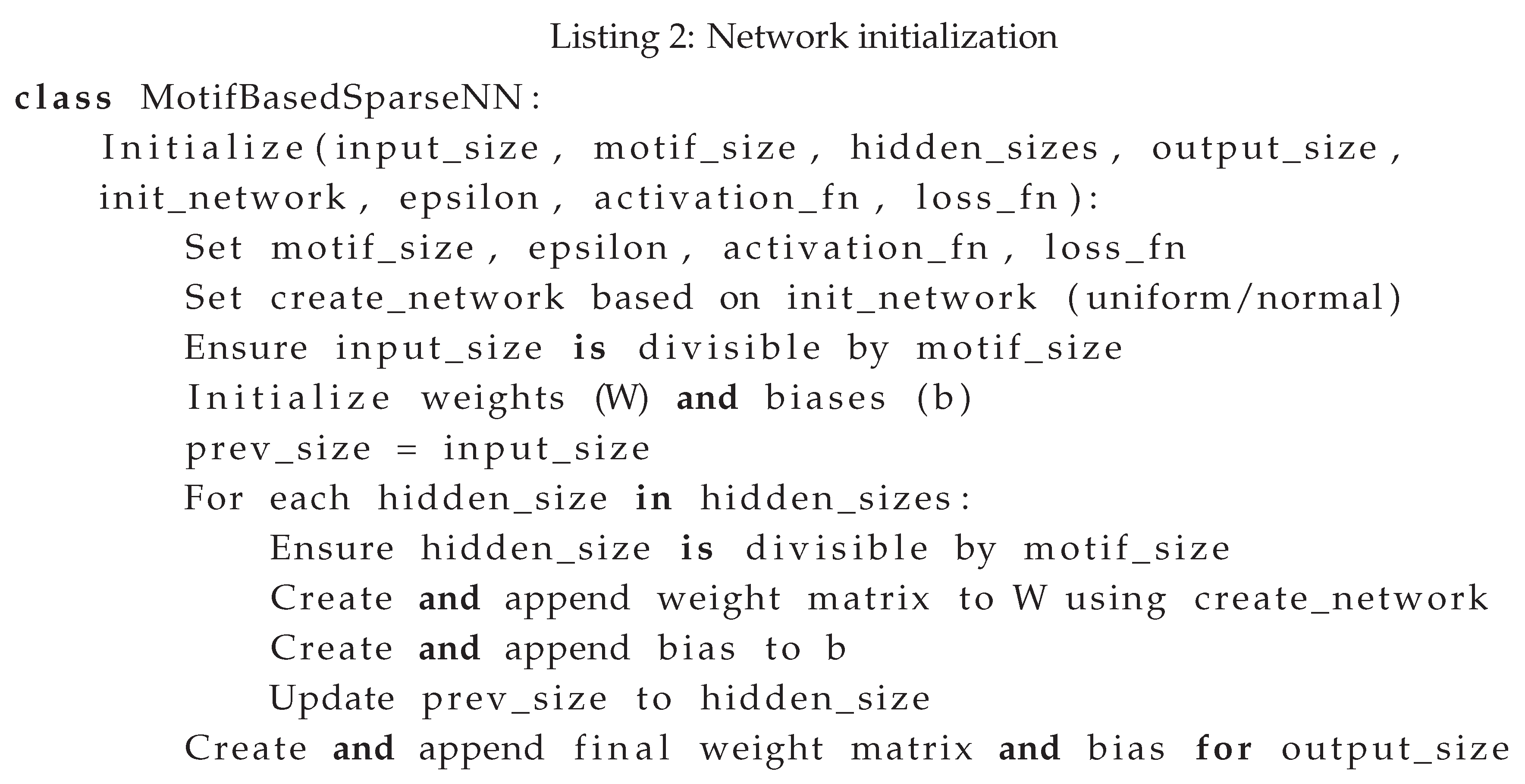

3.1. Network Construction

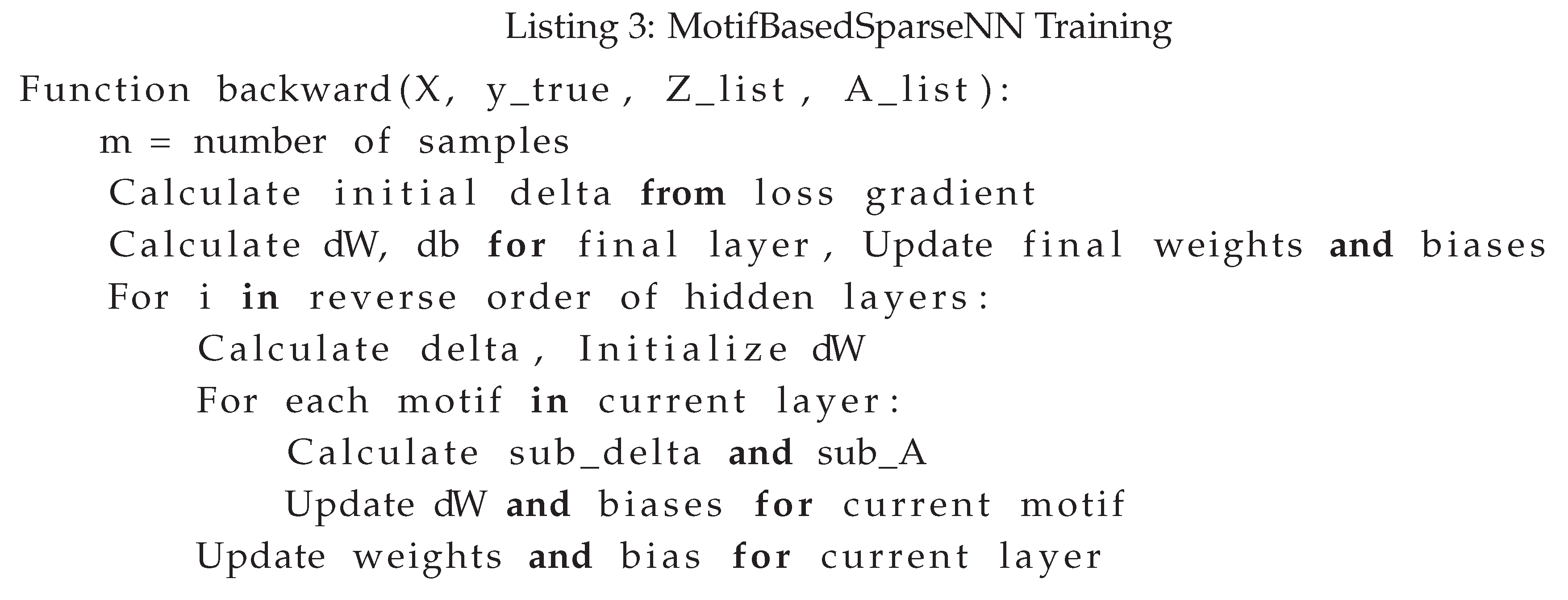

3.2. Training Process

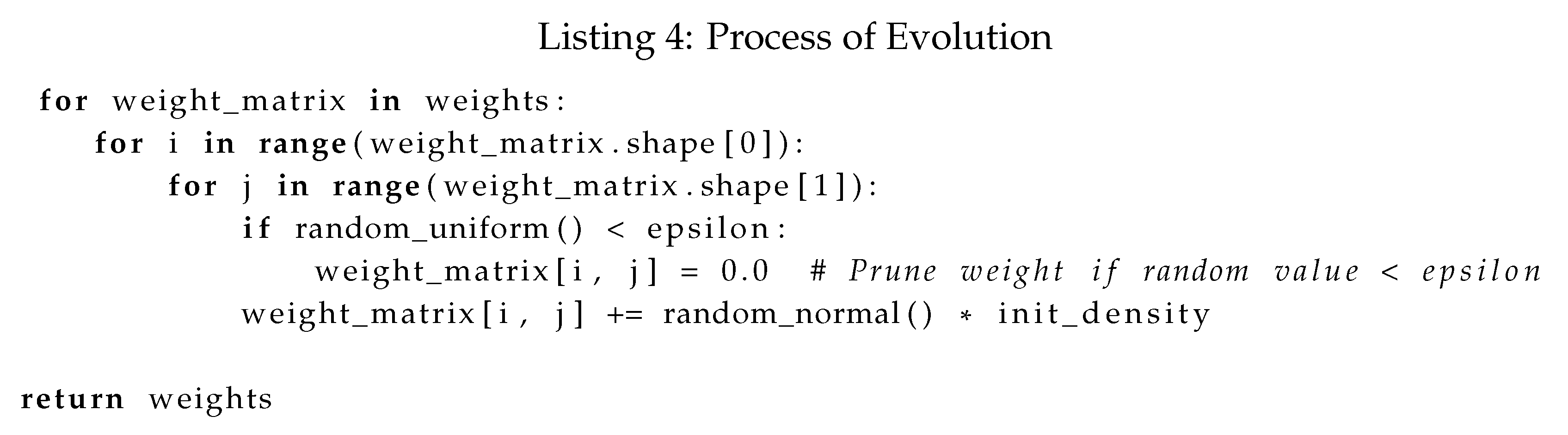

3.3. Process of Evolution

4. Experiment

4.1. Data Preparation

4.2. Design of the Experiment

- S is the comprehensive score.

- is the percentage of running time reduction.

- is the percentage of accuracy reduction.

- is the running time observed for the benchmark model.

- T is the running time for the specific motif-size model.

- is the benchmark model accuracy.

- A is the accuracy for the specific motif-size model.
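The two reductions that feed into S can be written as relative changes with respect to the benchmark model. This is a sketch under the assumption that both percentages are measured relative to the benchmark; the symbols $T_0$, $A_0$, $\Delta T$ and $\Delta A$ are notation assumed here for illustration, and the weighting that combines the two terms into S is not assumed.

```latex
\Delta T = \frac{T_0 - T}{T_0} \times 100\%, \qquad
\Delta A = \frac{A_0 - A}{A_0} \times 100\%
```

Here $T_0$ and $A_0$ stand for the benchmark running time and accuracy, and $T$ and $A$ for the motif-size model being evaluated, so a positive $\Delta T$ means a faster model and a positive $\Delta A$ means a loss of accuracy.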

5. Results

5.1. Experiment Results

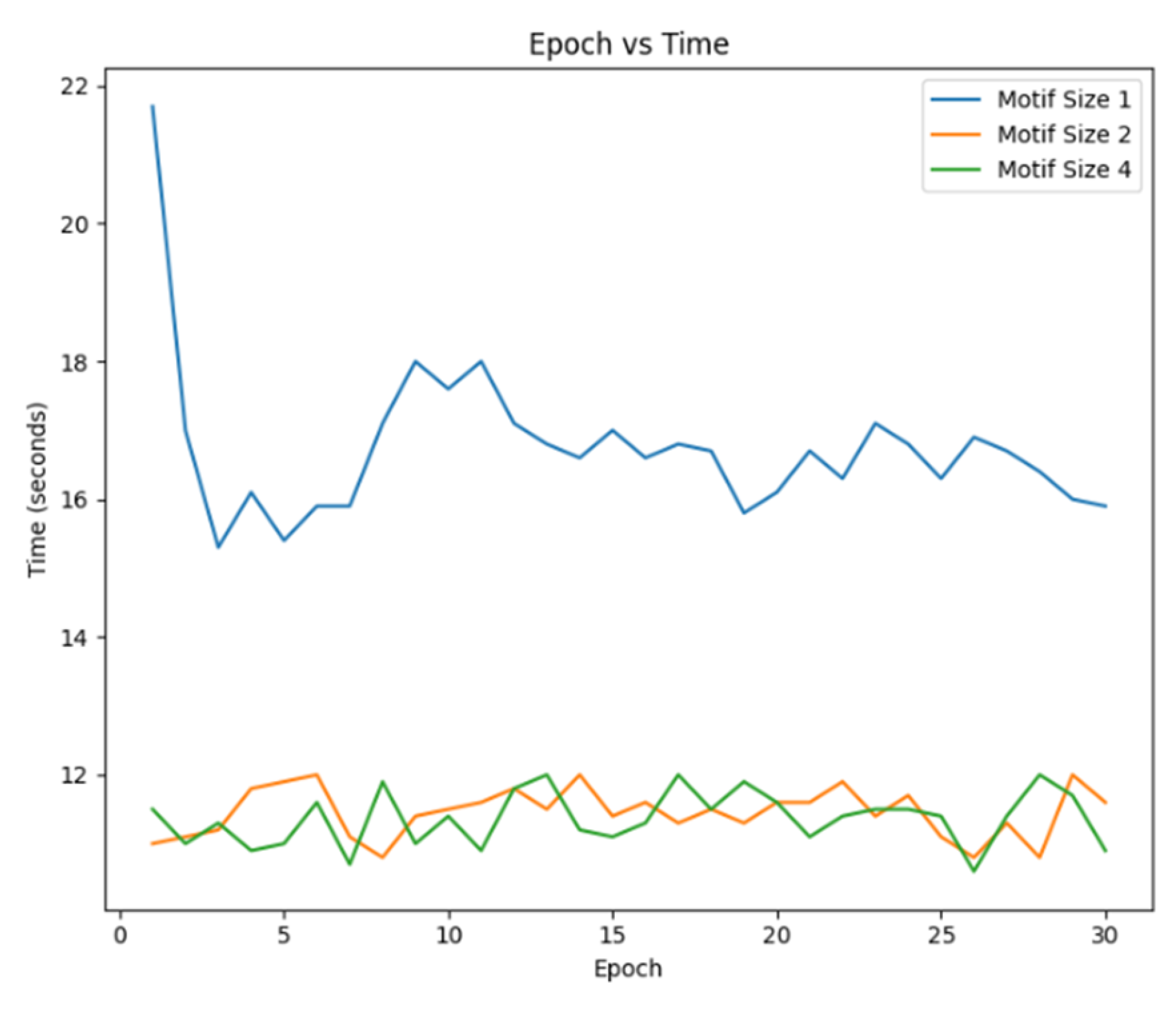

5.1.1. Results of FMNIST

| Motif Size | Running Time (s) | Accuracy | Average Running Time (s) |
|---|---|---|---|
| 1 (SET) | 25236.2 | 0.7610 | 17.73 |
| 2 | 14307.5 | 0.7330 | 9.14 |
| 4 | 9209.3 | 0.6920 | 6.74 |
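As a quick check of the speed/accuracy trade-off on FMNIST, the percentage reductions can be computed directly from the table. This is a minimal sketch assuming both reductions are measured relative to the SET benchmark (motif size 1); `relative_reduction` and `reductions` are hypothetical helper names, and the comprehensive score S is deliberately not computed because its weighting is not assumed here.

```python
"""Percentage running-time and accuracy reductions on FMNIST (sketch).

Assumption: both reductions are relative to the benchmark model
(motif size 1, SET). The comprehensive score S is not computed.
"""

# Values copied from the FMNIST results table.
BENCHMARK = {"time_s": 25236.2, "accuracy": 0.7610}  # motif size 1 (SET)
MOTIF_RESULTS = {
    2: {"time_s": 14307.5, "accuracy": 0.7330},
    4: {"time_s": 9209.3, "accuracy": 0.6920},
}


def relative_reduction(baseline: float, value: float) -> float:
    """Return how far `value` falls below `baseline`, as a percentage of `baseline`."""
    return (baseline - value) / baseline * 100.0


def reductions(benchmark: dict, results: dict) -> dict:
    """Map each motif size to (running-time reduction %, accuracy reduction %), rounded to 2 decimals."""
    out = {}
    for size, r in results.items():
        dt = relative_reduction(benchmark["time_s"], r["time_s"])
        da = relative_reduction(benchmark["accuracy"], r["accuracy"])
        out[size] = (round(dt, 2), round(da, 2))
    return out


if __name__ == "__main__":
    for size, (dt, da) in reductions(BENCHMARK, MOTIF_RESULTS).items():
        print(f"motif size {size}: time -{dt}%, accuracy -{da}%")
```

Under this assumption, motif size 2 cuts running time by about 43.31% at an accuracy cost of about 3.68%, while motif size 4 cuts it by about 63.51% at a cost of about 9.07%.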

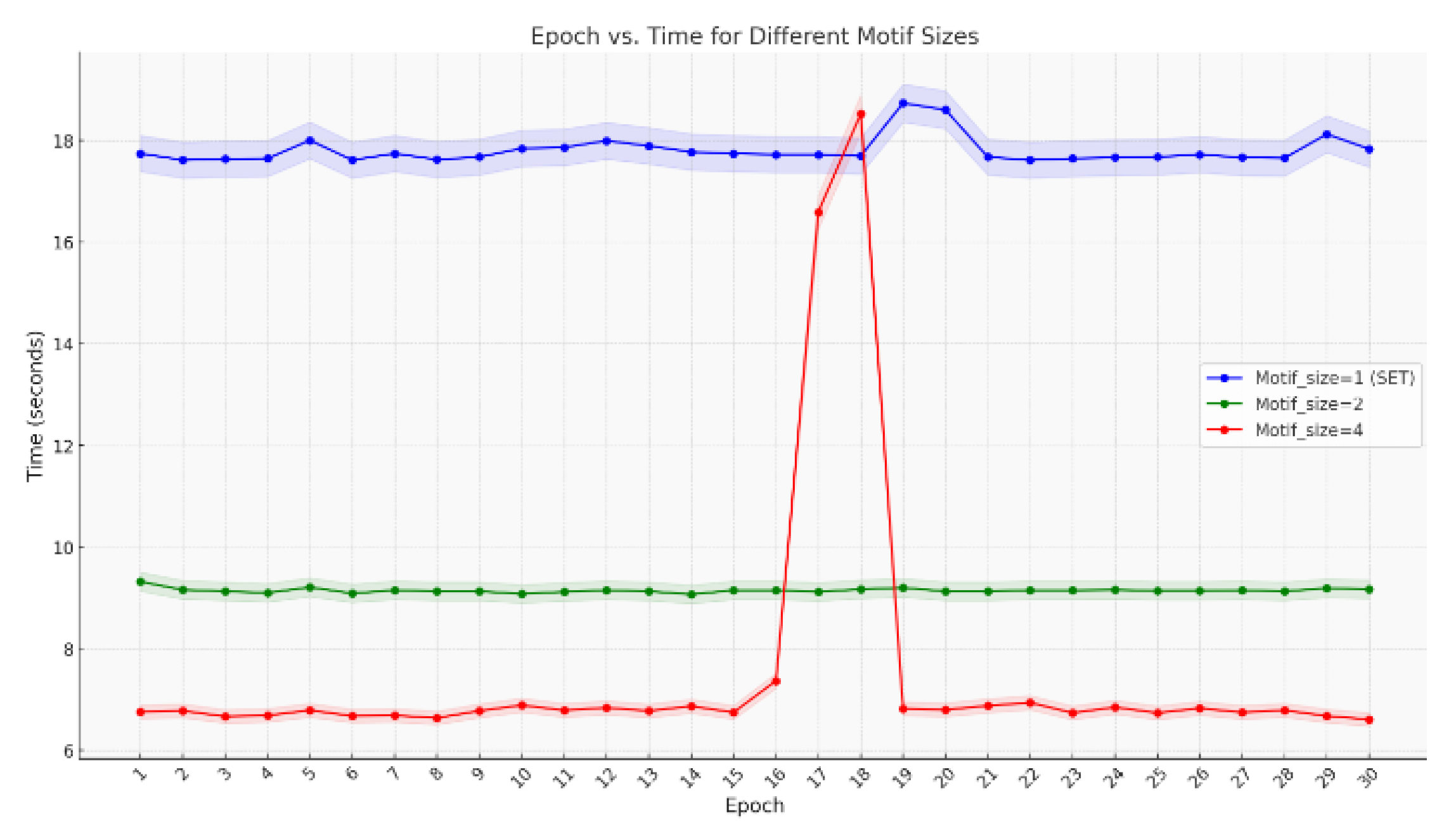

5.1.2. Results of Lung

| Name | Features | Type | Samples | Classes |
|---|---|---|---|---|
| FMNIST | 784 | Image | 70000 | 10 |
| Lung | 3312 | Microarray | 203 | 5 |

5.2. Result Analysis

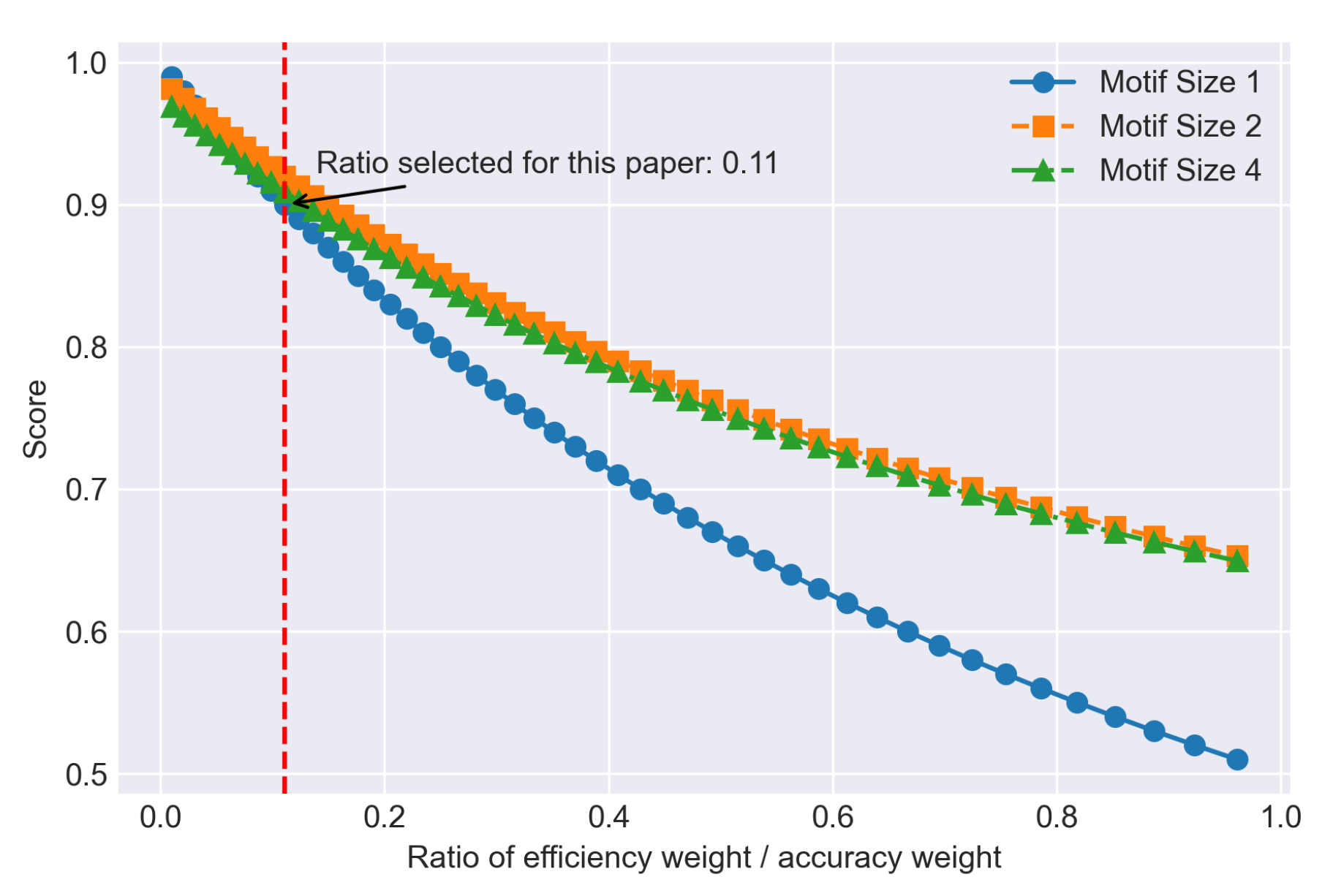

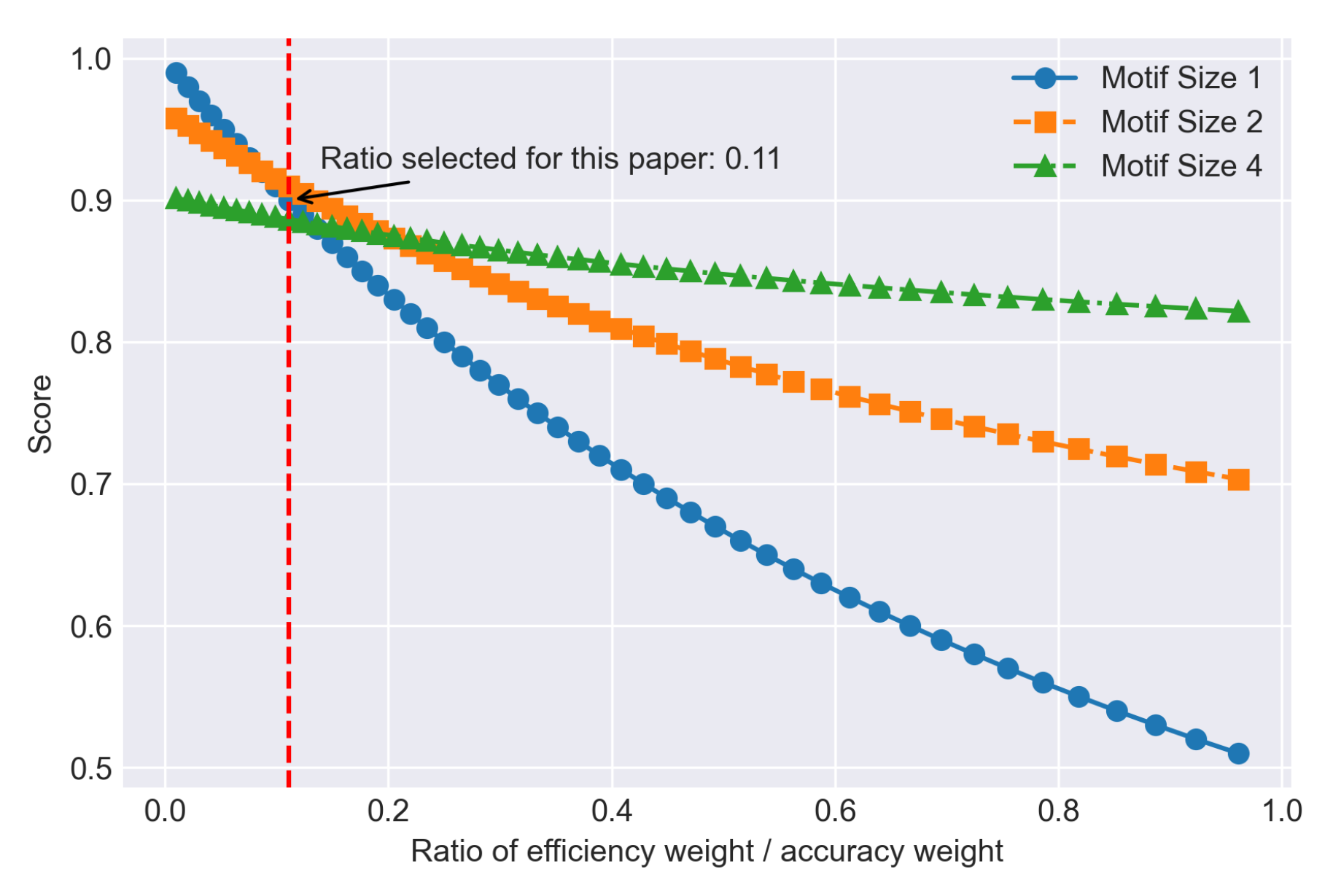

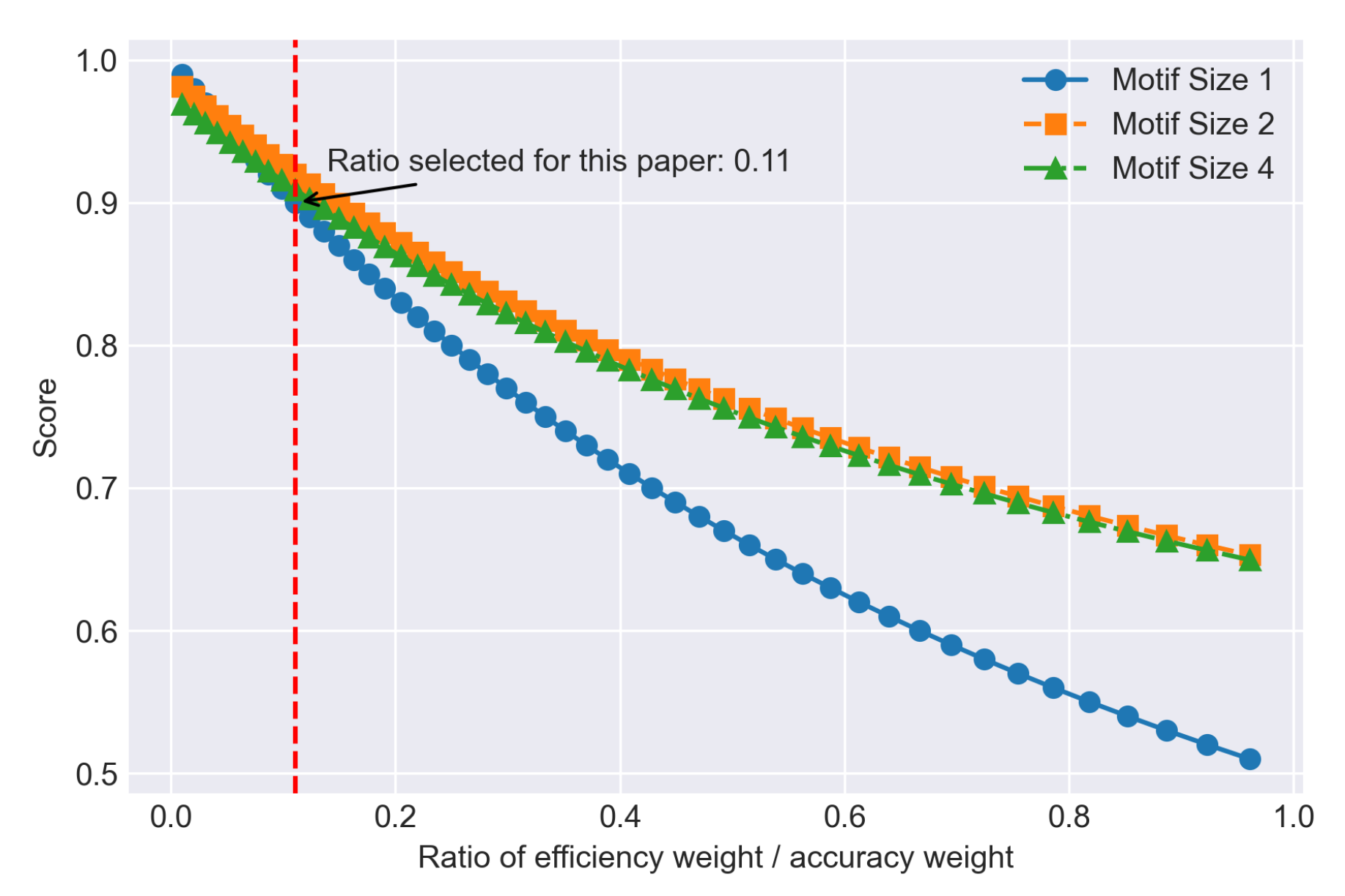

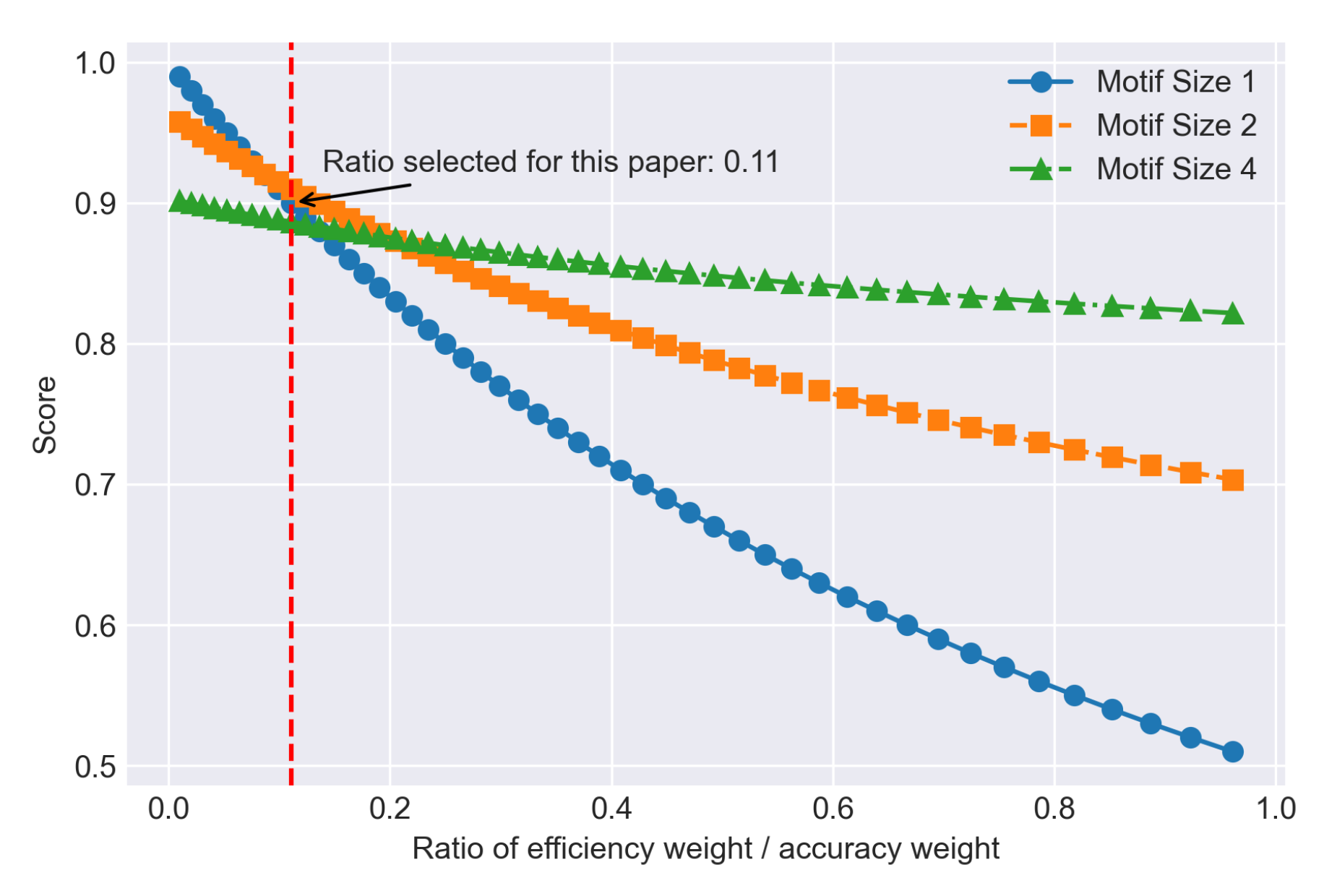

5.3. Trade-off Relationship

5.4. Application Scenarios

- Mobile Devices: On mobile devices, computational resources and battery life are severely constrained. Because motif-based models are efficient, they can deliver high-quality predictions without consuming significant device resources, which makes them suitable for mobile applications and embedded systems [29].

- Autonomous Driving: Autonomous vehicles must process large volumes of sensor data within very short time limits to make driving decisions. Motif-based models can substantially reduce computation time with only a moderate loss of accuracy.

- Financial Trading: In high-frequency trading and financial market prediction, where latency is critical, motif-based models can deliver fast market predictions.

- Smart Home: Smart home devices must respond quickly to user commands and environmental changes, and motif-based models can process sensor data and user commands efficiently.

6. Discussion

6.1. Result Analysis

6.2. Trade-off Relationship

6.3. Application Scenarios


7. Conclusions

8. Reflection and Future Work

References

- Ardakani, A.; Condo, C.; Gross, W.J. Sparsely-Connected Neural Networks: Towards Efficient VLSI Implementation of Deep Neural Networks. arXiv:1611.01427 [cs].

- Bellec, G.; Kappel, D.; Maass, W.; Legenstein, R. Deep Rewiring: Training very sparse deep networks. arXiv:1711.05136 [cs, stat].

- Mocanu, D.C.; Mocanu, E.; Stone, P.; Nguyen, P.H.; Gibescu, M.; Liotta, A. Scalable training of artificial neural networks with adaptive sparse connectivity inspired by network science. Nature Communications 2018, 9, 2383.

- Zhang, S.; Yin, B.; Zhang, W.; Cheng, Y. Topology Aware Deep Learning for Wireless Network Optimization. IEEE Transactions on Wireless Communications 2022, 21, 9791–9805.

- Altman, N.S. An Introduction to Kernel and Nearest-Neighbor Nonparametric Regression. The American Statistician 1992, 46, 175–185.

- Liu, S.; Van der Lee, T.; Yaman, A.; Atashgahi, Z.; Ferraro, D.; Sokar, G.; Pechenizkiy, M.; Mocanu, D.C. Topological Insights into Sparse Neural Networks. arXiv:2006.14085 [cs, stat].

- Milo, R.; Shen-Orr, S.; Itzkovitz, S.; Kashtan, N.; Chklovskii, D.; Alon, U. Network Motifs: Simple Building Blocks of Complex Networks. Science 2002, 298, 824–827.

- LeCun, Y.; Denker, J.; Solla, S. Optimal Brain Damage. In Proceedings of the Advances in Neural Information Processing Systems; Morgan-Kaufmann, 1989; Vol. 2.

- Hassibi, B.; Stork, D.; Wolff, G. Optimal Brain Surgeon and general network pruning. In Proceedings of the IEEE International Conference on Neural Networks, 1993; Vol. 1, pp. 293–299.

- Han, S.; Mao, H.; Dally, W.J. Deep Compression: Compressing Deep Neural Networks with Pruning, Trained Quantization and Huffman Coding, 2016. arXiv:1510.00149 [cs].

- Mostafa, H.; Wang, X. Parameter efficient training of deep convolutional neural networks by dynamic sparse reparameterization.

- Yin, Z.; Shen, Y. On the Dimensionality of Word Embedding, 2018. arXiv:1812.04224 [cs, stat].

- Bin, Z.; Zhi-chun, G.; Wen, C.; Qiang-qiang, H.; Jian-feng, H. Topology Optimization of Complex Network based on NSGA-II. In Proceedings of the 2019 IEEE 4th Advanced Information Technology, Electronic and Automation Control Conference (IAEAC), Chengdu, China, 2019; pp. 1680–1685.

- Hayase, T.; Karakida, R. MLP-Mixer as a Wide and Sparse MLP.

- Wang, M.; Cui, Y.; Xiao, S.; Wang, X.; Yang, D.; Chen, K.; Zhu, J. Neural Network Meets DCN: Traffic-driven Topology Adaptation with Deep Learning. Proceedings of the ACM on Measurement and Analysis of Computing Systems 2018, 2, 1–25.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, L.; Polosukhin, I. Attention Is All You Need, 2023. arXiv:1706.03762 [cs].

- Barabási, A.L.; Albert, R. Emergence of Scaling in Random Networks. Science 1999, 286, 509–512.

- Changpinyo, S.; Sandler, M.; Zhmoginov, A. The Power of Sparsity in Convolutional Neural Networks, 2017. arXiv:1702.06257 [cs].

- Bullmore, E.; Sporns, O. Complex brain networks: graph theoretical analysis of structural and functional systems. Nature Reviews Neuroscience 2009, 10, 186–198.

- Xiao, H.; Rasul, K.; Vollgraf, R. Fashion-MNIST: a Novel Image Dataset for Benchmarking Machine Learning Algorithms, 2017. arXiv:1708.07747 [cs, stat].

- Sun, Y.; Huang, X.; Kroening, D.; Sharp, J.; Hill, M.; Ashmore, R. Testing Deep Neural Networks, 2019. arXiv:1803.04792 [cs].

- Wang, Z.; Choi, J.; Wang, K.; Jha, S. Rethinking Diversity in Deep Neural Network Testing, 2024. arXiv:2305.15698 [cs].

- Sophia, J.J.; Jacob, T.P. A Comprehensive Analysis of Exploring the Efficacy of Machine Learning Algorithms in Text, Image, and Speech Analysis. Journal of Electrical Systems 2024, 20, 910–921.

- Swink, M.; Talluri, S.; Pandejpong, T. Faster, better, cheaper: A study of NPD project efficiency and performance tradeoffs. Journal of Operations Management 2006, 24, 542–562.

- Tan, J.; Wang, L. Flexibility–efficiency tradeoff and performance implications among Chinese SOEs. Journal of Business Research 2010, 63, 356–362.

- Wu, F.; Kim, K.; Pan, J.; Han, K.J.; Weinberger, K.Q.; Artzi, Y. Performance-Efficiency Trade-Offs in Unsupervised Pre-Training for Speech Recognition. In Proceedings of the ICASSP 2022 - 2022 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2022; pp. 7667–7671.

- Atashgahi, Z.; Sokar, G.; van der Lee, T.; Mocanu, E.; Mocanu, D.C.; Veldhuis, R.; Pechenizkiy, M. Quick and Robust Feature Selection: the Strength of Energy-efficient Sparse Training for Autoencoders.

- The Theano Development Team; Al-Rfou, R.; Alain, G.; Almahairi, A.; Angermueller, C.; Bahdanau, D.; Ballas, N.; Bastien, F.; Bayer, J.; Belikov, A.; et al. Theano: A Python framework for fast computation of mathematical expressions, 2016. arXiv:1605.02688 [cs].

- Zhang, S.; Du, Z.; Zhang, L.; Lan, H.; Liu, S.; Li, L.; Guo, Q.; Chen, T.; Chen, Y. Cambricon-X: An accelerator for sparse neural networks. In Proceedings of the 2016 49th Annual IEEE/ACM International Symposium on Microarchitecture (MICRO), 2016; pp. 1–12.


Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).