Submitted: 24 May 2025

Posted: 27 May 2025


Abstract

Keywords:

1. Introduction

- We propose DS-LoRA, a novel parameter-efficient finetuning (PEFT) method that integrates dynamic gating and learned sparsity into the LoRA framework, designed specifically for nuanced NLP tasks.

- We demonstrate through extensive experiments with Llama-3 8B and Gemma 7B on OLID and HateXplain that DS-LoRA outperforms standard LoRA and other competitive baselines in detecting offensive language, especially in challenging cases involving subtlety and context dependency, while adding only a small number of trainable parameters over standard LoRA (∼4.5M vs. ∼4.2M for Llama-3 8B).

- We provide an analysis of the learned gate behaviors and sparsity patterns, offering insights into how DS-LoRA achieves its performance gains and contributing to a better understanding of adaptive finetuning mechanisms.

2. Related Work

3. Methodology

3.1. Preliminaries: LoRA
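For reference, here is a minimal statement of the standard LoRA reparameterization from Hu et al. [13] in its usual notation (the symbols are assumed, since this subsection's own equations are not reproduced). A frozen pretrained weight $W_0 \in \mathbb{R}^{d \times k}$ is augmented with a trainable low-rank update:

$$
h = W_0 x + \Delta W x = W_0 x + \frac{\alpha}{r} B A x, \qquad B \in \mathbb{R}^{d \times r},\quad A \in \mathbb{R}^{r \times k},\quad r \ll \min(d, k).
$$

Only $A$ and $B$ are updated, so each adapted matrix contributes $r(d + k)$ trainable parameters instead of $dk$. $B$ is initialized to zero, so $\Delta W = 0$ and the adapted model initially matches the pretrained one.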

3.2. DS-LoRA

3.2.1. Input-Dependent Gating Mechanism

3.2.2. LoRA Parameter Sparsification

3.2.3. DS-LoRA Layer Forward Pass
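The following NumPy sketch shows one way a gated LoRA forward pass could be written. It is not the authors' implementation. The gate form $g(x) = \sigma(w_g^\top x + b_g)$ is an assumption: a scalar per token, chosen so that activations stay in $(0, 1)$ like those in the gate-activation table. The class name `GatedLoRALinear`, its parameters `w_g` and `b_g`, and the default `r` and `alpha` values are also hypothetical. The L1 sparsification of the LoRA matrices acts through the training loss and is not shown here.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


class GatedLoRALinear:
    """Hypothetical DS-LoRA-style linear layer (illustrative sketch only).

    Output = W0 x + g(x) * (alpha / r) * B A x, where W0 is frozen and the gate
    g(x) = sigmoid(w_g . x + b_g) is an ASSUMED scalar-per-token form.
    """

    def __init__(self, d_in, d_out, r=8, alpha=16, seed=0):
        rng = np.random.default_rng(seed)
        # Stand-in for a frozen pretrained weight of shape (d_out, d_in).
        self.W0 = rng.standard_normal((d_out, d_in)) / np.sqrt(d_in)
        # Trainable LoRA factors: A is small random, B starts at zero (as in LoRA).
        self.A = rng.standard_normal((r, d_in)) * 0.01
        self.B = np.zeros((d_out, r))
        # Hypothetical gate parameters; zero init gives g(x) = 0.5 for every token.
        self.w_g = np.zeros(d_in)
        self.b_g = 0.0
        self.scale = alpha / r

    def gate(self, x):
        # x: (n_tokens, d_in) -> (n_tokens,) gate values in (0, 1)
        return sigmoid(x @ self.w_g + self.b_g)

    def forward(self, x):
        base = x @ self.W0.T                               # frozen path
        update = (x @ self.A.T) @ self.B.T * self.scale    # low-rank path
        g = self.gate(x)[:, None]                          # broadcast over d_out
        return base + g * update


layer = GatedLoRALinear(d_in=16, d_out=8, r=4)
x = np.random.default_rng(1).standard_normal((3, 16))
print(layer.forward(x).shape)  # (3, 8)
```

Because $B$ starts at zero, the layer reproduces the frozen projection exactly at initialization, whatever the gate value. The gate changes the output only once the LoRA update becomes non-zero, and a gate near 0 suppresses the update for that token.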

3.3. Model Architecture and Training

3.3.1. Base Model and DS-LoRA Application

3.3.2. Training Objective

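- $\mathcal{L}_{\text{CE}}$ is the standard cross-entropy loss for the offensive language classification task:

  $$\mathcal{L}_{\text{CE}} = -\frac{1}{N}\sum_{j=1}^{N}\sum_{c=1}^{C} y_{j,c}\,\log \hat{y}_{j,c},$$

  where $N$ is the batch size, $C$ is the number of classes, $y_{j,c}$ is the true label (1 if sample $j$ belongs to class $c$, 0 otherwise), and $\hat{y}_{j,c}$ is the model’s predicted probability for sample $j$ belonging to class $c$.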

- $\mathcal{L}_{\text{L1}}$ is the L1 sparsity regularization term defined in Equation 5.
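The sketch below assumes that the total objective is the cross-entropy term plus a sparsity coefficient $\lambda$ times an L1 penalty on all LoRA parameters. Equation 5 is not reproduced in this section, so the exact form of the L1 term is an assumption. With one-hot labels $y_{j,c}$, the inner sum of the cross-entropy reduces to the negative log-probability of each sample's true class, which is what `cross_entropy` computes. The function names and the default `lam` value are illustrative, not taken from the paper.

```python
import numpy as np


def cross_entropy(probs, labels):
    """Mean cross-entropy over a batch.

    probs:  (N, C) predicted class probabilities (rows sum to 1)
    labels: (N,)   integer class ids, equivalent to one-hot y_{j,c}
    """
    n = probs.shape[0]
    eps = 1e-12  # guards against log(0)
    # With one-hot targets, only the true-class term of the inner sum survives.
    return -np.mean(np.log(probs[np.arange(n), labels] + eps))


def l1_penalty(lora_matrices):
    """ASSUMED L1 term: sum of absolute values of every LoRA parameter."""
    return sum(np.abs(m).sum() for m in lora_matrices)


def total_loss(probs, labels, lora_matrices, lam=1e-4):
    """Hypothetical total objective: cross-entropy + lam * L1 (lam is illustrative)."""
    return cross_entropy(probs, labels) + lam * l1_penalty(lora_matrices)


probs = np.array([[0.8, 0.2], [0.4, 0.6]])
labels = np.array([0, 1])
mats = [np.array([[1.0, -2.0]]), np.array([[0.5], [-0.5]])]
print(total_loss(probs, labels, mats, lam=0.1))
```

A larger `lam` pushes more LoRA entries toward zero at the cost of a larger penalty on the task loss, which is the trade-off the sparsity-coefficient analysis examines.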

3.3.3. DS-LoRA Finetuning Algorithm

Algorithm 1. DS-LoRA Finetuning Algorithm.

4. Experimental Setup

4.1. Base Models

- Llama-3 8B Instruct: A decoder-only transformer model from Meta AI with 8 billion parameters. We specifically use the instruction-tuned variant, which has been aligned for better instruction following and safety, providing a strong foundation for downstream task adaptation.

- Gemma 7B Instruct: A decoder-only transformer model from Google with 7 billion parameters, also an instruction-tuned variant. Gemma models are built using architectures and techniques similar to those of Google’s Gemini models.

4.2. Datasets

- OLID [30]: This dataset, from SemEval-2019 Task 6, contains English tweets annotated for three hierarchical levels. We focus on Sub-task A: Offensive language identification (OFF vs. NOT). This task requires identifying whether a tweet contains any form of offensive language, including insults, threats, and profanity. We use the official training, development, and test splits.

- HateXplain [23]: This dataset provides fine-grained annotations for English posts from Twitter and Gab, distinguishing between hate speech, offensive language, and normal language. Crucially, it also includes human-annotated rationales (token-level explanations) for each classification; although we do not use them to train our classification model, they underscore the dataset’s focus on nuanced and explainable offensiveness. We use the three-class classification task (hate, offensive, normal) and also report a binary offensive vs. normal version for comparison.

4.3. Evaluation Metrics

- Accuracy: The proportion of correctly classified instances.

- Precision: The proportion of true positive predictions among all positive predictions.

- Recall: The proportion of true positive predictions among all actual positive instances.

- F1-Score (Macro and Weighted): The harmonic mean of precision and recall. We report both Macro-F1 (the unweighted average of per-class F1 scores, which is crucial for imbalanced datasets) and Weighted-F1 (the average of per-class F1 scores weighted by class support). For binary tasks, we focus specifically on the F1-score of the “offensive” or “hate speech” class when it is the minority and primary target class.
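The metric definitions above can be sketched in plain Python. The same code applies to the binary OLID labels (OFF vs. NOT) and to the three HateXplain classes. This is an illustrative reimplementation, not the authors' evaluation code. Returning 0.0 when a denominator is zero is an assumed convention, and the function names are hypothetical.

```python
from collections import Counter


def per_class_prf(y_true, y_pred, cls):
    """Precision, recall and F1 for one class treated as 'positive'."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == cls and p == cls)
    fp = sum(1 for t, p in pairs if t != cls and p == cls)
    fn = sum(1 for t, p in pairs if t == cls and p != cls)
    precision = tp / (tp + fp) if tp + fp else 0.0  # assumed zero-division rule
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def evaluate(y_true, y_pred):
    classes = sorted(set(y_true) | set(y_pred))
    support = Counter(y_true)  # number of true instances per class
    f1s = {c: per_class_prf(y_true, y_pred, c)[2] for c in classes}
    accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
    macro_f1 = sum(f1s.values()) / len(classes)  # every class counts equally
    weighted_f1 = sum(f1s[c] * support[c] for c in classes) / len(y_true)
    return {"accuracy": accuracy, "macro_f1": macro_f1,
            "weighted_f1": weighted_f1, "per_class_f1": f1s}


y_true = ["OFF", "NOT", "NOT", "OFF", "NOT"]
y_pred = ["OFF", "NOT", "OFF", "NOT", "NOT"]
print(evaluate(y_true, y_pred))
```

For a binary task, the minority-class F1 described above is `per_class_prf(y_true, y_pred, "OFF")[2]`. Macro-F1 weights the small offensive class as heavily as the large normal class, whereas Weighted-F1 lets the majority class dominate.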

4.4. Baselines

- Zero-Shot LLM: The base Llama-3 8B and Gemma 7B models without any finetuning, using carefully crafted prompts to perform offensive language classification in a zero-shot manner.

- Full Finetuning: Finetuning of the base LLMs’ own weights. Due to computational constraints, we update only the top two layers of each model (∼700M trainable parameters for Llama-3 8B and ∼650M for Gemma 7B), which serves as an indicative reference point rather than finetuning of the full model.

- LoRA [13]: The original LoRA implementation, applied to the same target modules as DS-LoRA, with various ranks r for comparison.

- Adapters [31]: Finetuning using Houlsby adapters inserted into the Transformer layers.

- QLoRA [28]: For comparisons of parameter efficiency under quantization, QLoRA provides a strong baseline for memory-efficient finetuning.
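The trainable-parameter counts of the PEFT baselines follow from simple arithmetic over the adapted weight matrices. The sketch below counts LoRA parameters as $r(d_{in} + d_{out})$ per adapted matrix. As a labeled assumption, it also adds $d_{in} + 1$ per matrix for a hypothetical scalar input gate. The function names, module shapes, and rank in the example are illustrative and are not claimed to reproduce the configuration behind the results tables.

```python
def lora_params(d_in, d_out, r):
    # A has shape (r, d_in) and B has shape (d_out, r).
    return r * (d_in + d_out)


def gate_params(d_in):
    # HYPOTHETICAL scalar gate: one weight per input feature plus a bias.
    return d_in + 1


def count_trainable(modules, r, n_layers, gated=False):
    """modules: list of (d_in, d_out) shapes adapted in every layer."""
    per_layer = 0
    for d_in, d_out in modules:
        per_layer += lora_params(d_in, d_out, r)
        if gated:
            per_layer += gate_params(d_in)
    return per_layer * n_layers


shapes = [(4096, 4096)] * 2  # two hypothetical square projections per layer
print(count_trainable(shapes, r=8, n_layers=32))
print(count_trainable(shapes, r=8, n_layers=32, gated=True))
```

With these hypothetical settings, LoRA alone gives 2 × 8 × 8192 × 32 = 4,194,304 parameters. The assumed gates add 2 × 4097 × 32 = 262,208 more, a small overhead relative to the low-rank matrices.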

4.5. Implementation Details

5. Results and Analysis

5.1. Main Results

5.2. Ablation Studies

5.3. Analysis of DS-LoRA Components

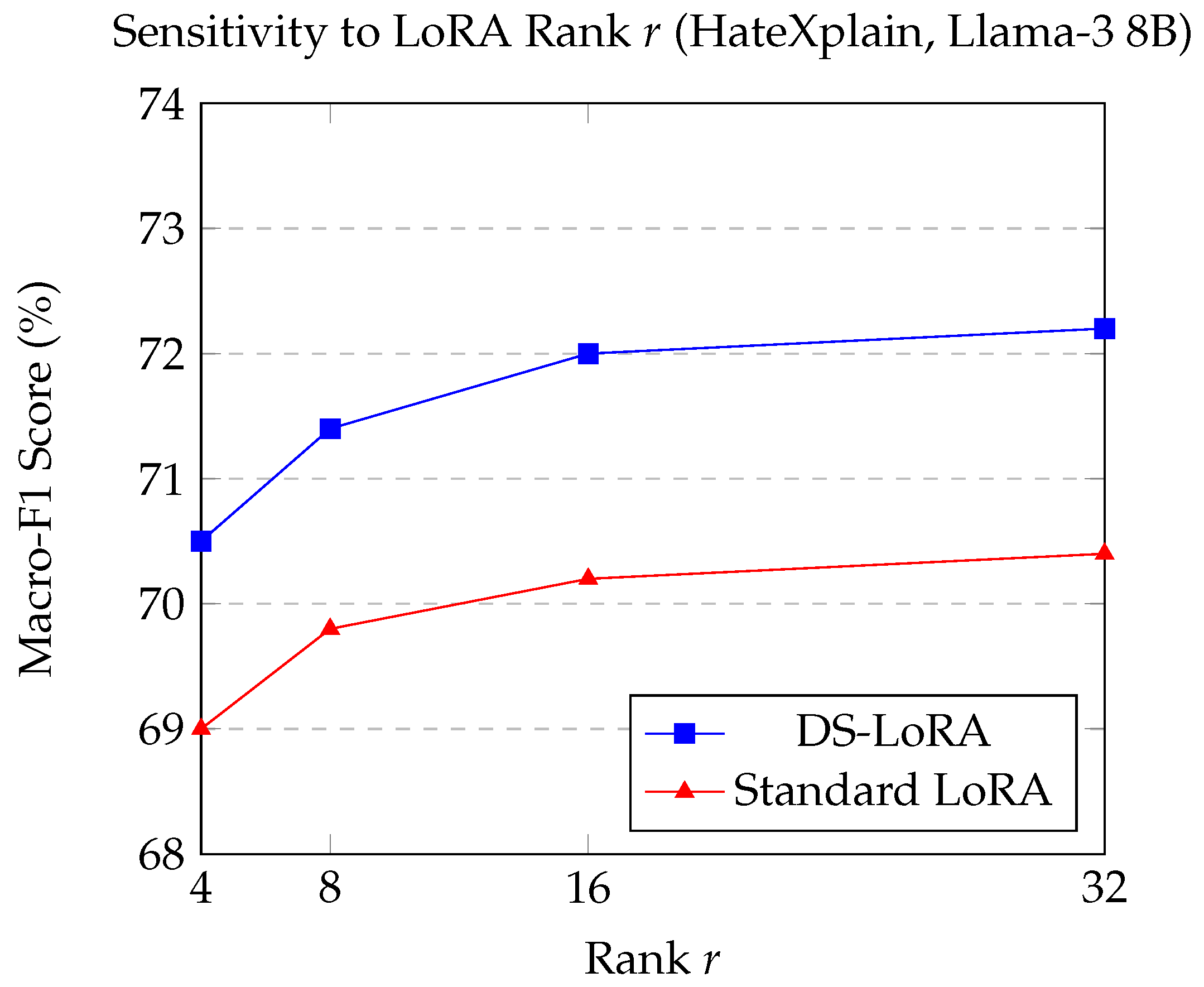

5.3.1. Sensitivity to LoRA Rank r

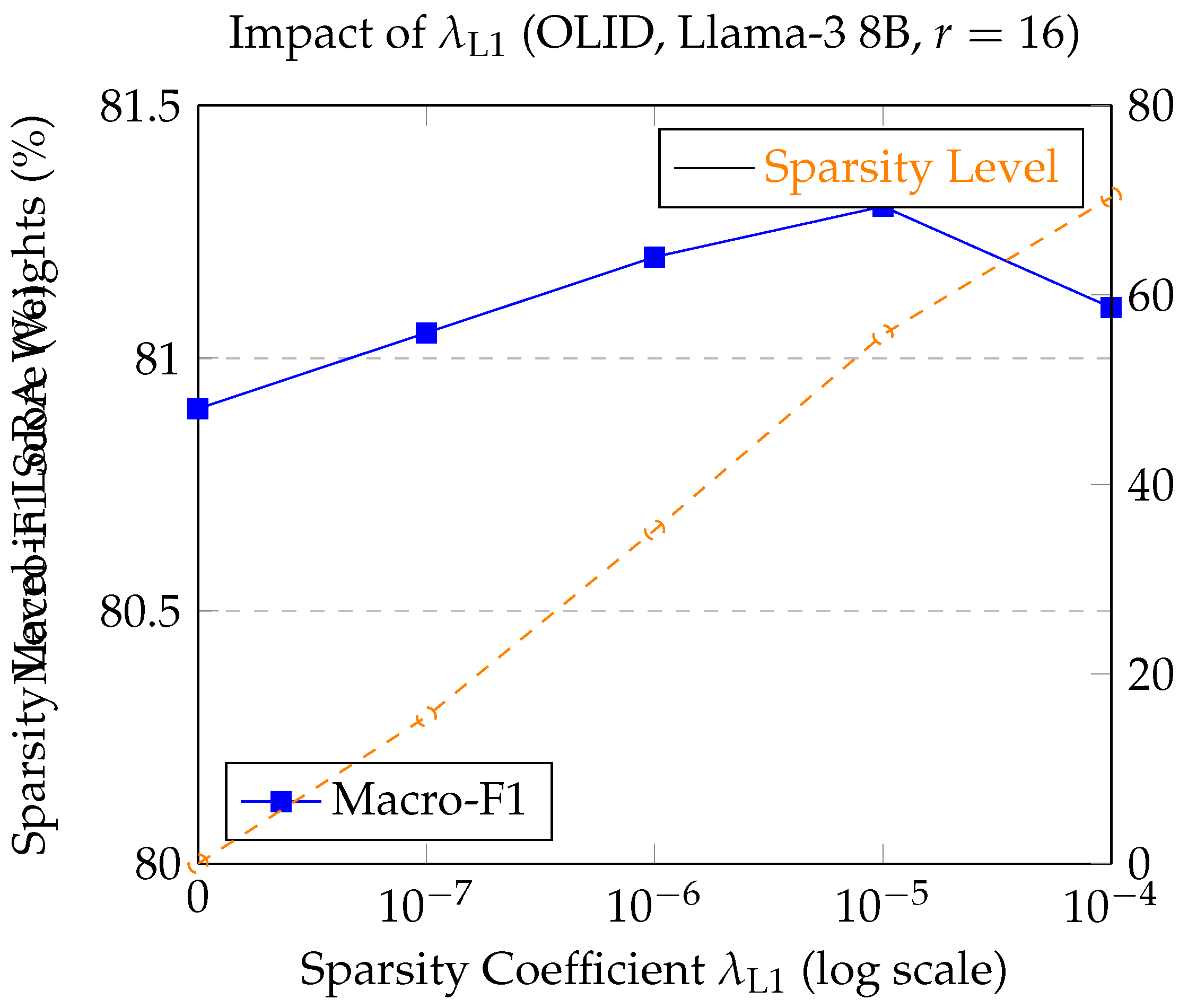

5.3.2. Impact of Sparsity Coefficient

5.3.3. Qualitative Analysis of Gate Activations

6. Conclusion

References

- Fortuna, P.; Nunes, S. A survey on automatic detection of hate speech in text. ACM Computing Surveys (CSUR) 2018, 51, 1–30.

- Mutanga, R.T.; Naicker, N.; Olugbara, O.O. Detecting hate speech on Twitter network using ensemble machine learning. International Journal of Advanced Computer Science and Applications 2022, 13.

- Davidson, T.; Warmsley, D.; Macy, M.; Weber, I. Automated hate speech detection and the problem of offensive language. In Proceedings of the International AAAI Conference on Web and Social Media, 2017, Vol. 11, pp. 512–515.

- Van Bruwaene, D.; Huang, Q.; Inkpen, D. A multi-platform dataset for detecting cyberbullying in social media. Language Resources and Evaluation 2020, 54, 851–874.

- Shi, X.; Liu, X.; Xu, C.; Huang, Y.; Chen, F.; Zhu, S. Cross-lingual offensive speech identification with transfer learning for low-resource languages. Computers and Electrical Engineering 2022, 101, 108005.

- Zhu, S.; Xu, S.; Sun, H.; Pan, L.; Cui, M.; Du, J.; Jin, R.; Branco, A.; Xiong, D.; et al. Multilingual Large Language Models: A Systematic Survey. arXiv preprint arXiv:2411.11072 2024.

- Achiam, J.; Adler, S.; Agarwal, S.; Ahmad, L.; Akkaya, I.; Aleman, F.L.; Almeida, D.; Altenschmidt, J.; Altman, S.; Anadkat, S.; et al. GPT-4 technical report. arXiv preprint arXiv:2303.08774 2023.

- Touvron, H.; Lavril, T.; Izacard, G.; Martinet, X.; Lachaux, M.A.; Lacroix, T.; Rozière, B.; Goyal, N.; Hambro, E.; Azhar, F.; et al. LLaMA: Open and efficient foundation language models. arXiv preprint arXiv:2302.13971 2023.

- Chowdhery, A.; Narang, S.; Devlin, J.; Bosma, M.; Mishra, G.; Roberts, A.; Barham, P.; Chung, H.W.; Sutton, C.; Gehrmann, S.; et al. PaLM: Scaling language modeling with pathways. Journal of Machine Learning Research 2023, 24, 1–113.

- He, J.; Zhou, C.; Ma, X.; Berg-Kirkpatrick, T.; Neubig, G. Towards a unified view of parameter-efficient transfer learning. arXiv preprint arXiv:2110.04366 2021.

- Lester, B.; Al-Rfou, R.; Constant, N. The Power of Scale for Parameter-Efficient Prompt Tuning. In Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing, 2021, pp. 3045–3059.

- Li, X.L.; Liang, P. Prefix-Tuning: Optimizing Continuous Prompts for Generation. In Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing (Volume 1: Long Papers), 2021, pp. 4582–4597.

- Hu, E.J.; Shen, Y.; Wallis, P.; Allen-Zhu, Z.; Li, Y.; Wang, S.; Wang, L.; Chen, W.; et al. LoRA: Low-rank adaptation of large language models. In Proceedings of the International Conference on Learning Representations (ICLR), 2022.

- Zhang, Q.; Chen, M.; Bukharin, A.; Karampatziakis, N.; He, P.; Cheng, Y.; Chen, W.; Zhao, T. AdaLoRA: Adaptive budget allocation for parameter-efficient fine-tuning. arXiv preprint arXiv:2303.10512 2023.

- Pradhan, R.; Chaturvedi, A.; Tripathi, A.; Sharma, D.K. A review on offensive language detection. In Advances in Data and Information Sciences: Proceedings of ICDIS 2019, 2020, pp. 433–439.

- Nobata, C.; Tetreault, J.; Thomas, A.; Mehdad, Y.; Chang, Y. Abusive language detection in online user content. In Proceedings of the 25th International Conference on World Wide Web, 2016, pp. 145–153.

- Gambäck, B.; Sikdar, U.K. Using convolutional neural networks to classify hate-speech. In Proceedings of the First Workshop on Abusive Language Online, 2017, pp. 85–90.

- Badjatiya, P.; Gupta, S.; Gupta, M.; Varma, V. Deep learning for hate speech detection in tweets. In Proceedings of the 26th International Conference on World Wide Web Companion, 2017, pp. 759–760.

- Pamungkas, E.W.; Patti, V. Cross-domain and cross-lingual abusive language detection: A hybrid approach with deep learning and a multilingual lexicon. In Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics: Student Research Workshop, 2019, pp. 363–370.

- Devlin, J.; Chang, M.W.; Lee, K.; Toutanova, K. BERT: Pre-training of deep bidirectional transformers for language understanding. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Long and Short Papers), 2019, pp. 4171–4186.

- Liu, Y.; Ott, M.; Goyal, N.; Du, J.; Joshi, M.; Chen, D.; Levy, O.; Lewis, M.; Zettlemoyer, L.; Stoyanov, V. RoBERTa: A robustly optimized BERT pretraining approach. arXiv preprint arXiv:1907.11692 2019.

- Caselli, T.; Basile, V.; Mitrović, J.; Granitzer, M. HateBERT: Retraining BERT for abusive language detection in English. arXiv preprint arXiv:2010.12472 2020.

- Mathew, B.; Saha, P.; Yimam, S.M.; Biemann, C.; Goyal, P.; Mukherjee, A. HateXplain: A benchmark dataset for explainable hate speech detection. In Proceedings of the AAAI Conference on Artificial Intelligence, 2021, Vol. 35, pp. 14867–14875.

- Zhu, S.; Pan, L.; Xiong, D. FEDS-ICL: Enhancing translation ability and efficiency of large language model by optimizing demonstration selection. Information Processing & Management 2024, 61, 103825.

- Zhang, D.; Feng, T.; Xue, L.; Wang, Y.; Dong, Y.; Tang, J. Parameter-Efficient Fine-Tuning for Foundation Models. arXiv preprint arXiv:2501.13787 2025.

- Xie, T.; Li, T.; Zhu, W.; Han, W.; Zhao, Y. PEDRO: Parameter-Efficient Fine-tuning with Prompt DEpenDent Representation MOdification. arXiv preprint arXiv:2409.17834 2024.

- Zhang, L.; Zhang, L.; Shi, S.; Chu, X.; Li, B. LoRA-FA: Memory-efficient low-rank adaptation for large language models fine-tuning. arXiv preprint arXiv:2308.03303 2023.

- Dettmers, T.; Pagnoni, A.; Holtzman, A.; Zettlemoyer, L. QLoRA: Efficient finetuning of quantized LLMs. Advances in Neural Information Processing Systems 2023, 36, 10088–10115.

- Wolf, T.; Debut, L.; Sanh, V.; Chaumond, J.; Delangue, C.; Moi, A.; Cistac, P.; Rault, T.; Louf, R.; Funtowicz, M.; et al. Transformers: State-of-the-art natural language processing. In Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing: System Demonstrations, 2020, pp. 38–45.

- Zampieri, M.; Malmasi, S.; Nakov, P.; Rosenthal, S.; Farra, N.; Kumar, R. Predicting the type and target of offensive posts in social media. arXiv preprint arXiv:1902.09666 2019.

- Houlsby, N.; Giurgiu, A.; Jastrzebski, S.; Morrone, B.; De Laroussilhe, Q.; Gesmundo, A.; Attariyan, M.; Gelly, S. Parameter-efficient transfer learning for NLP. In Proceedings of the International Conference on Machine Learning, PMLR, 2019, pp. 2790–2799.

| Base Model | Method | Trainable Params | Acc. | P(O) | R(O) | F1(O) | Macro-F1 |
|---|---|---|---|---|---|---|---|
| Llama-3 8B | Zero-Shot Prompting | 0 | 72.3 | 65.8 | 55.2 | 60.1 | 69.5 |
| | Full Finetuning | ∼700M | 80.5 | 76.2 | 73.5 | 74.8 | 79.2 |
| | Adapters | ∼5.8M | 79.8 | 75.1 | 71.9 | 73.5 | 78.1 |
| | Standard LoRA | ∼4.2M | 81.2 | 77.0 | 74.8 | 75.9 | 80.1 |
| | DS-LoRA | ∼4.5M | 82.5 | 78.3 | 77.2 | 77.7 | 81.3 |
| Gemma 7B | Zero-Shot Prompting | 0 | 71.5 | 64.5 | 53.8 | 58.7 | 68.8 |
| | Full Finetuning | ∼650M | 79.6 | 75.0 | 72.1 | 73.5 | 78.3 |
| | Adapters | ∼5.2M | 78.9 | 74.2 | 70.5 | 72.3 | 77.2 |
| | Standard LoRA | ∼3.9M | 80.4 | 76.1 | 73.5 | 74.8 | 79.2 |
| | DS-LoRA | ∼4.1M | 81.8 | 77.5 | 76.0 | 76.7 | 80.5 |

| Base Model | Method | Trainable Params | Acc. | P(Macro) | R(Macro) | F1(H) | F1(Off) | Macro-F1 |
|---|---|---|---|---|---|---|---|---|
| Llama-3 8B | Zero-Shot Prompting | 0 | 60.2 | 58.1 | 55.3 | 45.1 | 50.3 | 56.5 |
| | Full Finetuning (Top 2 layers) | ∼700M | 70.5 | 69.2 | 68.0 | 62.3 | 65.8 | 68.8 |
| | Adapters | ∼5.8M | 69.3 | 67.8 | 66.5 | 60.1 | 63.2 | 67.0 |
| | Standard LoRA | ∼4.2M | 71.8 | 70.5 | 69.9 | 64.0 | 67.5 | 70.2 |
| | DS-LoRA (ours) | ∼4.5M | 73.5 | 72.3 | 71.8 | 66.5 | 70.1 | 72.0 |
| Gemma 7B | Zero-Shot Prompting | 0 | 59.1 | 57.0 | 54.1 | 43.8 | 49.0 | 55.2 |
| | Full Finetuning (Top 2 layers) | ∼650M | 69.2 | 68.0 | 66.8 | 60.9 | 64.1 | 67.5 |
| | Adapters | ∼5.2M | 68.1 | 66.5 | 65.2 | 58.8 | 61.9 | 65.7 |
| | Standard LoRA | ∼3.9M | 70.6 | 69.1 | 68.5 | 62.5 | 66.0 | 68.8 |
| | DS-LoRA (ours) | ∼4.1M | 72.3 | 71.0 | 70.2 | 65.1 | 68.7 | 70.5 |

| Method Configuration | F1(O) | Macro-F1 |
|---|---|---|
| Standard LoRA (Baseline) | 75.9 | 80.1 |
| LoRA + Gate (No L1 Sparsity) | 77.1 | 80.9 |
| LoRA + L1 Sparsity (No Gate) | 76.5 | 80.4 |
| DS-LoRA (Gate + L1) | 77.7 | 81.3 |

| Layer Group | Average Gate Activation: Normal | Average Gate Activation: Offensive (Non-Hate) | Average Gate Activation: Hate Speech |
|---|---|---|---|
| Early Layers (1-10) | 0.35 ± 0.08 | 0.45 ± 0.10 | 0.52 ± 0.11 |
| Middle Layers (11-21) | 0.28 ± 0.06 | 0.58 ± 0.12 | 0.68 ± 0.14 |
| Late Layers (22-32) | 0.31 ± 0.07 | 0.50 ± 0.09 | 0.61 ± 0.13 |
| Overall Model Average | 0.31 ± 0.05 | 0.51 ± 0.08 | 0.60 ± 0.10 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).