Submitted: 28 March 2025

Posted: 31 March 2025

Abstract

Keywords:

1. Introduction

- To develop a hierarchical reinforcement learning framework for text summarization that can adapt to different time constraints and summary length requirements.

- To implement and compare the effectiveness of PPO, A2C, and SAC algorithms in the context of text summarization, offering insights into their relative strengths and weaknesses for this task.

- To create a summarization system that balances summary quality and generation speed, addressing the practical need for efficient summarization in time-sensitive applications.

- To evaluate the performance of the proposed system using both ROUGE scores and BERTScore, so that summary quality is assessed in terms of lexical overlap as well as semantic similarity.

- To analyze the impact of various reinforcement learning algorithms on the summarization process, including their ability to handle trade-offs between summary length, quality, and generation time.

2. Background and Related Work

2.1. Text Summarization

2.2. Neural Approaches to Summarization

2.3. Reinforcement Learning in NLP

2.4. Hierarchical Reinforcement Learning

2.5. Adaptive Summarization

2.6. Evaluation of Summarization Systems

3. Methodology

3.1. Hierarchical Summarizer Model

- T5-based Encoder-Decoder: We use a pre-trained T5 model as the base for our summarizer. T5 has demonstrated strong performance on various NLP tasks, including summarization, and provides a solid foundation for generating coherent and informative summaries.

- Level Selector: We introduce a level selector module that determines the appropriate level of summarization based on the input and the time constraints. This module is implemented as a linear layer on top of the T5 encoder output.
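To make the level selector concrete, the following is a minimal, framework-free sketch of a linear layer applied to pooled encoder output. The mean pooling, the number of levels (3), the hidden size (8), and the random stand-in for the encoder output are all assumptions made for illustration; the text does not specify them, nor how the time constraint is fed into the module, so that input is omitted here. In practice the layer would more likely be a `torch.nn.Linear` over real T5 encoder states.

```python
import math
import random


def mean_pool(hidden_states):
    """Average token vectors (seq_len x d_model) into a single d_model vector."""
    seq_len = len(hidden_states)
    d_model = len(hidden_states[0])
    return [sum(tok[j] for tok in hidden_states) / seq_len for j in range(d_model)]


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


class LevelSelector:
    """Hypothetical linear layer mapping a pooled encoder vector to level probabilities."""

    def __init__(self, d_model, num_levels, seed=0):
        rng = random.Random(seed)
        # One weight row per summary level; small random initialisation.
        self.weight = [[rng.uniform(-0.1, 0.1) for _ in range(d_model)]
                       for _ in range(num_levels)]
        self.bias = [0.0] * num_levels

    def __call__(self, hidden_states):
        pooled = mean_pool(hidden_states)
        logits = [sum(w * x for w, x in zip(row, pooled)) + b
                  for row, b in zip(self.weight, self.bias)]
        return softmax(logits)


# Random stand-in for encoder output: 5 tokens, hidden size 8 (assumed sizes).
rng = random.Random(1)
encoder_output = [[rng.gauss(0.0, 1.0) for _ in range(8)] for _ in range(5)]

selector = LevelSelector(d_model=8, num_levels=3)
probs = selector(encoder_output)
level = max(range(len(probs)), key=probs.__getitem__)  # greedy choice of level
```

During reinforcement learning the level would typically be sampled from `probs` rather than chosen greedily, so that the policy can explore.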

3.2. Reinforcement Learning Framework

3.2.1. Proximal Policy Optimization (PPO)

3.2.2. Advantage Actor-Critic (A2C)

3.2.3. Soft Actor-Critic (SAC)

3.3. Reward Function

- ROUGE is the average of ROUGE-1, ROUGE-2, and ROUGE-L F1 scores

- BERTScore measures semantic similarity between generated summary and reference

- TimePenalty is calculated as max(0, )
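The three terms above can be combined as in the sketch below. Only the ROUGE averaging is taken directly from the text. The weighted-sum form, the weight names (`w_rouge`, `w_bert`, `w_time`), their default values, and the argument of the time penalty (seconds over a limit) are assumptions, since the full reward expression and the argument of max(0, ·) are not given here.

```python
def rouge_average(r1_f1, r2_f1, rl_f1):
    """Average of ROUGE-1, ROUGE-2 and ROUGE-L F1, as described in the text."""
    return (r1_f1 + r2_f1 + rl_f1) / 3.0


def time_penalty(elapsed_s, limit_s):
    # ASSUMPTION: the source shows only "max(0, )". Penalising the number of
    # seconds by which generation exceeds a limit is one plausible reading,
    # not the authors' confirmed definition.
    return max(0.0, elapsed_s - limit_s)


def reward(r1_f1, r2_f1, rl_f1, bert_f1, elapsed_s, limit_s,
           w_rouge=1.0, w_bert=1.0, w_time=1.0):
    # ASSUMPTION: a weighted sum with hypothetical unit weights.
    quality = w_rouge * rouge_average(r1_f1, r2_f1, rl_f1) + w_bert * bert_f1
    return quality - w_time * time_penalty(elapsed_s, limit_s)


# ROUGE term for the PPO scores reported in the results table.
ppo_rouge = rouge_average(0.1222, 0.0527, 0.0789)

# Same quality, with and without exceeding a hypothetical 2-second limit.
on_time = reward(0.1222, 0.0527, 0.0789, 0.7519, elapsed_s=1.5, limit_s=2.0)
late = reward(0.1222, 0.0527, 0.0789, 0.7519, elapsed_s=3.5, limit_s=2.0)
```

Under these assumptions a summary that exceeds the limit receives a strictly lower reward than an equally good summary generated on time, which is the behavior the time penalty is meant to encourage.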

3.4. Training Process

- The model generates summaries for a batch of input articles.

- Rewards are computed based on summary quality and generation time.

- The RL algorithm updates the model parameters to maximize the expected rewards.
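The three steps above can be sketched as a single training step. This is an illustrative skeleton rather than the actual training code: `generate`, `reward_fn`, and `update` are hypothetical callables standing in for the T5 summarizer, the reward function, and the PPO, A2C, or SAC update, and the toy stand-ins exist only so the sketch runs on its own.

```python
import time
from typing import Callable, List, Sequence


def train_step(articles: Sequence[str],
               references: Sequence[str],
               generate: Callable[[str], str],
               reward_fn: Callable[[str, str, float], float],
               update: Callable[[List[str], List[float]], None]) -> float:
    """Generate summaries, score them, and hand the rewards to the RL update."""
    summaries: List[str] = []
    rewards: List[float] = []
    for article, reference in zip(articles, references):
        start = time.perf_counter()
        summary = generate(article)               # step 1: generate a summary
        elapsed = time.perf_counter() - start     # generation time in seconds
        summaries.append(summary)
        rewards.append(reward_fn(summary, reference, elapsed))  # step 2: reward
    update(summaries, rewards)                    # step 3: policy update
    return sum(rewards) / len(rewards)


# Toy stand-ins (assumptions for illustration only).
def toy_generate(article: str) -> str:
    return " ".join(article.split()[:5])          # "summary" = first five words


def toy_reward(summary: str, reference: str, elapsed: float) -> float:
    ref = set(reference.split())
    return len(set(summary.split()) & ref) / max(1, len(ref))  # ignores time


update_calls = []


def toy_update(summaries: List[str], rewards: List[float]) -> None:
    update_calls.append((list(summaries), list(rewards)))


articles = [
    "the council approved the new budget after a long debate",
    "heavy rain flooded several streets in the city centre on monday",
]
references = ["council approved new budget", "rain flooded city streets"]
mean_reward = train_step(articles, references, toy_generate, toy_reward, toy_update)
```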

4. Experimental Setup

- Training set: 5,000 articles

- Validation set: 500 articles

- Test set: 500 articles

4.1. Implementation Details

- Learning rate: 3e-5

- Batch size: 4

- Maximum number of epochs: 100

- Early stopping patience: 3 epochs
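As an illustration of how these settings interact, the sketch below stores them in a configuration object and applies early stopping with a patience of 3 epochs. The monitored quantity (a validation reward where higher is better) and the names `TrainConfig` and `run_with_early_stopping` are assumptions; the text does not state which validation signal was used.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrainConfig:
    learning_rate: float = 3e-5
    batch_size: int = 4
    max_epochs: int = 100
    patience: int = 3  # epochs without improvement before stopping


def run_with_early_stopping(val_rewards, cfg):
    """Return (stop_epoch, best_reward) for a sequence of per-epoch validation rewards.

    ASSUMPTION: higher validation reward is better.
    """
    best = float("-inf")
    epochs_without_improvement = 0
    history = list(val_rewards)[:cfg.max_epochs]
    for epoch, value in enumerate(history, start=1):
        if value > best:
            best = value
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= cfg.patience:
                return epoch, best
    return len(history), best


cfg = TrainConfig()
# Hypothetical validation rewards: the best value occurs at epoch 3,
# followed by three epochs with no improvement.
stop_epoch, best_reward = run_with_early_stopping(
    [-0.9, -0.6, -0.5, -0.55, -0.52, -0.51, -0.4], cfg
)
```

With these made-up rewards, training stops at epoch 6 and never reaches the better value at epoch 7, which shows how a short patience can end a noisy RL run early.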

4.2. Evaluation Metrics

- ROUGE scores: We computed ROUGE-1, ROUGE-2, and ROUGE-L F1 scores to measure the overlap between generated summaries and reference summaries.

- BERTScore: This metric was used to evaluate the semantic similarity between generated and reference summaries.

- Generation Time: We measured the time taken to generate each summary to evaluate the model’s efficiency and adherence to time constraints.
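For readers unfamiliar with how the overlap metrics are computed, the following simplified sketch implements ROUGE-N and ROUGE-L F1 on whitespace tokens and times a generation call. It is an approximation for illustration: standard ROUGE implementations also apply their own tokenization and optional stemming, so their scores will differ slightly. BERTScore is not reproduced here because it requires a pre-trained model. The helper names are hypothetical.

```python
import time
from collections import Counter


def _f1(overlap, cand_total, ref_total):
    """F1 from an overlap count and the candidate/reference totals."""
    if overlap == 0 or cand_total == 0 or ref_total == 0:
        return 0.0
    precision = overlap / cand_total
    recall = overlap / ref_total
    return 2 * precision * recall / (precision + recall)


def _ngrams(tokens, n):
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def rouge_n_f1(candidate, reference, n):
    """Clipped n-gram overlap F1 between candidate and reference."""
    cand = _ngrams(candidate.lower().split(), n)
    ref = _ngrams(reference.lower().split(), n)
    overlap = sum((cand & ref).values())  # Counter intersection clips counts
    return _f1(overlap, sum(cand.values()), sum(ref.values()))


def _lcs_length(a, b):
    """Length of the longest common subsequence (dynamic programming)."""
    prev = [0] * (len(b) + 1)
    for x in a:
        cur = [0]
        for j, y in enumerate(b, start=1):
            cur.append(prev[j - 1] + 1 if x == y else max(prev[j], cur[j - 1]))
        prev = cur
    return prev[-1]


def rouge_l_f1(candidate, reference):
    cand = candidate.lower().split()
    ref = reference.lower().split()
    return _f1(_lcs_length(cand, ref), len(cand), len(ref))


def timed(fn, *args):
    """Run fn(*args) and return (result, elapsed_seconds)."""
    start = time.perf_counter()
    result = fn(*args)
    return result, time.perf_counter() - start


candidate = "the cat sat on the mat"
reference = "the cat lay on the mat"
r1 = rouge_n_f1(candidate, reference, 1)  # 5 of 6 unigrams match
r2 = rouge_n_f1(candidate, reference, 2)  # 3 of 5 bigrams match
rl = rouge_l_f1(candidate, reference)     # LCS "the cat on the mat" has length 5
_, elapsed = timed(rouge_l_f1, candidate, reference)
```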

4.3. Experimental Procedure

5. Results and Discussion

5.1. Quantitative Results

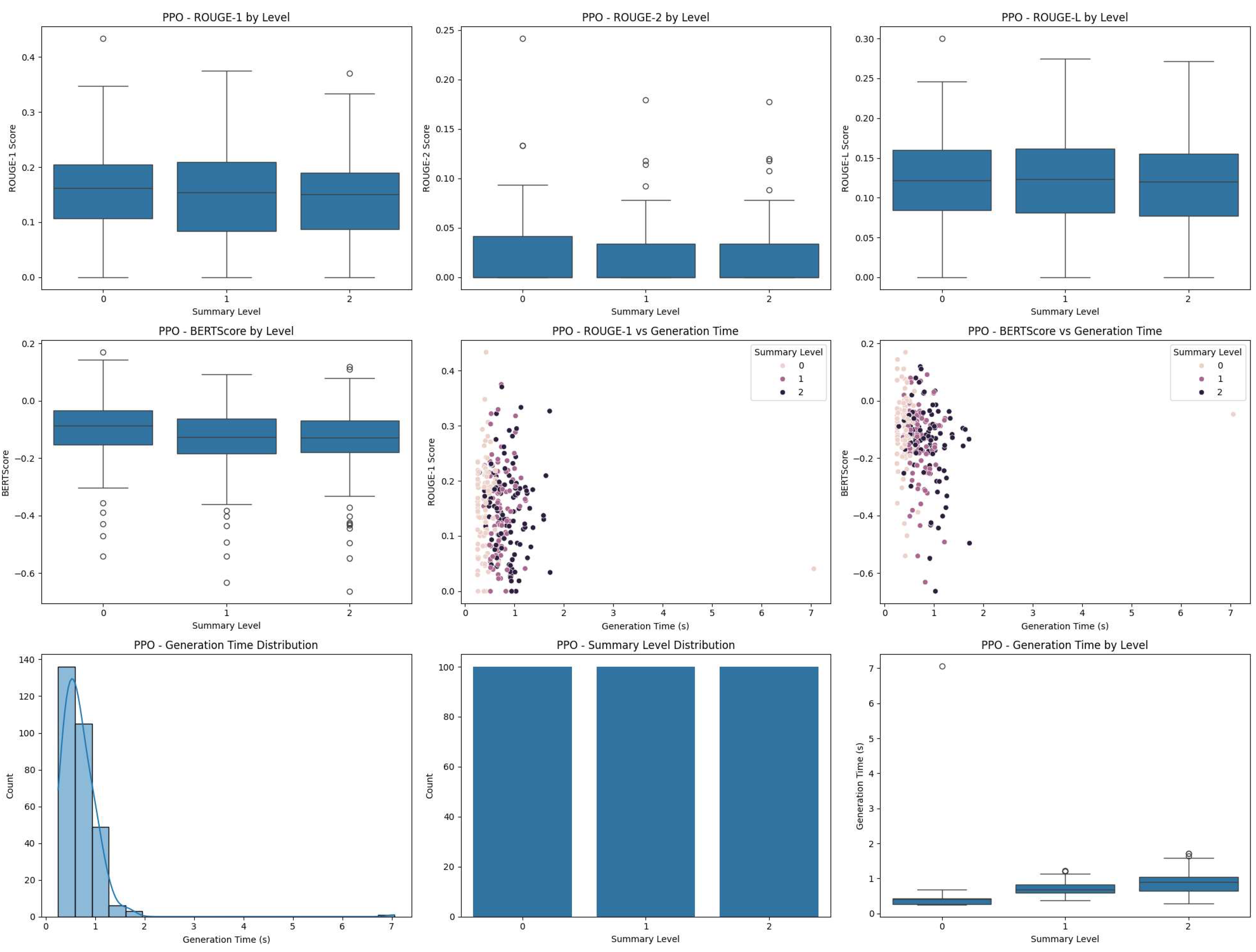

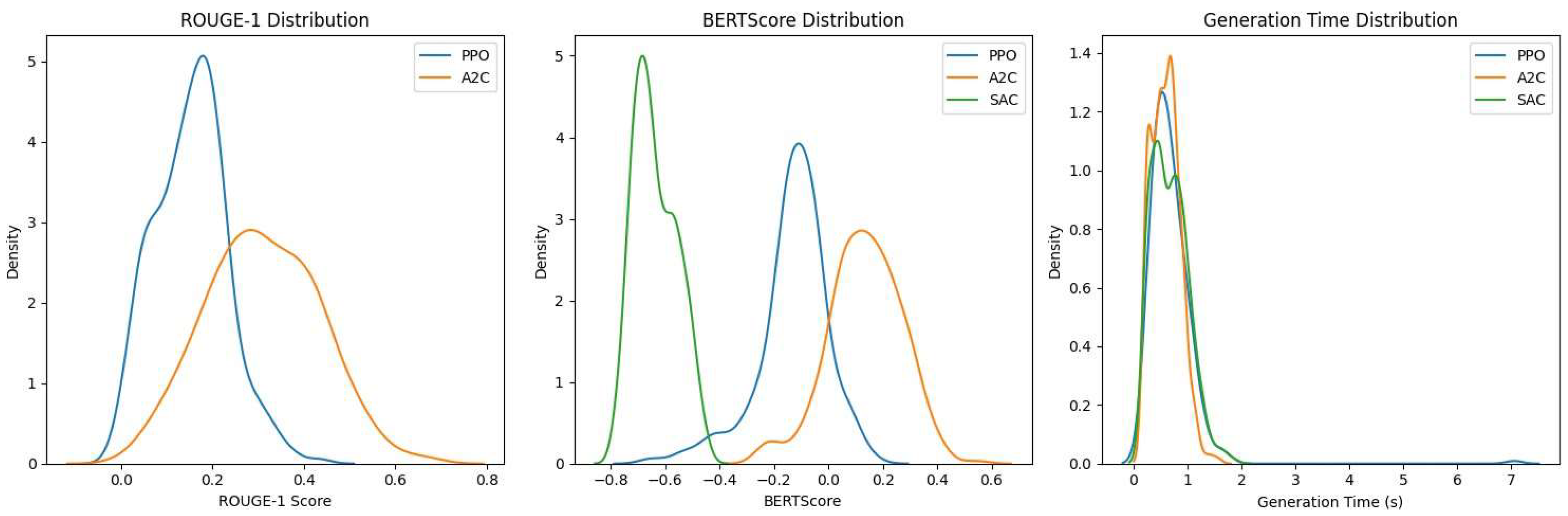

- The PPO model achieved the highest ROUGE scores and BERTScore among the three models (ROUGE-1 0.1222, ROUGE-2 0.0527, ROUGE-L 0.0789, BERTScore 0.7519), indicating that its summaries were closest to the reference summaries in both content overlap and semantic similarity.

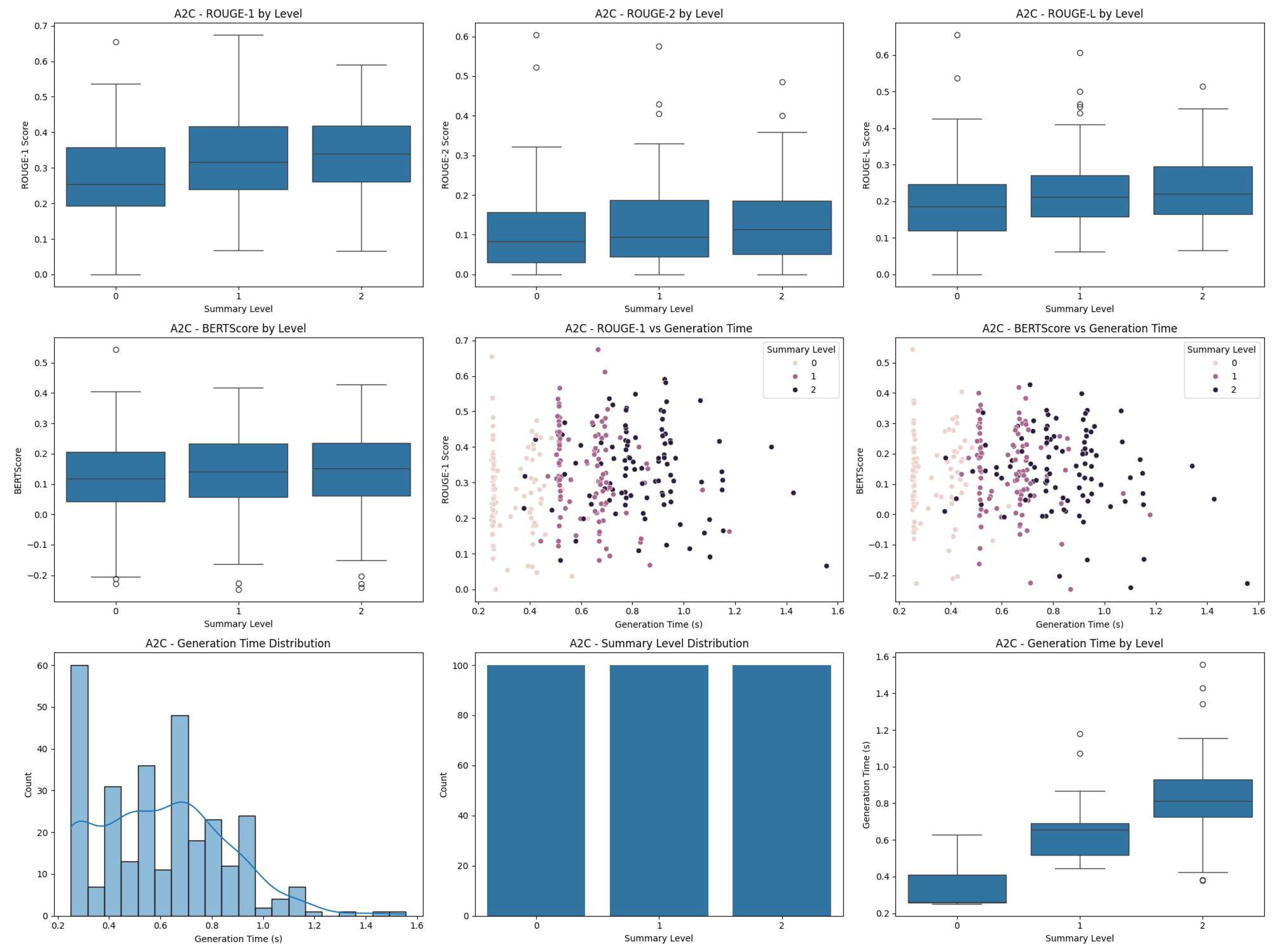

- The A2C model scored lower than PPO on every quality metric (for example, ROUGE-1 0.0629 versus 0.1222 and BERTScore 0.6139 versus 0.7519), suggesting that its summaries were less similar to the reference summaries.

- Despite these lower ROUGE and BERTScore values, the A2C model achieved a slightly better (less negative) test reward than the PPO model (-0.4597 versus -0.4853). This suggests that the A2C model may have been more effective at balancing summary quality with generation time and adherence to time constraints.

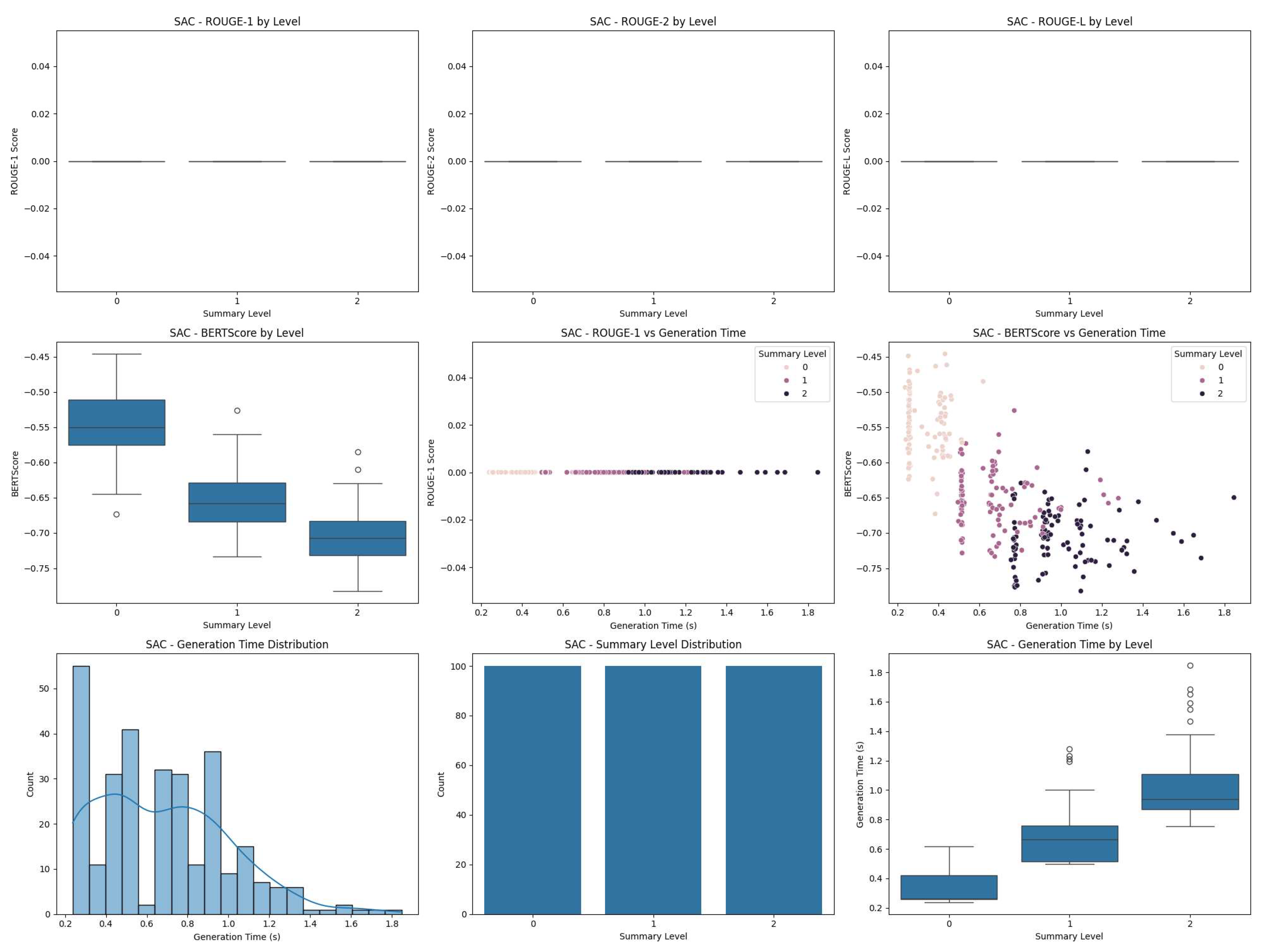

- The SAC model had the lowest test reward among the three models (-2.1521). The figures indicate that this was due to extremely low ROUGE scores and BERTScores across all summary levels.

- The overall negative test rewards for all models indicate that there is room for improvement in balancing summary quality with generation time constraints.
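The divergence between the quality ranking and the reward ranking can be checked directly from the reported numbers. The short sketch below uses only the values in the results table; the dictionary layout and variable names are illustrative, and SAC is excluded from the ROUGE comparison because its individual scores were not reported.

```python
# Values copied from the results table; SAC has only a test reward.
results = {
    "PPO": {"rouge1": 0.1222, "rouge2": 0.0527, "rougeL": 0.0789,
            "bertscore": 0.7519, "test_reward": -0.4853},
    "A2C": {"rouge1": 0.0629, "rouge2": 0.0202, "rougeL": 0.0336,
            "bertscore": 0.6139, "test_reward": -0.4597},
    "SAC": {"test_reward": -2.1521},
}

# Rank by test reward (all three models).
best_by_reward = max(results, key=lambda m: results[m]["test_reward"])

# Rank by ROUGE-1 (only models with reported ROUGE scores).
with_rouge = {m: v for m, v in results.items() if "rouge1" in v}
best_by_rouge1 = max(with_rouge, key=lambda m: with_rouge[m]["rouge1"])

# How much better A2C's test reward is than PPO's.
reward_gap = results["A2C"]["test_reward"] - results["PPO"]["test_reward"]
```

The two rankings disagree: PPO leads on ROUGE-1 while A2C leads on test reward, by a margin of 0.0256.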

5.2. Discussion

- Trade-off between quality and speed: The discrepancy between ROUGE scores and test rewards, particularly for the A2C model, highlights the complex trade-off between summary quality and generation speed. While PPO produced summaries more similar to the references, A2C seems to have found a better balance between quality and time constraints.

- Challenges with SAC: The significantly lower test reward for the SAC model is consistent with its poor ROUGE and BERTScore performance, as shown in Figure 3 and Figure 4. This suggests that the SAC algorithm struggled to adapt to the summarization task or to balance the multiple objectives (quality, semantic similarity, and time constraints). Further investigation is needed to understand the reasons for its underperformance.

- Adaptation to summary levels: Figure 1, Figure 2, and Figure 3 show how each algorithm adapts to different summary levels. Generally, higher summary levels (longer summaries) tend to achieve better ROUGE and BERTScores, but at the cost of longer generation times. This illustrates the challenge of balancing summary quality with time constraints.

- Room for improvement: The overall low ROUGE scores and negative test rewards indicate that there is substantial room for improvement in our models. This could involve refining the reward function, adjusting the model architecture, or exploring alternative reinforcement learning algorithms.

- Limitations of evaluation metrics: The discrepancy between ROUGE scores and test rewards underscores the limitations of relying solely on ROUGE for evaluating summarization quality, especially in an adaptive setting where generation time is also a factor. The inclusion of BERTScore provides additional insight, but future work might explore more comprehensive evaluation metrics.

6. Conclusion and Future Work

- Reinforcement learning can be effectively applied to the task of adaptive summarization, allowing models to balance summary quality with generation time.

- The PPO algorithm demonstrated the best performance in terms of traditional summarization metrics (ROUGE scores and BERTScore), suggesting its effectiveness in generating summaries that closely match reference summaries.

- The A2C algorithm achieved a slightly better overall test reward despite lower ROUGE scores, indicating a potentially better balance between summary quality and adherence to time constraints.

- The SAC algorithm underperformed compared to PPO and A2C, highlighting the challenges of applying this approach to the summarization task.

- All models showed room for improvement, as evidenced by the overall low ROUGE scores and negative test rewards.

6.1. Reward Function Refinement

6.2. Model Architecture Improvements

6.3. Hyperparameter Tuning

6.4. Exploration of Other RL Algorithms

6.5. Larger-Scale Experiments

6.6. Analysis of Generated Summaries

6.7. Time Constraint Adaptation

6.8. Human Evaluation

6.9. Application to Other Domains

Acknowledgments

Conflicts of Interest

| Model | ROUGE-1 | ROUGE-2 | ROUGE-L | BERTScore | Test Reward |
| --- | --- | --- | --- | --- | --- |
| PPO | 0.1222 | 0.0527 | 0.0789 | 0.7519 | -0.4853 |
| A2C | 0.0629 | 0.0202 | 0.0336 | 0.6139 | -0.4597 |
| SAC | * | * | * | * | -2.1521 |

Note (*): Individual ROUGE and BERTScore values for the SAC model were not reported in the output.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).