Submitted:

15 July 2024

Posted:

16 July 2024

Abstract

Keywords:

1. Introduction

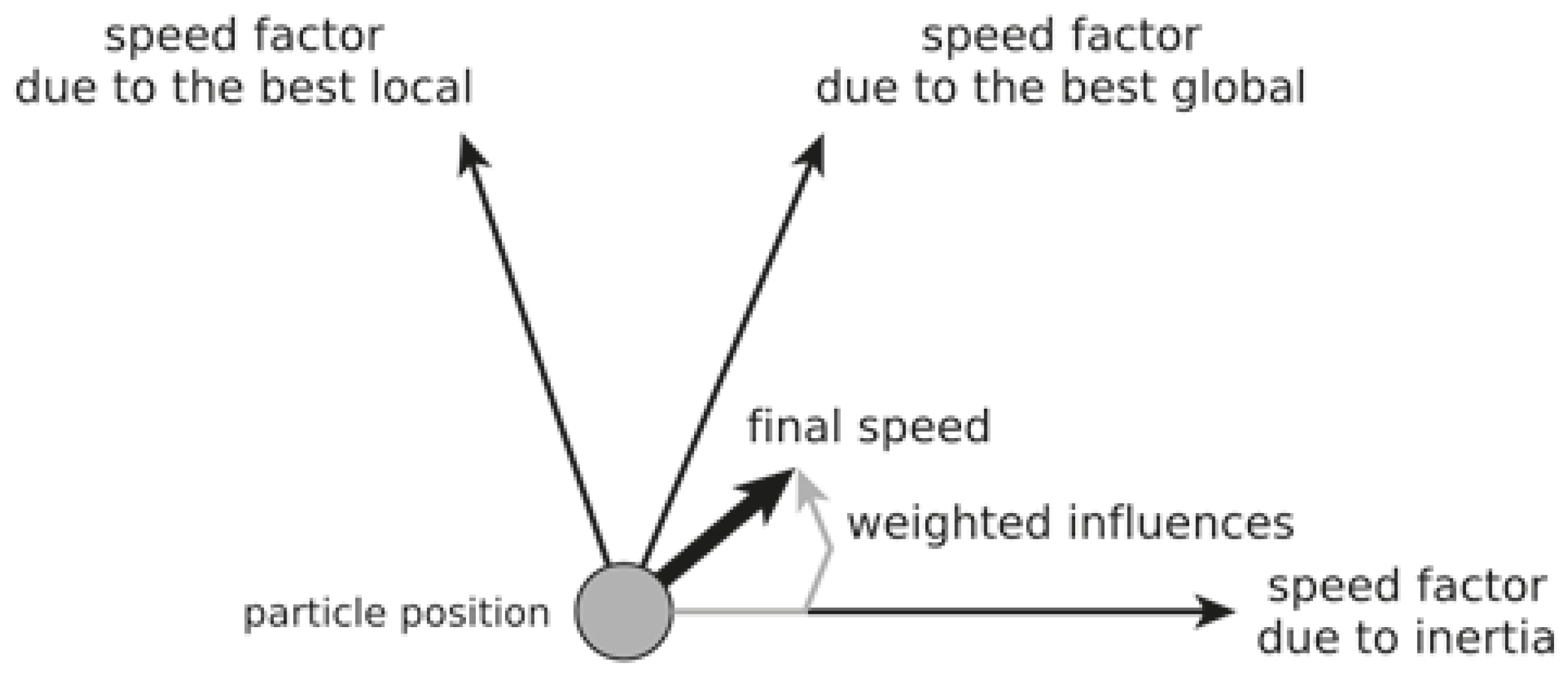

2. Particle Swarm Optimization

2.1. Complexity of the PSO and Approaches for Its Parallelization in a Cluster

- As a metaheuristic algorithm, PSO requires no specific knowledge of the problem to be solved.

- As an evolutionary algorithm, it is easy to adapt to parallel computing structures.

- Because it works with several candidate solutions at once, it has a higher tolerance to local maxima or minima.

- The main disadvantage is that the PSO algorithm needs a large amount of time to reach a good solution in problems of high complexity, i.e., with a large number of particles or with many dimensions, because many evaluations of the fitness function are required.

2.2. Background Information Based on Recent Research on the Parallelization of the PSO

| Main strategy | References | Years |

|---|---|---|

| Accelerating PSO in CUDA/GPU | [1,2] | 2011, 2005 |

| Adjustment of control parameters | [13] | 2022 |

| Hybrid mechanisms with PSO | [24,25,31] | 2015, 2020, 2019 |

| Big Data & PSO | [32] | 2020 |

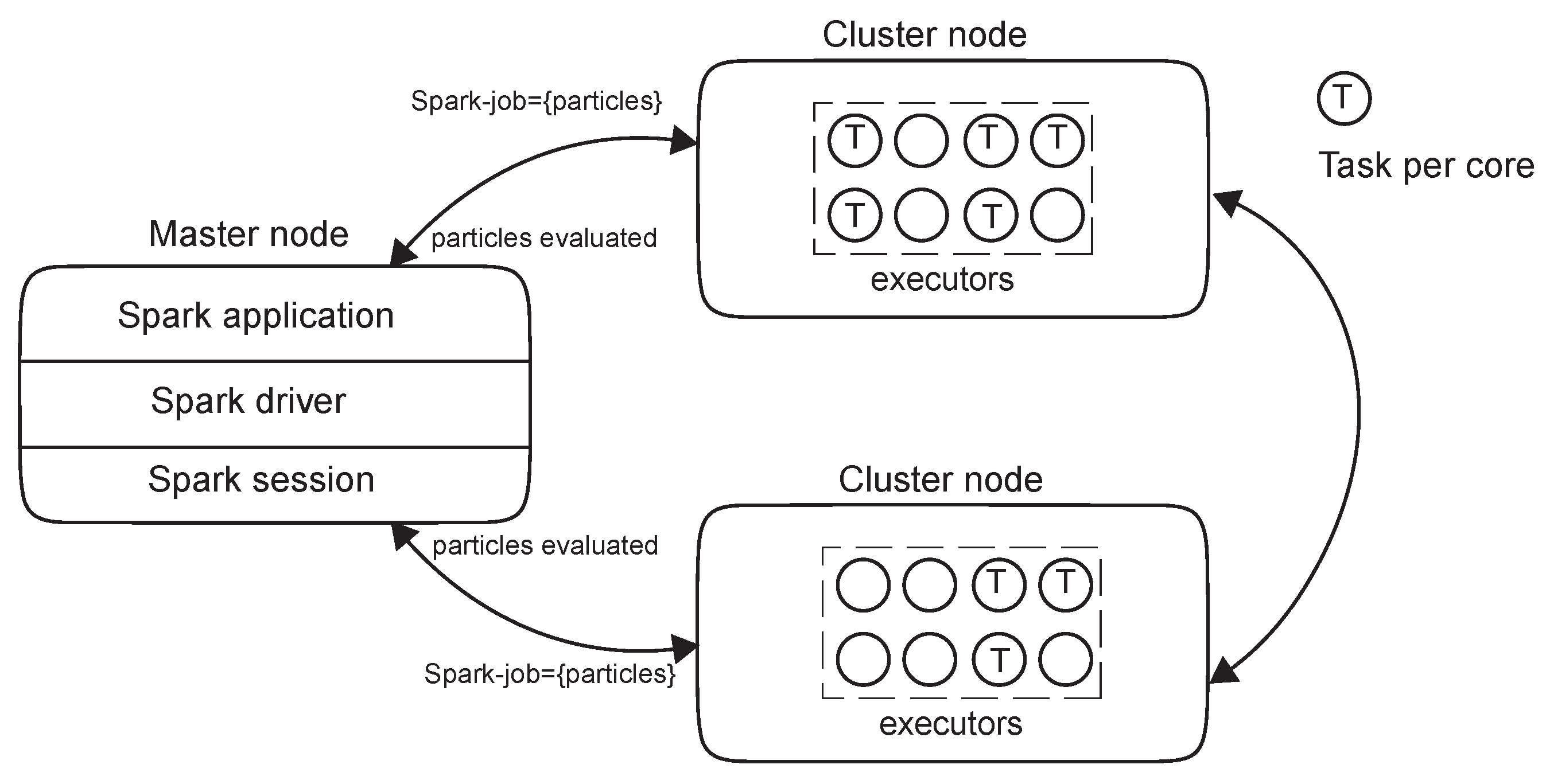

3. PSO Parallelization Based on Apache Spark and RDD Applied to Training Neural Networks

3.1. Distributed Synchronous PSO

Algorithm 1. DSPSO pseudocode

3.1.1. Each Worker Node Should Perform the Following Steps:

1. Wait for a particle to be received from the master node.

2. Compute the fitness function f and update the personal best position.

3. Return the evaluated particle to the master node.

4. Return to step 1 if the master node has not finished yet.
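The worker-side evaluation can be sketched as follows. This is a minimal illustration in plain Python rather than the Spark code of the paper: the `Particle` class, the `evaluate_particle` function and the `sphere` fitness function are hypothetical names, and minimization of the fitness value is assumed.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Particle:
    # Hypothetical container for the state that travels between master and worker.
    position: List[float]
    velocity: List[float]
    best_position: List[float] = field(default_factory=list)
    best_fitness: float = float("inf")


def evaluate_particle(particle: Particle,
                      fitness: Callable[[List[float]], float]) -> Particle:
    """Score the particle and refresh its personal best (minimization assumed)."""
    value = fitness(particle.position)
    if value < particle.best_fitness:
        particle.best_fitness = value
        particle.best_position = list(particle.position)
    return particle  # the evaluated particle goes back to the master


def sphere(x: List[float]) -> float:
    # Toy fitness function used only for illustration.
    return sum(v * v for v in x)


evaluated = evaluate_particle(Particle([0.5, -0.5], [0.0, 0.0]), sphere)
```

In a neural network training setting, the fitness function would instead compute the network error for the weights encoded in the particle position.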

3.1.2. The Master Node Process Includes the Following Steps:

1. Initialize the particle parameters, positions and velocities.

2. Set the current iteration and initialize the state of the swarm and of the received particles.

3. Start the current iteration by distributing all particles to the free executors.

4. Wait for the results of all evaluated particles (with the fitness function calculated) and their personal best positions for this iteration; this wait is the synchronization point.

5. Compute the global best position, updating it for each incoming particle until there are no more particles left.

6. Update the velocity vector and the position vector of each particle based on its personal best position and the global best position found by all particles.

7. Go back to step 3 if the last iteration has not been reached.

8. Return the global best position.
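The master loop above can be sketched in plain Python, with a thread pool standing in for the Spark executors. This is an illustrative sketch, not the implementation evaluated in the paper: the function names, the toy `sphere` fitness function, the coefficient names `c1` and `c2` with their default values, and the clamping of positions and velocities are assumptions. The velocity rule is the standard PSO update.

```python
import random
from concurrent.futures import ThreadPoolExecutor


def sphere(x):
    # Toy fitness function (assumption); minimization is assumed throughout.
    return sum(v * v for v in x)


def evaluate(particle, fitness):
    # Worker side: compute the fitness and refresh the personal best.
    value = fitness(particle["pos"])
    if value < particle["best_fit"]:
        particle["best_fit"] = value
        particle["best_pos"] = list(particle["pos"])
    return particle


def dspso(fitness, dim=2, n_particles=10, iterations=30,
          w=1.0, c1=0.8, c2=0.2, pos_max=1.0, vel_max=0.6, seed=0):
    rng = random.Random(seed)
    # Initialize positions and velocities inside the allowed intervals.
    swarm = [{"pos": [rng.uniform(-pos_max, pos_max) for _ in range(dim)],
              "vel": [rng.uniform(-vel_max, vel_max) for _ in range(dim)],
              "best_pos": None, "best_fit": float("inf")}
             for _ in range(n_particles)]
    g_pos, g_fit = None, float("inf")
    with ThreadPoolExecutor(max_workers=4) as pool:
        for _ in range(iterations):
            # Distribute all particles; list() blocks until every one is back,
            # which acts as the synchronization barrier of the iteration.
            swarm = list(pool.map(lambda p: evaluate(p, fitness), swarm))
            # Update the global best from the returned particles.
            for p in swarm:
                if p["best_fit"] < g_fit:
                    g_fit, g_pos = p["best_fit"], list(p["best_pos"])
            # Standard PSO velocity and position update, with clamping.
            for p in swarm:
                for d in range(dim):
                    r1, r2 = rng.random(), rng.random()
                    v = (w * p["vel"][d]
                         + c1 * r1 * (p["best_pos"][d] - p["pos"][d])
                         + c2 * r2 * (g_pos[d] - p["pos"][d]))
                    p["vel"][d] = max(-vel_max, min(vel_max, v))
                    p["pos"][d] = max(-pos_max,
                                      min(pos_max, p["pos"][d] + p["vel"][d]))
    return g_pos, g_fit


best_position, best_fitness = dspso(sphere)
```

Because every iteration waits for the slowest evaluation, the time per iteration in this scheme is bounded by the slowest worker.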

3.2. Distributed Asynchronous PSO

Algorithm 2. DAPSO pseudocode

3.2.1. The Master Node Process Consists of the Following Steps:

1. Initialize the particle parameters, positions and velocities.

2. Initialize the state of the swarm and the queue of particles to be sent to the worker nodes.

3. Load the initial particles into the queue.

4. Distribute the particles from the queue to the available executors.

5. Wait for the results of all evaluated particles (with the fitness function calculated) of the sub-swarm and their personal best positions for this iteration.

6. Update the velocity vector and the position vector of each particle based on its personal best position and the global best position found by all the particles.

7. Place the particle back in the queue and return to step 4 if the stop condition is not satisfied.

8. Return the global best position.
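A simplified sketch of the asynchronous master follows, again in plain Python with a thread pool in place of Spark executors. It is an assumption-laden illustration: it handles each particle as soon as its evaluation returns instead of waiting for a whole sub-swarm, it uses a budget of fitness evaluations (`max_evaluations`, a hypothetical parameter) as the stop condition, and all names and default values are illustrative.

```python
import random
from collections import deque
from concurrent.futures import ThreadPoolExecutor, wait, FIRST_COMPLETED


def sphere(x):
    # Toy fitness function (assumption); minimization is assumed.
    return sum(v * v for v in x)


def evaluate(particle, fitness):
    # Worker side: compute the fitness and refresh the personal best.
    value = fitness(particle["pos"])
    if value < particle["best_fit"]:
        particle["best_fit"] = value
        particle["best_pos"] = list(particle["pos"])
    return particle


def move(p, g_pos, rng, w, c1, c2, pos_max, vel_max):
    # Standard PSO velocity and position update, with clamping.
    for d in range(len(p["pos"])):
        r1, r2 = rng.random(), rng.random()
        v = (w * p["vel"][d]
             + c1 * r1 * (p["best_pos"][d] - p["pos"][d])
             + c2 * r2 * (g_pos[d] - p["pos"][d]))
        p["vel"][d] = max(-vel_max, min(vel_max, v))
        p["pos"][d] = max(-pos_max, min(pos_max, p["pos"][d] + p["vel"][d]))


def dapso(fitness, dim=2, n_particles=8, max_evaluations=200,
          w=1.0, c1=0.8, c2=0.2, pos_max=1.0, vel_max=0.6, seed=0):
    rng = random.Random(seed)
    # Load the initial particles into the queue.
    queue = deque({"pos": [rng.uniform(-pos_max, pos_max) for _ in range(dim)],
                   "vel": [rng.uniform(-vel_max, vel_max) for _ in range(dim)],
                   "best_pos": None, "best_fit": float("inf")}
                  for _ in range(n_particles))
    g_pos, g_fit = None, float("inf")
    submitted = 0
    with ThreadPoolExecutor(max_workers=4) as pool:
        pending = set()
        while queue:  # distribute the queued particles to the executors
            pending.add(pool.submit(evaluate, queue.popleft(), fitness))
            submitted += 1
        while pending:
            # Resume as soon as any evaluation finishes; no global barrier.
            done, pending = wait(pending, return_when=FIRST_COMPLETED)
            for future in done:
                p = future.result()
                if p["best_fit"] < g_fit:
                    g_fit, g_pos = p["best_fit"], list(p["best_pos"])
                if submitted < max_evaluations:
                    move(p, g_pos, rng, w, c1, c2, pos_max, vel_max)
                    pending.add(pool.submit(evaluate, p, fitness))
                    submitted += 1
    return g_pos, g_fit, submitted


best_position, best_fitness, evaluations = dapso(sphere)
```

Since particles are updated against whatever global best is known when they return, the search path depends on the completion order and can differ between runs.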

3.2.2. The Process of Worker Nodes

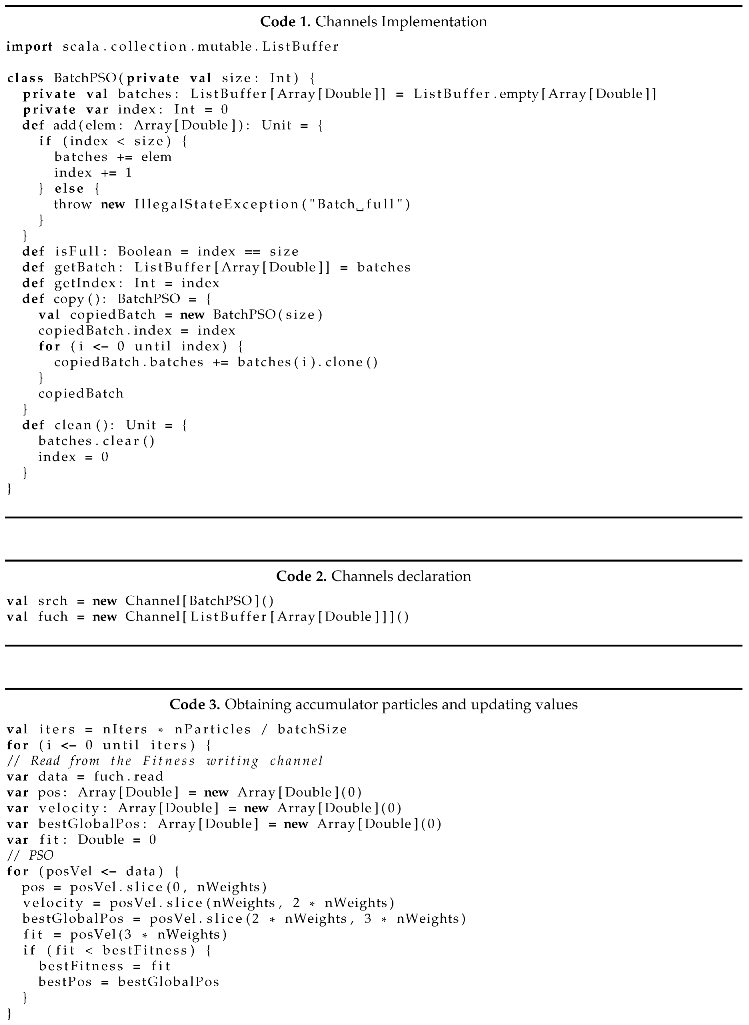

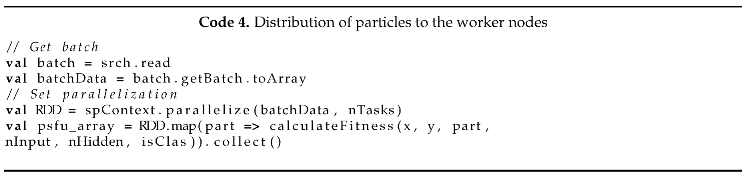

3.3. Apache Spark Implementation

4. Case Study and Applications

- efficiency improvement: parallelization leverages the computing power of multiple resources, such as graphics processing units (GPUs) or central processing units (CPUs), thus speeding up the training process,

- faster scanning: parallelization techniques for the PSO algorithm allow multiple solutions to be evaluated simultaneously, speeding up the search for optimal solutions in a large parameter space,

- scalability: the parallelized PSO can handle problems of varying size and complexity, from small tasks to large challenges with massive data sets,

- increased accuracy: by enabling faster and more efficient training, parallelization of the PSO algorithm can improve the quality of the neural network models that are designed, resulting in better performance in prediction and classification tasks,

- reduction of development time: parallelization speeds up the training process, leading to faster development of machine learning models.

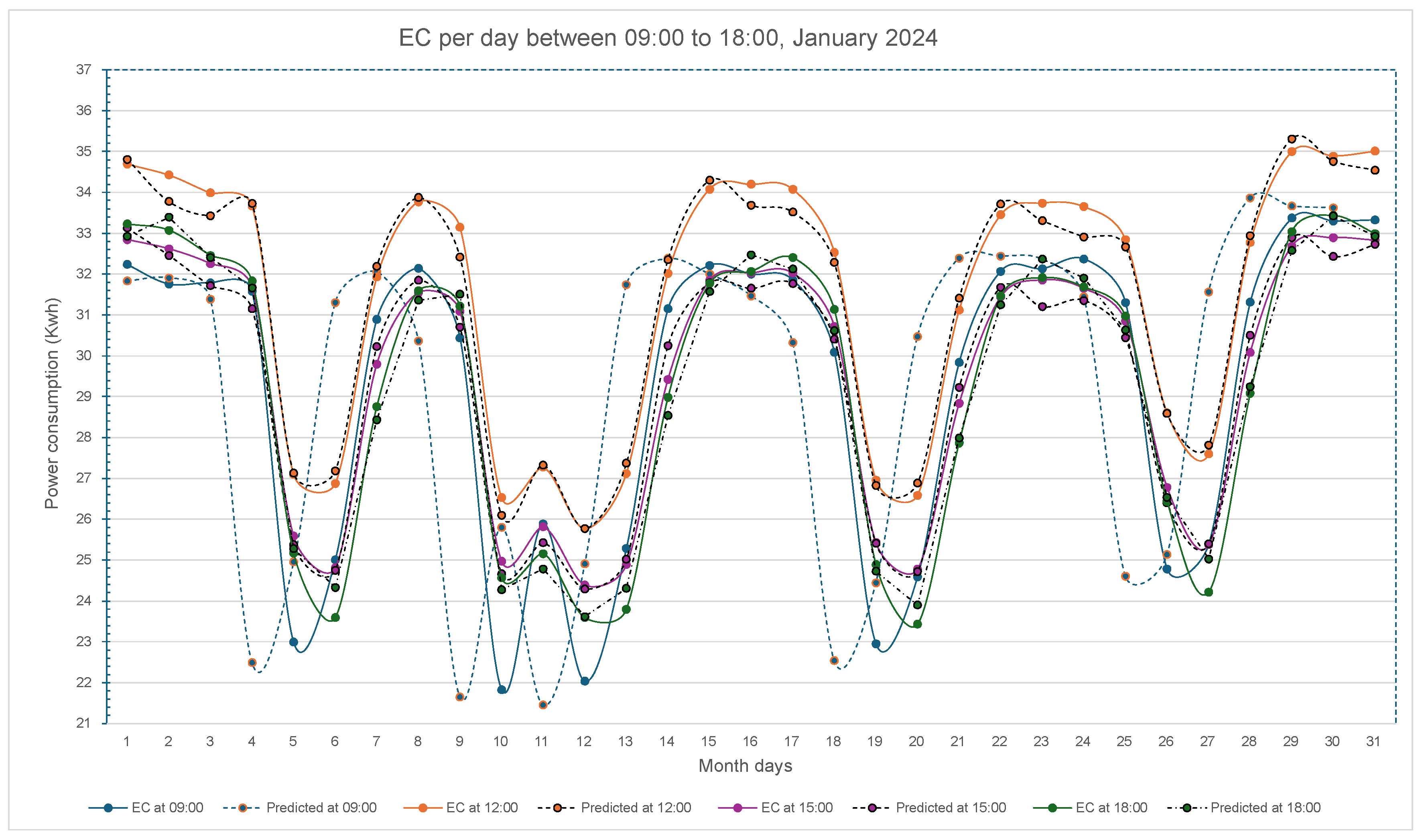

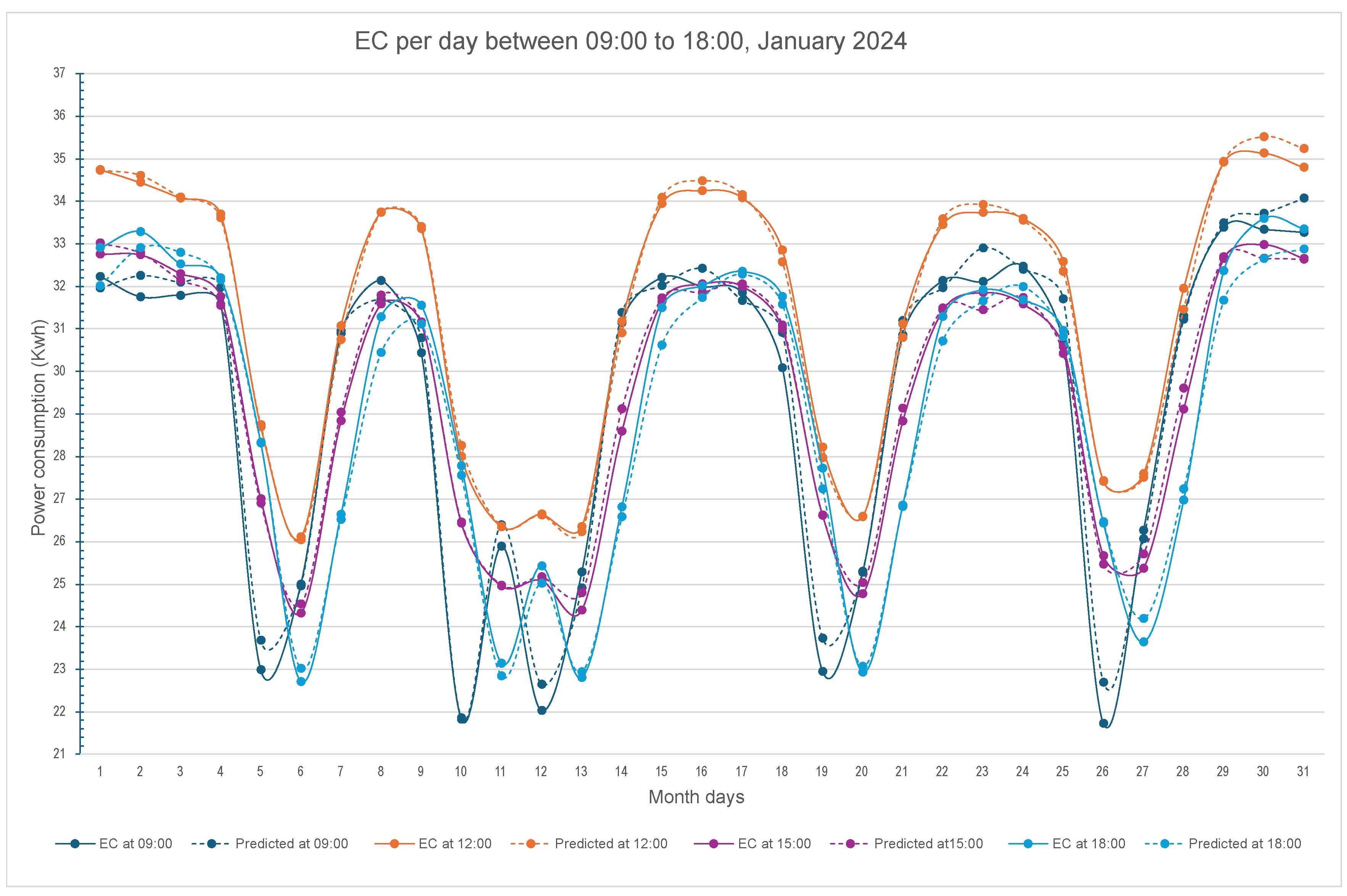

4.1. A Regression Problem: Prediction of the Electrical Consumption of Institution Buildings

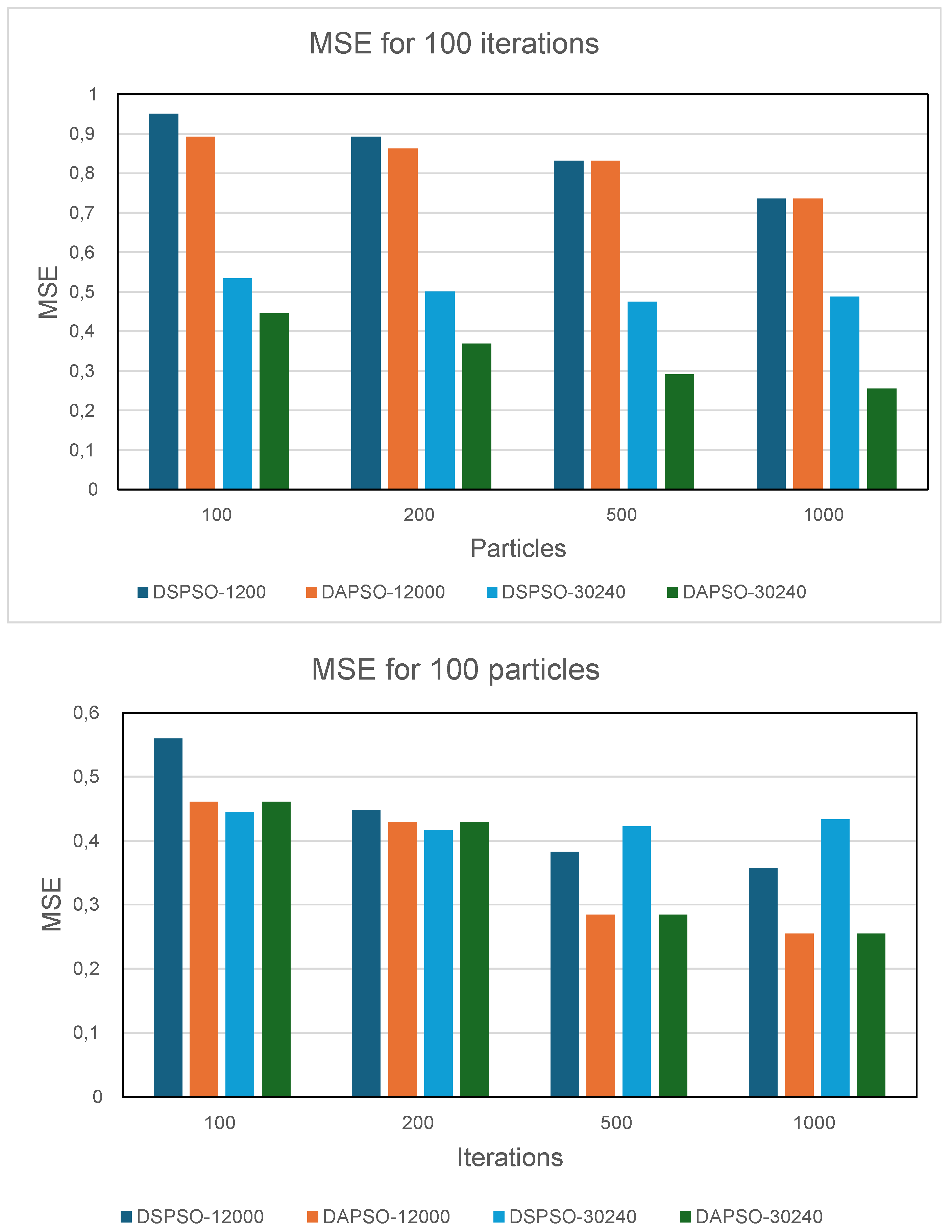

4.2. Performance Evaluation of a Regression Problem Implementation

4.3. A Classification Problem: Binary Prediction of Smoker Status Using Bio-Signals

4.3.1. Statistical Study of Covariates

- Low: values between the 0th percentile and the 25th percentile.

- Medium low: values between the 25th percentile and the 50th percentile.

- Medium high: values between the 50th percentile and the 75th percentile.

- High: values between the 75th percentile and the 100th percentile.
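The four classes can be obtained by cutting a covariate at its quartiles. The sketch below uses only the Python standard library; the function name, the sample values, and the choice to assign a value that falls exactly on a cut point to the lower class are assumptions, since the boundary handling is not specified here.

```python
from statistics import quantiles


def quartile_labels(values):
    """Label each value by the quartile range of the sample it falls into."""
    # Cut points at the 25th, 50th and 75th percentiles of the sample.
    q1, q2, q3 = quantiles(values, n=4, method="inclusive")

    def label(v):
        if v <= q1:
            return "Low"
        if v <= q2:
            return "Medium_Low"
        if v <= q3:
            return "Medium_High"
        return "High"

    return [label(v) for v in values]


# Hypothetical weights in kg, used only to illustrate the binning.
weights = [50, 55, 60, 65, 70, 75, 80, 85]
labels = quartile_labels(weights)
```

With this sample, the two lightest values are labelled `Low` and the two heaviest `High`, with the middle four split between the two medium classes.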

4.3.2. Odds Ratio Calculation
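As a hedged illustration, the odds ratio of a percentile class can be computed from a 2×2 contingency table, assuming the usual definition: the odds of being a smoker inside the class divided by the odds outside it. The function name and the counts below are hypothetical and are not taken from the data set.

```python
def odds_ratio(exposed_pos, exposed_neg, unexposed_pos, unexposed_neg):
    """Odds ratio of a 2x2 table.

    exposed_*   : subjects inside the class (e.g. weight in the Low range)
    unexposed_* : subjects outside the class
    *_pos/_neg  : smokers / non-smokers
    """
    odds_exposed = exposed_pos / exposed_neg
    odds_unexposed = unexposed_pos / unexposed_neg
    return odds_exposed / odds_unexposed


# Hypothetical counts: 20 smokers and 80 non-smokers inside the class,
# 40 smokers and 60 non-smokers outside it.
example_or = odds_ratio(20, 80, 40, 60)
```

A value below 1 indicates that smokers are less frequent inside the class than outside it, and a value above 1 indicates the opposite.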

4.3.3. Relative Risk Calculation
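Under the same 2×2 layout, the relative risk compares the proportion of smokers inside a class with the proportion outside it. The sketch assumes this standard definition; the function name and the counts are hypothetical.

```python
def relative_risk(exposed_pos, exposed_neg, unexposed_pos, unexposed_neg):
    """Relative risk of a 2x2 table: risk inside the class over risk outside it."""
    risk_exposed = exposed_pos / (exposed_pos + exposed_neg)
    risk_unexposed = unexposed_pos / (unexposed_pos + unexposed_neg)
    return risk_exposed / risk_unexposed


# Hypothetical counts: 20 of 100 subjects inside the class are smokers,
# against 40 of 100 outside it.
example_rr = relative_risk(20, 80, 40, 60)
```

Unlike the odds ratio, the relative risk is a ratio of probabilities, so for the same table it lies closer to 1 whenever the outcome is common.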

4.4. Evaluation of Classification Accuracy Based on Neural Networks Trained with the PSO

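- Precision: the probability that an element detected as belonging to a class actually belongs to it. It is given by the equation $\text{Precision} = \frac{TP}{TP + FP}$, where $TP$ and $FP$ are the numbers of true positives and false positives.

- Recall: the probability that a class element present in the ground truth is detected. It is given by the equation $\text{Recall} = \frac{TP}{TP + FN}$, where $TP$ and $FN$ are the numbers of true positives and false negatives.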

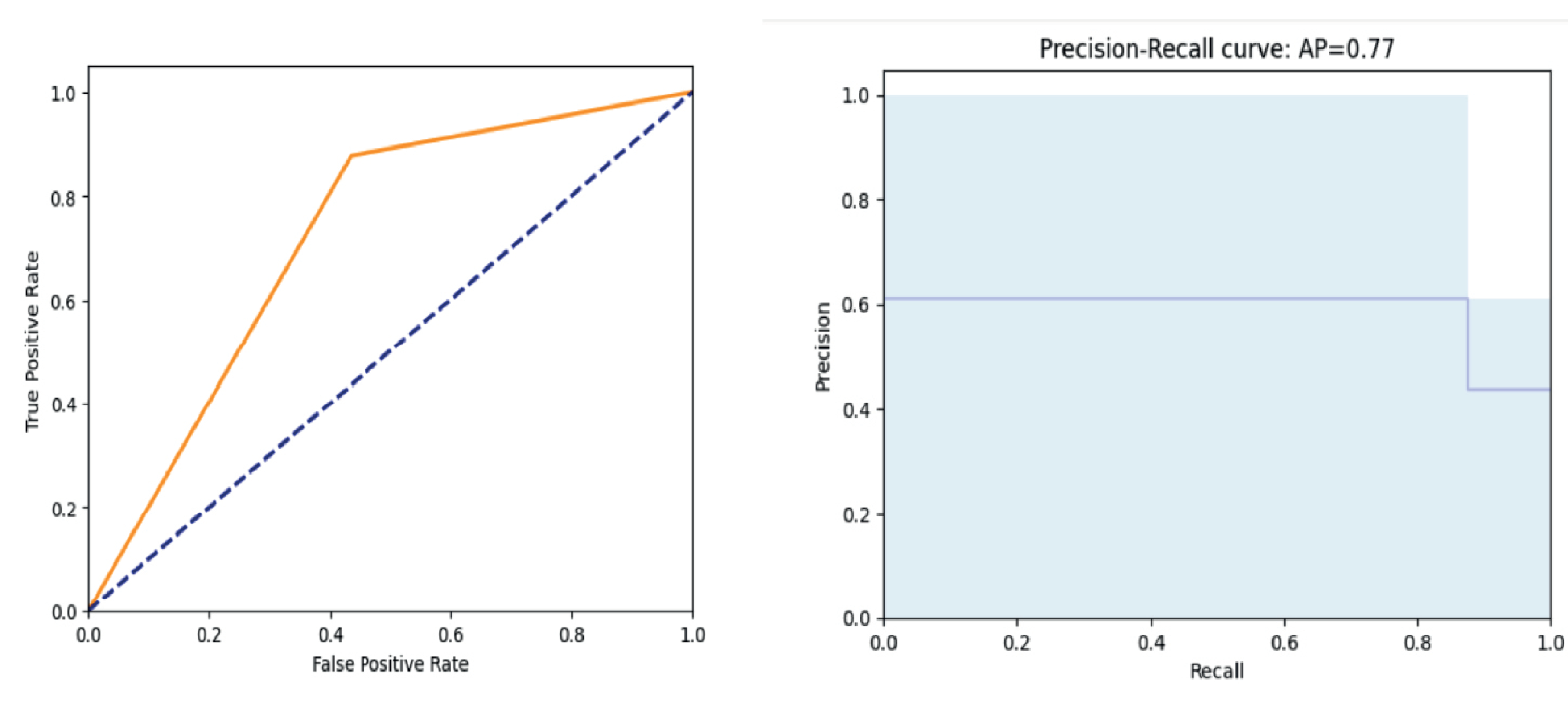

- F1 score: a metric commonly calculated to evaluate the performance of a binary classification model. It is given by the equation $F_1 = 2 \cdot \frac{\text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}}$.

The results obtained, when contrasted with the interpretation of the ROC and Precision/Recall plots (Figure 7), indicate that the binary classification model we have obtained has a balanced performance. The obtained precision of 77% on average indicates that this percentage of positive predictions is correct and that the classification has been performed reliably by the implemented PSO variants. The obtained recall implies that the model also correctly identifies 77% of all positive cases in the ground truth. The F1 score calculated for 100 particles and 100 iterations, 0.7697050147492625, confirms that precision and recall are consistent with each other and balanced, and that the results have also been obtained with good performance.
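The three metrics can be computed from the counts of a confusion matrix, as in the short sketch below. It uses the standard definitions; the function name and the counts are hypothetical and were chosen only so that precision and recall both equal 0.77.

```python
def precision_recall_f1(tp, fp, fn):
    """Return (precision, recall, F1) from true/false positive and false negative counts."""
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Hypothetical counts: 77 true positives, 23 false positives, 23 false negatives.
precision, recall, f1 = precision_recall_f1(tp=77, fp=23, fn=23)
```

Because the F1 score is the harmonic mean of precision and recall, it equals both of them whenever they coincide, which is why balanced values of 0.77 yield an F1 score of about 0.77.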

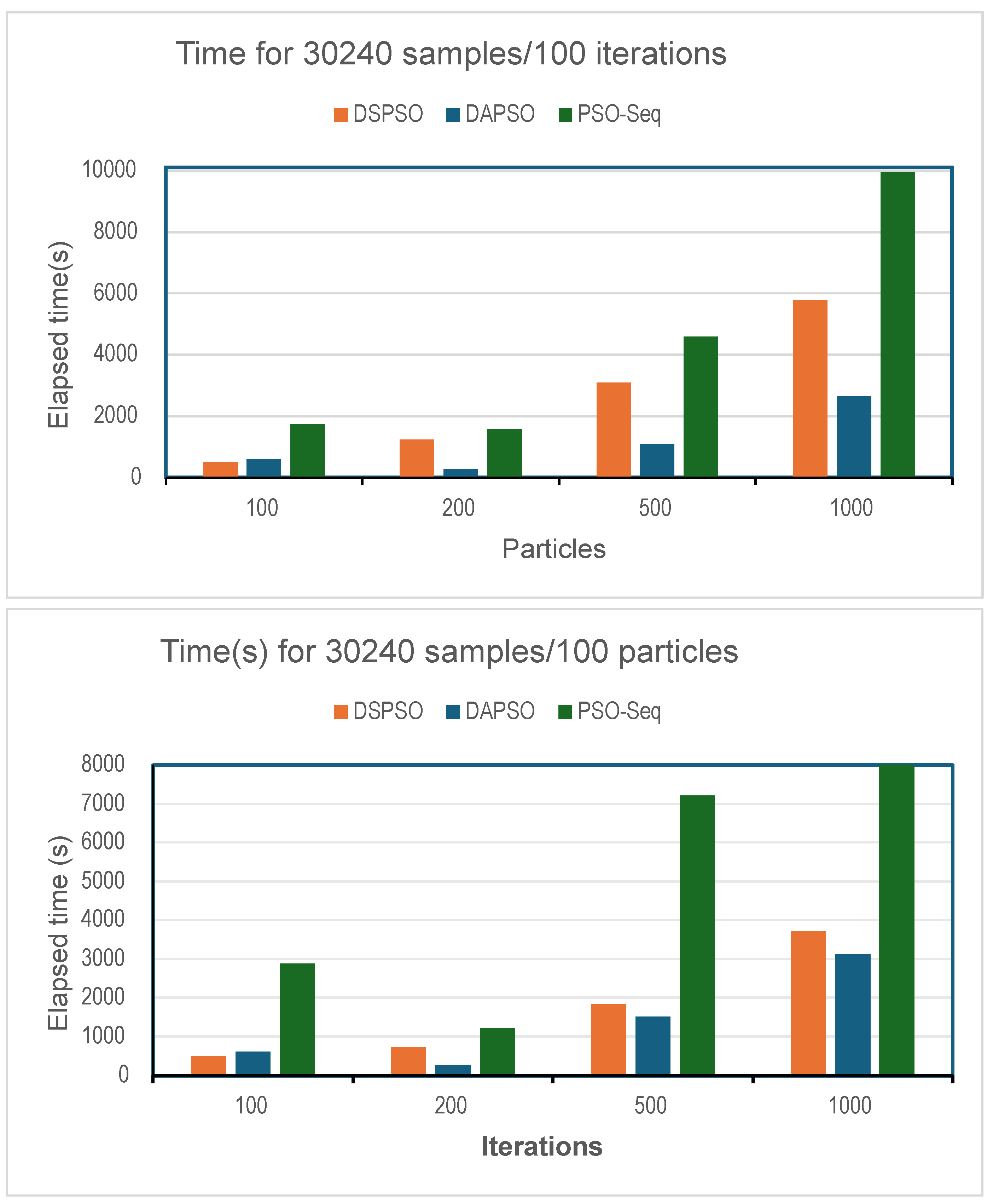

4.5. Assessment of Implementation Performance

5. Conclusions

Funding

Data Availability Statement

Abbreviations

| ANN | Artificial Neural Network |

| DAPSO | Distributed Asynchronous PSO |

| DLNN | Deep Learning Neural Networks |

| DSPSO | Distributed Synchronous PSO |

| EA | Evolutionary Algorithms |

| EC | Energy consumption |

| GPGPU | General-purpose computing on Graphics Processing Units |

| MSE | Mean Squared Error |

| NN | Neural Network |

| PSO | Particle Swarm Optimization |

| RDD | Resilient Distributed Datasets |

Appendix A. DAPSO Implementation

References

1. Daniel Leal Souza, Tiago Carvalho Martins, V.A.D. PSO-GPU: Accelerating Particle Swarm Optimization in CUDA-Based Graphics Processing Units. GECCO11, 2011.

2. Gerhard Venter, J.S.S. A Parallel Particle Swarm Optimization Algorithm Accelerated by Asynchronous Evaluations. 6-th World Congresses of Structural and Multidisciplinary Optimization, 2005.

3. Iruela, J.; Ruiz, L.; Pegalajar, M.; Capel, M. A parallel solution with GPU technology to predict energy consumption in spatially distributed buildings using evolutionary optimization and artificial neural networks. Energy Conversion and Management 2020, 207, 112535.

4. Iruela, J.R.S.; Ruiz, L.G.B.; Capel, M.I.; Pegalajar, M.C. A TensorFlow Approach to Data Analysis for Time Series Forecasting in the Energy-Efficiency Realm. Energies 2021, 14.

5. Busetti, R.; El Ioini, N.; Barzegar, H.R.; Pahl, C. A Comparison of Synchronous and Asynchronous Distributed Particle Swarm Optimization for Edge Computing. Proceedings of the 13th International Conference on Cloud Computing and Services Science–CLOSER. INSTICC, SciTePress, 2023, Vol. I, pp. 194–203.

6. Ruiz, L.; Capel, M.; Pegalajar, M. Parallel memetic algorithm for training recurrent neural networks for the energy efficiency problem. Applied Soft Computing 2019, 76, 356–368.

7. Ruiz, L.; Rueda, R.; Cuéllar, M.; Pegalajar, M. Energy consumption forecasting based on Elman neural networks with evolutive optimization. Expert Systems with Applications 2018, 92, 380–389.

8. Ruiz, L.G.B.; Cuéllar, M.P.; Calvo-Flores, M.D.; Jiménez, M.D.C.P. An Application of Non-Linear Autoregressive Neural Networks to Predict Energy Consumption in Public Buildings. Energies 2016, 9.

9. Pegalajar, M.; Ruiz, L.; Cuéllar, M.; Rueda, R. Analysis and enhanced prediction of the Spanish Electricity Network through Big Data and Machine Learning techniques. International Journal of Approximate Reasoning 2021, 133, 48–59.

10. Criado-Ramón, D.; Ruiz, L.; Pegalajar, M. Electric demand forecasting with neural networks and symbolic time series representations. Applied Soft Computing 2022, 122, 108871.

11. Sahoo, B.M.; Amgoth, T.; Pandey, H.M. Particle swarm optimization based energy efficient clustering and sink mobility in heterogeneous wireless sensor network. Ad Hoc Networks 2020, 106, 102237.

12. Malik, S.; Kim, D. Prediction-Learning Algorithm for Efficient Energy Consumption in Smart Buildings Based on Particle Regeneration and Velocity Boost in Particle Swarm Optimization Neural Networks. Energies 2018, 11.

13. Shami, T.M.; El-Saleh, A.A.; Alswaitti, M.; Al-Tashi, Q.; Summakieh, M.A.; Mirjalili, S. Particle swarm optimization: A comprehensive survey. IEEE Access 2022, 10, 10031–10061.

14. Determination of industrial energy demand in Turkey using MLR, ANFIS and PSO-ANFIS. Journal of Artificial Intelligence and Systems 2021, 3.

15. Subramoney, D.; Nyirenda, C.N. Multi-Swarm PSO Algorithm for Static Workflow Scheduling in Cloud-Fog Environments. IEEE Access 2022, 10, 117199–117214.

16. Wang, J.; Chen, X.; Zhang, F.; Chen, F.; Xin, Y. Building Load Forecasting Using Deep Neural Network with Efficient Feature Fusion. Journal of Modern Power Systems and Clean Energy 2021, 9, 160–169.

17. Yong, Z.; Li-juan, Y.; Qian, Z.; Xiao-yan, S. Multi-objective optimization of building energy performance using a particle swarm optimizer with less control parameters. Journal of Building Engineering 2020, 32, 101505.

18. Ghalambaz, M.; Jalilzadeh, Y.R.; Davami, A.H. Building energy optimization using butterfly optimization algorithm. Thermal Science 2022, 26, 3975–3986.

19. Duran Toksarı, M. Ant colony optimization approach to estimate energy demand of Turkey. Energy Policy 2007, 35, 3984–3990.

20. Sundareswaran, K.; Sankar, P.; Nayak, P.S.R.; Simon, S.P.; Palani, S. Enhanced Energy Output From a PV System Under Partial Shaded Conditions Through Artificial Bee Colony. IEEE Transactions on Sustainable Energy 2015, 6, 198–209.

21. Nazari-Heris, M.; Mohammadi-Ivatloo, B.; Asadi, S.; Kim, J.H.; Geem, Z.W. Harmony search algorithm for energy system applications: an updated review and analysis. Journal of Experimental & Theoretical Artificial Intelligence 2019, 31, 723–749.

22. dos Santos Coelho, L.; Mariani, V.C. Improved firefly algorithm approach applied to chiller loading for energy conservation. Energy and Buildings 2013, 59, 273–278.

23. Nadjemi, O.; Nacer, T.; Hamidat, A.; Salhi, H. Optimal hybrid PV/wind energy system sizing: Application of cuckoo search algorithm for Algerian dairy farms. Renewable and Sustainable Energy Reviews 2017, 70, 1352–1365.

24. Rong-Zhi Qi, Zhi-Jian Wang, S. Y.L. A Parallel Genetic Algorithm Based on Spark for Pairwise Test Suite Generation. Journal of Computer Science and Technology 2015.

25. J. R.S. Iruela, L.G.B. Ruiz, M.C. A parallel solution with GPU technology to predict energy consumption in spatially distributed buildings using evolutionary optimization and artificial neural networks. Energy Conversion and Management 2020.

26. Kennedy, J.; Eberhart, R. Particle swarm optimization. Proceedings of ICNN’95 - International Conference on Neural Networks. IEEE, 1995, Vol. 4, pp. 1942–1948.

27. Panapakidis, I.P.; Dagoumas, A.S. Day-ahead electricity price forecasting via the application of artificial neural network based models. Applied Energy 2016, 172, 132–151.

28. Bouzerdoum, M.; Mellit, A.; Massi Pavan, A. A hybrid model (SARIMA–SVM) for short-term power forecasting of a small-scale grid-connected photovoltaic plant. Solar Energy 2013, 98, 226–235.

29. Lahouar, A.; Ben Hadj Slama, J. Day-ahead load forecast using random forest and expert input selection. Energy Conversion and Management 2015, 103, 1040–1051.

30. Marcel Waintraub, Roberto Schirru, C. M.P. Multiprocessor modeling of parallel Particle Swarm Optimization applied to nuclear engineering problems. Progress in Nuclear Energy 2009.

31. Fan, D.; Lee, J. A Hybrid Mechanism of Particle Swarm Optimization and Differential Evolution Algorithms based on Spark. Transactions on Internet and Information Systems 2019.

32. Yinan Xu, Hui Liu, Z. L. A distributed computing framework for wind speed big data forecasting on Apache Spark. Sustainable Energy Technologies and Assessments 2020.

33. Apache Software Foundation. Apache Spark™ - Unified Engine for large-scale data analytics. https://spark.apache.org, 2018. [Resource online, accessed July 3, 2024].

34. Zaharia, M.; Chowdhury, M.; Das, T.; Dave, A.; Ma, J.; McCauley, M.; Franklin, M.J.; Shenker, S.; Stoica, I. Resilient Distributed Datasets: A Fault-Tolerant Abstraction for In-Memory Cluster Computing. 9th USENIX Symposium on Networked Systems Design and Implementation (NSDI 12); USENIX Association: San Jose, CA, 2012; pp. 15–28.

35. Oh, K.S.; Jung, K. GPU implementation of neural networks. Pattern Recognition 2004, 37, 1311–1314.

| Parameter | Value |

|---|---|

| Number of PSO iterations | 100, 200, 500, 1000 |

| Number of neurons in the input layer | 15 |

| Number of neurons in the hidden layer | 30 |

| Number of particles | 100, 200, 500, 1000 |

| SuperRDDs | 4 |

| Batch size | 10 |

| Interval of particle positions | [-1,1] |

| Particle velocity ranges | [-0.6,0.6] (0.6) |

| w | 1 |

| | 0.8 |

| | 0.2 |

| Variable | Chi-square value | P-value |

|---|---|---|

| height | 35178.19 | 0.0 |

| weight | 20419.24 | 0.0 |

| waist | 10849.84 | 0.0 |

| eyesight(left) | 2819.29 | 0.0 |

| eyesight(right) | 3432.92 | 0.0 |

| hearing(left) | 232.12 | 2.06e-52 |

| hearing(right) | 215.86 | 7.25e-49 |

| systolic | 1090.97 | 3.31e-236 |

| relaxation | 1875.90 | 0.0 |

| fasting blood sugar | 2089.03 | 0.0 |

| Cholesterol | 1215.51 | 3.16e-263 |

| triglyceride | 18319.41 | 0.0 |

| HDL | 10973.96 | 0.0 |

| LDL | 1067.00 | 5.24e-231 |

| hemoglobin | 34114.14 | 0.0 |

| Urine protein | 165.31 | 7.82e-38 |

| serum creatinine | 13429.07 | 0.0 |

| AST | 725.64 | 5.77e-157 |

| ALT | 7484.18 | 0.0 |

| Gtp | 26874.75 | 0.0 |

| dental caries | 1810.41 | 0.0 |
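For reference, a chi-square statistic of the kind listed above can be computed from a contingency table of observed counts, for example percentile classes of a covariate against smoker status. The sketch below implements the standard Pearson statistic in plain Python; the function name and the counts are hypothetical, and whether the study built its tables exactly this way is an assumption.

```python
def chi_square_statistic(table):
    """Pearson chi-square statistic for a contingency table of observed counts."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    total = sum(row_totals)
    statistic = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Expected count if rows and columns were independent.
            expected = row_totals[i] * col_totals[j] / total
            statistic += (observed - expected) ** 2 / expected
    return statistic


# Hypothetical 2x2 table: rows are two classes of a covariate,
# columns are smokers and non-smokers.
example_chi2 = chi_square_statistic([[10, 20], [20, 10]])
```

The p-value then follows from the chi-square distribution with (rows - 1) × (columns - 1) degrees of freedom; very large statistics such as those in the table give p-values that underflow to 0.0 in floating point.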

| Variable | Odds ratio |

|---|---|

| weight(kg)_Low | 0.20961654947072159 |

| weight(kg)_Medium_Low | 1.218644191656455 |

| weight(kg)_Medium_High | 2.097852237464934 |

| weight(kg)_High | 2.8299577733161576 |

| … | |

| fasting blood sugar_Low | 0.6308223587839904 |

| fasting blood sugar_Medium_Low | 0.9541383540661215 |

| fasting blood sugar_Medium_High | 1.173636730372843 |

| fasting blood sugar_High | 1.4547915548094907 |

| … | |

| triglyceride_Low | 0.23407715488962197 |

| triglyceride_Medium_Low | 0.7200827234227996 |

| triglyceride_Medium_High | 1.6370622749650663 |

| triglyceride_High | 3.1767870705800836 |

| HDL_Low | 2.365531709348869 |

| HDL_Medium_Low | 1.4317551296401139 |

| HDL_Medium_High | 0.7715794823329627 |

| HDL_High | 0.3282304510981655 |

| LDL_Low | 1.1629428483574764 |

| LDL_Medium_Low | 1.1890457266489936 |

| LDL_Medium_High | 1.0438026307432897 |

| LDL_High | 0.6844025582281992 |

| … | |

| Variable | Relative Risk |

|---|---|

| weight(kg)_Low | 0.38230844375970974 |

| weight(kg)_Medium_Low | 1.1138572402084614 |

| weight(kg)_Medium_High | 1.4722921983586552 |

| weight(kg)_High | 1.657394753125524 |

| … | |

| fasting blood sugar_Low | 0.7625790496264635 |

| fasting blood sugar_Medium_Low | 0.9737980190980333 |

| fasting blood sugar_Medium_High | 1.0923983061205762 |

| fasting blood sugar_High | 1.2241433259236203 |

| … | |

| triglyceride_Low | 0.38822905484291464 |

| triglyceride_Medium_Low | 0.8257440932973402 |

| triglyceride_Medium_High | 1.2999795981567317 |

| triglyceride_High | 1.7644652462095984 |

| HDL_Low | 1.552294793364521 |

| HDL_Medium_Low | 1.21516619862005 |

| HDL_Medium_High | 0.8603055475766019 |

| HDL_High | 0.49401247683404703 |

| LDL_Low | 1.0871634378867505 |

| LDL_Medium_Low | 1.1002852299103176 |

| LDL_Medium_High | 1.0242964148293716 |

| LDL_High | 0.80065254077625 |

| … | |

| Classifier | Precision (10) | Precision (100) | Recall (10) | Recall (100) | F1-score (10) | F1-score (100) |

|---|---|---|---|---|---|---|

| DSPSO | 0.62 | 0.74 | 0.73 | 0.74 | 0.67 | 0.74 |

| DAPSO | 0.68 | 0.77 | 0.74 | 0.77 | 0.71 | 0.77 |

| Metric | DSPSO | DAPSO |

|---|---|---|

| # cores | 16 | 16 |

| Storage memory | 25 MiB | 135 KiB |

| Max. active tasks | 15 | 5 |

| Total number of created tasks | 2960 | 992 |

| Accumulated task execution time (CPU) | 28800 s | 660 s |

| Accumulated task execution time (GPU) | 384 s | 7 s |

| Average time of 1 parallel run | 1554 s | 1060 s |
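The figures in this table can be turned into relative measures with a few lines of arithmetic. The sketch below uses only the values reported in the table; the variable names are illustrative.

```python
# Average time of one parallel run, in seconds, as reported in the table.
dspso_run_s = 1554
dapso_run_s = 1060

# Ratio of run times: how many times faster DAPSO is than DSPSO per run.
speedup = dspso_run_s / dapso_run_s

# Fraction of the DSPSO run time saved by DAPSO.
time_reduction = (dspso_run_s - dapso_run_s) / dspso_run_s

# Ratio of the total number of tasks created by each variant.
task_ratio = 2960 / 992

print(f"speedup: {speedup:.2f}x, time saved: {time_reduction:.1%}, "
      f"task ratio: {task_ratio:.2f}")
```

On these numbers DAPSO completes a run in roughly two thirds of the DSPSO time while creating about a third as many tasks.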

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).