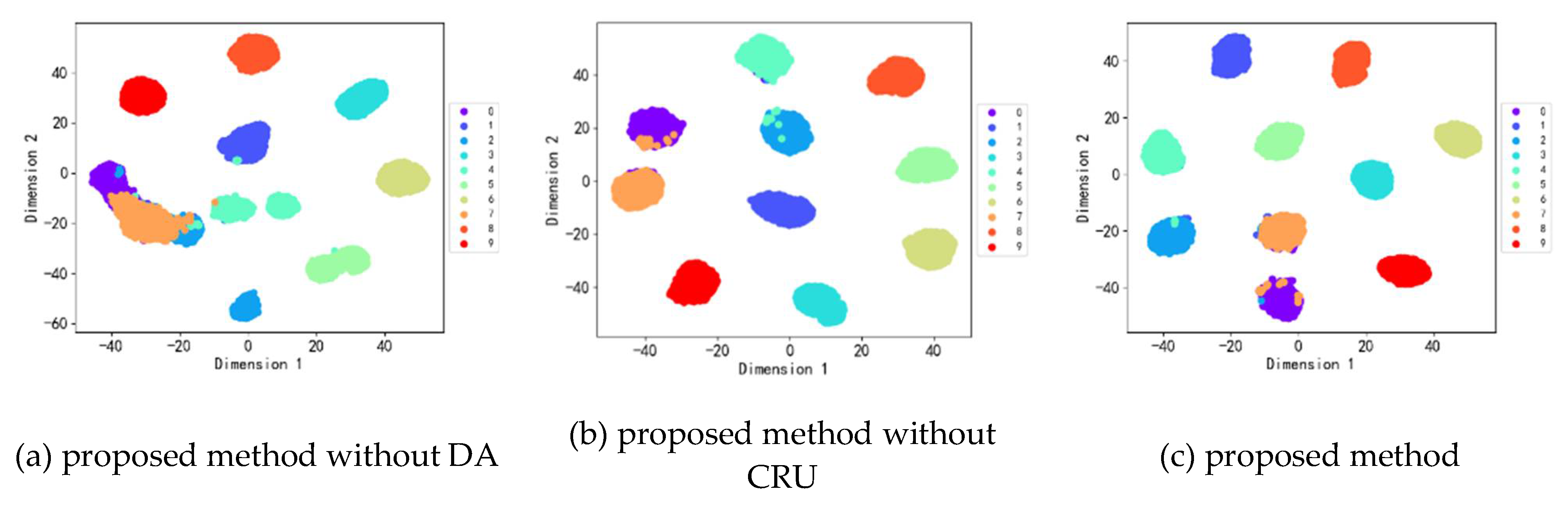

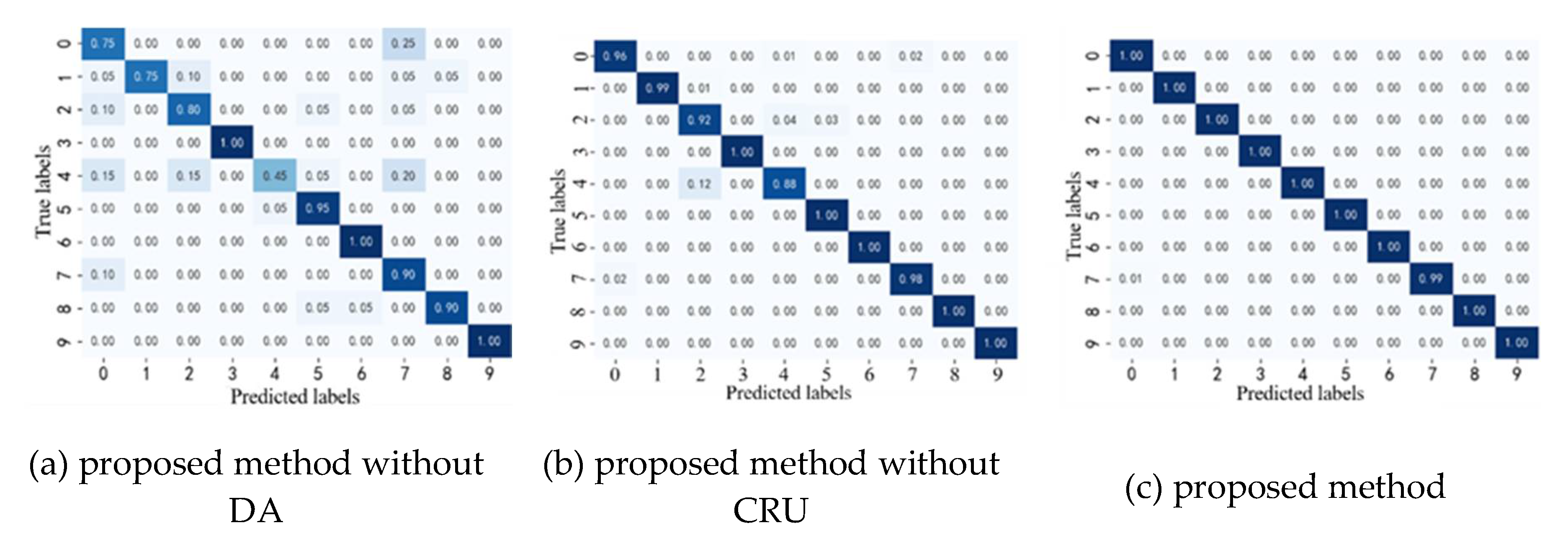

3. Fault Diagnosis Transfer Model

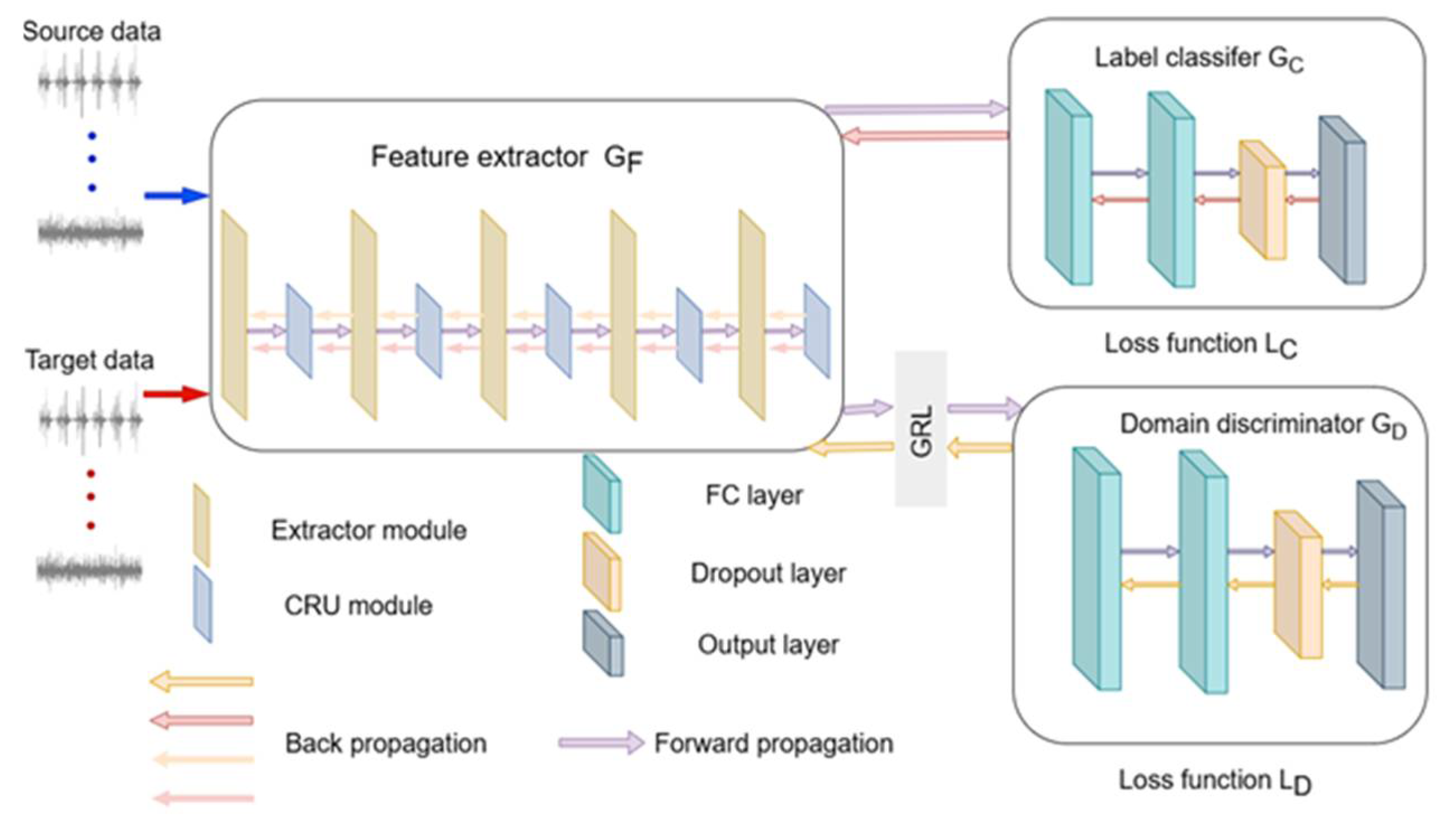

To enable bearing fault diagnosis under different load conditions, this paper proposes a novel transfer learning method that combines the domain adversarial neural network with the channel reconstruction unit (CRU) module. First, the original signal is preprocessed and converted into a one-dimensional tensor, which is then fed into the feature extractor. Subsequently, domain-invariant features are extracted through adversarial learning to enable fault mode recognition for unlabeled target domain samples. The model structure is depicted in Figure 1, with further details described below.

3.1. Feature Extractor

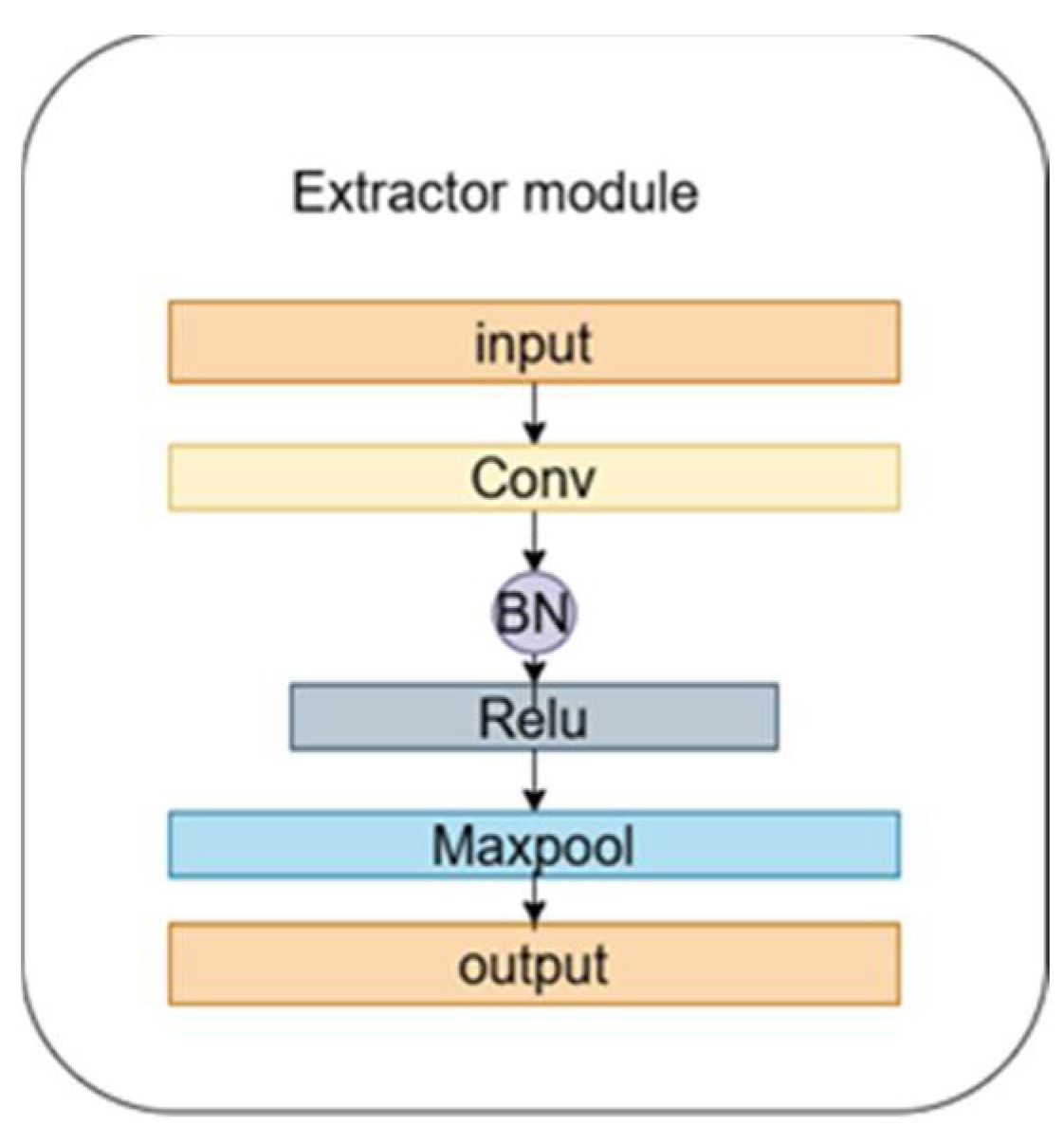

The feature extractor comprises five feature extraction modules and five channel reconstruction modules. Each feature extraction module includes a one-dimensional convolutional layer, a normalization layer, and a maximum pooling layer, as illustrated in Figure 2. The parameters of each feature extraction module are detailed in Table 1.

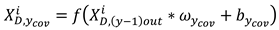

The source domain samples and the target domain samples together form the input sample space, and every sample has the same data length. The convolution operation on the input data is expressed as Equation (5).

In Equation (5), the input term is the output of the previous feature extraction module and the result is the output of the current (yth) module. The weights and biases are the trainable parameters of the convolution, and the activation function is the rectified linear unit (ReLU). The input to the first feature extraction module is a data sample that has undergone signal preprocessing.
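The convolution step described above can be illustrated with a minimal sketch. It assumes a single input channel, no padding, and the cross-correlation convention used by most deep learning libraries; the function name `conv1d_relu` and the example kernel are hypothetical and are not taken from the paper.

```python
def conv1d_relu(x, kernel, bias=0.0, stride=1):
    """Valid (no padding) 1-D convolution followed by ReLU, single channel."""
    k = len(kernel)
    out = []
    for start in range(0, len(x) - k + 1, stride):
        # Weighted sum over one window plus the bias term.
        s = sum(w * v for w, v in zip(kernel, x[start:start + k])) + bias
        # ReLU keeps positive responses and zeroes out the rest.
        out.append(max(0.0, s))
    return out


# Toy preprocessed signal (illustrative values only).
signal = [0.0, 1.0, -2.0, 3.0, 0.5]
features = conv1d_relu(signal, kernel=[1.0, -1.0], bias=0.0)
print(features)
```

In the actual model each module would use many learned kernels over multiple channels; the sketch only shows how one kernel and one bias produce one output channel.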

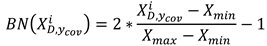

The output of the convolution is then passed to the normalization layer, which prevents order-of-magnitude differences among the input variables from unduly affecting the results. The normalization operation is given by Equation (6).

In Equation (6), the two extreme terms are the maximum and minimum values of the data being normalized. Following normalization, maximum pooling is applied to the normalized features to reduce the number of model parameters and the computational cost. The maximum pooling operation is given by Equation (7).

In Equation (7), the two parameters are the length of the pooling segment and the starting position of the pooling window.
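The normalization and pooling steps can be sketched as follows. The sketch assumes min-max scaling to the range [0, 1], which is consistent with the reference to maximum and minimum values above, and non-overlapping pooling windows; the function names and sample values are illustrative assumptions.

```python
def min_max_normalize(x):
    """Scale values to [0, 1] using the minimum and maximum of the data."""
    lo, hi = min(x), max(x)
    if hi == lo:
        # Constant input: avoid division by zero.
        return [0.0 for _ in x]
    return [(v - lo) / (hi - lo) for v in x]


def max_pool1d(x, width, stride=None):
    """Keep the largest value in each segment of length `width`."""
    stride = width if stride is None else stride
    return [max(x[s:s + width]) for s in range(0, len(x) - width + 1, stride)]


activations = [0.0, 3.0, 0.0, 2.5, 1.5, 6.0]
normalized = min_max_normalize(activations)
pooled = max_pool1d(normalized, width=2)
print(normalized)
print(pooled)
```

With a segment length of 2 and a stride of 2, the pooled output has half the length of its input, which is where the reduction in computation comes from.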

3.2. Basic Theory of CRU

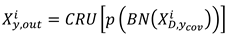

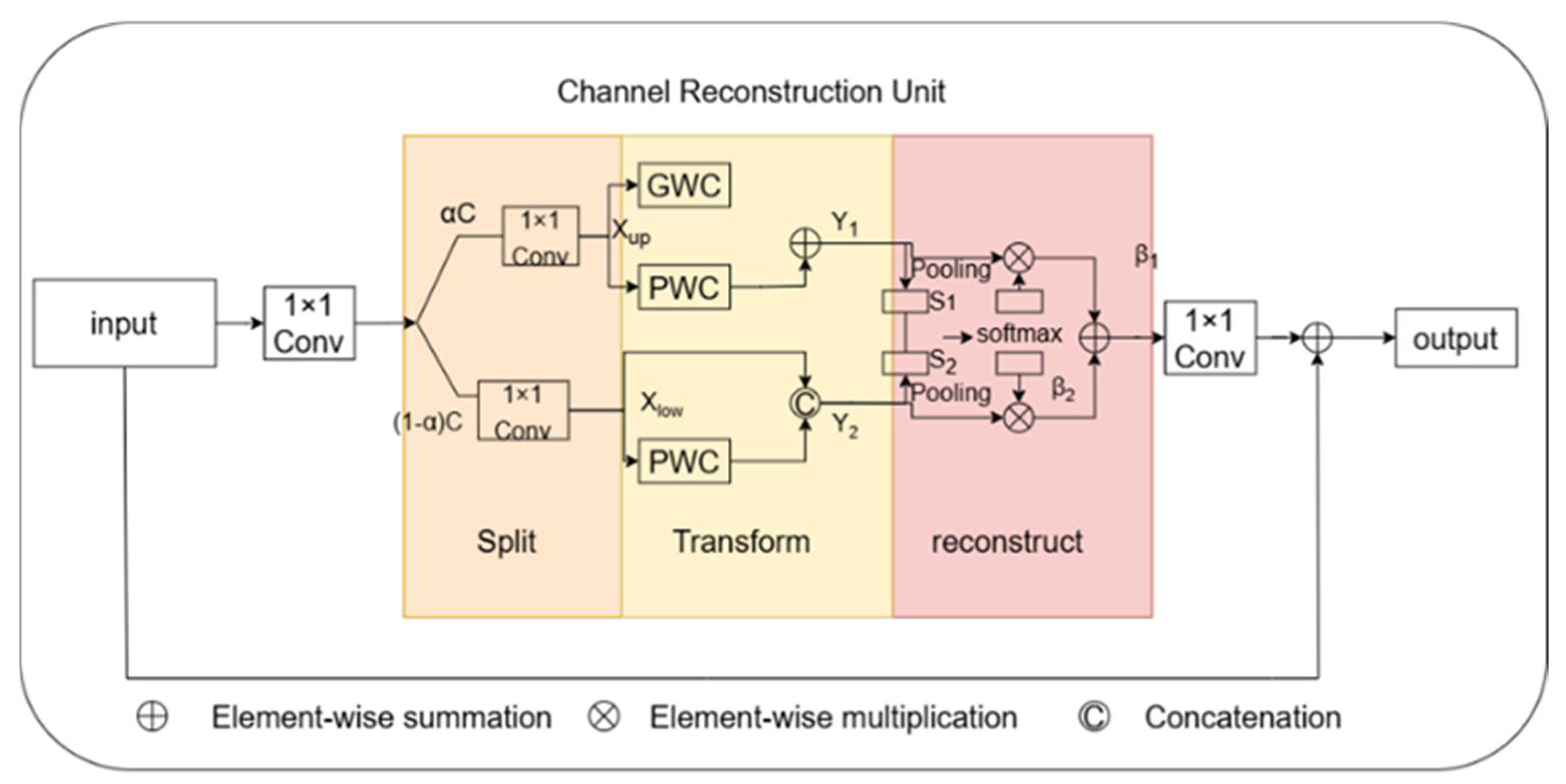

After feature extraction by the yth feature extraction module, the output is fed to the channel reconstruction unit (CRU). The CRU, depicted in Figure 3, was proposed by Li et al. [25]. Unlike standard convolution, the CRU employs splitting, transforming, and reconstruction strategies.

The first is the splitting strategy, which divides the channels of the input feature into two parts according to a splitting ratio, as depicted in Figure 3. The channels of each part are then compressed by convolution to improve computational efficiency; the squeezing ratio is 2. After splitting and squeezing, the feature from the feature extraction module is divided into an upper portion and a lower portion, which then enter the transformation stage. The upper portion is input to the upper transformation stage, where group-wise convolution (GWC) [26] and point-wise convolution (PWC) replace the standard convolution operation to extract deep information. The GWC and PWC operations are both applied to the upper portion, and their outputs are summed to form the merged upper feature. The upper transformation stage is expressed by Equation (8).

In Equation (8), the learnable weight terms are the parameters of the GWC and PWC operations.

The lower portion is input to the lower transformation stage, which applies a PWC operation to generate features with shallow hidden details. These serve as a complement to the rich features generated by the upper stage. Reusing the lower input itself yields additional features without incurring extra cost. Finally, the newly generated features and the reused features are concatenated to form the lower output feature. The lower transformation stage is given by Equation (9).

In Equation (9), the learnable weight term is the parameter of the PWC operation in the lower stage.
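To make the channel bookkeeping of the split and transform stages concrete, the following sketch counts channels at each step. It follows the CRU design of Li et al. as commonly described, in which both branches restore the original channel count; that detail, the splitting ratio of 0.5, and the function name `cru_channel_plan` are assumptions, since this section only states the squeezing ratio of 2.

```python
def cru_channel_plan(channels, alpha=0.5, squeeze_ratio=2):
    """Count channels through the split, squeeze, and transform stages."""
    upper = int(alpha * channels)          # channels routed to the upper branch
    lower = channels - upper               # remaining channels go to the lower branch
    upper_sq = upper // squeeze_ratio      # after squeezing the upper branch
    lower_sq = lower // squeeze_ratio      # after squeezing the lower branch
    lower_new = channels - lower_sq        # channels newly generated by the lower PWC
    return {
        "upper_in": upper_sq,
        "lower_in": lower_sq,
        "upper_out": channels,             # GWC and PWC outputs are summed, same width
        "lower_new": lower_new,
        "lower_out": lower_new + lower_sq, # new features concatenated with reused ones
    }


plan = cru_channel_plan(64)
print(plan)
```

Under these assumptions both branches end with the same number of channels as the input, which is what allows them to be fused channel by channel in the next step.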

After the transformation, the output features

,

of the upper and lower transformation stages are fused using the simplified Sknet method [

27], as depicted in the fusion section in

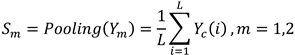

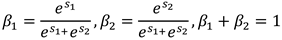

Figure 3. Applying global average pooling to collect global spatial message for

, which is computed as in Equation (10).

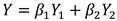

The global channel descriptors of the upper and lower branches are then stacked together, and a channel-wise soft attention operation is applied to generate two feature importance vectors, one for each branch. Finally, guided by these importance vectors, the upper and lower features are merged channel by channel to obtain the channel-refined feature, as given in Equations (11) and (12).

The final output of the channel reconstruction unit for the features received from the feature extraction module can be expressed as Equation (13).
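The fusion step can be sketched in a few lines. The sketch assumes each feature is a list of channels holding one-dimensional values, and that the soft attention is a softmax taken across the two branches for each channel, as in the simplified SKNet scheme; the function names and toy values are hypothetical.

```python
import math


def global_average_pool(feature):
    """One descriptor per channel: the mean over the spatial positions."""
    return [sum(ch) / len(ch) for ch in feature]


def fuse(upper, lower):
    """Merge two branches with per-channel softmax importance weights."""
    s_up = global_average_pool(upper)
    s_low = global_average_pool(lower)
    fused = []
    for ch_up, ch_low, a, b in zip(upper, lower, s_up, s_low):
        m = max(a, b)  # subtract the maximum for numerical stability
        e_up, e_low = math.exp(a - m), math.exp(b - m)
        beta_up = e_up / (e_up + e_low)
        beta_low = 1.0 - beta_up
        fused.append([beta_up * u + beta_low * v for u, v in zip(ch_up, ch_low)])
    return fused


# Two channels, two positions each (illustrative values only).
upper = [[1.0, 3.0], [0.0, 0.0]]
lower = [[2.0, 2.0], [4.0, 0.0]]
fused = fuse(upper, lower)
print(fused)
```

For each channel the two importance weights sum to one, so the branch with the larger average response contributes more to the fused feature.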

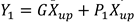

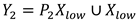

3.3. Fault Recognition Module

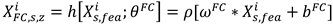

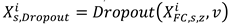

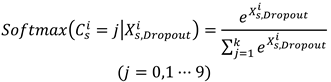

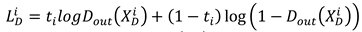

The output of the feature extractor contains feature information learned from both domains; the features learned from the source domain contain the useful knowledge. The fault recognition module consists of two fully connected layers, a dropout layer, and an output layer. The zth fully connected layer processes its input as in Equation (14).

In Equation (14), the parameter term denotes the trainable parameters of the fully connected layer. The dropout applied to the second fully connected layer can be expressed as Equation (15).

In Equation (15), the deactivation (dropout) rate is set to 0.5 in this paper. The softmax function is used as the output layer to identify the fault class and can be expressed as Equation (16).
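The dropout and softmax steps can be illustrated with a short sketch. It assumes inverted dropout, in which the kept activations are rescaled during training; the paper does not say which variant is used. The function names, seed, and example values are illustrative.

```python
import math
import random


def dropout(x, rate=0.5, rng=None, training=True):
    """Randomly zero activations with probability `rate` (inverted dropout)."""
    if not training or rate == 0.0:
        return list(x)
    rng = rng or random.Random()
    keep = 1.0 - rate
    # Kept values are divided by the keep probability to preserve the expected sum.
    return [v / keep if rng.random() < keep else 0.0 for v in x]


def softmax(logits):
    """Turn raw scores into class probabilities that sum to one."""
    m = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


hidden = [0.2, 1.0, -0.5, 2.0]
dropped = dropout(hidden, rate=0.5, rng=random.Random(0))
probs = softmax([2.0, 1.0, 0.1])
predicted_class = probs.index(max(probs))
print(dropped, probs, predicted_class)
```

At inference time `training=False` leaves the activations unchanged, and the predicted fault class is the index of the largest softmax probability.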

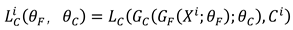

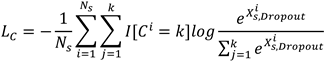

In Equation (16), the output denotes the fault label predicted by the output layer. The loss function of the fault recognition module is expressed as Equation (17).

In Equation (17), the two remaining symbols denote the number of fault categories in the data samples and the indicator function, respectively.
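A loss built from the number of fault categories and an indicator function is typically the cross-entropy loss. The sketch below assumes that form, averaged over the batch; the exact normalization in Equation (17) is not recoverable from this text, and the function name and sample probabilities are hypothetical.

```python
import math


def cross_entropy(probabilities, labels, num_classes, eps=1e-12):
    """Mean cross-entropy written with an explicit indicator function."""
    total = 0.0
    for probs, label in zip(probabilities, labels):
        for k in range(num_classes):
            # Indicator: 1 when class k is the true label, otherwise 0.
            indicator = 1.0 if label == k else 0.0
            # Clamp to avoid log(0) for vanishing probabilities.
            total -= indicator * math.log(max(probs[k], eps))
    return total / len(labels)


batch_probs = [[0.7, 0.2, 0.1], [0.1, 0.8, 0.1]]
batch_labels = [0, 1]
loss = cross_entropy(batch_probs, batch_labels, num_classes=3)
print(loss)
```

Because of the indicator, only the probability assigned to the true class of each sample contributes to the loss.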

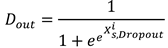

3.4. Domain Discriminator Module

The structure of the domain discrimination module mirrors that of the fault recognition module, but its purpose is to distinguish the domain labels of the samples from the features output by the feature extractor. The feature is first passed through two fully connected layers as in Equation (14), then through a dropout layer as in Equation (15), and finally through an output layer that predicts the domain class of the sample. The output can be expressed as Equation (18).

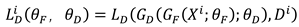

When the output layer can no longer tell which domain a sample comes from, the features learned by the feature extractor are domain-invariant. The loss function of the domain discrimination module is given by Equation (19).

In Equation (19), the domain label takes the value “0” or “1”, and the remaining term is the predicted domain label.
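With binary domain labels, the domain loss is usually a binary cross-entropy. The sketch below assumes that form and, purely for illustration, uses 0 for source samples and 1 for target samples; the paper does not state which value denotes which domain, and the function name is hypothetical.

```python
import math


def domain_loss(predictions, domain_labels, eps=1e-12):
    """Mean binary cross-entropy between predicted and true domain labels."""
    total = 0.0
    for p, d in zip(predictions, domain_labels):
        p = min(max(p, eps), 1.0 - eps)  # clamp to avoid log(0)
        total -= d * math.log(p) + (1 - d) * math.log(1.0 - p)
    return total / len(domain_labels)


labels = [0, 0, 1, 1]  # assumed convention: 0 = source, 1 = target
confused = domain_loss([0.5, 0.5, 0.5, 0.5], labels)
confident = domain_loss([0.1, 0.2, 0.9, 0.8], labels)
print(confused, confident)
```

A discriminator that outputs 0.5 for every sample has a loss of ln 2, which corresponds to the situation where the features carry no domain information.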

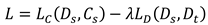

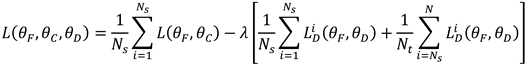

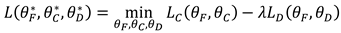

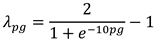

3.5. Model Loss Function

In the adversarial training of the domain adversarial neural network, the total loss function is expressed as Equation (20).

In Equation (20), the equilibrium (trade-off) parameter varies dynamically with the number of iterations, as given in Equation (21).

Here, one term represents the relative training progress, and the other represents the ratio of the current number of iterations to the total number of iterations.
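A schedule of this kind can be sketched as below. It assumes the sigmoid-shaped schedule commonly used with domain adversarial neural networks, with a steepness constant of 10; neither the exact expression nor the constant is recoverable from this text, so both are assumptions, as is the function name `balance_parameter`.

```python
import math


def balance_parameter(iteration, total_iterations, gamma=10.0):
    """Trade-off weight that grows smoothly from 0 towards 1 during training."""
    # Training progress: ratio of the current iteration to the total iterations.
    p = iteration / total_iterations
    return 2.0 / (1.0 + math.exp(-gamma * p)) - 1.0


schedule = [balance_parameter(i, 100) for i in (0, 25, 50, 100)]
print(schedule)
```

Starting near zero suppresses the noisy domain signal early in training, and the weight approaches one as the discriminator becomes more reliable.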