Submitted: 10 January 2024

Posted: 11 January 2024


Abstract

Keywords:

1. Introduction

2. Related Work

2.1. Human Pose Estimation

2.2. Attention-enhanced Convolution

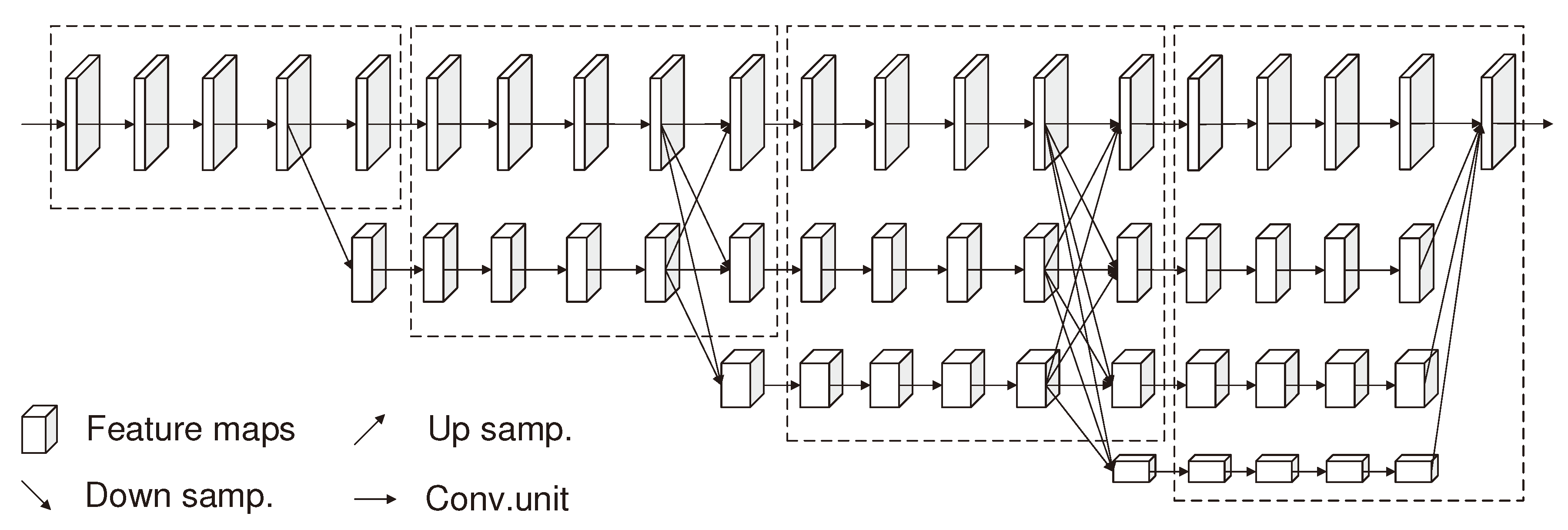

2.3. HRNet

3. Methods

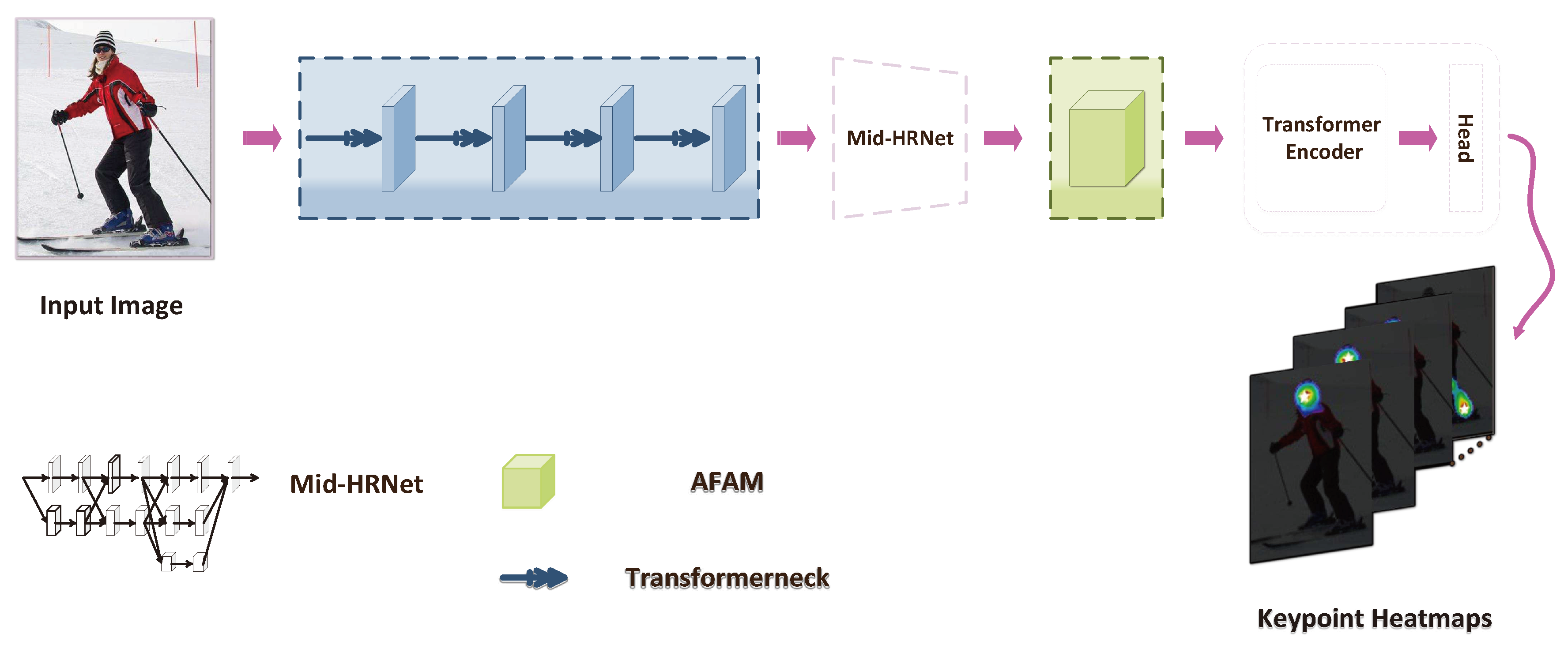

3.1. Context-aware Feature Transformer Network (CaFTNet)

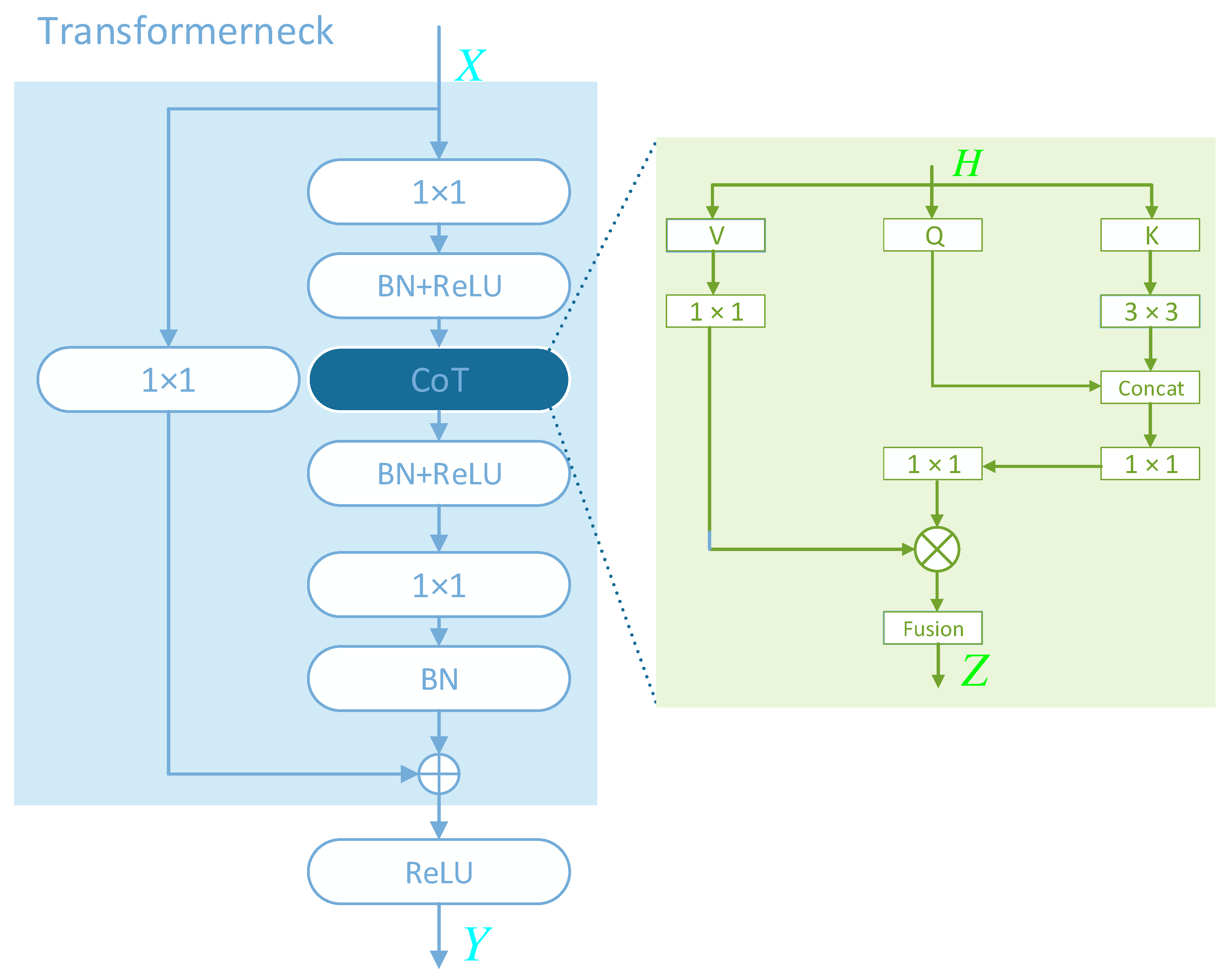

3.2. Transformerneck

3.3. Attention Feature Aggregation Module (AFAM)

4. Experiments

4.1. Model Variants

4.2. Technical Details

4.3. Results on COCO

4.3.1. Dataset and Evaluation Metrics

4.3.2. Quantitative Results

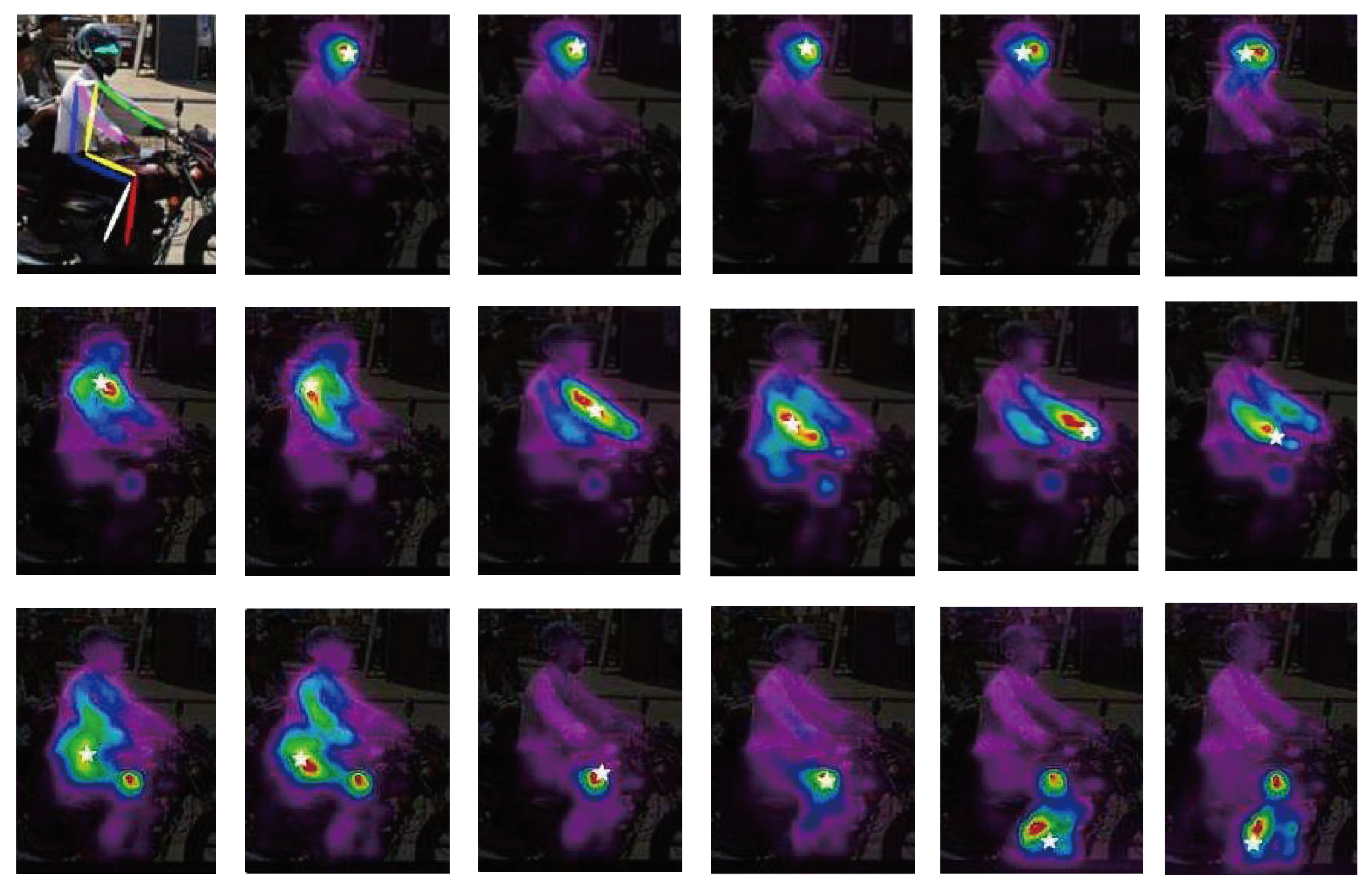

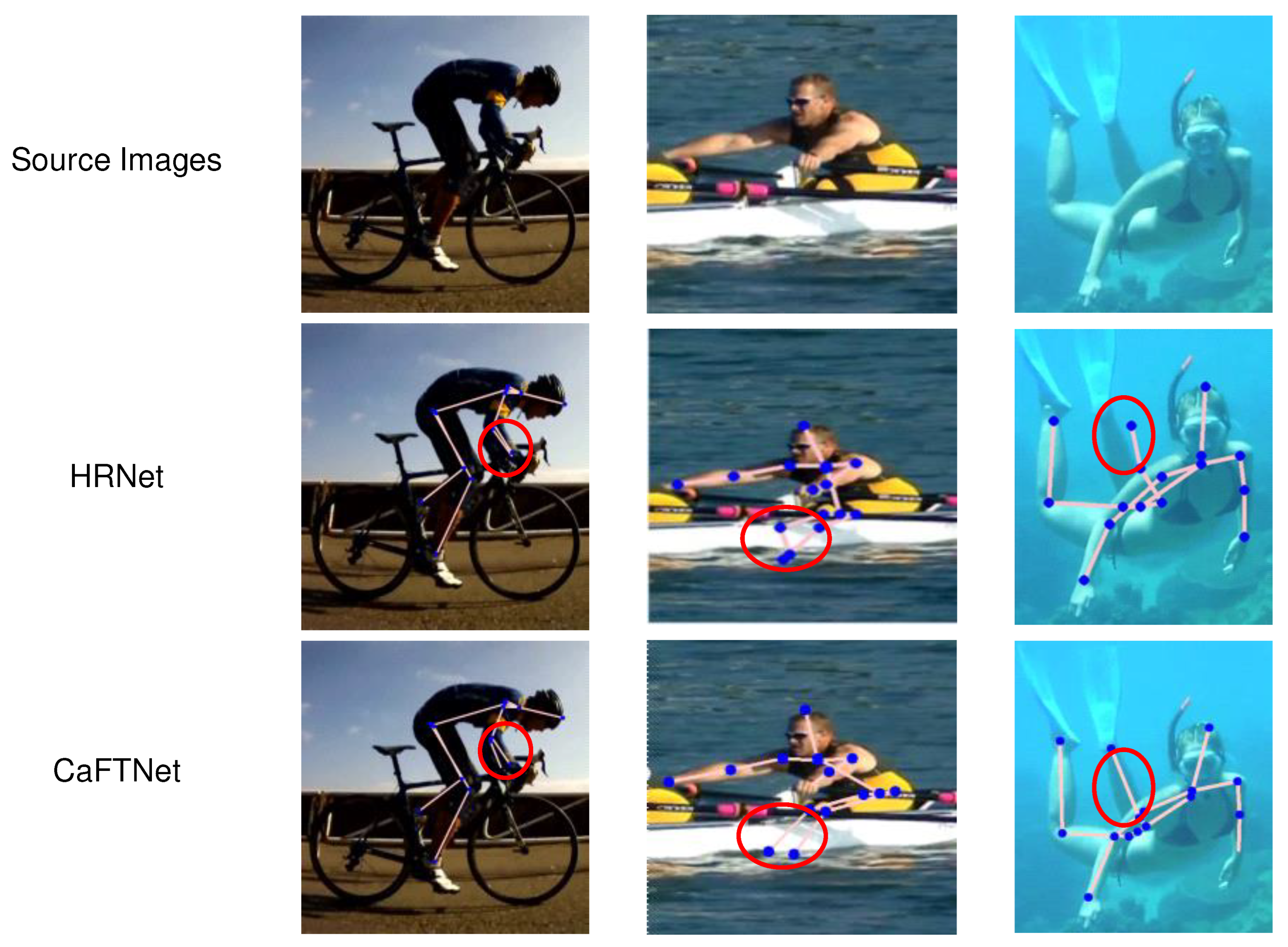

4.3.3. Qualitative Comparisons

4.4. Results on MPII

4.4.1. Dataset and Evaluation Metric

4.4.2. Quantitative Results

4.4.3. Qualitative Comparisons

4.5. Ablation Experiments

4.5.1. Transformerneck

4.5.2. Attention Feature Aggregation Module (AFAM)

| Model | Baseline | SE | ECA | CBAM | AFAM | AP |
|---|---|---|---|---|---|---|
| CaFTNet-R | ✓ | | | | | 72.6 |
| CaFTNet-R | ✓ | ✓ | | | | 72.7 |
| CaFTNet-R | ✓ | | ✓ | | | 72.8 |
| CaFTNet-R | ✓ | | | ✓ | | 73.0 |
| CaFTNet-R | ✓ | | | | ✓ | 73.2 |

| Model | Head | Shoulder | Elbow | Wrist | Hip | Knee | Ankle | Mean | Params |
|---|---|---|---|---|---|---|---|---|---|
| SimpleBaseline-Res50[24] | 96.4 | 95.3 | 89.0 | 83.2 | 88.4 | 84.0 | 79.6 | 88.5 | 34.0M |
| SimpleBaseline-Res101[24] | 96.9 | 95.9 | 89.5 | 84.4 | 88.4 | 84.5 | 80.7 | 89.1 | 53.0M |
| SimpleBaseline-Res152[24] | 97.0 | 95.9 | 90.0 | 85.0 | 89.2 | 85.3 | 81.3 | 89.6 | 68.6M |
| HRNet-W32[14] | 97.1 | 95.9 | 90.3 | 86.4 | 89.1 | 87.1 | 83.3 | 90.3 | 28.5M |
| TokenPose-L/D24[58] | 97.1 | 95.9 | 90.4 | 86.0 | 89.3 | 87.1 | 82.5 | 90.2 | 28.1M |
| CaFTNet-H4 | 97.2 | 96.1 | 90.5 | 86.5 | 89.3 | 86.9 | 82.8 | 90.4 | 17.3M |

5. Conclusions

6. Patents

Funding


References

- Si, C.; Chen, W.; Wang, W.; Wang, L.; Tan, T. An attention enhanced graph convolutional LSTM network for skeleton-based action recognition. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2019, pp. 1227–1236.

- Yang, C.; Xu, Y.; Shi, J.; Dai, B.; Zhou, B. Temporal pyramid network for action recognition. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2020, pp. 591–600.

- Rahnama, A.; Esfahani, A.; Mansouri, A. Adaptive Frame Selection In Two Dimensional Convolutional Neural Network Action Recognition. In Proceedings of the 2022 8th Iranian Conference on Signal Processing and Intelligent Systems (ICSPIS). IEEE, 2022, pp. 1–4.

- Sun, Z.; Ke, Q.; Rahmani, H.; Bennamoun, M.; Wang, G.; Liu, J. Human action recognition from various data modalities: A review. IEEE Transactions on Pattern Analysis and Machine Intelligence 2022.

- Snower, M.; Kadav, A.; Lai, F.; Graf, H.P. 15 keypoints is all you need. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2020, pp. 6738–6748.

- Ning, G.; Pei, J.; Huang, H. LightTrack: A generic framework for online top-down human pose tracking. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops, 2020, pp. 1034–1035.

- Wang, M.; Tighe, J.; Modolo, D. Combining detection and tracking for human pose estimation in videos. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2020, pp. 11088–11096.

- Rafi, U.; Doering, A.; Leibe, B.; Gall, J. Self-supervised keypoint correspondences for multi-person pose estimation and tracking in videos. In Proceedings of the Computer Vision–ECCV 2020: 16th European Conference, Glasgow, UK, August 23–28, 2020, Proceedings, Part XX. Springer, 2020, pp. 36–52.

- Kwon, O.H.; Tanke, J.; Gall, J. Recursive Bayesian filtering for multiple human pose tracking from multiple cameras. In Proceedings of the Asian Conference on Computer Vision, 2020.

- Kocabas, M.; Athanasiou, N.; Black, M.J. VIBE: Video inference for human body pose and shape estimation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2020, pp. 5253–5263.

- Chen, H.; Guo, P.; Li, P.; Lee, G.H.; Chirikjian, G. Multi-person 3D pose estimation in crowded scenes based on multi-view geometry. In Proceedings of the Computer Vision–ECCV 2020: 16th European Conference, Glasgow, UK, August 23–28, 2020, Proceedings, Part III. Springer, 2020, pp. 541–557.

- Kolotouros, N.; Pavlakos, G.; Black, M.J.; Daniilidis, K. Learning to reconstruct 3D human pose and shape via model-fitting in the loop. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2019, pp. 2252–2261.

- Qiu, H.; Wang, C.; Wang, J.; Wang, N.; Zeng, W. Cross view fusion for 3D human pose estimation. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2019, pp. 4342–4351.

- Sun, K.; Xiao, B.; Liu, D.; Wang, J. Deep High-Resolution Representation Learning for Human Pose Estimation. arXiv e-prints, 2019.

- Cai, Y.; Wang, Z.; Luo, Z.; Yin, B.; Du, A.; Wang, H.; Zhang, X.; Zhou, X.; Zhou, E.; Sun, J. Learning delicate local representations for multi-person pose estimation. In Proceedings of the Computer Vision–ECCV 2020: 16th European Conference, Glasgow, UK, August 23–28, 2020, Proceedings, Part III. Springer, 2020, pp. 455–472.

- Fang, H.S.; Xie, S.; Tai, Y.W.; Lu, C. RMPE: Regional multi-person pose estimation. In Proceedings of the IEEE International Conference on Computer Vision, 2017, pp. 2334–2343.

- Newell, A.; Huang, Z.; Deng, J. Associative embedding: End-to-end learning for joint detection and grouping. Advances in Neural Information Processing Systems 2017, 30.

- Papandreou, G.; Zhu, T.; Kanazawa, N.; Toshev, A.; Tompson, J.; Bregler, C.; Murphy, K. Towards accurate multi-person pose estimation in the wild. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2017, pp. 4903–4911.

- Wei, S.E.; Ramakrishna, V.; Kanade, T.; Sheikh, Y. Convolutional pose machines. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2016, pp. 4724–4732.

- Yang, W.; Li, S.; Ouyang, W.; Li, H.; Wang, X. Learning feature pyramids for human pose estimation. In Proceedings of the IEEE International Conference on Computer Vision, 2017, pp. 1281–1290.

- Jiang, W.; Jin, S.; Liu, W.; Qian, C.; Luo, P.; Liu, S. PoseTrans: A Simple Yet Effective Pose Transformation Augmentation for Human Pose Estimation. In Proceedings of the Computer Vision–ECCV 2022: 17th European Conference, Tel Aviv, Israel, October 23–27, 2022, Proceedings, Part V. Springer, 2022, pp. 643–659.

- Tang, W.; Yu, P.; Wu, Y. Deeply learned compositional models for human pose estimation. In Proceedings of the European Conference on Computer Vision (ECCV), 2018, pp. 190–206.

- Ren, F. Distilling Token-Pruned Pose Transformer for 2D Human Pose Estimation. arXiv 2023, arXiv:2304.05548.

- Xiao, B.; Wu, H.; Wei, Y. Simple baselines for human pose estimation and tracking. In Proceedings of the European Conference on Computer Vision (ECCV), 2018, pp. 466–481.

- Simonyan, K.; Zisserman, A. Very deep convolutional networks for large-scale image recognition. arXiv 2014, arXiv:1409.1556.

- Szegedy, C.; Liu, W.; Jia, Y.; Sermanet, P.; Reed, S.; Anguelov, D.; Erhan, D.; Vanhoucke, V.; Rabinovich, A. Going deeper with convolutions. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2015, pp. 1–9.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, L.; Polosukhin, I. Attention Is All You Need. arXiv 2017.

- Raaj, Y.; Idrees, H.; Hidalgo, G.; Sheikh, Y. Efficient online multi-person 2D pose tracking with recurrent spatio-temporal affinity fields. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2019, pp. 4620–4628.

- Luvizon, D.C.; Picard, D.; Tabia, H. Multi-task deep learning for real-time 3D human pose estimation and action recognition. IEEE Transactions on Pattern Analysis and Machine Intelligence 2020, 43, 2752–2764.

- Ye, M.; Shen, J.; Lin, G.; Xiang, T.; Shao, L.; Hoi, S.C. Deep learning for person re-identification: A survey and outlook. IEEE Transactions on Pattern Analysis and Machine Intelligence 2021, 44, 2872–2893.

- Srinivas, A.; Lin, T.Y.; Parmar, N.; Shlens, J.; Vaswani, A. Bottleneck Transformers for Visual Recognition, 2021.

- Li, Y.; Yao, T.; Pan, Y.; Mei, T. Contextual Transformer Networks for Visual Recognition, 2021.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep Residual Learning for Image Recognition. In Proceedings of the 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2016.

- Liu, Z.; Mao, H.; Wu, C.Y.; Feichtenhofer, C.; Darrell, T.; Xie, S. A ConvNet for the 2020s. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2022, pp. 11976–11986.

- Pan, X.; Ge, C.; Lu, R.; Song, S.; Chen, G.; Huang, Z.; Huang, G. On the integration of self-attention and convolution. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2022, pp. 815–825.

- Newell, A.; Yang, K.; Deng, J. Stacked hourglass networks for human pose estimation. In Proceedings of the Computer Vision–ECCV 2016: 14th European Conference, Amsterdam, The Netherlands, October 11–14, 2016, Proceedings, Part VIII. Springer, 2016, pp. 483–499.

- Tan, M.; Le, Q. EfficientNet: Rethinking model scaling for convolutional neural networks. In Proceedings of the International Conference on Machine Learning. PMLR, 2019, pp. 6105–6114.

- Pfister, T.; Charles, J.; Zisserman, A. Flowing ConvNets for human pose estimation in videos. In Proceedings of the IEEE International Conference on Computer Vision, 2015, pp. 1913–1921.

- Chu, X.; Yang, W.; Ouyang, W.; Ma, C.; Yuille, A.L.; Wang, X. Multi-context attention for human pose estimation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2017, pp. 1831–1840.

- Cheng, B.; Xiao, B.; Wang, J.; Shi, H.; Huang, T.S.; Zhang, L. HigherHRNet: Scale-aware representation learning for bottom-up human pose estimation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2020, pp. 5386–5395.

- Lin, T.Y.; Dollár, P.; Girshick, R.; He, K.; Hariharan, B.; Belongie, S. Feature pyramid networks for object detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2017, pp. 2117–2125.

- Hu, J.; Shen, L.; Albanie, S.; Sun, G.; Vedaldi, A. Gather-excite: Exploiting feature context in convolutional neural networks. Advances in Neural Information Processing Systems 2018, 31.

- Hu, J.; Shen, L.; Sun, G. Squeeze-and-excitation networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2018, pp. 7132–7141.

- Wang, Q.; Wu, B.; Zhu, P.; Li, P.; Zuo, W.; Hu, Q. ECA-Net: Efficient channel attention for deep convolutional neural networks. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2020, pp. 11534–11542.

- Woo, S.; Park, J.; Lee, J.Y.; Kweon, I.S. CBAM: Convolutional block attention module. In Proceedings of the European Conference on Computer Vision (ECCV), 2018, pp. 3–19.

- Lin, T.Y.; Maire, M.; Belongie, S.; Hays, J.; Perona, P.; Ramanan, D.; Dollár, P.; Zitnick, C.L. Microsoft COCO: Common objects in context. In Proceedings of the Computer Vision–ECCV 2014: 13th European Conference, Zurich, Switzerland, September 6–12, 2014, Proceedings, Part V. Springer, 2014, pp. 740–755.

- Andriluka, M.; Pishchulin, L.; Gehler, P.; Schiele, B. 2D human pose estimation: New benchmark and state of the art analysis. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2014, pp. 3686–3693.

- Chen, Y.; Wang, Z.; Peng, Y.; Zhang, Z.; Yu, G.; Sun, J. Cascaded pyramid network for multi-person pose estimation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2018, pp. 7103–7112.

- Gao, P.; Lu, J.; Li, H.; Mottaghi, R.; Kembhavi, A. Container: Context aggregation network. arXiv 2021, arXiv:2106.01401.

- Bello, I.; Zoph, B.; Vaswani, A.; Shlens, J.; Le, Q.V. Attention augmented convolutional networks. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2019, pp. 3286–3295.

- Ramachandran, P.; Parmar, N.; Vaswani, A.; Bello, I.; Levskaya, A.; Shlens, J. Stand-alone self-attention in vision models. Advances in Neural Information Processing Systems 2019, 32.

- Zhao, H.; Jia, J.; Koltun, V. Exploring self-attention for image recognition. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2020, pp. 10076–10085.

- Huang, J.; Zhu, Z.; Guo, F.; Huang, G. The devil is in the details: Delving into unbiased data processing for human pose estimation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2020, pp. 5700–5709.

- Zhang, F.; Zhu, X.; Dai, H.; Ye, M.; Zhu, C. Distribution-aware coordinate representation for human pose estimation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2020, pp. 7093–7102.

- Li, W.; Wang, Z.; Yin, B.; Peng, Q.; Du, Y.; Xiao, T.; Yu, G.; Lu, H.; Wei, Y.; Sun, J. Rethinking on multi-stage networks for human pose estimation. arXiv 2019, arXiv:1901.00148.

- Kingma, D.P.; Ba, J. Adam: A method for stochastic optimization. arXiv 2014, arXiv:1412.6980.

- Yang, S.; Quan, Z.; Nie, M.; Yang, W. TransPose: Keypoint localization via transformer. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2021, pp. 11802–11812.

- Li, Y.; Zhang, S.; Wang, Z.; Yang, S.; Yang, W.; Xia, S.T.; Zhou, E. TokenPose: Learning keypoint tokens for human pose estimation. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2021, pp. 11313–11322.

- Sun, X.; Xiao, B.; Wei, F.; Liang, S.; Wei, Y. Integral human pose regression. In Proceedings of the European Conference on Computer Vision (ECCV), 2018, pp. 529–545.

| Model | Backbone | Layers | Heads | FLOPs | Params |
|---|---|---|---|---|---|
| CaFTNet-R | ResNet | 4 | 8 | 5.29G | 5.55M |
| CaFTNet-H3 | HRNet-W32 | 4 | 1 | 8.46G | 17.03M |
| CaFTNet-H4 | HRNet-W48 | 4 | 1 | 8.73G | 17.30M |

| Model | Backbone | AP | AR | FLOPs | Params |
|---|---|---|---|---|---|
| CaFTNet-R | ResNet | 73.7 | 79.0 | 5.29G | 5.55M |
| CaFTNet-H3 | HRNet-W32 | 75.6 | 80.9 | 8.46G | 17.03M |
| CaFTNet-H4 | HRNet-W48 | 76.2 | 81.2 | 8.73G | 17.30M |

| Model | Input Size | AP | AR | FLOPs | Params |
|---|---|---|---|---|---|
| ResNet-50[33] | 256×192 | 70.4 | 76.3 | 8.9G | 34.0M |
| ResNet-101[33] | 256×192 | 71.4 | 76.3 | 12.4G | 53.0M |
| ResNet-152[33] | 256×192 | 72.0 | 77.8 | 35.3G | 68.6M |
| TransPose-R-A3[57] | 256×192 | 71.7 | 77.1 | 8.0G | 5.2M |
| TransPose-R-A4[57] | 256×192 | 72.6 | 78.0 | 8.9G | 6.0M |
| CaFTNet-R | 256×192 | 73.7 | 79.0 | 5.29G | 5.55M |
| HRNet-W32[14] | 256×192 | 74.7 | 79.8 | 7.2G | 28.5M |
| HRNet-W48[14] | 256×192 | 75.1 | 80.4 | 14.6G | 63.6M |
| TransPose-H-A4[57] | 256×192 | 75.3 | 80.3 | 17.5G | 17.3M |
| TransPose-H-A6[57] | 256×192 | 75.8 | 80.8 | 21.8G | 17.5M |
| TokenPose-L/D6[58] | 256×192 | 75.4 | 80.4 | 9.1G | 20.8M |
| TokenPose-L/D24[58] | 256×192 | 75.8 | 80.9 | 11.0G | 27.5M |
| CaFTNet-H3 | 256×192 | 75.6 | 80.9 | 8.46G | 17.03M |
| CaFTNet-H4 | 256×192 | 76.2 | 81.2 | 8.73G | 17.30M |
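The efficiency trade-off in the comparison table above can be made concrete with a little arithmetic. The following is a minimal sketch, not part of the authors' evaluation: it copies the AP, FLOPs, and parameter values of four rows from the table and reports how much smaller CaFTNet-H4 is than each competitor. The names `RESULTS`, `relative_saving`, and `compare` are hypothetical helpers introduced only for illustration.

```python
# Rows copied from the comparison table above (input size 256x192).
# name -> (AP, FLOPs in G, parameters in M)
RESULTS = {
    "HRNet-W48": (75.1, 14.6, 63.6),
    "TransPose-H-A6": (75.8, 21.8, 17.5),
    "TokenPose-L/D24": (75.8, 11.0, 27.5),
    "CaFTNet-H4": (76.2, 8.73, 17.30),
}


def relative_saving(ours: float, theirs: float) -> float:
    """Percentage by which `ours` is smaller than `theirs`."""
    return 100.0 * (theirs - ours) / theirs


def compare(ours: str, baseline: str) -> dict:
    """AP gain and relative FLOPs/parameter savings of `ours` over `baseline`."""
    ap_o, flops_o, params_o = RESULTS[ours]
    ap_b, flops_b, params_b = RESULTS[baseline]
    return {
        "ap_gain": round(ap_o - ap_b, 1),
        "flops_saving_pct": round(relative_saving(flops_o, flops_b), 1),
        "params_saving_pct": round(relative_saving(params_o, params_b), 1),
    }


if __name__ == "__main__":
    for name in RESULTS:
        if name != "CaFTNet-H4":
            print(name, compare("CaFTNet-H4", name))
```

Under these table values, CaFTNet-H4 gains 1.1 AP over HRNet-W48 while using roughly 40% fewer FLOPs and about 73% fewer parameters. The percentages are derived quantities and are only as accurate as the rounded figures in the table.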

| Model | Input Size | AP | AP50 | AP75 | APM | APL | Params |
|---|---|---|---|---|---|---|---|
| G-RMI[18] | 357×257 | 64.9 | 85.5 | 71.3 | 62.3 | 70.0 | 42.6M |
| Integral[59] | 256×256 | 67.8 | 88.2 | 74.8 | 63.9 | 74.0 | 45.0M |
| CPN[48] | 384×288 | 72.1 | 91.4 | 80.0 | 68.7 | 77.2 | 58.8M |
| RMPE[16] | 320×256 | 72.3 | 89.2 | 79.1 | 68.0 | 78.6 | 28.1M |
| SimpleBaseline[24] | 384×288 | 73.7 | 91.9 | 81.8 | 70.3 | 80.0 | 68.6M |
| HRNet-W32[14] | 384×288 | 74.9 | 92.5 | 82.8 | 71.3 | 80.9 | 28.5M |
| HRNet-W48[14] | 256×192 | 74.2 | 92.4 | 82.4 | 70.9 | 79.7 | 63.6M |
| TransPose-H-A4[57] | 256×192 | 74.7 | 91.6 | 82.2 | 71.4 | 80.7 | 17.3M |
| TransPose-H-A6[57] | 256×192 | 75.0 | 92.2 | 82.3 | 71.3 | 81.1 | 17.5M |
| TokenPose-L/D6[58] | 256×192 | 74.9 | 90.0 | 81.8 | 71.8 | 82.4 | 20.8M |
| TokenPose-L/D24[58] | 256×192 | 75.1 | 90.3 | 82.5 | 72.3 | 82.7 | 27.5M |
| CaFTNet-H3 | 256×192 | 75.0 | 90.0 | 82.0 | 71.5 | 82.5 | 17.03M |
| CaFTNet-H4 | 256×192 | 75.5 | 90.4 | 82.8 | 72.5 | 83.3 | 17.30M |

| Model | Bottleneck | Transformerneck | AP |
|---|---|---|---|
| CaFTNet-R | ✓ | | 72.6 |
| CaFTNet-R | | ✓ | 73.2 |
| CaFTNet-H | ✓ | | 75.3 |
| CaFTNet-H | | ✓ | 75.7 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).