Submitted: 15 December 2023

Posted: 18 December 2023


Abstract

Keywords:

1. Introduction

1.1. Motivation

1.2. Empathy Modeling

1.3. Structure of the Paper

2. Materials and Methods

2.1. Empathy Theory

- Emotional and cognitive empathy model – the model was developed from medical and neuroscientific research on the human brain and is grounded in brain structure. It assumes that empathy can be divided into two parts: 1) a part responsible for recognizing and reacting to emotions; 2) a part responsible for the cognitive, more logical, and deductive mechanisms of understanding the inner states of others [16].

- Russian doll model – the model assumes that empathy is learned during human life and resembles a Russian doll, with layers corresponding to different levels of understanding others. The innermost layers are mimicry and automatic emotional reactions, the next layers are understanding others’ feelings, and the outer layers are taking the perspective of others, sympathizing, and experiencing schadenfreude [17].

- Multi-dimensional model – the model assumes that empathy has four dimensions: antecedents, processes, intrapersonal outcomes, and interpersonal outcomes. Antecedents encompass the agent’s characteristics: biological capacities, learning history, and situation. Processes produce empathetic behaviours through non-cognitive mechanisms, simple cognitive mechanisms, and advanced cognitive mechanisms. Intrapersonal outcomes concern whether the agent resonates with the empathy target, and interpersonal outcomes are relationship-related [18].

2.2. Available Experimental Environments

- Stand-alone robots, allowing the construction and modeling of swarm behavior.
  - Kilobot [3] is a swarm-adapted robot with a diameter of 3.3 cm, developed in 2010 at Harvard University. It operates in a swarm of up to a thousand units, carrying out user-programmed commands. The total cost of Kilobot parts was less than $15. Kilobots move by vibration. In addition, they can recognize light intensity, communicate, and measure the distance to nearby units. The project is no longer actively developed, but it remains popular among researchers.
  - e-puck2 [39] is a mini mobile robot with a diameter of 7 cm, developed in 2018 at the Swiss Federal Institute of Technology in Lausanne. It supports Wi-Fi and USB connectivity. Its numerous sensors include IR proximity, sound, an IMU, a distance sensor, and a camera. The project is developed as open source and open hardware.
  - MONA [40] is an open-hardware, open-source swarm research robotic platform developed in 2017 at the University of Manchester. MONA is a small, round robot with a diameter of 8 cm, equipped with 5 IR transmitters and based on the Arduino architecture.
  - Colias [41] is an inexpensive micro-robot with a diameter of 4 cm for swarm simulation, developed in 2012 at the University of Lincoln. Long-range infrared modules with adjustable output power allow the robot to communicate with its immediate neighbors at a range of 0.5 cm to 2 m. The robot has two boards: an upper board responsible for high-level functions (such as communication), and a lower board for low-level functions such as power management and motion control.
  - SwarmUS [42] is a project that helps create swarms of mobile robots using existing devices. It is a generic software platform that allows researchers and robotics enthusiasts to easily deploy code on their robots. SwarmUS provides the basic infrastructure needed for robots to form a swarm: a decentralized communication stack and a localization module that helps robots locate each other without the need for a common reference. The project is not in development as of 2021.

- Robot simulation software.
  - AWS Robomaker is a cloud-based simulation service released in 2018 by Amazon, with which robotics developers can run, scale, and automate simulations without managing any infrastructure, and create user-defined, random 3D environments. The simulation service can speed up application testing and generate hundreds of new worlds from user-defined templates.
  - CoppeliaSim [1] is a robotics simulator with an integrated development environment. It is based on the concept of distributed control: each object/model can be individually controlled using a built-in script, plug-in, ROS node, remote API client, or other custom solution. This makes it versatile and well suited to multi-robot modeling applications. It is used for rapid algorithm development, simulation and automation of complex processes, rapid prototyping and verification, and robotics-related education.
  - EyeSim [43] is a virtual reality mobile robot simulator based on the Unity engine, which can simulate all the main functions of RoBIOS-7. Users can build custom 3D simulation environments, place any number of robots, and add custom objects to the simulation. Thanks to Unity’s physics engine, robot motion simulations are highly realistic. Users can also add bugs to the simulation, using built-in simulated bug functions.

- Comprehensive services including simulator and hardware platform.
  - AWS DeepRacer is a 1/18 scale fully autonomous racing car designed in 2017 by Amazon and controlled by reinforcement learning algorithms. The service offers a graphical user interface that can be used to train a model and to evaluate its performance in a simulator. The vehicle itself is Wi-Fi enabled and can drive autonomously on a physical track using a model created in simulation.
  - Kilogrid [44] is an open-source Kilobot robot virtualization and tracking environment. It was designed in 2016 at the Free University of Brussels to extend Kilobot’s sensorimotor capabilities, simplify the task of collecting data during experiments, and provide researchers with a tool to precisely control the experiment’s configuration and parameters. Kilogrid leverages the robot’s infrared communication capabilities to provide a reconfigurable environment. In addition, Kilogrid enables researchers to automatically collect data during an experiment, simplifying the design of collective behavior and its analysis.

3. Results

3.1. Artificial Empathy of a Swarm

3.1.1. Egoistic Behaviour Evaluation Module

3.1.2. Artificially Empathetic Behaviour Evaluation Module

3.1.3. Memory Module

3.1.4. Decision Making

3.1.5. Learning

3.2. Simulation Results

3.2.1. Problem Description

- Call for help

- Encircling the rat

- Helping

- Another robot nearby

- Rat nearby

- Detection of a rat in the warehouse – solitary pursuit
  - Robot 1 patrols the warehouse
  - Robot 1 notices a rat
  - Robot 1 starts chasing the rat
  - Robot 1 catches the rat, meaning it approaches the rat to a certain distance

- Detection of a rat in the warehouse – pursuit handover
  - Robot 1 patrols the warehouse
  - Robot 1 notices a rat in the adjacent area
  - Robot 1 lights up the appropriate color on the LED tower to inform Robot 2 that there is a rat in Robot 2’s area
  - Robot 2, noticing the appropriate LED color, starts chasing the rat
  - Robot 2 catches the rat, meaning it approaches the rat to a certain distance

- Detection of a rat in the warehouse – collaboration
  - Robot 1 patrols the warehouse
  - Robot 1 notices a rat
  - Robot 1 starts chasing the rat
  - The rat goes beyond Robot 1’s patrol area
  - Robot 1 lights up the appropriate color on the LED tower to inform Robot 2 that the rat entered its area
  - Robot 2, noticing the appropriate LED color, continues chasing the rat
  - Robot 2 catches the rat, meaning it approaches the rat to a certain distance

- Change of grain color
  - Robot 1 patrols the warehouse
  - Robot 1 notices that the grain color is different than it should be
  - Robot 1 records the event in a report
  - Robot 1 continues patrolling

- Change of grain color – uncertainty
  - Robot 1 patrols the warehouse
  - Robot 1 notices that the grain color is possibly different than it should be – uncertain information
  - Robot 1 lights up the appropriate color on the LED tower
  - Robot 2, noticing the appropriate LED color, expresses a willingness to help and approaches Robot 1
  - Robot 2 from the adjacent area checks the grain color and confirms or denies Robot 1’s decision
  - Robot 1 records the event in a report if confirmed by Robot 2
  - Robot 2 from the adjacent area returns and continues patrolling
  - Robot 1 also continues patrolling

- Weak battery
  - Robot 1 has a weak battery
  - Robot 1 lights up the appropriate color on the LED tower, expressing a desire to recharge its battery
  - Robot 2, noticing the appropriate LED color, agrees to let Robot 1 recharge the battery
  - Robot 1 goes to recharge
  - Robot 2 additionally takes over Robot 1’s area for patrolling

- Exchange of patrol zones
  - Robot 1 has passed through its patrol area several times without any events
  - Robot 1 lights up the appropriate color on the LED tower, expressing a desire to exchange the patrol area
  - Robot 2, noticing the appropriate LED color, expresses a desire to exchange the patrol area
  - Robot 1 and Robot 2 exchange patrol areas
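The rat-detection scenarios above share one pattern: a robot observes an event, optionally signals it with an LED color, and its neighbor reacts to that color. The following Python sketch illustrates the pursuit handover and collaboration scenarios as a small state machine. It is only an illustration under stated assumptions: the `State` and `Led` names, the `Robot` class, and the rules in `step` are hypothetical and are not taken from the authors' implementation.

```python
from dataclasses import dataclass
from enum import Enum, auto


class State(Enum):
    PATROL = auto()
    CHASE = auto()
    DONE = auto()


class Led(Enum):
    OFF = auto()
    RAT_IN_NEIGHBOUR_AREA = auto()  # hypothetical color code


@dataclass
class Robot:
    name: str
    state: State = State.PATROL
    led: Led = Led.OFF


def step(robot, sees_rat, rat_in_own_area, rat_within_catch_distance, neighbour_led):
    """Advance one robot by one decision step (illustrative rules only)."""
    if robot.state is State.PATROL:
        if sees_rat and rat_in_own_area:
            robot.state = State.CHASE                # solitary pursuit
        elif sees_rat:
            robot.led = Led.RAT_IN_NEIGHBOUR_AREA    # pursuit handover
        elif neighbour_led is Led.RAT_IN_NEIGHBOUR_AREA:
            robot.state = State.CHASE                # react to the neighbor's signal
    elif robot.state is State.CHASE:
        if rat_within_catch_distance:
            robot.state = State.DONE                 # rat caught
        elif not rat_in_own_area:
            robot.led = Led.RAT_IN_NEIGHBOUR_AREA    # rat left the patrol area
            robot.state = State.PATROL
```

In the collaboration scenario, Robot 1 would move from `PATROL` to `CHASE`, switch its LED on and return to `PATROL` once the rat leaves its area, after which Robot 2 reads that LED value as `neighbour_led` and takes over the chase.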

3.2.2. Implementation

3.2.3. Results

- Egoistic, two rats. Shortly after starting a patrol, both robots spot the same rat and start chasing it. Meanwhile, the second rat destroys the grain located in the middle of the arena. After neutralizing the first rat, one of the robots begins chasing the second pest.

- Empathetic, two rats. The robot on the right spots a rat and signals it with an LED strip. The second robot, noticing this, continues to patrol the surroundings in search of other pests. After a while, it detects the second rat and starts following it. As a result, both rats are neutralized and grain loss is reduced.

- Egoistic, robots run out of battery. Robots detect the same rat. During the chase, the robots interfere with each other, making it difficult to follow and neutralize the rat. Eventually, the rat is neutralized, but before the robots can spot and begin their pursuit of the other pest, both of them run out of battery and the second rat escapes.

- Empathetic, low battery help. The robot on the right starts chasing the detected rat. During this action, the agent signals with an LED strip that it needs assistance, due to a low battery level. The other robot notices this and decides to help to catch the weaker rat. After neutralizing it, the second robot starts searching for other pests.

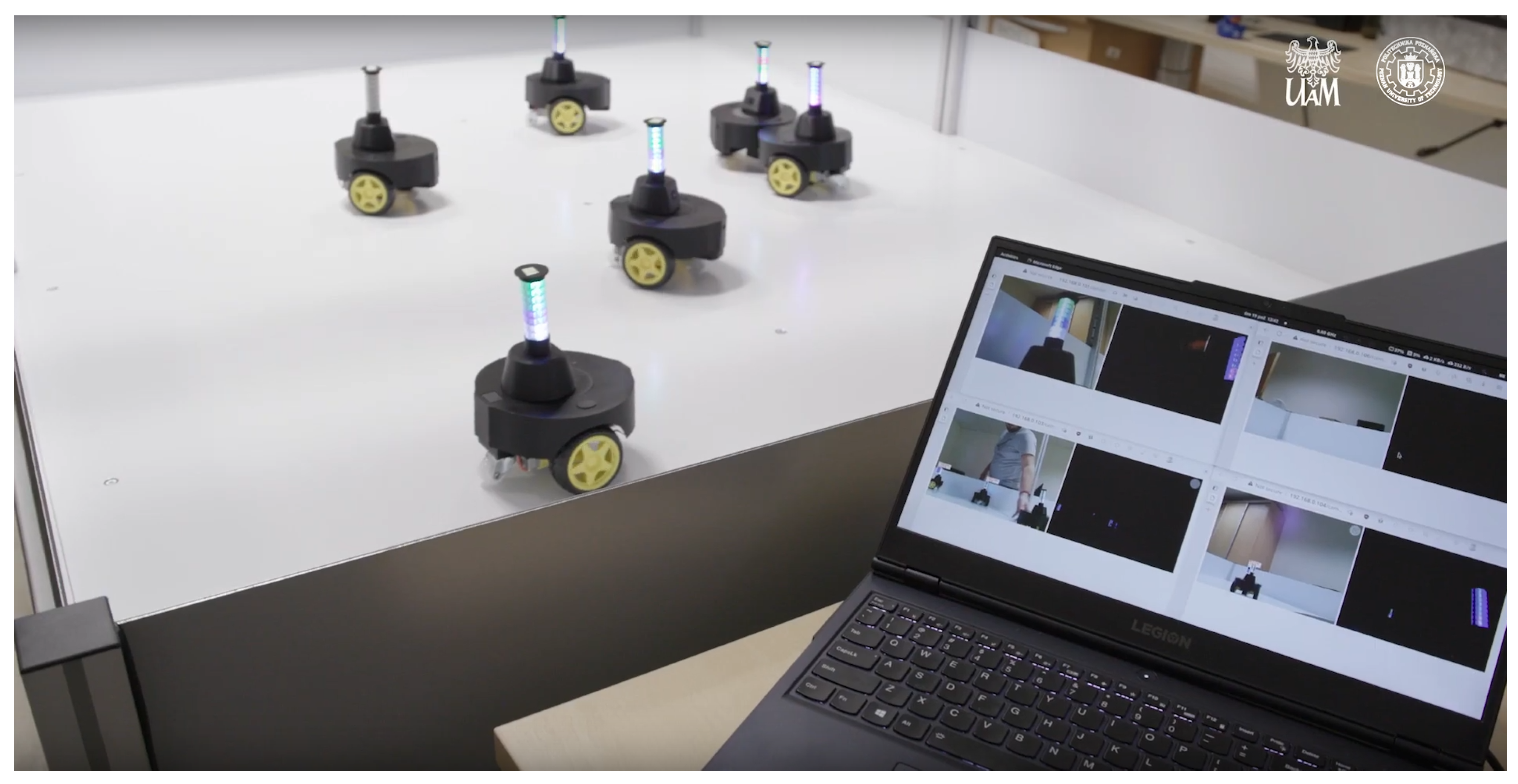

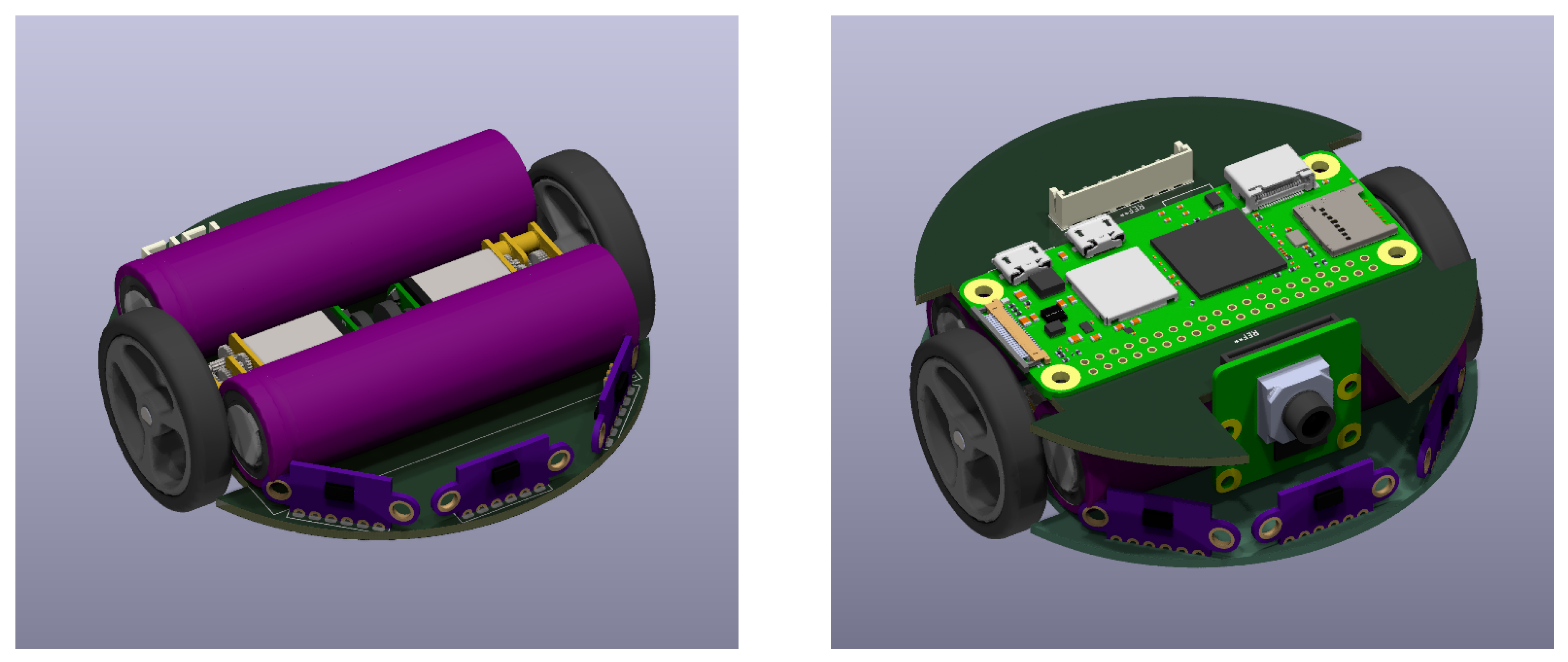

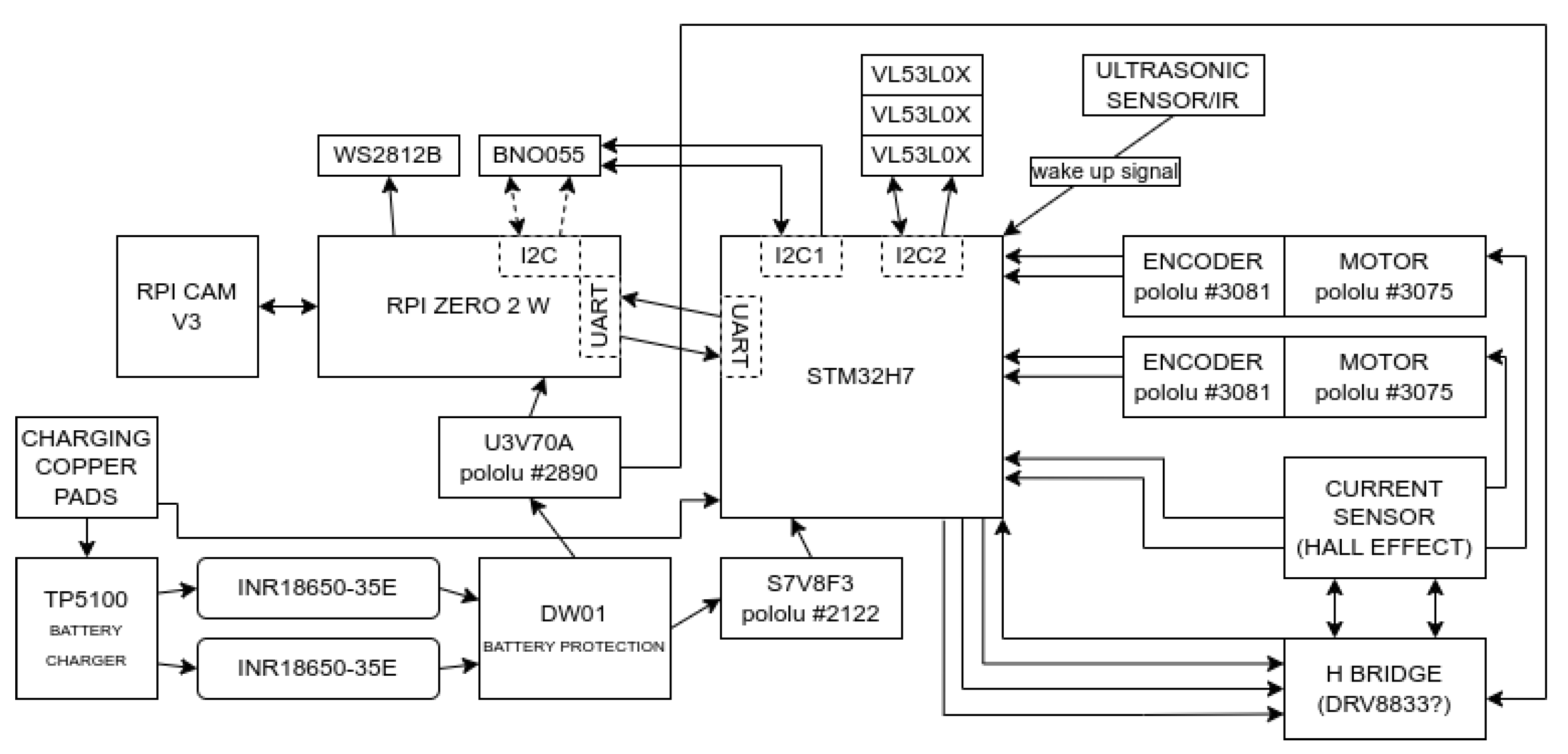

3.3. Open-Source Physical-Based Experimentation Platform

3.3.1. Proposed Platform Features and Architecture

- Comprehensive Support for Swarm Design Process using Hardware Platform. This feature corresponds to the need to verify AI algorithms in a hardware environment, including early-stage development, consideration of environment parameters unavailable in simulations, and the ability to study algorithms under variable environments and interactions. In this area, there are two alternatives: comprehensive algorithm evaluation (Kilogrid + Kilobots, DeepRacer) and simulation software (CoppeliaSim, DynaVizXMR, EyeSim, Microsoft Robotics). These alternatives either support the design process only in simulated environments or require significant financial investment for prototyping, which limits accessibility in early development stages.

- Low Cost of Building and Size of Swarm Robots. This feature corresponds to the need to evaluate complex behaviors and the latest AI algorithms in a large swarm of robots, given the requirement for low cost and easy availability of solutions. Alternatives include miniature robots such as Kilobots and minisumo robots. Those solutions are expensive, with costs often including additional resources and services. Additionally, computational power decreases drastically with the robot’s size, limiting capabilities such as running a vision system.

- Remote Programming of Robots. This feature addresses the need to share research and educational infrastructure without physical access, fostering interdisciplinary and international research collaborations. Alternatives include cloud-based robot simulators such as AWS Robomaker and DeepRacer. In competing solutions, this functionality is available only in simulation or in limited environments and specific research areas.

- Standardization and Scalability of Experimental Environment. This feature corresponds to the need to adapt and expand the experimental platform to different projects while maintaining standardization for experiment repeatability and reproducibility, facilitating comparison across research centers. Alternatives include open-source software and hardware projects such as SwarmUS, Kilobots, and colias.robot, as well as simulation software. They do not allow the robot software to be freely extended using high-level languages. Moreover, existing solutions are not designed for result repeatability (e.g., randomness in Kilobots’ movements).

- Open Specification and Hardware. This feature corresponds to the need to build a complete experimental platform independently. Current solutions include open-source software and hardware projects such as SwarmUS, Kilobots, and colias.robot. Most competing solutions are closed, and open solutions often have limited computational resources.

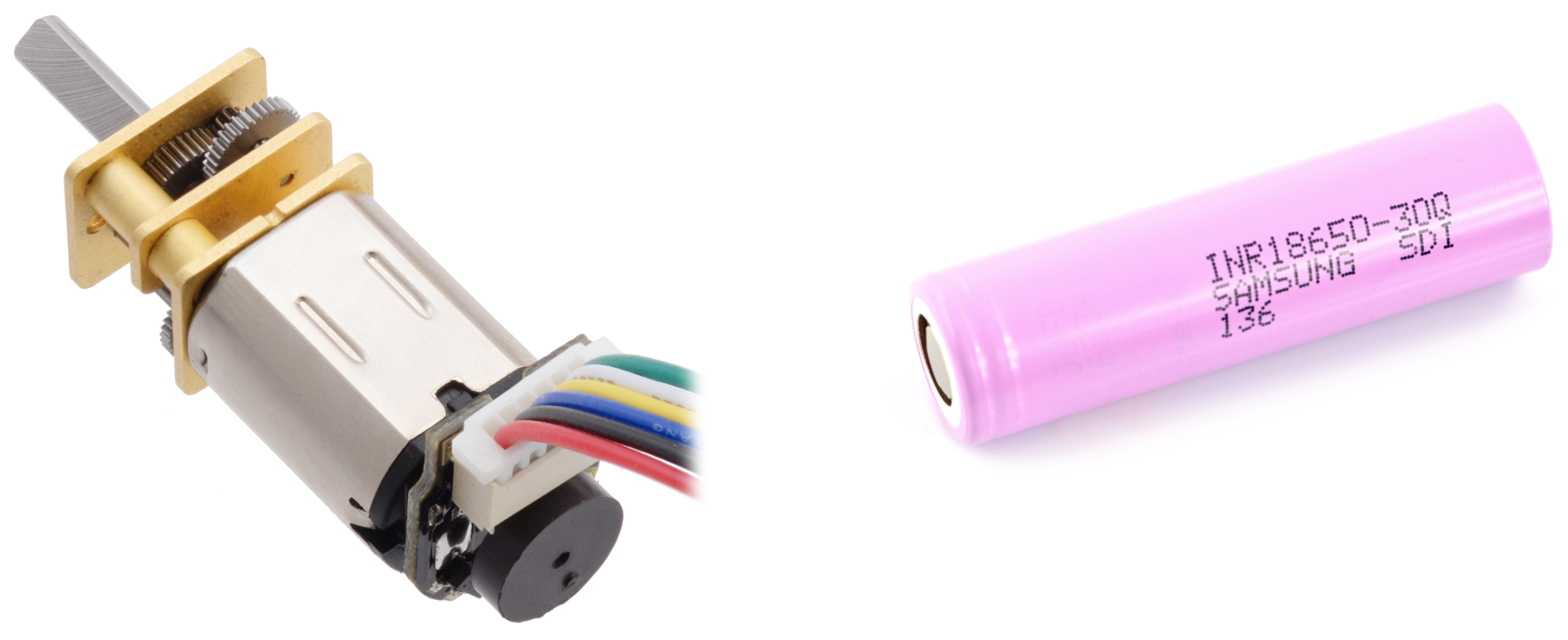

3.3.2. Platform Implementation

First Prototype

Second Prototype

- The robots need to be stopped and physically plugged in for charging when the batteries run out. This causes delays in conducting experiments.

- The current robots are large relative to the work area. This limits the number of robots that can move around the arena simultaneously.

- The presence of an experimenter is required to activate the robots. This makes it impossible to conduct remote experiments.

Experimentation Arena

4. Conclusions

References

1. Rohmer, E.; Singh, S.P.N.; Freese, M. CoppeliaSim (formerly V-REP): a Versatile and Scalable Robot Simulation Framework. Proc. of The International Conference on Intelligent Robots and Systems (IROS), 2013.
2. Balaji, B.; Mallya, S.; Genc, S.; Gupta, S.; Dirac, L.; Khare, V.; Roy, G.; Sun, T.; Tao, Y.; Townsend, B.; Calleja, E.; Muralidhara, S.; Karuppasamy, D. DeepRacer: Educational Autonomous Racing Platform for Experimentation with Sim2Real Reinforcement Learning. 2019; arXiv:cs.LG/1911.01562.
3. Rubenstein, M.; Ahler, C.; Nagpal, R. Kilobot: A low cost scalable robot system for collective behaviors. 2012 IEEE International Conference on Robotics and Automation, 2012, pp. 3293–3298.
4. Drigas, A.S.; Papoutsi, C. A new layered model on emotional intelligence. Behavioral Sciences 2018, 8, 45.
5. Barbey, A.K.; Colom, R.; Grafman, J. Distributed neural system for emotional intelligence revealed by lesion mapping. Social cognitive and affective neuroscience 2014, 9, 265–272.
6. Decety, J.; Lamm, C. Human empathy through the lens of social neuroscience. The Scientific World Journal 2006, 6, 1146–1163.
7. Xiao, L.; Kim, H.j.; Ding, M. An introduction to audio and visual research and applications in marketing. Review of Marketing Research 2013.
8. Yalçın, Ö.N.; DiPaola, S. Modeling empathy: building a link between affective and cognitive processes. Artificial Intelligence Review 2020, 53, 2983–3006.
9. Fougères, A.J. A modelling approach based on fuzzy agents. arXiv, 2013; arXiv:1302.6442.
10. Yulita, I.N.; Fanany, M.I.; Arymuthy, A.M. Bi-directional long short-term memory using quantized data of deep belief networks for sleep stage classification. Procedia computer science 2017, 116, 530–538.
11. Mohmed, G.; Lotfi, A.; Pourabdollah, A. Enhanced fuzzy finite state machine for human activity modelling and recognition. Journal of Ambient Intelligence and Humanized Computing 2020, 11, 6077–6091.
12. Dubois, D.; Prade, H. The three semantics of fuzzy sets. Fuzzy sets and systems 1997, 90, 141–150.
13. Żywica, P.; Baczyński, M. An effective similarity measurement under epistemic uncertainty. Fuzzy sets and systems 2022, 431, 160–177.
14. Asada, M. Development of artificial empathy. Neuroscience research 2015, 90, 41–50.
15. Suga, Y.; Ikuma, Y.; Nagao, D.; Sugano, S.; Ogata, T. Interactive evolution of human-robot communication in real world. 2005 IEEE/RSJ International Conference on Intelligent Robots and Systems. IEEE, 2005, pp. 1438–1443.
16. Asada, M. Towards artificial empathy. International Journal of Social Robotics 2015, 7, 19–33.
17. De Waal, F.B. The ‘Russian doll’ model of empathy and imitation. On being moved: From mirror neurons to empathy 2007, pp. 35–48.
18. Yalçın, Ö.N. Empathy framework for embodied conversational agents. Cognitive Systems Research 2020, 59, 123–132.
19. Morris, R.R.; Kouddous, K.; Kshirsagar, R.; Schueller, S.M. Towards an artificially empathic conversational agent for mental health applications: system design and user perceptions. Journal of medical Internet research 2018, 20, e10148.
20. Vargas Martin, M.; Pérez Valle, E.; Horsburgh, S. Artificial Empathy for Clinical Companion Robots with Privacy-By-Design. International Conference on Wireless Mobile Communication and Healthcare. Springer, 2020, pp. 351–361.
21. Montemayor, C.; Halpern, J.; Fairweather, A. In principle obstacles for empathic AI: why we can’t replace human empathy in healthcare. Ai & Society 2021, pp. 1–7.
22. Fiske, A.; Henningsen, P.; Buyx, A.; others. Your robot therapist will see you now: ethical implications of embodied artificial intelligence in psychiatry, psychology, and psychotherapy. Journal of medical Internet research 2019, 21, e13216.
23. Leite, I.; Pereira, A.; Castellano, G.; Mascarenhas, S.; Martinho, C.; Paiva, A. Modelling empathy in social robotic companions. International conference on user modeling, adaptation, and personalization. Springer, 2011, pp. 135–147.
24. Possati, L.M. Psychoanalyzing artificial intelligence: the case of Replika. AI & SOCIETY 2022, pp. 1–14.
25. Affectiva Inc. Media Analytics. Accessed Feb 2023.
26. Leite, I.; Mascarenhas, S.; others. Why can’t we be friends? An empathic game companion for long-term interaction. International Conference on Intelligent Virtual Agents. Springer, 2010, pp. 315–321.
27. Sierra Rativa, A.; Postma, M.; Van Zaanen, M. The influence of game character appearance on empathy and immersion: Virtual non-robotic versus robotic animals. Simulation & Gaming 2020, 51, 685–711.
28. Blanchard, L. Creating empathy in video games. The University of Dublin, Dublin, 2016.
29. Aylett, R.; Barendregt, W.; Castellano, G.; Kappas, A.; Menezes, N.; Paiva, A. An embodied empathic tutor. 2014 AAAI Fall Symposium Series, 2014.
30. Obaid, M.; Aylett, R.; others. Endowing a robotic tutor with empathic qualities: design and pilot evaluation. International Journal of Humanoid Robotics 2018, 15, 1850025.
31. Affectiva Inc. Interior Sensing. Accessed Feb 2023.
32. Ebert, J.T.; Gauci, M.; Nagpal, R. Multi-feature collective decision making in robot swarms. Proceedings of the 17th International Conference on Autonomous Agents and MultiAgent Systems, 2018, pp. 1711–1719.
33. Huang, F.W.; Takahara, M.; Tanev, I.; Shimohara, K. Effects of Empathy, Swarming, and the Dilemma between Reactiveness and Proactiveness Incorporated in Caribou Agents on Evolution of their Escaping Behavior in the Wolf-Caribou Problem. SICE Journal of Control, Measurement, and System Integration 2018, 11, 230–238.
34. Witkowski, O.; Ikegami, T. Swarm Ethics: Evolution of Cooperation in a Multi-Agent Foraging Model. Proceedings of the First International Symposium on Swarm Behavior and Bio-Inspired Robotics 2015.
35. Chen, J.; Zhang, D.; Qu, Z.; Wang, C. Artificial Empathy: A New Perspective for Analyzing and Designing Multi-Agent Systems. IEEE Access 2020, 8, 183649–183664.
36. Li, H.; Oguntola, I.; Hughes, D.; Lewis, M.; Sycara, K. Theory of Mind Modeling in Search and Rescue Teams. IEEE International Conference on Robot and Human Interactive Communication, 2022, pp. 483–489.
37. Li, H.; Zheng, K.; Lewis, M.; Hughes, D.; Sycara, K. Human theory of mind inference in search and rescue tasks. Proceedings of the Human Factors and Ergonomics Society Annual Meeting. SAGE Publications Sage CA: Los Angeles, CA, 2021, Vol. 65, pp. 648–652.
38. Huang, F.; Takahara, M.; Tanev, I.; Shimohara, K. Emergence of collective escaping strategies of various sized teams of empathic caribou agents in the wolf-caribou predator-prey problem. IEEJ Transactions on Electronics, Information and Systems 2018, 138, 619–626.
39. Mondada, F.; Bonani, M.; Raemy, X.; Pugh, J.; Cianci, C.; Klaptocz, A.; Magnenat, S.; Zufferey, J.C.; Floreano, D.; Martinoli, A. The e-puck, a robot designed for education in engineering. Proceedings of the 9th conference on autonomous robot systems and competitions. IPCB: Instituto Politécnico de Castelo Branco, 2009, Vol. 1, pp. 59–65.
40. Arvin, F.; Espinosa, J.; Bird, B.; West, A.; Watson, S.; Lennox, B. Mona: an affordable open-source mobile robot for education and research. Journal of Intelligent & Robotic Systems 2019, 94, 761–775.
41. Arvin, F.; Murray, J.; Zhang, C.; Yue, S. Colias: An autonomous micro robot for swarm robotic applications. International Journal of Advanced Robotic Systems 2014, 11, 113.
42. Villemure, É.; Arsenault, P.; Lessard, G.; Constantin, T.; Dubé, H.; Gaulin, L.D.; Groleau, X.; Laperrière, S.; Quesnel, C.; Ferland, F. SwarmUS: An open hardware and software on-board platform for swarm robotics development. arXiv 2022, arXiv:2203.02643.
43. Bräunl, T. The EyeSim Mobile Robot Simulator. Technical report, CITR, The University of Auckland, New Zealand, 2000.
44. Valentini, G.; Antoun, A.; Trabattoni, M.; Wiandt, B.; Tamura, Y.; Hocquard, E.; Trianni, V.; Dorigo, M. Kilogrid: a novel experimental environment for the Kilobot robot. Swarm Intelligence 2018, 12, 245–266.
45. MacQueen, J.; others. Some methods for classification and analysis of multivariate observations. Proceedings of the fifth Berkeley symposium on mathematical statistics and probability. Oakland, CA, USA, 1967, Vol. 1, pp. 281–297.
46. Redmon, J.; Farhadi, A. YOLO9000: Better, Faster, Stronger. 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2017, pp. 6517–6525.
47. Simonyan, K.; Zisserman, A. Very Deep Convolutional Networks for Large-Scale Image Recognition, 2014.
48. Żywica, P.; Wójcik, A.; Siwek, P. Open-source Physical-based Experimentation Platform source code repository, 2023.

| 1 | Videos available at https://github.com/open-pep/coppelia-simulations |
| 2 | The project is in the process of migrating from an internal repository to GitHub and not all components are available yet |

| Name | Sym | Description of boundary values |
|---|---|---|
| others close | a | 1 for many other agents in the vicinity, 0 for none |
| in touch | n | 1 for long contact time, 0 for none |
| long search | t | 1 for long duration of current search, 0 for not searching |
| calling for help | c | 1 for calling for a long time, 0 for not calling |
| neutralized | e | 1 if "I am inactive" signal was received from newly inactive agent, 0 if not |
| close to neighbour | d | 1 if the distance to neighbour is 0, 0 if the neighbour is far |
| target at right | p | 1 for agent at the immediate right, 0 for agent not in sight |
| target at left | l | 1 for agent at the immediate left, 0 for agent not in sight |
| fully charged | f | 1 for robot fully charged, 0 for not charged |
| helping | h | 1 for long duration of helping, 0 for not helping |
| reward | | describes the chance of success of the current action sequence |
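Each input in the table above is a degree in [0, 1] whose boundary values are described only verbally. As a minimal sketch, assuming a simple linear saturation (the table does not state how raw measurements are mapped onto the interval), inputs such as `t` and `d` could be computed as follows. The function names and the thresholds `t_max` and `d_far` are hypothetical.

```python
def saturate(value: float, upper: float) -> float:
    """Map a raw value linearly onto [0, 1], clipping below 0 and above `upper`."""
    if upper <= 0:
        raise ValueError("upper must be positive")
    return min(max(value / upper, 0.0), 1.0)


def long_search(seconds_searching: float, t_max: float = 60.0) -> float:
    """Input t: 0 when not searching, 1 once the search has lasted t_max seconds."""
    return saturate(seconds_searching, t_max)


def close_to_neighbour(distance_m: float, d_far: float = 2.0) -> float:
    """Input d: 1 at distance 0, 0 when the neighbour is at least d_far away."""
    return 1.0 - saturate(distance_m, d_far)
```

Other mappings (for example, a sigmoid or a piecewise membership function) would fit the same verbal description; the linear form is chosen here only because it is the simplest.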

| parameter | | | | | | |
|---|---|---|---|---|---|---|
| a | 0.5 | 1.0 | 0.5 | 0.5 | 0.0 | 0.1 |
| n | 0.5 | 0.5 | 0.5 | 0.0 | 1.0 | 1.0 |
| t | 0.5 | 0.5 | 0.5 | 0.5 | 0.5 | 0.5 |
| c | 1.0 | 1.0 | 1.0 | 0.5 | 1.0 | 0.5 |
| d | 0.5 | 1.0 | 0.5 | 0.5 | 0.0 | 0.1 |
| p | 0.5 | 0.5 | 0.5 | 0.5 | 0.5 | 0.5 |
| l | 0.5 | 0.5 | 0.5 | 0.5 | 0.5 | 0.5 |
| f | 0.5 | 0.5 | 0.5 | 0.5 | 0.5 | 0.5 |
| h | 0.5 | 0.5 | 0.5 | 1.0 | 0.5 | 1.0 |
| | 1.0 | 1.0 | 1.0 | 1.0 | 0.0 | 0.0 |
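The table above does not label its columns or its last row, so the following is only a sketch under two loudly stated assumptions: that each column describes one stored situation, and that the unlabeled last row is the reward associated with it. Under that reading, an observed input vector could be compared with the stored columns. The similarity used here (one minus the mean absolute difference) is a stand-in chosen for simplicity, not the measure used by the authors, and all names are hypothetical.

```python
SYMBOLS = ["a", "n", "t", "c", "d", "p", "l", "f", "h"]

# Values copied from the table above: one list of six column values per symbol.
ROWS = {
    "a": [0.5, 1.0, 0.5, 0.5, 0.0, 0.1],
    "n": [0.5, 0.5, 0.5, 0.0, 1.0, 1.0],
    "t": [0.5, 0.5, 0.5, 0.5, 0.5, 0.5],
    "c": [1.0, 1.0, 1.0, 0.5, 1.0, 0.5],
    "d": [0.5, 1.0, 0.5, 0.5, 0.0, 0.1],
    "p": [0.5, 0.5, 0.5, 0.5, 0.5, 0.5],
    "l": [0.5, 0.5, 0.5, 0.5, 0.5, 0.5],
    "f": [0.5, 0.5, 0.5, 0.5, 0.5, 0.5],
    "h": [0.5, 0.5, 0.5, 1.0, 0.5, 1.0],
}
# Unlabeled in the source table; ASSUMED here to be the reward row.
LAST_ROW = [1.0, 1.0, 1.0, 1.0, 0.0, 0.0]


def column(index):
    """Return one table column as a symbol -> value mapping."""
    return {s: ROWS[s][index] for s in SYMBOLS}


def similarity(x, y):
    """1 minus the mean absolute difference; 1.0 means identical vectors."""
    return 1.0 - sum(abs(x[s] - y[s]) for s in SYMBOLS) / len(SYMBOLS)


def most_similar_column(observation):
    """Return (column index, similarity, last-row value) for the closest column."""
    best = max(range(len(LAST_ROW)), key=lambda i: similarity(observation, column(i)))
    return best, similarity(observation, column(best)), LAST_ROW[best]
```

Note that the first and third columns are identical in the table, so ties are possible; `max` returns the first of the tied columns.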

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).