Submitted: 22 November 2023

Posted: 23 November 2023


Abstract

Keywords:

1. Introduction

Better Call Saul

| | | Outcome + | Outcome − | Total |
|---|---|---|---|---|
| Factor | + | a | c | a + c |
| Factor | − | b | d | b + d |
| | Total | a + b | c + d | N |

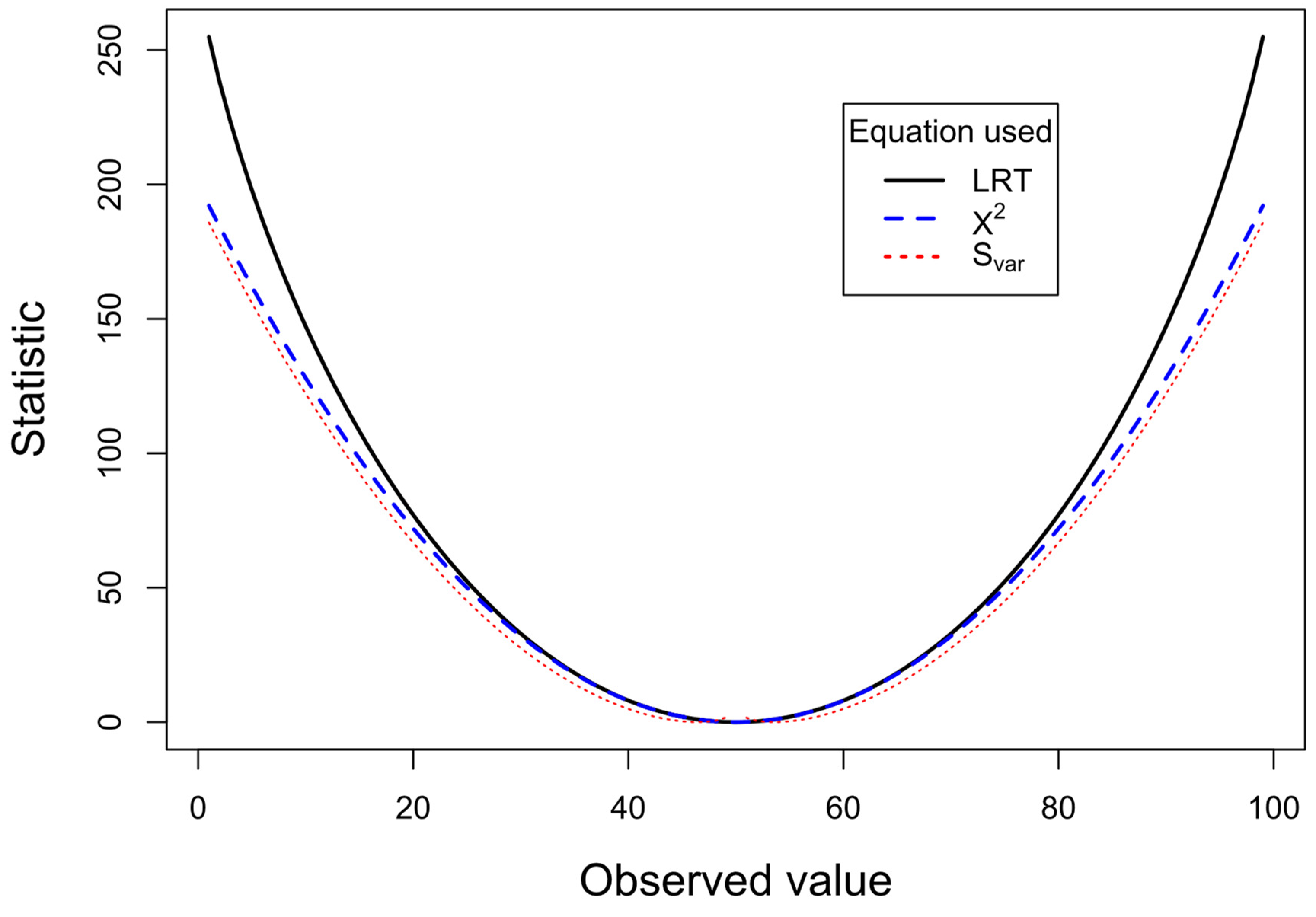

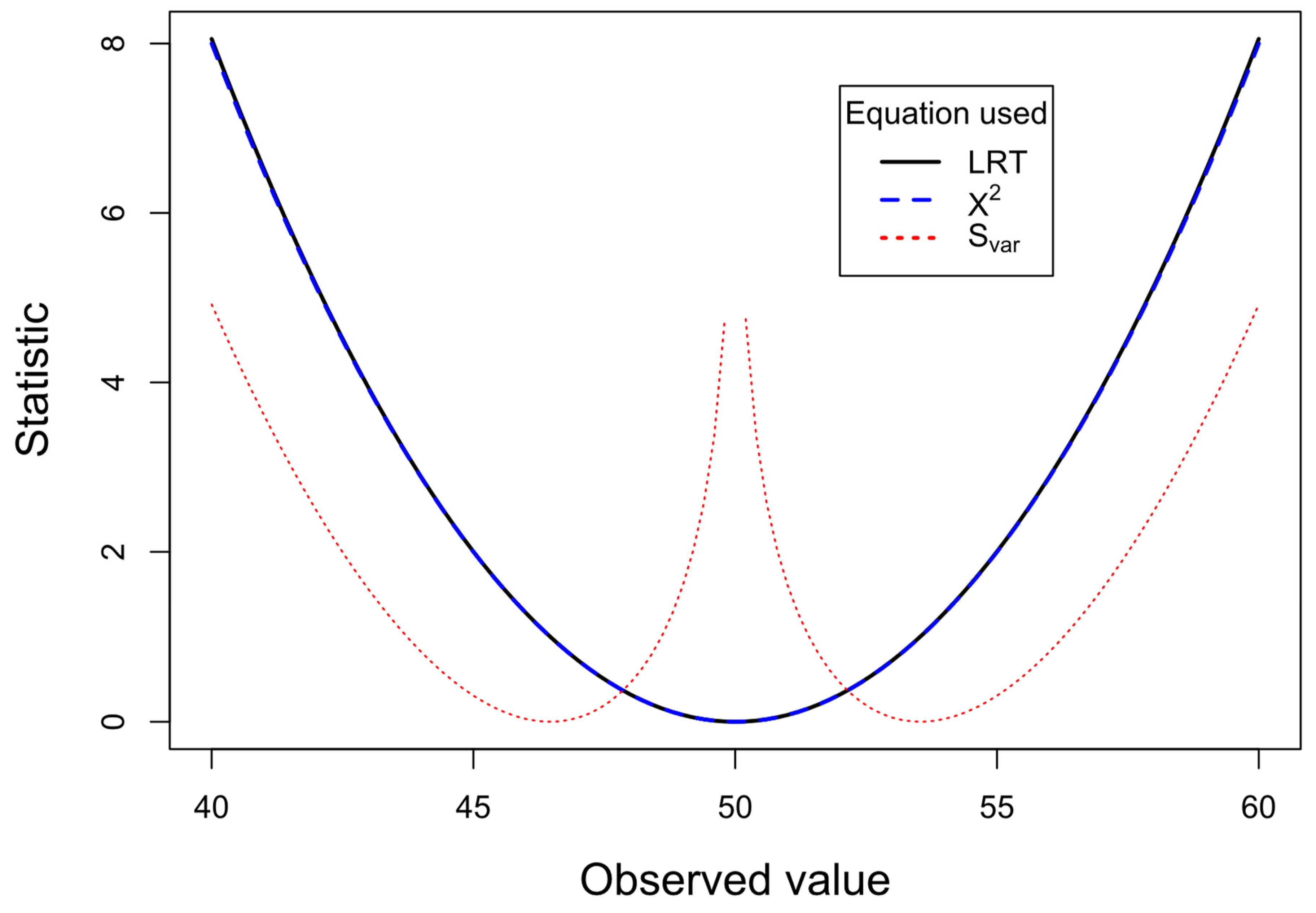

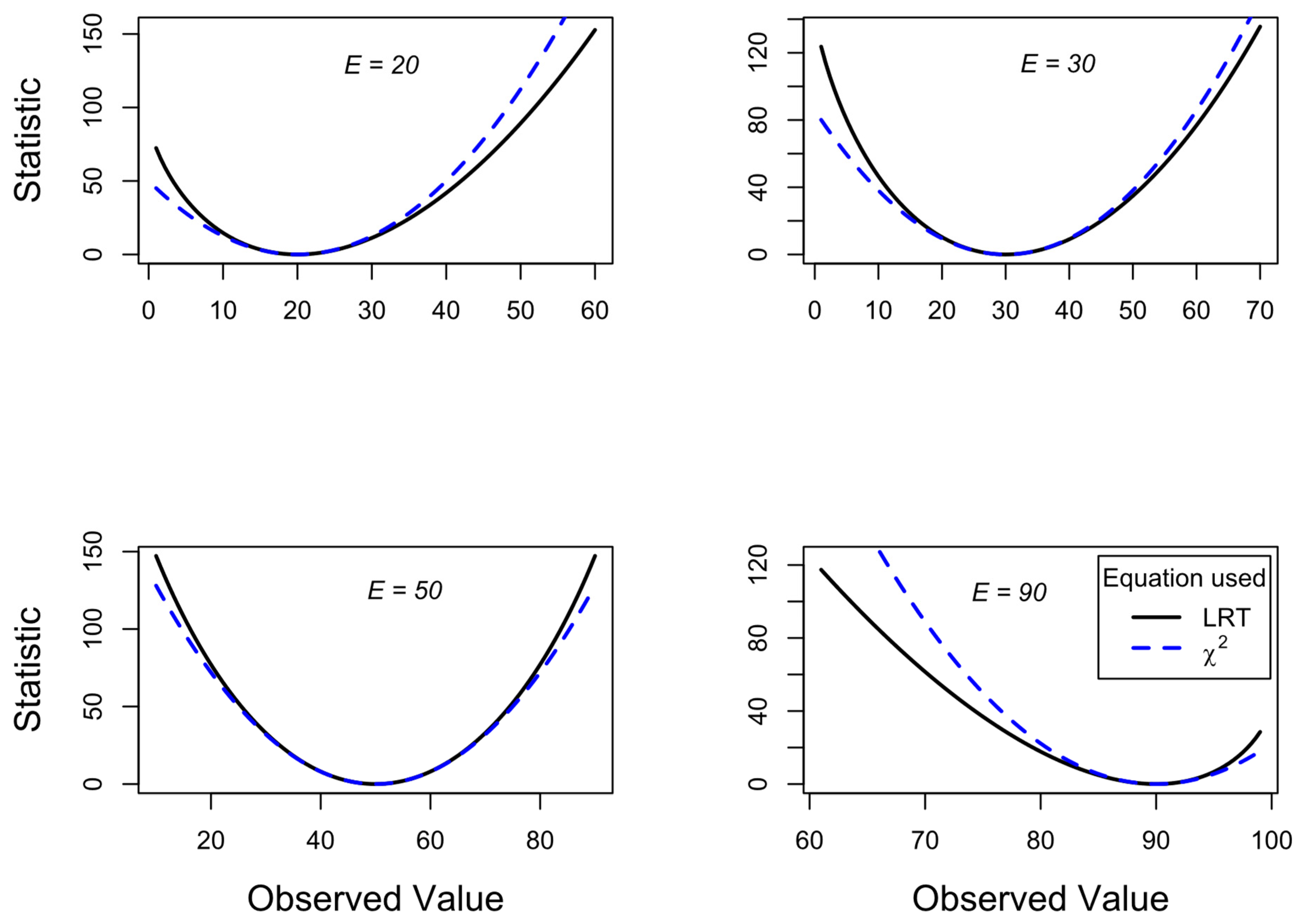
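For reference, the two statistics compared in this paper can be written in the cell notation of the 2 × 2 table above. This is a restatement of the standard textbook forms, not a transcription of the author's own equations; the symbols $O_i$ and $E_i$ (observed and expected cell counts) and the support $S$ expressed as $G/2$ are notation assumed here.

$$
E_a = \frac{(a+b)(a+c)}{N}, \qquad
\chi^2 = \sum_i \frac{(O_i - E_i)^2}{E_i} = \frac{N\,(ad - bc)^2}{(a+b)(c+d)(a+c)(b+d)}
$$

$$
G = 2 \sum_i O_i \ln\frac{O_i}{E_i}, \qquad S = \frac{G}{2}
$$

The expected counts for the other three cells follow the same pattern as $E_a$: the product of the cell's row and column totals divided by $N$.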

2. LRT Statistic versus χ² Test Statistic

3. Empirical Data

| | Death | Survival | Total |
|---|---|---|---|
| Treatment | 80 | 9920 | 10000 |
| Placebo | 120 | 9880 | 10000 |
| Total | 200 | 19800 | 20000 |
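The counts in the table above are enough to compute both statistics directly. The following is a minimal sketch, assuming the standard definitions of Pearson's χ² and the G (likelihood ratio) statistic with expected counts taken from the margins under independence. The function names are illustrative and are not from the paper, and no continuity or small-sample correction is applied.

```python
import math


def expected_counts(table):
    """Expected cell counts under independence: row total * column total / N."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    return [[r * c / n for c in col_totals] for r in row_totals]


def pearson_chi2(table):
    """Pearson chi-square: sum over cells of (O - E)^2 / E."""
    expected = expected_counts(table)
    return sum(
        (o - e) ** 2 / e
        for obs_row, exp_row in zip(table, expected)
        for o, e in zip(obs_row, exp_row)
    )


def g_statistic(table):
    """Likelihood ratio (G) statistic: 2 * sum over cells of O * ln(O / E)."""
    expected = expected_counts(table)
    return 2.0 * sum(
        o * math.log(o / e)
        for obs_row, exp_row in zip(table, expected)
        for o, e in zip(obs_row, exp_row)
        if o > 0  # a zero cell contributes nothing to the sum
    )


# Rows: Treatment, Placebo. Columns: Death, Survival (counts from the table above).
trial = [[80, 9920], [120, 9880]]
chi2 = pearson_chi2(trial)
g = g_statistic(trial)
print(f"chi-square = {chi2:.3f}, G = {g:.3f}, S = G/2 = {g / 2:.3f}")
```

With a sample this large the two statistics are close (both a little above 8), which is the regime in which they are expected to agree.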

| | Death | Survival | Total |
|---|---|---|---|
| Modified treatment | 3 | 997 | 1000 |
| Placebo | 10 | 990 | 1000 |
| Total | 13 | 1987 | 2000 |
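For a 2 × 2 table both statistics also have closed forms in the cell labels a, b, c, d of the table in Section 1, which avoids computing expected counts explicitly. The sketch below applies those shortcut forms to the smaller trial above. It assumes the standard identities (χ² from the cross-product difference, G from the "x ln x" expansion); the function names are hypothetical helpers, not the author's code.

```python
import math


def xlogx(x):
    """x * ln(x), with the usual convention that 0 * ln(0) = 0."""
    return x * math.log(x) if x > 0 else 0.0


def chi2_2x2(a, b, c, d):
    """Pearson chi-square for a 2 x 2 table via N(ad - bc)^2 / product of margins."""
    n = a + b + c + d
    return n * (a * d - b * c) ** 2 / ((a + b) * (c + d) * (a + c) * (b + d))


def g_2x2(a, b, c, d):
    """G statistic via 2 * [sum cells x ln x - sum margins x ln x + N ln N]."""
    n = a + b + c + d
    cells = xlogx(a) + xlogx(b) + xlogx(c) + xlogx(d)
    margins = xlogx(a + b) + xlogx(c + d) + xlogx(a + c) + xlogx(b + d)
    return 2.0 * (cells - margins + xlogx(n))


# Layout as in the Section 1 table: a and c are the first row, b and d the second.
# Modified treatment: a = 3 deaths, c = 997 survivals.
# Placebo:            b = 10 deaths, d = 990 survivals.
chi2_small = chi2_2x2(a=3, b=10, c=997, d=990)
g_small = g_2x2(a=3, b=10, c=997, d=990)
print(f"chi-square = {chi2_small:.3f}, G = {g_small:.3f}, S = G/2 = {g_small / 2:.3f}")
```

Here the expected number of deaths per arm is only 6.5, and the gap between the two statistics is proportionally larger than in the 20,000-patient trial.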

| | Death | Survival |
|---|---|---|
| Treatment | 0.004 | 0.496 |
| Placebo | 0.006 | 0.494 |

4. Discussion

“…as is explained at various places throughout the text, G has general theoretical advantages over X², as well as being computationally simpler for tests of independence. It may be confusing to the reader to have two alternative tests presented for most types of problems and our inclination would be to drop the chi-square tests entirely and teach G only. …to the newcomer to statistics, however, we would recommend that he familiarize himself principally with the G-tests.”

“…as we will explain, G has theoretical advantages over X² in addition to being computationally simpler, not only by computer but also on most calculators.”

“…it is important that the likelihood always exists, and is directly calculable. It is usually convenient to tabulate its logarithm…”

| S | Interpretation H1 vs H2 |
|---|---|
| 0 | No evidence either way |
| 1 | Weak evidence |
| 2 | Moderate evidence |
| 3 | Strong evidence |
| 4 | Extremely strong evidence |
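The table above can be applied mechanically once a support value S is available. The sketch below is one possible reading, not the author's procedure: it assumes that S is the natural-log likelihood ratio (so that an uncorrected S equals G/2), that positive S favours H1 and negative S favours H2, and that a value between two rows takes the label of the lower row. The table itself lists only the integer points, so that binning rule is an assumption, and both function names are hypothetical.

```python
import math

# Rows of the table above: support S -> verbal label.
SUPPORT_LABELS = {
    0: "No evidence either way",
    1: "Weak evidence",
    2: "Moderate evidence",
    3: "Strong evidence",
    4: "Extremely strong evidence",
}


def support_from_g(g):
    """Uncorrected support S from a G statistic, using G = 2 * log likelihood ratio."""
    return g / 2.0


def interpret_support(s):
    """Label S using the table row at or below |S| (assumed binning), capped at 4."""
    level = min(int(math.floor(abs(s))), 4)
    label = SUPPORT_LABELS[level]
    if level == 0:
        return label
    favoured = "H1" if s > 0 else "H2"  # assumed sign convention
    return f"{label} for {favoured}"


for s in (0.3, 1.2, -2.5, support_from_g(8.0)):
    print(f"S = {s:+.2f}: {interpret_support(s)}")
```

Reporting the numeric S alongside the label keeps the evidence on a continuous scale, with the verbal categories serving only as a rough guide.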

Consent Statement/Ethical Approval

Declaration of Competing Interest

Submission Declaration

Supplementary Materials

Funding

References

- Agresti, A. (2013), Categorical data analysis (3rd ed.): John Wiley & Sons.

- Armitage, P., Berry, G., and Matthews, J. N. S. (2002), Statistical Methods in Medical Research (4th ed.): Wiley-Blackwell.

- Cahusac, P.M.B. (2020), Evidence-Based Statistics: An Introduction to the Evidential Approach – from Likelihood Principle to Statistical Practice, New Jersey: John Wiley & Sons.

- Cochran, W.G. (1936), "The χ² Distribution for the Binomial and Poisson Series with Small Expectations," Annals of Eugenics, 7 (3), 207-217.

- Dennis, B., Ponciano, J. M., Taper, M. L., and Lele, S. R. (2019), "Errors in Statistical Inference Under Model Misspecification: Evidence, Hypothesis Testing, and AIC," Frontiers in Ecology and Evolution, 7.

- Edwards, A. (1986a), "More on the too-good-to-be-true paradox and Gregor Mendel," Journal of Heredity, 77 (2), 138-138.

- Edwards, A.W.F. (1972), Likelihood, Cambridge: Cambridge University Press.

- --- (1986b), "Are Mendel's Results Really Too Close?," Biological Reviews, 61 (4), 295-312.

- --- (1992), Likelihood: Expanded Edition (2nd ed.), Baltimore: Johns Hopkins University Press.

- Fisher, R.A. (1922), "On the mathematical foundations of theoretical statistics," Philosophical Transactions of the Royal Society of London. Series A, Containing Papers of a Mathematical or Physical Character, 222 (594-604), 309-368.

- Fisher, R.A. (1936), "Has Mendel's work been rediscovered?," Annals of Science, 1 (2), 115-137.

- Fisher, R.A. (1950), "The significance of deviations from expectation in a Poisson series," Biometrics, 6 (1), 17-24.

- Fisher, R.A. (1956), Statistical Methods and Scientific Inference (1st ed.), Edinburgh: Oliver & Boyd.

- Goodman, S.N. (1989), "Meta-analysis and evidence," Controlled Clinical Trials, 10 (2), 188-204.

- Goodman, S. N., and Royall, R. M. (1988), "Evidence and Scientific Research," American Journal of Public Health, 78 (12), 1568-1574.

- Jeffreys, H. (1936), "Further significance tests," in Mathematical Proceedings of the Cambridge Philosophical Society: Cambridge University Press.

- MacDougall, M. (2014), "Assessing the Integrity of Clinical Data: When is Statistical Evidence Too Good to be True?," Topoi, 33 (2), 323-337.

- Markatou, M., and Sofikitou, E. M. (2019), "Statistical distances and the construction of evidence functions for model adequacy," Frontiers in Ecology and Evolution, 7, 447.

- Neyman, J., and Pearson, E. S. (1928), "On the use and interpretation of certain test criteria for purposes of statistical inference," Biometrika, 175-240, 263-294.

- Neyman, J., Pearson, E. S., and Pearson, K. (1933), "On the problem of the most efficient tests of statistical hypotheses," Philosophical Transactions of the Royal Society of London. Series A, Containing Papers of a Mathematical or Physical Character, 231 (694-706), 289-337.

- Pearson, K. (1900), "On the criterion that a given system of deviations from the probable in the case of a correlated system of variables is such that it can be reasonably supposed to have arisen from random sampling," The London, Edinburgh, and Dublin Philosophical Magazine and Journal of Science, 50 (302), 157-175.

- Royall, R. (2000), "On the Probability of Observing Misleading Statistical Evidence," Journal of the American Statistical Association, 95 (451), 760-768.

- Royall, R.M. (1997), Statistical Evidence: a Likelihood Paradigm, London: Chapman & Hall.

- Seaman, J. E., and Allen, I. E. (2018), "How Good Are My Data?," Quality Progress, 51 (7), 49-52.

- Sokal, R. R., and Rohlf, F. J. (1969), Biometry: The principles and practice of statistics in biological research, San Francisco: W. H. Freeman and Company.

- --- (1995), Biometry: the principles and practice of statistics in biological research (3rd ed.), New York: W. H. Freeman and Company.

- --- (2009), Introduction to Biostatistics (2nd ed.), New York: Dover Publications, Inc.

- Stuart, A. (1954), "Too Good to be True?," Journal of the Royal Statistical Society: Series C (Applied Statistics), 3 (1), 29-32.

- Taper, M. L., and Lele, S. R. (eds). (2004), The Nature of Scientific Evidence: Statistical, Philosophical, and Empirical Considerations: University of Chicago Press.

- Taper, M. L., Lele, S. R., Ponciano, J. M., Dennis, B., and Jerde, C. L. (2021), "Assessing the global and local uncertainty of scientific evidence in the presence of model misspecification," Frontiers in Ecology and Evolution, 9, 679155.

- Taper, M. L., and Ponciano, J. M. (2016), "Evidential statistics as a statistical modern synthesis to support 21st century science," Population Ecology, 58 (1), 9-29.

- Taper, M. L., Ponciano, J. M., and Toquenaga, Y. (2022), Evidential Statistics, Model Identification, and Science: Frontiers Media SA.

- Wilks, S.S. (1938), "The Large-Sample Distribution of the Likelihood Ratio for Testing Composite Hypotheses," The Annals of Mathematical Statistics, 9 (1), 60-62.

- Williams, D.A. (1976), "Improved likelihood ratio tests for complete contingency tables," Biometrika, 63 (1), 33-37.

- Woolf, B. (1957), "The log likelihood ratio test (the G test)," Annals of Human Genetics, 21 (4), 397-409.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).