Submitted: 01 August 2023

Posted: 02 August 2023


Abstract

Keywords:

1. Introduction

- Hypothesis 1. Research Objective: The research aims to assess the audience's response to Llama 2 and to test Meta's expectation that an open-source model will develop faster than closed-source models [2].

- Hypothesis 2. Research Objective: The research aims to assess the challenges encountered by early adopters in deploying the Llama 2 model.

- Hypothesis 3. Research Objective: The research aims to assess the challenges encountered by early adopters in fine-tuning the Llama 2 model.

- Hypothesis 4. Research Objective: The research aims to determine whether the medical domain consistently ranks among the primary domains in which early adopters fine-tune the model.

2. Background and Context

2.1. Evolution of Language Models

2.2. Natural Language Processing (NLP)

2.3. Transformer Architecture

2.4. Supervised fine-tuning

3. Llama 2 Models and Licensing

3.1. Accessibility and Licensing

3.2. Llama 2 Models and Versions

4. Training Process

4.1. Pretraining Data

4.2. Llama 2 Fine-tuning

4.3. Llama 2 Eco-consciousness

- Llama 2 7B: 184,320 GPU hours, 400 W peak power per GPU, and 31.22 tCO2eq carbon emissions.

- Llama 2 13B: 368,640 GPU hours, 400 W peak power per GPU, and 62.44 tCO2eq carbon emissions.

- Llama 2 70B: 1,720,320 GPU hours, 400 W peak power per GPU, and 291.42 tCO2eq carbon emissions.
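The three figures above fit a simple accounting in which training energy equals GPU hours multiplied by the 400 W per-GPU figure. The sketch below assumes that model (our assumption, since this section states no formula). It recomputes the energy and the carbon intensity implied by the reported emissions. The names `MODELS`, `energy_kwh`, and `implied_intensity` are illustrative.

```python
# Reported Llama 2 pretraining figures, copied from the list above.
# Assumption: energy = GPU hours x per-GPU power, i.e. every GPU draws that power for every hour.
MODELS = {
    # name: (GPU hours, power per GPU in watts, reported tCO2eq)
    "Llama 2 7B": (184_320, 400, 31.22),
    "Llama 2 13B": (368_640, 400, 62.44),
    "Llama 2 70B": (1_720_320, 400, 291.42),
}


def energy_kwh(gpu_hours: float, watts: float) -> float:
    """Energy in kWh under the GPU-hours x power assumption."""
    return gpu_hours * watts / 1000.0


def implied_intensity(gpu_hours: float, watts: float, tco2eq: float) -> float:
    """Carbon intensity (kg CO2eq per kWh) implied by the reported emissions."""
    return tco2eq * 1000.0 / energy_kwh(gpu_hours, watts)


if __name__ == "__main__":
    for name, (hours, watts, tco2) in MODELS.items():
        kwh = energy_kwh(hours, watts)
        intensity = implied_intensity(hours, watts, tco2)
        print(f"{name}: {kwh:,.0f} kWh, {intensity:.3f} kgCO2eq/kWh")
```

Under this assumption, the 7B model used about 73,728 kWh. All three models work out to roughly 0.423 kgCO2eq/kWh. The 13B figures are exactly twice the 7B ones, and the ratio stays nearly constant across all three models. This suggests that a single emissions factor was applied to the energy estimate.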

5. Llama 2: Early Adopters' Case Studies and Projects

5.1. Official Llama2 Recipes Repository

5.2. Llama2.c by @karpathy

5.3. Llama2-Chinese by @FlagAlpha

5.4. Llama2-chatbot by @a16z-infra

5.5. Llama2-webui by @liltom-eth

5.6. Llama-2-Open-Source-LLM-CPU-Inference by @kennethleungty

5.7. Docker-llama2-chat by @soulteary

5.8. Llama2 by @dataprofessor

5.9. Llama-2-jax by @ayaka14732

5.10. LLaMA2-Accessory by @Alpha-VLLM

5.11. Llama2-Medical-Chatbot by @AIAnytime

5.12. Llama2-haystack by @anakin87

5.13. Llama2 Chatbot by Perplexity AI

5.14. Llama2 Chatbot by NimbleBox AI

5.15. Indian-LawyerGPT by @NisaarAgharia

5.16. Document-based_question_answering_system_using_LLamaV2-7b by @10deepaktripathi

5.17. Llama2-flask-api by @unconv

5.18. Amulets by @blackle

5.19. LLM-Pruner by @horseee

5.20. Llama2-discord-bot by @davidgm3

5.21. Llama2-qlora-finetunined-Arabic by @h9-tect

5.22. H2ogpt by @h2oai

5.23. Llama2-burn by @Gadersd

5.24. Llm_finetuning by @ssbuild

5.25. Llama2win by @xunboo

5.26. VietAI-experiment-LLaMA-2 by @longday1102

5.27. Llama2.go by @nikolaydubina

5.28. Llama2.openvino by @OpenVINO-dev-contest

5.29. Llama2_chat_templater by @samrawal

5.30. LLaMA-Efficient-Tuning by @hiyouga

5.31. Llama2-Prompt-Reverse-Engineered by @mosama1994

5.32. DemoGPT by @melih-unsal

6. Results

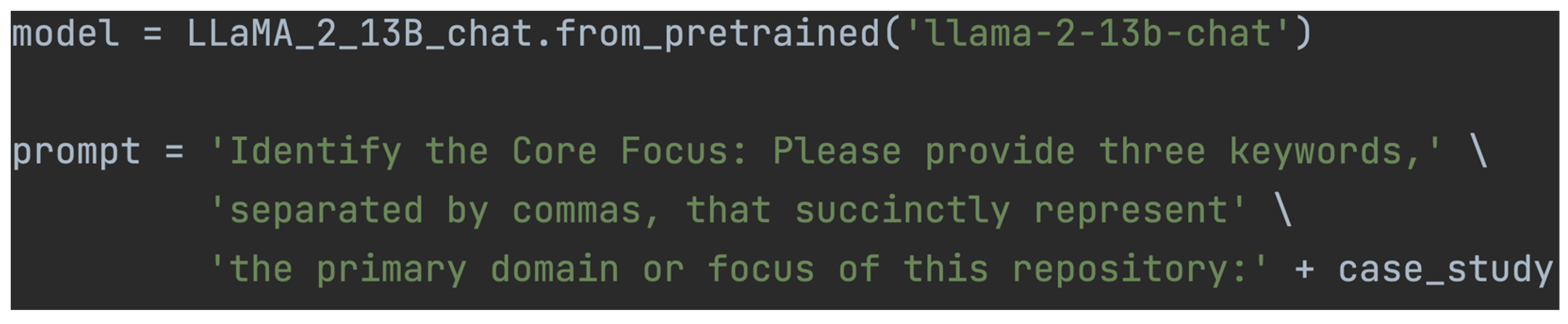

6.1. Data Pre-Processing and Llama 2 Keyword Extraction

6.2. Data Analysis

- The dataset used in our study consists of the projects' keywords, specifically their "Areas of Focus," retrieved from a CSV file. To begin the analysis, we preprocess the data by removing irrelevant characters and converting all text to lowercase to ensure consistency.

- Next, we perform feature extraction using Term Frequency-Inverse Document Frequency (TF-IDF) vectorization. This technique converts each project's keywords into a numerical vector. In that vector, a term's weight grows with its frequency in the project and shrinks with the number of projects that use it, which captures the importance of each keyword within the entire dataset [74].

- The K-Means clustering algorithm is then applied to the TF-IDF matrix to group projects with similar keywords into a predefined number of clusters (in our case, five). Each project receives the label of the cluster whose centroid lies closest to its TF-IDF vector [Table 1].

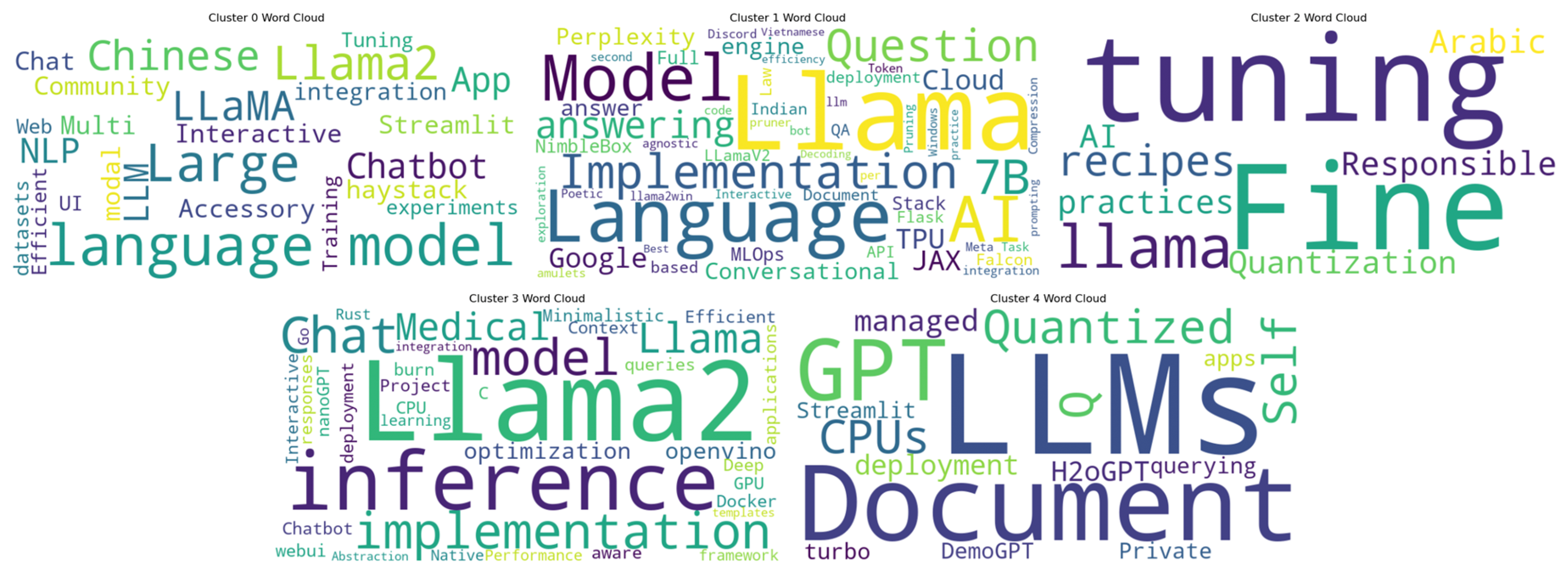
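The preprocessing, TF-IDF, and K-Means steps described above can be sketched in dependency-free Python as follows. This is not the authors' code, which the section does not show. Several choices are our own assumptions:

- the cleaning rule;
- farthest-first initialisation, a deterministic stand-in for the randomised k-means++ seeding that scikit-learn's `KMeans` uses by default;
- k = 3 for six sample rows taken from the project table.

The TF-IDF weighting follows scikit-learn's `TfidfVectorizer` defaults: raw term counts times a smoothed IDF, ln((1 + n) / (1 + df)) + 1, with each row L2-normalised.

```python
import math
import re
from collections import Counter


def preprocess(text: str) -> list[str]:
    """Lowercase, replace non-alphanumeric characters with spaces, and split into tokens."""
    cleaned = re.sub(r"[^a-z0-9\s]", " ", text.lower())
    return [tok for tok in cleaned.split() if len(tok) > 1]  # drop 1-char debris such as "q", "a"


def tfidf(docs: list[list[str]]) -> tuple[list[str], list[list[float]]]:
    """Raw counts x smoothed IDF, then L2-normalise each document vector."""
    n = len(docs)
    vocab = sorted({tok for doc in docs for tok in doc})
    df = Counter(tok for doc in docs for tok in set(doc))  # document frequency per term
    idf = {tok: math.log((1 + n) / (1 + df[tok])) + 1 for tok in vocab}
    rows = []
    for doc in docs:
        counts = Counter(doc)
        row = [counts[tok] * idf[tok] for tok in vocab]
        norm = math.sqrt(sum(x * x for x in row)) or 1.0
        rows.append([x / norm for x in row])
    return vocab, rows


def sq_dist(a: list[float], b: list[float]) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(vectors: list[list[float]], k: int, max_iter: int = 100) -> list[int]:
    """Lloyd's K-Means with deterministic farthest-first initialisation."""
    centroids = [list(vectors[0])]
    while len(centroids) < k:
        # Next seed: the point whose nearest existing centroid is farthest away.
        far = max(vectors, key=lambda v: min(sq_dist(v, c) for c in centroids))
        centroids.append(list(far))
    labels = None
    for _ in range(max_iter):
        # Assignment step: each vector joins its nearest centroid.
        new_labels = [min(range(k), key=lambda c: sq_dist(v, centroids[c])) for v in vectors]
        if new_labels == labels:
            break  # converged: no project changed cluster
        labels = new_labels
        # Update step: move each centroid to the mean of its members.
        for c in range(k):
            members = [v for v, lab in zip(vectors, labels) if lab == c]
            if members:  # keep the old centroid if a cluster empties
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    return labels


areas_of_focus = [  # a few "Areas of Focus" rows copied from the project table
    "Llama2 Chinese Community, Chinese NLP, Large language models",
    "LLaMA-2, Vietnamese language, Question answering",
    "LLamaV2-7b, Question answering, Document-based QA",
    "Llama2 Go implementation, Native inference, Performance optimization",
    "JAX Implementation, Llama 2 model, Google Cloud TPU",
    "Discord bot, Meta Llama 2, Interactive",
]
docs = [preprocess(a) for a in areas_of_focus]
vocab, X = tfidf(docs)
labels = kmeans(X, k=3)
for text, lab in zip(areas_of_focus, labels):
    print(lab, text)
```

On the full table, `k=5` would match the five clusters reported here. K-Means results depend on initialisation, however, so the cluster numbers this sketch produces need not coincide with those in the table.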

- Furthermore, we generate Word Clouds for each cluster, which display the most common keywords in each group. The size of each word in the Word Cloud reflects its frequency within the cluster [73]. By visually analyzing these Word Clouds, we gain insights into the main themes and focus areas within each cluster. The outcome of visualizing the Word Clouds for each cluster is depicted in Figure A1, presented in Appendix A.

- Cluster 0 - Language Model and Model Integration: Projects in Cluster 0 are predominantly related to language models, model compression, and integration with different frameworks or platforms. These projects seem to focus on improving the performance and efficiency of Llama 2 models for various applications.

- Cluster 1 - Language Model Applications: Cluster 1 comprises projects that involve language model applications in different languages, such as Chinese, Vietnamese, and Arabic. Additionally, this cluster includes projects related to question answering and document-based QA using Llama 2.

- Cluster 2 - Model Implementation and Inference: Projects in Cluster 2 center around the implementation and inference aspects of Llama 2 models, including implementations in specific programming languages (e.g., Go) and integration with hardware accelerators like Google Cloud TPU.

- Cluster 3 - Chatbot and Interactive Applications: Cluster 3 consists of projects primarily focused on chatbot development and interactive applications using Llama 2 models. These projects emphasize creating conversational AI solutions with the help of large language models.

- Cluster 4 - GPT-3.5 and Streamlit Apps: Projects in Cluster 4 are specifically associated with GPT-3.5 models and their applications in developing Streamlit apps. This cluster demonstrates the utilization of Llama 2 models in creating interactive and user-friendly applications.
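A word cloud is essentially a rendering of per-cluster keyword frequencies. The sketch below computes those frequencies for the three projects that the project table places in Cluster 4. It then maps the frequencies linearly onto font sizes. The stop-word list, the linear size mapping, and the names `keyword_counts` and `font_sizes` are illustrative assumptions. The `wordcloud` Python package, for example, accepts a word-to-frequency mapping through `WordCloud.generate_from_frequencies` and applies its own scaling.

```python
import re
from collections import Counter

STOPWORDS = {"on", "of", "the", "and", "in", "to", "for", "with"}  # assumed minimal stop-word list


def keyword_counts(rows: list[str]) -> Counter:
    """Count how often each cleaned token appears across one cluster's 'Areas of Focus'."""
    counts = Counter()
    for row in rows:
        tokens = re.sub(r"[^a-z0-9\s]", " ", row.lower()).split()
        counts.update(t for t in tokens if len(t) > 1 and t not in STOPWORDS)
    return counts


def font_sizes(counts: Counter, min_size: int = 10, max_size: int = 60) -> dict[str, int]:
    """Map frequencies linearly onto a font-size range: the most frequent word gets max_size."""
    lo, hi = min(counts.values()), max(counts.values())
    span = (hi - lo) or 1  # avoid division by zero when all counts are equal
    return {w: round(min_size + (max_size - min_size) * (c - lo) / span) for w, c in counts.items()}


cluster_4 = [  # rows labelled Cluster 4 in the project table
    "Quantized LLMs on CPUs, Document Q&A, Self-managed deployment",
    "H2oGPT, Document querying, Private GPT LLMs",
    "DemoGPT, GPT-3.5-turbo, Streamlit apps",
]
counts = keyword_counts(cluster_4)
sizes = font_sizes(counts)
for word, size in sorted(sizes.items(), key=lambda kv: -kv[1]):
    print(f"{word:12s} {size}")
```

Here "document", "llms", and "gpt" each appear twice, so they receive the largest size. This is the effect that the per-cluster word clouds make visible.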

6.3. Data Analysis Findings

- Hypothesis 1

- Null Hypothesis (H0): There is no significant difference between the audience's response to the open-source Llama 2 model and its response to closed-source models.

- Alternative Hypothesis (H1): There is a significant difference in the audience's response, with the open-source Llama 2 model experiencing faster development than closed-source models, as expected by Meta.

- Hypothesis 2

- Null Hypothesis (H0): Early adopters do not encounter significant challenges in deploying the Llama 2 model.

- Alternative Hypothesis (H1): Early adopters encounter significant challenges in deploying the Llama 2 model.

- Hypothesis 3

- Null Hypothesis (H0): Early adopters do not encounter significant challenges in fine-tuning the Llama 2 model.

- Alternative Hypothesis (H1): Early adopters encounter significant challenges in fine-tuning the Llama 2 model.

- Hypothesis 4

- Null Hypothesis (H0): There is no significant difference in the interest shown by early adopters of Llama 2 between the medical domain and other domains.

- Alternative Hypothesis (H1): Early adopters of Llama 2 prioritize the medical domain significantly more than other domains, indicating a greater interest in utilizing LLMs for medical applications.

7. Responsible AI and Ethical Considerations

- Bias Mitigation and Fairness: Early adopters' experiences with Llama 2 have highlighted the importance of addressing biases in AI outputs. Because Llama 2 is pretrained on diverse data sources, it may inadvertently inherit biases present in the training data. Researchers and developers must implement robust techniques to identify and mitigate these biases to ensure fair and equitable outcomes across diverse user populations [79,80,81].

- Transparency and Interpretability: The complexity of deep learning models like Llama 2 can make their decision-making processes difficult to understand. To promote transparency and interpretability, early adopters have emphasized the need for methods that provide insights into the model's internal workings. Future research should focus on developing techniques that make AI models more interpretable, enabling users to comprehend the rationale behind the model's predictions [82].

- Privacy and Data Protection: Llama 2's success heavily relies on the vast amount of data used during pretraining. Early adopters recognize the significance of safeguarding user data and respecting privacy concerns. Employing privacy-preserving methods, such as federated learning or differential privacy, can uphold the confidentiality of user data while ensuring the model's effectiveness [83,84].

- Ethical Use-Cases and Societal Impact: As AI technologies like Llama 2 become increasingly integrated into various domains, early adopters have stressed the importance of identifying and promoting ethically sound use cases. Research should also analyze the societal impact of Llama 2's deployment, considering its potential consequences for individuals, communities, and societal values. Striking a balance between innovation and responsible AI practices is crucial to harness the full potential of LLMs while mitigating unintended negative effects [85,86].

- Continuous Monitoring and Auditing: To maintain ethical AI practices, early adopters advocate for continuous monitoring and auditing of Llama 2's performance. Regular assessments can help identify potential biases or deviations in the model's behavior, enabling timely adjustments to ensure compliance with ethical standards [87,88].

- End-User Empowerment and Informed Consent: As AI models like Llama 2 become integral to user experiences, early adopters have emphasized the significance of end-user empowerment and informed consent. Users should be well-informed about the AI's involvement in their interactions and have the right to control and modify the extent of AI-driven recommendations or decisions [89].

8. Future Directions and Research

9. Discussion

- Application Diversity and Effectiveness: The investigation indicates a notable level of interest in Llama 2 among early adopters across a broad spectrum of AI projects. These adopters have successfully deployed the model on multiple platforms and technologies, particularly after fine-tuning it for domain-specific tasks. This observation suggests that the model is versatile across various AI tasks. It may therefore interest researchers and developers seeking a single model suitable for multiple applications.

- Early Adopters' Feedback and Challenges: Although feedback is still limited, early adopters reported minimal challenges in both deploying and fine-tuning Llama 2. This outcome reflects favorably on Meta for releasing the model together with a repository of recipes [40]. That repository appears to have contributed to a smooth implementation experience for adopters.

- Cross-Model Comparisons: In order to obtain a comprehensive assessment of Llama 2's standing within the AI landscape, it is suggested that future studies conduct comparative analyses with other prominent pretrained models. By undertaking cross-model comparisons, researchers can glean valuable insights into Llama 2's distinctive contributions, advantages, and areas in which it outperforms existing alternatives. Such analyses would aid in elucidating the specific strengths and capabilities of Llama 2, contributing to a more holistic understanding of its potential in the field of artificial intelligence.

- Extended Use Cases and Domains: While the present study provides insights into early adopters' deployment of Llama 2 in specific applications, future research endeavors could extend the investigation to encompass its implementation across additional domains and diverse use cases. Exploring Llama 2's potential in emerging fields, such as healthcare, finance, and environmental sciences, would not only exemplify its versatility but also widen its potential impact across various industries and research domains.

10. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

1. Touvron, H.; Martin, L.; Stone, K.; Albert, P.; Almahairi, A.; Babaei, Y.; Bashlykov, N.; Batra, S.; Bhargava, P.; Bhosale, S.; et al. Llama 2: Open Foundation and Fine-Tuned Chat Models. arXiv 2023, arXiv:2307.09288.

2. Meta and Microsoft Introduce the Next Generation of Llama. Available online: https://ai.meta.com/blog/llama-2/ (accessed on 28 July 2023).

3. Roumeliotis, K.I.; Tselikas, N.D. ChatGPT and Open-AI Models: A Preliminary Review. Future Internet 2023, 15, 192.

4. Dillion, D.; Tandon, N.; Gu, Y.; Gray, K. Can AI language models replace human participants? Trends in Cognitive Sciences 2023, 27, 597–600.

5. Rahali, A.; Akhloufi, M.A. End-to-End Transformer-Based Models in Textual-Based NLP. AI 2023, 4, 54–110.

6. Piris, Y.; Gay, A.-C. Customer satisfaction and natural language processing. Journal of Business Research 2021, 124, 264–271.

7. Dash, G.; Sharma, C.; Sharma, S. Sustainable Marketing and the Role of Social Media: An Experimental Study Using Natural Language Processing (NLP). Sustainability 2023, 15, 5443.

8. Arowosegbe, A.; Oyelade, T. Application of Natural Language Processing (NLP) in Detecting and Preventing Suicide Ideation: A Systematic Review. Int. J. Environ. Res. Public Health 2023, 20, 1514.

9. Tyagi, N.; Bhushan, B. Demystifying the role of natural language processing (NLP) in Smart City Applications: Background, motivation, recent advances, and future research directions. Wireless Personal Communications 2023, 130, 857–908.

10. Tyagi, N.; Bhushan, B. Demystifying the role of natural language processing (NLP) in Smart City Applications: Background, motivation, recent advances, and future research directions. Wireless Personal Communications 2023, 130, 857–908.

11. Pruneski, J.A.; Pareek, A.; Nwachukwu, B.U.; Martin, R.K.; Kelly, B.T.; Karlsson, J.; Pearle, A.D.; Kiapour, A.M.; Williams, R.J. Natural language processing: Using artificial intelligence to understand human language in orthopedics. Knee Surgery, Sports Traumatology, Arthroscopy 2022, 31, 1203–1211.

12. Mukhamadiyev, A.; Mukhiddinov, M.; Khujayarov, I.; Ochilov, M.; Cho, J. Development of Language Models for Continuous Uzbek Speech Recognition System. Sensors 2023, 23, 1145.

13. Ahmed, A.; Leroy, G.; Lu, H.Y.; Kauchak, D.; Stone, J.; Harber, P.; Rains, S.A.; Mishra, P.; Chitroda, B. Audio Delivery of Health Information: An NLP study of information difficulty and bias in listeners. Procedia Computer Science 2023, 219, 1509–1517.

14. Wang, J.; Xu, G.; Yan, F.; Wang, J.; Wang, Z. Defect transformer: An efficient hybrid transformer architecture for surface defect detection. Measurement 2023, 211, 112614.

15. Drosouli, I.; Voulodimos, A.; Mastorocostas, P.; Miaoulis, G.; Ghazanfarpour, D. TMD-BERT: A Transformer-Based Model for Transportation Mode Detection. Electronics 2023, 12, 581.

16. Philippi, D.; Rothaus, K.; Castelli, M. A Vision Transformer architecture for the automated segmentation of retinal lesions in spectral domain optical coherence tomography images. Scientific Reports 2023, 13.

17. Aleissaee, A.A.; Kumar, A.; Anwer, R.M.; Khan, S.; Cholakkal, H.; Xia, G.-S.; Khan, F.S. Transformers in Remote Sensing: A Survey. Remote Sens. 2023, 15, 1860.

18. Panopoulos, I.; Nikolaidis, S.; Venieris, S.I.; Venieris, I.S. Exploring the performance and efficiency of Transformer models for NLP on mobile devices. arXiv 2023, arXiv:2306.11426.

19. Ohri, K.; Kumar, M. Supervised fine-tuned approach for automated detection of diabetic retinopathy. Multimedia Tools and Applications 2023.

20. Li, H.; Zhu, C.; Zhang, Y.; Sun, Y.; Shui, Z.; Kuang, W.; Zheng, S.; Yang, L. Task-specific fine-tuning via variational information bottleneck for weakly-supervised pathology whole slide image classification. arXiv 2023, arXiv:2303.08446.

21. Lodagala, V.S.; Ghosh, S.; Umesh, S. PADA: Pruning assisted domain adaptation for self-supervised speech representations. In Proceedings of the 2022 IEEE Spoken Language Technology Workshop (SLT), 2023.

22. Han, X.; Zhang, Z.; Ding, N.; Gu, Y.; Liu, X.; Huo, Y.; Qiu, J.; Yao, Y.; Zhang, A.; Zhang, L.; et al. Pre-trained models: Past, present and future. AI Open 2021, 2, 225–250.

23. Prottasha, N.J.; Sami, A.A.; Kowsher, M.; Murad, S.A.; Bairagi, A.K.; Masud, M.; Baz, M. Transfer Learning for Sentiment Analysis Using BERT Based Supervised Fine-Tuning. Sensors 2022, 22, 4157.

24. Xu, Z.; Huang, S.; Zhang, Y.; Tao, D. Webly-supervised fine-grained visual categorization via deep domain adaptation. IEEE Transactions on Pattern Analysis and Machine Intelligence 2018, 40, 1100–1113.

25. Tang, C.I.; Qendro, L.; Spathis, D.; Kawsar, F.; Mascolo, C.; Mathur, A. Practical self-supervised continual learning with continual fine-tuning. arXiv 2023, arXiv:2303.17235.

26. Skelton, J. Llama 2: A model overview and demo tutorial with Paperspace Gradient. Available online: https://blog.paperspace.com/llama-2/ (accessed on 28 July 2023).

27. Hugging Face: meta-llama/Llama-2-7b. Available online: https://huggingface.co/meta-llama/Llama-2-7b (accessed on 28 July 2023).

28. Llama 2: Resource Overview. Meta AI. Available online: https://ai.meta.com/resources/models-and-libraries/llama/ (accessed on 28 July 2023).

29. Llama 2: Responsible Use Guide. Available online: https://ai.meta.com/llama/responsible-use-guide/ (accessed on 28 July 2023).

30. Llama 2 License Agreement. Available online: https://github.com/facebookresearch/llama/blob/main/LICENSE (accessed on 28 July 2023).

31. Inference code for Llama models. GitHub. Available online: https://github.com/facebookresearch/llama/tree/main (accessed on 28 July 2023).

32. Hugging Face: Llama 2 Models. Available online: https://huggingface.co/models?other=llama-2 (accessed on 28 July 2023).

33. Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, L.; Polosukhin, I. Attention is all you need. arXiv 2017, arXiv:1706.03762.

34. Sennrich, R.; Haddow, B.; Birch, A. Neural machine translation of rare words with subword units. arXiv 2016, arXiv:1508.07909.

35. Shazeer, N. GLU variants improve Transformer. arXiv 2020, arXiv:2002.05202.

36. Song, F.; Yu, B.; Li, M.; Yu, H.; Huang, F.; Li, Y.; Wang, H. Preference ranking optimization for human alignment. arXiv 2023, arXiv:2306.17492.

37. Taecharungroj, V. "What Can ChatGPT Do?" Analyzing Early Reactions to the Innovative AI Chatbot on Twitter. Big Data Cogn. Comput. 2023, 7, 35.

38. Sotnikov, V.; Chaikova, A. Language Models for Multimessenger Astronomy. Galaxies 2023, 11, 63.

39. Maroto-Gómez, M.; Castro-González, Á.; Castillo, J.C.; Malfaz, M.; Salichs, M.Á. An adaptive decision-making system supported on user preference predictions for Human–Robot Interactive Communication. User Modeling and User-Adapted Interaction 2022, 33, 359–403.

40. facebookresearch/llama-recipes: Examples and recipes for Llama 2 model. Available online: https://github.com/facebookresearch/llama-recipes (accessed on 28 July 2023).

41. karpathy/llama2.c: Inference Llama 2 in one file of pure C. Available online: https://github.com/karpathy/llama2.c (accessed on 28 July 2023).

42. FlagAlpha/Llama2-Chinese. Available online: https://github.com/FlagAlpha/Llama2-Chinese (accessed on 28 July 2023).

43. a16z-infra/llama2-chatbot. Available online: https://github.com/a16z-infra/llama2-chatbot (accessed on 28 July 2023).

44. liltom-eth/llama2-webui. Available online: https://github.com/liltom-eth/llama2-webui (accessed on 28 July 2023).

45. kennethleungty/Llama-2-Open-Source-LLM-CPU-Inference. Available online: https://github.com/kennethleungty/Llama-2-Open-Source-LLM-CPU-Inference (accessed on 28 July 2023).

46. soulteary/docker-llama2-chat. Available online: https://github.com/soulteary/docker-llama2-chat (accessed on 28 July 2023).

47. dataprofessor/llama2. Available online: https://github.com/dataprofessor/llama2 (accessed on 28 July 2023).

48. ayaka14732/llama-2-jax. Available online: https://github.com/ayaka14732/llama-2-jax (accessed on 28 July 2023).

49. Alpha-VLLM/LLaMA2-Accessory. Available online: https://github.com/Alpha-VLLM/LLaMA2-Accessory (accessed on 28 July 2023).

50. AIAnytime/Llama2-Medical-Chatbot. Available online: https://github.com/AIAnytime/Llama2-Medical-Chatbot (accessed on 28 July 2023).

51. anakin87/llama2-haystack. Available online: https://github.com/anakin87/llama2-haystack (accessed on 28 July 2023).

52. Llama2 Chatbot by Perplexity AI. Available online: https://labs.perplexity.ai/ (accessed on 28 July 2023).

53. Llama2 Chatbot by NimbleBox AI. Available online: https://chat.nbox.ai/ (accessed on 28 July 2023).

54. NisaarAgharia/Indian-LawyerGPT: Fine-tuning Falcon-7B and Llama 2 with QLoRA to create an advanced AI model with a profound understanding of the Indian legal context. Available online: https://github.com/NisaarAgharia/Indian-LawyerGPT (accessed on 28 July 2023).

55. 10deepaktripathi/Document-based_question_answering_system_using_LLamaV2-7b: Designed a question answering system using LLamaV2-7b, LangChain, and the vector database ChromaDB. Available online: https://github.com/10deepaktripathi/Document-based_question_answering_system_using_LLamaV2-7b#document-based_question_answering_system_using_llamav2-7b (accessed on 28 July 2023).

56. unconv/llama2-flask-api: ChatGPT-compatible API for Llama 2. Available online: https://github.com/unconv/llama2-flask-api (accessed on 28 July 2023).

57. blackle/amulets: Hunting amulets with Llama 2. Available online: https://github.com/blackle/amulets (accessed on 28 July 2023).

58. horseee/LLM-Pruner: On the structural pruning of large language models; supports LLaMA, Llama 2, Vicuna, Baichuan, etc. Available online: https://github.com/horseee/LLM-Pruner (accessed on 28 July 2023).

59. davidgm3/llama2-discord-bot: Chat with the new Llama 2 model on Discord. Available online: https://github.com/davidgm3/llama2-discord-bot (accessed on 28 July 2023).

60. h9-tect/llama2-qlora-finetunined-Arabic. Available online: https://github.com/h9-tect/llama2-qlora-finetunined-Arabic (accessed on 28 July 2023).

61. h2oai/h2ogpt: Private Q&A and summarization of documents+images or chat with local GPT, 100% private, Apache 2.0. Available online: https://gpt.h2o.ai/ and https://github.com/h2oai/h2ogpt (accessed on 28 July 2023).

62. Gadersd/llama2-burn: Llama 2 LLM ported to Rust Burn. Available online: https://github.com/Gadersd/llama2-burn (accessed on 28 July 2023).

63. ssbuild/llm_finetuning. Available online: https://github.com/ssbuild/llm_finetuning (accessed on 28 July 2023).

64. xunboo/llama2win: Run baby Llama 2 model in Windows. Available online: https://github.com/xunboo/llama2win (accessed on 28 July 2023).

65. longday1102/VietAI-experiment-LLaMA-2: LLaMA-2 model experiment. Available online: https://github.com/longday1102/VietAI-experiment-LLaMA-2 (accessed on 28 July 2023).

66. nikolaydubina/llama2.go: Llama-2 in pure Go. Available online: https://github.com/nikolaydubina/llama2.go (accessed on 28 July 2023).

67. OpenVINO-dev-contest/llama2.openvino. Available online: https://github.com/OpenVINO-dev-contest/llama2.openvino (accessed on 28 July 2023).

68. samrawal/llama2_chat_templater: Wrapper to easily generate the chat template for Llama 2. Available online: https://github.com/samrawal/llama2_chat_templater (accessed on 28 July 2023).

69. hiyouga/LLaMA-Efficient-Tuning: Easy-to-use fine-tuning framework using PEFT (PT+SFT+RLHF with QLoRA). Available online: https://github.com/hiyouga/LLaMA-Efficient-Tuning (accessed on 28 July 2023).

70. mosama1994/Llama2-Prompt-Reverse-Engineered: Llama 2 prompting style. Available online: https://github.com/mosama1994/Llama2-Prompt-Reverse-Engineered (accessed on 28 July 2023).

71. melih-unsal/DemoGPT. Available online: https://github.com/melih-unsal/DemoGPT (accessed on 28 July 2023).

72. Abualigah, L.; Gandomi, A.H.; Elaziz, M.A.; Hamad, H.A.; Omari, M.; Alshinwan, M.; Khasawneh, A.M. Advances in Meta-Heuristic Optimization Algorithms in Big Data Text Clustering. Electronics 2021, 10, 101.

73. Turki, T.; Roy, S.S. Novel Hate Speech Detection Using Word Cloud Visualization and Ensemble Learning Coupled with Count Vectorizer. Appl. Sci. 2022, 12, 6611.

74. Gupta, A.; Sharma, U. Machine learning based sentiment analysis of Hindi data with TF-IDF and count vectorization. In Proceedings of the 2022 7th International Conference on Computing, Communication and Security (ICCCS), 2022.

75. Hao, K. OpenAI is giving Microsoft exclusive access to its GPT-3 language model. MIT Technology Review. Available online: https://www.technologyreview.com/2020/09/23/1008729/openai-is-giving-microsoft-exclusive-access-to-its-gpt-3-language-model/ (accessed on 28 July 2023).

76. Bender, E.M.; Gebru, T.; McMillan-Major, A.; Shmitchell, S. On the dangers of stochastic parrots. In Proceedings of the 2021 ACM Conference on Fairness, Accountability, and Transparency, 2021.

77. Weidinger, L.; Mellor, J.; Rauh, M.; Griffin, C.; Uesato, J.; Huang, P.-S.; Cheng, M.; Glaese, M.; Balle, B.; Kasirzadeh, A.; et al. Ethical and social risks of harm from language models. arXiv 2021, arXiv:2112.04359.

78. Solaiman, I.; Talat, Z.; Agnew, W.; Ahmad, L.; Baker, D.; Blodgett, S.L.; Daumé III, H.; Dodge, J.; Evans, E.; Hooker, S.; et al. Evaluating the social impact of generative AI systems in systems and society. arXiv 2023, arXiv:2306.05949.

79. Li, Y.; Zhang, Y. Fairness of ChatGPT. arXiv 2023, arXiv:2305.18569.

80. Abramski, K.; Citraro, S.; Lombardi, L.; Rossetti, G.; Stella, M. Cognitive Network Science Reveals Bias in GPT-3, GPT-3.5 Turbo, and GPT-4 Mirroring Math Anxiety in High-School Students. Big Data Cogn. Comput. 2023, 7, 124.

81. Rozado, D. The Political Biases of ChatGPT. Soc. Sci. 2023, 12, 148.

82. Carvalho, D.V.; Pereira, E.M.; Cardoso, J.S. Machine Learning Interpretability: A Survey on Methods and Metrics. Electronics 2019, 8, 832.

83. Mazurek, G.; Małagocka, K. Perception of privacy and data protection in the context of the development of Artificial Intelligence. Journal of Management Analytics 2019, 6, 344–364.

84. Goldsteen, A.; Saadi, O.; Shmelkin, R.; Shachor, S.; Razinkov, N. AI Privacy Toolkit. SoftwareX 2023, 22, 101352.

85. Hagerty, A.; Rubinov, I. Global AI Ethics: A review of the social impacts and ethical implications of Artificial Intelligence. arXiv 2019, arXiv:1907.07892.

86. Khakurel, J.; Penzenstadler, B.; Porras, J.; Knutas, A.; Zhang, W. The Rise of Artificial Intelligence under the Lens of Sustainability. Technologies 2018, 6, 100.

87. Minkkinen, M.; Laine, J.; Mäntymäki, M. Continuous auditing of Artificial Intelligence: A conceptualization and assessment of tools and frameworks. Digital Society 2022, 1.

88. Mökander, J.; Floridi, L. Ethics-based auditing to develop trustworthy AI. Minds and Machines 2021, 31, 323–327.

89. Usmani, U.A.; Happonen, A.; Watada, J. Human-centered artificial intelligence: Designing for user empowerment and ethical considerations. In Proceedings of the 2023 5th International Congress on Human-Computer Interaction, Optimization and Robotic Applications (HORA), 2023.

90. Zeng, C.; Li, S.; Li, Q.; Hu, J.; Hu, J. A Survey on Machine Reading Comprehension—Tasks, Evaluation Metrics and Benchmark Datasets. Appl. Sci. 2020, 10, 7640.

91. Eleftheriadis, P.; Perikos, I.; Hatzilygeroudis, I. Evaluating Deep Learning Techniques for Natural Language Inference. Appl. Sci. 2023, 13, 2577.

| Llama 2: Early Adopters' Projects | Areas of Focus | Cluster |
|---|---|---|
| Recipes Repository [40] | llama-recipes, Fine-tuning, Responsible AI practices | 2 |
| Llama2.c [41] | Llama-2 C inference, nanoGPT, Minimalistic implementation | 3 |
| Llama2-Chinese [42] | Llama2 Chinese Community, Chinese NLP, Large language models | 0 |
| Llama2-chatbot [43] | LLaMA 2 Chatbot App, Interactive chatbot, Large language models | 0 |
| Llama2-webui [44] | llama2-webui, Llama 2 models, GPU/CPU inference | 3 |
| Llama-2-Open-Source-LLM-CPU-Inference [45] | Quantized LLMs on CPUs, Document Q&A, Self-managed deployment | 4 |
| Docker-llama2-chat [46] | Docker LLaMA2 Chat, Efficient deployment, Interactive chat applications | 3 |
| Llama2 [47] | Llama 2 Chat, Large Language Model, Streamlit app | 0 |
| Llama-2-jax [48] | JAX Implementation, Llama 2 model, Google Cloud TPU | 1 |
| LLaMA2-Accessory [49] | LLaMA2-Accessory, Large Language Models, Multi-modal LLMs | 0 |
| Llama2-Medical-Chatbot [50] | Llama2-Medical-Chatbot, Medical queries, Context-aware responses | 3 |
| Llama2-haystack [51] | llama2-haystack, Llama2 integration, NLP/LLM experiments | 0 |
| Llama2 Chatbot [52] | Perplexity AI, Conversational answer engine, Llama 2 implementation | 1 |
| Llama2 Chatbot [53] | NimbleBox AI, Full-Stack MLOps, Llama 2 deployment | 1 |
| Indian-LawyerGPT [54] | Falcon-7B, LLAMA 2, Indian Law AI | 1 |
| Document-based_question_answering_system_using_LLamaV2-7b [55] | LLamaV2-7b, Question answering, Document-based QA | 1 |
| Llama2-flask-api [56] | Llama 2, Flask API, Language Model integration | 1 |
| Amulets [57] | Llama 2, Poetic amulets, Language and code exploration | 1 |
| LLM-Pruner [58] | llm-pruner, Language Model Compression, Task-agnostic Pruning | 1 |
| Llama2-discord-bot [59] | Discord bot, Meta Llama 2, Interactive | 1 |
| Llama2-qlora-finetunined-Arabic [60] | Fine-tuning, Arabic, Quantization | 2 |
| H2ogpt [61] | H2oGPT, Document querying, Private GPT LLMs | 4 |
| Llama2-burn [62] | Llama2-burn Project, Deep learning framework, Rust implementation | 3 |
| Llm_finetuning [63] | Language models, Training, Chinese datasets | 0 |
| Llama2win [64] | llama2win, Llama 2 on Windows, Token-per-second efficiency | 1 |
| VietAI-experiment-LLaMA-2 [65] | LLaMA-2, Vietnamese language, Question answering | 1 |
| Llama2.go [66] | Llama2 Go implementation, Native inference, Performance optimization | 3 |
| Llama2.openvino [67] | llama2.openvino, OpenVINO integration, Model optimization | 3 |
| Llama2_chat_templater [68] | Chat templates, Llama2 models, Abstraction | 3 |
| LLaMA-Efficient-Tuning [69] | LLaMA Efficient Tuning, Large language models, Web UI | 0 |
| Llama2-Prompt-Reverse-Engineered [70] | Llama 2 prompting, Decoding, Best practice | 1 |
| DemoGPT [71] | DemoGPT, GPT-3.5-turbo, Streamlit apps | 4 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).