Submitted: 19 June 2023

Posted: 21 June 2023


Abstract

Keywords:

1. Introduction

2. Related Works

2.1. Convolutional Neural Network (CNN)

2.2. Approximate Neural Networks

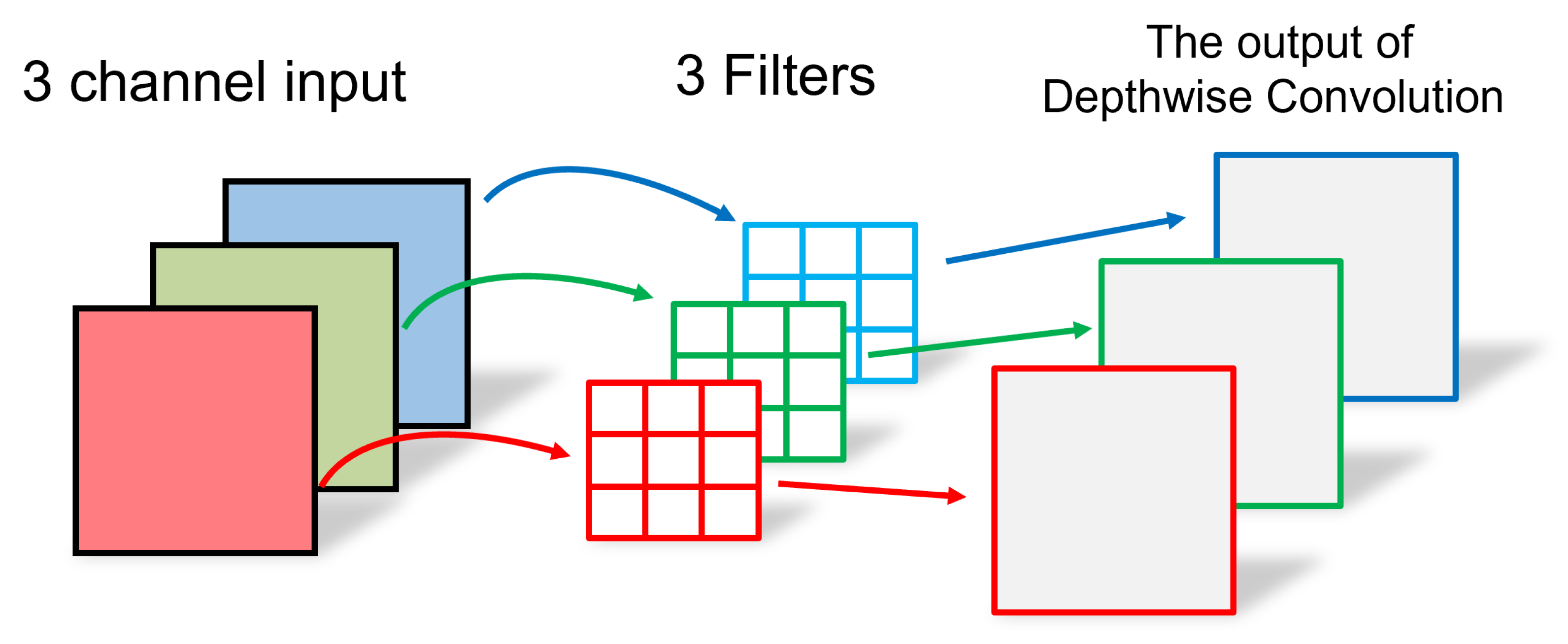

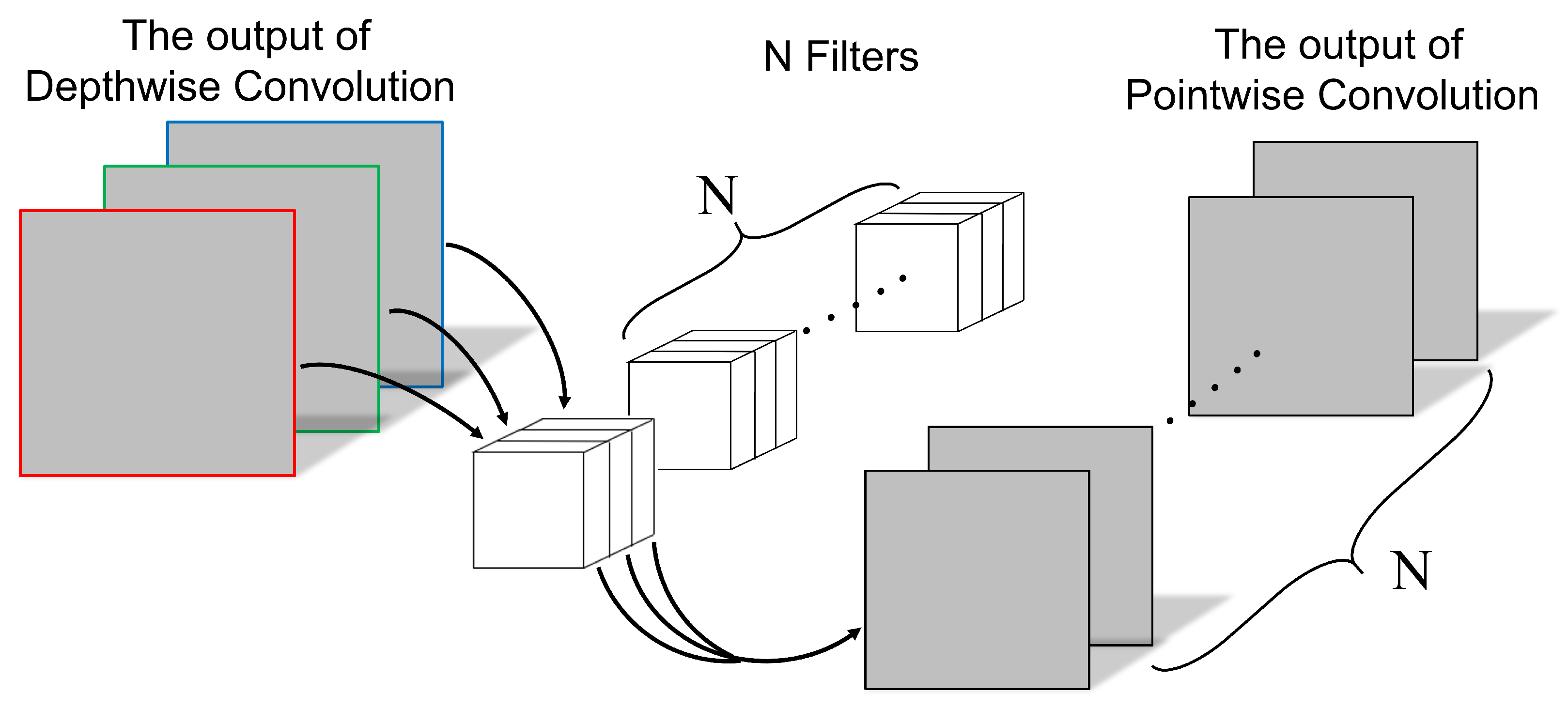

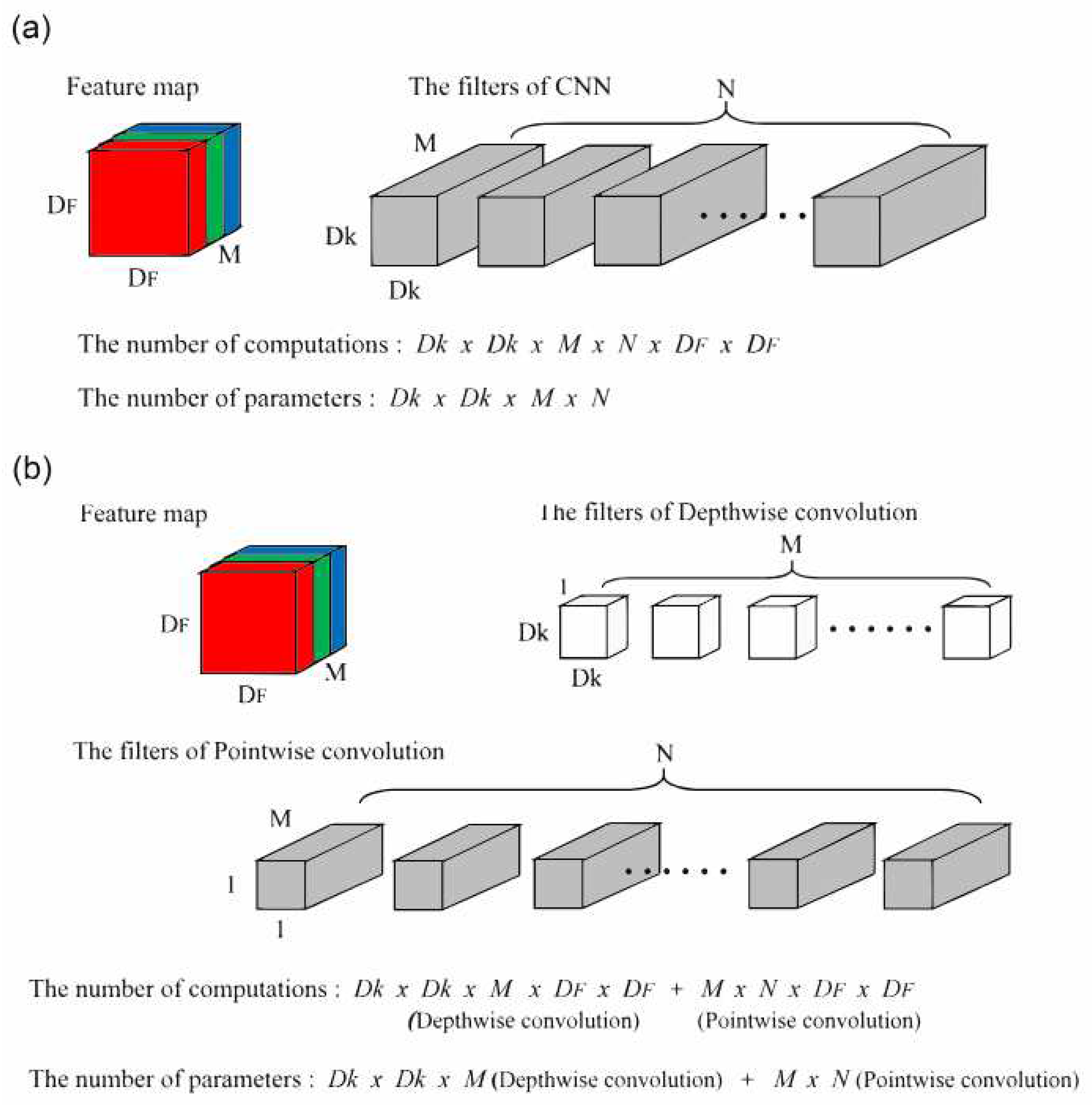

2.3. Depthwise Separable Convolution (DSC)

3. Proposed Method

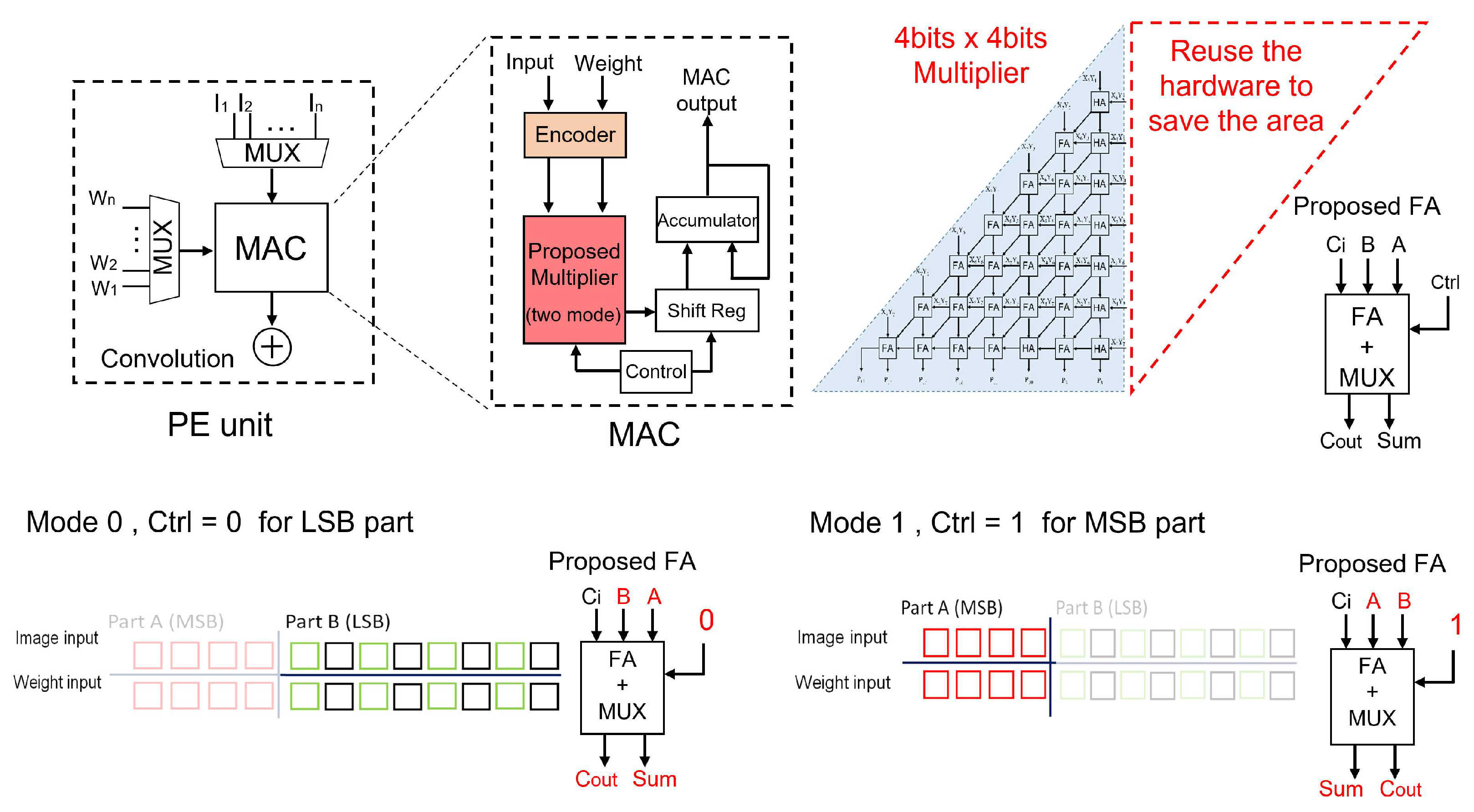

3.1. Multi-mode Approximate Multiplier

3.2. DSCNN with Multi-mode Approximate Multiplier

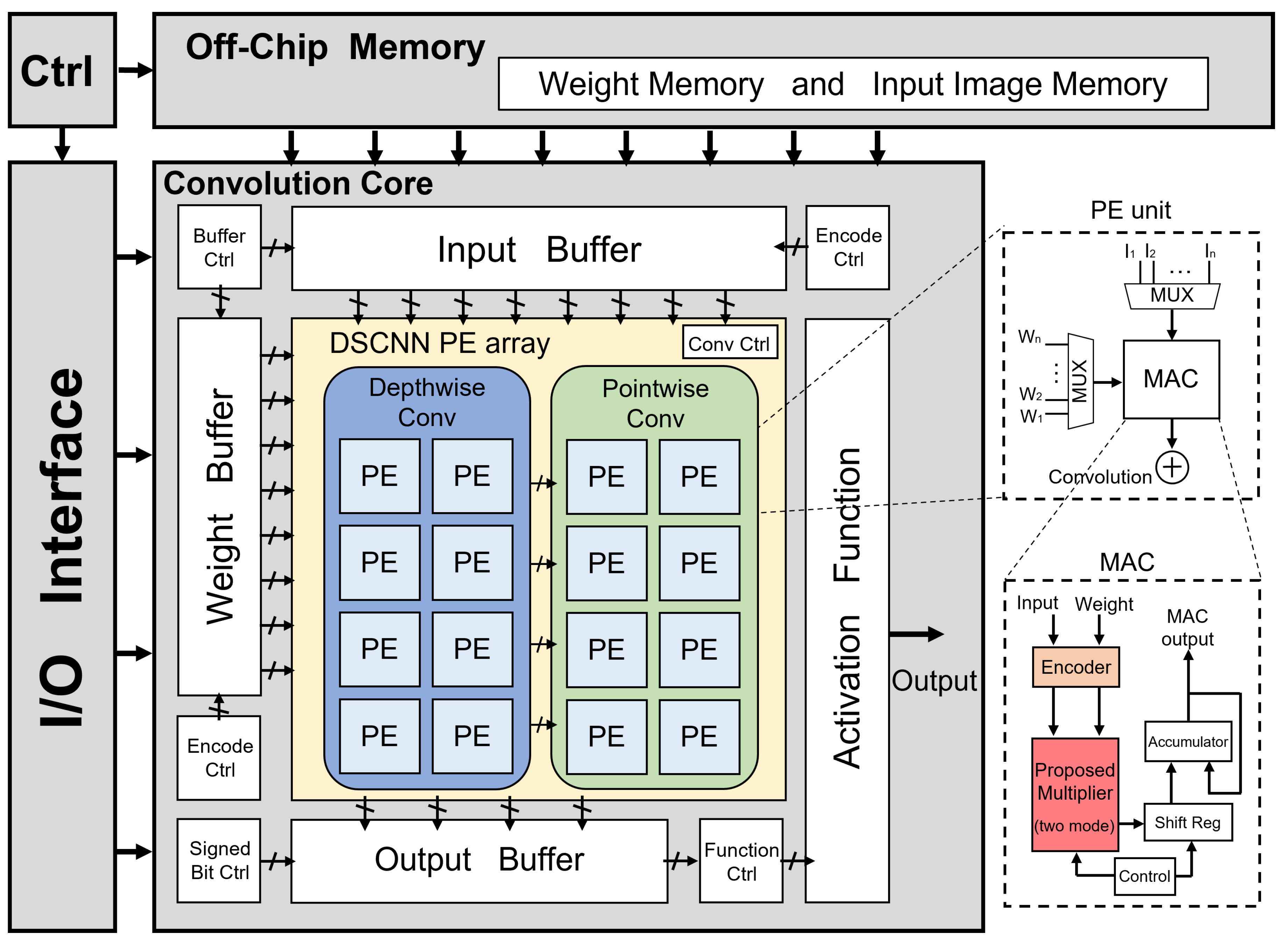

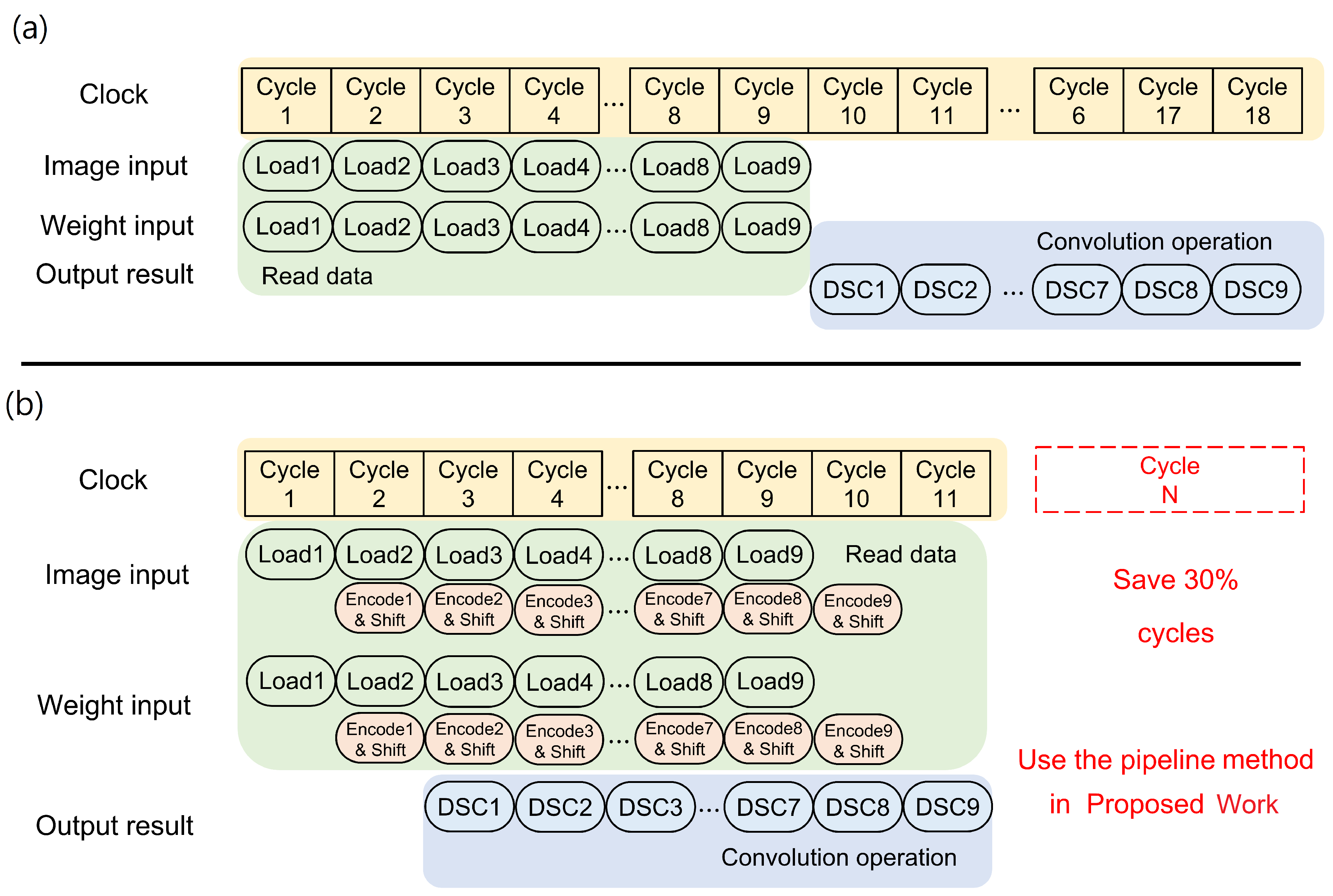

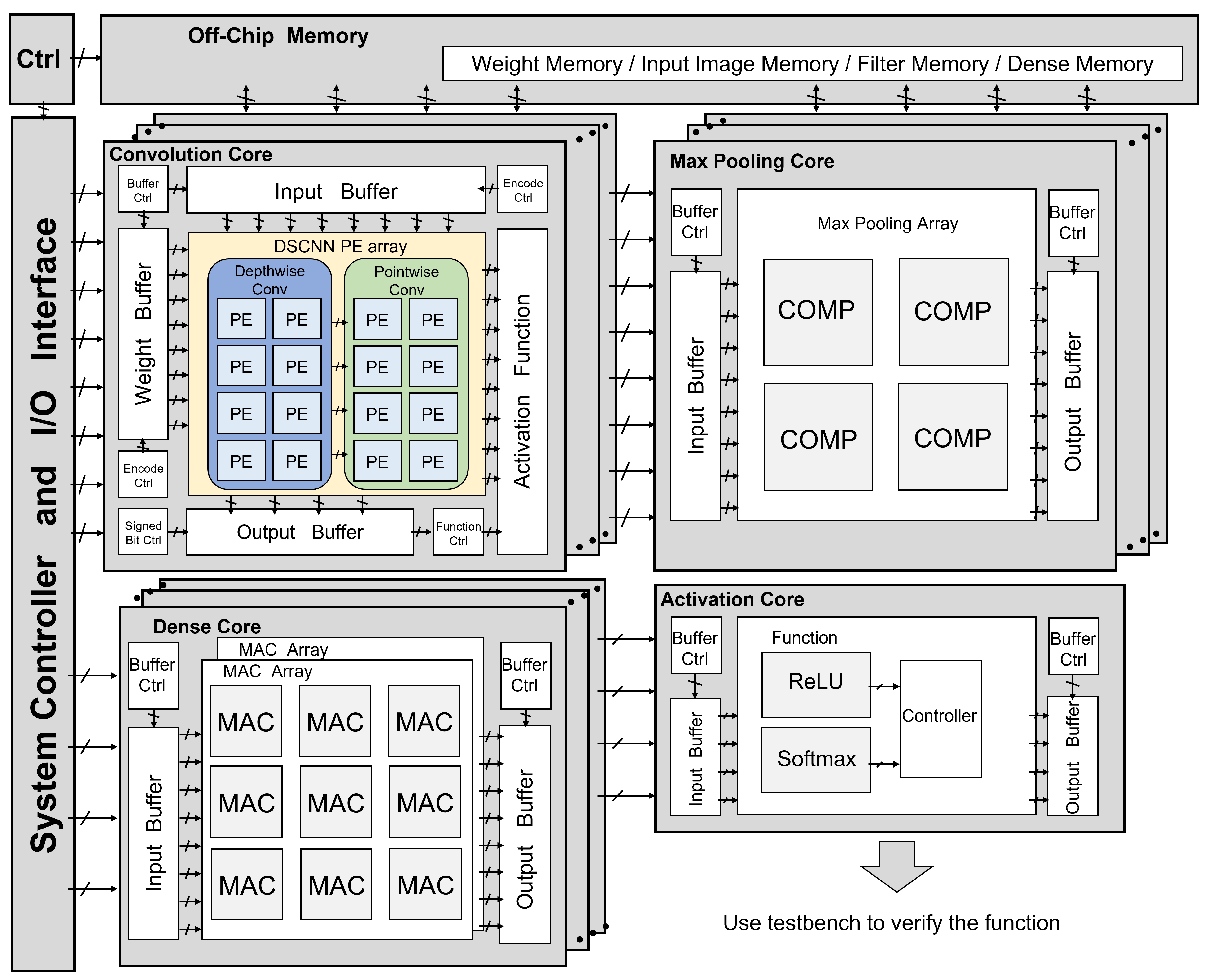

- First, the control unit loads the input image and the weights from off-chip memory into the input buffer and the weight buffer, respectively.

- The encoder control determines whether the data in the input and weight buffers should be encoded and produces the reformatted data accordingly.

- The reformatted data is then supplied to the convolution core in the A-DSCNN PE array for computation.

- The convolution control unit within the A-DSCNN PE array decides whether to perform depthwise convolution or pointwise convolution and generates a new job accordingly.

- The newly created job comprises a set of instructions pipelined for processing.

- After scheduling, individual instructions are sent to the multi-mode approximate multiplier for computation. Control signals determine whether the computed data needs to be shifted.

- Once the computations are complete, the results are accumulated and written back to the output buffer, completing the convolution operation.
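The dataflow above can be illustrated with a small software reference model of one depthwise separable layer: a depthwise stage, a pointwise stage, accumulation, and an optional shift before write-back. This is a minimal sketch under stated assumptions, not the authors' hardware: it assumes integer data, stride 1, and no padding, and it uses an exact product in place of the multi-mode approximate multiplier, whose internals are not modeled here. The names `exact_mul`, `depthwise_conv`, `pointwise_conv`, `dsc_layer`, and the `shift` parameter are hypothetical; passing a different `mul` function lets an approximate-multiplier model be swapped in.

```python
from typing import Callable, List

Matrix = List[List[int]]
FeatureMap = List[Matrix]  # indexed as [channel][row][column]
Multiplier = Callable[[int, int], int]


def exact_mul(a: int, b: int) -> int:
    """Hypothetical stand-in for the PE multiplier; an approximate
    multiplier model with the same signature can be passed instead."""
    return a * b


def depthwise_conv(fmap: FeatureMap, kernels: FeatureMap,
                   mul: Multiplier = exact_mul) -> FeatureMap:
    """Depthwise convolution: channel c is convolved only with kernel c
    (stride 1, no padding)."""
    out = []
    for x, w in zip(fmap, kernels):
        k = len(w)
        rows, cols = len(x) - k + 1, len(x[0]) - k + 1
        channel = []
        for i in range(rows):
            row = []
            for j in range(cols):
                acc = 0  # accumulate the k x k products for one output pixel
                for di in range(k):
                    for dj in range(k):
                        acc += mul(x[i + di][j + dj], w[di][dj])
                row.append(acc)
            channel.append(row)
        out.append(channel)
    return out


def pointwise_conv(fmap: FeatureMap, weights: Matrix,
                   mul: Multiplier = exact_mul) -> FeatureMap:
    """Pointwise (1 x 1) convolution: weights[o][c] scales input channel c
    into output channel o at every spatial position."""
    rows, cols = len(fmap[0]), len(fmap[0][0])
    return [
        [[sum(mul(fmap[c][i][j], w_o[c]) for c in range(len(fmap)))
          for j in range(cols)]
         for i in range(rows)]
        for w_o in weights
    ]


def dsc_layer(fmap: FeatureMap, dw_kernels: FeatureMap, pw_weights: Matrix,
              mul: Multiplier = exact_mul, shift: int = 0) -> FeatureMap:
    """Depthwise stage, then pointwise stage; `shift` is a hypothetical
    right shift applied to the accumulated results before write-back."""
    dw = depthwise_conv(fmap, dw_kernels, mul)
    pw = pointwise_conv(dw, pw_weights, mul)
    return [[[v >> shift for v in row] for row in ch] for ch in pw]


fmap = [[[1, 2, 3], [4, 5, 6], [7, 8, 9]],
        [[1, 1, 1], [1, 1, 1], [1, 1, 1]]]
dw_kernels = [[[1, 0], [0, 1]], [[1, 1], [1, 1]]]
pw_weights = [[1, 1], [2, 0]]
print(dsc_layer(fmap, dw_kernels, pw_weights))
# prints [[[10, 12], [16, 18]], [[12, 16], [24, 28]]]
```

Keeping the multiplier as a parameter mirrors the separation in the list above between the convolution control (which stage runs) and the multiplier (how each product is computed).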

4. Performance Results

5. Conclusion

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| A-DSCNN | Approximate DSCNN |
| AI | Artificial Intelligence |
| ASIC | Application-Specific Integrated Circuit |
| CIFAR | Canadian Institute for Advanced Research |
| CMOS | Complementary Metal-Oxide-Semiconductor |
| CNN | Convolutional Neural Network |
| DSC | Depthwise Separable Convolution |
| DSCNN | Depthwise Separable CNN |
| DWC | Depthwise Convolution |
| EDA | Electronic Design Automation |
| HDL | Hardware Description Language |
| IC | Integrated Circuit |
| LSB | Least Significant Bit |
| MAC | Multiply-Accumulate |
| MSB | Most Significant Bit |
| PE | Processing Element |
| PnR | Place and Route |
| PWC | Pointwise Convolution |
| ReLU | Rectified Linear Unit |
| TSMC | Taiwan Semiconductor Manufacturing Company |
| VGG | Visual Geometry Group |

References

1. Kulkarni, P.; Gupta, P.; Ercegovac, M. Trading Accuracy for Power with an Underdesigned Multiplier Architecture. 2011 24th International Conference on VLSI Design, 2011, pp. 346–351.

2. Shin, D.; Gupta, S.K. Approximate logic synthesis for error tolerant applications. 2010 Design, Automation and Test in Europe Conference and Exhibition (DATE 2010), 2010, pp. 957–960.

3. Gupta, V.; Mohapatra, D.; Raghunathan, A.; Roy, K. Low-Power Digital Signal Processing Using Approximate Adders. IEEE Transactions on Computer-Aided Design of Integrated Circuits and Systems 2013, 32, 124–137.

4. Mahdiani, H.R.; Ahmadi, A.; Fakhraie, S.M.; Lucas, C. Bio-Inspired Imprecise Computational Blocks for Efficient VLSI Implementation of Soft-Computing Applications. IEEE Transactions on Circuits and Systems I: Regular Papers 2010, 57, 850–862.

5. Shin, D.; Gupta, S.K. A Re-design Technique for Datapath Modules in Error Tolerant Applications. 2008 17th Asian Test Symposium, 2008, pp. 431–437.

6. Elbtity, M.E.; Son, H.W.; Lee, D.Y.; Kim, H. High Speed, Approximate Arithmetic Based Convolutional Neural Network Accelerator. 2020 International SoC Design Conference (ISOCC), 2020, pp. 71–72.

7. Jou, J.M.; Kuang, S.R.; Chen, R.D. Design of low-error fixed-width multipliers for DSP applications. IEEE Transactions on Circuits and Systems II: Analog and Digital Signal Processing 1999, 46, 836–842.

8. Guo, C.; Zhang, L.; Zhou, X.; Qian, W.; Zhuo, C. A Reconfigurable Approximate Multiplier for Quantized CNN Applications. 2020 25th Asia and South Pacific Design Automation Conference (ASP-DAC), 2020, pp. 235–240.

9. Chen, Y.H.; Krishna, T.; Emer, J.S.; Sze, V. Eyeriss: An Energy-Efficient Reconfigurable Accelerator for Deep Convolutional Neural Networks. IEEE Journal of Solid-State Circuits 2017, 52, 127–138.

10. Yue, J.; Liu, Y.; Yuan, Z.; Wang, Z.; Guo, Q.; Li, J.; Yang, C.; Yang, H. A 3.77TOPS/W Convolutional Neural Network Processor With Priority-Driven Kernel Optimization. IEEE Transactions on Circuits and Systems II: Express Briefs 2019, 66, 277–281.

11. Spagnolo, F.; Perri, S.; Corsonello, P. Approximate Down-Sampling Strategy for Power-Constrained Intelligent Systems. IEEE Access 2022, 10, 7073–7081.

12. Howard, A.G.; Zhu, M.; Chen, B.; Kalenichenko, D.; Wang, W.; Weyand, T.; Andreetto, M.; Adam, H. MobileNets: Efficient Convolutional Neural Networks for Mobile Vision Applications. arXiv 2017, arXiv:1704.04861.

13. Chen, Y.G.; Chiang, H.Y.; Hsu, C.W.; Hsieh, T.H.; Jou, J.Y. A Reconfigurable Accelerator Design for Quantized Depthwise Separable Convolutions. 2021 18th International SoC Design Conference (ISOCC), 2021, pp. 290–291.

14. Li, B.; Wang, H.; Zhang, X.; Ren, J.; Liu, L.; Sun, H.; Zheng, N. Dynamic Dataflow Scheduling and Computation Mapping Techniques for Efficient Depthwise Separable Convolution Acceleration. IEEE Transactions on Circuits and Systems I: Regular Papers 2021, 68, 3279–3292.

15. Chong, Y.S.; Goh, W.L.; Ong, Y.S.; Nambiar, V.P.; Do, A.T. An Energy-Efficient Convolution Unit for Depthwise Separable Convolutional Neural Networks. 2021 IEEE International Symposium on Circuits and Systems (ISCAS), 2021, pp. 1–5.

16. Balasubramanian, P.; Nayar, R.; Maskell, D.L. Approximate Array Multipliers. Electronics 2021, 10.

17. Simonyan, K.; Zisserman, A. Very deep convolutional networks for large-scale image recognition. arXiv 2014, arXiv:1409.1556.

18. Krizhevsky, A.; Hinton, G. Convolutional deep belief networks on CIFAR-10. Master’s thesis, University of Toronto, 2010.

19. Cadence: Computational Software for Intelligent System Design. https://www.cadence.com/en_US/home.html. Accessed: 2023-03-30.

20. Synopsys: EDA Tools, Semiconductor IP and Application Security Solutions. https://www.synopsys.com/. Accessed: 2023-03-30.

| | Mode-0/1 | Standard Multiplier |
|---|---|---|
| Number of Bits | 12 | |
| Input Pattern | Random Numbers () | |
| RMSE | 19,259.06 | 19,017.31 |
| Normalized RMSE | 1.2% | - |

Maximum: 12 bits × 12 bits = 16,777,216 (4,096 × 4,096)
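To make the RMSE and normalized-RMSE rows concrete, the sketch below measures both quantities for a deliberately simple approximate multiplier that zeroes the k least significant bits of each 12-bit operand before multiplying. This truncation scheme is an illustrative assumption and is not the Mode-0/1 design evaluated in the table; `truncated_mul`, `rmse_vs_exact`, the sample count, and the seed are hypothetical. As in the table note, the RMSE is normalized by the 4,096 × 4,096 maximum.

```python
import math
import random

MAX_PRODUCT = 4096 * 4096  # normalization constant from the table note


def truncated_mul(a: int, b: int, k: int) -> int:
    """Hypothetical approximate multiplier: clear the k LSBs of each operand."""
    mask = ~((1 << k) - 1)  # k = 0 gives mask -1, i.e. an exact product
    return (a & mask) * (b & mask)


def rmse_vs_exact(k: int, bits: int = 12, samples: int = 20_000,
                  seed: int = 0) -> tuple:
    """RMSE of truncated_mul against the exact product over uniformly random
    unsigned operands, plus the RMSE normalized by MAX_PRODUCT."""
    rng = random.Random(seed)
    hi = (1 << bits) - 1
    sq_err = 0
    for _ in range(samples):
        a, b = rng.randint(0, hi), rng.randint(0, hi)
        err = a * b - truncated_mul(a, b, k)
        sq_err += err * err
    rmse = math.sqrt(sq_err / samples)
    return rmse, rmse / MAX_PRODUCT


for k in (0, 2, 4):
    rmse, nrmse = rmse_vs_exact(k)
    print(f"k={k}: RMSE={rmse:,.2f}, normalized={nrmse:.4%}")
```

Because clearing more bits can only shrink each unsigned operand, the error grows monotonically with k, which is the accuracy-for-hardware trade-off that approximate multipliers exploit.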

| Performance | Eyeriss [9] | KOP3 [10] | Energy-Efficient [15] | A-DSCNN (This Work) |
|---|---|---|---|---|
| Process Technology | TSMC 40-nm | TSMC 40-nm | TSMC 40-nm | TSMC 40-nm |
| Frequency (MHz) | 200 | 200 | 200 | 200 |
| Voltage (V) | 0.9 | 0.9 | 0.9 | 0.9 |
| Power (mW) | 364.76 | 153.51 | 126.92 | 95.04 |
| Area (mm²) | 7.086 | 2.302 | 0.846 | 0.398 |
| Accuracy Loss (%) | - | - | 3.3 | 3.8 |
| Throughput (GOPs) | 175.08 | 383.77 | 397.25 | 464.79 |
| Efficiency (GOPs/mW) | 0.48 | 2.5 | 3.13 | 4.88 |
| Normalized (ratio) | | | | |
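The efficiency row is throughput divided by power. A quick check using the table's own numbers (the dictionary below simply copies them) reproduces the reported ratios to within 0.01 GOPs/mW; the A-DSCNN entry computes to about 4.89 against the 4.88 listed. The names `designs` and `efficiency` are illustrative.

```python
# Throughput (GOPs) and power (mW) copied from the comparison table.
designs = {
    "Eyeriss [9]": (175.08, 364.76),
    "KOP3 [10]": (383.77, 153.51),
    "Energy-Efficient [15]": (397.25, 126.92),
    "A-DSCNN": (464.79, 95.04),
}


def efficiency(throughput_gops: float, power_mw: float) -> float:
    """Energy efficiency in GOPs/mW."""
    return throughput_gops / power_mw


for name, (gops, mw) in designs.items():
    print(f"{name}: {efficiency(gops, mw):.2f} GOPs/mW")
```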

| VGG16 | Modified VGG-net |
|---|---|
| {Conv, 64, ReLU} × 2 | DSC, 8, ReLU |
| Max-pooling | DSC, 8, ReLU |
| {Conv, 128, ReLU} × 2 | Max-pooling |
| Max-pooling | DSC, 16, ReLU |
| {Conv, 256, ReLU} × 3 | DSC, 16, ReLU |
| Max-pooling | Max-pooling |
| {Conv, 512, ReLU} × 3 | DSC, 32, ReLU |
| Max-pooling | DSC, 32, ReLU |
| {Conv, 512, ReLU} × 3 | Max-pooling |
| Max-pooling | Flatten, 128 |
| Dense, 4096, ReLU | Dense, 10, Softmax |
| Dense, 4096, ReLU | |
| Dense, 10, Softmax | |
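The saving from replacing standard convolutions with DSC layers follows from the usual weight count: a k × k standard convolution has k²·C_in·C_out weights, whereas a DSC layer has k²·C_in depthwise weights plus C_in·C_out pointwise weights, a ratio of 1/C_out + 1/k². The sketch applies this count to the DSC widths in the modified VGG-net column. It assumes 3 × 3 kernels, no biases, and 3 input channels (RGB CIFAR-10 images); none of these settings is stated in the table, and `conv_params` and `dsc_params` are hypothetical helper names.

```python
def conv_params(c_in: int, c_out: int, k: int = 3) -> int:
    """Weights in a standard k x k convolution (biases ignored)."""
    return k * k * c_in * c_out


def dsc_params(c_in: int, c_out: int, k: int = 3) -> int:
    """Weights in a depthwise separable convolution:
    k x k depthwise filters plus a 1 x 1 pointwise projection."""
    return k * k * c_in + c_in * c_out


# DSC output widths from the modified VGG-net column (assumed 3-channel input).
widths = [8, 8, 16, 16, 32, 32]
c_in = 3
for c_out in widths:
    std, dsc = conv_params(c_in, c_out), dsc_params(c_in, c_out)
    print(f"{c_in:>2} -> {c_out:>2}: standard={std:>5}, "
          f"DSC={dsc:>4}, ratio={dsc / std:.3f}")
    c_in = c_out
```

Fewer weights also means proportionally fewer multiplications per output pixel, which is why DSC suits a multiplier-bound PE array.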

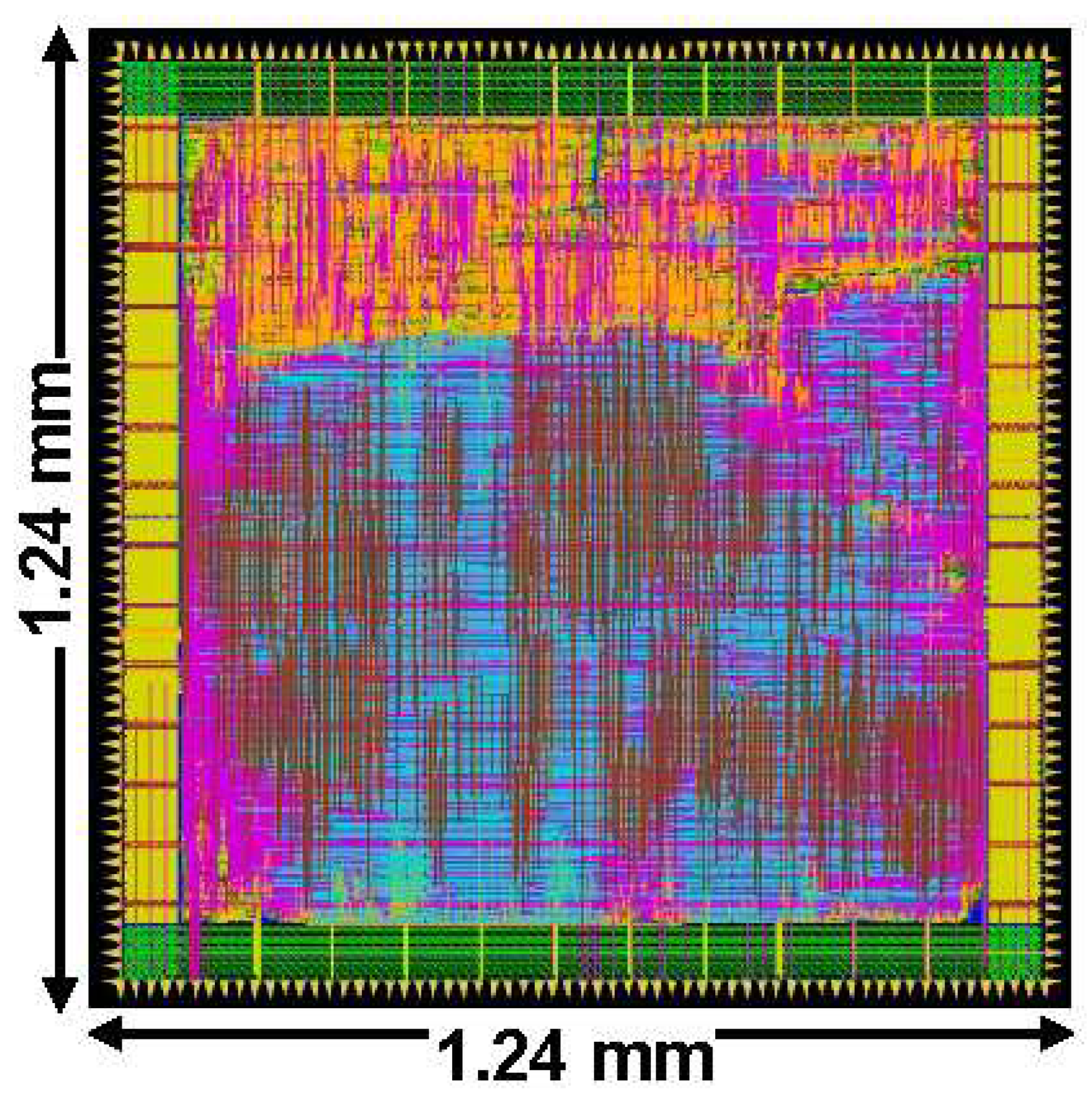

| Performance | Proposed DSCNN chip |
|---|---|
| Process Technology | TSMC 40-nm CMOS |
| Frequency | 200 MHz |
| Voltage Supply | 0.9 V |
| Chip Size | 1.24 mm × 1.24 mm |
| Chip Area | 1.16 mm² |
| Core Power | 486.81 mW |
| Efficiency | 4.78 GOPs/mW |
| Precision | 4-bit/8-bit |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).