2. Theoretical background and Main theorem

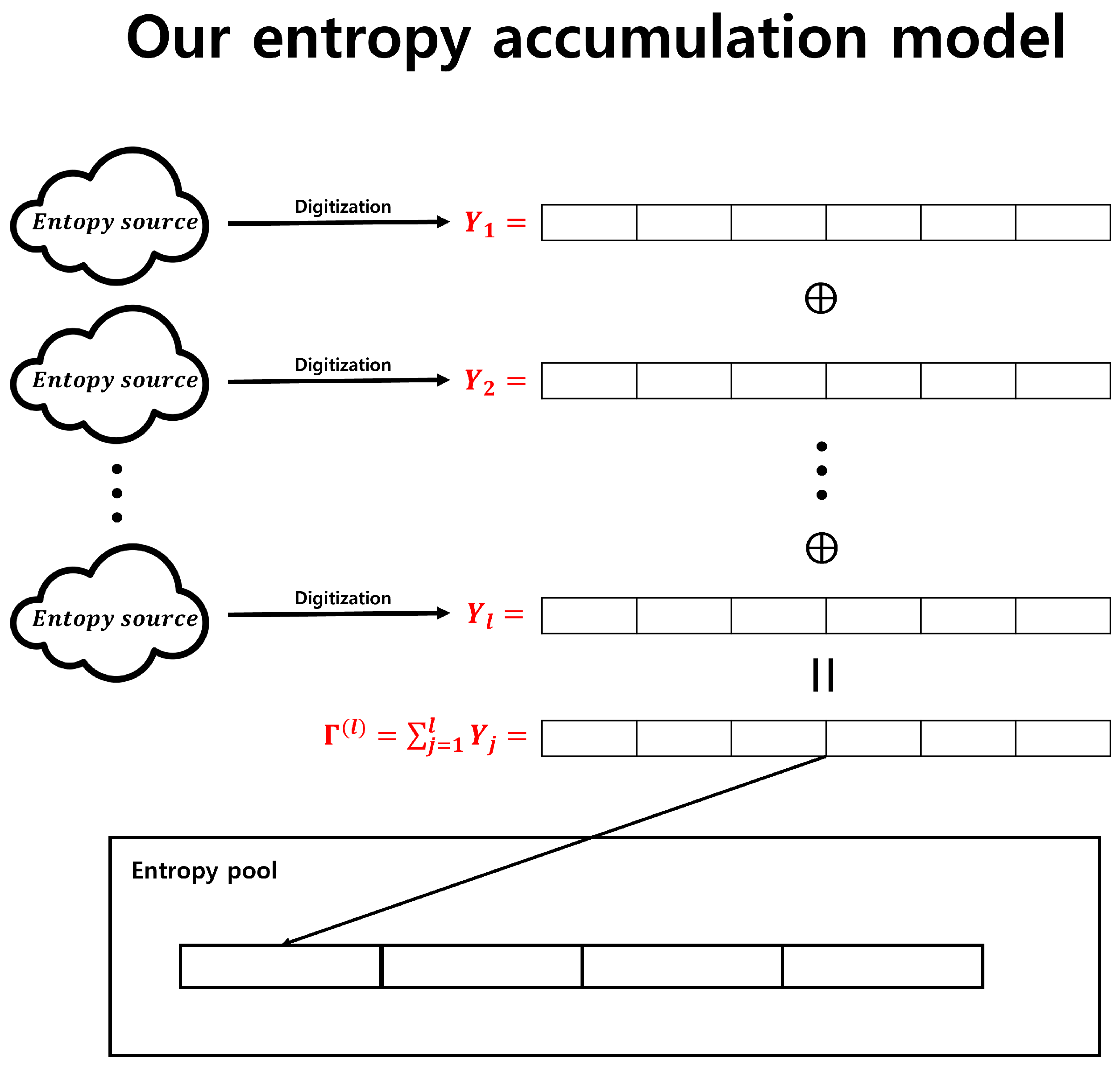

In this section, we describe the theoretical background of entropy accumulation using only the XOR operation. In particular, we use the following notation:

$\mathbb{Z}_2^n$: Direct product of $n$ copies of the group $\mathbb{Z}_2$. Note that the bitwise XOR operation corresponds to the $+$ operation over $\mathbb{Z}_2^n$.

$L(\mathbb{Z}_2^n)$: The space of all complex-valued functions on $\mathbb{Z}_2^n$.

$S_l = X_1 \oplus X_2 \oplus \cdots \oplus X_l$, where each $X_i$ is a random variable that represents the $i$-th input sequence.

, .

, .

$D_X$: Probability distribution of the random variable $X$.

$H_\infty(X) = -\log_2 \max_{x \in \mathbb{Z}_2^n} D_X(x)$ denotes the min-entropy of $X$.
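As a small illustration of the min-entropy definition, the Python sketch below evaluates it for a distribution on $\mathbb{Z}_2^n$. It assumes, for illustration only, that a distribution is stored as a list of probabilities indexed by the integer encoding of $x$ (bit $i$ of the index is the $i$-th coordinate); the function name `min_entropy` and the example distributions are hypothetical.

```python
import math

def min_entropy(dist):
    """Min-entropy -log2(max_x Pr[X = x]) of a distribution on Z_2^n.

    dist[x] is Pr[X = x], where bit i of the integer x is the i-th coordinate.
    """
    total = sum(dist)
    if not math.isclose(total, 1.0, abs_tol=1e-9):
        raise ValueError(f"probabilities sum to {total}, not 1")
    return -math.log2(max(dist))

# Hypothetical distributions on Z_2^2 (n = 2).
print(min_entropy([0.4, 0.3, 0.2, 0.1]))  # -log2(0.4), about 1.32 bits
print(min_entropy([0.25] * 4))            # uniform: the maximum, n = 2 bits
```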

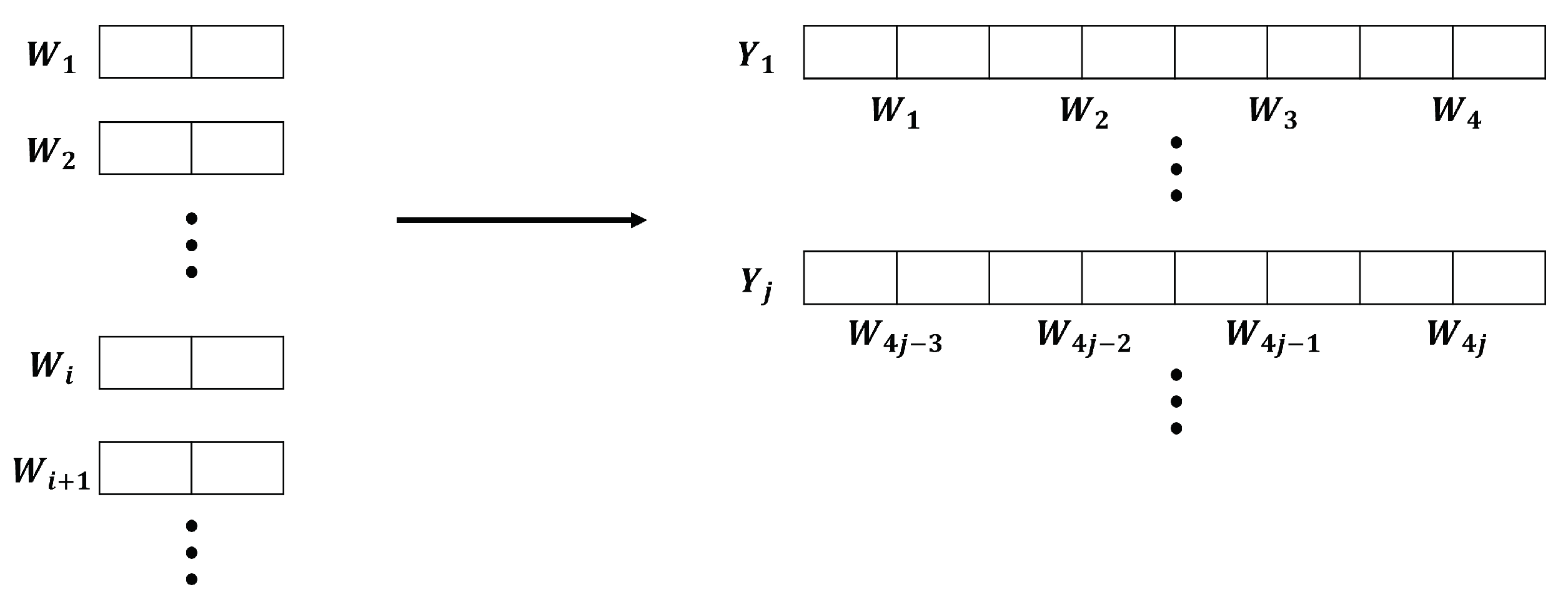

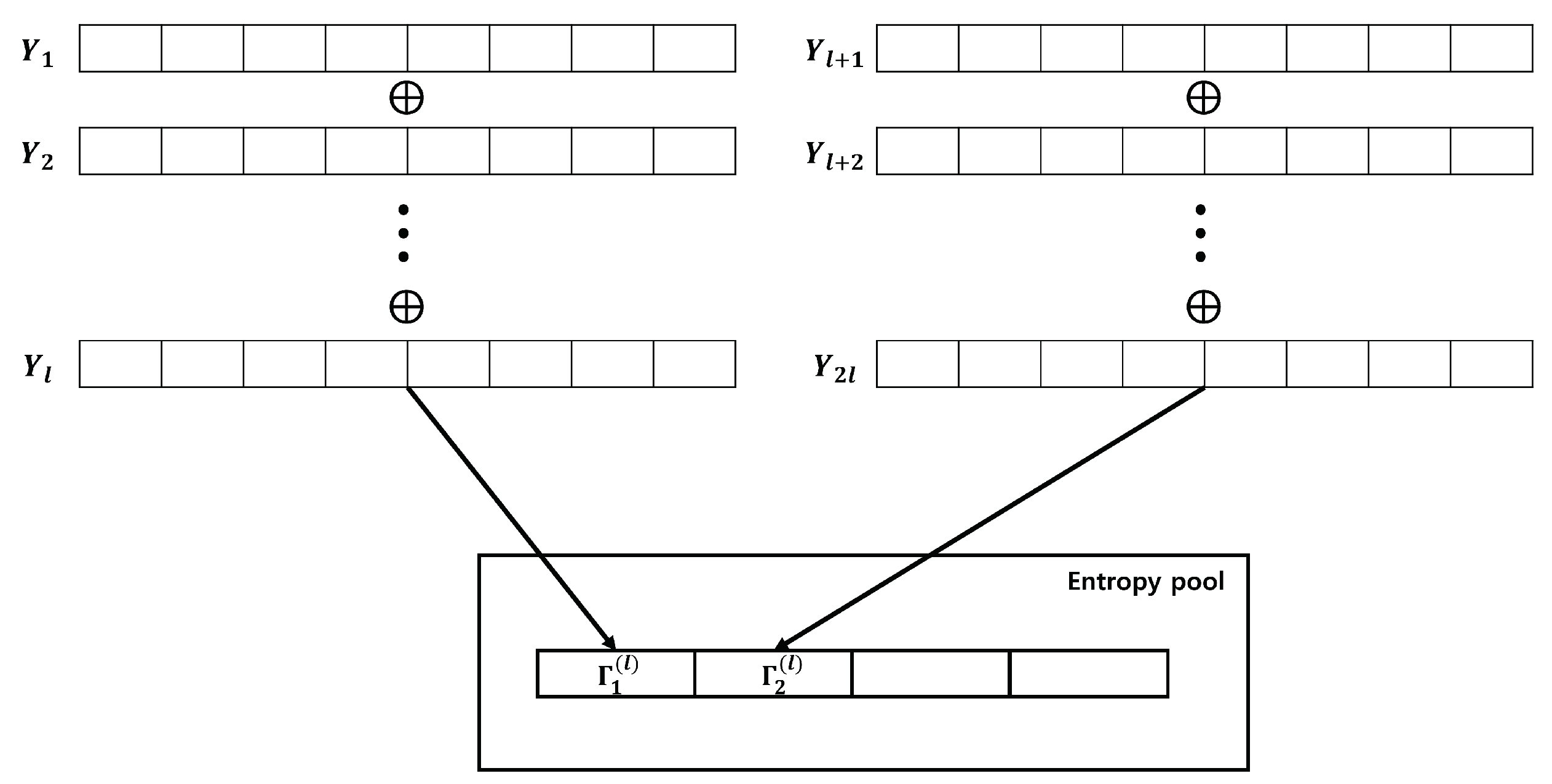

We show that, as the number $l$ of input sequences required to generate one output sequence approaches infinity, $H_\infty(S_l)$ converges to $n$. Furthermore, we provide the optimal value of $l$ necessary to exceed a specified min-entropy. First, we provide a solution for the case $n = 1$ and explain why this solution is inappropriate for the general case. Thereafter, we provide a general solution using the Discrete Fourier Transform and convolution.

First, we show why the problem we’re trying to solve is challenging. The difficult point of our problem is that in order to determine the value of

, complex linear operations must be performed on the function values of

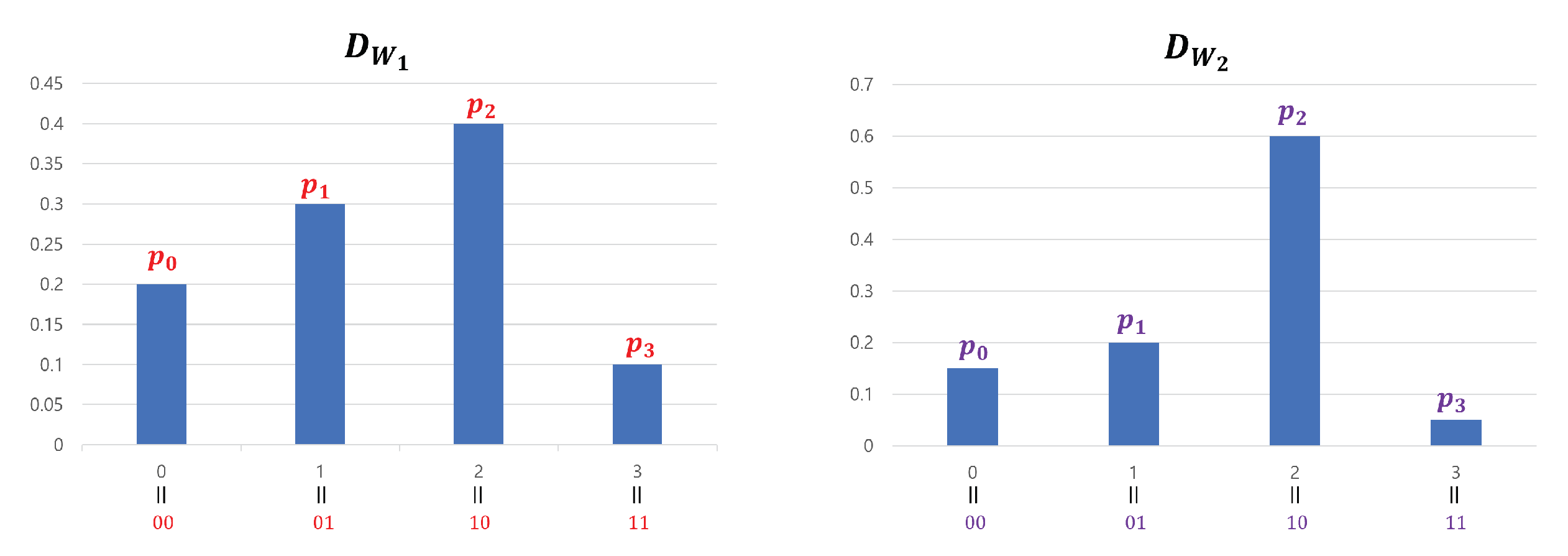

. For example, Suppose

,

are independent, and

are identical to the distribution

D. The distribution

D is determined as

. Let us calculate

which suffices to show the complexity of computation.

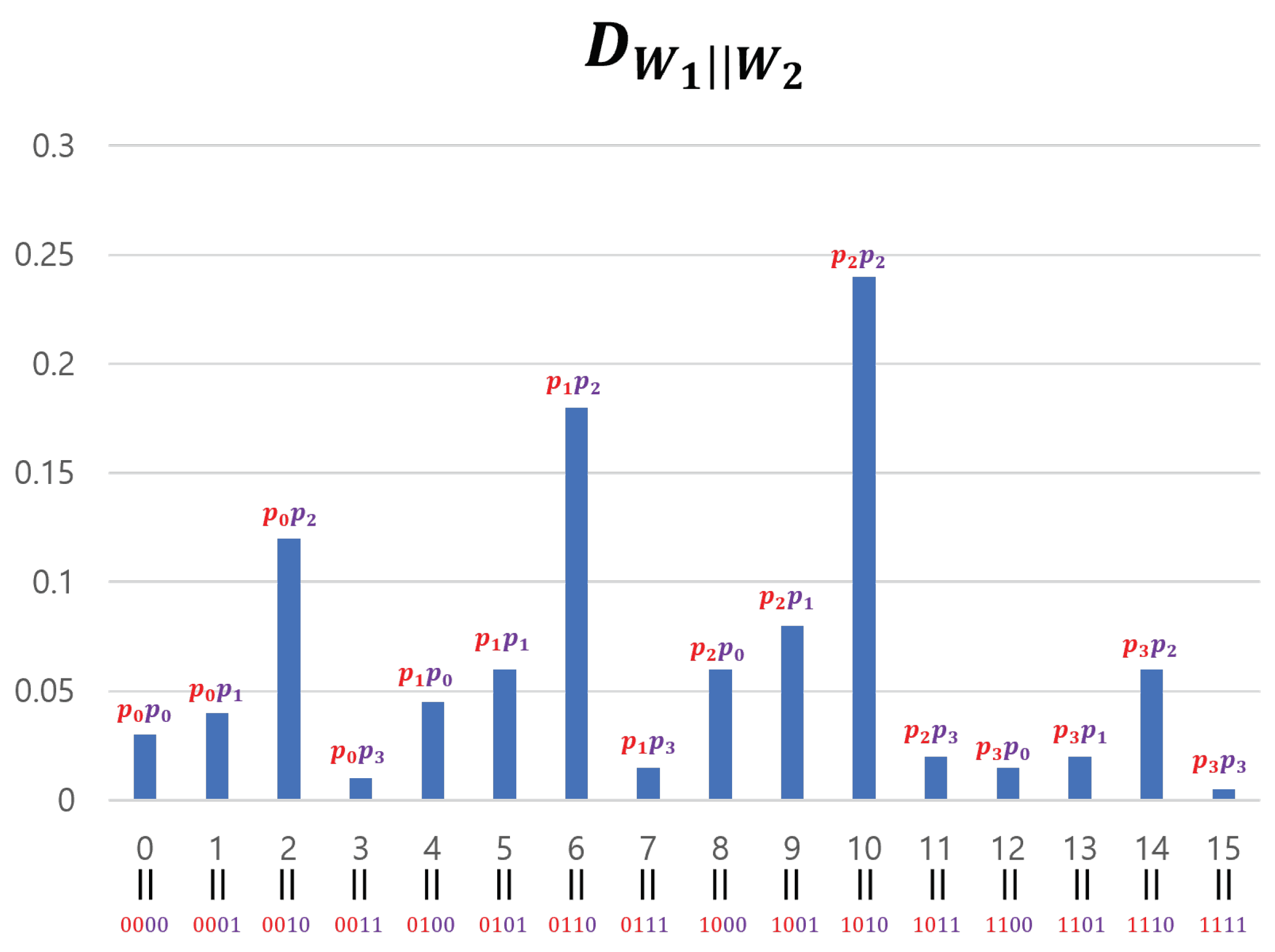

From the above calculations, we derive two features. First, to calculate one function value of

, we must sum

terms. That is, to calculate

the first

terms

could be any value and the last term

is automatically determined by equation

. Because there is

choices respectively, the total terms would be

; however, it is difficult to calculate. Second, as

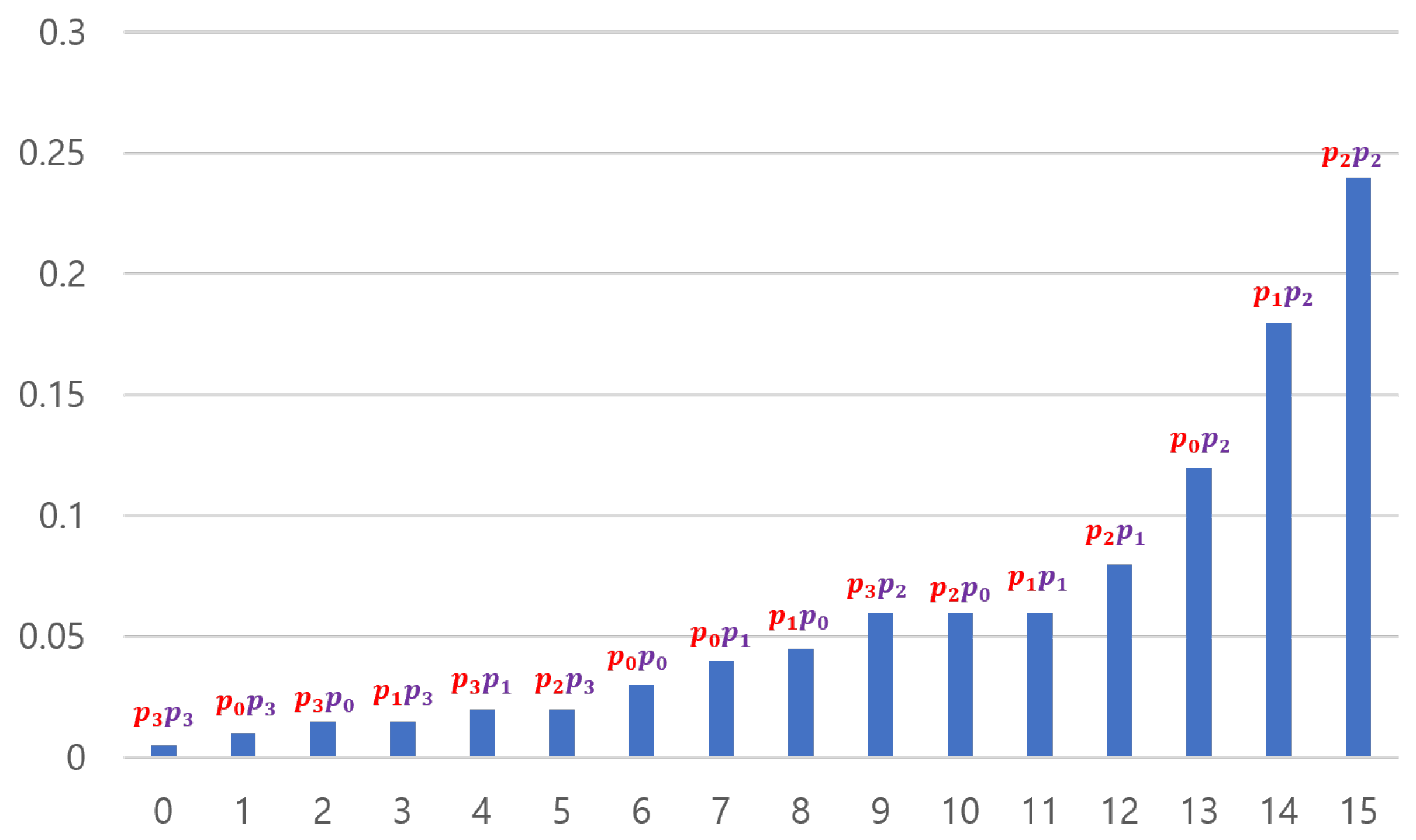

l grows,

tends to uniform distribution. The distance between original distribution

D and the uniform distribution

I with respective to infinite norm

of above example is

. However, we can observe that

is

. As

l grows, the terms that should be computed to calculate the function value grow rapidly; consequently, the impact of one function value will decrease. Although this phenomenon seems natural, still the following questions are remain: Under what conditions does this convergence happen? How about the convergence rate? How can we prove the related results?
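The counting argument and the observed convergence can be reproduced with a brute-force Python sketch. It assumes that elements of $\mathbb{Z}_2^n$ are encoded as integers $0, \dots, 2^n - 1$, so that XOR is Python's `^`; the distribution `D` and the function name are hypothetical and are not the example used in the text. The loop prints the number of terms per function value, $2^{n(l-1)}$, and the infinity-norm distance to the uniform distribution.

```python
import itertools
from functools import reduce

def xor_dist_bruteforce(dist, n, l):
    """Distribution of X_1 ^ ... ^ X_l for IID X_i ~ dist, by direct summation.

    For each output x, the inputs x_1..x_{l-1} range freely over Z_2^n and the
    last input is forced to x ^ x_1 ^ ... ^ x_{l-1}, so each output value is a
    sum of 2^(n*(l-1)) products. Returns (distribution, terms per value).
    """
    size = 1 << n
    out = [0.0] * size
    terms = 0
    for x in range(size):
        terms = 0
        for prefix in itertools.product(range(size), repeat=l - 1):
            last = reduce(lambda a, b: a ^ b, prefix, x)  # forced last input
            prob = dist[last]
            for xi in prefix:
                prob *= dist[xi]
            out[x] += prob
            terms += 1
    return out, terms

D = [0.4, 0.3, 0.2, 0.1]  # hypothetical distribution on Z_2^2
for l in range(1, 6):
    S, terms = xor_dist_bruteforce(D, n=2, l=l)
    dist_to_uniform = max(abs(p - 1 / 4) for p in S)  # infinity norm
    print(f"l={l}: {terms} terms per value, ||D_S - I||_inf = {dist_to_uniform:.6f}")
```

The running time grows as $2^{n(l-1)}$ per output value, which is exactly the obstacle described above.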

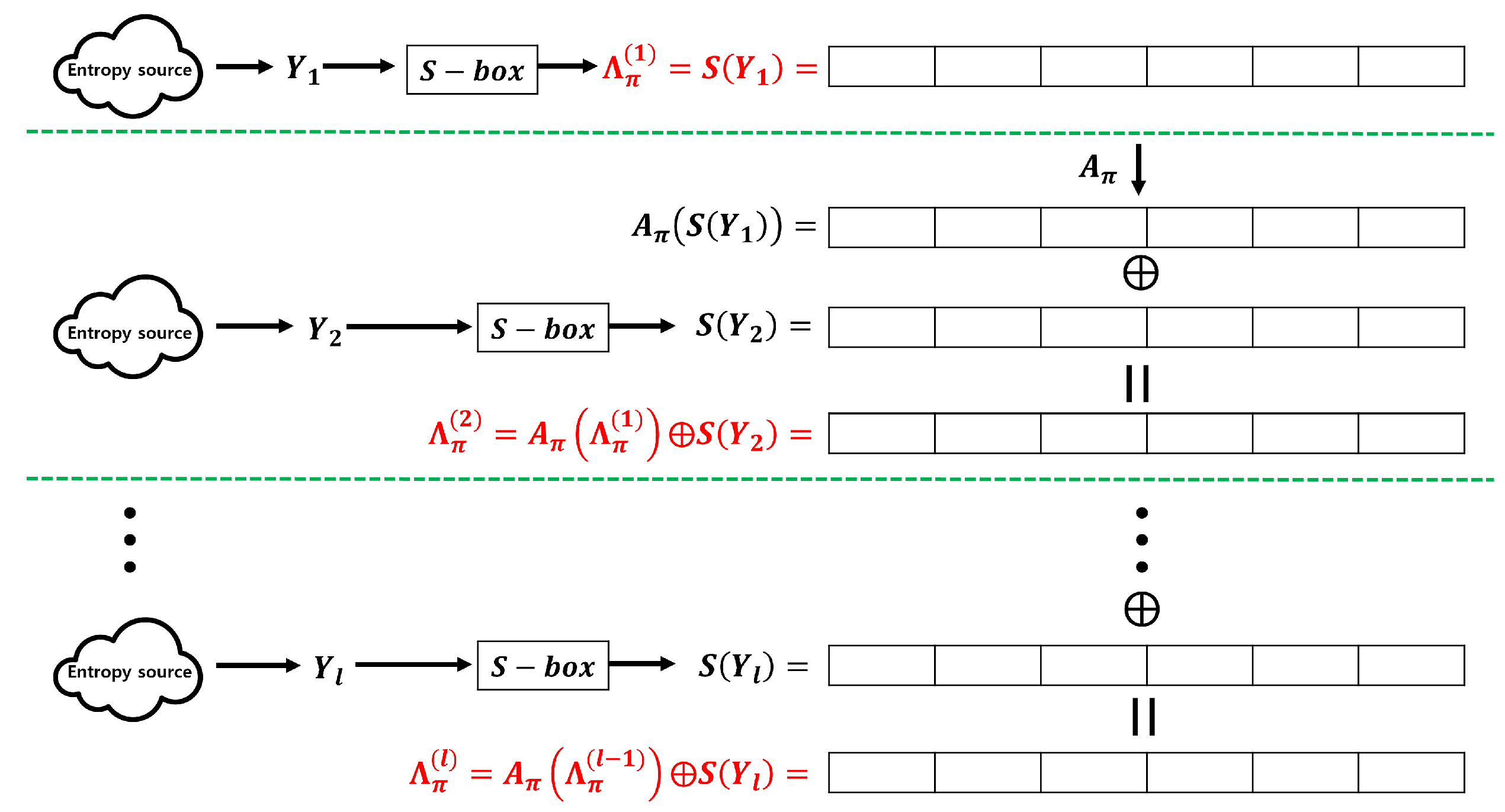

2.1. Entropy accumulation with $n = 1$

Let us consider the relatively simple case of $n = 1$ and $X_1, X_2, \dots$ following an independent and identical distribution (IID). In this case, all $X_i$ follow the same distribution $D$, and the recursive relationship $S_{j+1} = S_j \oplus X_{j+1}$ holds. Thus, we can express $D_{S_{j+1}}$ based on the following relationship:

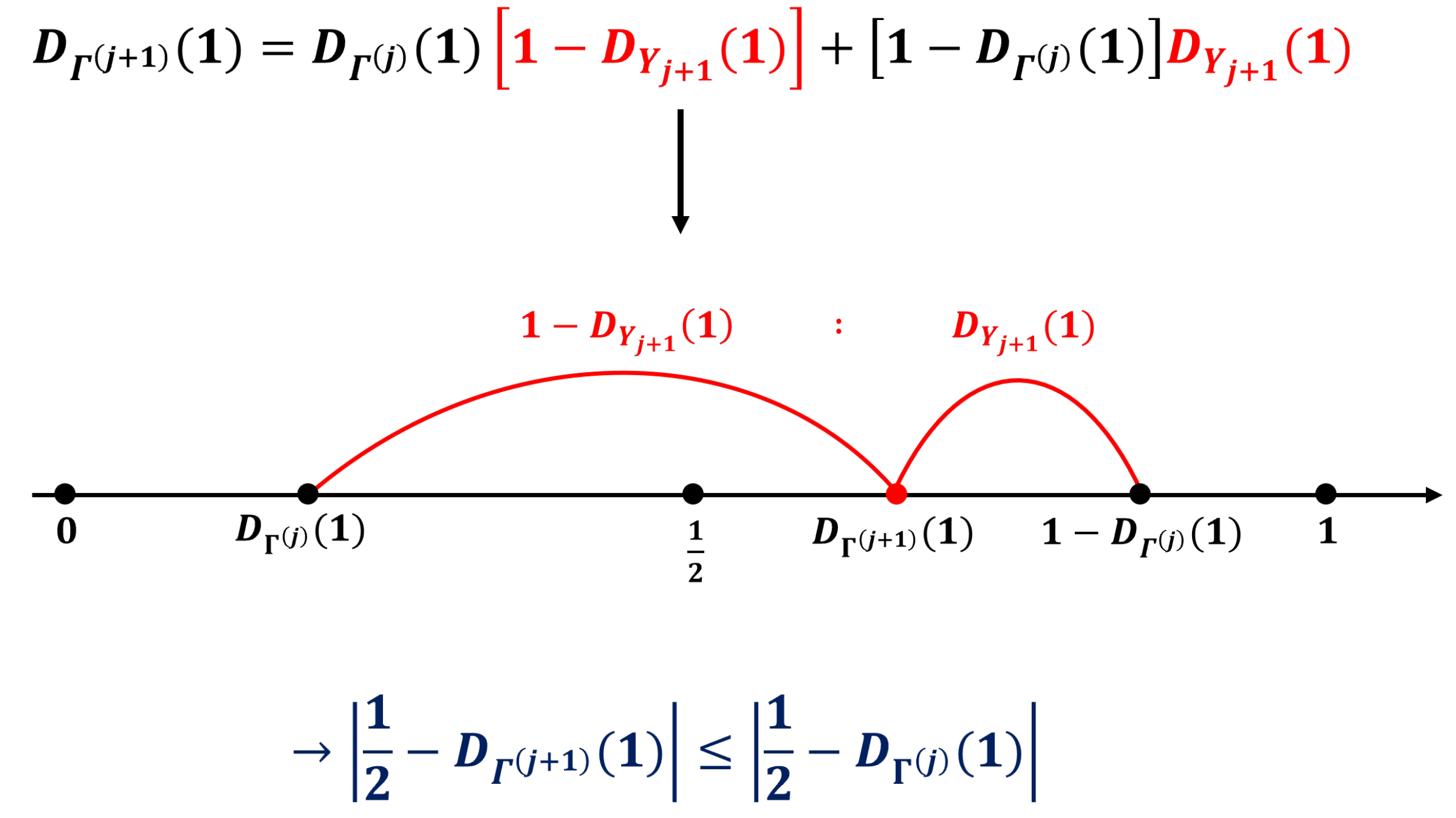

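(2) is derived from the property that the sum of two bits equals 1 exactly when the bits are 1 and 0, or 0 and 1, so that $D_{S_{j+1}}(1) = D(0)\,D_{S_j}(1) + D(1)\,D_{S_j}(0)$. Moreover, $D_{S_{j+1}}(1)$ in (2) can be interpreted as the point that internally divides the segment between $D_{S_j}(1)$ and $D_{S_j}(0)$ in the ratio $D(1) : D(0)$. Because $D_{S_j}(1)$ and $D_{S_j}(0) = 1 - D_{S_j}(1)$ are symmetric about $1/2$, we have $|D_{S_{j+1}}(1) - 1/2| = |1 - 2D(1)|\,|D_{S_j}(1) - 1/2|$. Hence, the condition $0 < D(1) < 1$ causes $D_{S_j}(1)$ to converge to $1/2$ (i.e., the maximum possible entropy) as $j$ increases.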
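Assuming (2) has the form $q_{j+1} = q_j(1 - p) + (1 - q_j)p$, with $q_j = \Pr[X_1 \oplus \cdots \oplus X_j = 1]$ and $p = \Pr[X_i = 1]$ (notation introduced here for illustration), the recursion can be iterated directly. Subtracting $1/2$ from both sides gives $q_{j+1} - 1/2 = (1 - 2p)(q_j - 1/2)$, so the distance to $1/2$ shrinks geometrically when $0 < p < 1$. A minimal Python sketch with a hypothetical function name:

```python
def xor_bias_sequence(p, steps):
    """Return [q_1, ..., q_steps] with q_j = Pr[X_1 ^ ... ^ X_j = 1].

    The X_i are IID bits with Pr[X_i = 1] = p, and q_{j+1} follows the
    (assumed) recursion q_{j+1} = q_j (1 - p) + (1 - q_j) p.
    """
    q = p  # q_1 = Pr[X_1 = 1]
    seq = [q]
    for _ in range(steps - 1):
        q = q * (1 - p) + (1 - q) * p  # a 1 arises as 1 ^ 0 or 0 ^ 1
        seq.append(q)
    return seq

for j, q in enumerate(xor_bias_sequence(0.9, 8), start=1):
    # |q_j - 1/2| = |1 - 2p|^(j-1) * |p - 1/2|, here 0.4 * 0.8^(j-1)
    print(j, round(q, 6), round(abs(q - 0.5), 6))
```

With $p = 0.9$ the deviation from $1/2$ is multiplied by $0.8$ at each step; with $p = 1$ the sequence alternates between 1 and 0 and never converges, which is why the endpoints are excluded.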

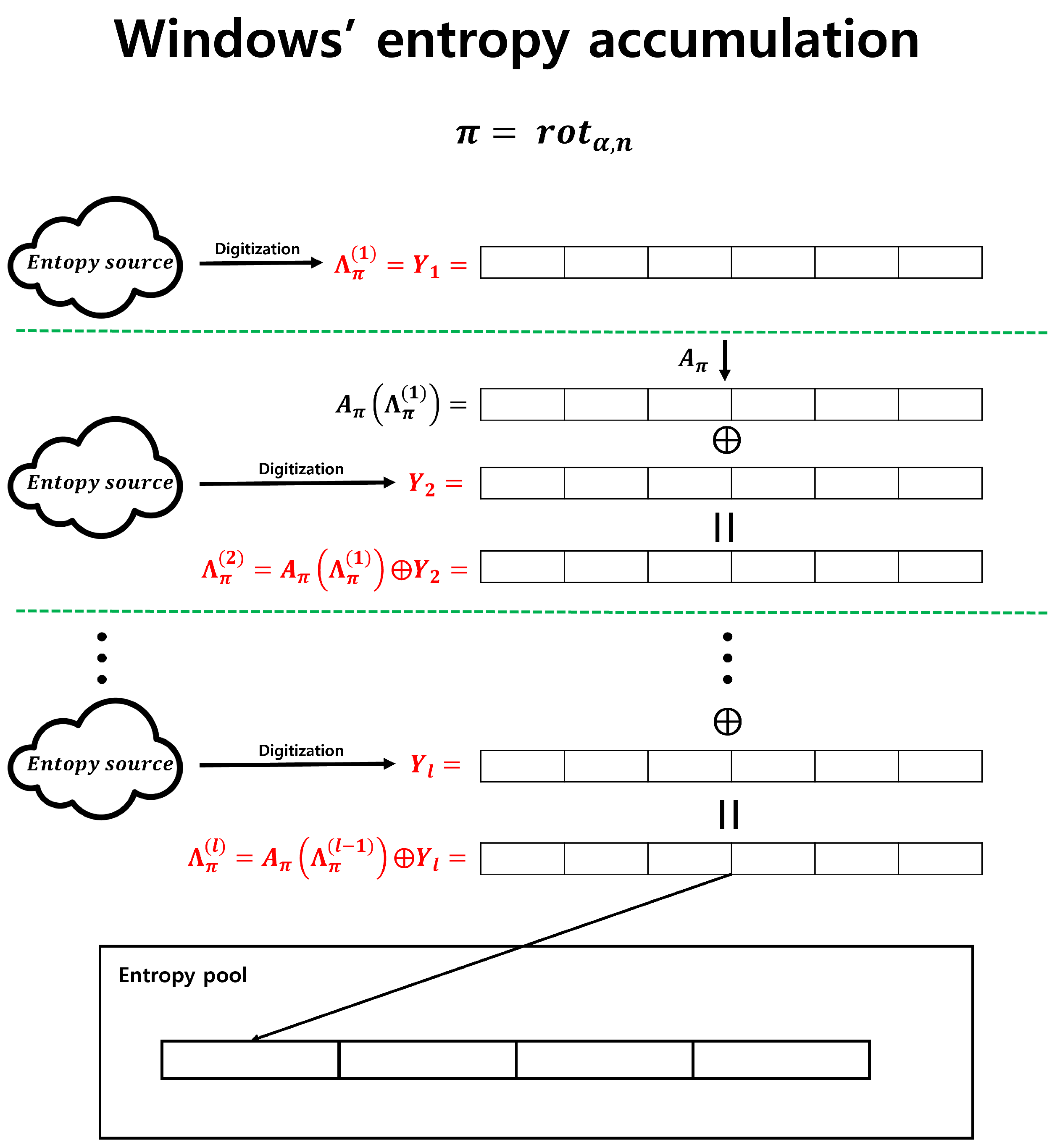

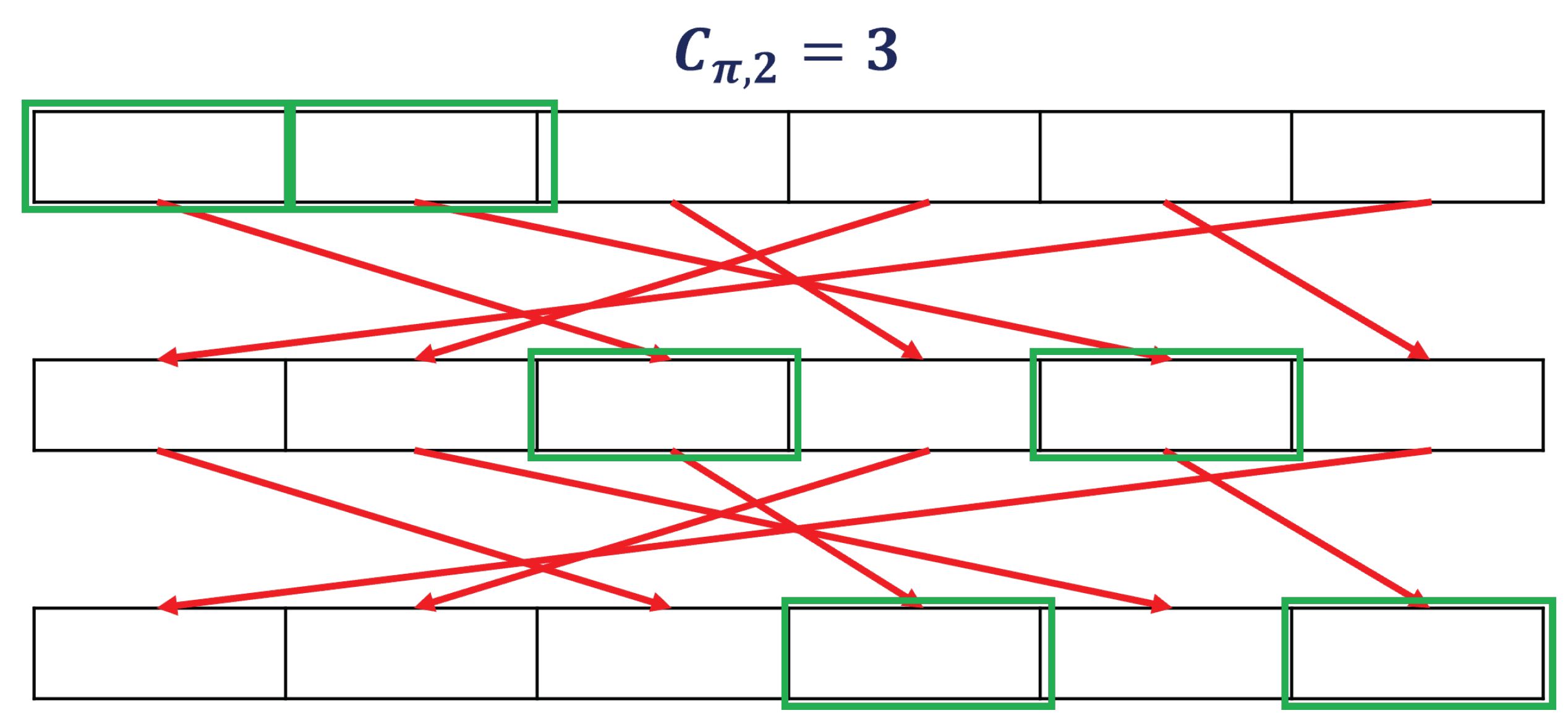

Figure 5 illustrates the scenario.

A more specific formula describes this situation precisely. The following lemma, often referred to as the "Piling Up Lemma," gives the details [5].

Fact 1 (Piling Up Lemma [5]).

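Let $X_1, \dots, X_l$ be independent one-bit random variables, and let $\epsilon_j = \Pr[X_j = 0] - \frac{1}{2}$. Then, the following equation holds:

$$\Pr[X_1 \oplus \cdots \oplus X_l = 0] = \frac{1}{2} + 2^{l-1} \prod_{j=1}^{l} \epsilon_j.$$

If $|\epsilon_j| \le \epsilon$ for each $j$ and some fixed $\epsilon < \frac{1}{2}$, then $\left|2^{l-1} \prod_{j=1}^{l} \epsilon_j\right| \le \frac{1}{2}(2\epsilon)^l$ converges to 0, and hence $\Pr[X_1 \oplus \cdots \oplus X_l = 0]$ converges to $\frac{1}{2}$ as $l$ approaches infinity.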
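The lemma is easy to check in exact arithmetic. The Python sketch below (helper names hypothetical) compares the closed form $\frac{1}{2} + 2^{l-1}\prod_j \epsilon_j$, with $\epsilon_j = \Pr[X_j = 0] - \frac{1}{2}$, against folding independent bits one at a time, using the standard-library `fractions.Fraction` and `math.prod` (Python 3.8+) so that no rounding is involved. The biases are hypothetical and deliberately non-identical.

```python
from fractions import Fraction
from math import prod

def xor_zero_prob_exact(probs_zero):
    """Pr[X_1 ^ ... ^ X_l = 0] for independent bits, folding one bit at a time."""
    p0 = Fraction(1)  # the empty XOR equals 0 with probability 1
    for a in probs_zero:
        p0 = p0 * a + (1 - p0) * (1 - a)  # 0 arises as 0^0 or 1^1
    return p0

def piling_up(probs_zero):
    """Piling Up Lemma: 1/2 + 2^(l-1) * prod_j eps_j, with eps_j = Pr[X_j = 0] - 1/2."""
    eps = [a - Fraction(1, 2) for a in probs_zero]
    return Fraction(1, 2) + Fraction(2) ** (len(eps) - 1) * prod(eps)

# Hypothetical, non-identical values of Pr[X_j = 0].
biases = [Fraction(9, 10), Fraction(3, 5), Fraction(1, 4)]
print(xor_zero_prob_exact(biases), piling_up(biases))  # both 23/50

# For identical bits the deviation from 1/2 is (2 * eps)^l / 2.
for l in (1, 5, 10, 20):
    bits = [Fraction(9, 10)] * l
    print(l, float(piling_up(bits) - Fraction(1, 2)))
```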

This equation cannot be applied when $n \ge 2$. In that case, the probability that each individual bit is 0 or 1 still converges to $1/2$; however, we cannot sum the min-entropies of the individual positions to obtain the total min-entropy, because this is valid only when all bits are independent of each other. Therefore, a new method is required to address this problem.
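A small counterexample shows why per-bit convergence is not enough. The Python sketch below uses a hypothetical source on $n = 2$ bits that outputs `00` or `11` with equal probability (integer encoding of $\mathbb{Z}_2^2$ assumed; helper names hypothetical). Each bit is individually uniform, yet XOR-accumulating any number of samples never leaves the set $\{00, 11\}$, so the min-entropy stays at 1 bit rather than 2.

```python
import math

def bit_marginals(dist, n):
    """Pr[bit i of X = 1] for each position i (x encoded as an integer)."""
    return [sum(p for x, p in enumerate(dist) if (x >> i) & 1) for i in range(n)]

def xor_convolve(f, g):
    """(f * g)(x) = sum_y f(y) g(x ^ y): the distribution of X ^ Y."""
    return [sum(f[y] * g[x ^ y] for y in range(len(f))) for x in range(len(f))]

# Hypothetical source: emits 00 or 11 with equal probability, so the two bits
# are individually fair coins but always equal to each other.
D = [0.5, 0.0, 0.0, 0.5]
S = D
for _ in range(9):  # S becomes the distribution of X_1 ^ ... ^ X_10
    S = xor_convolve(S, D)

print(bit_marginals(S, 2))   # each bit is 1 with probability 1/2
print(-math.log2(max(S)))    # min-entropy is 1 bit, not n = 2
```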

2.2. Convolution and Discrete Fourier Transform

In this subsection, we describe techniques that apply not only to the special case $n = 1$ but also to more general cases. First, we reformulate the problem using the concept of convolution.

Definition 1 (Convolution).

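The convolution of $f, g \in L(\mathbb{Z}_2^n)$ is defined as follows:

$$(f * g)(x) = \sum_{y \in \mathbb{Z}_2^n} f(y)\, g(x + y), \quad x \in \mathbb{Z}_2^n.$$

Note that $x + y = x - y$ in $\mathbb{Z}_2^n$.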

For the entropy accumulation problem of interest, $D_{X \oplus Y} = D_X * D_Y$ whenever $X$ and $Y$ are independent. Using the language of convolution, (1) becomes $D_{S_l} = D_{X_1} * D_{X_2} * \cdots * D_{X_l}$. The entropy accumulation problem thus reduces to handling this convolution. Fortunately, there exists a mathematical tool, the Fourier transform, that interacts well with convolution.
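Under the integer encoding of $\mathbb{Z}_2^n$ (an assumption made for illustration), the convolution is a short function. The Python sketch below, with hypothetical helper names and distributions, checks that it agrees with the distribution of $X \oplus Y$ computed directly from the joint probability table of independent $X$ and $Y$, and folds it over several inputs.

```python
def xor_convolve(f, g):
    """(f * g)(x) = sum_{y in Z_2^n} f(y) g(x + y), where '+' on Z_2^n is XOR."""
    size = len(f)
    return [sum(f[y] * g[x ^ y] for y in range(size)) for x in range(size)]

def xor_dist_joint(f, g):
    """Distribution of X ^ Y for independent X ~ f, Y ~ g, from the joint table."""
    out = [0.0] * len(f)
    for a, pa in enumerate(f):
        for b, pb in enumerate(g):
            out[a ^ b] += pa * pb  # independence: Pr[X = a, Y = b] = f(a) g(b)
    return out

def xor_accumulate(dists):
    """D_{X_1} * ... * D_{X_l} for independent X_i."""
    acc = dists[0]
    for d in dists[1:]:
        acc = xor_convolve(acc, d)
    return acc

f = [0.4, 0.3, 0.2, 0.1]  # hypothetical distributions on Z_2^2
g = [0.1, 0.6, 0.1, 0.2]
print(xor_convolve(f, g))
print(xor_dist_joint(f, g))  # same values up to rounding
print(xor_accumulate([f, f, f]))
```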

Definition 2 (Discrete Fourier Transform).

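The Discrete Fourier Transform of $f \in L(\mathbb{Z}_2^n)$ is defined as:

$$\hat{f}(t) = \sum_{x \in \mathbb{Z}_2^n} f(x)\, (-1)^{t \cdot x}, \quad t \in \mathbb{Z}_2^n,$$

where $t \cdot x = \sum_{i=1}^{n} t_i x_i \bmod 2$.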

The Discrete Fourier Transform is a mapping from $L(\mathbb{Z}_2^n)$ to $L(\mathbb{Z}_2^n)$. In fact, this mapping is one-to-one, as Proposition 1 shows.
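Assuming the transform uses the characters $x \mapsto (-1)^{t \cdot x}$, which are the standard characters of $\mathbb{Z}_2^n$, the DFT on $\mathbb{Z}_2^n$ coincides with the unnormalized Walsh-Hadamard transform. It can be computed with about $n\,2^n$ additions, instead of the $4^n$ operations of direct evaluation, by a butterfly recursion over the coordinates. A minimal Python sketch with hypothetical function names:

```python
def dft_z2n(f):
    """DFT on Z_2^n: f_hat(t) = sum_x f(x) (-1)^(t.x), computed in O(n 2^n).

    This is the unnormalized fast Walsh-Hadamard transform; len(f) must be a
    power of two, and index bits encode the coordinates of x and t.
    """
    a = list(f)
    size = len(a)
    if size & (size - 1):
        raise ValueError("length must be a power of two")
    h = 1
    while h < size:
        for start in range(0, size, 2 * h):
            for i in range(start, start + h):
                u, v = a[i], a[i + h]
                a[i], a[i + h] = u + v, u - v  # butterfly on one coordinate
        h *= 2
    return a

def dft_naive(f):
    """Direct evaluation of the definition, for comparison."""
    size = len(f)
    return [sum(f[x] * (-1) ** bin(t & x).count("1") for x in range(size))
            for t in range(size)]

D = [0.4, 0.3, 0.2, 0.1]  # hypothetical distribution on Z_2^2
print(dft_z2n(D))         # equals dft_naive(D) up to rounding
```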

Lemma 1. If $t \neq 0$, then $\sum_{x \in \mathbb{Z}_2^n} (-1)^{t \cdot x} = 0$.

Proof.

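We define $\chi_t : \mathbb{Z}_2^n \to \{1, -1\}$ as:

$$\chi_t(x) = (-1)^{t \cdot x}.$$

Then, $\chi_t$ is a homomorphism from the additive group $\mathbb{Z}_2^n$ to the multiplicative group $\{1, -1\}$:

$$\chi_t(x + y) = (-1)^{t \cdot x + t \cdot y} = \chi_t(x)\,\chi_t(y).$$

As $t \neq 0$, some coordinate $t_i$ equals 1, so $\chi_t(e_i) = -1$ for the $i$-th standard basis vector $e_i$; hence $\chi_t$ is surjective. Therefore, for every $\omega \in \{1, -1\}$, the preimage $\chi_t^{-1}(\omega)$, which is a coset of the kernel of $\chi_t$, contains the same number of elements. Let this number be $N$; then

$$\sum_{x \in \mathbb{Z}_2^n} (-1)^{t \cdot x} = \sum_{\omega \in \{1, -1\}} N \omega = N\bigl(1 + (-1)\bigr) = 0.$$

The last equality holds because $1$ and $-1$ are the roots of the complex equation $z^2 = 1$, whose sum is 0. □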
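The counting in this proof can be checked exhaustively for small $n$. In the Python sketch below (integer encoding of $\mathbb{Z}_2^n$ assumed; helper names hypothetical), `fiber_sizes` counts the two preimages of the map $x \mapsto (-1)^{t \cdot x}$, which have equal size $2^{n-1}$ whenever $t \neq 0$, and `character_sum` evaluates the sum in the lemma.

```python
def parity(v):
    """t.x mod 2 for integer-encoded vectors: parity of the set bits of t & x."""
    return bin(v).count("1") & 1

def character_sum(t, n):
    """sum_{x in Z_2^n} (-1)^(t.x)."""
    return sum((-1) ** parity(t & x) for x in range(1 << n))

def fiber_sizes(t, n):
    """Sizes of the preimages of +1 and -1 under x -> (-1)^(t.x)."""
    plus = sum(1 for x in range(1 << n) if parity(t & x) == 0)
    return plus, (1 << n) - plus

n = 4
for t in range(1 << n):
    # the sum is 2^n at t = 0 and 0 for every t != 0
    print(t, fiber_sizes(t, n), character_sum(t, n))
```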

Lemma 2.

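Let $f$ be an element of $L(\mathbb{Z}_2^n)$ and $\hat{f}$ be its Fourier transform. Then, the following holds for every $x \in \mathbb{Z}_2^n$:

$$f(x) = \frac{1}{2^n} \sum_{t \in \mathbb{Z}_2^n} \hat{f}(t)\, (-1)^{t \cdot x}.$$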

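Proof. By Lemma 1 (with the roles of $t$ and $x$ exchanged, since $t \cdot z = z \cdot t$),

$$\sum_{t \in \mathbb{Z}_2^n} (-1)^{t \cdot (x + y)} = 0$$

if $x \neq y$, and the sum equals $2^n$ if $x = y$. Therefore,

$$\frac{1}{2^n} \sum_{t} \hat{f}(t)\, (-1)^{t \cdot x} = \frac{1}{2^n} \sum_{y} f(y) \sum_{t} (-1)^{t \cdot (x + y)} = f(x).$$

□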
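Taking this lemma to be the inversion formula $f(x) = 2^{-n}\sum_t \hat f(t)(-1)^{t \cdot x}$, a round trip through the transform and back should return any complex-valued function unchanged. The Python sketch below checks this by direct evaluation; the function names and the test values of `f` are hypothetical.

```python
def dft_z2n(f):
    """f_hat(t) = sum_x f(x) (-1)^(t.x) on Z_2^n, by direct evaluation."""
    size = len(f)
    return [sum(f[x] * (-1) ** (bin(t & x).count("1") & 1) for x in range(size))
            for t in range(size)]

def inverse_dft_z2n(f_hat):
    """Inversion formula f(x) = 2^{-n} sum_t f_hat(t) (-1)^(t.x)."""
    size = len(f_hat)
    return [sum(f_hat[t] * (-1) ** (bin(t & x).count("1") & 1) for t in range(size)) / size
            for x in range(size)]

# An arbitrary complex-valued function on Z_2^3 (hypothetical values).
f = [3.0, -1.5, 2j, 0.25, 1.0, 0.0, -2.0, 4.5]
g = inverse_dft_z2n(dft_z2n(f))
print(max(abs(a - b) for a, b in zip(f, g)))  # zero up to floating-point rounding
```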

Proposition 1. Let $f$ and $g$ be elements of $L(\mathbb{Z}_2^n)$. If $\hat{f} = \hat{g}$, then $f = g$.

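Proof. Assume that $\hat{f} = \hat{g}$. Then, by Lemma 2,

$$f(x) = \frac{1}{2^n} \sum_{t} \hat{f}(t)\, (-1)^{t \cdot x} = \frac{1}{2^n} \sum_{t} \hat{g}(t)\, (-1)^{t \cdot x} = g(x)$$

holds for every $x \in \mathbb{Z}_2^n$. □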

The following proposition asserts that the convolution of functions corresponds to pointwise multiplication in the transformed space. This plays a significant role in proving our main theorem.

Proposition 2. Let $f$ and $g$ be elements of $L(\mathbb{Z}_2^n)$. Then, $\widehat{f * g} = \hat{f} \cdot \hat{g}$, where the product is taken pointwise.

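Proof. By the definitions of convolution and the Discrete Fourier Transform, and because $t \cdot x = t \cdot y + t \cdot (x + y)$ in $\mathbb{Z}_2$,

$$\widehat{f * g}(t) = \sum_{x} \sum_{y} f(y)\, g(x + y)\, (-1)^{t \cdot x} = \sum_{y} f(y)\, (-1)^{t \cdot y} \sum_{x} g(x + y)\, (-1)^{t \cdot (x + y)} = \hat{f}(t)\, \hat{g}(t),$$

where the last step uses that $x + y$ ranges over all of $\mathbb{Z}_2^n$ as $x$ does. □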
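The identity can be spot-checked numerically on random functions. The Python sketch below (hypothetical helper names, integer encoding of $\mathbb{Z}_2^n$ assumed) transforms a convolution and compares it with the pointwise product of the individual transforms.

```python
import random

def xor_convolve(f, g):
    """(f * g)(x) = sum_y f(y) g(x ^ y) on Z_2^n."""
    return [sum(f[y] * g[x ^ y] for y in range(len(f))) for x in range(len(f))]

def dft_z2n(f):
    """f_hat(t) = sum_x f(x) (-1)^(t.x), by direct evaluation."""
    size = len(f)
    return [sum(f[x] * (-1) ** (bin(t & x).count("1") & 1) for x in range(size))
            for t in range(size)]

rng = random.Random(0)
n = 3
f = [rng.random() for _ in range(1 << n)]
g = [rng.random() for _ in range(1 << n)]
lhs = dft_z2n(xor_convolve(f, g))                  # transform of the convolution
rhs = [a * b for a, b in zip(dft_z2n(f), dft_z2n(g))]  # pointwise product
print(max(abs(a - b) for a, b in zip(lhs, rhs)))   # zero up to rounding
```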

To see intuitively why $D_{S_l}$ converges to a uniform distribution, we need two facts: the properties of the Discrete Fourier Transform of a probability distribution, and the Discrete Fourier Transform of the uniform distribution.

Proposition 3. Let $D$ be an arbitrary probability distribution and $I$ be the uniform distribution on $\mathbb{Z}_2^n$. Then,

- (i) $\hat{D}(0) = 1$.
- (ii) For all $t \in \mathbb{Z}_2^n$, $|\hat{D}(t)| \le 1$.
- (iii) For all $t \in \mathbb{Z}_2^n$, $\hat{I}(t) = \delta_t$.

Here, $\delta_t$ denotes the Kronecker delta, which is 1 when $t$ is the zero vector and 0 for all other $t$.

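Proof. Proof of (i): $\hat{D}(0) = \sum_{x} D(x)\, (-1)^{0} = \sum_{x} D(x) = 1$.

Proof of (ii): $|\hat{D}(t)| \le \sum_{x} D(x) \left|(-1)^{t \cdot x}\right| = \sum_{x} D(x) = 1$.

Proof of (iii): We know from part (i) that $\hat{I}(0) = 1$. For the remaining $t \neq 0$,

$$\hat{I}(t) = \sum_{x} \frac{1}{2^n} (-1)^{t \cdot x} = \frac{1}{2^n} \sum_{x} (-1)^{t \cdot x} = 0.$$

The last equality is based on Lemma 1. □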
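Assuming the three parts of this proposition state that $\hat D(0) = 1$, $|\hat D(t)| \le 1$ for all $t$, and $\hat I(t) = \delta_t$, they are easy to confirm numerically. The Python sketch below checks them on a few random distributions on $\mathbb{Z}_2^3$; the helper names and the random inputs are hypothetical.

```python
import random

def dft_z2n(f):
    """f_hat(t) = sum_x f(x) (-1)^(t.x), by direct evaluation."""
    size = len(f)
    return [sum(f[x] * (-1) ** (bin(t & x).count("1") & 1) for x in range(size))
            for t in range(size)]

def random_distribution(size, rng):
    """A random probability vector (hypothetical test input)."""
    w = [rng.random() for _ in range(size)]
    s = sum(w)
    return [v / s for v in w]

rng = random.Random(1)
n = 3
for _ in range(5):
    D_hat = dft_z2n(random_distribution(1 << n, rng))
    assert abs(D_hat[0] - 1) < 1e-12                # (i): D_hat(0) = 1
    assert all(abs(v) <= 1 + 1e-12 for v in D_hat)  # (ii): |D_hat(t)| <= 1

I_hat = dft_z2n([1 / (1 << n)] * (1 << n))
print(I_hat)  # (iii): 1.0 at t = 0 and 0.0 elsewhere (Kronecker delta)
```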

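From Proposition 2, we have $\widehat{D_{S_l}} = \prod_{i=1}^{l} \widehat{D_{X_i}}$. By Proposition 3, $\widehat{D_{S_l}}(0) = 1$, whereas for $t \neq 0$ the value of $\widehat{D_{S_l}}(t)$ approaches 0 as $l$ increases, provided that $|\widehat{D_{X_i}}(t)|$ stays bounded away from 1. Specifically, $\widehat{D_{S_l}}(t)$ converges to $\delta_t = \hat{I}(t)$ as $l$ increases. Since the Discrete Fourier Transform is one-to-one by Proposition 1, with the explicit inverse given by Lemma 2, we can infer that $D_{S_l}$ converges to $I$ as $l$ increases.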
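The Fourier argument also yields a practical way to compute the accumulated distribution and a simple rate. For IID inputs with distribution $D$, the inversion formula gives $|\Pr[X_1 \oplus \cdots \oplus X_l = x] - 2^{-n}| \le 2^{-n}\sum_{t \neq 0} |\hat D(t)|^l \le (1 - 2^{-n})\lambda^l$, where $\lambda = \max_{t \neq 0} |\hat D(t)|$ is a symbol introduced here. The Python sketch below (hypothetical names and distribution) computes the accumulated distribution as a pointwise power in the transformed domain and compares the observed gap with this bound.

```python
def fwht(a):
    """Unnormalized Walsh-Hadamard transform: a_hat(t) = sum_x a(x) (-1)^(t.x)."""
    a = list(a)
    h = 1
    while h < len(a):
        for start in range(0, len(a), 2 * h):
            for i in range(start, start + h):
                a[i], a[i + h] = a[i] + a[i + h], a[i] - a[i + h]
        h *= 2
    return a

def accumulate_iid(D, l):
    """Distribution of the XOR of l IID samples of D, via the Fourier domain."""
    size = len(D)
    S_hat = [v ** l for v in fwht(D)]       # l-fold convolution -> pointwise power
    return [v / size for v in fwht(S_hat)]  # inverse transform = forward / 2^n

D = [0.4, 0.3, 0.2, 0.1]                    # hypothetical, n = 2
lam = max(abs(v) for v in fwht(D)[1:])      # largest |D_hat(t)| over t != 0
for l in (1, 2, 4, 8, 16):
    S = accumulate_iid(D, l)
    gap = max(abs(p - 1 / len(D)) for p in S)
    bound = (1 - 1 / len(D)) * lam ** l     # (1 - 2^{-n}) * lam^l
    print(l, gap, bound)
```

When $\lambda = 1$ (for instance, a source supported on $\{00, 11\}$), the bound gives nothing, and indeed the accumulated distribution then does not converge to $I$.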

2.3. Main Theorem

In the previous subsection, we confirmed that $D_{S_l}$ converges to the uniform distribution $I$ as $l$ increases. In this subsection, we present a solution to the entropy accumulation problem based on this approach. Specifically, we aim to find a condition on the random variables $X_i$ and a value of $l$ such that $S_l$ achieves a specified min-entropy. The following theorem is one of the main results of our study:

Theorem 1.

Let be independent random variables, and . We define . Then,

Note that Theorem 1 does not require the random variables to be IID. Because it requires only the independence of the random variables, not identical distribution, it can be applied effectively when using parallel entropy sources. We now provide the proof of Theorem 1.

Proof. For any function

, we have

This is obtained from Lemma 2 with

. Using the function

of Lemma 2, (3) and (4) can be written as

We apply (5) to each

with

. Because

and

,

The final approximation is based on Taylor's theorem. □
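The remark after Theorem 1, that only independence is needed, can be explored numerically for small $n$. The Python sketch below computes the exact min-entropy of the XOR of independent, possibly non-identical sources by multiplying their transforms (Proposition 2) and inverting, and searches for the smallest $l$ at which an IID source reaches a target min-entropy. This is an exact computation, not the bound of Theorem 1; the source distributions and function names are hypothetical.

```python
import math

def fwht(a):
    """Unnormalized Walsh-Hadamard transform: a_hat(t) = sum_x a(x) (-1)^(t.x)."""
    a = list(a)
    h = 1
    while h < len(a):
        for start in range(0, len(a), 2 * h):
            for i in range(start, start + h):
                a[i], a[i + h] = a[i] + a[i + h], a[i] - a[i + h]
        h *= 2
    return a

def xor_min_entropy(dists):
    """Exact H_inf(X_1 ^ ... ^ X_l) for independent, not necessarily identical X_i."""
    size = len(dists[0])
    prod_hat = [1.0] * size
    for d in dists:  # one pointwise factor per source (convolution theorem)
        prod_hat = [p * v for p, v in zip(prod_hat, fwht(d))]
    S = [v / size for v in fwht(prod_hat)]  # inverse transform
    return -math.log2(max(S))

def smallest_l(D, target, max_l=10_000):
    """Smallest l with H_inf(XOR of l IID copies of D) >= target, else None.

    Use target < n: rounding makes the exact value n unreliable.
    """
    size = len(D)
    D_hat = fwht(D)
    prod_hat = [1.0] * size
    for l in range(1, max_l + 1):
        prod_hat = [p * v for p, v in zip(prod_hat, D_hat)]
        S = [v / size for v in fwht(prod_hat)]
        if -math.log2(max(S)) >= target:
            return l
    return None

# Hypothetical parallel sources on Z_2^2 with different distributions.
sources = [[0.4, 0.3, 0.2, 0.1], [0.7, 0.1, 0.1, 0.1], [0.25, 0.25, 0.4, 0.1]]
for l in range(1, 4):
    print(l, xor_min_entropy(sources[:l]))
print(smallest_l([0.4, 0.3, 0.2, 0.1], target=1.99))  # 6 for this example
```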