3. Results and Discussion

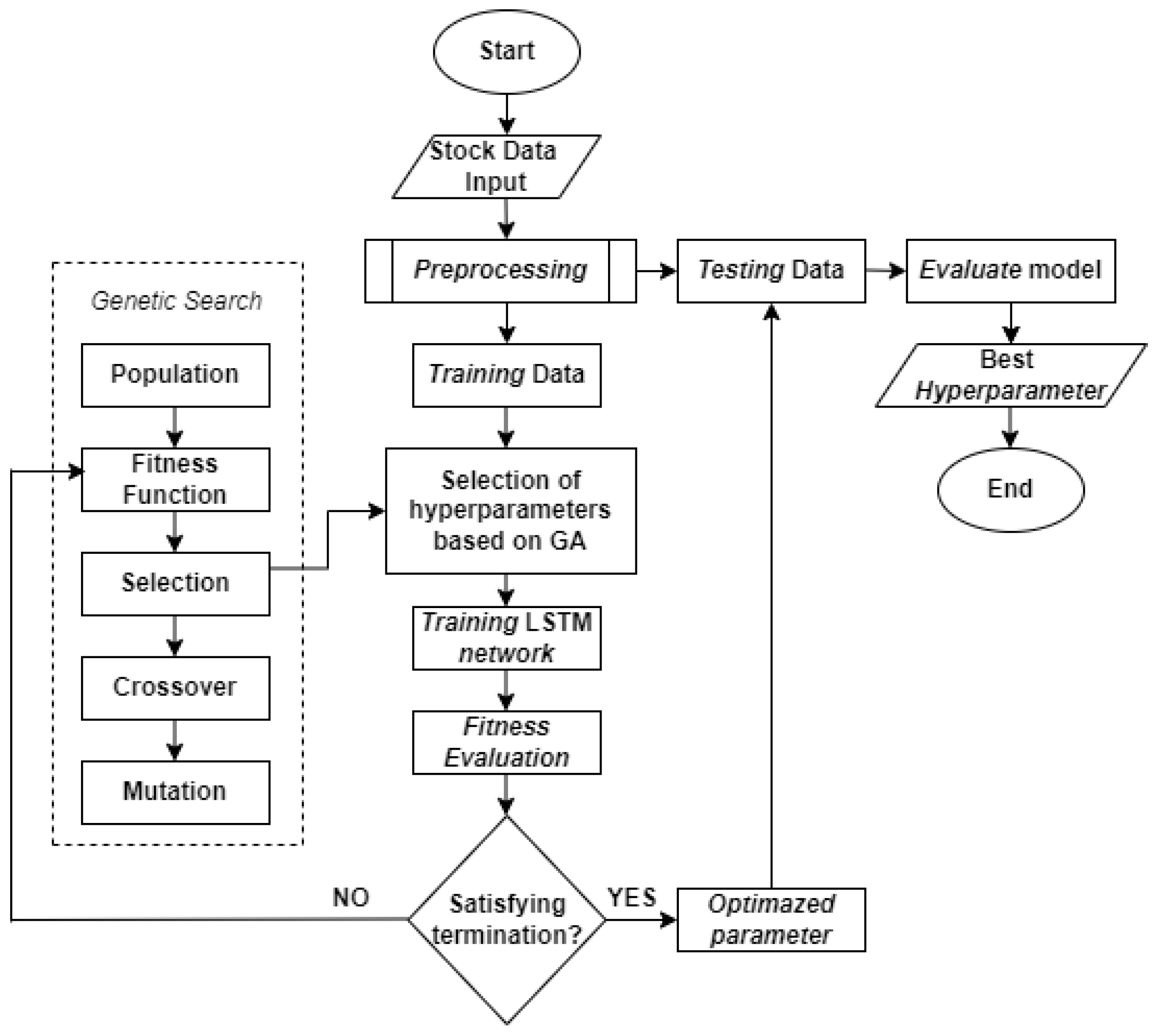

This research uses the GA-LSTM method, applying several different optimizers to find three hyperparameters: the number of epochs, the window size, and the number of LSTM units in the hidden layers. The study is limited to predicting the closing price. The result is the model whose hyperparameters give the lowest MAE and MAPE values on the training and testing data; that model can then be used to predict the stock's price.
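To make the role of the window size concrete, the following minimal Python sketch builds input windows from a series of closing prices. It assumes the common convention that a window of size w holds the w most recent closing prices and the target is the next price. The function name `make_windows` and the sample prices are hypothetical and are not taken from this study.

```python
from typing import List, Tuple


def make_windows(prices: List[float], window_size: int) -> Tuple[List[List[float]], List[float]]:
    """Split a price series into (input window, next value) pairs."""
    if window_size < 1:
        raise ValueError("window_size must be at least 1")
    inputs: List[List[float]] = []
    targets: List[float] = []
    # Each window of `window_size` consecutive prices predicts the price that follows it.
    for start in range(len(prices) - window_size):
        inputs.append(prices[start:start + window_size])
        targets.append(prices[start + window_size])
    return inputs, targets


# Hypothetical closing prices, for illustration only.
closing_prices = [100.0, 102.0, 101.0, 105.0, 107.0]
windows, next_prices = make_windows(closing_prices, window_size=2)
print(windows)      # [[100.0, 102.0], [102.0, 101.0], [101.0, 105.0]]
print(next_prices)  # [101.0, 105.0, 107.0]
```

Under this assumption, a larger window gives the model more history per sample but yields fewer training samples from the same series.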

Root Mean Square Error (RMSE) measures the difference between the estimated target and the actual target as the square root of the mean squared error (MSE). The higher the RMSE, the lower the accuracy; conversely, the lower the RMSE, the higher the accuracy [29]. The RMSE formula is shown in the following equation.

$$\mathrm{RMSE} = \sqrt{\frac{1}{n}\sum_{i=1}^{n}\left(y_i - \hat{y}_i\right)^2}$$

Description:

$y_i$ = actual value of the $i$-th observation

$\hat{y}_i$ = result forecast

$n$ = amount of data
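As an illustration of the RMSE definition above, the following is a minimal Python sketch using only the standard library. The function name `rmse` and the sample values are hypothetical; the study does not state which implementation it used.

```python
import math
from typing import Sequence


def rmse(actual: Sequence[float], forecast: Sequence[float]) -> float:
    """Root mean square error between actual and forecast values."""
    if len(actual) != len(forecast) or len(actual) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    # Square each error, average the squares, then take the square root.
    squared_errors = [(y - y_hat) ** 2 for y, y_hat in zip(actual, forecast)]
    return math.sqrt(sum(squared_errors) / len(actual))


# Hypothetical prices, for illustration only.
actual_prices = [100.0, 110.0, 120.0]
forecast_prices = [98.0, 112.0, 121.0]
print(round(rmse(actual_prices, forecast_prices), 4))  # 1.7321
```

Because RMSE is expressed in the same unit as the price, its magnitude depends on the price level of the stock, so RMSE values of different stocks are not directly comparable.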

Mean Absolute Percentage Error (MAPE) measures error as the average of the absolute errors divided by the true values, which gives the absolute percentage error of the model's predictions. The lower the MAPE, the better the prediction model [30]. The MAPE formula is shown in the following equation.

$$\mathrm{MAPE} = \frac{100\%}{n}\sum_{i=1}^{n}\left|\frac{y_i - \hat{y}_i}{y_i}\right|$$

Description:

$\hat{y}_i$ = value of forecast results

$y_i$ = value of the $i$-th observation

$n$ = amount of data
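The MAPE definition can be sketched in the same way. This is a minimal standard-library example; the function name `mape` and the sample values are hypothetical, and rejecting zero actual values is an assumption made here because the ratio is undefined in that case.

```python
from typing import Sequence


def mape(actual: Sequence[float], forecast: Sequence[float]) -> float:
    """Mean absolute percentage error, returned in percent."""
    if len(actual) != len(forecast) or len(actual) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    if any(y == 0 for y in actual):
        raise ValueError("MAPE is undefined when an actual value is zero")
    # Average the absolute errors relative to the actual values.
    ratios = [abs((y - y_hat) / y) for y, y_hat in zip(actual, forecast)]
    return 100.0 * sum(ratios) / len(actual)


# Hypothetical prices, for illustration only.
observed = [100.0, 200.0, 400.0]
predicted = [90.0, 210.0, 400.0]
print(round(mape(observed, predicted), 4))  # 5.0
```

Unlike RMSE, MAPE is scale-free, which makes it suitable for comparing results across stocks with different price levels.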

Table 1. Range of MAPE values [31].

| MAPE range | Meaning |
| --- | --- |
| <10% | Accuracy rate is very good |
| 10–20% | Accuracy rate is good |
| 20–50% | Accuracy rate is decent |
| >50% | Accuracy rate is bad |
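The ranges in Table 1 can be applied mechanically to any MAPE value, as in the following minimal sketch. The function name `interpret_mape` is hypothetical. The table does not say which category the boundary values 20% and 50% belong to, so assigning them to the lower category is an assumption made here.

```python
def interpret_mape(mape_percent: float) -> str:
    """Map a MAPE value (in percent) to the accuracy label of Table 1."""
    if mape_percent < 0:
        raise ValueError("MAPE cannot be negative")
    if mape_percent < 10:
        return "very good"
    if mape_percent <= 20:  # assumption: exactly 20% counts as "good"
        return "good"
    if mape_percent <= 50:  # the table labels only values above 50% as "bad"
        return "decent"
    return "bad"


print(interpret_mape(1.86))  # very good
print(interpret_mape(35.0))  # decent
```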

After all stages of the research are completed, the outputs are the RMSE and MAPE values, together with a graph comparing the original prices with the predicted data. The results are as follows.

Case-1 Shares of Bank BRI

Table 2. Forecasting results with Adagrad optimizer.

| Epochs | Neurons | Window Size | Data | RMSE | MAPE (%) |
| --- | --- | --- | --- | --- | --- |
| 33 | [4, 4, 3] | 1 | Training | 100.58 | 1.49 |
| 33 | [4, 4, 3] | 1 | Testing | 94.43 | 1.86 |
| 38 | [4, 4, 3] | 1 | Training | 108.31 | 1.53 |
| 38 | [4, 4, 3] | 1 | Testing | 95.39 | 1.88 |

With the Adagrad optimizer, the first model (33 epochs, window size 1, and [4, 4, 3] LSTM units in the hidden layers) gives an RMSE of 100.58 and a MAPE of 1.49% in training, and 94.43 and 1.86% in testing. The second model (38 epochs, window size 1, and [4, 4, 3] LSTM units) gives 108.31 and 1.53% in training, and 95.39 and 1.88% in testing.

Table 3. Forecasting results with Adadelta optimizer.

| Epochs | Neurons | Window Size | Data | RMSE | MAPE (%) |
| --- | --- | --- | --- | --- | --- |
| 35 | [1, 3, 2] | 1 | Training | 106.43 | 1.51 |
| 35 | [1, 3, 2] | 1 | Testing | 94.47 | 1.86 |
| 18 | [2, 1, 4] | 2 | Training | 99.69 | 1.58 |
| 18 | [2, 1, 4] | 2 | Testing | 98.09 | 1.91 |

With the Adadelta optimizer, the first model (35 epochs, window size 1, and [1, 3, 2] LSTM units in the hidden layers) gives an RMSE of 106.43 and a MAPE of 1.51% in training, and 94.47 and 1.86% in testing. The second model (18 epochs, window size 2, and [2, 1, 4] LSTM units) gives 99.69 and 1.58% in training, and 98.09 and 1.91% in testing.

Table 4. Forecasting results with RMSprop optimizer.

| Epochs | Neurons | Window Size | Data | RMSE | MAPE (%) |
| --- | --- | --- | --- | --- | --- |
| 41 | [4, 5, 5] | 1 | Training | 102.91 | 1.52 |
| 41 | [4, 5, 5] | 1 | Testing | 94.40 | 1.88 |
| 43 | [3, 1, 4] | 5 | Training | 102.83 | 1.53 |
| 43 | [3, 1, 4] | 5 | Testing | 95.49 | 1.91 |

With the RMSprop optimizer, the first model (41 epochs, window size 1, and [4, 5, 5] LSTM units in the hidden layers) gives an RMSE of 102.91 and a MAPE of 1.52% in training, and 94.40 and 1.88% in testing. The second model (43 epochs, window size 5, and [3, 1, 4] LSTM units) gives 102.83 and 1.53% in training, and 95.49 and 1.91% in testing.

Table 5. Forecasting results with Adam optimizer.

| Epochs | Neurons | Window Size | Data | RMSE | MAPE (%) |
| --- | --- | --- | --- | --- | --- |
| 38 | [4, 5, 2] | 4 | Training | 94.28 | 1.51 |
| 38 | [4, 5, 2] | 4 | Testing | 95.49 | 1.87 |
| 24 | [4, 5, 2] | 2 | Training | 95.93 | 1.51 |
| 24 | [4, 5, 2] | 2 | Testing | 95.06 | 1.87 |

With the Adam optimizer, the first model (38 epochs, window size 4, and [4, 5, 2] LSTM units in the hidden layers) gives an RMSE of 94.28 and a MAPE of 1.51% in training, and 95.49 and 1.87% in testing. The second model (24 epochs, window size 2, and [4, 5, 2] LSTM units) gives 95.93 and 1.51% in training, and 95.06 and 1.87% in testing.

Table 6. Forecasting results using Nadam optimizer.

| Epochs | Neurons | Window Size | Data | RMSE | MAPE (%) |
| --- | --- | --- | --- | --- | --- |
| 15 | [3, 3, 2] | 6 | Training | 93.03 | 1.51 |
| 15 | [3, 3, 2] | 6 | Testing | 95.62 | 1.87 |
| 28 | [4, 5, 4] | 2 | Training | 95.99 | 1.50 |
| 28 | [4, 5, 4] | 2 | Testing | 95.17 | 1.87 |

With the Nadam optimizer, the first model (15 epochs, window size 6, and [3, 3, 2] LSTM units in the hidden layers) gives an RMSE of 93.03 and a MAPE of 1.51% in training, and 95.62 and 1.87% in testing. The second model (28 epochs, window size 2, and [4, 5, 4] LSTM units) gives 95.99 and 1.50% in training, and 95.17 and 1.87% in testing.

Case-2 Shares of Bank Mandiri

Table 7. Forecasting results with Adagrad optimizer.

| Epochs | Neurons | Window Size | Data | RMSE | MAPE (%) |
| --- | --- | --- | --- | --- | --- |
| 36 | [1, 5, 1] | 4 | Training | 105.87 | 1.37 |
| 36 | [1, 5, 1] | 4 | Testing | 153.16 | 1.89 |
| 14 | [4, 3, 2] | 5 | Training | 109.39 | 1.42 |
| 14 | [4, 3, 2] | 5 | Testing | 152.89 | 1.92 |

With the Adagrad optimizer, the first model (36 epochs, window size 4, and [1, 5, 1] LSTM units in the hidden layers) gives an RMSE of 105.87 and a MAPE of 1.37% in training, and 153.16 and 1.89% in testing. The second model (14 epochs, window size 5, and [4, 3, 2] LSTM units) gives 109.39 and 1.42% in training, and 152.89 and 1.92% in testing.

Table 8. Forecasting results with Adadelta optimizer.

| Epochs | Neurons | Window Size | Data | RMSE | MAPE (%) |
| --- | --- | --- | --- | --- | --- |
| 35 | [3, 5, 4] | 3 | Training | 108.21 | 1.43 |
| 35 | [3, 5, 4] | 3 | Testing | 152.84 | 1.94 |
| 30 | [5, 2, 5] | 6 | Training | 110.35 | 1.45 |
| 30 | [5, 2, 5] | 6 | Testing | 153.51 | 1.93 |

With the Adadelta optimizer, the first model (35 epochs, window size 3, and [3, 5, 4] LSTM units in the hidden layers) gives an RMSE of 108.21 and a MAPE of 1.43% in training, and 152.84 and 1.94% in testing. The second model (30 epochs, window size 6, and [5, 2, 5] LSTM units) gives 110.35 and 1.45% in training, and 153.51 and 1.93% in testing.

Table 9. Forecasting results with RMSprop optimizer.

| Epochs | Neurons | Window Size | Data | RMSE | MAPE (%) |
| --- | --- | --- | --- | --- | --- |
| 20 | [2, 2, 2] | 2 | Training | 104.99 | 1.35 |
| 20 | [2, 2, 2] | 2 | Testing | 150.06 | 1.88 |
| 20 | [3, 3, 5] | 5 | Training | 105.00 | 1.35 |
| 20 | [3, 3, 5] | 5 | Testing | 151.44 | 1.89 |

With the RMSprop optimizer, the first model (20 epochs, window size 2, and [2, 2, 2] LSTM units in the hidden layers) gives an RMSE of 104.99 and a MAPE of 1.35% in training, and 150.06 and 1.88% in testing. The second model (20 epochs, window size 5, and [3, 3, 5] LSTM units) gives 105.00 and 1.35% in training, and 151.44 and 1.89% in testing.

Table 10. Forecasting results with Adam optimizer.

| Epochs | Neurons | Window Size | Data | RMSE | MAPE (%) |
| --- | --- | --- | --- | --- | --- |
| 15 | [5, 1, 4] | 3 | Training | 105.15 | 1.35 |
| 15 | [5, 1, 4] | 3 | Testing | 150.37 | 1.88 |
| 25 | [3, 3, 2] | 5 | Training | 105.10 | 1.35 |
| 25 | [3, 3, 2] | 5 | Testing | 150.41 | 1.88 |

With the Adam optimizer, the first model (15 epochs, window size 3, and [5, 1, 4] LSTM units in the hidden layers) gives an RMSE of 105.15 and a MAPE of 1.35% in training, and 150.37 and 1.88% in testing. The second model (25 epochs, window size 5, and [3, 3, 2] LSTM units) gives 105.10 and 1.35% in training, and 150.41 and 1.88% in testing.

Table 11. Forecasting results with Nadam optimizer.

| Epochs | Neurons | Window Size | Data | RMSE | MAPE (%) |
| --- | --- | --- | --- | --- | --- |
| 44 | [3, 5, 3] | 5 | Training | 105.05 | 1.35 |
| 44 | [3, 5, 3] | 5 | Testing | 151.59 | 1.89 |
| 21 | [4, 3, 5] | 6 | Training | 105.02 | 1.35 |
| 21 | [4, 3, 5] | 6 | Testing | 150.91 | 1.89 |

With the Nadam optimizer, the first model (44 epochs, window size 5, and [3, 5, 3] LSTM units in the hidden layers) gives an RMSE of 105.05 and a MAPE of 1.35% in training, and 151.59 and 1.89% in testing. The second model (21 epochs, window size 6, and [4, 3, 5] LSTM units) gives 105.02 and 1.35% in training, and 150.91 and 1.89% in testing.

From the training and testing results of each optimizer in both cases, the resulting RMSE values are small, which means that the models have a small error rate. The MAPE values are all below 10%, which indicates a very good level of accuracy according to Table 1. Because several optimizers all yield small RMSE and MAPE values, this study suggests that GA-LSTM can improve performance and save time, since the hyperparameters to use are found in a single process. Even so, Tables 2 to 6 show that the Adam and Nadam optimizers produce almost the same MAPE values. For Adam, the difference in RMSE between training and testing is 1.21 in the first model and 0.87 in the second. For Nadam, the difference is 2.59 in the first model and 0.82 in the second. Tables 7 to 11 likewise show that the two Adam models produce the same MAPE values. Therefore, the results appear quite stable and small with the Adam optimizer.
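The gap between training and testing RMSE discussed here can be recomputed directly from the values in Tables 5 and 6. The following minimal sketch does so; the dictionary layout and the helper name `rmse_gap` are hypothetical and serve only as an illustration.

```python
from typing import Dict, List, Tuple

# (training RMSE, testing RMSE) for the two models of each optimizer,
# copied from Table 5 (Adam) and Table 6 (Nadam).
bri_rmse: Dict[str, List[Tuple[float, float]]] = {
    "Adam": [(94.28, 95.49), (95.93, 95.06)],
    "Nadam": [(93.03, 95.62), (95.99, 95.17)],
}


def rmse_gap(train_rmse: float, test_rmse: float) -> float:
    """Absolute difference between training and testing RMSE, to two decimals."""
    return round(abs(train_rmse - test_rmse), 2)


gaps = {
    optimizer: [rmse_gap(train, test) for train, test in models]
    for optimizer, models in bri_rmse.items()
}
for optimizer, values in gaps.items():
    print(optimizer, values)
```

A small gap indicates that the model performs similarly on seen and unseen data, which is one way to read the stability attributed to the Adam optimizer.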